Abstract

Background

The outcomes of planned implementation efforts have been mixed, with some applications failing to achieve the desired change or impact. While reasons for mixed findings in implementation research are multifaceted (e.g., Damschroder et al., 2009, 2022), how the implementation strategy (IS) was deployed (i.e., integrity) and its impact on the implementation outcomes of evidence-based innovations (EBIs) is under-studied and warrants further clarification.

Method

This article builds on the IS fidelity and mechanisms of change literature to create the Implementation Strategy Integrity Framework (ISIF). The ISIF was developed by a set of implementation science researchers in the Justice Community Opioid Innovation Network seeking to document the role of implementation strategies in influencing EBI outcomes.

Results

The authors identified four areas of documentation and measurement to examine the role of IS integrity on EBI outcomes. (a) Implementation Strategy Rigor (i.e., adherence, dose, and quality) requires those implementing the strategy/strategies to specify them, document adherence to the planned strategies, quality of execution, and any adaptations made. (b) Target User Responsiveness documents the extent and quality of targeted users’ participation in IS activities and how well the target users perform their roles in conducting actions intended by the implementation strategies. (c) Target Mechanism Activation notes to what degree the implementation strategies achieved the intended impact(s) on targeted factors that facilitate EBI use. Finally, (d) these three areas are combined with selected Inner and Outer Context variables to explain IS integrity’s potential moderating and mediating effects on EBI outcomes.

Conclusions

A framework that can define the integrity of an IS and allow for its subsequent use as an explanatory variable in EBI outcomes is necessary for better elucidating mechanisms of action. The ISIF offers a structured approach to operationalize, measure, and evaluate the application and related impacts of implementation strategies.

Plain Language Summary

Implementation science seeks better ways to implement evidence-based innovations (EBIs) (clinical practices, tools, forms, healthcare delivery processes, etc.) discovered in research trials. It relies on a dedicated strategy or set of strategies to promote consistent and appropriate use of these EBIs. Researchers have found that sometimes, how the implementation strategy (IS) or sets of strategies are applied can influence the uptake and effectiveness of the EBI. The Implementation Strategy Integrity Framework (ISIF) seeks to document how the IS or strategies are applied to better explain why a given strategy or set of strategies produces desired results in some situations while failing to do so in others. The authors identified four areas of documentation and measurement to examine the role of IS integrity on EBI outcomes. (a) Implementation Strategy Rigor (i.e., adherence, dose, and quality) requires those implementing the strategy/strategies to specify them, document adherence to the planned strategies, quality of execution, and any adaptations made. (b) Target User Responsiveness documents the extent and quality of targeted users’ participation in IS activities and how well the target users perform their roles in conducting actions intended by the implementation strategies. (c) Target Mechanism Activation notes to what degree the implementation strategies achieved the intended impact(s) on targeted factors that facilitate EBI use. Finally, (d) these three areas are combined with selected Inner and Outer context variables to explain the potential moderating and mediating effects of IS integrity on EBI outcomes.

Implementation Strategy (IS) Integrity Background

Developing and evaluating rigorous approaches to scaling up and sustaining evidence-based innovations (EBIs) are needed to close the research-to-practice gap. EBIs such as the use of motivational interviewing (Miller & Rollnick, 2012), the Youth Aware of Mental Health Program for suicide prevention (Wasserman, 2015), diabetic eye screening (American Diabetes Association, 2020), and many more are underutilized despite ample research evidence. While the importance of implementation science in supporting the delivery of EBIs is increasingly recognized, the outcomes of such implementation efforts have been mixed, with some studies failing to achieve the desired change or impact on targeted EBI implementation outcomes or facilitators of EBI implementation outcomes (Damschroder et al., 2009; Powell et al., 2014). To better understand factors that drive variation in EBI implementation outcomes, we propose the Implementation Strategy Integrity Framework (ISIF) to allow systematic exploration of the potential moderating or mediating role of IS integrity on EBI implementation and its resulting effectiveness outcomes.

In the context of a relatively young and evolving field, research studies employing Type 3 Hybrid Effectiveness-Implementation trial designs have found some IS to be ineffective (Forsetlund et al., 2021) and other strategies to have mixed results with respect to EBI implementation success (Ivers et al., 2012; Schouten et al., 2008). Emerging evidence suggests that a potential cause of this variation resides in how the strategy is applied (Alley et al., 2023; Assefa et al., 2019; Bond et al., 2008; Schwalbe et al., 2014). Fidelity in the use of EBIs has been shown to directly impact effectiveness outcomes of EBIs (Bond et al., 2008; Moyers et al., 2005). Similar to the importance of fidelity in deploying EBIs, there is a critical scientific and practical requirement to define and evaluate the integrity of implementation strategies in various environments. This involves assessing how these strategies are carried out and adhered to within supportive or hindering contexts. Understanding this integrity is crucial for comprehending the differences in EBI implementation strategies and their resulting outcomes. The purpose of using the term “integrity” is to connect the term with implementation strategies and differentiate it from EBI fidelity, which describes whether/how an EBI is delivered as intended under real-world conditions. Integrity, on the other hand, acknowledges the need to adjust to needs and circumstances in the implementation process (LeMahieu, 2011), especially when the target user may need to make inner context variables (e.g., provide staff with relevant training; create space on caseloads) more amenable to EBI use. Hence, “IS integrity” is defined as the rigor with which each strategy is delivered, whether/how target users act upon it to aid the implementation process, and its impact on EBI outcomes.

While a comprehensive understanding of the role of IS integrity lags behind the conceptual development of EBI fidelity, it is not unreasonable to view IS integrity as analogous to EBI fidelity in importance (Akiba et al., 2022). Measurement of IS integrity would allow for exploration of the extent to which wide variations in observed effects across Type 3 implementation studies are moderated or mediated by integrity and examination of Type III error (i.e., null findings resulting from IS integrity failure; Akiba et al., 2022). While the reasons for outcome variance in implementation research are multifaceted (Damschroder et al., 2022), the quality of the IS itself in improving EBI implementation outcomes remains relatively unstudied and warrants attention (Lewis et al., 2018). Indeed, Akiba et al. (2022) highlight the need to adequately describe implementation strategies, their action targets, and the mechanisms most responsible for changes in implementation outcomes. In particular, the rigor and the intended or desired impact with which implementation strategies are delivered is a key ingredient for predicting successful EBI uptake (Lewis et al., 2018; Slaughter et al., 2015). IS integrity is a critical precursor for understanding implementation outcomes—for example, penetration, effectiveness, adoption, implementation, and maintenance (Alley et al., 2023; Assefa et al., 2019; Bond et al., 2008; Slaughter et al., 2015; Stein et al., 2023)—as well as the targets or effectiveness outcomes of the EBI itself.

In implementation research, efforts have been made to classify implementation strategies into taxonomies (e.g., EPOC; ERIC) to systematically identify, define, and test them (Mowatt et al., 2001; Powell et al., 2015). Moreover, recommendations for describing and reporting implementation strategies introduced the dimensions of the action, actor, action targets, temporality, and dose as important elements to be captured (Proctor et al., 2013). “Action” is the IS or strategies being applied. The “actor” is the person(s) delivering the IS, and the “action targets” are the individual(s) or organizational entities participating in the IS (i.e., those whose behavior or procedures/protocols are to be changed). “Temporality” specifies when the strategy is to be used, and “dose” is the frequency and duration of the IS.

The limited research that has examined how to define and operationalize implementation strategies and their impact on EBI outcomes has drawn concepts and constructs from EBI implementation fidelity models (Slaughter et al., 2015). The term “rigor” in the ISIF refers to whether an IS was applied in practice as intended by the implementers or actors (Proctor et al., 2013). Constructs of Implementation Strategy Rigor include both adherence—whether the IS was applied by the implementer as designed and specified for temporality and dose (Garner et al., 2020) or adapted to adjust to changing conditions (Allen et al., 2012) or new cultural groups (Baumann et al., 2015); and quality—whether the IS was delivered in a way appropriate to achieving what was intended (Slaughter et al., 2015; Stein et al., 2023).

A framework that explains the moderating or mediating role of IS integrity on EBI implementation and its resulting effectiveness outcomes is needed (Akiba et al., 2022; Gearing et al., 2011) to assist researchers, practitioners, and policymakers in understanding potential reasons behind the success or failure of a specific EBI in the context of a specific implementation effort. This article proposes the ISIF to address this gap. Analogous to the concept of fidelity with which the EBI is deployed, we focus on the need to define and assess the integrity with which an IS is deployed and acted upon in the context of an environment that either supports or inhibits its success, to better understand variation in EBI implementation and targeted outcomes.

Below, we (a) present the framework definitions and dimensions, (b) describe the process of developing the framework, and (c) apply the framework to different implementation strategies and contexts through two case examples.

Framework Definitions and Dimensions

The Importance of Understanding the Impact of the Implementation Strategy Target User Responsiveness and Target Mechanism Activation on Implementation Outcomes

The need to better understand and assess the target user's role in IS application calls for constructs that focus on target user participation in the actor-initiated IS (i.e., responsiveness) and on the activation of mechanisms (e.g., a process, activity, or mental model) that lead to EBI use, goals, and outcomes, as well as on behaviors that directly facilitate them. Target user participation is assessed as the level of responsiveness to and engagement with the implementation strategies. Typical items for this part of ISIF are: Did the target users attend training sessions, take online modules, or otherwise engage as intended in prescribed activities? The target user's responsiveness to the implementation strategies is intended to directly impact EBI use or stimulate “mechanisms of change” that support EBI implementation outcomes such as uptake, reach, and fidelity (Smith et al., 2020). Examples of potential mechanisms of change (Lewis et al., 2018) are awareness, self-efficacy, and skill-building. As described in more detail below, one example of applying an IS and achieving the intended result via hypothesized mechanisms is the implementation of medications for opioid use disorders (MOUDs) within jail settings. In this example, the IS includes delivering educational activities and stigma reduction training intended to increase perceived salience and awareness of the benefits of MOUD to target users (e.g., jail staff) in order to enhance their motivation to adopt it. Neither a decrease in stigma nor an increase in motivation is an EBI outcome, but both are intended to facilitate the EBI outcome of MOUD uptake, which in turn will affect clinical outcomes. Together, IS specification, IS Rigor (adherence/quality), IS Target User Responsiveness, and Target Mechanism Activation should lead to achieving Implementation Outcomes as described in Proctor's framework (Proctor et al., 2011).

Development of the Framework

In sum, defining and measuring IS integrity requires first defining the IS, determining whether it was delivered with rigor (adherence, dose, and quality), whether the target users participated in the IS and performed their roles, and then examining whether the strategy achieved its intended impact directly on EBI use or via a mechanism of change designed to support EBI implementation. Perhaps because of this daunting complexity, the extant literature has concentrated chiefly on whether and how implementation strategies are defined and reported (Garner et al., 2020; Powell et al., 2019; Proctor et al., 2013), but has not addressed how IS integrity may impact EBI implementation outcomes or how Inner Contextual factors such as lack of leadership support, staff turnover or Outer Context factors such as regulations, external community support, and funding may interact with IS integrity. The ISIF is designed to address these gaps by accounting for moderating or mediating effects of the IS on EBI implementation outcomes.

The ISIF was developed as part of the Justice Community Opioid Innovation Network (JCOIN) (Ducharme et al., 2021), a collaborative network in which 13 multisite clinical trials, ranging from Implementation-Effectiveness Hybrid type 1 to Hybrid type 3 designs, are examining strategies intended to improve connections to opioid use disorder (OUD) treatment for individuals engaged in the criminal legal system (courts, juvenile justice, probation/parole, jails, prisons). Each research project uses different implementation strategies to address targeted elements of the transition through the stages of the cascade of OUD care (Williams et al., 2019) among individuals who are transitioning between criminal-legal and community treatment settings and who use or are at risk for using opioids. JCOIN's Implementation Science Workgroup, launched in late 2019, developed the ISIF based on a need to measure planned and unplanned changes to each clinical trial's implementation strategies and their impact. These issues were made ever more complex by the unprecedented disruptions and resulting study changes caused by the COVID-19 pandemic. The workgroup included 15 members; nine (60%) were female and all were Caucasian. The group comprised academic faculty and a doctoral student with combined experience in implementation science, family studies, criminal justice, public policy, engineering, and behavioral health.

Developing the ISIF began with a literature review of the EBI fidelity field and the limited literature on IS integrity. Items were borrowed from these literature sets, gaps with respect to understanding IS on EBI implementation outcomes were identified, and an initial draft of the ISIF was developed. Then, the workgroup followed an iterative process to develop the ISIF by testing the ISIF model against the experience of multiple JCOIN studies until the presented model was developed. Because JCOIN includes multiple studies and strategies (including strategies derived from implementation efforts unrelated to substance use or criminal legal systems), it presents a useful test case for developing a broadly applicable framework. As such, the ISIF was developed through a process that included multiple rounds of feedback based on JCOIN investigators’ expertise and experiences in EBI implementation.

A Conceptual Framework for IS Integrity

The ISIF highlights three components integral to assessing IS integrity: (a) rigor in IS delivery, (b) target user responsiveness, and (c) target mechanism activation. These three areas are combined with select Inner and Outer Context variables to explain the moderating and mediating effect of IS integrity on EBI outcomes. The active components of ISIF are the blue boxes in Figure 1, overlaid with Proctor et al.'s (2011) implementation outcomes framework.

Implementation Strategy Integrity Framework

IS Specification

Assessing IS integrity begins with naming and specifying the IS to be applied. Researchers are encouraged to map their chosen strategy onto the ERIC taxonomy (Powell et al., 2015). Then, following the guidance outlined by Proctor (Proctor et al., 2013), the IS should be specified by describing who or what will deliver the strategy (actor), to whom the strategy will apply (target users), how the strategy will be applied (for adherence and quality), when the strategy will be applied (temporality), and the amount of the strategy per target user (dose). For specification and application, the ISIF model can be used to focus on an individual IS, several implementation strategies simultaneously to disaggregate the EBI implementation outcome effect, or an IS bundle where strategies are combined and evaluated together.
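To make the specification step concrete, the elements listed above (actor, target users, how, temporality, dose) can be captured in a small structured record. The sketch below is illustrative only: the class name, field names, and all example values are assumptions made for this example and are not part of the ISIF or of any JCOIN protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StrategySpec:
    """One specified implementation strategy (hypothetical field names)."""
    name: str                 # strategy label, e.g., the matching ERIC term
    actor: str                # who or what delivers the strategy
    target_users: str         # to whom the strategy applies
    delivery: str             # how it is applied (basis for adherence and quality)
    temporality: str          # when the strategy is applied
    sessions_per_user: int    # dose: planned number of sessions per target user
    hours_per_session: float  # dose: planned duration of each session

    def planned_hours_per_user(self) -> float:
        """Total planned dose, in hours, for one target user."""
        return self.sessions_per_user * self.hours_per_session


# All values below are hypothetical and chosen only for illustration.
spec = StrategySpec(
    name="External coaching",
    actor="Technical assistance provider",
    target_users="Agency change team",
    delivery="Scheduled coaching calls",
    temporality="Monthly",
    sessions_per_user=12,
    hours_per_session=1.0,
)
print(spec.planned_hours_per_user())
```

Recording the plan in a fixed structure like this gives later rigor assessments an explicit baseline to compare delivery against.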

Rigor in IS Delivery (IS Deliverer/Actor)

Following specification, rigor in the delivery of the IS should then be assessed via measurement of the strategy adherence and quality. The assessment of adherence in the ISIF—“Were the implementation strategies and their key components delivered as intended?”—incorporates temporality and dose, or “Were the strategies delivered at the timepoint(s) and with the frequency proposed?” Rigor implies that the IS's delivery will closely adhere to how it was planned at study onset. In the JCOIN context, it became clear that planned implementation strategies needed to adjust in response to a rapidly changing environment, notably the onset of COVID-19 and required safety protocols. When adaptations occur, researchers should first determine if the adaptations fall within acceptable limits. That is, do they fundamentally change the strategy? Second, these adaptations should be documented so that the revised approach becomes the new standard for assessing rigor. Third, the factors that caused the adaptations, including the unintended disruptions and facilitators, should be documented. Lastly, the implementation strategies should be documented to determine the quality of IS delivery, whether the delivery included all the desired elements, and whether there was variation in how the components were delivered. The Framework for Reporting Adaptations and Modifications-Enhanced Implementation Strategies (FRAME-IS) (Miller et al., 2021) is a notable framework for documenting such IS adaptations.
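As a rough illustration of the adherence questions above, planned and delivered sessions can be compared on delivery, timing, and dose. This is a minimal sketch under stated assumptions: the `Session` record, the one-week timing tolerance, and the example data are hypothetical and are not measures prescribed by the ISIF. It also does not cover quality of delivery, which needs its own assessment.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Session:
    planned_week: int
    delivered_week: Optional[int]  # None means the session was never delivered
    planned_minutes: int
    delivered_minutes: int


def adherence_summary(sessions: List[Session], tolerance_weeks: int = 1) -> dict:
    """Shares of planned sessions delivered, delivered on time, and of planned dose."""
    n = len(sessions)
    delivered = [s for s in sessions if s.delivered_week is not None]
    on_time = [
        s for s in delivered
        if abs(s.delivered_week - s.planned_week) <= tolerance_weeks
    ]
    planned_dose = sum(s.planned_minutes for s in sessions)
    delivered_dose = sum(s.delivered_minutes for s in delivered)
    return {
        "delivered_share": len(delivered) / n,
        "on_time_share": len(on_time) / n,          # temporality
        "dose_share": delivered_dose / planned_dose,  # dose
    }


# Hypothetical delivery log: four planned sessions of 60 minutes each.
log = [
    Session(1, 1, 60, 60),
    Session(5, 5, 60, 45),
    Session(9, 12, 60, 45),    # delivered three weeks late
    Session(13, None, 60, 0),  # not delivered
]
summary = adherence_summary(log)
print(summary)
```

If an adaptation is judged acceptable and documented, the planned values in such a log would be updated so that the revised approach becomes the standard against which rigor is assessed.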

Target User Responsiveness

This portion of the ISIF evaluates the role of the target users during the implementation process. The evaluation of the impact on intended target users may vary for different target audiences. In the example of implementing MOUD in criminal legal settings, there may be different activities or impacts for healthcare staff versus correctional officers versus facility leadership (e.g., sheriffs/wardens). The impact of implementation strategies on users can be evaluated by assessing, first, the extent of the target users’ participation or whether they engaged in the implementation strategies as planned. For example, if two 3-hr trainings were delivered as intended, one would assess whether the target users attended the full 6 hr of training. Second, assess the quality of participation—essentially, “Did target users sufficiently engage in learning activities and learn what they were supposed to learn?” Assuming that active learning was a key element, postsession measurement of knowledge acquired is one method for assessing the degree of learning that occurred through the IS. Lastly, assess whether target users performed their intended role by completing any tasks or applying implementation strategies that they were requested to apply—for example, if program staff were asked to form a change team and conduct rapid cycle, plan-do-study-act, or pilot tests of incremental changes to clinical workflow, did they perform these tasks?
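The extent of participation and role performance described above can be summarized with simple proportions. The sketch below assumes a hypothetical per-user record of hours attended and tasks requested and completed; the function name, field names, and data are invented for illustration. Quality of participation (e.g., postsession knowledge) would need a separate measure and is not shown.

```python
def responsiveness_summary(users: dict, delivered_hours: float) -> dict:
    """Summarize participation extent and role performance across target users."""
    attendance = [u["attended_hours"] / delivered_hours for u in users.values()]
    full_dose = [a for a in attendance if a >= 1.0]
    requested = sum(u["tasks_requested"] for u in users.values())
    completed = sum(u["tasks_completed"] for u in users.values())
    return {
        "full_dose_share": len(full_dose) / len(users),   # attended all hours
        "mean_attendance": sum(attendance) / len(attendance),
        "task_completion": completed / requested,          # role performance
    }


# Hypothetical data for two 3-hr trainings (6 hr delivered in total).
users = {
    "staff_a": {"attended_hours": 6.0, "tasks_requested": 4, "tasks_completed": 4},
    "staff_b": {"attended_hours": 3.0, "tasks_requested": 4, "tasks_completed": 1},
}
result = responsiveness_summary(users, delivered_hours=6.0)
print(result)
```

Because impacts may differ across audiences, the same summary could be computed separately for each target user group, such as healthcare staff, correctional officers, and facility leadership.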

Target Mechanism Activation

A mechanism is a process, activity, or mental model (or opinion(s)) that causes or facilitates an EBI outcome via the target user. In EBI fidelity, the end goal is proper delivery of the purported active ingredients of the EBI that are thought to improve health outcomes. The ISIF shares these same end goals, specifically that the IS ultimately improves EBI use. However, there is a need to assess an interim outcome, namely, whether the IS achieved its intended impact on the implementation process. In causation models (Lewis et al., 2018), this impact is considered a “mechanism” by which the strategy promotes EBI use and manifests through changes to motivation, culture change, and skill-building, for example. Overall, the desired goal or impact is improving EBI use. As reflected in Figure 1, the action taken from the IS by the target users may mediate, through “Activation of the Target Mechanism,” the relationship between the strategy and the targeted EBI outcomes.

Contextual (Inner and Outer) Variables

Including contextual variables in the ISIF acknowledges that conditions within and outside the implementation setting can hinder or facilitate how strategies are delivered, received, or acted upon. Multiple implementation science conceptual frameworks—including the Diffusion of Innovations theory (Rogers, 2010), the Consolidated Framework for Implementation Research (Damschroder et al., 2009, 2022), the Promoting Action on Research Implementation in Health Services (PARIHS) Framework (Rycroft-Malone, 2004), and the Theoretical Domains Framework (Atkins et al., 2017)—describe how factors within the organization (Inner Context) and outside the organization (Outer Context) can impact the targeted EBI uptake. Contextual factors such as lack of leadership support, staff turnover or buy-in/support (Inner Context), or regulations, external community support, and funding (Outer Context) can inhibit the integrity of the implementation strategies and EBI outcomes. Specific to the JCOIN studies, in response to COVID-19, jails and prisons required safety protocols to be established before researchers could engage with individual detainees and staff; this required research teams to change from planned in-person engagement to videoconferencing or other methods for delivering their implementation strategies. Similarly, changes in state or local policy that impacted how people were processed through the criminal legal system necessitated adaptations to when, where, and how certain implementation strategies were deployed. Hence, Inner and Outer Context elements can be introduced in ISIF to account for their impact on implementation strategies and EBI outcomes. This model views Inner and Outer Context variables as moderators because of their ability to affect the direction and strength of the relationship between the IS and implementation outcomes (see Stein et al., 2023, for an example).

EBI Implementation and Outcomes

ISIF is informed by Proctor et al.'s (2011) implementation outcomes framework and thus shares the same ultimate outcomes. The key issue is whether implementing a given EBI was associated with desired implementation, service delivery, and patient health outcomes and whether and how IS integrity may have influenced those distal outcomes. Hence, at times, the impact of the target user performing their intended roles will map directly onto the EBI implementation outcomes (as noted in the direct line in Figure 1) and, at other times, will impact EBI implementation outcomes through target mechanism activation. As with the Proctor (Proctor et al., 2011) framework, not all outcomes are intended to be impacted or assessed. Instead, the intent is to provide a model connecting all the relevant elements that should be considered in the context of the study and the implementation strategies being used.

ISIF Case Examples

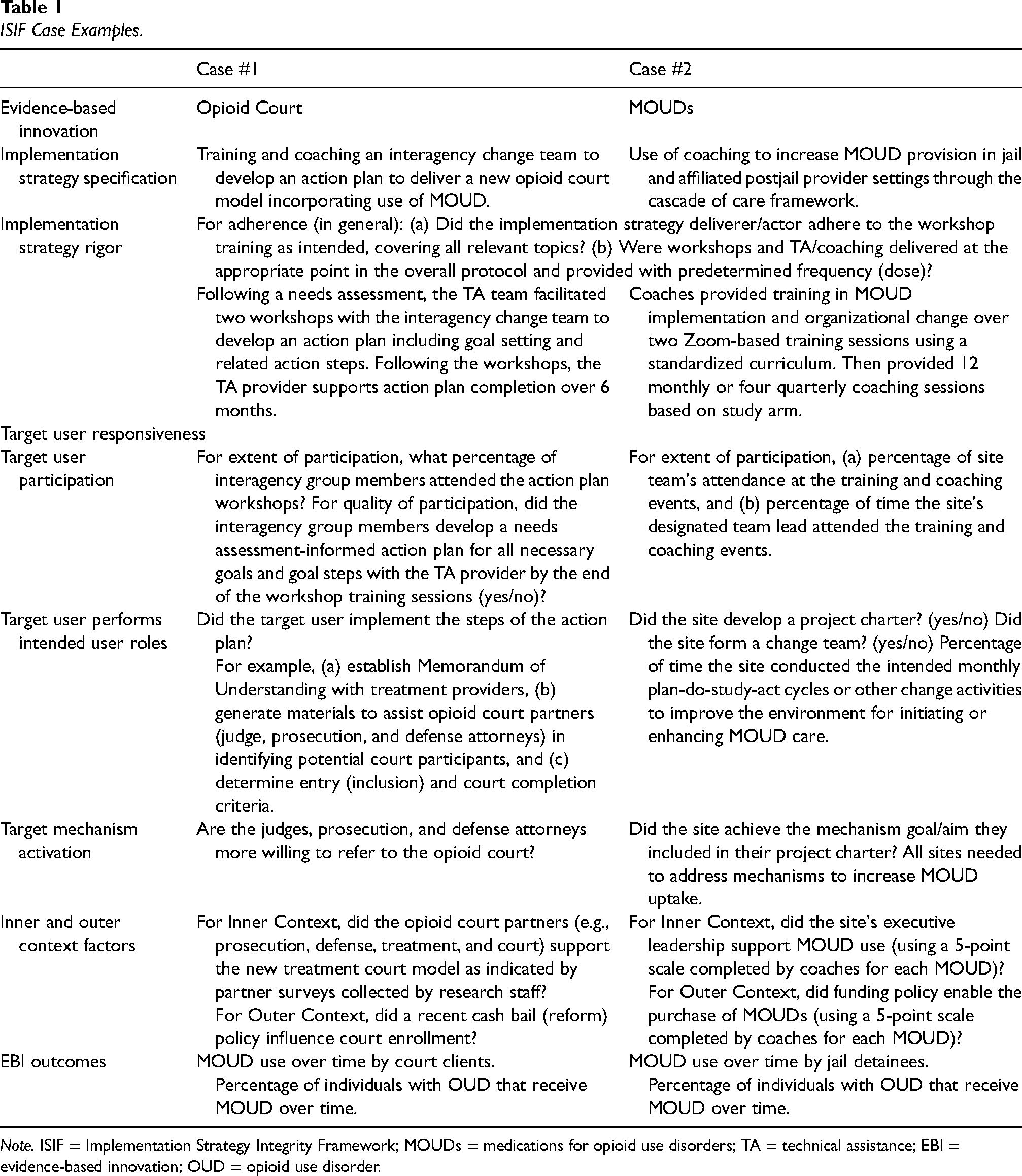

This section provides two examples of the application of the ISIF to JCOIN studies. For simplicity, these examples each focus on a single IS, and they use ISIF as an evaluation framework. Table 1 summarizes how the two cases would be assessed in the ISIF framework.

ISIF Case Examples.

Note. ISIF = Implementation Strategy Integrity Framework; MOUDs = medications for opioid use disorders; TA = technical assistance; EBI = evidence-based innovation; OUD = opioid use disorder.

Case Example #1: Implementing Opioid Court Services

The first case example is a Hybrid 2 implementation-effectiveness study examining the impact of an implementation intervention on opioid court participants’ outcomes (initiation of MOUD, retention in court, and recidivism). An external technical assistance (TA) provider for a state court system provides coaching and TA on the delivery/implementation of a novel treatment court model: the opioid intervention court (protocol described in Elkington et al., 2021). For the focus of the IS integrity analysis, the TA provider (actor) is training and coaching an interagency change team (i.e., court participant group, target users) to develop an action plan that details the practice change necessary to deliver the new opioid court model.

Case Example #2: Coaching Strategies to Promote Uptake of EBIs

A separate study is a Hybrid 3 IS comparative effectiveness trial that tested the use of external coaching compared to ECHO-based case management support on MOUD (buprenorphine, methadone, naltrexone) provision in jail and affiliated post-jail provider settings over a 12-month period (Molfenter et al., 2021). For the focus of the IS integrity analysis, the TA provider (actor) is training and coaching an agency change team (i.e., jail or community treatment provider group) and/or training alone via the ECHO platform. In the coaching strategy, sites were encouraged to implement the MOUD cascade of care elements that would activate target mechanisms that, in turn, would mediate MOUD use. Accompanying this example is a completed ISIF worksheet in the Supplementary Appendix.

Discussion

Implementation science is a quickly evolving field facing many challenges due to the complexity of implementation settings (Damschroder & Lowery, 2013), outcomes (Proctor et al., 2011), and strategies (Powell et al., 2015). Models and frameworks are needed to isolate factors that can explain whether an EBI's success or failure was the result of the EBI's effectiveness, the effectiveness of the strategies used to implement it, both, or neither. The ISIF builds on several conceptual pieces, including long-standing EBI fidelity-of-use frameworks (Carroll et al., 2007; Dusenbury et al., 2003); guidelines calling for more comprehensive IS reporting (Garner et al., 2020; Proctor et al., 2013; Rudd et al., 2020); Proctor's implementation outcomes nomenclature (Proctor et al., 2011); and foundational work regarding IS fidelity (Slaughter et al., 2015) and mechanisms of change (Lewis et al., 2018). Hence, while the case examples in this article focus on strategies used to promote MOUD use in criminal legal systems, the intent of this model is to be generalizable to all implementation strategies and settings, as well as other EBIs.

One of the more challenging issues in applying such a framework is that most implementation strategies contain an amalgamation of several strategies rather than a single discrete strategy. While this article presents examples of how the ISIF can be used with discrete implementation strategies, the same or similar approaches can be used to account for multiple or bundled implementation strategies. One option is to select and closely monitor what is projected to be a dominant strategy. Case Example #2 (coaching) demonstrates this approach (Molfenter et al., 2021). Another approach is to “stack” implementation strategies. In this approach, one could conduct the integrity analysis with each identified IS to assess each strategy's impact and determine which strategies are necessary and sufficient. Alternatively, one might simultaneously investigate the combined impact of multiple implementation strategies using an additive score of the different strategies as assessed by the framework (Knight et al., 2022). Another combined strategy approach could use a checklist that accounts for the aspects of the different implementation strategies used and applied to each portion of the ISIF, and in so doing, identify which strategies are critical to the successful implementation of the EBI. The Veterans Health Administration (VA) uses this approach to apply and monitor its Implementation Facilitation Framework (Kirchner et al., 2022; Smith et al., 2022). By focusing here on discrete implementation strategies, we offer the ISIF as a starting point for considering the complexities of various approaches to implementation and their impacts.

Within the JCOIN initiative, the ISIF has been used as a tool to assess the effect of planned IS changes on EBI implementation outcomes. For the eventual quantification of the relationships within ISIF, structural equation modeling will be one potential approach to enumerate the constructs and relationships within the framework. Another approach is to use more basic regression modeling to assess the framework's direct relationships and moderating or mediating effects while controlling for select contextual variables. Model validity testing will require large data sets, including sample size considerations regarding staff within organizations, organizations within systems, and multiple systems. Therefore, we recommend using theory to guide analyses of relationships among constructs, which naturally may include underpowered analyses. Using theory may lead to more confirmatory hypothesis testing with well-funded, well-powered studies and the ability to pool results from smaller studies during meta-analyses to develop stable effect sizes, perform statistical significance testing, and test the limits of the ISIF model proposed here. These shorter- and longer-term goals require implementation research studies to clearly specify: (a) Implementation Strategy Rigor and the implementation strategies to be delivered in terms of adherence, temporality, dose, and quality; (b) Target User Responsiveness via the user's engagement with the IS and the intended impact of the implementation strategies (e.g., learning information, applying a skill); (c) Target Mechanism Activation; and (d) Inner and Outer Context Variables (e.g., funding, leadership) that may affect the integrity of the implementation strategies and their moderating and/or mediating effects on the targeted EBI outcomes.

In the interim, the intent is for ISIF to be used in both research and real-world practice, that is, as a guide in gathering additional details to understand how the implementation process might influence the ultimate success or failure of the implementation strategies and their impact on EBI uptake and effectiveness. Additionally, the ISIF allows for documentation and assessment of implementation process interaction with Inner and Outer contextual factors, an area for further development of the framework. In particular, understanding the moderating or mediating effects of Inner and Outer contextual factors on the IS is a critical next step in understanding the causal relationship between the IS and EBI outcomes (Lewis et al., 2018). Measure identification to assess for ISIF would ideally be made prospectively, although retrospective measure identification could be made as needed. It may be helpful to track progress and feed these data into real-time decision-making as it relates to implementation strategies and the implementation process (e.g., documenting adaptations with FRAME-IS [Miller et al., 2021] or another approach [Haley et al., 2021]). A worksheet is provided in the Supplementary Appendix to help users apply the ISIF.

Conclusions

The specification, delivery, and measurement of implementation strategies in a reproducible manner are necessary to support practices related to scale-up and sustainment of EBIs within and across settings. Therefore, a framework that can define and establish the integrity of implementation strategies and determine their use as explanatory variables in EBI uptake and outcomes is warranted. The ISIF offers a structured approach to operationalizing, measuring, and evaluating the process and related impacts of IS integrity. This approach can be used with studies testing implementation strategies and for reporting findings from Hybrid type 3 trials. The next steps include thorough testing, ongoing iteration, and qualitative analysis to increase the rigor of the framework, as well as refining and validating the ISIF using data from a diverse set of implementation projects.

Supplemental Material

Supplemental material, sj-docx-1-irp-10.1177_26334895241297278 for A conceptual framework for assessing implementation strategy integrity by Todd Molfenter, Lori Ducharme, Lynda Stein, Steven Belenko, Shannon Gwin Mitchell, Dennis P. Watson, Matthew C. Aalsma, Peter D. Friedmann, Jennifer E. Becan, Bryan R. Garner, Jessica Vechinski, Alida Bouris, Emily Claypool and Kate Elkington in Implementation Research and Practice

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Work on this manuscript was supported by the following grants, all awarded as part of the Justice Community Opioid Innovation Network, by the National Institute on Drug Abuse as part of the NIH HEAL Initiative: U2CDA050097 (TM, JV), U01DA050442 (LS, SB), UG1DA050077 (SGM), UG1DA050070 (MCA), UG1DA050067 (PDF), UG1DA050074 (JEB), UG1DA050069 (BRG), UG1DA050065 (DPW), UG1DA050066 (AB, EC), UG1DA050071 (KE). LD is the assigned NIDA Science Officer for JCOIN, and her role as collaborator is consistent with the project’s funding as a cooperative agreement. The funding agency otherwise had no role in study design, data collection and analysis, decision to publish, or manuscript preparation. The contents of this publication are solely the responsibility of the authors and do not represent the official views of NIDA or the NIH HEAL Initiative.

Supplemental Material

Supplemental material for this article is available online.

References
