Abstract

In this article, we describe and implement the Brock, Dechert, and Scheinkman (1987, Working paper) test of independence of the elements of a time series.

1 Introduction

The question of whether time-series data are independently distributed has a long history in econometrics. In Stata,

The idea behind the BDS is fairly simple. If the data are independently and identically distributed (i.i.d.), then for a given distance ε, the probability that the difference between pairs of m-dimensional points is less than or equal to ε will be a constant. This probability is denoted Cm (ε) and is known as the correlation integral (Grassberger and Procaccia 1983). The intuition of the BDS test is that with i.i.d. data, Cm (ε) will simply be the product of the individual pairs, so Cm (ε) = C 1(ε) m . However, the sample analogues of these quantities will not satisfy this condition exactly. The BDS test provides a formal statistical evaluation of the significance of this divergence.

Although the intuition of the BDS test is straightforward, calculating the BDS statistic is not easy, and it is even more challenging to perform the computations with the speed necessary to make bootstrap resampling feasible. To complement earlier implementations of the test, LeBaron (1997) describes a fast algorithm and provides C source code to implement the test. The initial component of LeBaron’s approach is based on an efficient sorting algorithm that, for a time series of length T, requires O(T log T) operations and hence pays dividends for large values of T . The main disadvantage of this fast algorithm is that it is not completely transparent.

By contrast, the approach to compute the BDS test described in this article offers transparency of operation because it does not require sorting of the data to estimate the correlation integral. Furthermore, the implementation in Mata makes the procedure accessible on any machine running Stata. This desire to facilitate ease of use does imply a speed penalty. Consequently, our variant is intrinsically O(T 2), which makes it considerably slower than LeBaron’s algorithm when applied to a lengthy time series. However, the absence of overhead costs makes the approach efficient for smaller datasets.

In addition to describing the command that implements the test, we also outline an elegant simplification for the computation of the variance of the BDS statistic.

2 The BDS test

The correlation integral introduced by Grassberger and Procaccia (1983) is a method for measuring the frequency with which temporal patterns are repeated in data. Given observations of a time series xt, t = 1,…T, the m-history of the time series is

A time series expressed in this form is described as being embedded of dimension m, with m = 1 representing the case where only the original time series is considered. The sample correlation integral for a given ε > 0 and embedding dimension m = 1 is

where n = (T − m + 1) and I(S) is the indicator function taking the value 1 if S is a true statement and 0 otherwise. For ease of notation, the dependence of this quantity on ε is suppressed. Of course, if ε is chosen so that all pairs satisfy the condition, then

Strictly speaking, if there are T data points, the computation of

The correlation integral

Therefore, the mth-order correlation integral computes the joint probability

For a given value of ε, define Ij,k

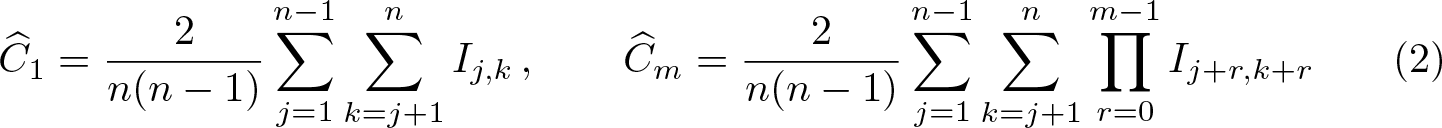

= I(|xj − xk| ≤ ε). Then,

in which occurrences of ε have been suppressed for representational simplicity.

The BDS test proceeds by noting that under the assumption of i.i.d. data, this probability will simply be the product of the individual probabilities for each pair if the observations are independent. Under this null hypothesis, it follows from (3) that

The BDS test provides a formal basis for judging the size of this error. Given a value for ε, Brock et al. (1996) defined the BDS statistic as

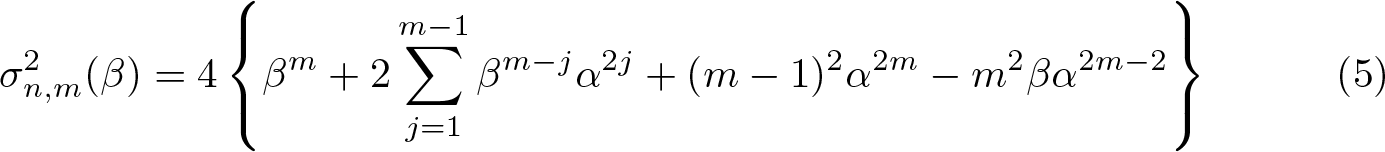

where the standard deviation σn,m is computed from the variance

in which α and β are defined as

Brock et al. (1996) demonstrate that under the null hypothesis of i.i.d. data, this statistic converges in distribution to the standard normal

for a given ε.

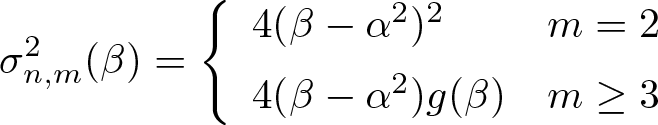

3 Simplifying the computation of the variance

It is clear from (3) that the expression for

A straightforward calculation indicates that

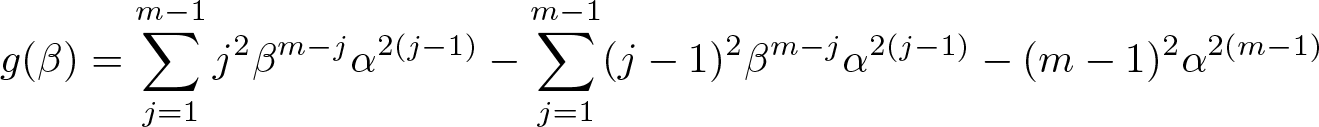

where g(β) is the polynomial of degree (m − 1) with expression

The simplest way to continue the analysis of

The second summation is reindexed by replacing j − 1 with j to obtain

Both summations are now combined to give

The case m = 2 can be incorporated into (6) to derive the final result

4 The bds command

The

4.1 Syntax

Before using the

Note that varname may not contain gaps within the specified sample. varname can contain time-series operators. The command can be applied to one unit of a panel.

4.2 Options

The command supports the following options:

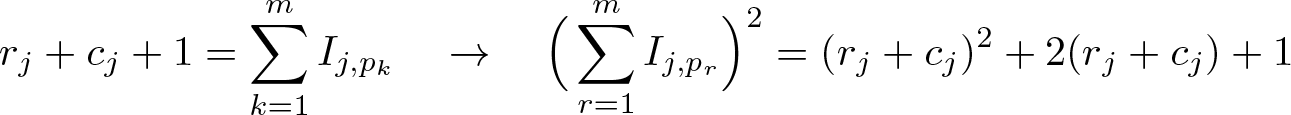

4.3 Stored results

5 The algorithm

Given ε > 0, the quantity Ij,k has value 1 if |xj − xk| ≤ ε and 0 otherwise. The first step in the computation of the BDS statistic is to create a lookup table, which in Stata can be visualized as an upper triangular matrix of one-byte integers with T rows and T columns. The (j, k)th entry of this matrix is 1 if |xj − xk| ≤ ε and 0 otherwise. 3 The (k, k)th diagonal entry of this lookup matrix compares |xk − xk| with ε and therefore is 1 for all values of k.

5.1 First-order correlation integral

The sample estimate of the first-order correlation integral is the expected value of Ij,k taken over all values of j and k satisfying j < k ≤ n, or equivalently, the fraction of all pairs (xj, xk ) for which |xj − xk| ≤ ε. Put simply, this is the total count of all the nonzero entries in the upper triangle of the lookup matrix, excluding the main diagonal divided by the total number of distinct pairings, namely, n(n − 1)/2.

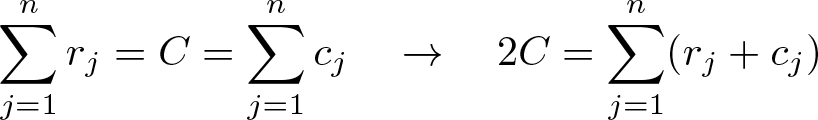

One way to think of this total count is as a sum of row counts r 1 , r 2 ,…, rn , in which rj denotes the sum of the jth row of the lookup matrix, excluding the contribution from the main diagonal. The alternative view is to think of the total count as a sum of column counts c 1 , c 2 ,…, cn , in which cj denotes the sum of the jth column of the lookup matrix, excluding the contribution from the main diagonal. In the former, rn = 0, and in the latter, c 1 = 0. Both strategies will give the same total count, but each will be composed of different partial counts. The explicit expressions for rj and cj are, respectively,

The total number of counts, say, C, is therefore

and the estimated value of the first-order correlation integral is therefore 2C/n(n − 1).

Value of α

The usual decomposition of a double summation into diagonal and off-diagonal contributions allows α in the first expression in (4) to have representation

Value of β

The calculation of the value of β begins by noting that (4) may be usefully rewritten as

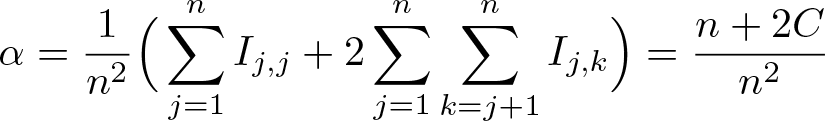

This representation indicates that the value of β is constructed from a count of all triples (xi, xj, xk ) for which Ii,jIj,k = 1, including the possibilities i = j, k = j, or both. Suppose that this counting process has reached xj . Then, the previous analysis has demonstrated that there are m = rj + cj + 1 data points within ε of xj , including xj itself. Clearly, the value of m will depend on j, but to maintain representational clarity, we suppress this dependence. Furthermore, suppose that these m data (which include xj ) are xp 1 ,…, xp m , ignoring again the dependence of p 1 ,…, pm on j.

Importantly, xp 1 ,…, xp m all share the property that Ij,p 1 = Ip 1 ,j · · · = Ip m ,j = Ij,p m = 1 for a fixed value of j. Consequently,

However, by construction,

In conclusion,

5.2 Higher-order correlation integrals

The sample estimate of the correlation integral at embedding dimension m ≤ M is the expected value of all quantities of type

taken over the n(n − 1)/2 possible values of (j, k) for which 1 ≤ j < k ≤ n, or equivalently, the fraction of all pairs (xj, xk ) for which expression (7) is 1, that is, Ij + p,k + p = 1 for all values of p satisfying 0 ≤ p < m. Given a pair (xj, xk ), the contributions made by that pair to the correlation integrals at each depth of embedding may be calculated simultaneously by noting that

This means that the computational penalty involved in the calculation of higher-order correlation integrals is small, provided the contributions from each pair, say, (xj, xk ), at all requested levels of embedding are done simultaneously.

The matrix representation of the lookup table allows (8) to be computed efficiently. Because (k +p)−(j +p) = k −j = r is independent of p (and consequently the depth of embedding), the value of Ij + p,k + p is the entry in the matrix lookup table at row (j + p) and column (j + p) + r. In overview, the contributions to the estimated correlation integral at embedding depth m can be visualized as the sum of the products of m consecutive elements of the super diagonals of the matrix lookup table taken over the entire upper triangle (that is, excluding the main diagonal) for values of m from m = 2 to m = M. The estimate of the correlation integral is this sum divided by n(n − 1)/2.

6 Empirical applications

6.1 Sunspots

Sunspots are regions on the surface of the sun with magnetic field strengths thousands of times stronger than the earth’s magnetic field. They appear as dark spots on the surface of the sun and typically last for several days. The data, yt , which are the annual averages of daily sunspot numbers from 1700 to 2017, were compiled by the Solar Influences Data Analysis Center in Belgium. The series is plotted in figure 1. This series not only is the longest directly observed index of solar activity but also has interesting time-series properties.

Plot of average annual sunspots series from 1700 to 2017

The sunspot numbers have been of interest to climatologists who hypothesized a link between sunspot activity and climate change. White and Liu (2008) provide evidence that the solar cycle may be the trigger for El Niño and La Niña episodes from 1900–2005, suggesting that higher solar activity implies weaker and less frequent El Niño events. This view is controversial, and no generally accepted statistical link has been established. The so-called Maunder Minimum 4 between 1645 and 1715 was a period in which sunspots were scarce and the winters harsh, strongly suggesting a link between solar activity and climate change. The current view is that there has been no significant long-term upward trend in solar activity since 1700. This implies that rising global temperatures since the industrial revolution cannot be attributed to increased solar activity.

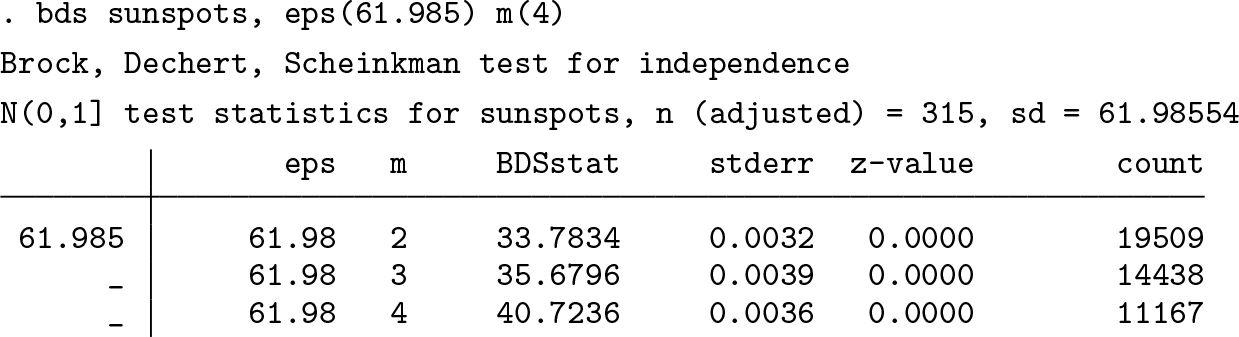

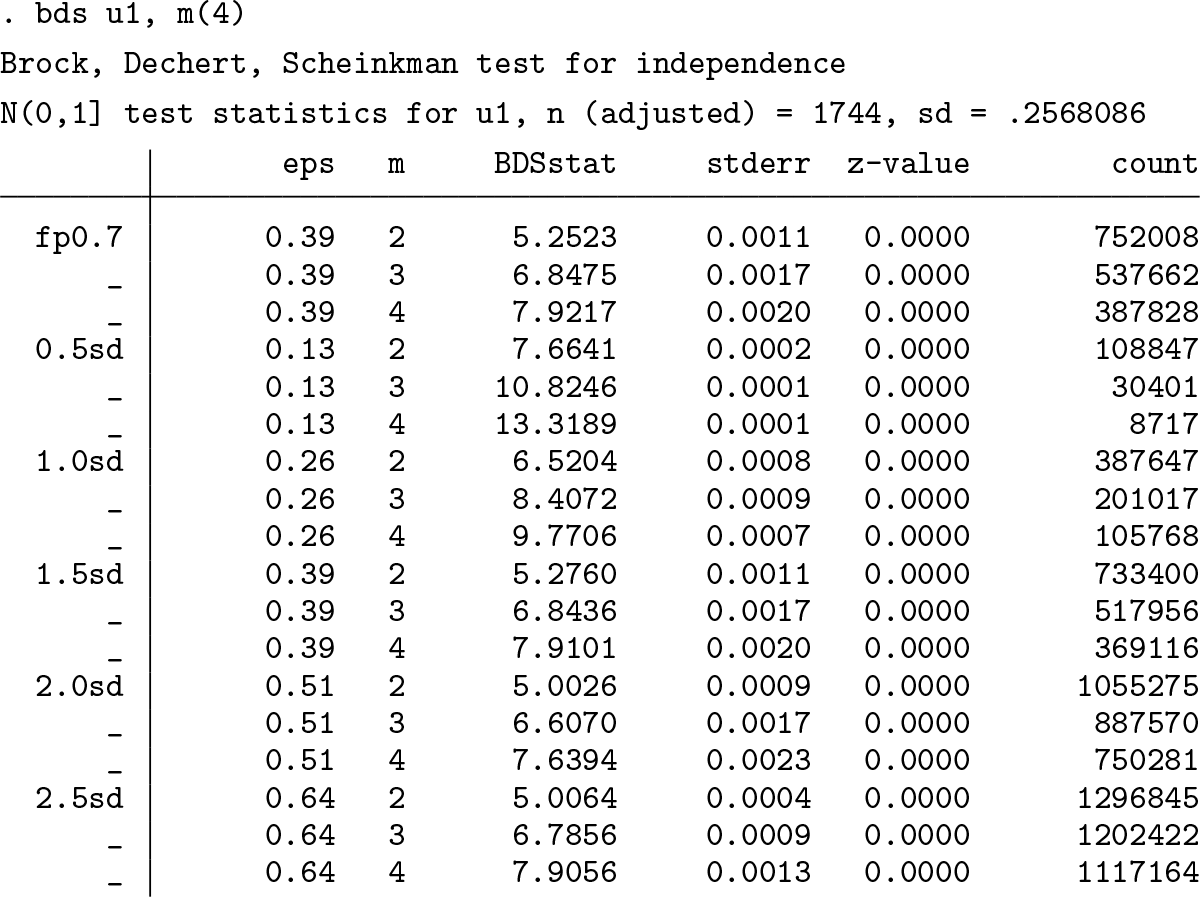

A simple application of the BDS test to the annual sunspot data confirms the strong rejection of the null hypothesis of i.i.d. data. The value of ε is set to one standard deviation of the data, and the maximum embedding dimension is 4.

In fact, the sunspot data have given rise to many attempts to use nonlinear modeling to capture the key features of the time series. In particular, many different self-exciting threshold autoregressive models have been proposed in the literature and applied to the sunspot data. Consider the following model, which is similar to those of Tong and Lim (1980) and Battaglia and Orfei (2005):

Fitting the model using the Stata command

The optimal threshold value returned by the search is k = 60.7, which is slightly larger than the values reported by Tong and Lim (1980) and Battaglia and Orfei (2005), although they use annual data over a shorter sample period in their work and include a much more complex dynamic structure. The autoregressive coefficients in both regimes have a somewhat similar pattern in terms of sign, although the difference in their sizes is slightly more marked in regime 2. These results suggest a rather complex dynamic pattern, although it is possible to conjecture that the large positive first-order autocorrelation coefficient is offset by a much smaller negative second-order autocorrelation coefficient, which implies that the process persists a little longer in regime 2.

The residuals from the threshold regression are plotted in figure 2. The first impression is that there is less structure in the residuals than in the original series, but the real question to be addressed is whether the residuals are now i.i.d. Application of the BDS, again with a maximum embedding dimension of 4 specified as one of the options, yields the following results:

Residuals obtained from fitting the model in (9) using the annual sunspot data

For choices of ε up to two standard deviations, the test statistic is significant and the null hypothesis of i.i.d. is strongly rejected. This result is a warning that the apparent lack of structure obtained from a visual impression can be misleading. Interestingly, at 2.5 standard deviations, the null hypothesis cannot be rejected. It may be that this choice of ε is simply too large for these data.

6.2 U.S. equity returns

Consider the example given in Hurn et al. (2020), in which real U.S. equity returns are to be forecast using an autoregressive [AR(1)] model based on the assumption of normality. The model is

If the observed values rt are indeed generated correctly according to this simple model, then the transformed quantity

takes values in the unit interval [0, 1] because of the fundamental property of Φ(·), which is the cumulative distribution function (CDF) of the standard normal distribution. This transformation is known as the probability integral transform (Diebold, Gunther, and Tay 1998). Furthermore, Rosenblatt (1952) demonstrates that if the model is correctly specified, the probability integral transform, ut , will be independent and uniformly distributed on the unit interval. The null hypothesis that the model is correctly specified can then be tested in terms of the BDS test applied to ut .

Using monthly observations for the period January 1871 to September 2016 on real equity returns, we find estimation of the model gives the following results. 5

The probability integral transform is given by

in which

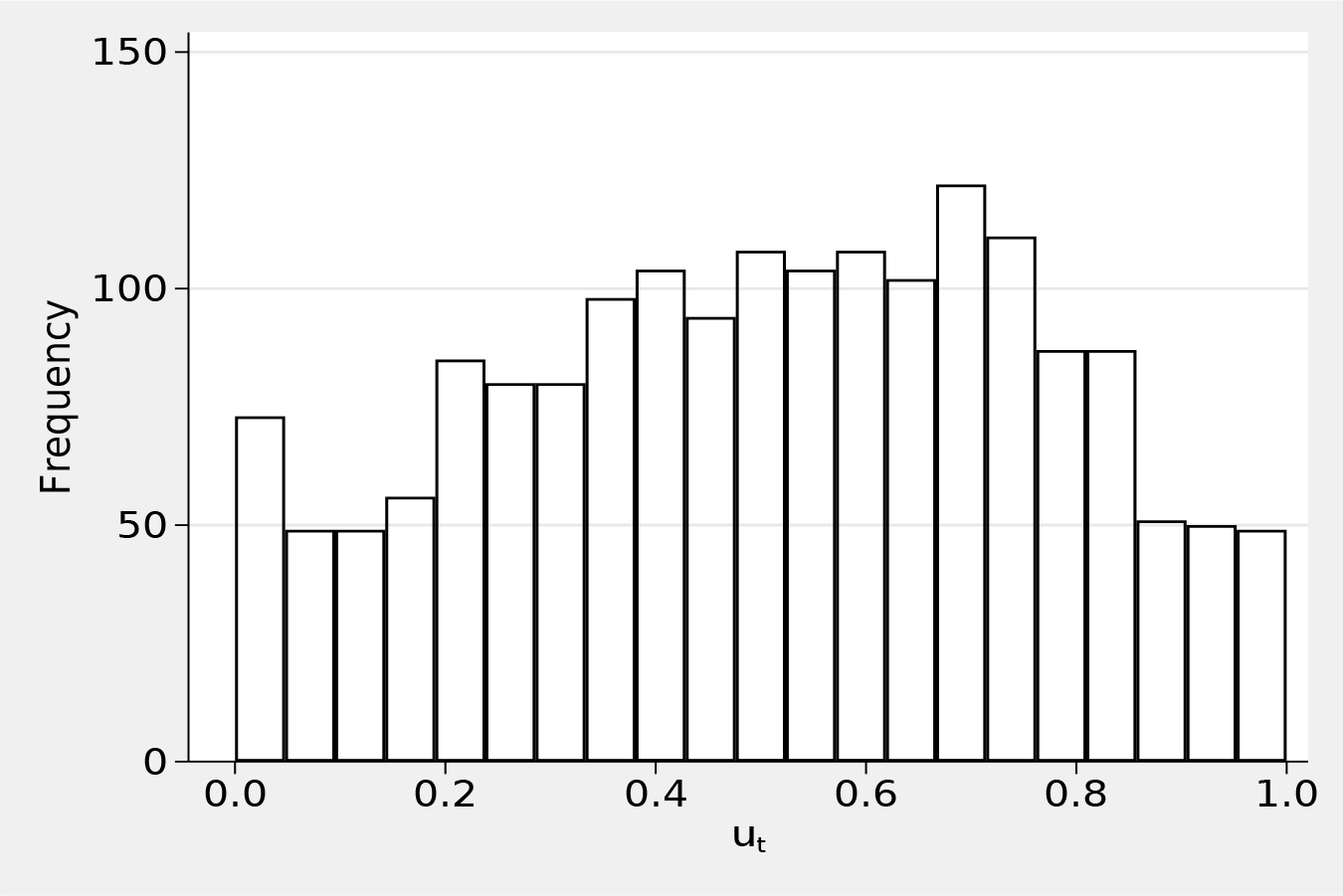

The null hypothesis is rejected, and the conclusion is that the AR(1) model of equity returns is misspecified because ut is not i.i.d. A histogram of the transformed time series, ut , given in figure 3 suggests that the distribution of ut is also not uniform. The interior peak of the distribution of ut and also the peak at zero suggest that equity returns, rt , are not consistent with the specification of an AR(1) model with normally distributed errors.

Probability integral transform applied to the forecast errors of the AR(1) model of United States equity returns, January 1871 to September 2016

An interesting feature of the results is that the BDS statistic is much smaller for the choice of ε based on the fraction of pairs rather than on the standard deviations. This may be suggestive that the fraction of pairs method for choosing ε is more robust to the distribution of the underlying series.

7 Conclusion

In this article, we introduced the

The fact that the procedure does not use compiled code but is written in Mata to run on all machines on which Stata is loaded means that the routine is computationally less efficient when the sample size is large. Consequently, this routine is not suitable for large-scale simulation studies. Two empirical examples demonstrated how the routine is implemented in practice.

8 Programs and supplemental materials

Supplemental Material, sj-zip-1-stj-10.1177_1536867X211025796 - The BDS test of independence

Supplemental Material, sj-zip-1-stj-10.1177_1536867X211025796 for The BDS test of independence by Christopher F. Baum, Stan Hurn and Kenneth Lindsay in The Stata Journal

Footnotes

8 Programs and supplemental materials

To install a snapshot of the corresponding software files as they existed at the time of publication of this article, type

Notes

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.