Abstract

A series of reports on AI misconduct from multiple renowned organizations has triggered a surge of public awareness, calling for more responsible and sustainable use of AI. Noting that socially responsible AI (SRAI) is a new concept in the fields of people analytics (PA) and human resource development (HRD), we examined the subject through an integrative literature review of 75 articles and incorporated three sustainability concepts into AI-enabled PA: corporate social responsibility (CSR); environmental, social, and governance (ESG); and the UN Sustainable Development Goals (SDGs). Through a stakeholder view, we identified major SRAI stakeholders (subjects) and analyzed their essential considerations (objectives), the impediments of AI technology (causes), and the solutions (means). The analysis illuminated key requirements and considerations for advancing AI-enabled PA into SRAI-empowered PA oriented towards sustainability. Moreover, we proposed a holistic framework for understanding SRAI in theory and provided practical implications, recommendations, and future research directions for HRD scholars and professionals.

People analytics (PA) is an emerging frontier of HRD innovation, which emphasizes “decision science” based on data and evidence instead of subjective, unexplainable human intuition or so-called “gut feelings” (Ghatak, 2022; Yoon, 2018, 2021). In HRD, PA has been widely practiced and researched to help organizations in areas such as job analysis, competence analytics, employee sentiment analysis, expertise estimation, applicant sorting, performance management and evaluation, turnover prediction and retention, onboarding and training, coaching, career planning, and many other HRD-related activities (DiClaudio, 2019; Garcia-Arroyo & Osca, 2021; Ratnam et al., 2023; Tursunbayeva et al., 2018; van den Heuvel & Bondarouk, 2017; Shrivastava et al., 2018). However, HRD professionals still need to overcome many challenges in adopting and implementing PA, including safeguarding the interests of its stakeholders (Angrave et al., 2016; Ratnam et al., 2023; Bondarouk et al., 2017). This is especially the case when PA is enabled by artificial intelligence (AI).

In 2015, Amazon used AI to screen job applicants’ resumes and predict the likelihood of a candidate’s success in the company. The AI algorithms were later found to be biased against women because the AI was trained on data from the company’s past ten years of hired employees, who, unsurprisingly, were predominantly men (Dastin, 2018). Similar instances of biased algorithms have been reported in Google and Facebook’s job advertisements, the U.S. criminal justice system, and Princeton Review’s education pricing, which were found to discriminate by age, ethnicity, and socio-economic status (Angwin et al., 2016; Angwin & Larson, 2015; Horwitz, 2021). Furthermore, data leakage has raised serious concerns over AI-enabled PA, given that AI is largely fueled by data. For example, in March 2018, the share price of Facebook fell more than 24 percent in just a week, wiping out over $134 billion in market value, due to the Cambridge Analytica scandal, in which the personal data of millions of Facebook users had been exploited by the political consulting firm for tailored advertising campaigns during the 2016 U.S. presidential election without proper consent (Cabañas et al., 2020). These events highlighted how AI-enabled PA could expose organizations to the risk of distrust among their stakeholders and how corporate irresponsibility can lead to severe consequences for both organizations and society at large. Additionally, the recent news of Elon Musk and leading AI experts signing an open letter calling for a pause on AI (Narayan et al., 2023) also reflects this growing public demand for thorough consideration of the social and ethical implications associated with AI.
This emerging sociotechnical phenomenon underscores the importance of understanding socially responsible AI (SRAI) to ensure AI is leveraged in a manner that aligns with human values, addresses potential risks, and promotes the sustainable development of our society as a whole (Cheng et al., 2021; Tambe et al., 2019).

In the contexts of PA and human resource development (HRD), understanding SRAI is especially important because AI-enabled PA is a high-stakes application of AI and its outcomes could significantly affect people’s lives (Rudin, 2019; Meijerink et al., 2021). However, despite the surging demand, the concept of SRAI is still new and not well understood in HRD. Moreover, existing PA literature has been found to be overly optimistic and to overlook critical risks and ethical concerns (Andersen, 2017; Chowdhury et al., 2022; McCartney & Fu, 2022; Tursunbayeva et al., 2018). As the importance of ethical and responsible use of AI in PA cannot be overstated, this research aims to bridge the knowledge gap in existing literature and provide guidance to HRD scholars and practitioners on implementing SRAI-empowered PA, thereby contributing to organizations’ sustainability efforts.

Conceptualizing SRAI

Despite the increased focus that has motivated scholars to explore the concept of socially responsible AI (SRAI), prior studies are somewhat scattered and fail to present the full scope of the concept. For example, Cheng et al. (2021) defined Socially Responsible AI Algorithms (SRAs) by adapting AI to Carroll’s (1991) Corporate Social Responsibility (CSR) pyramid model, while other scholars approached SRAI by exploring the potential linkage between AI and other sustainability-related concepts such as Environmental, Social, and Governance (ESG) (Sætra, 2021) and the United Nations’ (UN) Sustainable Development Goals (SDGs) (Di Vaio et al., 2020). Recognizing the need for a more comprehensive conceptualization of SRAI, we aim to integrate AI with these three interrelated concepts that are essential to sustainability (CSR, ESG, and SDGs) in order to identify the components of SRAI and foster a more sustainable future driven by SRAI practices in PA.

First, AI is a broad concept that encompasses all technologies and techniques that aim to enable machines to perform tasks that require human intelligence (Russell & Norvig, 2016). Though the development of AI is hardly a recent initiative, its success was not widely recognized until machine learning delivered a key breakthrough and revolutionized the field. Machine learning, which includes techniques such as supervised, unsupervised, and reinforcement learning, achieves AI by enabling machines to learn and generate results through four overarching phases: 1) data phase; 2) modeling phase; 3) results and evaluation phase; and 4) application and implication phase. In the data phase, data is gathered, processed, and input for machine learning modeling. In the modeling phase, the machine learning algorithms are trained, and the trained model is developed from the input data. In the results and evaluation phase, the performance of the model is evaluated, often in terms of error or accuracy. Based on this evaluation, adjustments may be implemented to enhance the model performance before applying the results to decision making. In reinforcement learning, these adjustments can involve fine-tuning the reward system, adjusting exploration-exploitation trade-offs, or refining the learning algorithm to enable the model to learn and improve over time. Finally, in the application and implication phase, applications and decisions are made based on the machine learning results, followed by social implications that could turn out to be either positive or negative for people and society. Given the uncertainties in AI’s potential implications, SRAI becomes crucial in guiding and ensuring responsible AI implementations that steer the implications in a positive direction. This includes maximizing positive impacts, promoting the greater good, and effectively mitigating negative social consequences and potential harms of AI applications.
Therefore, before further exploring SRAI, it is instrumental to understand sustainability and seek its synergy with AI to fully grasp and harness the concept of SRAI.
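To make the four machine learning phases described above more concrete, the following is a minimal sketch in Python. It is an illustration only: the toy data, the threshold-based "model", and all function names are hypothetical and are not drawn from the reviewed literature. For brevity the model is evaluated on the same data it was trained on, whereas a real evaluation would normally use held-out data.

```python
# Toy sketch of the four machine learning phases.
# All data, names, and the threshold "model" are hypothetical illustrations.


def data_phase():
    """Data phase: gather raw (feature, label) pairs and rescale the feature."""
    raw = [(1.0, 0), (2.0, 0), (3.0, 0), (6.0, 1), (7.0, 1), (8.0, 1)]
    max_x = max(x for x, _ in raw)
    return [(x / max_x, y) for x, y in raw]


def modeling_phase(data):
    """Modeling phase: learn a decision threshold midway between the class means."""
    class0 = [x for x, y in data if y == 0]
    class1 = [x for x, y in data if y == 1]
    mean0 = sum(class0) / len(class0)
    mean1 = sum(class1) / len(class1)
    return (mean0 + mean1) / 2


def evaluation_phase(threshold, data):
    """Results and evaluation phase: accuracy of the thresholded predictions.

    Note: evaluated on the training data here only to keep the sketch short.
    """
    correct = sum(1 for x, y in data if int(x >= threshold) == y)
    return correct / len(data)


def application_phase(threshold, x):
    """Application phase: turn a prediction into a decision a human can review."""
    return "refer to human reviewer" if x >= threshold else "no action"


if __name__ == "__main__":
    data = data_phase()
    threshold = modeling_phase(data)
    accuracy = evaluation_phase(threshold, data)
    print(f"threshold={threshold:.4f}, accuracy={accuracy:.2f}")
    print(application_phase(threshold, 0.9))
```

The implication phase has no function of its own in this sketch because it concerns the downstream social effects of the decisions, which cannot be captured by the code itself.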

The idea of sustainability has recently gained renewed momentum and become “a hot topic” in business since its inception by the Brundtland Commission of the United Nations in the 1980s. It emphasizes the impact of organizations on the social and environmental spheres and assesses organizational performance in line with sustainable development goals (Kramar, 2022). Organizations therefore ought to be responsible not only for their bottom line but also for those who contribute to the bottom line, namely, the environment, community, and society. The success of a company is no longer judged only by its short-term profits, but by its projected performance for future generations. Embracing the essence of sustainability, the role of SRAI is not limited to promoting economic growth; it also aims to be accepted as an ethical means by all stakeholders, including owner, designer, user, manufacturer, environment, and society (Zhao, 2018). Moreover, amid growing calls for stakeholder awareness of social good and for organizations to voluntarily add contractual clauses regulating their AI-aided production and programs (Krkač, 2019), the closely related organizational profiles of sustainability, which include CSR, ESG, and SDGs, play a pivotal role in shaping the framework and understanding of SRAI.

The concept of CSR started in the 1920s and was defined by Frederick (1960) as “a public posture towards society’s economic and human resources and a willingness to see that those resources are used for broad social ends and not simply for the narrowly circumscribed interests of private persons and firms” (p.60). It was further defined by Van der Wiele et al. (2001) as “the obligation of the firm to use its resources in ways to benefit society, through committed participation as a member of society, taking into account the society at large and improving welfare of society at large independent of direct gains of the company” (p. 288). The latter definition linked the concept of CSR more closely to another hot topic in the literature of corporate finance: environmental, social, and governance (ESG) investment. The recent trend of ESG investment has drawn increasing attention and interest from corporate managers and investors (Dathe et al., 2022; Van der Wiele et al., 2001). ESG differs from CSR in that it explicitly adds governance and environmental considerations (Gillan et al., 2021, p.2). CSR, on the other hand, delves deeper into the social dimension, covering legal, ethical, and philanthropic responsibilities and compelling corporations to strive towards becoming better corporate citizens (Carroll, 1991). Moreover, the UN Sustainable Development Goals (SDGs) constitute a comprehensive framework of objectives that member countries of the United Nations have pledged to achieve by 2030, with the aim of fostering global sustainability on multiple fronts (United Nations, 2022). In the UN 2030 Agenda, 17 SDGs are outlined to address three aspects of sustainability: economic (SDG #1, 2, 3, 8, 9), social (SDG #4, 5, 10, 11, 16, 17), and environmental (SDG #6, 7, 12, 13, 14, 15) (Kostoska & Kocarev, 2019; Rodenburg et al., 2021).
Taken together, the ideas of CSR, ESG, and SDGs present a natural fit, with overlapping components such as economic, social, and environmental aspects, and ultimately they all target sustainability. Moreover, the social component is further detailed in CSR, including legal, ethical, and philanthropic levels in a hierarchical order. Furthermore, by incorporating AI into the CSR/ESG/SDGs profile, we formulate SRAI with five key requirements and considerations towards sustainability: 1) economic; 2) legal; 3) ethical; 4) philanthropic; and 5) environmental. Accordingly, we define SRAI as an AI system that is responsible for the impacts of its decisions and activities on society and the environment and contributes to sustainable development by fulfilling stakeholders’ economic, legal, ethical, philanthropic, and environmental considerations and requirements. This inclusive definition of SRAI serves as a guiding framework for our review, ensuring a comprehensive analysis of AI’s impact on social responsibility and sustainable development.

Research Questions

Our inclusive SRAI definition provides the key components of SRAI and helps us understand how SRAI can contribute to sustainable development. Given the context-specificity of AI as well as the fact that SRAI is still a foreign concept in HRD and PA, we adopt Cheng et al.’s (2021) queries of socially responsible AI algorithms (SRAs) to operationalize the concept of SRAI in the context of PA in a comprehensive fashion. The queries that Cheng et al. (2021) proposed to examine whether an AI implementation meets the requirements of SRAI contain four essentials: subjects, causes, means, and objectives. First, Subjects refer to the stakeholders affected by AI implementations, such as organizations, supervisors, and current or potential employees. Second, Objectives reflect the economic, legal, ethical, philanthropic, and environmental values expected by the stakeholders and society as a whole. Third, Causes are the impediments of current AI technology that create societal problems, such as measuring errors, bias, and privacy violations. Finally, Means are the technical and non-technical methods or interventions that can prevent, inform, or mitigate potential risks and harm to the stakeholders and maximize long-term interests. Guided by the queries of Cheng et al. (2021) about SRAs, we developed four research questions:

RQ1. Who are the major stakeholders when implementing AI in PA? (Subjects)

RQ2. What are the economic, legal, ethical, philanthropic, and environmental considerations and requirements when implementing AI in PA? (Objectives)

RQ3. What are the impediments of AI that may cause AI-enabled PA to fail the economic, legal, ethical, philanthropic, and environmental considerations and requirements? (Causes)

RQ4. What are the solutions that can address the identified causes and help AI-enabled PA meet the economic, legal, ethical, philanthropic, and environmental requirements by institutionalizing SRAI in PA? (Means)

Method

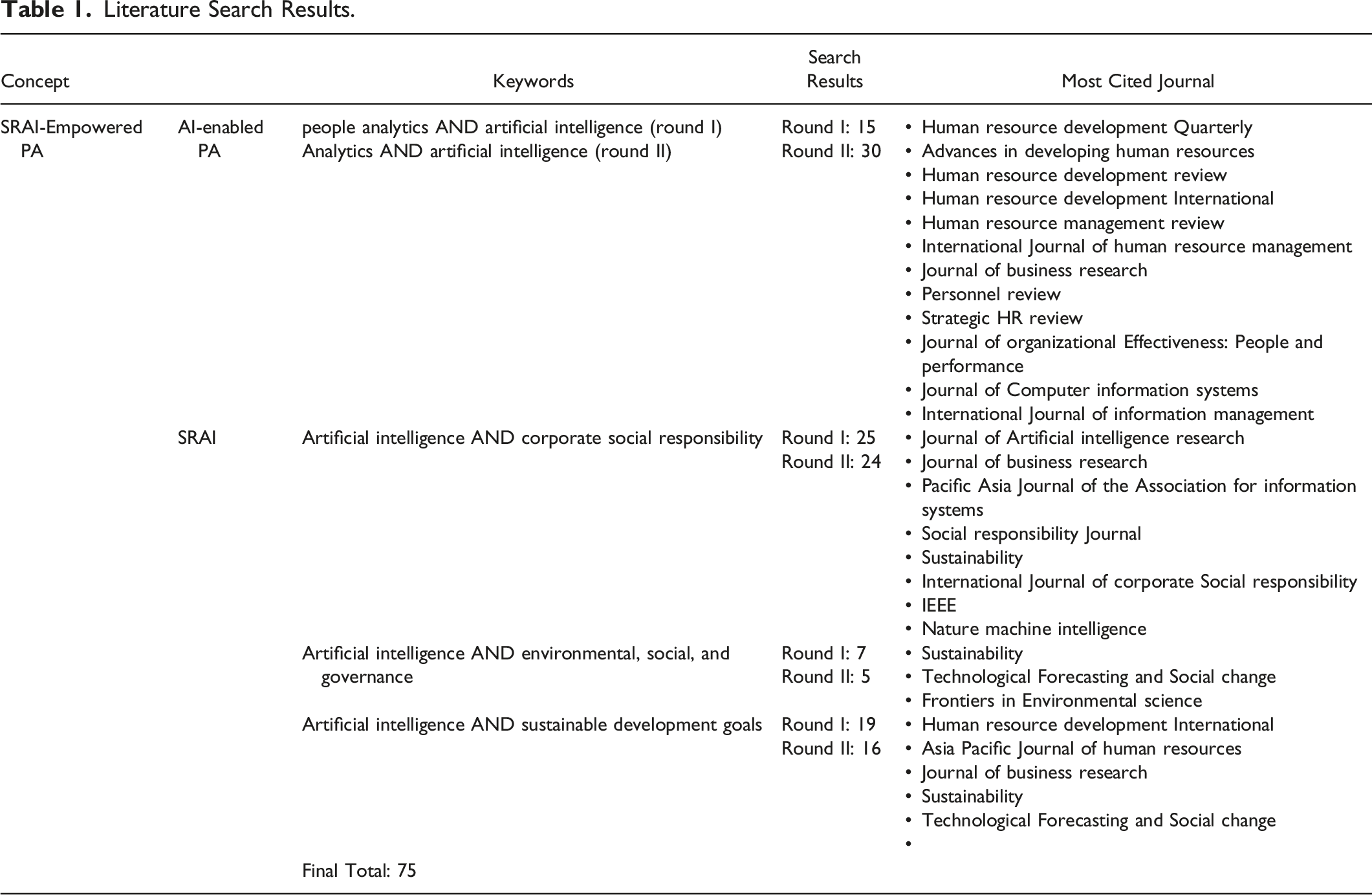

Literature Search Results.

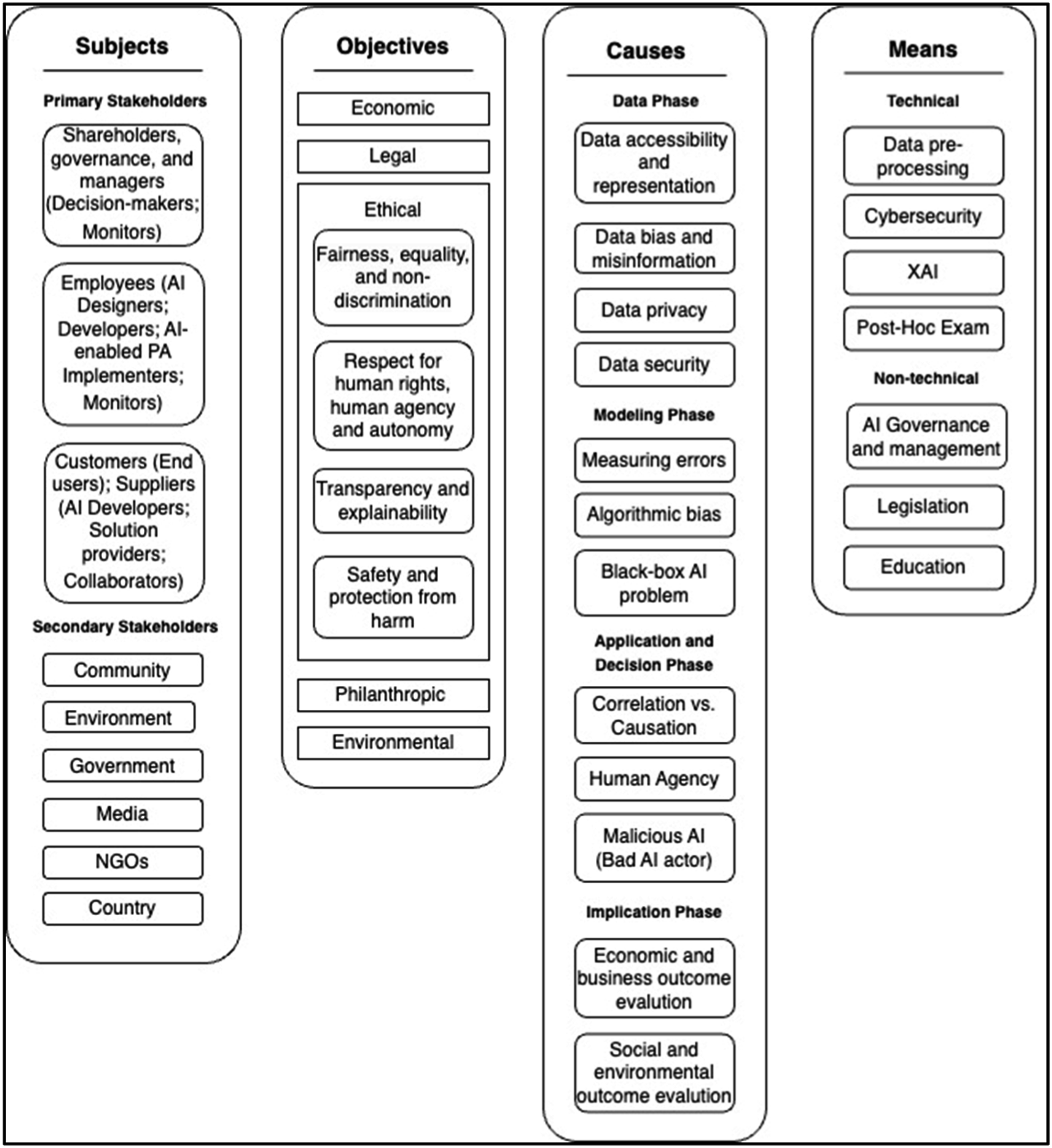

Finally, we conducted a more in-depth review and analyzed the literature using NVivo. The initial analysis was conducted through inductive coding to explore and identify the most salient concepts emerging from the articles without being constrained by any preconceived themes or theories. Next, we conducted deductive coding to classify the extracted concepts and themes into subjects, objectives, causes, and means. A coding protocol with a set of criteria for defining codes and themes was shared among the researchers to ensure consistency. The protocol also served as a reference point for discussions and decision-making throughout the research process. The authors met regularly, engaging in extensive discussions to ensure a comprehensive, consistent, and reliable interpretation of the data. When disagreements arose, the researchers returned to the original text(s) to re-interpret them using different codes (deductive) and discussed further until reaching a consensus (inductive). Figure 1 shows the final codes and themes in the domains of subjects, objectives, causes, and means in addressing the research questions.

Thematic map for the analysis of SRAI-empowered PA.

Findings

The Subjects (Stakeholders) (RQ1)

The subjects of SRAI-empowered PA refer to the stakeholders, including individuals or groups, who have an interest in or are impacted by the actions and decisions of AI-enabled PA in various aspects such as economic, legal, ethical, philanthropic, or environmental concerns. Stakeholder theory, as discussed by Snider et al. (2003), serves as a useful framework for evaluating corporate performance. Stakeholder-based HRD is also advocated by Baek and Kim (2014). To identify and categorize organizations’ stakeholders, Coombs (1998) used criteria such as “interest, right, claim, or ownership in an organization” (p. 289). Commonly considered relevant stakeholders for organizations include employers/owners (top executives and decision-makers), employees, customers, suppliers, and the community.

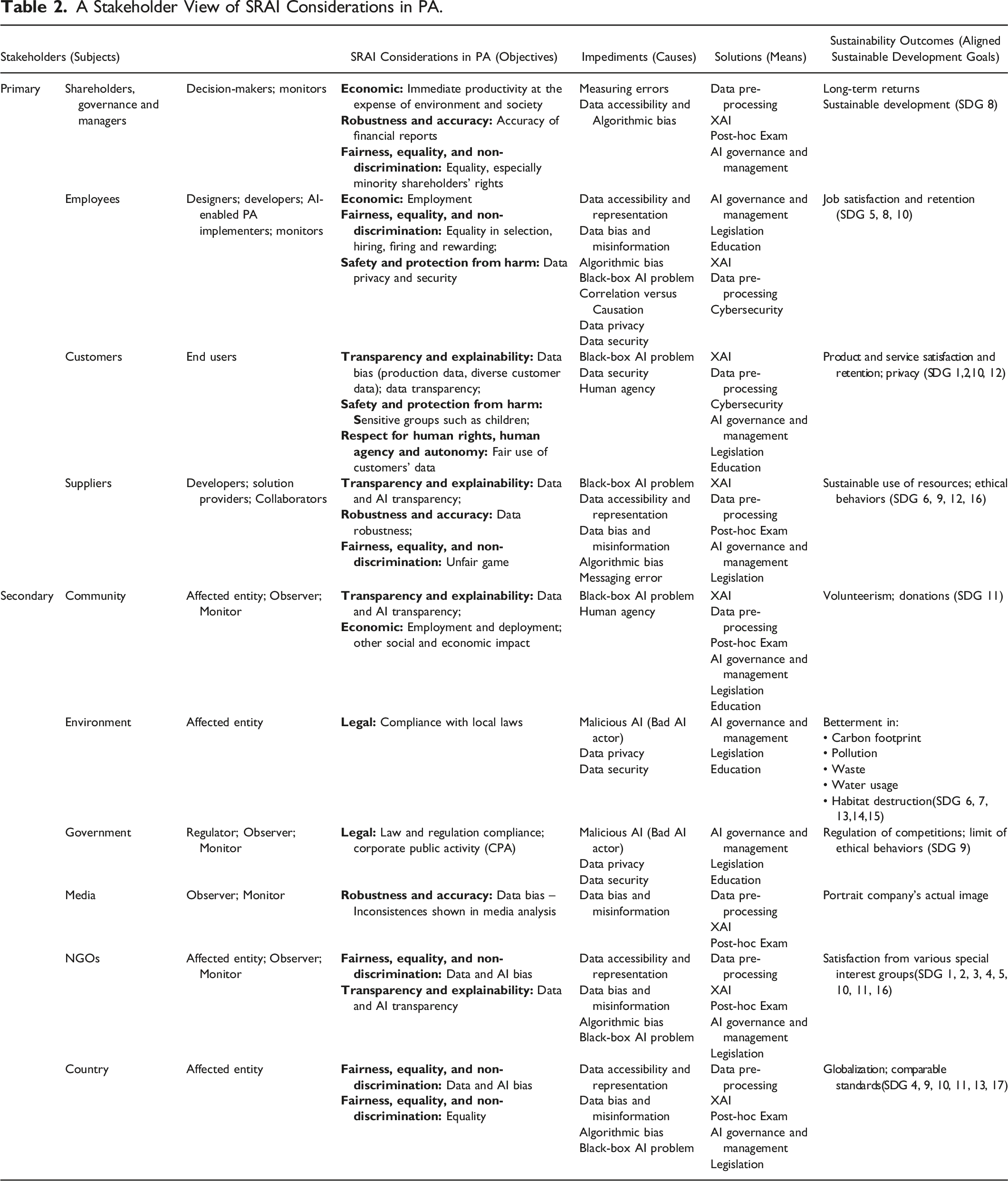

A Stakeholder View of SRAI Considerations in PA.

The Objectives (RQ2)

The objectives are the stakeholders’ considerations and requirements of AI-enabled PA in the economic, legal, ethical, philanthropic, or environmental respects. First, at the economic level, AI is expected to be functional and robust in solving problems and making profits. The economic requirements of AI-enabled PA are rooted in two key concepts: robustness and accuracy (Cheng et al., 2021; Tursunbayeva et al., 2021). Second, at the legal level, being lawful is another basic requirement for all AI applications. In the context of PA, AI-enabled PA is most subject to the US Constitution and Bill of Rights; data regulations such as the General Data Protection Regulation (GDPR) (McCartney & Fu, 2022; Sun et al., 2018; Tursunbayeva et al., 2018, 2021); the US Employment Act (Kim, 2017); and the Americans with Disabilities Act (Anderson, 2017). Third, at the ethical level, the most frequently discussed considerations and requirements in the literature include 1) fairness, equality, and non-discrimination; 2) respect for human rights, human agency and autonomy; 3) transparency and explainability; and 4) safety and protection from harm.

Fairness, Equality, and Non-Discrimination

Fairness, equality, and non-discrimination are key principles for SRAI. The concept involves ensuring that AI systems do not generate biased outcomes and that they avoid discrimination and stigmatization (Akter et al., 2021; Cheng et al., 2021; Jobin et al., 2019; Tursunbayeva et al., 2021).

Respect for Human Rights, Human Agency, and Autonomy

Within the realm of PA, this concept can be broken down into three layers: employee data privacy and consent (Tursunbayeva et al., 2018), limitations on managerial agency in decision-making (Tursunbayeva et al., 2021), and the potential for manipulation and behavior-shaping by AI. For instance, concerns have been raised about excessive surveillance and employee monitoring through AI-enabled PA, especially with the rise of work from home during the pandemic (Booth, 2019; Wakabayashi, 2018; Jones et al., 2019; Schwartz, 2020).

Transparency and Explainability

Transparency and explainability are considered to be among the most critical considerations for AI-enabled PA (Jobin et al., 2019; Tursunbayeva et al., 2021). The principle refers to the ability of AI to explain how it reaches its decisions in a way that lets humans understand what the AI is doing and why. Transparency and explainability are crucial for identifying and eliminating bias and discrimination, building user trust, and complying with regulations such as the GDPR (EU, 2016/679; Seo et al., 2022).

Safety and Protection from Harm

This principle reflects the requirement that AI-enabled PA must perform safely and securely, avoiding malicious use as well as preventing unintentional harm and adverse impacts, even when intentions are good (Jobin et al., 2019; Varshney & Alemzadeh, 2017). This can include protecting employee data or the AI model from leakage, hacking, and other adversarial attacks. In addition, it is important to consider the potential unintended consequences of the AI-enabled PA’s decisions.

Additionally, at the philanthropic level, AI-enabled PA has great potential to serve as a good AI citizen in enhancing people’s quality of life by addressing poverty, hunger, and societal well-being (Varshney, 2019). Finally, at the environmental level, AI-enabled PA could help to promote environmental well-being and sustainability by mitigating climate change or enhancing the efficient and effective use of green energy and natural resources (Cheng et al., 2021; Varshney, 2019).

The Causes (RQ3)

As we have seen in the examples of biased algorithms mentioned earlier, AI misconduct is usually complex and can rarely be attributed to a single cause. In many cases, the underlying problems arise along the pipeline, across different phases of the machine learning process. Therefore, here we discuss why AI may fail SRAI requirements by examining the causes that could occur in the data, modeling, application and decision, and implication phases of AI.

Data Phase

We identified four common causes that could occur during the data phase: 1) data accessibility and representation; 2) data bias and misinformation; 3) data privacy; and 4) data security. First, lack of data accessibility has been identified as one of the most critical issues in PA (Andersen, 2017; Ratnam & Devi, 2023; van der Togt & Rasmussen, 2017), and this is especially problematic for AI-enabled PA, as AI is largely fueled by large amounts of data. Second, low-quality data, including biased data and misinformation, can cause AI to make wrong decisions that replicate the biases and mistakes present in the training data (Cheng et al., 2021; Dastin, 2018; Tursunbayeva et al., 2021). Lastly, concerns about data privacy and security are frequently raised regarding excessive or unauthorized collection and use of personal data (Beigi & Liu, 2020; Boulemtafes et al., 2020; Jobin et al., 2019).

Modeling Phase

We identified three common causes that could occur during the modeling phase: 1) measuring errors; 2) algorithmic bias; and 3) black-box AI problems. First, measuring errors refer to poor model performance in machine learning. For example, a poor image recognition model by Google was reported to mislabel black people as “gorillas” (Cheng et al., 2021). Second, algorithmic bias refers to systematic biases generated by the algorithm that can magnify, operationalize, and institutionalize biases, and that persist even after new data is introduced to retrain the model (Akter et al., 2021; Baeza-Yates, 2018; Getoor, 2019). Third, the black-box AI problem is closely related to the expectation for AI transparency and explainability. It reflects the issue that the inner workings of AI models are difficult to interpret, understand, or explain, which makes it challenging to ensure the accountability of AI decisions (Cheng et al., 2021; Guerra-Gomez, 2018; Kim, 2022).

Application and Decision-Making Phase

We identified three common causes that could occur during the application phase: 1) correlation versus causation; 2) human agency; and 3) malicious use of AI (bad AI actor). First, when the PA team misinterprets AI’s correlation results as causation, the resulting decisions can be misguided. For example, scholars have criticized many organizations for misusing socioeconomic status (SES) variables and psychometric and personality test results in hiring and promotion decisions without evident causal reasoning (O’Neil, 2018). Second, as we discussed earlier, overly relying on AI decisions could reduce the role of management in exercising human agency in decision-making. Additionally, the use of AI for shaping employee behavior can raise ethical concerns (Jones et al., 2019; Wakabayashi, 2018). Finally, the malicious use of AI, also known as a “bad AI actor,” refers to the use of AI for malicious purposes such as cyberattacks, misinformation campaigns, and autonomous weapons (Jobin et al., 2019; Knight, 2020).
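The correlation-versus-causation problem can be illustrated with a small, deliberately constructed example. In the sketch below, a hypothetical test score and a hypothetical performance rating are strongly correlated when all employees are pooled, yet the correlation vanishes within each group, which suggests that group membership (a confounder), not the test score, drives the pooled pattern. The data, the variable names, and the grouping are all invented for illustration.

```python
from math import sqrt

# Hypothetical records: (group, test_score, performance_rating).
# The values are constructed so that the pooled correlation is high
# while the correlation inside each group is exactly zero.
RECORDS = [
    ("A", 1, 1), ("A", 2, 1), ("A", 1, 2), ("A", 2, 2),
    ("B", 5, 5), ("B", 6, 5), ("B", 5, 6), ("B", 6, 6),
]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def pooled_correlation(records):
    """Correlation computed over all records, ignoring group membership."""
    return pearson([r[1] for r in records], [r[2] for r in records])


def within_group_correlations(records):
    """Correlation computed separately inside each group."""
    groups = sorted({r[0] for r in records})
    return {
        g: pearson(
            [r[1] for r in records if r[0] == g],
            [r[2] for r in records if r[0] == g],
        )
        for g in groups
    }


if __name__ == "__main__":
    print("pooled:", round(pooled_correlation(RECORDS), 3))
    print("within groups:", within_group_correlations(RECORDS))
```

A PA team that looked only at the pooled figure might conclude that the test score predicts performance, when in this constructed case the score carries no information once group membership is taken into account.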

Implication Phase

Although most research evaluates PA’s success from economic and business perspectives, it is important to consider the long-term implications of AI-enabled PA (Cheng et al., 2021; Di Vaio et al., 2020; Peeters et al., 2020; Schiemann et al., 2018; Sætra, 2021). This requires HR scientists to identify possible unforeseen implications and precisely specify the goals with domain expertise (Ratnam & Devi, 2023; Yoon, 2021).

The Means (RQ4)

Based on the literature, we identified the following technical and non-technical means to address the causes and facilitate SRAI-empowered PA.

Technical Means

There are four technical means: data pre-processing, cybersecurity, explainable AI (XAI), and post-hoc examination.

Data Pre-Processing

Data pre-processing involves preparing and cleaning data to ensure that the data is accurate, complete, and unbiased. In many cases, the organic data can contain biases and inaccuracies that reflect social and cultural norms and beliefs. This must be addressed before the given dataset is fed into an AI-enabled PA system. Basic data pre-processing techniques include data cleaning, normalization, and feature engineering. Moreover, some researchers suggested that AI-enabled PA should use data aggregation methods or remove identifiable information to ensure data privacy and reduce the possible bias embedded (Bellamy et al., 2019; Boulemtafes et al., 2020).
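As a rough illustration of the two privacy-oriented pre-processing steps mentioned above (removing identifiable information and aggregating data), consider the following sketch. The field names, the sample records, and the minimum group size used to suppress small groups are assumptions made for this example; they are not prescribed by the cited studies. Removing direct identifiers alone does not guarantee anonymity, since combinations of remaining fields may still identify a person.

```python
from collections import defaultdict

# Hypothetical set of fields treated as direct identifiers.
DIRECT_IDENTIFIERS = {"name", "email", "employee_id"}


def remove_identifiers(records):
    """Return copies of the records without the direct-identifier fields."""
    return [
        {key: value for key, value in record.items() if key not in DIRECT_IDENTIFIERS}
        for record in records
    ]


def aggregate_by_group(records, group_key, value_key, min_group_size=3):
    """Average value_key per group, suppressing groups smaller than min_group_size.

    The suppression threshold is an assumption of this sketch: very small
    groups are dropped because an average over one or two people can
    effectively reveal individual values.
    """
    groups = defaultdict(list)
    for record in records:
        groups[record[group_key]].append(record[value_key])
    return {
        group: sum(values) / len(values)
        for group, values in groups.items()
        if len(values) >= min_group_size
    }


if __name__ == "__main__":
    sample = [
        {"name": "P1", "email": "p1@example.com", "employee_id": 1,
         "department": "Sales", "engagement": 3},
        {"name": "P2", "email": "p2@example.com", "employee_id": 2,
         "department": "Sales", "engagement": 4},
        {"name": "P3", "email": "p3@example.com", "employee_id": 3,
         "department": "Sales", "engagement": 5},
        {"name": "P4", "email": "p4@example.com", "employee_id": 4,
         "department": "Legal", "engagement": 2},
    ]
    cleaned = remove_identifiers(sample)
    print(cleaned[0])
    print(aggregate_by_group(cleaned, "department", "engagement"))
```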

Cybersecurity

Cybersecurity is critical throughout the entire process of AI-enabled PA to secure the safety of the data as well as the AI-enabled PA system against cyberattacks and malicious usage such as cyberbullying (Cheng et al., 2021). The PA team must take measures to detect, inform, and prevent potential cyberattacks and cyberbullying. This can be achieved by establishing clear guidelines for who can access and use the data, where the data should be stored, and when the data should be deleted (Boulemtafes et al., 2020; Jacobs, 2017).

Explainable AI

Explainable AI (XAI) refers to the type of AI that can provide clear and understandable explanations for the outcomes it produces. The use of XAI, as opposed to black-box AI, is highly encouraged in AI-enabled PA, given that explainability is extremely important when AI is used for high-stakes applications such as PA, where the outcomes could significantly affect people’s lives (Rudin, 2019; Seo et al., 2022). Using XAI can enable stakeholders to better understand the decisions being made by AI-enabled PA and facilitate their involvement in the decision-making process, ultimately improving the trust and credibility of AI-enabled PA (Guerra-Gomez, 2018).
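One simple form of explainability is a model whose output can be decomposed into per-feature contributions that a stakeholder can read directly. The sketch below uses a hypothetical additive scoring model; the feature names, weights, and intercept are invented for illustration and do not represent any system discussed in the reviewed literature. It is only one of many possible approaches to XAI.

```python
# Hypothetical additive scoring model: score = intercept + sum(weight * feature).
# Weights, intercept, and feature names are illustrative assumptions.
WEIGHTS = {
    "years_experience": 0.5,
    "certifications": 1.0,
    "training_hours": 0.1,
}
INTERCEPT = 1.0


def score(candidate):
    """Compute the overall score for a candidate (a dict of feature values)."""
    return INTERCEPT + sum(WEIGHTS[name] * candidate[name] for name in WEIGHTS)


def explain(candidate):
    """Return (feature, contribution) pairs, largest absolute contribution first."""
    contributions = {name: WEIGHTS[name] * candidate[name] for name in WEIGHTS}
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)


if __name__ == "__main__":
    candidate = {"years_experience": 4, "certifications": 1, "training_hours": 5}
    print("score:", score(candidate))
    for feature, contribution in explain(candidate):
        print(f"{feature}: {contribution:+.2f}")
```

Because the contributions add up to the score minus the intercept, a reviewer can see exactly how much each input moved the result, which is the property that black-box models lack.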

Post-Hoc Examination

Post-hoc examination involves evaluating the outcomes produced by an AI-enabled PA system after they have been generated. By using techniques such as sensitivity analysis and group or subgroup fairness evaluation, post-hoc examination can help to ensure that AI-enabled PA systems are fair, accurate, and unbiased (Shu et al., 2018, 2019).
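A group fairness evaluation of the kind mentioned above can be sketched as follows. The example compares selection rates between two hypothetical groups and reports two common summary measures: the difference between the highest and lowest selection rates, and their ratio. The outcome data and group labels are invented, and the choice of these two measures is an assumption of this sketch rather than the method of the cited authors. In US employment contexts, a ratio below 0.8 is often treated as a signal for closer review (the "four-fifths" heuristic); that threshold is mentioned here only as context.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Compute the share of selected cases per group.

    outcomes: iterable of (group, selected) pairs, where selected is 0 or 1.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        selected[group] += was_selected
    return {group: selected[group] / totals[group] for group in totals}


def parity_difference(rates):
    """Difference between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


def impact_ratio(rates):
    """Ratio of the lowest to the highest group selection rate."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so rates are equal
    return min(rates.values()) / highest


if __name__ == "__main__":
    # Hypothetical screening outcomes for two groups of five applicants each.
    outcomes = [("A", 1), ("A", 1), ("A", 1), ("A", 1), ("A", 0),
                ("B", 1), ("B", 1), ("B", 0), ("B", 0), ("B", 0)]
    rates = selection_rates(outcomes)
    print("rates:", rates)
    print("difference:", parity_difference(rates))
    print("ratio:", impact_ratio(rates))
```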

Non-Technical Means

Finally, we identified three non-technical means that can help to support the successful implementation of SRAI-empowered PA: AI governance and management, legislation, and education.

AI Governance and Management

AI governance and management refer to the development of policies, regulations, and procedures for the responsible and ethical use of AI-enabled PA (Cheng et al., 2021). Moreover, Ratnam and Devi (2023) identified lack of management buy-in as the most critical impediment to organizations adopting PA successfully. This highlights the importance of involving top management throughout the life cycle of AI-enabled PA.

Legislation

Legislation refers to the process of establishing and enacting laws and regulations that govern the use of AI. Through legislation, stakeholders of AI-enabled PA are required to comply with ethical, legal, and societal norms, which can help mitigate risks and prevent potential harm to individuals and groups affected by AI. Legislation can also provide accountability mechanisms for resolving grievances related to the use of AI-enabled PA (Cheng et al., 2021). The GDPR is a good example of legislation that governs the collection, processing, and storage of personal data within the European Union (EU).

Education

Education involves educating stakeholders on the potential benefits and risks of AI-enabled PA. Education should cover not only the technical aspects of AI-enabled PA but also its ethical and social implications. Training programs should be developed for AI-enabled PA users, policymakers, and other relevant parties to ensure that they have the necessary knowledge and skills to use AI-enabled PA responsibly and ethically. Additionally, public awareness campaigns can help to raise awareness about AI-enabled PA and promote its acceptance among the general public (High-Level Expert Group on Artificial Intelligence, 2019).

Summary: A Stakeholder View for SRAI in PA

Table 2 lists the primary and secondary stakeholders involved in AI-enabled PA, their respective roles in the AI context, the aligned sustainable goals and SRAI considerations. Primary stakeholders have the ability to directly affect their organizations while secondary stakeholders indirectly influence their organizations (Parboteeah & Cullen, 2019).

Primary Stakeholders’ View

The primary stakeholders identified from the literature are shareholders, governance, and managers; employees; customers; and suppliers. Shareholders, governance, and managers, who focus on long-term returns and sustainable development, are the decision makers and monitors in the AI context (Ghatak, 2022; Jobin et al., 2019; Parboteeah & Cullen, 2019; Ratnam & Devi, 2023). Their major SRAI concerns include the accuracy of financial reporting, immediate productivity without hurting the environment and society, and equal rights of minority shareholders.

Employees are involved as AI designers, developers, implementers, and monitors. Organizations need to ensure their job satisfaction and retain good employees in order to remain sustainable. SRAI considerations related to employees include equality in selection, hiring, firing, and rewarding; data bias; and data privacy and security, for which companies are ill prepared (Calvard & Jeske, 2018; Upadhyay & Khandelwal, 2018). The privacy and protection of data might be compromised in training data, as AI grants greater power to those who have control over data access (Stahl et al., 2023). Organizations need to avoid AI-led jobless growth and wage freezes (Thite, 2022). When organizations use hiring algorithms to shortlist job applicants without managerial insight, employment discrimination can result. This is because algorithms may exhibit a preference for a specific ethnic group that is perceived to perform better, while disregarding other groups (Sholtz, 2018).

Customers are the end users in the AI context. They can be beneficiaries or victims of AI products. To remain sustainable, companies need to make sure their customers are satisfied with their products and that their products are safe to use (Parboteeah & Cullen, 2019). For instance, AI-enabled robots must not pose any health, safety, or security risks, such as the fatalities that have occurred with self-driving cars. Customers’ privacy needs to be protected as well. Specific SRAI concerns regarding customers are how to address issues of data privacy and data bias, how to ensure data transparency, how to promote fair use of customers’ data, and how to protect sensitive and disadvantaged groups such as children (Ratnam & Devi, 2023).

The last major stakeholders are suppliers. Sustainable use of resources and ethical behavior are expected from suppliers, who can join other major stakeholders in developing AI-enabled products, programs, and systems. They can be consulted as solution providers. Organizations need to choose socially responsible suppliers in their operations and expect sustainable use of the environment and resources from their suppliers as well (Parboteeah & Cullen, 2019). Suppliers should also prioritize data transparency in their operations, provide robust data, and avoid unfair practices such as the use of sweatshops, labor exploitation, and poor working conditions.

Secondary Stakeholders’ View

Community, environment, government, media, NGOs, and country are considered secondary stakeholders with various interests. In the AI context, secondary stakeholders are affected by AI solutions more than other stakeholder groups, while they can influence decisions made by AI-enabled PA by observing how it is implemented and regulating it. First, the community relies on volunteerism and donations to sustain itself. The SRAI concerns for this stakeholder are mostly data transparency, employment and deployment impact, and other social and economic factors in companies’ AI-assisted processes (Stahl et al., 2023). Second, the environment is the most passive stakeholder of all. Improvements in carbon footprint, pollution, waste, water usage, and habitat destruction are needed to sustain it. The major SRAI concern is whether organizations’ AI-assisted decisions comply with local laws on preventing or reducing pollution and noise and on environmental protection (Kim, 2022). Third, government is the only secondary stakeholder that has the power to limit the impact of AI-enabled PA by regulating competition and enforcing ethical behavior. Therefore, compliance with laws and regulations is a major SRAI concern for organizations. Another important SRAI concern is corporate political activity (CPA), which includes “non-market activities such as lobbying, campaign contributions, and operation of a government relations office, commenting on proposed regulation and contributions to industry trade groups” (Parboteeah & Cullen, 2019, p. 362). The government and policymakers should work towards establishing and enacting laws and regulations that govern the responsible and sustainable use of AI in PA.

Fourth, media include newspapers, television, and the internet. Media analysis is the recommended company practice for this stakeholder: regularly scanning the media to discover what is being said about the company, in order to find and address discrepancies between how the company is portrayed and what it actually is. Data accuracy is the major SRAI concern. Companies should not manipulate data to influence how the media and the public view them. Instead, an ethical business builds a strong relationship with the media by reinforcing strong company messages, addressing negative information, appreciating mixed messages, and understanding missing messages (Parboteeah & Cullen, 2019). The media also play a crucial role in educating the public about SRAI by disseminating accurate and unbiased information about the ethical, legal, and societal implications of AI-enabled PA through public awareness campaigns. These initiatives aim to demystify the technology and clarify its benefits and risks for the general public. Furthermore, the media should facilitate discussions and debates among experts, policymakers, and the public to address concerns and identify best practices for the responsible and ethical use of SRAI-empowered PA. In this way, organizations can gain insights into how to leverage AI in PA responsibly and ethically.

Fifth, non-governmental organizations (NGOs) include “special interest groups, activity groups, social movement organizations, charities, religious groups, protest groups, and other non-profit groups” (Fassin, 2009, p. 503). Because of the diverse and complex composition of NGOs, it is recommended that organizations take the following measures to work ethically with them: identify a particular NGO stakeholder, prioritize services, facilitate institutionalizing ethical behaviors, visualize and map actions, and engage stakeholders (Parboteeah & Cullen, 2019).

Finally, to achieve the country’s sustainable development, it is essential to foster globalization and implement comparable standards across countries. To promote equality among countries, particularly in the treatment of disadvantaged developing countries, it is crucial to actively prevent data bias in AI-enabled PA (Cowls et al., 2021; Stahl et al., 2023). This can be achieved by actively promoting cross-border collaborations, sharing best practices, and establishing specific guidelines and frameworks to ensure the effective adoption of SRAI practices globally.

Discussion

This literature review identified the key considerations and requirements for implementing SRAI-empowered PA towards sustainability in the domains of subjects (stakeholders), objectives, causes, and means. As an emerging concept, SRAI has not been explored in depth in either HRD or PA. This paper contributes to our understanding of SRAI and provides insights into the key considerations for implementing SRAI-empowered PA. It also offers guidance on effectively addressing these considerations in practice. In the following sections, we discuss theoretical and practical implications for HRD, as well as the limitations of this review paper and recommendations for future research.

Implications for Theory

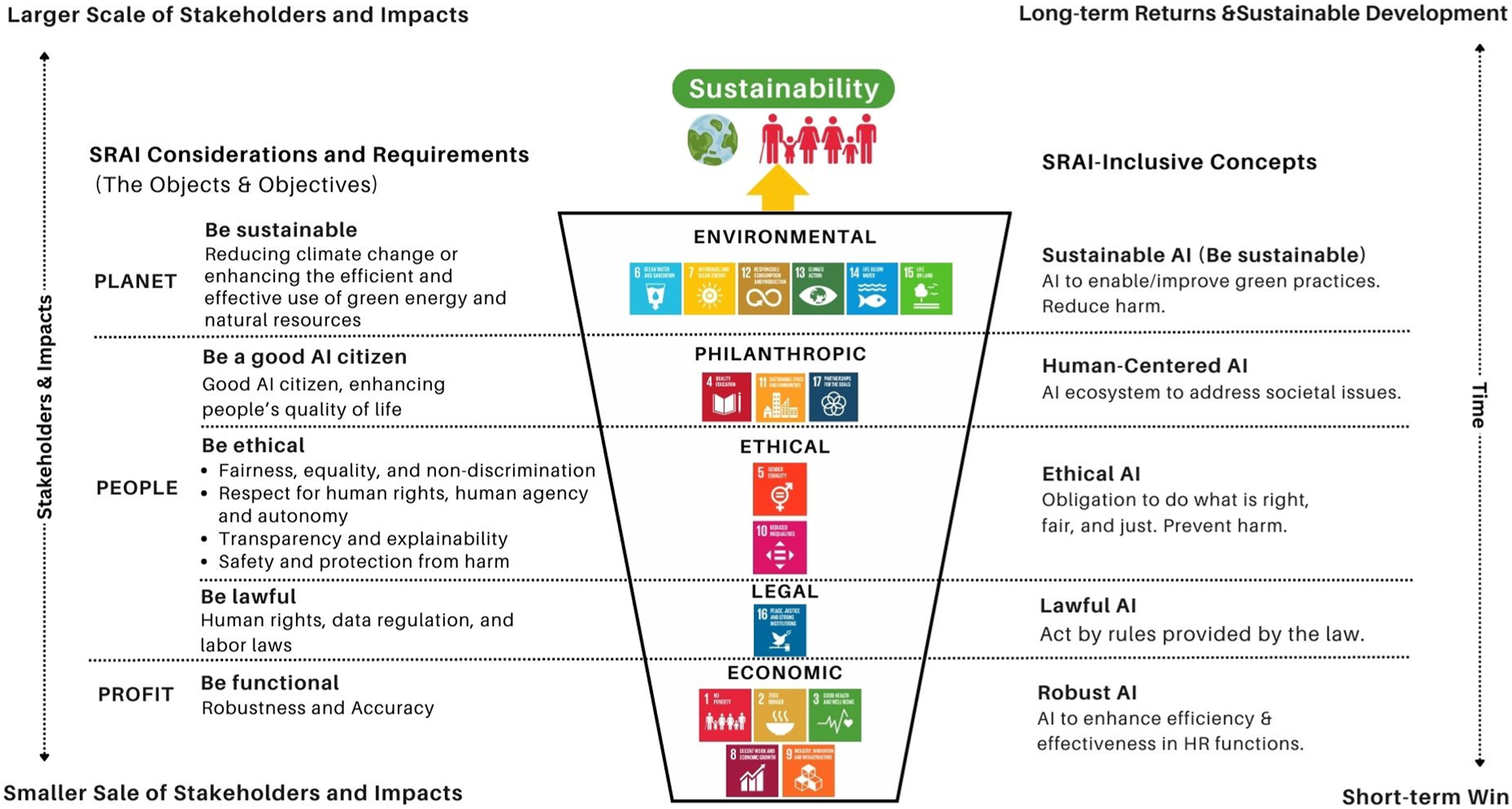

Figure 2. The inverted pyramid of the SRAI framework.

This integrative literature review intertwined the essential concepts of sustainability, including corporate social responsibility (CSR), environment, social, and governance (ESG), and UN sustainable development goals (SDGs), with the integration of AI to forge a comprehensive understanding of socially responsible AI (SRAI). Drawing from our findings and the synthesized work, we present a comprehensive framework for SRAI which takes the form of an inverted pyramid model with five distinct hierarchical levels: economic, legal, ethical, philanthropic, and environmental (see Figure 2).

In Figure 2, the first four layers from the bottom of the framework are the social aspects of Carroll’s CSR pyramid (Carroll, 1991). On top of these four levels, we place environmental respect, inspired by the environmental concerns of ESG and SDGs that CSR fails to address. By adding the fifth layer, our framework extends Carroll’s CSR model and provides a more comprehensive approach that covers all aspects of CSR, ESG, and SDGs. In addition, the inverted pyramid structure arranges the five layers by the scope of stakeholders involved, the scale of impact, and the time horizon of the outcomes. For example, as in Carroll’s CSR model, the economic layer (SDG #1, 2, 3, 8, 9) is the most basic: it involves a smaller scope of stakeholders and addresses only the lower level of stakeholders’ considerations and requirements. Meeting economic responsibility is considered essential, but it is only a short-term win and is inadequate for an organization’s sustainability. Similarly, the legal layer (SDG #16) regulates only minimal ethical behaviors and is considered a “must-have”. The ethical layer (SDG #5, 10), however, reflects a higher standard of performance expected by society than what is required by law. Further, the philanthropic layer (SDG #4, 11, 17) addresses expectations from the broader society and communities. It is voluntary but desirable for long-term development. Finally, the top layer, the environmental layer (SDG #6, 7, 12, 13, 14, 15), extends the concern from humans to the natural environment. Building on the preceding four layers, the environmental layer leads the way towards our desired future in which humans and nature coexist in harmony and continuously develop in a sustainable manner.
Additionally, our framework incorporates the 3Ps of the Triple Bottom Line (TBL) (Elkington, 1994): the first layer focuses on profit, the second to fourth layers center on people, and the fifth layer emphasizes the environmental impact on the planet. Next, we conceptualize SRAI by adapting AI to the proposed framework with five core components: economic, legal, ethical, philanthropic, and environmental. The economic component of SRAI refers to AI’s functional responsibility in maximizing operational efficiency and effectiveness to optimize productivity and profits. The legal component of SRAI requires AI to operate in compliance with laws and regulations. The ethical component of SRAI involves the ethical principles of respect for human rights, privacy and safety, non-discrimination and fairness, transparency, and prevention of harm (non-maleficence) (Jobin et al., 2019). The philanthropic component of SRAI is the expectation that AI be a good AI citizen that helps address societal challenges and improve people’s quality of life. The environmental component of SRAI recognizes the critical role that AI can play in addressing environmental challenges such as climate change and in enabling or improving green practices such as optimizing energy consumption, developing and managing renewable energy sources, aiding sustainable agriculture, improving waste management, and realizing smart transportation (Sætra, 2021; Toniolo et al., 2020).
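
To make the structure of the framework easier to inspect, the layer-to-SDG and layer-to-TBL assignments described above can be written down as a small data structure. The following is only an illustrative sketch: the names `Layer`, `SRAI_LAYERS`, `sdg_counts`, and `unassigned_sdgs` are hypothetical and are not part of the framework itself, and the assignments simply restate those given in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    """One layer of the inverted pyramid (hypothetical representation)."""
    name: str
    tbl_pillar: str   # profit, people, or planet (Triple Bottom Line)
    sdgs: tuple       # SDG numbers assigned to this layer in the text


# Ordered from the bottom of the inverted pyramid to the top.
SRAI_LAYERS = (
    Layer("economic", "profit", (1, 2, 3, 8, 9)),
    Layer("legal", "people", (16,)),
    Layer("ethical", "people", (5, 10)),
    Layer("philanthropic", "people", (4, 11, 17)),
    Layer("environmental", "planet", (6, 7, 12, 13, 14, 15)),
)


def sdg_counts(layers):
    """Number of SDGs assigned to each layer."""
    return {layer.name: len(layer.sdgs) for layer in layers}


def unassigned_sdgs(layers, total=17):
    """SDG numbers (1..total) that no layer claims."""
    assigned = {goal for layer in layers for goal in layer.sdgs}
    return sorted(set(range(1, total + 1)) - assigned)
```

Calling `unassigned_sdgs(SRAI_LAYERS)` checks whether each of the 17 SDGs is placed in some layer, and `sdg_counts(SRAI_LAYERS)` shows how unevenly the goals are distributed across the five layers.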

Our comprehensive framework for SRAI advances the frameworks created by prior studies (Cheng et al., 2021; Di Vaio et al., 2020; Sætra, 2021), which presented SRAI only in self-contained ways. It covers several competing concepts, including Robust AI (Dietterich, 2019), Lawful AI (High-Level Expert Group on Artificial Intelligence, 2019), Ethical AI (Jobin et al., 2019), Human-Centered AI (Xu, 2019), and Sustainable AI (Larsson et al., 2019). Moreover, the inverted pyramid structure of our model departs from Cheng et al.’s (2021) model of AI’s social responsibility and addresses the frequent critiques of Carroll’s CSR pyramid (Carroll, 1991), in which economic considerations were overly emphasized in evaluating organizations’ outcomes (Baden, 2016). The inverted shape of our proposed framework implies increasing importance, a larger scale of stakeholders, and longer-lasting impact from bottom to top. We also observed that the SDGs are insufficient in addressing ethical and legal concerns, as they have fewer goals specifically related to these two critical aspects. Overall, we hope this comprehensive framework can help advance the field’s understanding of SRAI and guide further theory-building efforts.

Implications for Practice

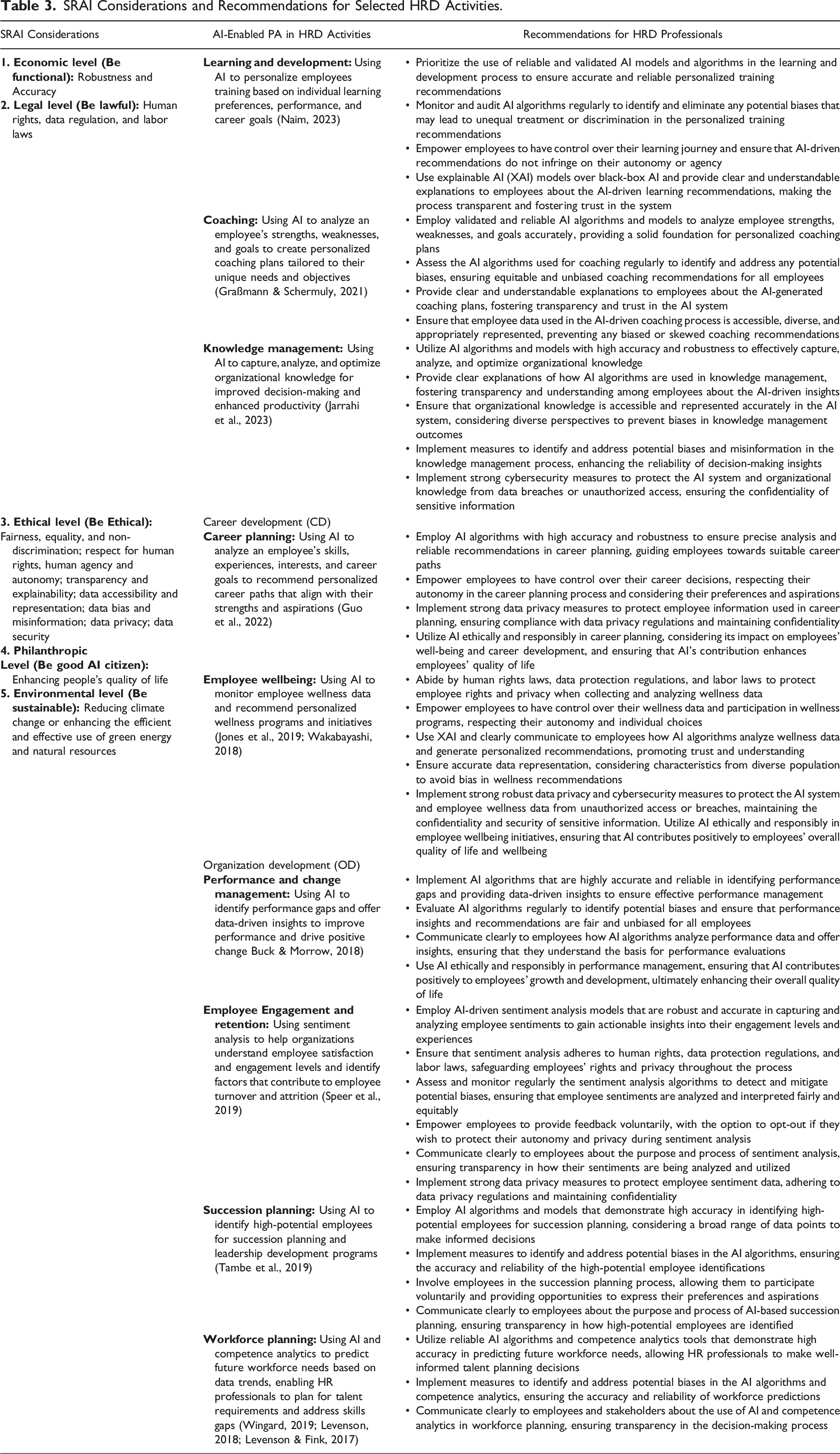

SRAI Considerations and Recommendations for Selected HRD Activities.

In summary, we recommend that HRD professionals embrace the SRAI considerations in the economic, legal, ethical, philanthropic, and environmental respects when implementing AI-enabled PA in HR decision-making. To achieve this, it is crucial for professionals to receive AI competency training so that they understand how AI functions and are familiar with pertinent regulations and the SRAI considerations, which will enable them to carefully execute, monitor, and evaluate AI-enabled PA. Moreover, they should develop training programs for the PA team, employees, and managers to ensure they have the necessary knowledge and skills to use and interpret AI-enabled PA responsibly and ethically. By doing so, organizations can make ethical and unbiased decisions that prioritize human values and contribute to an overall better quality of life (Kryscynski et al., 2018; Stahl et al., 2023; Thite, 2022).

Within the realm of SRAI, the pursuit of AI transparency, robustness, fairness, equality, respect for human rights, human agency and autonomy, and data confidentiality pervades every facet of HRD, including training and development, career development, and organizational development (Bonaci et al., 2015; Stahl et al., 2023). Taking coaching as an example, when implementing AI-enabled PA, HRD professionals should use validated and reliable AI algorithms and models for personalized coaching plans and regularly assess those algorithms and models to identify and address potential biases, ensuring equitable and unbiased coaching recommendations for all employees (Graßmann & Schermuly, 2021). They should conduct post-hoc examination and evaluation of AI-enabled PA outcomes to identify and address potential biases, errors, discrimination, and disinformation. Additionally, they must ensure that employee data used in the AI-driven coaching process is accessible, diverse, and appropriately representative, preventing biased or skewed coaching recommendations. Through regular engagement with stakeholders, potential issues can be identified and rectified, while collaboration with individuals serves as a safeguard against bias and prejudice (Stahl et al., 2023). Overall, HRD professionals bear the crucial responsibility of harmonizing the ethical values of individuals and the organization, using AI algorithms in ways that safeguard against discrimination targeting specific groups and that uphold transparency. They should favor explainable AI (XAI) models over black-box AI to promote transparency, interpretability, trust and credibility, and stakeholder involvement in the decision-making process.
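
As one possible way to operationalize the post-hoc examination of AI-enabled PA outcomes described above, the sketch below compares how often an AI system recommends employees from different groups and flags large gaps for human review. It is a minimal illustration under stated assumptions, not a method proposed in the reviewed literature: the function names are hypothetical, the input is assumed to be simple (group, selected) pairs, and the 0.8 threshold is borrowed from the "four-fifths" rule of thumb in US employee selection guidelines rather than from this review.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, selected) pairs. Returns {group: rate}."""
    totals, chosen = Counter(), Counter()
    for group, selected in records:
        totals[group] += 1
        if selected:
            chosen[group] += 1
    return {group: chosen[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest group selection rate divided by the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favored
    return min(rates.values()) / highest


def needs_review(records, threshold=0.8):
    """True when the gap between groups is large enough to warrant review.

    The 0.8 default is an assumption (four-fifths rule of thumb).
    """
    return impact_ratio(selection_rates(records)) < threshold
```

A flag raised by `needs_review` does not by itself establish discrimination; it only signals that the recommendations should be examined by HRD professionals together with the affected stakeholders.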

SRAI also attends to the discrepancies that media analysis may reveal. HRD professionals are entrusted with assessing the ethical climate, establishing robust codes of ethics, and furnishing comprehensive CSR/ESG reports. A careful system of observation and control, coupled with rigorous ethics audits, can keep the implementation of AI systems within the bounds of ethical practice. Lastly, SRAI is deeply concerned with data privacy and security (Bonaci et al., 2015). For example, when applying AI-enabled PA in employee wellness programs, HRD professionals should comply with labor laws to protect employee rights and privacy when collecting and analyzing wellness data, and should empower employees to control their wellness data and their participation in wellness programs, respecting their autonomy and individual choices (Jones et al., 2019; Wakabayashi, 2018). They should implement robust data privacy and cybersecurity measures to protect the AI system and employee wellness data from unauthorized access or breaches, maintaining the confidentiality and security of sensitive information and ensuring that AI contributes positively to employees’ overall quality of life and wellbeing. HRD professionals are called upon to champion an environment that encourages whistleblowing and fosters a supportive system for reporting any privacy or security-related issues. Compliance with data protection regulations and the creation of AI-enabled systems in alignment with privacy standards become imperative, accompanied by thorough training programs that empower employees to identify and address privacy and security concerns throughout the implementation of AI-driven HR systems.
An alert system can serve as an additional layer of vigilance, promptly detecting potential issues in the process, and HRD professionals should implement robust cybersecurity protocols and procedures to protect the data and the AI-enabled PA system against cyberattacks and cyberbullying (Cheng et al., 2021).

Limitations and Future Research

It is important to note that this review paper has several limitations. First, the literature review was limited to a rather small number of articles, as both SRAI and PA are still in their infancy as research topics. As a result, our review does not yet cover the recent surge of deep learning applications such as ChatGPT and other generative language tools. Second, it should be acknowledged that the SRAI framework may change over time as the technology and its environment continue to evolve. Third, the SRAI-empowered PA guidelines are not exhaustive and may not cover all possible scenarios. HRD professionals should therefore continuously review, adapt, and refine the guidelines through their practice to ensure that they align with the evolving technology and environment. This may involve collaborating with other experts and stakeholders, staying abreast of emerging research, and regularly evaluating the effectiveness and ethical implications of SRAI-empowered PA.

The findings of this review also suggest several areas for future research. First, in Figure 1, we identified the major themes for SRAI-empowered PA, which point to areas for future HRD research such as the impact of AI on various stakeholders in HRD, the essential considerations and potential risks of adopting AI in HRD practices, and the possible solutions. Moreover, the thematic map for SRAI-empowered PA created by this study provides a roadmap for researchers to further study SRAI-empowered PA and to ensure that their own use of AI in PA research is responsible and aligned with the principles of sustainability. Second, we believe that there is a need for more empirical studies that investigate the dynamic interplay between the economic, legal, ethical, philanthropic, and environmental considerations, as well as the social and environmental impacts of SRAI-empowered PA in the context of HRD. Third, it is necessary to continuously review and refine the SRAI framework and the SRAI-empowered PA recommendations, as both the environment and the technology are changing and advancing relentlessly. For example, the recent development of generative AI, a type of AI that can generate data resembling its training data, shows potential for creating learning materials and scenario simulations to assist HRD activities. Its ability to generate new data can also enhance predictive modeling in PA by contributing new data to model training and testing and by helping identify anomalies or outliers in existing data. However, concerns about its ethical and responsible use, such as misinformation, lack of control, transparency, explainability, and intellectual property, have arisen due to its uncertainty (Jo, 2023; Zohny et al., 2023). At the time of this research, we were unable to include this new development in our analysis because it was still evolving and little research was available for review.
We suggest that future research monitor and investigate this development and identify SRAI considerations and requirements regarding its application in HRD and PA. We also encourage future research to explore ways to minimize the above-mentioned limitations and to be transparent about the scope of AI technology under review.

Conclusion

AI is increasingly being used in HRD and PA. Yet, amid this rapid expansion, a dichotomy has emerged. For some HRD professionals, the very mention of AI evokes an unsettling sense of trepidation, a fear of the unknown. Conversely, others are excessively optimistic about AI’s capability, entertaining unrealistic fantasies akin to those portrayed in science fiction films. Both the fear and the fascination stem from the same cause: a fundamental lack of understanding. Now that AI is on the tip of everyone’s tongue, HRD professionals need to be better educated about where they truly stand and be aware of the potential challenges and impacts for each stakeholder group in order to proactively use AI to empower HRD practices like PA. This motivated us to conduct this research and facilitate the field’s understanding of socially responsible AI (SRAI) situated in the context of PA.

This integrative review paper introduces SRAI as a new concept for future research and interdisciplinary cooperation, providing guidance to HRD researchers and practitioners in implementing SRAI to ensure the sustainable development of organizations. The study fills a void in the literature by 1) providing an in-depth understanding of SRAI and its impact on organizations’ sustainability; 2) aligning SRAI with the 2030 U.N. sustainability goals; and 3) proposing an inclusive framework and practical guidance for HRD to practice SRAI-empowered PA in organizations. The study reimagines Carroll’s (1991) CSR pyramid model, recasting it as an inverted pyramid for SRAI. This framework lays the foundation for the integration of AI into organizations’ sustainable development efforts. In terms of practical application, the study equips HRD professionals with the knowledge and tools to implement SRAI-empowered PA. Our aspiration is that this paper will offer valuable direction to both HRD researchers and practitioners who are working to implement SRAI-empowered PA to drive positive change in organizations and contribute to the betterment of society as a whole.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.