Abstract

The rapid development and adaptability of artificial intelligence algorithms have secured their almost ubiquitous presence in the field of oncological imaging. Artificial intelligence models have been created for a variety of tasks, including risk stratification, automated detection and segmentation of lesions, characterization, grading and staging, and prediction of prognosis and treatment response. Soon, artificial intelligence could become an essential part of every step of oncological workup and patient management. Integration of neural networks and deep learning into radiological artificial intelligence algorithms allows for the extraction of imaging features otherwise inaccessible to human operators and paves the way to truly personalized management of oncological patients.

Although a significant proportion of currently available artificial intelligence solutions belong to basic and translational cancer imaging research, their progressive transfer to clinical routine is imminent, contributing to the development of a personalized approach in oncology. We therefore review the main applications of artificial intelligence in oncological imaging, describe examples of their successful integration into research and clinical practice, and highlight the challenges and future perspectives that will shape the field of oncological radiology.

Introduction

The dramatic increase in the amount of imaging data and in storage and processing capacity over the past decades has driven the rapid development of artificial intelligence (AI) systems in diagnostic imaging. There is hardly a radiology field that has not seen extensive AI research and fast-paced clinical assimilation. Cancer imaging is arguably where the impact of AI has so far been the greatest, ever since the introduction of the first computer-aided detection (CAD) systems in the 1980s. The rapid improvement and high-level performance of CAD systems in lung and breast cancer screening contributed to a growing interest in the development of AI-based tools and their continuous integration into routine cancer imaging.

The AI technological domain boasts a great diversity of architectures and task targets, which tend to be highly specialized. Oncological applications include the identification of patients at risk of developing cancer, automatic lesion detection with CAD systems, treatment planning tools, and models for predicting treatment response and prognosis. 1 Furthermore, radiomics and radiogenomics methods make it possible to personalize the risk profile of each patient through novel imaging markers not detectable even by the most experienced radiologists. 2 These findings reflect the unique features of oncological patients and their diseases and can be used to devise patient-tailored management strategies and personalized targeted therapies according to the principles of precision medicine. 3

The aim of this article is to provide an overview of the applications of AI in oncological imaging, as well as to analyze obstacles to their wider use in clinical practice. The following AI applications will be discussed: risk stratification, lesion detection and cancer screening, radiomics and radiogenomics, tumor segmentation and treatment planning, and treatment and prognosis prediction. Relevant AI terminology and concepts will be introduced before proceeding to the main discussion.

Artificial Intelligence: General Principles

Technology that mimics human intelligence to solve human problems is the core of what is collectively called AI. Developed as a branch of computer science, present-day AI is a broad field of knowledge that welcomes contributions from different disciplines. 4 While AI is still far from realizing its full potential, it has already shown outstanding results in a variety of fields, notably including the research and clinical activity of radiology departments. However, an almost habitual presence of AI in radiology coexists with an often-superficial knowledge of its inner workings and a degree of confusion about AI terminology. Terms such as AI, machine learning (ML), deep learning (DL), and neural networks are often used interchangeably, despite having substantial differences. 5

In the classical paradigm of computer science, a machine (ie, a computer) performs on an input the function for which it is programmed to obtain an output. The problem is that it is not possible to translate the extremely complex cognitive process that underlies the work of an experienced radiologist into programming code. This challenge can be addressed through an ML approach in which the model, like the human brain, can learn from its mistakes. ML algorithms attempt to approximate a required function by recognizing meaningful patterns in data. 6 Most ML approaches used in medical imaging require some degree of human intervention to be trained and are called “supervised algorithms.” 7 Supervised algorithms are trained on sample data sets containing typical examples of inputs and corresponding outputs. In the simplest cases, the training dataset is labeled by human experts based on manually chosen characteristics of interest. For example, the training dataset may consist of both native chest computed tomography (CT) scans and examinations in which lung nodules are highlighted and classified as benign or malignant. However, training datasets can also contain a mix of labeled and unlabeled images. In this case, the algorithm would quantitatively assess the voxels constituting lung nodules and decide what features make them appear benign or malignant. Finally, in advanced AI applications, the training dataset can consist of unlabeled data that the system will reclassify and organize based on common characteristics to try to predict subsequent inputs. This type of unsupervised learning generally makes use of so-called DL algorithms.

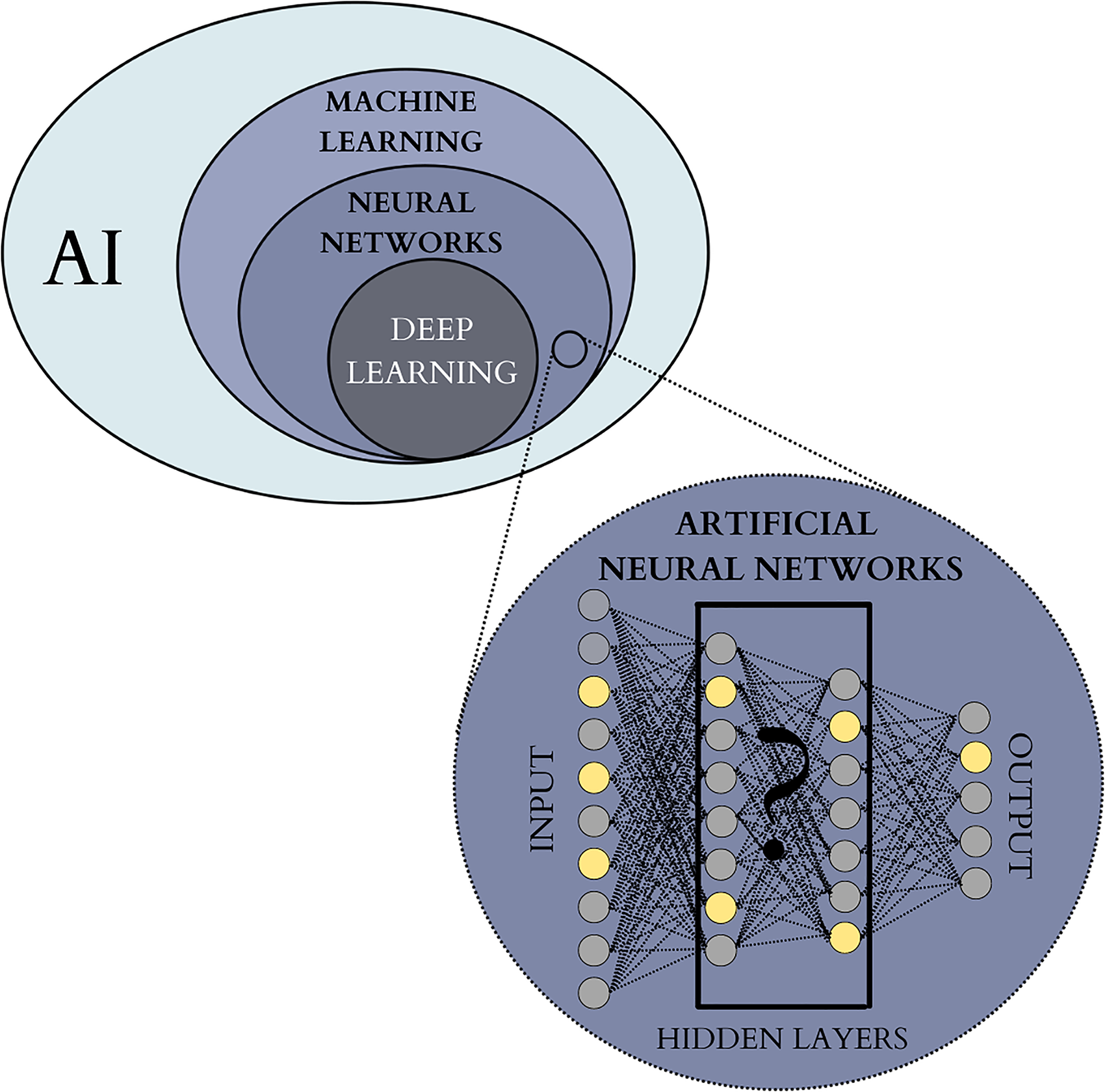

From an operational point of view, ML tools are often built using artificial neural networks (ANN) (Figure 1), which have proved particularly fit for medical imaging. 6 ANN are inspired by the human brain and consist of several layers of interconnected “nodes” or “cells.” 8 The first layer is an input layer for initial data, while the last layer of the algorithm is the output layer; the layers in between are the middle, or hidden, layers. The cells in different levels are connected and activate one another as the information passes downstream and gets analyzed. The output of each cell depends on its input values, each multiplied by a “weight” value and then summed. If the output of any individual node is above a specified threshold value, it becomes activated and sends data to the next layer of the network. Otherwise, no data is passed along. Thanks to their unique learning features and sensitive calibration range, ANN algorithms are powerful tools for analyzing large amounts of imaging data, progressively shaping the weights of their connections to reduce the uncertainty of the approximation as they are exposed to more data samples. The number of middle layers depends on the algorithm's function and complexity, and an increased number of layers gives rise to so-called DL algorithms. DL uses ANN to discover intricate structures in large data sets with different and increasing levels of abstraction.6,9 DL models are well-suited for medical image analysis, allowing them to detect hidden patterns and uncover insightful outcomes, sometimes beyond what human experts can provide. 10
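The node behavior described above can be illustrated with a minimal sketch. It assumes a simple threshold activation, in which a node passes on its weighted sum only when that sum exceeds the threshold; the function name `forward`, the weight values, and the thresholds are hypothetical and chosen only for illustration, and real networks typically use smoother activation functions and learn their weights from data.

```python
import numpy as np

def forward(x, layers):
    """Pass an input vector through a stack of layers.

    `layers` is a list of (weight_matrix, threshold) pairs ordered from the
    first hidden layer to the output layer (hypothetical structure).
    """
    activation = np.asarray(x, dtype=float)
    for weights, threshold in layers:
        # Each node computes the weighted sum of all its inputs.
        z = weights @ activation
        # A node is activated only above its threshold; otherwise it sends nothing (0).
        activation = np.where(z > threshold, z, 0.0)
    return activation

# Illustrative weights: one hidden layer with 2 nodes, one output node.
hidden = (np.array([[0.5, 0.5], [1.0, -1.0]]), 0.0)
output = (np.array([[2.0, 1.0]]), 1.0)
result = forward([1.0, 2.0], [hidden, output])
```

In this toy example the second hidden node receives a negative weighted sum, stays inactive, and contributes nothing to the output node.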

Figure 1. Hierarchy of AI domains frequently employed in oncological imaging. The AI field encompasses the theory and development of a wide range of computer systems built to perform tasks that traditionally required human intelligence. ML is a widely used AI approach based on self-learning algorithms that recognize data patterns in order to predict outcomes. Artificial neural networks are a widespread ML architecture inspired by the human brain, where the information is passed between “nodes” or “cells” organized into several layers. The number of hidden middle layers can vary and depends on the algorithm's function and complexity. When the overall number of layers exceeds 3, the algorithm is considered “deep” and defined as DL. The inner workings of DL algorithms cannot be directly assessed by human operators, and these algorithms are often referred to as “black boxes”. Abbreviations: AI, artificial intelligence; DL, deep learning; ML, machine learning.

DL algorithms contribute to the fast development of radiomics, which has recently emerged as a state-of-the-art science in the field of individualized medicine. First defined in 2012 as “high throughput extraction of quantitative imaging features with the intent of creating mineable databases from radiological images,” 11 radiomics represents a new approach to medical imaging analysis and helps to further bridge the gap between raw image data and clinical and biological endpoints. 12 Radiomics is based on the assumption that biomedical images contain disease-specific information that is imperceptible to the human eye.13,14 Although radiomics is not necessarily AI-based, the advances in ML and DL algorithms have greatly facilitated the research and application of radiomics models. Thanks to AI methods and advanced mathematical analysis, radiomics models quantitatively assess large-scale extracted imaging data to identify imaging biomarkers that go beyond simple qualitative evaluation. In oncological imaging, radiomics features are related to tumor size, shape, intensity, relationships between voxels, and texture. These features collectively provide the so-called radiomics signature of the tumor. 15
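As a rough illustration of what "quantitative imaging features" can mean in practice, the sketch below computes a few first-order (intensity-based) features from the voxels inside a lesion mask. It is a simplified example under stated assumptions, not a reference radiomics pipeline: the function name `first_order_features`, the choice of features, and the 16-bin histogram are illustrative, and the input arrays are synthetic.

```python
import numpy as np

def first_order_features(image, mask, bins=16):
    """Compute simple intensity statistics for the voxels selected by `mask`."""
    voxels = np.asarray(image, dtype=float)[np.asarray(mask, dtype=bool)]
    if voxels.size == 0:
        raise ValueError("mask selects no voxels")
    # Histogram-based entropy summarizes how spread out the intensities are.
    counts, _ = np.histogram(voxels, bins=bins)
    p = counts[counts > 0] / voxels.size
    return {
        "n_voxels": int(voxels.size),      # proxy for lesion size
        "mean": float(voxels.mean()),
        "std": float(voxels.std()),
        "min": float(voxels.min()),
        "max": float(voxels.max()),
        "entropy": float(-(p * np.log2(p)).sum()),
    }

# Synthetic 3x3x3 "scan" with a two-voxel region of interest.
image = np.arange(27, dtype=float).reshape(3, 3, 3)
mask = np.zeros((3, 3, 3), dtype=bool)
mask[0, 0, 0] = True
mask[1, 1, 1] = True
features = first_order_features(image, mask)
```

Shape and texture features follow the same pattern (a function from image and mask to a number) but require more elaborate definitions than the statistics shown here.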

Radiogenomics is a field closely related to and drawing from radiomics and is based on the hypothesis that extracted quantitative imaging data are a phenotypic manifestation of the mechanisms that occur at the genomic, transcriptomic, or proteomic levels. Radiogenomics combines large volumes of quantitative data extracted from medical images with individual genomic phenotypes to assess the genomic profile of tumors. This allows the creation of prediction models used to stratify patients, guide therapeutic strategies, and evaluate clinical outcomes. 16 Imaging data can be further combined with clinical and laboratory information and other personalized patient variables to improve the precision of diagnostic imaging, predict outcomes, and identify optimal management. 17

Risk Stratification

Identifying patients at risk of developing malignancy and referring them to personalized screening programs is one of the major challenges in modern oncology. AI algorithms allow the derivation of clinically important predictors from generic and often weakly correlated imaging features. When combined with clinical data, this information facilitates the identification of patients who may be at risk of developing malignant lesions. 18 Moreover, AI has the potential to increase the accuracy of radiological assessment of tumor aggressiveness and differentiation between benign and malignant lesions, allowing for more precise patient management. 1

AI-based prediction models have been developed for a variety of imaging techniques and a wide range of malignancies, including lung, colorectal, thyroid, breast, and prostatic cancers. Breast cancer has traditionally attracted major interest for AI-based risk prediction models. Breast cancer remains the leading cause of female cancer mortality, with survival rates in developing countries being as low as 50% due to late detection. 19 A personalized, accurate risk scoring system would identify patients at high risk of developing breast tumors and in need of strict imaging monitoring. 20 The International Breast Intervention Study (IBIS) model, or Tyrer–Cuzick (TC) model, is a scoring system guiding breast cancer screening and prevention. 21 It accounts for age, genotype, family history of breast cancer, age at menarche and first birth, menopausal status, atypical hyperplasia, lobular carcinoma in situ, height, and body mass index. Despite its widespread use, the IBIS/TC model demonstrated limited accuracy in some high-risk patient populations. 22 Integration of DL-identified high-risk imaging features can refine the accuracy of the IBIS/TC model and overcome some of its limitations.23,24 Breast density is a mammographic feature closely related to the risk of breast cancer and is integral to the correct reporting of mammographic examinations. 25 It can be successfully assessed by AI algorithms with an excellent agreement and high intraclass correlation coefficient between the AI software and expert readers. 26 A hybrid DL model evaluated by Yala et al included mammographic breast density, age, weight, height, menarche age, menopausal status, detailed family history of breast and ovarian cancer, breast cancer gene (BRCA) mutation status, history of atypical hyperplasia, and history of lobular carcinoma in situ. The IBIS/TC and hybrid DL models showed an area under the curve (AUC) of 0.62 (95% confidence interval [CI]: 0.57-0.66) and 0.70 (95% CI: 0.66-0.75), respectively. The hybrid model placed 31% of patients in the top risk category, compared with 18% identified by the IBIS/TC model, and was able to identify the features associated with long-term risk beyond early detection of the disease. 27
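For readers less familiar with the AUC and its confidence interval, the following sketch shows one common way such figures can be computed: the AUC as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with a percentile bootstrap for the interval. This is an illustration under stated assumptions, not the method of the cited studies; the function names `auc` and `bootstrap_ci` and all scores and labels are synthetic.

```python
import numpy as np

def auc(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count half."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    pos, neg = scores[labels], scores[~labels]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (pos.size * neg.size))

def bootstrap_ci(scores, labels, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the AUC."""
    rng = np.random.default_rng(seed)
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    stats = []
    for _ in range(n_boot):
        idx = rng.integers(0, scores.size, size=scores.size)
        # Skip resamples that contain only one class; the AUC is undefined there.
        if labels[idx].all() or not labels[idx].any():
            continue
        stats.append(auc(scores[idx], labels[idx]))
    lo, hi = np.percentile(stats, [100 * alpha / 2, 100 * (1 - alpha / 2)])
    return float(lo), float(hi)

# Synthetic risk scores for 2 patients without and 2 with cancer.
example_auc = auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1])
example_ci = bootstrap_ci([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1])
```

An AUC of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation, which is the scale on which the values of 0.62 and 0.70 reported above should be read.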

Another aspect related to risk stratification includes the assessment of incidentally discovered benign lesions and identifying those that are more likely to develop malignancy in the future. This is particularly relevant when dealing with lung nodules, as radiologists routinely evaluate hundreds of lung nodules to assess their size, location, margins, and evolution. This information is then subjectively interpreted with the help of guidelines and in light of the clinical characteristics and history of the patient, to stratify the risk and customize therapeutic and monitoring protocols. AI can appreciably facilitate this challenging and time-consuming task. Baldwin et al assessed the performance of an AI-based lung cancer prediction convolutional neural network (LCP-CNN) compared with the multivariate Brock model, which estimates the risk of malignancy for CT-detected pulmonary nodules. The LCP-CNN score demonstrated an improved AUC compared with the Brock model (89.6%, 95% CI: 87.6-91.5 and 86.8%, 95% CI: 84.3-89.1, respectively) and identified a larger proportion of benign nodules with a reduced false-negative rate. Integration of LCP-CNN into the assessment of lung nodules detected on chest CT scans could potentially reduce diagnostic time delays. 28 Another CNN-based model integrating imaging features with clinical data and biomarkers achieved 94% sensitivity and 91% specificity for the differentiation of benign and malignant pulmonary nodules on CT imaging. 29 The results highlight the potential of AI to reduce the need for follow-up scans in low-scoring benign nodules, while accelerating the investigation and treatment of high-scoring malignant nodules and reducing the costs of follow-up examinations. In another study, the use of an auxiliary AI-based tool improved readers’ sensitivity and specificity in the classification of malignancy risk of indeterminate pulmonary nodules on chest CT. Moreover, it improved interobserver agreement for management recommendations of these indeterminate lung nodules. 30
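The sensitivity, specificity, and false-negative rate quoted above all derive from the same four counts of a binary classification. The sketch below shows the arithmetic on synthetic labels; the function name `diagnostic_metrics` and the example data are hypothetical and do not reproduce any cited study.

```python
def diagnostic_metrics(y_true, y_pred):
    """Return sensitivity, specificity, and false-negative rate (1 = malignant, 0 = benign)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # malignant, called malignant
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # malignant, missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # benign, called benign
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # benign, called malignant
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("both classes must be present")
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "false_negative_rate": fn / (tp + fn),
    }

# Synthetic example: 3 malignant and 2 benign nodules.
metrics = diagnostic_metrics([1, 1, 1, 0, 0], [1, 1, 0, 0, 1])
```

Because sensitivity and the false-negative rate sum to 1, a model that reduces false negatives necessarily increases sensitivity at the chosen operating threshold.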

In another approach, a DL signature was developed for N2 lymph node involvement prediction and prognosis stratification in clinical stage I non-small cell lung cancer (NSCLC). A multicenter study performed to test its clinical utility demonstrated AUCs of 0.82, 0.81, and 0.81 in an internal test set, external test cohort, and prospective test cohort, respectively. Moreover, higher DL scores were associated with greater activation of tumor proliferation pathways and were predictive of poorer survival rates. 31

AI models have also been used for risk stratification in other types of lesions. An ANN-based DL algorithm allowed reliable differentiation of malignant versus nonmalignant breast nodules first assessed with breast ultrasound and initially reported as Breast Imaging Reporting and Data System (BI-RADS) 3 and 4. 32 Hamm et al developed an ANN for automated characterization of liver nodules on magnetic resonance imaging (MRI), with 92% sensitivity and 98% specificity in the training set and higher sensitivity and specificity compared to radiologists in the test dataset. 33 An ML model distinguished benign from malignant cystic renal lesions on CT with an AUC of 0.96 and a benefit in the clinical decision algorithm over management guidelines based on the Bosniak classification. 34 Similarly, an AI-based DL model correctly predicted the majority of benign and malignant pancreatic cystic lesions and outperformed the Fukuoka guidelines. 35 In the risk stratification of endometrial cancer, several features identified on T2-weighted MRI were selected to build a predictive ML model, which demonstrated an accuracy of 71% and 72% in the training and test datasets, respectively. 36 Interestingly, the study by Hsu et al showed that body composition analysis by an AI algorithm can predict mortality in cancer, highlighting a significant correlation between sarcopenia and mortality in pancreatic cancer patients. 37

Lesion Detection and Screening

Screening for clinically occult malignant tumors represents one of the most important goals of oncological imaging and allows timely treatment of tumors that would otherwise pass unnoticed. Radiological screening programs evaluate hundreds of patients at a time, creating a considerable amount of imaging data to review. AI applications can be used to manage the workload and to reduce observational oversights and false-negative readings. 38 Improved detection of cancer through screening is therefore a significant area of interest in oncological imaging AI applications.

CAD tools are AI systems commonly employed in radiological screening. CAD programs are pattern recognition software that assists radiologists in identifying potential anomalies in radiology examinations. Although all CAD systems are to some extent AI-based, their design can employ a wide range of architectures of varying complexity and depth. Relatively simple early CADs were designed to reflect radiologists’ perspectives and searched for the findings normally assessed by human readers. 38 For example, in breast cancer screening, CAD assessed mammograms for the presence of microcalcifications, structural distortions, and masses. Advanced present-day CAD systems make extensive use of DL models to extract information that is not immediately accessible to human operators, with promising results and potential for improved accuracy compared with radiologists or clinicians. 39 Whereas earlier CAD programs were characterized by high sensitivity and low specificity, newer techniques with improved specificity could significantly facilitate cancer screening. However, radiologists’ experience and judgment remain central to defining the actual relevance of the results provided by CAD systems and deciding further steps.

In clinical routine, CAD systems can be used in a variety of ways. When CAD is used as a “first reader,” a primary CAD assessment is followed by an evaluation by a radiologist that only reviews the anomalies identified by CAD. Alternatively, a screening test can be initially assessed by a radiologist, followed by a secondary CAD review. Problematic areas identified by CAD software are then re-evaluated before the conclusion is formed. Finally, in a concurrent examination, a radiologist routinely assesses the images as the CAD marks remain visible.

Breast cancer screening represents one of the most successful examples of the long-standing implementation of CAD programs into clinical practice. 40 Early forms of breast screening CAD systems, developed in the 1980s, were limited by poor specificity and low diagnostic accuracy, resulting in numerous false positives, unnecessary biopsies, and soaring costs. Integration of DL into recent CAD systems led to significant improvements in specificity. The accuracy of DL-based CADs for the detection of breast cancer on mammography is comparable to that of radiologists, although the latter tend to be slightly more specific at the expense of lower sensitivity. 41 A single CAD reading can be equivalent to a double reading by 2 radiologists as required by standardized guidelines. 42

CAD-based screening also allows early detection of pulmonary nodules, defined as circumscribed round-shaped parenchymal lesions of <3 cm in diameter. 43 The first CADs for automatic detection of lung nodules on chest CT appeared in the early 2000s, although the lack of specificity impeded their widespread clinical use, similar to other early CAD programs. Thanks to the availability of large databases of chest CT scans and the integration of DL techniques into CAD architectures, modern systems demonstrate higher specificity and decreased false-positive rates. 44 CAD systems based on DL algorithms detect more pulmonary nodules on CT scans compared to double reading by radiologists 45 and can classify the detected nodules based on selected features. 46 Another DL-based CAD algorithm outperformed thoracic radiologists for the detection of malignant pulmonary nodules on chest x-rays and improved radiologists’ performance when used as a second reader. 47

CT colonography, or virtual colonoscopy, is a screening technique for the early identification of colonic polyps before they progress to colorectal cancer. Although CAD can improve polyp detection rates, several structures can result in false-positive readings, including haustral folds, coarse mucosa, diverticula, rectal tubes, extracolonic findings, and lipomas. 48 The experience of the reader is therefore essential for the appropriate interpretation and reporting (Figures 2 and 3). Several CAD algorithms improved the detection of prostatic malignancies on MRI, 49 including difficult-to-assess areas such as the central part of the gland or the transition zone. 50 Other possible applications of AI in cancer screening include the detection of metastatic lesions in patients with known primary cancers. Although conceptually this task is similar to detecting primary tumors, the results are currently characterized by low specificity. In the assessment of patients with melanoma, none of the CAD-detected pulmonary nodules proved to be malignant or clinically significant at follow-up. 51

Figure 2. Automatic segmentation of a pulmonary nodule identified on chest CT by the CAD system. The CAD system identified a solid pulmonary nodule on axial (A), coronal (B), and sagittal (C) views. The nodule was automatically segmented by an integrated AI interface on all 3 planes (D). Abbreviations: AI, artificial intelligence; CAD, computer-aided detection; CT, computed tomography.

Figure 3. Identification of colonic polyps on virtual colonoscopy reconstructions by CAD systems. The CAD system identified the presence of a colonic polypoid lesion (target sign) on double-contrast barium enema-like (A), supine 2D axial (B), and endoluminal (C) views. Abbreviations: 2D, 2-dimensional; CAD, computer-aided detection.

Radiogenomics

Radiogenomics, an integration of the notions of “radiomics” and “genomics” through AI technology, is currently emerging as the state-of-the-art field of precision medicine in oncology. 52 The identification of an array of different genotypes and deregulated pathways involved in the pathogenesis of cancers through advances in genomic technology has led to a paradigm change in how cancer is seen, classified, and managed, with a shift toward a truly personalized approach to each case. Meticulous molecular characterization of malignant tumors is thus one of the mainstays of customized approaches in oncology. 53 However, large-scale genome-based characterization of cancer has not yet been routinely adopted due to its invasiveness, technical complexity, high costs, and timing limitations. 54

Radiogenomics allows a noninvasive and comprehensive characterization of tumor gene expression patterns through imaging phenotype. Distinct portions of tumors and metastases from the same tumor may be characterized by different molecular characteristics, which might change over time. As it is not possible to sample every portion of each tumor and metastatic lesions at multiple time points, the characterization of malignancies by biopsy suffers from significant limitations. 55 Unlike biopsy, radiogenomics analyzes the 3-dimensional tumor landscape in its complexity, does not depend on the heterogeneity of a bioptic sample, and can be used as a virtual biopsy tool. 56 Moreover, radiogenomics enables the noninvasive assessment of multiple lesions at different time points. 57 While most existing studies focus on the analysis of primary tumors, radiogenomics can potentially be applied to the analysis of metastatic lesions. Evaluation of the tumor genomic signature through radiogenomics can further improve our understanding of the natural history of the disease through quick, reproducible, and inexpensive assessment, leading to improved prediction of patient prognosis, individualized therapeutic approaches, optimized enrollment for targeted therapies, and better assessment of treatment responses. 58

Most existing AI-based radiogenomics models deal with mutations of O-6-methylguanine-DNA methyltransferase (MGMT), isocitrate dehydrogenase (IDH) 1/2, BRCA1/2, luminal A/B, estrogen receptor (ER), progesterone receptor (PR), epidermal growth factor receptor (EGFR), Ki-67, and human epidermal growth factor receptor 2 (HER2), due to data availability. 52 In particular, several studies demonstrated the validity of radiogenomic features for the identification of genetic alterations in patients with pulmonary adenocarcinoma. Radiogenomics models could distinguish EGFR-mutated and EGFR-wildtype pulmonary adenocarcinomas, 59 as well as differentiate EGFR-positive and Kirsten rat sarcoma virus (KRAS)-positive cases. 60 Addition of radiomics data to a clinical prediction model significantly improved the prediction of EGFR status in pulmonary adenocarcinoma (P = .03). 60 EGFR mutation status in NSCLC can also be predicted with quantitative radiomics biomarkers from pretreatment CT scans. 61 In another study, anaplastic lymphoma kinase (ALK), receptor tyrosine kinase-1 (ROS-1), and rearranged during transfection (RET) fusion-positive pulmonary adenocarcinomas could be identified through a prediction model with a combination of clinical data and CT and positron emission tomography (PET) characteristics. 62

EGFR mutations could also be inferred from routine MRI in patients with glioblastoma based on perfusion patterns in perilesional edema. 63 Moreover, neuro-oncology boasts a variety of ML models developed for a more comprehensive characterization of gliomas. 64 Zhang et al created an algorithm to differentiate these malignancies into low and high grade, with an overall accuracy ranging between 94% and 96%. 65 An ANN model based on the texture analysis of T2-weighted, fluid-attenuated inversion recovery (FLAIR), and T1-weighted postcontrast MRI identified O-6-methylguanine-DNA methyltransferase promoter methylation status in patients newly diagnosed with glioblastoma with an accuracy of 87.7%, 66 making it possible to predict the improved response to chemotherapy among patients with this epigenetic change. 67 Another model inferred a variety of genetic mutations in gliomas through the analysis of multiparametric precontrast and postcontrast MRI, including O-6-methylguanine-DNA methyltransferase promoter methylation status, IDH1 mutation, and 1p/19q codeletion, with an accuracy of 83% to 94%. 68 Codeletion of chromosomes 1p and 19q can also be quantitatively assessed from the MRI texture of T2-weighted images with high sensitivity and specificity. 69

Promising radiogenomic models in breast imaging allow differentiating molecular subtypes of breast cancer based on MRI dynamic contrast enhancement imaging.70,71 Genetic pathways of breast tumors were associated with several MRI features, including tumor size, blurred tumor margin, and irregular tumor shape. 72 Messenger ribonucleic acid (mRNA) expression in breast tumors showed significant association with tumor size and enhancement texture on MRI. 72 The biological behavior of breast cancer, in particular expression of HER2 and other receptors, can also be predicted on ultrasound imaging.73,74 In prostate imaging, radiomics evaluation of the prostatic tumor profile on MRI allows reliable prediction of the Gleason grade as defined by pathology. 75 A study conducted among patients with colorectal cancer identified a radiogenomic signature that can reliably predict microsatellite instability status of the tumors and stratify patients into low-risk and high-risk groups. 76 Another radiomic signature identified KRAS/neuroblastoma RAS viral oncogene homolog (NRAS)/B-Raf proto-oncogene (BRAF) mutations in colorectal cancer, which reduce the response to the monoclonal antibodies cetuximab and panitumumab. 77

Tumor Segmentation and Treatment Planning

Tumor segmentation serves several clinical and research purposes in oncological imaging. Segmentation is used to determine the volume of tumors, their morphology and relationships with the surrounding organs and tissues and is crucial for the imaging-based planning of surgery or radiotherapy. 78 Segmentation also plays an important role in the assessment of treatment response.

From an operational point of view, tumor segmentation requires the division of an image into multiple parts that are homogeneous with respect to one or more characteristics or features, such as colors, grayscale, spatial textures, or geometric shapes. 79 Before the advent of computer-assisted segmentation tools, contours were manually traced on each slice of imaging scans. 80 These 2-dimensional segmentations were then put together to create a 3-dimensional reconstruction of the lesion within the acquisition volume. Present-day AI segmentation systems greatly shorten the analysis times, improve the reproducibility of segmentations, and reduce inter-reader variability, especially when compared with inexperienced operators. 81 The first machine-assisted segmentation tools were supervised algorithms based on line and edge detection, which traced image gradients along object boundaries. 81 Modern segmentation tools are predominantly based on a large variety of DL models. 82 While most image segmentation techniques use one imaging modality at one specific time point, their performance and applicability can be improved by combining images from several sources (multispectral segmentation) or integrating images over time (dynamic or temporal segmentation). 83 Multimodal images can be used to improve the segmentation accuracy by accounting for the advantages and disadvantages of individual imaging modalities. For example, CT provides a detailed definition of bone structures but low soft-tissue contrast, 84 whereas MRI is characterized by high soft-tissue contrast but lower spatial resolution. Combined multimodality images would facilitate segmentation and provide additional imaging information. However, multimodal images need to be accurately co-registered to be consistent and are not always available for segmentation.
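The step from per-slice contours to a 3-dimensional lesion, and the notion of agreement between two segmentations, can be sketched as follows. The example assumes binary masks and a known voxel spacing; the function names are hypothetical, the data are synthetic, and the Dice coefficient is used here as one common overlap measure rather than a metric named in the text above.

```python
import numpy as np

def stack_slices(slice_masks):
    """Combine per-slice 2D binary masks into one 3D mask (slice axis first)."""
    return np.stack([np.asarray(m, dtype=bool) for m in slice_masks], axis=0)

def lesion_volume_ml(mask, spacing_mm):
    """Lesion volume in milliliters from a binary mask and voxel spacing in mm."""
    voxel_mm3 = float(np.prod(spacing_mm))
    return int(np.count_nonzero(mask)) * voxel_mm3 / 1000.0

def dice(mask_a, mask_b):
    """Dice overlap between two segmentations (1.0 = identical, 0.0 = disjoint)."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    total = np.count_nonzero(a) + np.count_nonzero(b)
    if total == 0:
        return 1.0  # two empty masks agree trivially
    return 2.0 * np.count_nonzero(a & b) / total

# Synthetic lesion: a 2x2 region traced on two consecutive 4x4 slices.
contour = np.zeros((4, 4), dtype=bool)
contour[1:3, 1:3] = True
reader_1 = stack_slices([contour, contour])
reader_2 = reader_1.copy()
reader_2[1] = False  # second reader did not mark the lesion on the last slice
volume = lesion_volume_ml(reader_1, spacing_mm=(5.0, 1.0, 1.0))
agreement = dice(reader_1, reader_2)
```

In this toy case the two readers disagree on a single slice, which is enough to lower the overlap score to about 0.67 and halve the measured volume for the second reader.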

Several DL segmentation tools have been developed for use in oncological radiology (Figure 4) and are currently clinically available for lesion characterization, treatment planning, and follow-up. Neuro-oncological imaging is one of the leading fields for the application of AI segmentation systems with remarkable potential for workflow and clinical impact. 85 For some types of brain tumors, such as low-grade gliomas, surgical resection is currently the first therapeutic option. Considering the diffuse nature of neural networks at the basis of cognitive functions, the choice of resection margins can dramatically affect the brain function and the patient's quality of life. The development of AI-based systems for the segmentation of brain tumors makes it possible to individually optimize the so-called “onco-functional balance” and propose tailored resection margins. AI can also be used in other phases of personalized anatomical–functional planning and intraoperative strategy. 86

Figure 4. Volumetric segmentation of a renal lesion. A complex renal cyst (A) was detected as an incidental finding during CT angiography. The lesion was automatically segmented in axial (B), coronal (C), and sagittal (D) views. The volume of the lesion and mean attenuation values were calculated based on the 3-dimensional measurements (D). Abbreviation: CT, computed tomography.
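As a rough illustration of how the volume and mean attenuation in the caption above can be derived from a 3-dimensional segmentation, the sketch below counts the voxels inside a binary mask and averages their attenuation values. The function name, the nested-list data layout, and the voxel spacing are assumptions made for this example and do not describe the software used for the figure.

```python
def lesion_volume_and_mean_hu(ct_volume, mask, spacing_mm):
    """Return (volume in mL, mean attenuation in HU) of the masked voxels.

    ct_volume and mask are nested lists indexed as [slice][row][col];
    spacing_mm is the assumed voxel size (dz, dy, dx) in millimeters.
    """
    dz, dy, dx = spacing_mm
    voxel_volume_mm3 = dz * dy * dx
    values = [
        hu
        for ct_slice, mask_slice in zip(ct_volume, mask)
        for ct_row, mask_row in zip(ct_slice, mask_slice)
        for hu, inside in zip(ct_row, mask_row)
        if inside
    ]
    if not values:
        raise ValueError("segmentation mask is empty")
    volume_ml = len(values) * voxel_volume_mm3 / 1000.0  # 1 mL = 1000 mm^3
    mean_hu = sum(values) / len(values)
    return volume_ml, mean_hu


# Hypothetical two-slice, 2x2 example with 5 mm slices and 1 mm pixels.
ct = [[[10, 20], [30, -5]], [[15, 25], [0, 40]]]
seg = [[[1, 1], [0, 0]], [[1, 0], [0, 1]]]
volume_ml, mean_hu = lesion_volume_and_mean_hu(ct, seg, (5.0, 1.0, 1.0))
print(volume_ml, mean_hu)
```

Because the volume is simply the voxel count scaled by the voxel size, any error in the stored slice thickness or pixel spacing propagates directly into the reported volume.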

Radiotherapy planning is another important field of application of AI in oncological imaging. Prostate radiotherapy is a well-established curative procedure that is moving toward the use of MRI for targeting in adaptive radiotherapy. The lack of clear prostate boundaries, tissue heterogeneity, and wide interindividual variability of prostate morphology hinder MRI-based radiotherapy planning. Among the different ML methods proposed for the automated segmentation of prostate tumors, CNNs demonstrate the most promising results. Recent studies demonstrated that CNN-based segmentation systems successfully detect prostatic abnormalities and reliably segment the gland and its subzones for subsequent precision radiotherapy.87,88 Comelli et al proposed a DL MRI prostate segmentation model that could be efficiently applied for prostate delineation even with small training datasets, with potential benefit for personalized patient management. 89
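How reliably an automated system segments the gland is usually judged by comparing its output with a reference contour. The text above does not name a metric; the sketch below assumes the Dice similarity coefficient, a widely used overlap measure, and applies it to two hypothetical flattened binary masks.

```python
def dice_coefficient(mask_a, mask_b):
    """Dice overlap 2|A and B| / (|A| + |B|) for two flat binary masks."""
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must have the same number of voxels")
    overlap = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    total = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    if total == 0:
        return 1.0  # two empty masks are treated as identical by convention
    return 2.0 * overlap / total


# Hypothetical example: an automated mask versus a manual reference mask.
automated = [1, 1, 1, 0, 0]
reference = [0, 1, 1, 1, 0]
dice = dice_coefficient(automated, reference)
print(round(dice, 3))
```

A value of 1 indicates identical masks and 0 indicates no overlap; the same function can be applied to two readers' contours to quantify inter-reader agreement.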

CT is customarily used before radiotherapy to calculate the absorbed dose by assessing the density of the irradiated tissues. However, in everyday clinical practice, most patients undergo both MRI and CT as part of their radiotherapy workup, and radiotherapy may be moving toward MRI-only acquisition with AI generation of synthetic CT images. 90 DL can be used to generate synthetic CT images from T1-weighted MRI sequences without any significant difference in dose distribution compared to standard CT imaging.91–93

Predicting Prognosis and Treatment Response

Response to treatment in solid tumors is a key element of oncological imaging. The spatial and temporal heterogeneity and complexity of tumor responses to treatment represent an ongoing challenge for oncological radiologists.94,95 The current standardized response assessment metrics, such as the tumor size changes used in the Response Evaluation Criteria in Solid Tumors (RECIST), do not reliably predict the underlying biological response. 96 For example, an initial increase in tumor size, called pseudoprogression, can be seen with immunotherapy and may reflect a favorable response to treatment. Conversely, an initial decrease in tumor size, known as pseudoresponse, may be associated with increased tumor aggressiveness, as is observed with some anti-angiogenesis agents. AI is a valuable ally for radiologists in determining more accurate methods of treatment response assessment. The prognostic value of AI models has been demonstrated for a variety of oncological fields, including breast, lung, brain, prostate, and head and neck tumors. 97 A recent systematic review and meta-analysis by Chen et al concluded that radiomics has the potential to noninvasively predict the response to and outcome of immunotherapy in patients with NSCLC. 98 Another model integrating DL radiomics features with circulating tumor cell count could predict recurrence in patients with early-stage NSCLC treated with stereotactic body radiation therapy. 99 Although AI-based radiomic approaches have not yet been implemented as a decision-making tool in the clinical setting, additional external and clinical validation can facilitate personalized treatment for patients with NSCLC.
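To make the limitation of size-based criteria concrete, the following sketch applies the target-lesion thresholds of RECIST version 1.1 (at least a 30% decrease in the sum of diameters from baseline for partial response; at least a 20% and 5 mm increase from the smallest recorded sum for progression). These thresholds come from the RECIST 1.1 guideline rather than from the text above, and the function is a deliberate simplification: it ignores new lesions, non-target lesions, and the lymph node short-axis rule. The function name and the example measurements are hypothetical.

```python
def recist_target_response(baseline_sum_mm, nadir_sum_mm, current_sum_mm):
    """Classify target-lesion response from sums of diameters (simplified).

    baseline_sum_mm: sum of target lesion diameters before treatment
    nadir_sum_mm:    smallest sum recorded so far (including baseline)
    current_sum_mm:  sum at the current assessment
    """
    if baseline_sum_mm <= 0:
        raise ValueError("baseline sum of diameters must be positive")
    if current_sum_mm == 0:
        return "CR"  # complete response: all target lesions have disappeared
    increase = current_sum_mm - nadir_sum_mm
    if increase >= 5 and increase >= 0.20 * nadir_sum_mm:
        return "PD"  # progressive disease
    if (baseline_sum_mm - current_sum_mm) / baseline_sum_mm >= 0.30:
        return "PR"  # partial response
    return "SD"      # stable disease


# Hypothetical follow-up measurements (mm).
print(recist_target_response(100, 100, 65))   # shrinkage of 35%
print(recist_target_response(100, 100, 125))  # early growth of 25%
```

The second call is labeled as progression purely on size, even though an early increase of this kind could represent pseudoprogression; this is the kind of case in which additional imaging biomarkers may help.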

Recent advances in ML algorithms have been used to develop multimodality models that accurately predict survival in individuals with breast cancer.100–102 Ha et al reported an 88% accuracy of a DL-CNN in predicting the response of breast cancer to neoadjuvant chemotherapy based on pretreatment MRI. 103 Delayed contrast enhancement on MRI of invasive HER2+ breast tumors could identify molecular cancer subtypes with a better response to HER2-targeted therapy. 104 A radiomic model predicted the pathological response to neoadjuvant chemotherapy in patients with locally advanced rectal cancer based on MRI. The performance of the model further improved when combined with standard clinical evaluation. 105 In hepatocellular carcinoma, AI can provide great benefits in patient management by predicting the response to a variety of treatments, including transarterial chemoembolization.106,107

Immunotherapy is one of the most promising tools in oncological treatment. However, despite its remarkable success rate, immunotherapy is still curbed by high costs and toxicities, while its clinical benefit is limited to a specific subset of patients. AI algorithms with integrated imaging biomarkers allow us to predict the response to immunotherapy, as well as identify early responders in order to optimize its cost-effectiveness and clinical impact. 108 For example, a radiomic signature inferred from pretreatment and posttreatment CT scans of patients with NSCLC correlated with the density of tumor-infiltrating lymphocytes and the expression of programmed cell death (PD) ligand-1 and identified early responders to immune checkpoint inhibitor therapy. 109 In a large multicenter study, a complex radiomic marker of CD8-cell infiltration predicted response to PD-1 and PD ligand-1 inhibitors. 110 Another radiomic marker based on precontrast and postcontrast CT scans and clinical data was able to predict response in patients with NSCLC undergoing anti-PD-1 immunotherapy, with an AUC up to 0.78. 111 A CT radiomic biomarker could predict response to immunochemotherapy among patients with renal cell carcinoma. 112 Moreover, AI-based models can help distinguish pseudoprogression, described above, from true progression during immunotherapy. A model combining a radiomics signature on PET/CT, tumor volume, and blood markers successfully predicted pseudoprogression in metastatic melanoma treated with immune checkpoint inhibition. 113 Finally, AI and radiomics contribute to and empower further research into cancer immunity, with the aim of better understanding the interplay of the genomic and molecular processes underlying tumor response to immunotherapy. 113
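Performance figures such as the AUC quoted above summarize how well a marker ranks responders above nonresponders. As a minimal sketch, the AUC can be computed as the fraction of responder/nonresponder pairs in which the responder has the higher score, with ties counting as one half. The labels and scores below are invented for illustration and are not data from any cited study.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise ranking (ties count as 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Hypothetical radiomic scores: 1 = responder, 0 = nonresponder.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.6, 0.4, 0.7, 0.3, 0.2]
auc = roc_auc(labels, scores)
print(round(auc, 2))
```

In this toy example 7 of the 9 responder/nonresponder pairs are ranked correctly, so the AUC is about 0.78: the marker orders most pairs correctly but still misranks roughly one in five.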

Predicting disease relapse is also crucial for appropriate treatment planning, and AI can provide benefits in this field. Prognostic models integrate genomic profiles and clinical information to stratify the risk of relapse and guide the choice of the most appropriate therapeutic strategy, in accordance with the principles of individualization in cancer treatment.114,115 Mantle cell lymphoma is an unusual lymphoid malignancy with a poor prognosis and a short duration of treatment response. Although most patients present with aggressive disease, there is also an indolent subtype characterized by the t(11;14)(q13;q32) translocation with overexpression of cyclin D1. The heterogeneity of mantle cell lymphoma and its outcomes necessitates precise prognosis prediction. A pretreatment CT-based AI model was able to predict relapse in patients with mantle cell lymphoma with an accuracy of 70%. 116

Challenges and Future Perspectives

To date, AI algorithms in oncological radiology have primarily been applied to manage common and time-consuming problems, such as breast and lung cancer screening. 117 As described in this review, however, fast-paced research and development of AI algorithms in oncological imaging have led to a rapid upscaling of their impact and an increasing focus on ultra-specialized, high-precision tasks that guide medical decisions and improve patients’ therapies. Nevertheless, several important issues must be addressed before these algorithms can be fully and successfully integrated into clinical practice.

Rigorous standards and high transparency in the development, training, testing, and validation of AI models are all essential prerequisites to make AI results reliable, explainable, and interpretable. 118 Large volumes of high-quality, representative, and well-curated data are needed for the development of robust AI algorithms, 119 as data is considered more critical than hardware and software in the success of AI applications. 120 Although the increasing demand for diagnostic imaging examinations produces an exponential buildup of imaging data, these data often lack appropriate quality verification and association with laboratory and clinical parameters and patients’ outcomes. Patient privacy and informed consent are also important ethical and legal issues that require concrete legal steps. Expertise and training are needed to correctly label and segment the imaging data used for the validation of AI algorithms. As a result, small imaging datasets are often used, reducing the impact of the results and limiting their applicability. Moreover, data used in the development of AI protocols can be affected by biases related to the clinical, social, and even geographical scenarios in which they were gathered. Limited reproducibility and generalizability of AI models are major obstacles that can reduce their performance on new datasets compared with the training data. Reproducibility of AI results is further complicated by the heterogeneity of acquisition protocols and the multitude of steps needed for the correct identification and processing of imaging features. The difficulty in prospectively collecting unbiased, good-quality, and sizable data highlights the essential role of large datasets created through multicenter and multi-institutional collaborations for training and rigorous validation of the algorithms. 121 Controlled prospective studies are needed to enable the shift from research to clinical routine. 122 This is particularly important for ML and DL algorithms that operate as “black box” models, where automated decision making cannot be directly assessed or validated by human operators. Moreover, the interest in unsupervised learning on unlabeled data is constantly rising. Increased algorithm transparency and explainability are needed before the large-scale integration of these models into clinical practice can be possible. 123

Interdisciplinary collaboration should always be part of AI work in healthcare, as it affects research results and their clinical value. The exchange of knowledge and skills between experts in different fields markedly impacts methodology, provides robustness to results, and facilitates their translation into everyday clinical practice. 124

Another important aspect that will determine the wide and routine diffusion of AI in the future is its perception and acceptance by both radiologists and patients. Healthcare specialists’ knowledge of AI has a significant impact on their willingness to learn and apply this technology in their work. 125 A survey of 1041 residents and radiologists highlighted that limited knowledge of AI was associated with fear of replacement, whereas intermediate to advanced levels of knowledge were linked with a positive attitude toward AI. 126 Therefore, dedicated training in the AI field may improve its clinical acceptance and use.

Patient education and engagement are also essential for the success of AI in clinical practice. 127 Surveys of patients’ perception of AI highlighted a generally positive attitude toward using AI-based systems, particularly in a supportive role. However, concerns about cyber-security, accuracy, and the lack of empathy and face-to-face relationships have also been raised. 128 Providing explanations of AI results is therefore crucial to ensuring patients’ trust and acceptance.

Conclusions

AI is becoming increasingly integrated into the oncological radiology workflow, and this tendency will likely continue in the future, leading to major improvements in patients’ management and quality of life. A wide variety of routine imaging tasks can be outsourced and automated thanks to AI, including disease detection and quantification and lesion segmentation.

Moreover, the use of AI radiogenomics in oncological imaging is undergoing exponential growth, contributing to the personalization and fine-tuning of oncological treatments and approaches. In the next few years, machine learning and neural network models will become a significant aid in every aspect of oncology, enabling sophisticated analysis of oncological patients and detailed disease characterization. However, AI technologies in oncological imaging have to overcome several important obstacles before they can be widely used in routine clinical practice. One of the main challenges lies in the effective organization and preprocessing of the large-scale, multi-institutional cohorts of data needed to obtain clinically reliable algorithms. Ultimately, robust AI-powered multidimensional disease profiling through imaging, clinical, and molecular data in patients with cancer will improve clinical strategies and further bridge the gap to truly personalized medicine.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Correction (December 2022):

The article was updated online to correct the article title from “Artificial Intellgence in the Era of Precision Oncological Imaging” to “Artificial Intelligence in the Era of Precision Oncological Imaging”; the captions of Figures 2 and 3 were also revised.