Abstract

The integration of digital health technologies, open-access data, and artificial intelligence (AI) is reshaping oncology by enabling more precise and personalized care. This review provides a focused update on AI, radiomics, and data integration in the context of liver oncology, with hepatocellular carcinoma (HCC) and colorectal liver metastases (CRLM) serving as key case models. Through multimodal datasets—including imaging, molecular profiles, and clinical records—AI and machine learning (ML) have demonstrated significant potential in improving early detection, risk stratification, and treatment response prediction in hepatic malignancies. Radiomics-driven tools have enabled non-invasive assessment of tumor biology, microvascular invasion, and therapeutic outcomes, particularly in HCC and CRLM. While applications in breast, lung, and non-metastatic colorectal cancers are briefly referenced for comparison, the central emphasis is on liver tumors as a representative field where AI-enabled precision oncology is rapidly advancing. Practical and ethical challenges surrounding clinical integration are also discussed, positioning liver oncology as a translational model for broader innovation in cancer care.

Introduction

In recent years, the field of oncology has undergone a profound transformation driven by the integration of emerging digital technologies, including artificial intelligence (AI), machine learning (ML), deep learning (DL), and telemedicine. These advancements are reshaping how cancer is diagnosed, treated, and monitored, contributing to a more efficient, personalized, and patient-centered model of care.1–3

AI, broadly defined as the simulation of human intelligence by machines, encompasses a variety of subfields, including ML and DL. In oncology, these methods differ not only in computational architecture but also in applicability, scalability, and interpretability.

ML techniques, such as support vector machines (SVMs), random forests, and logistic regression, are particularly effective when applied to structured clinical or molecular data with a limited number of features. These models are relatively transparent and interpretable, making them suitable for applications like biomarker-based prognostic modeling or survival analysis. However, they typically require manual feature engineering and may underperform when faced with high-dimensional unstructured data like medical imaging.
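The interpretability of classical ML models can be made concrete with a small sketch. It fits a logistic regression by plain gradient descent to synthetic structured data and reports each coefficient as an odds ratio per standard deviation. The feature names (`marker_z`, `size_z`) and the simulated effect sizes are hypothetical. In practice, a tested library implementation with proper validation would be used instead.

```python
import numpy as np

def fit_logistic_regression(X, y, lr=0.1, n_iter=5000, l2=0.0):
    """Fit a logistic regression with an intercept using batch gradient descent."""
    Xb = np.hstack([np.ones((X.shape[0], 1)), X])  # prepend intercept column
    w = np.zeros(Xb.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(Xb @ w)))       # predicted probabilities
        grad = Xb.T @ (p - y) / len(y)             # gradient of the mean log-loss
        grad[1:] += l2 * w[1:]                     # optional L2 penalty, intercept excluded
        w -= lr * grad
    return w

# Synthetic, standardized features (hypothetical): only the first one carries signal.
rng = np.random.default_rng(0)
n = 500
X = rng.normal(size=(n, 2))
true_logit = -0.5 + 1.2 * X[:, 0]
y = (rng.random(n) < 1.0 / (1.0 + np.exp(-true_logit))).astype(float)

w = fit_logistic_regression(X, y)
odds_ratios = np.exp(w[1:])  # multiplicative change in odds per 1-SD increase
for name, odds in zip(["marker_z", "size_z"], odds_ratios):
    print(f"{name}: odds ratio per SD = {odds:.2f}")
```

Each coefficient maps onto a clinically readable quantity, so a model of this form is easier to audit than a deep network. That auditability is the trade-off the paragraph describes.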

In contrast, DL, a subset of ML based on artificial neural networks with multiple hidden layers, excels at processing unstructured, high-dimensional datasets such as radiological images, whole-slide pathology scans, or genomic sequences. Convolutional neural networks (CNNs), recurrent neural networks (RNNs), and transformers have shown strong performance in tumor detection, segmentation, and classification tasks. For example, in breast cancer, CNNs have achieved radiologist-level accuracy in mammographic screening, 2 while in lung cancer, DL models have enabled early nodule detection on low-dose CT scans with high sensitivity. 3

Despite their high performance, DL models often suffer from reduced transparency (“black-box” problem), require large training datasets, and are more prone to overfitting if not properly regularized. Moreover, their interpretability remains a challenge in clinical settings where accountability and trust are essential.

Therefore, the choice between ML and DL depends heavily on data modality, volume, and the clinical question. Hybrid models are increasingly being used, such as radiogenomic pipelines that employ DL for feature extraction from images and ML classifiers for integrating multimodal features.

Understanding these distinctions is critical for evaluating the clinical utility of AI tools in oncology, particularly when comparing results across studies.

Despite the remarkable strides made in cancer diagnostics and therapeutics over the past few decades, cancer remains a major global health challenge and a leading cause of death worldwide. According to the World Health Organization, approximately 10 million cancer-related deaths occur annually, making it one of the foremost contributors to premature mortality. This enduring impact underscores the need for continued innovation and optimization in cancer care.

Projections for the coming decades paint a sobering picture. By 2040, the annual incidence of new cancer cases is expected to reach 28.4 million, representing a staggering 47% increase compared to 2020 levels. 1 This anticipated rise is attributed to a combination of factors, most notably the progressive aging of the global population, which inherently increases cancer susceptibility due to cumulative genetic mutations and diminished physiological resilience. 1

In response to this growing burden, researchers and clinicians are increasingly turning to digital innovation to improve accuracy and efficiency in oncology workflows. The integration of AI, ML and DL into oncological practice holds exceptional promise, allowing healthcare professionals to process vast datasets that include medical images, clinical records, and genomic data. These systems are capable of identifying complex patterns and correlations that may be imperceptible to human observers, enhancing diagnostic precision and supporting individualized treatment strategies.2–7

Currently, AI applications in oncology center on three core domains: medical imaging analysis, genomic data interpretation, and clinical decision support systems (CDSS). In imaging, DL algorithms in particular have shown remarkable performance in tasks such as tumor detection on mammograms, computed tomography (CT) scans, and magnetic resonance imaging (MRI) scans. Notably, a 2020 study published in Nature demonstrated that an AI system could surpass radiologists in identifying breast cancer from mammographic images, significantly reducing both false positives and false negatives. 2 In lung cancer, convolutional neural networks have demonstrated similar capabilities, detecting pulmonary nodules early on low-dose CT scans with performance rivaling expert interpretation. 3 These advances not only facilitate earlier diagnosis but also contribute to more effective treatment planning and monitoring.

In parallel, AI-driven models are increasingly used to interpret large volumes of sequencing data, identifying actionable mutations such as Epidermal Growth Factor Receptor (EGFR) in non-small-cell lung cancer or BRCA in breast and ovarian cancer, which can guide the use of targeted therapies. 7 This approach supports the development of precision oncology strategies tailored to the individual molecular profile of each patient. Moreover, AI-enhanced clinical decision support systems integrate patient-specific data with current evidence-based guidelines, enabling oncologists to make timely, personalized treatment recommendations. These systems are particularly useful in managing complex cases involving multiple comorbidities or advanced disease, where human judgment may benefit from additional data-driven support. 8

Despite these encouraging developments, challenges remain. Data privacy concerns, algorithmic bias, and the need for seamless integration into clinical workflows continue to represent significant barriers to widespread implementation. 8 Nonetheless, ongoing efforts to refine these systems, ensure fairness, and address ethical considerations are critical steps toward fully realizing the potential of AI in oncology.

In tandem with AI, other digital tools are expanding the reach of cancer care. Telemedicine has gained significant traction, breaking down geographical barriers and facilitating patient access to specialized services. At the same time, open data initiatives are enabling global collaboration, accelerating discoveries in cancer biology, and improving access to high-quality datasets for training and validation of AI models.9,10

In this review, we explore the role of artificial intelligence in oncology through a focused lens on liver malignancies, particularly hepatocellular carcinoma (HCC) and colorectal liver metastases (CRLM). These tumors exemplify the complexity of multimodal data integration, requiring advanced imaging interpretation, genomic characterization, and dynamic treatment adaptation. Rather than offering a comprehensive overview across all cancer types, we use HCC and CRLM as case studies to illustrate how AI is concretely advancing precision oncology, while also shedding light on the broader opportunities and persistent barriers to clinical implementation. This framing allows us to critically evaluate the translational maturity of AI applications and their implications for the wider field of oncologic innovation.

Harnessing AI and Radiomics for Enhanced Cancer Diagnosis and Prognosis

AI-Enhanced Imaging and Radiomics for Cancer Diagnosis and Prognostic Assessment

Traditional diagnostic methods in oncology, including radiological image interpretation, biopsy and histopathological examination, and standard biomarker evaluation, have long served as the foundation for cancer diagnosis and staging. While effective, these methods are often limited by subjectivity, interobserver variability, and a relatively narrow scope of information per modality. For instance, radiological assessment relies heavily on the experience of the radiologist and may miss subtle patterns not easily detectable by the human eye. Histopathology, while definitive, is invasive and often not feasible for real-time decision-making.

In contrast, AI-based diagnostic approaches, particularly those using radiomics and deep learning (DL), provide quantitative, reproducible analyses that can uncover high-dimensional imaging features associated with tumor biology, heterogeneity, and even genetic profiles. These technologies can process and integrate large-scale imaging, clinical, and genomic data, offering a more comprehensive view of the tumor. While AI is not a substitute for traditional methods, it represents a transformative augmentation to standard care, offering enhanced diagnostic precision and aiding in earlier, data-driven clinical decisions.

Among the most impactful developments is the application of radiomics, a discipline that transforms medical images into high-dimensional, mineable data, revealing tissue characteristics beyond human perception.11–32 When combined with AI algorithms, radiomics enables the extraction of meaningful patterns from standard imaging modalities, supporting the discovery of non-invasive biomarkers (Figure 1).

Figure 1. Schematic representation of the AI-driven workflow in liver oncology. Integration of imaging, radiomic, clinical, and genomic data allows AI to extract predictive features, supporting diagnosis, treatment planning, and prognostic stratification.

Several recent studies exemplify the successful translation of AI methodologies into clinical and preclinical healthcare domains, showcasing innovations that parallel the advances discussed in this review. For instance, Deepa et al 33 provided a comprehensive analysis of deep learning approaches for early melanoma detection, comparing algorithmic performance with traditional methods and highlighting key issues in model interpretability and data quality in dermatologic imaging.

Another study by Kamiş et al 34 explored AI integration with surgical robotics and remote monitoring to enhance breast disease management, offering insights into cross-modality data fusion and the challenges of workflow integration in operative oncology.

Bhat & Kakunje 35 provided a broad overview of AI adoption across healthcare contexts, emphasizing infrastructural barriers, clinician training needs, and the balance between technological innovation and human-centered care.

Liver Oncology: HCC and Colorectal Liver Metastases

Liver malignancies have emerged as a primary field where AI technologies are demonstrating significant clinical potential. Radiomics-based models have been applied to HCC to assess imaging phenotypes predictive of microvascular invasion (MVI), tumor differentiation, and treatment response. 19 Radiomic features derived primarily from contrast-enhanced MRI and CT allow non-invasive evaluation of intratumoral heterogeneity, perfusion patterns, and peritumoral tissue characteristics. Several studies have shown that radiomic texture features such as entropy, kurtosis, and gray-level co-occurrence matrix metrics can predict MVI preoperatively, supporting risk stratification and guiding surgical and adjuvant decisions.
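The texture features named above can be illustrated with a simplified computation on a 2D region of interest (ROI). This sketch assumes equal-width discretization into 8 gray levels and a single horizontal pixel offset for the gray-level co-occurrence matrix (GLCM). Standardized toolkits such as PyRadiomics, which follow the Image Biomarker Standardisation Initiative (IBSI) definitions, offer many more options, including 3D neighborhoods, multiple offsets, and fixed bin widths. Values from this sketch are therefore not directly comparable with published features, and the ROI is synthetic.

```python
import numpy as np

def quantize(roi, n_levels=8):
    """Map intensities to integer gray levels 0..n_levels-1 with equal-width bins."""
    lo, hi = float(roi.min()), float(roi.max())
    if hi == lo:
        return np.zeros(roi.shape, dtype=int)
    q = np.floor((roi - lo) / (hi - lo) * n_levels).astype(int)
    return np.clip(q, 0, n_levels - 1)  # the maximum value falls into the top bin

def histogram_entropy(q, n_levels=8):
    """First-order (histogram) entropy in bits."""
    counts = np.bincount(q.ravel(), minlength=n_levels)
    p = counts[counts > 0] / counts.sum()
    return float(-(p * np.log2(p)).sum())

def kurtosis(roi):
    """Pearson (non-excess) kurtosis of raw ROI intensities; a Gaussian gives about 3."""
    x = roi.ravel().astype(float)
    d = x - x.mean()
    return float((d ** 4).mean() / (d ** 2).mean() ** 2)

def glcm(q, n_levels=8, dy=0, dx=1):
    """Symmetric, normalized GLCM for one non-negative pixel offset (dy, dx)."""
    h, w = q.shape
    a = q[:h - dy, :w - dx].ravel()  # reference pixels
    b = q[dy:, dx:].ravel()          # neighbors at the given offset
    m = np.zeros((n_levels, n_levels))
    np.add.at(m, (a, b), 1)
    m = m + m.T                      # count each pair in both directions
    return m / m.sum()

def glcm_contrast(p):
    i, j = np.indices(p.shape)
    return float((p * (i - j) ** 2).sum())

def glcm_homogeneity(p):
    i, j = np.indices(p.shape)
    return float((p / (1.0 + np.abs(i - j))).sum())

# Synthetic ROI standing in for a segmented lesion (hypothetical data).
rng = np.random.default_rng(1)
roi = rng.normal(loc=100.0, scale=15.0, size=(32, 32))
q = quantize(roi)
p = glcm(q)
print(f"entropy={histogram_entropy(q):.2f} bits, kurtosis={kurtosis(roi):.2f}")
print(f"GLCM contrast={glcm_contrast(p):.2f}, homogeneity={glcm_homogeneity(p):.2f}")
```

Higher GLCM contrast and entropy indicate more abrupt gray-level changes and a more heterogeneous intensity distribution. That heterogeneity is the kind of property the MVI studies relate to tumor biology.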

Radiomics has also been applied to estimate tumor grade and predict treatment response, particularly to transarterial chemoembolization (TACE). ML-driven radiomic tools can forecast response to TACE, helping identify patients who may benefit from alternative strategies such as systemic therapy with tyrosine kinase inhibitors or immunotherapy. Integrating such models into the pre-treatment workflow could refine patient selection, personalize approaches, and improve outcomes.

In CRLM, ML models trained on CT and MRI datasets have demonstrated the ability to predict molecular features such as RAS mutational status, a key determinant for anti-EGFR therapy.17–20 Radiomics has also been employed to evaluate tumor budding, growth patterns, and recurrence risk, supporting personalized surveillance and adjuvant strategies.22–24,28

Breast Cancer

AI applications in breast cancer have gained momentum, particularly through ML-based radiomics applied to dynamic contrast-enhanced MRI. These models predict histological subtypes and tumor aggressiveness, informing personalized treatment decisions.14,29–38 Kamiş et al 34 explored AI's integration with surgical robotics and remote monitoring in breast disease management, demonstrating how cross-modality data fusion enhances clinical workflows.

Lung Cancer

In lung cancer, DL algorithms have shown excellent performance in detecting small pulmonary nodules on low-dose CT scans, achieving diagnostic accuracies comparable to experienced radiologists.3,39–42 Advanced models combining radiomics with genomic and clinical data have predicted progression-free survival in non-small cell lung cancer (NSCLC). Notably, Song et al 43 demonstrated how KEAP1 and MET mutations serve as prognostic factors. Tools like quantitative imaging decision support systems (QIDS), assessed by Fusco et al, 40 offer robust standardization in thoracic oncology interpretation.

Colorectal Cancer (Non-Metastatic)

Radiomics has also contributed to non-metastatic colon cancer management. Caruso et al 44 demonstrated that baseline CT radiomics could identify high-risk patients, guiding follow-up intensity and adjuvant strategies. These models allow stratification based on likely outcomes, reducing overtreatment and enhancing precision oncology. 45

Cross-Disease Challenges and Considerations

Despite the growing accuracy of AI-supported diagnostic tools in oncology, the potential for false positives and false negatives remains a significant clinical concern. False positives, cases in which AI incorrectly flags a benign lesion as malignant, can lead to unnecessary anxiety, further testing, and even overtreatment. Conversely, false negatives, in which malignant findings are missed, pose a greater risk by delaying diagnosis and reducing the likelihood of effective intervention. These errors may arise from limitations in training data, image artifacts, poor generalizability to external populations, or technical variability across imaging platforms. Importantly, such diagnostic inaccuracies can erode clinician confidence and hinder the integration of AI systems into routine practice.

To mitigate these risks, AI models must be thoroughly validated using large, diverse, and prospectively collected datasets, and evaluated not only for overall accuracy, but also for sensitivity, specificity, and clinical impact. Furthermore, AI should be positioned as a decision support tool, complementing but not replacing human expertise, particularly in borderline or ambiguous cases. Continuous post-deployment monitoring and feedback loops are also essential to identify model drift or failure in real-world settings.
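High overall accuracy can hide poor sensitivity, which is why evaluation should not stop at accuracy. This sketch computes the standard confusion-matrix metrics for two hypothetical classifiers on an invented test set of 1000 lesions with 5% prevalence of malignancy.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels where 1 = malignant."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn

def safe_div(num, den):
    return num / den if den else None  # None when the metric is undefined

def summarize(y_true, y_pred):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    return {
        "accuracy": safe_div(tp + tn, tp + fp + tn + fn),
        "sensitivity": safe_div(tp, tp + fn),  # share of malignant lesions detected
        "specificity": safe_div(tn, tn + fp),  # share of benign lesions correctly cleared
        "ppv": safe_div(tp, tp + fp),          # share of positive calls that are malignant
    }

# Hypothetical test set: 1000 lesions, 5% malignant.
y_true = [1] * 50 + [0] * 950
model_a = [1] * 30 + [0] * 20 + [1] * 30 + [0] * 920  # misses 20 cancers, 30 false alarms
always_benign = [0] * 1000                             # never flags anything

for name, pred in [("model_a", model_a), ("always_benign", always_benign)]:
    print(name, summarize(y_true, pred))
```

Both classifiers reach 0.95 accuracy. However, the trivial "always benign" rule has a sensitivity of 0, and `model_a` has a sensitivity of only 0.6. Only the class-specific metrics expose the missed cancers.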

However, barriers to routine clinical use remain. Model generalizability, the need for external validation, and concerns related to data standardization and interpretability continue to pose challenges. Nevertheless, the non-invasive, reproducible, and scalable nature of AI-based imaging tools underscores their potential as valuable adjuncts in multidisciplinary cancer care, particularly in liver oncology, where tissue heterogeneity and treatment complexity demand nuanced and individualized approaches.

Precision Oncology and Personalized Treatment Strategies

The evolution of personalized medicine marks a paradigm shift in oncology, moving from uniform protocols to therapeutic strategies tailored to each patient's molecular and clinical profile. Central to this evolution is the integration of genomic profiling, radiomics, and ML, enabling identification of predictive biomarkers and individualized treatment planning.

Advancements in high-throughput sequencing have facilitated the detection of actionable genomic alterations. In breast cancer, for instance, HER2 amplification has led to the development of HER2-targeted agents, significantly improving patient survival. 36 In NSCLC, EGFR mutations guide the administration of tyrosine kinase inhibitors, while in colorectal cancer, RAS and BRAF mutational statuses inform the selection of anti-EGFR or BRAF-targeted therapies. 17 Similarly, immune checkpoint inhibitor efficacy is increasingly linked to biomarkers like microsatellite instability (MSI) and tumor mutational burden (TMB). 46

AI augments these approaches by integrating radiologic, genomic, pathological, and clinical data into predictive models. A notable example is the study by Jiang et al, 47 which used radiomic features and deep learning to predict the tumor microenvironment (TME) and chemotherapy benefit in gastric cancer. Their multicenter model demonstrated strong performance (AUC: 0.909-0.937), offering non-invasive assessment of patient prognosis and potential immunotherapy responsiveness.
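The AUC values quoted above have a direct probabilistic reading. The AUC is the chance that a randomly chosen positive case receives a higher model score than a randomly chosen negative case, with ties counted as one half. A minimal sketch of that pairwise (Mann-Whitney) formulation follows. The labels and scores are invented for illustration, and the quadratic loop favors clarity over speed on large cohorts.

```python
def roc_auc(y_true, scores):
    """AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = 0.0
    for sp in pos:
        for sn in neg:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical model scores for four patients (1 = responder).
print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75: 3 of 4 pairs ranked correctly
```

An AUC of 0.5 corresponds to chance ranking, and 1.0 corresponds to perfect separation. AUC summarizes ranking across all thresholds, so it complements the sensitivity and specificity reported at a chosen operating point rather than replacing them.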

In ovarian cancer, Crispin-Ortuzar et al 48 developed a model incorporating radiogenomic features and clinical data to predict neoadjuvant chemotherapy response in high-grade serous carcinoma. The addition of radiomics improved prediction accuracy and reduced classification errors, highlighting its role in patient stratification and trial design.

Liver malignancies, particularly HCC, remain a key application area. Radiogenomic analyses have linked radiomic features with angiogenesis, epithelial-mesenchymal transition, and immune pathways—guiding personalized use of systemic therapies like sorafenib or immune checkpoint inhibitors. Radiomics has also been used to non-invasively assess microvascular invasion, tumor grade, and response to TACE, providing decision support in both curative and palliative settings.19,28

In CRLM, studies by Granata et al 17,28 demonstrated the use of radiomics for non-invasive prediction of RAS mutation status and tumor budding—parameters relevant for therapeutic decision-making and prognostic stratification. Imaging features have also been used to estimate the risk of recurrence and guide postoperative follow-up.23,24

In breast cancer, ML applied to dynamic contrast-enhanced MRI has predicted histologic subtypes and tumor aggressiveness, aiding in therapeutic planning.14,29–38

For NSCLC, AI has supported mutation detection and prognosis estimation. For example, Song et al 43 showed that models incorporating imaging and genomic features could predict progression-free survival based on MET and KEAP1 status, while Fusco et al 40 validated QIDS systems to enhance thoracic oncology workflows.

Finally, in colon cancer, Caruso et al 44 used CT-based radiomics to identify high-risk patients preoperatively, enhancing decisions regarding adjuvant chemotherapy and surveillance protocols.

This shift toward precision oncology is not limited to treatment selection. AI models increasingly inform treatment timing, dosage, and sequencing—minimizing toxicity while preserving efficacy. By aligning therapy with tumor biology and patient-specific features, AI-driven personalization enhances outcomes and supports sustainable, patient-centered care. 45

Open Data and Collaborative Networks in Oncologic Research

The expansion of open data initiatives is accelerating progress in oncology by enabling large-scale collaboration, improving access to diverse datasets, and fostering the development of advanced AI models. Through shared repositories of genomic, clinical, and imaging data, researchers around the world can work collectively to uncover novel cancer biomarkers, develop more precise diagnostic tools, and optimize treatment strategies.49–52

Major platforms such as The Cancer Genome Atlas and the Genomic Data Commons have provided foundational resources, offering comprehensive molecular and clinical data across various tumor types.53–63 These repositories have facilitated pan-cancer analyses and allowed for the identification of shared mutational signatures and cancer-driving pathways, as evidenced by projects like the Pan-Cancer Analysis of Whole Genomes. 49

However, while genomic data sharing is relatively well-established, the integration of medical imaging data into these infrastructures remains limited. A systematic review by Gabelloni et al 50 found that, among over 9000 articles analyzed, only 54 biobanks met criteria to be classified as imaging biobanks. Most were disease-specific, lacked standardization, and were accessible only upon individual request. This fragmentation limits the potential of radiomics and AI, which require large, heterogeneous imaging datasets for robust algorithm training and validation. The lack of harmonized imaging datasets with corresponding pathology and outcome data slows the development of radiomics-based biomarkers that could support non-invasive decision-making in routine clinical practice.

Nevertheless, promising initiatives are beginning to emerge. The ChAImeleon project, a multicenter and multi-country effort funded by the European Union, is creating a pan-European imaging repository focused on five major cancers, including liver and colorectal tumors. 52 The goal is to provide high-quality, annotated imaging data to fuel the development of AI tools for diagnosis, prediction, and treatment response assessment. Projects like ChAImeleon reflect a shift toward building comprehensive infrastructures that connect imaging, clinical, genomic, and pathological data within unified platforms.

Despite the benefits, open data sharing raises significant concerns around privacy, data governance, and standardization. Protecting patient confidentiality is essential, especially when dealing with sensitive genomic or imaging information that could potentially be re-identified. Initiatives such as the Global Alliance for Genomics and Health are working to establish common frameworks for secure and ethical data sharing across institutions and borders. 51

As the field evolves, further efforts are needed to promote interoperability among datasets and repositories, ensure data quality and annotation consistency, and incentivize contributions from both academic and clinical institutions.

Challenges in Implementing These Innovations

Data Privacy and Security

One of the most pressing challenges in integrating AI and open data into oncology is ensuring the privacy and security of patient information. The application of AI in healthcare necessitates the aggregation and analysis of vast quantities of sensitive data, including genomic sequences, medical imaging, and electronic health records, all of which carry significant privacy implications if not adequately protected.

Compliance with international data protection frameworks such as the General Data Protection Regulation (GDPR) in Europe and the Health Insurance Portability and Accountability Act (HIPAA) in the United States is essential to guarantee lawful and ethical handling of personal health data.64,65 These regulations mandate strict protocols for data collection, processing, anonymization, and sharing, aiming to balance research utility with individual rights.
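Keyed pseudonymization is one common building block for this kind of protected data handling. Each direct identifier is replaced by a keyed hash. Records from the same patient can then still be linked across datasets without exposing the original identifier. This sketch uses HMAC-SHA-256 from the Python standard library, and the identifier format, record fields, and key-custodian arrangement are hypothetical. Under the GDPR, pseudonymized data still count as personal data, because whoever holds the key can re-link records to patients. This step therefore reduces risk but does not on its own amount to anonymization.

```python
import hashlib
import hmac
import secrets

def pseudonymize(patient_id: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed HMAC-SHA-256 pseudonym (hex string)."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()

# In practice the key would be generated once and held by a trusted custodian,
# separate from the shared dataset (hypothetical setup).
key = secrets.token_bytes(32)
record = {"patient_id": "MRN-0012345", "diagnosis": "HCC"}  # hypothetical record
shared = {**record, "patient_id": pseudonymize(record["patient_id"], key)}
print(shared)
```

A plain, unkeyed hash would be a weaker choice. Medical record numbers come from a small, guessable space, so an attacker could hash every candidate and rebuild the mapping.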

Despite anonymization efforts, the risk of re-identification remains a concern, especially with complex datasets that include imaging or genetic markers. Sophisticated algorithms can, in certain contexts, re-link anonymized data to individual patients by cross-referencing multiple data sources. This underscores the need for continuous advancement in data encryption, access control mechanisms, and secure computational environments to prevent unauthorized access or data breaches.

Moreover, transparency with patients is critical. Individuals should be fully informed about how their data will be used, not only in direct care but also in research, AI development, or collaborative databases. Obtaining informed consent that clearly outlines data usage, storage duration, and potential future applications is an ethical obligation that should be systematically addressed.

In the context of oncologic imaging, the secure handling of large imaging datasets becomes even more critical. The cross-border nature of many AI research collaborations (eg, through platforms like ChAImeleon 52) requires robust inter-institutional agreements and standardized security protocols to ensure data is transferred, processed, and stored safely at all stages.

As AI systems become increasingly embedded in oncology workflows, the oncology community must remain vigilant in prioritizing data ethics, security infrastructure, and patient trust. Long-term adoption of digital tools depends not only on technological performance but also on their alignment with foundational principles of medical confidentiality and individual autonomy.
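Re-identification risk from indirect attributes can be quantified in several ways. One classic measure is k-anonymity: the size of the smallest group of records that share the same combination of quasi-identifiers. This sketch assumes hypothetical fields (age, sex, and a three-digit postcode prefix, `zip3`) and shows how generalizing exact age to a decade band raises k. It covers tabular metadata only. Image content and genomic sequences carry re-identification risks that k-anonymity does not capture.

```python
from collections import Counter

def k_anonymity(records, quasi_identifiers):
    """Smallest number of records sharing one combination of quasi-identifier values."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(groups.values())

def generalize_age(records):
    """Replace exact age with a decade band, e.g. 63 -> '60-69'."""
    out = []
    for r in records:
        lo = (r["age"] // 10) * 10
        out.append({**r, "age": f"{lo}-{lo + 9}"})
    return out

# Hypothetical metadata accompanying a shared imaging cohort.
records = [
    {"age": 63, "sex": "M", "zip3": "801"},
    {"age": 67, "sex": "M", "zip3": "801"},
    {"age": 61, "sex": "F", "zip3": "802"},
    {"age": 68, "sex": "F", "zip3": "802"},
    {"age": 64, "sex": "M", "zip3": "801"},
    {"age": 66, "sex": "F", "zip3": "802"},
]
qi = ["age", "sex", "zip3"]
print("k with exact age:", k_anonymity(records, qi))  # 1: every record is unique
print("k with age bands:", k_anonymity(generalize_age(records), qi))  # 3
```

Generalization trades analytic detail for privacy. The appropriate level depends on what the downstream AI model actually needs.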

Addressing Bias and Fairness in AI Models

As AI becomes increasingly integrated into oncology, algorithmic bias emerges as a critical barrier to equitable care. AI models are fundamentally shaped by the data on which they are trained. When these datasets lack diversity or fail to represent the full spectrum of patient populations, the resulting algorithms may systematically underperform for certain groups, particularly those from underrepresented racial, ethnic, or socio-economic backgrounds. 66

This phenomenon has been well-documented across multiple healthcare domains. For example, AI tools developed predominantly with data from European or North American populations have demonstrated reduced accuracy when applied to patients of African, Asian, or Indigenous descent. Such disparities in model performance can lead to misdiagnosis, delayed treatment, or inappropriate therapeutic recommendations, ultimately exacerbating existing healthcare inequalities. 67

In oncology, these concerns are especially pronounced. Variations in tumor biology, imaging characteristics, and genomic profiles across populations may not be adequately captured in homogeneous training sets. For instance, radiomics models developed using imaging data from a single demographic or institution may not generalize well to external cohorts, particularly in complex diseases where genetic and environmental factors contribute to highly variable phenotypes.

To mitigate bias, there is a growing emphasis on the inclusion of diverse and representative datasets in AI development pipelines. This includes incorporating data from multiple geographic regions, healthcare systems, and demographic groups. In addition, algorithmic fairness techniques such as reweighting, adversarial debiasing, or fairness constraints can be applied during training to ensure that model output remains consistent across different subgroups.
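Reweighting can be illustrated with the reweighing scheme described by Kamiran and Calders. Each training example receives the weight P(group) × P(label) / P(group, label). In the weighted data, the outcome label then becomes statistically independent of group membership. The group labels, counts, and outcome rates below are hypothetical. The weights would then be passed to any learner that accepts per-sample weights. Equalizing label rates in training does not by itself guarantee equal error rates, so subgroup evaluation is still needed.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Per-sample weights P(g) * P(y) / P(g, y) that decouple label from group."""
    n = len(labels)
    p_g = Counter(groups)
    p_y = Counter(labels)
    p_gy = Counter(zip(groups, labels))
    return [(p_g[g] / n) * (p_y[y] / n) / (p_gy[(g, y)] / n) for g, y in zip(groups, labels)]

def weighted_positive_rate(groups, labels, weights, group):
    num = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    den = sum(w for g, w in zip(groups, weights) if g == group)
    return num / den

# Hypothetical training set: group B is small and has few positive labels.
groups = ["A"] * 80 + ["B"] * 20
labels = [1] * 40 + [0] * 40 + [1] * 2 + [0] * 18
weights = reweighing_weights(groups, labels)

for g in ["A", "B"]:
    raw = sum(y for gi, y in zip(groups, labels) if gi == g) / groups.count(g)
    rew = weighted_positive_rate(groups, labels, weights, g)
    print(f"group {g}: raw positive rate {raw:.2f}, reweighted {rew:.2f}")
```

After reweighting, both groups show the pooled positive rate (0.42 here), and the total weight still equals the number of samples.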

Moreover, transparent reporting is essential. Developers must clearly disclose dataset composition, validation cohorts, and subgroup performance metrics. Without this information, clinicians and regulators cannot adequately assess whether an AI model is suitable for deployment in a given patient population.
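Subgroup reporting of this kind can be sketched in a few lines. The same metrics are computed separately for each subgroup, and the largest gap is reported alongside the pooled figure. The grouping variable (two hypothetical acquisition sites) and all predictions are invented. The same function would apply to demographic groups.

```python
def rate(num, den):
    return num / den if den else None

def subgroup_report(y_true, y_pred, groups):
    """Sensitivity and specificity per subgroup, plus the sample size behind each."""
    report = {}
    for g in sorted(set(groups)):
        pairs = [(t, p) for t, p, gi in zip(y_true, y_pred, groups) if gi == g]
        tp = sum(1 for t, p in pairs if t == 1 and p == 1)
        fn = sum(1 for t, p in pairs if t == 1 and p == 0)
        tn = sum(1 for t, p in pairs if t == 0 and p == 0)
        fp = sum(1 for t, p in pairs if t == 0 and p == 1)
        report[g] = {"n": len(pairs),
                     "sensitivity": rate(tp, tp + fn),
                     "specificity": rate(tn, tn + fp)}
    return report

# Hypothetical external validation across two sites.
y_true = [1] * 10 + [0] * 20 + [1] * 10 + [0] * 20
y_pred = [1] * 9 + [0] * 1 + [1] * 2 + [0] * 18 + [1] * 5 + [0] * 5 + [1] * 1 + [0] * 19
groups = ["site_A"] * 30 + ["site_B"] * 30

report = subgroup_report(y_true, y_pred, groups)
pooled = subgroup_report(y_true, y_pred, ["all"] * len(y_true))["all"]
for g, m in report.items():
    print(g, m)
print("pooled:", pooled)
sens = [m["sensitivity"] for m in report.values()]
print("largest sensitivity gap:", round(max(sens) - min(sens), 2))
```

In this invented example, the pooled sensitivity of 0.7 masks a drop from 0.9 at one site to 0.5 at the other. A single headline metric cannot reveal that kind of gap.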

Ultimately, equity in AI is not a purely technical issue, but a clinical and ethical imperative. As digital tools continue to shape the future of oncology, addressing bias should be considered a fundamental requirement, ensuring that innovation does not come at the expense of equity.

Beyond demographic and clinical representativeness, another key limitation that threatens the generalizability of AI models in oncology is overfitting. This occurs when algorithms learn overly specific patterns from training data—often due to small sample sizes or high-dimensional input features such as radiomics—and fail to replicate performance in independent or external datasets. In oncology, where clinical heterogeneity is high, overfitting can lead to false confidence in predictive accuracy, especially when models are evaluated without robust validation procedures. To address this, best practices now emphasize the use of cross-validation, external cohort testing, and feature regularization during model development, alongside transparent reporting of validation performance and dataset limitations. These steps are crucial to ensure that AI tools trained on historical data can truly support real-world clinical decision-making.
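This validation pitfall can be demonstrated on pure noise. The sketch simulates a radiomics-style setting with many more features than patients and no real signal. It then runs 5-fold cross-validation twice: once selecting features inside each training fold, and once selecting them on the full dataset before splitting. The nearest-centroid classifier, the t-like ranking score, and all sizes are illustrative choices, not a recommended pipeline.

```python
import numpy as np

def top_features(X, y, m):
    """Indices of the m features with the largest class-mean difference / overall SD."""
    mu1 = X[y == 1].mean(axis=0)
    mu0 = X[y == 0].mean(axis=0)
    sd = X.std(axis=0) + 1e-12
    return np.argsort(-np.abs(mu1 - mu0) / sd)[:m]

def nearest_centroid_predict(X_train, y_train, X_test):
    c1 = X_train[y_train == 1].mean(axis=0)
    c0 = X_train[y_train == 0].mean(axis=0)
    d1 = ((X_test - c1) ** 2).sum(axis=1)
    d0 = ((X_test - c0) ** 2).sum(axis=1)
    return (d1 < d0).astype(int)

def cv_accuracy(X, y, k, m, select_inside_folds, seed=0):
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    leaked = None if select_inside_folds else top_features(X, y, m)  # sees test data
    correct = 0
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        feats = top_features(X[train_idx], y[train_idx], m) if select_inside_folds else leaked
        pred = nearest_centroid_predict(X[train_idx][:, feats], y[train_idx],
                                        X[test_idx][:, feats])
        correct += int((pred == y[test_idx]).sum())
    return correct / len(y)

rng = np.random.default_rng(42)
n, p = 100, 2000                 # few patients, many radiomic-style features
X = rng.normal(size=(n, p))      # pure noise: nothing real to learn
y = rng.integers(0, 2, size=n)
proper = cv_accuracy(X, y, k=5, m=20, select_inside_folds=True)
leaky = cv_accuracy(X, y, k=5, m=20, select_inside_folds=False)
print(f"feature selection inside folds: {proper:.2f}")
print(f"feature selection on all data (leaky): {leaky:.2f}")
```

With correct in-fold selection, the estimate should hover around chance (0.5). The leaky variant typically reports a much higher accuracy even though there is nothing to learn. This is the optimistic bias that external validation is meant to expose.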

Integration with Clinical Workflows

While AI continues to show promise in enhancing cancer diagnosis, prognosis, and treatment planning, effective integration into clinical workflows remains one of its most significant challenges. The transition from research models to practical, real-time clinical tools requires more than technological readiness; it demands alignment with clinical routines, trust from healthcare professionals, and infrastructure capable of supporting AI-driven decision-making.

One of the foremost concerns among clinicians is the interpretability and reliability of AI outputs. Many current AI systems, particularly those based on DL, function as “black boxes,” providing predictions or classifications without a clear explanation of the underlying reasoning. In complex, high-stakes fields such as oncology, where treatment decisions have profound consequences, physicians may be reluctant to rely on models whose inner workings they cannot fully understand or interrogate. 68

This limited transparency is a major obstacle to clinical adoption. Outputs such as malignancy scores or treatment recommendations are typically delivered without the underlying reasoning or feature importance. Such opacity can reduce clinical trust, complicate regulatory approval, and limit the ability of physicians to justify AI-assisted decisions to patients or multidisciplinary teams. In a field where treatment plans are life-altering, clinicians require tools they can interrogate and understand, not just accurate predictions.

To address these issues, recent efforts have focused on the development of explainable AI (XAI) techniques. These include heatmaps or saliency maps in imaging, feature attribution scores in radiomics, and model-agnostic interpretation frameworks such as SHapley Additive exPlanations (SHAP) and Local Interpretable Model-agnostic Explanations (LIME). 69 Such methods help visualize how individual input features influence model predictions, improving transparency and accountability. However, gains in explainability can come at the cost of predictive performance or added complexity, and there is no universal standard for what constitutes a clinically acceptable level of interpretability. Striking the right balance between predictive power and transparency remains an active area of research and a prerequisite for the ethical integration of AI in oncology.
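The attribution idea behind SHAP can be shown with exact Shapley values for a toy model with three inputs. Each feature's contribution is its weighted average marginal effect over all coalitions of the other features. Features outside a coalition are set to a baseline value. This brute-force enumeration grows exponentially with the number of features and is not the `shap` library's API. The model, feature values, and baseline are hypothetical. Practical SHAP implementations approximate or specialize this computation for larger models.

```python
from itertools import combinations
from math import factorial

def shapley_values(f, x, baseline):
    """Exact Shapley attributions of f(x) - f(baseline) by enumerating all coalitions."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            for coalition in combinations(others, size):
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                present = set(coalition)
                without_i = [x[j] if j in present else baseline[j] for j in range(n)]
                with_i = list(without_i)
                with_i[i] = x[i]
                phi[i] += weight * (f(with_i) - f(without_i))
    return phi

# Hypothetical risk score over three standardized radiomic features,
# with an interaction between the first two; the third feature is ignored.
def risk_score(z):
    return 2.0 * z[0] + 1.0 * z[1] + 0.5 * z[0] * z[1]

x = [1.0, 2.0, 5.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(risk_score, x, baseline)
print(phi)  # approximately [2.5, 2.5, 0.0]: the interaction term is split equally
print(sum(phi), risk_score(x) - risk_score(baseline))  # attributions sum to the prediction shift
```

The unused third feature receives zero attribution, and the attributions add up exactly to the change in prediction relative to the baseline. These properties make Shapley-based explanations attractive for auditing radiomic models.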

Moreover, the lack of digital literacy and training among healthcare providers can hinder adoption. Most oncologists and radiologists have not received formal education in data science or AI technologies. Without structured training programs and user-friendly interfaces, the integration of AI into daily practice risks being superficial or even counterproductive. Overcoming this barrier requires not only the development of intuitive tools, but also ongoing professional education that empowers clinicians to interpret and confidently apply AI-driven insights.

Technical issues also persist. Integrating AI systems into existing hospital infrastructures and electronic health records (EHRs) often requires significant customization and interoperability work. Without seamless integration, AI tools may remain isolated from the broader clinical ecosystem, limiting their utility and uptake. 70

There is also a concern regarding workflow disruption. If AI tools are not designed with real-world clinical pacing and decision-making in mind, they risk introducing inefficiencies rather than solving them. For example, if radiomics platforms require manual data export or extra steps outside the standard imaging workflow, clinicians may be discouraged from incorporating them into routine assessments regardless of the model's performance.

Lastly, medico-legal uncertainty adds another layer of complexity. The question of accountability in cases where AI influences patient outcomes, especially adverse ones, remains unresolved in many regulatory environments. As a result, some clinicians may be hesitant to rely on AI-generated recommendations in the absence of clear legal and ethical frameworks.

To move from proof-of-concept to standard of care, future efforts must focus on co-designing AI systems with clinicians, ensuring alignment with established workflows, fostering interpretability, and clarifying responsibilities. Only through such integrated development can AI tools become not just innovative, but also indispensable and trusted partners in cancer care.

In parallel, a critical yet often overlooked barrier to clinical adoption of AI lies in the heterogeneity and limited standardization of medical data across institutions. Variability in imaging protocols, scanner vendors, acquisition parameters, and annotation methods can significantly affect the consistency of extracted radiomic features. These inconsistencies reduce model reproducibility and limit external validation, ultimately undermining clinical trust. Additionally, the lack of harmonized clinical data models and shared ontologies complicates the integration of multi-source datasets, spanning radiologic, genomic, and pathological domains, into unified AI frameworks. Efforts toward data harmonization, international reporting standards, and multicenter collaboration will be essential to ensure AI systems are generalizable and clinically reliable across diverse settings.
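As an illustration of what harmonization means at the feature level, the sketch below applies a simplified location-scale adjustment to one radiomic feature measured at two hypothetical sites. It captures the core idea behind ComBat-style methods used in radiomics, namely removing site-specific shifts in mean and variance, but it omits empirical Bayes shrinkage and covariate protection, so it should be read as a teaching example under these assumptions rather than a validated harmonization pipeline. The feature values and site labels are invented.

```python
from statistics import mean, pstdev


def harmonize(values, sites):
    """Simplified location-scale harmonization of one feature across sites.

    Each value is z-scored within its own site and mapped back onto the
    pooled mean and standard deviation. Full ComBat additionally uses
    empirical Bayes shrinkage and can preserve biological covariates;
    neither is implemented here.
    """
    pooled_mean, pooled_sd = mean(values), pstdev(values)
    site_stats = {}
    for site in set(sites):
        site_values = [v for v, s in zip(values, sites) if s == site]
        site_stats[site] = (mean(site_values), pstdev(site_values))

    harmonized = []
    for v, s in zip(values, sites):
        site_mean, site_sd = site_stats[s]
        # Guard against a site with zero spread (e.g. a single case).
        z = (v - site_mean) / site_sd if site_sd > 0 else 0.0
        harmonized.append(pooled_mean + z * pooled_sd)
    return harmonized


# Hypothetical texture feature: site B's scanner reads systematically higher
# and with more spread than site A's for comparable lesions.
values = [10.0, 12.0, 14.0, 20.0, 24.0, 28.0]
sites = ["A", "A", "A", "B", "B", "B"]
harmonized = harmonize(values, sites)
# After adjustment, both sites share the same mean and spread.
```

Because this adjustment assumes that between-site differences are purely technical, applying it when sites also differ in patient case mix would remove genuine biological signal; full ComBat addresses this by modeling biological covariates explicitly.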

Beyond individual technological limitations, the systemic integration of AI tools into existing clinical workflows poses a significant challenge. Current oncology care pathways are often rigid, time-sensitive, and highly protocolized, leaving little room for incorporating complex algorithms that require additional processing time, user training, or data formatting. Moreover, most hospital information systems, including Picture Archiving and Communication System (PACS) and EHRs, were not designed with AI interoperability in mind, leading to frequent compatibility issues and fragmented implementations. Clinical teams often lack dedicated support for information technology maintenance or AI calibration, which may result in underutilized or abandoned tools after pilot phases. Importantly, the absence of unified standards for how AI output should be displayed and interpreted in clinical environments adds to the variability in adoption. To ensure sustainable integration, AI systems must be co-developed with clinicians, embedded into real-time decision-making interfaces, and rigorously evaluated for workflow efficiency, not just model accuracy.

Practical Barriers to Sustainable Implementation of AI in Oncology

Despite the growing enthusiasm around AI and digital health tools in oncology, several operational, regulatory, and infrastructural challenges may limit their long-term adoption and effectiveness in real-world clinical settings.

Sustainability and System Maintenance

AI platforms require ongoing updates, recalibration, and support to remain clinically relevant. This includes adapting to changes in imaging protocols, integrating evolving clinical guidelines, and addressing software-hardware compatibility. Without structured investment in long-term IT infrastructure and maintenance, particularly in low-resource settings, these tools risk obsolescence or inconsistent performance. 71

Standardization of Validation Procedures

The lack of standardized validation protocols for AI models presents a significant barrier to clinical adoption. Many studies rely on retrospective single-institution datasets with internal validation only, which limits external generalizability. There is growing consensus around the need for transparent and reproducible frameworks such as TRIPOD-AI, CONSORT-AI, and SPIRIT-AI to guide AI model evaluation and reporting. 49

Regulatory Complexity

The clinical implementation of AI tools remains hindered by evolving and heterogeneous regulatory requirements. Agencies such as the FDA and EMA are still defining approval processes for adaptive algorithms, including standards for explainability, performance drift monitoring, and software-as-a-medical-device classification. This uncertainty may delay integration into routine clinical pathways.

Cost-Effectiveness and Resource Burden

Although AI is often proposed as a cost-saving solution, its deployment typically requires high initial investment in IT systems, digital infrastructure, personnel training, and integration efforts. Moreover, cost-effectiveness data are still limited and context-dependent, varying by tumor type, healthcare setting, and population. 72 Health economic assessments are urgently needed to support evidence-based decision-making regarding AI adoption in cancer care.

Training and Digital Literacy Requirements

Successful integration of AI into oncology demands a well-prepared clinical workforce. However, most physicians have limited training in data science and algorithmic reasoning. Without dedicated educational programs and intuitive AI interfaces, there is a risk of misinterpretation, underutilization, or outright resistance among clinicians. 73

Limited Generalizability Across Clinical Settings

A critical limitation in AI-based oncology studies is the lack of generalizability across different clinical environments. Most models are trained on datasets derived from specific institutions, often using standardized imaging equipment, protocols, and relatively homogeneous patient populations. These models may perform suboptimally when deployed in settings with different scanners, patient populations of different ethnic backgrounds, or different disease presentations. Without multi-institutional validation, there is a risk that AI tools optimized for one environment will fail to translate effectively into broader clinical practice.

Addressing these interconnected barriers is essential to transition AI from research applications to sustainable and equitable clinical tools. Future research, policy development, and clinical integration strategies must account not only for algorithmic performance, but also for infrastructure, regulation, economics, and clinical heterogeneity, to ensure long-term, patient-centered benefit.

Expanded Cross-Cutting Barriers to AI Implementation in Oncology

In addition to technical and infrastructural limitations, several critical cross-cutting barriers continue to affect the responsible adoption of AI technologies in oncology.

First, the heterogeneity of imaging protocols, molecular testing platforms, and data annotation practices introduces substantial challenges to reproducibility and model transferability across institutions. Differences in scanner models, acquisition parameters, biomarker assays, and genomic platforms can lead to variability in AI performance, highlighting the need for data harmonization frameworks and domain adaptation techniques to ensure model robustness across clinical settings. 74

Second, limited access to high-quality imaging and molecular diagnostics in low-resource or underserved settings raises concerns regarding the equitable distribution of AI benefits. AI models developed and validated in high-income environments may not translate well to contexts with lower diagnostic capabilities, risking disparities in performance and clinical utility. 75

Third, algorithmic bias remains a significant concern, particularly when training data do not adequately represent diverse patient populations. This can lead to systematic underperformance in subgroups based on race, gender, socioeconomic status, or geography, with direct implications for diagnosis, treatment selection, and outcomes. Addressing these biases requires inclusive data sourcing and the implementation of fairness-aware modeling techniques. 75

Fourth, patient acceptance of AI-driven healthcare interventions is often overlooked. Patients may express concerns about transparency, data privacy, and the dehumanization of care, especially in sensitive fields like oncology. Trust, shared decision-making, and clear communication about the role of AI are essential for meaningful clinical integration.75,76

Lastly, the legal and regulatory landscape surrounding AI-based diagnostics remains unclear in many jurisdictions. Questions of accountability, particularly in the event of misdiagnosis or harm, are unresolved, creating medico-legal uncertainty for clinicians, developers, and institutions alike. Establishing regulatory clarity and liability frameworks is therefore fundamental to the safe and responsible deployment of AI systems in cancer care.75,76

Ethical Considerations

The integration of AI into cancer care introduces a wide range of ethical challenges that extend beyond data privacy and clinical performance. As these technologies increasingly influence diagnosis, treatment selection, and prognostic evaluation, concerns regarding autonomy, accountability, and transparency become central to their responsible implementation.

One of the most debated issues is the “black box” nature of many AI models, particularly DL systems. These models often produce highly accurate outputs without offering clinicians or patients a clear explanation of how decisions are made. In oncology, where trust, informed consent, and shared decision-making are essential pillars of care, this lack of explainability can erode confidence in AI-generated recommendations.16,77,78 Clinicians may hesitate to act on predictions that cannot be interpreted or challenged, especially in life-altering treatment scenarios.

This concern has led to increasing interest in the development of explainable AI (XAI), an area focused on making AI decision-making processes more transparent and understandable to end-users. By providing visualizations, feature importance rankings, or rule-based logic, XAI seeks to enhance interpretability without compromising performance. Organizations such as the Italian Society of Medical and Interventional Radiology (SIRM) have highlighted explainability as a critical step toward improving clinical trust in radiological AI tools. 78

Another key ethical issue relates to accountability. When an AI system contributes to a treatment decision that results in harm, it remains unclear who bears responsibility: the clinician who accepted the recommendation, the institution that implemented the tool, or the developers who trained the algorithm. This lack of clarity complicates risk management and has legal implications that are not yet well-defined in most jurisdictions. 77

There is also a risk that automation bias, the tendency to over-rely on machine-generated outputs, could affect clinical judgment. Particularly in high-pressure environments, clinicians might defer too readily to algorithmic suggestions, potentially overlooking contextual factors or patient preferences. Ensuring that AI remains a supportive tool rather than a replacement for clinical reasoning is essential to preserving the ethical integrity of care.

Finally, the equitable distribution of AI benefits should be considered. As discussed in the previous section, biases in training data can result in AI models that disproportionately fail certain populations. Ethically, there is an obligation to ensure that AI does not reinforce existing disparities but rather contributes to a more inclusive and just healthcare system.

To address these concerns, ethical frameworks should be developed alongside technological innovation. These should establish clear guidelines on transparency, accountability, consent, and equity, and involve stakeholders including clinicians, ethicists, patients, and regulators in the design and deployment of AI systems.

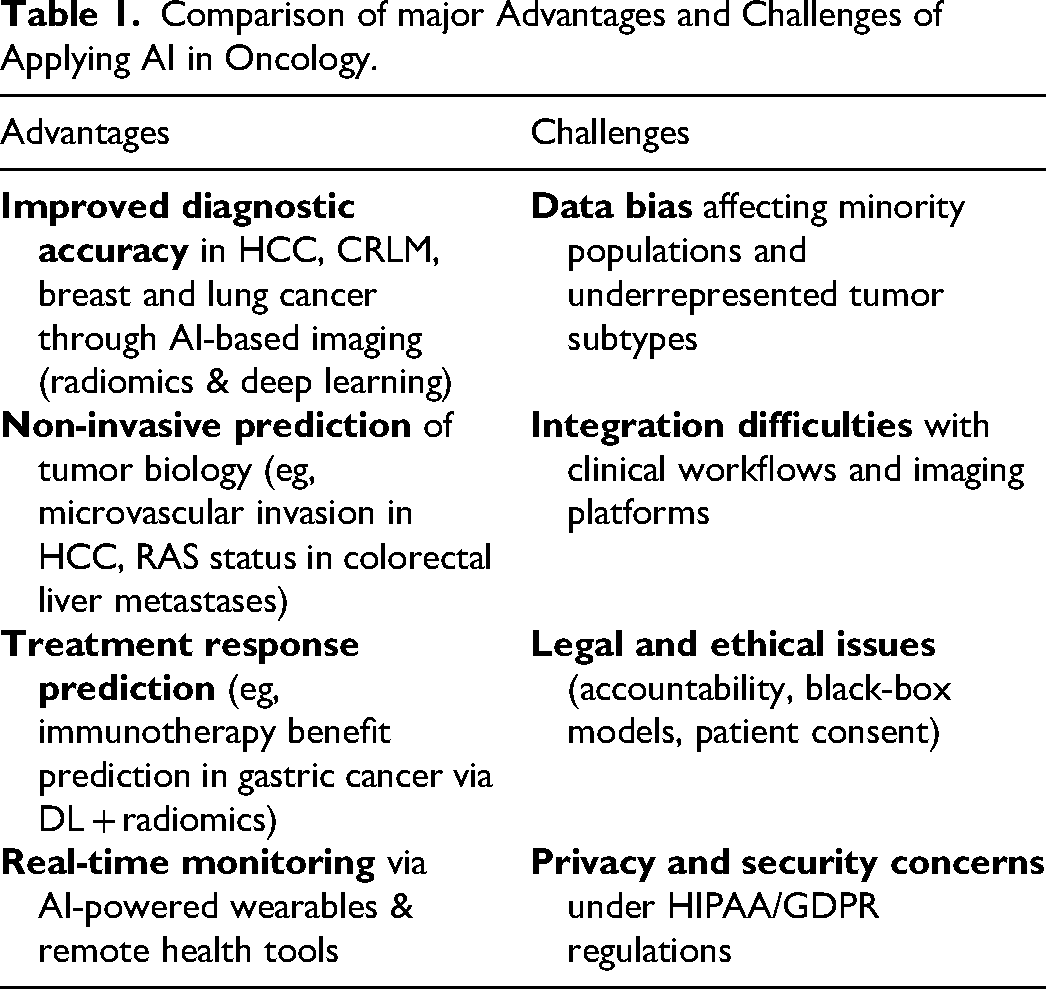

In summary, the ethical deployment of AI in oncology demands more than technical rigor; it requires a human-centered approach that respects the values of trust, fairness, and shared responsibility at the core of clinical care (Table 1).

Table 1 Comparison of Major Advantages and Challenges of Applying AI in Oncology

The ethical dimensions of data sharing in oncology represent a critical challenge in the era of AI-driven medicine. While large, diverse datasets are essential for training robust and generalizable AI models, the process of aggregating data across institutions and borders raises serious concerns regarding patient privacy, data ownership, and informed consent. Many existing datasets used in AI development are derived from retrospective cohorts without explicit patient permission for secondary use in machine learning research. Furthermore, the de-identification of imaging and clinical data, though standard, is not infallible, particularly when AI models can potentially re-identify individuals through cross-modal analysis.

There is also an ethical tension between promoting open science and protecting individual autonomy. Institutions and researchers must navigate complex regulatory frameworks such as GDPR in Europe and HIPAA in the United States, which often differ in scope and interpretation. In low-resource settings, inequities in data access and control can further reinforce global disparities, raising questions about fair representation in model training and benefit sharing from AI-driven discoveries.

To ethically deploy AI in oncology, it is essential to develop transparent governance models for data sharing that respect patient rights, ensure accountability, and promote equitable collaboration. These should be supported by clear data stewardship policies, standardized consent mechanisms, and the inclusion of patient representatives in AI development and oversight processes.

While AI and digital health tools hold promise for transforming oncology care, there are growing concerns that their benefits may not be equitably distributed across patient populations. Disparities in access to digital infrastructure, such as high-speed internet, smartphones, or wearable monitoring devices, can limit the utility of AI-supported systems among underserved or socioeconomically disadvantaged groups. For example, rural populations, elderly patients, or individuals with low digital literacy may face barriers to participating in telemedicine programs or remote symptom tracking platforms. These access gaps risk exacerbating existing health inequities, particularly when clinical pathways or research protocols begin to rely on digital tools as standard components of care.

To avoid deepening the digital divide in oncology, developers and policymakers must adopt inclusive design strategies, ensure the availability of alternative care pathways, and support targeted interventions, such as device provision, digital literacy programs, and multilingual AI interfaces. Equity in digital health must be treated not as an afterthought, but as a core design and implementation principle to ensure that innovations benefit all patients, not just the digitally connected.

While open-access datasets are essential for fostering innovation and improving the generalizability of AI models in oncology, they raise important concerns regarding data privacy and potential re-identification risks. Even anonymized medical imaging and clinical data may be vulnerable when cross-referenced across datasets or combined with external information, particularly in large-scale, multi-institutional repositories. 79 Additionally, heterogeneous consent protocols and inconsistent governance standards across international data-sharing initiatives can compromise patient autonomy and regulatory compliance. 80 To address these challenges, secure infrastructures, dynamic consent models, and privacy-preserving technologies, such as federated learning and differential privacy, should be incorporated early in AI pipeline design.
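Differential privacy can be illustrated with its simplest building block, the Laplace mechanism. The Python sketch below releases a patient count (for example, the number of cases with a given imaging finding) with calibrated random noise. The record structure, the field name `finding`, and the epsilon value are hypothetical; production systems would rely on audited libraries and careful privacy-budget accounting rather than this sketch.

```python
import random


def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponential draws with mean `scale`
    # follows a Laplace(0, scale) distribution.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_count(records, predicate, epsilon, rng=None):
    """Release a count under epsilon-differential privacy (Laplace mechanism).

    Adding or removing one patient changes a count by at most 1, so the
    sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical cohort: 90 records, 30 of them flagged for an imaging finding.
records = [{"finding": i % 3 == 0} for i in range(90)]
noisy = dp_count(records, lambda r: r["finding"], epsilon=1.0, rng=random.Random(0))
print(round(noisy, 2))
```

Smaller values of epsilon give stronger privacy guarantees but noisier releases, which makes the trade-off between privacy and data utility explicit and tunable.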

Strategic Implementation Considerations

Beyond the core technical and ethical challenges, the successful adoption of AI and digital health solutions in oncology also requires addressing several strategic and operational considerations.

One pressing issue is the lack of seamless integration between digital platforms and existing electronic health records. Most hospital information systems are not designed to accommodate AI-driven modules, often leading to fragmented workflows and duplicated data entry. Interoperability remains limited, which hinders real-time clinical utility and continuity of care. 81

Additionally, the pace of technological innovation in AI, while beneficial, poses risks to consistency and reproducibility in oncology research. Models developed today may become outdated as software libraries evolve, hardware changes, or new imaging modalities emerge, potentially making previous results difficult to replicate. 68

To navigate these challenges, interdisciplinary collaboration between clinicians, data scientists, IT engineers, and regulatory experts is essential. AI tools that are co-designed across disciplines are more likely to meet clinical needs, minimize misalignment with workflows, and address both technical and human factors that influence usability and trust.

Moreover, AI models may produce inconsistent or conflicting results when applied to different datasets due to variations in demographics, imaging protocols, or disease prevalence. Without proper external validation and calibration, this variability can limit their generalizability and reliability in real-world settings. 82

Clinician resistance also represents a substantial barrier. Factors such as lack of trust, perceived loss of autonomy, insufficient training, or fears of job displacement can delay adoption. Facilitating AI literacy and maintaining physician oversight in AI-supported decisions are crucial for acceptance and confidence-building.

Importantly, the long-term clinical benefit-risk balance of AI and open-access data tools remains underexplored. While early evidence is promising in diagnostics and treatment stratification, the potential for over-reliance, false positives, or ethical missteps warrants systematic post-deployment monitoring and outcome tracking.

Finally, AI systems are inherently prone to technological obsolescence. Continuous retraining, software updates, regulatory re-evaluation, and infrastructure upgrades are necessary to maintain clinical utility and patient safety over time. Failing to plan for this lifecycle can undermine sustainability and trust in digital oncology platforms.

Augmented Insight – Rethinking Algorithmic Bias and Ethics in AI for Oncology

While the challenges of algorithmic bias, data privacy, and regulatory uncertainty are well documented, progress has been hindered by a lack of operational frameworks within oncology to measure, monitor, and mitigate these risks at scale.

For example, although fairness-aware ML approaches have been proposed (eg, adversarial debiasing or post-processing to satisfy equalized odds), they are rarely validated in multicenter oncology datasets stratified by race, socioeconomic status, or geographic setting. Standard fairness metrics (such as the disparate impact ratio or demographic parity) are seldom reported in medical AI publications. One path forward is to establish equity reporting requirements in AI studies, similar to CONSORT-AI for trial reporting, but focused on subgroup generalizability.
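The subgroup metrics named above are straightforward to compute once predictions are stratified by group. The following Python sketch reports the disparate impact ratio, the demographic parity difference, and the true- and false-positive-rate gaps that underlie equalized odds. The binary labels, predictions, and group names are hypothetical, and the 0.8 threshold mentioned in the comments is a convention borrowed from US employment guidelines (the "four-fifths rule"), not an oncology standard.

```python
def group_rates(y_true, y_pred, groups):
    """Positive prediction rate, TPR and FPR for each subgroup (binary labels)."""
    rates = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        pos = [i for i in idx if y_true[i] == 1]
        neg = [i for i in idx if y_true[i] == 0]
        rates[g] = {
            "positive_rate": sum(y_pred[i] for i in idx) / len(idx),
            "tpr": sum(y_pred[i] for i in pos) / len(pos) if pos else float("nan"),
            "fpr": sum(y_pred[i] for i in neg) / len(neg) if neg else float("nan"),
        }
    return rates


def fairness_report(y_true, y_pred, groups, reference_group, comparison_group):
    """Fairness metrics of the comparison group relative to the reference group."""
    rates = group_rates(y_true, y_pred, groups)
    ref, comp = rates[reference_group], rates[comparison_group]
    return {
        # Ratio of positive prediction rates; the four-fifths rule flags < 0.8.
        "disparate_impact": (comp["positive_rate"] / ref["positive_rate"]
                             if ref["positive_rate"] > 0 else float("nan")),
        # Demographic parity: gap in positive prediction rates.
        "demographic_parity_diff": comp["positive_rate"] - ref["positive_rate"],
        # Equalized odds: gaps in true- and false-positive rates.
        "tpr_diff": comp["tpr"] - ref["tpr"],
        "fpr_diff": comp["fpr"] - ref["fpr"],
    }


# Hypothetical toy data: 1 = malignant / predicted malignant.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A"] * 4 + ["B"] * 4
report = fairness_report(y_true, y_pred, groups, reference_group="A", comparison_group="B")
```

In this toy example, group B receives positive predictions at one third of the rate of group A and has half its sensitivity, the kind of gap that an equity reporting requirement would make visible.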

On the regulatory side, ethical review boards and AI oversight committees remain fragmented and reactive. A proactive solution could be the creation of oncology-specific AI accreditation bodies, tasked with preclinical benchmarking, clinical validation oversight, and longitudinal monitoring of algorithmic drift.
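Longitudinal monitoring of algorithmic drift can start with simple distribution checks on model outputs. The sketch below computes the population stability index (PSI), one commonly used drift statistic chosen here for illustration (the text does not prescribe a specific metric), between risk scores observed during validation and those seen after deployment. The equal-width binning and the 0.1/0.25 alert levels in the comments are rules of thumb from credit-risk modeling, not clinically validated cut-offs, and the score samples are hypothetical.

```python
from math import log


def psi(expected, actual, n_bins=10, eps=1e-6):
    """Population stability index between two samples of scores in [0, 1].

    Uses equal-width bins; eps replaces empty-bin proportions to avoid log(0).
    Rule of thumb (assumption): < 0.1 stable, 0.1-0.25 moderate, > 0.25 major shift.
    """
    def proportions(scores):
        if not scores:
            raise ValueError("empty score sample")
        counts = [0] * n_bins
        for s in scores:
            if not 0.0 <= s <= 1.0:
                raise ValueError("scores must lie in [0, 1]")
            # Clamp so that a score of exactly 1.0 falls in the last bin.
            counts[min(int(s * n_bins), n_bins - 1)] += 1
        return [max(c / len(scores), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * log(ai / ei) for ai, ei in zip(a, e))


# Hypothetical validation scores and an upward-shifted deployment sample.
validation_scores = [i / 100 for i in range(100)]
deployment_scores = [min(s + 0.3, 1.0) for s in validation_scores]
drift = psi(validation_scores, deployment_scores)
```

A stable PSI does not guarantee stable accuracy, because outcome rates can change while score distributions do not; drift checks of this kind complement, rather than replace, periodic re-validation against ground-truth outcomes.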

In terms of data privacy, technical solutions like federated learning or homomorphic encryption are often cited, but lack clear deployment protocols in hospital environments. We argue that institutions should adopt tiered risk models for data sensitivity, enabling pragmatic trade-offs between model performance and privacy—for instance, allowing partial data localization in high-risk genomics while enabling federated analysis of de-identified imaging.
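The core aggregation step of federated learning can be sketched in a few lines. In federated averaging (FedAvg), each hospital trains a model locally and shares only its parameters and sample count; a coordinating server combines them as a sample-weighted mean. The parameter vectors and site sizes below are hypothetical, and a real deployment would repeat this step over many rounds of local training.

```python
def federated_average(site_updates):
    """One FedAvg-style aggregation step.

    site_updates: list of (parameters, n_samples) tuples, where parameters is
    a list of floats trained locally at one site. Only parameters and sample
    counts leave each site; raw patient data stay local.
    """
    total = sum(n for _, n in site_updates)
    if total == 0:
        raise ValueError("no training samples reported")
    dim = len(site_updates[0][0])
    if any(len(params) != dim for params, _ in site_updates):
        raise ValueError("all sites must share the same parameter shape")
    # Sample-weighted mean of each parameter across sites.
    return [sum(params[k] * n for params, n in site_updates) / total
            for k in range(dim)]


# Hypothetical two-hospital example: site B contributes three times more cases.
updates = [([1.0, 2.0], 100), ([3.0, 0.0], 300)]
global_params = federated_average(updates)
```

Shared parameters can still leak information about the underlying training data, so federated averaging is often combined with secure aggregation or differential privacy, in line with the tiered approach to data sensitivity proposed above.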

Lastly, ethical concerns around explainability often rest on the notion that clinicians must fully understand an algorithm's decision. However, in practice, many diagnostic tools (eg, lab assays, radiotracers) are used without full mechanistic transparency. The key issue may be less about explainability and more about accountability and traceability: knowing who approved the model, how it performed in validation, and what to do if it fails. Embedding auditability mechanisms into AI deployment pipelines is thus more practical than demanding model transparency per se.

In sum, to move from theory to transformation, oncology needs not only ethical reflection but institutional design, technical implementation, and workflow-aligned accountability structures.

Future Perspectives and Emerging Directions in Oncologic Innovation

The continued evolution of AI in oncology is expected to unlock new dimensions of clinical utility across diagnosis, treatment, monitoring, prognosis, and research.83–86 Among the most promising applications is the development of advanced predictive analytics capable of processing vast streams of patient data in real time, including vital signs, laboratory parameters, imaging findings, and treatment responses. Such AI-driven systems could support clinicians in anticipating disease progression, identifying early signs of treatment resistance, and dynamically adjusting therapeutic strategies to improve outcomes and reduce complications. The integration of data from multiple modalities, such as genomics, radiomics, and clinical records, could further enhance the precision and personalization of care.

AI is also poised to reshape the drug discovery process, traditionally a time-consuming and high-cost endeavor. By analyzing large-scale molecular and clinical datasets, AI models can identify novel therapeutic targets and predict how specific tumors, based on their genetic or epigenetic signatures, will respond to available agents. This has profound implications for precision medicine, as it enables the design of treatment regimens tailored to the unique molecular characteristics of each patient's tumor, minimizing the trial-and-error approach and accelerating the delivery of effective therapies.

In parallel, the expansion of telemedicine and remote patient monitoring is redefining the delivery of oncologic care. Wearable sensors and AI-enabled mobile applications now allow continuous tracking of physiological parameters such as heart rate, oxygen saturation, and physical activity levels.87–95 These tools provide real-time insights into patient status, facilitate early intervention in case of adverse events, and contribute to reducing hospital admissions. Moreover, digital health platforms empower patients to actively participate in their care, offering personalized recommendations on nutrition, exercise, symptom tracking, and medication adherence, ultimately enhancing autonomy and quality of life.

Another critical aspect shaping the future of oncology is the rise of global data-sharing networks. 96 As more institutions embrace open science principles, collaborative frameworks are enabling the pooling of diverse, high-quality datasets from around the world. These shared resources not only accelerate the discovery of new biomarkers and treatment targets but also improve the training and validation of AI algorithms,97–102 ensuring their applicability across different populations and clinical settings. Initiatives focused on structured, privacy-respecting data exchange will be pivotal to achieving broad-based innovation in cancer care.

In summary, the future of oncology will increasingly be driven by intelligent, interconnected systems capable of transforming large-scale data into actionable clinical insights. From predictive modeling and drug development to patient empowerment and global collaboration, AI is positioning itself as a cornerstone of next-generation cancer care, delivering more precise, efficient, and equitable outcomes for patients worldwide.103–113

Conclusion

The incorporation of AI, machine learning, and radiomics is rapidly transforming the management of liver cancers, particularly HCC and CRLM. These malignancies provide a valuable case model for understanding how digital tools can enhance diagnostic accuracy, enable individualized treatment strategies, and expand access to precision oncology. While challenges remain, including data privacy, algorithmic bias, and integration into clinical workflows, the progress achieved in liver oncology demonstrates both the promise and the limitations of AI-driven innovation. By treating HCC and CRLM as representative models, this review highlights how lessons learned in liver oncology can inform the responsible, scalable adoption of AI across other tumor types and ultimately advance the future of cancer care.

Nevertheless, the widespread adoption of these innovations is not without obstacles. Key challenges such as ensuring data privacy and security, addressing algorithmic bias, and integrating AI tools into clinical workflows must be carefully managed. Without robust ethical frameworks and interdisciplinary collaboration, the transformative potential of these technologies risks being underutilized or unevenly distributed.

Looking ahead, the ongoing advancement and refinement of AI-based systems hold the promise of a more predictive, personalized, and equitable model of oncology. By embracing these innovations within an ethically grounded and patient-centered approach, the healthcare community can significantly improve the quality, efficiency, and outcomes of cancer care on a global scale.

Acknowledgements

The authors are grateful to Alessandra Trocino, librarian at the National Cancer Institute of Naples, Italy.

Ethics Approval and Consent to Participate

Not applicable.

Consent for Publication

Not applicable.

Authors' Contributions

V.G., R.F., and M.Z. wrote the manuscript. All authors performed investigations and approved the study.

Funding

This work was funded by the European Union - Next Generation EU - NRRP M6C2 - Investment 2.1 Enhancement and strengthening of biomedical research in the NHS - CUP H63C22000440006.

Competing Interests

The authors of this manuscript declare no relationships with any companies whose products or services may be related to the subject matter of the article.

Availability of Data and Material

Data are reported in the manuscript.