Abstract

There is a need for tailored, psychometrically sound readiness assessment instruments that reflect the unique characteristics of community-based health promotion settings. We tailored the Readiness Diagnostic Scale (RDS) for use with community organizations using a health-promoting program for older adults (Choose to Move, CTM) as a case study. The 50-item RDS assesses organizational readiness across three domains: Motivation, Innovation-Specific Capacity, and General Capacity. Using a three-stage process we refined item wording for clarity during Stages 1 and 2, resulting in a 51-item prototype. In Stage 3, individuals from community-based organizations (n = 207) completed the prototype survey; data were used to assess psychometric properties of the prototype. Exploratory factor analysis supported a four-factor structure; nine items did not load on any factor and were removed, yielding a 42-item scale (RDS-CTM). Most items from original domains retained their structure; however, a new factor, General Capacity—Staff, emerged, emphasizing the role of staff in organizational readiness. The final model explained 59% of the variance, with strong factor loadings (0.48–0.92) and excellent reliability (α = .91–.95). The RDS-CTM is a practical tool for assessing organizational readiness to implement CTM. It can guide capacity-building efforts, inform resource allocation, and support decision-making around implementation support and program feasibility. Although specific for CTM, the RDS could be adapted for other health promotion programs in community settings, offering a standardized approach to readiness assessment across diverse initiatives. Future research should explore the RDS-CTM’s broader applicability and construct validation, using larger samples and confirmatory methods such as structural equation modeling.

Keywords

Background

Community-based, not-for-profit organizations play a pivotal role in the delivery of public health interventions. However, there are many barriers to implementation in this setting, such as underfunding, high staff turnover, and competing demands (Bach-Mortensen et al., 2018; Balis et al., 2022). Organizational readiness (“readiness”)—a “tangible and immediate indicator of an organization’s commitment to its decision to implement an intervention” (Damschroder et al., 2009)—plays a critical role in whether, and how well, evidence-based interventions (EBIs) are implemented (Greenhalgh et al., 2004; Weiner, 2009). Assessing readiness identifies strengths and gaps, which enables implementation support teams (“support teams”) to tailor their efforts and prioritize actions to enhance readiness. In this way, support teams help bridge the gap between research knowledge and real-world practice (Wandersman et al., 2012). Specifically, they select appropriate readiness-building implementation strategies (Proctor et al., 2013) that position organizations to adopt, implement, and sustain an EBI (Vax et al., 2022).

Selecting a Readiness Assessment Instrument

There are at least 19 valid and reliable instruments that measure readiness (Weiner et al., 2020). However, most are specific (e.g., language, content) to clinical settings and therefore not suited to the community-based, not-for-profit sector delivering health-promoting EBIs. Valid and reliable measures are foundational to implementation science. When organizations rely on instruments that lack construct validity for the unique needs and characteristics of this sector, there is a risk of misjudging readiness, which can lead to insufficient and/or ineffective implementation support, wasted resources, and ultimately, suboptimal program outcomes (Gauthreaux et al., 2024). Tailored and psychometrically sound readiness assessment instruments can support more effective program planning, tailored implementation support, and long-term sustainability—benefiting both service providers and the populations they serve.

Aims

In this article, we describe the multistage process we used to (1) tailor a readiness assessment instrument to meet the needs and priorities of not-for-profit organizations using a health-promoting EBI called Choose to Move (CTM) as a case study and (2) validate the tailored instrument by assessing its psychometric properties (factor structure validity, reliability).

Frameworks

We selected and received a copy of the original Readiness Diagnostic Scale (RDS; Domlyn & Wandersman, 2019; Durbin et al., 2022) with permission to use and modify as needed. The RDS aligns with the Interactive Systems Framework for Dissemination and Implementation (ISF; Wandersman et al., 2008, 2024)—a broader framework we used in our research to guide implementation of a health-promoting EBI for older adults (CTM described below; https://www.choosetomove.ca).

Within the ISF (Wandersman et al., 2024), readiness is understood as R = MC², which proposes that an organization’s readiness is determined by its (1) Motivation, (2) Innovation-Specific Capacities, and (3) General Organizational Capacities (Scaccia et al., 2015). The RDS was initially developed to assess readiness for health improvement processes in primary care (McClam et al., 2023; Scott et al., 2021) and has been evaluated for construct and criterion validity (Scott et al., 2017). The RDS has been used, with minor changes for context (Domlyn et al., 2021; Durbin et al., 2022), in various clinical, behavioral, and public health contexts. These settings generally have more structure, funding, and capacity than not-for-profit community organizations (Dias et al., 2023; Durbin et al., 2022; Scott et al., 2024). To date, no studies have described a full tailoring process with follow-up psychometric testing within the not-for-profit, health promotion sector.

Our validation procedures were guided by Messick’s unified theory of validity (Messick, 1993), a foundational framework in educational and psychological measurement since the 1980s (Hubley & Zumbo, 2011; Zumbo et al., 2002). This modern view shifted the traditional understanding of validity from a collection of separate types (e.g., content, construct) to a single unified concept focused on construct validity: the degree to which an instrument accurately measures the theoretical construct it is intended to measure (Hubley & Zumbo, 2011; Zumbo et al., 2002). According to Messick’s framework, construct validity depends on five sources of validity evidence: content, response process, association among instrument scores and other variables, consequence, and factor structure (Hubley & Zumbo, 2011; Zumbo et al., 2002). Here, we focus primarily on factor structure validity of the RDS to obtain evidence supporting its construct validity.

CTM

CTM is a choice-based, coach- and peer-supported health promotion program for low-active, community-dwelling older adults. Within CTM, there are two intervention components: a 30-minute one-on-one consultation between older adults and an activity coach, and eight group meetings (online or in person) with other CTM participants, facilitated by an activity coach. Participation in CTM enhanced physical activity and mobility, and diminished social isolation and loneliness in older adults (McKay et al., 2018, 2021, 2023; Nettlefold et al., 2024). We have used a phased approach to scale-up (2015–2025+) CTM across British Columbia (BC), Canada, engaging >7800 (as of March 2025) older adults.

CTM is delivered by large and small, not-for-profit, community organizations. Large organizations (n = 2) have broad geographic reach across BC through recreation centers (n = 82) to implement CTM (McKay et al., 2018, 2021, 2023; Nettlefold et al., 2024). In 2018, we began working with small organizations (n = 28; e.g., neighborhood houses, seniors’ centers) that had reach across BC through community sites (n = 38). These organizations reach older adults with many intersecting structural barriers to health (e.g., populations underserved due to race, ethnicity, socioeconomic status, age, and/or geography). A CTM support team is embedded within the Active Aging Society (AAS; www.activeagingsociety.org), a not-for-profit organization with a mission to promote older adults’ physical and social health. The support team uses a suite of implementation strategies to build organizations’ readiness to adopt and implement CTM. In general, small organizations required additional support to better “fit” their local context and resource needs (Sims-Gould et al., 2022). The support team assessed readiness informally, via ad hoc, one-on-one conversations with organizational staff. To improve efficiency and promote scalability (Milat et al., 2014), we aim to formalize and streamline this process using a valid and reliable readiness assessment instrument.

Method

RDS (Original)

The RDS has undergone several iterations over time (Domlyn et al., 2021; Durbin et al., 2022; Scaccia et al., 2015). Although our version is grounded in the work of Durbin et al. (2022), the structure and item counts differ slightly due to ongoing refinements made by the development team to improve conceptual clarity and applicability across diverse implementation settings. The RDS version we received contains 50 items across three domains (Supplemental Table S1). Each of the three domains comprises five to seven subdomains (19 subdomains total; Durbin et al., 2022). Briefly, Motivation is assessed using 14 items across seven subdomains (e.g., priority, relative advantage; 1–4 items per subdomain); Innovation-Specific Capacity is assessed using 12 items across five subdomains (e.g., knowledge and skills, champion; 2–3 items per subdomain); and General Capacity is assessed using 24 items across seven subdomains (e.g., culture, structure; 2–5 items per subdomain). Each item is scored from strongly disagree (1) to strongly agree (7) or don’t know (treated as missing). Scores of 5 or below at the domain, subdomain, or item level indicate areas (Durbin et al., 2022) that can be targeted for improvement (Domlyn et al., 2021).
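The scoring rule described above can be sketched in a few lines. This is a minimal illustration rather than the RDS developers' scoring procedure: the `DONT_KNOW` sentinel, the function names, and the use of a simple mean to aggregate items into a subdomain or domain score are all assumptions made for the example.

```python
from statistics import mean

DONT_KNOW = None      # hypothetical sentinel for a "don't know" response
FLAG_THRESHOLD = 5    # scores of 5 or below suggest an area for improvement

def score(responses):
    """Mean of the valid 1-7 responses; "don't know" is treated as missing."""
    valid = [r for r in responses if r is not DONT_KNOW]
    return mean(valid) if valid else None

def needs_improvement(value):
    """True when a score exists and is at or below the threshold."""
    return value is not None and value <= FLAG_THRESHOLD

# Hypothetical responses from one respondent to a three-item subdomain.
priority_items = [6, DONT_KNOW, 3]
subdomain_score = score(priority_items)
flagged = needs_improvement(subdomain_score)
print(subdomain_score, flagged)
```

The same two functions could be applied to a single item, a subdomain, or a whole domain, since the threshold is described as applying at all three levels.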

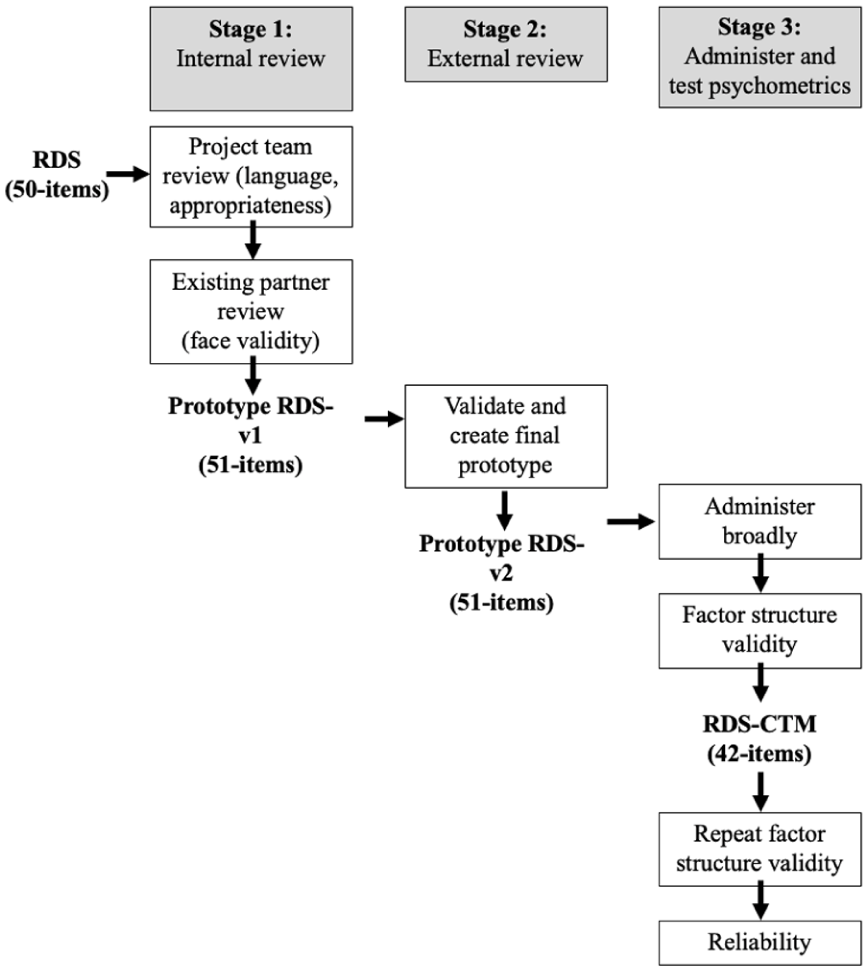

Overview of Tailoring and Testing the RDS for CTM

An overview of our multistage tailoring process is shown in Figure 1 and described in detail below. Briefly, in Stage 1, we developed an initial prototype of the survey tailored for the CTM context (RDS-v1). In Stage 2, we contracted an external not-for-profit agency (provides training and advocacy to the not-for-profit sector in BC) to review and support the creation of a final prototype, suitable for broad administration (RDS-v2). In Stage 3, we administered the final prototype (RDS-v2) broadly and used the data to test its psychometric properties. We refined the prototype based on the psychometric results and produced a final tailored version of the RDS for CTM (RDS-CTM). We conducted a follow-up evaluation of RDS-CTM psychometric properties. All data were collected and managed using Research Electronic Data Capture (REDCap) tools hosted at the University of British Columbia (Harris et al., 2009).

Figure 1. Overview of the Multistage Process to Tailor, Administer, and Test Psychometric Properties of the RDS-CTM

Participants and Procedure

Data Analysis

Results

Socio-demographic characteristics of included respondents are available in Supplemental Table S3.

Factor Structure Validity (RDS-v2; 51 Items)

The overall Kaiser-Meyer-Olkin (KMO) statistic was 0.89 [deemed meritorious (Kaiser, 1974)], indicating a good level of sampling adequacy and common variance among RDS-v2 items. Bartlett’s (1950) test of sphericity was statistically significant (χ² = 9,357.502, df = 1,275, p < .001), indicating that our correlation matrix differed from the identity matrix. Horn’s (1965) parallel analysis suggested that four factors should be retained (Supplemental Figure S1); principal axis factoring (PAF) showed that four factors explained 56% of the cumulative variance in readiness with a wide range of item loadings (Furr, 2021).
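For reference, the degrees of freedom reported for Bartlett's test follow directly from the number of items. In the standard form of the test, with p items, n respondents, and correlation matrix R (symbols introduced here for illustration, not taken from the article):

```latex
\chi^2 = -\left(n - 1 - \frac{2p + 5}{6}\right)\ln\lvert\mathbf{R}\rvert,
\qquad
df = \frac{p(p-1)}{2} = \frac{51 \times 50}{2} = 1275
```

The same expression gives 42 × 41 / 2 = 861 for a 42-item scale.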

Most items from the Motivation domain loaded onto Factor 1, while most Innovation-Specific Capacity items loaded onto Factor 2, and most General Capacity items loaded onto Factor 3. While no cross-loadings were identified, six items (isc1, isc2, isc3, isc6, gc13, and gc15) exhibited weak loadings on all four factors [<|0.45| based on our sample size of 207 (Hair, 1998; Yusoff, 2019)], and six items showed inappropriate loadings. Specifically, three items originally under Motivation (m1, m2, m14) and three items originally under General Capacity (gc19, gc20, gc21) loaded onto a new factor separate from other items in those domains. Conceptual review among the research team supported removal of the three Motivation items (m1, m2, and m14) along with the six items with weak loadings (gc13, gc15, isc1, isc2, isc3, and isc6) from further analysis (and the survey). The remaining three items in Factor 4 were identified as conceptually important and retained in the resulting survey, RDS-CTM, for a follow-up EFA (Supplemental Table S4).
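The loading-based screening described above can be expressed as a simple rule. The sketch below is illustrative only: the function name, the labels, and the example loadings are hypothetical, and treating a loading of exactly 0.45 as salient is an assumption. It does not cover the conceptual review that informed the final removal decisions.

```python
THRESHOLD = 0.45  # minimum salient |loading| cited for a sample size of 207

def classify_item(loadings, threshold=THRESHOLD):
    """Label an item from its loadings on each factor.

    Returns "weak" if no loading reaches the threshold, "cross-loading" if
    more than one does, and otherwise the 1-based index of the single factor.
    """
    salient = [i + 1 for i, value in enumerate(loadings) if abs(value) >= threshold]
    if not salient:
        return "weak"
    if len(salient) > 1:
        return "cross-loading"
    return salient[0]

# Hypothetical loadings on four factors (not values from this study).
example = {
    "item_a": [0.72, 0.10, 0.05, -0.02],
    "item_b": [0.30, 0.28, 0.41, 0.12],
    "item_c": [0.08, -0.61, 0.15, 0.03],
}
labels = {name: classify_item(values) for name, values in example.items()}
print(labels)
```

An item that loads cleanly on one factor can still be a candidate for removal if that factor is conceptually unrelated to the item, which is a judgment the rule above cannot make.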

Factor Structure Validity (RDS-CTM; 42 Items)

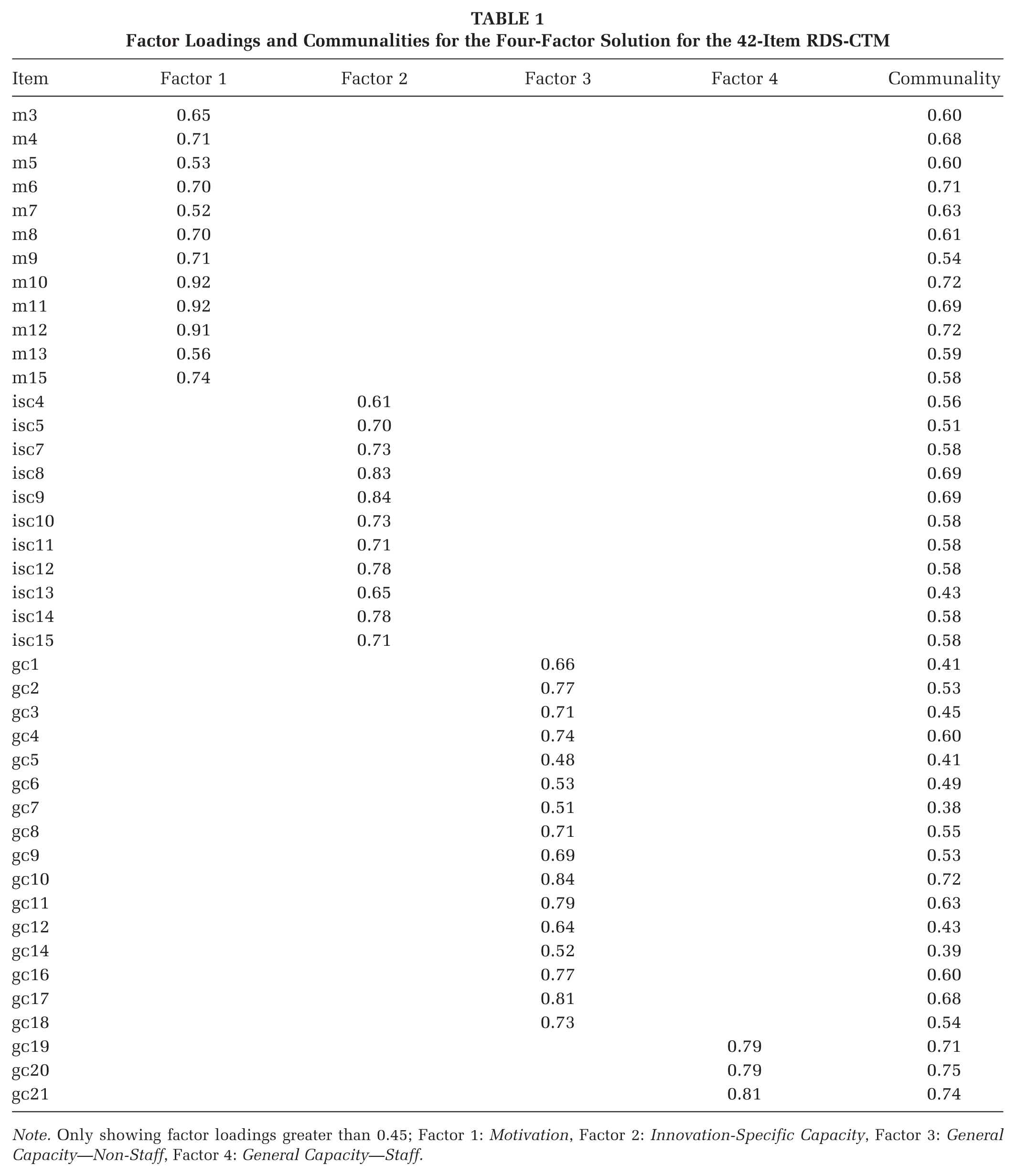

The overall KMO statistic improved to 0.90 [deemed marvelous (Kaiser, 1974)] and Bartlett’s (1950) test of sphericity remained statistically significant (χ² = 7,434.273, df = 861, p < .001). Horn’s (1965) parallel analysis continued to suggest a four-factor solution. PAF showed that the four-factor solution explained 59% (vs. 56% for the RDS-v2) of the cumulative variance for the 42-item RDS-CTM with a wide range of item loadings [Factor 1 = .52–.92; Factor 2 = .61–.84; Factor 3 = .48–.84; Factor 4 = .79–.81; all exceeding the threshold of |0.45| based on our sample size of 207 (Hair, 1998)] with no cross-loadings (Table 1). More specifically, Motivation Items 3–15 loaded on Factor 1 (Motivation hereafter), Innovation-Specific Capacity Items 4–15 loaded on Factor 2 (Innovation-Specific Capacity hereafter), General Capacity Items 1–18 loaded onto Factor 3 (General Capacity—Non-Staff hereafter), and General Capacity Items 19–21 loaded onto Factor 4. As these items focused on the number of staff and their capacity, we named this factor General Capacity—Staff. With the exception of two items with communalities near .4 (gc7, communality = .38; gc14, communality = .39), items had communalities greater than .4. This is considered acceptable [40] and suggests that the items were well explained by the retained factors (MacCallum et al., 2001).

Table 1. Factor Loadings and Communalities for the Four-Factor Solution for the 42-Item RDS-CTM

Note. Only showing factor loadings greater than 0.45; Factor 1: Motivation, Factor 2: Innovation-Specific Capacity, Factor 3: General Capacity—Non-Staff, Factor 4: General Capacity—Staff.

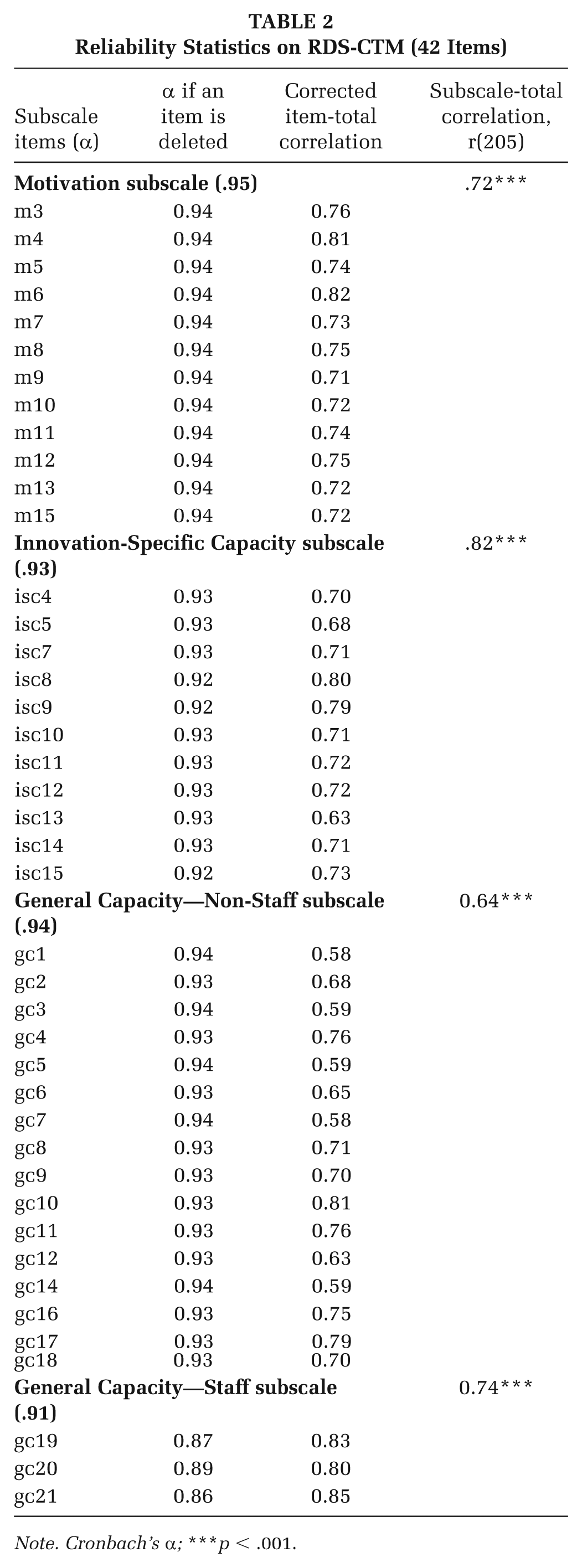

Reliability (RDS-CTM; 42 Items)

All four subscales exhibited excellent internal consistency, indicated by α > 0.90 (Field, 2013; Table 2). Internal consistency was not improved for any subscales after removal of each item individually. Corrected item-total correlations were acceptable [> 0.3 (Ferketich, 1991)]. Pearson correlations between subscale scores and the total score were strong [>0.5 (DeVellis, 2017; Furr, 2021; Rosenthal & Rosnow, 2008)] and statistically significant.
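The two item-level statistics reported above, Cronbach's α and the corrected item-total correlation, can be computed as follows. This is a minimal sketch using the textbook formulas and assuming complete responses; the function names and the small data set are hypothetical and are not drawn from the study data or the authors' analysis code.

```python
from statistics import mean, variance

def cronbach_alpha(rows):
    """Cronbach's alpha (rows = respondents, columns = items, no missing data)."""
    k = len(rows[0])
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    total_var = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def corrected_item_total(rows, j):
    """Correlation of item j with the sum of the remaining items."""
    item = [row[j] for row in rows]
    rest = [sum(row) - row[j] for row in rows]
    return pearson(item, rest)

# Hypothetical 1-7 responses: five respondents, three items.
data = [
    [7, 6, 7],
    [5, 5, 6],
    [3, 4, 3],
    [6, 6, 5],
    [2, 3, 2],
]
alpha = cronbach_alpha(data)
r_first = corrected_item_total(data, 0)
print(round(alpha, 3), round(r_first, 3))
```

Recomputing `cronbach_alpha` with one column dropped at a time gives the "alpha if item deleted" check mentioned above.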

Table 2. Reliability Statistics on RDS-CTM (42 Items)

Note. Cronbach’s α; ***p < .001.

Discussion

We describe a rigorous, multistage process to tailor and validate a readiness assessment instrument for community organizations delivering a health-promoting EBI. Our primary aim in conducting EFA was to assess the factor structure validity of the RDS and ensure it accurately captures key dimensions of organizational readiness in the context of community-based health promotion programs such as CTM. This work addresses an important gap in the literature regarding the construct validity of readiness instruments—particularly their factor structure—when used in community-based, nonclinical settings. Our study provides empirical support for the validity of the RDS-CTM, supporting its future use in this context. Our detailed description provides a comprehensive guide/template for other health promotion and implementation science researchers to assess the validity (particularly factor structure validity) of readiness instruments. Below, we discuss key findings, their implications, and areas for future research.

Importance of Tailoring Readiness Instruments

Community organizations often operate with limited resources and face unique challenges (Mazzucca et al., 2021). Thus, we must align instruments with their operational realities and constraints. Our systematic engagement with diverse experts ensured the language, content, and structure of the RDS-CTM were contextually appropriate. Decisions to retain or remove items were informed by feedback from participants, implementation science theory, and statistics (Scaccia et al., 2015; Wandersman et al., 2024). Our process underscores the value of engaging end-users early on in the research process to enhance the relevance and utility of public health intervention measures (e.g., readiness instruments; Leask et al., 2019).

Initial EFA: Removal of Items

In contrast to the original three-factor RDS, our initial EFA on the 51-item RDS-v2 extracted a four-factor structure. However, four Innovation-Specific Capacity items and two General Capacity items from the prototype were weak indicators of the extracted factors and were deemed unnecessary. Item removal decisions were informed by three criteria: factor loadings <.45, loading onto multiple factors, and loading onto a conceptually irrelevant factor. Specifically, three of the Innovation-Specific Capacity items (number, knowledge, and qualifications of staff; isc1–isc3) were deemed not essential in the CTM context. First, organizations receive funding to hire a coach to deliver CTM. Second, all coaches receive CTM training prior to delivery. Training mitigates variability in perceived competence and knowledge among staff; thus, the relevance of these items was reduced (Fixsen et al., 2005). Similarly, removal of item isc6, which asked about the committee/team responsible for CTM delivery, was justified given that direct delivery of CTM to participants is the responsibility of the coach. This aligns with research emphasizing the need for clear descriptions of roles (Aarons et al., 2011) and consistent terminology in EBI implementation (Scaccia et al., 2015). General Capacity prototype items gc13 and gc15 focused on sources of revenue and funding. The weak loadings of these items may be explained by the study sample; only 25% of respondents held leadership roles, and therefore, many respondents may have been unable to answer questions related to funding. We justified removal of these items because organizations receive funding to deliver CTM; as such, their existing revenue or funding sources may be less relevant indicators of organizational readiness.

Three items that asked about the relative advantage and priority of CTM within the organization (m1, m2, m14) loaded onto a new fourth factor—General Capacity—Staff (described below) along with three items from the original General Capacity domain. We removed items m1, m2, and m14, as they may have been challenging for staff to answer without sufficient understanding of the CTM program.

Follow-up EFA: Four-Factor Structure

The 42-item RDS-CTM demonstrated good factor structure validity. A novel finding was the emergence of a fourth factor—General Capacity—Staff—which emphasizes that readiness instruments should be sensitive to the organization and program contexts they assess. When an organization has adequate levels and appropriate types of staff and volunteers to deliver CTM, the program might be perceived as more advantageous for the organization regardless of its actual value (Damschroder et al., 2009). The separation of General Capacity items into “General Capacity—Non-Staff” and “General Capacity—Staff” reflects the nuanced readiness roles of organizational infrastructure and human resources. This aligns with previous work (Lamont et al., 2024) recognizing the difference between general capacities that describe everyday functioning of an organization and those that align with the innovation. For CTM, staff capacity is critical to plan, implement, and evaluate CTM and may explain the need for a new factor.

RDS Reliability

Overall, our study provided evidence supporting the reliability of the RDS-CTM. We assessed item-level and subscale performance to evaluate the reliability and practical utility of the RDS-CTM. Corrected item-total correlations illustrated that each item is a good indicator of readiness (DeVellis, 2017; Field, 2013). Finally, strong and statistically significant Pearson correlations between each subscale score and the total score support a theoretical relationship (DeVellis, 2017; Furr, 2021; Rosenthal & Rosnow, 2008). Despite this, future research should examine potential item redundancy, indicated by excessively high Cronbach’s alphas (α ≥ .95), particularly in the “Motivation” subscale. It may be possible to further shorten the RDS-CTM without losing meaningful information (Streiner, 2003; Tavakol & Dennick, 2011).

Study Limitations

We experienced challenges with survey administration, as some survey responses appeared fraudulent (e.g., bot responses). This highlights the need for robust survey design and administration protocols that ensure data quality. For example, techniques that assign a unique identifier to each site visitor (e.g., cookies or IP addresses) would have made data cleaning more efficient. Calculating the recruitment rate would also aid reporting and guide future promotion of the survey.

After excluding fraudulent responses, our sample size was insufficient to investigate the higher-order factor structure of the original RDS, which includes domains and subdomains that load onto a broader, higher-order factor of readiness. While EFA is well-suited for discovering the number and nature of factors, it is exploratory and not designed to directly test or estimate higher-order factor structures. Examining such complex measurement models would require a much larger sample size to enable more advanced statistical methods such as structural equation modeling (Kline, 2016).

Conclusion

Our validated, context-specific instrument can now be used to assess readiness in community organizations to implement CTM. Future use of the RDS-CTM may enhance our understanding of organizational readiness and serve as a practical resource for support teams’ readiness-building efforts. Throughout the process, end-user engagement and theoretical grounding were of paramount importance in producing an instrument that is scientifically robust and contextually relevant.

Implications for Practice

Although specific to CTM, our approach serves as a model for other community-based health-promoting EBIs. Importantly, our analysis resulted in a more parsimonious version of the RDS, reducing respondent burden for community organizations that often have limited capacity. Determining readiness to adopt and implement an EBI can guide resource allocation and tailored implementation support, enhancing implementation. When EBIs are feasible and relevant, the return on investment for funders and communities improves and sets up all actors for long-term success.

Implications for Research

Our detailed reporting of the factor structure and reliability analysis provides a clear template for how researchers can support practitioners in other contexts by either adopting the revised RDS or applying similar analytic methods to evaluate and refine readiness instruments for their own use. This work contributes to a broader movement in implementation science to ensure that the instruments guiding practice are not only rigorous but also practical, user-oriented, and contextually appropriate.

Future research could explore whether and how dynamic relationships between readiness and implementation strategies influence both program (person-level) and scale-up (implementation-level) outcomes (Koorts et al., 2025). The potential role of readiness assessments to inform real-time decision-making in implementation support could be further investigated to determine how best to optimize the impact of health-promoting initiatives.

Future research should continue to test the RDS-CTM’s construct validity using confirmatory methods that allow examination of a higher-order factor structure, and should evaluate other sources of validity evidence such as content and association with other variables. The RDS-CTM’s broader applicability across various health-promoting initiatives should also be tested. Key questions include whether the tool predicts the level and types of support organizations need and whether it helps support teams to streamline the implementation support process. Because the RDS-CTM differentiates between General (staff vs. non-staff) and Innovation-Specific Capacities, it presents an opportunity to refine how readiness is conceptualized and measured in implementation science.

Supplemental Material

Supplemental material (sj-docx-1-hpp-10.1177_15248399251387143 through sj-docx-7-hpp-10.1177_15248399251387143) for Measuring Organizational Readiness in a Community Health Promotion Program: Instrument Tailoring and Psychometric Testing by Thea Franke, Lindsay Nettlefold, Komalpreet Nandra, Joanie Sims Gould, Heather McKay and Farinaz Havaei in Health Promotion Practice

Footnotes

Authors’ Note:

We are grateful to the British Columbia Ministry of Health and the Active Aging Society for their commitment to, and ongoing support of, Choose to Move. We thank our delivery partner organizations, facility managers and coordinators, activity coaches, and all the older adults who participated in Choose to Move. We also appreciate the many contributions of staff and trainees from the Active Aging Research Team. This study received funding from a Public Health Agency of Canada grant awarded to Dr. Heather McKay and team (023638).

Author Contributions

TF and LN conceptualized the original ideas. TF and LN collected and cleaned the data. KN and FH analyzed the data. TF, KN, FH, and LN wrote the first draft of the paper. JSG and HM provided intellectual content to the manuscript. All authors provided critical edits on drafts of the article and read and approved the final version.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Ethics Approval Statement

Ethics approval for this work was granted from the University of British Columbia Behavioural Research Ethics Board (H23-00199).

References
