Abstract

Background

While failure in social marketing practice represents an emerging research agenda, the discipline has not yet considered this concept systematically or cohesively. This lack of a clear conceptualization of failure in social marketing to aid practice thus presents a significant research gap.

Focus

This study aimed to conceptualize failures in social marketing practice.

Methods

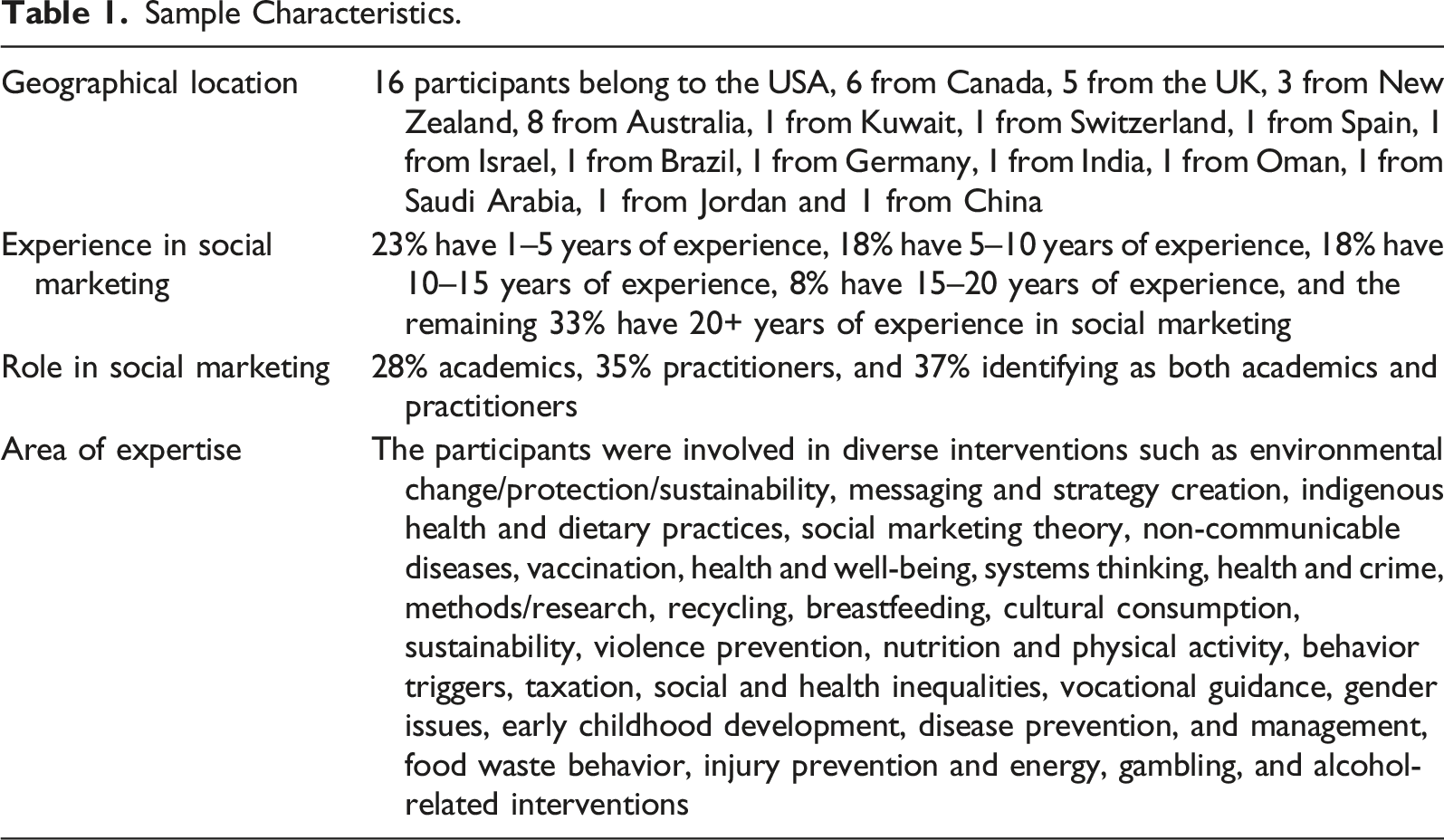

A qualitative survey was conducted using purposive sampling to solicit expert views of well-established social marketing academics and practitioners. Participants were asked to discuss failures in social marketing practice based on their experience in the field. A total of 49 participants provided their input to the survey. Thematic analysis was used to develop four themes addressing the research question.

Importance

It is widely acknowledged that reflecting on and learning from past failures to promote future best practices is desirable for any discipline. As an empirically based social change discipline, social marketing would benefit from the elevation of failure within its broader research agenda.

Results

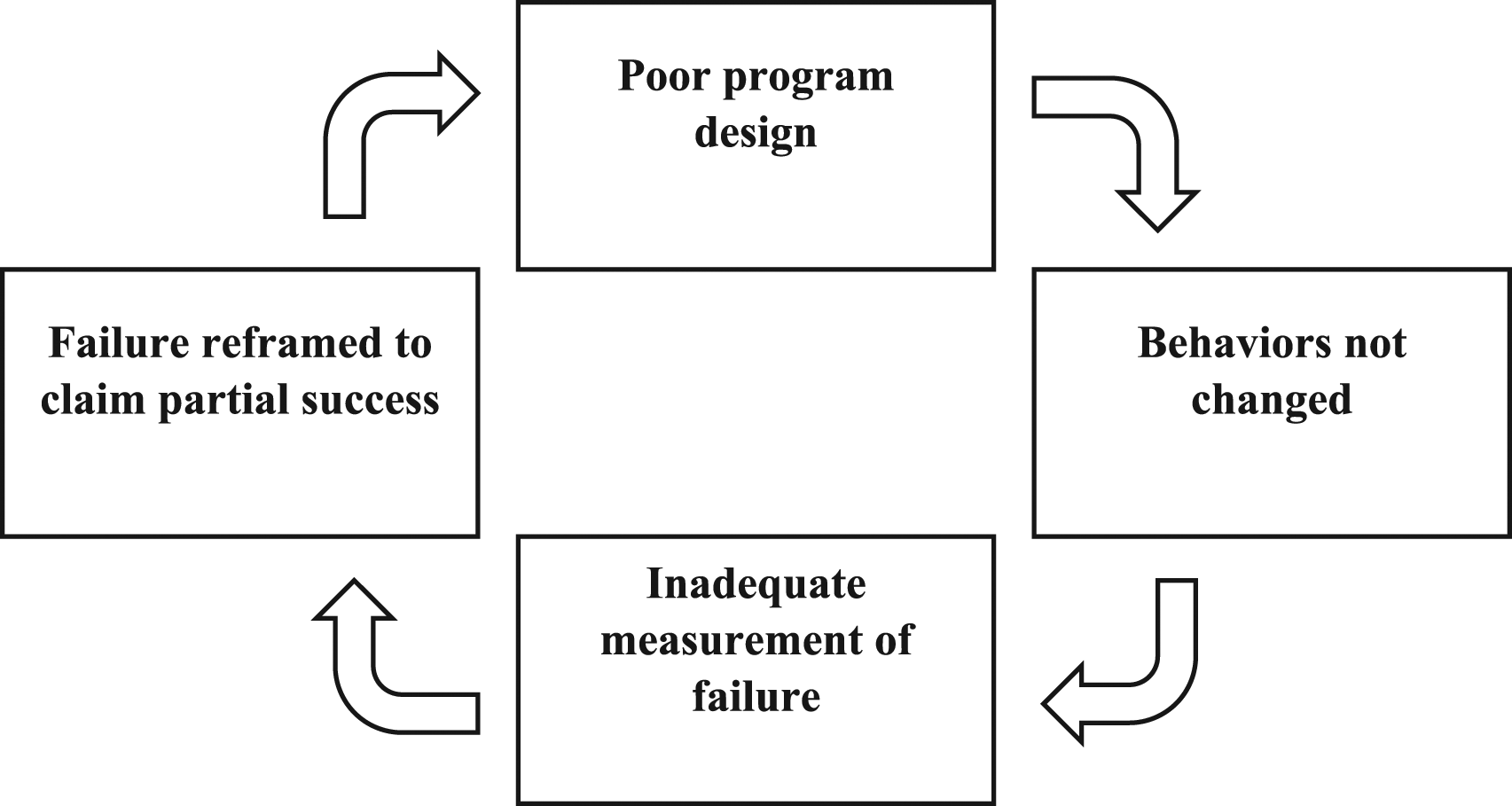

Four themes were identified: (1) Failures occur when the target behaviors are not achieved, (2) Tactics used to measure failures, (3) Process failure, and (4) Failures either not measured or reframed as lessons learned. A conceptual framework was created to characterize the nature of failures in social marketing practice, representing a feedback loop deemed problematic for the discipline.

Recommendations

We call for social marketers to explicitly acknowledge and address failures when describing and reporting on their work and project outcomes. Efforts should be made to adopt a reflexive stance and examine and address internal and external factors affecting the program’s failures.

Introduction

Learning from failure is not a new concept in many fields, such as organization and management (Baumard & Starbuck, 2005), psychology (Eskreis-Winkler & Fishbach, 2019), marketing (Kjeldgaard et al., 2021), behavioral science (Osman, McLachlan, Fenton, Neil, Löfstedt & Meder, 2020), entrepreneurship (Lattacher & Wdowiak, 2020) and human resources (Liu, You & Ye, 2020). The underlying causal pathways that characterize different types of failure expose new insights that can advance theory and practice (Osman et al., 2020). However, the culture of learning from failures in social marketing practice, requiring transparency and critical reflection upon programs that do not achieve the desired outcomes, is not well-established (Akbar, Foote, Soraghan, Millard & Spotswood, 2021a; Cook, Fries & Lynes, 2020, 2021). Unlike disciplines such as business and entrepreneurship (Kjeldgaard et al., 2021), failures are neither publicized nor celebrated in social marketing practice. Instead, there is a tendency to share successes, particularly from a program’s perspective (Akbar, Garnelo-Gomez, Ndupu, Barnes & Foster, 2021b; Lee & Kotler, 2016). While failures have recently received some attention in the field, along with illustrations of factors emerging from practice that contributed to interventions' failure (Akbar et al., 2021; Cook et al., 2020, 2021), a systematic evaluation of failures is sorely lacking.

Crucially, as with many other disciplines, social marketing programs that yield inadequate or non-significant results are not voluntarily published (Smaldino & McElreath, 2016), effectively masking null results that could have otherwise proved insightful for other streams of research and for other practitioners. The lack of publications/conversations on failures in social marketing initiatives is associated with the practitioner landscape, intersecting with accessibility, capacity, costs, and funder expectations (Akbar et al., 2021a; Parsons, MacPherson & Villagomez, 2017). In the academic world, this might result from publication bias (Cateriano-Arévalo et al., 2022; Olsson & Sundell, 2023). In the practitioner’s world, the preoccupation with providing evidence to illustrate success is often linked to funders' expectations (Gordon, Russell-Bennett & Lefebvre, 2016; Parsons et al., 2017; Veríssimo, Schmid, Kimario, & Eves, 2018) and overshadows the need to examine and report on what has not worked and why. In general, failures in social marketing practice are seen as setbacks, defeats, hurdles, and a program’s end instead of a learning opportunity. These underlying perceptions and realities demonstrate why social marketing practice would benefit from analyzing how failures are measured and conceptualized in current practice. We maintain that this is an underappreciated issue that the social marketing discipline would be well-served to elevate as a priority research agenda. Following recent thinking about failures in social marketing initiatives, this study aims to advance conversations on conceptualizing failures in the field. The data were collected from social marketers using a qualitative survey technique.

Background

Definitional and Conceptual Issues

It is widely acknowledged that there is no formal definition of failure, nor are there universally agreed-upon planning models, frameworks, or criteria in social marketing that gauge failures. However, the starting point of most discussions is critical social marketing directed toward the discipline itself (Brace-Govan, 2015; Gordon, 2011; Gordon et al., 2016; Gurrieri, Previte, & Brace-Govan, 2013). Critical social marketing is defined as “critical research from a marketing perspective on the impact commercial marketing has upon society, to build the evidence base, inform upstream efforts such as advocacy, policy, and regulation, and inform the development of downstream social marketing interventions” (Gordon, 2011, p. 89). Critical social marketing asks critical questions about the role of ethics and morals while planning and designing interventions and reflecting on approaches used for implementation. The scholarly discourse surrounding critical social marketing is not restricted to critical reflection; it also aims to identify better practice, particularly by centering emancipatory themes such as equity and social justice. However, some questions regarding failures in practice remain unanswered: How often do social marketing initiatives fail? What are the criteria for measuring failure in social marketing practice? How are failures conceptualized in social marketing? While embarking on an initial inquiry into these questions, it became apparent from the literature that failed interventions are surprisingly common (Akbar et al., 2021a; Cook et al., 2021), that their prevalence presents looming consequences for the social marketing field, and that not all 'failures' are the same (Cook et al., 2020).

It is argued that both failures and successes lay the foundation of subsequent actions on which comprehensive structures can be built (Saeed, 2009). The literature on success in social marketing presents an outward look emphasizing planning and delivering successful outcomes. Two critical success factors can be identified. First, practitioners should adopt a theoretical underpinning for designing successful social marketing interventions (Akbar et al., 2021b). Several theories and models are available to guide practitioners, such as Andreasen’s six-step criteria (Andreasen, 2002), 19-step criteria (Robinson-Maynard, Meaton & Lowry, 2013), the hierarchical model of social marketing (French & Russell-Bennett, 2015), the CBE model (Rundle-Thiele, Dietrich & Carins, 2021), and the GPDS model (Akbar & Barnes, 2023). Second, the context and structure of interventions (downstream, midstream, upstream) influence the measurement of success (Aceves-Martins et al., 2016; Coz & Kamin, 2020; Kim, Rundle-Thiele, Knox & Hodgkins, 2020; Xia, Deshpande & Bonates, 2016). This means success can be measured by assessing the intervention’s reach to the desired audience and through engagement with the messages, measuring changes in behaviors, or changes in the relevant policy. In contrast, failure factors are mainly associated with the mismanagement of parties involved in the behavior change program, suggesting an inward approach to reflect on the process used to develop the program (Akbar et al., 2021a; Cook et al., 2021).

Nevertheless, there are no clear criteria to gauge the frequency and type of failures. When planning interventions, practitioners must ask: What causal factors could be influential to the failure of the intervention? What precautionary measures should be taken to avoid failure? Without addressing these questions early on, program designers risk trialing interventions that may work but cannot be scaled to a wider population. Also, the interventions may not preserve their effects over time. When the underlying mechanisms or other relevant factors (e.g., competing behaviors or stakeholders' needs) are not fully understood, they may compete with or undo the behavioral change achieved earlier, suggesting that interventions may not be successful without understanding the reasons for the various types and nature of failures. We argue that the problem lies not just in that the questions mentioned above regarding the construct of failures in social marketing practice are unanswered but also in the conception of failure. This is because, in existing literature, failure is seen as complex and a struggle; it cannot transcend its black-and-white confines (Saeed, 2009). Trivializing the concept of failure in this manner will only cause harm to the discipline; instead, the concept should be embraced as an opportunity for learning from past experiences, correcting errors, and creating the conditions to rise higher than ever before.

Current Practice and Failures

Since the emergence of the field, the social marketing literature has presented more interest in sharing and promoting social marketing success than its antithesis of failure (Andreasen, 2006; Cheng, Kotler & Lee, 2011; Lee & Kotler, 2016; Pettigrew, Pescud & Donovan, 2012; Sampogna et al., 2017). The literature heavily focuses on best practices in social marketing in textbooks, case studies, journals, blogs, and conference papers. Evidence of success is, of course, required in all forms of marketing, and clients and policymakers will always want to see examples of what has worked well (Escalas, 2012), effectively justifying the promotion of success in the field from a pragmatic standpoint (Akbar et al., 2021c). However, measuring and assessing failures crystallizes the dominant ways of evaluating success, making success a potential target for critique and alteration (Halberstam, 2011). Measuring and evaluating failures as a practice thus becomes an epistemic window showing that any utopian situation carries its dystopian version (Bradshaw, Fitchett & Hietanen, 2021). Success is therefore defined against the backdrop of failure. This suggests that understanding what is practiced and voiced as failure can provide insights into future practice.

Although the challenge from behavioral economics as a competing and possibly more popular approach may be fading, social marketers still feel pressure to promote success to reach the upstream level (Akbar et al., 2021c). While it seems sensible for social marketing to promote success to broaden the field’s horizon, this alone, without an accompanying discussion of failure, does not help social marketing advance. A critical discourse around potential failures is, therefore, necessary.

Many studies have critiqued one or more social marketing programs (Cook et al., 2020), referring to failures during program development, design, implementation, and evaluation stages (Silva & Silva, 2012; Xia et al., 2016). More recent empirical literature identified a wider range of factors that become the reasons for social marketing program failures (Akbar et al., 2021a; Cook et al., 2020, 2021). This literature establishes consistency in why social marketing programs fail, including poor formative research, poor strategy development, mismanagement of stakeholders, practitioner bias and preconceptions, and external influences such as power dynamics.

Poor formative research comes from not taking the time to understand the target audience or behaviors that need to be changed (Akbar et al., 2021a). Similarly, the mismanagement of stakeholders is another reason for failure, including the lack of a co-creation/co-design participatory approach and the inability to communicate with and engage influencers and community leaders (Akbar et al., 2021a; Cook et al., 2021; Willmott & Rundle-Thiele, 2022). These factors do not stand alone regarding program failures; they often interact with and contribute to failures at other stages, such as program implementation and control. For example, poor research in the formative stages will likely lead to poor strategy development and stakeholder mismanagement, thus resulting in the initiative failing to achieve the desired behavior change objectives. However, there are many other reasons for poor strategy development, including over-emphasis on awareness and education or misuse of messaging (Cook et al., 2020).

Additionally, preconceived notions and biases of those involved in the design and development of the program can impact the identification of the problem facing the priority group and the ensuing strategies developed to address the issue (Cook et al., 2021). Similarly, external influences, including those beyond the practitioner’s control, such as budget constraints, power dynamics, and clients' agendas, further contribute to program failure (Akbar et al., 2021a). The wide range of results and viewpoints evident within these early explorations suggests that the concept of failure in social marketing has not yet been considered cohesively or systematically.

Thus, the lack of a clear conceptualization of failure in social marketing initiatives represents a significant research gap. Creating a more formalized opportunity to learn from and “normalize” failures is imperative, especially considering social marketing has recently marked its 50th anniversary as a social change discipline spanning the downstream, midstream, and upstream levels. Therefore, this study aims to conceptualize failure in social marketing practice by seeking the opinions of social marketing academics and practitioners. The results of this study include a discussion on the nature of failures in social marketing initiatives and their triggering effect for reflection and learning from past failures to promote future best practices.

Method

A qualitative approach was adopted for this study after reflecting on the methods used in previous studies on failures in social marketing (Akbar et al., 2021a; Cook et al., 2021; Cook et al., 2022). A qualitative survey method was utilized to gain insight (Cohen et al., 2017; Feilzer, 2010; Fritz et al., 2017) from experts on two main open-ended questions: (i) how failures are measured in current social marketing practice and (ii) how failures should be conceptualized to inform future practice. The participants were asked to discuss failure in social marketing initiatives based on their experience in the field.

Sample Characteristics.

A thematic analysis was conducted to analyze the data (Bowen, 2009; Clarke & Braun, 2017; Roberts et al., 2019) using the QSR NVivo 12 software package. The data collected in the qualitative survey formed a single dataset addressing the research objective, i.e., how failures are measured in current social marketing practice and how failure should be conceptualized for future practice. The raw data were then coded. This involved deconstructing the data into smaller chunks, typically sentences or paragraphs, and assigning a code to each. Once all the data had been coded, the research team grouped the codes under thematic headings. Of interest were the recurring themes and the relationships between these themes that were evident from the data. The research team then identified excerpts from the data to support the thematic analysis.

Research Protocol

This research received ethical approval (2021/203) from Nottingham Trent University, UK. It abides by the university’s rigorous ethical procedure for protecting research participants (more information is given on the university’s website). All participants were required to give written consent before participating in the study.

Findings

Some participants found it difficult to differentiate between describing how failures are measured in the current practice and conceptualizing failure to inform future initiatives. As the data sets for the two questions overlapped in this way, they were aggregated for analysis. The following four themes were developed and are discussed below.

Failures Occur When the Target Behaviors are Not Achieved

One way of conceptualizing failure in social marketing initiatives is when the intended objectives have not been achieved. This was by far the most common response noted. The participants offered many examples: ‘Failure to meet objectives when achievement would have resulted in meaningful benefit for the priority population of interest.'

and, ‘Failure is the absence of visible changes in the enactment of proposed behaviors.'

Similarly, ‘Failure is to not reach a mix of behavioral measures (attitude change and behavior change) and lack of campaign reach.'

As the very essence of a social marketing program is to encourage the adoption of changed or new behavior (Lee & Kotler, 2016; Xia et al., 2016), it is perhaps not surprising that the lack of this should be perceived as the most obvious point of failure. However, it seems a very simplistic response with a lack of nuance. A program may have several objectives, some of which may be fully or partially achieved. For example, ‘Changes in awareness of and engagement of the issue usually via the communications lever, change in belief systems (or not), change in the perceived value exchange of adopting and maintaining the desired behavior (e.g., the benefit of the change is perceived to be greater than the perceived costs [money, time, effort, social standing, etc.]), change in reported behaviors and change of observed/data of desired behaviors.'

How objectives are described may also affect whether they are achieved: if the objective is to change a particular behavior, then by how much, in which group, and by when must all be stated at the outset so that the change can be measured. Establishing whether the behavior change has actually been achieved could also prove problematic, so the measurement method should also be specified in the objective. ‘By tracking specific metrics related to specific project/program goals and objectives, established at the beginning/conceptualization and adjusted through adaptive management during the project.’

The results suggested that the possibility of failure is often ignored from the beginning of initiatives: if behavior change is observed and measured, this is taken to constitute success. If that change is not sustained, it could then be defined as a failure in the longer term.

Tactics Used to Measure Failures

Several participants reported failure being measured through communication/engagement with the program; for example, ‘The target audience not getting the intended program influence,' and 'Changes in awareness of and engagement of the issue' or to measure 'Message recall and reported behavior or intent.'

Similarly, ‘… most often, social marketing is driven by communications and is rarely accompanied by organizational/governmental policy/legislation or product/service design. So, success/failure is often judged on communications awareness, message recall, and reported behavior or intent to behave. Often little effort is made to monitor and adapt to actual behavior.'

These measures do not observe the adoption (or otherwise) of the behavior itself but measure the reporting of it by individuals. This may not accurately reflect whether the behavior has changed at all, as it relies on individuals' narratives. It is fair to hypothesize that if failure is indicated in this way of self-reporting of not adopting the behavior, the failure may be more significant than the data suggest, as not everyone targeted is likely to have admitted defeat. Judging the usefulness or accuracy of this type of measurement without knowing the specifics of individual programs is difficult. Some participants highlighted numerical measures for failure, such as, ‘Less than 15–20% change from baseline at least 12–18 months after intervention' or simply 'numerical participation,' and 'I typically see people determine failure based on non-significant effect sizes.'

Of course, randomized controlled trials are not always feasible or appropriate, and some behaviors may lend themselves to numerical measures more than others (Kjeldgaard et al., 2021). It is much easier to count how many people attend a particular clinic following the provision of information than to count how many people cut down on drinking alcohol or take up more exercise.

The participants also questioned the use of ‘huge expenditure’ for measuring failures and the ‘role of adaptive management during the project.’ The measurement of expenditure refers to the financial cost of failure and indicates a cost/benefit approach to the measurement (Brent, 2009), while the mention of adaptive management indicates an ongoing approach to measurement that could maximize the possibility of success and minimize the effects of failure, suggesting that both successes and failures should be measured simultaneously. Additionally, ‘internal review and opinions of the team involved’ was also noted as a tool for measuring failure. This has potentially significant drawbacks, as it relies on internal views rather than assessing the failure itself. ‘Opinions’ also gives the impression that some views may be subjective rather than based on evidence.

The range of measures used indicates that these are necessarily related to the nature of the intervention but that there may be a reliance on self-reporting, potentially non-significant numerical measures, and reviews that may be subject to subjective bias.

Process Failure

Some participants defined failure as inadequacies in the process rather than the outcomes of the intervention program. For example: ‘Failure to conduct appropriate formative research, failure to engage the community, failure to design and deliver an integrated program, failure to understand the audience, failure to use indigenous knowledge.'

Similarly, ‘Non-adherence to strategy, user testing, and evaluation (far too often evaluation is cut from the budget) and putting too much emphasis on material design or the how.'

While it may be the case that some of these answers describe an individual’s bad experience of a particular program, there is a significant opportunity for failure to be ‘built in’ to programs at the design stage. Lack of research evidence, not understanding the audience, not testing the intervention prior to launch, and omitting assessment and evaluation at all stages are all deficiencies in program design that can lead to failure. ‘Interventions are generally evaluated in terms of whether or not they meet their objectives, which may include attitude change, behavior change, improvements in practice, etc. If an intervention does not meet its objectives, this could reflect inappropriate objectives, poor intervention design (including poor understanding of social marketing), poor implementation, weaknesses in evaluation design or study conduct, etc.'

Participants indicated there was more likely to be an emphasis on what the intervention involved rather than whether it was designed to succeed and that sometimes progress measurements are not heeded to avoid potential failure. While participants mentioned the worry of not meeting ethical standards, ‘failure to learn from program tracking metrics, not meeting program quality and ethical standards,’ they also bemoaned the lack of wider support from organizations or the government, ‘communications rarely accompanied by organizational/governmental policy/legislation or product/service design.'

The participants also questioned who is responsible for measuring failure: those designing and implementing the program or those funding it. These groups will have subtly different aims and different definitions of failure. ‘Depends on who is doing the measuring and what is being measured. Not achieving planned objectives or intended effects or, even worse, inadvertently making matters worse. If social marketers are looking at programs they didn't create, failure is often defined as a failure to implement some aspect of social marketing, inadequate or no segmentation, too much reliance on promotion, trying to increase knowledge rather than change behavior, etc. If we're looking at our own programs, we tend to think more in terms of what could be improved rather than what failed.’

The role of the person/company responsible for measuring failure is crucial. If the client organization funding the program is happy that the intervention has occurred, that may be a success even if the desired behavior change does not occur. In this case, there could be a mix of success and failure. Failure of this nature, in which the target audience does not engage with or adopt the desired behavior, whether in the short, medium, or long term, must be considered significant as it represents the end result of the intervention and, therefore, a potentially costly financial and reputational failure as well as a failure for individuals, communities, and society.

Additionally, potential negative impacts and unintended consequences were considered a point of failure arising from a failure to pre-test, a crucial aspect of social marketing planning (Bass et al., 2023; Brown et al., 2008). This could mean the program inadvertently making the problem worse or creating a different problem while solving the first. Participants mentioned an ‘increase in predicted unintended consequences (for example, increase in drinking as a result of an intervention to reduce driving after drinking).’ In contrast, unspecified ‘unintended consequences such as political issues, financial issues, and pushback from citizens’ were also noted. These could all undermine a program’s success and push it toward failure.

Failure Either Not Measured or Reframed as Lessons Learned

Considering that social marketing interventions often use public money or are funded by charitable organizations (Cheng et al., 2011), it is alarming that failures are not addressed when reviewing the results of programs. Most participants mentioned failures in social marketing initiatives are not measured at all. Participants stated that failure is ‘largely ignored,’ ‘rarely described,’ ‘rarely acknowledged,’ and ‘not reported at all.’ This lack of measurement may be due to several reasons, such as a disinclination to admit that the program has not worked, that money has been spent unwisely, and that resources may have been wasted. It may be that simply conducting the intervention is all that the funder requires, and the outcome is of less interest politically.

In some cases, failures are framed as ‘success.’ For example, 33% of the target audience visiting a clinic for a specific check-up or vaccination could be considered a failure as it represents a minority, but it could also be considered a success if it represents a significant improvement on the baseline figure. In this way, failures could be reported as successes. As one participant put it, ‘When behavior change fails, managers report the change in awareness and attitude and claim success.’

The notion of failure as an opportunity for ‘lessons learned’ also emerged in the results. For example, ‘There is no failure …. just more learning' and '… we reframe failure to “lessons learned” (growth mindset) so we can make improvements ….'

Learning from mistakes and articulating lessons learned is sensible. Still, some participants felt that failure is not discussed explicitly enough in academic publications (Deshpande, 2022; Akbar et al., 2021a), where the focus tends to be on success, and that program designers are more interested in claiming success. This is, perhaps, a human reaction: to attempt to find the plus points, learn from mistakes to inform future success, and present the parts of the work that did succeed. The ‘spin,’ especially for sponsors and the public, may be essential for securing further funding and maintaining support for interventions. For example, ‘[social marketing] in general is quite good at evaluation, but it tends to focus on positive outcomes rather than learning about what does not work so well.’

It may be that the lack of a desire to tackle failure head-on and to name it as such could lead to less-than-effective evaluations of programs. It also seems clear that there may sometimes be pressure from managers and/or sponsors to frame failure as partial success or as lessons learned to avoid potential reputational damage. There is also a difference between not measuring, not acknowledging, and not reporting failure. Failure may well be recognized internally but not reported externally.

Discussion

This study aimed to conceptualize failures in social marketing practice. It was found that the concept of failure in social marketing initiatives was not well-established or well-nuanced, consistent with ongoing dialogue within social marketing (Akbar et al., 2021a; Cook et al., 2021). The measurement of failures is understood in several ways, from not measuring it at all to measuring it against the backdrop of success. The measurement process was shown to rely heavily on self-reporting and some quantitative measures that may sometimes be flawed. In addition, failure is either not acknowledged by the funders, practitioners, or those involved in program design or is framed as lessons learned for future success or as partial success in a relatively high proportion of cases. It is imperative not to take failures and successes as monolithic entities (Saeed, 2009) or consider success and failure only as a dichotomy (Willmott & Rundle-Thiele, 2022). Our findings suggest a strong association between failures and successes, but in some cases, these are incorrectly labeled and disregard partial victories, setbacks, and nuance.

Acknowledging the close relationship between failures and successes in social marketing initiatives is vital. Failures are mainly associated with the factors contributing to the desired behavior change not being achieved. They can also be viewed as a valuable learning experience that provides opportunities to reflect on what went wrong, identify areas for improvement, and adjust approaches for future initiatives (Deshpande, 2022). Success works oppositely, being associated only with achieving the desired behavior change, increasing awareness, or reaching the target audiences, depending on the context of the initiative (Liao, 2020). However, maintaining success can also be challenging and requires vigilance to prevent relapse. Still, success is well expressed within the discipline as opposed to failures (Carins, 2022). A better articulation of failure is essential to understand that failures may not always be binary outcomes; the key is to stay focused on the end goal and keep working towards it, even if progress is slow or non-linear. In social marketing, distinguishing failure from success is not straightforward. Most programs are multifaceted, under-resourced, and implemented in an environment where many variables outside the program can affect behaviors. Practitioners rarely have the funding to implement interventions long enough or intensely enough. They are often constrained by what their managers and funders want and support, suggesting that failures can be conceptualized as failing to make any discernible progress toward program objectives.

In terms of conceptualizing failure in social marketing initiatives, our findings present a variety of reactions. Failure was defined not only as not achieving the intended outcomes but also as problems with program design that lead to inadequacies in the process. Many participants defined and measured it simply by attempting to establish whether the original objectives had been met. Disregarding or omitting social marketing principles, for example through inadequate segmentation, overreliance on communication and promotion, or a focus on increasing knowledge and awareness rather than changing behavior, results in poor intervention designs. Such factors contribute to initiatives failing to achieve the intended objectives. This could be perceived as a spectrum of failure, ranging from objectives not met at one end to clear negative impacts at the other, indicating that the range of potential failures is considerable. Correspondingly, who measures failure, and when, is equally significant.

The issues identified in this study are interconnected and presented as part of a cycle, as shown in Figure 1, demonstrating how inadequate acknowledgment of failure may feed into poor planning and design, leading to further failures. The power and influence of the sponsor/client/funders and the potential consequences of reporting failures may make failure even more difficult to acknowledge.

Figure 1. Feedback loop for failures in social marketing initiatives.

The proposed feedback loop yields important implications for practitioners, who should accept and embrace failure as an inherent phenomenon. While failure is a critical event that can cause a range of negative effects, understanding and acknowledging failure is also a chance for error correction, an opportunity for learning from experiences, and a prospect of achieving well-rounded outcomes. However, we argue that learning from failure does not happen automatically (Shepherd, 2003); practitioners must reflect critically and mindfully to transfer learning outcomes to future practice. Focusing on failure in this manner also identifies systemic issues, with a view toward a future where we can genuinely begin to address and dismantle these enabling factors.

Conclusion

The study presents the first attempt to conceptualize failure in social marketing practice as derived from those actively practicing it. The interconnected issues identified within the broad discussion of conceptualizing failure underscore the importance of establishing clear and measurable program objectives while integrating ongoing assessment and evaluation at every stage of program design and implementation. Success and failure in social marketing should not be viewed as a dichotomy or binary but rather can be represented as a pragmatic continuum that incorporates nuance. The failure to sufficiently acknowledge and address failure in social marketing practice creates and perpetuates a form of feedback loop which drives further failure.

Limitations/Future Research

The study has potential limitations. Firstly, the study includes data from 49 participants. Even though these include social marketing experts, a bigger sample would have offered further insights into failure measurement and conceptualization. Secondly, the data is based on a qualitative survey, resulting in an understanding of current practices on failures in social marketing; in-depth interviews would add further clarity and nuance in establishing the concept. Thirdly, identifying key segments related to social marketing program development and implementation (e.g., program designers and managers, sponsors and funders, stakeholders and target audiences) and gathering their views concerning the failure feedback loop via mixed methods research would add further weight to the discourse of social marketing. Fourthly, the feedback loop could be tested on case studies in order to develop practitioner- and sponsor-friendly tools and resources that support productive approaches to acknowledging and addressing failure. Lastly, this study is based on two main questions (how failures are measured in current social marketing practice and how failures should be conceptualized to inform future practice). While this offers an initial conception of the proposed feedback loop, we hope it inspires further meaningful exploration using the following key questions that emerged from this study.

• How far short of success is failure?
• Why is there a reluctance to ‘own’ failure?
• What are the dangers of not recognizing failure?
• Is learning adequate for improved process design if failure is not appropriately acknowledged?
• Are sponsors' viewpoints consistent with program managers' perceptions that failure should be played down or obscured to maintain funding and support?
• How can a ‘safe to fail’ organizational culture be fostered?
• What systemic structural factors within and outside organizations are related to failure?
• How can these be addressed in the long term and side-stepped in the short term?

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.