Abstract

First-year seminars (FYS) are one of 11 researched interventions in postsecondary education known as High-Impact Practices, but few rigorous studies report significantly high impacts. This study examined an FYS using propensity score matching to pair cases with controls in a quasi-experimental design. One semester later, cumulative grade point average (GPA) and cumulative hours attempted differed significantly between the two groups, as did three indicators measured 1 year later (hours earned, hours attempted, and term GPA). Three freshman survey items also differed significantly, and these differences were observed within the same semester as the program. Quantitative differences did not appear immediately but emerged one term and 1 year later. Mean differences between groups did not appear to diminish over time. This analysis helps address the paucity of studies that clarify the relationship between FYS participation and outcomes using comparison groups.


First-year seminars (FYS) and first-year experiences are considered High-Impact Practices (HIPs); together they constitute one of the 11 HIPs endorsed by the Association of American Colleges & Universities (Kuh, 2008). Although many institutions of higher education are implementing FYS, there is a lack of rigorous research supporting claims about their effectiveness. The research that does exist is promising but does not cover the breadth of FYS programs implemented at institutions of higher education. Yet the high-impact nature of these programs as a whole is largely assumed.

A keyword search including the phrases “first year seminar” or “first year experience” identified over 6,000 studies. Fewer than 60 of these used comparison groups, revealing a need for empirical and experimental research; we included a majority of them in this literature review. Brownell and Swaner (2010) also noted “consistent problems in the research related to student outcomes” and described the evidence for HIPs as “moderate at best, and very often weak.” In addition, the available evidence on fidelity of implementation and on student exposure is insufficient to assume intervention quality (Kinzie et al., 2020).

Randomized controlled trials are the gold standard in research, and only a handful of published studies of this type report positive outcomes for FYS students (e.g., Anderson & Card, 2015; Rutschow et al., 2012). Quasi-experimental designs (studies that use comparison groups) are the next most rigorous option and address threats to internal and external validity when random assignment is impossible or unethical (Campbell & Stanley, 1963). Students grow, mature, and learn over time whether or not they take an FYS. Single-sample studies are vulnerable to these maturation effects, which makes it impossible to distinguish program effects from external influences.
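To make the maturation argument concrete, the hypothetical simulation below gives every simulated student the same maturation gain and adds a program effect only for the treated group. Every parameter (score scale, maturation gain of 0.30, program effect of 0.10, noise levels) is an illustrative assumption, not an estimate from this study, and the contrast shown is a simple difference in pre/post changes rather than the matched design used here.

```python
import random
from statistics import mean

def simulate_group(rng, n, maturation, program_effect):
    """Hypothetical pre/post GPA-like scores for one group of n students."""
    pre = [rng.gauss(2.8, 0.6) for _ in range(n)]
    # Every student matures; only treated students also receive program_effect.
    post = [p + maturation + program_effect + rng.gauss(0, 0.3) for p in pre]
    return pre, post

rng = random.Random(2019)
MATURATION, EFFECT = 0.30, 0.10  # illustrative assumptions
t_pre, t_post = simulate_group(rng, 2000, MATURATION, EFFECT)
c_pre, c_post = simulate_group(rng, 2000, MATURATION, 0.0)

# A single-sample pre/post change confounds maturation with the program effect.
single_sample = mean(t_post) - mean(t_pre)
# Subtracting the comparison group's change removes the shared maturation gain.
with_comparison = single_sample - (mean(c_post) - mean(c_pre))
print(f"single-sample estimate: {single_sample:.2f}")
print(f"with comparison group:  {with_comparison:.2f}")
```

Under these assumptions the single-sample estimate lands near 0.40 (maturation plus program effect), while the comparison-group estimate lands near the simulated 0.10 program effect.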

Our literature search revealed that most studies did not employ experimental or quasi-experimental designs; single-sample pretest/posttest and cross-sectional designs were common. When such designs are chosen, not only are practical significance and effect sizes unclear, but validity and generalizability concerns also remain unaddressed. In addition, several studies have reported limited, short-term, diminishing, weak, or no evidence supporting FYS and other HIPs (Adkins, 2014; Barton & Donahue, 2009; Brownell & Swaner, 2010; Clark & Cundiff, 2011; Crissman, 2001; Culver & Bowman, 2020; Edwards, 2018; Kilgo et al., 2014; Sanchez et al., 2006; Tampke & Durodoye, 2013). The mixed outcomes and lack of rigorous research methods, juxtaposed with the supposedly high-impact imprimatur of FYS programs, prompted the present study.

We highlight literature that employs various approaches to remedy this problem. The first set of studies used a quasi-experimental approach. In one early study, Belcheir (1997) found higher retention and improved GPA for fall 1995 FYS participants compared with controls, but the GPA effects disappeared over time. Other quasi-experimental research also found higher GPAs for FYS students than for controls (Ben-Avie et al., 2012; Jamelske, 2009; Karp et al., 2017; Klatt & Ray, 2014; Shao et al., 2010; Shoemaker, 1995; Tampke & Durodoye, 2013; van Herpen et al., 2020; Vaughan et al., 2014; Wang et al., 2012). Within subgroups such as STEM students, FYS participants had higher first-term GPAs (Ward et al., 2020). Retention was also higher for FYS students than for controls (Clouse, 2012; Weiss et al., 2014; Wilkerson, 2009).

Not all quasi-experimental studies favored FYS participants over nonparticipants. Several found no difference in first-year GPA or retention rates (Barton & Donahue, 2009; Clark & Cundiff, 2011; Crissman, 2001; Culver & Bowman, 2020; Edwards, 2018). Others found no statistical differences in graduation rates (Jenkins-Guarnieri et al., 2015; Sanchez et al., 2006).

Survey methods have frequently been used in quasi-experimental FYS analyses. Al-Sheeb et al. (2018) and Keenan and Gabovitch (1995) found modest differences in awareness of campus resources. Picard (2012) found a reduction in academic and career indecision among FYS participants compared with controls. Other analyses found gains for FYS participants over their counterparts in financial literacy (Anderson & Card, 2015), social adjustment (Conley et al., 2013; Enochs & Roland, 2006), self-assessed academic skills (Justice et al., 2009; Keenan & Gabovitch, 1995), and various attitude scales (Padgett et al., 2013; White, 2005). Finally, mixed results were found for various other quantitative and qualitative measures (Culver & Bowman, 2020; Potts & Schultz, 2008; Rutschow et al., 2012).

Population

This study was undertaken at a large university in the southern United States where entering classes routinely approach 10,000 students. The FYS program was launched in fall 2019 and offered to about 25% of incoming freshmen. It was offered as a two-semester experience, but students were not required to take the spring course. Most sections were taken for zero credit, whereas a few sections were taken for one credit. In both cases, course performance was transcripted whether taken for a letter grade or pass/fail. Enrollment in sections was usually kept at or below 30 students and most course sections met for 1 hour, 1 day per week for the duration of the first semester in college (14 weeks). In fall 2019, the course was face-to-face only.

Method

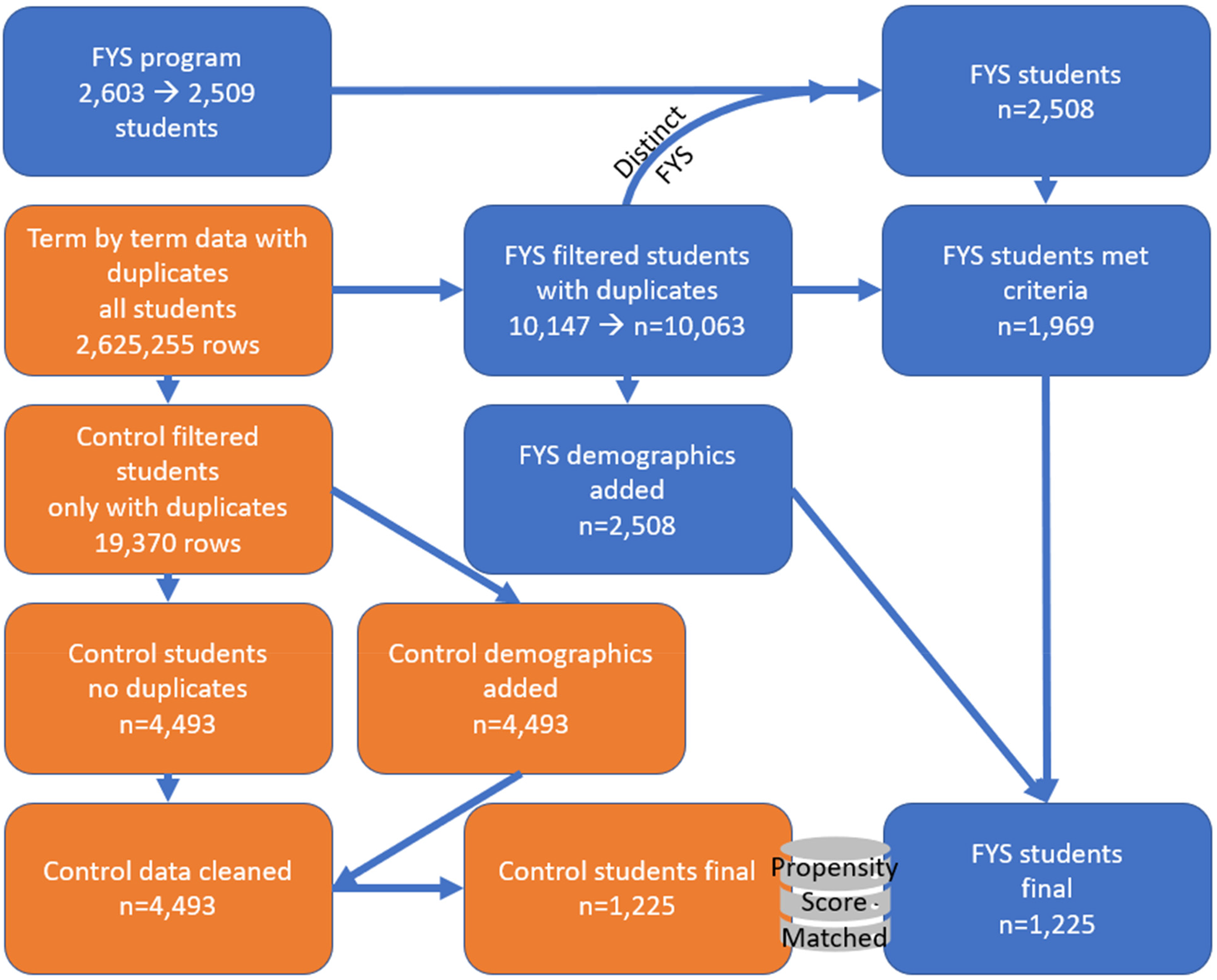

This analysis compared 2019 FYS participants with nonparticipants on qualitative and quantitative indicators that might offer evidence of differential outcomes. In other words, we adopted a quasi-experimental approach to establish an association between the FYS program and a variety of quantitative and qualitative student outcomes, including first-year retention and physical, emotional, and social well-being. The analysis was designed as a retrospective case–control study. The analytic datasets came from two sources: an FYS program file and a university student data file from the Office of the Registrar. All participants were first-time-in-college, full-time students. The data management process is diagrammed in Figure 1. IRB approval was obtained to conduct this study.

Figure 1. Data management process for target and control groups.

Subject/participant characteristics related to the outcome should not differ by group membership. Thus, records were matched on background characteristics to control for selection bias using propensity score matching (Stuart, 2010). Propensity score matching obtains balanced samples by selecting case and control records with similar characteristics. To test whether the propensity score match algorithm obtained the desired balanced samples, an additional survey question was examined: “During your senior year in high school, how often did you…[ask] an insightful question in class.” Results indicated that response distributions to the question were similar regardless of whether respondents were part of the control or experimental group. The match criteria for these two groups were student residency flag (in-state vs. out-of-state), class level/standing, first-generation college student flag, gender, race/ethnicity, Hispanic flag, and age. All variables labeled as flags in this list were binary yes/no indicators.
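Propensity score matching can be sketched in two steps: estimate each student's probability of FYS participation from the match covariates, then pair each participant with the unused nonparticipant whose score is closest. The study cites Stuart (2010) and lists the seven match criteria but does not state the propensity model, matching ratio, caliper, or whether controls could be reused, so the logistic model fitted by plain gradient ascent, the 1:1 greedy nearest-neighbor matching without replacement, and the 0.05 caliper below are all assumptions. The covariates are synthetic binary flags standing in for items such as the residency and first-generation flags (multi-category criteria such as race/ethnicity would need indicator coding), and the study itself used SAS 9.4 rather than Python.

```python
import math
import random

def fit_logistic(X, y, lr=0.5, epochs=500):
    """Fit P(FYS = 1 | x) by batch gradient ascent on the log-likelihood.

    X: rows of covariates (e.g., 0/1 flags); y: 1 = FYS participant, 0 = not.
    Returns weights with the intercept first.
    """
    w = [0.0] * (len(X[0]) + 1)
    n = len(X)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = yi - propensity(w, xi)
            grad[0] += err
            for j, xj in enumerate(xi, start=1):
                grad[j] += err * xj
        w = [wj + lr * g / n for wj, g in zip(w, grad)]
    return w

def propensity(w, x):
    """Predicted participation probability (the propensity score)."""
    z = w[0] + sum(wj * xj for wj, xj in zip(w[1:], x))
    return 1.0 / (1.0 + math.exp(-z))

def greedy_match(scores, treated, caliper=0.05):
    """1:1 nearest-neighbor matching without replacement within a caliper."""
    controls = [i for i, t in enumerate(treated) if t == 0]
    cases = sorted((i for i, t in enumerate(treated) if t == 1), key=lambda i: scores[i])
    pairs = []
    for i in cases:
        if not controls:
            break
        j = min(controls, key=lambda c: abs(scores[c] - scores[i]))
        if abs(scores[j] - scores[i]) <= caliper:
            pairs.append((i, j))
            controls.remove(j)  # each control is used at most once
    return pairs

# Synthetic example: three binary flags standing in for the match criteria.
rng = random.Random(0)
X = [[rng.randint(0, 1) for _ in range(3)] for _ in range(400)]
treated = [1 if rng.random() < 0.15 + 0.2 * x[0] else 0 for x in X]
w = fit_logistic(X, treated)
scores = [propensity(w, x) for x in X]
pairs = greedy_match(scores, treated)
print(f"{sum(treated)} participants, {len(pairs)} matched pairs")
```

Matching without replacement is what yields equal-sized case and control groups, as in this study's 1,225 cases and 1,225 controls; matching with replacement or with a different caliper would trade sample size against closeness of matches.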

A survey was offered to all freshmen in fall 2019 (response rate was 51%, n = 5,606). Survey data were merged into the study dataset to examine qualitative variables. The final sample size available for analysis included 1,225 experimental cases and an equal number of controls.

For both quantitative and qualitative analyses, only records with populated data fields for the seven match criteria listed earlier were used in a given statistical test; therefore, sample sizes were sometimes lower than 1,225.

SAS 9.4 software was used to generate descriptive statistics, conduct comparisons using independent samples t-tests or chi-squared tests, and develop a multiple regression model. Retention was calculated for each group as the number of students who were enrolled at the time period of interest divided by the starting number of students (1,225). The outcomes of interest were first fall-term GPA, spring-term GPA, next fall-term GPA, retention to the spring term, retention to the next fall, and mean differences on specific survey items described below. The following hypotheses were tested:

H01: There was no difference in fall 2019 term GPA between the two groups.
H02: There was no difference in spring 2020 term GPA between the two groups.
H03: There was no difference in fall 2020 term GPA between the two groups.
H04: There was no difference in spring 2020 cumulative GPA between the two groups.
H05: There was no difference in fall 2020 cumulative GPA between the two groups.
H06: There was no difference in fall 2019 cumulative credit hours attempted between the two groups.
H07: There was no difference in spring 2020 cumulative credit hours attempted between the two groups.
H08: There was no difference in fall 2020 cumulative credit hours attempted between the two groups.
H09: There was no difference in fall 2019 cumulative credit hours earned between the two groups.
H010: There was no difference in spring 2020 cumulative credit hours earned between the two groups.
H011: There was no difference in fall 2020 cumulative credit hours earned between the two groups.

Credit hours attempted are credit hours that students registered for at the beginning of the semester. Credit hours earned are credit hours that students completed and received a grade for at the end of the semester.

When the p-value for the equality of variances test was less than .05, equal population variances could not be assumed. In such cases (H02, H03, H04, H06, H07, H08, H09, H010, H011), the Satterthwaite t-statistic was used. Because eleven statistical tests were conducted for H01 through H011, the Bonferroni–Holm method was used to control the familywise error rate.
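The two adjustments described above can be sketched as follows: the Satterthwaite (Welch) t-statistic with its approximate degrees of freedom, and the Holm step-down procedure applied to a set of p-values. This is an illustrative Python sketch, not the SAS procedure used in the study. Converting t to a p-value requires a t-distribution CDF from a statistics library, which is omitted to keep the block dependency-free, and the p-values in the usage lines are hypothetical.

```python
import math
from statistics import mean, variance

def welch_t(a, b):
    """Satterthwaite (Welch) t-statistic and approximate degrees of freedom."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

def holm(pvalues, alpha=0.05):
    """Holm step-down procedure; returns a reject flag per hypothesis, in input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    reject = [False] * m
    for rank, i in enumerate(order):
        # Smallest p is compared with alpha/m, the next with alpha/(m-1), and so on.
        if pvalues[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break  # once one test is retained, all larger p-values are retained
    return reject

t, df = welch_t([1, 2, 3, 4], [2, 4, 6, 8])
print(round(t, 3), round(df, 2))  # -1.732 4.41
print(holm([0.01, 0.04, 0.03, 0.005]))  # [True, False, False, True]
```

Holm is uniformly more powerful than a plain Bonferroni correction while still controlling the familywise error rate, which is why it is a common choice for a family such as H01 through H011.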

For the freshman survey, students responded to the prompt: Please indicate the extent to which you agree with each of the following statements. Possible Likert scale responses were: strongly agree, agree, somewhat agree, somewhat disagree, disagree, or strongly disagree. Ten freshman survey items were examined in this study. Their corresponding hypotheses are as follows:

There was no difference between the two groups in respondents’ agreement with the statement…

H012: “…I feel valued as an individual at [university].”
H013: “…I feel that I belong at [university].”
H014: “…I am proud to be a student at [university].”
H015: “…I feel welcomed at [university].”
H016: “…I see myself as a part of the [university] community.”
H017: “…I have established positive relationships with faculty members.”
H018: “…I am a very good student.”
H019: “…I am very capable of succeeding at [university].”
H020: “…I know how to study to perform well on tests.”
H021: “…I will ask for help when needed.”

Positive-leaning response choices were grouped in three alternative ways based on observed response distributions: strongly agree/agree/somewhat agree, strongly agree/agree, and strongly agree alone. For each grouping, responses outside the positive options were treated as negative responses and formed the second group. In other words, these variables were dummy coded, which allowed comparison of differences between target and comparison levels of a factor.
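A minimal sketch of this dummy coding, assuming responses arrive as the six text labels listed earlier; the grouping names (`any_agree`, `agree_or_strongly`, `strongly_only`) are hypothetical labels introduced here for illustration.

```python
SCALE = ["strongly disagree", "disagree", "somewhat disagree",
         "somewhat agree", "agree", "strongly agree"]

# Hypothetical names for the three positive groupings described above.
GROUPINGS = {
    "any_agree": {"strongly agree", "agree", "somewhat agree"},
    "agree_or_strongly": {"strongly agree", "agree"},
    "strongly_only": {"strongly agree"},
}

def dummy_code(responses, grouping):
    """Return 1 if a response falls in the positive group, else 0."""
    positive = GROUPINGS[grouping]
    coded = []
    for response in responses:
        label = response.strip().lower()
        if label not in SCALE:
            raise ValueError(f"unexpected response: {response!r}")
        coded.append(1 if label in positive else 0)
    return coded

answers = ["Strongly agree", "agree", "somewhat agree", "disagree"]
for name in GROUPINGS:
    print(name, dummy_code(answers, name))
```

Raising on unrecognized labels, rather than silently coding them as 0, keeps missing or malformed survey responses from being counted as negative responses.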

Results

Quantitative Results

Experimental cases were matched to controls using propensity score matching to reduce preexisting differences between FYS participants and nonparticipants. To confirm that the samples were balanced, we compared the percentage of students responding affirmatively (very often, often, or somewhat often) or negatively (occasionally, rarely, or never) to the survey item about how often they asked an insightful question in class during their senior year of high school. There was no statistically significant difference between the groups (chi-square [df = 1] = 0.7255, p-value = .3944), lending additional support to the assumption that cases and controls were balanced.
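For a 2 × 2 table such as group (FYS vs. control) by response (affirmative vs. negative), the Pearson chi-square statistic and its df = 1 p-value can be computed as below. This is a Python sketch rather than the study's SAS code, and the table counts in the first usage line are hypothetical. Because the cell counts behind the balance check are not reported, the last line only converts the reported statistic back to a p-value, which gives approximately .394, consistent with the reported value.

```python
import math

def chi2_2x2(a, b, c, d):
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]]."""
    n = a + b + c + d
    return n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))

def p_value_df1(chi2):
    """Upper-tail p-value for a chi-square statistic with 1 degree of freedom.

    With df = 1, chi-square = Z**2, so P(X > x) = 2 * (1 - Phi(sqrt(x))) = erfc(sqrt(x / 2)).
    """
    return math.erfc(math.sqrt(chi2 / 2))

# Hypothetical counts: rows = FYS / control, columns = affirmative / negative.
stat = chi2_2x2(20, 10, 10, 20)
print(f"chi-square = {stat:.3f}, p = {p_value_df1(stat):.4f}")
print(f"p for the reported statistic: {p_value_df1(0.7255):.3f}")
```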

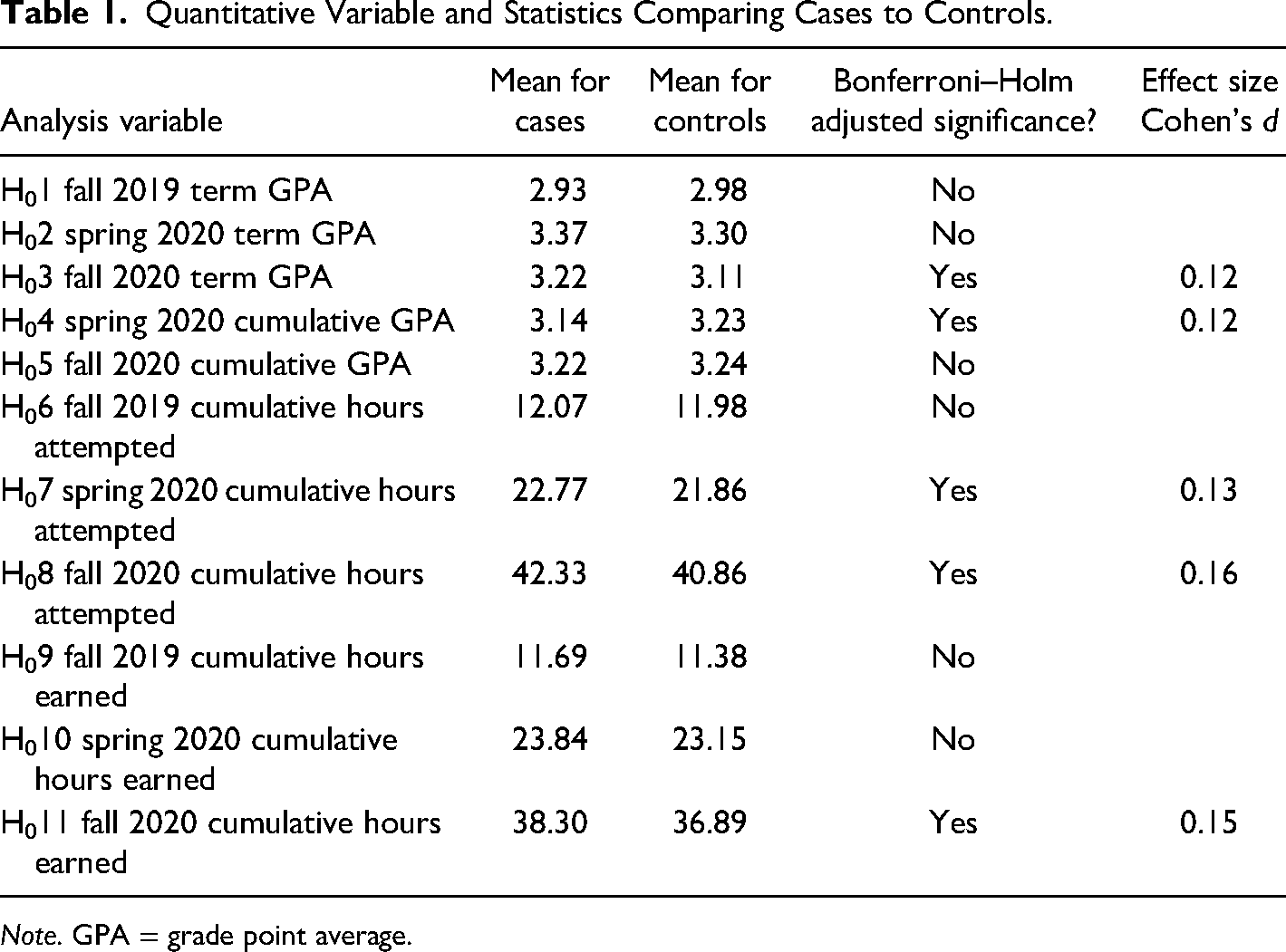

Results are summarized in Table 1. Fall 2020 term GPA (1 year later) differed significantly between the two groups (H03 p-value < .05). Spring 2020 cumulative GPA, spring 2020 cumulative hours attempted, fall 2020 cumulative hours attempted, and fall 2020 cumulative hours earned also differed significantly (H04, H07, H08, and H011 p-values < .05). In Table 1, effect sizes for statistically significant results are shown in bold. None of the other quantitative hypotheses were statistically significant (p-values > .05).

Table 1. Quantitative Variables and Statistics Comparing Cases to Controls.

Note. GPA = grade point average.

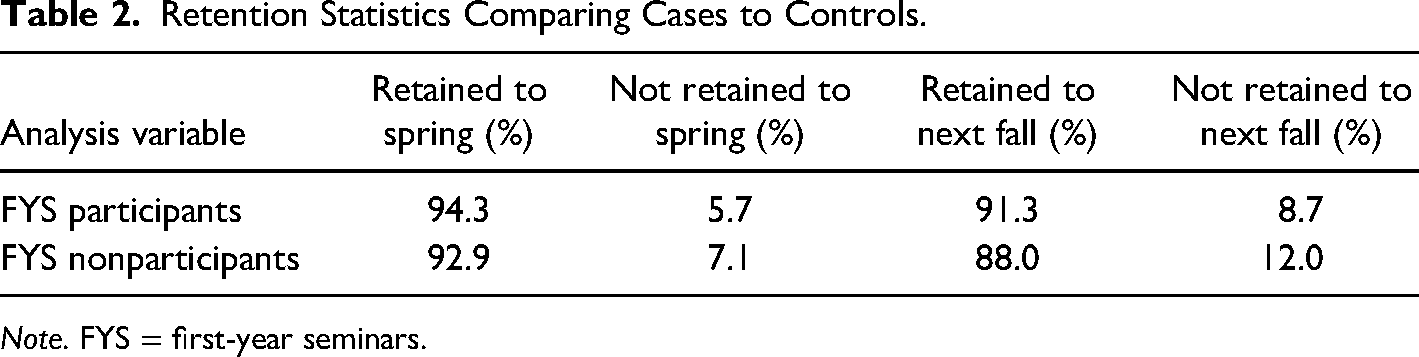

One year of follow-up data were available to examine retention, and the Pearson chi-square test was used to test whether the retention proportions for the two groups were equal. More FYS participants than non-FYS participants were retained to the subsequent spring term (p-value = .0434) and to the following fall term (p-value = .0001). Results are presented in Table 2.

Table 2. Retention Statistics Comparing Cases to Controls.

Note. FYS = first-year seminars.
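Retention as defined in the Method section (students still enrolled divided by the starting 1,225) can be compared between groups with a pooled two-proportion z test, whose square equals the Pearson chi-square statistic for the corresponding 2 × 2 table. The sketch below is illustrative Python, and the retained counts are hypothetical placeholders because this text does not report the raw counts.

```python
import math

COHORT = 1225  # starting number of students per group

def retention_rate(retained, cohort=COHORT):
    """Share of the starting cohort still enrolled at the term of interest."""
    return retained / cohort

def two_proportion_test(x1, n1, x2, n2):
    """Pooled two-proportion z test.

    Returns (z, chi2, p): z**2 equals the Pearson chi-square (no continuity
    correction) for the table [[x1, n1 - x1], [x2, n2 - x2]], and p is two-sided.
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, z * z, p_value

# Hypothetical retained counts for FYS participants and matched controls.
fys_retained, control_retained = 1100, 1060
print(f"FYS retention:     {retention_rate(fys_retained):.3f}")
print(f"Control retention: {retention_rate(control_retained):.3f}")
z, chi2, p = two_proportion_test(fys_retained, COHORT, control_retained, COHORT)
print(f"z = {z:.2f}, chi-square = {chi2:.2f}, p = {p:.4f}")
```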

Qualitative Results

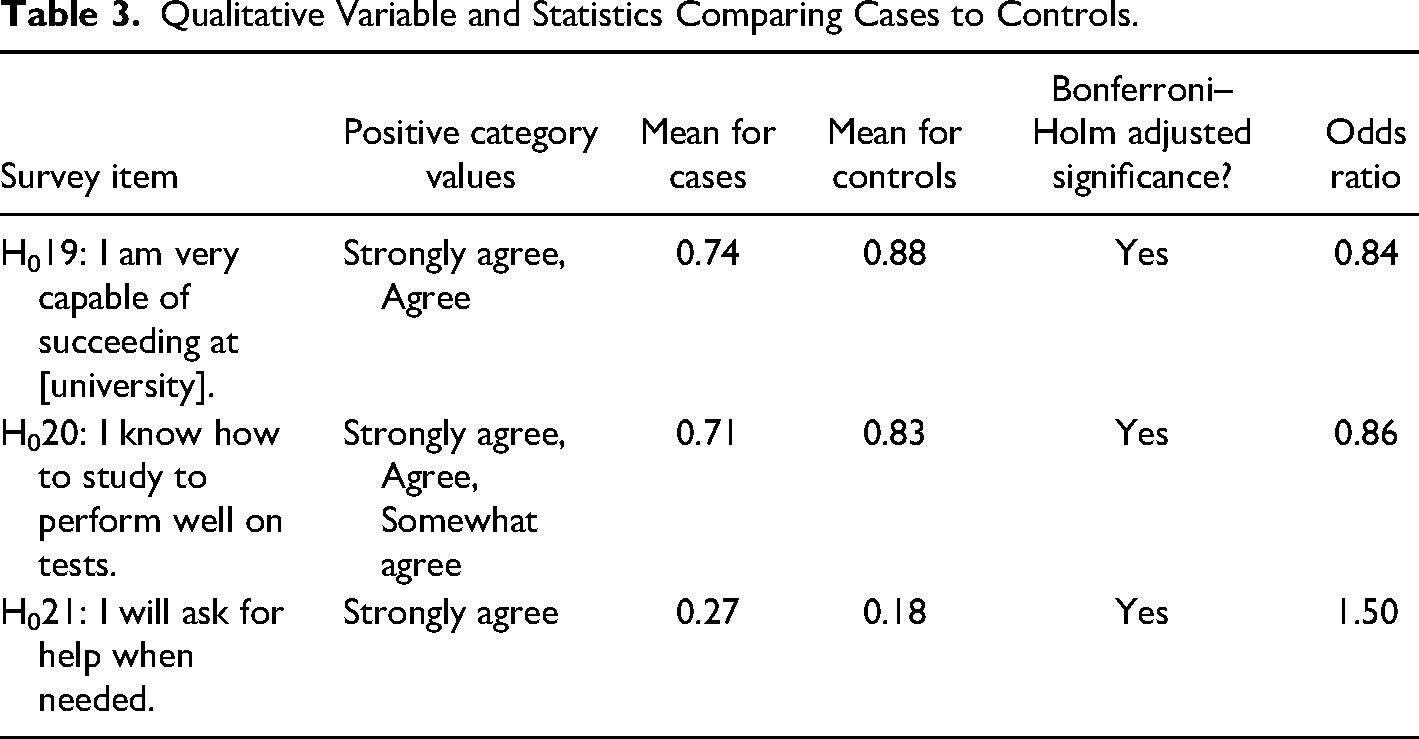

Qualitative results were examined using the freshman survey. Statistically significant differences between FYS participants and nonparticipants were identified for three survey items, presented in Table 3. When the “strongly agree” and “agree” response choices were compared with all other response choices, the item “I am very capable of succeeding at [university]” was statistically significant, with nonparticipants reporting stronger agreement than participants. The odds ratio for responding in the affirmative to this item for participants versus nonparticipants was 0.84; that is, participants’ odds of endorsing that they felt capable of succeeding were 16% lower than nonparticipants’ odds. The same pattern was found when “strongly agree,” “agree,” and “somewhat agree” responses were dummy coded together for the item “I know how to study to perform well on tests”: nonparticipants reported significantly stronger agreement than FYS participants. The odds ratio for responding in the affirmative to this item for participants versus nonparticipants was 0.86, meaning that participants’ odds of endorsing that they knew how to study to perform well on tests were 14% lower than nonparticipants’ odds.

Table 3. Qualitative Variables and Statistics Comparing Cases to Controls.

However, when the “strongly agree” response choice was compared with all other response choices for the item “I will ask for help when needed,” the null hypothesis was rejected: FYS participants reported significantly stronger agreement than nonparticipants. The odds ratio for responding in the strong affirmative to this item for participants versus nonparticipants was 1.50. In other words, participants’ odds of strongly agreeing that they would ask for help when needed were 50% higher than nonparticipants’ odds.
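Because an odds ratio compares odds rather than probabilities, an odds ratio of 0.84 does not mean a 16% lower probability of agreement. The sketch below computes an odds ratio with a Woolf (log-based) 95% confidence interval from a 2 × 2 table and converts an odds ratio into the probability it implies for one group given a reference probability. The cell counts and the 60% reference rate are hypothetical; the source reports only the odds ratios, and its confidence-interval method is not stated.

```python
import math

def odds_ratio(a, b, c, d, z=1.96):
    """Odds ratio with a Woolf 95% confidence interval.

    a, b: participants who did / did not give the affirmative response
    c, d: nonparticipants who did / did not give the affirmative response
    """
    ratio = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(ratio) - z * se_log)
    upper = math.exp(math.log(ratio) + z * se_log)
    return ratio, lower, upper

def prob_given_or(p_ref, ratio):
    """Probability implied for the comparison group when the reference group
    has probability p_ref and the odds ratio (comparison vs. reference) is ratio."""
    odds = ratio * p_ref / (1 - p_ref)
    return odds / (1 + odds)

print(odds_ratio(30, 20, 20, 30))
# If 60% of nonparticipants agreed, an odds ratio of 0.84 implies about 55.8% of participants.
print(f"{prob_given_or(0.60, 0.84):.3f}")
```

In that hypothetical, participants' probability of agreeing is about 4 percentage points (roughly 7% in relative terms) lower, even though their odds are 16% lower; the gap between the two readings grows as the baseline probability rises.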

Discussion

Comparing FYS students with control students offers an opportunity to measure not only educational gains from a common baseline but also any differential gains attributable to the program. This study addresses serious concerns about the validity of single-sample study designs, the two most important of which are selection bias and maturation effects.

Term GPA was tested in the first, second, and third hypotheses. Although term GPA did not differ significantly in the first two terms, second fall-term GPA was significantly higher for FYS participants. The mean difference was small at 0.1 grade points, and the calculated effect size was also small (Cohen’s d = 0.12). The trend in mean term GPA over these three semesters is noteworthy: FYS participants started with a slightly lower mean GPA than controls in the first term, had a slightly higher mean GPA in the second term, and widened that advantage in the third term. Although the differences in the first two terms were not statistically significant, the gap grew wide enough to reach statistical significance in the third measured term.
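Cohen's d expresses a mean difference in standard deviation units. The minimal sketch below uses the pooled sample standard deviation; the study does not state which variant of d it computed, so the pooled-SD form is an assumption, and the GPA values in the usage lines are hypothetical. As back-of-the-envelope arithmetic, a 0.1 grade point difference with d = 0.12 implies a pooled SD of roughly 0.1 / 0.12 ≈ 0.83 grade points, subject to rounding of both reported values.

```python
import math
from statistics import mean, variance

def cohens_d(a, b):
    """Cohen's d: difference in means divided by the pooled sample SD."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(pooled_var)

# Hypothetical term GPAs for two small groups.
fys = [3.2, 2.9, 3.5, 2.7, 3.1]
control = [3.0, 2.8, 3.4, 2.6, 3.0]
print(f"d = {cohens_d(fys, control):.2f}")

# Pooled SD implied by the reported figures (0.1 grade points, d = 0.12).
print(f"implied pooled SD ~ {0.1 / 0.12:.2f}")
```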

Next, cumulative GPA was tested in the fourth and fifth hypotheses. Contrary to expectations, cumulative GPA was significantly higher for non-FYS students in the second semester, a 0.08 grade point advantage. However, FYS students caught up by the third term, when there was no statistically significant difference (H05). Statistically significant results for cumulative hours attempted and earned (H07, H08, and H011) favored FYS students. These results are consistent with retention to the next spring and second fall terms, which was significantly higher for FYS students.
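Cumulative GPA is a credit-weighted average of term GPAs, which is how one group can trail on cumulative GPA after two terms while leading on the second term's GPA. The sketch assumes cumulative GPA equals total quality points divided by total GPA hours, with pass/fail and zero-credit hours excluded as is common; the term GPAs and hours are hypothetical, and institutional GPA rules (e.g., grade replacement) may differ.

```python
def cumulative_gpa(terms):
    """Credit-weighted cumulative GPA.

    terms: list of (term_gpa, gpa_hours) pairs, where gpa_hours counts only
    graded hours that enter the GPA (an assumption; rules vary by institution).
    """
    quality_points = sum(gpa * hours for gpa, hours in terms)
    gpa_hours = sum(hours for _, hours in terms)
    return quality_points / gpa_hours

# Hypothetical groups: A starts lower, then leads in the second term.
group_a = [(2.80, 15), (3.05, 15)]
group_b = [(3.00, 15), (2.95, 15)]
for name, terms in (("A", group_a), ("B", group_b)):
    print(name, f"term 2 GPA = {terms[-1][0]:.2f}", f"cumulative = {cumulative_gpa(terms):.3f}")
```

Here group A leads on second-term GPA (3.05 vs. 2.95) yet trails on cumulative GPA (2.925 vs. 2.975), because its weaker first term still carries half the weight.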

Three qualitative responses were statistically significant. Two items favored nonparticipants (“I am very capable of succeeding at [university]” and “I know how to study to perform well on tests”), and one favored FYS students (“I will ask for help when needed”). FYS students are explicitly taught skills for achieving their academic goals and instructed in the campus resources available to them, but we do not know why the results present in this manner. Although effect sizes were small for all three survey items, the odds ratio showed that participants’ odds of strongly agreeing that they would ask for help when needed were 50% higher than nonparticipants’ odds. The other two odds ratios (0.84 and 0.86) were below 1, favoring nonparticipants.

Limitations

This study was conducted at a large public land-, sea-, and space-grant university with approximately 70,000 students. Results may not generalize to other institutions because of differences in physical environment, resources devoted to such a program, unique features of this FYS program compared with others, or other reasons. This FYS program includes peer mentors who serve as key elements of every class; mentors vary in how successfully they engage students and instructors, which may affect the outcomes of interest in this study. Also, students had attended three to seven class sessions of the FYS when the freshman survey was offered, so survey results may be affected by the near-concurrent FYS and by students not having completed it when responses were collected.

Although an effort was made to use all relevant variables associated with the outcomes of interest, other unmeasured factors may influence the association between program participation and outcomes. For example, we did not account for income level because we did not have access to such data. There were hundreds of instructors, and the fidelity of implementation of the FYS was not accounted for. However, after matching, we did not detect differences between the two groups on the characteristics we checked.

Control group students have access to many of the same resources as FYS students, so effect sizes for program participants may be attenuated. Quantitative outcomes were examined at multiple points after the program ended, and effect sizes typically attenuate as the time between the intervention and the measurement grows. Although the effects appear small, our results show that they persist even 1 year later.

Future Research

This analysis adds discrete quantitative and qualitative measures to the conversation, both important elements of a comprehensive analysis of FYS programs. Replicability is important, and we intend to examine the same outcomes for future student cohorts and subpopulations of students to determine whether intervention effects and effect sizes are consistent across groups. To extend this work, we intend to examine how students’ diverse background characteristics, and the intersections of those characteristics, relate to quantitative and qualitative outcomes. Finally, we intend to explore outcomes for students who engage in multiple HIPs.

Conclusion

High-impact practices are appealing because they purport to offer a high return on investment and to benefit all students, especially underserved students (Culver & Bowman, 2020; Fidler & Godwin, 1994). Assessing these practices is important to advance our knowledge of where and how these impacts occur. The research field on FYS must do justice to students by interrogating educational practices with high rigor. Generally, this study favored FYS program participants over equivalent nonparticipants, and statistical differences were observed between groups after controlling for background characteristics. Qualitative differences were observed immediately, whereas quantitative differences appeared in the following spring and fall semesters.

This study employed a matched case–control design to address the limitations of single-sample study designs, whose greatest unaddressed risks are threats to validity and to generalizability to similar student populations. Yet single-sample studies continue to represent the majority of published studies, and studies that employ experimental and quasi-experimental approaches remain scarce. The United States Congress (2015) supports the use of experimental and quasi-experimental designs to “permit the strongest causal inferences,” as do bodies such as the American Educational Research Association (2009). Our literature review identified disturbingly few studies underpinning the high-impact status conferred on FYS programs. Therefore, we join the chorus of calls for more rigorous research on FYS programs that compares participants with appropriate comparison groups. Contrasts highlight differences in direction and magnitude, and although this work is contextual and specific to our institution, it offers a model for others to adapt and follow. Collectively, such work will shed light on where and for whom impacts occur for programs that purport to be high impact.

FYS activities are developed, managed, and implemented in a unique manner at each institution of higher learning. Ward et al. (2020) studied an FYS curriculum that differed markedly from ours and focused on science, technology, engineering, and mathematics (STEM) students. Weiss et al. (2014) included a comprehensive student services component. These two examples illustrate that FYS programs require study in the unique contexts in which they are applied. This study adds value through the unique features of its FYS program.

Educational research has had limited impact on practice in recent decades, and the quality of education research must first improve (Lagemann, 2000; Penuel et al., 2020). In part, this is because researchers underuse strong study designs such as the quasi-experimental approach of this study. We recommend that institutions that have or are developing FYS adopt a conservative stance by considering these initiatives to be “proto-HIPs,” “emerging HIPs,” or “promising practices” until sufficient evidence warrants the high-impact designation. Educational research is most useful as practical research; that is, it should remain vigilant to threats to generalizability and especially to threats to validity. Our practical approach used comparison groups and made the evidence plain in this analysis.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.