Abstract

Universities can neither ban nor ignore generative AI (GenAI); they must govern and design for it. This paper argues that the core challenge is not ‘cheating’ per se but the misalignment between legacy assessment designs and AI-mediated learning. We contribute (1) a practical, tiered governance model that aligns policy, pedagogy, and assessment operations; (2) an assessment redesign heuristic that integrates authenticity, cognitive demand, and evidence provenance; and (3) a risk-mitigation view adapted from the Swiss-cheese model that places student learning, rather than surveillance, at the centre of integrity work. Building on recent philosophical critiques of instrumental responses to GenAI in education, we position assessment as a socio-technical system where teacher judgement, student agency, and tool affordances co-evolve. We illustrate the approach with ready-to-adopt patterns (e.g. oral defence with artefact trail; cohort-specific data briefs; constrained-tools practicals) and specify implementable governance levers (role clarity, template language, moderation workflows, analytics). The result is a coherent pathway ‘beyond bans’ toward trustworthy assessment that is educative, fair, and feasible at scale.

Keywords

Introduction

Generative artificial intelligence presents a philosophical and practical challenge on a scale not experienced since the start of the Enlightenment. – Henry Kissinger, Eric Schmidt and Daniel Huttenlocher (2023)

The rapid proliferation of generative AI (GenAI) tools, such as ChatGPT, since late 2022 has sparked a considerable wave of academic inquiry, with researchers eager to explore the transformative potential of GenAI in teaching and learning. The potential benefits of GenAI in education are profound. For example, GenAI-driven personalised learning systems can adapt in real time to students’ needs, providing tailored content and feedback that enhances engagement and supports diverse learning styles (Abdelghani et al., 2023; Cotton et al., 2024; Jauhiainen and Guerra, 2023; Sullivan et al., 2023). Research on GenAI integration in English as a Foreign Language (EFL) classrooms has revealed positive effects on student motivation (Kim et al., 2024) while GenAI-based language learning tools have been shown to enhance intrinsic motivation, boost self-efficacy, and facilitate personalised learning experiences (Moybeka et al., 2023). These systems promise to address long-standing challenges in education, such as the provision of individual instructional support and feedback, resolving the achievement gap and improving student retention, by offering individualised support that is difficult to achieve in traditional, and often resource-poor, classroom settings.

At the same time, this enthusiastic uptake of GenAI has exposed a fault line in university assessment, surfacing concerns about the disruptive impacts of GenAI technologies and raising critical questions about academic integrity, the reliability of AI-generated content, and the broader societal implications of GenAI’s growing influence (Peters et al., 2024). The result is a widening gap between legacy task designs and the realities of AI-mediated learning and work. For instance, as GenAI-driven tools become more prevalent, there is a risk that disparities in access to technology will exacerbate existing inequalities in education (Lodge, 2024). Students and educators in under-resourced settings could struggle to leverage these tools effectively, potentially widening the gap between those who have access to advanced educational technologies and those who do not (Perkins et al., 2024). Ethical and responsible use of GenAI is another major focal point of these concerns, particularly as reports emerge of GenAI confidently passing standardised exams (Chau et al., 2024; Hargreaves, 2023; Raftery, 2023; Savelka et al., 2023), drafting peer-reviewed scientific articles (Kacena et al., 2024), and impersonating humans on social media platforms (Hajli et al., 2022). The area in which these concerns are arguably felt most intensely is academic integrity, particularly in relation to the perceived rise in GenAI-assisted cheating on assessments (Lodge, Howard, et al., 2023).

Philosophical treatments of GenAI in education caution against purely instrumental responses that, for example, reduce teaching to prompt-engineering or policing. As Peters et al. (2024) argue, GenAI unsettles epistemic, ethical, and relational dimensions of education; what matters is not only what tools can do, but how their affordances reconfigure student agency, teacher judgement, and institutional responsibility. We take that cue in this paper: assessment must be approached as a socio-technical system, not a single tool choice or a detection arms race.

This paper makes three contributions. First, we propose a tiered governance model that connects high-level policy to the everyday mechanics of assessment (task briefs, moderation, documentation). Second, we offer an assessment redesign heuristic that integrates authenticity, cognitive demand, and evidence provenance. Third, we adapt the Swiss-cheese model into a governance heuristic: rather than centring integrity on surveillance, we layer educative prevention, explicit expectations, design features that facilitate deep engagement, and proportionate checking. We situate our stance in recent critical scholarship on GenAI and education (Peters et al., 2024), then outline the governance model with actionable levers. We then introduce the redesign heuristic with concrete patterns teachers can adopt immediately, treating integrity as a multi-layered defence that preserves trust while keeping learning central. We close with implementation guidance and implications for leaders, teachers, and students.

The initial response: Banning AI in education settings

A common first response to GenAI was to impose bans or blocks on its use by students and teachers. Schools and universities, motivated by integrity concerns, attempted to restrict access through policy prohibitions supported by technical controls and detection software (Shanklin, 2023; Volante et al., 2023). Yet in practice, such bans proved almost impossible to enforce, both because students and staff have ready access to personal devices and VPNs (Dwyer and Laird, 2024) and because reliable detection tools were not available. Predictably, this led to covert use rather than genuine prevention, heightening risks around data privacy and unsupervised application.

More importantly, prohibition discouraged transparency and professional dialogue about ethical and responsible use of GenAI. Students and educators who did use GenAI did so without guidance, while those interested in pedagogical experimentation were constrained by suspicion and fear of sanction (Evans, 2024). Consequently, this might have further stifled innovation and prevented the development of informed, ethical practices in these early moments. Bans might have reassured institutions in the short term, but they risked alienating learners and teachers from shaping appropriate GenAI use. The failures of prohibition illustrate why integration strategies must move beyond restriction and toward constructive governance and assessment redesign. Recognising these limitations, many institutions have made this shift from prohibition to more nuanced approaches that acknowledge GenAI’s inevitability and seek to embed it responsibly in teaching and assessment.

Embracing AI: Shifting perspectives in education

In response to the limits of prohibition, many institutions began shifting toward approaches that integrate GenAI in controlled and ethical ways. Rather than outright bans, schools and universities are developing clear usage guidelines, providing training, and fostering dialogue on how AI should be incorporated into assessment (Elwell, 2024; Moorhouse et al., 2023). Internationally, countries such as Japan, England, and the United States have issued guidance that reflects a similar pivot: recognising that students and educators are already using GenAI, and that policy must support rather than suppress this reality (Vidal et al., 2023).

In Australia, the Framework for Generative Artificial Intelligence in Schools (2023) (the Framework) positions integration as essential for equipping students with the skills and knowledge necessary to navigate and thrive in a world where GenAI is ubiquitous (Sharples, 2023). This integration goes beyond merely teaching students how to use GenAI tools; it involves fostering a deeper understanding of the underlying principles of AI more broadly, its potential applications, and its broader social and environmental implications (Chien, 2023; Cooper, 2023). These experiences not only prepare students for the demands of the future workforce (where it can be assumed that the use of GenAI to improve productivity and quality in professional work will be an expectation) but also encourage them to think critically about the role of GenAI in society and their responsibilities as informed citizens. Furthermore, this approach helps to demystify GenAI, making it more accessible and less intimidating for both students and educators.

While the Framework sets out important values such as equity, ethics, and privacy, it remains largely aspirational. Statements such as ‘Generative AI tools are used in ways that are accessible, fair and respectful’ could apply equally to education in general, without offering actionable steps for teachers. This gap between principle and practice underscores the need for governance models that specify concrete responsibilities across levels.

At the same time, GenAI is already being used in classrooms to support both learning and teaching. Distinguishing between educational use (supporting students to develop skills, reflect, and iterate) and professional use (enhancing productivity and output) provides a useful frame for guiding appropriate application. For higher education especially, this dual lens clarifies the task: prepare students not only to learn with GenAI but also to use it responsibly as a professional tool. These limitations of current frameworks highlight why a more detailed, tiered governance model is needed: one that translates broad aspirations into actionable levers at every level of the system.

Academic dishonesty in the age of AI

Educators rightly worry about assessment integrity in the GenAI era, but the evidence suggests familiar problems in a new guise. A content analysis of media coverage reported widespread concern about misconduct and the practical difficulty of detecting undisclosed GenAI use (Sullivan et al., 2023). Detection tools remain unreliable and can produce unfair bias against some student groups (Chaka, 2023; Liang et al., 2023); workarounds (e.g. paraphrasing) also undermine plagiarism checks (Baron, 2024). In response, many commentaries have advocated a return to fully invigilated, pen-and-paper exams; Sullivan et al. (2023) note this as a common prescription, but such moves risk narrowing what we assess and under-preparing students for AI-mediated professional contexts (Cader et al., 2023). Rather than doubling down on surveillance or attempting to ‘AI-proof’ every task, we position integrity as a design problem: make student thinking visible, document process, and normalise declared GenAI use where appropriate. As Cope and Kalantzis (in Peters et al., 2024: 840) point out, ‘Cheating… is the smallest of the problems for education created by GPTs… More than remembering stuff… we rely increasingly on digital devices as our cognitive prostheses… As soon as we expand our notion of knowledge from individual to collective, from personal memory to “cyber-social” knowledge systems, we run into much bigger problems with generative AI’. This stance sets up the practical contributions that follow: governance levers that clarify expectations, and an assessment redesign heuristic that emphasises cognitive demand, evidence provenance, and transparent AI affordances. Together, these shifts reduce single-point vulnerabilities while preserving learning value.

Academic dishonesty: A historical perspective

Concerns about cheating in education long predate GenAI. Research has consistently shown that dishonesty undermines formative and summative purposes of assessment, limiting meaningful feedback and distorting judgements of student achievement (Scott, 2016; Zhao et al., 2023). Rates of contract cheating, plagiarism, and exam misconduct have remained relatively stable over decades, though methods have evolved with technology: from copying in class, to online ghost-writing, to mobile phone use during exams (Dawson, 2021; McCabe et al., 2001). Studies highlight both individual factors (self-control, pressure to succeed, low school identification) and contextual factors (peer norms, institutional culture, parental expectations) as drivers of dishonest behaviour (Batool et al., 2011; Klik et al., 2023; McCabe et al., 2001; Waltzer et al., 2023; Yu et al., 2017). Cheating is not limited to the tertiary level. High school students, too, engage in widespread cheating despite recognising it as morally wrong (Galloway, 2012; Jensen et al., 2002). For these students, factors such as fear of failure, pressure to succeed, low assignment value, and a school culture that prioritises performance over actual learning are significant motivators (Galloway, 2012; Waltzer et al., 2023).

Historically, one of the most common techniques for cheating has been copying from classmates during exams, either by directly observing their answers or by exchanging answers through subtle gestures and signals (Adams, 2011). More recently, mobile phones have become a prevalent means of cheating, allowing students to discreetly access information, search for answers, or communicate with others during exams. The shift to online learning and assessments, particularly during the COVID-19 pandemic, has introduced new challenges for maintaining academic integrity. The remote nature of online exams can make it easier for students to use unauthorised resources, collaborate with peers, or even have someone else take the test on their behalf (Verhoef and Coetser, 2021), although technology-enabled remote invigilation intended to address this issue continues to evolve. Lipson and Karthikeyan (2016) describe more sophisticated cases in which students employ elaborate schemes, such as coordinating with others to share answers or using devices specifically designed to be discreet during exams. These evolving techniques reflect students’ ability to adapt to and circumvent traditional monitoring practices, making it increasingly challenging for educators to detect and prevent dishonest behaviour.

Importantly, cheating has historically been normalised to some extent, with tolerance among students and even teachers increasing over time (Schab, 1991; Šorgo et al., 2015). This suggests that GenAI is not creating a new problem but providing another outlet for enduring behaviours. The key issue is not the technology itself but the design of assessments and the learning cultures in which they are situated (Lang, 2013). Recognising this continuity underscores our argument: effective responses to GenAI must target underlying drivers of dishonesty and redesign assessments to make student thinking visible, rather than attempting to ban or outpace the tools. This historical continuity highlights that the emergence of GenAI does not signal a wholly new integrity crisis but instead has amplified existing vulnerabilities.

The future of learning and assessment in a GenAI world

As educators navigate this complex landscape, the task is not to eliminate AI but to develop strategies that uphold standards while using its potential to enrich learning. The persistence of dishonest behaviours across decades (Dawson, 2021; McCabe et al., 2001) underscores that GenAI is less a new crisis than another expression of long-standing vulnerabilities. The root causes, such as pressure to succeed, low engagement, and misaligned assessments, remain. Addressing these drivers requires redesign, not prohibition.

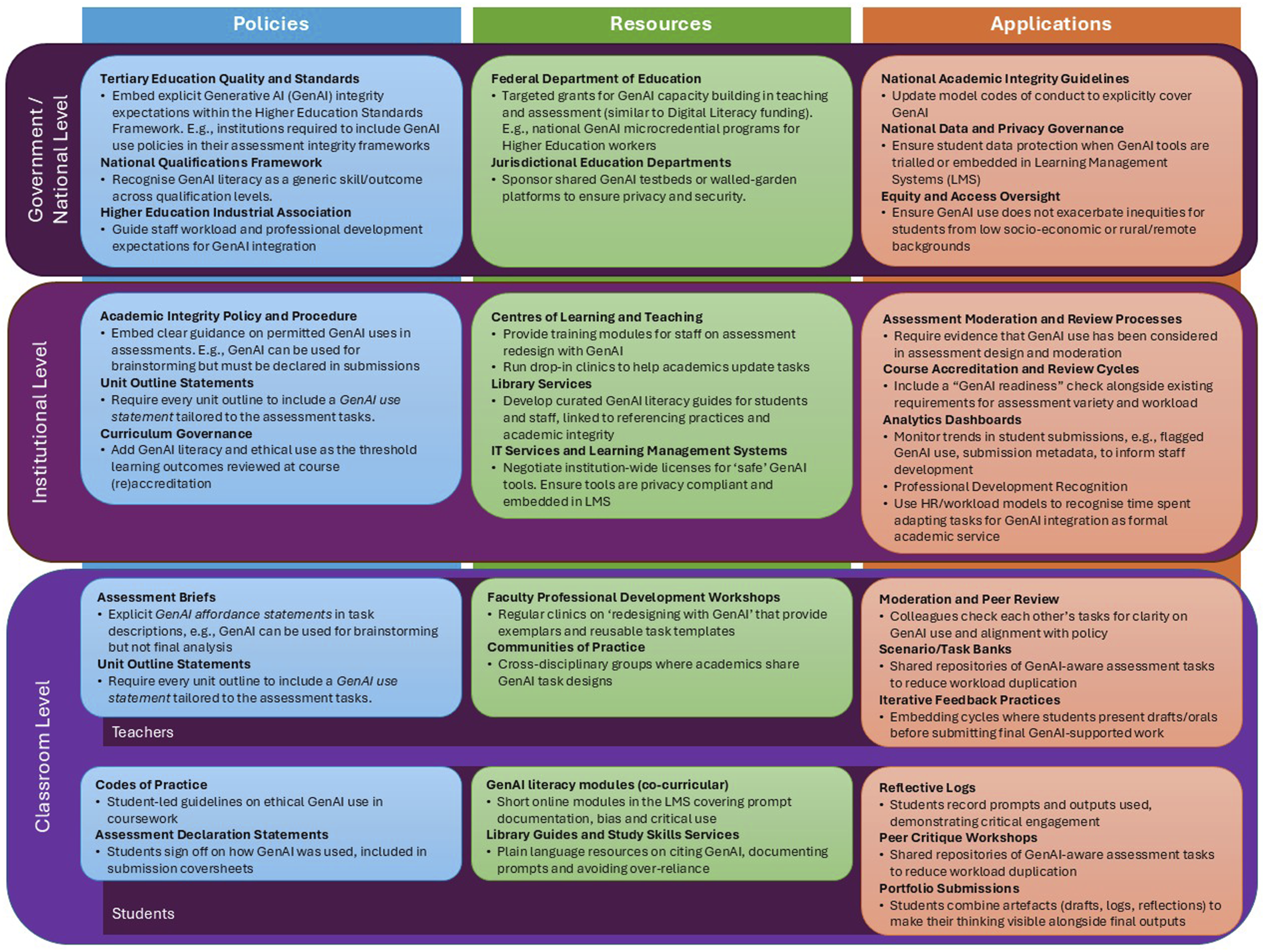

Current policy frameworks (e.g. Australian Framework for Generative Artificial Intelligence in Schools, 2023) articulate essential values of equity, ethics, and integrity, yet often stop short at aspirational statements. Without actionable guidance, teachers are left to bridge the gap between principle and practice. To address these gaps, while drawing on existing frameworks and research, the authors propose a tiered governance model for supporting and guiding the incorporation of GenAI into educational assessments (Figure 1). Designed to bridge the divide between high-level policy and classroom practice, this framework ensures that each level, from government to individual educators and students, contributes to a cohesive, ethical, and practical AI integration. It draws from established models like the Australian Framework for Generative AI in Schools (2023), GenAI Strategies for Australian Higher Education: Emerging Practice (2024), the AI Assessment Scale (AIAS) (Perkins et al., 2024), and the ICE model (Volante et al., 2023), grounding its recommendations in both policy and pedagogical theory. The model operates as an interconnected system in which each tier is reliant on the others; no single level can achieve success without the support and alignment of the entire model. This framing recognises governance as a socio-technical system in which rules, norms, and human–AI practices interact (Peters et al., 2024). Rather than remaining abstract, each tier has concrete levers that enable the integration of GenAI into assessment.

Figure 1. Tiered governance framework for integrating Generative AI (GenAI) in assessment. This framework depicts the interdependent responsibilities of government, institutional, and classroom levels in embedding GenAI within educational assessment. Policies provide ethical guardrails and national consistency; institutional processes translate these into operational resourcing and oversight; and classroom-level practices enact authentic, educative uses of GenAI. Alignment across levels fosters coherent governance, bridging aspirational policy with classroom realities and promoting integrity through system-wide design rather than top-down control.

At the government and national level, policy levers include embedding explicit GenAI expectations in Higher Education standards frameworks (e.g. the Tertiary Education Quality and Standards Authority – TEQSA), incorporating AI literacy as a generic skill in national qualifications frameworks (e.g. the Australian Qualifications Framework), and updating professional development expectations through sector bodies (e.g. the Australian Higher Education Industrial Association). Resources are mobilised by the federal education department, which can fund GenAI microcredentials and capability-building grants, and by jurisdictional departments that sponsor safe GenAI testbeds. Applications are evident in the work of relevant national agencies, such as TEQSA, Universities Australia and the Office of the Australian Information Commissioner, which ensure that national integrity codes, privacy regulations, and equity programs address GenAI directly.

At the institutional level, policy levers include embedding GenAI Use Statements into unit outlines and revising academic integrity procedures. Resources are provided through centres for learning and teaching, library services, and IT governance, which deliver training, develop GenAI literacy guides, and secure compliant tools. Applications include assessment moderation and review processes, course accreditation checks that include ‘GenAI readiness’, and analytics dashboards that surface patterns of GenAI use.

At the classroom level, educators act as policy agents by including explicit GenAI affordance guidance in task briefs and rubrics. Resource levers are delivered through professional development workshops and communities of practice. Applications include moderation, peer review, shared task banks, and iterative feedback practices that normalise transparent GenAI use. For students, governance takes the form of codes of practice, assessment declarations, GenAI literacy modules, and reflective portfolios. These practices ensure that governance is lived at the point of teaching and learning, not only enacted at policy level.

At the same time, purely restrictive approaches to assessment are unsustainable, and there is still a notable gap in the literature when it comes to understanding how educators are modifying existing assessments to incorporate GenAI. Frameworks such as the AI Assessment Scale (Perkins et al., 2024) and many similar adaptations provide useful starting points (Cacho, 2024; Elwell, 2024; Firth et al., 2024; Kılınç, 2024; Roe et al., 2024). However, their application in real-world settings remains underexplored, and there is still much work to be done in translating these theoretical models into practical, actionable strategies; in particular, more detail is needed on how to adapt traditional tasks in practice.

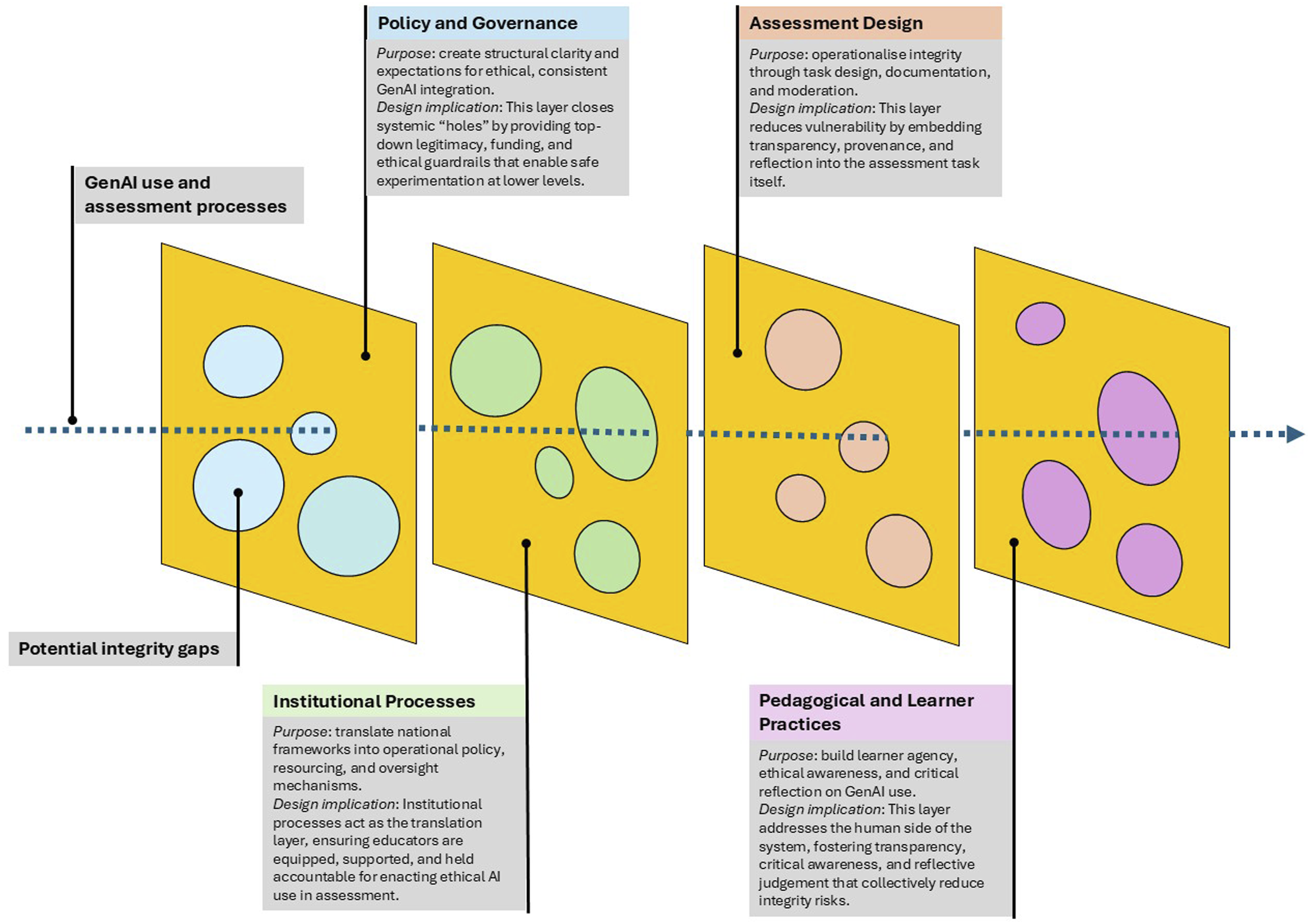

The shrinking range of ‘AI-proof’ tasks has led educators to explore more constructive designs that embed AI to foster critical thinking and higher-order learning (Beck and Levine, 2024; Volante et al., 2023). Authentic assessment remains the most robust mechanism for sustaining academic integrity in an era of generative AI. Moreover, effective models of authenticity emphasise the creation of assessment tasks that mirror the ways in which skills will be applied in practice (Gulikers et al., 2004). Yet authenticity alone is insufficient without systemic alignment that connects governance, institutional processes, and classroom practice. To capture this alignment, the Swiss cheese heuristic is reinterpreted here as a design-based framework for governance rather than a model of error prevention (Figure 2).

Figure 2. The Swiss cheese heuristic for governance of AI-integrated assessment. Adapted from Reason’s (2000) system safety model, this heuristic represents how alignment across four layers – policy and governance, institutional processes, assessment design, and pedagogical and learner practices – creates resilience in GenAI-integrated assessment systems. Each layer contains inherent vulnerabilities (‘holes’), but coherence between layers mitigates risk, ensuring transparency, authenticity, and shared responsibility for ethical AI use. Together, these layers operationalise the principle of resilience through alignment rather than restriction.

Originally developed by Reason (2000) to explain how accidents occur through cumulative weaknesses in organisational defences, the Swiss cheese model is adapted in this paper to visualise how assessment integrity can be strengthened through layered, interdependent safeguards. Figure 2 illustrates how the same four levels identified in the governance model (Figure 1) operate as layered filters for integrity and design alignment, where coherence across levels creates resilience rather than restriction. Each slice represents a different level of the system: policy, institutional processes, assessment design, and pedagogical and learner practices. The ‘holes’ signify inevitable vulnerabilities: gaps in regulation, inconsistencies in implementation, or moments of ambiguity in classroom use. When layers are misaligned, these holes can line up, creating pathways for breaches of integrity or inequitable access. However, when the layers operate coherently, weaknesses in one domain are offset by strengths in another. For example, clear institutional policy on permissible GenAI use can complement assessment designs that require evidence of process and artefact provenance, such as prompt logs or draft portfolios. Likewise, reflective and peer-informed pedagogical practices can reinforce task-level transparency, ensuring that students engage critically rather than covertly with GenAI tools. In this way, integrity is achieved not through surveillance or restriction but through distributed responsibility and design alignment.
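The offsetting effect described above can be made concrete with a small numerical sketch. The following Python example is illustrative only and is not part of Reason’s (2000) model or of the figures in this paper: the per-layer ‘gap’ probabilities are hypothetical values chosen for arithmetic convenience, and the function names (`residual_risk`, `residual_risk_without`) are our own. It also assumes that layers fail independently, which corresponds to the well-aligned case; where layers are misaligned, their holes are correlated and the combined risk would be higher than this calculation suggests.

```python
from math import prod

# Hypothetical probability that an integrity issue slips through each layer.
# These numbers are illustrative assumptions, not empirical estimates.
LAYER_GAPS = {
    "policy and governance": 0.5,
    "institutional processes": 0.4,
    "assessment design": 0.3,
    "pedagogical and learner practices": 0.2,
}


def residual_risk(gaps):
    """Chance an issue passes every layer, assuming layers fail independently."""
    return prod(gaps.values())


def residual_risk_without(gaps, layer):
    """Same calculation with one named layer removed from the stack."""
    return prod(p for name, p in gaps.items() if name != layer)


if __name__ == "__main__":
    # With the assumed values above, all four layers together give about 0.012.
    print(f"All layers: {residual_risk(LAYER_GAPS):.3f}")
    for name in LAYER_GAPS:
        print(f"Without {name}: {residual_risk_without(LAYER_GAPS, name):.3f}")
```

Under these assumed values, removing any one layer raises the chance that an issue passes unnoticed, which is the sense in which no single layer carries integrity on its own.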

This adaptation also reframes GenAI not as a hazard to be blocked but as a catalyst for re-examining how transparency, authenticity, and cognitive demand are embedded in assessment systems. It moves the focus away from blaming individuals (the person-centred approach) and toward strengthening the integrity of the assessment process (the system-centred approach). The heuristic thus functions as both an analytic and practical tool: it invites educators and policymakers to consider where risks are likely to emerge, and where new design opportunities can create more trustworthy and educative uses of GenAI.

While the Swiss cheese heuristic highlights how alignment across governance layers can strengthen assessment integrity, educators also need design principles that can be enacted in real contexts. Three considerations are particularly important for integrating GenAI ethically and productively: (1) cognitive demand, ensuring that tasks require forms of reasoning, synthesis, and judgement that exceed current AI capabilities; (2) evidence provenance, embedding opportunities for students to document and reflect on their process (e.g. through draft artefacts or prompt logs); and (3) transparency of AI affordances, making AI use explicit within task design, so that engagement is guided rather than concealed.
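One way to operationalise these three considerations is as a simple checklist applied to a draft task brief. The sketch below is a hypothetical illustration in Python, not a validated instrument: the `TaskBrief` fields and the `unmet_principles` function are names introduced here for the example, and the yes/no treatment of cognitive demand is a deliberate simplification of what is, in practice, a matter of teacher judgement.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TaskBrief:
    """Hypothetical summary of an assessment task brief (illustrative fields)."""
    title: str
    # Cognitive demand: does the task call for reasoning, synthesis, or judgement?
    requires_judgement: bool = False
    # Evidence provenance: process artefacts students submit, e.g. prompt logs.
    process_evidence: List[str] = field(default_factory=list)
    # Transparency of AI affordances: the stated GenAI expectations, if any.
    ai_use_statement: str = ""


def unmet_principles(brief: TaskBrief) -> List[str]:
    """Return the design principles that the brief does not yet address."""
    gaps = []
    if not brief.requires_judgement:
        gaps.append("cognitive demand")
    if not brief.process_evidence:
        gaps.append("evidence provenance")
    if not brief.ai_use_statement.strip():
        gaps.append("transparency of AI affordances")
    return gaps


if __name__ == "__main__":
    draft = TaskBrief(
        title="Oral defence with artefact trail",
        requires_judgement=True,
        process_evidence=["prompt log", "draft portfolio"],
    )
    # The draft has no GenAI use statement yet, so one principle is flagged.
    print(unmet_principles(draft))
```

A check of this kind could sit within a moderation workflow as a prompt for discussion rather than as an automated gate.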

These principles move beyond general appeals for ‘authenticity’ (Gulikers et al., 2004) by specifying where integrity and learning value are achieved; that is, through the process, not just the product, of assessment. Nevertheless, educators must remain attentive to disciplinary and professional contexts. Where graduates are expected to use AI tools routinely, integrating GenAI within assessment tasks is both authentic and equitable. Conversely, in professions requiring rapid, unassisted decision-making, overreliance on AI during assessment may undermine genuine preparedness. The challenge is to calibrate assessment design so that it mirrors the cognitive and ethical demands of future professional practice.

Conclusion

The integration of GenAI into educational settings presents both challenges and opportunities. While AI-assisted cheating has intensified concerns about academic integrity, it is essential to recognise that cheating has long been a persistent issue driven by underlying motivational and systemic factors. Technology has merely introduced new methods rather than new motives. Rather than focussing narrowly on ‘AI-proofing’ assessments, educational institutions have an opportunity to rethink how GenAI can enhance, rather than compromise, learning. Strategies such as the Swiss cheese heuristic illustrate how layered, authentic design can mitigate risk through alignment rather than restriction.

A tiered governance model can further strengthen institutional responses by ensuring that policies, practices, and technologies are aligned at every level. At the institutional level, governance should establish clear policies and guidelines that promote transparency and trust; at the program level, academic leaders should support assessment redesign aligned with disciplinary contexts; and at the classroom level, educators should retain flexibility to implement AI-enhanced assessments tailored to their learning outcomes. Together, these levels create coherence between innovation and accountability.

However, the integration of GenAI in assessment must be approached with caution. A critical consideration is whether students, as future professionals, will consistently have access to GenAI in their roles. Where such access is expected, such as in research, policy, data analysis, and design, it may be both authentic and equitable to allow GenAI use in assessment, reflecting professional realities. Conversely, in disciplines that require rapid, unassisted decision-making, such as teaching, healthcare, or emergency response, heavy reliance on GenAI could leave graduates underprepared for the immediacy of professional judgement. Future research should therefore examine what authentic assessment looks like in contexts requiring real-time performance, exploring alternatives such as oral examinations, simulations, or video-based scenarios that better capture situational reasoning and adaptive expertise.

Equally important is the evolving relationship between GenAI and feedback. Unlike static or transactional technologies such as plagiarism detectors or calculators, GenAI interacts dynamically, generating alternatives, explanations, and critiques in dialogue with users (Lodge et al., 2023; Lodge, Howard, et al., 2023; Mollick, 2024). Students are therefore likely to perceive GenAI as a responsive companion rather than a neutral instrument, and one that offers reassurance, scaffolding, and iterative feedback (Pavlovic, 2024). This emerging relationship blurs traditional boundaries of formative assessment, where feedback has historically flowed from teacher to student. As students increasingly turn to GenAI for cognitive off-loading or formative guidance (Huynh, 2024), educators face the challenge of designing assessments that harness this interactivity to promote reflection and agency rather than dependency.

Ultimately, the goal is not to exclude GenAI but to embed it thoughtfully and to cultivate assessments that balance technological affordances with human judgement, creativity, and ethical awareness. By reframing GenAI as a collaborative partner in learning and assessment, educators can move beyond defensive policies toward a model of trust, transparency, and shared responsibility, one that prepares students to engage critically, confidently, and responsibly in a world increasingly shaped by intelligent systems.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.