Abstract

Generative AI (GenAI) has become popular with many university students since late 2022 and is now part of their daily lives. To use GenAI tools effectively and equitably, and to meet industry expectations, students should be supported to develop GenAI literacy skills during their time at university. Although some universities have provided AI literacy training courses, such courses are not yet widely available across the higher education sector, and GenAI literacy courses in particular remain scarce. More importantly, it is unclear, from the student perspective, which knowledge and skills GenAI literacy should include. Building a strong understanding of student perceptions in this area is critical, because it can improve the appropriateness and relevance of course content and promote student engagement. Adopting the four-dimensional AI literacy framework as its theoretical foundation, this paper aims to address these gaps by exploring the perceptions of university students on GenAI literacy in the UK and Hong Kong. Survey data from 234 students were collected and analyzed using descriptive analysis and the Kruskal–Wallis test. The results show that factors such as country of study and prior learning about AI significantly influenced GenAI literacy, whereas age and educational level did not have a significant effect. Building on the findings and the theoretical foundation, this paper proposes a new GenAI literacy framework. This framework revises the four-dimensional AI literacy framework and sets out the essential GenAI knowledge and skills for university students. It also acknowledges the impact of macro, meso, and micro factors on the development of student GenAI literacy.

Introduction

Artificial Intelligence (AI) plays a significant role in Industry 4.0 and is now widely used in business. Data from Statista (2023) suggest that the global AI market will grow from nearly US$100 billion in 2021 to nearly US$2 trillion in 2030. The rapid growth of generative AI (GenAI) has further accelerated AI adoption in major industries. For example, over 43% of employees globally have used ChatGPT and other large language models, such as Google Gemini and Copilot, to help them with their daily work. In higher education, GenAI is believed to have great potential to transform not only the way universities teach but also the way students learn. Recent research has recommended a range of approaches to facilitate student learning, such as self-paced adaptive quizzes (Dijkstra et al., 2022), peer assessment evaluation (Jia et al., 2021), conversational partners (El Shazly, 2021), and computer code explanation and debugging (MacNeil et al., 2022). Because GenAI tools have user-friendly interfaces and do not require specialized AI knowledge or coding skills, they quickly became very popular with university students, both for their studies and in daily life.

The rapid rise of GenAI in work, study, and daily life places demands on users’ GenAI literacy skills and competences and will inevitably have a major impact on the employability of university graduates (O’Dea, 2024). As with other disruptive technologies, GenAI has both positive and negative effects on society. On the positive side, it is efficient at brainstorming new ideas, creating document outlines, translating, and producing multimedia. On the negative side, as previously identified in the literature, there are major ethical concerns associated with GenAI (O’Dea and O’Dea, 2023). Some students have already used ChatGPT to generate parts of essays or entire essays. There are also problems with output quality, and many students are unaware of the importance and necessity of fact-checking. In addition, evaluating and selecting the most appropriate GenAI tools, and optimizing prompts to achieve the desired outputs, are among the main challenges students face.

Consequently, it is essential for universities to support students in developing GenAI literacy. In the pre-GenAI era, there was already research on AI literacy (Long and Magerko, 2020; Ng et al., 2021), and researchers offered a variety of definitions of the term. Kandlhofer and colleagues (2016) consider AI literacy to be the technological knowledge and understanding underpinning AI applications and services. Long and Magerko (2020) define AI literacy as competencies that enable users to understand, apply, and evaluate AI tools at work and in everyday life. In addition to these more general definitions, some research specifies the competencies AI literacy should comprise. For example, Ng and colleagues (2021) propose four competences, namely, knowledge and understanding of AI; use and apply AI; evaluate and create AI; and AI ethics. The definition offered by Zhang and colleagues (2023) includes three main competences, namely, AI concepts (e.g., a basic understanding of AI); ethical and societal implications; and AI career futures. Moreover, it has been recognized that AI literacy competencies and skills are relevant to all users and should not be limited to computer scientists and AI professionals (Laupichler et al., 2022).

However, it is worth noting that AI literacy and GenAI literacy are different. The former emphasizes technical skills. Users are normally expected to master interdisciplinary skillsets encompassing machine learning, deep learning, mathematics, statistics, and computer science, so that they are able to understand, deploy, and configure AI solutions meaningfully. For this reason, the use of AI tools has tended to be limited mainly to users with expertise in Science, Technology, Engineering, and Mathematics (STEM) subjects. GenAI literacy, however, focuses more on developing awareness of the ethical and social implications of GenAI and on learning how to use it critically and responsibly. This is because, as discussed above, GenAI tools are designed for users from non-STEM backgrounds as well. They are highly intuitive and can readily produce text, image, video, or audio responses. For GenAI users, possessing any of the interdisciplinary skillsets mentioned above can be helpful but is not essential. Compared with the existing literature on AI literacy, less attention has been paid to GenAI literacy.

Another gap in the literature is insufficient attention to the student perspective on GenAI literacy, as existing research primarily focuses on the educator perspective. For example, Chiu et al. (2023) discussed the impact of GenAI on practices, policies, and research directions, using ChatGPT and Midjourney. Lim et al. (2022) adopted a theoretical lens to draw implications for the future of education from the perspective of management educators. However, gaining student views and understanding how students use GenAI is equally important, as this may enable universities to provide sufficient GenAI literacy training and resources and to support students in using GenAI ethically and equitably in their studies and future work.

Using the four-dimensional AI literacy framework as the theoretical foundation, this paper aims to address these gaps by exploring the perceptions of university students in the UK and Hong Kong on GenAI literacy across the framework’s four dimensions. The framework was proposed by Ng and colleagues (2021) and contains four dimensions, namely knowledge and understanding of AI; use and application of AI; evaluation and creation of AI; and AI ethics. It was considered appropriate mainly because it focuses specifically on AI literacy and has been applied across different university contexts.

The United Kingdom and Hong Kong were chosen for this study partly because both are keen to adopt AI in the workplace and to develop AI competences among university graduates. For example, universities in the United Kingdom drew up a set of guidelines to foster AI literacy among students and staff in support of learning, teaching, and assessment (Department for Education, 2024). Universities in Hong Kong have already integrated AI literacy into their existing curricula (Ng et al., 2023a; Kong et al., 2023). In addition, the United Kingdom and Hong Kong represent different levels of AI capacity internationally in areas such as talent, infrastructure, research, and development. For instance, in the Global AI Index (2023), the United Kingdom ranks fourth overall and Hong Kong 32nd. On the commercial indicators, however, which focus on the level of startup activity, investment, and business initiatives based on AI, the two regions are comparable. This aligns with a report by Stanford University showing that Hong Kong has demonstrated the greatest growth in industrial AI adoption worldwide, with the United Kingdom ranking fourth.

The importance of AI literacy education has been recognized, and some universities now offer AI literacy courses to students. Building on what has already been identified in the literature, this paper aims to shed further light on how individual differences and perceptions among students may influence their GenAI literacy competences and development. Two research questions are proposed:

• How do university students in the United Kingdom and Hong Kong perceive their level of GenAI literacy?
• How do demographic factors (i.e., country/region, prior AI training, gender, and subject discipline) affect university students’ GenAI literacy?

Literature review

Current research on AI literacy

AI literacy is not a new topic, but it has received renewed attention since the release of ChatGPT in November 2022. One popular trend in this field is the publication of exploratory or scoping reviews, such as those by Laupichler and colleagues (2022), Ng and colleagues (2021, 2023b), and Zawacki-Richter and colleagues (2019). These papers lay the groundwork by summarizing and evaluating the existing literature, identifying gaps, and providing recommendations for future research. They indicate that research on AI literacy is still at an early stage and that the field would benefit from more empirically rigorous and practically relevant studies. Increasingly, there are calls to reconceptualize AI literacy to incorporate recent developments in GenAI (Koh and Doroudi, 2023).

In addition, as discussed above, some papers, including the work of Kandlhofer and colleagues (2016), Long and Magerko (2020), and Ng and colleagues (2021), focus on defining AI literacy or proposing the skills it should encompass. These definitions are often used as the reference for developing AI curricula at the undergraduate or postgraduate level (Chiu, 2021; Lin and Van Brummelen, 2021; Ng et al., 2023a; Southworth et al., 2023). The courses can be broadly categorized into two groups: those that focus mainly on the technical skills of AI and those that combine technical and non-technical skills (Laupichler et al., 2022; Long and Magerko, 2020; Southworth et al., 2023; Ng et al., 2023b). The first category covers topics such as machine learning, deep learning, robotics, and programming concepts. The non-technical skills in the second category include ethical, problem-solving, and creative skills, and users are also expected to develop awareness of the social responsibilities involved in using GenAI (Kong et al., 2023). For example, the University of Florida (Southworth et al., 2023) developed a multidisciplinary, campus-wide AI curriculum. Designed for undergraduate students with diverse technical backgrounds, it focuses on developing their technical and ethical skills, and its AI courses attracted high enrollment. A similar AI course was introduced for postgraduate students studying Radiology at City, University of London (van de Venter et al., 2023), with particular attention to applications of AI in medical care and medical imaging. Students felt that the course offered a valuable opportunity to acquire AI skills relevant to healthcare. The University of Hong Kong lifted its ban on AI usage in August 2023 and introduced new policies to fully integrate GenAI into learning and teaching. Training and online courses were then provided to equip students and staff with the knowledge and tools needed to be creative and efficient in designing teaching and learning activities (HKU, 2023a). Nevertheless, despite the recent surge of interest and effort, research on AI curricula in higher education remains limited, and the extent to which students achieve the intended learning outcomes remains largely uninvestigated.

The four cognitive domains of the AI literacy framework

The theoretical grounding of this paper is the four-dimensional AI literacy framework proposed by Ng and colleagues (2021). Based on the results of an exploratory review, the framework identifies four dimensions for fostering AI literacy, described below.

Knowledge and understanding of AI refers to acquiring fundamental knowledge of AI and of how to use AI tools. Fundamental knowledge here means “a person’s understanding of the function and operation of currently available technology and applications on that technology, for example, an understanding of how to operate a tablet, download an app, and share a screenshot of something made in that app” (Yu and Golden, 2019).

Use and application of AI describes an individual’s understanding of how particular AI tools can be used in different scenarios to help them complete tasks efficiently and creatively.

Evaluation and creation of AI is concerned with selecting the most appropriate AI tools and analyzing and evaluating AI outputs. Particular attention is paid to evaluating outputs, because some GenAI outputs can be fabricated or incorrect even though they appear well formatted and authentic. This phenomenon, known as hallucination, has gained wider recognition, as has the related phenomenon of deepfakes, which concern mainly video (Birhane et al., 2023). Compared with hallucinations, deepfakes are more malicious and harder to detect.

AI ethics can be viewed as an umbrella term concerned with areas such as fairness, accountability, and transparency. Fairness refers to explanations of the approach taken to processing and of the outcomes of that processing. Accountability describes the roles and responsibilities relating to AI systems or tools and the outcomes they produce. Transparency refers to the accessibility of information about both the production and the deployment of particular AI tools (Memarian and Doleck, 2023). Prominent issues raised in relation to AI ethics include content bias and ownership. For instance, GenAI outputs are likely to be biased if the training data sets are non-representative; known biases include gender and cultural bias (Baker and Hawn, 2021). To date, there does not appear to be a universal global rule on intellectual property rights (IPR) in the era of GenAI, and some important questions remain unanswered. For instance, who owns the copyright of GenAI productions, and are these productions protected under IPR laws?

This framework was considered appropriate because this study aims to understand students’ perceptions of GenAI literacy in higher education and how they use GenAI in their studies and daily life. Although the framework was developed in the pre-GenAI era, its four dimensions cover the technical and application skills university students need to develop with regard to AI literacy and hence can be applied to GenAI. In addition, the four-dimensional framework has been regularly adopted as the foundation for AI curriculum design (Bellas et al., 2023; Celik, 2023; Southworth et al., 2023) and assessment methods (Laupichler et al., 2022; Moorhouse et al., 2023).

Apart from this four-dimensional framework, there are other AI literacy frameworks in the literature. For example, the one proposed by Zhang and colleagues (2023) comprises three domains, namely, technical concepts and processes, ethical and societal implications, and career futures in the AI era. Although the two frameworks share similarities, the latter targets K-12 education and focuses more on developing foundational knowledge and skills. Based on a bibliometric analysis, Cetindamar and colleagues (2022) also proposed an AI literacy framework containing four dimensions, namely technology-related, work-related, human–machine-related, and learning-related capabilities. However, this framework is primarily concerned with commercial contexts and is designed to upskill employees.

Methods

Participants and demographic details

Convenience sampling was used in this study to reach a large population of students. Participants were undergraduate and postgraduate students studying in the United Kingdom or Hong Kong. In the United Kingdom, an invitation email was circulated through the authors’ academic network, whose members then distributed it to their students. Similarly, in Hong Kong, the invitation was sent to students either directly or through instant messaging software from June to October 2023. The sample consisted of 234 participants.

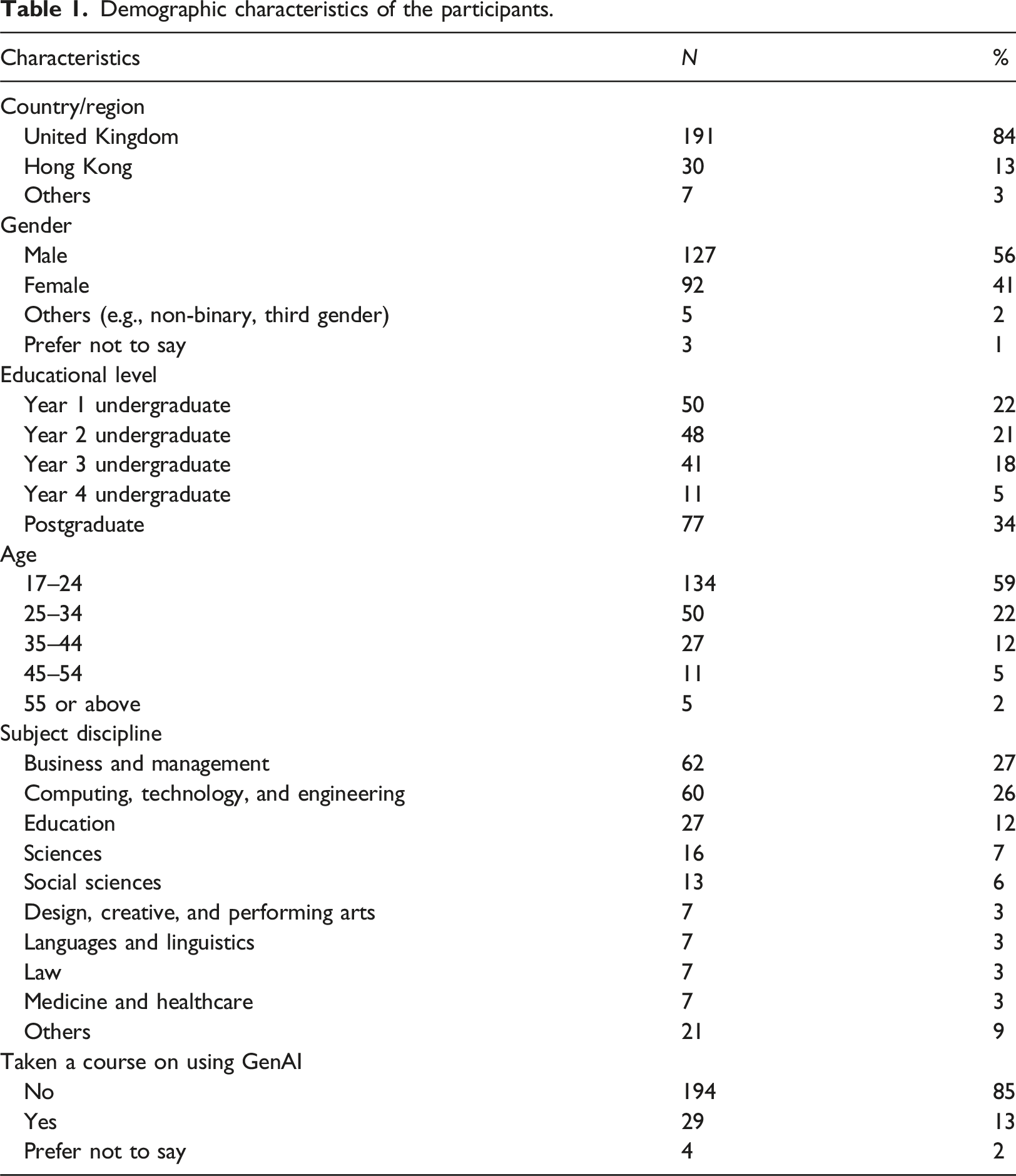

Demographic characteristics of the participants.

Study design and procedure

Quantitative data were collected via an online survey platform, Qualtrics. The survey included five sections and 24 items. The first section asked about six demographic characteristics of the participants (i.e., country/region of origin, gender, education, age, subject discipline, and whether they had taken a course in AI). These were examined against four dimensions corresponding to the four competencies of Ng et al.’s (2021) framework, namely, “know and understand AI,” “use and apply AI,” “evaluate and create AI,” and “AI ethics,” as explained in the section on the framework above.

The AI literacy questionnaire has been used in prior research (Wu et al., 2023), and this study adopted the existing questionnaire rather than developing a new one. Only 14 questions were chosen to balance response rate and research purpose, which may limit exploratory and confirmatory factor analysis (Hageman and Cheon, 2023); this limitation is discussed in the Limitations section. Instead of developing an original scale, this study focused on assessing the reliability and validity of the established measure. The adapted version was validated through expert review for relevance, wording, and clarity and had a Cronbach’s alpha of 0.82, indicating good internal consistency.
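Cronbach’s alpha compares the variance of individual items with the variance of respondents’ total scores: alpha = k / (k - 1) × (1 - sum of item variances / variance of total scores), where k is the number of items. The Python sketch below illustrates this standard formula using only the standard library; it is not the authors’ analysis script. The respondents-by-items data layout, the function name `cronbach_alpha`, and the sample responses are hypothetical.

```python
import statistics


def cronbach_alpha(rows):
    """Cronbach's alpha for a respondents-by-items matrix of numeric scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores)),
    where k is the number of items. Sample variances (n - 1) are used
    throughout; the ratio is identical with population variances.
    """
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*rows))              # one tuple of scores per item
    totals = [sum(row) for row in rows]   # each respondent's summed score
    item_var = sum(statistics.variance(item) for item in items)
    total_var = statistics.variance(totals)
    return k / (k - 1) * (1 - item_var / total_var)


# Hypothetical five-point Likert responses: 4 respondents x 3 items.
responses = [
    [4, 5, 4],
    [3, 3, 4],
    [2, 2, 1],
    [5, 4, 5],
]
print(round(cronbach_alpha(responses), 2))
```

Values around 0.7 or higher are conventionally read as acceptable internal consistency; the 0.82 reported above would fall in that range.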

Previous research conducted in Hong Kong (Memarian and Doleck, 2023; Ng et al., 2024) similarly reported favorable validity and reliability for AI literacy measures, further supporting their applicability in diverse learning environments. “Know and understand AI” was measured using five items (Q.9–13). Answers were rated on a five-point scale ranging either from “strongly agree” to “strongly disagree” or from “very comfortable” to “very scared.” “Use and apply AI” was assessed using four items (Q.14–17), which explored students’ experience of using GenAI tools in their studies and daily life (e.g., how often they used the tools and what they used them for). “Evaluate and create AI” was measured using three items (Q.18–20), and “AI ethics” using two items (Q.21–22). These questions were adapted from Memarian and Doleck (2023) and from the authors’ previously validated questionnaire (Ng et al., 2023). Apart from assessing students’ perceived AI literacy, some follow-up questions were asked to help explain the quantitative findings (e.g., how students decide which software to use and the reasons behind some of their responses).

This study selected only some of the questions because we were conscious of a dilemma: asking too many questions would lower the response rate, while asking too few may not elicit the required information. The 14-question survey was chosen to balance response rate and data requirements. However, this limited selection of questions may hinder exploratory and confirmatory factor analysis (Hageman and Cheon, 2023). To address this, prior studies adopted the Kruskal–Wallis test (

The study was approved by the ethics committee, and the survey was conducted in the United Kingdom and Hong Kong simultaneously between 1 and 30 June 2023. Before taking the survey, participants were informed of the research background and the purpose of the study and were asked to give their informed consent to participate.

Data analysis

A mix of descriptive and inferential statistics was used to analyze the results. Descriptive measures such as frequency, mean, and standard deviation were computed to give an overview of the observed responses and a better understanding of the data. Inferential statistics, in turn, involve drawing conclusions or making inferences from the observed data; these inferences build on the descriptive statistics, which summarize and characterize the data set (Braun and Clarke, 2021). The analysis was based on the demographic factors collected via the survey, which were used to test the relationships between respondents’ characteristics and their patterns of answers across the four cognitive domains. Each survey question was analyzed for each demographic factor using descriptive analysis and the Kruskal–Wallis test.
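As an illustration of the descriptive measures listed above, the following minimal Python sketch summarizes a single five-point item. It assumes responses are coded 1 to 5 with higher values meaning stronger agreement, and it reports the sample standard deviation; the paper does not state which coding or SD variant was used, and the function name `describe_item` and the data are hypothetical.

```python
from collections import Counter
import statistics


def describe_item(scores, levels=range(1, 6)):
    """Frequency table, mean and standard deviation for one Likert item.

    Assumes integer codes 1-5; levels nobody chose are reported as 0.
    """
    counts = Counter(scores)
    return {
        "n": len(scores),
        "frequency": {level: counts.get(level, 0) for level in levels},
        "mean": statistics.mean(scores),
        "sd": statistics.stdev(scores),  # sample SD (n - 1 denominator), an assumption
    }


# Hypothetical responses to one item (1 = strongly disagree ... 5 = strongly agree).
item_scores = [4, 5, 3, 4, 2, 5, 4, 3]
summary = describe_item(item_scores)
print(summary["frequency"], round(summary["mean"], 2), round(summary["sd"], 2))
```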

To answer RQ1, we conducted a descriptive analysis of university students’ perceptions of AI literacy in the United Kingdom and Hong Kong. To answer RQ2, the Kruskal–Wallis test was used to determine whether country/region, gender, age, education, subject discipline, and having taken a course in AI influenced students’ attitudes toward and use of AI to facilitate their learning. A nonparametric test was used because the data were not normally distributed (
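The Kruskal–Wallis test ranks all responses together and asks whether the mean ranks differ across groups more than chance would allow; under the null hypothesis, the tie-corrected statistic H approximately follows a chi-squared distribution with k - 1 degrees of freedom, where k is the number of groups. The sketch below is a self-contained Python illustration of that calculation, not the study’s actual code; in practice a statistics package such as SciPy (`scipy.stats.kruskal`) would normally be used. The helper names and the sample responses are hypothetical.

```python
import math
from collections import Counter


def chi2_sf(x, df):
    """Upper-tail chi-squared probability P(X > x) for df degrees of freedom.

    Uses the power series for the regularized lower incomplete gamma
    function P(df/2, x/2); adequate for moderate statistics, not tuned
    for extreme tails.
    """
    if x <= 0:
        return 1.0
    a, z = df / 2.0, x / 2.0
    term = total = 1.0 / a
    n = 1
    while n < 10_000:
        term *= z / (a + n)
        total += term
        if term < total * 1e-16:
            break
        n += 1
    log_p = a * math.log(z) - z - math.lgamma(a) + math.log(total)
    return max(0.0, 1.0 - math.exp(log_p))


def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def kruskal_wallis(*groups):
    """Tie-corrected Kruskal-Wallis H and its chi-squared p-value."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    rank_term, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        rank_term += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n * (n + 1)) * rank_term - 3 * (n + 1)
    # Likert data contain many ties, so apply the standard tie correction.
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    correction = 1 - ties / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all observations are identical")
    h /= correction
    return h, chi2_sf(h, len(groups) - 1)


# Hypothetical five-point responses to one item, grouped by region.
hong_kong = [4, 5, 4, 3, 5, 4]
united_kingdom = [3, 2, 4, 3, 2, 3]
h, p = kruskal_wallis(hong_kong, united_kingdom)
print(f"H = {h:.3f}, p = {p:.4f}")
```

The tie correction matters for Likert-type items, where many respondents choose the same scale point; the chi-squared approximation is generally considered reasonable when each group has at least five observations.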

Results

Students’ perceptions (RQ1)

The study investigated the influence of various demographic factors on AI literacy, including age, education, country of origin, gender, subject discipline, and whether participants had taken an AI course. The GenAI literacy survey provided insights into students’ perceptions. Students responded positively to the “know and understand AI” dimension (M = 3.59, SD = 1.16), reflecting their belief in their ability to grasp the fundamental functions of GenAI and to use GenAI applications. Regarding the application of AI, students expressed confidence in applying GenAI knowledge, concepts, and applications to solve problems in various scenarios, such as writing and generating multimedia (M = 3.24, SD = 1.17). However, they were less confident in evaluating AI applications and in understanding the underlying AI concepts needed to create artifacts (M = 2.45, SD = 1.17).

To further understand perceptual differences between demographic groups (e.g., students in the UK and Hong Kong), we counted the number of students who rated each item above three on the five-point scale. By country/region, 38% of Hong Kong students had taken a course in AI, compared with 9% of UK students. However, among the students who had taken a course, 37% were from Hong Kong and 57% were from the United Kingdom. By gender, 21% of male students had taken a course in AI, compared with 4% of female students. In addition, 76% of the respondents from Hong Kong were male, compared with 54% of those from the United Kingdom.
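The counting procedure described above, the share of each group rating an item above three, can be sketched in a few lines of Python. The function name `share_above`, the group labels, and the ratings are hypothetical illustrations, not the survey data.

```python
def share_above(scores_by_group, threshold=3):
    """Proportion of each group's ratings strictly above `threshold`."""
    return {
        group: sum(score > threshold for score in scores) / len(scores)
        for group, scores in scores_by_group.items()
    }


# Hypothetical ratings for one five-point item.
ratings = {
    "Hong Kong": [4, 5, 3, 4, 2],
    "United Kingdom": [3, 4, 2, 2, 5],
}
for region, share in share_above(ratings).items():
    print(f"{region}: {share:.0%} rated above 3")
```

Using a strict "greater than" comparison means a neutral rating of 3 is not counted as agreement.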

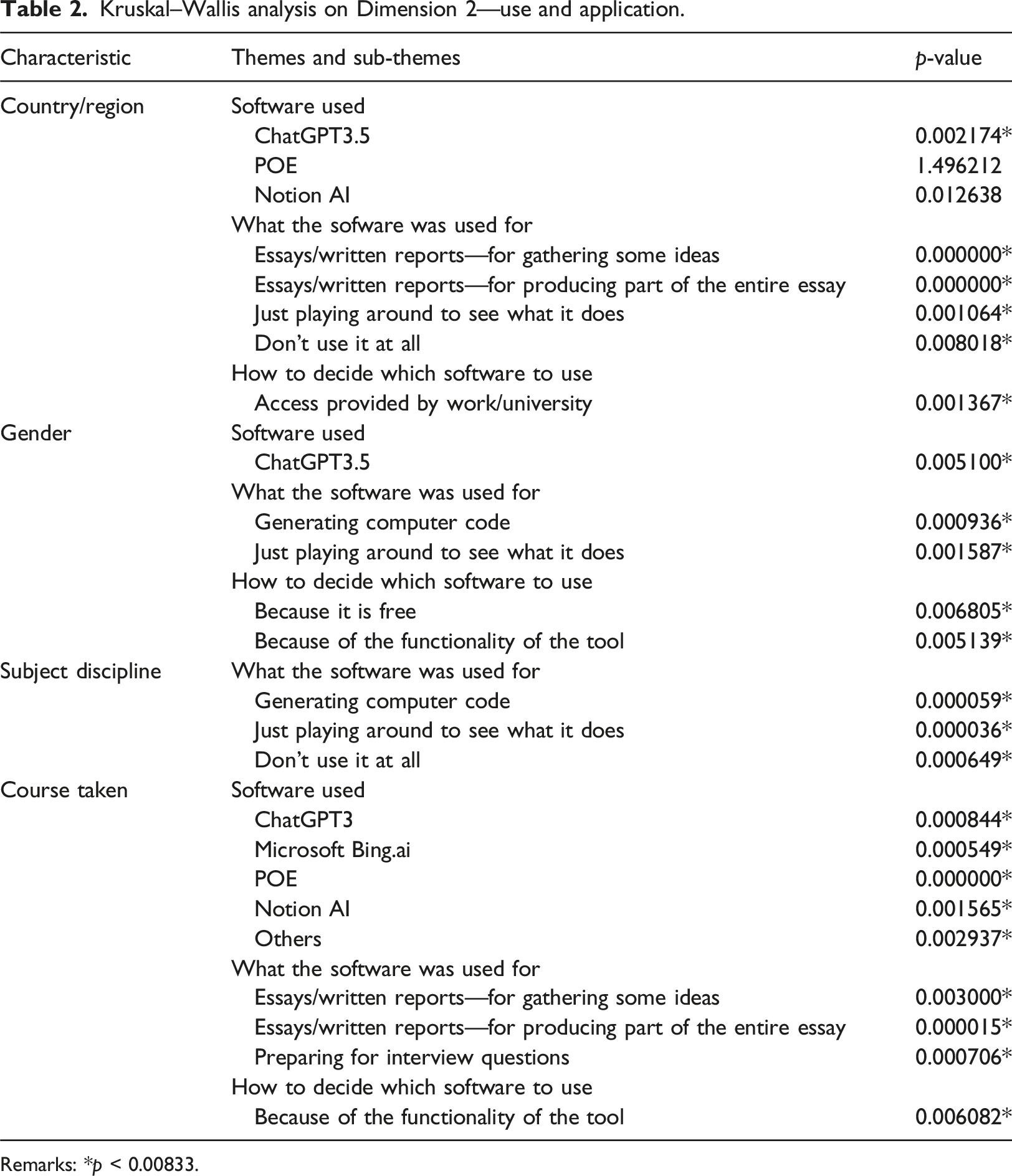

Kruskal–Wallis analysis on Dimension 2—use and application.


Several notable findings emerged. For the theme “software used,” students from Hong Kong and the United Kingdom differed in how they used ChatGPT (

Factors affecting university students’ GenAI literacy in the UK and Hong Kong contexts (RQ2)

A chi-squared analysis was used to test for association between each demographic factor and students’ responses to the questions within each dimension. First, the impact of country/region of origin on AI literacy was found to be ambiguous. This could be due to factors such as variations in curriculum and technological readiness between the United Kingdom and Hong Kong, which may influence students’ exposure to and familiarity with AI technologies. In addition, convenience sampling through the authors’ academic networks may have introduced sampling bias, since students were not chosen at random from a larger population. Whether participants had taken a course in AI, gender, and subject discipline were identified as factors that significantly influenced AI literacy (
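The chi-squared test of association mentioned above compares observed response counts with the counts expected if a demographic factor and the response were independent. The Python sketch below computes Pearson’s statistic for a groups-by-categories contingency table together with its p-value; it is an illustration, not the authors’ procedure, and the function names and table of counts are hypothetical. No continuity correction is applied here, whereas SciPy’s `scipy.stats.chi2_contingency` applies Yates’ correction by default when there is one degree of freedom.

```python
import math


def chi2_sf(x, df):
    """Upper-tail chi-squared probability via the regularized lower incomplete gamma series."""
    if x <= 0:
        return 1.0
    a, z = df / 2.0, x / 2.0
    term = total = 1.0 / a
    n = 1
    while n < 10_000:
        term *= z / (a + n)
        total += term
        if term < total * 1e-16:
            break
        n += 1
    log_p = a * math.log(z) - z - math.lgamma(a) + math.log(total)
    return max(0.0, 1.0 - math.exp(log_p))


def chi_squared_association(table):
    """Pearson chi-squared test of independence (no continuity correction).

    `table` holds observed counts: one row per group (e.g. gender), one
    column per response category. Categories nobody chose should be
    dropped first, since their expected counts would be zero.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, df, chi2_sf(statistic, df)


# Hypothetical counts: rows = two groups, columns = disagree / neutral / agree.
counts = [
    [5, 6, 29],
    [12, 8, 20],
]
stat, df, p = chi_squared_association(counts)
print(f"chi2 = {stat:.3f}, df = {df}, p = {p:.4f}")
```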

Country/region of origin

Country/region of study is related to all four dimensions, namely knowledge and understanding, use and application, evaluation and creation, and ethics. The results show significant differences between Hong Kong and UK students in the following areas: basic understanding of AI, confidence in using AI, frequency of using AI, and ethics. Put succinctly, Hong Kong students appear to be more advanced in all four areas of the AI literacy framework. They are more knowledgeable about AI tools, use them more, and have a greater understanding of how to use them. They also intend to use them more, evaluate their output more, and are more aware of AI ethics when using these tools.

Students from Hong Kong universities displayed a more comprehensive understanding of AI than their counterparts in the United Kingdom (75% vs 67%) when asked to rate the statement “I have a basic idea of what AI is” on a Likert scale from strongly agree to strongly disagree. Their knowledge and understanding in this area seem to have boosted their confidence in using AI to solve problems (89% vs 57%) and their frequency of using AI in their studies and daily life (88% vs 27%). Hong Kong students also appear to be more aware than UK students of the limitations and capabilities of AI applications for specific tasks (92% vs 66%). These differences in AI knowledge, understanding, and experience between the two student groups were also reflected in their intention to use AI tools in the future. For example, 81% of Hong Kong students said that they planned to use the technology more in the future, compared with 32% of UK students.

With regard to the ethics dimension, students in the two regions also differed significantly. Hong Kong students seem to have developed greater awareness of AI ethics and to understand the importance and necessity of checking the outputs produced by AI. The Kruskal–Wallis analysis of the ethics dimension shows a statistically significant relationship between Hong Kong students and the statements “I incorporate ethical considerations when deciding whether to use data provided by an AI” (

Given the significant differences in AI literacy between the two groups, we anticipated that the Hong Kong students in the study would have received more AI literacy training than the UK students. However, the data suggest a very different picture. When asked “have you taken a course on using generative AI such as ChatGPT,” 37% of Hong Kong students (

Whether taken a course about AI

Students were asked whether they had taken any training on using GenAI tools such as ChatGPT. Only 12% of respondents (

Whether students had taken a course in AI emerged as a significantly influential factor. Prior learning in AI appears to play a key role in shaping students’ positive perceptions, attitudes, and behaviors toward AI, and it may help them achieve a higher level of cognitive understanding and think critically about their use of AI. For example, significant results were observed for students’ feelings toward AI, their knowledge of how to use AI to solve problems, and how often they used AI (

The variable also shows significant results for how students critically verify AI-generated outputs. For instance, among those who had already received AI training, 64% revised approximately half or more of the AI outputs they used for university work, compared with 33% of those who had not taken a course. They had also learned to refine prompts to improve the generated outcomes. In addition, their training seems to have enabled them to select appropriate AI tools for different scenarios, such as drafting proposals and emails and editing images. These abilities are likely to help students use AI tools more effectively and obtain more accurate and reliable results.

Gender

Gender was also significantly associated with students’ use of AI, specifically in the following areas: knowing how to use AI to solve problems, frequency of AI usage, software utilization, and usage intention.

Knowing how to use AI to solve problems

The results indicate that students of different genders report different levels of proficiency in using AI tools and techniques for problem-solving: 72% of male students, compared with 50% of female students, agreed that they knew how to use AI tools to solve problems, while 19% of male students, compared with 40% of female students, disagreed. This suggests that there may be gender differences in the acquisition of AI skills and knowledge, leading to different capabilities in leveraging AI to address challenges.

Frequency of AI usage

Frequency of AI usage also differed significantly by gender, suggesting that students of different genders vary in their engagement with AI technologies. When asked “how often you used AI tools over the last semester,” 88.9% of male students (

Software utilization and usage intention

In addition, respondents’ judgments about AI outputs were influenced by gender: 77.3% (

Subject discipline

Subject discipline also yielded significant results for AI literacy. Respondents from computing and technology disciplines were the most likely to report having a basic idea of what AI is (42.9%), indicating greater familiarity with the subject matter than students from other disciplines. Students from business and education disciplines expressed a higher level of comfort with AI than students from other disciplines, suggesting that they may be significantly more confident about using AI (

Across the four cognitive dimensions, subject discipline showed significant results for students’ knowledge of how to use AI to solve problems and for their frequency of AI usage (

Regarding software usage, the only significant result was found for ChatGPT, indicating that use of this specific AI tool differed significantly across subject disciplines. Furthermore, the only application with a significant result was programming, suggesting that the perceived value of AI for programming tasks varies with subject discipline. Although training for computing and technology students already aims to explore AI, further efforts are needed to enhance other skills (e.g., collaboration and communication) so that these students can use AI effectively in their future workplaces.

Overall, female students appear to be less comfortable with the technology than male students. They also rate themselves as less knowledgeable and plan to use AI less in the future. Gender thus shows significant differences in several key aspects of AI usage.

Discussion

Adopting the four-dimensional AI literacy framework as the theoretical foundation, this paper aims to answer two questions:

Generative AI literacy across learner groups

Regarding RQ1, prior research suggests that learner differences can be influenced by factors such as prior experience, gender, and subject discipline (e.g., Fisher et al., 2020; Lai and Hong, 2015; Paray and Kumar, 2020). First, examining the influence of prior AI course experience can shed light on the role of education and familiarity with AI concepts in shaping perceptions and understanding. Students with prior AI course experience might demonstrate different levels of knowledge and confidence compared to those without such experience, which can offer insights into the effectiveness of AI education and its implications for AI literacy and adoption. Second, gender can be another influential factor in AI perceptions. Research has shown that gender biases can exist in various domains, including technology. Exploring the impact of gender on AI perceptions can help identify potential disparities or biases that may affect how individuals engage with AI technologies; understanding gender differences can also aid in designing inclusive AI systems that cater to diverse user needs and preferences. Third, subject discipline, that is, the academic field or area of expertise, is another important variable. Different subject disciplines may have varying levels of exposure to AI and different perspectives on its applications. Analyzing the influence of subject discipline can therefore provide insights into how different disciplines perceive and utilize AI technology, and it can inform interdisciplinary collaborations and the integration of AI into various fields.

The need for generative AI literacy

Based on the above findings, this study contributes to the expanding body of evidence and highlights the urgent need for universities to offer GenAI literacy education to students. It extends the four-dimensional concept of AI literacy and proposes a potential assessment of students’ GenAI literacy, although this assessment has not yet been validated.

According to a systematic review (Casal-Otero et al., 2023), most current research examines students’ learning experiences with a focus on understanding AI and implementing AI education. The need for such education arises primarily from industry expectations and the limited availability of AI courses at the university level. Beyond AI literacy in general, the release of GenAI tools such as ChatGPT in November 2022 has further accelerated AI adoption. A recent report (IBM, 2022) shows that, globally, over 35% of businesses had already used AI in their daily operations as of 2022, and an additional 42% were exploring potential opportunities for AI adoption. These figures indicate a continuing upward trend. The top three business processes identified as being fueled by AI technologies are robotic process automation, natural language text understanding, and virtual agents/conversational interfaces (McKinsey, 2022). Consequently, employees with AI literacy skills are in great demand, and there is a need to rethink and revise current ideas of AI literacy skills in the GenAI age.

When implementing GenAI in the classroom, inclusiveness becomes a significant concern (Lee et al., 2021; Xia et al., 2022). Students come from diverse backgrounds, including variations in gender, prior AI experiences, and subject discipline. It is acknowledged that students with prior knowledge (Hornberger et al., 2023) and a background in computer science are likely to have a higher level of GenAI literacy (Kong et al., 2021). As such, another contribution of this study is to investigate how individual differences contribute to variations in GenAI literacy.

This study highlights self-perceived differences in GenAI literacy based on country/region, gender, prior AI experience, and subject discipline. These findings align with a recent systematic review (O'Dea et al., unpublished study) that examined 369 articles and revealed an insufficient availability of AI courses for students studying disciplines other than computer science or data science. Despite recognition of the importance of an AI curriculum in better preparing university students for future careers, some places still lack an adequate supply of AI courses. According to a report by UNESCO, only 16 out of 27 member countries have begun incorporating AI into computer curricula (UNESCO, 2023). Additionally, certain universities, such as the University of Hong Kong, have temporarily prohibited students from using ChatGPT or any other AI-based tool for learning, assessment, and coursework (HKU, 2023b). This cautious approach reflects educators’ concerns surrounding the use of GenAI in the classroom (Chiu, 2023). The observed GenAI differences between the United Kingdom and Hong Kong serve as a reminder to educators and universities that some institutions or countries may have already started implementing GenAI, and that simply prohibiting its use is not a long-term solution.

Furthermore, educators should address gender diversity, learners’ subject backgrounds, and prior experiences. A lack of gender diversity can have significant implications for the individuals for whom AI-based systems are developed (Casal-Otero et al., 2023). The literature emphasizes the importance of adopting a gender perspective in designing AI-related activities, with specific initiatives aimed at engaging girls (Solyst et al., 2023; Vachovsky et al., 2016). Another study, conducted by Zhang and colleagues (2023) on pre-service teachers, indicates that female teachers are more likely than male teachers to experience anxiety about using AI-based tools. A similar result was reported by Nouraldeen (2023), who conducted a survey to explore technology readiness among university students; the results reveal that male students are more ready than female students to adopt AI technology. More effort is needed to support female learners in fostering their GenAI literacy and building confidence in using the technology.

Also, it is crucial for universities to develop relevant training programs and guidelines on GenAI topics that span across subject disciplines, including integration into non-computer science subjects (Lin and Brummelen, 2021; Kong et al., 2021). They should take into account the needs and prior backgrounds of students to provide appropriate training (Chiu et al., 2023; Ng et al., 2023). It is important to note that students from non-computer science backgrounds, such as education and business, may initially have a lower perception of GenAI literacy. However, AI literacy is actively being taught among pre-service teachers (Lim, 2023) and business students (Cardon et al., 2023). Education students, for instance, need to acquire sufficient AI competencies and receive training in AI-related concepts and skills to become proficient in teaching AI and using AI for instruction in their future workplaces. While business students may not have a technical background, there are many platforms designed for business purposes that facilitate decision-making, prediction generation, and work efficiency enhancement for business leaders and managers. On the other hand, computer and technology students usually possess a strong technical foundation and perceive themselves as more capable of acquiring and retrieving AI knowledge due to their existing technical knowledge base (Ng et al., 2023).

Furthermore, this study has found that current AI training in higher education places a stronger emphasis on technical knowledge, such as machine learning, programming, and robotics, while giving less attention to AI ethics and the evaluation of AI applications (Farina et al., 2024; Schlagwein and Willcocks, 2023; Zohny et al., 2023). Therefore, the revised framework puts forward the following recommendations for AI courses. When designing an AI course, all three dimensions (knowledge, application, and evaluation) should be addressed, and the curriculum should strive for a balanced approach across these dimensions. When developing new AI courses, opportunities should be provided for students to translate their prior knowledge into practical applications. For instance, students should be able to select the most suitable AI tools to assist them in brainstorming new ideas, testing their knowledge and understanding, and supporting their academic research (Cooper, 2023; Kohnke et al., 2023).

Finally, the data were collected in the post-pandemic period. The pandemic catalyzed a significant shift toward online and blended teaching and learning approaches, in which educators increasingly leverage emerging technologies to enhance their students’ learning outcomes (Ng et al., 2023). Notably, GenAI technology gained widespread popularity in online learning environments during this period, as it offers various tools and functionalities to assist students in their learning.

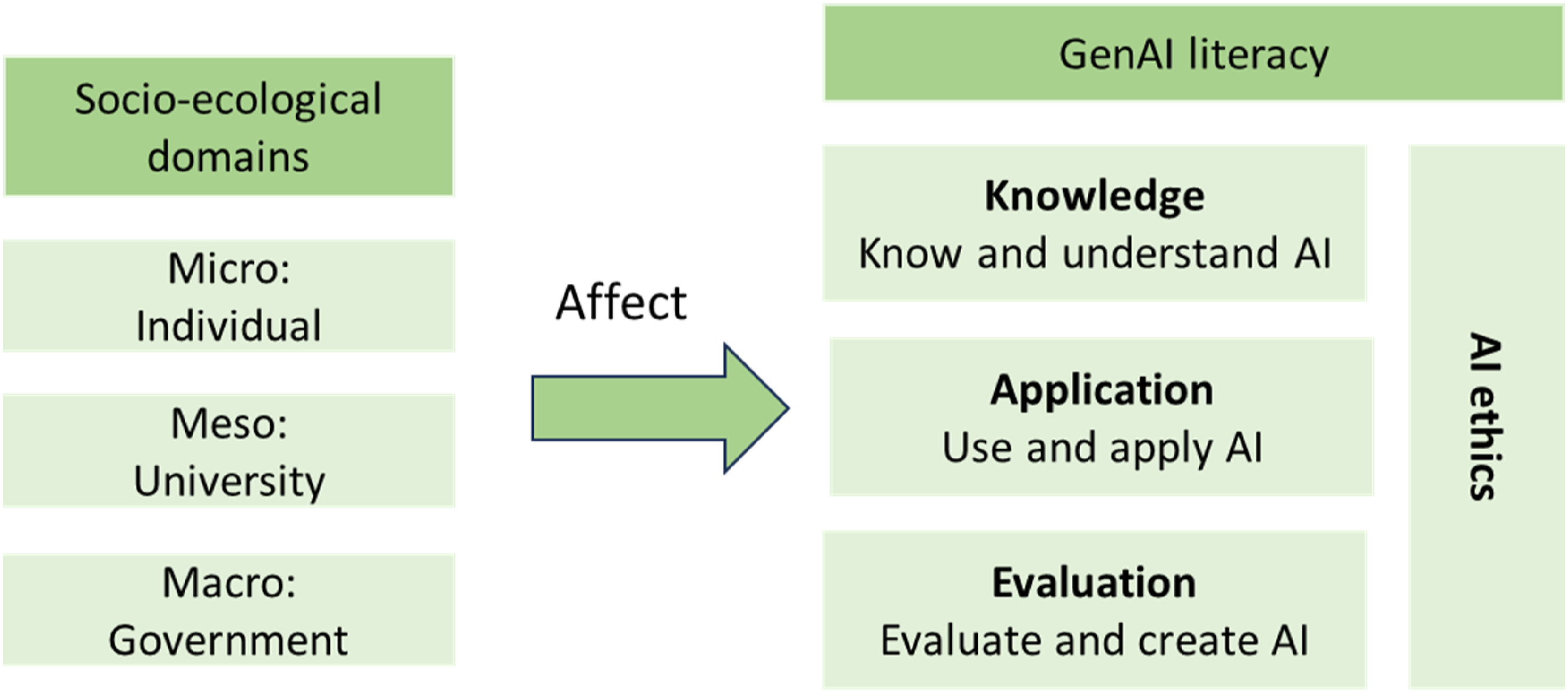

GenAI literacy framework

The AI literacy framework developed by Ng and colleagues (2021) includes four dimensions, namely, knowledge and understanding of AI, use and application of AI, evaluation and creation of AI, and AI ethics. Although this is not stated explicitly, these dimensions appear to be presented in sequential order, with AI ethics as the final dimension. Building on the findings of this study, we rename the revised framework the GenAI literacy framework; it also comprises four dimensions (see Figure 1). However, three of the dimensions have been renamed, and the framework has been extended with socio-ecological domains.

First, AI ethics should carry more weight and become an integral part of each individual dimension. Our study shows that university students in both country contexts, regardless of their gender or subject discipline and with or without previous training, did not seem to fully understand the ethics of AI, particularly with regard to the potential ethical concerns of AI adoption in their studies and in the workplace. This finding is consistent with existing research. Even though some institutions have started providing additional AI courses or modules to undergraduate and/or postgraduate students, AI ethics is either missing from the topics covered or not treated as a central theme (Moorhouse et al., 2023). For example, only three of 50 universities discuss the ethics and limitations of GenAI (Moorhouse et al., 2023).

In this domain, individuals should consider aspects such as data ownership, privacy, and security (Sanderson et al., 2023). They should be mindful of the ethical concerns surrounding the use of recommendations or responses created by GenAI and understand the ethical implications of GenAI applications. It is important for individuals to check whether the content generated by GenAI is appropriate and ethical, ensure inclusivity and diversity in the data used to train GenAI, avoid incorporating others’ work without proper attribution, acknowledge that prompt information may be stored and impact future responses, make accurate references and citations in GenAI-driven responses, and critically consider biases that may arise from the responses provided (Fui-Hoon Nah et al., 2023; Schlagwein and Willcocks, 2023; Zohny et al., 2023). Finally, individuals should clearly indicate which parts of their work were completed with the assistance of AI tools.

Second, for ease of recollection, we rename three of the dimensions (knowledge and understanding of AI; use and application of AI; and evaluation and creation of AI) as knowledge, application, and evaluation, respectively.

Among these dimensions, knowledge is the prerequisite for the other two and refers to fundamental technical knowledge of AI and a basic understanding of AI ethics. Application concerns the practical use of AI tools, guided by AI ethics, in different study and work scenarios. Evaluation examines whether the chosen AI tools are fit for purpose and can be sustained over time, with particular attention to the ethics of using these tools in the long term. The new framework represents an iterative process of GenAI literacy development. For example, individuals are able to apply and evaluate AI tools in an ethical manner once they develop knowledge and understanding in these areas; in turn, their practical experience will motivate and support them to further develop their knowledge and understanding of AI.

Finally, we acknowledge the impact of socio-ecological factors on GenAI literacy and hence add macro-, meso-, and micro-level factors to the framework (Berkovich, 2014). Macro-level factors concern AI readiness and the associated plans at the country and government level. The guidance and policy offered by the government in supporting AI adoption significantly influence AI literacy development across sectors, including education (Chan, 2023). As the data in this study indicate, students from Hong Kong seemed to be more advanced than their counterparts from the United Kingdom with regard to AI knowledge and application. This is likely because the Hong Kong government has a vision to take the lead on AI and has accordingly encouraged children to develop awareness of, and receive training in, AI literacy from a much younger age (Su and Yang, 2023). Meso-level factors mainly concern the AI literacy training and support offered at the institutional level. Our data show that students who attended a university-led AI training course appeared to be more confident in using AI tools in their daily life and were keen either to explore more tools or to use them more in the future. Micro-level factors refer to personal characteristics and demographics, such as gender, age, and subject discipline. This study found significant differences in some of these areas.

As shown in the framework, the three hierarchical levels of factors are incorporated into the existing AI literacy framework to promote an inclusive learning environment in higher education, from the micro to the macro level. AI readiness policies implemented at the country level directly influence the AI policies and training courses offered at the institutional level; these policies set the tone and framework for AI education and training within universities. Individual differences between students, in turn, could affect how students develop and foster GenAI literacy, including their ability to comprehend AI concepts and apply AI knowledge in various contexts. Recognizing and addressing these individual differences is crucial for promoting inclusive and effective GenAI education (Kong et al., 2023; Xia et al., 2022).

Conclusion

In conclusion, this study addresses the research gap concerning the academic and professional benefits of GenAI tools for university students in the United Kingdom and Hong Kong. By exploring students’ perspectives using Ng’s four-dimensional framework, the study found that factors such as country of study, prior AI course experience, gender, and subject discipline significantly influence AI literacy. Based on these findings, we propose that GenAI literacy should encompass four dimensions, knowledge, application, evaluation, and AI ethics, building upon the concept of AI literacy. Across these four dimensions, students demonstrate the lowest awareness of the importance of AI ethics. To cultivate AI readiness among young learners, educators should engage them more in evaluating the outputs of different GenAI applications and raise their awareness of AI ethics when using these tools. Furthermore, the study incorporates macro-, meso-, and micro-level factors into the existing framework and highlights how individual differences contribute to variations in GenAI literacy. The study emphasizes the necessity of integrating GenAI technologies into higher education curricula and underscores the importance of focusing on AI ethics when engaging with AI tools.

This study has several limitations that warrant further investigation in future studies. First, the sample of Hong Kong participants was small, so future research is needed to confirm the observed effects; in particular, individual institutional contexts need to be taken into consideration, as the characteristics of student populations may vary significantly. At the same time, the observed differences serve as a reminder to governments and universities that certain countries/regions have already made significant strides in incorporating GenAI into their campuses. Second, the questionnaire used in this study was adapted to GenAI situations from prior studies (Memarian and Doleck, 2023; Ng, Wu et al., 2023). Because the study relied on only a few selected questions for each factor, exploratory and confirmatory factor analyses could not be meaningfully conducted. Nevertheless, whereas Ng et al. (2024) designed their questionnaire for AI literacy contexts, this study contributes to adapting existing AI literacy measures to generative AI across the United Kingdom and Hong Kong. By developing a GenAI-specific literacy questionnaire, this study provides novel insights into how demographic factors influence students’ understanding and perceptions of this emerging technology in the ChatGPT era.

Consequently, there is a need to design learning programs and allocate resources to prevent universities from falling behind. Future research should also assess the effectiveness of these learning programs and resources. Regarding the proposed framework, future research could compare GenAI literacy levels across universities, since this study did not examine differences between universities. In addition, further research is needed to explore micro-level factors in more detail, because the sample size of this study was small and data were collected in only two country contexts. While this study primarily focuses on students’ characteristics, it is crucial to explore learning outcomes and how different groups of students may learn differently in programs that incorporate a more comprehensive understanding of AI.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.