Abstract

This review examines how psychology educators are responding to the rapid rise of generative artificial intelligence (GenAI), focusing on implications for assessment validity, academic integrity and organisational learning. Although universities have issued policy guidance, these frameworks often overlook psychology's distinctive epistemic, methodological and pedagogical practices. Drawing on empirical research, sector reports, survey findings and my experience as academic integrity lead in a UK university, the review identifies five interconnected challenges: unreliable detection technologies, ambiguity in marking and feedback, threats to the validity of psychology-specific assessments, increasingly complex integrity casework, and limited institutional support. It argues that psychology is well positioned to provide sector-wide leadership because of its emphasis on empirical reasoning, ethical judgement and reflective practice. The article synthesises emerging discipline-sensitive strategies and offers a forward-looking agenda for research, assessment design and staff development, emphasising approaches that foreground reasoning processes, ethical awareness and critical engagement with GenAI. It concludes by calling for a coordinated institutional response that integrates clearer policy, systematic staff training and strengthened communities of practice to support academic integrity and student learning in a GenAI-rich environment.

Introduction

Psychology departments across the higher education sector are experiencing strong and immediate pressures arising from the widespread use of generative artificial intelligence (GenAI) tools. While these pressures are shared with other disciplines, psychology faces a distinctive constellation of challenges because its core learning outcomes depend heavily on empirical reasoning, ethical judgement, methodological understanding and reflective practice. As the academic integrity lead in a university psychology department, responsible for reviewing evidence, advising colleagues, interpreting policy and ensuring fair decision-making, I have observed how GenAI is disrupting long-established assumptions about authorship, learning and assessment. These disruptions are most visible in coursework formats that constitute the backbone of psychology education. Laboratory reports require students to demonstrate understanding of design principles, operational definitions, sampling decisions, ethical considerations and statistical interpretation. Critical evaluations require detailed engagement with empirical literature, methodological critique and evidence-based argumentation. Reflective assignments invite students to demonstrate metacognitive insight, personal development, and ethical awareness. GenAI can produce superficially polished versions of these outputs, but the reasoning processes that underpin them, the very constructs the assessments are intended to measure, may be absent, concealed or distorted.

Research indicates that students frequently use GenAI not only for legitimate support such as proofreading but also for summarising empirical literature, structuring essays or generating first drafts (Black & Tomlinson, 2025; Darvishi et al., 2024). While students may view these practices as efficient or benign, they raise significant questions about authorship, transparency, fairness and learning. In psychology, uncritical use risks obscuring weaknesses in methodological understanding, ethical reasoning and information literacy, especially when AI-generated content appears fluent yet lacks nuance or conceptual depth. Recent findings suggest that procedural reliance on GenAI is associated with lower learning outcomes (Pallant et al., 2025), reinforcing concerns that overuse may impair disciplinary skill development. Although sector bodies such as Jisc (2023), QAA (2024) and UCISA (2025) have highlighted the disruptive implications of GenAI, discipline-specific insights remain limited. Psychology educators report uncertainty about interpreting polished but shallow writing, preserving the validity of assessments designed to elicit reasoning, and managing rising integrity casework. Students, too, perceive psychology as particularly vulnerable to GenAI misuse (Acosta-Enriquez et al., 2024; Tierney et al., 2025).

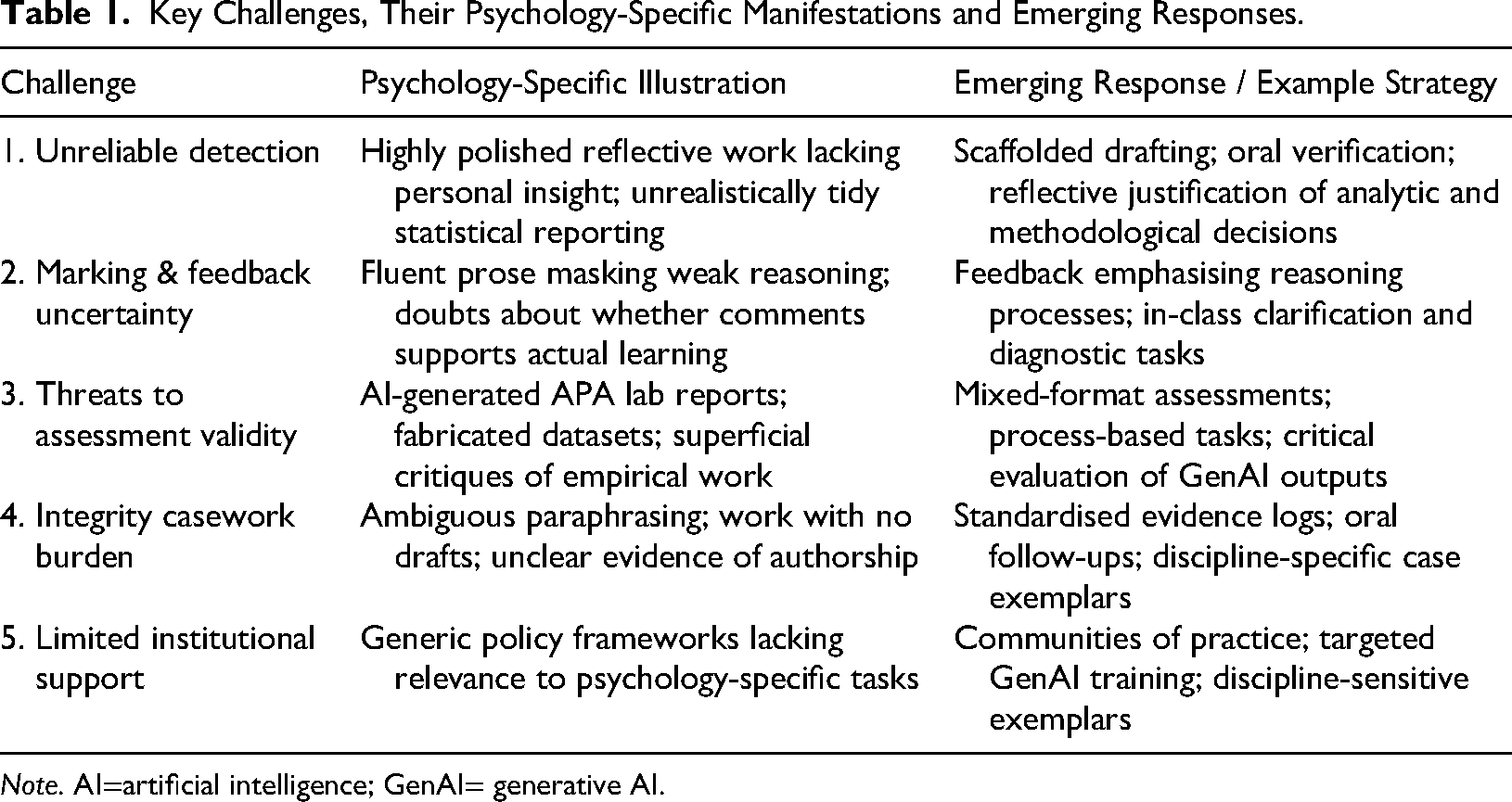

Taken together, these challenges highlight the need for a theoretically grounded account of how GenAI interacts with disciplinary practice. This review therefore draws on three conceptual anchors – assessment validity, academic integrity and organisational learning – which together offer a coherent framework for understanding how GenAI reshapes psychology education. These anchors illuminate how GenAI disrupts not only specific assessments or marking processes but the wider ecology of teaching, learning and quality assurance within psychology. The review identifies five key challenges: (1) unreliable detection, (2) marking and feedback uncertainty, (3) threats to validity, (4) burdens on integrity processes and (5) insufficient institutional support. Rather than merely cataloguing problems, the review uses these anchors to give a structured account of emerging disciplinary responses and to outline a constructive path forward for psychology education.

Challenges in Assessment and Integrity Practices: From Detection to Casework

The five challenges summarised in Table 1 are analytically distinct but practically interdependent: problems in detection feed into marking uncertainty; threats to validity exacerbate integrity casework; and limited institutional guidance compounds all of these issues. Table 1 highlights how each challenge manifests in psychology-specific contexts and summarises emerging responses.

Table 1. Key Challenges, Their Psychology-Specific Manifestations and Emerging Responses.

The Detection Dilemma

Although educators across disciplines struggle to identify GenAI-generated content, psychology faces particular difficulties due to the nature of its assessments. Reflective writing, ethical analyses and empirical reports often reveal problems not through direct evidence of misconduct but through stylistic or conceptual incongruities: impersonal tone in reflective submissions, statistically implausible results sections or generic methodological commentary that appears detached from specific task requirements. Markers frequently describe a sense that ‘something is not right’ without being able to articulate or evidence the concern. This interpretive uncertainty is well documented (Kofinas et al., 2025), and colleagues often seek guidance on whether stylistic oddities merit escalation.

Detection tools such as Turnitin or GPTZero have been widely adopted, yet remain unreliable, unvalidated and prone to false positives, especially for non-native English speakers or students producing formulaic text (Chechitelli, 2023; Perkins, Roe, et al., 2024; Yeadon et al., 2023). Equally problematic are false negatives, where GenAI has been used lightly, such as for paraphrasing or structural editing, yet remains undetectable. These technologies cannot confirm GenAI authorship, as the QAA (2024) has emphasised, and their limitations leave staff uncertain about when and how to act. This ambiguity alters staff behaviour in ways that directly reshape assessment practice. Some markers become hesitant to raise cases without conclusive evidence; others adopt more defensive marking practices. The cumulative effect is a shift in the epistemic foundations of assessment: trust, once central to the marker–student relationship, becomes fragile, and educators describe the emotional strain of feeling responsible for identifying unacknowledged GenAI use despite inadequate tools or support.

Marking and Feedback Uncertainty

When markers cannot be confident that submitted work reflects students’ reasoning, the feedback process becomes compromised. Psychology's feedback culture relies heavily on diagnosing misconceptions, guiding analytical development, and supporting reflective learning (Hattie & Timperley, 2007; Nicol & Macfarlane-Dick, 2006). Yet if the prose appears unusually fluent, overly structured or inconsistent with earlier work, markers may question the authenticity of the submission and, by extension, the value of offering detailed developmental commentary. These concerns echo wider sector observations that GenAI obscures authorship and disrupts markers’ interpretive confidence (Abdelaal & Al Sawy, 2024; Bobula, 2024).

This uncertainty is particularly acute in psychology because assessments are designed to reveal reasoning processes. A flawlessly written lab report that misinterprets analysis of variance (ANOVA) output raises the question of whether the misunderstanding lies with the student or with GenAI-generated text. Reflective assignments with generic or decontextualised insights raise similar doubts about the provenance of ideas. Faced with this ambiguity, some markers simplify feedback, shift towards generic comments, or avoid offering detailed developmental guidance. Such adaptations weaken the formative function of feedback and undermine students’ development of feedback literacy – the capacity to understand, interpret and act on comments (Carless & Boud, 2018). If feedback no longer maps reliably onto student reasoning, its pedagogical value diminishes. Psychology educators describe frustration and a sense of futility, uncertain whether feedback supports authentic learning or merely assists students in refining future GenAI use. This erosion of trust threatens one of the discipline's key pedagogical strengths: the use of feedback to scaffold the development of scientific reasoning, ethical judgement and reflective capacity.

Threats to Assessment Validity

While marking uncertainty affects day-to-day teaching practice, its implications for assessment validity are profound. Validity theory emphasises the need for alignment between intended learning outcomes, assessment tasks, and the constructs they measure (Biggs, 1996). In psychology, written assessments are intended to elicit students’ reasoning processes, methodological understanding, and critical engagement with evidence. GenAI undermines these assumptions by enabling students to generate plausible text without demonstrating the targeted cognitive processes, an issue increasingly noted across higher education (Abdelaal & Al Sawy, 2024; Bobula, 2024).

Laboratory reports illustrate this problem vividly: GenAI can generate APA-style writing, propose hypotheses and even simulate statistical outputs, yet these components may contain conceptual errors, fabricated data or inappropriate methodological choices. Literature reviews generated with AI tools often lack methodological nuance, misrepresent research findings or present fabricated citations. Research-design tasks can be similarly compromised when GenAI templates supersede students’ own reasoning.

Empirical evidence amplifies these concerns. AI-generated exam responses have been shown to match or exceed the quality of human-written answers (Scarfe et al., 2024), and GenAI tools can perform competitively on multiple-choice question assessments (Newton & Xiromeriti, 2024). Together, these findings heighten doubts about whether traditional coursework formats can still differentiate between superficial fluency and deep understanding.

From a validity perspective, these developments create misalignment between intended learning outcomes and the evidence used to judge student achievement. Instead of capturing students’ conceptual reasoning, assessments may now measure their ability to utilise GenAI effectively. Without intervention, the evidentiary value of key psychology assessments – lab reports, critiques and reflections – risks erosion. Departments are experimenting with process-focused alternatives such as scaffolded drafting, annotated bibliographies and oral components (Cotton et al., 2024; Francis et al., 2025; Lee et al., 2024; Malik et al., 2025; Tierney et al., 2025), but these innovations require systematic institutional support and time for evaluation.

Integrity Casework and Procedural Burden

As assessments become increasingly vulnerable to GenAI, integrity casework grows more complex. Unlike plagiarism, which involves identifiable sources, GenAI leaves no external trace. Students may use GenAI for drafting, paraphrasing, structuring or proofreading without malicious intent. Without drafts, version histories or explicit disclosures, even well-founded suspicions rarely meet evidentiary thresholds, a challenge widely recognised across the sector (Abdelaal & Al Sawy, 2024). Departments report increased case volumes, longer decision timelines and significant variation in case handling (Cotton et al., 2024; Slimi, 2023). Psychology educators describe the emotional and cognitive load associated with navigating ambiguous cases, supporting anxious students and interpreting policy in the absence of reliable detection tools. These patterns mirror findings elsewhere in higher education, where staff report heightened uncertainty and procedural strain arising from GenAI-related misconduct concerns (Tierney et al., 2025). Integrity leads face particular pressure as they mediate between institutional expectations, student welfare and staff concerns. From an organisational-learning perspective, these pressures are exacerbated by the absence of shared case exemplars, feedback loops, or cross-departmental learning structures. As a result, educators often work in isolation, improvising responses that lead to inconsistency and frustration. Strengthening these organisational mechanisms is essential if institutions are to respond coherently and support staff effectively.

Institutional Responses

Limitations of Current Institutional Guidance

Universities have responded to GenAI by issuing policy updates, staff briefings and guidance for students (e.g., Russell Group, 2023). These frameworks typically designate acceptable uses (e.g., idea generation and proofreading) and prohibited uses (e.g., producing assessable content). Yet such categorisations often lack relevance for psychology-specific tasks requiring personal reflection, ethical reasoning, methodological justification or empirical interpretation. Allowing GenAI for ‘structuring’, for instance, offers little clarity for educators evaluating impersonal reflective submissions. Policies relying on student disclosure, such as traffic-light systems or the AI Assessment Scale (Perkins, Furze, et al., 2024), are limited by unverifiability (Corbin et al., 2025). Studies show that institutional policies are frequently generic, inconsistently implemented and lacking in practical disciplinary guidance (An et al., 2025; McDonald et al., 2025). Moreover, guidance often arrives after module approval deadlines, constraining educators’ ability to redesign assessments or adjust marking procedures. These shortcomings contribute to reactive rather than strategic institutional responses. As one colleague reflected, ‘We are being asked to reinvent assessment on the fly’, a sentiment that underscores the need for discipline-sensitive policies and structured staff development, themes elaborated in the ‘Clarifying Policy and Supporting Fair Practice in Psychology’ and the ‘Supporting Psychology Educators: Training and Peer Learning’ sections.

Evolving Assessment Strategies in Psychology

Assessment redesign is one of the most active and consequential areas of adaptation within psychology departments, and one that sits at the intersection of assessment validity, academic integrity and disciplinary pedagogy. Psychology educators are acutely aware that many traditional assessment formats – laboratory reports, critical evaluations, reflective assignments and research proposals – were developed for a pre-GenAI era and assume that the written artefact provides direct evidence of students’ reasoning processes. With GenAI increasingly capable of producing fluent, structurally appropriate and superficially plausible psychological writing, these assumptions require re-evaluation (Cotton et al., 2024; Francis et al., 2025; Tierney et al., 2025).

Williams (2025) identifies two broad strategies that higher education departments are deploying:

- Tasks requiring students to critique AI-generated literature reviews, identifying methodological inaccuracies, conceptual gaps or fabricated citations;
- Assignments where students annotate AI-produced statistical interpretations, explaining errors, misinterpretations or missing assumptions;
- Research-design exercises in which GenAI generates an initial set of hypotheses or interview questions and students must evaluate, refine or reject them with reference to psychological theory and ethics;
- Reflective components in which students justify when and how they used GenAI, analysing its influence on their reasoning and identifying limitations or cognitive biases.

These integration-oriented assessments foreground evaluation, justification and critical thinking – capacities that GenAI cannot easily automate. They also make students’ reasoning processes visible to markers and help develop GenAI literacy. This literacy extends beyond basic tool competence and includes understanding how large language models generate text, recognising common statistical or methodological errors in AI outputs, identifying fabricated data or references, evaluating algorithmic bias, and reflecting on the cognitive risks of over-reliance (Darvishi et al., 2024; O’Donnell et al., 2024).

However, integration strategies also carry practical demands. They require educators to feel confident in using GenAI as a pedagogical tool, to anticipate its typical errors, and to design marking criteria that assess reasoning rather than merely the quality of prose. Staff report that such assessments take longer to mark and require more explicit guidance to students. Moreover, some students initially interpret integration tasks as endorsing unrestricted GenAI use, highlighting the need for clear communication about acceptable practices.

Across the sector, a growing number of psychology departments are piloting mixed-format assessments that blend mitigation and integration, such as combining an invigilated data-analysis task with a take-home critique of an AI-generated research summary. Early evaluations suggest that these formats can maintain assessment validity while building students’ evaluative judgement (Francis et al., 2025).

Overall, evolving assessment strategies in psychology reflect a discipline striving to preserve the evidential value of assessments while also recognising the pedagogical opportunities GenAI offers. Scaling these innovations will require institutions to acknowledge psychology's distinctive assessment landscape, provide protected time for redesign and invest in consistent staff development across programmes.

Gaps and Priorities in Staff Development

Staff development is a critical but underdeveloped component of institutional responses to GenAI. Existing training tends to focus on tool demonstrations or high-level policy guidance rather than the disciplinary reasoning, ethical judgement and interpretive skill that psychology educators must exercise when evaluating ambiguous submissions (Hutson et al., 2022). Sector surveys consistently highlight staff anxiety about fairness, clarity and assessment integrity (Lee et al., 2024; Tierney et al., 2025). Colleagues repeatedly ask the same questions: What forms of GenAI use are acceptable in a lab report? How should I respond when reflective writing feels impersonal? How can I mark ethically when authenticity is uncertain?

Effective staff development should therefore integrate ethical reasoning, reflective writing, empirical methods, and GenAI-related scenarios. Case-based learning, using anonymised examples of ambiguous writing, fabricated data, or inconsistent statistical reporting, has proved particularly powerful in early pilots because it engages educators with the tacit judgement required in practice. ‘Assessment clinics’, shared disclosure templates, and communities of practice can support consistency and provide spaces for collective reflection. From an organisational-learning perspective, such communities function as feedback and knowledge-transfer mechanisms that enable institutions to move beyond individual improvisation towards shared interpretive frameworks and coherent practice (Argyris & Schön, 1978; Senge, 2006). Without these structures, institutions risk perpetuating inconsistency, undermining staff wellbeing and missing opportunities for purposeful adaptation.

Towards a Constructive Vision for Psychology and AI

GenAI presents not only risks but opportunities for psychology educators to deepen student engagement with the epistemic foundations of the discipline (Al-Zahrani & Alasmari, 2024; Chan, 2023). Rather than viewing GenAI solely as a threat, educators can incorporate it meaningfully into teaching in ways that support inclusive, engaging, and reflective learning. Although students frequently use GenAI as a shortcut rather than as a tool for critical engagement (Darvishi et al., 2024), structured activities can help reorient its use. For example, dual-dialogue exercises between students and GenAI can stimulate metacognitive awareness, while classroom tasks that require students to critique or refine AI-generated drafts draw directly on psychology's strengths in analytical reasoning and reflective practice (Lee et al., 2024; Malik et al., 2025).

Currently, however, most institutional responses remain risk-focused (Bobula, 2024; O’Donnell et al., 2024; Slimi, 2023). Cotton et al. (2024) argue convincingly that institutions should move beyond reactive enforcement towards embedding GenAI literacy, ethical reflection and informed dialogue into curricula, an approach well suited to psychology. These activities directly support the learning outcomes outlined in the APA Guidelines for the Undergraduate Psychology Major (Version 3.0) (American Psychological Association, 2023), particularly those relating to ethical reasoning, scientific inquiry, and the evaluation of evidence – domains that GenAI now makes pedagogically indispensable.

Recommendations and Future Directions

The recommendations that follow draw explicitly on the paper's three conceptual anchors: assessment validity, academic integrity and organisational learning. They aim to support psychology departments in developing coherent and discipline-sensitive responses to GenAI.

Building the Evidence Base: Research Priorities for Psychology and GenAI

To respond effectively to GenAI, psychology requires a stronger empirical foundation that captures the complexity of how AI intersects with disciplinary learning, assessment and identity formation. Existing sector-wide studies tend to homogenise higher education, overlooking how psychology's epistemic values – empirical reasoning, methodological rigour and ethical reflection – shape both opportunities and risks associated with GenAI. A more robust evidence base should therefore pursue several interconnected strands.

First, research should examine

Second, there is a need to evaluate

Third, research should explore

Finally, there is a need to examine

Such research is essential not only for policy development but also for restoring the evidentiary foundations of assessment validity described in the ‘Threats to Assessment Validity’ section. Building this evidence base will allow psychology departments to make informed, discipline-specific policy decisions, clarify expectations for students, and evaluate whether redesigned assessments genuinely measure the competencies they target. This research is foundational to the sector's ability to respond coherently to GenAI and to safeguard assessment validity in the long term.

Clarifying Policy and Supporting Fair Practice in Psychology

Current academic integrity policies often lack the precision required for psychology-specific assessments, resulting in inconsistent interpretations and uncertainty for both staff and students. Strengthening policy frameworks is therefore essential to ensuring fairness, protecting academic standards and supporting staff decision-making.

First, institutions should develop

Second, departments would benefit from

Third, policies should outline

Finally, clearer

Overall, clearer and more discipline-sensitive policy frameworks will help psychology departments navigate the increased ambiguity GenAI introduces into assessment and integrity processes.

Supporting Psychology Educators: Training and Peer Learning

Given the interpretive complexity of evaluating GenAI-influenced work, psychology educators need sustained, practice-oriented professional development. Effective staff support should go beyond tool demonstrations and focus on developing interpretive judgement, disciplinary confidence and shared pedagogical frameworks.

One priority is

Another priority is

Departments should also invest in

Finally, staff development must include

Reimagining Assessment for a GenAI Era

Rather than focusing solely on preventing GenAI use, psychology has an opportunity to redesign assessments so that they foreground reasoning, judgement, ethical awareness and critical evaluation – competencies that GenAI cannot replicate. This requires both conceptual rethinking and practical experimentation.

One promising direction is the development of

Another direction involves

Psychology departments can also experiment with

Finally, programmes should integrate

Taken together, these innovations point towards a future in which assessments are designed not to avoid GenAI but to

Conclusion

GenAI is reshaping higher education, and psychology is experiencing these changes with particular intensity. The discipline's reliance on empirical analysis, reflective writing, ethical reasoning and methodological competence means that the challenges discussed throughout this review – unreliable detection, ambiguity in marking and feedback, threats to assessment validity, increased integrity casework, and uneven institutional support – strike at the heart of what psychology assessments are designed to measure. These pressures expose longstanding vulnerabilities in assessment design, highlight the limitations of generic institutional policies, and reveal gaps in staff preparedness.

Yet the recommendations in the ‘Recommendations and Future Directions’ section show that psychology is also exceptionally well placed to lead constructive and evidence-informed adaptation. Building a stronger empirical foundation for understanding GenAI use (‘Building the Evidence Base: Research Priorities for Psychology and GenAI’ section) will allow departments to evaluate whether redesigned assessments genuinely capture disciplinary competencies. Clearer, discipline-specific policy frameworks (‘Clarifying Policy and Supporting Fair Practice in Psychology’ section) can support fairness, strengthen authorship verification and reduce inconsistency in case handling. Sustained, practice-oriented staff development and communities of practice (‘Supporting Psychology Educators: Training and Peer Learning’ section) are essential for developing the interpretive judgement and confidence required to navigate GenAI-related ambiguity. Finally, reimagining assessment design to foreground reasoning, evaluative judgement, ethical awareness and process-based evidence (‘Reimagining Assessment for a GenAI Era’ section) provides a pathway towards assessments that can withstand GenAI rather than be undermined by it.

Taken together, these recommendations point to a coherent strategic response: one that integrates research, policy, training and assessment design rather than treating these components in isolation. Psychology can model this integrated approach because its epistemic values – critical evaluation, methodological rigour, ethical reflection and metacognitive awareness – align closely with the competencies students need to engage responsibly with GenAI. By embedding GenAI literacy into curricula, redesigning assessments to make reasoning visible, and strengthening organisational structures that support staff, psychology departments can create learning environments in which GenAI becomes a tool for deeper engagement rather than a threat to academic integrity.

If institutions invest in these discipline-sensitive strategies, psychology can help shape a future in which GenAI becomes an instrument for cultivating deeper empirical reasoning, stronger ethical judgement, and more resilient academic standards.

Acknowledgements

The author would like to acknowledge the support of the University of Leeds, UK.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interest

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.