Abstract

The European Union – comprising 28 member states with individual sovereignty in the formation and implementation of education policy – has developed research and communication strategies to facilitate the exchange of best practices, gathering and dissemination of education statistics and, perhaps most importantly, advice and support for national policy reform. Additionally, shared programs have been implemented across the union, which have led to the formation of one of the largest transnational policy networks in the world. This paper examines the influence of international education surveys administered by the Organisation for Economic Cooperation and Development, outlining the key characteristics of the surveys and the most salient findings. We discuss the contribution of emerging European Union governance for the quality of education while also looking at the challenges ahead. These challenges include developing assessments to include value added, revising assessments to include broader skills and providing assessment feedback to teachers within an EU context in which national and Organisation for Economic Cooperation and Development assessments become complementary, rather than overlapping, survey measures.

A brief history of the European Union

The genesis of the European Union (EU) can be traced to the aftermath of the Second World War, when Europe was split into East and West as the 40-year Cold War began. Motivated by a desire to secure peace across the continent, the European Coal and Steel Community was created in 1951, based on the Schuman plan. Six countries signed a treaty to run their heavy industries, coal and steel, under a common management. This treaty was one of the first attempts to unite European countries economically. The growing desire for economic integration, including a common market, led to the Treaty of Rome in 1957, which established the European Economic Community (EEC). A key feature of the EEC was the coordination of economic policies. The EEC established both institutions and decision-making mechanisms, facilitating the expression of both national interests and a community vision. The ‘institutional balance’ was ensured through a tripartite structure consisting of a council that prepared standards, the commission, which drafted proposals, and the European Parliament, which played an advisory role. All three bodies, along with the Economic and Social Committee, were expected to work collaboratively to make prudent decisions in the area of education policy.

The EEC was expanded and renamed the EU in 1992 with the Maastricht Treaty, which was signed by 12 countries: Belgium, Denmark, Germany, Greece, Spain, France, Ireland, Italy, Luxembourg, the Netherlands, Portugal and the United Kingdom. This group is often referred to as the EU12. In addition to ushering in economic and monetary union, the Maastricht Treaty created the opportunity for the Amsterdam Treaty of 1997, which introduced new ‘community’ policies in the area of education and culture. The Amsterdam Treaty also increased the powers of the European Parliament and facilitated the creation of the ‘Open Method of Coordination’ (OMC) for policies in social fields, including education and training.

The Maastricht and Amsterdam Treaties were eventually superseded by the Treaty of Lisbon in 2007. Sweeping reforms abolished the former architecture in an attempt to improve decision-making in an enlarged EU that included 27 member states. At present, the EU comprises 28 member states that possess individual sovereignty in the formation and implementation of social policy, including policy related to primary, secondary and higher education. Yet there remains ample guidance for coordination among the countries and from the EU. This guidance and coordination are reinforced by the Semester Approach as part of the EU’s growth strategy (known as Europe 2020), which focuses on five key objectives: employment, innovation, education, social inclusion and climate change/energy.

Education governance within the European Union

EU member states are responsible for developing, implementing and monitoring their education and training systems: education and culture are part of what is considered the national integrity and identity. EU institutions provide a supporting role to help improve the quality of education within member states and across the union. For example, in higher education the European Commission works closely with policy-makers to support the development of higher education policies that align with the Education and Training 2020 strategy (ET2020), as part of the Europe 2020 agenda. ET2020 contains five key priorities for higher education: (1) increasing the number of higher education graduates; (2) improving the quality and relevance of teaching and learning; (3) promoting mobility of students and staff and cross-border cooperation; (4) strengthening the ‘knowledge triangle’ linking education, research and innovation; and (5) creating effective governance and funding mechanisms for higher education. The overall success of these higher education priorities is judged against benchmarks with clear targets that are assessed on an annual basis in the Education and Training Monitor (see http://ec.europa.eu/education/tools/et-monitor_en.htm). Thus, it is clear that the EU has adopted an evidence-based orientation to education policy implementation and reform.

The European Commission also plays an important role in supporting pre-primary to secondary level education throughout Europe. For example, the commission works with EU member states to raise the standards of teaching and teacher education for Europe’s six million teachers by: (1) facilitating the exchange of information and experience between policy-makers; and (2) supporting projects through the Erasmus+ program. The Erasmus+ program is multifaceted in that it cuts across various pre-primary, primary, secondary, higher and tertiary levels of education. In terms of compulsory schooling, it provides a variety of opportunities for staff and students to study, train and gain relevant work experience. School staff members, for instance, are provided with opportunities to undertake European professional development activities abroad that include structured courses or training, teaching assignments and job shadowing or observations. In order to encourage cooperation between schools, teachers are encouraged to network and run joint classroom projects with colleagues across Europe. Collectively, these activities are designed to support the sharing of best practice and testing of innovative approaches that address common challenges such as low basic skills or early school leaving.

Education governance has been primarily facilitated within the EU by the OMC since 1997. Since 2012, the OMC has been supplemented with the Semester Approach. The OMC is the principal means of spreading best practices to achieve greater convergence towards the main EU goals, including those outlined in ET2020, with the implied goal of improving the quality of education. These transnational goals are achieved through the establishment of common indicators and benchmarks, which in turn influence the development and monitoring of national and regional policies. The OMC functions as ‘soft law’ that adds to the traditional constitutional doctrines and values in which education (and culture) are fully part of the EU member states’ sovereignty. Critics have argued that the OMC as ‘soft law’ undermines traditional constitutional doctrines and values and supports a limited view of social Europe (Lange and Alexiadou, 2010). However, to date, we lack evidence of the impact of the OMC on actual education policy-making in the EU member states.

The European Semester is an annual cycle of macro-economic, budgetary and structural policy coordination, which includes the issuance of country-specific recommendations (CSRs), adopted by the European Commission and endorsed by the council. Until now the European Commission has only issued CSRs directly connected to education and research in the context of the labour market and innovation. For example, a semester recommendation for Austria is: ‘The Austrian school system is characterised by a low number of early school leavers, well below the EU average. A strong and well-functioning system of vocational education and training provides a large pool of highly skilled workers. However, improving educational outcomes and hence the employability of young people with low socioeconomic status, in particular those from migrant backgrounds, remains a challenge. The evaluation of the implementation of a new secondary school system (Neue Mittelschule) has revealed weaknesses that still need to be addressed’ (European Commission, 2015: 5).

International education surveys

Like much of the Western world, EU member states are grappling with the persistent problem of raising standards in education while improving equity and access for their increasingly diverse student populations. In the 1990s, education ministers at the Organisation for Economic Cooperation and Development (OECD) decided that international education surveys might be helpful to establish the quality of learning in OECD countries, starting with the Program for International Student Assessment (PISA) (OECD, 1995, 1997), later followed by the Teaching and Learning International Survey (TALIS), the Program for the International Assessment of Adult Competencies (PIAAC) and the Assessment of Higher Education Learning Outcomes (AHELO). As the notes from these meetings show, the ministers were well aware of the potential political fallout from these surveys in terms of national policy discussions. It is worth noting that the OECD’s interest in education dates back to 1964, when the OECD provided the launching pad for a dedicated area of study that is now referred to as the Economics of Education (Svennilson et al., 1962). The OECD meetings on the Economics of Education in the 1960s forcefully introduced the notion that economic growth may depend as much on the increase in human capital (at that time simply measured by the years of education in the labour force) as on the changes in physical capital (machines, buildings). This led member states to commission the OECD to gather statistics in the area of student achievement (Martens and Leibfried, 2008).

International education surveys highlight the educational differences that exist across national education systems. International agencies, such as the World Economic Forum, are increasingly utilizing such data to gauge national competitiveness (Duncan, 2014; Martens and Niemann, 2010; Morris, 2011; OECD, 2013a). Thus, it is not surprising that governments around the world are eager to improve their relative standing on these performance measures (Baird et al., 2011; Knodel et al., 2013; Ringarp and Rothland, 2010). Europe is no exception in this respect, but participation in these surveys has grown well beyond Europe or the OECD.

Across Europe, participation in the various international education surveys that are administered by the OECD is extremely high. The role of international education surveys as potential change agents is well established and widely recognized (Ritzen, 2013). Not surprisingly, as the popularity of these surveys has grown, ample attention has been paid to the nature of the empirical evidence they provide, particularly for the formation of national education policies in primary, secondary and higher education sectors (Hunter, 2012; Meyer and Benavot, 2013; Morgan and Shahjahan, 2014; Sellar and Lingard, 2013). Often the results attract a great deal of media attention, which is sometimes reflected in parliamentary debates. Nevertheless, it is not clear whether these surveys have facilitated consistent or direct policy responses. Rather, education policy changes often involve the complex interplay of cultural and sociopolitical forces in which national responses to OECD surveys may primarily bolster already existing proposals for change (Carvalho and Costa, 2014; Pons, 2012; Takayama, 2008; Wiseman, 2013).

PISA triennial survey

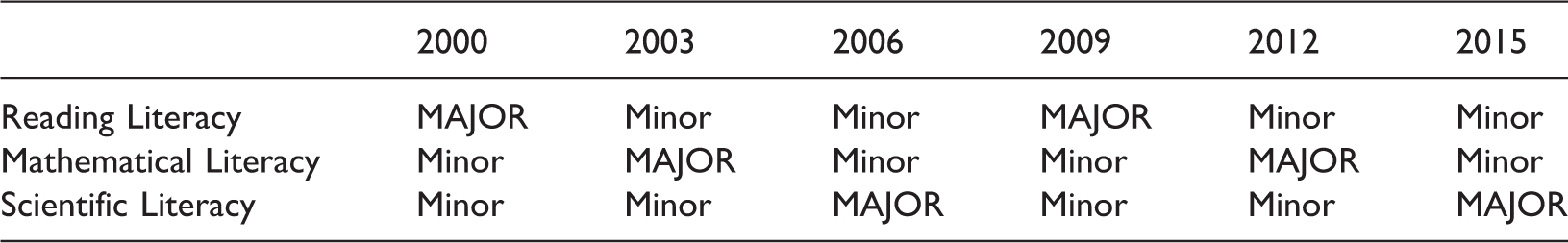

Chronology of Major and Minor Literacy Domains on the PISA Survey.

Thus far, each literacy has been assessed twice as a major domain. In addition to the major and minor literacy domains, the OECD notes that PISA regularly introduces new tests to assess skills relevant to modern society, such as creative problem-solving and financial literacy (introduced in 2012) and collaborative problem-solving (introduced in 2015).

The initial administration of PISA in 2000 included 43 countries, including all the OECD European countries. More than 70 countries/economies signed up to participate in PISA 2015, with the release of results scheduled for December 2016. Thus, global participation in PISA has expanded significantly. Not surprisingly, it has quickly become the standard metric upon which most compulsory education systems judge their relative standing. The PISA survey has even been likened to the ‘Olympics of education’ in the popular media – particularly in Europe and North America (see Alphonso, 2013; Petrelli and Winkler, 2008; Scardino, 2008). It has been referred to as ‘one of the largest non-experimental research exercises the world has even seen’ (Murphy, 2014: 898).

The current director of the OECD Education Division, Andreas Schleicher, has argued that PISA provides ‘policy-makers and practitioners with helpful tools to improve quality, equity and efficiency in education, by revealing some common characteristics of students, schools and education systems that do well’ (Schleicher, 2007: 356). Overall, the PISA survey allows national governments to evaluate education systems worldwide and provides benchmark data to participating countries/economies so they are able to ‘set policy targets against measurable goals achieved by other education systems, and learn from policies and practices applied elsewhere’ (OECD, 2014a: 2). This evaluation takes place against the background of the variance in the ‘institutions’ dominating education in the individual countries, such as the resources available, the degree of centralization, the degree of competition, etc. (OECD, 2012b), showing that the organization of education matters for PISA results.

The European Commission has released various reports in relation to OECD surveys, including the PISA triennial survey. The commission is quick to point out that PISA is used for one of the key ET2020 benchmarks, which states that by 2020 the proportion of 15-year-olds with low achievement in reading, mathematics and science should be less than 15%. Thus, it is not surprising that the latest PISA results were scrutinized closely within the EU. Six main findings, each with their own implications for education and training policies, were reported in relation to the PISA 2012 results: (1) the EU as a whole is seriously lagging in the area of mathematics and will require significant policy reform to break this stagnation; (2) progress in reading and science is on track, but the slow pace of improvement requires member states to sustain efforts to address low achievement in schools; (3) boys lag behind girls in reading and require measures to motivate them so that 2020 benchmarks are met; (4) socioeconomic status (SES) remains a significant determinant of mathematics achievement, highlighting inequities in European education and training systems, with a focus on regional discrepancies and more holistic, cross-section solutions recommended as a first step to address this persistent problem; (5) migrant status has clear overlaps with the effects of SES, but it also exercises an independent influence on mathematics that is partly related to language difficulties; (6) early childhood education proves vital for the development of basic skills (European Commission, 2013a).

In addition to the European Commission, the Eurydice Network supports and facilitates European cooperation in the field of lifelong learning by providing information on education systems and policies in 37 countries. This network has reported that a significant number of EU countries have demanded more information on curriculum and teaching as a consequence of their results on the PISA survey (Eurydice Network, 2009). According to Grek (2009), the impact of PISA on education governance within Europe has been so pronounced that many countries may be ‘governing by numbers’. There is some support for this position, including from the OECD-commissioned research by Breakspear (2012), who interviewed civil servants (one in each of the PISA countries). His results suggested that PISA exerted an ‘extremely’ high (i.e. England-UK, Denmark) or ‘very’ high (i.e. Austria, Hungary, Germany, Sweden, Poland, Ireland, Latvia, Greece, Norway, Slovak Republic, Estonia, Wales-UK, Slovenia, Belgium-French Community) level of influence on the policy-making process across Europe. In Germany, the lacklustre results produced ‘PISA-shock’, providing the impetus for large-scale reform (see Bank, 2013; Ertl, 2006; Grubera, 2006; Hartong, 2012; Waldow, 2009). Nevertheless, the German case is fairly atypical. Research suggests that a range of policy responses to PISA occur within distinct geopolitical and cultural contexts (see Carvalho and Costa, 2014; Volante, in press) and that the impact of PISA may be more pronounced in political debates than in direct education policies (see Ritzen, 2013).

Given some of the heightened reactions across Europe and other parts of the world, it is not surprising that academics have scrutinized the influence of PISA on education governance and global policy reform (see Kamens, 2013; Mahon and McBride, 2009; Meyer and Benavot, 2013; Pereyra et al., 2011; Sellar and Lingard, 2013). Some critics dispute the legitimacy of PISA as a ‘soft power’ that promotes the uptake of policies through evidence-based decision-making, indicating that there is more to education than can be measured by educational research (Benavot, 2013; Bieber and Martens, 2011). Much of the criticism is directed at the economic nature of PISA (Bank, 2013). Often evidence-based education policies in ‘PISA-countries’ are adopted through the practice of ‘policy-borrowing’, whereby a low-achieving nation borrows education policies from a high-achieving nation, assuming that the latter’s results are evidence of the effectiveness of the borrowed policies. These high-performing nations are often referred to as ‘reference societies’ in the literature and serve as models to be emulated. European countries such as Finland, the Netherlands and Poland (because of their rapid increase in PISA performance) have been cited as reference countries. The OECD promotes policy-borrowing practices through PISA analyses and various policy briefs and reports that are contained in their extensive online library.

Interestingly, objections to PISA culminated in 2014, when a group of more than 80 high-profile academics from around the world sent an open letter to Andreas Schleicher via the Guardian newspaper in Britain. This letter outlined a litany of concerns with the PISA program and called for an immediate halt to the next round of testing (Andrews et al., 2014). These points were reiterated in another open letter, where the list of signatories grew from the initial 80 to more than 130 as of 6 May 2014 (see Meyer and Zahedi, 2014). These academic critics underscore the growing influence of PISA as a tool that promotes the convergence of education policies and facilitates global education governance by international organizations. This letter has been largely disregarded by the OECD and governments within the EU alike, on the basis that it is up to national governments themselves to use the data and the analysis and that, in fact, they have been leading the PISA effort, through the OECD Ministerial Education Committee and the OECD Council.

It is somewhat surprising that the open letter was directed to a staff member of the OECD while responsibility rests fully with the political leadership of the OECD. The letter does not discredit the international nature of educational research, when properly taking country-specific elements into account, as it offers ‘constructive ideas and suggestions’ for improvement of PISA, which might ensure a stronger ‘ownership’ of PISA results in the education community. Meyer and Zahedi (2014) argued that some of the main concerns with PISA include the need to:

avoid ranking; avoid giving the impression of quick fixes; assess skills broader than the cognitive and the economically useful; embed PISA into teachers’ professionalism and autonomy.

Despite these criticisms, it must be acknowledged that it is ultimately national governments who are responsible for formulating education policies that might be connected to PISA results.

Teaching and Learning International Survey

TALIS is the first international education survey to focus on the working conditions of teachers and the learning environments within primary and secondary schools (OECD, 2008) with the rationale that ‘beyond the influence of parents and other factors outside the school, teachers provide the most important influence on student learning’ (OECD, 2014d: 5).

The TALIS results are designed to shed light on a variety of issues that influence student learning and achievement. These include, but are not limited to, the changing demographics of the teaching profession; types of leadership practices demonstrated in schools; impediments to teachers’ professional development; teacher appraisal systems; as well as factors related to positive teacher self-efficacy and job satisfaction (OECD, 2008). TALIS is meant to be an important catalyst for change in that it recognizes and tries to better understand the complex factors that influence teaching and learning in schools as well as the teaching profession.

The initial administration of TALIS took place in 2008 and comprised 24 countries. Four research areas were addressed: school leadership; professional development; teacher appraisal and feedback; and teacher practices, beliefs and attitudes (OECD, 2008). TALIS 2013 expanded to include 24 OECD countries and 10 partner countries/economies. The 2013 administration included participation from numerous EU countries: Belgium (Flanders), Bulgaria, Czech Republic, Denmark, Estonia, Spain, Finland, France, Croatia, Italy, Latvia, the Netherlands, Poland, Portugal, Romania, Sweden, Slovakia and the United Kingdom (England). In total, 200 schools in each country/economy were surveyed. The sample for each school included 20 teachers and one school leader (OECD, 2013d). The information collected is based on questionnaires that are administered to teachers and their respective school principals (OECD, 2013c).

An interesting feature of TALIS 2013 was that it provided participating countries/economies the option to link PISA-TALIS surveys. This was accomplished by having countries/economies that participated in PISA 2012 exercise the option to implement TALIS in the same schools that participated in PISA (OECD, 2014c). Thus, it was possible to link student learning outcomes from PISA to teachers’ characteristics, which were surveyed in TALIS 2013 (OECD, 2014c). This link between PISA-TALIS is in keeping with the OECD’s perspective that improvements in teaching can lead to better student learning and more effective education systems (OECD, 2014d). The OECD notes that TALIS ‘sheds light on which [teaching] practices and policies can spur more effective teaching and learning environments’ (OECD, 2013c: 3). Moreover, the analyses from TALIS enable countries to see more clearly where imbalances might lie. They also help teachers, schools and policy-makers learn from these practices, as well as from institutional characteristics of the education system such as the degree of competition between schools, at their own level and at other educational levels.

In contrast to PISA, the publication of the TALIS results has attracted very little media and political attention. The European Commission released a report in 2014 that summarized the main findings from TALIS 2013 and the implications for education and training policies in Europe. Results indicated that (1) school leaders report shortages of qualified teachers; (2) steps are required to boost the attractiveness of the teaching profession; (3) while teachers feel well prepared for the subjects they teach, too few of them receive systematic support during their first years on the job; (4) teachers need more training on ICT, special needs teaching and teaching in multicultural and multilingual settings; (5) teachers who are involved in collaborative learning using innovative pedagogies are more satisfied with their jobs; (6) teachers feel that feedback is only used to fulfil administrative requirements; and (7) in school leaders’ views, resources, regulatory frameworks and school environments are critical factors for effective school management (European Commission, 2014). The European Commission argued that these results should contribute to shaping new political priorities for education and training.

Program for the International Assessment of Adult Competencies

PIAAC is designed to measure adult literacy skills in digital environments and follows a series of similar surveys administered by the OECD. For example, the International Adult Literacy Survey was first implemented in 1994 and was later replaced by the Adult Literacy and Life Skills Survey, which was administered in 2003 and then again between 2006 and 2008 (Morgan, 2011). PIAAC builds on each of these previous surveys by examining ‘foundational’ information-processing skills in three key areas: literacy, numeracy and problem-solving. These core skills form the basis for the development of other higher-level skills that are considered essential for adults in home, school, work and community settings (OECD, 2015a). The PIAAC survey also provides information on various generic skills such as co-operation, interpersonal communication and organizing one’s time. The OECD asserts that PIAAC helps governments in assessing, monitoring and analysing the level and distribution of skills among their adult populations (OECD, 2013e). They also argue that the tools that accompany the PIAAC survey are designed to support countries/economies as they develop, implement and evaluate the development of skills and the optimal use of existing skills (OECD, 2013e).

The initial administration of PIAAC took place between 2011 and 2012. The final sample included 166,000 adults in 22 OECD countries as well as the partner countries Russia and Cyprus (OECD, 2015a). Results from the first PIAAC administration were released to the public in 2013 and suggested that a significant proportion of adults scored at the lowest levels of proficiency for literacy, numeracy and problem-solving. PIAAC also showed major differences between countries: ‘There are wide variations in the mean proficiency among older adults across countries, suggesting that the lower average scores in this group are affected not only by the process of biological ageing, but also by differences in education and labour-market structures that can enable adults to develop and maintain their skills as they age’ (OECD, 2013e: 106).

The European Commission grouped the main PIAAC findings into seven key areas, which they argued are relevant for EU education and training policies: (1) 20% of the working age population has low literacy and numeracy skills; (2) one in four unemployed adults has low literacy and numeracy skills; (3) adults with low proficiency are often caught in the ‘low skills trap’ and are less likely to participate in learning activities; (4) there are significant differences between individuals with similar qualifications across various member states; (5) 25% of adults lack the skills to effectively make use of ICT; (6) adult skills tend to deteriorate over time if they are not used frequently; and (7) sustaining skills brings significant positive economic and social outcomes (European Commission, 2013b). Despite these findings, PIAAC results, unlike PISA results, went almost unnoticed in the media. They are absent from political discussions, presumably because they are too far removed from educational systems and educational practice.

Assessment of Higher Education Learning Outcomes

AHELO was first introduced as a feasibility study by the OECD to see if it is scientifically feasible to assess what higher education students know and can do upon graduation. Completed in December 2012, AHELO attempted to measure both students’ subject matter knowledge and their capacity to apply their knowledge in ‘concrete and often novel situations’ (OECD, 2009: 9). The initial administration included participants from 248 higher education institutions within several countries/economies: Australia, Belgium (Flanders), Canada (Ontario), Colombia, Egypt, Finland, Italy, Japan, Korea, Kuwait, Mexico, the Netherlands, Norway, Russia, Slovakia and the United States (CT, MO, PA). In total, 23,000 students nearing the end of their three- or four-year degree were sampled in the areas of generic skills, economics and/or engineering (OECD, 2009). Unlike other OECD international surveys, AHELO’s focus was on institutions rather than comparisons at the national level. Participating institutions were provided with anonymized data to facilitate benchmarking against their institutional peers.

In order to share the most salient findings from their initial feasibility study, the OECD hosted a conference in Paris, France in March 2013 titled Measuring Learning Outcomes in Higher Education: Lessons Learnt from the AHELO Feasibility Study and Next Steps. Key findings were also outlined in the final AHELO feasibility report (OECD, 2013a). According to the OECD, the AHELO study demonstrated that it is ‘feasible to develop instruments with reliable and valid results across different countries, languages, cultures and institutional settings’ (OECD, 2012b: 4). The OECD announced in October 2015 that it had to give up AHELO, partly because of the antagonism from American universities which are now highly ranked in the Shanghai Jiao Tong or the Times Higher Education World University Rankings. These universities may have feared that they would prove less effective in adding value in competencies than their ranking suggests and hence would lose some of their prestige. If AHELO is to be relaunched as a regular assessment tool, then past practice suggests it will expand in scope, as have other OECD international education surveys (Morgan and Shahjahan, 2014).

Collectively, the various international education surveys administered by the OECD are designed to provide policy-makers in Europe and around the world with key insights to help reform their education and training systems. The OECD launched a one-stop location called Education GPS, which provides internationally comparable data and analyses on education policies and practices, opportunities and outcomes (see http://gpseducation.oecd.org/). Education GPS also provides extensive reports such as

Soft education governance and educational quality

The quality of education is increasingly recognized as a major economic and social asset for a country. This applies to quality aspects of cognitive learning (Hanushek and Woessman, 2012) and also to so-called non-cognitive skills (Heckman and Kautz, 2014). Increasingly, evidence has become available that links quality of education indices on the one hand and policy factors on the other (see OECD, 2012b; Hoareau et al., 2013). Thus, it seems logical for governments to grapple with adopting, implementing and evaluating policies that will maximize their human capital across a range of education sectors.

The political leadership of the OECD recognized the importance of education for economic development early (in the 1960s). In the same spirit, by the end of the 1990s, the political leadership of the OECD (the Committee of Education Ministers, endorsed by the OECD Committee of Government Leaders) decided to collect internationally comparable data to give themselves (i.e., the national governments) information on the impact of their educational institutions, providing the basis for more evidence-based policies to promote quality and equity in education. Within the EU, these surveys, particularly PISA, play a substantial role in the OMC and less so in the Semester Approach.

Some, such as Zurn (2014), have suggested that international organizations, such as the OECD, are no longer a matter of executive multilateralism, but are politicized toward the adoption of universalistic positions. In doing so, the OECD may be perpetuating existing inequalities between different regions. There is, however, little evidence to suggest that the growing influence of the OECD on national education policy development within the EU fits this perspective. EU countries have retained their full independence in education policy-making. There is room for EU member states to manoeuvre, given the size and cultural differences that exist across the continent. The influence of the larger member states on policy reform in smaller EU countries has been fairly insignificant, perhaps with the exception of Poland (as one of the fastest risers in PISA). Indeed, it is the ‘examples’ of the Netherlands and Finland that are often cited when international comparisons are made, and both are fairly small nations within the EU.

Critics have also argued that the impact of the OECD in its own right or through the EU has undermined the quality of education and infringed on national integrity and culture. However, other than an uncomfortable feeling of ‘not invented here’ or of not doing as well as one had thought, there is sparse empirical evidence, to date, suggesting the autonomy of national educational decision-making has been compromised. In contrast, despite the huge media attention and the intensive reform agenda in EU countries in the period 2008-2014 (OECD, 2015b), there is still a significant gap between what might be called ‘optimal education policies’ (in view of the evidence and with all its national differentiations) and educational practice, raising the issue of creating more room for change.

The EU’s OMC may have had some impact on national policy reforms. However, to date there is little empirical evidence to support this assertion. An exploratory case study for Slovenia suggested that ‘although relatively good results are visible in National/EU Progress Reports, its full potential has not been exploited’ (Lahj and Stremfel, 2011). The Semester Approach is perhaps still too recent to expect that serious changes in the education system have occurred as a result of the CSRs. Nevertheless, the interaction between the OECD efforts in assessment of education quality (and its distribution over socio-economic groups) with the EU Commission Programs, as well as the OMC and the CSR, is likely to retain importance for promoting both quality and equity in education within the EU.

The road ahead for education governance in the EU

The present structure of ‘soft’ EU education policy, based on the OMC and CSR and supported by the OECD assessments and policy analysis, is widely accepted politically by EU member states. There are no political indications that this structure is viewed as going against country sovereignty in education policy-making. There are no incentives at the EU level for following implicit or explicit advice, nor are there any penalties for not following it. The only direct contribution of the EU in education policy formation is in the education programs that have received broad support from the EU member states. The support for the present structure was demonstrated in a four-point letter by the UK Foreign Affairs Minister Hammond (2015) on the renegotiation of the UK’s EU membership: ‘We want a renegotiation of market regulation, “ever-closer union,” subsidiarity and welfare, but not on education.’ Interestingly, Eurosceptic parties all over Europe have consistently complained about too much EU infringement on national policies, but not in the area of education policy. At the same time, room for further strengthening the EU soft governance of education is likely to be limited, even though EU countries are acutely aware of the importance of improving their education policies in order to become more competitive economically and to better serve equality of opportunity.

The soft-law approach to strengthening EU governance in education takes the form of developing OECD assessments more strategically and encouraging stronger ownership by the education community. Several directions are worth exploring.

Strive for complementarity between OECD assessments and national assessments. Both types of assessments now are used concurrently, leading possibly to ‘over assessment’ and underutilization of test results.

Focus on value-added assessment analyses rather than on ordinal rankings.

Develop more specific feedback measures for teachers. Teachers have to be able to benefit from value-added measures in improving their guidance to pupils.

Extend the tests to the OCEAN¹ skills and possibly to creativity and civic awareness.

Such assessments could be a richer background for the OMC processes and lend themselves to more useful CSRs.

Large-scale assessments are often viewed with reluctance and suspicion by parents, even when they are not high-stakes tests and are meant as guidance for teachers and parents. An example was the introduction of a national test for three- to four-year-olds in the Netherlands. The test was introduced as an instrument to detect early language deficiencies so that children could be guided into remedial learning programs. This idea was based on the awareness of substantial differences in the language abilities of three- to four-year-olds. These differences are related to variations between households in how much small children are talked and read to, variations that often follow lines of socio-economic class but increasingly also lines of foreign or indigenous origin. The parliamentary debate was stormy, incited by some education researchers, with the support of substantial parts of the population, who gathered under the banner: ‘No infant testing’. The end result was that the test is now voluntary both for schools and for parents. Schools may use it, if the parents give permission, as part of their guidance system to give the teacher systematic feedback on his/her own observations. Yet for children aged five to six, schools have to administer some kind of evaluative test as part of their guidance.

Critics of compulsory low-stakes assessments are now common in most EU countries and remain unconvinced of the advantages of these assessments to promote the best guidance of pupils and to increase equality of opportunity. They point to:

the limitations in the predictive value of the tests; the limitations of cognitive tests; the commercial interests involved in testing; and concern that teaching could become geared to the test.

However, one of the most important deficiencies of large-scale testing remains the difficulty teachers often experience in utilizing these measures to help inform their future pedagogical practice (Copp, 2015; Volante and Cherubini, 2010).

Final thoughts

Educational structures are likely only to count in so far as they ‘empower’ the teacher (Hanushek and Woessman, 2012), who is well trained and financially compensated. Perhaps this is where the major challenge lies for EU educational policy reform: how to ensure a teaching profession that is attractive to the best and most motivated in the population. However, the competing interests of other sectors in an ageing continent, such as care for the elderly, are not making the challenge any simpler. Yet improved value-added measures in learning (cognitive and socio-emotional) might make a contribution to education governance across EU member states. At the same time, it is important to acknowledge the scientific and technical limitations of value-added measures, particularly as they might be inappropriately applied for teacher evaluation practices (see AERA, 2015). Rather, we envision the utilization of value-added measures, which meet the technical requirements outlined by the American Educational Research Association (AERA, 2015), and international education surveys as a vehicle to help inform deliberations of large-scale reform. Overall, international education surveys, when utilized judiciously, can provide governments within the EU and elsewhere around the world with important information to inform educational policy development.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.