Abstract

The integration of biometric data and sensory stimuli is reshaping architectural computing, expanding design possibilities beyond traditional visual and geometric parameters. Through ‘Hydnum’, named after a genus of fungi known for its distinctive geometric mycelial networks, we demonstrate the integration of real-time biodata with virtual and physical elements to create dynamic spatial experiences. Our computational framework synthesizes olfactory, auditory, and visual elements through a sophisticated scent delivery mechanism, bio-inspired geometric structures, and real-time audio synthesis, all modulated by users’ physiological responses mapped to Russell’s Circumplex Model of Affect. The system leverages biosignal processing and transformations to engage underutilized senses in architectural design, while the project’s modular scent system and cyber-physical artifacts create responsive environments. Through empirical testing and systematic integration of multiple sensory channels, this research advances biodigital architecture by providing a comprehensive framework for spaces that actively engage with users’ emotional and physiological states.

Introduction

The integration of digital technologies with biological data is transforming architectural design, particularly through the emerging field of biodigital architecture. 1 While traditional architectural computing has primarily focused on visual and geometric parameters, the incorporation of real-time biometric data and multisensory stimuli offers new possibilities for creating environments that dynamically respond to human physiological and emotional states. This evolution in architectural computing extends beyond conventional spatial design boundaries through novel frameworks that enable sophisticated integration of multiple sensory modalities.

At the intersection of these developments, Extended Reality (XR) technologies and “perforated systems” provide crucial platforms for creating cohesive architectural experiences. 2 These frameworks allow different sensory components - from olfactory stimuli to real-time audio synthesis - to function autonomously while maintaining networked connections that respond to users’ biological data. However, despite advances in biosignal processing and environmental responsiveness, the systematic integration of non-visual senses remains underexplored in computational design frameworks, particularly in ways that preserve individual creative freedom while ensuring system stability.

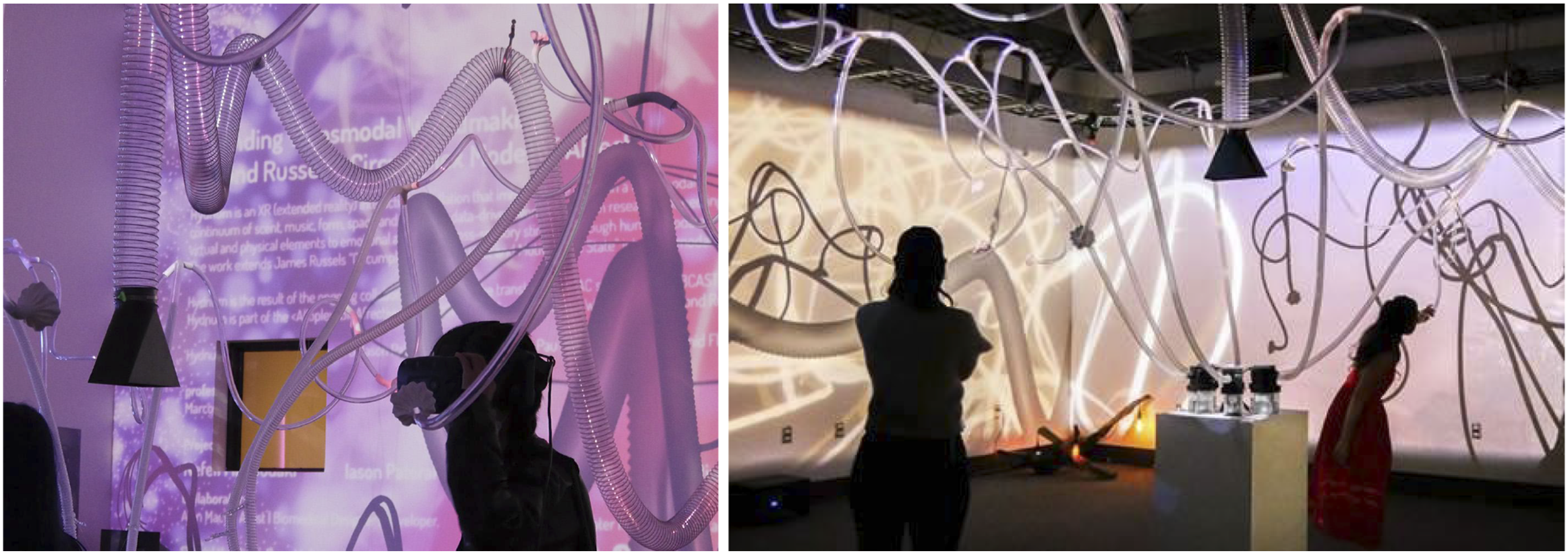

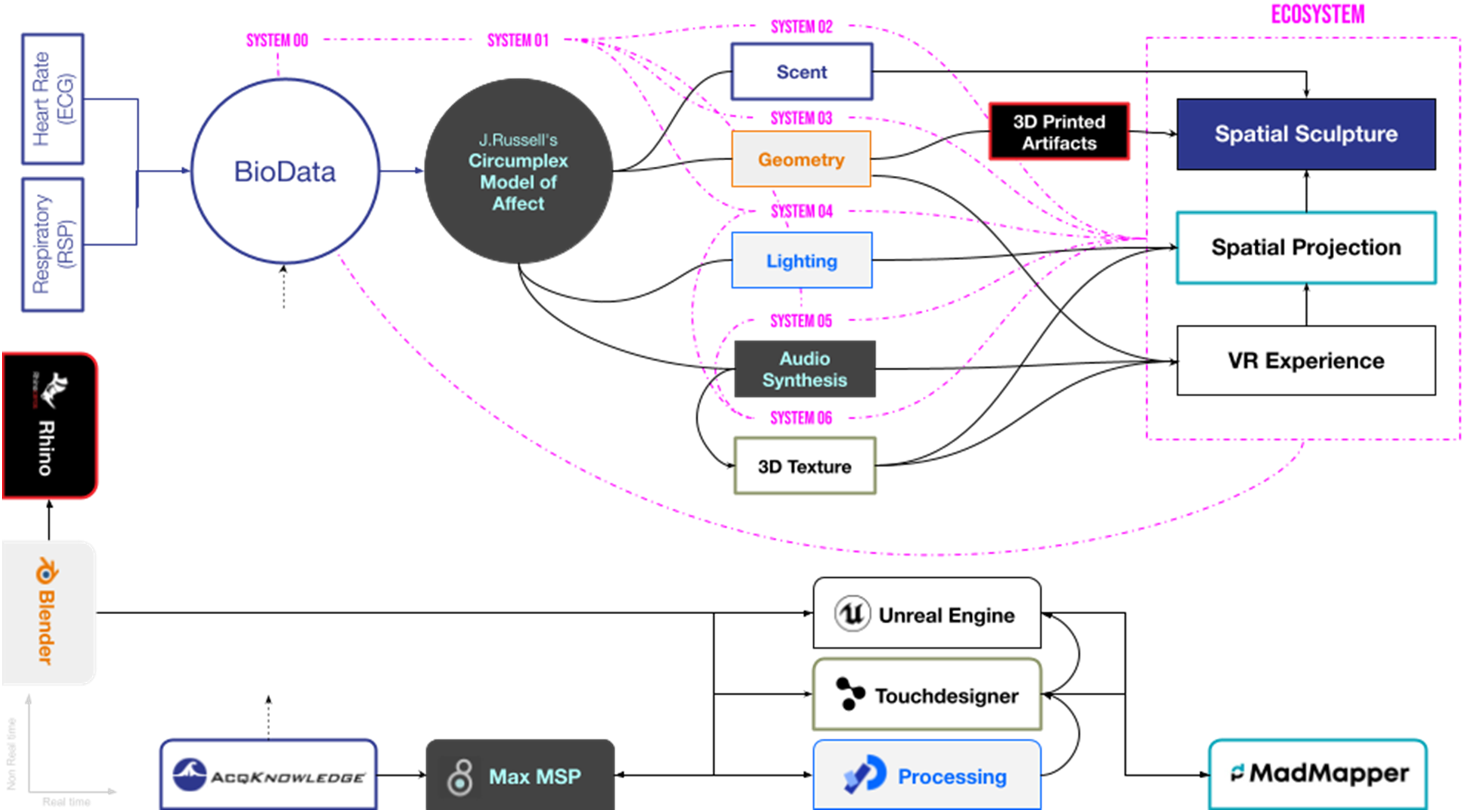

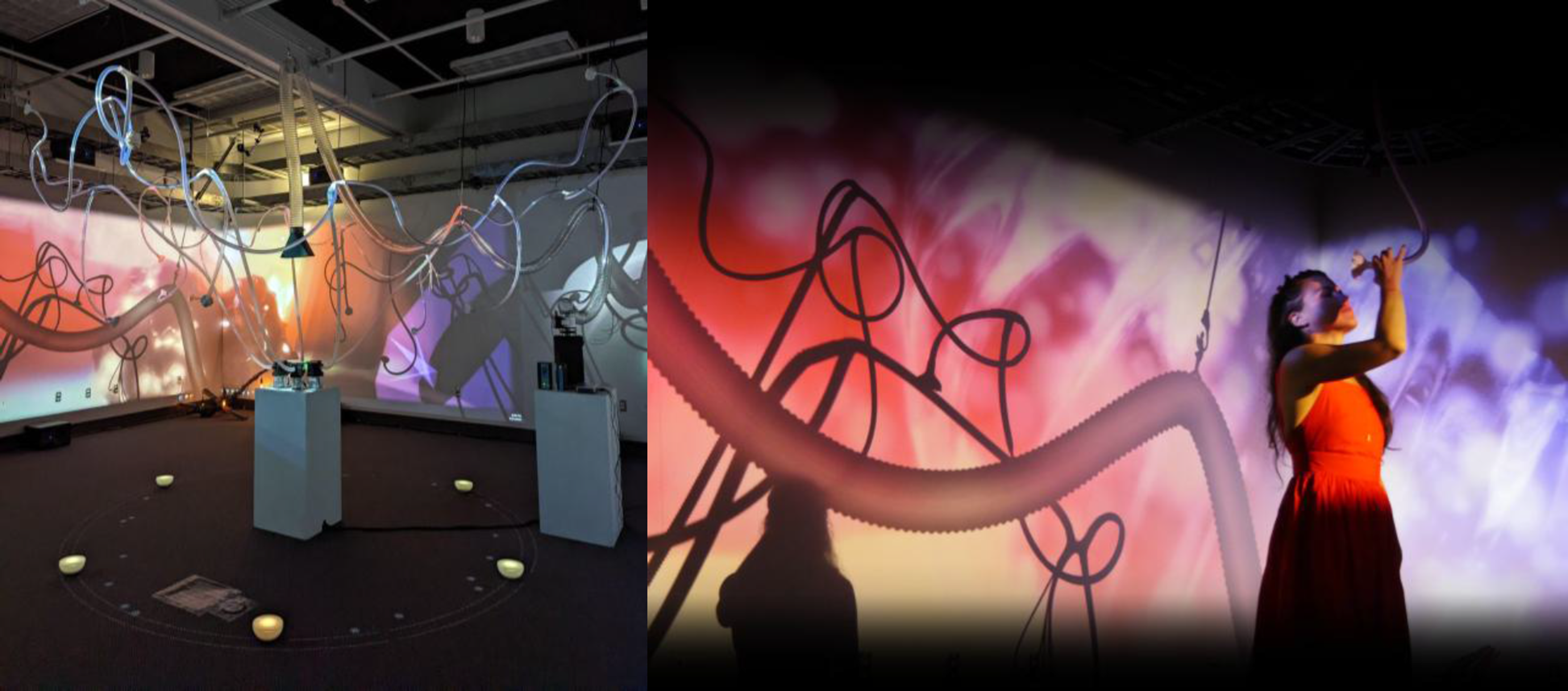

This paper presents ‘Hydnum’ (Figure 1), a computational framework that addresses these challenges by synthesizing real-time biodata with multisensory stimuli through a bio-inspired approach. 3 Drawing inspiration from fungal networks, our system demonstrates how biological data can be effectively mapped to architectural responses across olfactory, auditory, and morphological channels. These interactions are structured around emotional valence and arousal, producing a dynamic feedback loop between the participant’s physiological state and the installation’s spatial behaviors.

Figure 1. Photos of the “Hydnum” installation showcasing the dendroid tube structure, the VR component, and the projected surfaces. Source: Authors, 2022.

Through empirical testing and systematic integration of olfactory, auditory, and visual elements within a perforated systems framework, we contribute to the growing field of biodigital architecture by offering a real-time, modular approach for spaces that engage users across multiple sensory dimensions. The project highlights how coordinated sensory channels can shape emotional resonance while enhancing users’ spatial awareness and groundedness within both physical and virtual environments.

Literature review

The integration of biological principles with computational design has fundamentally transformed architectural thinking and practice. Early theoretical frameworks, particularly Novak’s concept of “allogenesis”, 4 established crucial foundations for understanding how computational processes could generate new architectural forms inspired by biological systems. This approach envisioned architecture not as static form but as dynamic, evolving systems capable of responding to and integrating with their environment. Novak’s subsequent work on “NeuroNanoBioTectonics” 5 further explored the potential fusion of nanotechnology, biotechnology, and computational methods, proposing architectures that could blur the boundaries between biological and technological systems.

These early theoretical foundations have evolved alongside technological capabilities. Oxman’s 6 work on material ecology demonstrates how computational design can now respond to biological and environmental inputs in increasingly sophisticated ways, while Kolarevic and Parlac 7 explore the potential for buildings to adapt in real-time to occupant needs. This evolution in digital morphogenesis has been particularly accelerated by recent advances in computational power and artificial intelligence, enabling more complex and responsive forms of architectural expression. 3

Building on these principles of digital morphogenesis, the concept of “perforated systems” emerged as a framework for understanding collaborative computational environments. This approach, developed by Novak, conceptualizes interconnected generative modules that both transmit and receive data, functioning similarly to species within an ecosystem. 2 The framework allows each module to operate autonomously while maintaining networked connections with others, creating a dynamic environment that maximizes individual creative freedom while ensuring system stability. Such systems are particularly relevant in the context of contemporary urban environments, where digital content and physical architecture increasingly interact through real-time, generative processes. 8

The ecological metaphor in perforated systems extends beyond simple interconnection. Each module functions as a distinct “species” within the larger ecosystem, exhibiting behaviors that reflect its interactions with itself, other modules, and the environment. This bio-inspired approach to system design enables complex, emergent behaviors while maintaining the integrity of individual components. Recent implementations have demonstrated how this framework can effectively support the integration of multiple sensory modalities, from real-time audio synthesis to visual projections, creating cohesive yet dynamically responsive environments. 3

The evolution of immersive, digitally mediated urban environments has fundamentally transformed how we conceive and experience architectural space. From early implementations in iconic locations like Times Square to contemporary architectural-scale displays emerging in various city centers, urban environments increasingly integrate digital and physical realms. 8 This transformation has evolved beyond simple digital overlays to create what Fox 9 terms “conversational spaces”: environments that engage in ongoing dialogue with their occupants through sophisticated feedback systems. However, the integration of digital content in urban spaces presents both opportunities and challenges. While many urban centers have become platforms for deterministic, commercially driven content, emerging approaches using artificial intelligence and real-time generation systems offer possibilities for more dynamic and responsive environmental interactions. These systems enable “parametric urbanism,” where spatial computing extends beyond individual buildings to engage with the broader urban fabric. Recent implementations demonstrate how real-time biodata and environmental inputs can be integrated into these systems, creating urban-scale installations that respond to both individual and collective patterns of use. 3

While technological advancement has enabled new forms of urban-scale interaction, the fundamental question of how these spaces are experienced on a human level remains crucial. This brings us to consider the deeper phenomenological aspects of architectural experience and how they intersect with contemporary responsive systems.

The multisensory nature of architectural experience, as eloquently articulated by Pallasmaa, 10 forms a crucial foundation for understanding how we perceive and interact with space. Pallasmaa emphasizes that architecture engages our entire sensory apparatus, with particular attention to often-overlooked modalities such as smell, which he notes can persist more strongly in spatial memory than visual stimuli. This understanding of architecture as a complete sensory experience aligns with Böhme’s 11 concept of atmospheres as “emotionally felt spaces” and “a spatial sense of ambiance,” where the sensory qualities of space shape our emotional responses. This perspective complements Eliasson’s notion of atmosphere as a “reality machine”, 12 suggesting that our sensory engagement with spaces actively constructs our perception of reality rather than merely receiving it.

The integration of these phenomenological insights with contemporary responsive systems has led to new approaches in environmental design. The Circumplex Model of Affect 13 provides a framework for understanding and mapping these emotional responses, offering a systematic way to connect sensory stimuli with psychological states. Building on these principles, Picard’s 14 foundational work on affective computing demonstrates how systems can detect and respond to users’ affective states, while Spence 15 highlights the significance of multisensory design in shaping occupants’ emotional experiences. Together, these theories inform the creation of environments that actively engage with and support the well-being of their occupants through carefully orchestrated sensory elements.

These theoretical frameworks - from digital morphogenesis and perforated systems to sensory integration and emotional mapping - provide the foundation for our research methodology. Building on Novak’s concepts of perforated systems and the integration of sensory experience outlined by Pallasmaa, while incorporating contemporary approaches to urban-scale computing and emotional response, we demonstrate a computational framework that synthesizes these elements through real-time biodata integration. Our methodology specifically addresses how these theoretical principles can be implemented in practice through a sophisticated scent delivery mechanism, bio-inspired geometric structures, and real-time audio synthesis, all modulated by users’ physiological responses.

Methodology

Experiment framework

The experimental framework of this study was designed to investigate the transmodal interactions between olfactory stimuli and other sensory modalities, underpinned by the Circumplex Model of Affect. The experiment aimed to establish how three distinct sensory channels (scent, sound, and spatial form) could be modulated through physiological inputs to create emotionally resonant experiences.

This model was selected for its simplicity and direct compatibility with biometric signals such as heart rate and heart rate variability, making it particularly suitable for real-time emotion estimation in computational environments. While the model’s limitations, such as its simplified dimensionality and limited representation of culturally or temporally nuanced emotions, are recognized, its functional clarity and precedent in affective computing justify its application here. Further discussion of these limitations and future directions for model refinement is provided in later sections.

The original data used for emotional mapping were primarily drawn from the project’s core team of designers and artists, whose physiological responses were recorded across a range of controlled affective states. While not intended as a generalized user study, these sessions served to test and calibrate the system’s responsiveness under real emotional fluctuations. The purpose of this framework is artistic rather than clinical, and as such, demographic specificity and statistical generalizability were not central objectives.

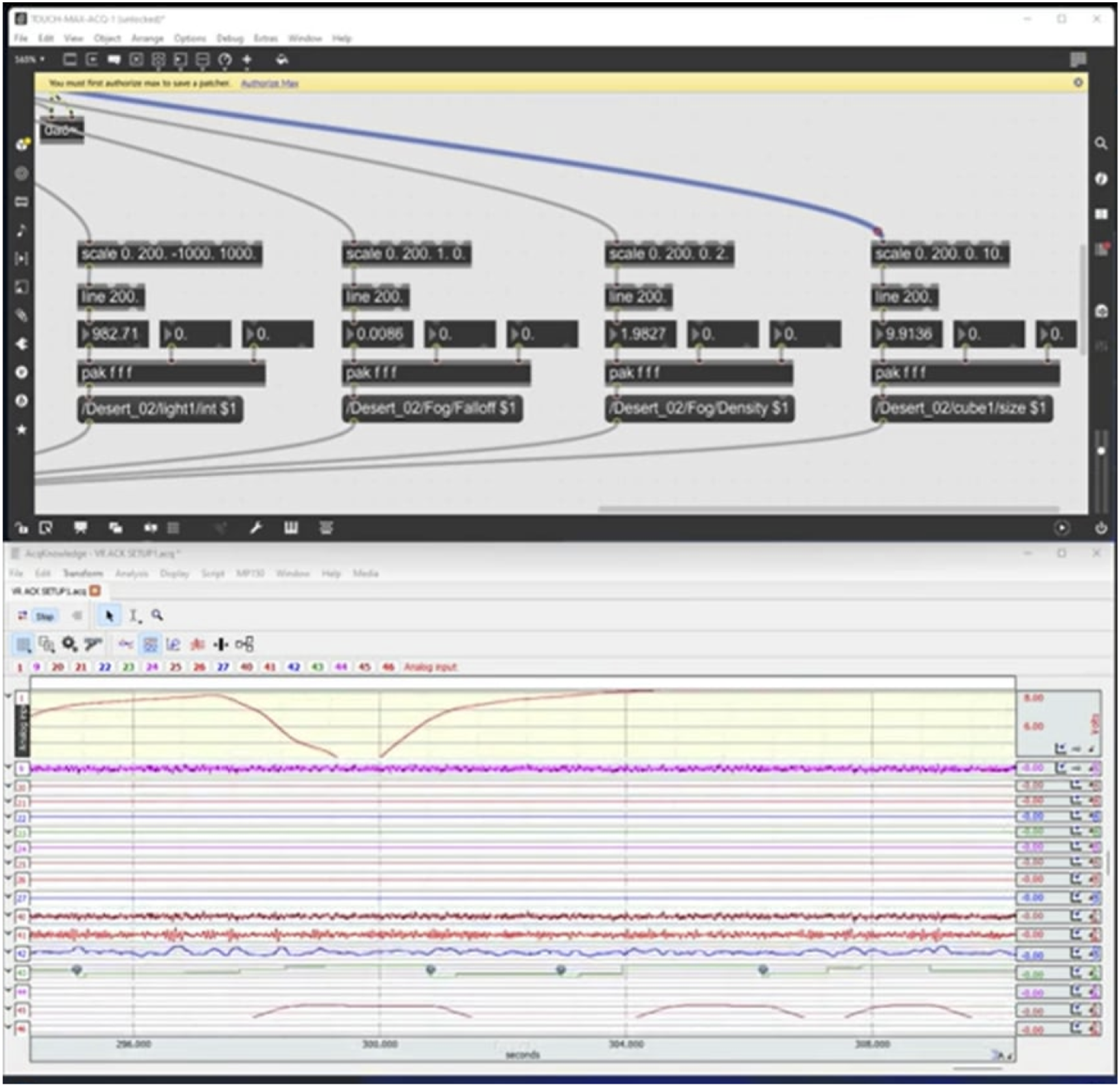

The experimental process unfolded in phases. The first phase established baseline physiological responses with no external stimuli. In subsequent trials, sensory modalities were introduced individually to isolate their effects. Participants were exposed to olfactory stimuli using a BIOPAC Modular Scent Delivery System (SDS200-SYS), followed by ambient lighting adjustments and an interactive audio layer that responded in real time to emotional data. Only during the final installation showcase were all modalities combined, allowing a cohesive multisensory synthesis of scent, sound, color, and bio-inspired morphology. Physiological data were acquired using the BIOPAC MP160 system and analyzed in AcqKnowledge software, with real-time ECG and respiration signals streamed through a Dual Wireless BioNomadix Transmitter. These biosignals were mapped to the two emotional coordinates of the model, valence and arousal, based on established biometric correlations: heart rate variability served as a proxy for valence, and heart rate as a proxy for arousal. All data were received and recorded in real time in Max MSP (Figure 2).

Figure 2. Diagram showing the raw biodata recordings from AcqKnowledge and Max MSP. Source: Authors, 2022.
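As a rough illustration of the mapping described above, the sketch below compares a window of R-R intervals with the resting baseline from the first phase, reads heart rate as arousal and HRV as valence, and clamps both to [-1, 1]. The choice of RMSSD as the HRV measure, the linear baseline-relative scaling, and the constants `HR_SPAN_BPM` and `RMSSD_SPAN_MS` are assumptions for illustration, not values reported for Hydnum; the actual processing ran in AcqKnowledge and Max MSP.

```python
import math
from statistics import mean

# Hypothetical scaling constants (not from the project): deviation from the
# resting baseline that is treated as the extreme of each axis.
HR_SPAN_BPM = 30.0    # +/-30 bpm around baseline spans arousal -1..1
RMSSD_SPAN_MS = 40.0  # +/-40 ms around baseline spans valence -1..1


def clamp(x, lo=-1.0, hi=1.0):
    return max(lo, min(hi, x))


def heart_rate_bpm(rr_ms):
    """Mean heart rate (bpm) from a window of R-R intervals in milliseconds."""
    return 60000.0 / mean(rr_ms)


def rmssd_ms(rr_ms):
    """RMSSD: root mean square of successive R-R differences (needs >= 2 intervals)."""
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(mean(d * d for d in diffs))


def to_valence_arousal(rr_ms, baseline_rr_ms):
    """Map an R-R window to (valence, arousal) relative to a resting baseline.

    Arousal follows heart rate and valence follows HRV, as in the text;
    the linear, baseline-relative scaling is an assumption.
    """
    arousal = (heart_rate_bpm(rr_ms) - heart_rate_bpm(baseline_rr_ms)) / HR_SPAN_BPM
    valence = (rmssd_ms(rr_ms) - rmssd_ms(baseline_rr_ms)) / RMSSD_SPAN_MS
    return clamp(valence), clamp(arousal)
```

Working relative to each person's own baseline, rather than with absolute thresholds, keeps the estimate usable across participants with different resting heart rates.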

The system supports two operational modes: a fully real-time mode, in which live biodata actively modulate each sensory modality, and a simulated real-time mode, in which pre-recorded data are streamed to the generative system of each modality so that it responds immediately, as if to live input. The latter was used for the final exhibition due to logistical constraints, allowing curated emotional states to drive the sensory components in a controlled manner. In both cases, the biodata served as a generative input that drove the installation’s behavior rather than solely as post-hoc validation data; in the fully real-time mode, the system is therefore directly interactive.
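A minimal sketch of the simulated real-time mode follows. It assumes that the recordings were stored as timestamped (valence, arousal) samples. The function `replay` and the sample format are hypothetical. The idea is that samples are released according to their recorded timing, so the downstream generative systems receive them exactly as they would receive live input.

```python
import time
from typing import Callable, Iterable, Tuple

Sample = Tuple[float, float, float]  # (timestamp_s, valence, arousal)


def replay(samples: Iterable[Sample],
           emit: Callable[[float, float], None],
           speed: float = 1.0,
           clock: Callable[[], float] = time.monotonic,
           sleep: Callable[[float], None] = time.sleep) -> int:
    """Stream pre-recorded (t, valence, arousal) samples with their original timing.

    Each sample is emitted once its recorded offset from the first sample has
    elapsed on the wall clock (divided by `speed`). Returns the number emitted.
    """
    count = 0
    start_wall = start_rec = None
    for t, valence, arousal in samples:
        if start_wall is None:
            start_wall, start_rec = clock(), t
        due = start_wall + (t - start_rec) / speed
        delay = due - clock()
        if delay > 0:
            sleep(delay)
        emit(valence, arousal)
        count += 1
    return count
```

Passing `clock` and `sleep` as parameters keeps the timing logic testable and lets the same function run against a simulated clock.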

Communication between components was achieved via Open Sound Control (OSC), facilitating a modular architecture in which each sensory channel functioned independently while remaining synchronized through emotional data streams. This distributed approach, aligned with Novak’s concept of “perforated systems,” ensured that each modality could maintain creative autonomy while contributing to a cohesive, emotionally-responsive environment.
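The OSC messages themselves are simple to construct. The sketch below encodes a valence/arousal pair as an OSC 1.0 message and sends it over UDP. The message consists of a null-padded address string and a type-tag string, followed by big-endian 32-bit floats. A Max `udpreceive` object can receive such a message. The address `/hydnum/emotion` and the helper names are hypothetical. In practice, an existing OSC library would usually replace this hand-rolled encoder.

```python
import socket
import struct


def _osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *floats: float) -> bytes:
    """Encode an OSC message whose arguments are all 32-bit big-endian floats."""
    type_tags = "," + "f" * len(floats)
    return (_osc_pad(address.encode("ascii"))
            + _osc_pad(type_tags.encode("ascii"))
            + b"".join(struct.pack(">f", x) for x in floats))


def send_emotion(sock: socket.socket, host: str, port: int,
                 valence: float, arousal: float) -> None:
    # "/hydnum/emotion" is a hypothetical address pattern, not the project's own
    sock.sendto(osc_message("/hydnum/emotion", valence, arousal), (host, port))
```

Because every component only needs to parse this small message, a new sensory module can join the network without changes to the others.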

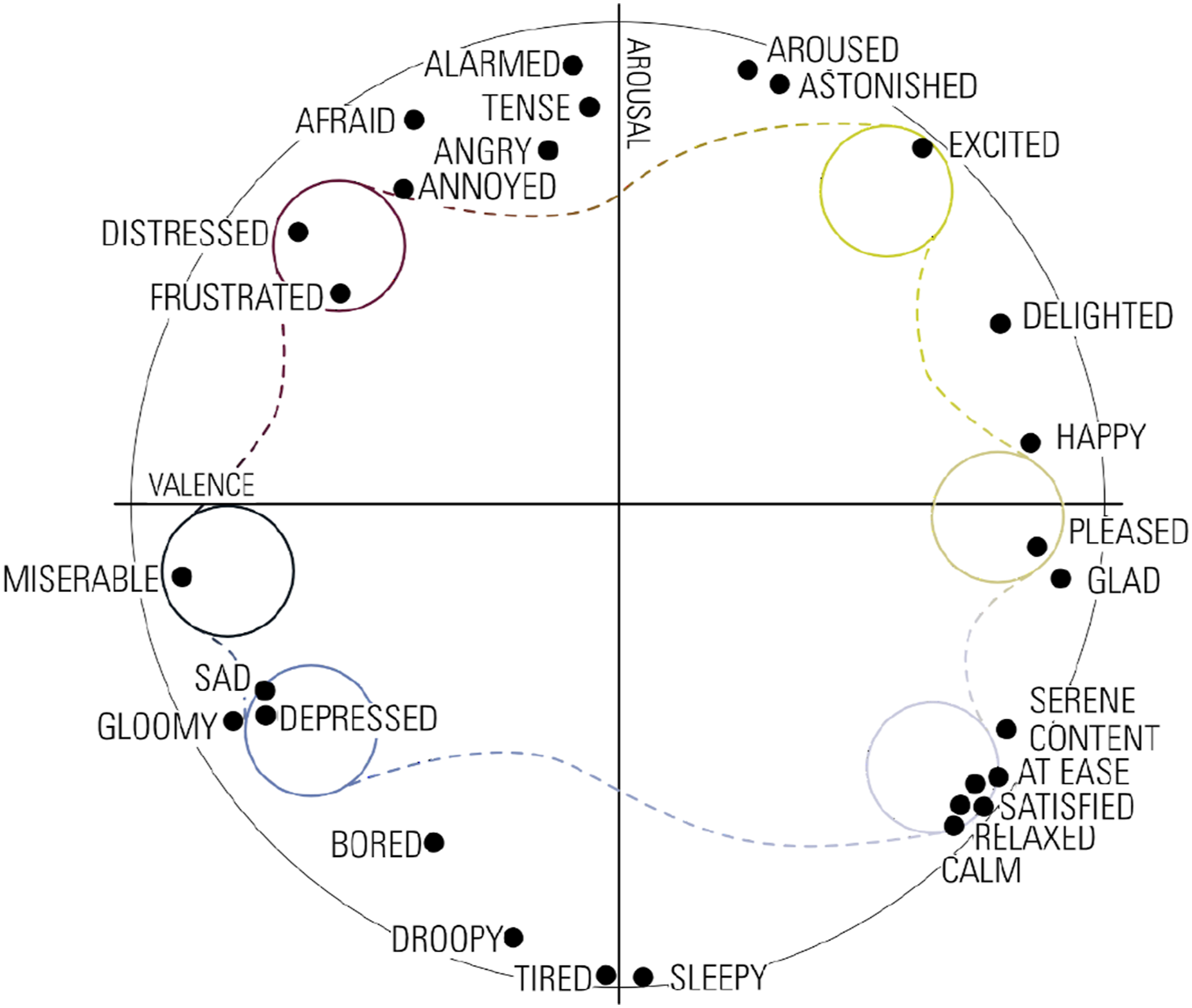

Sensory integration systems

The project developed an integrated virtual environment that responds to users’ biodata to influence their emotional states through multisensory stimulation. The system processes heart rate (HR) and heart rate variability (HRV) readings to determine emotional states, which then modulate synchronized visual, auditory, and olfactory outputs. Our implementation focused on six distinct emotional states: Arousal-Excitement, Pleasure, Arousal-Distress, Misery, Sleepiness-Depression, and Sleepiness-Contentment (Figure 3).

Figure 3. Diagram showing the six chosen emotions on the Circumplex Model of Affect. Source: Authors, 2022.
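A continuous (valence, arousal) reading can be classified into the six states with a nearest-angle lookup on the circumplex. The angles below follow the conventional placement of these terms in Russell's model: pleasure at 0°, excitement at 45°, distress at 135°, misery at 180°, depression at 225°, and contentment at 315°. Using these angles is an assumption, as is ignoring the distance from the origin. The positions actually used are those shown in Figure 3.

```python
import math

# Assumed angular positions (degrees) of the six states, with valence on the
# x-axis and arousal on the y-axis, following Russell's conventional layout.
STATES = {
    "Pleasure": 0.0,
    "Arousal-Excitement": 45.0,
    "Arousal-Distress": 135.0,
    "Misery": 180.0,
    "Sleepiness-Depression": 225.0,
    "Sleepiness-Contentment": 315.0,
}


def angular_distance(a, b):
    """Smallest absolute difference between two angles in degrees."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def classify(valence, arousal):
    """Return the state whose angle is nearest to the reading's angle."""
    angle = math.degrees(math.atan2(arousal, valence)) % 360.0
    return min(STATES, key=lambda s: angular_distance(angle, STATES[s]))
```

A fuller version would probably add a small dead zone near the origin, where the angle is unstable and no state is clearly expressed.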

Olfactory system

The olfactory component’s development was informed by research on the intersection of scent, spatial design, and emotional response. Pallasmaa 10 emphasizes that scent creates some of the most persistent memory traces of space, suggesting that olfactory elements are crucial in creating deep architectural experiences. This aligns with Barbara and Perliss’s 16 work on “invisible architecture,” which demonstrates how scent can define spatial boundaries and create emotional territories within architectural environments.

Recent studies in neuroarchitecture have shown that carefully designed scent distribution in space can significantly impact emotional well-being and spatial perception. Spence 17 documents how the strategic placement of scent delivery systems can create “sensory zones” that guide movement and emotional experience through space. This research informed our approach to spatial scent distribution and the design of our delivery mechanism.

While Henshaw

18

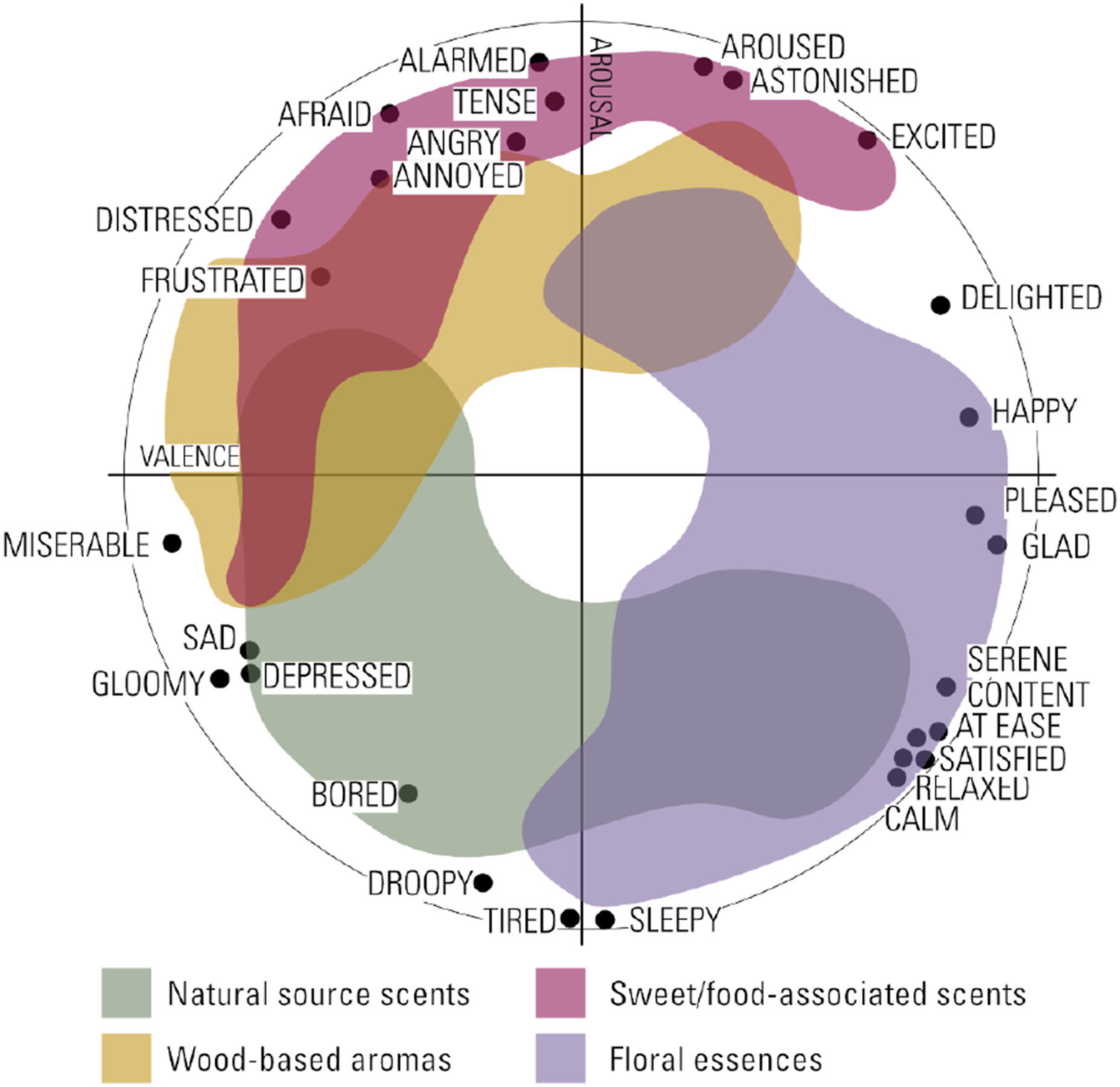

does not map individual scents to emotional states directly, her framework for understanding “urban smellscapes” highlights how scent typologies - natural, industrial, floral, and food-associated - can influence how space is experienced over time. These insights, combined with empirical testing and our experimental goals, formed the foundation of our olfactory design process. Each scent blend was developed to correspond to one of the six emotional states identified in the Circumplex Model of Affect used in this study. The palette includes: (1) Natural source scents (earth, grass, ocean) (2) Wood-based aromas (pine, sandalwood) (3) Floral essences (lavender, jasmine, rose) (4) Sweet/food-associated scents (vanilla, caramel)

Custom blends were refined through iterative testing and validation using physiological response data gathered with biomedical devices. Figure 4 illustrates the positioning of these categories on the circumplex diagram, showing how each scent group aligns with particular emotional states: for example, floral essences with calm/contentment, and sweet aromas with excitement/arousal. The spatial distribution of these scents was carefully considered, with particular attention to what McLean 19 terms “scent-scapes”: the way different odors interact and create atmospheric gradients within architectural space. Each blend’s efficacy was validated through physiological response testing, measuring changes in heart rate and heart rate variability as subjects moved through different scented zones of the physical installation.

Figure 4. Range of each scent palette during the physiological response testing. Source: Authors, 2025.
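The per-zone validation can be summarized as the change in mean heart rate and in HRV relative to the unscented baseline. The sketch below assumes R-R intervals in milliseconds and uses RMSSD as the HRV measure. The text does not specify this metric, and the zone labels in the test are hypothetical.

```python
import math
from statistics import mean


def rmssd_ms(rr_ms):
    """Root mean square of successive R-R differences (needs >= 2 intervals)."""
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(mean(d * d for d in diffs))


def zone_response(baseline_rr_ms, zones):
    """Change in mean HR (bpm) and RMSSD (ms) for each scented zone vs baseline.

    `zones` maps a zone label to the R-R intervals (ms) recorded while the
    participant was in that zone.
    """
    base_hr = 60000.0 / mean(baseline_rr_ms)
    base_hrv = rmssd_ms(baseline_rr_ms)
    result = {}
    for label, rr in zones.items():
        result[label] = {
            "delta_hr_bpm": 60000.0 / mean(rr) - base_hr,
            "delta_rmssd_ms": rmssd_ms(rr) - base_hrv,
        }
    return result
```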

Color-emotion mapping

The visual representation of emotional states was developed through a systematic approach to color-affect associations. Our methodology drew from extensive research in color psychology and emotion, particularly the work of Bartram et al. 20 on affective color in visualization. Their framework provides empirical evidence for consistent relationships between color properties and emotional dimensions, especially in interactive environments.

The implementation mapped emotional states to specific color parameters using both theoretical foundations and empirical testing. Following Ou et al.’s 21 color-emotion model, emotions with high arousal and positive valence were associated with bright, warm colors like red and yellow. Conversely, high arousal with negative valence was represented through intense, darker colors. Low arousal states with positive valence were depicted using soft, cool colors like light blue and green, while low arousal with negative valence employed muted, darker tones. These mappings align with prior work by Suk and Irtel, 22 demonstrating that color-emotion associations remain relatively stable across different viewing conditions and contexts.

The color mapping system was implemented in Processing, incorporating both computational and perceptual elements. Each color was systematically linked to specific valence and arousal values through a polar coordinate transformation, ensuring smooth transitions between emotional states. A gradient-based visualization was employed to reflect the continuous emotional transitions supported by the biometric system, rather than static or discrete categorizations. The resulting distribution of color associations is shown in Figure 5, which overlays the affective color space on Russell’s Circumplex Model of Affect.

Figure 5. Diagram of the color mapping in the Circumplex Model of Affect. Source: Authors, 2025.
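The original mapping was written in Processing. The Python sketch below illustrates only the polar idea and assumes that valence and arousal lie in [-1, 1]. The angle around the circumplex selects a color by interpolating between four hypothetical quadrant anchors, chosen to match the qualitative description above; the real palette is the one in Figure 5. The distance from the origin, read as emotional intensity, blends that color away from a neutral grey.

```python
import math

# Hypothetical anchor colors (RGB, 0..1) at the center of each quadrant,
# chosen to match the qualitative description in the text, not Figure 5.
ANCHORS = [
    (45.0, (1.00, 0.75, 0.10)),   # high arousal, positive valence: bright, warm
    (135.0, (0.55, 0.05, 0.15)),  # high arousal, negative valence: intense, dark
    (225.0, (0.25, 0.25, 0.35)),  # low arousal, negative valence: muted, dark
    (315.0, (0.60, 0.85, 0.80)),  # low arousal, positive valence: soft, cool
]
NEUTRAL = (0.5, 0.5, 0.5)


def _interpolate_anchors(angle_deg):
    """Linearly interpolate between the two anchors that bracket the angle."""
    for i, (a0, c0) in enumerate(ANCHORS):
        a1, c1 = ANCHORS[(i + 1) % len(ANCHORS)]
        span = (a1 - a0) % 360.0
        offset = (angle_deg - a0) % 360.0
        if offset <= span:
            t = offset / span
            return tuple(x0 + t * (x1 - x0) for x0, x1 in zip(c0, c1))
    raise ValueError("anchors must cover the full circle")


def emotion_to_rgb(valence, arousal):
    """Polar mapping: the angle picks the color family, the radius sets intensity."""
    angle = math.degrees(math.atan2(arousal, valence)) % 360.0
    radius = min(1.0, math.hypot(valence, arousal))
    target = _interpolate_anchors(angle)
    # radius 0 -> neutral grey, radius 1 -> full anchor color
    return tuple(n + radius * (c - n) for n, c in zip(NEUTRAL, target))
```

Linear RGB interpolation is a simplification. Interpolating in a perceptual color space would give more even-looking gradients.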

Audio synthesis framework

The audio component utilized the arousal and valence measures derived from biodata to synthesize Hydnum’s soundscape in real-time. Following Flatley’s 2 implementation, the system employed three distinct layers of sonic behavior, each responding to different aspects of the emotional mapping. Changes in these measures were systematically mapped to audio synthesis parameters, with arousal linked to event probability, tempo, and fundamental pitch, while valence controlled shifts in timbre and harmonic complexity.
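The routing described above can be expressed as a single mapping function: arousal drives event probability, tempo, and fundamental pitch, and valence drives timbre and harmonic complexity. All numeric ranges below, the direction of each mapping, and the `AudioParams` structure are illustrative assumptions. They are not the values used in Hydnum's Max MSP patches.

```python
from dataclasses import dataclass


def lerp(lo, hi, t):
    return lo + (hi - lo) * t


@dataclass
class AudioParams:
    event_probability: float  # chance that a voice triggers on a given tick
    tempo_bpm: float
    fundamental_hz: float
    brightness: float         # normalized timbre control (e.g. filter cutoff)
    harmonic_partials: int    # proxy for harmonic complexity


def map_emotion(valence, arousal):
    """Map valence/arousal in [-1, 1] to hypothetical synthesis parameters."""
    a = (arousal + 1.0) / 2.0  # rescale both axes to [0, 1]
    v = (valence + 1.0) / 2.0
    return AudioParams(
        event_probability=lerp(0.05, 0.9, a),
        tempo_bpm=lerp(50.0, 140.0, a),
        fundamental_hz=lerp(55.0, 220.0, a),
        brightness=lerp(0.2, 1.0, v),
        harmonic_partials=round(lerp(2, 12, v)),
    )
```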

The first layer consisted of 16 independent voices based on filtered pink noise, providing a dynamic ambient foundation. As detailed by Flatley, 2 these voices created an evolving soundscape that responded to arousal levels - producing subtle undulations during low arousal states and more rhythmic patterns at higher levels. The second layer implemented a polyphonic synthesizer incorporating frequency and amplitude modulation, with synthesis parameters controlled by both arousal and valence to produce dynamic spectromorphological changes. The third layer utilized two sinusoidal oscillators modulated in frequency, amplitude, duration, and spatial location, creating high-frequency elements that tracked emotional state changes.
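Pink noise, the source for the first-layer voices, has roughly equal energy per octave. A common way to approximate it is the Voss-McCartney algorithm. The sketch below uses a variant of that algorithm together with a hypothetical amplitude LFO whose rate rises with arousal, standing in for the shift from subtle undulations to more rhythmic patterns. The filtering stage and the actual voice logic of Flatley's implementation are not reproduced here.

```python
import math
import random


def pink_noise(n, rows=16, seed=None):
    """Approximate pink (1/f) noise with a Voss-McCartney variant.

    Keeps `rows` random values; on each sample, the row indexed by the number
    of trailing zeros of the sample counter is redrawn, so row k changes every
    2**(k + 1) samples. The output is the mean of all rows, in [-1, 1].
    """
    rng = random.Random(seed)
    values = [rng.uniform(-1.0, 1.0) for _ in range(rows)]
    total = sum(values)
    out = []
    for i in range(n):
        counter = i + 1
        k = (counter & -counter).bit_length() - 1  # index of lowest set bit
        if k < rows:
            total -= values[k]
            values[k] = rng.uniform(-1.0, 1.0)
            total += values[k]
        out.append(total / rows)
    return out


def undulation_gain(arousal, t_s, slow_hz=0.1, fast_hz=4.0):
    """Hypothetical LFO gain in [0, 1]: slow swells at low arousal, faster pulses at high."""
    rate = slow_hz + (fast_hz - slow_hz) * (arousal + 1.0) / 2.0
    return 0.5 * (1.0 + math.sin(2.0 * math.pi * rate * t_s))
```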

The implementation incorporated Gaussian distributions for managing stochastic elements, building on historical precedents in algorithmic composition, notably Xenakis’s 23 work in stochastic music. Each of these layers incorporated additional data from the perforated system developed by Roselló that provided just intonation pitch content based on his theory of “formalized harmony”. 24 This theoretical framework enabled the integration of extended tuning systems within the responsive audio environment. The resulting audio was spatialized using a 16.2 channel system, with sound localization corresponding to positions within the circumplex model of affect. 2
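A small note generator can combine the two ingredients named above: Gaussian-distributed stochastic choices and just-intonation pitch content. The 5-limit scale below is a stand-in for the pitch data supplied by Roselló's module. The way arousal shifts the distributions is also an assumption made for illustration.

```python
import random

# Hypothetical 5-limit just-intonation scale (frequency ratios above the
# fundamental); in Hydnum this pitch content came from a separate module.
JI_RATIOS = [1 / 1, 9 / 8, 5 / 4, 4 / 3, 3 / 2, 5 / 3, 15 / 8, 2 / 1]


def next_note(fundamental_hz, arousal, rng):
    """Choose a just-intonation pitch and a duration with Gaussian variation.

    The scale-degree index is drawn from a normal distribution centered higher
    in the scale as arousal rises; the duration is Gaussian around a mean that
    shortens with arousal and is floored at 50 ms.
    """
    a = (arousal + 1.0) / 2.0
    center = a * (len(JI_RATIOS) - 1)
    idx = round(rng.gauss(center, 1.0))
    idx = max(0, min(len(JI_RATIOS) - 1, idx))
    mean_dur = 2.0 - 1.5 * a  # seconds
    duration = max(0.05, rng.gauss(mean_dur, 0.2 * mean_dur))
    return fundamental_hz * JI_RATIOS[idx], duration
```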

Bio-inspired computational morphologies

The geometric structures were procedurally designed using Blender’s Geometry Nodes system, whose non-destructive parametric workflow enabled real-time modulation based on emotional input data. This approach draws from D’Arcy Thompson’s 25 foundational work on the mathematical principles underlying biological form generation, while extending it through contemporary computational methods. The resulting system exemplifies what Oxman describes as a “material computation” approach, in which digital and biological principles converge to create adaptive geometries.

This approach aligned with Novak’s 4 concept of “allogenesis”, in which new forms emerge from the intersection of computational logic and biological inspiration. Drawing from species within the genus Hydnum, known for its unique spore-bearing structures and ectomycorrhizal symbioses, we developed a morphological system structured around three computational parameters: Main Body Definition, Surface Offset Modulation, and Edge-specific Offset. These elements were dynamically modulated by affective inputs derived from real-time biodata mapped through Russell’s Circumplex Model of Affect.

The emotional data were translated into geometric transformations using a differential growth approach, with each morphological parameter exhibiting additive, subtractive, or neutral behavior depending on its position in valence–arousal space. As illustrated in Figure 6, positive valence states triggered subtractive transformations in the main body and additive modulation on the surface, while high arousal states activated expansion at the edges. In contrast, negative valence and low arousal produced more constricted and neutral states across these parameters. These procedural transformations resulted in six distinct configurations (Excitement, Pleasure, Distress, Misery, Depression, and Contentment), each with its own formal articulation. The resulting forms are shown in Figure 7, with a breakdown by parameter layer.

Figure 6. Mapping of form generation parameters in the Circumplex Model of Affect. Source: Authors, 2025.

Figure 7. Morphological process with six different emotional states. Source: Authors, 2025.
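The additive/subtractive/neutral logic can be sketched as a function that returns one behavior per parameter. The text states only the positive-valence and high-arousal cases explicitly. Treating low arousal as edge compression, leaving every other case neutral, and the dead-zone width are therefore assumptions; Figure 6 shows the mapping actually used.

```python
ADDITIVE, NEUTRAL, SUBTRACTIVE = 1, 0, -1


def parameter_behaviour(valence, arousal, dead_zone=0.15):
    """Return (main_body, surface_offset, edge_offset) behaviors.

    From the text: positive valence -> subtractive main body, additive surface;
    high arousal -> additive (expanding) edges.
    Assumed: low arousal -> subtractive (compressing) edges; otherwise neutral.
    """
    if valence > dead_zone:
        main_body, surface = SUBTRACTIVE, ADDITIVE
    else:
        main_body, surface = NEUTRAL, NEUTRAL
    if arousal > dead_zone:
        edge = ADDITIVE
    elif arousal < -dead_zone:
        edge = SUBTRACTIVE
    else:
        edge = NEUTRAL
    return main_body, surface, edge


def apply_behaviour(base_amount, behaviour, intensity):
    """Scale a displacement amount by the behavior's sign and an intensity in [0, 1]."""
    return base_amount * behaviour * intensity
```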

Each of the three morphological parameters was implemented through discrete procedural streams within a node-based geometry pipeline. These used mathematical operations such as sine modulation, vector displacement, and curvature-based logic. The Main Body was shaped through axial deformations aligned to mesh normals, producing variations in mass and thickness. The Surface Offset introduced undulating profiles using amplitude-controlled wave functions across mesh curvature. Edge Offset transformations relied on proximity-based vertex calculations and directional displacement to create rim modulations, either flaring, sharpening, or compressing based on emotional intensity. These composable deformation streams ensured consistent translation from emotional data into spatial form.
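Outside Blender, the sine-modulated displacement along mesh normals used by the Surface Offset stream reduces to a few lines. The sketch assumes unit normals and uses each vertex's z coordinate as the wave coordinate. In Geometry Nodes, a comparable effect would come from feeding such an offset into a Set Position node, but this function is an illustration, not the project's node tree.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def displace_along_normals(vertices: List[Vec3], normals: List[Vec3],
                           amplitude: float, frequency: float,
                           phase: float = 0.0) -> List[Vec3]:
    """Offset each vertex along its unit normal by amplitude * sin(frequency * z + phase)."""
    out = []
    for (x, y, z), (nx, ny, nz) in zip(vertices, normals):
        d = amplitude * math.sin(frequency * z + phase)
        out.append((x + nx * d, y + ny * d, z + nz * d))
    return out
```

Driving `amplitude` from emotional intensity, and its sign from the behavior of the Surface Offset parameter, would connect this stream to the valence-arousal input.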

This setup enabled precise translation from biodata into continuous geometric transformations. The modular structure of each stream supported both real-time rendering and fabrication workflows, maintaining responsiveness across contexts. This differential parametric logic not only conveyed affective state but also shaped the spatial role of the forms in the installation. Each structure was designed to act as both a sculptural artifact and a nodal point within the broader sensory system, incorporating scent delivery tubes and projection surfaces where appropriate.

The VR environment (Figure 8) presented these six final computational morphologies at a larger scale. Users entered a virtual space where they stood beneath these structures, experiencing each from below, a perspective that reinforced their monumentality and implied affective weight. While the geometries themselves were tied to discrete emotional states based on earlier biodata recordings, the real-time aspect of the VR scene was enacted through dynamic environmental effects. Volumetric fog responded to ongoing biometric input by shifting color according to the current valence-arousal reading, in line with the color-emotion mapping described earlier. Simultaneously, a spore-inspired particle system varied in density and motion, driven by fluctuations in heart rate and heart rate variability. This layering of static form and dynamic environmental feedback created an immersive and emotionally reactive space, merging computational morphogenesis with sensory affect in real time.

Figure 8. Snapshots from the bio-inspired models within the VR environment. Source: Authors, 2022.
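The real-time layer of the VR scene can be thought of as easing environmental parameters toward targets derived from the current biodata. In the hypothetical sketch below, heart rate sets spore particle density and HRV (as RMSSD) sets drift speed. These assignments, the ranges, and the exponential easing are all assumptions, since the text states only that density and motion followed heart rate and its variability.

```python
import math
from dataclasses import dataclass


@dataclass
class SporeField:
    density: float = 0.0  # particles per cubic meter (hypothetical units)
    speed: float = 0.0    # mean drift speed in m/s (hypothetical units)


def update_spores(field: SporeField, hr_bpm: float, rmssd_ms: float,
                  dt: float, time_constant: float = 1.5) -> SporeField:
    """Ease particle density and speed toward targets derived from HR and HRV.

    Exponential easing with `time_constant` seconds avoids visible jumps when
    the biodata fluctuate from one reading to the next.
    """
    target_density = max(0.0, min(1.0, (hr_bpm - 50.0) / 70.0)) * 200.0
    target_speed = 0.05 + max(0.0, min(1.0, rmssd_ms / 100.0)) * 0.45
    alpha = 1.0 - math.exp(-dt / time_constant)
    field.density += alpha * (target_density - field.density)
    field.speed += alpha * (target_speed - field.speed)
    return field
```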

Integrated system architecture

The Hydnum installation embodies the perforated systems framework through an architecture where autonomous components function cohesively while maintaining their individual characteristics. This section examines how these components integrate to create a responsive multisensory environment that adapts to users’ emotional states in real time.

The data flow architecture, illustrated in Figure 9, demonstrates how information moves through the system. At its core, the architecture employs a star topology with Max MSP serving as the central nervous system. This arrangement allows independent development and operation of sensory components while ensuring coordinated responses through standardized communication protocols.

Figure 9. Data flow pipeline: the vertical and horizontal axes show non-real-time and real-time software, respectively. Source: Authors, 2022.

Raw biosignals from the BIOPAC system enter this ecosystem through Max MSP, where they are transformed into emotional coordinates based on Russell’s Circumplex Model. These emotional data form the common language that enables communication across the perforated boundary of each system component. Rather than relying on direct point-to-point connections, components subscribe to emotional state broadcasts, allowing them to respond according to their own interpretative frameworks while maintaining system-wide coherence.
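A minimal in-process hub illustrates the subscribe-and-interpret pattern. `EmotionHub` is a hypothetical stand-in for the Max MSP patch at the center of the star topology. It forwards the same coordinates to every subscriber, and each subscriber applies its own interpretation. A failing subscriber is isolated, so it does not stop the broadcast to the others.

```python
from typing import Callable, Dict


class EmotionHub:
    """Central broadcaster in a star topology (hypothetical illustration)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, Callable[[float, float], object]] = {}

    def subscribe(self, name: str, handler: Callable[[float, float], object]) -> None:
        self._subscribers[name] = handler

    def unsubscribe(self, name: str) -> None:
        self._subscribers.pop(name, None)

    def broadcast(self, valence: float, arousal: float) -> Dict[str, object]:
        """Send the same coordinates to every subscriber; collect their outputs."""
        results: Dict[str, object] = {}
        for name, handler in self._subscribers.items():
            try:
                results[name] = handler(valence, arousal)
            except Exception as exc:  # a failing component must not block the rest
                results[name] = exc
        return results
```

Because the hub knows nothing about what each handler does, a component can be swapped out or removed without changes to the others, which is the loose coupling that the perforated-systems framework calls for.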

The communication between components occurs through OSC messages that transmit emotional parameters across the network. This approach creates what Novak describes as “species interactions” within the computational ecosystem, where each component operates as an autonomous agent, adapting responsively while preserving the coherence of the whole. The loose coupling between components enables flexibility and resilience, as individual elements can be modified or replaced without destabilizing the entire ecosystem.

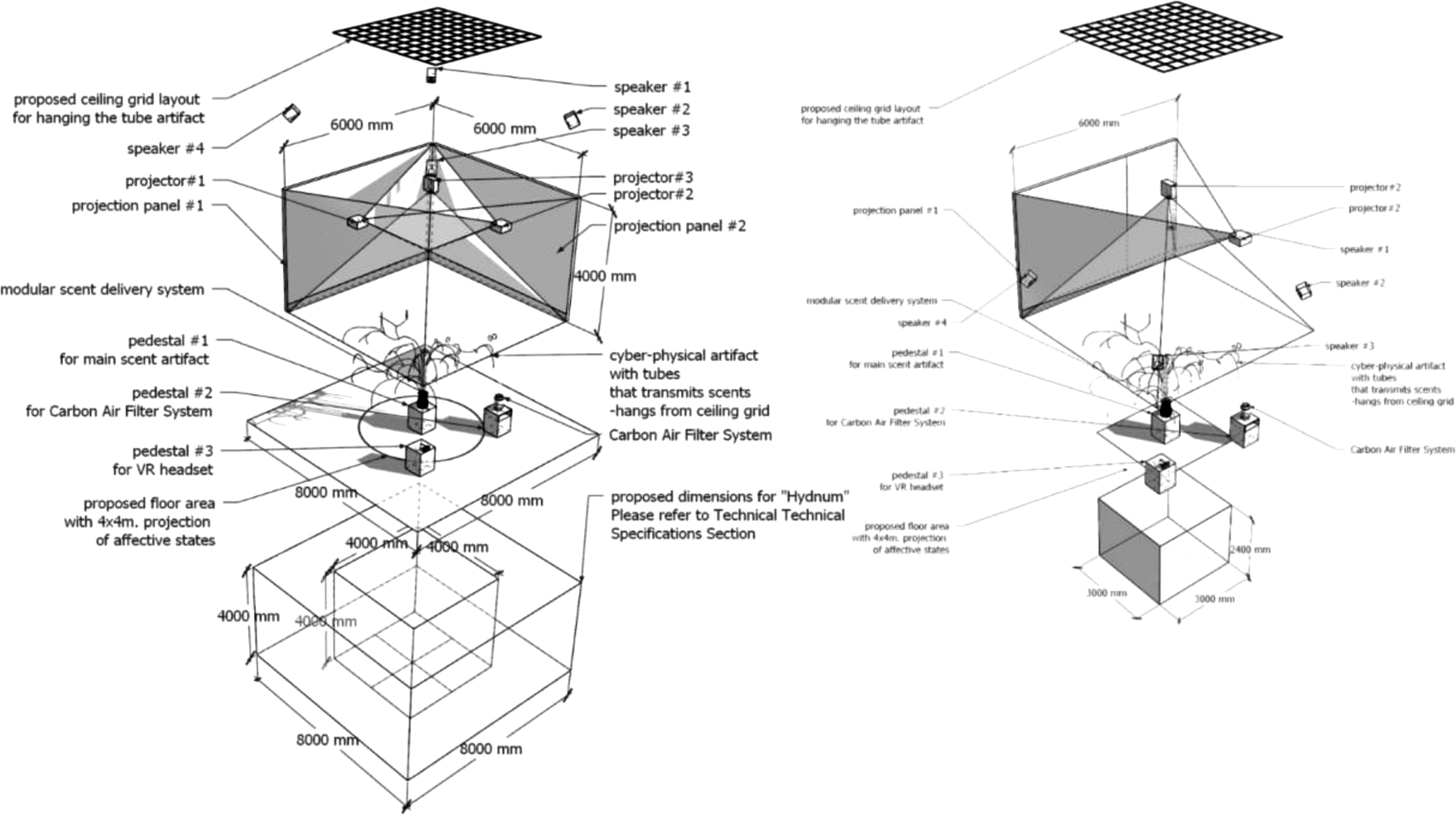

The architectural manifestation of this perforated system materializes through the physical installation space, where digital and physical elements converge to create a cohesive environmental experience (Figures 10 and 11). The installation space functions as a multisensory envelope surrounding visitors, with carefully choreographed relationships between the dendroid scent structure, projection surfaces, audio spatialization, and the VR environment.

Figure 10. Images from the installation showing the floor and wall projections. Source: Authors, 2022.

Figure 11. Exploded axonometric diagram of the installation layout showcasing different arrangements and setups. Source: Authors, 2022.

The dendroid tube structure serves as both sculptural element and functional infrastructure, embodying the morphological principles that govern the digital components. This physical structure creates a three-dimensional spatial composition that guides movement through the installation while distributing scent in patterns that correspond to the emotional mapping. The transparent medical tubing reveals the flow of scented air, making visible the otherwise imperceptible olfactory component of the experience.

This physical framework establishes zones of varying sensory intensity throughout the installation space, creating what might be called “emotional territories” within the architectural envelope. These territories are not rigidly defined but rather emerge from the overlapping influences of scent diffusion patterns, sound localization, and visual elements. Visitors can move freely between these territories, experiencing transitions between emotional states as embodied spatial experiences.

The projection mapping system extends the digital environment onto the physical architecture, blurring the boundary between virtual and physical space. Rather than treating projections as mere visual elements, they function as spatial transformations that respond to the emotional data, altering the perceived architectural qualities of the space. The floor projection of the circumplex model creates a navigable emotional map that helps visitors understand their position within the emotional landscape of the installation.

The audio component further defines the spatial qualities of the installation through a 16-channel spatialization system. Sound does not simply accompany the visual elements but actively shapes the perceived dimensions and atmosphere of the space. The audio system creates sonic architectures that expand and contract in response to emotional states, establishing dynamic spatial boundaries that complement the physical structure.

At the center of this multisensory environment, the VR component offers an immersive window into the computational morphologies generated by the system. While other visitors experience the collective environment, VR participants engage with the interior spaces of the generative forms, experiencing the emotional states from within. This creates a dual perception of the installation, both as an architectural space to be inhabited and as an entity to be explored from within.

The integration of these components creates what we might call a “responsive architectural atmosphere” that continuously adapts to the emotional states of its occupants. Unlike traditional responsive architecture that focuses primarily on environmental conditions or functional requirements, this approach creates spaces that engage directly with the emotional and physiological states of visitors.

The system’s temporal dimension is particularly important to its architectural conception. Rather than producing static emotional states, the system generates continuous transitions between states, creating emotional trajectories that unfold over time. These trajectories manifest across all sensory modalities simultaneously, producing synchronized emotional narratives that visitors experience as they move through the installation space.

The technical implementation maintains this temporal coherence through synchronized update cycles across all components. Max MSP broadcasts emotional state updates at 60 Hz, ensuring that all components receive identical emotional coordinates at consistent intervals. This synchronization is crucial to maintaining the perceptual unity of the installation, as it ensures that all sensory modalities respond in concert to changes in emotional state.
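The text specifies a 60 Hz broadcast from Max MSP but not the transport or scheduling. The Python sketch below illustrates, under stated assumptions, the two properties this paragraph relies on. First, every subscriber receives the same immutable snapshot for a given tick. Second, ticks are scheduled against absolute deadlines so the rate does not drift. `Broadcaster`, `EmotionState`, `run_fixed_rate` and the callback interface are hypothetical stand-ins for the actual Max MSP patch and its messages.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple

UPDATE_HZ = 60.0            # broadcast rate stated in the text
PERIOD_S = 1.0 / UPDATE_HZ  # about 16.7 ms between updates


@dataclass(frozen=True)
class EmotionState:
    """Immutable snapshot of the shared emotional coordinates for one tick."""
    tick: int
    valence: float
    arousal: float


class Broadcaster:
    """Hypothetical fan-out hub standing in for the Max MSP broadcast."""

    def __init__(self) -> None:
        self.subscribers: List[Callable[[EmotionState], None]] = []

    def subscribe(self, callback: Callable[[EmotionState], None]) -> None:
        self.subscribers.append(callback)

    def publish(self, tick: int, valence: float, arousal: float) -> EmotionState:
        # One snapshot object is shared by all modalities, so scent, audio,
        # visuals and VR all see identical coordinates for this tick.
        state = EmotionState(tick, valence, arousal)
        for callback in self.subscribers:
            callback(state)
        return state


def run_fixed_rate(broadcaster: Broadcaster,
                   read_state: Callable[[], Tuple[float, float]],
                   n_ticks: int) -> None:
    """Publish n_ticks updates at UPDATE_HZ without cumulative drift."""
    start = time.monotonic()
    for tick in range(n_ticks):
        valence, arousal = read_state()
        broadcaster.publish(tick, valence, arousal)
        # Sleep until an absolute deadline rather than for a fixed period,
        # so time spent in callbacks does not accumulate as drift.
        delay = start + (tick + 1) * PERIOD_S - time.monotonic()
        if delay > 0:
            time.sleep(delay)
```

For example, if a visual callback and an audio callback are subscribed and three ticks are run, both receive the same three `EmotionState` objects in the same order.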

This approach to integration represents an evolution in architectural computing, where emotional states become fundamental design parameters that shape both digital and physical elements. By treating the installation as a perforated system of interconnected but autonomous components, Hydnum demonstrates how complex multisensory environments can respond cohesively to human emotional states while maintaining the creative integrity of each sensory dimension.

Results

The “Hydnum” study revealed compelling insights into how integrated multisensory environments can shape and enhance emotional experiences. Through the synthesis of olfactory, visual, and auditory stimuli with bio-inspired forms, the system demonstrated the capacity to create rich, emotionally resonant spaces that responded dynamically to users’ physiological states.

The integration of scent with other sensory modalities proved particularly effective in emotional modulation. When scents were synchronized with corresponding visual and audio elements, participants exhibited stronger physiological responses compared to single-modality stimulation. For instance, states of high arousal and positive valence were effectively induced through the combination of citrus-based scents, warm color spectrums, and higher-frequency audio patterns. This multisensory alignment created a more immersive experience, with the scent delivery system’s integration into the dendroid structure allowing for precise spatial distribution of aromatic elements.

The emotional mapping through Russell’s Circumplex Model manifested distinctly across all modalities. The Circumplex Model of Affect provided a useful, interpretable framework for translating biometric inputs into sensory outputs, but its bidimensional simplification limits the expression of more complex or culturally specific emotions. Future iterations of the system may benefit from integrating more nuanced affective models, such as Barrett’s Theory of Constructed Emotion, the PAD model, or machine-learning-based latent emotion embeddings, to capture temporally dynamic and embodied emotional states.
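The paper does not spell out how biosignals are reduced to circumplex coordinates. The sketch below assumes that upstream processing already yields valence and arousal normalized to [-1, 1]. It converts them into the circumplex’s polar form and names the quadrant. The angle is measured from the positive-valence axis, and intensity is the distance from the neutral center. “Excitement” and “contentment” follow the terms used in this paper. “Distress” and “depression” are the corresponding high-arousal and low-arousal negative-valence terms from Russell’s circumplex and are used here for illustration only. The function `circumplex` and the clipping of intensity to 1 are hypothetical choices.

```python
import math
from typing import Tuple


def circumplex(valence: float, arousal: float) -> Tuple[float, float, str]:
    """Return (angle_deg, intensity, quadrant) for a point on the circumplex.

    Angle is measured counterclockwise from the positive-valence axis;
    intensity is the distance from the neutral origin, clipped to 1.
    """
    angle = math.degrees(math.atan2(arousal, valence)) % 360.0
    intensity = min(1.0, math.hypot(valence, arousal))
    if valence >= 0 and arousal >= 0:
        quadrant = "excitement"   # high arousal, positive valence
    elif valence < 0 and arousal >= 0:
        quadrant = "distress"     # high arousal, negative valence
    elif valence < 0:
        quadrant = "depression"   # low arousal, negative valence
    else:
        quadrant = "contentment"  # low arousal, positive valence
    return angle, intensity, quadrant
```

The polar form is convenient because angle and intensity can drive different outputs. For example, angle can select a palette or scent family, and intensity can scale how strongly it is expressed.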

In states of high arousal-excitement, the system generated expressive geometric transformations with pronounced edge expansions, vivid warm color gradients, and increased rhythmic and tonal complexity in the audio layer, all while emitting stimulating scent profiles including citrus and spice. Conversely, during states of sleepiness-contentment, the system responded with soft geometric undulations, cool muted color palettes, and low-frequency, slower audio compositions accompanied by calming scents such as lavender and sandalwood. These synchronized responses across modalities contributed to coherent affective environments that participants described as intuitive and emotionally engaging, particularly in their verbal reflections.

For example, in states of Excitement (high arousal, positive valence), the system combined (1) scents: citrus and spice blends; (2) colors: red-to-orange gradients; (3) audio: increased tempo and higher frequencies; and (4) geometry: open, flared edge structures.
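The two states described above can be written down as a small lookup table. The sketch encodes only the pairings given in the text (excitement and sleepiness-contentment). It selects whichever preset’s anchor lies closest to the current (valence, arousal) point. Several elements are assumptions: the anchor angles (45° for excitement; 292.5° for sleepiness-contentment, between the sleepiness and contentment directions of the circumplex), the nearest-anchor rule, and the names `SensoryPreset` and `nearest_preset`. The paper does not state how presets are chosen or blended. Negative-valence presets are omitted because they are not specified here.

```python
import math
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class SensoryPreset:
    scents: Tuple[str, ...]
    colors: str
    audio: str
    geometry: str


def _anchor(angle_deg: float) -> Tuple[float, float]:
    """Unit-circle point (valence, arousal) at the given circumplex angle."""
    rad = math.radians(angle_deg)
    return (math.cos(rad), math.sin(rad))


# Pairings taken from the text; the anchor angles are assumptions.
PRESETS: Dict[str, Tuple[Tuple[float, float], SensoryPreset]] = {
    "excitement": (_anchor(45.0), SensoryPreset(
        scents=("citrus", "spice"),
        colors="red-to-orange gradients",
        audio="increased tempo, higher frequencies",
        geometry="open, flared edge structures")),
    "sleepiness-contentment": (_anchor(292.5), SensoryPreset(
        scents=("lavender", "sandalwood"),
        colors="cool, muted palette",
        audio="low-frequency, slower compositions",
        geometry="soft undulations")),
}


def nearest_preset(valence: float, arousal: float) -> Tuple[str, SensoryPreset]:
    """Pick the preset whose anchor is closest to the current emotional point."""
    name = min(PRESETS, key=lambda k: math.dist((valence, arousal), PRESETS[k][0]))
    return name, PRESETS[name][1]
```

Keeping each state’s scent, color, audio and geometry in one record means every modality reads the same decision. This mirrors the synchronized, cross-modal responses described above.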

The bio-inspired computational morphologies played a crucial role in unifying the sensory experience. The geometric structures, responding to emotional input data, created forms that both facilitated scent diffusion and provided a visual representation of emotional states. In positive valence states, the forms exhibited more open, expansive characteristics, while negative valence produced more contained, intricate geometries. These morphological responses, combined with corresponding color modulations and sound designs, created environments that participants could navigate intuitively based on emotional resonance.
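To make the valence-to-form relationship concrete, the sketch below generates a closed 2D outline. Its overall size grows with valence (more open, expansive), and its lobe count and fold depth grow as valence falls (more contained, intricate). It is a toy stand-in, not the project’s bio-inspired generative method. The function `emotional_outline`, the radius, lobe and amplitude ranges, and the sinusoidal outline are all hypothetical.

```python
import math
from typing import List, Tuple


def emotional_outline(valence: float,
                      n_points: int = 64) -> Tuple[int, List[Tuple[float, float]]]:
    """Return (lobe_count, points) for a hypothetical valence-driven outline."""
    v = max(-1.0, min(1.0, valence))
    t = (v + 1.0) / 2.0                  # 0 = most negative, 1 = most positive
    base_radius = 0.6 + 0.4 * t          # positive valence: larger, more open form
    lobes = round(3 + 9 * (1.0 - t))     # negative valence: more, finer lobes
    amplitude = 0.05 + 0.10 * (1.0 - t)  # negative valence: deeper folds
    points = []
    for i in range(n_points):
        theta = 2.0 * math.pi * i / n_points
        r = base_radius * (1.0 + amplitude * math.sin(lobes * theta))
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return lobes, points
```

With `valence = 1.0` the outline has 3 shallow lobes around a unit radius. With `valence = -1.0` it has 12 deeper lobes around a radius of 0.6.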

The physical manifestation of these computational forms through the parametric tube joint network successfully bridged the digital and physical realms. This hybrid reality experience, where digital morphology synchronized with physical sensory stimuli, created an environment that participants could engage with both virtually and physically. The integration of VR elements with the physical structure enhanced spatial understanding and emotional engagement, as users could observe and interact with the system’s responses to their emotional states across multiple dimensions.

Throughout the exhibition, although no formal questionnaires were administered, participants provided substantial verbal feedback, particularly on the scent and color components, which they described as aligning closely with their felt emotional states. These more immediate modalities (olfactory and visual) were consistently recognized and interpreted in ways that affirmed the effectiveness of the emotional mappings. In contrast, the bio-inspired morphologies and VR experience were described as offering a more novel form of engagement, less directly interpretable, but compelling in how they embodied emotional states through spatial and material change. This response highlights the value of multi-modal composition, where different channels convey affect with varying immediacy and depth.

Importantly, the biodata collected during the experiment was not merely used for post-hoc validation but actively drove the system’s behavior in both real-time and simulated live modes. This design enabled a continuous, emotion-responsive environment where the affective states of users influenced every layer of the installation. Most significantly, the perforated systems framework enabled smooth transitions between emotional states, with each modality contributing to a fluid, continuous experience. Rather than abrupt changes in individual elements, the system produced gradual transformations across all sensory channels, maintaining coherence while adapting to shifting emotional states. This unified response created what participants described as a more natural and immersive emotional journey, where the boundaries between different sensory inputs became less distinct and more experientially integrated.
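The text credits the perforated systems framework with producing gradual transformations, but it does not give the interpolation law. One common way to obtain such transitions is first-order exponential smoothing of the emotional coordinates before they reach each modality. The sketch assumes the 60 Hz update rate stated earlier and a hypothetical 1.5 s time constant. The class name `EmotionSmoother` is likewise illustrative.

```python
import math
from typing import Optional, Tuple


class EmotionSmoother:
    """First-order exponential smoothing of (valence, arousal) coordinates."""

    def __init__(self, tau_s: float = 1.5, rate_hz: float = 60.0) -> None:
        # Per-tick blend factor derived from a time constant, so the
        # transition speed is expressed in seconds rather than in ticks.
        self.alpha = 1.0 - math.exp(-1.0 / (tau_s * rate_hz))
        self.state: Optional[Tuple[float, float]] = None

    def update(self, valence: float, arousal: float) -> Tuple[float, float]:
        if self.state is None:
            # First sample: start from the measured point, no transition.
            self.state = (valence, arousal)
        else:
            v, a = self.state
            # Move a fixed fraction of the remaining distance each tick.
            self.state = (v + self.alpha * (valence - v),
                          a + self.alpha * (arousal - a))
        return self.state
```

With these assumed values, a step change in the measured state is about 63% complete after 90 ticks (1.5 s at 60 Hz). Each modality therefore glides toward the new state rather than jumping.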

The results demonstrate that integrated multisensory environments are capable of generating felt emotional experiences across sensory channels. The system’s responsiveness, as perceived by participants, indicates a promising direction for biodigital architecture, where emotional and physiological states are not only measured but materially inscribed into the space. By treating sensory stimuli not as separate elements but as interconnected aspects of a unified system, “Hydnum” achieved a level of emotional resonance that validates the approach of biodigital architecture as a medium for creating emotionally responsive environments.

Discussion/conclusion

The findings from “Hydnum” demonstrate the potential of integrating multisensory stimuli with biodata in architectural environments. By moving beyond traditional visual-centric approaches to architectural computing, this research establishes a framework for creating spaces that engage with users’ emotional and physiological states across multiple sensory dimensions. The effectiveness of this integrated approach supports Pallasmaa’s assertions about the multisensory nature of architectural experience while extending them into the realm of responsive, biodigital systems.

The ability of synchronized multisensory stimuli to produce coherent, participant-reported emotional responses suggests new possibilities for architectural design. Rather than treating sensory elements as separate layers, this research highlights their value as parts of an interconnected system, responsive to real-time biometric input and functioning holistically. This approach aligns with Böhme’s concept of atmospheric architecture, but adds a dimension of continuous responsiveness enabled by physiological data.

The bio-inspired computational framework proved particularly effective in bridging digital and physical realms. Drawing from fungal networks as a generative and organizational model, “Hydnum” developed an organic integration that went beyond static biomimicry. This approach points toward a biodigital architecture in which biological principles shape not just form but also system dynamics and adaptive behavior.

The implementation of perforated systems theory provided a solution for maintaining modular yet synchronized sensory responses, addressing a core challenge in responsive environments: balancing systemic coherence with flexibility. The resulting framework shows promise for future architectural computing models that require both distributed autonomy and dynamic feedback integration.

Several key implications emerge from this research:

First, it demonstrates that emotional responsiveness in architecture can be orchestrated through multiple, interrelated sensory channels. This opens new directions for designing therapeutic environments, public spaces, and installations focused on emotional engagement.

Second, the success of the bio-inspired approach in creating cohesive multisensory feedback loops offers design principles for future biodigital systems, where form, behavior, and atmosphere are all informed by biological intelligence.

Third, the project introduces a repeatable framework for affective space design, grounded in real-time biodata and adaptable to emerging models of emotion processing. While Russell’s Circumplex Model of Affect provided a functional basis for translating biosignals into spatial responses, it offers a simplified view of emotion. Future iterations could integrate machine-learning-driven affective embeddings to capture temporal and cultural complexity.

However, several challenges and opportunities for future research remain. The long-term effects of emotionally responsive environments, their impact across populations, and their scalability in built contexts warrant deeper exploration. Questions around system maintenance, integration into existing infrastructure, and personalization across cultural or physiological differences also merit further investigation.

Future research could explore (1) systems that learn and adapt to user preferences over time; (2) integration with personal biometric devices for individualized feedback; and (3) responsive materials that adapt to occupant states in real time.

In conclusion, “Hydnum” demonstrates the feasibility of designing emotionally responsive environments through multisensory integration and biodata interaction. The project contributes to the expanding field of biodigital architecture by offering a design method that directly engages with user affect.

Footnotes

Acknowledgements

We would like to express our sincere gratitude to Alan Macy for his insightful discussions and expert mentorship, which were crucial in guiding our investigative process. Our thanks also extend to SBCAST and the transLAB for providing exceptional research facilities that were instrumental in hosting this project. We acknowledge BIOPAC Systems Inc. for supplying the specialized medical equipment that was essential to our work. Additionally, we would like to thank Pau Rosello Diaz for his significant contributions in developing biodata translations in Max MSP, as well as in the color-mapping processes, research, and implementation. No generative AI tools were used in generating original research content, analysis, or interpretation. However, AI-assisted tools such as Claude and Grammarly were used for text editing, reference formatting, and organizational improvements. The final manuscript was reviewed and approved by the authors to ensure accuracy and originality.

Funding

This work received in-kind support from the transLAB at the Media Arts and Technology Program, University of California, Santa Barbara, as well as from SBCAST (Santa Barbara Center for Art, Science and Technology) and BIOPAC Systems, Inc. The authors also thank the Colleges of Engineering and Humanities and Fine Arts at UCSB for their generous support.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.