Abstract

Large-scale language-image (LLI) models have the potential to open new forms of critical practice through architectural research. Their success enables designers to research within discourses that are profoundly connected to the built environment but did not previously have the resources to engage in spatial research. Although LLI models do not generate coherent building ensembles, they offer an esthetic experience of an AI infused design practice. This paper contextualizes diffusion models architecturally. Through a comparison of approaches to diffusion models in architecture, this paper outlines data-centric methods that allow architects to design critically using computation. The design of text-driven latent spaces extends the histories of typological design to synthetic environments including non-building data into an architectural space. More than synthesizing quantic ratios in various arrangements, the architect contributes by assessing new categorical differences into generated work. The architects’ creativity can elevate LLI models with a synthetic architecture, nonexistent in the data sets the models learned from.

Keywords

Drawing from everything—AI as the point of departure

Diffusion models are a relatively new technology. Invented in 2015, 1 diffusion models became known as the centers of new AI systems that can transform text into image, video, audio, mesh, and vice versa. 2 Their success is rooted in their ability to combine with AI models such as transformers, clip guidance, and their training on massive databases, like Midjourney’s V4 on 4 billion 3 or Imagen on 15 billion images. 4 Therefore, those approaches are commonly grouped as large-scale language-image (LLI) models. 5 The research on LLI models has progressed rapidly, with new applications surfacing nearly daily. Concepted in the larger research realm of Natural-Language-Processing models, 6 the new design capacities of diffusion models anticipates a form of “natural-design-processing” that communicates from anyone or anything to anyone or anything or the prompting from any media to any media. Already current interfaces, like RunwayML, suggest a workflow that immersively lets a designer jump from sketch to 3d model; from object to its contextual collage; from image to animation; and from any media to any media. In the long view, direct communication, that is, Natural-Language-Processing ends expert labor divisions: in jargon, the middle-man, or institutionally speaking, automates third parties. Like a common thread, the emancipation from expert labor divisions weaves through the narratives of digital technologies: the Internet enables direct communication; 3D-printing direct fabrication; blockchain direct exchange 7 ; and now prompt-transformers direct design. In that line, LLI models contest the image of an architect as the expert who notates everything it takes to transform the words of a client into buildable form (Figure 1).

First fear: The automation of the architect?

For hundreds of years, and in Western cultures since the publication of Alberti’s treatise De re aedificatoria in the mid-fifteenth century, 8 architectural knowledge was defined as a notational science. Architects invented ways to notate, draw, compose, or codify—not to build (that was the role of the craftsperson) nor to house (that was the client’s realm). The role of an architect was only to mediate between both. Plans, sections, and other architectural representations quickly notated comprehensible forms on the one hand, and economic—and, later, cultural—investments on the other: the matter and methods to build. 9 Made by humans for humans, buildings had to be easy to understand. If a client’s intentions were easy to understand, more complex shapes could be built with less. One considered a symmetrical drawing beautiful because the mirrored half of an original was only half as difficult to understand, which, above all, halved the cost of planning and making. Deeply linked to the economics of a building, the composition of a building served as a kind of benchmark to value the design. Esthetics, therefore, equated with the utility of a building. Alberti identified three aspects that shaped the research in architecture for the generations to come: a beautiful design uses first a limited number of parts which keeps the needed equipment and workers on a construction site manageable. Second, those parts should have a clear form that is easy to produce and transport. Finally, those parts should be composed into a correlated arrangement using symmetries and other connotations to make it easier to comprehend the building. 8

In that line, research in architecture often follows the motivation to stretch the limits set by Alberti and the capacity to notate more with less. Digitalization stretched those limits into the infinite. Parametrics manages an infinite number of parts between two binary boundaries, mass customization allows for infinite variability of parts to the same costs, and now diffusion models allow for the instant composition of anything with anything into anything. Now, when theoretically anyone with access to a cellphone and the internet can draw anything from everything instantly, at a resolution indifferent to reality, architecture is hard to value through the expertise and the skill in notating buildings. In a profession compensated by the affordance it takes to notate, an architecture that advocates for qualities beyond common building standards can only draw from the extra labor to notate that additional value. 10 Famously asked in an interview, what his workers do in the morning while he usually paints, Corbusier responded: “carrying out newspaper and earning their living.” 11 The practice of what we culturally value as good architecture stays all too often uncompensated, carried out through a field-wide common practice of internships. 12 In this sense, if LLI models make the automation of composing buildings very directly tangible, they also hold the chance, if not the necessity, to value the work of an architect through the qualities that are left after the automation of the necessary, and that is what architects have always done: to be the advocates for the built environment.

Rather than the automation of a profession, the advent of large-scale language-image models, or more direct, “the advent of AI in architecture,” 13 advocates for a significant extension of architectural practice. Text-to-image prompters and future NLP models to come translate not only the desires of clients but anything that is easier to communicate through words—like communal decisions, legislative codes, and, in general, any kind of knowledge. When one can draw architecture as quickly as words, architecture can contribute to anything made from words with anything but words. Until today, architects and their design research are rarely part of interdisciplinary teams in collaborative decision processes, for very good reasons. Research by architecture consumes vast amounts of labor and material. The required skills needed to compose architectural representations hinder communal participation and usually produce singular, highly particular, non-reproducible artifacts. For matters of resources, inclusion, and quantic performance, architecture design is so valuable and scarce that it is hardly considered a medium to research. The speed and affordance of LLI models have the potential to change the perspectives on the profession and open the doors for new kinds of critical practice through research by architecture (Figure 2).

Affordances of diffusion models—Interdisciplinary notation tools

Despite the speed of current developments, how realistic is the advancement from the recent text-to-image prompters into text-to-building prompters? Preliminary, the question is data- and not model-centric. It poses fewer computational problems than questioning the access to data and affordances to compute those. First, the performative progress of LLI models is astonishing. From their theoretical introduction in 2015, seven years later, state-of-the-art models are trained on up to 15 billion images with 2 billion parameters 4 that generate images at two frames per second for a resolution of 256 × 256. 14 With the recent shift into video generation, AI artists like @ScottieFoxTTV begin already to demo VR environments generated in real time. 15 Second, compared to other data mediums, in particular text, the computational complexity of building construction might already be lower than the current large-scale language models process. For illustration, one of the current largest language models, Google’s Pathways Language Model (PaLM), translates languages learned with 540 billion parameters. 16 If you would train a similar model with architectural-related data, such a model could re-design the 1.5 billion existing homes worldwide, 17 each with 360 unique parameters per home. Moving beyond mass customization, mass individuation is already an inherent feature of contemporary AI systems.

The challenge of building an architectural model, and any model relying on large-scale data sets, lies in the availability of the required resources. The accuracy of LLI models depends on, and increases with, the size of the image set used for training the models. Learning from a vast amount of image-text pairs, diffusion models work hand in hand with algorithms that generate these pairs. The current labeling algorithm, CLIP, for Contrastive Language-Image Pre-training,18,19 not only label images with their content but also with contrasting, absent features. For each additional object category, the CLIP model adds a contrastive feature to all categories. Contrastive learning makes current AI systems successful but also multiplies the number of labels and resources required for training these models. 20 This method sets an upper bound on including more diverse labels, and as a result, the inherent bias of LLI models that many researchers have uncovered in recent months 21 cannot be overcome with current techniques. The start-up behind the open-source image-synthesizer, stable diffusion, had to build one of the largest computer clusters in the world to bring their product to life. 22 According to Midjourney’s CEO, David Holz, the cost of training one-model iteration is approximately one million US dollars. 3 In comparison, the average annualized award size of a US National Science Fund was $150,000 in 2021.

Besides the economic affordances that are out of scope for traditional forms of architectural practice or scholarly research, an even greater challenge is the required volume of data. Despite thousands of years of building history, architecture is a known as a data-poor discipline. 23 Architecture data sets at an architectural resolution are sparse for several reasons. Architectural notations, such as plans, are expert representations that hardly appear on social media or other web-based platforms from which large databases source today. Even within the field, architectural forms of representation differ depending on the context, graphics, and in meaning. For example, annotating a stair with an arrow in plan can indicate its up or downward direction depending on the country in which one drew the plan. One major challenge for LLI models is their lack of understanding of the underlying physical principles that govern the built environment. This can result in generated designs that are unrealistic or inefficient in terms of functionality and performance. Due to the ambiguity between different architectural drawings, the first data-driven applications appeared in large architecture practices with a sufficient, coherent oeuvre of work to train networks. An esthetic milestone is the DeepHimmelblau Research by Coop Himmelblau, which uses photos from built work to interpolate the compositional design space between those inherent to the practice oeuvre. 24 However, to not only automate but expand its own design space, Himmelblau would need access to additional data sets from other offices. Trained on a limited dataset of existing images and text, LLI models tend to be biased toward certain design styles and esthetics. This can result in a lack of diversity in the generated designs and limit the range of possible architectural outcomes. Furthermore, including only architectural representations that focus on formal expression and building construction, models trained exclusively on architectural data lack information around the life of buildings. Therefore, the generated designs may not always align with ethical and cultural values, leading to potential controversy and social implications.

Theoretically, notations from most buildings exist in the public realm. Most communities archive planning material. However, accessing these archives can be difficult; older building plans must first be digitized with expensive equipment in a time-consuming process. In addition, access to building information often needs the consent of multiple parties involved. The lack of coherence and access makes it more likely that large-scale data sets of buildings will evolve faster using non-classical representations, like photogrammetry or 3D scanning. Scan-to-BIM approaches are a well-established practice, especially for planning in existing contexts. 25 For large data sets, applications building on photogrammetry are the most promising. The real estate platform Zillow generates floor plan layouts from uploaded photos. 26 The Google Maps immersive view feature enables fully textured, three-dimensional interior walk-throughs on standard cell phones. 27 Apple presented in July 2022 the model GAUDI that generates coherent 3D scenes by learning how to link images from separate rooms. 28

Computational feasible but unaffordable economically, and without sufficient data, it is less likely to see the development of specific “large-scale language-architecture models” in the near future. Similar to LLI platforms that orient toward character modeling for gaming and movie markets, it is more likely that with increasing complexity and resolution, architects will have to adapt models developed for a different or broader context. On the other hand, do architects have to create and train large-scale AI systems to articulate a critical practice? Within a rule-based, algorithmic approach, architects had to invent algorithms that reflect architectural needs. 29 By adapting a culture of hacking and misuse of otherwise intended software, entire platforms, like David Rutten’s Grasshopper, could evolve from a single person’s initiative. Computational architects contributed significantly by developing specific algorithms for particular architectural problems that were otherwise undesignable for a larger audience without a specific plugin or tool. This is not so anymore, or at least is much more challenging, with today’s large-scale AI models that rely on the access and resources to learn from massive data sets. In theory, large-scale models, presumably universal, similar to search engines, can be applied in any context. This can open alternative ways to design critically while computationally. In computer science, with the arrival of large-scale models, there has been a shift from model-centric to data-centric research. 30 Therefore, could transdisciplinary approaches find architecture-specific approaches within large-scale models that would allow architects to articulate critical contributions through a computed architecture? (Figure 3).

LLI models and the deja vu of aesthetic histories

Diffusion models diffuse images into noise. During the blurring process, they perceive the change in the image’s appearance in relation to a meaning labeled through text. 31 By doing so, they learn esthetic relationships between meaning and appearances. The model acts as an objective observer that takes the relation between a canvas with its subject as input and compares it to other form-content-pairs by varying its perception by blurring. Diffusion models do not look for incentives within perceptual features alone, but at a higher level within the difference of perceived meaning. In short, they value esthetics. By that, diffusion models resonate and, in a way, reverse-engineer early esthetic classifications in art history. A field diverging from philosophy during the second half of the 19th century, early art historians were challenged to navigate through a new eclectic mélange of styles that resulted from global trade and, foremost, colonialism. Artworks separated from their context could not be valued with unknown histories but only through the perception of how information communicates through an artwork. Two examples of it: The historian Alois Riegl, who proposed to assess artworks based on the difference between close view and far view in his more elaborated evaluation of style. 32 He argued that different cultural groups compressed meaning to a perceivable while intellectual distance between the viewer and artwork. 33 For Riegl, a close view unveiling of meaning equated with sensual communication of content, while a far view demanded knowledge from a distance beyond a canvas, equating with rational thought. In a second exemplary treatise, art historian Heinrich Wölfflin saw differences in communicating information within the material features of painting by contrasting the linear with the painterly.34, 35 A painting drawn dominantly through lines encodes distinct, identifiable bodies while a thick brush painting merges and blurs content. Therefore, a linear drawing encodes rational information, while the painterly effect encodes sensual, poetic content. Both approaches are attractive in today’s context for two reasons: First, these formal concepts influenced modern mathematics more rigorously elaborated in their time. 36 Second, those theories re-appeared in the discussion of postdigital architecture in recent years. 37 Riegl’s and Wölfflin’s concepts serve as points of departure to develop new critical practices in architecture. 38 By that, architects respond to a crisis of visual representations that are since longer time culturally apparent but now directly unfold through the success of LLI models. These familiar concepts can help to approach LLI models architecturally. The Far View and the Painterly pioneered the decoding of text through recourse to motifs, in today’s terms: text-to-image.

The approach differs from linguistic approaches in architecture that seek direct feedback between signifying words and signified materiality. As the historian Antoine Picon pointed out, linguistic practices have a long history of failing in architecture. 39 In the past, historians have also used the failings of linguistic and primarily postmodern precedents to predict the misguidings of digital architecture because it is presumably based on code and thus on language. 40 By bipolar contrasting digital with analog techniques, protagonists, like Keneth Frampton, favor the analog because intelligence, and especially design, is a symbiosis of mind, body, matter, and media. A sketch, and particularly when using charcoal, establishes the grounding of thought with bodily poses pressing particles of coal to a canvas. Therefore, many architecture curricula still categorically reject digital design because it cannot think with matter. A digitally driven design is simply not intelligent to speak physically enough for an architectural act. Machine learning models, however, are built not from code but from neural networks, which embed information as multidimensional tensors. 23 In the case of designing with diffusion models, the implications reverse the forecast above. Instead of predictive outcomes, it is impossible to repeat the outcome of a prompt. As a designer, one not only experience an unprecedented variability to a combinatorial of terms but also the impossibility to repeat: One designs with indeterminacy. One of the reasons for it goes down to the very physics of computation: Diffusion models start from the infusion of noise into an image by computing random noise for every discrete pixel. The same random function would produce the same outcome in a strict procedural setup, but not on a GPU. GPUs are non-deterministic due to their architecture; how chips are printed; their age; and the design of the chips. Different GPUs produce different results depending on the number of kernels and their architecture. 41 Those parameters will change even with time that is why mathematicians use new GPUs for experiments. GPU drivers and math libraries help to leverage indeterminate results, and some text-to-image platforms also try to amplify a stable diffusion. Such tiny differences would not matter much if the model did not draw images from the difference in noise in the first place. Now, with words and images computed from indeterminant noise, a prompt is entangled with physics and computes discrete unique bodies. Indifferent to analog design, diffusion is entangled with physics in so many ways that computation becomes discrete: Variance turns into individuals and blocks into logs. The new default departure for design is no longer the abstract but the heterogeneity of a theoretically infinite latent space.

Yet, these latent spaces are categorically bounded by the trained model at the higher level of embedded information of comparably esthetic observations. Drawing from more than a century of criticism on the above listed esthetic concepts, one can highlight the following three compositional characteristics of a latent space: First, absorption in which the repetition of parts is irrelevant to the identity of the space; second, permutation in which the order of the parts is irrelevant to the identity of the space; and third, leveling in which the nesting of parts is irrelevant to the identity of the latent space. 42 The compositional variability of a latent space is indifferent to the repetition, order, and nesting of parts. By that, latent spaces come closest to the architectural use of the term typology, and more precisely, Anthony Vidler’s description of a third typology as “the city providing the material for classification, and the forms of its artifacts provide the basis for re-composition.” 43 Vidler referred to the work of an Italian group of architects, including Aldo Rossi. Focusing on collective housing, the group saw in a typology an open building proposal that offers resilience in form while being flexible in time and use by changing communities. 44 In resonating ways, a latent space can provide an open building proposal, with the capacity to change quantic ratios between building parts in various arrangements that can vary nested belongings of rooms between flats (Figure 4).

Generating categorial differences with elevated creativity

However, history has shown that once built by Rossi and Contemporaries, the proposed openness turned into hollow artifacts drawn and interpolated from a past that no one could anymore adapt to. Similarly, a latent space interpolates from existing projects, but who says that future living follows patterns of the past? More critically, how can one draw an inclusive living layouts from a latent space that learned only from plans that follow the single biopolitical scheme of the last century, namely, single-family homes? Urbanistically, how can a model propose carbon-negative features from cities that are not? Latent spaces represent interpolations between existing data but do not extrapolate into new categories.

In his lecture Creativity and AI, 45 Dennis Hassabis framed the incapacity of machine learning models to overcome categorial differences as the main step toward an AI model that can be defined as creative. According to Hassabis, models can interpolate and at best, extrapolate from data, but it is an open question if models will ever learn to invent. Inventing a new category would be for Hassabis an act that only creativity can achieve. Aligned in the sequence interpolation, extrapolation, and creativity, a creative act would not only jump from one category to the next but also invent its category or even different domain. When experiencing the architectural proposals generated with LLI models in the last months most authors and respondents would precisely be astounded by the creative outcome with unseen popularity for architectural work. 46 Who ever saw before houses made of clouds, 47 or feathers? 48 Of course, not the LLI models act creatively but the people who use them. With ease and without additional tools than a cellphone, architects collaborate and elevate AI-generated work to creative results. Therefore, the architects’ creativity crucially augments the affordances of LLI models and elevates them to a synthetic architecture that is nonexistent in the data sets the models learned from.

It turns out that the initial text input, or prompt-crafting, is less crucial than the selection of variations and their consecutive iteration based on compositional expertise, 49 their interpretation based on an environmental agenda, 50 or mapping of cultural injustice. 51 In all cases, architects use LLI models creatively while intentionally articulating their architectural position, innovation, and critical practice. A previous generation of computational architects would not have considered design intention possible without developing their algorithms and tools. 52 Through a popularity ranking of social media posts with AI artwork typical hashtags, one can observe that people with a background in architecture design nearly all high-ranked architecture proposals. Also, in most architects’ oeuvre, the generated content aligns with previous work in a way that it continues, enhances, or complements but rarely breaks with the designer’s practice. Therefore, one can argue that architecture as a profession, history, and practice matters. Without the background, skills, and experience of the individuals who generated images, the work would not have been innovative. Furthermore, although these models learned from data from questionable sources, or images of copyright-protected artworks, the models can only interpolate between existing images. It needs not only collaboration but also the expertise of a person to see new categorical differences to draw with LLI models in innovative ways. Very intentionally pulled from an endless sea of variabilities, generated artwork is not generic content of an AI model but authored artwork (Figure 5).

Synthetic architecture for advocating architects

Architects can now use LLI models to participate in anything made of words with anything but words. It was only recently that architecture design was considered a feasible medium to research. Yet, paradoxically, the most pressing issues of our time relate to the built environment. We rarely know how we could arrange dense, while rich environments. We rarely know how to integrate circular harvesting activities into buildings. We rarely know what their economic or social-cultural implications are. With LLI models, architects can contribute to issues that were previously too complex to address by generating a synthetic architecture, synthetic in multiple senses. First, the proposals are synthesized by fusing various inputs, like styles, materials, and spatial features. Second, they are synthesizing latent spaces comparable to previous notions of typology. Third, due to their universal applicability, LLI models allow to synthesize with and include non-standard and non-building, data into an architectural space. Fourth, they are synthetic, and nonexistent in the data sets of the models.

However, current LLI models do not generate coherent building ensembles, and they can only offer an esthetic experience of an AI infused design practice. The possibility of large-scale models generating a building that incorporates multiple mediums, such as text and image, remains an open research question. Above all, the models inherently lack data from the medium of which buildings are made, the real physics. This limitation arises from a philosophical view of embodiment that posits intelligence contingent upon existence in the real world and contact with physical objects. 53

Yet, large language models have exhibited commonsense physics, given the vast amount of text describing physical relationships. The computational researcher Blaise Aguera y Arcas has shown that these models are capable of drawing causal inferences between different languages and media. Arcas argues that large-scale language models demonstrate that language comprehension and intelligence “can be dissociated from all the embodied and emotional characteristics.” 54 As today’s chatbots like ChatGPT can write in various languages and even generate CAD scripts, it may be possible to use large-scale models to design buildings holistically, provided the necessary data is available (Figure 6).

In the recent past, synthetic data has played a crucial role in training deep learning models. 55 The typical workflow placed three-dimensional objects within a simulation environment to generate images of unregistered objects in multiple contexts. 56 Architects now have the opportunity to extend the concept of synthetic data by generating unseen futures. Adapting McGough’s general list of advantages of synthetic data, 57 synthetic architectures could help generate data by defining latent spaces at negligible resources, costs, and time. One can not only generate new buildings but test those as building typologies within an invite variability and synthetic contextual data up to its extreme to so-called “black swan” events that are rare but significant, like breaking structures or extreme weather conditions implicating climate change. The speed enables architects to build data-driven hypothesis-test design workflows. Analog to a sketch or diagram, the design hypothesis prospects a possible design space but additionally it can be tested in synthetic environments including non-building data into an architectural space. External to the design process, generated outcomes indifferent to reality communicate to broader audiences that allow to include external judgments, and convergent insights into future studies. Reciprocal learning between human and AI is extended to a wider audience opening the loop between designer and model (Figure 7).

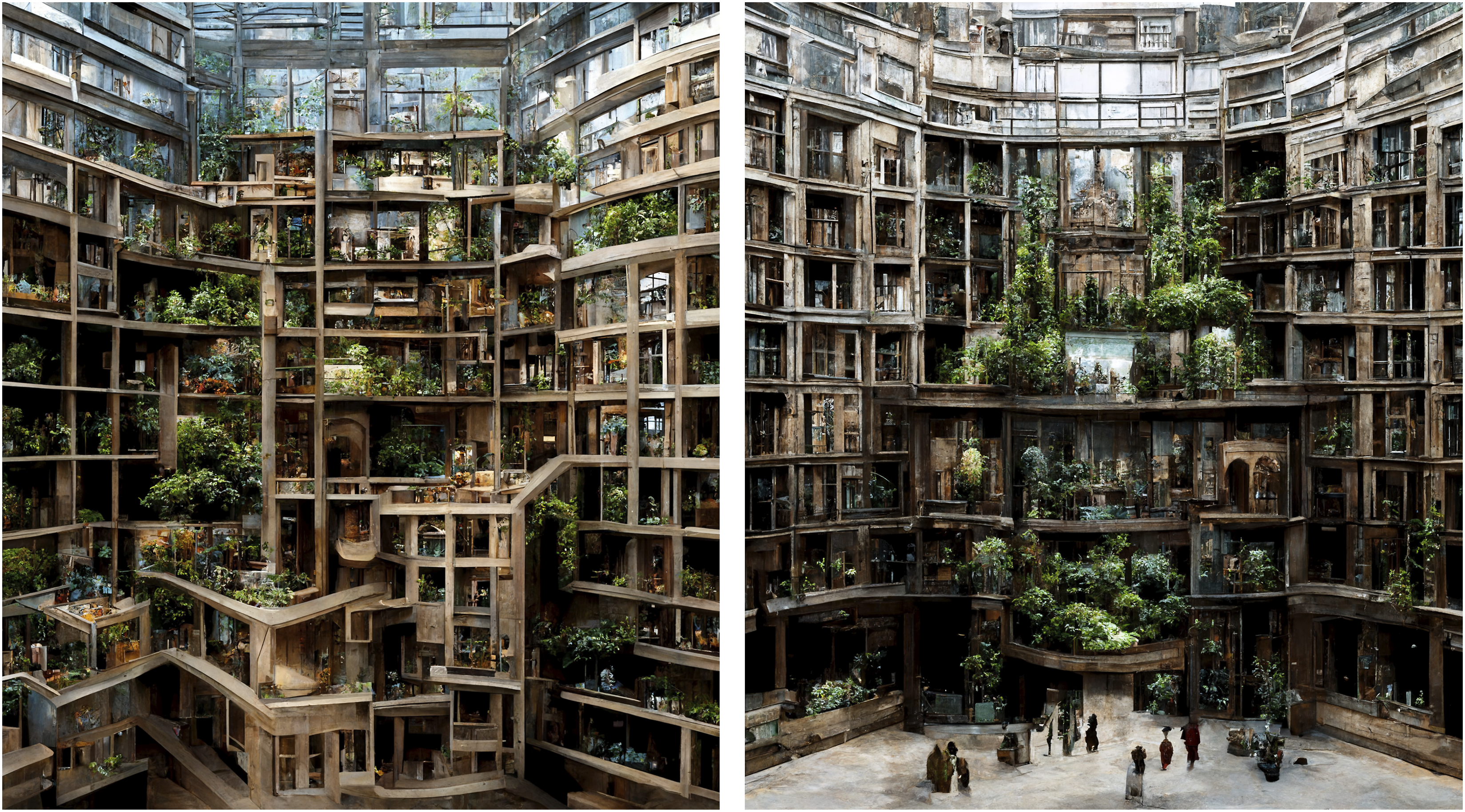

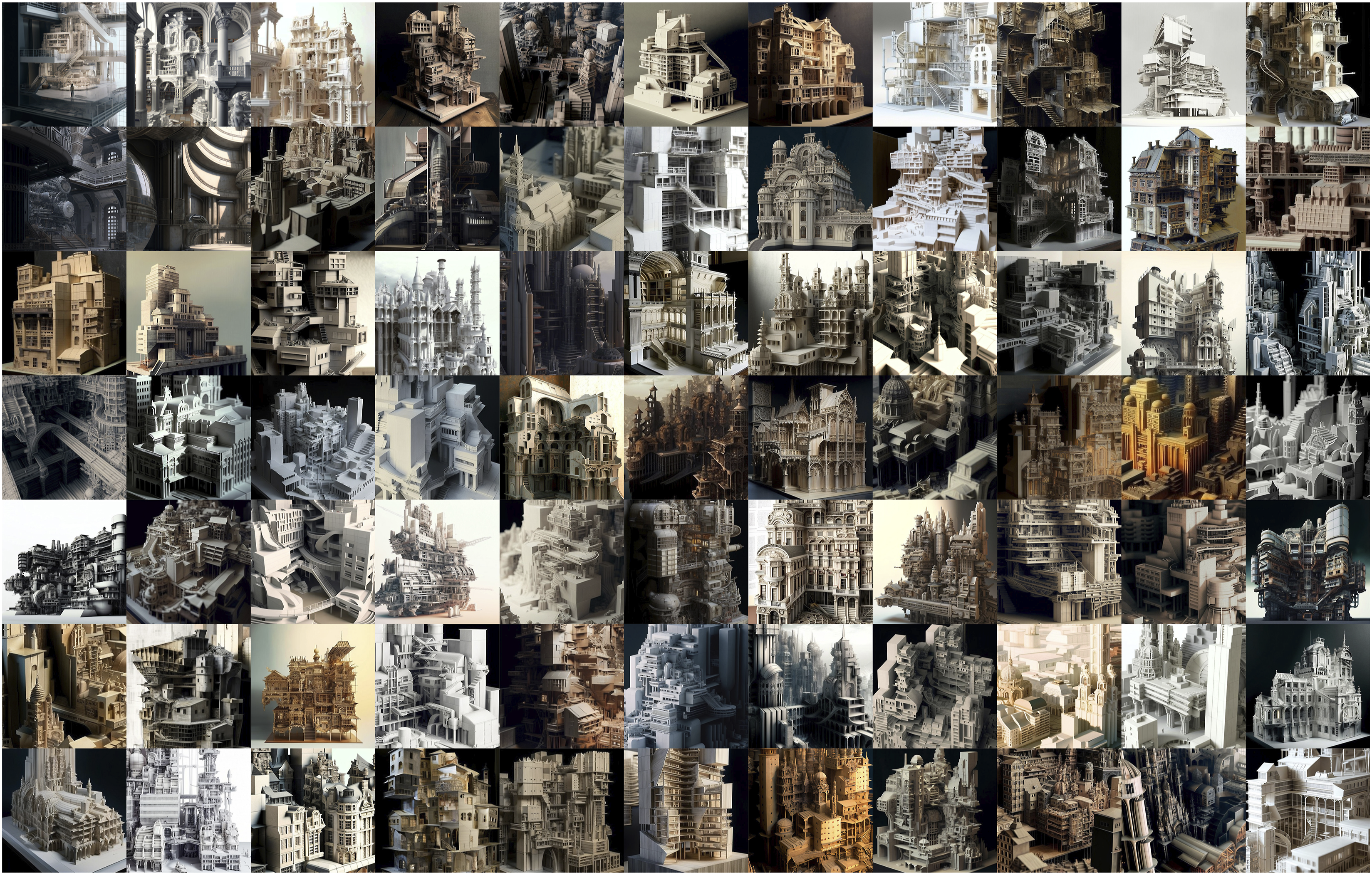

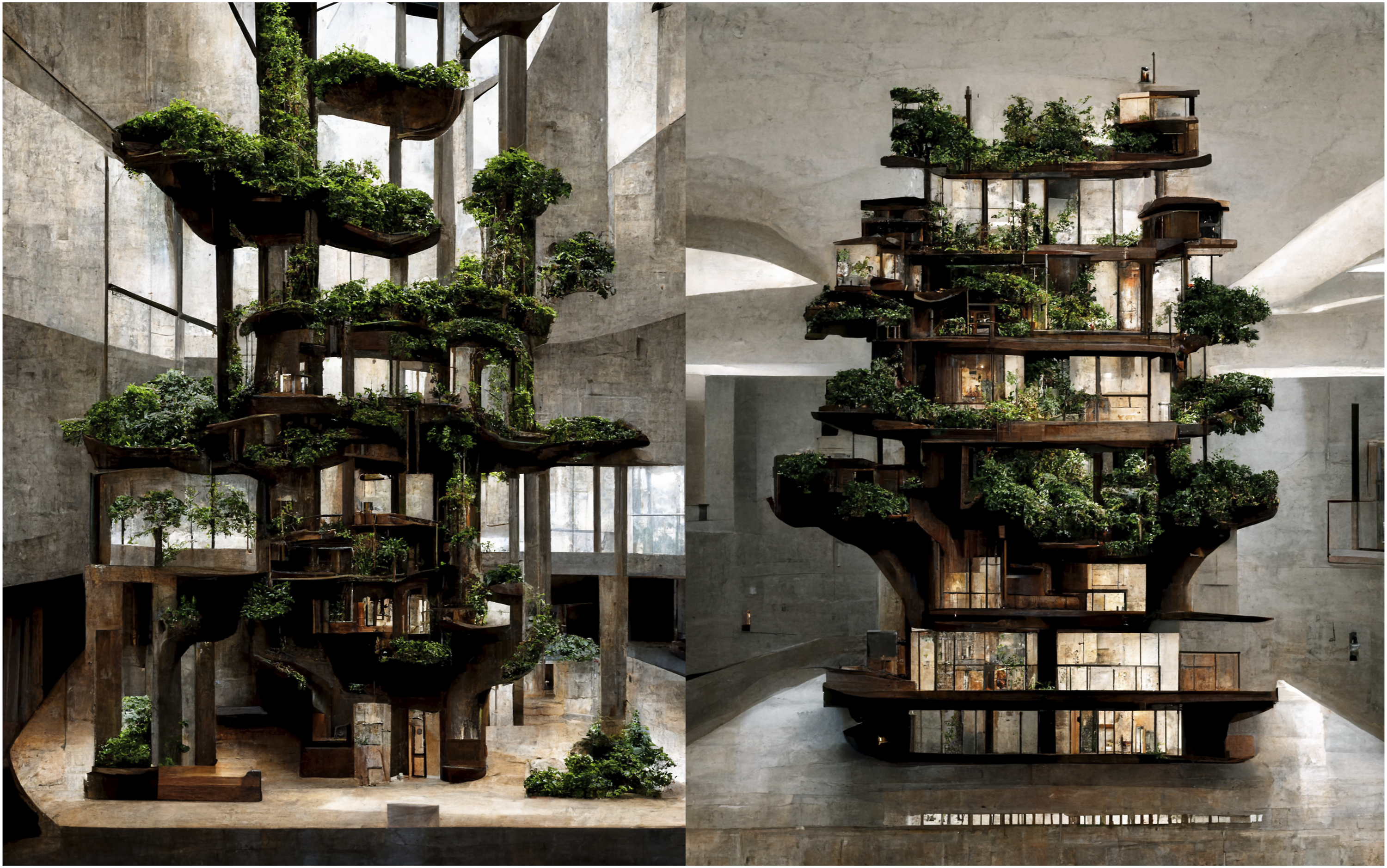

Designing with a model that has learned from anything makes it hard to exclude artifacts that are commonly included in contexts. Naturally, one of the new features of AI generated renderings became planted vegetation everywhere. When plants are new features in architectural representations at a time of environmental crisis, it says a lot of a field that defines itself through the expertise to notate the built environment. Large-scale language-image models trained on billions images created by millions of people advocate for an environmentally inclusive architecture, and critically elevated architects can help to advocate for what it takes to realize such a categorical different future (Figure 8). Selection of generated architecture models indifferent to reality and indifferent to analog model making. Diffusion model: Midjourney V3 test, October 3, 2022. Generated section models through urban blocks made of mass-timber remixed with large-scale vegetation. Calculated by processing time, each upscaled image costs 4 Cent or one GPU minute in Midjourney which equates to an hourly pay of $2.40. Diffusion Model: Midjourney V2, July 25, 2022. Urban interiors synthesized from London. Diffusion model: Midjourney V3, July 27, 2022. Typological remix with contextual considerations. Both images fuse urban farming with housing types situated in Bangkok. The left image proposes urban farming as a family business integrated into a one-family home, the right image shows a cooperative approach to filling the gap between two multi-family housing blocks. Diffusion model: Midjourney V3, August 29, 2022. Prompt: “Photo of a large architecture model. –seed 0815.” Selection of first-generation variants, diffusion model: Midjourney V4, November 15, 2022. Synthesizing treehouses, images from a study fusing trees into residential urban living typologies. Diffusion model: Midjourney V3, August 3, 2022. Designing cities with cities, from top left to right bottom: Singapore, Seoul, Bangkok, Barcelona, Barcelona, New York, Tokyo, Sao Paolo, Sanaa, Los Angeles, Rome, Bangkok, Hong Kong, and Vienna. Diffusion model: Midjourney V3, July - September 2022. Architecture, foresting architecture, generating a literally synthetic architecture built from forests, with forests, by forests. Diffusion model: Midjourney V3, September 4, 2022.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.