Abstract

This article describes the implementation of a digital case exploration tool and its use in teacher education. The cases were constructed according to empirically based psychological theories and represent school-related scenarios in which students can choose among options for action. After students select an action, its psychological consequences are presented in text or video, including theoretical explanations of how and why the selected action would lead to these consequences. We present first quasi-experimental evaluation data indicating the tool's usefulness regarding learning outcomes, students’ active participation, and motivation.

Prospective schoolteachers expect that psychological content taught at university is not only relevant for school settings but also “ready to use” in practice. However, teaching psychology at university often focuses on understanding well-established theories and aims at overcoming everyday misconceptions of psychological issues (e.g., Menz et al., 2021). These often diverging demands can be reconciled by letting students explore cases situated in the school context and referring to empirically based theories (Zumbach & Mandl, 2008).

The purpose of this report is to (a) describe a new digital case exploration tool we developed for teacher education programs and (b) present results of a first evaluation.

Concept of the Digital Case Exploration Tool

Our case exploration tool is inspired by the concept of case-based learning (CBL). CBL is a student-centered teaching/learning approach that requires students to actively participate in analyzing and discussing cases “presented in a context students are likely to encounter in practice” (Nicklen et al., 2016, p. 195). Recent meta-analyses show that CBL improves psychology learning (e.g., F. Wu et al., 2023) and learning motivation (Wijnia et al., 2024). Whereas CBL is often applied in medical or nursing psychology (F. Wu et al., 2023), it has also been recommended for teacher education (e.g., Baier et al., 2021; Shulman, 1992; Zumbach & Mandl, 2008). More specifically, our case exploration tool is characterized by the following features:

Psychological contents: The main purpose of this tool is to foster student teachers’ knowledge about learning and motivation, especially about causal, hidden, and long-term effects of teacher behavior, and their ability to apply this knowledge in school contexts. The tool comprises 12 cases using written text, audio, picture, and video components. The cases address operant conditioning, retrieval practice, reference norms in the assessment of academic performance, the self-reference effect, Mayer's (2022) principles for the design of learning materials (personalization, pretraining, coherence, and spatial contiguity principles), learning from behavioral models, causal attribution, self-regulation, context-dependent memory, and distributed versus massed learning.

School-related cases: First, a situation at school is described. For example, a student with specific abilities, goals, academic experience, etc., has failed a test. Second, several options for intervening in this situation are presented. For example, the learner is asked to take the role of the teacher and choose feedback for the student from a list of different feedback options. Third, the potential consequences of the chosen option are described on the basis of experiments and theories with a solid empirical basis. For example, the most probable effects of a specific feedback option on the student's emotion, motivation, and further learning activities are explained.

Active exploration: Learning from the cases requires active exploration by learners. After the initial situation has been presented, the learner is asked to choose among different actions that could be taken in this situation. In the previous example, the learner reads different types of feedback that could be given and chooses one of them. That is, learners do not receive any further information until they have considered different interventions and actively opted for one action. Thus, students can try out and explore one or more of these options and receive immediate feedback in the form of the probable consequences of the chosen action. This should help learners to (a) familiarize themselves with authentic school-related situations from a psychological perspective, (b) decide how to intervene, (c) learn about the potential consequences of the chosen actions, and (d) understand why these consequences emerge.

Technical implementation: All components of a case (text, pictures, audio, and video sequences) are digitally represented on the university's Moodle-based learning platform. Case representations make use of H5P elements (from the H5P Group, e.g., Branching Scenario, Drag the Words, Course Presentation, and Essay). Students can access the case exploration tool at any time and as often as they wish; no supervision is required. Therefore, the tool allows students to apply self-regulated, evidence-based learning strategies such as distributed learning (e.g., Bahrick, 1979; Cepeda et al., 2006), self-testing (e.g., Roediger & Karpicke, 2006; Schwieren et al., 2017), or successive relearning (e.g., Rawson et al., 2013). No user data is stored.

Implementation in the curriculum: Psychological case explorations are part of the teacher education programs at the University of Münster, and the case exploration tool is used in seminars on educational psychology. The tool can be used within seminars (e.g., for applying previously discussed theories of learning and motivation to school-related practice) or out of class (e.g., for individual learning). Digital case exploration is particularly suitable for learning about long-term psychological processes. The testing effect, for example, is a robust finding demonstrating that taking tests during learning phases facilitates later long-term memory retrieval (e.g., Roediger & Karpicke, 2006; Schwieren et al., 2017). That is, the benefit of testing typically becomes apparent only after a considerable delay. Similarly, the positive learning outcomes of distributed learning usually emerge only after longer periods of time (Bahrick, 1979). Therefore, several effects on learning and motivation cannot be demonstrated or experienced within a single seminar session. In the digital case exploration tool, however, longer periods can be simulated by introducing a leap in time (e.g., “a few days later”) when describing the consequences of an action.
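The case structure described above (a situation, several options, and a theory-based consequence per option, optionally introduced by a time leap) can be sketched as a small data model. This is a hypothetical illustration in Python, not the H5P Branching Scenario format used in the actual tool; the class and field names (`Case`, `Consequence`, `time_leap`) are illustrative assumptions, and the texts are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Consequence:
    """Probable consequence of one option (hypothetical data model)."""
    text: str             # what happens to the student
    explanation: str      # theory-based explanation of how and why it happens
    time_leap: str = ""   # optional simulated delay, e.g. "a few days later"


@dataclass
class Case:
    """A school situation plus the options a learner can choose from."""
    situation: str
    options: dict = field(default_factory=dict)  # option label -> Consequence

    def choose(self, label: str) -> str:
        """Return the text shown only after the learner has committed to an option."""
        consequence = self.options[label]
        prefix = f"{consequence.time_leap.capitalize()}: " if consequence.time_leap else ""
        return f"{prefix}{consequence.text} Why? {consequence.explanation}"


# Usage with placeholder texts (the real cases are authored in H5P).
case = Case(
    situation="A student has failed a test.",
    options={
        "Feedback A": Consequence("[probable reaction]", "[theoretical explanation]",
                                  time_leap="a few days later"),
        "Feedback B": Consequence("[probable reaction]", "[theoretical explanation]"),
    },
)
print(case.choose("Feedback A"))
# A few days later: [probable reaction] Why? [theoretical explanation]
```

Keeping the consequence hidden behind `choose` mirrors the active-exploration feature: learners see the outcome and its explanation only after opting for an action.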

Evaluation of Using the Digital Case Exploration Tool

We evaluated the tool in the summer semester of 2022 by comparing learning outcomes and motivation in two educational psychology seminars in the teacher education program of the University of Münster: one seminar used the digital case exploration tool, the other did not. We expected higher learning outcomes and motivation in the seminar working with the case exploration tool.

Method

Participants and Design

The seminar using the digital case exploration tool (experimental seminar) encompassed n = 27 students, the seminar not using it (control seminar) n = 26 students. Please note that the sample sizes for individual measures varied (see the Procedure section).

Both seminars consisted of 12 weekly sessions, covered the same content (learning and motivation in educational settings), and were taught by the same instructor. The case exploration tool did not offer additional content but provided the opportunity to actively explore cases. In the experimental seminar, all cases but one were explored online out of class, and questions and comments on the cases were discussed in class. In the control seminar, the corresponding time was used for other activities on the same content as in the experimental seminar, such as clarifying questions, discussing more detailed explanations, extended discussions, or group work. Measures of learning outcome (posttest) and motivation (pre- and posttest) served as dependent variables. Prior knowledge was measured as a control variable. For exploratory purposes, results of the faculty's routine teaching evaluation were compared between the experimental and control seminars.

Measures

Prior knowledge was measured with eight open-answer questions requiring short answers addressing technical terms. For each item up to 1 point was awarded. Two independent raters evaluated each answer and agreed on 94–100% of their ratings. Ratings were averaged to calculate the percentage of achieved points.
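The two scoring steps for the prior-knowledge test (rater agreement, then averaging into a percentage) can be sketched as follows. This is an illustrative Python sketch with hypothetical function names; it assumes that agreement means both raters assigned exactly the same score and that item scores lie between 0 and 1. The article does not state over which set of answers agreement was computed, so `percent_agreement` simply takes any list of answers (e.g., all students' answers to one item).

```python
def percent_agreement(rater_a, rater_b):
    """Percentage of answers to which both raters assigned exactly the same score."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must score the same, non-empty set of answers")
    same = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100 * same / len(rater_a)


def prior_knowledge_percent(rater_a, rater_b, max_points=1.0):
    """Average both raters' item scores for one student; return % of attainable points."""
    averaged = [(a + b) / 2 for a, b in zip(rater_a, rater_b)]
    return 100 * sum(averaged) / (max_points * len(averaged))


# Hypothetical scores for the eight items answered by one student.
rater_a = [1, 1, 0, 0.5, 1, 0, 1, 1]
rater_b = [1, 1, 0, 0.5, 1, 0.5, 1, 1]
print(percent_agreement(rater_a, rater_b))        # 87.5
print(prior_knowledge_percent(rater_a, rater_b))  # 71.875
```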

To fulfill the study requirements, participants took an obligatory written test. Performance on its 16 closed-answer and four open-answer items was compared between the experimental and control seminars. As closed-answer items, confidence-weighted true-false (CWTF) items were used (Dutke & Barenberg, 2015). Participants were presented with one-sentence statements and indicated whether (a) they were sure that the statement was incorrect, (b) they thought that the statement was incorrect but were unsure, (c) they thought that the statement was correct but were unsure, or (d) they were sure that the statement was correct. One point was awarded for a correct and confident response, 0.75 points for a correct but unconfident response, 0.25 points for an incorrect and unconfident response, and 0 points for an incorrect and confident response or no response. As the closed-answer measure, we calculated the percentage of points achieved per student.
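The CWTF scoring rule maps each combination of correctness and confidence to a fixed number of points. A minimal Python sketch of that rule, assuming the hypothetical coding of responses as the letters "a" to "d" (options (a) to (d) above) and of a missing response as `None`:

```python
# Points for each (correct?, confident?) combination, following the rule above.
CWTF_POINTS = {
    (True, True): 1.0,     # correct and confident
    (True, False): 0.75,   # correct but unconfident
    (False, False): 0.25,  # incorrect and unconfident
    (False, True): 0.0,    # incorrect and confident
}

# Hypothetical coding of options (a)-(d) as (judged_true, confident).
RESPONSES = {
    "a": (False, True),   # sure the statement is incorrect
    "b": (False, False),  # thinks it is incorrect, unsure
    "c": (True, False),   # thinks it is correct, unsure
    "d": (True, True),    # sure the statement is correct
}


def score_item(response, statement_is_true):
    """Points for one CWTF item; None (no response) earns 0 points."""
    if response is None:
        return 0.0
    judged_true, confident = RESPONSES[response]
    correct = judged_true == statement_is_true
    return CWTF_POINTS[(correct, confident)]


def cwtf_percent(responses, keys):
    """Percentage of attainable points (1 per item) achieved by one student."""
    points = sum(score_item(r, k) for r, k in zip(responses, keys))
    return 100 * points / len(keys)


print(cwtf_percent(["d", "b", "c", None], [True, False, False, True]))  # 50.0
```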

The four open-answer items included three case descriptions (not used in the digital case exploration tool) that students were asked to interpret with reference to empirical evidence and using technical terms. A total of 14 points could be awarded. The percentage of points achieved was used as the open-answer measure.

In addition to the obligatory test, to evaluate learning outcomes in a more differentiated way, we used an additional paper-and-pencil test comprising another set of 24 CWTF items. Twelve items referred to topics covered in the digital case exploration tool, and the other 12 to topics not covered in it. For both kinds of items, we calculated the percentage of correct answers. Further, we calculated three metacognitive indices proposed by Barenberg and Dutke (2019): (a) the absolute accuracy of confidence judgments (ranging between 0 and 1), indicating the precision of the confidence judgments, with higher values indicating higher precision; (b) discrimination (ranging between 0 and 1), indicating how reliably participants discriminated, based on their confidence judgments, between correctly and incorrectly answered items, with higher values indicating better discrimination; and (c) the bias of the confidence ratings (ranging between −1 and +1), with positive values indicating overestimation and negative values indicating underestimation of memory performance (see the Appendix for formulas).
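The Appendix formulas are not reproduced here, so the following Python sketch does not claim to implement Barenberg and Dutke's (2019) indices. It assumes common operationalizations for binary CWTF confidence (sure = 1, unsure = 0): bias as mean confidence minus proportion correct (range −1 to +1), and absolute accuracy as 1 minus the mean squared deviation between confidence and correctness (range 0 to 1, higher = more precise). Discrimination is omitted because its exact definition is given only in the Appendix.

```python
def metacognitive_indices(confident, correct):
    """Illustrative bias and absolute-accuracy indices for binary confidence judgments.

    ASSUMPTION: confidence coded 1 (sure) / 0 (unsure); common operationalizations,
    not necessarily the Appendix formulas of Barenberg and Dutke (2019).
    """
    if len(confident) != len(correct) or not correct:
        raise ValueError("need two equally long, non-empty lists")
    n = len(correct)
    c = [1.0 if x else 0.0 for x in confident]
    p = [1.0 if x else 0.0 for x in correct]
    bias = sum(c) / n - sum(p) / n                             # > 0: overestimation
    msd = sum((ci - pi) ** 2 for ci, pi in zip(c, p)) / n      # 0 = perfect match
    return {"bias": bias, "accuracy": 1 - msd}                 # accuracy in [0, 1]


# A student who is sure on all four items but answers only two correctly:
print(metacognitive_indices([True] * 4, [True, True, False, False]))
# {'bias': 0.5, 'accuracy': 0.5}
```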

To further test the students’ ability to apply their knowledge, the additional test also included single-choice application items (three response options each): 10 related to topics covered by the case exploration tool and nine related to other content covered in the seminar. For both types of items, the percentage of correct responses was calculated.

To measure motivation, we assessed amotivation, identified motivation, and intrinsic motivation each with three translated and adapted items from the Work Tasks Motivation Scale for Teachers (Fernet et al., 2008) and self-efficacy with 10 items adapted from the Scale for Teacher Self-Efficacy (Pfitzner-Eden, 2016).

Further, we obtained data from the routine teaching evaluation, in which all courses of the Faculty of Psychology at the University of Münster were evaluated (https://www.uni-muenster.de/imperia/md/content/PsyEval/instrumente/mfe_sr.pdf). We used the means of five scales (lecturer and didactics, demand, participation, materials, and subjective learning success) to compare experimental and control seminar.

Procedure

At the beginning of the semester, students were assured that providing data (beyond the obligatory test) was voluntary and anonymous and would not influence the evaluation of their performance in the obligatory test. Participants provided informed consent. The pretest measures (prior knowledge, amotivation, identified and intrinsic motivation) were obtained online immediately after the start of the semester. The posttest measures of motivation were assessed online at the end of the semester. The routine teaching evaluations took place a few weeks before the end of the semester. The posttest measures of learning were obtained in one of the last seminar sessions (additional test) or after the end of the semester (obligatory test). Students were informed that the additional test provided a good opportunity to prepare for the obligatory test. Because the pretest measures, the obligatory test, the additional test, the other posttest measures, and the teaching evaluations were separate assessments and not all students participated in each of them, sample sizes differed across measures. The specific sample sizes are detailed in the Results section.

Statistical Analysis

The mean values of prior knowledge and of all variables measured at the end of the semester were compared between the experimental and the control seminar with independent-group t-tests. As we expected positive effects of digital case exploration on learning, we performed one-tailed tests for the learning outcome variables assessed at the end of the semester. The motivation measures, assessed at the beginning and at the end of the semester, were subjected to 2 × 2 mixed ANOVAs with the factors seminar (experimental vs. control) and time (pre vs. post). We expected interactions demonstrating a more positive change from pre to post in the experimental than in the control seminar (one-tailed test).
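In a 2 × 2 mixed design, the seminar × time interaction has one numerator degree of freedom and is equivalent to an independent-group t-test on the pre–post change scores (F = t²), which is what makes a one-tailed interaction test meaningful. The following dependency-free Python sketch illustrates this equivalence under stated assumptions: equal group sizes, complete pre/post data, and hypothetical example scores. The function names are illustrative; p values would additionally require the t distribution (e.g., `scipy.stats.t.sf(t, df)` gives the one-tailed p).

```python
from statistics import mean


def pooled_t(x, y):
    """Student's t for two independent groups (pooled variance) and its df."""
    nx, ny = len(x), len(y)
    mx, my = mean(x), mean(y)
    ss = sum((v - mx) ** 2 for v in x) + sum((v - my) ** 2 for v in y)
    sp2 = ss / (nx + ny - 2)                                     # pooled variance
    t = (mx - my) / (sp2 * (1 / nx + 1 / ny)) ** 0.5
    return t, nx + ny - 2


def interaction_F(pre_a, post_a, pre_b, post_b):
    """Group x time interaction F of a 2 x 2 mixed ANOVA (equal group sizes n).

    Returns (F, df_error) with df_error = 2 * (n - 1); the numerator df is 1.
    """
    n = len(pre_a)
    if not all(len(s) == n for s in (post_a, pre_b, post_b)):
        raise ValueError("sketch assumes equal group sizes and complete pre/post data")
    groups = [(pre_a, post_a), (pre_b, post_b)]
    cell = [[mean(pre), mean(post)] for pre, post in groups]    # cell means M_gt
    grp = [mean(row) for row in cell]                           # group means M_g
    tm = [(cell[0][t] + cell[1][t]) / 2 for t in range(2)]      # time means M_t
    grand = mean(grp)                                           # grand mean M
    ss_gt = n * sum((cell[g][t] - grp[g] - tm[t] + grand) ** 2
                    for g in range(2) for t in range(2))
    ss_err = 0.0                                                # time x subjects within groups
    for g, (pre, post) in enumerate(groups):
        for y0, y1 in zip(pre, post):
            subj = (y0 + y1) / 2                                # subject mean
            for t, y in enumerate((y0, y1)):
                ss_err += (y - subj - cell[g][t] + grp[g]) ** 2
    df_err = 2 * (n - 1)
    return ss_gt / (ss_err / df_err), df_err


# Hypothetical amotivation scores; prediction: larger increase in the control seminar.
pre_ctl, post_ctl = [3, 2, 4, 3, 5], [4, 4, 5, 3, 6]
pre_exp, post_exp = [3, 4, 2, 5, 4], [3, 3, 2, 4, 4]
change_ctl = [b - a for a, b in zip(pre_ctl, post_ctl)]
change_exp = [b - a for a, b in zip(pre_exp, post_exp)]
t, df = pooled_t(change_ctl, change_exp)
F, df_err = interaction_F(pre_ctl, post_ctl, pre_exp, post_exp)
print(t, t ** 2, F, df, df_err)   # F equals t squared; both dfs are 8
```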

Results

A comparison of experimental (n = 23 for this measure) and control seminar (n = 25) showed no difference in prior knowledge, t(47) = 0.60, p = .552.

Learning Outcomes

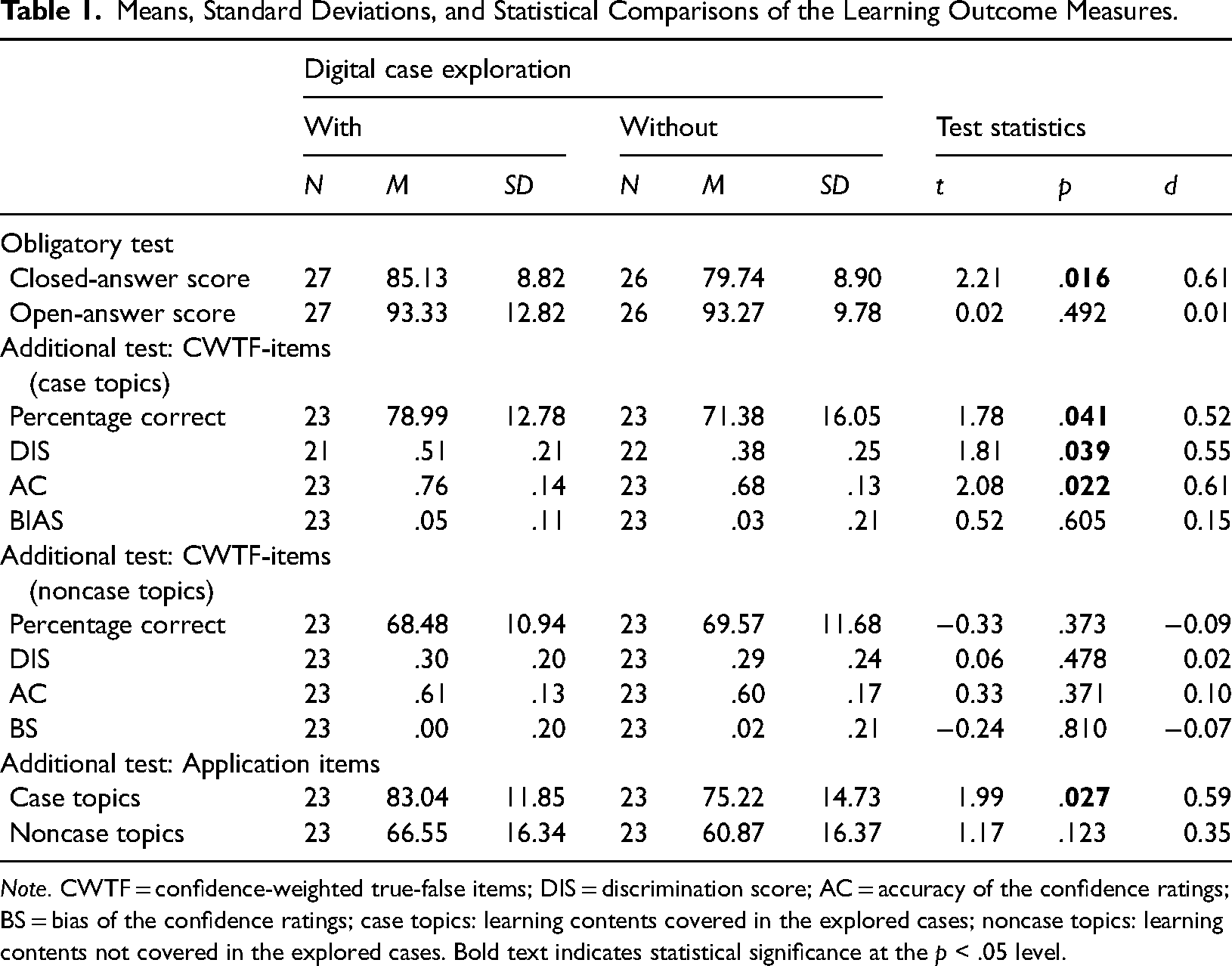

Means and standard deviations of the learning outcome measures and results of the statistical tests are indicated in Table 1.

Table 1. Means, Standard Deviations, and Statistical Comparisons of the Learning Outcome Measures.

Note. CWTF = confidence-weighted true-false items; DIS = discrimination score; AC = accuracy of the confidence ratings; BS = bias of the confidence ratings; case topics: learning contents covered in the explored cases; noncase topics: learning contents not covered in the explored cases. Bold text indicates statistical significance at the p < .05 level.

The closed-answer score of the obligatory end-of-semester test was significantly higher in the experimental than in the control seminar. The open-answer score of the obligatory test exceeded 90% in both seminars and did not differ between them.

In the additional test, performance on the CWTF items covering content also addressed in the case exploration tool was significantly higher in the seminar with case exploration than in the control seminar. The discrimination score indicated better discrimination between correctly and incorrectly answered items in the experimental than in the control seminar, and the absolute accuracy of the confidence judgments was significantly higher in the experimental than in the control seminar. The same measures derived from the CWTF items on topics not covered in the case exploration tool did not differ significantly between the experimental and the control seminar.

The same pattern of results emerged for the percentage of correct responses on the single-choice application items in the additional test: Performance on items covering topics of the explored cases was significantly higher in the experimental seminar than in the control seminar, whereas no significant difference was found for application items on topics not covered in the explored cases.

Motivation

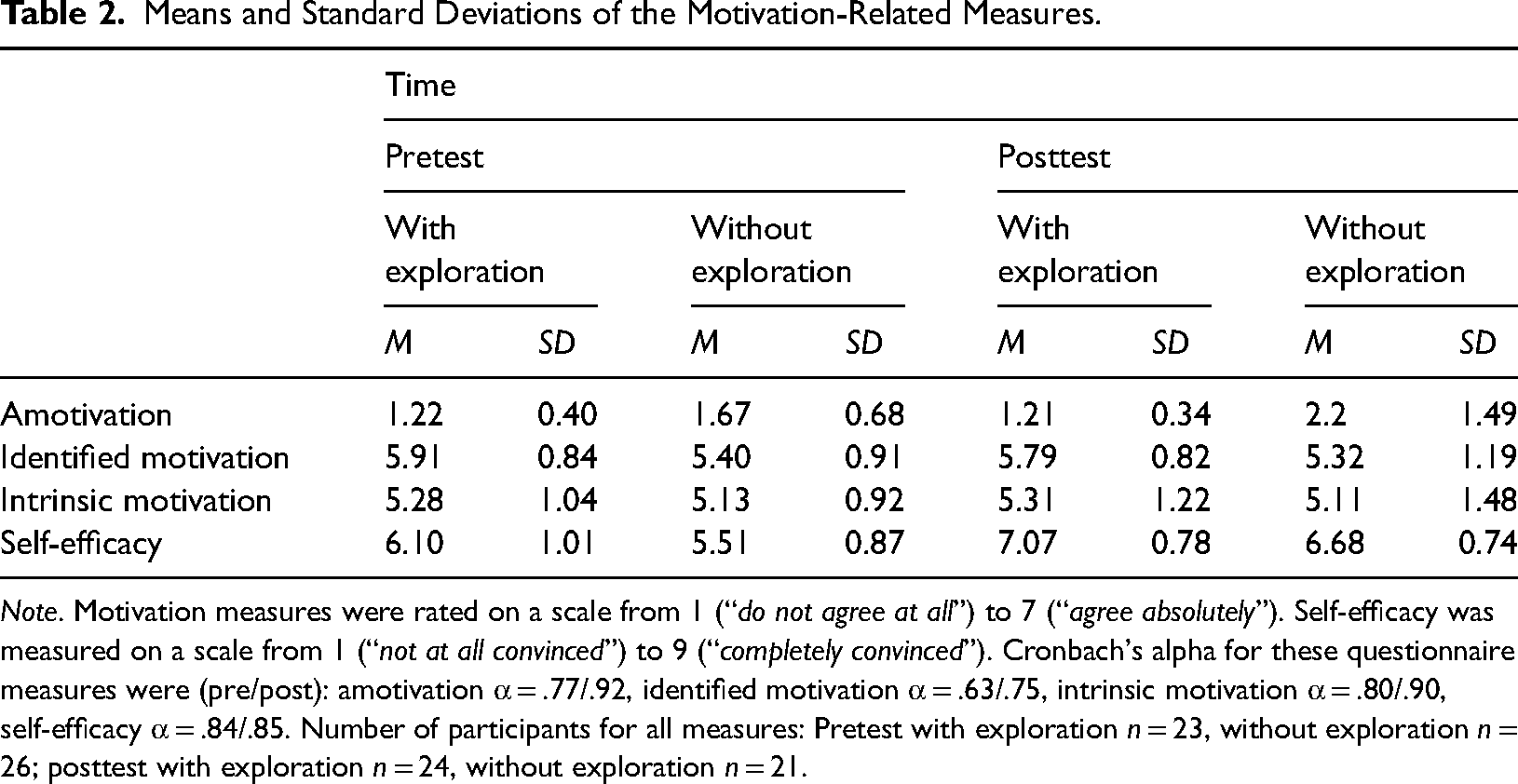

Means and standard deviations of the motivation-related measures are shown in Table 2. For amotivation, the ANOVA (based on n = 20 in each group, including only participants who took part in both the pre- and posttests) yielded a main effect of seminar, with more amotivation in the control seminar than in the experimental seminar, F(1, 38) = 11.46, p = .002, ηp² = .232. The interaction did not reach two-tailed significance, F(1, 38) = 3.30, p = .077, ηp² = .080. However, because this interaction has a single numerator degree of freedom, it is equivalent to an independent-group t-test on the pre–post change scores, and given our directional hypothesis it can be considered significant in a one-tailed test (e.g., Gurbuz et al., 2023). Paired t-tests revealed that amotivation in the control seminar significantly increased from pre to post, t(19) = 1.82, p = .043, d = 0.41, whereas amotivation in the experimental seminar did not change.
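The one-tailed decision for the amotivation interaction can be checked by hand. With one numerator degree of freedom, F equals the square of the corresponding t value, and for an effect in the predicted direction the one-tailed p value is half the two-tailed one (values taken from the interaction reported above; because the reported p is rounded, the last digit is approximate):

$$
t(38) = \sqrt{F(1, 38)} = \sqrt{3.30} \approx 1.82,
\qquad
p_{\text{one-tailed}} = \frac{p_{\text{two-tailed}}}{2} = \frac{.077}{2} \approx .039 < .05
$$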

Table 2. Means and Standard Deviations of the Motivation-Related Measures.

Note. Motivation measures were rated on a scale from 1 (“do not agree at all”) to 7 (“agree absolutely”). Self-efficacy was measured on a scale from 1 (“not at all convinced”) to 9 (“completely convinced”). Cronbach's alphas for these questionnaire measures (pre/post) were: amotivation α = .77/.92, identified motivation α = .63/.75, intrinsic motivation α = .80/.90, self-efficacy α = .84/.85. Number of participants for all measures: pretest with exploration n = 23, without exploration n = 26; posttest with exploration n = 24, without exploration n = 21.
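The internal consistencies in the table notes are Cronbach's alpha values, α = k/(k − 1) · (1 − Σ item variances / variance of the sum score). A minimal Python sketch of this standard formula, assuming an input layout of one list of respondent scores per item (the layout and the example data are hypothetical):

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha; items = one list of scores per item, one score per respondent."""
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    totals = [sum(scores) for scores in zip(*items)]   # each respondent's sum score
    item_var = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_var / variance(totals))


# Hypothetical two-item scale answered by four respondents.
print(round(cronbach_alpha([[1, 2, 3, 4], [2, 2, 4, 4]]), 3))  # 0.941
```

Sample variances are used for both the items and the sum score; because the same denominator appears in numerator and denominator of the ratio, using population variances instead would give the same alpha.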

Analyzing identified motivation yielded only main effects, but no interaction indicative of an intervention effect. For intrinsic motivation neither significant main effects nor a significant interaction was found.

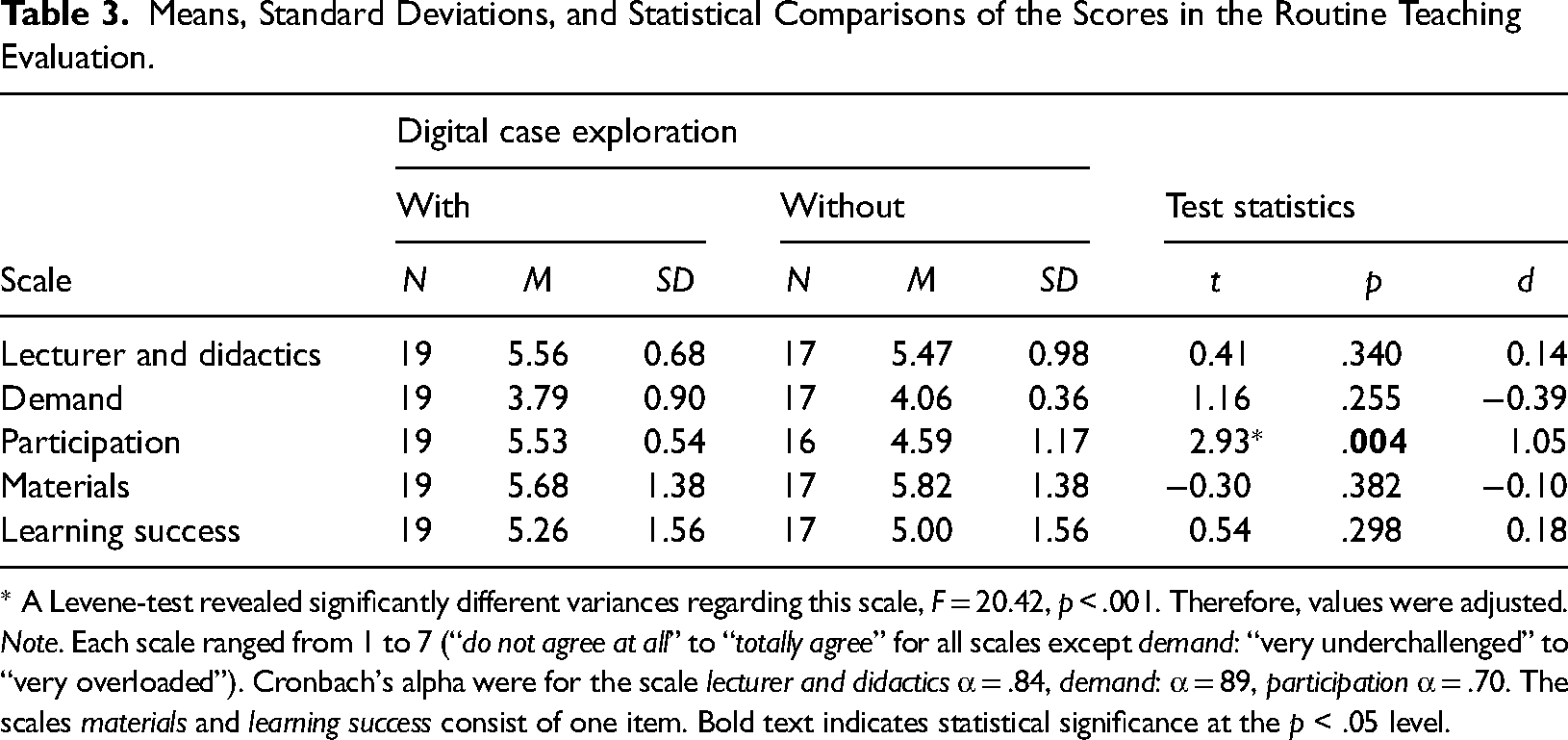

Means and standard deviations of the routine teaching evaluation scales are shown in Table 3. A significant difference appeared on only one scale: Students in the experimental seminar reported more active participation than students in the control seminar.

Table 3. Means, Standard Deviations, and Statistical Comparisons of the Scores in the Routine Teaching Evaluation.

* Levene's test revealed significantly different variances for this scale, F = 20.42, p < .001. Therefore, the values for this scale were adjusted for unequal variances.

Note. Each scale ranged from 1 to 7 (“do not agree at all” to “totally agree” for all scales except demand: “very underchallenged” to “very overloaded”). Cronbach's alphas were .84 for lecturer and didactics, .89 for demand, and .70 for participation. The scales materials and learning success each consist of a single item. Bold text indicates statistical significance at the p < .05 level.
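The table footnote does not specify how the values were adjusted; a common choice after a significant Levene test is Welch's t-test, which drops the equal-variance assumption and uses Welch–Satterthwaite degrees of freedom. The following dependency-free Python sketch assumes that choice (the source does not confirm it) and shows the classic mean-centred Levene statistic alongside Welch's t. In practice, `scipy.stats.levene` and `scipy.stats.ttest_ind(..., equal_var=False)` provide these tests with p values; note that `scipy.stats.levene` centres on the median by default, so `center='mean'` is needed to match the statistic sketched here.

```python
from statistics import mean, variance


def levene_F(x, y):
    """Levene's statistic (mean-centred) for two groups; df = (1, len(x) + len(y) - 2)."""
    mx, my = mean(x), mean(y)
    zx = [abs(v - mx) for v in x]          # absolute deviations from the group mean
    zy = [abs(v - my) for v in y]
    zx_bar, zy_bar, z_bar = mean(zx), mean(zy), mean(zx + zy)
    between = len(zx) * (zx_bar - z_bar) ** 2 + len(zy) * (zy_bar - z_bar) ** 2
    within = sum((z - zx_bar) ** 2 for z in zx) + sum((z - zy_bar) ** 2 for z in zy)
    return (len(zx) + len(zy) - 2) * between / within   # (N - k) / (k - 1) with k = 2


def welch_t(x, y):
    """Welch's t and Welch-Satterthwaite df for two groups with unequal variances."""
    vx, vy = variance(x) / len(x), variance(y) / len(y)
    t = (mean(x) - mean(y)) / (vx + vy) ** 0.5
    df = (vx + vy) ** 2 / (vx ** 2 / (len(x) - 1) + vy ** 2 / (len(y) - 1))
    return t, df


# Hypothetical ratings: homogeneous in one group, widely spread in the other.
ratings_a = [5, 5, 6, 5, 6, 5]
ratings_b = [2, 7, 4, 6, 3, 7]
print(levene_F(ratings_a, ratings_b), welch_t(ratings_a, ratings_b))
```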

Discussion

Students benefitted from the digital case exploration in several respects and demonstrated higher learning outcomes than students in the control seminar. These benefits were reflected in the closed-answer items of the obligatory test (but not in the open-answer items) and, in the additional test, in both the CWTF items and the application items, but only when the items referred to content covered in the explored cases, not when they referred to content not covered by the case exploration tool. We conclude that the digital case exploration tool supported learning and the application of learned content to new cases. However, transfer seemed to be limited to new cases sharing topics with the explored cases.

Remarkably, the positive effect also emerged for metacognitive monitoring: On the basis of their confidence judgments, participants in the experimental seminar monitored their knowledge more accurately and discriminated more precisely between correctly and incorrectly answered items.

Whereas the case exploration tool had no effects on self-efficacy or on intrinsic and identified motivation, it seemed to counteract the increase in amotivation over the semester that was observed in the control seminar. In the routine teaching evaluations, students rated both seminars similarly positively but reported more active participation in the seminar using the case exploration tool than in the control seminar.

In summary, students benefitted from digital case exploration with regard to learning and applying knowledge, although transfer effects were limited. This highlights the potential of the case exploration tool for enhancing psychology education for future teachers.

Limitations and Future Perspectives

An important limitation is that we compared two intact seminars without random assignment of participants. Owing to the study regulations at our university, randomly assigning students to different groups was not feasible. Despite this disadvantage, we opted for a test in an authentic teaching setting before isolating specific aspects in more stringent experimental tests. However, the quasi-experimental procedure leaves room for various alternative explanations that need to be explored in future research. Moreover, the sample size was small, which may also explain the absence of effects on most motivation measures.

In this first evaluation, we did not investigate what precisely caused the effects. Planful exploration as well as trial and error might have contributed to the results. Alternatively, the additional case material and repetition in the experimental seminar might explain some results; these factors need to be controlled in future investigations.

To evaluate the present results, it would be informative to have data on the amount of exploration performed by the students. This might be an important intervening variable, as the opportunity for exploration does not guarantee that students make use of it. However, it was not possible to track individual exploration behavior with the H5P elements on the university's digital learning platform.

Because several main effects showed advantages for the experimental seminar at both pretest and posttest that were not moderated by time, we cannot rule out that effects were due to preexisting differences between the seminars, although we controlled for prior knowledge. In future studies, stricter control of individual differences is advisable. Further, future research may compare digital case exploration (which is user-friendly and allows the use of video) with analog case exploration (which is less elaborate).

With regard to further development of the tool, ways to increase immersion might be considered, such as virtual or augmented reality settings (B. Wu et al., 2020).

Overall, this first evaluation of the digital case exploration tool is promising enough to justify further effort in optimizing the tool and in developing and evaluating similar tools for other domains of psychology education.


Acknowledgments

Special thanks to Annelen Müller, Catharina Krüger, Jette Althaus, and Nele Spangenberg for video production and help with designing the cases.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the State of North Rhine-Westphalia (digiFellows).