Abstract

Psychological literacy refers to the ability of a psychology student to use psychological knowledge, rather than merely learn it, in the context of personal, social, and organizational issues. Embedding psychological literacy in assessment is a critical step in helping students develop this capacity. This report presents an innovative applied scenarios assignment for a social and developmental psychology module, designed to challenge students to make theoretical and evidence-based explanations or suggestions in relation to novel real-world situations. Across the scenarios, students are required to respond and adapt to a range of tasks and purposes and effectively communicate their knowledge to diverse audiences. Student evaluations (n = 142) of their experiences and of the competencies they perceived they had developed from working on the scenarios, compared to traditional essay assignments, were analyzed. Findings suggest students valued the authentic nature of the assessment and the challenges it presents. They recognized the unique skills they developed, including application and communication skills, and felt that they gained a better understanding of psychological content as a result. We hope this report will inspire readers to design similar assessment tasks that provide students with opportunities to practice, and thus develop, their psychological literacy.

The importance of psychological literacy, “the general capacity to adaptively and intentionally apply psychology to meet personal, professional and societal needs” (Cranney et al., 2012, p. iv), as a graduate attribute in psychology programs is now well recognized in the pedagogical literature (for reviews, see Cranney & Dunn, 2011; Hulme & Cranney, 2022) and increasingly in national standards for psychology teaching (e.g., in the UK, see QAA, 2019, and in the USA, see APA, 2013). Given the critical role of assessment for learning (Boud & Falchikov, 2007; Sambell et al., 2013), the need to design assessment tasks that give students the opportunity to practice and to develop their psychological literacy is central to students achieving this learning outcome (Dunn et al., 2011; Hulme, 2014; Trapp & Akhurst, 2011; Trapp et al., 2011).

With this strategy in mind, we carried out a review of assessment across the psychology curriculum in our school with the aim of diversifying our assessments beyond the traditional essay. The goal was to enable students to develop a broader range of academic and employability skills through assessments that challenge students to (a) apply their knowledge of psychology to real-world issues and (b) effectively communicate psychological knowledge to diverse audiences. These two key components of psychological literacy (Dunn et al., 2011; McGovern et al., 2010) are not typically acquired through traditional essays. We therefore sought to take an authentic assessment approach and design assessments that reflect real-world contexts, involve meaningful tasks that are relevant to everyday life and/or the workplace, and lead to outputs that can be viewed as worthwhile for third parties as well as the student and teacher (see Villarroel et al., 2018). This approach has been shown to promote students’ deep learning and motivation (e.g., Villarroel et al., 2018; 2019).

In this report, we focus on our innovative Applied Scenarios assessment, designed for a core Year 2 module (Social and Developmental Psychology), which affords students the opportunity to develop both application and communication skills. (For other, recently developed, creative assessments with a similar focus on psychological literacy, see Elcock & Jones, 2015; Pownall et al., 2021; Riser et al., 2020.) Here, we outline the assessment, the resources we developed for supporting students, and findings from an evaluation of students’ experiences of the assessment and the skills they perceived they had developed through it.

Applied Scenarios Assessment

The Applied Scenarios assessment requires students to provide theoretical and evidence-based explanations and suggestions in relation to specific real-world situations and problems. It aims to shape the way that students interact with the module content by encouraging them to make the links between theory and real-world applications. Psychology's relevance to society and how it can be used to address timely, global problems therefore takes a prominent role in the learning process.

Our use of scenarios is aligned with previous proposals for assessing psychological literacy through a variety of everyday complex scenarios that require students to apply their knowledge of psychology and their critical thinking to provide an informed and reasoned response (Bernstein, 2011; Halpern & Butler, 2011; McGovern et al., 2010). Across scenarios, there is a range of tasks and purposes that students need to respond and adapt to, involving a variety of formats and intended audiences. These include but are not limited to writing the text for a research-focused website for parents, explaining counterintuitive psychological phenomena to individuals from the general public, writing a pamphlet for teachers about how best to support children with a specific learning difficulty, and providing analysis for work or health organizations. To respond to the scenarios, students are encouraged to demonstrate and further develop higher-order skills including application, analysis, and evaluation (see Anderson et al., 2001).

A key aim was that the assessment would be meaningful for students, which is an essential feature of authentic assessment (Ashford-Rowe et al., 2014; Villarroel et al., 2018). The scenarios therefore simulate real situations where third parties stand to benefit from students’ knowledge. By requiring students to address their answers to different target audiences (including academics, teachers, parents, and organizations), we challenge them to tailor their communication appropriately, thus engaging this important aspect of psychological literacy (McGovern et al., 2010). Moreover, students are encouraged to be sensitive to the needs of the audience not only in terms of the language used but also in terms of the content provided. We restricted the word count to 750 words to challenge students to write concisely and select the most relevant and useful content for the target audience.

Semester 1 covers social content and Semester 2 developmental content, each delivered in ten two-hour lectures. At the end of each semester, students’ knowledge of the material is assessed via MCQ exams (worth 1/3 of the overall module grade). In addition, students are required each semester to choose two applied scenarios from a set of six as the coursework component of the assessment, which they submit at the end of each semester (worth 2/3 of the overall module grade). In the social scenarios, students are asked to use social psychological theory and research to explain, or offer evidence-based guidance relating to, social processes in a variety of settings such as informal groups, schools, and organizations. In the developmental scenarios, students are asked to consider how psychological research relating to developmental mechanisms and neurodiversity can guide childcare and educational practices or policy. Below are two examples of our applied scenarios, one for the social and one for the developmental semester of the module, respectively:

Providing two scenario assessment points (one per semester) gives students an opportunity to reflect on the markers’ feedback on their first pair of scenarios and use it to develop their skills when working on their second pair of scenarios.

We developed a range of resources to support students with this alternative form of assessment. Most of these resources are designed to be available to students at a time of their choosing throughout the module. These include a video overview of the assessment and its requirements; a marking rubric that shows students explicitly what the assessment criteria are and their respective weighting; annotated example answers; a workshop on communicating with nonacademic audiences, along with resources that demonstrate good practice examples of research summaries and blogs for parents and teachers written by academic researchers; an FAQ document; assessment reports from previous years summarizing general feedback points; a tutorial plan that is used by academic tutors to support their tutees’ preparation for this assessment; and ongoing online forum support from the teaching team throughout the duration of the module.

Learning Outcomes

Students’ marks on this assessment over the last few years have been slightly higher than their performance on the traditional assessments previously assigned to this module, which included a coursework essay (for the developmental content) and an essay under exam conditions (for the social content). For example, in the academic year 2020–2021 (the first year in which the Applied Scenarios assessment was introduced in its current format), mean marks obtained across the social and developmental scenario assessments were as follows: M = 64.0, SD = 6.7, n = 232. These results are modestly but significantly higher than the 2018–2019 mean marks for the traditional social and developmental essay assessments: M = 62.1, SD = 8.3, n = 228, t(458) = 2.80, p = .005, Cohen's d = .25. (Note that in the UK a score of 70% and above constitutes a “first-class” grade, which is the highest grade awarded and is defined as excellent to outstanding. Scores ranging from 60% to 69% are in the “second class, upper division” [or 2:1] classification and defined as good to very good.) This overall improvement in outcomes for the applied scenarios is encouraging but may be due to one component of the traditional assessment taking place under exam conditions, which is highly likely to lead to worse performance than coursework assessment. Nevertheless, we can at least be confident that attainment and outcomes have not been harmed by the change in assessment, with the benefit of giving students the opportunity to broaden their skills toolkit.
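For readers who wish to check a comparison of this kind from published summary statistics, the following is a minimal sketch in Python. It assumes an independent-samples Student's t-test with pooled variance (an assumption suggested by the reported degrees of freedom, 232 + 228 − 2 = 458, not a statement of the analysis actually run) and computes Cohen's d using the pooled standard deviation. The function name `pooled_t_from_summary` is hypothetical and introduced only for illustration. Because the reported means and standard deviations are rounded, the recomputed values should be expected to approximate, rather than exactly reproduce, the reported statistics.

```python
import math


def pooled_t_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Student's t-test (equal variances assumed) from summary statistics.

    Returns the t statistic, its degrees of freedom, and Cohen's d
    based on the pooled standard deviation.
    """
    df = n1 + n2 - 2
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    pooled_sd = math.sqrt(pooled_var)
    # Standard error of the difference between the two means.
    se_diff = pooled_sd * math.sqrt(1 / n1 + 1 / n2)
    t_stat = (m1 - m2) / se_diff
    cohens_d = (m1 - m2) / pooled_sd
    return t_stat, df, cohens_d


# Summary statistics as reported in the text (rounded values).
t_stat, df, cohens_d = pooled_t_from_summary(
    m1=64.0, sd1=6.7, n1=232,  # applied scenarios, 2020-2021
    m2=62.1, sd2=8.3, n2=228,  # traditional essays, 2018-2019
)
print(f"t({df}) = {t_stat:.2f}, Cohen's d = {cohens_d:.2f}")
```

A two-tailed p value could then be obtained from the t distribution with the returned degrees of freedom using a statistics package of the reader's choice.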

Student Evaluation

On the day of submission of the second assessment (developmental applied scenarios) in May 2023, we conducted an evaluation of students’ views on the applied scenarios via an anonymous survey hosted online through Qualtrics. Ethical approval was obtained from the School of Psychology ethics committee. In total, 142 out of 468 undergraduate students enrolled in the module (31%) completed the survey (gender identity: 88% woman, 11% man, 1% nonbinary). Participants ranged in age from 19 to 24 (M = 20.0 years) and reported their ethnicities as White (77%), Asian (11%), Black (6%), or Mixed (6%). Students were offered a £5 Amazon e-voucher as compensation for participation.

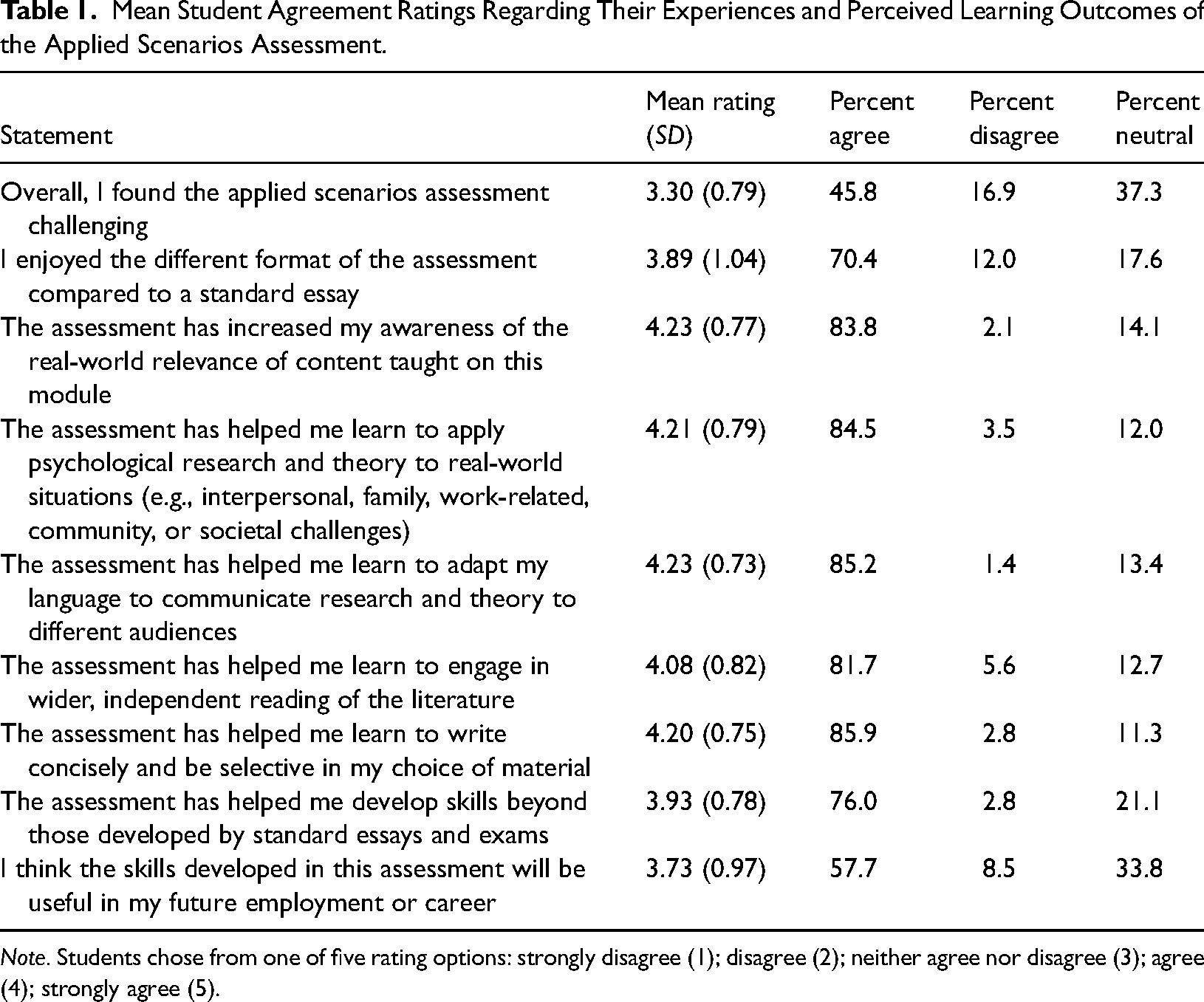

The survey contained a mix of quantitative and qualitative questions. These were ratings indicating agreement with statements relating to students’ experiences and perceived development of competencies (see Table 1 for summary statistics regarding the responses to the quantitative questions), and free-text responses to the following two questions: “Please tell us why you enjoyed/didn’t enjoy working on the scenarios compared to a standard essay” and “please tell us what skills you feel you have developed by working on and completing the applied scenarios beyond those developed by writing standard essays.” Free-text responses were collected before the agreement ratings to ensure we captured students’ thoughts prior to any potential prompting by the statements. Students were asked to keep in mind both the social and developmental components of the applied scenarios when reflecting on the assessment, though we acknowledge that students may have focused on the more recent developmental scenarios that they had just completed.

Table 1. Mean Student Agreement Ratings Regarding Their Experiences and Perceived Learning Outcomes of the Applied Scenarios Assessment.

Note. Students chose from one of five rating options: strongly disagree (1); disagree (2); neither agree nor disagree (3); agree (4); strongly agree (5).

The free-text responses were coded using an inductive content analysis approach (Elo & Kyngas, 2008). Each participant's response per question was the unit of analysis and could contain multiple ideas. These were read closely and open-coded by the first author. Manifest coding was applied where codes reflected a summary of the ideas explicit in the data without interpreting the meaning behind the text. The coder then organized the codes descriptively into categories by combining similar codes which described common ideas to represent aspects that students enjoyed in the assessment, aspects they did not enjoy, and the unique skills they felt they gained. We will expand on what was in each category in the narrative below.

Results

What Did Students Enjoy/Not Enjoy About the Assessment Compared to a Standard Essay?

Overall, student engagement with this form of assessment was high, with 70% of students reporting that they enjoyed it more than a standard essay and only 12% preferring essays (see Table 1). Students highlighted several aspects that they enjoyed in their free-text responses. The main categories are summarized below with supporting quotations:

In summary, students appreciated the authentic nature of the assessment, the additional challenges it posed compared to a traditional essay, the variety it offered and the short word limit. At the same time, some students referred to the following concerns that reduced their enjoyment of the scenarios compared to standard essays:

The concerns raised are extremely useful in flagging where to target support in the future, whether through providing students with additional resources or through managing anxiety and expectations.

What Skills Did Students Feel They Gained from the Assessment Beyond Those Developed by Writing Standard Essays?

Encouragingly, students spontaneously identified developing many skills. The six main categories that emerged from the free-text responses are summarized below alongside supporting quotations.

In summary, students reported that they developed their ability to apply psychological evidence to real-life situations and to inform others, and that this in turn improved their understanding of psychological content. Students also felt that they improved their writing skills, both in terms of tailoring their communication to diverse audiences and in terms of learning to be more selective and concise. Finally, students reported developing their critical thinking and research skills such as obtaining and summarizing evidence.

The qualitative data were supported by the quantitative data. As can be seen in Table 1, students’ agreement ratings suggest that the assessment was successful in terms of their perceived development of all the key skills that make up the intended learning outcomes for this assessment. We were surprised, however, that only 46% of students agreed that they found the assessment challenging, with 37% neither agreeing nor disagreeing and 17% disagreeing. This result appears at odds with students’ free-text comments, where there were many references to the different ways in which students felt challenged by the scenarios. In addition, when rating whether the skills developed in this assessment will be useful in their future employment or career, it was interesting to note that although only 9% of students disagreed with the statement, 34% neither agreed nor disagreed. We consider possible reasons for these findings alongside strategies for addressing them in the Discussion.

Finally, we also asked students to indicate in the survey whether they revisited the feedback they received on their social scenarios (assessment 1) and attempted to make use of the markers’ suggested points for improvement when working on the developmental scenarios (assessment 2). Of the students who completed the survey, 79% said that they had, which is an encouraging indication that a large proportion of students in our sample engaged with the feedback, although our data do not currently tell us how effective students were at acting on that feedback.

Discussion

Positive results from our evaluation suggest the applied scenarios assessment is well-liked by students who responded to the survey and is enriching their learning experience and psychological literacy skills development in the ways intended. Alongside these strengths, the evaluation also revealed some weaknesses.

First, while our aim was to challenge students with this assessment, students’ agreement ratings were mixed with respect to whether they found the assessment challenging, despite many students reporting feeling challenged in their free-text responses. One reason for this may be that the cited challenges from students (e.g., the need for more independent research, new ways of thinking, targeting the audience appropriately, writing within the 750-word limit) may have been offset by not having the stress of writing a longer essay (typically 2000 words on our course) or writing an essay under timed exam conditions. This may have led a large minority of students to feel, on balance, neutral about the challenging nature of this assessment. To investigate this finding further, we plan to ask students directly to explain the basis for their “challenging” rating in future evaluations so that we can better understand their reasoning.

Second, while most students (58%) agreed that the skills developed in the assessment will be useful in their future employment or career, a large minority (34%) neither agreed nor disagreed with this statement. One possibility is that this response reflects our sample's general uncertainty about their employment plans and awareness of necessary skills in the workplace at this stage of their undergraduate degree. Indeed, Bradley et al. (2019) found low levels of career engagement and career planning among Year 1 and Year 2 students in our School of Psychology, which increased notably for final-year students. If this is the case, students completing the evaluation who were uncertain about their career plans may have felt they could not answer this question without having a specific job in mind and so chose the neutral response. Nevertheless, these data suggest that a large minority of students may not recognize that the skills afforded by this form of assessment are likely to be needed in the workplace and to be valued by employers in any field of work. It is also possible that some students may perceive the skills developed as being useful specifically for psychology-related work rather than recognizing them as transferable to a wide range of work contexts. This was borne out in the free-text responses where any reference to employment was psychology-based. Consequently, some may not view the developed skills as relevant to their own future career if this is anticipated to be outside psychology.

Our findings suggest that we need to do more to help students recognize and appreciate why the ability to apply psychological understanding to real-world questions in the wider community, to be reflective on their own and others’ behavior and mental processes, to use evidence in a critical way, and to communicate clearly and appropriately to different audiences will be invaluable in whatever career path they take. As a strategy to address this issue in the future, we propose to include an interactive activity within the module, either as part of a lecture or as a guided online forum discussion, where we encourage students to reflect on and discuss the importance of these psychological literacy skills across a broad range of different work contexts.

Several limitations need to be acknowledged when interpreting our findings. Although our Applied Scenarios were designed to promote psychological literacy and the self-report measures suggest that students felt they developed key psychological literacy skills, we have no objective measure to verify whether the assessments did in fact increase psychological literacy in this cohort. Moreover, there is the possibility of a positive bias in the sample of students who provided an evaluation, compounded by the low response rate of 31% (which reflects a university-wide trend toward lower student survey response). It may be that students who participated were more engaged and felt more positively about the authentic assessment and what they gained from it than students who did not participate. We also acknowledge that as the free-text responses were coded by a single coder, without interrater reliability data, we have not guarded against the potential for researcher subjectivity to bias coding accuracy. In future student evaluations, we plan to use multiple raters and measure interrater agreement to minimize this risk.

Collecting data from a psychological literacy test pre- and postintervention alongside a control group would allow us to draw stronger conclusions about the extent to which the applied scenarios increase psychological literacy. Currently, this is not straightforward as there are no validated objective measures of psychological literacy available. Reliance on self-reported skill development as a measure of psychological literacy has been the dominant approach to date and measurement research is lacking (for reviews, see Cranney et al., 2022; Newell et al., 2020). Recently, however, Machin and Gasson (2022) have reported the ongoing development of a tool to fill this gap: the scenario-based multiple-choice Test of Psychological Literacy-Revised (ToPL-R). We hope to be able to use this test in the future to gauge changes in psychological literacy as a result of our assessments.

Our current findings provide proof of concept that embedding psychological literacy in authentic assessment can positively impact students’ learning experience and help to broaden their skills. It is essential, however, that students are given the chance to continue to build on what they have learned from the applied scenarios assessment as they progress through their course. Indeed, partly as a consequence of this evaluation, we have been developing our local curriculum design to embed psychological literacy at the core of new final-year modules. In the future, we plan to track students’ psychological literacy development longitudinally to compare starting skill level with program completion level.

To conclude, we hope this report offers readers both the motivation and practical strategies needed to embed a similar authentic assessment of psychological literacy in their teaching practice.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.