Abstract

There is a widely acknowledged need to improve the reliability and efficiency of scientific research to increase the credibility of the published scientific literature and accelerate discovery. Widespread improvement requires a cultural shift in both thinking and practice, and better education will be instrumental in achieving this. Here we argue that education in reproducible science should start at the grassroots. We present our model of consortium-based student projects to train undergraduates in reproducible team science. We discuss how, with careful design, we have aligned collaboration with the current conventions for individual student assessment. We reflect on our experiences of several years running the GW4 Undergraduate Psychology Consortium, offering insights we hope will be of practical use to others wishing to adopt a similar approach. We consider the pedagogical benefits of our approach in equipping students with 21st-century skills. Finally, we reflect on the need to shift incentives to reward team science in global research and how this applies to the reward structures of student assessment.

Keywords

Introduction

Across the social and life sciences there has been a growing appreciation that many published findings may be unreliable (Begley & Ioannidis, 2015; Chalmers et al., 2014; Chan et al., 2014; Glasziou et al., 2014; Ioannidis et al., 2014; Salman et al., 2014). In one analysis, 85% of efforts in biomedical research were deemed to be wasted through inefficiency and poor methods (Macleod et al., 2014). In a recent survey in Nature, 90% of respondents agreed there was a “reproducibility crisis” (Baker, 2016). Small sample sizes and questionable research practices such as P-hacking, hypothesizing after the results are known, and selectively publishing positive results were all implicated as contributing factors. Psychology has not been immune. Classic findings have failed to replicate and experimental findings have failed to translate into effective interventions (Cristea, Kok, & Cuijpers, 2015; Doyen, Klein, Pichon, & Cleeremans, 2012; Ritchie, Wiseman, & French, 2012). In 2015, the Open Science Collaboration reported a replication rate of 39% for the 100 effects they attempted to replicate (Aarts et al., 2015).

In response there is a move towards rigorous and transparent scientific practices, such as pre-registering study protocols and making materials and data open-access; designing studies with sufficient statistical power; and team-science approaches to pool resources and expertise (Munafò et al., 2017). Until these “new” methods become the norm, however, the extra time and resources required for bigger samples and pre-registration, the reduced probability for leveraging chance findings, and the lack of conventions to adequately reward collaborative efforts may act as disincentives. Indeed, many, including ourselves, have argued that our current reward structures are failing to reward good science (Button et al., 2013; Button & Munafò, 2013; Munafò et al., 2017; Nosek, Spies, & Motyl, 2012).

Improved education and shifting incentives are repeatedly identified as the key levers to promote better practices to address the “reproducibility crisis” (Munafò et al., 2017). When asked what factors could boost reproducibility, better training and teaching followed by incentives for better practice were ranked top by the respondents in the Nature survey (Baker, 2016). While there are moves to change the supra-order incentive structures researchers work within, these may take time to embed. For lower-order initiatives such as improved methods and education to be effective they will need to work alongside, rather than orthogonal to, the current reward and recognition structures.

We suggest that education in reproducible science should start at the grassroots. We present our model of consortium-based student projects to train undergraduates in reproducible team science. We discuss how our approach is carefully designed to align reproducible research methods training with the current conventions for individual student assessment, aligning quality teaching with the production of quality research.

The Wider “Reproducibility Crisis”: Causes and Solutions

The factors contributing to the reproducibility crisis are multi-layered, interacting, and complex. The solutions will need to be correspondingly multifaceted, each focusing on a specific element whilst also considering the wider eco-system. In the context of improving education, it is important to target the main areas which perpetuate the current issues. These include those operating at the individual study level, such as poor experimental design, the individual researchers’ behaviors, such as selective decisions of what and what not to publish, and the wider recognition structures in academic culture. We will discuss these briefly alongside some of the recommended solutions before discussing how we implement these solutions in our undergraduate training.

Low Statistical Power

Poor study design undermines the reliability of the subsequent study results. Studies consistently find statistical power to be low, between 8% and 50%, across both time and disciplines studied (Button et al., 2013; Cohen, 1962; Sedlmeier & Gigerenzer, 1989). Low statistical power increases the likelihood of obtaining both false-positive and false-negative results as well as generating inflated effect size estimates (Button et al., 2013). A reliance on under-powered studies therefore undermines the accumulation of knowledge.

Solutions include better education in the principles of sound research design. Performing sample size calculations is crucial to determining the appropriate study design; however, very few studies actually report a justification of their sample size (Bahor et al., 2017), and scientists seem overly optimistic about the likely size of effects under test. Researchers could use effect sizes from the existing literature, adjusted for publication bias, as the basis of their sample size calculations, or more work could be done around minimal clinically or theoretically important differences to inform sample size calculations (Angst, Aeschlimann, & Angst, 2017; Button et al., 2015; Jaeschke, Singer, & Guyatt, 1989).
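As a rough illustration of such a calculation, the sketch below uses the standard normal approximation for a two-sided comparison of two independent group means. The function name, the default significance level of 0.05, the default power of 0.80, and the example effect sizes are assumptions made for illustration, not values taken from the studies cited above. An exact calculation based on the t distribution gives slightly larger numbers.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate participants needed per group to detect a standardized
    mean difference (Cohen's d) in a two-sided, two-group comparison.

    Uses the normal approximation n = 2 * ((z_alpha + z_power) / d) ** 2.
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # critical value, two-sided test
    z_power = standard_normal.inv_cdf(power)          # quantile for the desired power
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


# Hypothetical effect sizes, chosen only to show how quickly n grows as d shrinks.
for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} participants per group")
```

Because the required sample size scales with 1/d², halving the assumed effect size roughly quadruples the number of participants needed, which is why optimistic effect size assumptions lead so readily to under-powered designs.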

Low-powered research persists due to perverse incentives and a poor understanding of the consequences of low power and/or a poor appreciation of likely effect sizes. However, it may also arise from a lack of resources to improve power. Team science is a solution to the latter problem. Instead of relying on the limited resources of single investigators, distributed collaboration across many study sites could enable high-powered designs. Multi-site testing also confers advantages in the generalizability of results across settings and populations sampled, the transfer of knowledge through bringing multiple theoretical and disciplinary perspectives, and the benefits of a diverse range of research cultures and experiences on the same project.

Multi-centre large-scale collaborations have a long and successful tradition in fields such as randomized controlled trials, and in genetic association analyses, and have improved the robustness of the resulting research literatures. Multi-site collaborative projects have also been advocated for other types of research, such as animal studies (Bath, Macleod, & Green, 2009; Dirnagl et al., 2013; Milidonis, Marshall, Macleod, & Sena, 2015), in an effort to maximize their power, enhance standardization, and optimize transparency and protection from biases. The Many Labs and Pipeline projects illustrate this potential in the social and behavioral sciences, with dozens of laboratories implementing the same research protocol to obtain highly precise estimates of effect sizes and evaluate variability across samples and settings (Ebersole et al., 2016; Klein et al., 2014; Schweinsberg et al., 2016).

Publication Bias and Questionable Research Practices

Studies that obtain positive and novel results are more likely to be published than studies that obtain negative results or report replications of prior results (Franco, Malhotra, & Simonovits, 2014; Sterling, 1959, 1975). This is known as publication bias (Sterling, 1959). Over 70% of authors admit to selectively reporting studies that “worked” – with those that failed to find significant results languishing in a file drawer (John, Loewenstein, & Prelec, 2012).

Questionable research practices refer to a body of behaviors that researchers employ to leverage chance to increase the likelihood of a publishable result. Examples include measuring multiple outcomes and switching the “primary outcome” to whichever produces the strongest effect; P-hacking – that is, running multiple variations of analyses until a significant p-value is obtained (Simmons, Nelson, & Simonsohn, 2011); and Hypothesising After the Results are Known (HARKing), where a hypothesis is retro-fitted to an unexpected result (Kerr, 1998). Such behaviors increase the probability of type I errors and undermine the reliability of results.

Pre-registration is the most powerful way to protect against all of these practices. It was introduced in clinical trials, to address publication bias and outcome switching, where it has now been standard practice for many years (Dickersin & Rennie, 2003; Lenzer, Hoffman, Furberg, Ioannidis, & Grp, 2013). In its simplest form it registers the basic study design and primary outcome. In its strongest form it involves registering the study with a commitment to make the results public, and detailed pre-specification of the study design, primary outcome, and analysis plan in advance of data collection. This makes the research discoverable and addresses questionable research practices such as outcome switching and P-hacking. More fundamentally, it allows a clear distinction between data-independent confirmatory research that is important for testing hypotheses, and data-contingent exploratory research that is important for generating hypotheses. Websites such as the Open Science Framework (http://osf.io/) and AsPredicted (http://AsPredicted.org/) offer services to pre-register studies, and over 150 journals now adopt the Registered Reports publishing format (Chambers, 2013; Nosek & Lakens, 2014).

Perverse Incentives

Poor experimental design, small sample sizes, undisclosed flexibility in data analysis, and a lack of transparency in reporting all undermine the purpose of the scientific endeavor (assuming the purpose is to generate knowledge). Why then do they persist? Publication is the currency of a successful academic career, increasing the likelihood of employment, funding, promotion, and tenure. However, as we have discussed above, positive, novel, clean results are more likely to be published than negative results, replications, or “messy” results (Dickersin, 1990). Consequently, researchers are incentivized to focus on producing positive results irrespective of their reliability (Nosek et al., 2012; Smaldino & McElreath, 2016). Researcher behavior in the context of these perverse incentives has been modelled mathematically using simulations. Worryingly, these models suggest that researchers acting to maximize their “fitness” in the current incentive structures should spend most of their effort seeking novel results and conduct small studies that have only 10-40% statistical power (Higginson & Munafò, 2016).

Student Projects: The Problem Magnified

This perverse reward culture may be operating at the first stages of training, in how we teach and assess our undergraduate students. For example, in undergraduate psychology degrees in the UK, the culmination of a student’s research training is their final-year empirical dissertation (a research project akin to the senior thesis in the US system). It involves carrying out an extensive piece of empirical research, under the supervision of an assigned academic (much like the PhD supervision model). It requires the student to individually demonstrate a range of research skills including planning, considering, and resolving ethical issues, and the analysis and dissemination of findings (British Psychological Society (BPS), 2016). This empirical project typically involves the collection of original data, or equivalent alternatives such as secondary data analysis, and forms a substantial proportion of the student’s final grade (BPS, 2016).

This leads to numerous final-year research projects, individually conducted and assessed, and conducted with limited time and resources. Consequently, they may suffer from the problems seen in the wider research literature, such as small sample sizes, combined with questionable research practices which may yield a high rate of false-positive results. If these are selectively published, the students will be rewarded for being lucky rather than right. Indeed, an undergraduate publication is seen as highly competitive when applying for places on taught-Masters or for doctoral training.

As an example, suppose each year a psychology department has 100 final-year undergraduate students conducting a quantitative dissertation. Each student has been encouraged to “think creatively” and generate a novel, exciting hypothesis. Their hypothesis might therefore be quite unlikely to be true. Indeed, let us assume that only 10/100 students’ hypotheses are “true.” Now let us assume they are limited in time and resources and their sample sizes are correspondingly small for the effects under test, yielding an average statistical power of 20%. They set their significance criterion for rejecting the null hypothesis at 5%. Under these assumptions 20% of the 10 “true” effects will be discovered, yielding 2 true-positive results. For the remaining 90 non-associations, 5% will be declared as significant by chance, yielding on average between 4 and 5 false-positive results. Now let us assume supervisors encourage students to write up any interesting positive findings for publication; roughly two-thirds of those will be false-positive. The complementary proportion of positive findings that are true, here about one-third, is known as the positive predictive value (Sterne & Davey Smith, 2001). Publishing these findings would contaminate the scientific literature with unreliable results.
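The arithmetic in this example can be reproduced in a few lines. The sketch below is illustrative only: the function name and its parameters are our own labels, and the inputs are the assumed values from the example (100 hypotheses, 10 of them true, 20% power, 5% significance criterion).

```python
def expected_findings(n_hypotheses, n_true, power, alpha):
    """Expected true positives, false positives, and positive predictive
    value (PPV) when every hypothesis is tested once."""
    true_positives = n_true * power                      # true effects detected
    false_positives = (n_hypotheses - n_true) * alpha    # nulls significant by chance
    ppv = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, ppv


# Values assumed in the worked example above.
tp, fp, ppv = expected_findings(n_hypotheses=100, n_true=10, power=0.20, alpha=0.05)
print(f"true positives: {tp:.1f}, false positives: {fp:.1f}")
print(f"PPV: {ppv:.2f}, share of positives that are false: {1 - ppv:.2f}")
```

Re-running the function with higher power, while holding the other assumptions fixed, shows how a larger pooled sample raises the share of positive findings that are true.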

Developing a comprehensive pre-registration often takes several months, and unless effects under test are very large, a sample size of 200–300 participants may be required for sufficient power to test typical effects observed in psychology. Pre-registration of study protocols and designing studies with sufficient power to test hypotheses will therefore often require resources well beyond those available for the typical undergraduate dissertation project. Drawing on the above examples of large-scale collaborations in genetic epidemiology and multi-centre clinical trials, one solution to this is collaboration. By working together, students could pool their efforts and resources to obtain large data-sets. Any limitations imposed by the need for individual students’ assessment within an institution could be overcome by students working across universities.

The GW4 Undergraduate Psychology Consortium

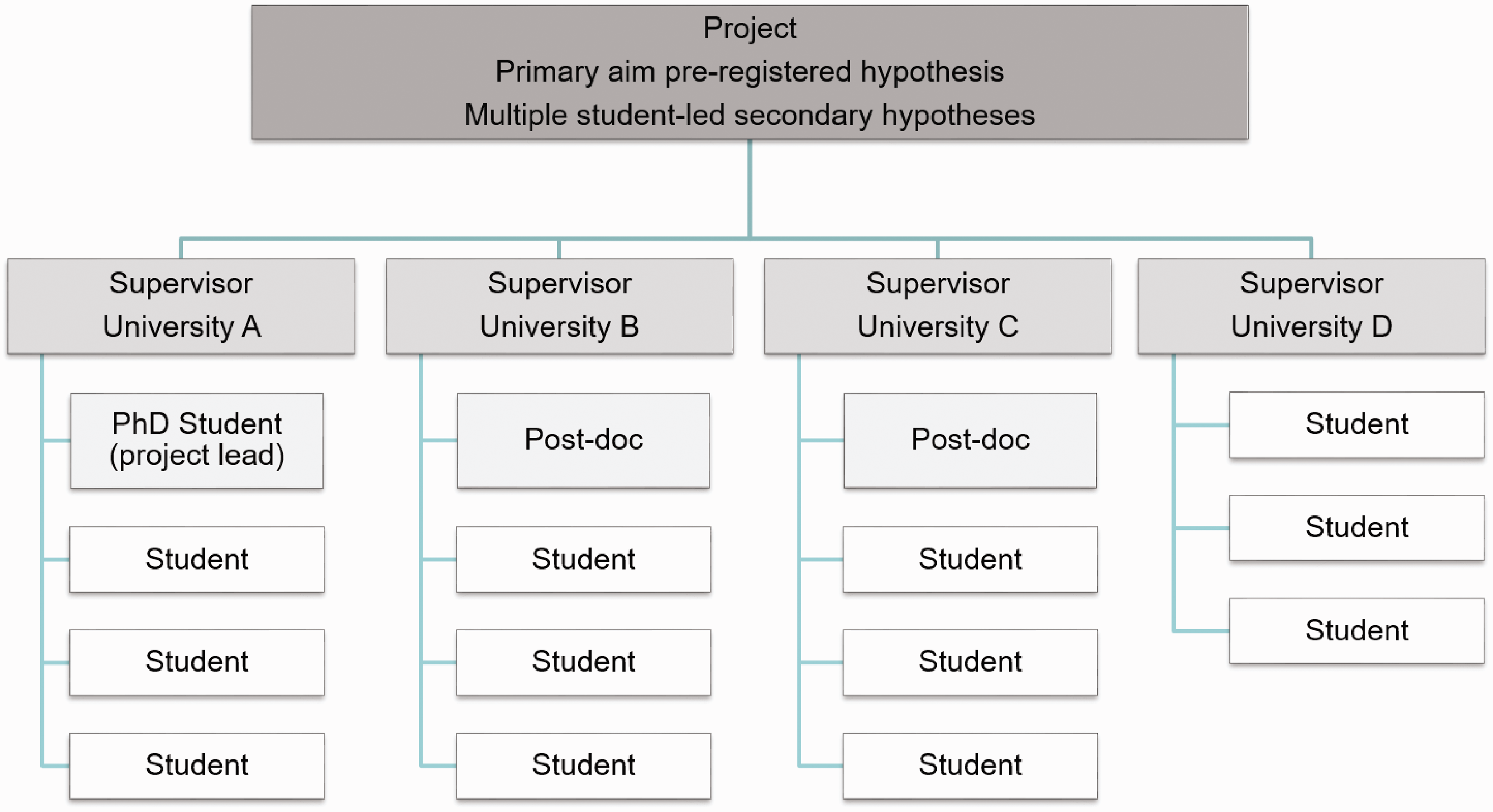

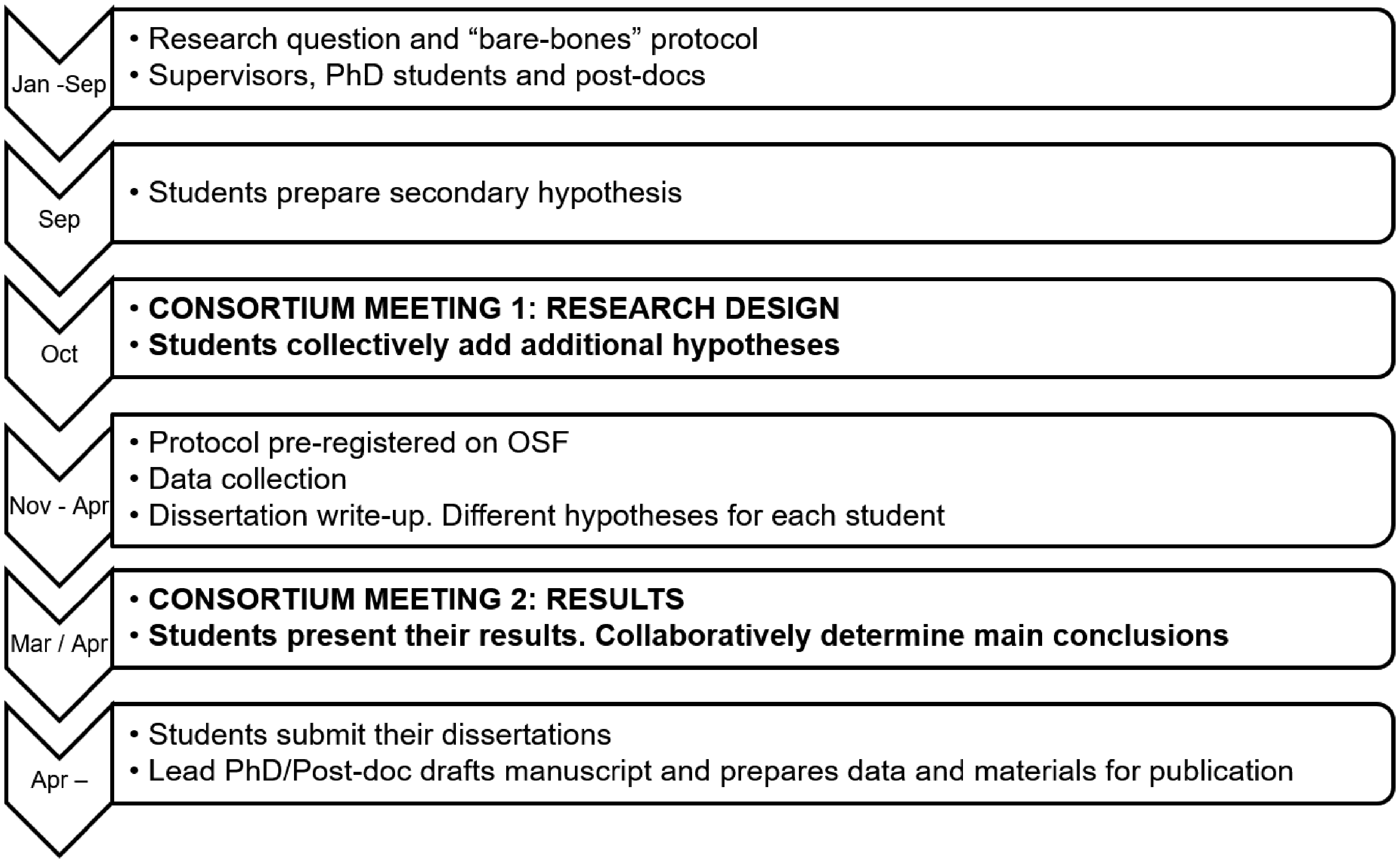

In 2016 we set up the GW4 Undergraduate Psychology Consortium, comprising academics and undergraduates and, more recently, PhD students and post-doctoral researchers, from the University of Bath, University of Bristol, Cardiff University and Exeter University, in the south-west of the UK (Button, Lawrence, Chambers, & Munafò, 2016). The composition of the consortium varies from year to year but a typical structure is provided in Figure 1. Students work in groups within and across the universities under the supervision of their local academic supervisor, and PhD student or post-doctoral researcher. Each year the consortium focuses on a single research project with a primary research question led by one of the institutions. To ensure fairness, the lead institution rotates from year to year, sharing the rewards and responsibilities. The research cycle is typically 2 years (Figure 2). Rotating the lead ensures no single institution is over-burdened.

Figure 1. The typical structure of the GW4 Undergraduate Psychology Consortium.

Figure 2. The typical timeline of the GW4 Undergraduate Psychology Consortium.

Assigning an individual study lead, or principal investigator, is essential for the effective coordination of the study. Given the extra work involved in pre-registration, coordination of multiple sites, and guaranteeing provision for the analysis and write-up of the project for publication after the undergraduate students have graduated, we have found it works best if the lead is a PhD student or post-doctoral researcher. The consortium benefits from additional support and dedicated oversight and this frees up the academics to focus on academic supervision. The lead PhD student or post-doctoral researcher benefits from gaining experience of leading a collaboration under the supervision of more experienced academics, and of mentoring undergraduate students. They also benefit from a first-author publication.

The empirical dissertation timeline and allocation procedures vary from university to university, and this is one of the biggest challenges in the consortium approach. Typically, students are assigned (with varying degrees of student choice) to their project supervisor anywhere from February through to September in their penultimate year of study. Information outlining the objectives and general procedure, including the homework element in the weeks prior to the start of term, is shared with the students during allocation. The final-year dissertation project then runs from October through to the dissertation deadline, which ranges from early April through to late May.

Figure 2 shows the typical project timeline. From the preceding January through to June, the lead PhD student or post-doctoral researcher and academic supervisory team develop a primary research question and bare-bones study protocol (containing hypotheses, rationale and background, methods, data analysis and management plan, and ethical considerations). A few weeks before the start of term this is shared with the upcoming cohort of undergraduate students, who are tasked in a homework exercise to think about what additional secondary questions they might like to add to the protocol. These secondary questions range from moderator analyses based on adding short individual difference measures, to focusing on a different outcome, or using the variables already in the design to address a different question. Each student, supported by their local supervisor, is instructed to prepare a 5-minute presentation outlining their proposed secondary research question, background and rationale, hypothesis, measures and methods, and analysis plan, including a graph of their hypothesized result.

In the first or second week of term in October we have the first of two consortium meetings. During this meeting the rationale for the consortium approach is presented, followed by the lead PhD student or post-doctoral researcher presenting an overview of the primary research question and procedure. Each student then presents their proposed secondary hypothesis. This is followed by general discussion of the merits of each of the proposed hypotheses and corresponding measures and methods. The study protocol is then finalized through these collaborative discussions and all students are authors of the published study protocol. For example, suppose two students suggest a similar hypothesis, that the effect of X on Y is moderated by Z, but have proposed different methods of measuring Z. There is a clear issue of redundancy, and which measure to include is decided by considering issues such as psychometric properties, relevance to the population of interest, and so forth.

Following the first meeting the students are assigned various tasks relating to study set-up, such as constructing the surveys for data collection, piloting the experimental tasks and procedures, selecting stimuli, and drafting ethics applications, case-report forms, information sheets, debrief sheets, and recruitment advertisements. The project is managed centrally on the Open Science Framework (http://osf.io/).

The study protocol is pre-registered on the Open Science Framework and data collection runs from late November through to the end of February/March. Each site has a target sample size corresponding to a proportion of the total sample size calculated for the overall project, adjusted for their institutional requirements and recruitment challenges. Data collection finishes at the end of February/March to accommodate the students with the earliest dissertation deadlines.
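One way to turn an overall target into per-site targets is sketched below. It is a hypothetical illustration rather than the consortium's actual procedure: the total of 250 participants, the per-site weights (for example, the number of students recruiting at each site), and the function name are all assumptions. The split is proportional to the weights, and rounding uses the largest-remainder method so that the site targets sum exactly to the total; any further adjustment for institutional requirements would be made by hand.

```python
import math


def allocate_site_targets(total_n, site_weights):
    """Split total_n across sites in proportion to site_weights,
    rounding so that the targets sum exactly to total_n."""
    weight_sum = sum(site_weights.values())
    exact = {site: total_n * w / weight_sum for site, w in site_weights.items()}
    targets = {site: math.floor(share) for site, share in exact.items()}
    # Hand the participants lost to rounding down to the sites
    # with the largest fractional remainders.
    shortfall = total_n - sum(targets.values())
    by_remainder = sorted(exact, key=lambda s: exact[s] - targets[s], reverse=True)
    for site in by_remainder[:shortfall]:
        targets[site] += 1
    return targets


# Hypothetical weights: number of students recruiting at each site.
weights = {"Bath": 3, "Bristol": 2, "Cardiff": 2, "Exeter": 1}
print(allocate_site_targets(250, weights))
```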

In March/April we hold the second consortium meeting, akin to a mini-conference, where each student presents their dissertation results. There is a general discussion about the study findings, and the general conclusions of the final publication are mutually agreed; thus each student qualifies for authorship of any publications. To date, students have used the full data-set from across the consortium in their dissertations.

Aligning Rigorous Research with Individual Assessment and Program Standards

In the UK, psychology degrees are accredited by the British Psychological Society (BPS; the equivalent of the American Psychological Association (APA) in the US), which sets the program standard. Accredited psychology degrees include the acquisition of a range of research skills and methods, culminating in an ability to conduct research independently. Graduates must demonstrate competency in designing, conducting, and reporting on empirically based research projects under appropriate supervision (BPS, 2016). There are various opportunities for developing these skills through the degree, culminating in the final year in the empirical dissertation (BPS, 2016). The GW4 Undergraduate Psychology Consortium was designed specifically with this degree standard in mind. By collaborating, students benefit from pooled resources and data collection. The pre-registered primary research question and hypothesis mean the integrity of the overall research project is retained. The addition of student-led secondary hypotheses means each student can have a different title and focus of their dissertation, so within an institution no two students are doing exactly the same thing. Thus, throughout, each student can demonstrate individual contributions to each element of the research but within the scaffold of a high-quality research project. In 2016, we presented the GW4 consortium approach to the BPS Undergraduate Education Committee, and they revised their accreditation guidance around the empirical dissertation to explicitly endorse group-based projects, provided students are able to demonstrate the required research skills individually (BPS, 2016).

Pedagogy

There is broad consensus amongst educators, business leaders, academics, and governmental agencies that traditional academic skills focused on knowledge-based content and surface learning are no longer sufficient to prepare our students for success in the 21st century. Instead, they have identified skills associated with deeper learning, such as analytic reasoning, complex problem-solving, and teamwork, as crucial for meeting the demands of the modern-day workforce (Organisation for Economic Co-operation and Development (OECD), 2005). In science, collaboration is increasingly recognized by funders and key stakeholders as necessary to meet global challenges (Academy of Medical Sciences, 2016). The number of authors on a typical research paper increases year-on-year, and the typical lab-group runs as a team (Academy of Medical Sciences, 2016). For psychology to be well placed to meet these challenges we must find ways to train and support, and then adequately recognize and reward, psychologists who contribute to team efforts, starting with undergraduate education.

Our consortium approach to the undergraduate empirical dissertation has many pedagogical advantages. It is project-based learning grounded in social constructivism (Roessingh & Chambers, 2011). Students join a knowledge-generating community, where, in collaboration with others, they conduct real research in an authentic context. They gain the transferrable skills of collaboration, problem-solving, and creating new knowledge through co-production. Through peer-to-peer interaction, they actively apply their knowledge in discussion, particularly during the consortium meetings, but also in the day-to-day conduct of the study.

The consortium structure creates “zones of proximal development” where students contribute to each element of the research cycle, but within a scaffold which retains the integrity of the overall research. The “bare-bones” protocol drafted by the lead PhD student is an example of this. It provides the students with a scaffold of best practice which they can model in their preparation of their secondary hypothesis and corresponding background, method, and analysis plan.

One limitation of this approach is the scope for “social loafing” (Karau & Williams, 1993; Latane, Williams, & Harkins, 1979). This might occur where some members of the team are not as intrinsically motivated to work as hard on collaborative aspects, such as data collection, as others. For example, knowing that they will have access to the whole data-set, regardless of the number of participants that they themselves recruit, might demotivate some individuals. Social loafing may be more likely where assessment and/or reward structures do not recognize team work: for example, where a group is awarded a common mark regardless of individual contributions (Lee & Lim, 2012), or in terms of the dissertation, where the final grade reflects the write-up, and ignores the student’s teamwork contributions.

This reflects a wider academic debate surrounding the need for better recognition of individual contributions to team efforts. Following recommendations in the recent “Improving Recognition of Team Science Contributions in Biomedical Research Careers” (Academy of Medical Sciences, 2016) the consortium members could collectively agree milestones, credit, and responsibilities during the first consortium meeting. Contributions could be logged as the project progresses, and the student and/or supervisor could submit a statement of contribution alongside their individual dissertation (this is already standard practice at some universities). Supervisors can also promote a culture of collaboration by actively acknowledging good teamwork in their day-to-day mentoring and signposting where collaboration will be rewarded beyond the dissertation grade. For example, in the UK, dissertation supervisors are often asked to provide an academic reference. This provides an opportunity to acknowledge the student’s contribution to the wider team effort, providing evidence of both academic achievement in the dissertation grade, and softer 21st-century skills in appraisal of the student’s conduct during the study.

Evaluation

To date, 24 students have participated in and successfully completed their dissertation project as part of the GW4 Consortium. The consortium has published three pre-registrations (Button, Chambers, et al., 2016; Hobbs et al., 2018; Tzavella, Adams, Chambers, Lawrence, & Button, 2018) and has three manuscripts in preparation. Anecdotal evidence suggests that the scaffolding provided by the consortium approach helped the weaker students and enabled the stronger students to excel. We plan to test this quantitatively by comparing grades awarded for the dissertation for consortium versus cohort students, adjusted for grades awarded from non-dissertation units, when a sufficient number of students have completed to ensure their anonymity. We have been surveying the students at the end of each project for their feedback. They greatly valued participating in the consortium, particularly having access to a meaningfully large data-set; the opportunity to network with academics, students, and researchers from other universities; the sharing of ideas and knowledge; and contributing to pre-registration. They saw the disadvantages as trying to coordinate deadlines and workload with other universities and having less control over the study design.

Wider Applications and Other Collaborative Initiatives in Student Projects

The consortium model we have presented here is just one example of an approach tailored to our way of working, our departmental courses, and our national accreditation requirements for the empirical dissertation. Our consortium model would translate well to other educational systems that involve quantitative student projects which collect data to test hypotheses, where students must individually demonstrate competency in design, conduct, and reporting, but the timescale and resources mean a student would struggle on their own to collect sufficient data. For example, in the US the APA recommends capstone experiences for undergraduate training, which can include projects similar to the UK quantitative dissertation, and many undergraduate programs have either required or elective projects that are similar in size and scope. However, a core element of our approach is the two consortium meetings, which provide the opportunity for the students to independently contribute ideas to the study design and to any final publication. The GW4 universities are in relatively close proximity to each other, which means we can hold these meetings face-to-face in an afternoon. More geographically distributed collaborations may need to use conference calling, or other means to share ideas, which may lose some of the advantages for the students of networking in person with colleagues from other institutions. We have found that the protocol can become unwieldy if too many secondary hypotheses are added, so this limits the number of students from a single institution (our range is two to six). However, this will depend on the specific project design and institutional policy on how many students can address the same research question within an institution.

In undergraduate psychology methods teaching more broadly, there are lots of exciting new initiatives. For example, the Collaborative Replications and Education Project (CREP; https://osf.io/wfc6u/) is a large-scale replication project that students can participate in. A coordinating team identifies recently published research that could be replicated in the context of a semester-long undergraduate research methods course. A central commons provides the materials and guidance to incorporate the replications into projects or classes, and the data collected across sites are aggregated into manuscripts for publication. This approach is particularly suited to large-group methods teaching, which in the UK model typically occurs in the first and second years of the degree. It is less well suited to the final-year empirical dissertation in the UK model, where the students must demonstrate individual contributions to each element of the research, and work closely with an academic supervisor in a manner more akin to the relationship between a PhD student and their supervisor. The “Pipeline” (Schweinsberg et al., 2016), “Many Labs” (Ebersole et al., 2016; Klein et al., 2014), and Psychological Science Accelerator (https://psysciacc.org/) projects also offer opportunities to contribute to large-scale replication efforts with coordinated data collection across many locations simultaneously.

Conclusions

The need to improve the reliability and efficiency of scientific research is widely acknowledged. Improved education is repeatedly identified as a key lever to meet this need. Consortium-based student projects are one example of how reproducible research methods training can be aligned with the current conventions for individual student assessment, and the production of high-quality research. The consortium approach has many pedagogical benefits, equipping students with the 21st-century skills of collaboration, analytic reasoning, and complex problem solving. However, for these skills to be fully realized the assessment and marking criteria for the dissertation need to extend beyond individual assessment criteria to recognize and reward collaboration.

Footnotes

Acknowledgements

The GW4 Undergraduate Psychology Consortium was set up using a Teaching Development Fund from the University of Bath, awarded to KSB. MRM is a member of the UK Centre for Tobacco Control Studies, a UKCRC Public Health Research: Centre of Excellence. Funding from British Heart Foundation, Cancer Research UK, Economic and Social Research Council, Medical Research Council, and the National Institute for Health Research, under the auspices of the UK Clinical Research Collaboration, is gratefully acknowledged.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: CDC and MRM have received funding from the BBSRC (grant number BB/N019660/1) to convene a workshop on advanced methods for reproducible science.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.