Abstract

Background

Research is computationally reproducible when independent analysts can use the underlying data to reproduce the original results. Some research in psychology is computationally reproducible, although much is not.

Objective

To assess the computational reproducibility of research published in

Method

We identified key claims in 60 papers published with open data in ToP, PLaT, and SoTL-P between 2017 and 2025. We then sought to reproduce 101 results supporting these claims.

Results

We exactly reproduced 73 of the 101 results. For a further 23 results, the substantive interpretations of our results matched those of the original researchers. The majority of the reproductions were performed relatively quickly by a psychology undergraduate with 2 years of relevant experience.

Conclusion

Psychology learning and teaching research appears more computationally reproducible than research in other psychology sub-fields. However, reproducibility is undermined by several factors, including a lack of open data and code.

Teaching Implications

Computational reproducibility activities can be incorporated into undergraduate research methods classes, effectively address many research methods learning outcomes, and help students develop both subject-specific and transferable employability skills.

Keywords

Research is computationally reproducible when independent analysts can use the underlying data to reproduce the original results. Computational reproducibility relies on the availability of raw data and plays a central role in research transparency. Limited empirical data suggest that some psychology research is computationally reproducible, although much is not.

For example, Hardwicke et al. (2018) attempted to reproduce a “subset of ‘relatively straightforward and substantive’” (p. 9) results in 35 papers with “in principle reuseable” (p. 6) data published in

More recently, Crüwell et al. (2023) sought to reproduce all the results in all 14 research papers published in the April 2018 issue of

In the current study, we assessed the computational reproducibility of research published in

Method

Design

We conducted this preregistered (https://osf.io/efhnp) observational study in two phases. In Phase 1, we identified key claims in 60 empirical papers published with open data in ToP (

Sample

On May 29, 2025, we extracted a list of 1,039 empirical papers published since January 1, 2015, in ToP, PLaT, and SoTL-P. We then rapidly screened these papers for data availability statements indicating that the data underpinning the research were “open” (i.e., available without restrictions). This screening process generated a list of 103 papers reporting results that were potentially computationally reproducible. From these, we randomly sampled 60 (with one replacement due to data in a proprietary format we were unable to open or convert), with publication dates ranging from early 2017 to mid-2025.

Positionality

AA conducted this study as an 8-week BPS-funded research assistantship prior to entering the third year of the BSc Psychology at the University of Bristol. PA supervised the assistantship and worked with AA on the design and execution of this study. PA was “hoping for the best but expecting the worst” regarding the reproducibility of a field he has spent over 10 years working in and advocating for. Although there remains room for improvement, PA was pleasantly surprised by the results of this study.

Procedure

Phase 1: Claim Identification

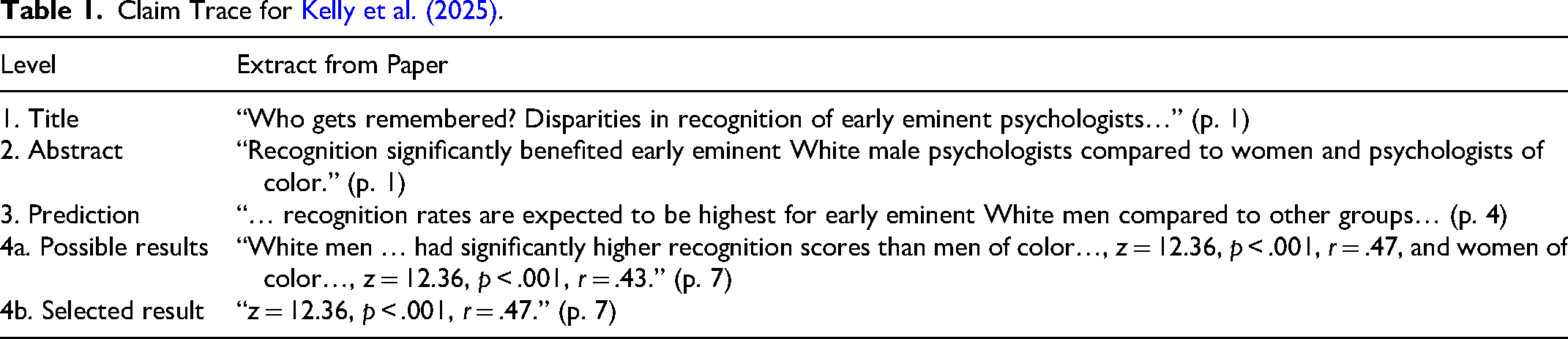

In replication and computational reproduction studies, various methods have been used to identify “central” or “key” claims in published research (e.g., Artner et al., 2021; Camerer et al., 2018). They are all somewhat subjective. We followed a protocol based on the SCORE Collaboration (2024). Specifically, AA developed a claim trace for each paper (see https://osf.io/52unq) comprised of four levels: title, statement from the abstract, prediction in the main text, and supporting result. When multiple results supported the claim (

Claim Trace for Kelly et al. (2025).

After developing each claim trace, AA prompted ChatGPT (GPT model 4o) to repeat this process. If the two claim traces matched (

In the 41 instances where there were multiple results supporting the claim, a second result was randomly selected from among these for reproduction. For example, in Kelly et al. (2025), the second selected result was “

Phase 2: Attempted Reproduction

If the code was available, AA executed it. If this produced errors that could not be resolved through troubleshooting and/or the results did not match those in the corresponding paper, AA sought advice from PA, and the outcomes were recorded. If the code was unavailable and the relevant statistics had been taught in the first 2 years of the BSc Psychology, AA attempted a manual reproduction in SPSS or JASP. When reproduction was unsuccessful, AA first sought advice from ChatGPT and then, if necessary, from PA before the outcomes were recorded. If the relevant statistics were unfamiliar to AA, he first consulted relevant instructional material on YouTube, then, if necessary, Coolican (2024), followed by ChatGPT, and finally PA. The outcomes following these processes are documented at https://osf.io/vqxpj. We coded a result as “reproducible” when there was an exact match (after rounding, where applicable) between the values we calculated and those in the original publication. If there were any differences, the result was coded as “not reproducible.”

It should be noted that, despite our initial cynicism, we made all reproduction attempts in “good faith.” Successful reproduction was approached as a challenge that we were motivated to overcome. Some reproductions were achieved quickly (e.g., < 15 min), whereas others took considerable time (e.g., 3–4 hr before either achieving success or reluctantly giving up). Finally, to prevent possible fixation on incorrect solutions, PA's attempts at reproduction were made blind to the methods AA had tried or the results he had achieved.

Deviations From Preregistration

First, when developing the claim traces, we observed that a single claim was often supported by multiple results. In these situations, we first selected the result with the largest effect size, and then randomly selected a second result supporting the same claim. Thus, for 41 of the 60 papers, we sought to reproduce two results. Second, we relaxed the time limits we had pre-registered for troubleshooting code and for each reproduction attempt. This was to feel comfortable that any reproduction failures were likely due to factors other than insufficient time. Third, in all situations where executing code did not lead to reproduction success for AA, PA was consulted. This is consistent with the approach taken for manual reproduction. Finally, rather than using the documentation for AA's unsuccessful reproduction attempts as a starting point for his own efforts, PA's reproduction attempts were made blind to AA's.

Results

Across all 60 studies, 43 (71.7%) of the initially selected results were reproducible. Of these, the majority (30 results) were achieved by AA with nothing more than his undergraduate lecture notes and some perseverance. YouTube (five results), ChatGPT (one result), and finally PA (seven results) were also useful when the lecture notes were lacking. For 41 studies, we also attempted to reproduce a second result. Of those attempts, 30 (73.2%) were successful (26 by AA, followed by an additional four by PA). In 27 (65.9%) of the 41 studies, we were able to reproduce both results, and in 7 (17.1%), we were unable to reproduce either result.

Despite our best efforts, we were unable to exactly reproduce 28 (27.7%) of the results we examined. We categorized these results as not reproducible due several reasons, including (a) probable rounding issues (six results); (b) the use of incorrect degrees of freedom (two results); (c) probable copy-and-paste errors (two results); (d) numerical differences between our results and those in the published papers which were usually modest, and which we were unable to explain (15 results); (e) unspecified exclusion criteria (two results); and (f) a dataset that we were unable to make sense of (one result). For results affected by (a), (b), and (d), there were no substantive differences between the interpretation of our results and those reported by the original authors. In the study affected by (c), the original authors’

Discussion

The present study aimed to assess the computational reproducibility of research published in ToP, PLaT, and SoTL-P. Of the 101 results in the 60 papers we focused on, 73 (72.3%) were exactly reproducible. For many of the others, the differences between our results and those in the original publications were negligible. Indeed, if we lowered the threshold for “reproducible” to include results that differed due to rounding issues or incorrect degrees of freedom, our reproduction success rate would rise to over 80%. We suspect it would rise even further if we engaged with the authors of the papers containing results we were unable to reproduce. When Hardwicke et al. (2018, 2021) did this, their success rate doubled.

On the surface, these results are pleasing. For context, they are comparable with those of Artner et al. (2021), who successfully replicated 70% of “key statistical claims” (p. 527) in 46 papers published in four psychology journals. Furthermore, our findings are considerably more positive than those of Hardwicke et al. (2018, 2021), Crüwell et al. (2023), and Obels et al. (2020), who used a range of methods to assess computational reproducibility and achieved success rates of no higher than 63%. However, there is still room for improvement. Only 10% of the papers we initially screened were accompanied by open data, which provided us with the opportunity to assess their computational reproducibility. It is reasonable to speculate that this 10% may be more reproducible than the 90% that were not open to such scrutiny. Although we understand that open data is not always possible, we find it difficult to believe that genuine restrictions (e.g., ethics, commercial sensitivities, national security, etc.) prevented even close to 90% of the authorship teams from preparing and making publicly available deidentified versions of their data files before publication. There are several free services available to support this (most notably, the Open Science Framework; https://osf.io), and many universities also maintain institutional data repositories. Furthermore, as we know from previous research (Gabelica et al., 2022), “available on request” most commonly means “not available.” Thus, the primary factor limiting the computational reproducibility of psychology learning and teaching research is a lack of open data.

Among papers for which data are available, there is no reason why the computational reproducibility rate should not be 100%. In our study, it was not. Factors that made successful (and attempted but ultimately unsuccessful) reproduction unnecessarily difficult included the absence of code; buggy and/or incomplete code; the absence of a codebook or intuitively named variables and (where applicable) value labels; vague or incompletely described data wrangling and analyses; and datasets in languages other than the language of the associated paper. Thus, we recommend that psychology learning and teaching researchers provide complete, verified, and reproducible code and a codebook; give variables meaningful names and labels that correspond to those used in the paper; and if not providing code, ensure that the data wrangling and analyses, as well as the calculations for any statistics for which there are multiple accepted versions (e.g., some measures of effect size) are completely and precisely described (in an online supplement if necessary). Furthermore, one dataset in our initial sample needed replacement because it was in a proprietary format we were unable to read. Therefore, we further recommend that psychology learning and teaching researchers convert data to an open format (e.g., CSV) and ensure it is readable by common data analysis packages prior to upload. Many of these recommendations are also reflected in the FAIR principles (see Wilkinson et al., 2016), which should be incorporated into the editorial policies of all psychology learning and teaching journals.

Implications for Teaching Reproducibility

This project was completed as a BPS-funded undergraduate summer research assistantship. Consistent with this, one of our aims was to reflect on the pedagogical potential and challenges of integrating authentic computational reproducibility activities into undergraduate syllabi. Such activities align with several learning outcomes specified by the BPS (2024), including critical thinking, managing and analyzing different types of quantitative data, using specialist software, and awareness of open science and reproducibility issues in research. When complemented with exercises like those developed by McCarley and Soicher (2022), computational reproducibility activities give students hands-on experience with the full range of benefits and challenges associated with reproducibility as both producers and consumers of research. They give students opportunities to explore what “best practice” can look like by observing where published research falls short, which they will carry forward into subsequent studies and, where applicable, capstone projects. As many of today's psychology students will be tomorrow's psychological scientists, such opportunities are vital to improving the future quality of psychological science. Furthermore, computational reproducibility activities help reinforce the point that mistakes are commonplace, even among experienced researchers (see Breznau et al., 2025, for a vivid example in the context of computational reproducibility research), and that a clear audit trail is vital for identifying and correcting these. Alongside multiverse studies (see Allen et al., 2025a), they also provide opportunities to discuss analytic alternatives and their implications. We did not attempt to judge the appropriateness of the data processing techniques and statistics used in the 60 studies, but within a classroom context, computational reproducibility activities afford students detailed knowledge of what researchers

On average, it took AA less than an hour to reach a conclusion regarding each initially selected result, followed by an additional 5–10 min for the second result, where applicable. Using only notes developed during his first 2 years of the BSc Psychology, his reproduction success rate was 52%. This was increased to 61% with a modest amount of further research. Notably, PA, with over 25 years of relevant experience (as a student, teacher, and researcher), could only increase the overall success rate to 72%. This suggests that, with appropriate scaffolding, computational reproducibility activities can be successfully deployed in undergraduate research methods classes. We would recommend that educators seeking to do this start with one or two results based on recently taught statistics, which are known to be reproducible with a modest amount of effort. Then, as students’ confidence builds, they can be given results that require greater effort, along with results that are not reproducible. Finally, students can be challenged to reproduce results of personal interest that they source from the primary literature.

Challenges like this can bridge a pedagogic gap by bringing students closer to authentic research practices. For Bauer et al. (2023), they represent a “great opportunity” (p. 121) for students to experience the work of a practicing scientist. Of course, not all psychology students are training for a career in research. Computational reproducibility activities can also help students develop a range of transferable skills, which are valued in a wide range of industries. These include analytical thinking, adaptability, integrity, flexibility with new systems, and familiarity with a range of software (Naufel et al., 2019). Explicitly connecting these skills to classroom activities is known to improve student outcomes (Miller & Favelle, 2022).

Limitations and Future Research

In this study, we established two benchmarks for assessing the quality of psychology learning and teaching research. The first is the proportion of studies that are sufficiently open to permit assessment of their computational reproducibility, which is disappointingly low. The second is the proportion of that open research that is computationally reproducible, which appears encouragingly high, though still short of ideal. However, substantial gaps remain in our understanding of the computational reproducibility of this field.

First, in most cases, we avoided attributing differences between our results and those reported in the original papers to errors made by the original researchers. Despite our best efforts, some differences could reflect mistakes on our part. Furthermore, although we were able to identify likely causes for certain differences (e.g., rounding or incorrect degrees of freedom), many remained unexplained. Future researchers could address these uncertainties by seeking the assistance of the original authors. When Hardwicke et al. (2021) did this, their reproducibility success rate increased dramatically.

Second, identifying key claims was often more ambiguous than anticipated. In many instances, there were several reasonable possibilities that we could have pursued. Moreover, when multiple results supported a single claim, our decision to select the largest effect, followed by a second randomly chosen effect, was arbitrary. Future work could overcome these limitations by examining a larger set of claims and/or results within each study. Researchers might, for example, adopt the approach of Crüwell et al. (2023), who sought to reproduce all the results reported in a single issue of

Third, only 10% of the research papers we initially screened were accompanied by open data and therefore eligible for inclusion in our sample. Within the context of psychology learning and teaching research, future researchers should explore the extent to which data described as ‘available on request’ are in fact available, and whether the results reported in the papers accompanying these data are reproducible.

Finally, we have proposed that authentic computational reproducibility activities can be successfully integrated into undergraduate research methods classes and briefly outlined potential strategies for doing this. However, these strategies have not been tested. Future researchers should, therefore, explore how to best implement and evaluate the effectiveness of such activities. Particular attention should be paid to the experiences of students who are less enthusiastic about research methods and statistics and who receive less support than AA received in the present study.

Conclusion

In this study, we were able to exactly reproduce 73 (72.3%) of 101 results reported in 60 papers published in ToP, PLaT, and SoTL-P between 2017 and 2025. An undergraduate student achieved the majority of these reproductions with access to the range of resources and experiences typical for someone approximately midway through an undergraduate degree in psychology. These results demonstrate two main points. First, psychology learning and teaching research is comparatively computationally reproducible, although there is clearly still room for improvement. We have provided several recommendations for researchers in this field to help them improve the reproducibility of their future work. Second, computational reproducibility activities can effectively address many undergraduate research methods and statistics learning outcomes and can help students develop both subject-specific and transferable employability skills. We have outlined these skills and suggested strategies that educators can explore for introducing authentic computational reproducibility activities into their undergraduate research methods syllabi.

Footnotes

Acknowledgements

We would like to acknowledge Dr. Dorota Bednarek for her role in securing the funding for this research and her support throughout this project, Dr. Marin Dujmović for his assistance with the initial data extraction and screening processes, and the MSc students who helped with the initial screening. This research was funded through the British Psychological Society's Undergraduate Research Assistantship Scheme.

Author Contributions

AA: conceptualisation, methodology, investigation, formal analysis, data curation, writing–original draft, and writing–review and editing. PA: conceptualisation, methodology, validation, writing–original draft, and writing–review and editing.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded through the British Psychological Society's Undergraduate Research Assistantship Scheme.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Open Practices

For publishing their material, Almuhanna and Allen received badges for open data, open materials and preregistered.

Transparency and Openness Statement

This study was preregistered at https://osf.io/efhnp. The raw data underpinning this research are available at ![]() .

.