Abstract

Data-driven decision-making (DDDM) is a difficult topic to cover, but typically required, in the applied educational psychology course or other courses required for teacher licensure in the United States. While a growing body of literature indicates in-service teachers are resistant to DDDM and underprepared to engage in it, little has been done to understand pre-service teachers while they are still in the ideal arena in which to address resistance and subsequently build DDDM skills. The purpose of this study was to examine pre-service teachers’ affective response to the classroom-level DDDM via their concerns profile (

Pre-service Teacher Concerns Regarding Classroom-Level Data-Driven Decision-Making

The purpose of the current study was to explore pre-service teachers’ concerns about classroom-level, teacher-directed data-driven decision-making (DDDM) while enrolled in a required applied educational psychology course in the United States (US). To date, little research on pre-service teachers and DDDM exists, and

Literature Review

In this literature review, the theoretical framework for this work—concerns theory—will be explained. Subsequently, DDDM and the role of US teacher education programs in preparing data-literate future educators will be discussed. Finally, current understandings of in-service teacher concerns about DDDM and the situational factors that may impact pre-service teacher concerns will be examined.

Concerns Theory

The Concerns-Based Adoption Model (CBAM) arose from the work of Hall and Hord (1987, 2011) and served as the theoretical framework for this study. Concerns theory is useful in understanding teachers’ affective responses, such as resistance, to educational innovations. More than 30 years of research indicates that concerns are predictive of teachers’ adoption of a targeted innovation (George, Hall, & Stiegelbauer, 2006). The remaining literature review presents Hall and Hord’s (1987; Hord, Rutherford, Huling-Austin, & Hall, 2005) CBAM, DDDM and DDDM in teacher education, research on in-service teacher concerns about DDDM, and the gap in the literature regarding future teachers’ concerns about DDDM.

Concerns are one’s thoughts, considerations, feelings, worries, satisfactions, and frustrations related to an innovation. More simply, they are an emotional response to an educational innovation, in this case DDDM (Hall & Hord, 1987). Concerns help us understand not only the likelihood of a teacher or future teacher adopting an educational innovation, but also the reasons why they may or may not adopt the new practices (George et al., 2006; Hall & Hord, 1987). This understanding can be used to help tailor and drive more impactful professional development. In the pre-service setting, understanding students’ concerns can help instructors increase interest, buy-in, and willingness to one day adopt the best practices they are presented with during teacher education training (Lotter, 2004).

Hall and Hord (2011) described the CBAM as “an empirically based conceptual framework that describes, explains, and predicts probable teacher behavior based upon relevant concerns as a teacher participates in developmental activities and implements an innovation” (as cited in Dunn, Airola, & Garrison, 2013a, p. 674). Hall and Hord’s (1987) CBAM includes seven stages of concern that follow a developmental pattern and are organized into three categories: Self, Task, and Impact. The seven stages are Unconcerned, Informational, Personal, Management, Consequence, Collaboration, and Refocusing. Self Concerns pertain to what one knows about an innovation and how the innovation will impact the individual. This level includes the first three stages of concern: Unconcerned, Informational, and Personal. Stage 0, or Unconcerned, reflects a lack of awareness or interest in a specified innovation. Stage 1, or Informational Concerns, indicates an awareness of the innovation as well as an interest in learning more or an awareness of a need to learn more about the innovation. Stage 2, or Personal Concerns, involves thoughts about how the innovation will impact the individual as well as concerns about their ability, personal adequacy, demands that will result from the innovation, and how they will be evaluated, punished, and rewarded. The Task level includes only one stage of concern, Stage 3, Management Concerns. Individuals with Management Concerns focus on logistics of implementation and the resources and support available to them for implementation (George et al., 2006; Hall & Hord, 1987).

The more mature or user concerns are the Impact Concerns. Impact-level concerns include Stages 4 through 6. Stage 4, or Consequence Concerns, reflects thoughts, worries, and preoccupations regarding how an innovation will help or hurt students. In Stage 5, or Collaboration, teachers express a desire to work with others to increase and improve use of the innovation. In Stage 6, or Refocusing, teachers are interested in modifying or improving the innovation to improve outcomes (George et al., 2006; Hall & Hord, 1987). It is not just the highest manifesting concern that must be considered when understanding teacher adoption of innovations; the position and strength of concerns relative to one another also inform the understanding of where a teacher is in the change process related to adopting an innovation. The Stages of Concern Questionnaire (SoCQ) and the SoCQ manual are the tools used to produce the concerns profile required to interpret peak concerns and the implications of the relative position of concerns.

The SoCQ manual explains, in detail, how to interpret profiles across various dimensions including user versus non-user profiles, strongest peaks, and relative position of stages as compared with adjacent stages. For example, a non-user profile has a peak in the Self or Task stages of concern (Stages 0–3). A peak is a stage that is at least 10 percentile points higher than its adjacent stages, and such a peak is a dominant feature in the teacher or faculty’s concerns system. For example, a peak at Stage 4 indicates teachers are thinking about how the innovation will affect their students. The relative position of Stages 1 and 2 to each other and the relative position of Stages 5 and 6 to each other have significant meaning. If Stage 2 is higher than Stage 1, the teacher has greater concerns about how the innovation will impact them and will resist learning more about the innovation. If Stage 6 is higher than Stage 5 by even one percentile point, the teacher or faculty are looking for ways to undermine implementation of the innovation.

DDDM

While the “data” in DDDM in the US do include end-of-year state mandated tests, data also include formative assessments, teacher-made tests, competency-based assignments and projects, conversations with students, as well as attendance and other behavioral data. For example, a teacher may use a prior year’s summative standardized exam to determine a student’s strengths and weaknesses, and use early formative assessment in the new school year to assess the stability of these attributes after summer break. By talking with the student and setting goals, the teacher collects further data to guide her practice and the student’s path. Over time, teacher-made tests and assignments will reveal more about the student, their growth toward goals, and further inform future instruction.

DDDM and Teacher Education

In the US, a number of groups influence or take part in the governance, supervision, and evaluation of teacher education programs. For example, the American Psychological Association (APA) developed principles for K-12 teaching and learning and advocates for an emphasis on DDDM (APA, Coalition for Psychology in Schools and Education, 2015). Of APA’s 20 principles for teaching and learning, three relate to assessing student progress, understanding basic psychometrics, and understanding student data. While APA’s principles may be considered recommendations for good practice, the Council of Chief State School Officers’ (CCSSO) principles have a far more direct influence on teacher education programs. Their principles also share significant overlap with the APA principles with regard to DDDM.

The CCSSO developed the Interstate Teacher Assessment and Support Consortium (InTASC) principles to outline what must be covered in teacher preparation course work. In addition, the Council for the Accreditation of Educator Preparation (CAEP) evaluates how well teacher education programs cover these standards. Subsequently, CAEP evaluation teams may either support or deny accreditation of programs based on how well these standards are met. The InTASC principles explicitly identify assessment skills as a critical training component and skill set related to instructional practice (CCSSO, 2011). Notably, the sixth InTASC standard states it is essential “[t]he teacher understands and uses multiple methods of assessment to engage learners in their own growth, to monitor learner progress, and to guide the teacher’s and learner’s decision making” (CCSSO, 2011, p. 9). Thus, future teachers should leave a teacher education program prepared to use classroom and standardized assessment data to identify learning disabilities, to discern gaps in student understanding, to determine instructional weaknesses/professional development needs, and to guide everyday and long-term instructional decision-making.

According to these oversight and accrediting bodies, US teacher preparation curriculum should teach future teachers to create and interpret formative and summative classroom assessments, to comprehend the purpose of and how to interpret the wide variety of standardized assessment scores (e.g., norm-referenced, criterion-referenced, grade equivalent), and to connect data to their professional practice. Connecting data to practice includes tailoring instruction to meet individual student learning needs and whole-class learning needs, and identifying personal needs for professional development. Various teacher education programs may approach this in a myriad of ways, but it is mandated that these instructional practices be covered in some way.

Resistance to DDDM

This evidence-based instructional paradigm has repeatedly been shown to help teachers effectively facilitate student learning and achievement (Carlson, Borman, & Robinson, 2011; Evans, 2009; Scheurich & Skrla, 2003; Wayman, Midgley, & Stringfield, 2006). However, school cultures are often resistant to the idea of using data to drive instruction in the US (Dunn et al., 2013a) and across the globe (Brown, Lake, & Matters, 2010; Remesal, 2011; Schildkamp & Kuiper, 2010). This is not without just cause. The enactment of No Child Left Behind (NCLB) introduced a reward and punishment mechanism in association with standardized testing outcomes. Standardized tests can be powerful tools for educators, backed by decades of psychometric work, but NCLB’s model has bred more resistance to DDDM (Mertler, 2007; Dunn et al., 2013a).

The high-stakes carrot-and-stick model, connected to standardized testing outcomes, is contrary to decades of motivation research and has turned data into what teachers may perceive as a weapon wielded against them within their profession, the research literature, and the popular media. For example, the school funding, teacher evaluation, and teacher pay structure attached to DDDM serve as external rewards. An ample body of research indicates these types of external rewards do little to increase higher-level behaviors like DDDM, nothing to maintain them, and often much to decrease them (Gneezy, Meier, & Rey-Biel, 2011; Ryan & Deci, 2000). While DDDM entails the use of a wide array of student data to inform instructional decision-making, the negative impact of tying extrinsic motivators, namely funding and teacher pay, to standardized assessment has likely negatively colored how educators view DDDM (Mertler, 2007; Dunn et al., 2013a).

While the problem of resistance has been identified and minimally studied in practicing teachers, there is a scarcity of research on the issue of resistance to DDDM in pre-service teachers (Datnow & Hubbard, 2015; Dunn et al., 2013a). This is unfortunate, as teachers are the major change agents for any innovation as well as student success, and because teacher education is the ideal arena in which to address training needs. This study sought to begin to fill the gap in our understanding of future teachers’ affective responses to the DDDM pedagogical paradigm.

Concerns and DDDM

Dunn and her colleagues studied teacher concerns regarding DDDM (Dunn et al., 2013a; Dunn, Airola, Lo, & Garrison, 2013b; Dunn et al., 2013c). They worked with 1,579 teachers in 25 school districts, finding that the teachers were both resistant to DDDM and reluctant to engage in classroom-level DDDM. More specifically, the teachers’ concerns profile revealed they were “going through the motions,” or superficially engaging in district-desired DDDM practices, and were not true adopters or users (Dunn et al., 2013c, p. 678). This was indicated by SoCQ peaks at Stage 0: Unconcerned and Stage 6: Refocusing. In addition, the teachers not only believed they knew of better innovations to use, but were also actively exploring other courses of action.

Of particular interest were the negative one–two split (Stage 2: Personal was higher than Stage 1: Informational) and the tailing-up (Stage 6: Refocusing was higher than Stage 5: Collaboration). The negative one–two split indicated that teachers were not open to learning more about DDDM because they were very worried about how DDDM would affect them (e.g., teacher evaluations). The tailing-up was extreme (16 percentile points) (Dunn et al., 2013a), and should “be heeded as an alarm” (George et al., 2006, p. 42) to the likelihood that teachers would seek to undermine implementation of the desired DDDM practices in their schools. Through targeted professional development efforts, the teachers’ profiles improved and many teachers became classroom-level data-users and active members of school-level data teams (Airola & Dunn, 2011).
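The interpretation rules described above (a peak at least 10 percentile points above adjacent stages, a negative one–two split when Stage 2 exceeds Stage 1, and a tailing-up when Stage 6 exceeds Stage 5) can be expressed as a short check. The following is a minimal sketch, not the SoCQ manual's procedure: the function name `interpret_profile`, the `peak_margin` parameter, and the example percentiles are all hypothetical and are not data from any study cited here. The example values were invented so that the tailing-up equals the 16 points mentioned above.

```python
from typing import Dict, List, Union


def interpret_profile(
    percentiles: List[float], peak_margin: float = 10.0
) -> Dict[str, Union[List[int], bool, float]]:
    """Flag profile features from seven stage percentiles (Stages 0-6).

    Hypothetical helper: it encodes only the three rules summarized in the
    text, not the full interpretation guidance of the SoCQ manual.
    """
    if len(percentiles) != 7:
        raise ValueError("expected one percentile for each of Stages 0-6")

    peaks = []
    for stage, value in enumerate(percentiles):
        # Stages 0 and 6 have a single neighbor; the others have two.
        neighbors = [percentiles[i] for i in (stage - 1, stage + 1) if 0 <= i <= 6]
        if all(value - n >= peak_margin for n in neighbors):
            peaks.append(stage)

    return {
        "peaks": peaks,
        # Personal (Stage 2) higher than Informational (Stage 1).
        "negative_one_two_split": percentiles[2] > percentiles[1],
        # Size of the rise from Stage 5 to Stage 6, or 0 if there is none.
        "tailing_up": max(0.0, percentiles[6] - percentiles[5]),
    }


# Invented percentiles for illustration only (not study data).
example = [95, 70, 78, 60, 40, 30, 46]
result = interpret_profile(example)
print(result)
```

With these invented values, the sketch flags peaks at Stages 0 and 6, a negative one–two split, and a 16-point tailing-up, which is the general shape of the in-service profile described above.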

For pre-service teachers, it seems a simple task to prepare them to use data to guide their instructional decision-making. Yet, as many teacher educators may agree, this task is not as simple as it sounds. Recent research suggests that teachers are not adequately prepared to engage in DDDM practices (Davidson & Frohbieter, 2011; Dunn et al., 2013a; Mandinach & Gummer, 2013; Wayman & Cho, 2008), and that they are aware of this gap in their training (Means, Padilla, DeBarger, & Bakia, 2009). While literature regarding the lack of teacher readiness to engage in DDDM is readily accessible, no research has examined reasons why pre-service teachers may still be entering the profession underprepared.

The persistence of this phenomenon is somewhat surprising, as the national core standards for teacher preparation in the US are provided by CCSSO’s InTASC principles and required by their accrediting body, CAEP. These groups and APA specifically identify assessment skills as a critical training component and skill set related to instructional practice (APA, Coalition for Psychology in Schools and Education, 2015; CCSSO, 2011). Although the breadth, depth, and quality of coverage for InTASC’s sixth standard likely vary across different classrooms and campuses, it is expected to be covered in all teacher education programs. While these coverage-related issues would support valid lines of inquiry, it is also important to identify non-curricular issues that may impede new teacher readiness to engage in DDDM. In other words, it is important to ask, “If we are covering it nationally, why is there still such powerful resistance, and where does the resistance begin?” It was hypothesized that like in-service teachers, pre-service teachers may already be resistant to DDDM. Soon-to-be new teachers spent their formative years in the same school cultures that are often resistant to DDDM. Moreover, they are the products of the NCLB era.

The majority of traditional teacher education students, who will complete their teaching degree in four years and graduate in the spring of 2016, were in the third grade when NCLB was put into practice in 2002. In an ongoing study, Dunn and her colleagues are discovering that pre-service teachers not only equate DDDM with their K-12 experiences with standardized testing, but overwhelmingly, whether they were “good” or “bad” test-takers, viewed those experiences negatively (Dunn, Skutnik, Patti, & Sohn, in progress).

It may be that pre-service teacher resistance begins when they are still K-12 students, but it is important to first establish if and what kind of resistance exists in pre-service teachers. The purpose of the current study was to address our gap in understanding why, if they were taught about DDDM, newly minted teachers might continue to enter the workforce lacking readiness to engage in DDDM. To do so, the CBAM and the SoCQ were used to identify and interpret pre-service teachers’ concerns related to the adoption of DDDM practices. Based on the existing literature, it was expected that this sample of pre-service teachers would present a non-user concerns profile, which included resistance to the concept, indicating a low likelihood of adopting DDDM practices in their future classrooms.

Methods

The following section describes this study’s participants, procedures, measures, and data analysis techniques used to answer the following research question: After receiving DDDM training, what does the SoCQ concerns profile reveal about this sample of pre-service teachers’ concerns about DDDM?

Participants

The participants in this study were 78 fully admitted teacher education students enrolled in a required applied educational psychology course at a large Midsouthern university in the US. This course covers assessment and DDDM. At the time data were analyzed, 137 students had completed the surveys for a class assignment, but only 78 provided consent for inclusion in this study.

Participants were primarily female (91%) and predominantly Caucasian (89%). The remaining participants’ ethnicity may be described as follows: African American (

Procedures

Prior to collecting new data, Institutional Review Board approval was garnered. Subsequently, students were recruited by soliciting informed consent for the inclusion of their data from classroom activities in this study. As part of this class, students completed surveys to assess their concerns regarding DDDM. All data were collected online using Qualtrics. To submit responses, all items had to be answered. Thus, there are no missing data. Submission of data was not voluntary, as it was part of class activities. However, inclusion of one’s data for research purposes was voluntary.

Surveys were submitted after completing an online training unit and 6 hours of class meetings and related work regarding DDDM. The online unit presented information in a textbook-like fashion using an adaptive release function in Blackboard. After a small unit of reading, adaptive release required students to earn a 100 percent score on an embedded quiz prior to moving on to the next small unit of DDDM-related text. The materials also included videos about how to interpret different types of scores as well as teacher testimonials related to how they used data to plan for individual students, class lessons, and parent–teacher conferences. Students also participated in a role-play activity in which they took turns using data to play the part of a teacher explaining to a parent how their student was doing in the hypothetical class.

Measure

The SoCQ was utilized to assess participants’ concerns about the possibility of using DDDM practices. SoCQ percentile scores were used to create a Stages of Concern profile (George et al., 2006; Hall et al., 1979). The SoCQ consists of 35 items, includes seven scales, and uses a seven-point Likert scale. The seven scales assess the seven stages of concern—Unconcerned (Stage 0), Informational (Stage 1), Personal (Stage 2), Management (Stage 3), Consequence (Stage 4), Collaboration (Stage 5), and Refocusing (Stage 6).

Stage 0 items assessed if respondents were really considering DDDM (five items; example item: I am more concerned about another innovation). Stage 1 items assessed if respondents held concerns regarding a need for more information or possessing too little information about DDDM (five items; example item: I have a very limited knowledge of DDDM). Stage 2 items assessed if respondents were concerned about how the innovation would impact them (five items; example item: I would like to know the effect of DDDM on my professional status). Stage 3 items assessed if respondents were concerned about how to incorporate and then manage DDDM in their future day-to-day work (five items; example item: I am concerned about conflict between my interests and my responsibilities). Stage 4 items assessed if respondents were concerned about how DDDM will impact others (five items; example item: I am concerned about how DDDM affects students). Stage 5 items assessed respondents’ concerns about working with colleagues on DDDM (five items; example item: I would like to coordinate my effort with others to maximize DDDM’s effects). Stage 6 items assessed respondents’ concerns about improving upon or moving beyond DDDM (five items; example item: I am concerned about revising my use of DDDM).

More than 30 years of research supports the validity and reliability of the SoCQ (see George et al., 2006). The Cronbach’s alphas for the pre-service sample supported the reliability of the SoCQ in this study (0.84, 0.83, 0.87, 0.77, 0.63, 0.88, 0.75 for Stages 0 through 6, respectively) according to Nunnally’s (1967) cut off for acceptability and George and Mallery’s (2003) cut off for approaching acceptable (α > 0.60). While the Stage 4 (Consequence Concerns) subscale’s internal consistency level was lower relative to the other scales, this was unsurprising as pre-service teachers do not yet have a firm grasp of the implications of policy and practice on students. Yet, the participants’ understanding and experience levels did support responses that met the internal consistency level for Stage 4 (α > 0.60). The SoCQ constructs are expected to correlate to varying degrees, sometimes very strongly, as concerns theory suggests, making the use of factor-analytic techniques improper. The validity of the measure was determined via a 2-year, labor-intensive process in which “intercorrelations matrices, judgments of concerns based on interview data, and confirmation of expected group differences and changes overtime were used to investigate the validity of SoCQ scores” (George et al., 2006, p. 12).
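For readers less familiar with the statistic, Cronbach's alpha for a scale of k items is k/(k − 1) multiplied by one minus the ratio of the summed item variances to the variance of the total score. The following is a minimal sketch of that standard formula and of the α > 0.60 check applied above; the function name `cronbach_alpha` and the toy response matrix are hypothetical and are not the study's data or analysis code. Only the seven alpha values are taken from the text.

```python
from statistics import variance
from typing import List, Sequence


def cronbach_alpha(responses: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for rows of respondents and columns of items.

    Uses sample variances throughout. Hypothetical helper for illustration.
    """
    if len(responses) < 2:
        raise ValueError("need at least two respondents")
    k = len(responses[0])
    if k < 2:
        raise ValueError("need at least two items")

    item_variances = [variance([row[j] for row in responses]) for j in range(k)]
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")

    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Toy data invented for illustration: three respondents, two items.
toy = [[1, 2], [2, 1], [3, 3]]
toy_alpha = cronbach_alpha(toy)

# Alphas reported in the text for Stages 0-6, checked against the 0.60 cut off.
reported_alphas: List[float] = [0.84, 0.83, 0.87, 0.77, 0.63, 0.88, 0.75]
all_meet_cutoff = all(alpha > 0.60 for alpha in reported_alphas)
lowest_stage = reported_alphas.index(min(reported_alphas))
print(round(toy_alpha, 3), all_meet_cutoff, lowest_stage)
```

The check confirms that every reported value exceeds 0.60 and that the lowest value belongs to Stage 4, as stated above.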

Data Analysis

The SoCQ profile was created, analyzed, and interpreted according to the SoCQ manual (George et al., 2006). Scores for each of the seven scales or stages of concern were computed for respondents using raw scores. Raw scores were the sum of the responses to the five questions matching each of the seven stages of concern. The average responses for each question were converted to percentiles and graphed to create the SoCQ profile. The SoCQ profile graphically presented the percentiles to illustrate the relative intensity of each stage of concern for interpretation purposes.
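The raw-score step can be sketched briefly. This is an illustration under stated assumptions, not the study's analysis code: the function name `raw_stage_scores` is hypothetical, and the item-to-stage key below is a placeholder that simply assigns consecutive blocks of five items to each stage. The actual item key and the raw-score-to-percentile table are given in the SoCQ manual (George et al., 2006) and are not reproduced here.

```python
from typing import Dict, List, Sequence

# PLACEHOLDER key (assumption): zero-based item positions for each stage.
# The real SoCQ interleaves items across stages; see the manual for the key.
PLACEHOLDER_STAGE_ITEMS: Dict[int, List[int]] = {
    stage: list(range(stage * 5, stage * 5 + 5)) for stage in range(7)
}


def raw_stage_scores(
    responses: Sequence[int],
    stage_items: Dict[int, List[int]] = PLACEHOLDER_STAGE_ITEMS,
) -> List[int]:
    """Sum one respondent's 35 item responses into seven raw stage scores."""
    if len(responses) != 35:
        raise ValueError("the SoCQ has 35 items")
    return [sum(responses[i] for i in stage_items[stage]) for stage in range(7)]


# Invented responses for illustration only: the values 0..34 in item order.
scores = raw_stage_scores(list(range(35)))
print(scores)
```

Converting these raw scores to percentiles would require the lookup table from the manual, which is why that step is left out of the sketch.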

Results

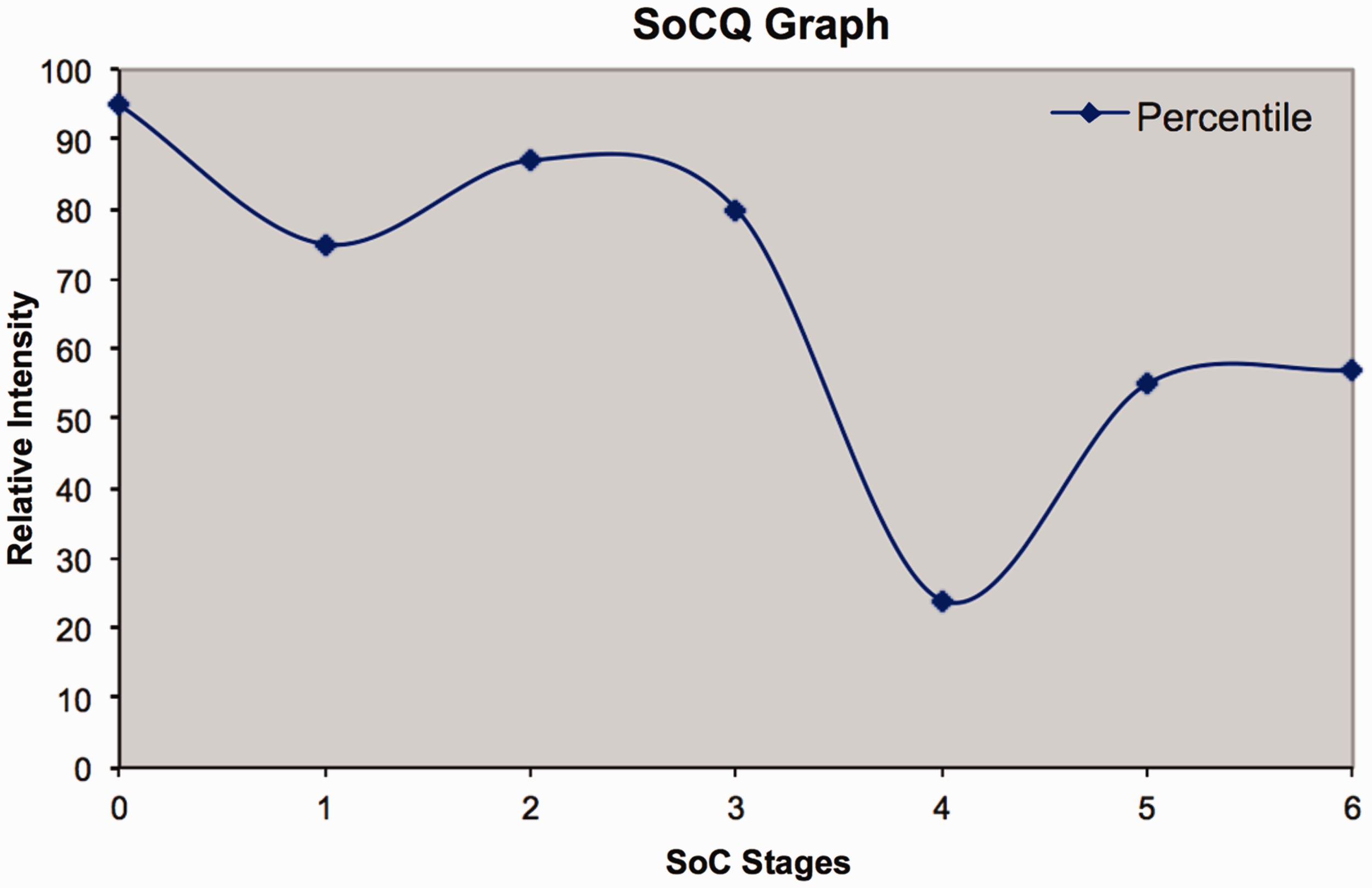

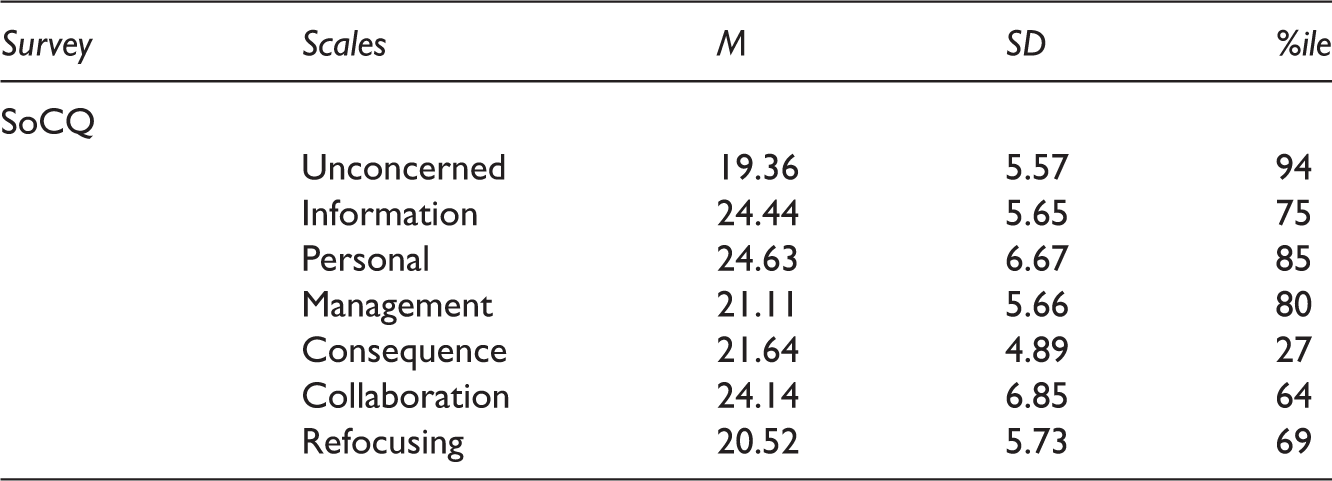

Means and standard deviations are presented in Table 1 for the SoCQ scales. Figure 1 presents the resultant SoCQ profile. Subsequently, the profile is explained in accordance with the SoCQ manual (George et al., 2006).

Figure 1. SoCQ profile for pre-service teachers for DDDM. The y-axis represents the percentile score. The x-axis numbers represent the stages of concern (0–6).

Table 1. 3DMEA and SoCQ means, standard deviations, and percentiles.

According to the SoCQ manual, Figure 1 is a non-user profile with both a negative one–two split and a tailing-up. The dominant peak at Stage 0 (Unconcerned) and the generally high self concerns (Stages 1 and 2) are indicative of a non-user profile as per George and colleagues’ (2006) interpretation guidelines. The negative one–two split, in which Personal Concerns (Stage 2) are higher than Informational Concerns (Stage 1), indicated that the respondents were not receptive to learning more about DDDM until their concerns regarding how DDDM will impact them, how they will be evaluated, and how they will be rewarded or punished are addressed. This suggests that these respondents were not open to learning about DDDM, and if presented with further information, their resistance would likely increase. The tailing-up was indicated by the five-percentile-point increase from Stage 5 to Stage 6 (George et al., 2006). Even a small tailing-up suggests that the group may hold some reservations about the possibility of adopting DDDM practices in their future classroom, that the respondents believe they know of other approaches that likely work better, and that they will likely pursue those alternative instructional practices (George et al., 2006).

Limitations

The current study has several limitations. First, a convenience sample was utilized, and the sample was small, relatively homogeneous, and from one university. These limitations curtail the generalizability of the findings. The use of only self-report survey data was another limitation of the study. Further, the only data analyzed followed instruction; future research is underway to utilize a pre–post design with a larger sample. No interviews were conducted, and no observational data were collected, which would have provided greater depth of understanding. In addition, the response rate was somewhat low (43%). This is not an unusually low response rate for an online survey that was not required as part of course work (Nulty, 2008). Because teacher education students are a relatively homogeneous population, it is difficult to determine any particular differences between those who responded and those who did not. Finally, the pre-service teachers were not followed into their internship or professional practice; thus, developmental conclusions cannot be made based on the current data set. While future researchers should consider addressing these limitations, the present results provide interesting insights into pre-service teachers’ affective response to DDDM, which may impede the impact of DDDM-based education and training.

Discussion

As was hypothesized, the pre-service teachers presented a non-user, resistant-to-DDDM profile, and the results have implications for instruction in these required introductory educational psychology courses. The higher relative position of their Personal Concerns to their Informational Concerns indicated that providing more facts about DDDM was not just inappropriate, but likely counterproductive. The more information pushed at these pre-service teachers, who are worried about how DDDM may affect them—how they will be judged and the fairness of related processes—the less likely they are to retain the information and to adopt DDDM practices. This finding was similar to Dunn and her colleagues’ (2013c) results with practicing teachers. The pre-service teachers in this sample also manifested a tailing-up, denoting a resistance to DDDM practices and willingness to undermine them. Their tailing-up was nine percentile points smaller than that of the practicing teachers. As the pre-service teachers’ use of DDDM in the classroom is still in the future, it is not surprising that the tailing-up is somewhat smaller. What is important, however, is even a small tailing-up is a call to action as the CCSSO’s (2011) InTASC recommends that we train future teachers and expect practicing teachers to engage in DDDM at the classroom level. For teacher educators, whether working with practicing or future teachers, it is clear that concerns such as these must be considered in concert with DDDM content if any change is to result. In sum, the results of this study not only align with what is known about practicing teachers, but results also revealed that resistance to DDDM exists early upon entrance to a teacher education program and regardless of 2 weeks of DDDM training. Thus, these entrenched views and concerns must be understood, unpacked, and addressed specifically and prior to presenting DDDM content in order to decrease the likelihood that resistance to DDDM continues.

Future Research

The current line of study suggests there may be an important developmental pattern for teacher resistance to DDDM. While resistance to DDDM in practicing teachers has been identified in the literature (Brown et al., 2010; Dunn et al., 2013c; Remesal, 2011; Schildkamp & Kuiper, 2010), the results of this study suggest that resistance begins before teachers fully enter the profession. Ongoing research is starting to examine teacher education students’ reflections about their experiences with testing and how their teachers used data during their experiences as K-12 students (Dunn et al., in progress). However, much more work is needed to understand this systemic issue.

The existing evidence suggests that continuing to present more information and training to teachers and teacher education students may be ineffective and a poor use of resources; instead, future researchers and teacher educators may need to take a prescriptive approach, diagnosing possible resistance and other variables that may influence one’s willingness to learn about and adopt DDDM practices and prescribing appropriate instructional strategies for these challenges.

Recommendation for Practice

One evidence-based model for this type of prescriptive practice is the ‘teaching as persuasion’ metaphor or model. This metaphor, which was once prevalent in the literature, may need to be revived for preparing future and current teachers to use data. In this metaphor, the instructor uncovers reasons why an individual or a group may resist a new concept and shares information that supports the valiance of the new concept (Murphy, 2001). By exposing issues and misunderstandings that lead to resistance to DDDM and addressing them, instructors may help future teachers become more open to learning about DDDM and more likely to adopt DDDM practices in the future.

For example, a common misconception from the students in this study was that DDDM only includes standardized assessments, not teacher observations or classroom activities. One student’s pre-training comment regarding DDDM captures this misconception well: “I do not think teachers should base their instructional strategies and lessons so much on assessment and data, but also on classroom and student observations. Student scores on assessments are important, but there are also just as important factors.” This type of misconception is easy to deal with, if you know it exists. By presenting a case study with teacher notes, homework, tests, and standardized tests, a student may begin to better understand what DDDM truly is and feel less resistant to the idea. Future research should explore the impact of using a teaching as persuasion approach to presenting DDDM to future teachers.

Conclusions

Ultimately, what this study may do is help those of us who teach these introductory educational psychology courses that cover DDDM to better prepare future teachers to not only be open to data use, but to also effectively use data to drive classroom practice, improving teacher and learner outcomes. The current findings align with research on practicing teachers. Simply put, they are worried about how data may affect them, and they do not buy in to the idea of using data to drive instruction. Two weeks of material was not sufficient to change this entrenched belief system in pre-service teachers; more persuasive approaches to instruction must be implemented to help decrease student resistance to DDDM instructional materials and ultimately increase the likelihood that these future teachers will utilize data to guide their instructional decision-making. As teacher educators, educational psychologists must work to better understand these issues and to help our students see DDDM as a powerful tool for improving teaching and learning as suggested by InTASC.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The University of Tennessee's Office of Information Technology funded this research via their Project RITE (Research of Instructional Technology in Education) grant program.