Abstract

Applying qualitative methods, this paper examines the burgeoning of quality assurance databases, processes and networks of actors in the field of higher education in Europe. Our main argument is that there has been a move from the Bologna Process being the near singular focus for European-level coordination and harmonisation of higher education, towards the making of a much more diverse and complex quality assurance and evaluation infrastructure. This infrastructure involves a range of distinct but interdependent actors and processes and contains explicit and implicit interlinkages with the production of wider policy agendas, such as the rise of the European Education Area. The aim of this paper is to analyse the growth and complexity of Quality Assurance (QA) in higher education (HE) in Europe, as a way of understanding the multifaceted and continuously developing process of Europeanisation.

Keywords

Introduction

Since the foundation of the EU, higher education has always been central to Europeanisation, a process that intensified with the Bologna Process (1999). However, as with higher education itself, Europeanisation is an organic, living entity, taking root and growing in unexpected ways. No matter the gardener’s efforts, the European space shapes and is being shaped by a project that develops multiple roots, takes different directions and is becoming ever more complex and difficult to disentangle (Carter et al., 2015). The aim of this paper is to analyse the growth and complexity of Quality Assurance (QA) in higher education (HE) in Europe, as a way of understanding the multifaceted and continuously developing process of Europeanisation. For this purpose, we will apply the concept of ‘epistemic infrastructure’ (Grek, 2022) in order to show how QA in HE in Europe, though still firmly rooted in the Bologna Process, has become a complex knowledge infrastructure with new materialities and actors that have significantly expanded in novel ways. As a result, it has led to the development of intricate webs of education actors and data that have strengthened the emergence of a European education policy space. In fact, the latter is not an imagined space any longer, either to be embraced or resisted; it has become the officially announced and strategically drawn European Education Area, as a single and unified EU policy arena.

Since the turn of the century, a powerful device for the construction of the European education policy space has been the incessant generation of statistical data to monitor performance (Lawn, 2011; Lawn and Grek, 2012; Grek, 2016). The datafication of education policy (Grek et al., 2020) occurred alongside – and partly led to – a fixation on notions of quality assurance and evaluation (Ozga et al., 2011). Indeed, recent decades have seen the notion of ‘quality’ becoming central to attempts to control and develop both public and private institutions, as evident through the proliferation of terms such as ‘quality assurance’, ‘quality enhancement’, ‘audit’ and ‘quality monitoring’ (Jarvis, 2014). While industrialisation brought the idea of quality assurance to the fore, as the means by which to ensure mass-produced goods could withstand an ‘objective’ quality test against a set of predetermined criteria, after the 1980s and the rise of New Public Management, ‘quality’ acquired a double meaning. It now relates not only to the quality of products or services but, crucially, represents a key criterion for judging how organisations are run. ‘Quality gurus’ emerge and quality assurance processes travel from organisation to organisation (Power, 2003). Quality must be measured quantitatively and at all times, and it represents the means through which organisations can be compared and become ‘known’ to citizens/consumers. In the case of transnational policy spaces and political projects, like the EU, quality and all its associated measurement processes, such as those of ‘quality assurance’, become a main mode of ‘soft’ governance (Lawn, 2011), operating through the setting of common benchmarks and standards and the promotion of constant self-regulation as a way to learn and to align oneself with international ‘best practice’.

Since the 1990s, this ‘soft governance’ turn has led to the creation and expansion of a European-level quality assurance system in higher education in Europe (Gornitzka and Stensaker, 2014). QA is often imagined as an instrument for greater internal mobility in Europe, while also advertising and guaranteeing the quality of European skilled labour and knowledge products, in line with European Union goals related to becoming the world’s most advanced knowledge economy. Applying qualitative methods, this paper examines the burgeoning of databases, QA processes and networks of actors in this field. Our main argument is that there has been a move from the Bologna Process being the near singular focus for European-level coordination and harmonisation of higher education, towards the making of a much more diverse and complex quality assurance and evaluation infrastructure. This infrastructure involves a range of distinct but interdependent actors and processes and contains explicit and implicit interlinkages with the production of wider policy agendas, such as the rise of the European Education Area.

The following section proceeds with contextualising the article within scholarship in the field of the sociology of quantification, and specifically the ways that education research has worked with the effects of data and number-making on education governance. We continue with an outline of some of the most significant events that have contributed to an increased emphasis on quality and QA in higher education in Europe. Here we also introduce the concept of an epistemic infrastructure (Grek, 2022; Tichenor et al., 2022) that we use to frame our work, distinguishing multiple interconnected layers of infrastructure. In line with this framework, we then pursue our main argument in three steps. First, we examine shifts in the materiality of HE quality assurance, in the form of standards, data and reports. Second, we explore networks of actors involved in European QA and measurement processes, their interdependencies, and their contestations; we examine European actors, such as ENQA and EQAR, but also the influence of global ones, such as the OECD. Finally, we reflect upon what these explorations reveal about how the epistemic infrastructure relating to QA and quality measurement in Europe has evolved over the last two decades, what the position of the Bologna Process has been in these dynamics and how QA has become a central feature and driver of not only the Europeanisation of HE per se, but also the governance of European education as a whole.

‘Governing by numbers’ in transnational education governance

Scholarship on the role of numbers in governing societies has been abundant and has attracted multiple fields of study, including sociology, history, political science, geography, anthropology, philosophy, STS and, of course, education. Prominent authors have written lucidly about the role of numbers in the making of modern states and the governing role of measurement regimes in various areas of public policy, and education policy in particular (Alonso and Starr, 1987; Desrosieres, 1998; Espeland and Stevens, 2008; Gorur, 2012; Hacking, 1990, 2007; Landri et al., 2017; Lindblad et al., 2018; Lingard and Sellar, 2013; Madsen, 2022; Piattoeva and Boden, 2020; Popkewitz et al., 2020; Porter, 1995; Power, 1997; Rose, 1999). Similarly, anthropologies of numbers suggest that ‘our lives are increasingly governed by – and through – numbers, indicators, algorithms and audits and the ever-present concerns with the management of risk’ (Shore and Wright, 2015: 23; see also influential work by Merry, 2011; Sauder and Espeland, 2009; Strathern, 2000). Further, important insights and perspectives on indicators in particular come from STS (Bowker and Star, 1999; Lampland and Star, 2009; Latour, 1987; Saetnan et al., 2011), including actor network theory (Latour, 2005). Finally, there is a small but growing body of studies relating to specific uses of indicators and quantification in transnational governance contexts (e.g. Bhuta, 2012; Bogdandy and Goldmann, 2008; Fougner, 2008; Martens, 2007; Palan, 2006), as well as a focus on the role of data technologies and databases in particular (Decuypere et al., 2014; Ratner and Gad, 2018; Ratner and Ruppert, 2019; Williamson, 2016).

Nonetheless, despite the burgeoning number of publications on ‘governing by numbers’ in other policy fields, the relationship between the politics of measurement and the making of transnational education governance is less well examined. As Djelic and Sahlin-Andersson (2006) suggest, due to the fluidity and complexity of the intense cross-boundary networks and soft regulation regimes that dominate the transnational space, transnational education governance is a particularly productive field of enquiry on the role of numbers in governing (see e.g. Grek, 2012, 2020, 2022). This lack of attention could be due to disciplinary boundaries; for example, scholars of International Relations (IR) and international law have not paid much attention to the field so far, although there is a growing and interesting literature on the role of numbers in global political economy (e.g. Fougner, 2008; Martens, 2007; Palan, 2006).

What are the properties of numbers that would suggest such a central role in the production of transnational governance? By contrasting numbers to language, Hansen and Porter (2012) suggest that, although it took scholars a long time to recognise the constitutive nature of discourse, we are now well aware of the role of language in shaping reality. However, they suggest that numbers are characterised by additional qualities that make their influence much more pervasive than words: these elements are order; mobility; stability; combinability and precision. By using the example of the barcode, they lucidly illustrate ‘how numerical operations at different levels powerfully contribute to the ordering of the transnational activities of states, businesses and people’ (Hansen and Porter, 2012: 410). They suggest the need to focus not only on the nominal qualities of the numbers themselves but, according to Hacking (2007), ‘the people classified, the experts who classify, study and help them, the institutions within which the experts and their subjects interact, and through which authorities control’ (p. 295).

It is precisely on those data experts that this article focuses; following the literature on the capacities of numbers to both be stable yet travel fast and without borders, the paper sheds light on what Latour (1987) called ‘the few obligatory passage points’ (p. 245): in their movement, data go through successive reductions of complexity until they reach a simplified enough state that can travel back ‘from the field to the laboratory, from a distant land to the map-maker’s table’ (Hansen and Porter, 2012: 412). Quality assurance, with all its organisational processes and data production machineries, especially in the field of higher education in Europe, has become such a crucial ‘obligatory passage point’ and hence the place to which we turn next.

Quality assurance in higher education in Europe: Bologna and the making of an infrastructure

Although the story of efforts for the convergence of higher education in Europe goes as far back as the inception of the European political project in the early 1970s, it was the Bologna Declaration of 1999 that instituted a process that fundamentally reshaped European higher education (Curaj et al., 2018; Enders and Westerheijden, 2014; Schriewer, 2009). While the precise objectives have evolved over time in connection with the work of the Bologna Follow-Up Group (BFUG) and, in particular, the Ministerial Conferences of members, the main goals of the process have concentrated on mobility between, and the compatibility of, higher education systems and the pursuit of quality in higher education (Bergan, 2019). In practical terms, the drive towards these objectives has included a focus on the structuring of systems in accordance with the three-cycle approach (Bachelor, Master’s, Doctorate); the creation of an EHEA Qualifications Framework and the development of common standards and processes for QA (Bergan and Deca, 2018; Brøgger, 2019). This drive resulted in the announcement of an education space of enhanced mobility and competitiveness, the European Higher Education Area (EHEA) in 2010. Extending beyond the borders of the European Union, the EHEA’s 49 country members – joined by the European Commission and a range of stakeholder organisations – have all agreed to pursue the goals of the Bologna Process, altering their HE systems to facilitate the mobility of students and staff between EHEA members and to enhance the employability of graduates (Barrett, 2017).

These processes of reform have been accompanied by the creation of a wealth of academic and practitioner publications describing the evolution of Bologna and the EHEA, evaluating the strengths and weaknesses of the EHEA and prescribing future directions for development. The mammoth edited volumes on higher education within the EHEA by Curaj et al. (2012, 2015, 2018) are a clear example of this body of literature. However, gaining analytical purchase on the transformations within HE since the initiation of the Bologna Process requires stepping outside of an ‘insider’s perspective’ (Dale, 2007) and viewing the developments in their historical and political context. Corbett (2012), for example, highlights how European higher education cooperation and governance have changed with the onset of the Bologna Process and the creation of the EHEA, with new European policy arenas being created where there had been relatively little European-level action. As both Dale (2007) and Corbett (2011) indicate, all this reflects wider transformations in the role of the university in the era of knowledge economies and, in particular, the notion of a Europe of Knowledge (Corbett, 2012; Dale, 2007). The rapid adoption of the push for Bologna reforms and the EHEA – with 45 countries involved by 2005 (Bergan, 2019) – speaks to their political resonance in this changing context.

One of the most significant dimensions of change associated with the Bologna Process has been the role and influence of the European Union in HE. The European Commission’s scope of action in education is restricted by the subsidiarity principle, but the Bologna Process has provided a means for the Commission to fulfil its supporting obligations and to work around such limitations (Brøgger, 2016; Capano and Piattoni, 2011). Despite being initially positioned outside the Bologna Process and the development of the EHEA, the European Commission has come to occupy a central role in driving the agenda (Dakowska, 2019; Robertson, 2008). Keeling describes, for example, how the European Commission began to dominate the higher education discourse in the 2000s, with the Commission’s involvement in the language politics around research policy and the Bologna Process contributing significantly to ‘the development of a widening pool of “common sense” understandings, roughly coherent lines of argument and “self-evident” statements of meaning about higher education in Europe’ (Keeling, 2006: 209). As Magalhães et al. (2012) explain, part of this process of European consolidation has been the ability of the European Commission to bring together, or to articulate (Veiga, 2019), multiple agendas and discourses in ways that expand the legitimacy of European-level action in the EHEA and in higher education more broadly. Of particular importance was the drawing together of the development of the EHEA with the economic agenda of the Lisbon Strategy, which sought ‘to make the Union the most competitive and dynamic knowledge economy in the world’ (Krejsler et al., 2012), and the Modernisation Agenda for universities, inspired by the New Public Management school of thinking (Enders and Westerheijden, 2014).
Crucial here also is the ability of the Commission to allocate funding to support activities that align with its conception of what the EHEA should be, especially given the lack of overall EHEA funding. Thus, either directly or indirectly, HE in Europe has become a key Europeanising force, not merely in the field of education but, given its role in promoting mobility, the European project as a whole.

Building on a growing literature that studies QA in HE in Europe (Brøgger, 2019), our analysis aims to examine, first, how the expansion of quality assurance processes in European higher education has occurred, and second, what this growth may mean for our understanding of the workings of Europeanisation. To do this, we explore the processes and practices of quality assurance and measurement through the analytical lens of ‘epistemic infrastructures’, building on a burgeoning body of work on processes and logics of quantification and evaluation and their governance effects (Mennicken and Salais, 2022; Tichenor et al., 2022). This reveals that the proliferation of the production of data for QA has created a complex socio-technical infrastructure, working at different levels and speeds, that has developed new materialities and interlinkages to facilitate reforms and connections with other policy infrastructures within the European education space.

A social theory interest in infrastructures first emerged in science and technology studies (STS) literature (Bowker, 1995; Star and Ruhleder, 1996) in order to describe the mix of materials, practices and meanings that comprise interlinked knowledge structures. This analytical lens allows for theorising quantification and the associated proliferation of data to measure performance as a meta-level phenomenon that governs not only through its explicit political effects but also through the creation of structures, connections and interdependencies that allow for new governing spaces to emerge. In the context of education policy, the concept of epistemic infrastructures (Bandola-Gill et al., 2022) is particularly useful for capturing the form, origins and consequences of the processes by which quantification has scaled up and linked different sites of calculation and governance. Building on our use of the term elsewhere (Bandola-Gill et al., 2022), this article conceptualises the emergence and consolidation of an epistemic infrastructure relating to quality assurance and measurement within European higher education as an interplay of three levels: the materialities of measurement; interlinkages between actors, and between actors and measures; and, finally, the use of QA as the means to govern not only higher education but the European Education Area as a whole. Thus, our conceptualisation of epistemic infrastructures draws heavily on STS in order to analyse the role and effects of knowledge production for policy.

Methodologically, this approach relies heavily on ethnographic accounts of practices in organisations. More specifically, it involves discourse analysis of documents, interviews with actors and network analysis of interconnections and interdependencies between individual actors and organisations. This article draws on two sources of data: discourse analysis of texts and interviews with actors. The texts we analyse are publicly available documents, found on the webpages of the organisations we scrutinise; they were selected on the basis of their strategic influence and analysed through thematic coding, covering the last 20 years. In terms of interviews, we primarily used the snowballing method: we interviewed actors from all key HE QA organisations in Europe, as well as from other international organisations, such as the OECD and the European Commission. We interviewed a range of officials, from those in top positions in these organisations to some retired actors and those who are relatively new to the field.

Analytically, the first level of examination involves the materialities of measurement as the building blocks of epistemic infrastructures. Just as physical infrastructures are built from bricks, metal and concrete, epistemic infrastructures are constructed with data, indicators, surveys, reports, data visualisations and other such tools. These materialities encompass the complex systems of processes, standards and indicators that allow for constructing the concept of ‘quality assurance’ in practice. As we will show, through processes of harmonisation, the higher education data and metrics produced by various means and across countries and institutions have been transformed into European QA data and metrics – ones that allow for comparison, benchmarking and even competition between countries.

The second order of the infrastructure consists of the interlinkages through which these diverse materialities are connected and held together. These interlinkages ‘are achieved through the interdependencies of different agencies and through practices of harmonization’ (Author: 6). At this level, the central role is played by epistemic communities, international organisations and varied networks of experts and QA agencies, with the implementation of QA processes requiring negotiation between and navigation of these emergent communities and networks. As we will see, fuelled by both competition and collaboration, organisations working on quality configure and reconfigure epistemic communities around particular policy goals, extending the terrain of European higher education policy in the process.

Finally, the third-order level of analysis focuses on the exploration of what such growth and expansion of QA materialities and actors may mean for the governance of higher education in Europe, or even education as a European policy arena as a whole. As we will show, we observe an organic growth of actors and datasets in the field of quality assurance in Europe, boosted by the growth and expansion of quantification in policy-making; in this context, quality assurance does not represent merely a tool of governing higher education but has become a driver of Europeanisation per se, with universities being proclaimed as carriers of ‘global Europe’ and the ‘European way of life’ (European Commission [EC], 2022).

The materiality of quality in European higher education

In this section, we explore major shifts that have occurred in the material infrastructure around quality assurance and measurement in European higher education since the inception of the Bologna Process; by ‘material infrastructure’ we mean the inscriptions (in the form of data, databases, standards, protocols, etc.) that are produced, reported, collected and exchanged between organisations and actors as they do their work of assuring the quality of HE in Europe. This evolving infrastructure acts to create particular ways of knowing what quality is and where it resides. We focus principally on three key such material inscriptions – the Standards and Guidelines for Quality Assurance in the EHEA (ESG) (developed by ENQA, see below), the European Quality Assurance Register for Higher Education (EQAR) and the European Tertiary Education Register (ETER) – which together illustrate the increase in both the scope and the complexity of the changing QA architecture. Crucially, we also point to how these three material inscriptions have helped create the foundations, and interconnections, that facilitate further diversification of the QA infrastructure. We conclude by bringing in other significant developments, which serve to underscore the scale of the expansion in the material infrastructure around QA and quality more broadly.

One of the first organisations to emerge in connection with the initial Bologna developments was the European Association for Quality Assurance in Higher Education (ENQA). ENQA is a stakeholder organisation whose membership consists principally of quality assurance agencies (QAAs). QAAs perform reviews of higher education institutions and programmes, making them key actors in higher education systems. In addition to serving as the main representative of this key constituency in European higher education, ENQA has also taken a lead on developing the underlying infrastructure of QA in Europe (Ala-Vähälä and Saarinen, 2009). In 2003, the Bologna Ministerial Communique called on ENQA alongside the European Students’ Union (ESU, previously ESIB), European Universities Association (EUA) and European Association of Institutions in Higher Education (EURASHE) – the other members of what came to be called the E4 – to develop an agreed set of standards, procedures and guidelines on QA (E4, 2011). This followed a recognition in the Berlin Communique of the Bologna Process that the ‘quality of higher education has proven to be at the heart of the setting up of a European Higher Education Area’, with Ministers stressing the ‘need to develop mutually shared criteria and methodologies on quality assurance’ (p. 3).

The outcome of the ENQA-led process was the 2005 creation of the European Standards and Guidelines (ESG), which were adopted as part of the Bologna Process’s Bergen Communique and which were framed as a step towards greater consistency in QA across the EHEA and enhanced trust and qualification recognition between different contexts (ENQA, 2005). The ESG outline standards and guidelines for different types of QA processes and the different actors involved in them. The standards set out broad and basic requirements in order for institutions and QAAs to be compliant with the ESG, such as that ‘institutions should have formal mechanisms for the approval, periodic review and monitoring of their programmes and awards’ (Standard 1.2). The guidelines provide ‘additional information about good practice and in some cases explain in more detail the meaning and importance of the standards’ (ENQA, 2005: 15), although it was not ‘considered appropriate to include detailed “procedures”’ (p. 11) in the guidelines. The first part of the ESG focuses on internal QA processes within higher education institutions, the second on QA by external actors (i.e. QAAs) and the third on QAAs themselves. For external QA processes, for example, ESG compliance requires that ‘Any formal decisions made as a result of an external quality assurance activity should be based on explicit published criteria that are applied consistently’ (Standard 2.3), while for QAAs it is required, for instance, that ‘Agencies should have clear and explicit goals and objectives for their work, contained in a publicly available statement’ (Standard 3.5). Backed by the force of their collective acceptance by the Bologna Process members, these standards and guidelines make claims about how we can come to know the presence or absence of ‘quality’.

As suggested by the lack of specification for the ‘mechanisms’, ‘criteria’ or ‘goals and objectives’ mentioned in the three standards above, a key characteristic of the ESG is the openness and ambiguity of the standards and guidelines (Brøgger and Madsen, 2022; Gornitzka and Stensaker, 2014). In part, this appears to be a response to the tension present throughout European-level education initiatives between the drive to harmonise practices to facilitate integration, and mobility, and the political and practical realities of Europe’s varied set of education systems. Therefore, while the ESG are working towards the ‘establishment of a widely shared set of underpinning values, expectations and good practice in relation to quality and its assurance’, the report states that diversity and variety is ‘generally acknowledged as being one of the glories of Europe’ and correspondingly ‘sets its face against a narrow, prescriptive and highly formulated approach to standards’ (ENQA, 2005: 10). Keeping a studied ambiguity in the formulation of the ESG likely serves as a means of ensuring its acceptability to a wider range of European states and education systems. Rather than a strict standardisation, this might be seen as ‘setting the outer borders within which there is scope for diversity’, as one actor in the space put it for the Bologna framework at large (SB int.). Such a description of the role and function of the ESG fits particularly well with our conceptualisation of this space as an epistemic infrastructure; that is, building the conditions and structures that, at a later stage and possibly by other actors, can be ‘filled’ with new inscriptions and procedures that will make the infrastructure intelligible and useful and grow it anew. 
Indeed, as another interviewee articulated, the balancing act of the ESG has been to have it ‘prescriptive enough in order to induce the change needed, but also general enough to have so many countries being able to work with it’ (CG int.). Perhaps because of this breadth and ambiguity, the ESG has been one of the most successful harmonising elements of the Bologna Process (Bergan, 2019). Pointing to the transformative power of the ESG, the same interviewee commented, for instance, that QAAs can push reforms with governments by saying that ‘we have the standards, and we have all the colleagues in Europe that are doing it like this, and then we have to align’ (CG int.); peer pressure is therefore strong, one of the most influential qualities of governing by data.

In 2015, the ESG were updated to reflect changes that had occurred with respect to other elements of Bologna, such as qualifications frameworks, as well as broader shifts, for example towards student-centred learning (ENQA, 2015). While compliance is by no means universal, the initial success of the ESG encouraged this evolution and expansion in scope. The inclusion of a new item in the ESG, or indeed a changed interpretation, makes it more likely that members will adjust their systems to incorporate the new directions. As one interviewee put it, ‘people think that if they put something more in the ESGs that it has the chance to be really implemented tomorrow in those. . . 49 countries which are members’ (CG int.). In recent years there have been plenty of prompts for further amendments to the ESG in connection with the popularisation of micro-credentials, for example, and the spread of digital learning approaches associated with the Covid-19 pandemic and other longer-term trends. While initially presented as a simple, technical instrument for QA practices, the ESG can be seen here to act as a governing instrument in higher education (Stensaker et al., 2010), with the potential for alterations to and expansions of the material infrastructure of the ESG to reflect new strategic choices and policy trends and, crucially, to induce corresponding changes in the education systems of member countries.

Analysing the updating of the ESG through the lens of an epistemic infrastructure is also useful for highlighting the way in which the continuous updating and movement of inscriptions of quality works to keep the momentum going for reforms. Although the ESG 2015 are less than a decade old, preliminary discussions are ongoing about the necessity and feasibility of another update. There is a sense that progress in reforms has stalled after 17 years, with the feeling in some member countries that they ‘are repeating the same thing again and again’ (TL int.). Rearticulating the ESG offers one potential mechanism for rejuvenating the reform process. Furthermore, the centrality of the ESG for other developments related to quality in higher education means that wider reform is most readily pursued through reforms to the ESG. As one interviewee described, ‘where the discussion about revising the ESG comes from is that there’s all this infrastructure is built around it. So if you want to change a lot of things. . . you go back to the source and the source is the ESG’ (TL int.). Thus, the ESG becomes the focal point for change in the infrastructure, without which momentum may be lost. Material inscriptions therefore are not only useful as the mere content of work undertaken as part of building the infrastructure; they can also act as levers for change, especially in moments when such change is seen as necessary.

Further, the foundational nature of the ESG can be seen in the case of the European Quality Assurance Register (EQAR), which was created in 2008 as part of following up on one of the recommendations of the initial ESG report. EQAR was the first legal entity to be created through the Bologna Process, and it functions, in some ways, as a guardian of the ESG. QAAs apply to be part of the register, and thus legitimated as trustworthy agents, and are only listed if they are judged to be compliant with the ESG by EQAR’s Register Committee (EQAR, 2020). Through this process EQAR transforms ‘QA agencies in Europe into QA agencies of Europe’ (Hartmann, 2017: 319). Register decisions are made on the basis of external reviews of QAAs that are generally coordinated by ENQA, who, along with the rest of the E4, are founding members of EQAR. The existence and effective functioning of EQAR and, to some extent, ENQA depend, therefore, on the ESG. Part of the power of EQAR and ENQA, however, is their ability, emerging from their recognised responsibility for carrying out the above duties, to create procedures and systems for interpreting the ESG so as to decide on compliance. The way in which these internal procedures and systems operate has the potential to affect which QAAs are labelled as EQAR registered, with acceptance on the register opening doors to performing QA activities in different countries, as well as which higher education institutions and programmes are recognised as being vetted by an EQAR-registered agency, which can influence, for example, how qualifications are recognised (or not) as students and graduates move between contexts.

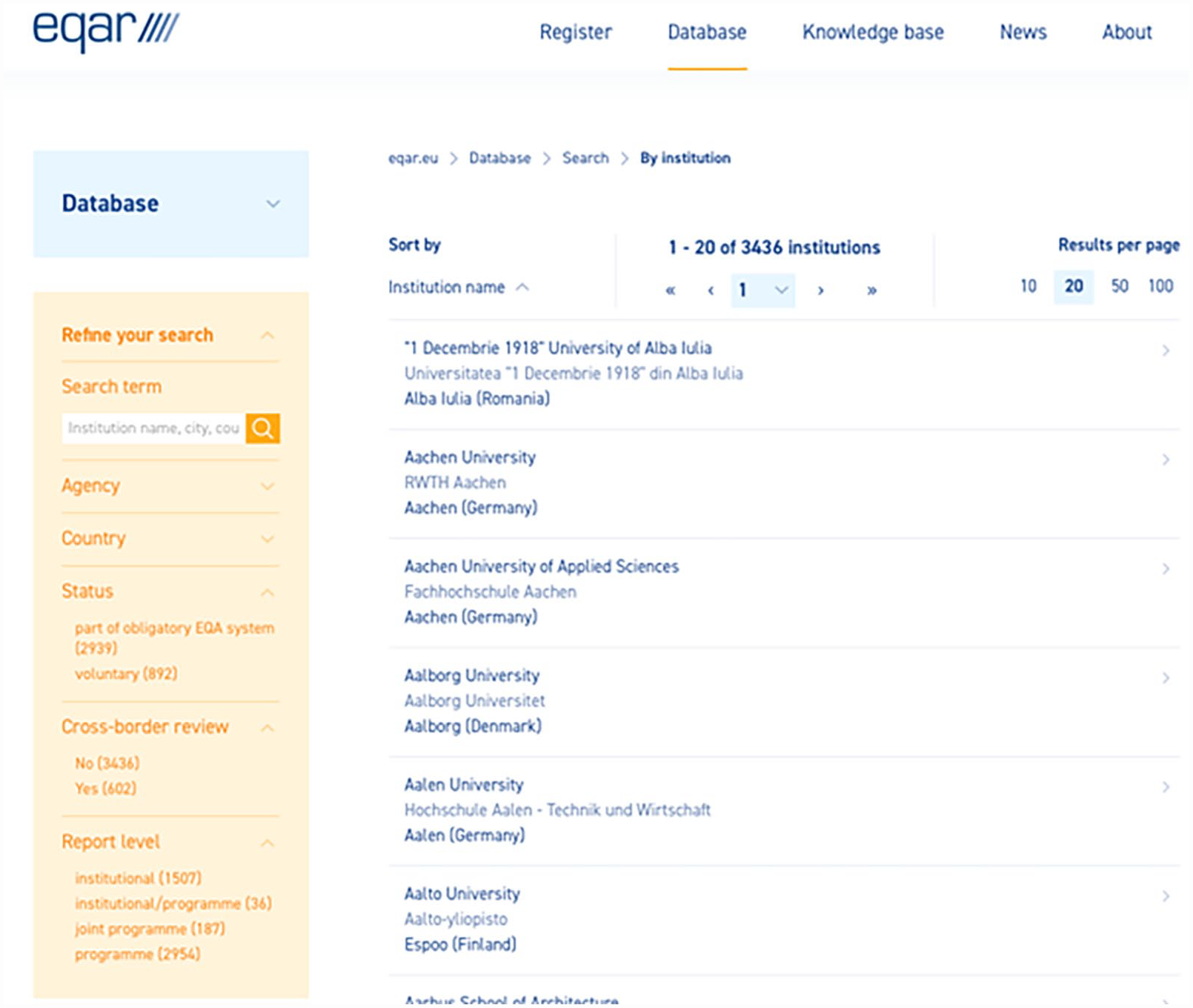

Through its register processes and reporting procedures, EQAR has been a key driving force behind an impressive expansion in the epistemic infrastructure around QA in Europe. As well as the reports prepared for admission onto the register and periodic renewal, EQAR also requires reports whenever a registered agency adjusts their practices in a way that might have an impact on their compliance with the ESG. A major expansion in EQAR’s data flows and capabilities came in 2017 when EQAR launched the Database of External Quality Assurance Results (DEQAR) (Figure 1):

Figure 1. The DEQAR database.

DEQAR collects and collates data not just on the QAAs that are part of the register but also, through the reports submitted by those QAAs, on the institutions and programmes that those QAAs have reviewed (EQAR, 2021). As of June 1st, 2022, DEQAR contained nearly 74,000 reports on over 3000 institutions. The foundational structure of the ESG is, again, key here, as one interviewee described: ‘DEQAR of course is also very closely related to the ESG standards for higher education and I think you couldn’t expand it to another sector or copy it or replicate it into another sector without having a similar kind of agreed European standard available. . .. If you don’t have an agreed standard, then what is the meaning of being in a database, what does it stand for?’ (CT int.). The processes of harmonisation connected with the ESG, therefore, have allowed for data produced across countries, QAAs and HE institutions to be transformed into European data and metrics.

The epistemic infrastructure relating to QA does not exist in isolation but is interlinked with other infrastructures and projects. Examining a third key development, the creation and growth of the European Tertiary Education Register (ETER), provides an example of such interlinkages and helps illustrate the increasing complexity of the infrastructure concerned with quality in European higher education. ETER started as an academic project funded by the European Commission, which has also supported EQAR and ENQA. ETER sought to respond to an absence in the higher education data infrastructure in Europe, as one interviewee put it: ‘a core function of ETER is to provide a list of institutions. You might think it’s a stupid task, but such a list did not exist before ETER in Europe’ (BL int.). Although conceptually simple, the creation of the register requires important processes of categorisation, standardisation and commensuration, which have built on existing data standards while agreeing and deciding upon new ones. The significance of simply having such a register available should not be understated, with an underlying standardised way of recognising and recording institutions and their characteristics being extremely valuable for the potential interoperability of different higher education data systems in Europe. Crucially, the existence of such a dataset opens the door for more extensive analysis of the state of European education through the use of the student, graduate, financial and other data collected for each institution in the database.

Through the DEQAR Connect project, funded by the European Commission, the quality assurance and measurement infrastructures provided by ETER and DEQAR have been linked together. As an interviewee described, DEQAR uses ‘ETER as an underlying data source of basic institutional information’, noting further that EQAR ‘only added the quality assurance related information to it’ (CT int.). Working in combination with ETER’s infrastructure on institutions allows EQAR to now present information on, for example, the proportion of a country’s students that are studying at an institution that has been reviewed by an EQAR-registered agency. This represents a significant expansion of the data that EQAR can provide and also moves EQAR closer to dealing with higher education institutions rather than just QAAs. Furthermore, in addition to being connected with ETER, DEQAR data is now being integrated into the workflows of national recognition centres, which offer authoritative advice and guidance to higher education institutions on the recognition of qualifications and assessments (CT int.). This points to an important connection between national-level infrastructure associated with qualification recognition and European-level infrastructure concerning QA.

As well as being significant in their own right, these three developments are illustrative of the broader proliferation of European-level infrastructure concerned with quality in higher education (Gornitzka and Stensaker, 2014). Other spaces for discussions on QA have also been built, principally the European Quality Assurance Forum, which generate materialities in the form of reports, minutes, presentations and more. Furthermore, in 2012, Eurydice took charge of the Bologna implementation reports, as they came to be called; the latter have become a central vehicle for evaluating movement across EHEA countries on the core commitments of Bologna, including on QA and recognition. Significantly, Eurydice draws on data and insights from a range of actors in European higher education in order to compile the reports, including EQAR and ENQA, pointing to the significance of the interlinkages between actors, the second layer of the infrastructure, to which we will turn next.

Actors’ alliances and interlinkages

No material infrastructure could have expanded to the extent and complexity that quality assurance processes in European universities have over the last 20 years without the efforts of a range of key actors. Following on from the previous discussion, this section focusses on the ways the infrastructure relating to quality assurance and measurement has extended to include a range of organisations that are creating new interdependencies and alliances but also new conflicts over policy influence and direction.

One of the more established actors in the field is the aforementioned ENQA. It has seen its influence grow substantially during the last decade, moving from being one of the many stakeholders in the Bologna Process to a much more strategic and policy-oriented role. ENQA was established in 2000 as the European Network for Quality Assurance in Higher Education, only to be renamed four years later as an ‘Association’ (Ala-Vähälä and Saarinen, 2009). Although its remit from its inception has been to ‘represent QAAs in the EHEA’, to ‘support them nationally’ and to ‘provide them with services and networking’, in recent years its influence has grown. While it is primarily a stakeholder organisation, ENQA has developed a significant role in driving policy concerning QA and is trying to steer the field in new ways (Sarakinioti and Philippou, 2020). ENQA has played a key role in creating, updating and disseminating the ESG, as explained previously. However, according to its strategic plan 2021–2025, ENQA is also pursuing ‘knowledge-based development’ and exploring ‘new ways of quality assurance’ by becoming a forum for ‘. . .facilitating the discussion on any changes in higher education and its provision and the consequences these changes may entail’ (ENQA, 2021).

Such a broad strategic vision in terms of shaping the field has become a significant aspect of ENQA’s work. ENQA sees its role as a policy actor, strategically placed in close proximity to the European Commission: ‘ENQA is based in Brussels for a reason. So it’s mostly the director that is based there, who is joining different types of activities, meetings with the Commission’ (CG int.). ENQA derives its status from, on the one hand, its established connection with the BFUG through being a consultative member and, on the other, the sheer strength of the number of organisations it represents:

The weight of ENQA is being given by the members. So when you go to a table, when it’s about higher education policy, and then there you represent 55 quality assurance agency members which are compliant with the ESGs from 40 countries, and then you also represent 55 affiliates also from outside Europe, that also makes you an important network. (CG int.)

Indeed, the expansion of the work and of ENQA’s influence to other world regions has given it particular momentum. Not only does this increase networking, but, crucially, it promotes the standing of European higher education as a global higher education actor:

There are other networks of quality assurance agencies from all over the globe, African, Asian, United States, so the collaboration with those networks is important. So what we try to do is to learn from each other, but also our objective is to promote the European standards and guidelines, because of course we believe they are good. (CG int.)

A particularly revealing example of complex interdependencies and contestations in the epistemic infrastructure of QA is the relationship of ENQA with its sister organisation, EQAR. Throughout our examination of the two organisations, there has often been the potential for confusion – not only by us as researchers but crucially also in the field itself – about the distinctions between the two organisations and their work (Huisman et al., 2012). This seems to spark an inclination to differentiate one’s own organisation from the other as a way of sustaining the need for the continued existence of both, especially in a field ridden by complaints about duplication of effort and over-reliance on bureaucratic form-filling:

EQAR is not the one that is developing the policies or providing services to the members or representing them. They are just a register. . .. Of course, if for example there is a discussion on revising the ESGs of course they will be involved. But maybe you know that EQAR was founded by the E4. So ENQA is the founding member let’s say of EQAR. So they are our kids in a way. (CG int.)

EQAR actors, however, do not necessarily see themselves as ‘just a register’. They are also an organisation whose role has evolved and grown, such that EQAR can now suggest that they can and should influence policy on QA in more fundamental ways:

I would say that the role evolved over this, well, now nearly 15 years in two ways. On the one hand, let’s say, from the very beginning EQAR was a very technical and bureaucratic organisation. . ..But then I think very soon EQAR also became involved as an organisation that, let’s say, informs the policymaking discussions in the Bologna Process, because of course the governments and other stakeholders were keen to also have EQAR there as an organisation that can give some input and share the expertise that we make and gather from this work of registering agencies, of reviewing which agencies comply with the ESG and so on. And that has become or that has grown little by little over the years and now also there is quite some work done on maintaining a knowledge base on our website, on analysing what is happening in quality assurance in Europe. (CT int.)

Perhaps more so than the micro-disagreements of how the hierarchy or the dependencies amongst these organisations work, or the extent to which there is a degree of mission overlap or not, what is interesting here from an infrastructural point of view is that the growth and expansion of the work of these actors is seen as ‘organic’. For EQAR, an example of this spiralling of work into different directions and branches is the establishment of DEQAR. On the one hand, DEQAR was described as an ‘obvious’ or ‘not that far-fetched idea’. On the other, however, it has been portrayed as ‘a major change of our role in these 13 years. . .[since] . . .now we are dealing with the level of higher education institutions by having a database of them and that’s of course quite a big difference for our work’ (CT int.). In other words, although EQAR’s primary role was to work with QAAs, the expansion thanks to the creation of DEQAR means that EQAR now has links not only with QAAs but with European universities themselves. Such an organic growth and expansion of the QA activities is extraordinary and well beyond what the Bologna Process set out to achieve. ENQA and EQAR perform a lot more than just the technical role of inspecting HE institutions on the basis of the ESGs. Sitting at the BFUG table as experts in QA and representatives of QAAs, they contribute to shaping the future and strategic direction of EHEA. Furthermore, while maintaining their networking function and their allyship with other QA organisations, they preserve their own unique contribution and presence in the field.

A second key actor in the broader field of measuring and evaluating quality in European Higher Education is the Organisation for Economic Cooperation and Development (OECD). Although the OECD is best known in the field of education for the establishment and success of the Programme for International Student Assessment (PISA), less known but equally significant is their work in other areas and especially in higher education. Examples of this work in the European space abound. The Labour Market Relevance and Outcomes of Higher Education (OECD, 2022) is one such project, and it receives substantial support from the European Commission, with the main participant nations being Austria, Hungary, Portugal and Slovenia. The project explores issues such as the emergence of ‘alternative credentials’ and the use of ‘big data’ to understand graduate skills and digitalisation in higher education. Previous project participants were Norway, Mexico and four US states. The global nature and reach of the OECD is a valuable resource in the efforts to establish the EHEA as a global player. The ability of the OECD to offer comparative data from other competitor world regions is one of the main reasons for its increasing involvement with issues of quality in HE.

In its work to extend the comparisons and the evidence base beyond Europe, the OECD has made use of connections with the ETER project, as explained by an OECD interviewee:

We’ve been involved in that process for, I think, at least on the advisory board since the project’s inception. . . And part of what we’re doing at the moment is trying to develop the similar data source that draws on ETER, but also draws on other national data collections that are available for the non-EU/OECD countries.

Establishing such international comparisons and linking the European HE quality processes with those of other OECD countries is a key endeavour for both the European Commission and the OECD (Grek 2014, 2016; Sorensen and Robertson, 2020). Correspondingly, this is a well-established collaborative relationship that has grown substantially over the years, as another interviewee shares: ‘We’re involved, they invite us to all their working meetings and likewise we invite them to ours’ (DC int.). Of course, it is not only the Commission that benefits from the OECD’s expertise. This is a two-way relationship that influences and is advantageous to both. The OECD benefits from the data the Commission generates as well as, crucially, from the funding available from the Commission for their work:

We actually use quite heavily the EU’s surveys, for example, the Labour Force Survey and, similarly, labour force surveys in the non-EU countries to assess a range of labour market outcomes of higher education. . .. So we run this policy survey and when we’re running that, we would obviously take into account work that the European Commission has done previously. We would consult with them on that to make sure that we’re not, first of all, duplicating the work, because obviously we all have to be efficient, and then also to make sure that what we’re producing makes sense from their perspective as well as from the perspective of our member countries. (GG int.)

In terms of the kind of collaboration and work that the OECD offers, some interviewees stressed the OECD’s independence and expert function, as compared with a more politicised Commission, while others emphasised the benefits of continued dialogue, with the Commission setting the strategic direction and the funding and the OECD responding to these policy priorities. Similar to other areas of education policy, the relationship between the OECD and the European Commission is a ‘symbiotic relationship’, reaping substantial benefits for both organisations:

The European Union is definitely a voice in our, you know, through the European countries that sit in the Group of National Experts. Certainly we would understand very well the priorities of the European Union. (GG int.)

There has evolved a division of labour and a symbiotic relationship between the Commission and the OECD over the last 15 years or so. . .They’re the people with the wallet! So, in a sense, we’re more likely to be working within the framework of problems and priorities that they’ve identified, so it might be the case that the Commission will say to us, we’re really concerned about digitalisation in higher education, in which case we would say, oh, well, we agree, we think that’s a really important topic and we could support you in a couple of different ways, here, we’ll give you a couple of examples of what we might do. But there, you see how that it’s a dialogue. (TW)

Finally, as the above suggests, the European Commission is a powerful actor in European quality assurance and evaluation processes. Through its membership of the BFUG, but more importantly through its provision of funding and its convening power (Brøgger, 2019; Cone and Brøgger, 2020; Dakowska, 2020), the Commission has been able to influence the education policy direction as a whole, both inside and outside Bologna, and, thus, has often been the driving force behind the building of QA infrastructure. The way in which the European Commission coordinates the higher education space is subtle, yet, over time, it is effective in generating change in actors. The ‘pull’ of the Commission – through its funding, networks, data and indicators, and dominant discourses – changes the field in which European higher education actors operate such that it is that bit more likely that their next step will be in the preferred policy direction of the Commission, resulting, with enough time, in substantial movement in that direction. Note that this does not suggest that the Commission drags other actors along, that actors cannot or do not move away from the Commission’s preferred policy direction, or that the Commission is entirely alone in trying to stack the odds in its favour. Instead, it accounts for how – by making it that bit easier to move with and towards the Commission, due in no small part to it being the ‘wallet’ in the field – the Commission can softly direct the evolution over time of the infrastructure around QA and measurement in European higher education.

The recent push towards the announcement of a European Education Area, first as a policy to be implemented by 2025, and more recently as the umbrella strategy for all key policy initiatives in European education governance, is an example of the coordinating power of the Commission and a telling story of the centrality of higher education in processes of Europeanisation of education:

A vision for 2025 would be a Europe in which learning, studying and doing research would not be hampered by borders. . .. A continent in which people have a strong sense of their identity as Europeans, of Europe’s cultural heritage and its diversity. (European Commission [EC], 2017)

Indeed, in May 2018, the Commission put forward four flagship initiatives aimed at making the EEA a reality by 2025: (i) the mutual recognition of diplomas and learning periods abroad, (ii) the improvement of language learning, (iii) the European Student Card Initiative and (iv) the European Universities initiative. At least three of the four new policies are centred around developments in higher education, with a focus both on greater student mobility, through the new Student Card, and the European Universities initiative. The latter initiative aims to establish at least 20 European Universities by 2024 through new alliances amongst three different universities per European University, with, again, the goal of increasing mobility as well as diversity. Indeed, the latest Communique from the Commission (January 2022) declares European universities ‘a distinctive feature of our European way of life’.

While this is not the first time that education and culture come close in one entangled mix (Grek, 2008), it is interesting to see the ambitions and objectives for European universities aiming to become ‘the visible expression of a distinctly European approach’ and ‘lighthouses of our European way of life’ (EC, 2022; our emphasis – see also Lawn and Grek, 2012). Crucially for the project of Europeanisation, these developments are dependent on the European quality assurance and evaluation infrastructure that has been built, with significant involvement from the Commission, over the preceding two decades. The European Universities Initiative, for example, makes use of the European Approach for Quality Assurance of Joint Programmes, which emerged from the Bologna Process and is itself built upon EQAR and the ESG. As one interviewee describes, ‘instead of accrediting a joint programme in every single country that participates in it, which could lead to a lot of obstacles and it’s costly and takes a lot of time, [the European Approach] would allow one single EQAR registered quality assurance agency to accredit the joint programme’ (JA/KS int.). A further element of Europeanisation through QA is evident here as use of the European Approach would help ‘allow the accreditation of their programmes by any EQAR registered agency in Europe’ (JA/KS int.), which would also further the creation of a European ‘market’ in QAAs (Cone and Brøgger, 2020).

To further its ambitions into the future, major developments are being planned by the Commission for continuing the evolution of QA infrastructure. The 2022 Communique, for instance, proposes the creation of a European Quality Assurance and Recognition System. This system will build a European space ‘where the quality of qualification is assured, the qualifications are digitised and recognised automatically across Europe, doing away with the bureaucracy that hinders mobility, access to further learning and training or entering the labour market’. It seems that, yet again, the discourse and practices of quality assurance are being put to work for the fabrication of the ideal common Europe, where universities do not just promote a ‘European way of life’ but also bring it to fruition. The practices around quality assurance and evaluation, therefore, do not simply represent technical processes by which mobility in Europe is facilitated. Instead, as we will discuss in the next section, quality becomes a central governing device in an expansive and ever-changing epistemic infrastructure, through which new strategic directions are drawn, new and old actors are interlinked and the project of Europeanisation continues apace.

Discussion: Quality assurance and the governance of European education

Building on a rich set of policy documents and interview data, and using the concept of the epistemic infrastructure, the article focused on an analysis of how quality assurance in higher education has developed in Europe over the last two decades. We showed that, although the Bologna Process remains the trunk, the tree of QA in HE in Europe is now growing a multitude of branches, in all sorts of directions and at different speeds and strengths; ultimately, as we discussed, the ‘crown’ of this imagined tree is the project of Europeanisation – of education and perhaps, as we showed, of the building of the idea of Europe itself.

Our analysis began by discussing what we called the material inscriptions of QA in HE in Europe: that is, the data, reports, protocols, PowerPoint presentations and databases that provide the working tools for making QA happen. A first prominent characteristic of the materialities underpinning the QA infrastructure in Europe is the ‘organic’ growth of QA data and processes, emerging as they did from opportunities perceived and seized by particular actors at particular moments, rather than from being clearly tied to a pre-planned strategic progression. By ‘organic’, we do not mean that these materialities developed haphazardly or in an anarchic manner; what we are suggesting is that they developed naturally, over time and through the initiatives of new actors, without them having been pre-planned when Bologna came into existence. While the creation of DEQAR and its linking together with ETER, for example, certainly fit within the broad strategic vision of the Bologna Process, they came about because actors

Similarly, this organic growth of the data infrastructure also points to the role of its multi-layered temporality: on the one hand, the foundational nature of the ESG illustrates the potential for infrastructures to have a

Next, harmonisation and interoperability both depend on interlinkages amongst actors and material systems that lead to further interlinkages and interdependencies. The epistemic communities that have been constructed around European QA processes, evident in formations such as the E4 and the European Quality Assurance Forum, are closely connected, facilitating the creation and maintenance of links between actors and data systems (Dakowska, 2020; Fumasoli et al., 2018). Crucially, through such actors’ connections, organisations can be placed in contact with much wider networks of higher education agencies and can, thus, constitute and effect changes in European higher education as a distinct entity in itself. For example, on the one hand, the work of EQAR in registering, and legitimating, QAAs and their approach to knowing quality operates through its connection with ENQA; on the other, ENQA’s QAA membership, and the links that it provides to the higher education institutions with which those QAAs work, result in DEQAR being able to produce and present knowledge about the quality of higher education institutions and programmes. Through the OECD, and indeed through some of the work of the E4, the QA infrastructure is also interlinked with knowledge systems around quality that extend beyond Europe, allowing the possibility of knowing quality in European higher education as a comparative construct. In particular, the collaboration between ETER and the OECD represents the interfacing of the European quality infrastructure with, and potential extension into, other world regions; the European Commission has explicitly stated its aspiration for European Higher Education to become a global actor. The reliance on OECD comparative data expands the infrastructure spatially, not only to include a new and wider set of actors but also to create a European HE QA system that may be replicated in new geographies and thus increase its clout and influence.

As well as coordination, however, there is also competition and differentiation between actors. The relationship between EQAR and ENQA, for example, has seen struggles over the relative positions of the two in the QA infrastructure and the policy space that it helps to constitute. Over time this has led to attempts at enhanced differentiation between the two organisations in order to more clearly delineate their respective positions in the infrastructure, resulting in, for example, the framing that EQAR is the ‘bad cop’ that ensures QAA compliance with the ESG while ENQA is the ‘good cop’ that supports QAAs to enhance their activities. Furthermore, the governance of EQAR itself represents a space of contestation as different actors – particularly the E4 with their distinct stakeholders and correspondingly distinct interests – pursue different visions and boundaries for what EQAR is and what it does (Ala-Vähälä and Saarinen, 2009). One important aspect of the changing roles and forms of actors in this space is the new forms of expertise (Dakowska, 2020) that the establishment and maintenance of these interlinkages require. The experts working in this field are no longer merely statisticians and data scientists. Increasingly, what is needed is a new type of expert: expert brokers whose main role is creating and managing the connections between disparate groups of political actors (Grek 2013, 2020; Bandola-Gill et al. 2022). This work – that is, injecting the technical QA process with political clout and giving it strategic direction, acceptance and credibility – is at the heart of building the epistemic infrastructure.

Perhaps most importantly for the analysis of how Europeanisation is enacted, a crucial way in which QA has expanded and transformed European higher education is through its strategic role in operationalising the European governance of education as a whole (Enders and Westerheijden, 2014). As Star argues, infrastructure tends to be ‘fixed in modular increments, not all at once or globally’ (1999, p. 382). Fitting this mould, the evolution of the QA infrastructure in Europe has occurred through the stitching together of distinct parts, which have emerged out of the facilitating environment created by the Bologna Process and the actions of the European Commission (Gornitzka and Stensaker, 2014), to generate an increasingly unified European higher education policy space. The very notion of the European Education Area (EEA), in fact, can be seen as one such attempt to gather together disparate existing initiatives into a common framework. Interestingly, a closer look at the development of the policies around the EEA shows how quality assurance in higher education is a key pillar in building the European education policy space. The harmonisation of data and standards, as well as the new interdependencies and linkages between actors, are both key here for enabling the construction of a new way of building Europe, through making it known and governed via quality assurance processes (Brøgger, 2021; Lawn and Grek, 2012; Ozga et al., 2011).

Developed through the epistemic infrastructures framework, these insights, taken together, paint a picture of a fluid and significantly multi-polar European policy space that has developed around QA and quality measurement in higher education. Although previous analyses have also pointed to the role of data and quality assurance in the building of Europe (Grek et al. 2020; Ozga et al., 2011), this article has used rich accounts by the actors in the field to show the multipolarity of the QA infrastructure, its expansion beyond Europe (with the involvement of the OECD) and its – by now – strategic application in building the European Education Area. Thus, our analysis aims to give flesh to the bones of what ‘governing by QA’ entails and where it has led: an enhanced space of cooperation that has, by now, grown too large to fail.

To conclude, the article supports the view that Bologna is still a significant policy site for European policy on higher education. Nonetheless, the paper analysed insider perspectives in order to show how the roots of the Bologna Process have now reached so deep as to create a number of other sites for policy work on European higher education. The tale of ‘Bologna and beyond’ in many senses tells the story of Europeanisation itself: slowly to start with, but with increasing momentum and pace, what began as a process from a singular centre (be it Bologna or Brussels) has now grown and diversified; it is no longer contained in a single policy document, or dealt with in a single database. Instead, it has become a multi-scalar, multi-actor epistemic infrastructure, capable of expanding and renewing itself as required. Critically for perceiving pathways for future development, Bologna does not stand weakened by this evolution and expansion: on the contrary, the interactions between data materialities and policy actors re-energise Bologna and generate and maintain momentum for significant reforms that further enhance and evolve the Europeanisation of education and, as a result, Europeanisation per se.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This manuscript is part of a project that has received funding from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation program, under grant agreement No 715125 METRO (ERC-2016-StG) (‘International Organisations and the Rise of a Global Metrological Field’, 2017-2022, PI: Sotiria Grek).