Abstract

Identifying three modes of policy legitimation in education, illustrated by shifts in Swedish educational assessment and grading policies over the past decades, the paper demonstrates significant trends with regard to national governments’ policymaking and borrowing. We observe a shift away from collaboracy – defined as policy legitimation located in partnerships and networks of stakeholders, researchers and other experts – towards more use of supranational agencies (called agency), such as the Organisation for Economic Co-operation and Development, the European Union and associated networks, as well as the use of individual consultants and private enterprises (called consultancy) to legitimate policy change. Given their political and high-stakes character for stakeholders, assessment and grading policies are suitable areas for investigating strategies and trends for policy legitimation in education. The European Union-affiliated Eurydice network synthesises policy descriptions for the European countries in an online database that is widely used by policymakers. Analysing Eurydice data for assessment and grading policies, the paper discusses functional equivalence of grading policies and validity problems related to the comparison of such policy information. Illuminating the roles of the Swedish Government and a consultant in reviewing and recommending grading policies, the paper discusses new ‘fast policy’ modes of policy legitimation in which comparative data is used to effectuate assessment reform.

Introduction

The Scandinavian countries have a long tradition of utilising expert and stakeholder committees that – in a collaborative fashion – review and propose policy changes, to legitimate governments’ education reforms. After the turn of the millennium – and following the multiple ‘PISA shocks’ associated with international large-scale assessments – a shift can be observed in Swedish policymaking towards more use of individual consultants and international agencies such as the Organisation for Economic Co-operation and Development (OECD) and the European Union. This article demonstrates how the traditional mode of policy legitimation – characteristic of Swedish policymaking – has been replaced by new fast modes of policy legitimation archetypical of global policymaking trends (Peck and Theodore, 2015).

A major and controversial issue for Swedish education in the last decades has been the student age at which schools should embark on formal grading. When implementing new policies in this area it is particularly important for governments to ensure that the public and the teaching profession consider them legitimate. Assessment and grading policies are thus suitable areas for investigating strategies and trends for policy legitimation in education. In this paper, we use assessment and grading policy as a case to show how comparative data on national states’ education policies from, for example, the OECD or the European Commission, which we call supranational agencies, are used by policymakers to legitimate governments’ ideologies.

The case of policy legitimation under investigation created headlines in Swedish media in 2010–2011 as teachers and scholars opposed the government’s proposed assessment reform (Dagens Nyheter, 2015; Lärarnas Tidning, 2014). The Swedish Minister of Education at the time, Jan Björklund (the Liberal party), nominated the neuroscience professor Martin Ingvar from the prestigious hospital and medical school, the Karolinska Institute, as an expert to investigate potential implications of grading younger students. As a single expert, Professor Ingvar produced a green paper report (SOU, 2010) reviewing literature on this issue, backing the policy of embarking on formal grading in Year 6 (when students are 12 years old) instead of Year 8 (age 14), which was the policy at the time. Subsequently, in a memorandum in 2014 (Utbildningsdepartementet, 2014), Professor Ingvar examined students’ ages when schools embark on formal grading in the European and OECD countries and recommended that Sweden further lower the start of formal grading to Year 4 (age 10). One of Ingvar’s major arguments for this recommendation, also put forward by the government, was that countries performing better than Sweden in the Programme for International Student Assessment (PISA) had a system of ‘early formal grading’. On the Swedish Television Broadcast’s Rapport (equivalent to the Six O’Clock News), the Minister stated that:

Almost the entire world grades their students earlier than Sweden does. Most countries grade from Year 1. Our neighbour country Finland grades students from Year 3 or 4. Countries that excel in PISA grade students very early. (SVT, Rapport [Swedish Television, Six O’Clock News], 20 August 2014; authors’ translation)

By nominating a distinguished neuroscience professor to review and recommend new grading policies – putting forward implicit causal claims that early formal grading leads to higher achievement – the Minister sought to effectuate an assessment reform.

Basing a controversial reform on implicit causal claims about the ‘world situation’ prompted researchers to investigate how the information about countries’ grading policies was obtained. In the Ministry memorandum Professor Ingvar relied on implicit causal inferences when listing students’ ages when schools embark on formal grading in the OECD countries. The reference given for that list was: ‘see for example OECD 2013’ (Utbildningsdepartementet, 2014: 37). However, this OECD publication did not include such information. When called upon, Professor Ingvar said that the information came from the Ministry of Education, which had attributed it to the OECD. When the Ministry was confronted with the lack of evidence in its references, the government official admitted that ‘there unfortunately was a mistake in the reference list’ (Lundahl, 7 October 2014, personal communication, U2014/5534/S School Unit, Ministry of Education; authors’ translation). The government official then explained that the information was gathered partly from the Eurydice network and partly through contacts with the ministries of education in other countries. The question of how this information was constructed remained unanswered and the information was therefore difficult to verify.

The aim of this paper is to explore the basis for the inferences drawn by the Swedish Ministry of Education and its consultant, and thus the legitimacy of the policy recommendations put forward with respect to reformed grading policy in Sweden. The case is illustrative of new trends with regard to national governments’ policymaking and policy borrowing. We examine how policymakers legitimate change through the use of policy descriptions of other countries, as provided by supranational agencies, and the nomination of consultants to review and propose new policies based on this comparative policy data. The paper first elaborates on theoretical perspectives on policy borrowing and policy legitimation. Examples from educational assessment policymaking in Sweden and beyond are used to establish the distinctions between collaboracy, agency and consultancy modes of policy legitimation. Second, the data and methods used to analyse the contemporary case of policy legitimation of grading policy are outlined. Third, the paper undertakes a two-step analysis illuminating problems related to structure, labels and classifications of Eurydice data and the comparability of the meaning of ‘grades’ and ‘grading’ across countries. Fourth, the article discusses the emergence of new modes of policy legitimation in relation to other studies that observe similar developments within and beyond the Swedish context. The paper concludes by addressing the implications of these new modes of policy legitimation both for policymaking and research, and calls for educational research communities to give more attention to the (mis)use of Eurydice data in policy and research deliberations.

Theoretical perspectives on policy legitimation

Policy borrowing has received increased attention in educational research over the past few decades (Cowen and Kazamias, 2009; Schriewer, 2014; Steiner-Khamsi, 2004, 2010; Steiner-Khamsi and Waldow, 2012) in tandem with the growing international policy discourse sparked by international comparative studies of student achievement (Benveniste, 2002; Kamens, 2015; Pettersson, 2008). While policy borrowing, strictly interpreted, refers to the situation when ‘policy makers in one country seek to employ ideas taken from the experience of another country’ (Phillips, 2004: 54), the term has drifted to a more general meaning related to how a nation’s policy is influenced by other countries. One aspect of policy borrowing is that of legitimating national policy by referring to policies in other countries.

Jürgen Schriewer (1988) discusses how descriptions of foreign educational systems and their practices serve as frames of reference to specify appropriate reforms of a given nation’s education policy. Simplifying Schriewer’s (1988) perspectives, we can say that policies can gain or sustain legitimacy by referring to (1) scientific principles or to (2) values or value-based ideologies. With the latter, reaching a consensus can be difficult. Thus, externalisation to ‘world situations’ can be a useful strategy for objectifying value-based reasons for decision-making in education, accomplished in the forms of historical descriptions and/or statistical documentations that are recognised as scientific (cf. Schriewer, 1988: 62–72). As such, referring to other countries can make value-based policymaking more legitimate, as the values – through externalisation to world situations – can reappear as scientific principles that have higher legitimation potential than values alone. For example, referring to Finland’s grading system and to their high rank in PISA in order to legitimate a similar grading system in Sweden can be perceived as legitimation by externalisation.

Policy-borrowing literature often draws on neo-institutional theories that give attention to what DiMaggio and Powell (1983) described as different processes of institutional isomorphism. The theory of institutional isomorphism suggests that organisations (or countries) become similar due to external or hierarchical pressure (coercive isomorphism), through modelling other organisations (mimic isomorphism) or through organisational norms (normative pressures). In the case of national states’ policymaking in education, these perspectives draw attention to how policymakers interact with one another, often facilitated by agencies such as the World Bank, UNESCO, the European Union and – especially these days – the OECD. These processes lead organisations to mimic one another’s behaviour, or countries to borrow one another’s policies.

Grek and Ozga (2010: 706) suggest that if one wants to predict and understand why and where policy is moving, one should be looking at the management of knowledge, rather than at policy itself. A nation’s policy legitimation is often mediated by structural comparisons in which data reduction and classification may or may not be standardised (see also Lundahl, 2014). Grek (2013: 698) views comparison not simply as informative or reflective: ‘In fact, it fabricates new realities and hence has become a mode of knowledge production in itself’. This type of policy legitimation is seldom made explicit, which becomes a problem in policy areas where the juridical and political terms are highly institutionalised and embedded in the nations’ distinct traditions. Educational assessment, particularly the formal assessments and national instruments underpinning meritocratic procedures, often relies on ‘taken for granted’ information because ‘all’ members of the national political contexts have undertaken these assessments. This implicitness becomes particularly problematic when self-reported representations of national policies inform other countries’ policymaking, which is the case with the Eurydice data that we investigate in this paper.

However, we believe that a strategic approach to synthesising and using other countries’ policies, which Schriewer brings to our attention, is better labelled ‘policy legitimation’ than ‘policy borrowing’, acknowledging that the domestic setting – the need to legitimate a government’s policies and ideologies – often is the important driver in this type of policy borrowing. We do not define policy legitimation as an entirely cynical and strategic part of policy deliberations. Even if politicians and other policymakers often have a most sincere belief that the outcomes of their reforms will be for the better, there are usually parallel strategies for maximising the chances that these beliefs will be received as legitimate.

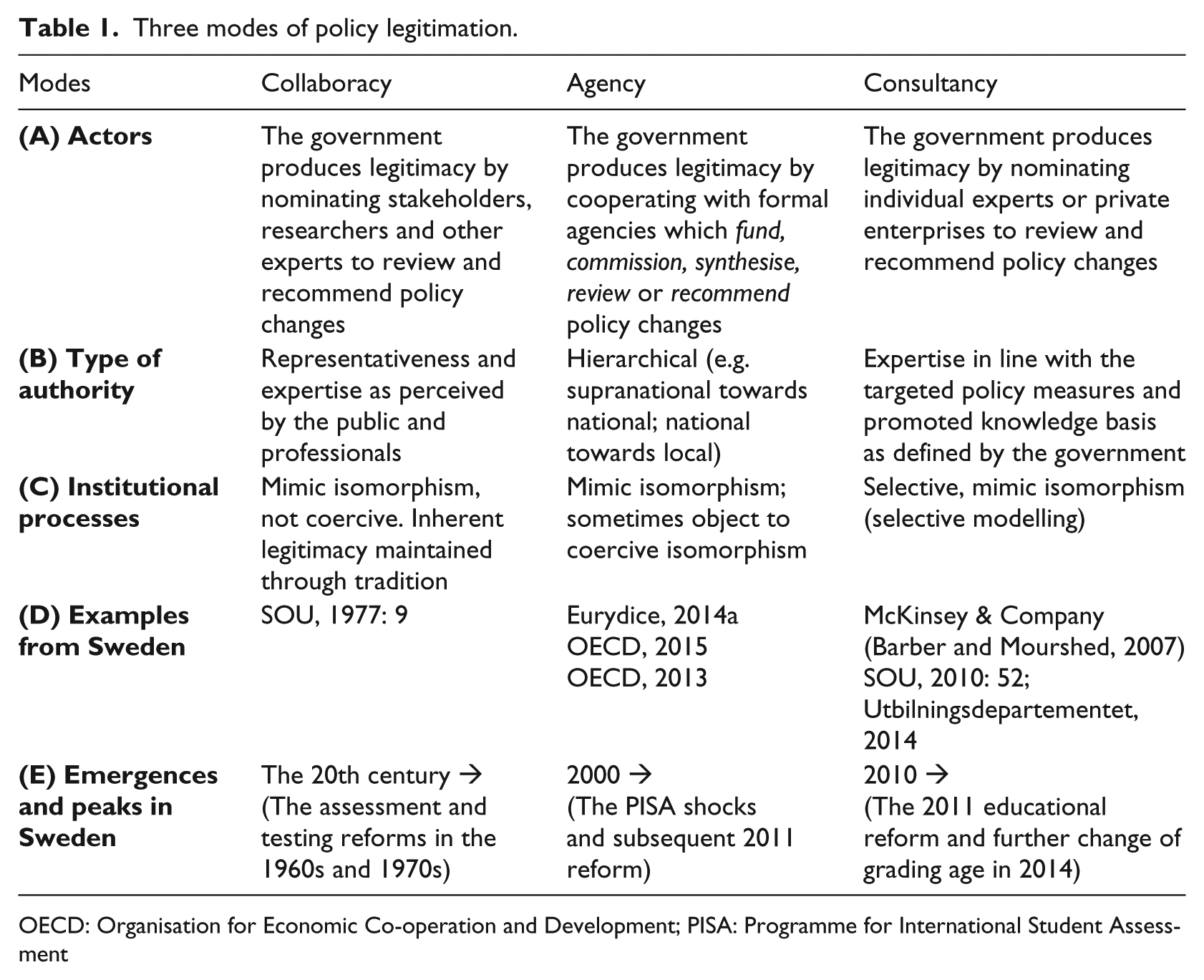

Table 1 outlines the three modes of policy legitimation – collaboracy, agency and consultancy – as a framework for understanding different types and sources of legitimacy in national states’ policymaking. The framework gives attention to the type of actors (A), their type of authority (B) and the type of institutional processes (isomorphism) (C) that produce the legitimacy. Further, Table 1 includes examples from Sweden’s involvement in international research and policy deliberations and use of agencies and consultants (D), in addition to the identified emergences and peaks of the three modes of policy legitimation (E).

Table 1. Three modes of policy legitimation.

OECD: Organisation for Economic Co-operation and Development; PISA: Programme for International Student Assessment

Collaboracy mode of policy legitimation

Collaboracy[1] is a mode of policy legitimation in which established actors – stakeholders, researchers and other experts – take the existing professional practice as the point of departure when reviewing policies and practices elsewhere. It can be understood through a Weberian perspective on traditional authority (Zymek, 2003). In Sweden there is a long-standing tradition of collaborating with stakeholders when the government formulates and reviews policies (Musial, 1999). Before the government draws up a legislative proposal for a new policy, it may choose to appoint a special expert or group – officially known as a one-man committee of inquiry or a commission of inquiry – to investigate the issues in question. Reporting on matters in accordance with a set of instructions laid down by the government, these operate independently and may include or co-opt experts, public officials and politicians. The reports are published in the Swedish Government Official Reports series (Statens Offentliga Utredningar (SOU)). After a committee has submitted its report to the responsible minister, it is sent to relevant authorities, stakeholders and the public for consideration. These are given an opportunity to express their views before the government formulates and presents a legislative proposal to parliament. As such, reforms undertaken are prepared by everyone who is part of the education system. Characteristic of this mode of policy legitimation is the way an expert committee in the 1970s reviewed and discussed grading age for up to a decade before conclusions and policy recommendations were presented to the government (Lundahl, 2006).

Agency mode of policy legitimation

The agency mode of policy legitimation involves formal agencies that shape policymaking by funding or commissioning policy interventions, by synthesising policy data from different countries, or by reviewing and recommending policies. In the context of national states’ policymaking we draw on Dale’s (2005) use of the concept supranational to give attention to a range of agencies which have been observed to increase influence on national states’ policymaking (Grek, 2009, 2013; Ozga et al., 2011), such as the OECD, the European Union, the World Bank and UNESCO. Whether these international features of policy brokering take the form of Europeanisation (Grek and Lawn, 2012) or globalisation (Dale, 2005), they both reflect and condition national states’ governing processes.

In the agency mode of policy legitimation, mimic isomorphism may be amplified by the coercive power of the respective supranational agency, for example, through the conditional benefits it offers. An example of coercive power is when the World Bank requires education systems to transform themselves to meet the demands of the global knowledge economy (Robertson, 2005) and western neo-liberal fiscal policies (Jones, 2004). This variant of the agency mode of policy legitimation may effectively compel countries to accept supranational agencies’ testing and accountability policies to receive benefits from, for example, the World Bank (Benveniste, 2002).

In this paper, we are, however, mainly concerned with mimic isomorphism, both with respect to the construction of comparative policy data that form the basis for modelling and with the way such policy information is used. This type of legitimation has become more prominent in the new millennium. Cussó and D’Amico (2005) observe increased competition between such agencies, which led UNESCO to align with the other main knowledge brokers and collectors of educational statistics. Dale (2005: 119) notes, ‘This places great power in the hands of the agencies setting up the statistical variables that would determine what the “proper” outcomes of education should be’. ‘Quick’ global and European-level policy comparisons increasingly inform national states’ policymaking (Grek and Lawn, 2009, 2012; Rizvi and Lingard, 2010). Lundahl and Waldow (2009) identify how ‘quick languages’, for example, comparisons of national states’ outcomes on international tests, frame and make educational policy discourse accessible to wider circles of participants. While public attention seldom reaches below the surface of these outcomes comparisons, government officials and politicians delve deeper into the datasets in search of recipes for successful policies. Thus, international agencies, such as the European Union and the OECD, are increasingly used by policymakers to provide synthesised comparative data that can be used in national reform agendas.

While this mode can be associated with the large influence of the PISA study (Grek, 2009), the OECD’s role in national states’ agency mode of policy legitimation, particularly in relation to accountability policies, long preceded the PISA tests. Established in 1968, the OECD’s Centre for Educational Research and Innovation began providing policy recommendations to member countries (Lundgren, 2011). Yet, following the implementation of the PISA tests, the countries increasingly gave emphasis to the agency’s policy reviews and recommendations (Pettersson, 2008). Drawing on Börzel and Panke (2013), Prøitz (2015) demonstrates a sequential approach of uploading and downloading that shaped the OECD’s (2013) policy review Synergies for Better Learning. In Sweden, the OECD report Improving schools in Sweden: An OECD perspective (OECD, 2015) is a recent example of how the government uses OECD reviews and recommendations to legitimate its policies. The European Union-affiliated Eurydice network does not have an active role in recommending policies; however, its synthesised policy descriptions of the European countries are located in an online database that is widely used by policymakers to inform decision-making and by researchers undertaking comparative studies.

Consultancy mode of policy legitimation

The consultancy[2] mode of policy legitimation is related to governments’ utilisation of individual consultants or private enterprises to review and recommend policies. Lindblad et al. (2015) observe that global private enterprises such as McKinsey & Company have become increasingly involved in policymaking in recent years. Gunter et al. (2014: 519) observe that consultants are increasingly recognised as ‘external knowledge actors who trade knowledge, expertise and experience, and through consultancy as a relational transfer process they impact on structures, systems and organisational goals’. Coining the term ‘consultocracy’, Hood and Jackson (1991) identified a trend in which ‘non-elected consultants are replacing political debate conducted by publicly accountable politicians’ (Gunter et al., 2014: 519). Reviewing public policy studies, Gunter et al. (2014) observe that rapid, radical and often incoherent changes in public administration can be understood in view of consultancy businesses playing a substantial role in both responding to and generating reform. They argue that this has become the new ‘normal’ context of policymaking in the United Kingdom. We call these developments a new consultancy mode of policy legitimation.

McKinsey & Company’s report How the world’s best performing school systems come out on top (Barber and Mourshed, 2007) is an example of the increased influence of consultancy enterprises that also received extensive public attention in the Swedish media[3]. As such, policymaking in Sweden is increasingly conditioned by ‘the public eye’ (Rönnberg et al., 2013: 178). The need to accommodate the press and social media’s demands for brief information is characteristic of the consultancy mode of policy legitimation.

While in the UK it has become more common to nominate private enterprises to legitimate policy changes, in Sweden the nomination of one-man inquiries is typical of what we call consultancy[4]. The nominations of Professor Ingvar to undertake a review entitled Biological Factors and Gender Differences in School Outcomes (SOU, 2010: 52) and to produce the memorandum A Better School Start for All: Assessment and Grading for Progression in Learning (Utbildningsdepartementet, 2014) – reviewing and recommending new policies for grading age – are recent examples of one-man inquiries in the field of educational assessment and grading.

The proposed classification of three modes of policy legitimation can be helpful in coming to terms with different strategies for undertaking and legitimating policy changes. In historical studies, such as the above brief examples from Sweden, the modes can be used to identify eras and milestones. They enable us to pinpoint how the time allowed for policy deliberations in the collaboracy era marks a contrast to the contemporary ‘fast policy’ era, where approaches that we have called agency and consultancy are more efficient modes of policy legitimation. The concepts contribute to the well-established literature on policy borrowing between countries. However, the modes we identify are principal ones that can also relate to domestic and local settings. Furthermore, the modes of policy legitimation should be understood as typologies that can occur to various extents, independently and simultaneously. For example, the collaboracy feature of circulating policy recommendations (that are proposed by expert and stakeholder committees) on referral still operates in Sweden, although with less legitimating power due to the new modes of policy legitimation that confront the traditional values and approaches to policymaking that the profession was accustomed to. In the following sections, the focus is on how consultancy operated in tandem with agency when the Swedish government used comparative data on countries’ assessment policies to effectuate an assessment reform.

Data and methods

The information provided by the Eurydice network is the main basis for the empirical analyses in this paper. As we have shown, data from Eurydice was used in attempts to effectuate an assessment reform implementing formal grading in lower school years by referring to ‘world situations’. To scrutinise the validity of implicit causal claims that this policy change relied upon, we undertake a two-step empirical investigation guided by two research questions. First, how is the representation of countries’ policies conditioned by the Eurydice database’s headings and classifications? Second, when asked to describe the system of formal grading in a country in Eurydice, what kinds of descriptions do the various countries provide?

Facilitated by the European Commission’s Education, Audiovisual, and Culture Executive Agency, Eurydice provides European-level analyses and facilitates comparison of education policies in Europe, with the aim of assisting policymakers responsible for national education policies (Eurydice, 2014a). Eurydice can be described as a form of Web-based encyclopaedia using a structure similar to, for example, the country systems report in the International Encyclopaedia of Education (Husén and Postlethwaite, 1985, 1994; see also Lundahl, 2014).

Research into social knowledge has often been concerned with the (micro) processes that shape scientific knowledge (e.g. Camic et al., 2011). Encyclopaedias are often claimed to be collections of facts – that is, a knowledge storeroom. Typically, we perceive facts as ‘unconstructed by anyone’ (Latour and Woolgar, 1979/1986). But producing an encyclopaedia is not a straightforward and simple editorial process. Sections, headings, topics and the structure of the thematic articles are constantly changed based on new insights and on circumstances beyond anyone’s control. A better way to frame the knowledge in an encyclopaedia would be to understand it as a product of a specific epistemic culture – the actual and theoretical conditions of the production of knowledge (Knorr Cetina, 1999). To put it differently, it is not only ‘truth criteria’ (or the preservation/development of knowledge) that can be seen as a reason to produce an encyclopaedia. Encyclopaedias can be treated as we treat other kinds of knowledge. Knowledge is geographical, sociological and chronological (Burke, 2012). In other words, we can expect editors of an encyclopaedia such as Eurydice to struggle with geographical and periodical frames, translations and issues in deciding on relevance and limitations of content and of contributors.

There is not much written about the use of this type of comparative data describing countries’ educational systems (Lundahl, 2014). To investigate the quality of the comparative data used to effectuate the assessment reform in Sweden, we investigated the Eurydice data sources that the Ministry and its consultant used, and established an overview of European countries’ policies on educational assessment and grading. In addition to the respective country sections of the Eurydice material obtained during fall 2014, our analysis draws on two Eurydice schematic diagrams displaying the ‘Compulsory education in Europe’ (Eurydice, 2014b) and the ‘Structure of the European education systems 2014/15’, published in November 2014 (Eurydice, 2014c). We classified and structured each country’s information on primary and secondary education to provide a comparable overview, as shown in Appendix 1. The initial structure comparison of the Eurydice content demonstrated vast differences between the countries’ frameworks of reporting. This implied a need to undertake the analyses through an abductive approach to establish suitable categories for classification (Schreier, 2012).

Schriewer (1988: 33–34) emphasises that comparative research should ‘not consist in relating observable facts but in relating relationships or even patterns of relationship to each other’. Accordingly, procedures were undertaken for establishing the relationship between different segments of policies (e.g. school structure and tracked programmes), aiming to shed light on the (lack of) functional equivalence of the compared constructs (Schriewer, 2003). We do not possess the capacity and access to obtain insight into all policy structures and legal and political terminology, and thus we cannot give full accounts of these relationships. However, the vast differences that can be identified are sufficient to substantiate validity problems related to the comparability of education systems and the policy descriptions characterising these.

Substantial validity problems are related to the construction and use of data intended to facilitate such comparisons, including methodological challenges with regard to the classification of information from national education systems within standardised categories. Thus, different interpretations across the countries may cause variations in reporting on, for example, student age when schools embark on formal grading or the degree of student retention. These problems of constructing and using comparative policy data are equally complex for us as researchers and for policymakers aiming to legitimate policies by referring to other countries. Rather than aiming to achieve clear-cut distinctions that warrant generalisations, which may be what policymakers desire, our goal is to illuminate the immense (and implausible) challenge of painting a flawless portrait based on this mosaic of information.

Analysis of the construction and use of comparative policy data

In this section, we first analyse how the qualitative information about grading policies is structured in Eurydice and illuminate problems related to the construction and use of this ‘encyclopaedia’ with respect to the structure of headings and content and its implications for the comparison of countries’ policies. Acknowledging these shortcomings, we continue comparing Eurydice’s qualitative information about countries’ policies for student age at which countries embark on formal grading.

Analysis I: structure, labels and classifications in the Eurydice encyclopaedia

Appendix 1 provides the full accounts of the analysed Eurydice data. The methodological challenges of using Eurydice include but are not limited to the problems of classifying and synthesising information. Compiling and comparing information on the grading systems in Europe is affected by differences in: the age at which students start school, the length of compulsory education and its structure (e.g. comprehensive versus tracked programmes), associated selection procedures, practices for issuing formal certificates in early years, and the status and use of these. Eurydice reports, such as the comparison in ‘National testing of pupils in Europe’ (Eurydice, 2009), use other sources to substantiate inferences. In this study, however, we have purposively restricted the investigation to qualitative data in the online Eurydice database to illuminate problems of using (primarily) this type of data as the basis for comparison and borrowing.

Thus, a first challenge was to determine the age at which children start school, as this is regulated in different ways across the countries. In many countries preschool is compulsory; however, preschool is integrated in the compulsory education system to various extents. The length of compulsory education can therefore vary substantially depending upon how one classifies starting age. This, in turn, has implications for the determination of when schools embark on formal grading in the various countries.

In Eurydice, countries are classified according to education structure in order to facilitate comparison across countries. Sweden is classified as ‘single structure’, which relies on the assumption of primary and lower secondary being integrated, in contrast to countries such as England and Germany, which are classified and distinguished as ‘primary education’ and ‘secondary education’. This reflects a fundamental structural difference between the education systems, where a vast number of countries, like Sweden, have a comprehensive school system, whereas others, such as Germany and England, have a clearer separation at the intersection between primary and secondary education.

These differences are important to consider when comparing countries’ grading policies. In education systems where grades inform the admission to further education, grades given in Year 4, 5, 6 or 7 may have a different role from those in the Nordic countries, where there is no such transfer within compulsory education. In countries that employ a firm separation between primary and secondary education, the grades may serve an important role in admitting students to differentiated secondary programmes. These purposes of grading do not exist in countries with an integrated compulsory education system (called single-structure education in the Eurydice data).

However, among countries that in Eurydice are labelled and classified as the same type of education system structure, there are substantial differences. To better comprehend the structural differences that in Eurydice are distinguished in two categories (single structure and primary/secondary), we introduce a third category to distinguish between non-single structure countries that have a firm separation between primary and secondary education and countries that also track students when they commence (lower) secondary education. We use the following classification for countries’ education structures:

1. Single structure (comprehensive education)

2. Primary secondary structure (comprehensive lower secondary programmes)

3. Tracked secondary structure (differentiated lower secondary programmes)

In the first group, we find Bulgaria, Croatia, Denmark, Estonia, Finland, Hungary, Iceland, Norway, Portugal, Slovakia, Slovenia, Spain, Sweden and Turkey. In the primary secondary group, we find Cyprus, France, Greece, Lithuania, Malta, Poland, Romania and the countries of the United Kingdom. In the tracked secondary group, we find Austria, Belgium, Germany, Ireland, Liechtenstein, Luxembourg and the Netherlands.
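The three-group classification can be written down as a small lookup table, which makes explicit which countries fall outside it. The following Python sketch is illustrative only: the names `EducationStructure`, `STRUCTURE_BY_COUNTRY`, `classify` and `countries_in` are our own assumptions and are not part of Eurydice or of the original analysis. The country lists are those given above, with the United Kingdom entered as a single entry for simplicity.

```python
from enum import Enum


class EducationStructure(Enum):
    """The three structure categories used in this analysis."""
    SINGLE = "Single structure (comprehensive education)"
    PRIMARY_SECONDARY = "Primary secondary structure (comprehensive lower secondary programmes)"
    TRACKED_SECONDARY = "Tracked secondary structure (differentiated lower secondary programmes)"


# Country lists as reported in the text; "United Kingdom" is a simplification
# (the text refers to the countries in the United Kingdom collectively).
_GROUPS = {
    EducationStructure.SINGLE: [
        "Bulgaria", "Croatia", "Denmark", "Estonia", "Finland", "Hungary",
        "Iceland", "Norway", "Portugal", "Slovakia", "Slovenia", "Spain",
        "Sweden", "Turkey",
    ],
    EducationStructure.PRIMARY_SECONDARY: [
        "Cyprus", "France", "Greece", "Lithuania", "Malta", "Poland",
        "Romania", "United Kingdom",
    ],
    EducationStructure.TRACKED_SECONDARY: [
        "Austria", "Belgium", "Germany", "Ireland", "Liechtenstein",
        "Luxembourg", "Netherlands",
    ],
}

# Invert the grouping so that each country maps to exactly one category.
STRUCTURE_BY_COUNTRY = {
    country: structure
    for structure, countries in _GROUPS.items()
    for country in countries
}


def classify(country):
    """Return the structure category, or None if the country is in none of the three lists."""
    return STRUCTURE_BY_COUNTRY.get(country)


def countries_in(structure):
    """Return the alphabetically sorted countries assigned to a category."""
    return sorted(c for c, s in STRUCTURE_BY_COUNTRY.items() if s is structure)
```

A call such as `classify("Sweden")` returns `EducationStructure.SINGLE`, whereas a country that appears in none of the three lists returns `None`. Returning `None` rather than forcing a category is a deliberate choice in this sketch, since it keeps unclassifiable cases visible instead of hiding them in the nearest group.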

When looking further into some countries it becomes evident that the Eurydice data may be misleading in its classification. One example is Latvia, which we first classified as a single-structure country based on the information from Eurydice. When investigating further, however, it appears that students are differentiated already at lower secondary and thus should be classified into group 3. Italy is another example. It is not classified by Eurydice as a single-structure education system; however, according to the Eurydice data it is possible for schools to provide integrated comprehensive schooling. Thus, for these two countries we have used the label ‘inconsistency’. This label may prove to be appropriate for several other countries too; Latvia and Italy are just mentioned as examples, to explicate the problems of classifying countries based on the information structure of the Eurydice database.

With respect to students’ age when they are differentiated (see Appendix 1), we generated the data from what was reported in the Eurydice section called ‘progression of pupils’. While we could classify most countries based on this information, nine countries had not provided the necessary information. Furthermore, even within the primary secondary structure countries we find contradictory information in the Eurydice data. For instance, France has a complex provision of different types of schools within the public education system. This provision, however, is not based on classical ‘tracked’ differentiation (such as in Germany). Thus, France was classified as primary secondary, but with differentiation at age 11 (after six years in school), hence a type of hybrid between the primary secondary and tracked secondary structures. England was likewise classified as primary secondary, with differentiation at age 16 (after 12 years of schooling), as Eurydice does not report that English students undertake secondary schooling that leads into different tracks. Looking closer into this, however, the provision of private (Academy) schools implies a substantial segregation of students based on socio-economic premises that in effect leads to earlier differentiation for many students.

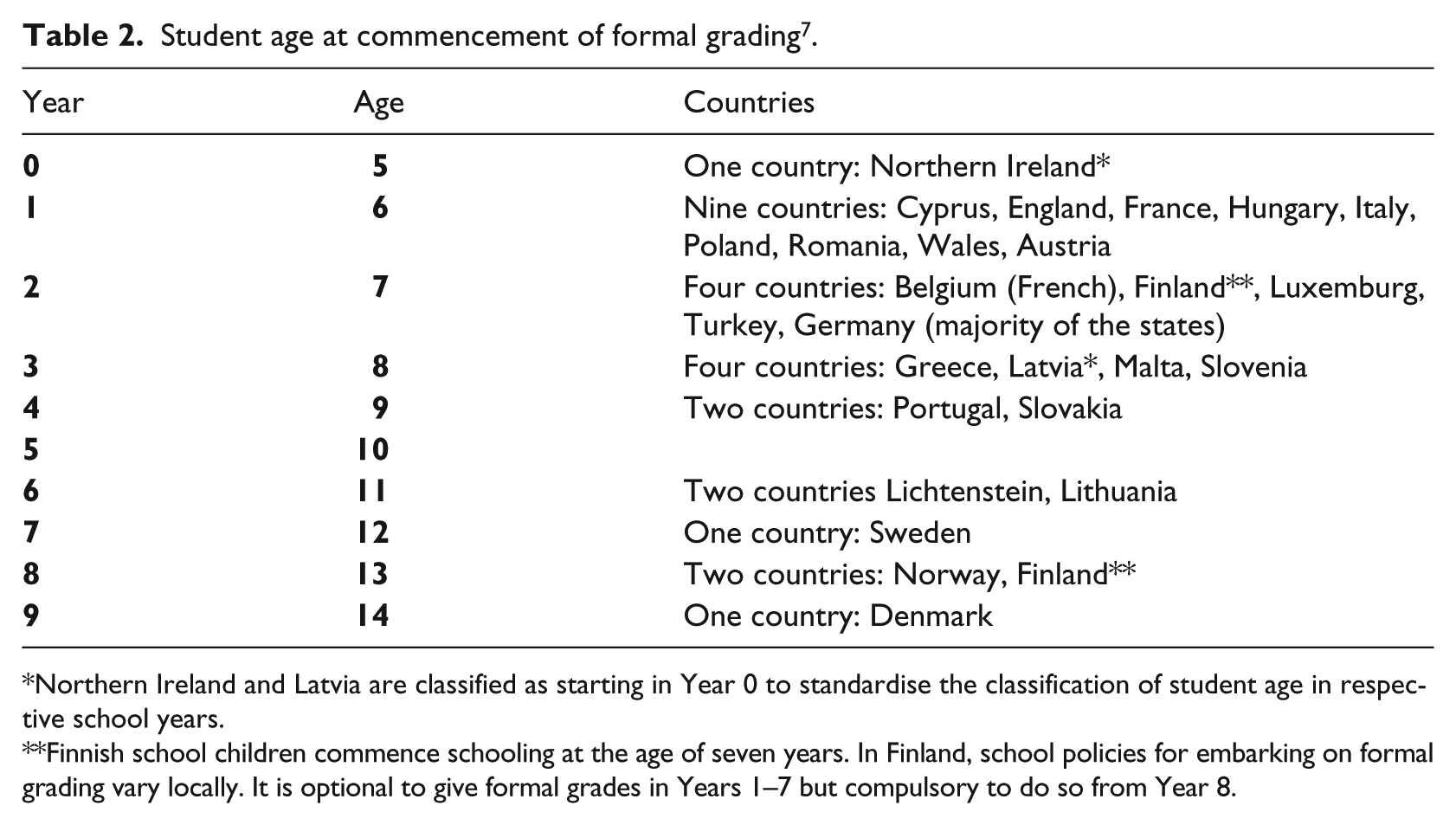

The above examples are mentioned to bring attention to the complexity associated with this type of data, and the need to use it with caution. Comparing a basic item such as the age at which children start school is a complex issue to which Eurydice and the OECD give much attention, as it has substantial implications for comparing nation states’ educational achievements. It also affects the comparison of the year in which countries begin formal grading of students. The examples illustrate just a few of the complex factors that condition the premises of grading policies. They illuminate how difficult – or outright impossible – it is to arrive at a comparable notion of ‘grading age’. One should therefore use the information provided in Appendix 1 and Table 2 with utmost caution, and note that they have been generated to substantiate variations rather than to facilitate comparison.

Table 2. Student age at commencement of formal grading.

Northern Ireland and Latvia are classified as starting in Year 0 to standardise the classification of student age in respective school years.

Finnish school children commence schooling at the age of seven years. In Finland, school policies for embarking on formal grading vary locally. It is optional to give formal grades in Years 1–7 but compulsory to do so from Year 8.

Analysis II: grades as functional equivalent constructs of comparison?

When we examine the grading policy information in Eurydice, what on the surface appears as uncomplicated information – which the Swedish Ministry of Education and their consultant used as hard facts about grading – has embedded several nuances. Grading usually refers to a formal assessment at the end of a semester or school year based on the student’s achievement in relation to specific criteria or ranked relative to other students; for example, Brookhart (2015) defines a grade as given by teachers representing the sum of many achievements and not just a single test score. She perceives it as an internal tool of assessment rather than an external one, such as for various national tests and examinations. Other scholars have other perceptions of what a ‘grade’ is. So too do policymakers.

Thus, ‘grading’ is an extremely complex phenomenon that takes many different forms. For example, a policymaker may define it as formal grading when a student sits through a national test in, for example, Year 2, whereas other policymakers, or a person in charge of synthesising the information, may view the national test as a rare exception from the normal environment in which formal grading does not occur.

A problem, which indeed is what the Eurydice network aims to help policymakers with, is the issue of sharing information that for the most part is available in the nations’ domestic languages. For researchers, it is important to examine other sources, particularly scholarly articles about countries’ education policies, to control for misinterpretation or biased representations of national policies (Dale and Robertson, 2009). Thus, mastering the relevant language is pivotal. The problem of language and translations causes substantial ‘noise’ to the ‘uploading’ of policy data in Eurydice. In some cases, the English used is downright poor. Thus, it is with the greatest care that we may draw some conclusions based on comparisons of countries’ grading systems. This applies to both educational research and policymaking.

When studying how the European countries describe their grading systems in Eurydice, we first note that 11 countries do not even mention at which point they start grading student achievements. This means that it is difficult to control general statements about the number of countries that give grades, or about when and how such grades are given. This information is sometimes only available through hyperlinks to the countries’ national education authorities, in their respective native languages. Thus, nearly one-third of the countries do not appear to view grading age as important information to be provided in the Eurydice database.

Nevertheless, as shown in Table 2, it is clear – and in line with the Swedish Ministry and its consultant’s claims – that most countries report student grading commencing earlier than in Sweden. This, however, is for different reasons and done in very different ways. There are various systems and principles for grading students (see also Lundahl et al., 2015). Below, we use Eurydice data to describe a range of countries that embark on formal grading in the early years, giving attention to the vastly differing descriptions hiding beneath these numerical comparisons.

Austria provides one conclusive grade at the end of Year 1 (age six years), sometimes together with oral supplements. It is not until Year 2 that the children receive grades for all subjects. Latvia also employs a system in which the students receive a more qualitative overall grade aged five and then receive grades on a scale ranging from 1 to 10 from Year 1 (age six) in the native language and mathematics. For the other subjects, the teachers give more qualitative judgements up until Year 3 (age eight), when schools embark on formal grading across all subjects.

In Cyprus, the students receive a progress certificate each year from Year 1 (age six), which shows whether the student passed the Year or not. Without this, they are not allowed to move up to the next class. At the end of Year 6 (age 11), the students receive a leaving certificate from primary school. In Turkey, students take a proficiency test at the end of Year 1 (age six) to determine what education they will receive in Year 2 (age seven). At the end of each year from Year 2 to Year 8, students take an examination in which they must receive the judgement Fair (3) to be allowed to complete the year. Also, Lithuania, although not giving grades in early years, has approved results of Year 1 as a prerequisite for moving up. Students can move up before completing Years 1–3 if they are believed to be able to cope with what the curriculum covers in these years.

In Finland, students have the right to a judgement from their first Year (age seven). The forms of these judgements are determined locally, and it is up to the local authorities to decide whether they want to give grades or oral assessments until Year 7. From Year 8 (age 13) onwards, grades are always numerical. France employs a system where the students’ results on various tests and examinations are summarised in a book (livret scolaire). This book is used as part of communications that teachers have with the children’s parents to give a continuous account of the child’s development. Hungary has a system in which teachers must give students regular ‘marks’ during the year and summarise them at the end with a rating. In Italy, at the end of each study period the students receive a summarised assessment document. Students are also graded on their conduct. In Poland, students are graded on a scale of 1 to 6, starting in Year 1 (age six).

These examples demonstrate that what, explicitly or implicitly, are perceived as grades are not functional equivalents when policymakers upload and download information to the Eurydice database and when policymakers and researchers use this information to compare countries’ grading policies. The different meaning of grading can be further illustrated with the examples of Cyprus, France and Sweden. In Cyprus grading is connected to retention, whereas in France it is considered a more formal feedback to the parents. In Sweden, the latter function, for example, is already achieved through what is called ‘written judgements’ (skriftliga omdömen): qualitative reports that parents receive from Year 1; but Swedish policymakers and researchers would not call that ‘grading’ in their Eurydice report.

We can draw the preliminary conclusion that the various content items related to grading policy are not functionally equivalent (Schriewer, 2003b), which undermines the validity of brief policy comparisons based on this type of data. Policymakers and researchers should consider that there are great differences with respect to the use of grades, when comparing ‘grading age’.

Discussion: the emergence of new modes of policy legitimation

The aim of this article was to demonstrate new trends with regard to national governments’ policymaking and policy borrowing, with increased use of individual consultants and international agencies to legitimate policy changes. As demonstrated above it is unlikely that a valid comparison of countries’ ‘grading age’ was even comprehensible for the Swedish policymakers. To make such a valid comparison would require substantial resources with respect to information generation, translation and validation procedures, requiring time that would far exceed what was assigned for the investigation.

Despite our illumination of the lack of a valid premise for drawing inferences from Eurydice with regard to student age at commencement of formal grading, we have also undertaken a correlation analysis relating the generated Eurydice information to the countries’ PISA scores (Lundahl et al., 2015). We find no evidence for the Minister’s remark that ‘early grading countries’ excel in PISA or that there is a causal relationship between these factors (which even in the event of any covariance would be a naïve and speculative inference to draw). Our analyses suggest that even if it is possible to rely on Eurydice data to reveal some fundamental differences when it comes to grading systems in Europe, there are large variations in how the countries describe these policies. The Eurydice data simply do not provide essential information on the reality of grading policies in Europe.

From the above theoretical and empirical accounts we can observe a historical development and shift in the modes of policy legitimation used by Swedish policymakers. The strong Swedish tradition of using stakeholder and expert committees that undertake comprehensive investigations declined after the beginning of this millennium. In the 1970s, the issue of formal grading was thoroughly discussed and investigated in Sweden in a traditional collaboracy fashion. Stakeholders and experts would work side by side in government committees for almost a decade to investigate ideologically disputed questions related to student age at commencement of formal grading. Fast-forward to the post-millennium era of policymaking, and we see that both agency and consultancy modes of policy legitimation, within half-year sequences, are at play when seeking to effectuate reforms. Increasingly, governments have nominated experts rather than stakeholders to the committees. Nowadays, three-quarters of the expert inquiries (SOUs) are one-man inquiries conducted by single experts in one- to one-and-a-half-year sequences (Petersson, 2013). This has been particularly evident when it comes to the field of educational assessment and grading, wherein each of the four major government reports over the past decades has been formulated at a steadily increasing pace, ranging from the nine-year inquiry of the 1970s (SOU, 1977: 9) to the one-year or less inquiries of today (SOU, 2010: 52; Utbildningsdepartementet, 2014). Correspondingly, these have changed from being parliamentary to one-man investigations (Lundahl and Jönsson, 2010).

The increased emphasis on the agency mode of policy legitimation may also indicate a shift from what Waldow (2009) describes as a distinctly Swedish tradition of ‘silent borrowing’. Comparing committee reports (SOUs) in the 1960s and 1970s, Waldow (2009: 486) argues that ‘Swedish political culture in the second half of the twentieth century was characterised by the belief in the rational, more or less non-ideological steering of economic, social and educational policy’. The recent OECD (2015) report and the consultant’s review of OECD data may indicate a change toward more use of OECD data in SOU policy deliberations. On a more general level, Ringarp and Waldow (2016) noted that, before 2007, international reference points were almost never used as an argument for reform in Swedish policy-making, despite Sweden participating in many ways in the international education policy-making mainstream. This seems to have changed around the year 2007, when the ‘international argument’ became prominent in the education policy-making discourse as a legitimatory device and justification for change.

As government-nominated committees (SOUs) are long-standing institutions in Swedish policy deliberations, there is much power associated with these committees. The changed composition of these committees, however, may not be fully acknowledged among the stakeholders and public. Green papers signify an authority and legitimacy that may be more reliant on profound tradition than reflected in the contemporary procedures for nominating committee members. Thus, governments can nominate ideological allies as experts with a mandate to produce desired policy recommendations based on ‘fast policy’ reviews provided by, or based on data obtained from, agencies such as the OECD, the European Union and associated networks (such as Eurydice). Mediated through the recognised SOU institution and format, these policy reviews and recommendations can provide scientific legitimacy for policy changes in line with the government’s ideology. It is a ‘perfect medium’ for ‘levelling up’ values and ideologies – through ‘world situations’ – to scientific evidence that in turn can inform and legitimate reforms.

Ringarp and Waldow (2016) argue that in countries like Sweden, with self-confidence as a pioneer country in education, facing PISA scores below average undermines this self-confidence and consequently makes externalising to world situations more attractive as a legitimatory resource. Large-scale assessments may appear particularly attractive as a legitimatory reference, they write, ‘as referring to them combines externalising to world situations on the one hand with externalising to scientificalness on the other. Thereby, two powerful sources of legitimacy are tapped at the same time’ (2016: 6).

As the PISA studies undergo rigorous validation procedures with respect to both quantitative and qualitative comparisons, these studies have shaped an era of general ‘trust in numbers’ (Lundahl and Waldow, 2009). This gives reform arguments more legitimacy when based on supranational agencies such as the OECD and the European Union (more or less) irrespective of whether the data undergo rigorous validation procedures. Given nation states’ extensive use of such comparisons in policymaking, there are reasons to critically examine the thoroughness of ‘express’ reviews and recommendations. It appears that simply referring to supranational agencies such as the European Union and the OECD as the basis for this type of knowledge brokering provides the desired legitimacy despite being based on vague, incomplete and at times misleading information. Our analysis demonstrates that comparisons of qualitative policy descriptions in Eurydice should be treated with utmost caution. To have some comparative value, the countries’ information on the student age at which schools embark on formal grading should be tied explicitly to International Standard Classification of Education levels (as done for standardised tests in the Eurydice 2009 report) and the meaning of grading explicitly defined.

The above analysis also sheds light on substantial problems related to conceptualisation and classification when constructing policy information. This further relates to how agency and consultancy modes of policy legitimation can operate when governments use comparative policy data to show that reform ideas are in line with either the normal or the successful ‘world situation’. Our empirical analyses demonstrate that this information is difficult to validate, and we claim that in some cases the objects of comparison – such as student age when schools embark on formal grading – are not functionally equivalent at all. Lundahl et al. (2015) discovered in a systematic research review that there is limited comparative research on assessments in general, and on grading in particular – a situation that also cements the problem of implicit borrowing; an implicitness that becomes problematic given that countries’ ‘lending’ (Steiner-Khamsi, 2010) or ‘uploading’ (Prøitz, 2015) of national policies inform other countries’ policymaking.

Unfortunately, Eurydice does not call our attention to the principal challenges associated with the quality of the qualitative data that it provides to policymakers and researchers. Eurydice’s website slogan, Better knowledge for better policy, creates high expectations of sound policy data (Eurydice, 2015). While this may be true for the quantitative information provided, and its synthesised reports, our investigation suggests that the qualitative descriptions of the countries’ educational assessment policies do not meet these expectations. We argue that Eurydice fails to meet common academic standards of transparency with regard to the construction of comparative policy data, and furthermore that it should have been explicit about potential threats to the validity of the comparisons and information provided. This becomes particularly problematic when this type of comparative policy data – synthesised and facilitated by supranational agencies – is used by consultants working with limited resources, with short deadlines and a mandate with a narrow focus, in order to shed light on political issues on behalf of governments.

Gunter et al. (2014) identify the relationship between the state, public policy and knowledge construction as an important site for analysing the role of consultants. The present study has unravelled an example of the interplay between these actors and processes. It substantiates a phenomenon Grek (2013) has identified in which previous accounts of ‘knowledge and policy’ or ‘knowledge in policy’ shift to a new reality where knowledge is policy. ‘It becomes policy, since expertise and the selling of undisputed, universal policy solutions drift into one single entity and function’ (2013: 707). We have proposed three concepts of policy legitimation strategies that help come to terms with the interplay between national policymakers, their use of comparative data provided by supranational agency actors such as the European Union and the OECD, and the use of consultants to review and utilise this information in policy recommendations. The collaboracy, agency and consultancy modes of policy legitimation can moreover be viewed as characteristics and milestones related to how the act of policy legitimation has emerged over time.

Conclusion

Our theoretical discussion highlighted the improbability that comparative policy data translate well across countries, due to the distinctly different national contexts of formulation and interpretation. Our empirical investigations illuminated validity problems related to structuring, labelling and classification of policy information. Further, we have demonstrated significant problems of identifying conceptual equivalence with respect to the meaning of ‘grading’. In other words, the validity of comparisons of different nations’ grading systems is low. Thus we have illuminated that the Swedish policymakers’ approach to effectuate the assessment reform – claiming a need for coherence with the European and global ‘normality’, as suggested by a one-man expert review and based on Eurydice data – cannot be substantiated with valid scientific evidence.

In sum, the paper offers a remarkable example of how agency and consultancy modes of policy legitimation can operate in tandem when policymakers utilise comparative data to effectuate reforms. We have demonstrated that this approach relied on misinterpretations and inferences based on global and European-level information concerning policy structures that do not stand the test of scrutiny. As such, we have illuminated an example of European policy isomorphism (DiMaggio and Powell, 1983) that relies on, at best, wrong inferences that nevertheless created new national semantics for governing education, which were used to effectuate a controversial assessment reform.

We have unravelled fundamental problems of transparency associated with this type of policy information with implications far beyond the Swedish case of policy legitimation investigated in this paper. What then causes governments to use this type of policy information? In a ‘fast policy’ era in which ‘the complex folding of policy lessons derive from one place into reformed and transformed arrangements elsewhere’ (Peck and Theodore, 2015: 3) – at an all-time high speed – governments are constantly searching for ways to legitimate their reforms. At the same time, the influence from supranational agencies such as the OECD and the European Union is increasing, the market for education consultancy companies is growing and, furthermore, individual consultants are more commonly used for inquiries nowadays. Single experts serve as governments’ consultants, with limited time frames, and risk reproducing data and information of poor validity due to shallow contextual understanding.

It can be questioned whether national policymakers, supranational agencies, consultancy companies and independent consultants have the time and capacity to fully grasp the fundamentally different premises of education systems that exist across countries. It is imperative that policymakers ask these types of questions of agencies and consultants to validate the policy reviews and recommendations they commission and receive. It is important for policy researchers to acknowledge that this ‘fast policy’ era may pose a threat to the legitimacy of comparative reviews and the scholarship of comparative educational research itself. Thus, we argue that the construction and use of comparative policy data in European policymaking should be given higher priority and be examined thoroughly in future research by the comparative education community.

Appendix 1. A comparison of Eurydice data on educational assessment

In a report to the Swedish Research Council (Lundahl et al., 2015) we generated a comparison of countries’ educational assessment and grading systems based on available data from the Eurydice network. The data were generated in fall 2014. Tables 3 and 4 give an overview of the complex information. The tables complement one another. Table 3 overviews the structure of compulsory education including required formal grading. Table 4 overviews the grading scales for the respective countries. Information about the classification of information for each column is given below. Some errors or lost information may occur. Asterisks (*) indicate amendments made to facilitate consistent classification. These are explained below the tables.

Acknowledgements

The authors are indebted to members of the research group Curriculum Studies, Leadership and Educational Governance (CLEG) at the University of Oslo – in particular, Adjunct Professor II Helen M Gunter – and members of the research group Education and Democracy, Örebro University, and to doctoral student Judit Novak at Uppsala University, for comments on the manuscript. The paper is part of the PhD project Assessment and Selection in the Scandinavian Education Systems (ASSESS), funded by the University of Oslo.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the Swedish Research Council (grant number [dnr/ref] 2014-1952, From Paris to PISA. Governing Education by Comparison 1867-2015).