Abstract

Romania’s integration into the European Union is fraught with cultural stereotypes. One dominant narrative is that the country creates ‘forms without substance’: meaningless institutions without adequate personnel or intellectual capital. In this paper, we investigate whether this popular stereotype adequately describes higher education reforms in recent years. We ask, ‘what is the meaning of “quality” in the reforms of Romanian universities?’ We present our findings based on an analysis of policy documents and 186 semi-structured interviews with administrators, professors and students in five universities. The results show that people in universities have engaged in a process of interpretation and negotiation with the new quality standards. They are ‘forms in search of substance’, as meaning is created within and around the new institutional structures. We argue that ‘quality’ has come to mean ‘scoring high in evaluations’. This is not without problems for the actors in universities; the evaluation standards contain many contradictions, while evaluations themselves have important limitations. Such findings reflect earlier studies on the ‘audit culture’ in university life.

Introduction

Only this [the uncritical imitation and appropriation of western culture] can explain the vice that contaminated our public life, which is the lack of any sound fundament for the superficial forms that we keep receiving. What is dangerous in this respect is not so much the absence of the fundament itself, but the lack of any sense of need for this fundament in the public, the self-sufficiency with which our people believe and are believed to have done a deed when they produced or translated only an empty form of the foreigners. (Maiorescu, 1868)

‘Forms without substance’, ‘phantoms without bodies’, ‘pretensions without a foundation’ – these are clichés often employed in contemporary Romanian public discourse to describe and justify institutional inefficiency (Cârlan, 2008: 174). The expressions were popularised in the second half of the 19th century by the literary critic Titu Maiorescu (1868), who used them to indict the rapid modernisation of national culture through the generalised adoption of western-type institutions without adequate personnel to ensure their functioning. In the context of Romania’s engagement with the West, this idea has recently been considered a sort of ‘brand’ for Romanian society in general (Schifirnet, 2007).

In this paper, we discuss whether recent reforms in Romanian higher education have created such ‘forms without substance’. More specifically, we analyse a set of policy instruments associated with the emergence of a ‘quality culture’ in universities through so-called quality assurance (QA) and evaluation procedures. These reforms include (a) the establishment of the Romanian Quality Assurance Agency ARACIS in 2005, and (b) the 2011 education law. Various commentators have argued that these reforms did not lead to any substantive changes (Paunescu et al., 2012; Vlăsceanu et al., 2011). Indeed, these reforms aimed to create new institutions following a ‘western’ template. In the reform period (broadly between 2005 and 2011), Romania joined the European Union and became an important player in the Bologna Process.

We ask the following research question: ‘What is the meaning of quality in the university reforms in Romania from 2005 to 2011?’ More critically, we ask, ‘Did these reforms create meaningless institutions (“forms without substance”)?’ To answer these research questions, we present an analysis of the main policy documents produced in the reform period, in conjunction with data from 186 interviews conducted in five universities. We make three arguments. First, the reforms created a process of interpretation and negotiation around different meanings of quality. Second, a dominant meaning of quality has emerged: ‘scoring high in evaluations’. Third, the new institutions are not meaningless; on the contrary, the policies have created ‘forms in search of substance’, i.e. they created new problems, new careers and new concerns about quality in universities.

The paper proceeds as follows. We begin by introducing our analytical framework, drawing on debates about ‘quality cultures’ in higher education. We then outline considerations in our research design. The reforms will be outlined in more detail after the research design, followed by the presentation of our findings in three sections, discussing (1) the meaning of ‘quality’ in policy documents; (2) the types of interpretation and negotiation around the meaning of ‘quality’ inside universities; and (3) the boundaries to the discourse on quality. In our conclusion, we emphasise why it is important to move beyond the ‘forms without substance’ argument.

Theoretical framework: Quality as culture

We start our analysis from the observation that the meaning of quality is culturally bound; it is socially constructed. To say that something is socially constructed is to say that there is nothing natural or obvious about it (Hacking, 1999). Quality is ‘observer-relative’ (Searle, 1995); that is, we cannot make sense of this concept without analysing the meanings it has for the people who employ it. To answer our research question about the meaning of quality, we thus need to ask sub-questions like ‘quality for whom?’, ‘quality of what?’ and ‘how is quality related to other concerns?’ As argued by Taylor (1987: 42), meaning is always ‘for a subject, of something, in a field’. This puts our study in the tradition of interpretive research, analysing quality as a culture.

The debate on how higher education reforms are changing ‘cultures’ inside universities has been particularly visible in British research on the topic. Good examples are Power’s (1997) book on the ‘audit society’ and Strathern’s (2000) collection on ‘audit cultures’. From the perspective of Foucauldian notions of ‘governmentality’ (Gordon, 1991), these authors argue that various ‘audit instruments’ have established a professional obsession with the evaluation of academic work (Shore and Wright, 1999). As powerfully argued by Ball, ‘[it] is not that performativity gets in the way of “real” academic work or “proper” learning, it is a vehicle for changing what academic work and learning are!’ (Ball, 2003: 226). We aim to contribute to this discussion by empirically analysing quality culture in a quite different political-historical setting.

For some researchers, the argument that a ‘quality culture’ is socially constructed is simply intuitive. What passes for high-quality research in a field like applied chemistry is probably quite different from ‘excellence’ in cultural anthropology. Similarly, what is good teaching in a German department of law is quite different from that in an American liberal arts college. Indeed, we cannot assume that quality in Romanian universities, departments and classrooms means the same as what it means to us. Perhaps for these reasons, many policy documents on higher education speak of promoting and establishing a ‘quality culture’ (European Association for Quality Assurance in Higher Education [ENQA], 2005; European Commission, 2009; European University Association [EUA], 2006). In contrast to the policy literature, however, we think that quality cultures cannot simply be established: they already exist, even if they have fuzzy boundaries and are subject to change. Because they already exist, quality cultures can be studied empirically from an interpretive perspective.

We aim to show that such ‘quality cultures’ can be identified in the practices of scientific disciplines, in departmental teaching norms, in the accepted forms of interaction between students and professors, and so on (cf. Becher and Trowler, 2001). In order to study this phenomenon meaningfully, we should engage with the people who use the term frequently. For this reason, we have spent considerable time in Romanian universities with professors, students and administrators, as well as reading various policy documents. Our next section discusses our methodological approach.

Methodological approach

This research was conducted in the context of the project ‘Higher education evidence-based policy-making: a necessary premise for progress in Romania’ (2012–2014), commissioned by the Romanian Executive Agency for Higher Education, Research, Development and Innovation Funding (UEFISCDI). Given our own interpretive orientations (Schwartz-Shea and Yanow, 2012), we took the opportunity of this research encounter to examine the meanings acquired by ‘quality’ in the language of professionals involved in recent reforms. As such, our research became focused on how policy documents defined quality and how people in universities engaged with these definitions.

The research team consisted of five members, whose personal characteristics were important in at least three ways. One dimension was nationality (three Romanians, one Dutch, and one Hungarian researcher), with language as the first evident implication since some of the interviews were conducted in English. The second implication referred to our expectation that interviewees would try to present their activities in a socially or professionally desirable way, at least to the foreigners. This latter point became a topic of analysis in its own right, pointing to how Romanians construct their engagement with reforms inspired by Europeanisation processes. Thirdly, since we operated within a project assessing policy reforms, we were aware that we did not approach our interviewees in a ‘neutral’ sense. As the project progressed, we underwent a transformation in which we progressively became aware of the interpretive space and negotiation around quality standards.

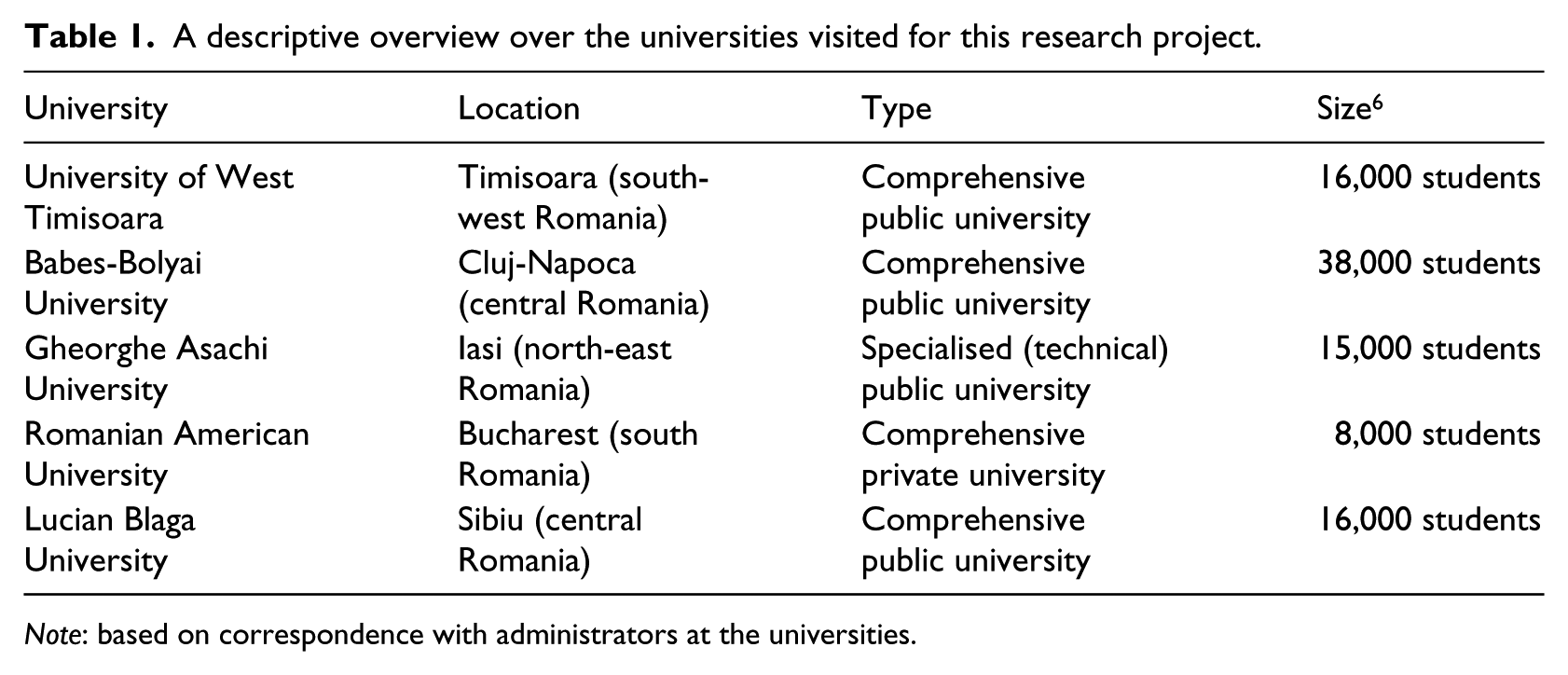

Operating within the methodological boundaries of an ‘evidence-based’ project, it was not an option to collect data through ethnographic immersion (Schatz, 2009). Instead, we relied on document analysis of legislation and relevant policy documents, in conjunction with short field visits (of 2–3 days) to five universities in the country, considered ‘representative’ at the national level in terms of size, profile, and location. Given space limitations, we cannot provide here thick descriptions of each university setting; however, some of their main features are depicted in Table 1.

Table 1. A descriptive overview of the universities visited for this research project.

Note: based on correspondence with administrators at the universities.

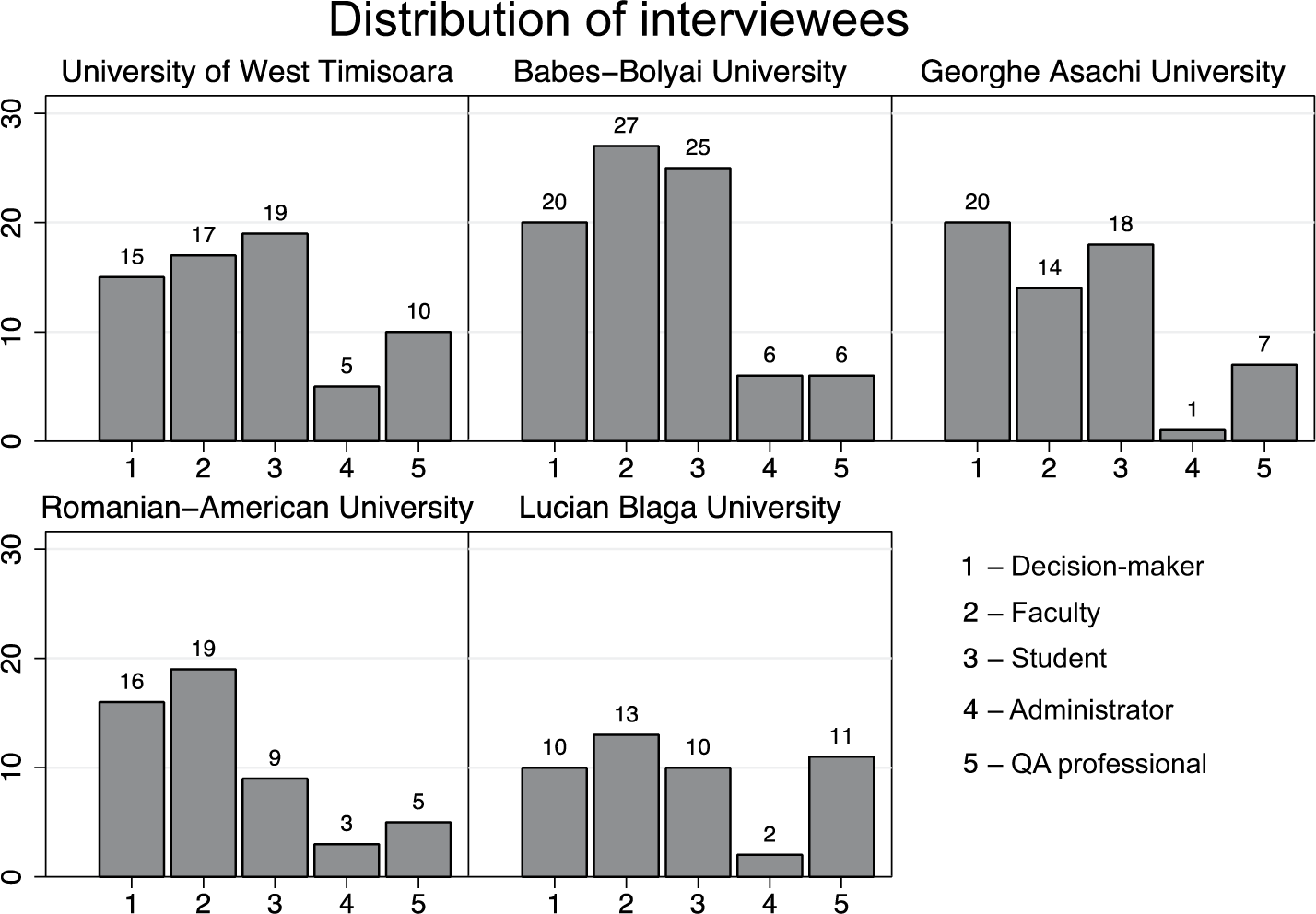

Between December 2012 and June 2013, we conducted 186 semi-structured interviews with 310 people in these universities. We systematically approached our interviewees, distinguishing from the outset among decision-makers (people in leadership positions in rectorates, faculties, and the university senates), QA professionals (administrators and/or faculty members working on quality assurance), regular faculty, administrators (secretarial support) and students. This analytical decision was based on the assumption that people at various hierarchical levels engage differently with the reforms, depending on the requirements of their position. Figure 1 shows an overview of the people interviewed in the five universities.

Figure 1. The distribution of interviewees by university and professional status.

Interviews lasted between 40 and 60 minutes and were organised in three parts, dealing with QA practices, productivities and meanings associated with such evaluations, following the work of Milliken (1999). Although our initial enquiry was limited to quality assurance activities, we soon came to realise that people in universities do not separate QA from other evaluation practices, which is why our questions gradually became broader.

The analysis of documents was a continuous, back-and-forth process, as we initially started with QA legislation but soon moved to other documents related to institutional rankings and evaluations, in addition to our interview notes. After each field visit, we held team meetings discussing our encounters, trying to identify main themes. We fully acknowledge that we can only provide interpretations of our interviewees’ self-presentations (Jacoby, 2006), in line with our own experiences. However, we believe that our different professional backgrounds allowed us to draw a ‘fuller’ picture of the topic than would have been possible in a narrative written only by academics or only by practitioners (Kurowska and Tallis, 2013). The coding took place after the completion of all visits using the computer software Atlas.ti, and it was reviewed by at least two team members for each university. The broad categories of ‘practices’, ‘productivities’ and ‘meaning’ were carefully coded, identifying recurrent topics and considering the salience of individual codes. Following Becker (1998: 128–138), we note that our interpretations of ‘quality’ hence formulated are: (a) ‘empirical generalisations’ resulting from our gradual abstraction of the data, in line with our theoretical inclinations; and (b) relational, meaning that they only make sense in the system of terms provided by the recent reforms. The analysis follows below.

The meaning of ‘quality’ in the reforms

In this section, we introduce the concept of ‘quality’ that is embedded in the university reforms. In relation to our research questions, we discuss (a) who defines quality, (b) what quality refers to, and (c) in relation to what kind of concerns it is defined. In response to these questions, we aim to show that (a) the meaning of ‘quality’ is defined by policy-makers, (b) ‘quality’ refers to various aspects of university life, such as the management of the universities, research and teaching, and (c) quality is defined in relation to the process of Europeanisation. However, when people in universities – or indeed we as researchers – try to make sense of these meanings, problems start to emerge because of the contradictions between different pieces of legislation and their corresponding methodologies.

In 2005, the government reformed an important piece of legislation dealing with quality in the form of an emergency ordinance complying with the European Standards and Guidelines on Quality Assurance in Higher Education (ENQA, 2005). Before this date, ‘quality’ was only regulated in an accreditation scheme, created in the wake of Romania’s transition towards a capitalist democracy. Already by 1993, as many as 250 private universities had been created – a common phenomenon in post-communist countries (Scott, 2002). The new legislation created a new autonomous public institution – the Romanian agency for quality assurance in higher education (ARACIS) – that was entrusted with responsibilities in the authorisation of study programmes and external quality assurance more broadly.

More important for the purposes of this paper, the reforms introduced a new terminology around the concept of ‘quality’. The 2005 legislation defines ‘[education] quality’ as ‘the set of features of any training program and of any of its providers that fulfils the expectations of the beneficiaries, as well as the quality standards’. In other words, quality is to be defined by the ‘beneficiaries’, which are separated into ‘direct beneficiaries’ (students) and ‘indirect beneficiaries’ (employers, society as a whole). However, the provisions also add ‘quality standards’ to the definition of ‘quality’. It is here that we run into problems. Once we develop an overview of the different standards defined in the law and in subsequently released policy documents, we find a variety of standards, all embedded in different instruments to measure quality.

Indeed, the quality assurance procedures have been elaborated into the ‘Methodology for external evaluation’ that further defines ‘quality standards’. The methodology identifies three areas in which universities are to perform, namely ‘institutional capacity’, ‘educational effectiveness’, and ‘quality management’. Each of these is further specified into various ‘criteria, standards, and performance indicators’. In total, the methodology defines 14 criteria, 16 standards and 43 performance indicators. Interestingly, most of these standards refer to issues of management. In contrast, only very few are related to what actually goes on in the classroom. Over time, however, specific standards for different subject fields were elaborated that effectively set the type of modules taught in every single degree programme. This caused some commentators to blame ARACIS for the homogeneity found in study programmes in Romania (Paunescu et al., 2012).

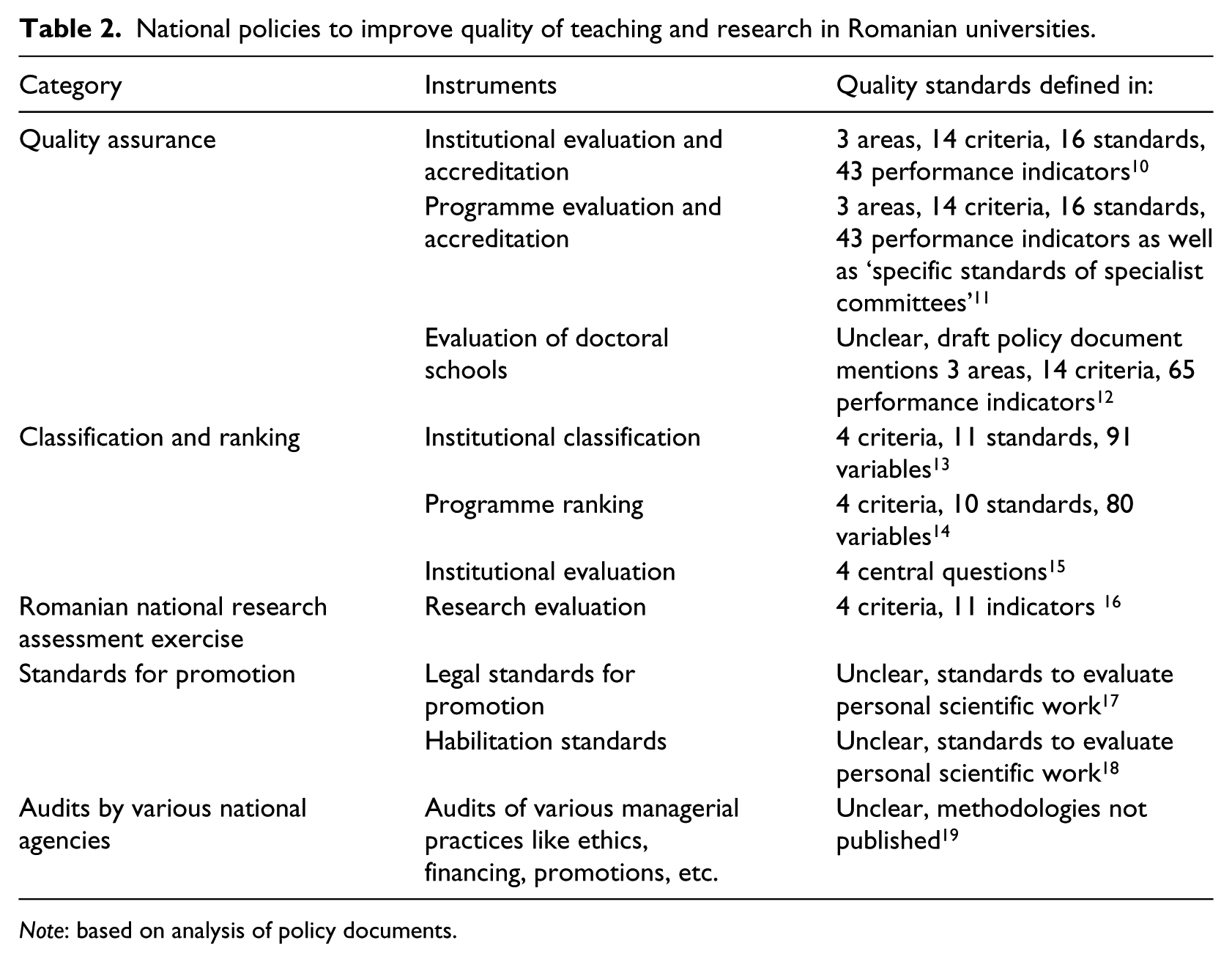

During the elaboration of a new law on education in 2011, the government created a new set of instruments to measure and evaluate quality. Table 2 sets out these different instruments and the associated quality standards. The most salient policy instrument was a classification of the universities, and an associated ranking of degree programmes. The methodology for the classification sets out four different dimensions of standards, namely ‘teaching and learning’, ‘research’, ‘relationships between the university and the environment’ and ‘institutional capacity’. These dimensions were further operationalised into 11 standards, and close to 100 variables.

Table 2. National policies to improve the quality of teaching and research in Romanian universities.

Note: based on analysis of policy documents.

At the very least, this table makes clear that policy-makers have undertaken various efforts to define ‘quality’. But at the same time, it is not straightforward to identify what the ‘quality standards’ are. In fact, the existence of so many instruments in parallel could be read as supporting the ‘forms without substance’ metaphor outlined in the introduction. There are at least 10 policy instruments to follow, each setting its own ‘standards’ for quality. Some of these standards are simply unclear, because they are written down in draft texts or have been amended so many times that the government has yet to publish a final version. For instance, the draft methodology to evaluate doctoral schools has been questioned so much that the ministry has refused to publish a final version. Unsurprisingly, many of our interviewees point to contradictions between these instruments.

The frustration felt by many interviewees has much to do with the frequent changes to the legislative framework. The story of the Babes-Bolyai University in Cluj is perhaps indicative. The university had developed quality assurance structures since the late 1990s, as it mirrored the practices of its European partners, particularly in Germany. Parts of these practices were imported into the 2005 legislation, as the minister (Mircea Miclea) leading the reforms was also a professor at this university. The faculty at the university showed an appreciation for these reforms, and engaged in several European projects to create new administrative structures for quality assurance. But as the minister changed, the legislation began shifting as well, leading to frustration and resistance to the topic. Interviewees thus expressed their frustration that ‘the rules are changing a lot’ (Lecturer, Male, KG0502). The political process is blamed as ‘every new decision-maker wants to give their own touch, but they hardly ever have a long-term vision’ (Associate Professor, Female, NS0302). And as a result ‘we are forced by all these different institutions to do such evaluations’ (Decision-Maker, Professor, Male, AM0202).

In other words, there is no clear answer to what quality means in policy documents, even for those who are enthusiastically involved in the policy process. There are many contradictions and different points of view about quality in both education and research. However, people in the universities indicate that this talk is not just ‘cheap’; the discourse has set something in motion in people’s thinking and action. The next section will discuss in more detail what these debates produce inside universities.

Interpretation in the ‘quality culture’

The discourse on ‘quality’ is not something abstract that is debated ‘out there’. Despite all the changes, it is something that becomes embedded in power relations, and in (institutional) practices that affect people’s careers. In this section, we describe how people in universities make sense of the new quality standards. We argue that these standards lead to a reinterpretation of quality as ‘scoring high in evaluations’.

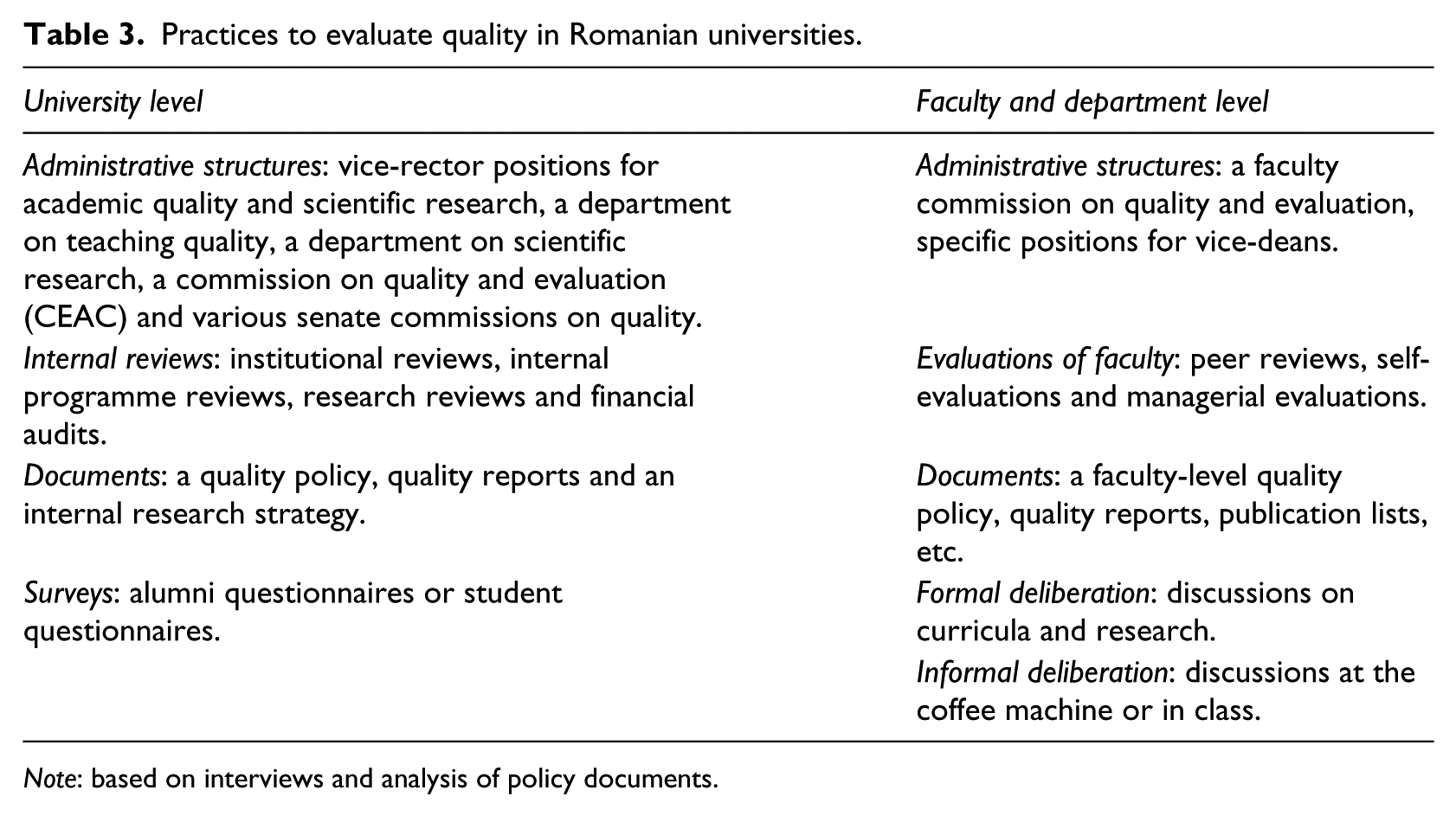

Most visibly, the reforms have created structures and activities within the universities. Table 3 below gives an overview of the new administrative procedures created by the policy instruments described earlier, broken down into the university level and the faculty and departmental level. These practices can be found in every university, at multiple levels of decision-making.

Table 3. Practices to evaluate quality in Romanian universities.

Note: based on interviews and analysis of policy documents.

This growing administration opens up new types of careers and new positions. In fact, the ‘quality culture’ provides people with a career even if they do not produce much scientific work. There are professionals in all the new administrative structures described in Table 3: in specialised units, committees, the dean’s and the rector’s offices, and one can even work as an evaluator for ARACIS or as a research evaluator. And there are important incentives to aim for an administrative career: ‘if you do not have a management position, your voice is not taken into consideration’ (Lecturer, Male, AM0402).

Furthermore, the process of ‘sense-making’ of the reforms sometimes leads to professional pride about ostensibly minor issues. The Gheorghe Asachi University went through such a process as they developed ‘new procedures, and in a brief time the academic community came to realise its importance. Many of these procedures are now based upon our procedures manual’ (Decision-maker, Professor, Male, RS0403). This is referred to in the university as ‘The Book’, which lists all procedures (Decision-Maker, Female, Professor, KG0303). Other interviewees confirm that ‘when we created the procedures manual, people in other institutions were sceptical’ [but] ‘after a few ARACIS visits, we became a good practice example’ (Decision-maker, Associate Professor, Male, RS0203) for other universities in the country. Some in the university are thus ‘very proud of the manual of procedures’ as ‘it is best to act according to regulations so as to avoid mistakes’ (Decision-maker, Professor, Male, AM0803). But the source of this newly found pride is thin, as people reflect on its content:

Thinking back about it now, the manual is either done for fools who don’t know what to do and need a detailed cookbook for whatever activity, or to actually constrain people by imposing brakes on certain activities (Professor, Male, AM0403).

Attributing meaning to relatively meaningless issues like manuals seems to support the ‘forms without substance’ argument. However, we would like to argue that there is meaning in this web of procedures.

The rankings are the best example of this. Time and again, interviewees mention that ‘ARACIS has granted them a high degree of confidence and they have a good position in rankings’ (Decision-maker, Associate Professor, Male, AM1201), or that ‘our faculty (…) is ranked number one in the country’ (Decision-maker, Professor, Male, NS0102). In some sense, the rankings give some professional satisfaction as they allow people ‘to realise that most of us are doing our best’ (Decision-maker, Professor, Male, KG0702). The rankings are also strategic tools for change. University leaders are aware of the links between evaluation and professional pride and play on this factor. Indeed, ‘the ranking is done annually to motivate people, each person can compare themselves within their own department’ (Decision-maker, Associate Professor, Female, AM0903).

The Romanian American University (RAU) is a paradigmatic case. The university was established in the wake of Romania’s transition from a communist to a capitalist system. Although many other private universities did not take quality standards very seriously, RAU was able to use quality assurance to legitimise its position in the new market for higher education. ‘We are just what our image is on the market’ (Decision-maker, Professor, Male, KG0104), and quality assurance is seen to provide this image. QA procedures allowed the university to conclude that RAU ‘is the best private university in Romania’ (Lecturer, Female, OS0204). In the words of another interviewee, the goal is that ‘you should make students proud of the institution, and they can be proud only if you have a good quality’ (Professor, Female, NS0704). Indeed, ‘Evaluation also comes with ranking. This is good. We are the only private university that is high in the ranking’ (Decision-maker, Lecturer, Male, KG0404).

While these findings make a strong case for the argument that there is some ‘substance’ in the new institutions, there are also limitations. The question remains, indeed, how dominant the new practices are. The next section discusses this aspect in more detail.

Boundaries of the ‘quality culture’

In this section, we want to point to two major limitations to the ‘quality culture’ discourse in universities. One is related to contradictions within the legislation. As outlined by one of our interviewees:

Sometimes these criteria simply contradict each other: look for example at the ranking process that happened based on the Funeriu law [01/2011 on National Education]. The criteria that existed there focused on research, as opposed to teaching and outcomes, and they were even calculated after the evaluation process (Decision-maker, Associate Professor, Female, RS0105).

Consequently, people started to react strategically to the reforms: ‘we were even told from the university level: you do what you think is best, and don’t take the self-evaluation too seriously’ (Assistant Professor, Male, AM0502). Again, this indicates that the process of ‘sense-making’ is real, leading to a space for negotiation around the meaning of quality.

A second limitation arises from the intersection of evaluation procedures with questions about resources. This was obvious to us during fieldwork, when we had to conduct interviews in rooms with temperatures below 0 degrees Celsius because some of the faculties visited could not afford to pay their heating bills. Indeed, ‘many of the problems with regard to quality are contextual: the quality of enrolments and the financing system affect the quality of education’ (Decision-Maker, Professor, Male, KG0103). Some of these are literally ‘bread and butter’ issues, as salaries for early career academics are extremely low, particularly after the austerity measures:

The fact that promotions were banned and that salaries were cut by 25% as a result of austerity measures led to increasing demotivation from the side of teaching staff. Most of our colleagues are idealists, and do not necessarily focus on money, but once you have financial problems, striving for excellence is hardly your top concern (Decision-maker, Lecturer, Female, RS0205).

Since the quality discourse intersects with other political concerns, universities have an argument to do nothing until other problems are solved. Thus, ‘if we had more money, we would keep people in a positive mentality’ (Decision-maker, Professor, Male, AM0205) and although ‘it is embarrassing to talk all the time about the lack of money’ interviewees think that they ‘are forced to make compromises’ (Lecturer, Male, AM1301).

What is perhaps most interesting about this new quality discourse is that the language used to express frustration is often the same language as that of policy-makers. For instance, the reasoning goes that if the policies did not change so much, they would follow up on evaluations; likewise, if there were more funding, they might consider implementing recommendations. Our interviewees do not talk of heroes versus villains, management versus academics, leaders versus opposition. They are genuinely concerned with quality, and try to engage with the instruments available to them.

Discussion and conclusion

We have asked in this paper whether recent university reforms in Romanian higher education are ‘forms without substance’. We found that evaluations have become part of professional life and affect how faculties engage with teaching and research. Academics are subjected to an array of evaluation techniques, from rankings and league tables to job evaluations and research assessments. In line with earlier studies on audit cultures (Ball, 2003; Shore and Wright, 1999; Strathern, 2000), we discovered that such evaluations change the type of work that academic professionals do, the career paths they have and their feelings of professional pride. But there are important limitations: the evaluations often have no follow-up, the policies are constantly changing, resources are unavailable and the epistemological foundation of these instruments is questionable.

It would be too easy to conclude that the new structures are merely ‘forms without substance’ that have simply been copied from ‘the West’. While dominant in popular media, this argument disregards the serious engagement of management, faculty and students with concepts of quality in the reforms. More critically, the ‘forms without substance’ argument misrepresents the problems faced by management, faculty and students in the universities. As our paper has aimed to show, these groups have become caught in an ‘evaluation culture’ that is re-defining established notions of quality of scientific research, teaching and learning. Forms are always in search of substance, and meaning is created in negotiation with these forms.

Indeed, moving beyond the ‘forms without substance’ argument is not just important from an academic perspective; it is also crucial for Romania’s political future. For too long, Romania’s public debates have pitted liberal interventionism against conservative cultural criticism. European narratives of Romanian politics similarly do not move beyond established stereotypes. As long as this schema is reproduced, neither side will manage to engage reflexively with each other’s or their own concepts. As the governmentality literature has argued for many aspects of public life, intervention and critique are caught in the same language (Burchell et al., 1991; Dean, 2010). Only a reflexive attitude towards discourse opens up the conceptual cages that we have built for ourselves.

Acknowledgements

We would like to thank Ligia Deca, Oana Sarbu, Robi Santa, Norbert Sabic, Jana Bacevic, staff at UEFISCDI and participants at the ECER conference in Istanbul for very helpful feedback on this paper. Any errors and omissions are the authors’ alone.

Declaration of conflicting interest

The authors declare that there is no conflict of interest.

Funding

This research benefited from funding from the European Social Fund under the project ‘Higher education evidence-based policy-making: a necessary premise for progress in Romania’, commissioned by the Romanian Executive Agency for Higher Education, Research, Development and Innovation Funding (UEFISCDI) - SMIS Code 34912.