Abstract

Artificial intelligence is reshaping market research from a set of analytical tools into an active participant in the research process. As AI begins to generate questions, analyse data, and produce insights, it challenges the field’s reliance on human judgement, methodological rigour, and interpretive expertise. This research note introduces the special issue on “AI in Market Research” and argues for a socio-technical perspective in which AI augments rather than replaces researchers. It highlights the epistemic, methodological, and ethical questions that will shape the future of market research.

Introduction

Artificial intelligence is rapidly reshaping the epistemic foundations of market research. Once viewed primarily as a set of computational tools designed to enhance data analysis, AI is increasingly positioned as an active participant in the research process itself, capable of generating research questions, simulating respondents, analysing complex data, and producing managerial insights. This shift raises profound questions for market research as a field. With intelligent systems increasingly performing core research functions, the focus shifts to how research is conducted, how knowledge is generated, and how judgement is exercised.

For decades, market research has been distinguished from adjacent domains such as data science and business analytics by its commitment to methodological rigour, theoretical grounding, and interpretive judgement. Research design, measurement, inference, and validation have traditionally relied on human expertise to ensure that insights are not merely predictive, but meaningful, trustworthy, and actionable. The recent acceleration of AI capabilities, particularly through large language models, challenges these boundaries by enabling end-to-end research workflows that appear to replicate, and in some cases replace, human researchers. As a result, market research now sits at a crossroads. It can either assimilate AI as a set of efficiency-enhancing tools, or it can critically re-examine how knowledge is produced when research itself becomes increasingly automated.

These developments require more than technical demonstrations of AI performance. They call for conceptual clarity about the role AI should play in market research, methodological scrutiny of how AI-enabled processes affect validity and inference, and normative reflection on the governance, ethics, and trustworthiness of AI-generated insights. While the existing literature has begun to document AI applications across specific stages of the research pipeline, far less attention has been paid to the implications of treating AI as a quasi-autonomous researcher rather than a bounded analytical aid. Without such reflection, there is a risk that market research will prioritise speed, scale, and automation at the expense of understanding, interpretability, and epistemic integrity.

The purpose of this research note is to introduce the special issue on “AI in Market Research” in the International Journal of Market Research and to situate contemporary developments in AI-enabled market research within a broader intellectual and methodological context. Instead of focusing on whether AI can perform specific research tasks, this research note examines how increasing AI autonomy is affecting the logic, practice, and legitimacy of market research. In doing so, it advances a socio-technical perspective that treats AI not as an independent source of knowledge, but as a system whose value depends on how it is embedded within human judgement, institutional norms, and methodological safeguards. This framing provides the foundation for the sections that follow, which trace the evolution of AI in market research, examine its role across the research pipeline, articulate a vision for governed augmentation, and identify the epistemic and methodological challenges that will shape the future of the field.

AI: From Tool to Autonomous Researcher

The application of artificial intelligence in market research has evolved gradually alongside, and at times in conjunction with, broader developments in marketing analytics and data availability. Early work largely conceptualised AI as an extension of advanced statistical modelling, valued for its ability to improve prediction, classification, and optimisation using data such as transactions, CRM records, and clickstream logs (Gupta et al., 2020; Hair & Sarstedt, 2021). In this phase, AI was primarily applied downstream, supporting segmentation, forecasting, and response modelling, while core market research activities such as research design, measurement, and inference remained grounded in traditional methodological frameworks. As a result, AI was rarely treated as integral to the research process itself, but rather as an analytical enhancement applied after data had been collected.

From the mid-2010s onward, the proliferation of unstructured digital data shifted AI more decisively into the analytical core of market research. Advances in natural language processing and deep learning enabled automated analysis of social media content, online reviews, and qualitative transcripts, allowing researchers to scale tasks such as sentiment analysis, emotion detection, and thematic discovery (Nguyen et al., 2023). This period marked an important expansion of AI into domains traditionally associated with interpretive and qualitative judgement. However, much of this work remained narrowly focused on specific stages of the research workflow, particularly data processing and analysis, with limited attention to how AI reshapes upstream research design or downstream interpretation and decision-making (Gupta et al., 2020; Ma & Sun, 2020). As a consequence, AI’s role in market research developed in a piecemeal fashion, advancing technical capability without a corresponding integration into market research logic and broader practices.

The emergence of large language models (LLMs) and generative AI represents a qualitatively different moment in the evolution of AI in market research. Recent conceptual and review work increasingly positions LLMs not merely as analytical tools, but as autonomous systems capable of supporting multiple stages of the research process, including research design and planning, conversational data collection, coding, synthesis, reporting, and scenario generation (Chintalapati & Pandey, 2022; Verma et al., 2021; Mariani et al., 2022). AI’s appeal comes mainly from the promise of improved productivity and efficiency, together with the prospect of overcoming human shortcomings such as subjectivity and bias.

This shift aligns with broader frameworks that conceptualise AI as a form of marketing intelligence infrastructure rather than a collection of isolated techniques (Huang & Rust, 2021). In principle, generative AI enables a more continuous, interactive, and adaptive research process, blurring traditional boundaries between marketing strategy development, data collection, analysis, and insight generation.

AI Across the Market Research Pipeline

Three dominant streams characterise the current literature on AI in market research. The first consists of conceptual and integrative frameworks that map AI capabilities onto marketing and research activities, including the mechanical, thinking, and feeling AI typology, AI-enabled marketing architectures and broader systematic reviews of AI in marketing (e.g., Huang & Rust, 2021). These contributions have been instrumental in legitimising AI within marketing scholarship, but they often stop short of specifying how AI should be operationalised within concrete research designs or methodological protocols.

The second stream comprises empirical analytics studies, particularly within digital and customer analytics, that demonstrate the effectiveness of machine learning and deep learning for prediction, classification, and pattern discovery using large-scale data (e.g., Lee et al., 2021). While technically sophisticated, this work frequently prioritises predictive accuracy over theoretical alignment or interpretability, reinforcing concerns that AI may optimise outcomes without improving understanding.

The third stream focuses on methodological and ethical governance, addressing issues of bias, explainability, measurement, and causal inference in AI-enabled research pipelines (e.g., Hair & Sarstedt, 2021; van Giffen et al., 2022). This literature highlights that distortions can be introduced at every stage of the AI pipeline, from data generation to deployment, yet these insights are rarely integrated into empirical demonstrations of AI-augmented market research.

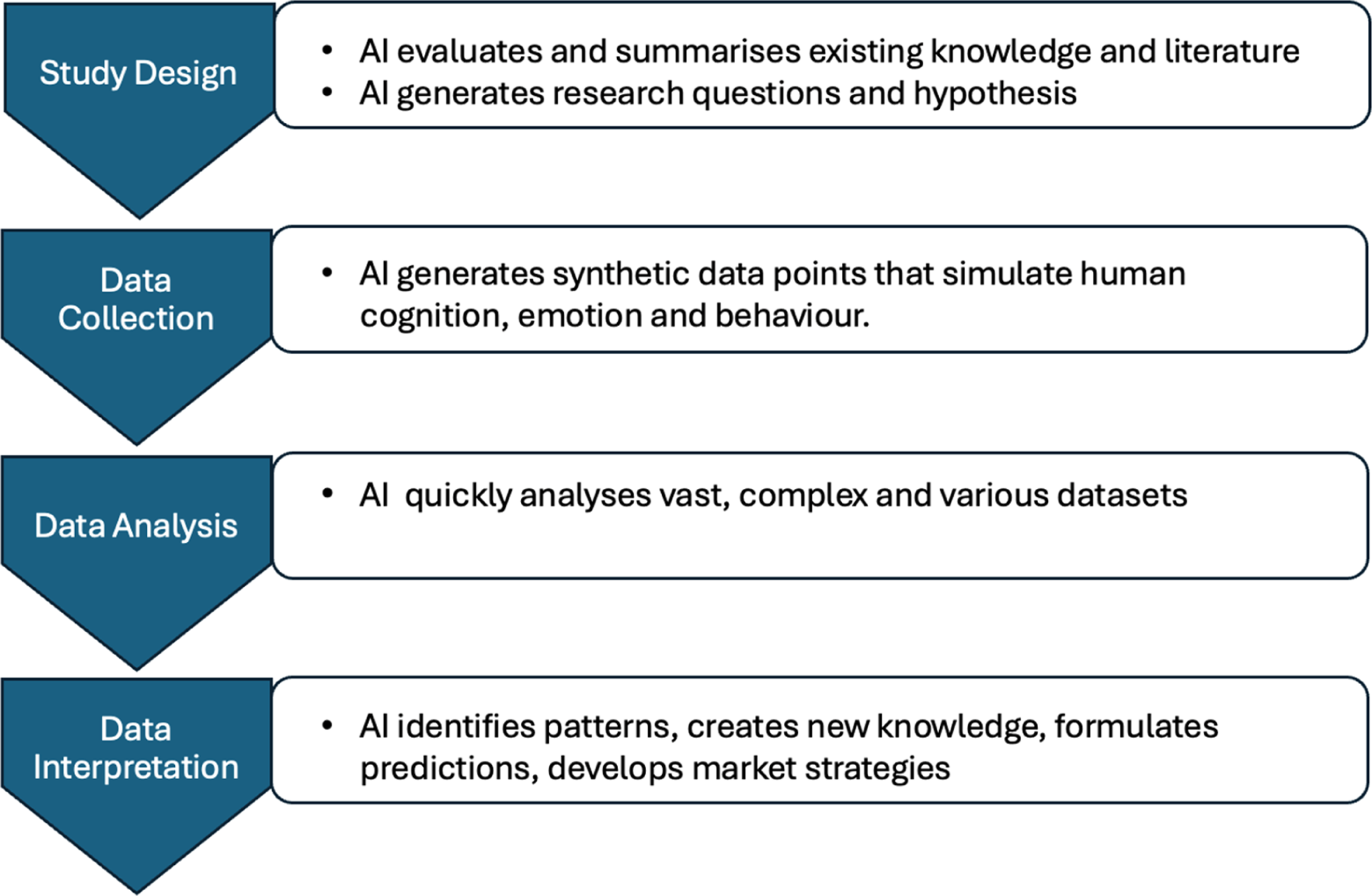

Across these streams, researchers have been imagining ways in which AI may improve the market research process (Wang et al., 2023). When looking at the research pipeline, AI can bring advantages of efficiency and productivity throughout the process (see Figure 1). AI tools can support researchers in developing study designs and planning, efficiently evaluate and summarise scientific literature, and generate research questions and hypothesis (Wagner et al., 2022). AI tools can speed up data collection processes by generating surrogate data points. Recent work suggests that LLMs tools can make human-like judgments across a variety of domains, encouraging use of AI as a replacement for human participants in surveys (Aher et al., 2023). AI tools can quickly analyse large and complex datasets and produce new knowledge, functioning as data analyst (Peterson et al., 2021). Finally, AI can support marketers in identifying patterns, generating insights, and developing strategies based on the research. AI in the research pipeline

The contemporary literature reveals a significant imbalance between conceptual ambition and methodological consolidation. Many LLM-focused contributions remain high-level and speculative, offering compelling narratives about AI-enabled research futures while providing limited empirical validation against market research–specific quality criteria such as construct validity, measurement equivalence, or causal interpretability. Empirical work continues to concentrate on downstream tasks such as summarisation, classification, and predictive modelling, rather than on the systematic evaluation of end-to-end research workflows. As Hair and Sarstedt (2021) caution, without explicit attention to measurement theory and inference, the increasing predictive power of AI risks undermining the epistemic foundations of marketing research rather than strengthening them.

Taken together, the literature suggests that AI in market research has reached an inflection point. The field has moved beyond treating AI as a set of isolated analytical tools, but it has not yet fully articulated how AI can function as a research-grade, end-to-end capability that respects the methodological, theoretical, and ethical standards of market research.

AI in Market Research

Against this backdrop, the International Journal of Market Research has organised a special issue on “AI in Market Research”. The underlying vision is that artificial intelligence should not be understood as a replacement for market research expertise, nor as a collection of disconnected analytical tools, but as an integrated, socio-technical capability that reshapes how market research is conceived, conducted, and evaluated. As the literature reviewed above demonstrates, the field has made substantial progress in applying AI to isolated stages of the research process, particularly in large-scale text analytics and predictive modelling. Yet, this progress has not been matched by equivalent advances in methodological integration, theory alignment, or governance. The risk is not that AI will fail to transform market research but that it will do so in ways that prioritise speed and automation over insight quality, interpretability, and trust.

This special issue advances a vision of AI-enabled market research grounded in governed augmentation rather than full automation. Within this vision, AI systems are designed to amplify human judgement, extend analytical capacity, and surface patterns that would otherwise remain hidden, while remaining accountable to established principles of research design, measurement, and inference. Generative models are treated not as epistemic authorities, but as collaborators whose outputs require validation, contextualisation, and theoretical grounding. Crucially, this perspective reframes efficiency gains as a means rather than an end, subordinating technical performance to the broader goals of insight credibility, construct validity, and ethical responsibility. This broader perspective aligns with the rationale outlined by Huang and Rust (2022) that multiple intelligences, specifically AI and human intelligence, are required, with human intelligence being crucial for higher-level thinking and for detecting the boundary conditions of applying AI.

Across the special issue, the papers advance a socio-technical understanding of how artificial intelligence is reshaping marketing and market research, spanning adoption, methodology, analytics, and trust. The issue is conceptually anchored by Desveaud and Bawack (2025), who synthesise the AI adoption literature into a socio-technical framework that moves beyond traditional acceptance models by foregrounding the interaction between AI systems and human users. Their framework highlights that adoption is driven not only by technological performance, but also by relational, emotional, and ethical considerations embedded in AI–human interactions. This perspective provides a unifying lens for the special issue, emphasising that AI’s value in marketing emerges through how it is designed, experienced, and governed rather than through technical capability alone.

Building on this foundation, three papers demonstrate how AI can be productively embedded into core marketing and market research practices when human judgement remains central. Tipgomut et al. (2025) apply transformer-based machine learning models to nearly three million online reviews to show how emotional complexity, rather than simple sentiment polarity, shapes perceptions of review helpfulness. Their findings reveal a systematic negativity bias, particularly in high-price contexts, illustrating how AI-driven text analytics can uncover nuanced psychological mechanisms in large-scale consumer data. Complementing this analytics-focused contribution, Jayawardene and Ewing (2025) introduce Generative AI-Augmented Thematic Analysis (GAATA), a structured qualitative workflow that integrates large language models while preserving transparency, theoretical sensitivity, and researcher oversight. In parallel, Keane and McNaughton (2025) demonstrate how generative AI can enhance psychometric scale development by expanding item generation and improving efficiency, provided that researchers retain control over construct definition, validation, and documentation.

The special issue concludes by confronting the conditions under which these AI-enabled advances remain legitimate and sustainable. Ozturkcan and Bozdağ (2025) examine the dynamics of AI washing and AI booing, showing how exaggerated claims and opaque practices can trigger cycles of mistrust that undermine both consumer confidence and organisational credibility. Their contribution connects directly to the adoption framework and methodological papers by highlighting that trust, transparency, and ethical restraint are not peripheral concerns but foundational prerequisites for AI adoption and use. Collectively, these papers position AI as a methodological amplifier that enhances insight generation without displacing human expertise. Human intelligence remains key for recognising the limitations of AI, maintaining ethical standards, and exercising the softer forms of judgement that AI cannot supply.

Big Challenges of the Future

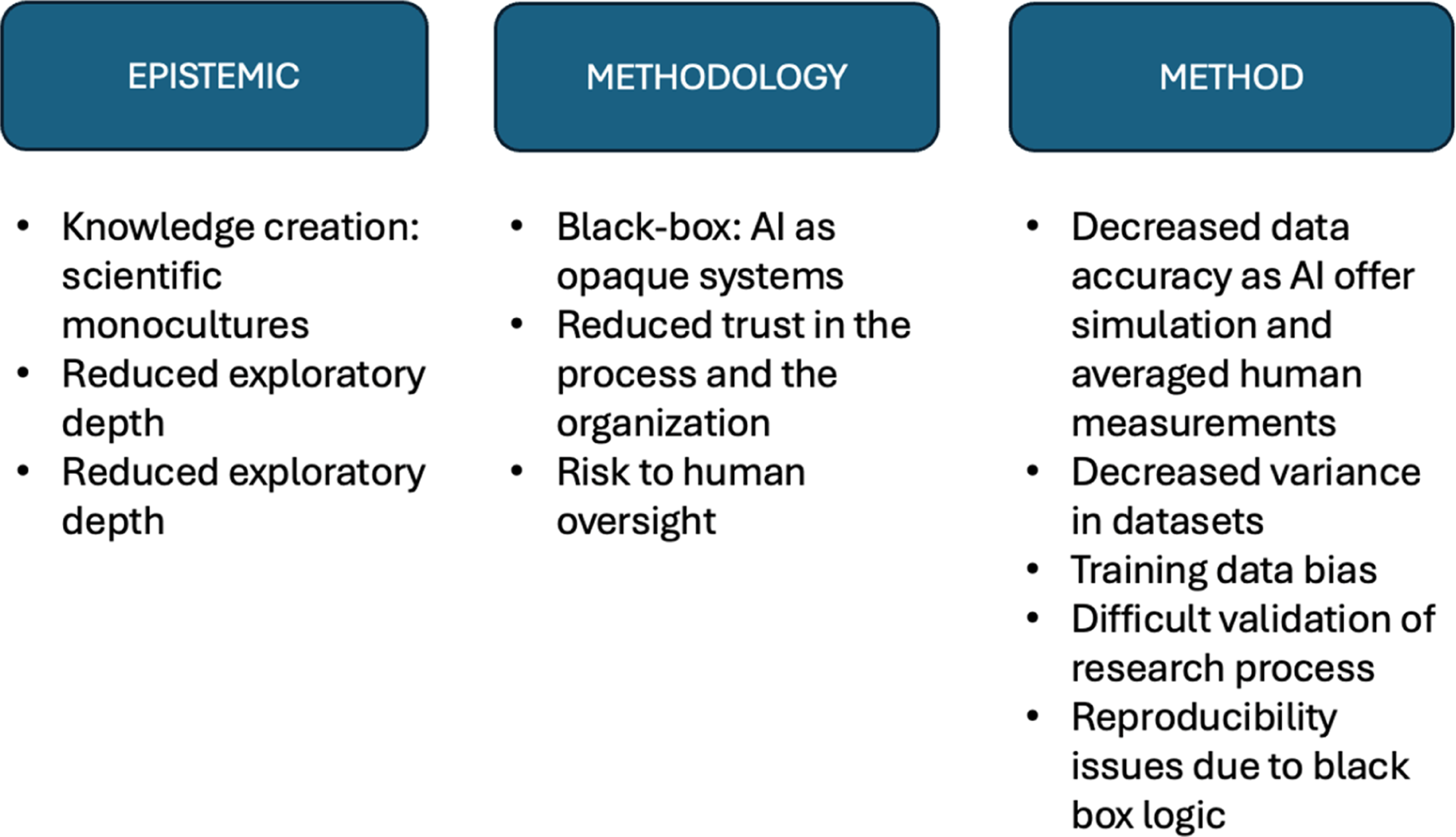

The papers in the special issue have addressed various aspects of how AI systems can enhance analytics and thereby enhance human decision making, while addressing boundary conditions and potential caveats. The rise of AI language models will further change the way market research is planned, performed and analysed. AI offers many advantages, beyond the topics addressed in the special issue, while posing important challenges to the market research process. We conclude this research note by looking ahead based on the framework in Figure 2. Challenges of AI in market research

Epistemic Challenges

While AI can clearly increase research productivity and efficiency across the entire pipeline, such novel possibilities do not always equate to the creation of knowledge and insight. Messeri and Crockett (2024), for instance, warn against the rise of scientific monocultures, in which the methods and viewpoints that best fit AI tools dominate at the expense of alternatives, reducing exploratory depth and breadth while increasing vulnerability to error. The rapid spread of AI tools in science may lead to an era of market research that produces more results but yields less understanding and fewer meaningful insights.

Methodology Challenges: The AI black-box

AI, particularly deep learning, can fully automate scientific workflows by leveraging predictions and conducting experiments using laboratory automation, creating so-called “self-driving laboratories” (Wang et al., 2023). However, the intermediate steps and the reasoning behind such evaluations often remain opaque, which creates transparency and explainability problems for the process as a whole. AI methods often operate as black boxes, and researchers are not able to fully explain how specific outcomes have been generated. This has important implications for the development of trust in the process and challenges the idea of human oversight (e.g., a human-in-the-loop mechanism) that would ensure AI actions align with ethical, organisational, and market standards.

Method Related Challenges

Data accuracy, bias, and representativeness issues. When adopted in data collection, AI at times offers a simulation of patterns rather than a measurement of actual behaviour, although it can also support the development of measurement instruments (Keane & McNaughton, 2025). Moreover, although large language models are trained on data from many individuals, they often compress a wide range of judgements into a single dominant viewpoint (Dillion et al., 2023). As a result, LLMs risk approximating average human judgements more effectively than they capture the full spectrum of variation. Yet understanding variability and individual differences in human cognition is essential for accurately understanding the human mind, and with it the consumer mind. In addition, AI and LLMs mainly reflect the biases of their training data, which can skew consumer insights, especially for emerging, niche, or non-Western markets.

Validation and reproducibility issues. Tracing reasoning steps or testing reliability within AI models is almost impossible. Minor variations in implementation can result in considerable changes in final performance, which makes it difficult to ensure consistency across the research process (Wang et al., 2023).
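Both concerns lend themselves to simple diagnostic checks. The following Python sketch is illustrative only and is not drawn from any of the cited studies: the function names `variance_ratio` and `run_agreement` are hypothetical, and the ratings and codes are invented for demonstration rather than empirical data. It compares the spread of simulated responses with that of human responses, and computes the share of items that receive the same code in two repeated runs.

```python
from statistics import mean, pvariance


def variance_ratio(human, simulated):
    """Ratio of simulated to human response variance.

    Values well below 1 suggest that the simulated responses
    compress the variation present in the human sample.
    """
    human_var = pvariance(human)
    if human_var == 0:
        raise ValueError("human responses have zero variance")
    return pvariance(simulated) / human_var


def run_agreement(run_a, run_b):
    """Share of items assigned the same code in two repeated runs."""
    if len(run_a) != len(run_b) or not run_a:
        raise ValueError("runs must be non-empty and of equal length")
    matches = sum(a == b for a, b in zip(run_a, run_b))
    return matches / len(run_a)


# Hypothetical 1-5 Likert ratings, invented purely for illustration.
human_ratings = [1, 2, 2, 3, 4, 5, 5, 1, 3, 4]
simulated_ratings = [3, 3, 3, 4, 3, 3, 2, 3, 3, 3]

# Hypothetical thematic codes from two runs of the same prompt.
codes_run_1 = ["price", "quality", "service", "price"]
codes_run_2 = ["price", "quality", "price", "price"]

if __name__ == "__main__":
    print("means:", mean(human_ratings), mean(simulated_ratings))
    print("variance ratio:", variance_ratio(human_ratings, simulated_ratings))
    print("run agreement:", run_agreement(codes_run_1, codes_run_2))
```

In this invented example the two samples share the same mean while the simulated ratings are far less dispersed, which is the pattern that a comparison of averages alone would miss. Simple percentage agreement is used here only for brevity; a chance-corrected coefficient would be a more defensible choice in practice.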

Overall, AI systems can improve efficiency and productivity, enhancing the overall market research process. By building data-driven models and integrating them with simulations and scalable computing, AI can help systematically generate new methodological ideas and derive data insights, as illustrated in the contributions of Tipgomut et al. (2025), Jayawardene and Ewing (2025), and Keane and McNaughton (2025). However, realising this potential requires careful attention to the challenges associated with AI deployment, as reflected in the notes of caution and boundary conditions discussed by Desveaud and Bawack (2025) and Ozturkcan and Bozdağ (2025). In addition, it is crucial to interpret AI results correctly and to avoid overreliance on potentially flawed predictions. As AI technologies advance, emphasising reliable implementation and appropriate safeguards will help reduce risks while maximising their value in the market research process.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.