Abstract

While survey research has expanded rapidly in recent times, little scholarly work has examined interviewer misbehaviour. Inspired by audit principles, this paper aims to identify themes of misbehaviour associated with interviewing. Using thematic analysis, it provides exploratory insights into misbehaviours drawn from audit reports that auditors prepared from their observations of interviewers administering face-to-face surveys. A total of 398 audit reports were reviewed and scrutinised for depictions of misbehaviour, and eight themes were derived. These themes were then aggregated into three domains: issues with asking questions (78%), issues with probing answers (16%), and issues with conduct (5%). Analysis of the themes reveals behavioural patterns that point to actionable measures that researchers and market research agencies can adopt to curb misbehaviour. The findings are also discussed with respect to how the audit perspective can deepen our understanding of interviewers and their behaviours.

Introduction

Interviewers are an inevitable cog in the data collection machine. This is especially so for face-to-face surveys where the interviewers need to secure respondents (e.g., contact and gain their cooperation, address any concerns), implement the survey design (e.g., set the rules, define the survey objective), and guide them through the survey (e.g., ask survey questions, probe and record answers). Given their pivotal role as a data collection agent, survey methodology has long recognised interviewers’ impact on survey participation (Loosveldt et al., 2004; Pashea & Kochel, 2016), and more importantly, the quality of the collected data (Crossley et al., 2021; Finn & Ranchhod, 2017).

Attempts to uncover potentially avoidable errors have led to scores of studies examining interviewer-related variables such as their behaviours (e.g., Börsch-Supan et al., 2013; Mneimneh et al., 2018), attitudes (e.g., Jäckle et al., 2012; Olson & Peytchev, 2007), characteristics (e.g., Blom & Korbmacher, 2013; Haunberger, 2010), and interviewer-respondent interaction (e.g., Bowling, 2005; West & Groves, 2013). While literature describing interviewer-related errors is manifold, less research has examined exactly what happens during the interview. A potential area of exploration is to adopt an observational approach (see Morgan et al., 2017) to study interviewers in their line of work. This represents a unique opportunity to observe their natural interviewing-related behaviours in a real-life setting without interference. Hence, the paper presented here thematically investigates audit reports of interviewers’ performance while administering face-to-face surveys. It has a twofold objective. Firstly, it identifies erroneous interviewer behaviours through the observations made by the auditor during the interview. Secondly, it considers how audit practice (unbiased modes of examining and evaluating) can deepen our understanding of interviewers and their behaviours.

This paper begins by reviewing the current state of research on interviewer misbehaviour. It then describes the thematic analysis, in which 398 audit reports were reviewed and scrutinised for depictions of misbehaviour. Finally, the theoretical and practical implications of the findings (i.e., eight themes of misbehaviour) are discussed.

Research on interviewer misbehaviour

It is well-documented that interviewers influence survey outcomes in different ways. As the ones who have direct contact with the respondents, interviewers are expected to “maximise respondent participation … to control every situation … be as effective as possible” (Lemay & Durand, 2002, p. 30). Yet often, interviewers are known to commit errors of unintentional (e.g., accidental typos, incorrect clarifications) and intentional (e.g., rewording questions, manufacturing answers) nature that may induce differential measurement error (Dahlhamer et al., 2010; Olbrich et al., 2022; West & Blom, 2017). Such delinquency, also known as interviewer misbehaviour, arises through idiosyncrasies in how interviewers administer surveys and is a common problem in survey work (du Toit, 2016; Kiecker & Nelson, 1996; Koch et al., 2014).

The data is only useful if it is of high quality. While good data informs, poor data is consequential as it threatens the validity of inferences drawn from the surveys. Therefore, there are genuine concerns about interviewers becoming a significant source of survey error (Biemer & Lyberg, 2003; Dillon et al., 2020) and interviewer-induced anomalies (Blasius & Thiessen, 2021). In fact, Braunsberger et al. (2007) went so far as to suggest that web-based surveys are of higher data quality than telephone surveys because the interviewers are eliminated from the data collection process.

The major implication is the need for more research to understand these interviewer misbehaviours. A cursory review of the literature reveals that publications more often address “interviewer behaviour” in general, and most of them focus on situational variables (e.g., length of interview, Kaplowitz et al., 2012; provision of incentives, Shih & Fan, 2009) and person-centric variables (e.g., gender, Campanelli & O’Muircheartaigh, 1999; age of interviewers, Merkle & Edelman, 2002) that influence the performance of interviewers. There was not much in-depth discussion of interviewer malpractice. This is hardly surprising given that scientific evidence of such deviant behaviours in surveys is rarely available (Bredl et al., 2013). Furthermore, the search suggests that less attention has been directed towards describing and examining the extent of interviewer misbehaviour.

Nevertheless, there are still some relevant studies. For instance, using planted respondents, Schyberger (1967) found that the 32 observed interviewers displayed a high frequency of deviation from instructed behaviours – i.e., adhering to the survey text, manner of probing for the respondent’s answers, and handling of interviewing aids. In a separate study, Belson (1965) similarly observed that 30% of his interviewers had repeatedly deviated from the instructed behaviours.

Using reported and measured height data from large-scale surveys, Olbrich et al. (2022) identified substantial and unlikely variations in reported height (i.e., errors in height reporting) by several interviewers at different time points. The authors attributed such occurrences to potential interviewer falsification. Such behavioural trends are not unique and have led researchers such as De Haas and Winker (2016, p. 654) to opine that “the situation that interviewers rather fabricate some of their assignments or parts of the questionnaire than delivering only complete falsifications is considered as quite typical in real survey settings”.

Other strands of research in the field have shown that deviation from the interview script (e.g., changing question wording, skipping questions) might impact data accuracy (du Toit, 2016; Dykema, 2005; Dykema et al., 1997). For example, Ramos-Goñi et al. (2018) noted that fewer data points were collected as interviewers did not follow the research protocol in fully explaining the tasks to respondents. Likewise, sample bias was observed in the study by Koch et al. (2014) as their interviewers did not adhere to the sampling protocol and conducted undocumented substitution of respondents. As a result, it affected their sample’s representativeness, leading to an inflated response rate.

Another related line of research attempts to contextualise these misbehaviours by elucidating the interviewers’ underlying motivations for engaging in such behaviours. For instance, misbehaviour may represent the degree of dishonesty espoused by the interviewers when they administered the survey (Hunt et al., 1984). It has also been attributed to the interviewers’ low mood and lack of interest in conducting the survey (Pashea & Kochel, 2016), leading them to cut corners and rush through the data collection process. That said, no two interviewers are exactly alike, and it is important to note that they are driven by different types of motivations. Hence, relying on motivations, which are dynamic in nature, is of limited value in establishing the cause of poor interviewer performance.

These studies illustrate how deviation from the instructed behaviour (i.e., interviewer misbehaviour) reduces data quality and, in turn, detracts from the data’s usefulness. Given the pervasive nature of these misbehaviours, researchers and market research agencies need to understand and ascertain what these misbehaviours are. The derived insights can be used to develop thorough and specific guidelines to train, monitor and provide feedback to the interviewers (Sebastian et al., 2012). However, most studies tend to simply highlight that measures have been taken to control for potential interviewer effects (e.g., Marsden & Hollstein, 2022; Ramos-Goñi et al., 2016). Studies that documented the presence of misbehaviours tend to focus on only one or two of them (e.g., Dykema, 2005; Olbrich et al., 2022).

There is generally a lack of studies that offer a repertoire of misbehaviours to look out for. This point is aptly underscored by van Meter (2005, p. 2) in his call to study survey interviewers: “We do not often have the interviewers’ appreciation of what the ‘survey business’ is like and how – in that work context – survey data is produced and can, eventually, be improved”. Following this train of thought, observing interviewers’ natural behaviours in their real-life work environment may make headway on the issue that van Meter raised. The goal and primary value of this approach is to position observation data as the central component of the research design (Morgan et al., 2017). It should focus on collecting, evaluating, and describing misbehaviours as they occur without interference or attempts to correct the interviewers, which is aligned with audit principles.

An audit approach

While researchers and market research agencies seek to reduce misbehaviours and ensure interviewers deliver their surveys consistently and without bias, what is missing from these academic endeavours is a regular process of looking at interviewer behavioural patterns to yield actionable findings at the individual level. This raises the question of what insights the field of survey methodology can ‘learn’ from other disciplines, especially those that specialise in issues of verification, control, and accountability. A ready answer would be to adopt an audit perspective. As Humphrey and Owen (2000, p. 32) opined, “in cases where audit is being utilised as a new initiative, whether it be an academic, quality or a value-for-money audit, the values that tend to cluster around the audit function are those which have been traditionally associated with audit such as verification, checking, independence, assurance”. A report by Deloitte (2013) underscored how an audit function would support their clients by focusing on current and emerging risks and providing them with timely recommendations to make the necessary business decisions. In this paper, the audit function pertains to the identification of interviewer misbehaviour.

This paper argues two ways in which an audit approach would help. Firstly, efforts to understand interviewer misbehaviour have been plagued by how the concept has been operationalised and measured. The adoption of rather different research designs and measurement approaches makes it prone to contradictory results across different studies. This paper argues that interviewer misbehaviour should instead be examined in a natural setting – i.e., when the interviewers are conducting the surveys. Further, it should ideally be conducted by an independent entity with no links to the researchers or interviewers, which may otherwise bias the observations. After all, Kiecker and Nelson (1996, p. 2) have warned about how “[interviewer] supervisors have been found to act in an unacceptable manner as they validate completed interviews …”. It would, therefore, be useful to have an independent audit body to observe interviewers on the ground. Secondly, researchers tend to lack access to the interviewers. Most survey data collection is contracted out to market research agencies (Blom & Korbmacher, 2013), who may regulate and control the researchers’ access to their interviewers. As a result, the researchers may have no influence on which interviewers they can observe and how they are trained to handle their various data collection responsibilities. Hence, it would be beneficial to have access to in-depth observations of interviewers.

With these goals in mind, this paper builds on a small body of literature on interviewer misbehaviour and adds to previous findings by examining the phenomenon using an audit approach. Specifically, it examines and thematically analyses 398 audit reports for depictions of interviewer misbehaviour. These reports were prepared by auditors commissioned by research organisations to shadow the interviewers. To the best of the authors’ knowledge, no other academic investigations have adopted this approach to understand interviewer misbehaviour.

Methods

Materials

The study materials are all audit reports prepared by auditors from RS Audit from 2015 to 2021, six years in total. These reports focus on interviewer behaviour observed whilst they administered face-to-face surveys.

Since its inception in 2015, RS Audit has supported research organisations in auditing their face-to-face surveys (commissioned to market research agencies) to ensure robustness in the survey process and quality of the data collected. For each project, RS Audit provides an independent perspective throughout the entire data collection process (i.e., from the setup of the project to the submission of the final dataset) and assists in advising the research organisation of data-related issues so that interventions can be implemented by the commissioned market research agency. The interviewer audit is one segment of the entire audit project.

As part of this appointment, RS Audit has accumulated many years of shadowing and auditing interviewers on the ground. All the auditors (n = 5) are members of the Institute of Internal Auditors (IIA), with an average of nine years of professional work experience (ranging from 4 to 16 years). They have relevant education, training, and experience in the market research industry.

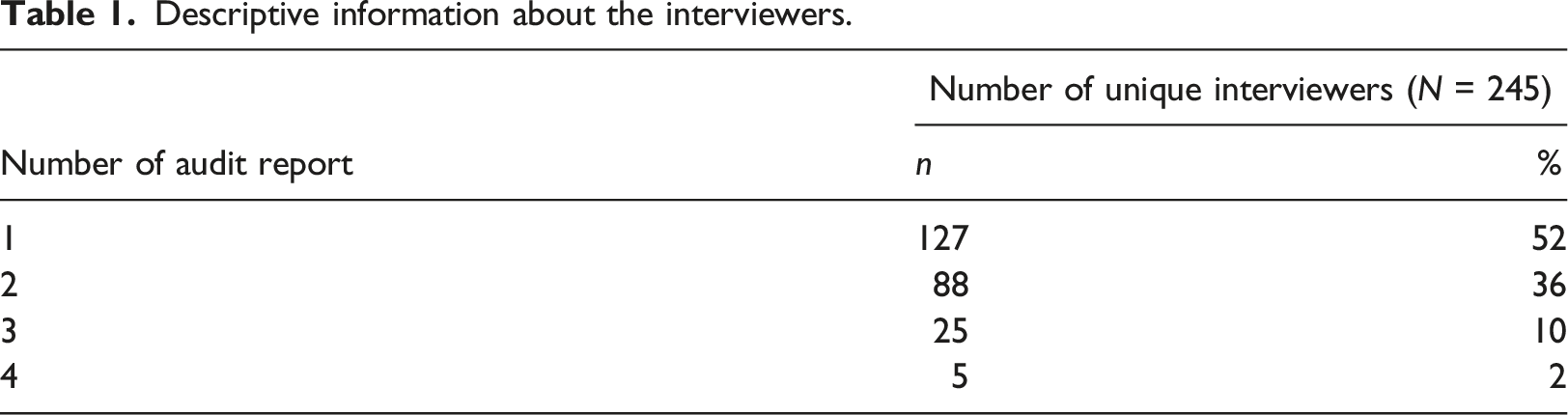

Descriptive information about the interviewers.

For this study, interviewer misbehaviour is defined as behaviours exhibited by the interviewers that are not in line with the instructed behaviours for the commissioned project. For example, it may refer to situations where interviewers did not read out the questionnaire text as required. Each audit report contains the following details: demographic information, misbehaviours observed during the face-to-face surveys, and an assessment of the impact of the misbehaviour on data quality. There is also a free-text section where the auditor is asked to detail the circumstances surrounding the misbehaviour and outline the reasons for its occurrence. The interviewers’ names found in the reports are anonymised in this paper.

Mode of analysis

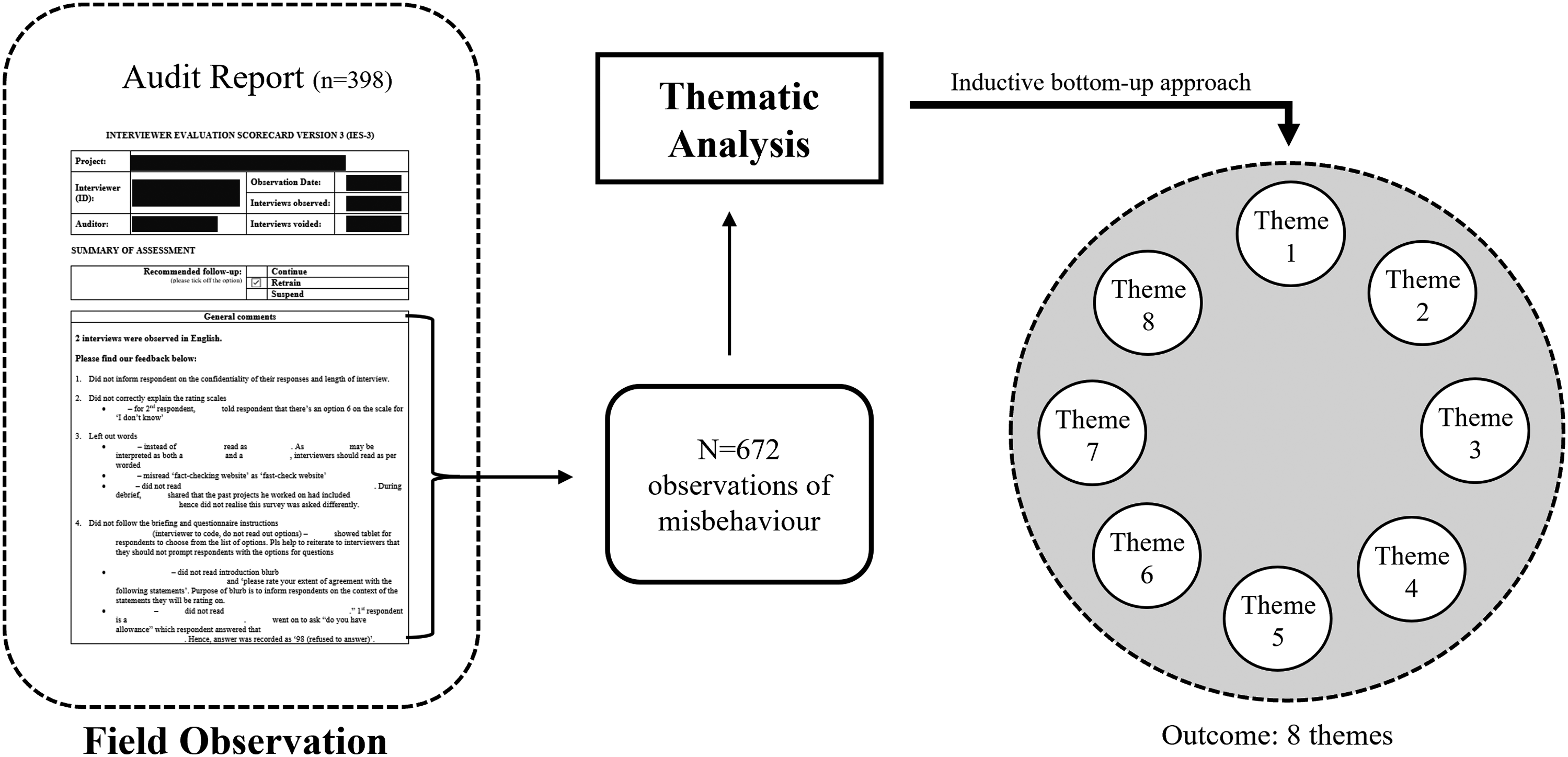

Thematic analysis with an inductive approach was utilised as the analytical method (see Figure 1). Since there was a dearth of literature in this domain, this approach allows us to inductively derive themes in a bottom-up manner by using observed behaviour as the starting point (Braun & Clarke, 2006). Two of the authors coded the audit reports independently. Broad descriptive categories were first identified based on similarities across the audit reports. Throughout the process, iterative discussions were conducted between the authors and revisions to the coding categories were made as and when the need arose. Study design.

Cohen’s kappa was computed to measure the agreement between the two coders. The mean kappa was .76 (p < .001), which indicates good intercoder reliability (McHugh, 2012). Due to the practical and specific nature of the content, there was little disparity, and consensus between the authors was obtained relatively quickly. These steps help to strengthen the reliability of the derived findings (see Barac et al., 2021). The analysis considered the frequency with which misbehaviour was raised and the extent to which the audit reports had elaborated upon such occurrences. After several iterations, the recurring ideas were refined into themes.
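As an illustration of the agreement statistic mentioned above, the following is a minimal sketch of Cohen's kappa for two coders, using the standard formula κ = (p_o − p_e) / (1 − p_e). The paper does not state how its mean kappa was computed, so the function name `cohen_kappa` and the example labels are hypothetical and are not taken from the audit reports.

```python
from collections import Counter


def cohen_kappa(codes_a, codes_b):
    """Cohen's kappa for two coders who each assign one label per item."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both coders must label the same non-empty set of items")
    n = len(codes_a)

    # Observed agreement: share of items given the same label by both coders.
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n

    # Chance agreement: product of each coder's marginal label proportions,
    # summed over labels.
    freq_a = Counter(codes_a)
    freq_b = Counter(codes_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)

    if expected == 1.0:
        # Both coders used a single identical label throughout.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical labels for six coded excerpts (illustrative only).
coder_a = ["read", "read", "neutral", "aids", "read", "neutral"]
coder_b = ["read", "read", "neutral", "read", "read", "aids"]
print(round(cohen_kappa(coder_a, coder_b), 2))
```

A mean kappa, as reported here, could be obtained by applying such a function separately to each coding category and averaging the results; that procedure is an assumption rather than something the paper specifies.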

Results

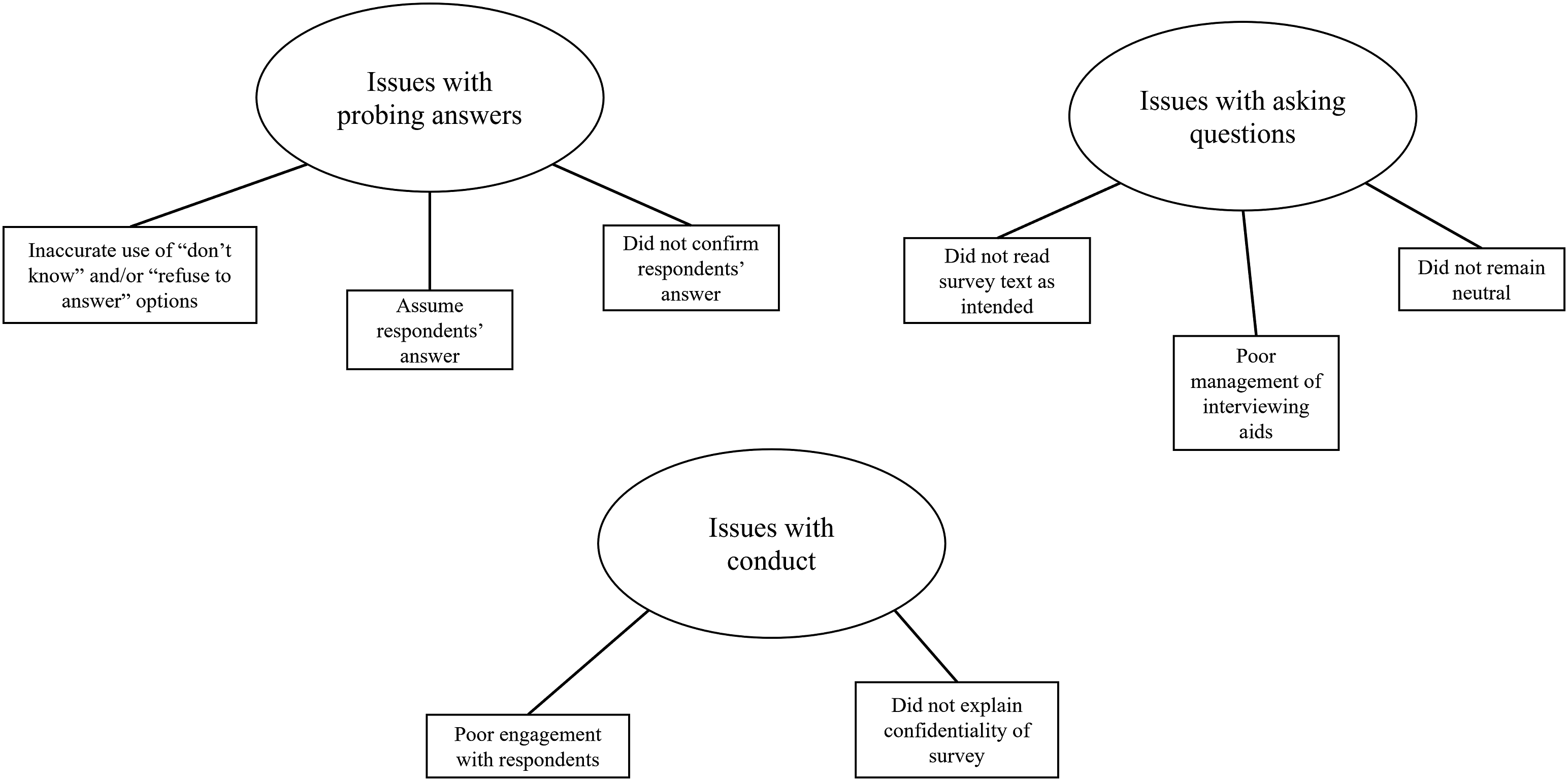

Eight themes of interviewer misbehaviour (N = 672) emerged from the thematic analysis of the audit reports and are further collapsed into three domains: (i) issues with asking questions, (ii) issues with probing answers, and (iii) issues with conduct.

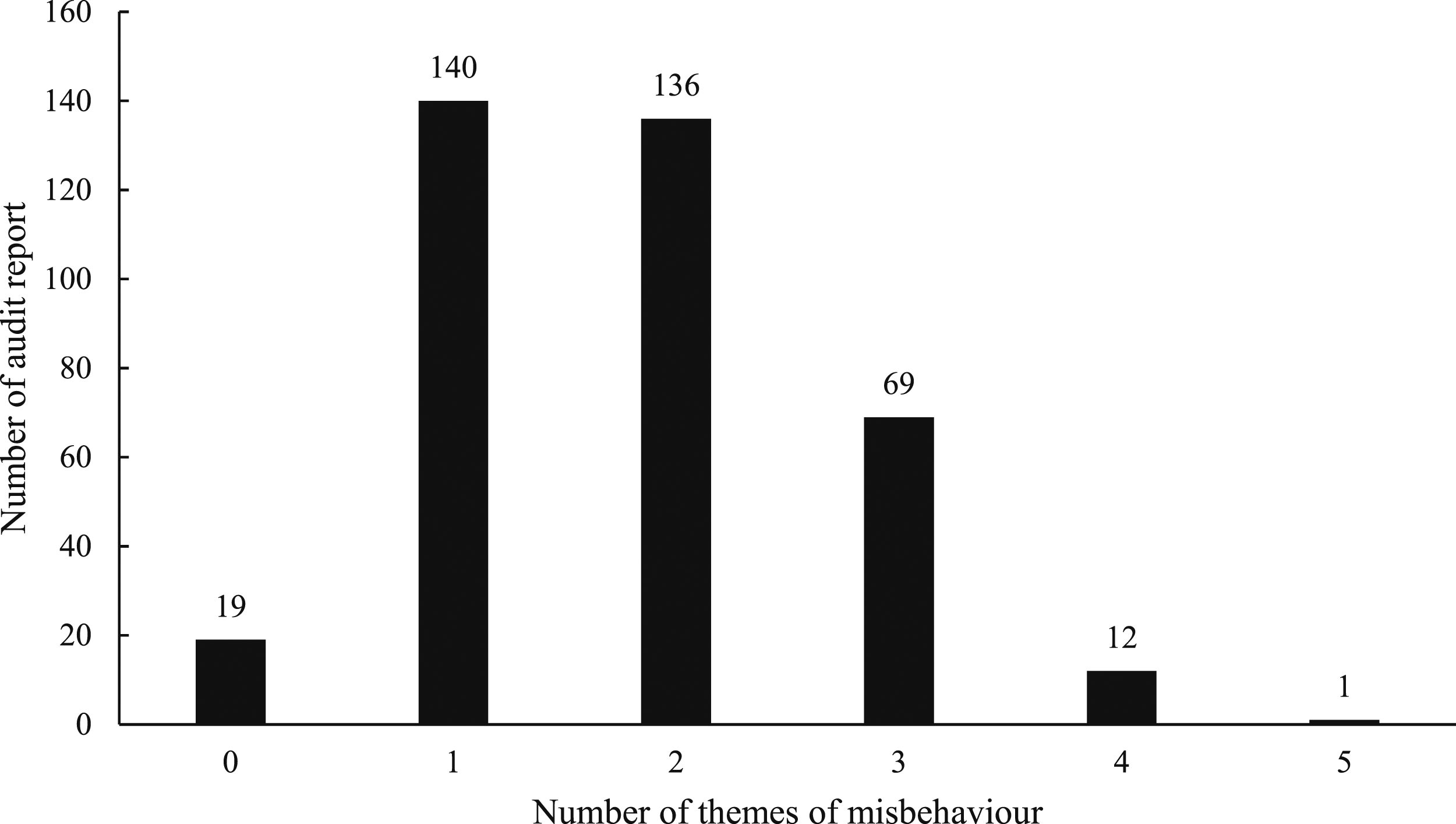

These themes (see Figure 2 for the thematic map) reflect the range of behaviours ascribed as interviewer misbehaviour and will be discussed using quoted verbatims from the audit reports. However, references to actual survey items were anonymised. There are several noteworthy observations across the dataset. As Figure 3 shows, there were, not surprisingly, 19 reports (5% out of 398) that did not have any documented misbehaviour. This corroborates extant anecdotal evidence of a high prevalence of interviewer misbehaviour. Most reports (69%) reported at least 1 to 2 types of misbehaviour. A smaller number of reports contained three or more types of misbehaviour (26%). Thematic map of interviewer misbehaviour. Frequency of themes of misbehaviour across audit reports.

Issues with asking questions

The audit reports documented 526 incidents of misbehaviours (78% of all misbehaviours) that are categorised under the domain of ‘asking questions’, and there are three themes of interest.

Did not read survey text as intended

Observations of interviewers not reading out the survey text – as required by the research design – made up the single largest theme, accounting for 37% (n = 246) of all misbehaviours. The occurrence of this category of misbehaviour raises concern that the respondents did not attain the correct or intended understanding of the questions. Thus, interviewers may affect the responses they obtain as a function of how they verbalise the questions. The description cited most frequently by the auditors was interviewers asking questions out of sequence and failing to read all instructions as intended:

(1) INT only mentioned “now is your satisfaction”. Did not read out the question nor explain the scale. This is a different scale from XXX [previous question], hence it’s imperative that she explains the scale. As per project briefing, interviewers are briefed to read out scale in full. This is to help respondents better understand how the question/scale works.

(2) INT did not read “including yourself, but not including employees/helpers”. During debrief, INT shared that the past projects he worked on had included employees/helpers hence did not realise this survey was asked differently.

(3) INT skipped many sections instructions and rating scales. For example, for section XX, instead of elaborating on the three options on the showcard, INT would ask the respondent “Can or Cannot?”. This has altered the responses that respondent would provide, and no attempts were made to remind the respondents of other rating options.

Other notable descriptions included the misreading of survey instructions:

(4) [I]nstead of ‘smartphone’ read as ‘handphone’. As ‘handphone’ may be interpreted as both a smartphone and a feature phone, interviewers should read as per worded.

Adding to these descriptions is the inconsistent manner in which interviewers explained the rating scale to the respondents:

(5) INT misinformed respondent on the no. of ratings for question when reading out the rating scale. INT rephrased it as “1, 2, 3, 4 just give me an answer” when question is using a 5-point rating scale. INT should be careful how INT prompts respondent as respondent could potentially be misled into thinking she should give an answer from ratings 1 to 4 only.

(6) INT not to use percentage to explain the agreement scale (i.e. On a scale of 1–5, scale option ‘3’ does not meant 60%).

(7) Respondent was asked to give his answer based on how much he agree with the statement [5-point Likert scale from strongly disagree to strongly agree] with 1 = 20%, 2 = 40%, 3 = 60%, 4 = 80% and 5 = 100%.

Did not remain neutral

The second most common misbehaviour was interviewers not remaining neutral whilst asking questions (n = 160, 24% of all misbehaviours). Such behaviours may influence and skew the respondents’ answers, as the respondents may interpret the survey questions differently from their original intent, so that the answers are not a true reflection of respondents’ sentiments. On numerous occasions, interviewers rephrased or reworded the questions based on their own interpretation:

(8) INT use own words rather than using the clarification note in the CAPI [Computer-Assisted Personal Interviewing]

(9) INT gave own example of person having to resign from this job to take care of his ill parent and emphasised on this, without stating that it was just an example. Resulted in respondents mistaking that specific example as the statement itself.

Furthermore, some interviewers have ‘infused’ their paraphrasing with specific prompts and/or personal opinions, which may or may not be accurate: (10) INT did not stay neutral and was seen leading respondents with specific prompts on multiple occasions – respondent ended up responding to INT’s prompts instead of the full spectrum of choices. For example, in section XX, INT will keep prompting the first respondent of the options ‘disagree’ and ‘strongly disagree’ as INT knew the respondent is not familiar with the XX.

One of the most common observations of contention was that of interviewers giggling when administering the survey. For instance, in one report, it was noted that: (11) INT was seen giggling when reading out the questions. INT needs to maintain a neutral stance when reading out the questions. By giggling, it might affect the respondent’s selection.

Poor management of interviewing aids

Eighteen percent (n = 120) of all misbehaviours involved interviewers managing their interviewing aids (e.g., showcard, letter of authorisation) inappropriately. These aids are meant to support interviewers when they administer the survey by providing the required documentation to prove their credibility and/or by showing the response sets (especially when it is a long list of options) for respondents to choose from. Some commonplace descriptions reflected in numerous audit reports concern the incorrect administration of the showcards, which may have the effect of confusing the respondents:

(12) INT is not familiar with the showcard rotation and has difficulty entering responses on the tablet correctly … Showcard shows statements R6 to R1 while CAPI shows R1 to R6. Upon realising the statements’ numbering on tablet is different from the showcard, INT asked respondent to read the statements bottom-up from the showcard (i.e. R1 to R6) so to match the rotation on tablet, which has defeated the purpose of showcard’s rotation.

On other occasions, descriptions depict interviewers not utilising these aids and/or not knowing how to use them:

(13) It is surprising to know INT has not heard of a showcard before this project. INT did not bring the showcard to observation and does not know how the showcard works. There are implications for not using the Showcard and INT doesn’t quite understand it.

(14) When asked why showcards were not used (even when it was mentioned during interviewer briefing), INT admitted that he usually doesn’t use the showcards.

Issues with probing answers

The audit reports documented 112 incidents of misbehaviours (16% of all misbehaviours) categorised under the domain of ‘probing answers’ and three themes of interest arose.

Did not confirm respondents’ answer

The most frequently occurring misbehaviour type in this domain was interviewers not confirming respondents’ answers (n = 75, 11% of all misbehaviours) despite it being a survey instruction. This creates a situation where interviewers administered the survey differently, increasing the likelihood of interviewer effect being introduced during data collection. Descriptions of this type included a lack of attempts to clarify answers: (15) [D]id not read out the dependents on the list. Respondent mentioned the number of children depending on them, INT would key in the numbers and press next without clarifying if there are others who depend on them.

and this is especially so when the respondents gave straight-liner responses: (16) Respondent rated all ‘5’ on Section C and all ‘4’ on Section E. INT could have reminded respondent that there are other rating options available ….

Adding to these behaviours are the auditors’ observations that interviewers had not attempted to clarify contradictory responses. For instance, one report stated: (17) [D]id not clarify with respondent when it contradicts with the previous response captured. At the start of the survey, respondent mentioned he was ‘self-employed’ when answering personal monthly income. When asked for the job title at X, respondent said he had ‘retired’.

Assume respondents’ answer

A second misbehaviour type revolves around interviewers’ tendency to assume respondents’ answers (n = 20, 3% of all misbehaviours), which may alter the original intent of the questions. For example, several audit reports detailed how interviewers had assumed answers and skipped subsequent questions without asking them:

(18) Respondent commented he doesn’t read digital newspapers and INT prompted “so you never go online?” and selected no for news aggregator websites without asking respondent.

(19) INT assumes and chooses ‘option 1 – Walk’ by default for all respondents (dataset n = 2000 confirms this), without even asking respondents whether they agree.

Another related behaviour pertains to interviewers’ giving suggestive answers based on their assumptions about the respondents. While it may nudge respondents into replying, it has the negative consequence of influencing their answers: (20) [age intend to retire] Respondent said “from work? I don’t intend to stop, I enjoy what I’m doing” and INT suggested “just now you say your job is physically demanding, can I put 60? … Pls refrain from suggesting answers to respondents

Inaccurate use of “don’t know” and/or “refuse to answer” options

The last theme within this domain relates to the way that interviewers utilised the “don’t know” or “refuse to answer” options, and this accounted for 17 incidents (3% of all misbehaviours). The principal issue discussed was whether, under the survey instructions, such options should be shown or offered to the respondents. Where the instructions state that they should not be, interviewers should not proactively provide them, as doing so may prompt the respondents to select them for subsequent questions – especially sensitive ones: (21) … subsequently, respondent remarked that he did not attend public seminars nor dialogues with senior Government officials, and selected ‘Don’t Know’ as well.

Furthermore, it includes errors arising from the misunderstanding that interviewers had about the respondents’ responses: (22) After reading out the first and second options on the screen, respondent commented “don’t need, all good” and INT conveniently coded 99 [refuse to answer] … INT shouldn’t have assumed that respondent was not willing to rank the options and chose refused to answer. Respondent may not be sure what the question is asking …

Issues with conduct

The audit reports documented 34 incidents of misbehaviours (5% of all misbehaviours) categorised under the domain of ‘conduct’ and two themes of interest arose.

Poor engagement with respondents

Several audit reports perceived poor interactions with respondents (n = 21, 3% of all misbehaviours) as a category of misbehaviour that can affect the quality of the collected data. These reports referred to situations where the interviewers did not prevent third parties (e.g., respondents’ family members) from interfering with the interviews, thereby influencing the respondents’ answers. This is due, at least in part, to how they communicate and manage the entire interview process: (23) Respondent’s wife was answering on behalf of the respondent. As a result, interview became a joint participation with respondent and his wife, and data captured does not accurately reflects respondent’s own views/opinions. INT did not inform them that they could only record the respondent’s answers.

In addition, the reports depicted other behaviours that undermined the rapport between interviewers and respondents. One such example revolves around the lack of assurance provided to elderly respondents: (24) [T]he questions regarding XX seemed to generate a mildly fearful response from this elderly respondent. Respondent would pass comments like “Is this answer right or wrong?” and “I don’t know if I should answer like this”. INT could have done more to assure respondent that there were no right or wrong answers. When interviewing more elderly respondents, INT will have to bear in mind that they will need to exercise more patience and provide more frequent reassurances should they meet this sort of fearful response.

Did not explain confidentiality of survey

Singapore maintains a firm stance towards adherence to the Personal Data Protection Act 2012 (PDPA), especially when it concerns the collection of personal data (Personal Data Protection Commission, 2023). In the context of survey studies, the responsibility to inform respondents about the PDPA, assure them of the confidentiality of the survey, and get their approval to proceed with data collection lies with the interviewers. The act of not explaining PDPA by the interviewers has therefore been flagged by the auditors as misbehaviour (n = 13, 2% of all misbehaviours): (25) INT did not explain the PDPA section. Furthermore, INT commented “you click here means you agree we do the survey” which is incorrect.
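The incident counts reported for the eight themes can be cross-checked against the domain totals with a short tally. The sketch below uses only the counts stated in the text; the dictionary layout and the names `THEME_COUNTS`, `domain_totals`, and `share` are illustrative assumptions. Note that rounding to the nearest integer gives 17% for the probing domain (112 of 672 is about 16.7%), whereas the text reports 16%, which suggests the reported figure was truncated rather than rounded.

```python
# Incident counts per theme, grouped by domain, as reported in the Results.
THEME_COUNTS = {
    "asking questions": {
        "did not read survey text as intended": 246,
        "did not remain neutral": 160,
        "poor management of interviewing aids": 120,
    },
    "probing answers": {
        "did not confirm respondents' answer": 75,
        "assume respondents' answer": 20,
        "inaccurate use of don't know / refuse to answer": 17,
    },
    "conduct": {
        "poor engagement with respondents": 21,
        "did not explain confidentiality of survey": 13,
    },
}


def domain_totals(theme_counts):
    """Sum the theme-level incident counts within each domain."""
    return {domain: sum(themes.values()) for domain, themes in theme_counts.items()}


def share(part, whole):
    """Percentage of `whole` accounted for by `part`."""
    return 100 * part / whole


totals = domain_totals(THEME_COUNTS)
grand_total = sum(totals.values())

for domain, count in totals.items():
    print(f"{domain}: {count} incidents ({share(count, grand_total):.1f}%)")
```

Keeping the counts at theme level and deriving the domain totals from them makes it easy to confirm that the eight themes add up to the 672 incidents stated in the text.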

Discussion

Survey methodologists have long demonstrated that interviewers are crucial determinants of measurement error and that their misbehaviours directly impact survey quality (e.g., Finn & Ranchhod, 2017). Interviewers can function as a double-edged sword to either enhance or diminish data quality. In the ideal world, they act as key data collection agents by adhering closely to the survey protocols. Yet, in most cases, they may undermine data quality by engaging in a range of misbehaviours. Given the pervasive nature of these misbehaviours, it is therefore critical that researchers and market research agencies gain a better understanding of how to define and categorise them.

This paper has sought to address these research needs by identifying misbehaviours through the observations made by auditors during face-to-face interviews. A thematic analysis of 398 audit reports elicited eight themes, which can be further categorised into three domains (‘issues with asking questions’, ‘issues with probing answers’, and ‘issues with conduct’). While the final categorisation framework is very general, this paper does yield clear insights into common types of misbehaviours.

Theoretical implications for understanding misbehaviour

Theoretically, the findings can contribute to the under-researched topic of interviewer misbehaviour. Firstly, there is a need to understand how the interviewer interacts with the environment (i.e., location of the interview) and the respondent. Misbehaviours can manifest at every juncture of these complex interactions. Unlike the previous literature, which tends to focus on debates and arguments surrounding the substantial variability across interviewers (e.g., experience, personal characteristics; Brunton-Smith et al., 2017), the audit approach allows us to study misbehaviour based on observed interactions between interviewers and respondents in the field. Because an audit perspective specialises in verification, control, and accountability issues, it can enrich our understanding of complex topics, such as interviewer misbehaviours, “where contextual issues are of primary concern” (Morgan et al., 2017, p. 1066).

Secondly, the rich information available on the interviewers (i.e., 672 recorded incidents of misbehaviours across 398 audit reports) is a key strength of this paper. Using data conscientiously reported by auditors who have direct, unintrusive touchpoints with the interviewers is a pragmatic rather than a purist approach. This paper is also one of the first attempts to position the audit perspective within the realm of interviewer-related research.

Lastly, and most importantly, this paper provides the first comprehensive taxonomy for observed interviewer misbehaviours (i.e., from an auditor’s perspective) within the context of field interviews in market research settings. A framework for categorising misbehaviour has been presented based on a thematic analysis of 398 audit reports. The framework has high ecological validity, as the audit reports are based on real-world, large-scale research projects and were produced by auditors who have worked professionally in the field for a significant number of years and are well-trained in identifying interviewer misbehaviours. Therefore, the derived findings allow us to present a more nuanced picture of misbehaviour than that found in the existing literature. Additionally, the framework could form the starting point for developing a better understanding of how to assess interviewer performance based on the detection of certain prevalent types of misbehaviour.

Practical implications for understanding misbehaviour

A repertoire of misbehaviour

The first operational implication revolves around the identification of a range of misbehaviours. The results reveal that 95% of the 398 audit reports contain at least one theme of misbehaviour. This suggests a high degree of observed misbehaviours across projects audited from 2015 to 2021. Furthermore, 13 reports (3%) had four or more observed misbehaviours. While this number is low, it is of concern, given that data quality has been compromised for some projects.

To address such issues, various quality control measures can be introduced to curb such behavioural risks. For example, researchers and market research agencies can incorporate the identified misbehaviours into their interviewer training. Studies have shown that regular training can maintain data quality (du Toit, 2016; O’Brien et al., 2006). As Hollstein et al. (2020, p. 23) emphasised, “the results also point to the importance of thorough interviewer training and the need for interviewers to stick to their field manual”. However, some researchers, such as Schyberger (1967), have argued that the occurrence of such misbehaviours does not differ by the interviewer’s experience and training received. The key question, then, is whether training interventions are the optimum means of mitigating misbehaviours; training should not be relied upon as the sole remedy.

In this vein, the findings also highlight the importance of process monitoring (see Hogan et al., 2007), which, in the current context, refers to the adoption of an auditing approach by researchers and market research agencies to shadow their interviewers. Ideally, such audits should be (i) performed by an independent entity that is experienced in the field of market research and (ii) impromptu in nature. Based on the authors’ experience, this would deter misbehaviour and encourage interviewers to discharge their responsibilities appropriately.

Prioritisation of misbehaviour

The second operational implication pertains to which misbehaviours should be prioritised in the training and monitoring of interviewers; in other words, it concerns identifying the misbehaviours that are more pervasive and more indicative of problematic interviewer behaviour.

That ‘issues with asking questions’ featured in more than three-quarters (78%) of the recorded incidents points to an area of concern needing attention within the market research industry. For instance, Brunton-Smith et al. (2017) noted that some interviewers, in their attempts to ‘help’ the respondents, may leave out words and paraphrase questions. These ad-hoc changes in wording, which from an interviewer’s perspective may be thought to have little (if any) effect on data quality, may inadvertently alter the intended meaning of the questions, contribute to unwanted measurement errors and, hence, affect data quality. As West et al. (2018, p. 183) argued, “by exposing respondents to the same question wording and response options, irrespective of which interviewers ask the questions, respondents’ answers should be comparable across interviewers.”

In another study, Blom and Korbmacher (2013, p. 10) noted that 40% of their interviewers would attempt to “explain what is actually meant by the question” if the respondents did not understand them. This echoes the descriptions detailed in many of the reviewed audit reports. One explanation put forth by Kiecker and Nelson (1996) suggests that interviewers who believe the survey is too long and complicated may try to mitigate negative effects on respondents by simplifying the interviews. While this may not be the sole motivation identified by the previous literature, it accords with a notable observation from one of the audit reports: (26) During debrief, INT dismissed saying that some of the words are too difficult for respondent to understand and so he rather asked the questions in his own ways.

Such misbehaviour could also occur because of interviewers’ negligence, laziness, and lack of understanding of the research design. There is a wide range of motivations underpinning these behaviours, and even though this insight may help shed light on the ‘why’, it does not aid in detecting problematic interviews. Therefore, the findings imply that it is necessary to identify undesirable misbehaviours, such as ‘not reading survey text as intended’ or ‘not remaining neutral’, and they offer a starting point for targeted training interventions to mitigate or minimise them.

In the categorisation of misbehaviour, themes can sometimes overlap. For example, interviewers have been observed to ask questions out of sequence (i.e., ‘did not read survey text as intended’) whilst rewording them (i.e., ‘did not remain neutral’). They were also documented within the same audit report as failing to probe as instructed (i.e., ‘did not confirm respondents’ answer’) and fabricating responses (i.e., ‘assume respondents’ answers’). Hence, rather than viewing each misbehaviour in isolation, researchers and market research agencies must be cognisant of how certain misbehaviours may co-occur. Such observations are corroborated by the factor analysis conducted by Kiecker and Nelson (1996) on telephone interviewer misbehaviour, where certain misbehaviours were found to be closely related. It could be argued that interviewers were attempting to complete the survey and, in doing so, renegotiated and tailored their administration approach (with the unintentional or intentional manifestation of misbehaviour) to suit the respondents’ reactions.

Limitations

This paper is not without limitations. Firstly, while our approach has ecological validity in categorising misbehaviour from a thematic analysis of audit reports, it cannot identify the exact reasons why misbehaviour occurs or its impact on data quality. Future studies can leverage the identified misbehaviours and adopt an experimental approach to establish causal links between these misbehaviours and data quality.

Secondly, the presence of an auditor may affect the interviewer’s performance as each audit experience is unique. For example, misbehaviour may be elicited due to nervousness experienced by the interviewers from the auditor’s presence. In contrast, interviewers may adopt a more precautionary approach (i.e., be more careful) to reduce the manifestation of misbehaviour. These two scenarios can be attributed to the Hawthorne effect (see Perera, 2023) in action; individuals may change their behaviours when they are cognisant of others observing them. Hence, future research can explore the use of technology, such as voice recording the entire interview or asking interviewers to wear body-worn cameras in their line of work, to collect the required information for assessing misbehaviour.

Thirdly, the present study focused only on face-to-face surveys. Given that interviewers are also deployed for other research methods, such as Computer Assisted Telephone Interviewing (CATI), it would be interesting to validate the presence of these identified misbehaviours in those settings.

Lastly, the authors acknowledge that several aspects of the study design may induce individual differences across the interviewers. To begin with, the study did not account for the influence of respondents on the interviewers’ behaviour (see Haunberger, 2010), as different interviewers are assigned to different respondents. Moreover, although the 398 audit reports are unique, the interviewers being audited are not: of the 245 interviewers in the pool, only 127 (52%) appear in a single audit report, while the rest have more than one audit report each. In addition, the audit reports were collected at different times, which Johnson et al. (2009) suggest would elicit certain behaviours (e.g., interviewers might rush through interviews to meet a deadline). Nonetheless, this does not render the derived themes of misbehaviour irrelevant, as the aim of this paper was to identify the potential repertoire of misbehaviours. The paper therefore serves as a valuable starting point for future investigation into the validity of these identified misbehaviours. For example, future studies can consider using in-depth interviews or focus group discussions with active interviewers to understand why certain misbehaviours were observed.

Conclusion

Limitations notwithstanding, this paper provides a thematic analysis of interviewer misbehaviour from a sample of audit reports. It provides evidence of eight themes of misbehaviour, which can be further delineated into three main domains. These findings present clear opportunities for researchers and market research agencies to better understand their interviewers by reflecting on the identified themes of misbehaviour, and to inform their interviewer training as well as misbehaviour detection. In summary, this paper can be regarded as only a first step towards establishing an avenue for measuring interviewer performance. Future research could investigate the impact of misbehaviours on data quality and their relationship with interviewer performance.

Footnotes

Acknowledgments

The authors would like to thank Ms Shu Hui Lee, Ms Geraldine Goh, Ms Jonea Lee and Shahir Sirajudeen for their assistance in the research.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.