Abstract

A key challenge for self-administered questionnaires (SaQ) is ensuring quality responses in the absence of a marketing professional providing direct guidance on issues as they arise for respondents. While numerous approaches to improving SaQ response quality have been investigated, including validity checks, interactive design, and instructional manipulation checks, these are primarily targeted at situations where expected responses are of a factual nature or stated preferences. These interventions have not been evaluated in scenarios that require higher levels of engagement and judgment from respondents. While professional marketers are guided by codes of conduct, there is no equivalent code of conduct for SaQ respondents. This is particularly salient for SaQ that require higher levels of reflection and judgment, since in the absence of professional guidance, respondents rely more on their individual ethical ideologies and experience, leaving SaQ responses potentially devoid of the standards that normally set the expectations around data quality for marketing professionals. As marketing professionals are unable to provide guidance directly in a SaQ context, the approach used in this study is to offer varying levels of professional marketing guidance indirectly through specific code of conduct reminders that are easily consumable by SaQ participants. We demonstrate that reminders and ethical ideologies moderate the relationship between the participant’s experience with SaQ and compliance with a code of conduct. Specifically, SaQ respondents produce fewer code of conduct infractions when receiving reminders than the control group, and this improves even more when the reminders coincide with the SaQ task. The paper concludes with implications for theory and practice.

Introduction

While self-administered questionnaires (SaQ) can facilitate convenient and inexpensive data collection from large, diverse, and representative respondent samples, there are numerous concerns over various aspects of the response quality such instruments produce (Barnette, 1999). Specifically, self-reports from inattentive (Fleischer et al., 2015), non-serious (Aust et al., 2013), or mischievous respondents (Hyman & Sierra, 2012) yield poor-quality responses that potentially bias statistical findings, which in turn leads to poor-quality analysis and subsequent business decisions (Smith et al., 2016).

Traditionally, the purveyors of the relationship between survey instrument and respondents were professional marketers employed within an organization or retained on behalf of an organization to conduct the research. These professional marketers received ongoing socialization from their profession and within their respective organization, training on research and ethics, and access to codes of conduct from their profession and their employers (Aggarwal et al., 2012). Collectively, these skills, norms, and resources guided the professional marketer’s activities and provided a framework for administering questionnaires and offering guidance to participants in a systematic and ethical manner, ensuring a high-quality experience for the participant that in turn produced high-quality data. While there are examples of spectacular ethical failures by professional marketers (De Cremer et al., 2010), the marketing literature generally supports the role of professional codes of conduct in improving the quality of research by marketers, which in turn generates higher quality data (Craft, 2013). While professional codes of conduct guide professional marketers’ obligations to the respondents and the client organization, there is no expectation that they guide SaQ respondents, even though respondents who of their own free will agree to participate in a market research project have at least an implied ethical obligation to act in a professional manner and provide honest and truthful answers while maintaining confidentiality. These obligations of the participant are at times stated explicitly within the informed consent form or as part of a participation agreement in the case of a research panel, but it is not clear how these obligations are enacted in practice by respondents.
Thus, the challenge is that the responses provided by SaQ participants, in the absence of a professional code of conduct, are influenced more by their individual ethical ideologies and previous experience with SaQ, leaving SaQ responses potentially devoid of the standards that normally set the expectations around data quality for marketing professionals. This is particularly salient for self-administered questionnaires that ask questions beyond simple facts, demographics, or personal preferences and delve into questions around design, judgment, and open-ended responses that are not as easily subjected to traditional data quality improvement measures. These types of questions require an increased level of engagement from participants since they are being asked to think creatively, apply ethical thinking to a situation, or to articulate nuanced thinking in a few short sentences. Thus, SaQ, in addition to representing a cultural shift away from synchronous communication and reliance on human intermediaries (Stern et al., 2014), pose additional challenges around providing background and additional guidance to participants that potentially represent a new burden. However, participants are not always diligent in reading and acting upon the guidance provided, which in turn increases noise and decreases the validity of the data collected (Oppenheimer et al., 2009). There have been numerous approaches to identify inattentiveness of participants in the data, including speeding and straight-lining, but this is often after the data is collected; thus, others have attempted to improve data quality before the survey is administered such as improving the survey design to make it visually appealing to keep participants engaged (Stern et al., 2014). 
An alternative approach operates during the administration of the survey, using validity checks that verify entries in the SaQ to ensure they meet specific well-defined criteria (e.g., a number within a certain range) (Christian et al., 2007) or including instructional manipulation checks (IMC) (Morren & Paas, 2020). IMCs typically instruct participants to endorse a specific response or skip the question entirely. Failure to do as instructed indicates inattention and provides an opportunity to warn participants about their attention levels, so that the IMC serves as an attention-enhancing tool (Paas & Morren, 2018).

While codes of conduct are well established in a professional marketing context (Tsalikis & Fritzsche, 1989; Craft, 2013), little attention has been directed to the potential for codes of conduct within SaQ contexts, despite their potential to improve data quality. We bridge this gap by providing a simplified code of conduct to SaQ respondents and demonstrate that code of conduct reminders and ethical ideologies moderate the relationship between the participant’s experience with SaQ and the quality of data produced as measured by compliance with a code of conduct. Specifically, we demonstrate that SaQ respondents produce fewer code of conduct infractions when receiving reminders than the control group, and that this improves even more when the reminders coincide with the SaQ task.

Our novel approach provides a generalizable mechanism for improving data quality in SaQ by embedding reminders that attune respondents to critical issues that could influence the quality of their responses. The scenario provided established a context to ask participants to make research design decisions, to use their judgment, and to engage with open-ended responses. The code of conduct reminders serve as a form of IMC that, rather than accusing the participant of being inattentive, provides a friendly reminder of key issues to be considered when responding to these nuanced survey questions. This provides an opportunity for participants to reflect on the issue more deeply and in turn to provide higher quality responses. The findings from this study result from applying the deontological and teleological evaluation criteria underlying the H-V model (Hunt & Vitell, 2006) of ethical decision making to the situation of SaQ respondents to better understand how data quality issues emerge within a SaQ context. Deontological evaluation involves applying specific rules and guidelines (e.g., code of conduct principles) to a situation, while teleological evaluation focuses more on the consequences of the decision (e.g., impacts of the research design decisions), which in turn impact SaQ response quality (i.e., code of conduct infractions). Thus, what is investigated is how the participant’s experience with SaQ, combined with the interplay among teleological and deontological evaluations, drives SaQ response data quality. The inclusion of ethical ideologies provides additional insights and opportunities to screen participants based upon whether respondents are skeptics or universalists, or alternatively to consider participants’ ethical ideologies in interpreting SaQ survey results.

Theoretical Background and Hypothesis Development

Self-Administered Questionnaires Responses as Ethical Decision Making

The situation where SaQ respondents are asked questions that require deeper critical reflection and judgment parallels the ethical decision-making process of evaluating a set of alternatives by applying a set of guiding principles. There are several well-established models of ethical decision making that have been validated and extended, and Jones (1991) provides a comprehensive synthesis of the major models of ethical decision making. The resulting integrated model (Jones, 1991) combines the key components of prior models and is organized around the four phases of ethical decision making and behavior proposed by Rest (1986), whereby an individual must (a) recognize the moral issue, (b) make a moral judgment, (c) place moral concerns ahead of other concerns, and then (d) act on those moral concerns (p. 368). A particularly useful model that was included in Jones’s (1991) integration and covers these four phases is the model proposed by Hunt and Vitell (1986). The Hunt and Vitell (H-V) model, while originating within the marketing ethics literature, is recognized to have broader applicability to ethical decision making in general, and has been revised, in part, based upon Jones’s (1991) critique and recommendation for the inclusion of the role of specific characteristics of the ethical issue under consideration (Hunt & Vitell, 2006). The purpose of the H-V model is to increase our understanding of ethical decision making through a process theory that explains and predicts decision making in situations having ethical content, and not to provide normative recommendations on what individuals should do in specific situations (Hunt & Vitell, 2006). However, while H-V is not a causal model, it is appropriate to develop causal models consistent with the underlying theory (pp. 149–150). In the context of our study, we draw upon key aspects of the H-V model, namely in recognizing the deontological and teleological evaluations (described below) that inform SaQ responses.

A key assumption of the H-V model is that the individual must first perceive the decision as having ethical content as a precursor for subsequent processes to initiate. After becoming aware of the ethical situation, the individual perceives various alternatives that could potentially address it (although likely not the complete set of alternatives, which is usually unknown, thus leading to satisficing). These alternatives are then evaluated based upon deontological and teleological criteria.

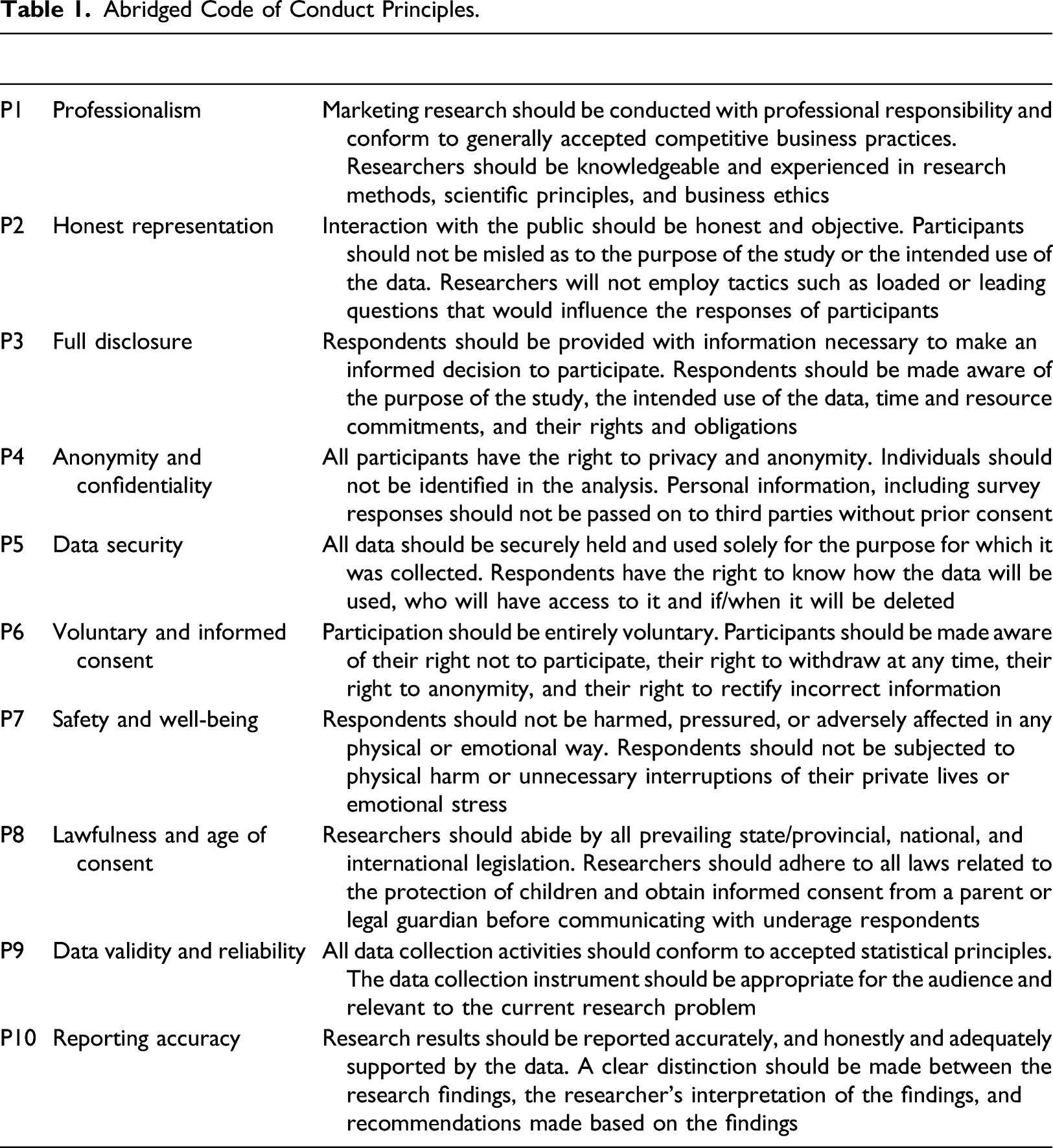

Abridged Code of Conduct Principles.

During teleological evaluation, the individual considers (1) the perceived consequences of each alternative for different stakeholders, (2) the probability that each consequence will occur for each stakeholder, (3) the desirability or undesirability of each consequence, and (4) the importance of each stakeholder (p. 145). Thus, teleological evaluation stresses the consequences of actions in the decision-making process. Within our study, participants are asked to role play in a scenario (Appendix 1) and consider specific stakeholder groups, primarily their hypothetical firm and client. The decisions are presented as having a time imperative such that a decision is needed in the short term but will potentially impact the long-term viability of the firm and the ability to secure future contracts with the client.

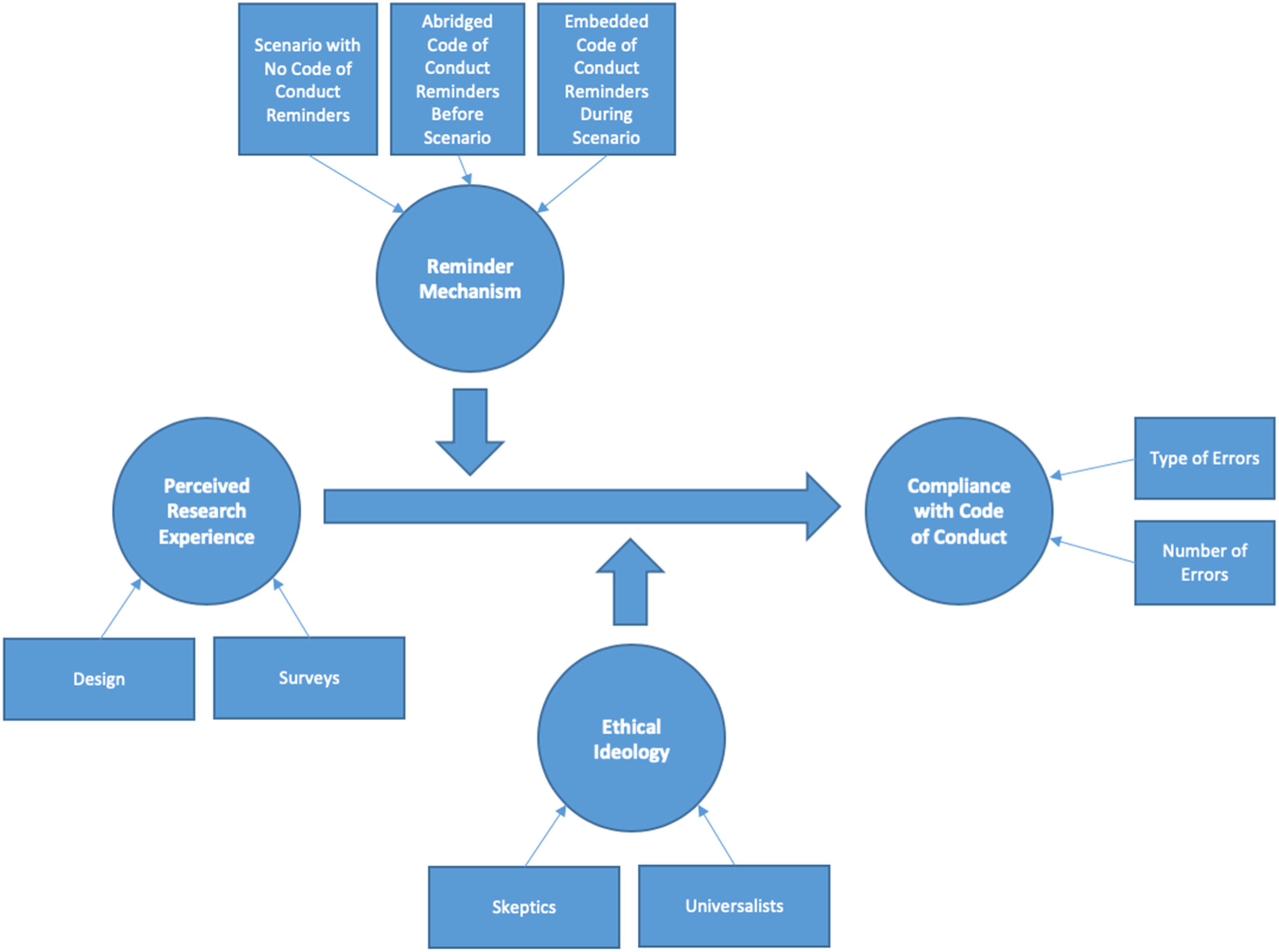

Empirical work testing various aspects of the H-V model demonstrates that professional marketers (Hunt & Vasquez-Parraga, 1993) and consumers (Vitell et al., 2001) rely primarily on deontological (principles and ethical norms) factors and only secondarily on teleological (consequences) factors in forming ethical judgments and in their intentions to act (Hunt & Vitell, 2006). The H-V model was developed specifically for ethical situations involving businesspeople and the professions, and as such takes into consideration the industrial, organizational, and professional environments as antecedents in the model. However, as a general theory of decision making that includes broader consideration of critical reflection and judgment, we apply the H-V model to SaQ marketing scenarios without the socialization and formal codes of conduct associated with the profession, using instead an abridged ethical code of conduct as the study treatment. The theoretical framework guiding this study (Figure 1) is motivated by the H-V model and was used to develop hypotheses on the impact of individual personal characteristics, defined in terms of the ethical ideologies and research design beliefs and norms, on the quality of the SaQ data produced under varying levels of reminders based upon an abridged ethical code of conduct.

Theoretical framework.

Self-Administered Questionnaires Response Quality Challenges and Solutions

The total survey quality approach defines survey quality broadly, recognizing that producers and users of survey data differ on the criteria they use to evaluate “fitness for use”: producers emphasize metrics of accuracy (i.e., large sample size, high response rates, and internally consistent responses), which need to be considered simultaneously with user-focused metrics of relevance, accessibility, interpretability, comparability, coherence, and completeness (e.g., questionnaire content that is highly relevant to their research needs) (Biemer, 2010). In a total survey quality context, a survey error is defined as a deviation of a survey response from its underlying true value (Biemer, 2010). That is, survey data quality is a complex multidimensional concept that necessitates simultaneously considering whether the survey data is both free from deficiencies and responsive to customers’ needs (Biemer, 2010). Thus, survey data could potentially be viewed by the producers as high quality, as it is free of deficiencies, while the end users view the same survey data as unfit for use (and thus of low quality) because it was released behind schedule and is now costly or difficult to access and interpret. In the context of this study, survey data quality is measured in terms of deviation from an established code of conduct to reflect the judgment and qualitative nature of the SaQ responses under consideration, in contrast to factual or revealed preference data where underlying true values can be more reasonably estimated.

Numerous approaches to improving response quality of SaQ have been proposed that address the issue at various points along the research process. These approaches either attempt to avoid the issue before the SaQ is administered, mitigate the impacts when the SaQ is administered, or attempt to deal with the data quality issues after the SaQ is completed. For example, at the instrument design phase, validity checks provide a tool that is easily available within web-based SaQ to verify that data meets specific requirements (e.g., respondent enters a number within range) or that participants did not inadvertently skip a response, by displaying an error message that informs the participant of the mistake or asks them to confirm their decision (Christian et al., 2007). The challenge with this approach is that error messages increase respondent frustration and termination (Best & Krueger, 2004), so error messages are best presented informatively so that they are not viewed as intrusive or accusatory. It is also challenging to implement such validity checks in a context where the responses are judgment calls or have an ethical dimension where no clear correct response range is specifiable. However, providing instructions is important, especially when there are multiple ways for respondents to provide the responses and the researcher desires one specific format or approach, with deviations from expectations reducing overall data quality (Christian et al., 2007). In this regard, providing visual cues such as location and orientation of graphical elements can influence how respondents interpret meaning (Christian et al., 2007). Of course, SaQ respondents are not always diligent in reading and following instructions, which in turn increases noise and decreases the validity of the data (Oppenheimer et al., 2009).
Attentive participants (a) aim to understand the question, (b) retrieve information from their memory, (c) form a judgment, (d) map the judgment to the response scale, and (e) edit their answer (Sudman et al., 1997). Weak satisficers follow all five stages less thoroughly, while strong satisficers skip multiple stages completely (Morren & Paas, 2020). This has led researchers to include instructional manipulation checks (IMC) to warn the participant about their attention levels and thus serve as an attention-enhancing tool (Oppenheimer et al., 2009; Paas & Morren, 2018). IMCs can be short single-line reminders, or they can be longer multiple-line texts (Morren & Paas, 2020). Morren and Paas (2020) found that strong satisficers are likely to speed and straight-line and fail both types of IMCs, while weak satisficers often speed and fail the long IMC only. Another approach is to use dynamic interactive survey questions that make SaQ more engaging, thus leading to improved data quality (Kostyk et al., 2019); however, the challenge here is ensuring that questions do not merely become more entertaining without increasing the validity of the data (Dolnicar et al., 2013). Finally, inattention indicators such as non-response, speeding, and straight-lining, while potentially representing different types of inattention, can also be used as the basis for data cleaning, as a means of identifying cases to be removed from the dataset or coded as missing data (Meade & Craig, 2012).
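To make the post-collection inattention indicators discussed above concrete, the following is a minimal sketch of how non-response, speeding, and straight-lining might be flagged for review. It is an illustration only and not the procedure used in this study or in the cited work: the `Response` record, the `flag_inattention` function, and both thresholds (`speed_ratio`, `max_missing`) are hypothetical choices made for the example.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Optional


@dataclass
class Response:
    """Hypothetical record for one completed questionnaire."""
    respondent_id: str
    seconds: float              # total completion time in seconds
    grid: List[Optional[int]]   # rating-scale answers; None marks a skipped item


def flag_inattention(
    responses: List[Response],
    speed_ratio: float = 0.5,   # assumed cutoff: faster than half the median time
    max_missing: float = 0.2,   # assumed cutoff: more than 20% of items skipped
) -> Dict[str, Dict[str, bool]]:
    """Return three inattention flags per respondent for later data cleaning."""
    cutoff = speed_ratio * median(r.seconds for r in responses)
    flags: Dict[str, Dict[str, bool]] = {}
    for r in responses:
        answered = [a for a in r.grid if a is not None]
        # Treat an empty grid as fully missing to avoid dividing by zero.
        missing_share = 1 - len(answered) / len(r.grid) if r.grid else 1.0
        flags[r.respondent_id] = {
            "speeding": r.seconds < cutoff,
            # Straight-lining: every answered item carries the same value.
            "straight_lining": len(answered) > 1 and len(set(answered)) == 1,
            "non_response": missing_share > max_missing,
        }
    return flags


if __name__ == "__main__":
    sample = [
        Response("a", 600, [4, 2, 5, 3, 1]),
        Response("b", 620, [3, 3, 3, 3, 3]),
        Response("c", 100, [1, 2, 2, 4, 5]),
        Response("d", 580, [2, None, None, 4, 5]),
    ]
    for rid, result in flag_inattention(sample).items():
        print(rid, result)
```

Flags of this kind only mark cases for inspection. Whether a flagged case is removed or coded as missing remains a judgment for the researcher, and such indicators do not capture the quality of judgment-based or open-ended responses that are the focus of this paper.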

The challenge with these approaches to improving SaQ response quality, such as validity checks, interactive design, dynamic interactive surveys, and instructional manipulation checks, is that they have primarily been investigated in situations where expected responses are of a factual nature or stated preferences. Such situations do not necessarily require the broader engagement, in terms of critical reflection and judgment involving ethical aspects of decision making, that informs responses and in turn impacts data quality. That is, much of the attention for SaQ has focused on empirical questions, while less attention has been directed toward cognitively demanding questions (Bell & Bishai, 2021) or those with a strong ethical dimension that are guided by general moral positioning (Mauldin & DeCarlo, 2020).

Using Codes of Conduct to Improve Self-Administered Questionnaires Response Quality

In this section, enhanced IMCs in the form of codes of conduct that arise in the context of ethical decision making are presented as a novel approach to improving the data quality of SaQ responses, considering some of the challenges identified in the previous sections.

Schwartz’s (2016) review of the ethical decision-making literature highlights that awareness of an ethical issue requires supporting mechanisms including the firm’s ethical infrastructure, such as codes of conduct, training, meetings, or other disseminated ethical policy communications (Tenbrunsel et al., 2003). That is, simply creating the code of conduct, especially if done in isolation, without some other mechanisms (e.g., SaQ reminders) to help facilitate the use of the code of conduct in practice, is unlikely to improve awareness or increase compliance with the code of conduct.

Professional codes of conduct provide a framework to guide decision making in a systematic and ethical manner. Within the marketing discipline there is a normative tradition of developing guidelines or rules to assist marketers in making ethical decisions and behaving in an ethical manner (Hunt & Vitell, 1986). For example, the American Marketing Association provides a code of conduct as a framework for self-regulation, organizing and evaluating alternative courses of action, setting out ethical rules for conduct, enhancing the public’s confidence, and minimizing the need for governmental and/or intergovernmental legislation or regulation among professional marketers (Aggarwal et al., 2012; Kaptein & Schwartz, 2008).

Schwarz (2014) argues that in SaQ, the survey instrument represents the researcher’s half of the conversation and respondents naturally assume the material provided is relevant to this conversation. Thus, while codes of conduct and other tools are available to guide the professional marketer’s side of the conversation, there is no equivalent guidance available to respondents. This situation is potentially exacerbated in the SaQ context where the researcher is no longer co-present with the respondent and thus unable to answer questions and provide additional guidance, making it easier for respondents to violate ethical principles that professionals, by drawing upon their expertise and following their codes of conduct, would be more likely to help participants avoid. This increases the likelihood of poor-quality data originating from differences in SaQ respondents’ understanding of the role of confidentiality of data, data repurposing, respondent anonymity, interviewer dishonesty, deception, treatment of minors, professionalism, and informed consent. That is, survey data quality in this context is dependent upon respondents providing answers that are consistent with the code of conduct; otherwise the response is invalid. For SaQ respondents there is no expectation of any professional training, no oversight of a professional organization, no code of conduct, and no established organization with any level of sophistication around ethical practices, guidelines, or ethical norms that could be embedded in an organizational culture (Dahlström & Edelman, 2013). That is, many factors investigated for professional marketers guided by foundational theories of ethical decision making are simply absent or are likely to manifest differently in the context of SaQ respondents.

While it is unclear how codes of conduct can be incorporated for SaQ respondents, we offer a number of potential strategies to improve critical reflection and awareness of ethical issues including (a) training on a code of conduct, (b) educational materials

In evaluating the practicality of each of these options, training is possible, although such a time commitment is likely not feasible, especially given the challenges for even professionals to stay current on these often-lengthy codes of conduct (Dean, 1992). An abridged version of the professional codes of conduct, in the form of a SaQ code of conduct, is one approach to addressing this concern. An abridged code of conduct would not cover the same breadth of situations that current professional codes address but potentially would provide an improvement over the no code of conduct situation that currently exists.

Providing educational materials

Finally, an expert system that allows amateurs to perform like professionals by guiding the user through a process (e.g., SaQ) in a structured manner is an ideal that is not currently available for implementation. Expert systems in marketing research have been envisioned for decades (cf. Mentzer & Gandhi, 1992; Rangaswamy et al., 1989); however, the practical implementation of these systems remains elusive (Orzan et al., 2011), especially for research and ethical decision-making processes that are inherently complex (Markus et al., 2002).

However, a key challenge for the code of conduct, independent of how it is implemented for SaQ respondents, is awareness of the code of conduct as a precursor to following the code (Tenbrunsel et al., 2003), which in turn is influenced by individual ethical positions and contextual factors of the respondent (Giacalone et al., 1995).

Ethical Ideologies

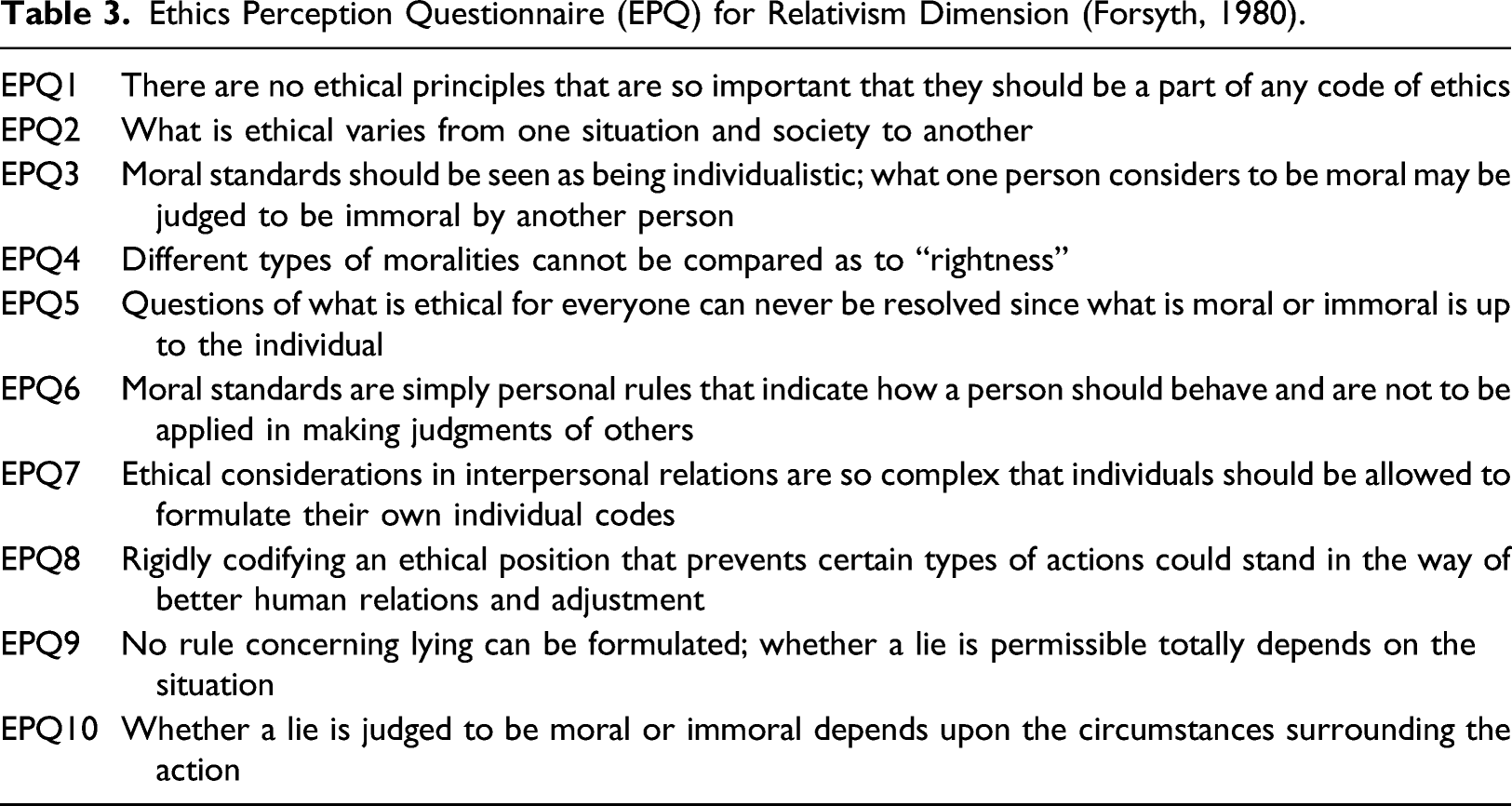

Ethical ideologies are considered in terms of the extent of the influence of relativism in the ethical decision-making process. For highly relativistic individuals, moral judgments are configurable in that they base their appraisals on aspects of a specific situation and action that they are evaluating, whereas individuals low in relativism rely more heavily on moral principles, norms, or laws to determine what is right and what is wrong (Forsyth, 1980). The taxonomy of ethical ideologies originally proposed by Forsyth (1980) was built around the intersection of two dimensions, namely idealism and relativism, to produce a 2 × 2 matrix of whether individuals purport idealistic or non-idealistic values and believe in universal or relative moral rules, producing four ideal types that recognize that individuals vary on each of these dimensions. However, given the emphasis placed on deontological evaluation by decision makers noted above, in our study we focus on the relativism dimension for the purposes of analysis. Collectively, those with high relativism scores are referred to as skeptics, and those with low relativism scores as universalists.

Navigating the theoretical framework guiding our study (Figure 1) from left to right, we begin with the Perceived Research Experience of the participant as our independent variable. This is guided by the H-V model component that individuates the environment based upon codes of conduct and enforcement of those codes along with various informal professional and organizational norms. In our study, this is a key contribution since it extends the model to the consideration of SaQ respondents who are unlikely to have informal professional norms or strong organizational norms to draw upon. SaQ respondents are also unlikely to have access to any formal codes of conduct, either professionally or through their organizational or industrial environment. While the ability of SaQ to facilitate relatively quick and inexpensive data collection from large, diverse, and representative samples underpins their value proposition, it also provides the foundation for SaQ respondents to provide poor-quality data that deviates from established codes of conduct. Thus, when respondents are faced with questions that require judgment or ethical considerations, their prior experience with SaQ, which was most likely of a factual nature, is unlikely to be relevant in this new situation that is more cognitively demanding and has ethical dimensions. SaQ respondents are not supervised, so there is no oversight by professional marketers as was the case with in-person survey administration; hence the need for additional guidance in the form of code of conduct reminders. That is, even when SaQ respondents purport experience with SaQ, this experience provides limited treatment of code of conduct principles and thus does not improve the quality of SaQ responses when they are faced with questions with an ethical component. This leads to our first hypothesis.

High self-evaluations of SaQ experience by respondents will not improve SaQ response quality for cognitively demanding or ethical questions.

The second component of the model in our study is the Reminder Mechanisms, which is the main treatment manipulated within this experimental design using an online SaQ. The use of reminders is well established within marketing research for improving response rates (Evans & Mathur, 2018) and is reflected indirectly within the use of instructional manipulation checks (Morren & Paas, 2020; Oppenheimer et al., 2009). In this study, such reminders are investigated as a possible moderator for compliance with an ethical code of conduct and represent the explicit recognition of the Perceived Ethical Problem component of the H-V model (Hunt & Vitell, 2006). Another contribution of our study is that we investigate the efficacy of a specific mechanism that improves the likelihood that the ethical aspects of a problem will be identified. This is a key step in the H-V process model, without which the remaining processes are never enacted (Hunt & Vitell, 2006). Code of conduct reminders in this context serve as occasions for critical reflection on the situation, so that respondents are aware of both the immediate concerns and the broader implications (Guynn et al., 1998; Ruedy & Schweitzer, 2010). This leads to our second hypothesis.

Respondents reading an abridged code of conduct reminder immediately before completing a SaQ will have higher quality SaQ responses (i.e., produce fewer code infractions) when compared to those receiving no code of conduct reminders.

Potential issues with an abridged code of conduct include recall of the content of the code and knowing which guiding principle applies in a particular situation without being overloaded to the point that the code is ignored (Dean, 1992; Kaptein & Schwartz, 2008). We hypothesize that there will be a further reduction in code of conduct infractions if specific guidelines from the abridged code of conduct are instead embedded within the scenario at the point of responding to the question (Ananny, 2016; Guynn et al., 1998) as this would reduce the issues of recall and knowing which principles to apply. This leads to our third hypothesis.

Respondents reading an embedded code of conduct reminder at key relevant points while completing a SaQ will have higher quality SaQ responses (i.e., produce fewer code infractions) compared to those reviewing an abridged code of conduct immediately before completing a SaQ.

The third component of the model in our study is the ethical ideology of the SaQ respondent. As noted, this is focused on the role of relativism in the ethical decision-making process and maps to Hunt and Vitell’s (2006) deontological (rules) and teleological (consequences) evaluations that lead to specific judgments. This is investigated as a possible moderator for compliance with an ethical code of conduct. Skeptics view specific ethical principles as being unable to specify moral appraisals exactly and instead rely on their evaluation of the merits of the situation or are guided by personal values and perspectives on the specific context in guiding their decisions (Forsyth & Pope, 1984). In contrast, universalists are guided by a set of universal moral rules or by attaining the greatest good in their ethical decision making (Forsyth, 1992). Thus, in the absence of any specific reminders to draw upon general or specific ethical principles, the skeptics will look to the specifics of the situation and the universalists will apply the rules that they inherently use or are provided with when faced with scenarios that potentially have ethical content. There is no expectation that skeptics and universalists in the control group will be any more or less ethical than the other. This leads to our fourth hypothesis.

In the absence of code of conduct reminders (i.e., control group), skeptics and universalists will have equal quality (i.e., produce the same number of code infractions) of SaQ responses.

However, in the presence of specific reminders of guiding ethical principles, we anticipate that the universalists will be more inclined to review the code of conduct and to draw upon those rules within the scenario, while the skeptics will be less influenced by such reminders, and that this will be even more effective for the universalists when the reminders of specific ethical guidelines coincide with related ethical decisions. This leads to the following set of hypotheses.

In the presence of pre-read code of conduct reminders, skeptics will provide lower quality SaQ responses (i.e., produce more code infractions) on average than universalists completing a SaQ.

In the presence of embedded code of conduct reminders, skeptics will provide lower quality SaQ responses (i.e., produce more code infractions) on average than universalists completing a SaQ.

Finally, the dependent variable, Compliance with Code of Conduct, maps to the ethical behavior component of the Hunt and Vitell (2006) model (Figure 1).

Study Design

In this section, we present an experimental study of the quality of the data provided by respondents completing an online SaQ, measured by their compliance with marketing code of conduct principles under three conditions: no code of conduct reminders, an abridged code of conduct provided beforehand, and embedded, contextualized code of conduct reminders provided during a marketing research design scenario.

Respondent Profile

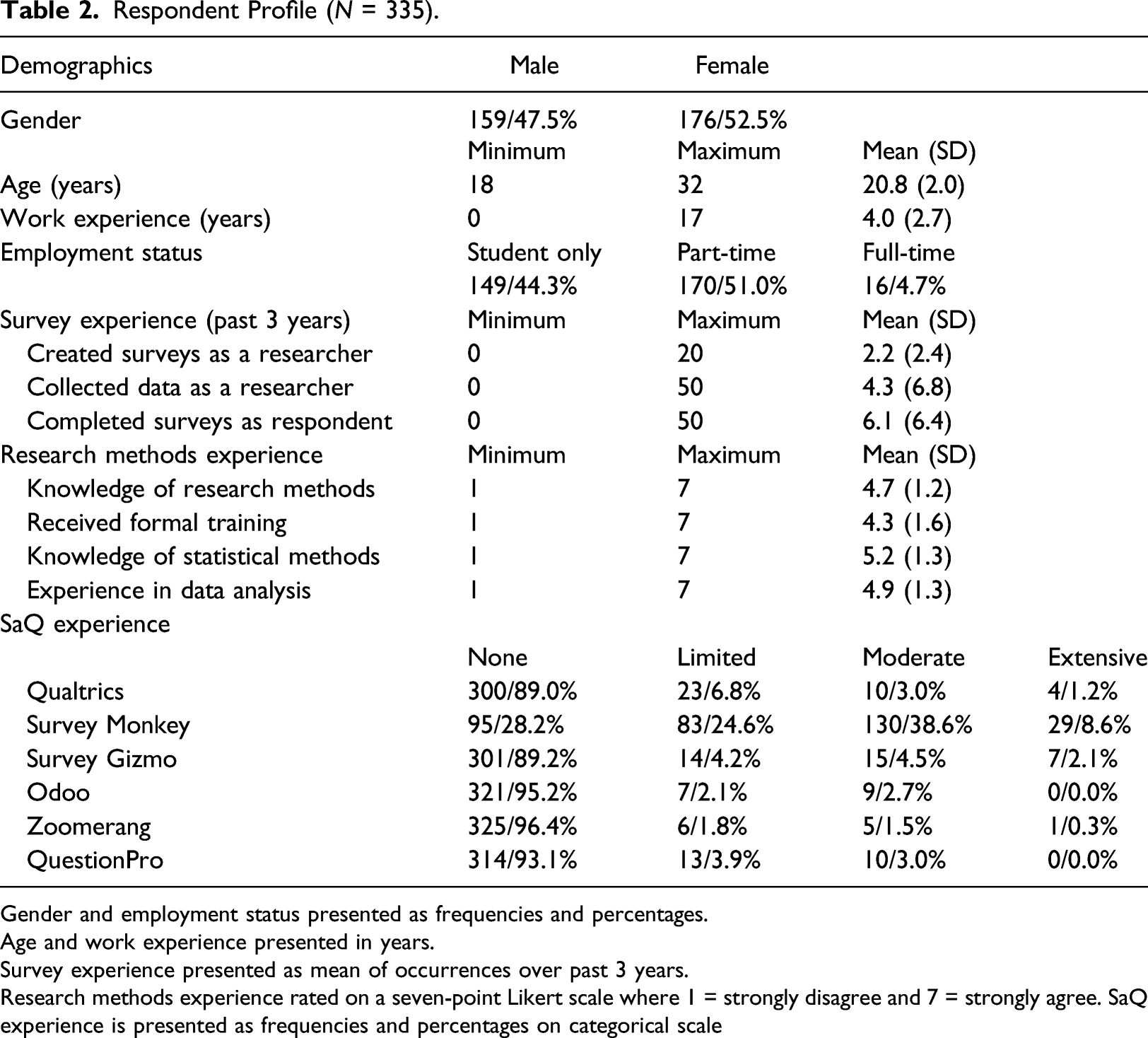

The sample frame for this study consisted of second- and third-year undergraduate students registered in an introductory marketing course at a mid-sized western university. At this stage of their undergraduate program, the participants would have completed mandatory courses in business skills, statistical principles, and business ethics. Participation was voluntary; however, all subjects completing the study received a 1% bonus toward their final grade in the course. The one-hour study was administered after hours in the faculty’s behavioral lab. Of the 356 students attending the study, 21 subjects failed to complete the required tasks, resulting in 335 valid responses.

Respondent Profile.

Gender and employment status presented as frequencies and percentages.

Age and work experience presented in years.

Survey experience presented as mean of occurrences over past 3 years.

Research methods experience rated on a seven-point Likert scale where 1 = strongly disagree and 7 = strongly agree.

SaQ experience presented as frequencies and percentages on a categorical scale.

The respondents considered themselves to be reasonably familiar with research methods, including the use of online survey tools. Fifty-seven percent of the participants indicated that they had been exposed to some form of training in research methods, and over 70% believed they were familiar with statistical principles for data analysis and reporting. Over the past 3 years, 86% of the participants had been involved in the development and administration of at least one major research project for work, school, or extra-curricular activities. In their undergraduate program, each participant had, on average, created 2.2 surveys, collected data on 4.3 research projects, and completed 6.1 surveys as a respondent (Table 2).

The most used online survey tool was Survey Monkey, with 72% of the respondents reporting at least some experience and 47% reporting moderate to extensive experience. Only 11% of the participants had any experience with Qualtrics (the university’s preferred supplier), 11% had at least some experience with Survey Gizmo, and 7% reported using Question Pro. Sixteen respondents (5%) reported using Odoo and 12 respondents (4%) indicated they had used Zoomerang. When asked to list other survey tools they were familiar with, seven respondents listed Google Survey, five respondents listed Doodle Poll, and three respondents reported using Facebook Survey (Table 2).

Survey Development

A high-involvement survey task (Appendix 1) was designed to evaluate the influence of codes of conduct on the reliability and validity of SaQ responses. A scenario described a medium-sized software enterprise marketing smartphone apps to early adolescents (ages 10–14) and middle adolescents (ages 15–17). Because the fictitious company’s products were not meeting expectations, the scenario suggested the need for market research to assess customer satisfaction and demand. Participants were required to read the scenario and answer a series of open-ended questions about designing a survey, collecting data, and analyzing the results. The questions covered each stage of a research project, including problem definition, data collection methods, sample characteristics and sample size, analysis and reporting of data, and demographics. The participants were also asked to provide instructions for survey administrators and respondents, and recommendations for data management and security. The scenario was designed to require levels of engagement and judgment beyond simple recall or stated preferences.

To develop a set of codes of conduct applicable to the task, a content analysis was conducted using professional codes of conduct published by five leading marketing research associations. The content analysis examined ten general rules of professional conduct and 33 rules of professional conduct in marketing research published by the Marketing Research Society (London, UK), 63 ethical requirements for members of the Marketing Research Association (Washington, DC) and ten guiding principles governing members of the Council of American Survey Research Organizations (Port Jefferson, NY). Included in the analysis were 39 rules of good conduct and practice mandated by the Marketing Research and Intelligence Association (Toronto, Canada) and 23 articles governing members of the European Society for Opinion and Market Research (Amsterdam, Netherlands).

Compliance with the industry codes of conduct for this SaQ task was compared among respondents in each of three treatment conditions. Participants in the control group received no exposure to, or review of, codes of conduct that would guide the design and execution of a research program. A second group was presented with the ten core principles at the beginning of the study. The participants in this group were required to review the ten statements as part of the introduction to the study but were not able to return to this page once they started answering the questions. A third group was provided with code of conduct guidelines throughout the study. As the participants in this group advanced through the questions, an on-screen window presented principles appropriate to the required task. As marketing professionals are unable to provide guidance directly in a SaQ context, the approach used in this study offered varying levels of professional marketing guidance indirectly through specific code of conduct reminders that were easily consumable by SaQ participants.
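The logic of the three treatment conditions can be illustrated with a short sketch. This is not the instrument used in the study; it is a minimal illustration that assumes hypothetical stage names, placeholder principle labels (`Principle 1` through `Principle 10`), and a hypothetical mapping from survey stage to relevant principles. It also assumes that the embedded group saw no reminders on the introduction page, which the study description does not specify.

```python
from enum import Enum


class Condition(Enum):
    CONTROL = "control"    # no exposure to the code of conduct
    PRE_READ = "pre_read"  # ten core principles shown once, before the questions
    EMBEDDED = "embedded"  # task-relevant principles shown alongside each question


# Placeholder labels only: the wording of the ten principles is not reproduced here.
ALL_PRINCIPLES = [f"Principle {i}" for i in range(1, 11)]

INTRO = "intro"

# Hypothetical mapping from survey stage to the principle numbers relevant to it.
STAGE_PRINCIPLES = {
    "problem_definition": [1, 2],
    "data_collection": [3, 4, 5],
    "sampling": [6],
    "analysis_reporting": [7, 8],
    "data_management": [9, 10],
}


def reminders_for(condition, stage):
    """Return the principle reminders to display for a condition at a given stage."""
    if condition is Condition.CONTROL:
        return []
    if condition is Condition.PRE_READ:
        # Shown only on the introduction page; participants cannot return to it.
        return list(ALL_PRINCIPLES) if stage == INTRO else []
    # EMBEDDED: only the principles mapped to the current stage.
    # Assumption: nothing is shown on the introduction page for this group.
    return [ALL_PRINCIPLES[i - 1] for i in STAGE_PRINCIPLES.get(stage, [])]


if __name__ == "__main__":
    for cond in Condition:
        print(cond.value, reminders_for(cond, "data_collection"))
```

Under these assumptions, a participant in the pre-read condition sees all ten principles once and none afterward, while a participant in the embedded condition sees only the subset mapped to the current task.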

Ethics Position Questionnaire (EPQ) for Relativism Dimension (Forsyth, 1980).

Results

Code of Conduct Compliance

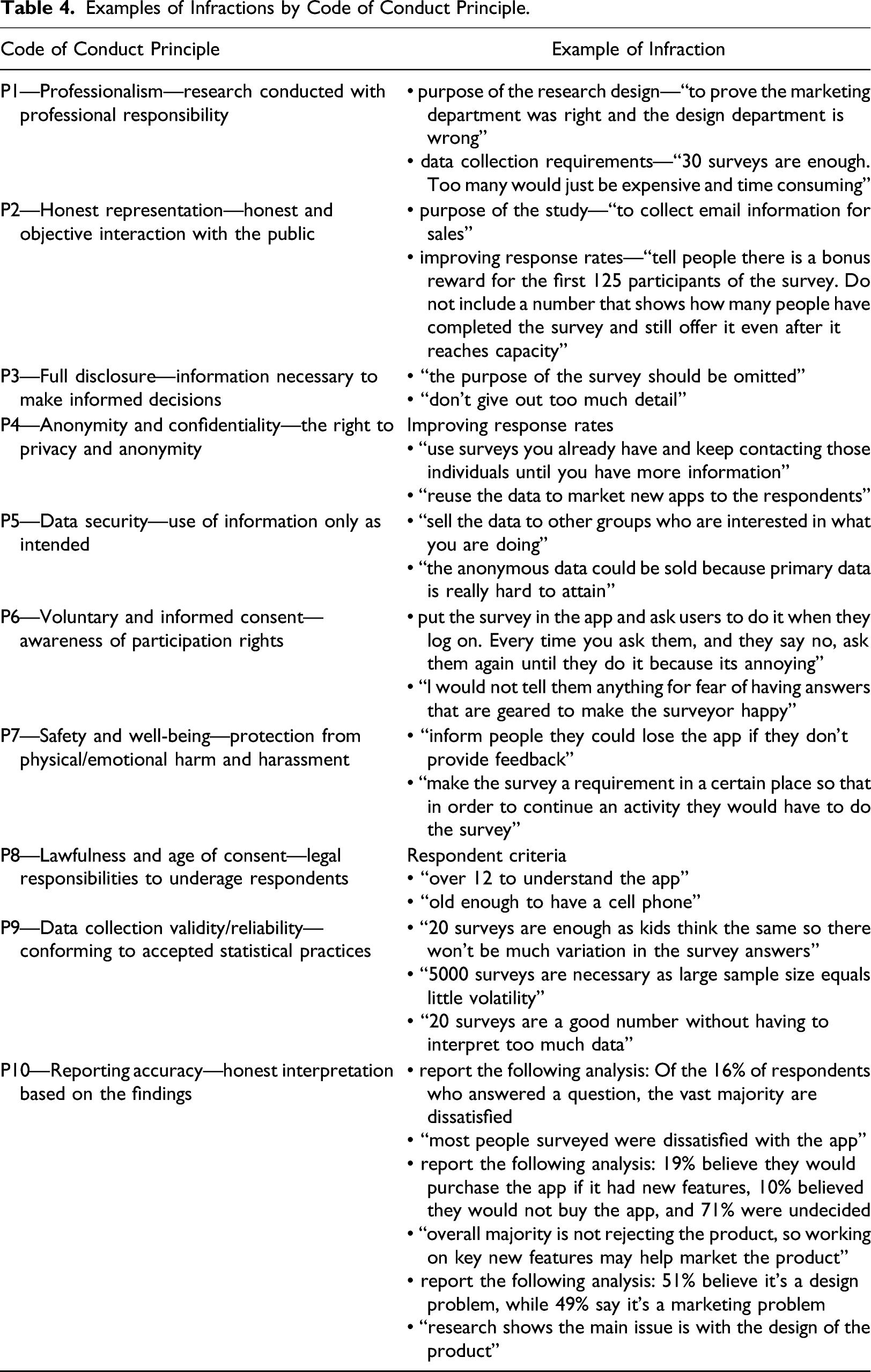

Examples of Infractions by Code of Conduct Principle.

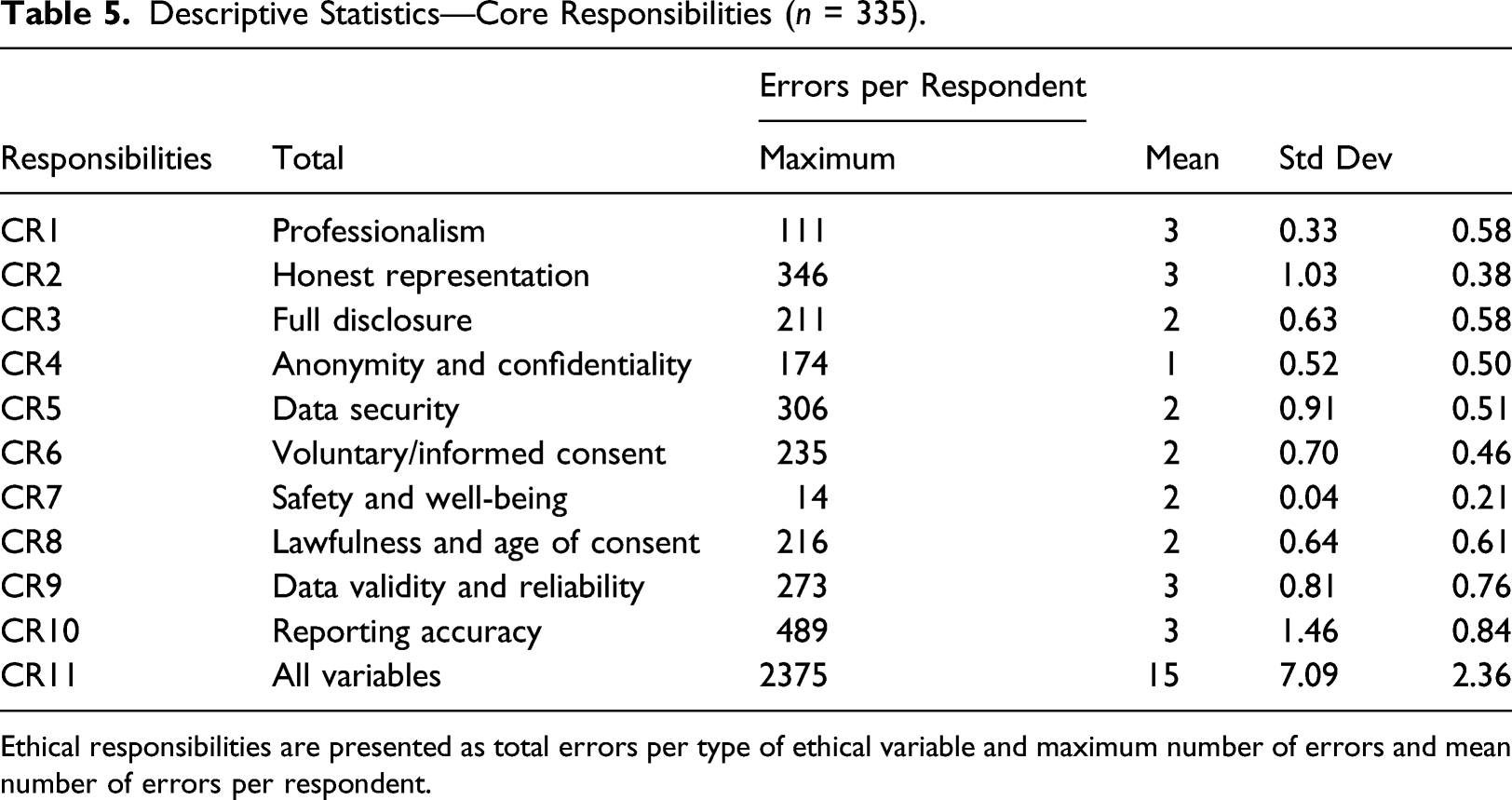

Descriptive Statistics: Core Responsibilities.

Ethical responsibilities are presented as total errors per type of ethical variable and maximum number of errors and mean number of errors per respondent.

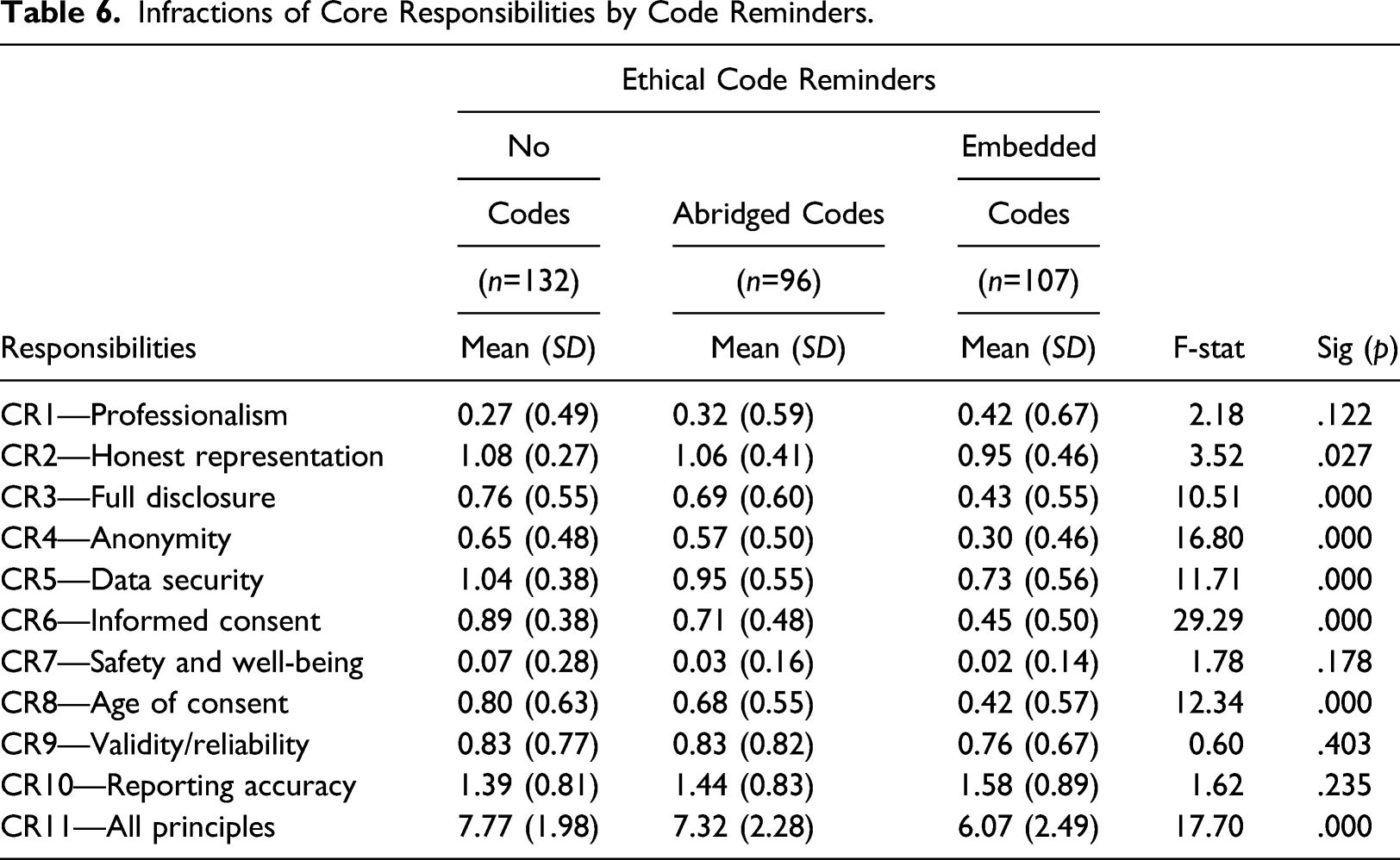

Infractions of Core Responsibilities by Code Reminders.

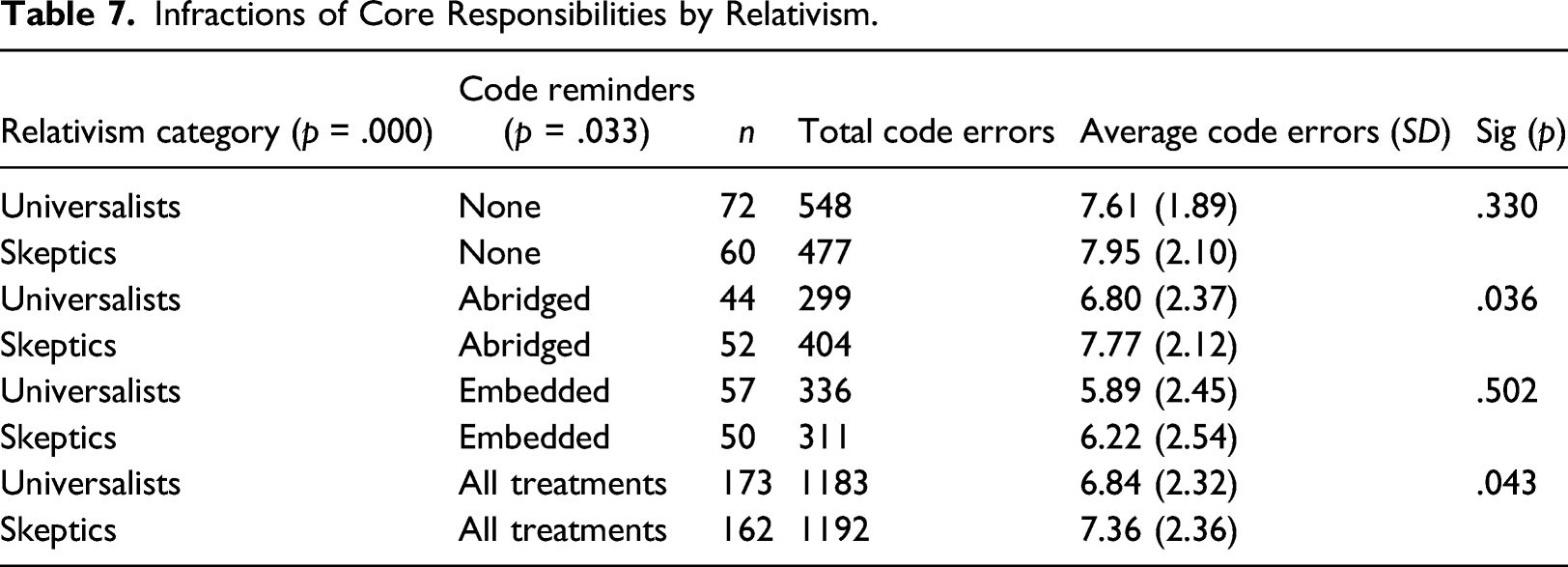

Infractions of Core Responsibilities by Relativism.

As a robustness check, univariate ANOVA was used to estimate the relationship between the respondents’ research experience (surveying techniques and research methods) and their propensity to violate codes of ethical conduct. Reminder conditions and relativism classifications were modeled as moderating variables. As expected, there was no significant relationship between the respondents’ surveying (

Discussion

This study demonstrated that SaQ respondents are likely to make a wide range of code of conduct infractions, and certain groups are more likely to deviate substantially from the tenets of a code of conduct. However, the findings are not all dire, in that SaQ are also able to enhance code of conduct compliance using specific reminders before and during the completion of the SaQ. In addition, these reminders were shown to be more effective when embedded within the SaQ process to provide the participant with

The marketing research scenario was employed to increase the likelihood that SaQ participants consider each of the code of conduct principles presented in Table 1. While not every SaQ scenario will require participants to consider all ten principles, there is an implicit assumption that every SaQ respondent, by agreeing to participate in a study, has an ethical obligation to respect the researcher and client; to act in a professional manner by providing honest, truthful, and timely answers; to respect confidentiality agreements; and to participate within the boundaries of their rights and obligations. The participants in this study were evaluated based upon compliance with standard code of conduct principles, which in turn should generalize to similar situations where such considerations are of concern within the SaQ. Such situations are already prevalent in academic research that requires participants to acknowledge an informed consent guided by these principles, and are also reflected in panel participant agreements that incorporate similar principles as part of the conditions under which a SaQ is completed.

Hypothesis 1 highlighted that high self-evaluations of SaQ experience do not guarantee that participants will make fewer code of conduct infractions. Thus, broad-based experience with SaQ is insufficient to protect SaQ response quality against the ill effects of code of conduct infractions, and additional interventions are needed. Hypotheses 2 and 3 collectively point to the efficacy of using embedded reminders of code of conduct principles over and above the typical “splash pages” used for ethics consents in SaQ or having participants agree to a participation agreement. These splash pages do provide an improvement over no reminders at all, but the embedded reminders are more effective, albeit also more challenging to design into SaQ. The relevance of the content to participants and the timeliness of the reminder, delivered in a context-sensitive manner, countered satisficing and inattentiveness among participants. Finally, Hypotheses 5 and 6 highlight that researchers also need to consider the ethical ideologies of participants when designing SaQ, since participants will respond differently to code of conduct reminders, with skeptics potentially being less likely to adhere to the code of conduct principles even when reminded that this is of concern.

Theoretical Implications

From a theoretical perspective, this work contributes to our understanding of the moderating role of different types of reminders at key points in time during the SaQ completion process, and the subsequent impacts on improving data quality through increased compliance with code of conduct principles in SaQ. The findings highlight the moderating role of ethical ideologies, and specifically relativism, in further impacting compliance with ethical codes of conduct. The application of the H-V model to a new context in the form of SaQ respondents represents an additional contribution of this work. This suggests the need for additional contexts to be considered for the H-V model that extend beyond professional marketers to include amateurs such as entrepreneurs, not-for-profits, or other resource-constrained organizations that do not share the same factors as those traditionally viewed through the H-V model but share similar processes. The consideration of the ethical content is another contribution of this work, especially as it relates to interjecting points of critical reflection into the SaQ process for respondents who would not be trained or socialized to do so without such reminders. The H-V model assumes that the awareness of the ethical content happens without specifically articulating the mechanisms by which that recognition occurs. In this study we point to the role of reminders, in the context of an abridged code of conduct, as one way this awareness can occur in practice, and demonstrate the efficacy of this approach for SaQ respondents. These reminders are presented in a way that is not accusatory or intrusive, to minimize the participant frustration and attrition associated with IMC warnings.

Managerial Implications

The findings from this study can be used to guide the development of SaQ that assist marketers by embedding context-aware code of conduct reminders that provide clear direction for the respondent using these tools. In that context, the findings provide a useful set of mechanisms through which SaQ can be enhanced to provide reminders at critical points in data collection instruments. Such situations are already prevalent in academic research that requires participants to acknowledge an informed consent guided by these principles, and are also reflected in panel participant agreements that incorporate similar principles as part of SaQ completion. Thus, professional marketers can include reminders of the principles or contract terms that are relevant for specific self-administered questions as a means of ensuring compliance and improving the overall data quality of responses, because responses that deviate substantially from the code of conduct are of low quality. Finally, ethical ideologies represent a potential screening criterion for SaQ participation, or a means of gaining additional insight when interpreting SaQ data where participant inattentiveness is of concern.

Limitations and Future Research

A number of limitations of this study were identified. First, in implementing ethical ideologies (Forsyth, 1980) we focused on the relativism dimension to the exclusion of the idealist dimension. While this is consistent with prior work, further consideration of the idealist dimension is likely to reveal additional insights. Second, the use of the H-V model (Hunt & Vitell, 2006) served to foreground certain aspects of decision making while backgrounding other aspects to which another theoretical lens might direct our attention. However, we feel that the revised H-V model addresses many of the critiques (Jones, 1991) and provided useful guidance in the context of our study. Finally, although the quasi-experimental design used in this study has the benefit of higher internal validity for drawing conclusions about causal relationships (Cooper & Schindler, 2003), the potential drawback is often external validity in connecting the laboratory results to a real-world context. This is potentially exacerbated by the use of a student sample within our experimental design; however, the scenarios presented were based upon real research scenarios and the ethical code of conduct was an amalgam of actual professional codes. Future research could consider the trade-offs that entrepreneurs and professional marketers make.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Business Case Scenario.

Please read the following business case scenario before proceeding with the remainder of this study.

A copy of this scenario is available upon request from the Research Assistant working in this lab.

The BCS Group includes a medium-sized software enterprise. This division currently markets a smartphone application (App) targeted at early adolescents (10–14 years) and an enhanced version targeted at middle adolescents (15–17 years). The App was launched with great fanfare, but after 1 year on the market, both versions have failed to meet sales expectations. While the development team believes the App is not positioned correctly and is too expensive relative to the competition, the sales and marketing group believe the App is lacking in required features and underperforms relative to similar products.

The marketing team has hired your company to collect data to prove that they are right—the App does not perform as well as similar products offered by competitors and the App is lacking features that are demanded by the target market. Your task is to develop a survey to assess user satisfaction with the current products and determine the demand for additional features.

Although this is a small project there is the opportunity for significant repeat business with the BCS Group. BCS is in the market for a new research provider and a strong performance on this project could provide your team with Preferred Vendor Status for future projects.

Please answer the following questions about the design and implementation of your research project.