Abstract

Individual learning strategies evoke (meta-)cognitive processes that enable effective goal-directed learning. Peer-directed academic help-seeking may provide new information, but the related interaction processes are challenging. Applying learning strategies during help-seeking may enhance academic success. Competence in using social resources requires conditional knowledge about which strategy best fits a pursued goal. In this paper, we present a Situational Judgment Instrument to assess this competence, together with empirical data on the instrument’s subscales. A first study with 38 undergraduates showed that organization and rehearsal were the easiest to identify correctly. Elaboration, evaluation, and argumentation, on the other hand, were more difficult to distinguish. In a second study with 120 first-semester students, the hypothesized moderating effect of the competence on the link between help-seeking and academic success was not found. However, the degree of competence was positively associated with students’ satisfaction, but not with academic achievement. Implications for research and practice are discussed.

Keywords

Introduction

Compared to learning at school, learning at university demands more self-responsibility from students for their learning process. University students learn with less guidance from lecturers, are confronted with various, more complex learning subjects, and receive less feedback from institutional employees. Having less strict time constraints makes considerate management of the time spent on academic responsibilities relevant for academic success (Thibodeaux et al., 2017). Thus, students’ ability to regulate their own learning process in order to pursue academic goals is fundamental to successful learning (Zimmerman and Martinez-Pons, 1986). They can do so by utilizing learning strategies that induce (meta-)cognitive processes which are beneficial for learning (Weinstein and Mayer, 1986; Zimmerman and Martinez-Pons, 1986). A special requirement for students is to foster their learning process when facing knowledge-related problems. In these cases, academic help-seeking, which describes asking peers for information or explanations, is an effective reaction that can foster learning (Nelson-Le Gall, 1981; Richardson et al., 2012). Moreover, the tendency to seek help correlates with the use of individual (meta-)cognitive learning strategies (Karabenick and Knapp, 1991). Both of these self-regulated behaviors can be integrated by applying learning strategies in social settings of academic help-seeking. The learning strategies may structure the help-seeking interaction between agents (see scripted collaboration in King, 2007). Thus, we expect that learning strategies performed socially with peers may result in more effective help-seeking episodes. Pursuing one’s own academic goals by initiating structured help-seeking episodes can be considered a valuable competence for university students.
In this article, a situational judgment instrument that measures students’ ability to choose adequate social learning strategies is presented and empirically tested. Study 1 provides empirical data on the instrument’s subscales. Study 2 empirically tests the assumption that the competence of using social resources strengthens the association between help-seeking behavior and academic success measures. The instrument itself and the empirical insights are valuable for practitioners and researchers in the field of self-regulated learning. In the following section, we introduce learning strategies and their potential for academic help-seeking episodes.

Learning strategies as useful tools for learning

Knowledge acquisition/encoding involves the processing of materials within short-term memory and their transformation into traces in long-term memory (Craik and Lockhart, 1972). Weinstein and Mayer (1986) conceptualize learning strategies as a learner’s behavior during learning which affects the encoding of information (see encoding process; Cook and Mayer, 1983). The hypothesized encoding process was amended by the selecting-organizing-integrating model of knowledge construction (Mayer, 1996) and is described in the following. The first stage, selecting, describes the necessity to focus attention in order to make sense of a text passage. In more detail, perceived information held in sensory memory is transferred to short-term memory (later developed into working memory; Mayer, 2021). Selecting processes are involved, for example, when taking notes of the most relevant information. The second stage comprises organizing information by establishing internal connections within working memory among the selected information. For example, organizing is necessary when describing a process. In the third stage, integrating, this organized information is integrated by establishing connections to organized knowledge from long-term memory. Integrative processes can be fostered by identifying analogous concepts or providing explanations to oneself or peers. Learners can evoke these three encoding processes by actively implementing learning strategies (compare generative learning activities in Brod, 2021). From this perspective, learning goes beyond acquiring new information: it involves the construction of organized mental representations and their integration with existing prior knowledge (Fiorella and Mayer, 2015).

Knowing when and why to apply learning strategies

Knowledge about learning processes alone is not sufficient for students to make strategic use of learning methods. Self-regulated behavior requires, on the one hand, metacognitive awareness of the resources necessary for tasks and, on the other hand, knowledge about the regulation of the learning process (Winne and Perry, 2000). Metacognitive awareness describes knowledge about one’s own cognition and affects the regulation of one’s own learning processes (Schraw and Moshman, 1995). Regulation involves (amongst other phases) the planning, monitoring, and evaluating of the learning process and its products. Awareness and regulation together enable learners to use their knowledge by strategically adapting their learning behavior to various difficulties in diverse learning situations. Different types of knowledge together form a skill, which is a prerequisite for producing a behavior independently (Paris et al., 1983). The skill is understood as a compound of three types of knowledge: (1) declarative (i.e., knowing that), (2) procedural (i.e., knowing how), and (3) conditional knowledge (i.e., knowing when and why) (compare domain-knowledge; Alexander and Judy, 1988). Declarative and procedural knowledge are necessary to produce behavior on prompt, but conditional knowledge is required to make independent strategic use of behavior. Thus, simply knowing that a method exists and how one proceeds with it is not sufficient. Learners are required to know in what situations (when) and for what reason (why) a strategy is most useful (e.g., before writing an essay about similar theories (when), structuring these topics beforehand with the goal to gain an overview and derive categories for comparison (why)).
In line with this argument, students have difficulty identifying the more adequate of two empirically tested learning strategies when provided with scenarios, but supplying them with metacognitive knowledge about learning processes (e.g., instruction or in-depth articles) improves their choices (McCabe, 2011). Moreover, interventions that let students plan their use of strategies, work through information material, and get lecturers’ feedback can improve academic achievement (Biwer et al., 2022). In conclusion, regulating one’s own learning process, for example by applying (meta-)cognitive learning strategies, requires the skill to produce a strategy compatible with the goal and the learning situation (knowing what to do, how to do it, when to do it, and why to do it).

Advantages by implementing learning strategies with peers

In help-seeking episodes, known procedures of learning strategies may structure students’ interaction and make it more successful. In the following, we introduce relevant learning strategies and point out the potential benefit of applying them with a peer.

Organization strategies involve the identification of important concepts (highlighting), their structuring (clustering), and their structured interrelation (linking; Weinstein and Mayer, 1986). This type of strategy does not solely induce organizing processes: mapping activities involve selecting processes when central concepts are identified (e.g., creating nodes from text), and constructing relationships among them (e.g., spatial arrangement among concepts, and even labeling relationships) then induces organization processes (see Chapter 3 in Fiorella and Mayer, 2015). Moreover, integrating processes can be induced by concept mapping, as representations need to be translated (e.g., text to graph; Fiorella and Mayer, 2015). In addition, the constraints on and the level of detail of the labeling may affect the level of induced integration processes (depending on how far functions or effects are considered). These processes are likely to occur, for example, when constructing concept maps and (less pronounced) during mind mapping (Fiorella and Mayer, 2015). Empirically, although dyadic groups may not show better learning outcomes when constructing concept maps collaboratively, collaboratively constructed artifacts have been shown to be more elaborated than individually constructed ones (Kwon and Cifuentes, 2009). Thus, it can be assumed that structuring learning domains with peers may improve the effectiveness of help-seeking episodes.

Elaboration strategies support integrating new knowledge by establishing connections between new information and prior knowledge (Mayer, 1996; Weinstein and Mayer, 1986). During the integration process, relevant information needs to be identified and integrated with concepts or schemata from prior knowledge (Weinstein and Mayer, 1986). Prompting learners with (self-)explanation tasks (see Chapter 7 in Fiorella and Mayer, 2015) or why-questions (elaborative interrogation) activates cognitive schemata and subsequently improves performance in factual and inference-type tests (Dunlosky et al., 2013; McDaniel and Donnelly, 1996). Similarly, research on peer helping has shown that levels of elaboration matching the level of the questions asked are beneficial for learning (Webb, 1989). Thus, it can be assumed that explanations from peers may facilitate elaboration during help-seeking episodes.

Evaluation or critical thinking skills are important capacities for higher education (Davies and Barnett, 2015). The necessary abilities are classified, amongst others, into elementary clarification (e.g., analyzing stated reasons, asking for or providing clarifications) and inferences (e.g., judging conditional logic; Davies and Barnett, 2015; Ennis, 1985). Research has shown that critical thinking skills are developed over years of practice (O’Hare and McGuinness, 2009). Furthermore, group discussions enable the synthesis of concepts and the evaluation of solutions, and lead to higher scores for analytical tasks (Gokhale, 1995). From this perspective, critical discussion about the plausibility of arguments within help-seeking episodes can be expected to be beneficial for learning.

Argumentation in learning settings is a social activity in which reasons (arguments) for opposing opinions are exchanged and reacted to with the goal of changing the conceptual understanding of the agents (Leitão, 2000). This includes (but is not limited to) the integration of information to produce (counter-)arguments and checking the consistency of provided reasons (Nussbaum, 2021). Discussion enables the involved agents to exchange their knowledge, provide clarifications, and integrate (some of) their knowledge (Asterhan and Schwarz, 2007; Felton et al., 2015). Furthermore, prompting argumentation promotes its application (Asterhan and Schwarz, 2007), and the application of argumentative discourse improves knowledge on argumentation (Stegmann et al., 2007). Significant effects of interventions on fostering argumentation in computer-supported environments were reported in a meta-review of 12 studies (Wecker and Fischer, 2014). However, there were no systematic effects of argumentation interventions on domain knowledge (Wecker and Fischer, 2014). Research on scientific argumentation in science education revealed that instructional scaffolds systematically improve students’ conceptual change (i.e., changing misconceptions to understanding; Li et al., 2022). Beyond that, the acquisition of argumentation-related competencies (e.g., formulating arguments and reacting to counterarguments) is worthwhile, as they are related to critical thinking (Kuhn, 2019) as well as scientific arguments (Nussbaum, 2021). Applying this strategy with peers during help-seeking episodes may deepen understanding of learning content.

Self-testing or rehearsing one’s own knowledge after reading learning material improves long-term learning (testing effect; Fiorella and Mayer, 2015; Roediger and Karpicke, 2006). Understanding and responding to a self-made test assignment (self-testing) induces information retrieval, which in turn strengthens memory traces (selection and organization) and facilitates interlinking with further prior knowledge (integration; see Chapter 6 in Fiorella and Mayer, 2015). Research has shown that generating questions and answering test tasks improve long-term retention test performance (Ebersbach et al., 2020). Testing in groups is expected to be beneficial for learning, because a group may come up with more ideas than one individual (Blumen and Rajaram, 2008). Research has shown that delayed recall and performance on new questions can be improved by (collaborative) initial testing, that is, by being tested immediately after exposition (Cranney et al., 2009). However, this effect of initial group tests on delayed retention could not be replicated and thus warrants further study (Vojdanoska et al., 2010). In sum, collaborative testing with a peer is expected to mitigate the presented disadvantages and may furthermore foster coordinated help-seeking episodes with peers. In conclusion, combining academic help-seeking with the implementation of learning strategies bears the potential to improve the outcomes of help-seeking behavior. In the following section, the competence of using social resources by applying learning strategies is laid out.

The competence of using social resources for overcoming knowledge related obstacles

Peer students can act as social resources who hold valuable information (Lin, 1999). Seeking academic help is one approach for students to regulate their own learning by involving social resources. Moreover, it can be assumed that the quality of help-seeking episodes increases when adequate learning techniques are applied. Following a clear goal is crucial to determine the usefulness of a particular learning strategy in a specific situation. Learners improve their process by applying learning strategies in a way that promotes their goals (Paris et al., 1983). Those learners may benefit more from seeking academic help than others. In sum, it is conditional knowledge about various learning strategies that enables students to adapt their learning to a variety of knowledge-related obstacles (see Section “Knowing when and why to apply learning strategies”). All things considered, the competence of using social resources to overcome knowledge-related obstacles describes the conditional knowledge about applying (meta-)cognitive learning strategies with peers. Because the appropriate strategy is contingent on the learner’s goal, this competence enables individuals to improve their learning process. Students with high proficiency in social learning strategies are able to apply the most appropriate learning strategy across various learning situations to foster their learning progress. Such efficacious (meta-)cognitive learning strategies are, amongst others, organization, elaboration, evaluation, argumentation, and rehearsal (see Section “Advantages by implementing learning strategies with peers”). In Section “Situational assessment of the competence of using social resources,” we will describe an instrument to assess conditional knowledge about learning strategies. The characteristics of the newly developed instrument are then analyzed to answer research question 1.

RQ-1: To what extent do the instrument and its subscales differentiate between the (meta-)cognitive learning strategies in social situations?

Modeling how the effective use of academic help-seeking enhances academic success

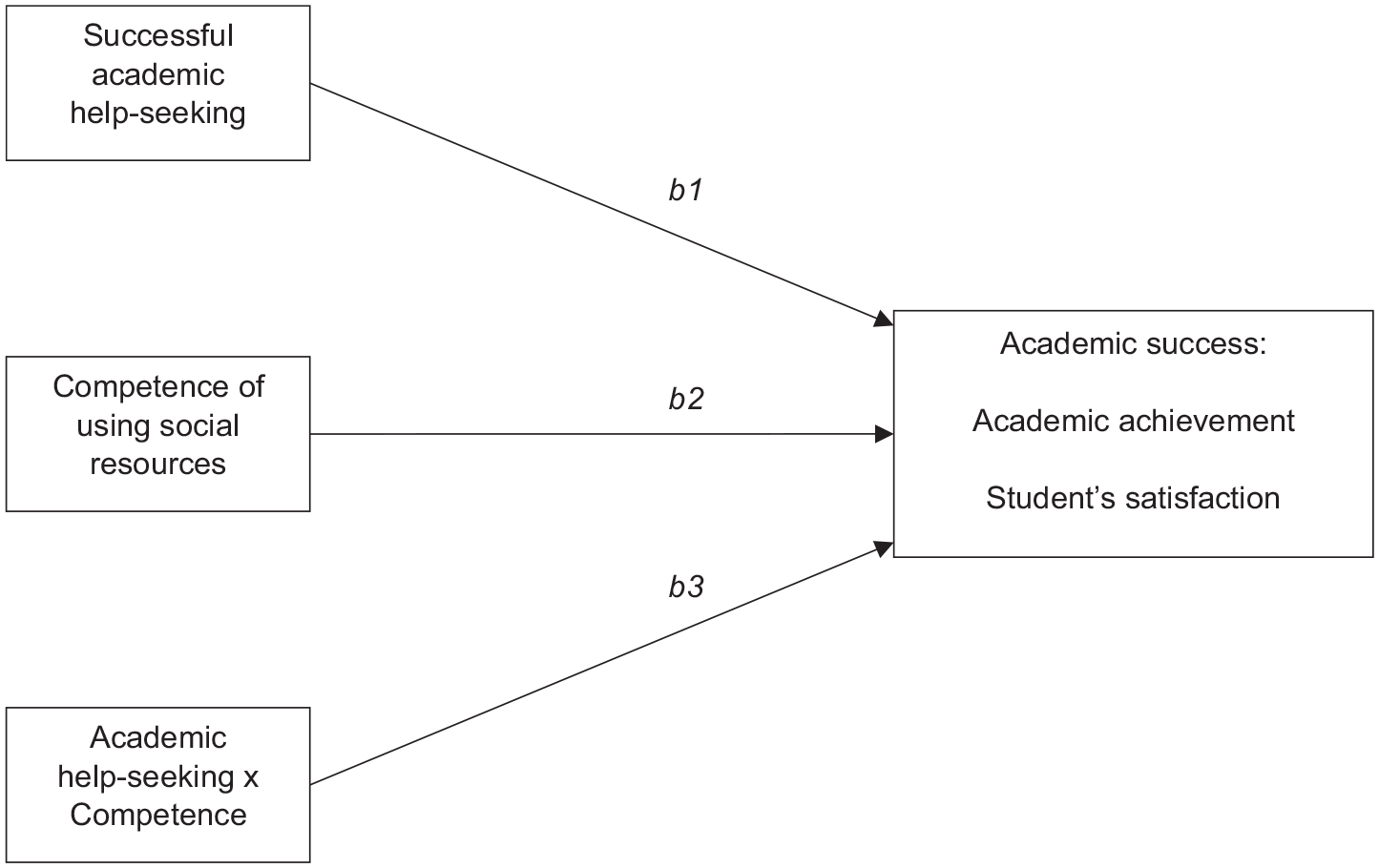

There is broad evidence that academic help-seeking is associated with academic success (Richardson et al., 2012). Additionally, we suggest that the competence of using social resources may improve the quality of help-seeking episodes (see Section “Advantages by implementing learning strategies with peers”). It can be assumed that the higher students’ proficiency in adapting to various learning situations, the more help-seeking episodes benefit from the application of learning techniques. Consequently, a higher competence of using social resources is expected to lead to more episodes involving goal-oriented processes, which in turn is expected to increase academic success measures. These success measures can comprise performance measured as academic achievement or students’ satisfaction (York et al., 2015). We therefore ask to what extent the association between successful help-seeking episodes and academic success measures is moderated by the competence of using social resources. This can be modeled using a linear regression model in which academic success is predicted by, first, help-seeking, second, the competence, and third, the interaction between both variables (see Figure 1).

RQ-2: To what extent is the association between academic help-seeking and academic success measures moderated by the competence of using social resources?

Figure 1. Moderation model describing the hypothesized associations between successful academic help-seeking behavior and academic success measures, moderated by the competence of using social resources. The moderation is decomposed into a multiple linear regression with three paths: Successful academic help-seeking behavior predicts academic success measures (path b1). The competence of using social resources predicts academic success measures (path b2). The interaction term of help-seeking and the competence predicts academic success measures (path b3).
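The three paths can be summarized in a single regression equation. The following is a sketch under stated assumptions: only the path labels b1, b2, and b3 are taken from the figure, whereas the symbols Y (an academic success measure), H (successful academic help-seeking), C (the competence of using social resources), the intercept b0, and the residual ε are illustrative shorthand introduced here.

```latex
Y = b_0 + b_1 H + b_2 C + b_3 \,(H \times C) + \varepsilon
  = b_0 + (b_1 + b_3 C)\, H + b_2 C + \varepsilon
```

The rearranged form shows what moderation means in this model: the slope of help-seeking on academic success, b1 + b3·C, depends on the level of the competence, so a moderating effect would show up as a coefficient b3 that differs from zero.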

Situational assessment of the competence of using social resources

The adequacy of a learning strategy inherently relies on the goal of the learner in a specific learning situation. Thus, we have developed a situation-specific test measuring students’ conditional knowledge to implement learning strategies with peers in various situations. Situational Judgment Tests are designed to assess individual abilities in scenarios as synthetic situations to predict performance (e.g., in a job; Gessner and Klimoski, 2006). For example, a scenario can be a typical knowledge-related problem during exam preparation with learning strategies as single-choice response options. The judgment for the most appropriate response involves the learner’s appraisal of the situation and the conditional knowledge of the response options. Situational Judgment Tests are commonly used in job application processes to assess social behavior such as leadership (Christian et al., 2010). Our instrument is based on an existing inventory from Waldeyer and colleagues (2019a, 2019b), who have systematically developed the Resource Management Inventory that assesses situation-specific resource-management knowledge and students’ self-reported ability to apply this knowledge. Adopting this design, we developed a situational judgment instrument to measure the competence of using social resources (see Section “The competence of using social resources for overcoming knowledge related obstacles”). The application of the aforementioned (meta-)cognitive strategies in a social help-seeking context may have a beneficial impact on the quality of help-seeking episodes (see Section “Advantages by implementing learning strategies with peers”). With this goal in mind, the newly developed instrument aims to assess conditional strategy knowledge in various situations that are appropriate for making use of (meta-)cognitive learning strategies with peers.
We utilize scenario-based qualitative assessment by asking participants to identify the best strategy, and we use offline standards for assessment, as the scenarios are separated from the actual learning process (Wirth and Leutner, 2008).

The instrument uses learning strategies as response options for all scenarios. We use organization, elaboration, and rehearsal in a way similar to their description in the Inventory for Assessing Learning Strategies in Higher Education (Wild and Schiefele, 1994; German: Inventar zur Erfassung von Lernstrategien im Studium) (see Section “Advantages by implementing learning strategies with peers”). Additionally, we included the processes evaluation and argumentation.

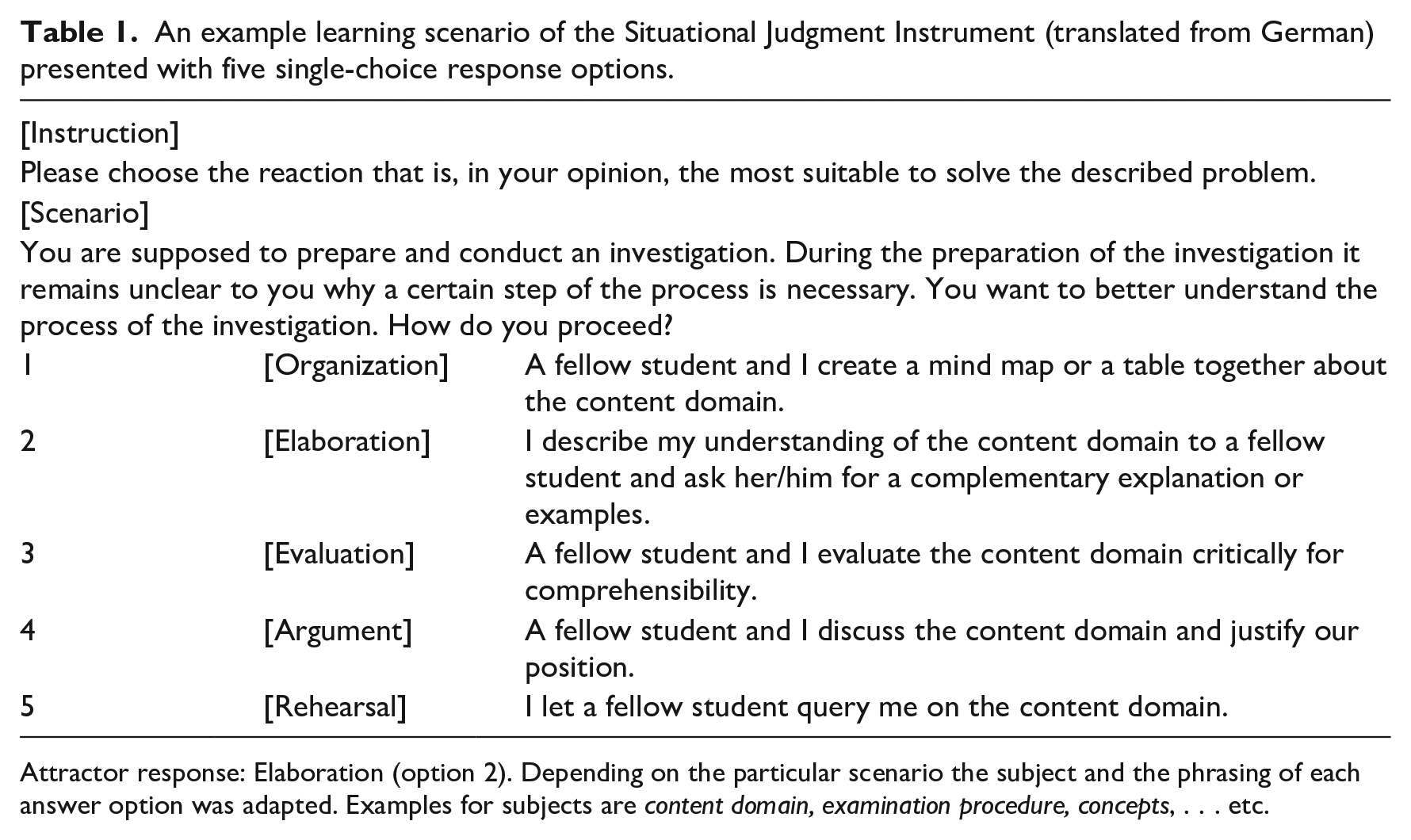

The scenarios are based on typical situations that students in science, technology, engineering, and mathematics study programs experience. Each scenario contains a problem and a goal description. Participants are instructed to identify the most appropriate learning strategy (i.e., attractor) among five response options (incorrect options are distractors; see Table 1). All translated scenarios can be found in Supplemental Appendix A. The more scenarios a student can react to adequately, the more versatile is their competence of using social resources. This competence is thus operationalized as the sum of correctly solved scenarios. The test results of the Situational Judgment Instrument indicate conditional strategy knowledge, which can be understood as a potential to make use of social learning strategies. However, the instrument does not assess actual past behavior.
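The sum-score operationalization can be illustrated with a minimal sketch. The scenario identifiers, the answer key, and the participant’s responses below are hypothetical placeholders and do not reproduce the actual items of the instrument.

```python
# HYPOTHETICAL answer key: scenario id -> attractor strategy (not the real items).
ANSWER_KEY = {
    "s01": "organization",
    "s02": "elaboration",
    "s03": "evaluation",
    "s04": "argumentation",
    "s05": "rehearsal",
}


def competence_score(responses, answer_key=ANSWER_KEY):
    """Return the number of scenarios whose chosen strategy matches the attractor."""
    return sum(
        1
        for scenario, attractor in answer_key.items()
        if responses.get(scenario) == attractor
    )


# One HYPOTHETICAL participant: three of five scenarios answered with the attractor.
participant = {
    "s01": "organization",
    "s02": "elaboration",
    "s03": "elaboration",  # distractor chosen
    "s04": "evaluation",   # distractor chosen
    "s05": "rehearsal",
}
score = competence_score(participant)
```

Unanswered scenarios simply count as not solved in this sketch; whether missing responses should be treated that way is an assumption, not something the instrument description specifies.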

Table 1. An example learning scenario of the Situational Judgment Instrument (translated from German) presented with five single-choice response options.

Attractor response: Elaboration (option 2). Depending on the particular scenario, the subject and the phrasing of each answer option were adapted. Examples of subjects are content domain, examination procedure, concepts, etc.

To develop the instrument, we first created scenarios in workshops with student assistants in bachelor’s and master’s programs, which resulted in an initial set of 93 scenarios. We followed an iterative process including quantitative and qualitative approaches to improve the instrument. Overall, 16 subject matter experts and seven students in science, technology, engineering, and mathematics study programs provided valuable responses. Unfortunately, experts’ responses were too few to estimate validity measures and to make them the primary criterion for choosing the scenarios for consideration. Nevertheless, the feedback during the process supported our decisions on the formulation of the scenarios (e.g., adding a glossary to each scenario). In study 1, the pilot version of the instrument with 25 scenarios was evaluated with responses from 38 first-semester students. The resulting item difficulties guided the reduction to 20 scenarios for the revised instrument that was used for study 2.

Study 1: Comparison of subscales

The sample of the pilot study (November 2018) consisted of N = 38 first-semester students, who were on average 20.32 years old (SD = 2.03, ranging from 18 to 26). Twenty-eight were female and 10 were male students. Seventy-four percent of the participants studied a program combining psychology and computer science; the remaining 26% studied chemistry for education. Twenty-four percent of the sample had prior experience with studying at another institution. Students were recruited at tutorial sessions of introductory courses. They answered a pilot version of the Situational Judgment Instrument (25 scenarios in five subscales) as part of an online survey. The study was approved by the local ethics committee (ethics vote ID: 2204PFSC6584).

Analysis of subscales’ differentiation

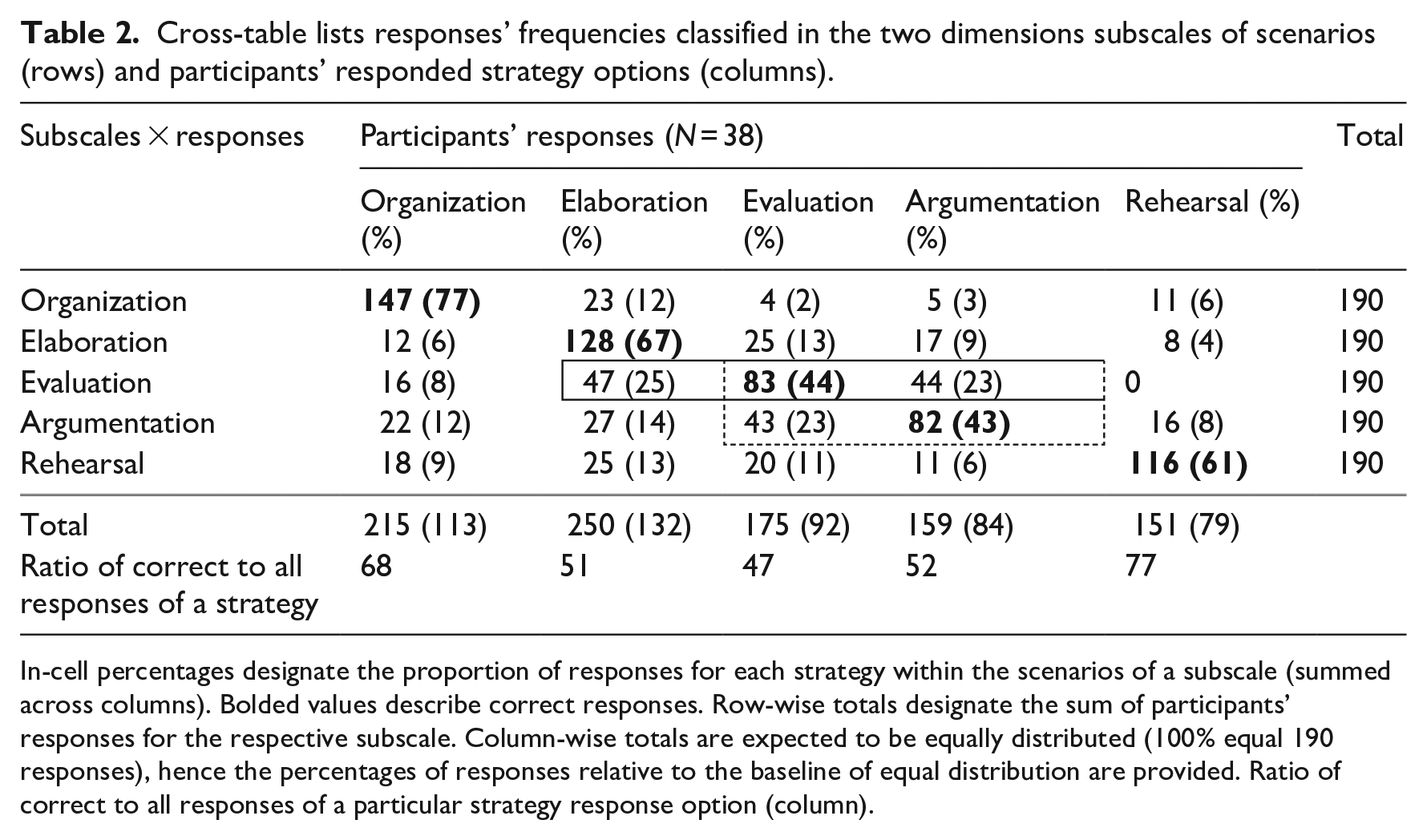

The following calculations provide an overview of the subscales’ ability to differentiate between (meta-)cognitive learning strategies (see RQ-1 in Section “The competence of using social resources for overcoming knowledge related obstacles”). Beyond scores of correct responses, it is of interest to identify, for each subscale, the most influential strategies that were erroneously considered most appropriate (i.e., distractors). The sample’s responses to the pilot version of the Situational Judgment Instrument are depicted in Table 2. Each row represents a subscale of five scenarios whose attractor is indicated by the strategy name at the beginning of the row. The columns represent participants’ responses for a strategy option. Cells with matching column and row (in boldface) contain the correct responses. The column-wise marginal totals are the summed responses for a strategy option; percentages (in parentheses) indicate the deviation from the expected value assuming a uniform distribution.

Table 2. Cross-table listing response frequencies classified along two dimensions: subscales of scenarios (rows) and the strategy options participants responded with (columns).

In-cell percentages designate the proportion of responses for each strategy within the scenarios of a subscale (summed across columns). Bolded values describe correct responses. Row-wise totals designate the sum of participants’ responses for the respective subscale. Column-wise totals are expected to be equally distributed (100% equals 190 responses); hence, the percentages of responses relative to the baseline of equal distribution are provided. Ratio of correct to all responses of a particular strategy response option (column).

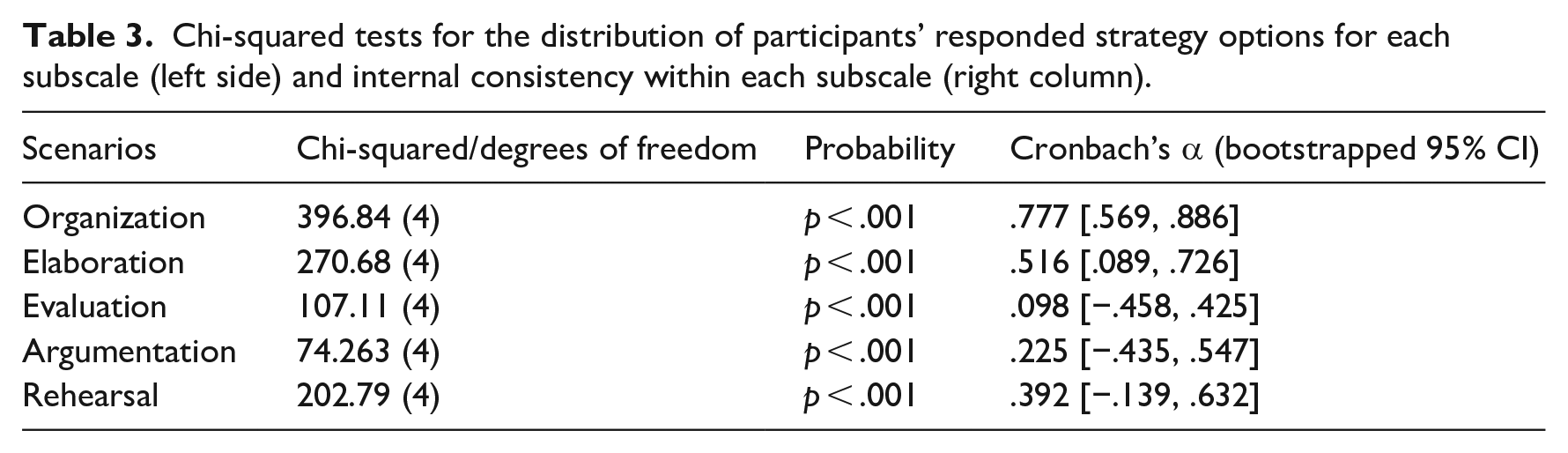

For organization scenarios, 77% of the responses were correct, followed by elaboration (67%) and rehearsal (61%). Two subscales received more incorrect than correct responses: evaluation (44%) and argumentation (43%). All subscales received more responses on their attractor than on any distractor, which results in a statistically significant uneven distribution of responses for each subscale (see Table 3/Part a). Internal consistency (Cronbach’s alpha) across the five scenarios of each subscale is rather poor except for the organization subscale (see Table 3/Part b). As the scenarios in each subscale were answered more heterogeneously than expected, extracting the correct answer seems to be difficult. Some scenarios were removed for study 2. Based on the descriptive statistics of correct responses, we (mostly) aligned the selection of scenarios with the goal of having four scenarios for each subscale with varying difficulties (see Section “Situational assessment of the competence of using social resources”).

Table 3. Chi-squared tests for the distribution of participants’ responded strategy options for each subscale (left side) and internal consistency within each subscale (right column).

In the following, the subscales’ results will be discussed in groups based on obvious patterns in their correct and incorrect responses. Incorrect responses on a subscale can be understood as the strength with which distractors draw responses away from the actual attractor. Comparing subscales in this way provides a first insight into subscales’ ability to differentiate students with varying conditional knowledge. First, when focusing on incorrect instead of correct responses (see Table 2), it becomes apparent that elaboration is the strongest distractor for nearly all subscales except argumentation (see column elaboration in Table 2). This may be due to the item wording, which describes asking a peer for further explanations or examples (see Table 1); this is a plausible strategy for various situations and a byproduct of other strategies such as evaluation or argumentation. Hence, the strong distracting strength of elaboration strategies may be due to their general formulation. Second, the three subscales organization, elaboration, and rehearsal have the highest share of correct responses (organization: 77%, elaboration: 67%, rehearsal: 61%), and all distractor strategies were selected with roughly similar frequency (distractors’ strength on average: organization: 6%, elaboration: 8%, rehearsal: 10%; see distractors per row in Table 2). As all three subscales’ scenarios were frequently solved correctly (i.e., low item difficulty), the remaining share of incorrect answers is considerably low. The high rate of correct solutions seems reasonable because organization of materials, elaboration on aspects of materials, and rehearsal of materials are typically done in distinct stages of the learning process. That could be one reason why students were able to differentiate these scenarios well. Third, the subscales evaluation and argumentation exhibit similar patterns in correct responses (evaluation: 44%, argumentation: 43%) and in the distracting strength of subscales.
In particular, the responses on the evaluation subscale are distracted by elaboration and argumentation with similar strength (see row evaluation in Table 2). Moreover, evaluation and argumentation are distractors of the same strength of 23% for the respective other subscale (see rows evaluation x argumentation and argumentation x evaluation in Table 2). Thus, the similar shares of correct responses and the similar distracting strength together indicate that the evaluation and argumentation subscales have similar discriminative strength in this instrument. This similarity is also plausible from a conceptual perspective because the induced processes of both strategies seem comparable: The evaluation strategy response option checks for clarity and traceability of an existing argumentation, and similarly, the argumentation strategy response option intends to construct a new, clear argumentation and provide evidence for the point of view. In conclusion, this section provided evidence regarding three main aspects. First, we found that organization, elaboration, and rehearsal scenarios were among the easiest to solve, and hence these are less discriminative subscales. Second, the elaboration response option has the highest distractive strength across all strategies (except argumentation). Third, evaluation and argumentation are nearly equal in their correct responses and have similar distractive strength affecting each other.

Furthermore, students’ response patterns (e.g., preferences) can be described independently of subscales. Each column represents the responses for a particular strategy across all scenarios (columns in Table 2). Participants responded most frequently with elaboration and organization responses (see totals row in Table 2), both of which exceed the expected value of 190 mentions. Responses of evaluation, argumentation, and rehearsal strategies were slightly below the expected value. A chi-square goodness-of-fit test comparing the summed responses confirms that the frequency of selected strategies differs significantly from an even distribution (χ²(4) = 36.484, p < .001). This result indicates that students’ overall frequency of strategy response options differs across the five strategies. Moreover, the ratio of correct to all responses of a particular response option sheds light on how precise students’ strategy responses were. The rehearsal strategy ranks third in correct identification but first in the share of correct responses among all rehearsal responses given, whereas elaboration ranks second in correct identification but only third in the share of correct responses among all elaboration responses given. The next section takes these considerations further and focuses on (in-)correct responses to the scenarios, investigating students’ response patterns.
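The logic of this goodness-of-fit test can be sketched in a few lines. The expected value follows directly from the design (38 participants × 25 scenarios = 950 responses, spread evenly over five strategies = 190 each). The column totals used below are hypothetical and are not the values from Table 2; the function name is likewise an illustrative choice.

```python
def chi_square_gof(observed):
    """Chi-square goodness-of-fit statistic against a uniform distribution.

    Returns (statistic, degrees_of_freedom, expected_count_per_category).
    """
    total = sum(observed)
    expected = total / len(observed)
    statistic = sum((o - expected) ** 2 / expected for o in observed)
    return statistic, len(observed) - 1, expected


# 38 participants x 25 scenarios = 950 responses, so 190 per strategy are expected.
N_PARTICIPANTS, N_SCENARIOS, N_STRATEGIES = 38, 25, 5
expected_per_strategy = N_PARTICIPANTS * N_SCENARIOS / N_STRATEGIES

# HYPOTHETICAL column totals (NOT the values from Table 2); they only sum to 950.
hypothetical_totals = [210, 200, 180, 180, 180]
statistic, df, expected = chi_square_gof(hypothetical_totals)
```

With five strategies the test has four degrees of freedom, matching the χ²(4) reported above. The sketch deliberately stops at the test statistic; obtaining a p-value would additionally require the chi-square distribution function from a statistics library.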

Analysis of correct and incorrect responses using signal detection measures

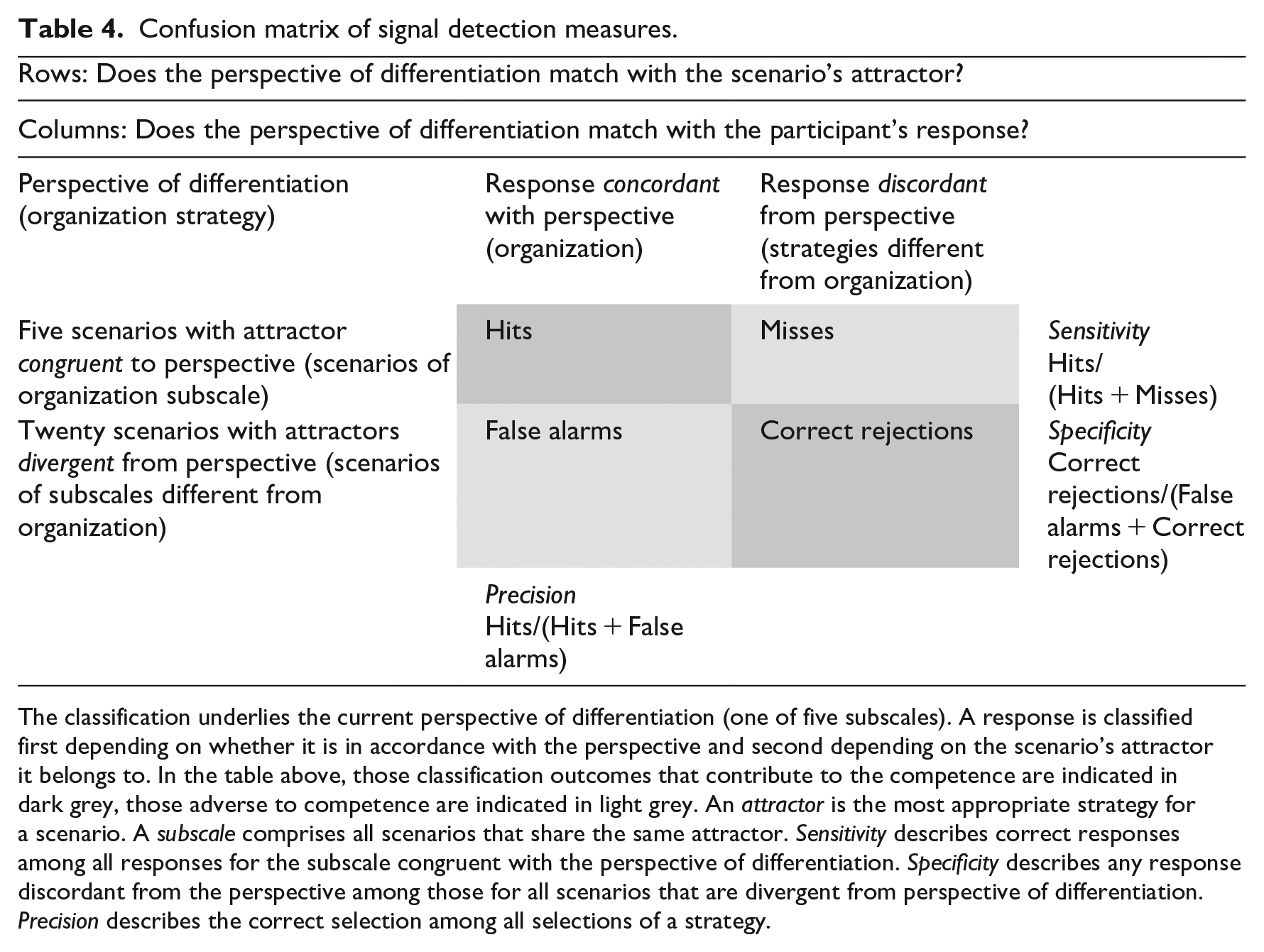

The following measures aggregate participants’ response behavior and describe the differentiation of the instrument’s subscales (see RQ-1 in Section “Situational assessment of the competence of using social resources”). Participants’ responses are classified using signal detection measures (Wenger et al., 2012; Swets, 1988). This approach goes beyond solely considering correctness for the scenarios and additionally considers the frequencies of selected strategies. The classification logic for a strategy-response comprises two comparisons involving a designated learning strategy (e.g., organization): (1) Is the designated learning strategy congruent or divergent with the attractor for the scenario? (2) Is the designated learning strategy concordant or discordant with the response of the participant? Consequently, each classification hinges on the learning strategy from whose perspective the comparisons are made (perspective of differentiation; see Table 4). The first comparison thus involves the perspective and the scenario’s attractor. When both match (congruent), a response can be classified as a hit or a miss (upper row in Table 4); if not, it is classified as a false alarm or a correct rejection (lower row). The second comparison checks whether the perspective and the participant’s response match (concordant; columns in Table 4). Based on these two comparisons, the strategy-response is allocated to one of the four fields in the confusion matrix. This classification is done for each response to all 25 scenarios. Moreover, the whole classification is repeated for each of the five strategies as the perspective of differentiation (listed in Table 4).

Confusion matrix of signal detection measures.

The classification underlies the current perspective of differentiation (one of five subscales). A response is classified first depending on whether it is in accordance with the perspective and second depending on the scenario’s attractor it belongs to. In the table above, those classification outcomes that contribute to the competence are indicated in dark grey, those adverse to competence are indicated in light grey. An attractor is the most appropriate strategy for a scenario. A subscale comprises all scenarios that share the same attractor. Sensitivity describes correct responses among all responses for the subscale congruent with the perspective of differentiation. Specificity describes any response discordant from the perspective among those for all scenarios that are divergent from perspective of differentiation. Precision describes the correct selection among all selections of a strategy.

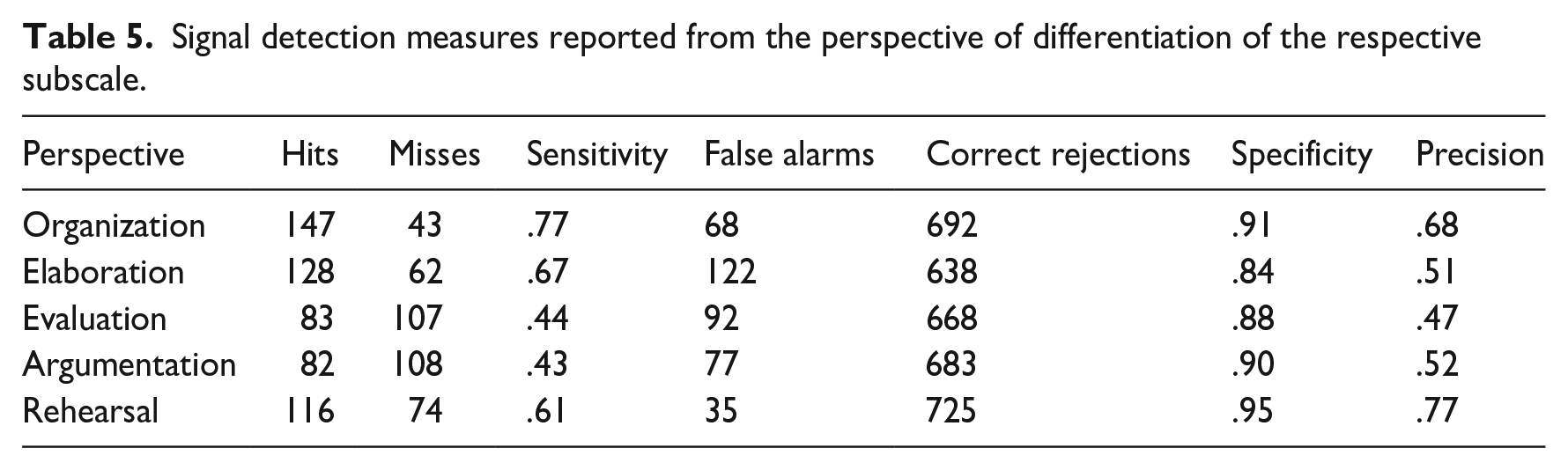

As an example, assume that the perspective of differentiation is the organization strategy and that the current scenario belongs to the organization subscale (i.e., its most appropriate strategy/attractor is organization). Comparing the perspective and the scenario’s attractor, both are congruent (upper row in Table 4), so the response can be classified as either a hit or a miss. Next, assume that the participant’s response is elaboration. Comparing the perspective and the response, both are discordant (third column in Table 4) and, linking both classifications, the response is classified as a miss; hence the participant decided for an incorrect strategy. When this situation is evaluated from the perspective of the elaboration strategy, the same response is classified as a false alarm. The formulas for three signal detection measures (Powers, 2020; Swets, 1988) are provided in Table 4. Sensitivity indicates the share of correct selections among all responses to the scenarios of a subscale. Specificity represents those selections in which the necessity of selecting a strategy discordant from the perspective of differentiation was identified. High precision indicates few incorrect selections among all selections of a strategy. These measures provide insights into students’ selection patterns and may reveal patterns such as “one strategy fits most of the scenarios” (indicated by low precision). Next, Table 5 shows a row-wise overview of the estimated statistical measures for each subscale. The following detailed explanation takes the organization subscale as the perspective of differentiation (first row in Table 5). When students responded to the five scenarios with organization as an attractor, they were able to identify the correct strategy (sensitivity) in 77% of the decisions (on average: 3.9 of 5 scenarios). 
When responding to the other 20 scenarios (attractors divergent from organization), they identified that organization is not the most appropriate strategy (specificity) in 91% of the decisions (on average: 18.2 of 20 scenarios). Among all organization responses in all of the 25 scenarios, about 68% were identified correctly (precision).
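The two comparisons and the three measures described above can be sketched in a few lines. This is an illustrative sketch under stated assumptions: the formulas follow the standard definitions of sensitivity, specificity, and precision (the exact formulas of Table 4 are not reproduced here), the function names are hypothetical, and the example responses are invented for demonstration.

```python
from collections import Counter


def classify(perspective, attractor, response):
    """Allocate one strategy-response to a field of the confusion matrix."""
    congruent = perspective == attractor   # first comparison (rows)
    concordant = perspective == response   # second comparison (columns)
    if congruent:
        return "hit" if concordant else "miss"
    return "false_alarm" if concordant else "correct_rejection"


def detection_measures(perspective, pairs):
    """Compute measures from (attractor, response) pairs for one perspective."""
    counts = Counter(classify(perspective, a, r) for a, r in pairs)
    hits, misses = counts["hit"], counts["miss"]
    false_alarms = counts["false_alarm"]
    correct_rejections = counts["correct_rejection"]

    def ratio(numerator, denominator):
        return numerator / denominator if denominator else float("nan")

    return {
        "sensitivity": ratio(hits, hits + misses),
        "specificity": ratio(correct_rejections,
                             correct_rejections + false_alarms),
        "precision": ratio(hits, hits + false_alarms),
    }


# Invented (attractor, response) pairs of one hypothetical participant.
example_pairs = [
    ("organization", "organization"),
    ("organization", "elaboration"),
    ("elaboration", "elaboration"),
    ("evaluation", "elaboration"),
    ("rehearsal", "rehearsal"),
]

organization_view = detection_measures("organization", example_pairs)
elaboration_view = detection_measures("elaboration", example_pairs)
```

In this invented example the second response is a miss from the organization perspective and a false alarm from the elaboration perspective, which mirrors the worked example in the text: the same response lowers the sensitivity of one subscale and the precision of another.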

Signal detection measures reported from the perspective of differentiation of the respective subscale.

Next, the measures reported in Table 5 are compared between the subscales; extreme values are highlighted and cautiously interpreted (for raw responses compare Table 2). First, sensitivity values describe the difficulty of the scenarios and have already been reported in Table 2. Second, for the 20 scenarios whose attractors are divergent from the perspective of differentiation, the share of responses that correctly identified the most appropriate strategy as discordant from the perspective (specificity) ranges from 84% for elaboration up to 95% for rehearsal. Thus, the high specificity for rehearsal indicates that students rarely selected the rehearsal strategy incorrectly (few false alarms). On the other hand, the low specificity of elaboration responses (due to many false alarms) indicates that students frequently selected this strategy in inadequate scenarios (see also distribution of responses for elaboration in Table 2). Third, for the strategy-responses concordant with the perspective of differentiation, the ratio of correct responses among all responses of this strategy (precision) ranges from 47% for evaluation to 77% for rehearsal. This result indicates that evaluation strategies are selected incorrectly most often relative to their correct use (compare also last row in Table 2). For rehearsal strategies students provided the best ratio of correct responses among all responses of this strategy. Overall, the precision measure reveals that the use of rehearsal is more specific than the use of organization, despite lower sensitivity values. In conclusion, the presented sensitivity values translate to the subscales’ difficulty and the precision values provide insights into which strategies were selected least incorrectly.

Study 2: Seeking help proficiently may benefit academic success

Based on the modeled assumptions in Figure 1 we disentangle the effects of help-seeking behavior, the competence of using social resources, and academic success measures. Students with a higher competence are expected to make more effective use of help-seeking episodes and hence perform better on academic success indicators (compare RQ-2 in Section “Modeling how the effective use of academic help-seeking enhances academic success”). To test this hypothesis we gathered data as part of a larger study which accompanied students during their first semester (Schlusche et al., 2021). The sample consists of n = 47 chemistry students (17 to 24 years old) and n = 73 civil engineering students (18 to 31 years old). Data were collected at three measure points: 2 months after the semester started (t1; 12/2018), a few weeks before the examination phase at the end of January 2019 (t2), and after exams in April 2019 (t3). The study was approved by the local ethics committee (ethics vote ID: 2108PFSC5731).

Measures

Successful academic help-seeking behavior was assessed with a single item for received support. The response options ranged from “very rarely” (1) to “very frequently” (5) on a 5-point equidistant response scale. The item “How often was the content-related or organizational support which you received during the past 2 weeks from peer students actually helpful?” (translated) assessed the frequency of successful help-seeking among requested help.

Students’ satisfaction with their study situation (Westermann et al., 1996) was assessed with the subscales satisfaction with contents of study program, conditions of studying, and coping with study-related strain with 12 items overall (chemistry: α = .85; engineering: α = .82). Answers ranged from “disagree” (0) to “agree” (3); higher values indicate higher satisfaction. Example items are: “I really enjoy what I study.” (translated) or “The external circumstances under which students in my subject study are frustrating.” (inverse item, translated).

Self-reported grades after the first semester weighted by credit points operationalized academic achievement (lower grades indicate better performance). Chemistry students received three grades maximum (19 credits, data of an additional four-credit practical course was not available) whereas civil engineering students received five grades maximum (29 credits). Calculation of academic achievement involved available grades for each participant that were weighted by their respective credit value. This weighting of grades accounts for scheduled workload. Students’ ratings and actual grades are expected to have at least substantial agreement (Cohen’s κ; Landis and Koch, 1977) based on project-data within the Research Unit (two samples: N1 = 31; N2 = 43).
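The credit weighting of available grades can be made concrete with a small sketch. It assumes that the weighted average is normalized by the credits of the available grades only, which the text does not state explicitly; the function name, the grades, and the split of the 19 chemistry credits across three courses are hypothetical.

```python
def weighted_average_grade(courses):
    """Credit-weighted mean of available grades.

    courses: list of (grade, credits) tuples; a grade of None marks a
    course for which no grade is available.
    """
    available = [(grade, credits) for grade, credits in courses
                 if grade is not None]
    total_credits = sum(credits for _, credits in available)
    if total_credits == 0:
        return None  # no grade available for this participant
    return sum(grade * credits for grade, credits in available) / total_credits


# Hypothetical chemistry student: three courses with invented credit values
# that sum to 19; the third grade is missing.
hypothetical_courses = [(1.7, 9), (2.3, 6), (None, 4)]
achievement = weighted_average_grade(hypothetical_courses)
print(round(achievement, 2))
```

Under this assumption a missing grade simply drops out of both the numerator and the denominator, so participants with fewer available grades remain comparable on the same grade scale.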

The competence of using social resources was assessed with the Situational Judgment Instrument (see Section “Situational assessment of the competence of using social resources”; see Supplemental Appendix A). This competence is measured using written learning scenarios that the participants respond to with one of the five learning strategies: organization, elaboration, evaluation, argumentation, and rehearsal. These five strategies are single-choice response options for all scenarios. The Situational Judgment Instrument comprises 20 scenarios of knowledge-related obstacles during learning. Four scenarios each share the same learning strategy as an attractor response. Each scenario describes a problem and a goal for the situation followed by the question “How do you proceed?” (see Table 1 for an example). The correct answers for all scenarios were summed up to a score which can range from 0 to 20. The subscales with the highest internal consistency are organization (chemistry: α = .74; engineering: α = .79) and rehearsal (chemistry: α = .68; engineering: α = .64); these are followed by elaboration (chemistry: α = .46; engineering: α = .42), whereas evaluation (chemistry: α = .34; engineering: α = .13) and argumentation (chemistry: α = .41; engineering: α = .28) demonstrate the lowest internal consistency. The whole scale comprising all 20 scenarios reaches internal consistency values higher than every individual subscale’s consistency (chemistry: α = .81; engineering: α = .82).
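The scoring rule and the internal consistency estimate can be sketched as follows. This is a minimal illustration that assumes α denotes Cronbach’s alpha computed from dichotomous (0/1) item scores; the function names and the response data are hypothetical and do not stem from the study.

```python
from statistics import variance


def score_responses(responses, attractors):
    """Score 1 if the selected strategy equals the scenario's attractor."""
    return [int(response == attractor)
            for response, attractor in zip(responses, attractors)]


def cronbach_alpha(item_scores):
    """Cronbach's alpha; item_scores holds one list of item scores per person."""
    k = len(item_scores[0])
    item_variances = [variance([person[i] for person in item_scores])
                      for i in range(k)]
    total_variance = variance([sum(person) for person in item_scores])
    if total_variance == 0:
        return float("nan")  # undefined when all sum scores are identical
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Invented 0/1 scores of five persons on one four-scenario subscale.
hypothetical_subscale = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
alpha = cronbach_alpha(hypothetical_subscale)
subscale_scores = [sum(person) for person in hypothetical_subscale]
```

Because alpha depends on the number of items, a scale of 20 scenarios can reach a higher value than any of its four-scenario subscales even when the average inter-item association is modest.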

The application of (meta-)cognitive learning strategies was assessed with the Inventory for Assessing Learning Strategies in Higher Education (German: Inventar zur Erfassung von Lernstrategien im Studium (LIST)) developed by Wild and Schiefele (1994). Items were assessed on a 5-point scale assessing frequency (1: very rarely; 5: very frequently) grouped by the administered subscales organization, elaboration, investigation, and rehearsal.

Results

We hypothesized an association between successful academic help-seeking and academic success measures, which is expected to be moderated by the competence of using social resources (see RQ-2 in Section “Modeling how the effective use of academic help-seeking enhances academic success”). In the following, between-group comparisons are calculated to check for differences between both subsamples. The between-group comparisons were estimated using either the Wilcoxon Rank Sum Test (non-normally distributed variables) or Welch’s t-Test (normally distributed variables). Finally, two moderation models differing in their criterion (academic achievement or students’ satisfaction) are estimated. For the regression analyses, each dependent variable was tested for homogeneity of variance with Levene’s test. Moderation models were estimated with PROCESS for R v.4.0.2 (and above) using a percentile bootstrapping procedure, and unstandardized, bootstrapped beta coefficients are reported (Hayes, 2018). Both predictors were assessed twice, at measure points 1 and 2. The academic success measures were assessed once at the end of the study at measure point 3. All regression models were calculated with predictors of measure point 2 explaining variance in criteria of measure point 3, thereby focusing on the weeks before the exams.
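The structure of such a moderation model can be sketched without the PROCESS macro. The following is a simplified illustration, not the analysis reported here: it fits an ordinary least squares model with mean-centred predictors (an assumption) and their product term, and derives percentile bootstrap intervals. All function names are hypothetical and the data are randomly generated.

```python
import random


def solve(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_moderation(x, w, y):
    """OLS for y = b0 + b1*x + b2*w + b3*x*w with mean-centred x and w."""
    mean_x, mean_w = sum(x) / len(x), sum(w) / len(w)
    rows = [[1.0, xi - mean_x, wi - mean_w, (xi - mean_x) * (wi - mean_w)]
            for xi, wi in zip(x, w)]
    # Normal equations: (X'X) b = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(4)]
           for i in range(4)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(4)]
    return solve(xtx, xty)


def percentile_bootstrap(x, w, y, n_boot=500, level=0.95, seed=1):
    """Percentile bootstrap intervals for b0..b3 (cases resampled jointly)."""
    rng = random.Random(seed)
    n = len(y)
    draws = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        try:
            draws.append(fit_moderation([x[i] for i in idx],
                                        [w[i] for i in idx],
                                        [y[i] for i in idx]))
        except ZeroDivisionError:
            continue  # skip degenerate resamples with a singular design
    tail = (1 - level) / 2
    intervals = []
    for j in range(4):
        values = sorted(draw[j] for draw in draws)
        lower = values[int(tail * (len(values) - 1))]
        upper = values[int((1 - tail) * (len(values) - 1))]
        intervals.append((lower, upper))
    return intervals


# Randomly generated data (NOT the study's data): help-seeking on a 1-5
# scale, competence scores from 0 to 20, and a noisy satisfaction score.
rng = random.Random(0)
help_seeking = [rng.randint(1, 5) for _ in range(60)]
competence = [rng.randint(0, 20) for _ in range(60)]
satisfaction = [1.5 + 0.03 * c + rng.gauss(0, 0.4) for c in competence]

b0, b1, b2, b3 = fit_moderation(help_seeking, competence, satisfaction)
intervals = percentile_bootstrap(help_seeking, competence, satisfaction)
```

With mean-centred predictors, b1 and b2 describe the association of each predictor with the criterion at the average value of the other predictor, and b3 is the moderation (interaction) effect; an interval for b3 that includes zero corresponds to a non-significant moderation.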

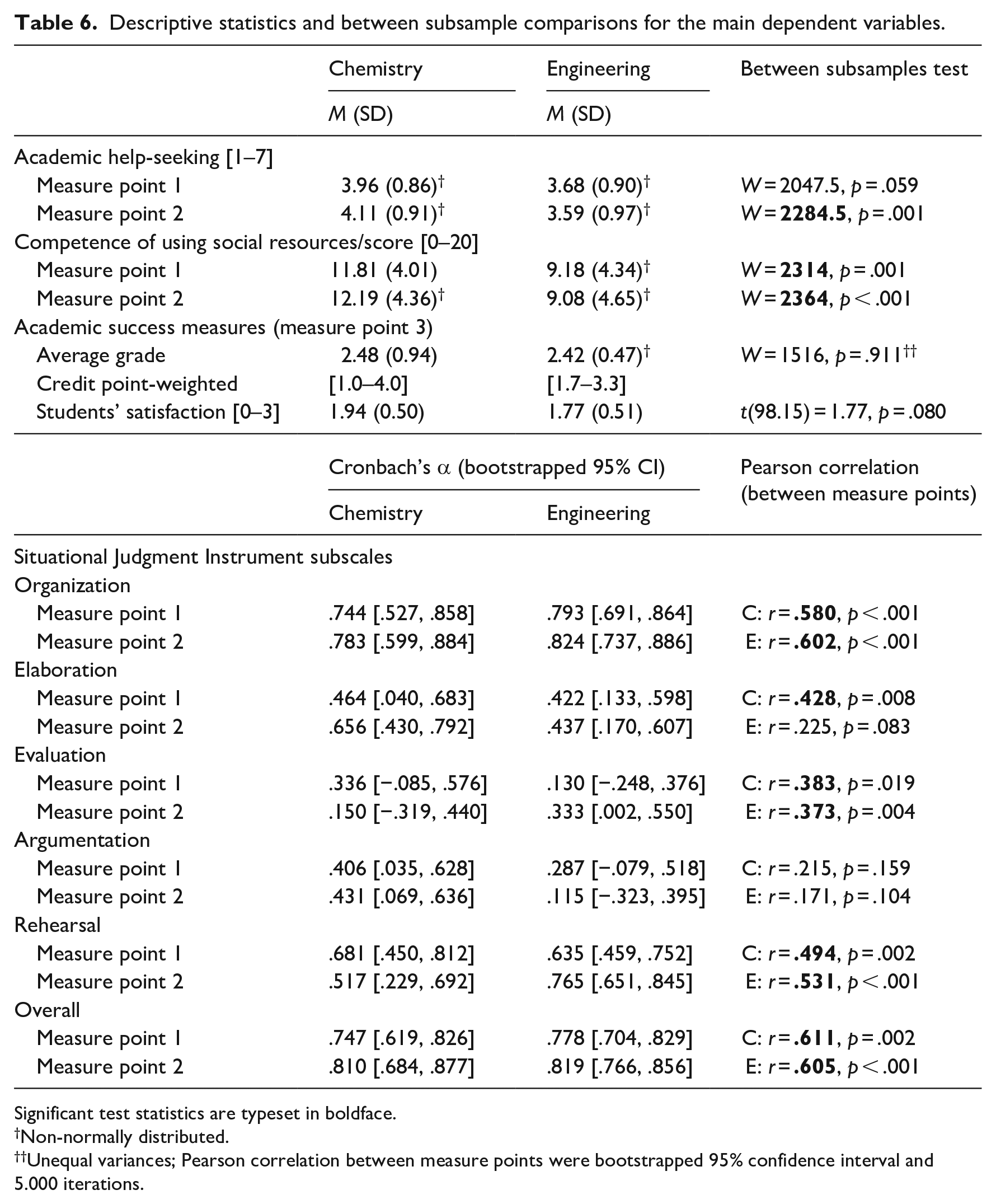

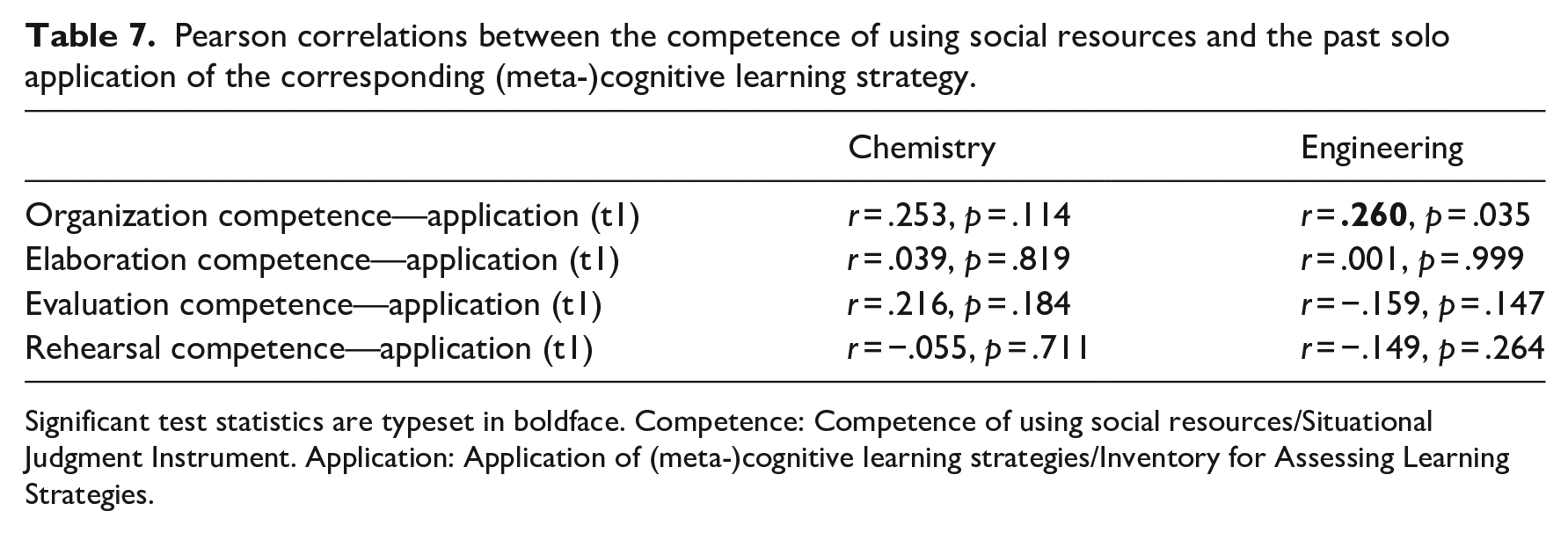

Descriptive statistics and comparisons for the main dependent variables split by subsample are presented in Table 6. Both subsamples sought help with similar frequency at measure point 1. However, chemistry students sought more help than engineering students at measure point 2. Further, the competence of using social resources is consistently higher for chemistry students. Finally, academic success measures do not differ between the subsamples. Due to the significant differences between the subsamples in both predictor variables, the moderation analyses are performed separately for each subsample. Moreover, the second half of Table 6 reports internal consistency and retest reliability. In the following, the subscales of study 1 comprising five items (see Table 3) are compared with the subscales of study 2 comprising four items (see Table 6) regarding internal consistency. First, the organization and elaboration subscales have similar internal consistency in both studies. Second, the rehearsal subscale seems to have improved descriptively in study 2. Third, the evaluation and argumentation subscales show poor internal consistency in both studies. Overall, study 2 shows bootstrapped internal consistency values between .115 for argumentation and .824 for organization among engineering students, and between .150 for evaluation and .783 for organization among chemistry students (see Table 6). Webster et al. (2020) report a range of internal consistency between .29 and .91 in their meta-analysis on situational judgment tests. Guenole et al. (2017) discuss that internal consistency among situational judgment tests is “on the whole quite poor” (Guenole et al., 2017: 3). The six-week retest reliability, estimated as the correlation between measure points, is statistically significant for the organization, evaluation, and rehearsal subscales in both subsamples (see Table 6). 
For the elaboration subscale, scores at both measure points correlate for the chemistry subsample, but not for the civil engineering subsample. The scores of the argumentation subscale do not correlate across measure points for either subsample. Next, as an indicator of convergent validity, the application of (meta-)cognitive learning strategies was assessed. These activities are grouped into subscales that correspond with the subscales of the Situational Judgment Instrument. Table 7 reports correlations at measure point 1 between the conditional strategy knowledge (Situational Judgment Instrument) and the frequency of learning strategies’ application (Inventory for Assessing Learning Strategies in Higher Education). Except for the engineering subsample on the organization subscales, no significant associations were found. The actual application of strategies may depend on whether students were intensely studying. Thus, future studies should investigate this association as close as possible to the actual examination.

Descriptive statistics and between subsample comparisons for the main dependent variables.

Significant test statistics are typeset in boldface.

Non-normally distributed.

Unequal variances; Pearson correlations between measure points were bootstrapped with a 95% confidence interval and 5,000 iterations.

Pearson correlations between the competence of using social resources and the past solo application of the corresponding (meta-)cognitive learning strategy.

Significant test statistics are typeset in boldface. Competence: Competence of using social resources/Situational Judgment Instrument. Application: Application of (meta-)cognitive learning strategies/Inventory for Assessing Learning Strategies.

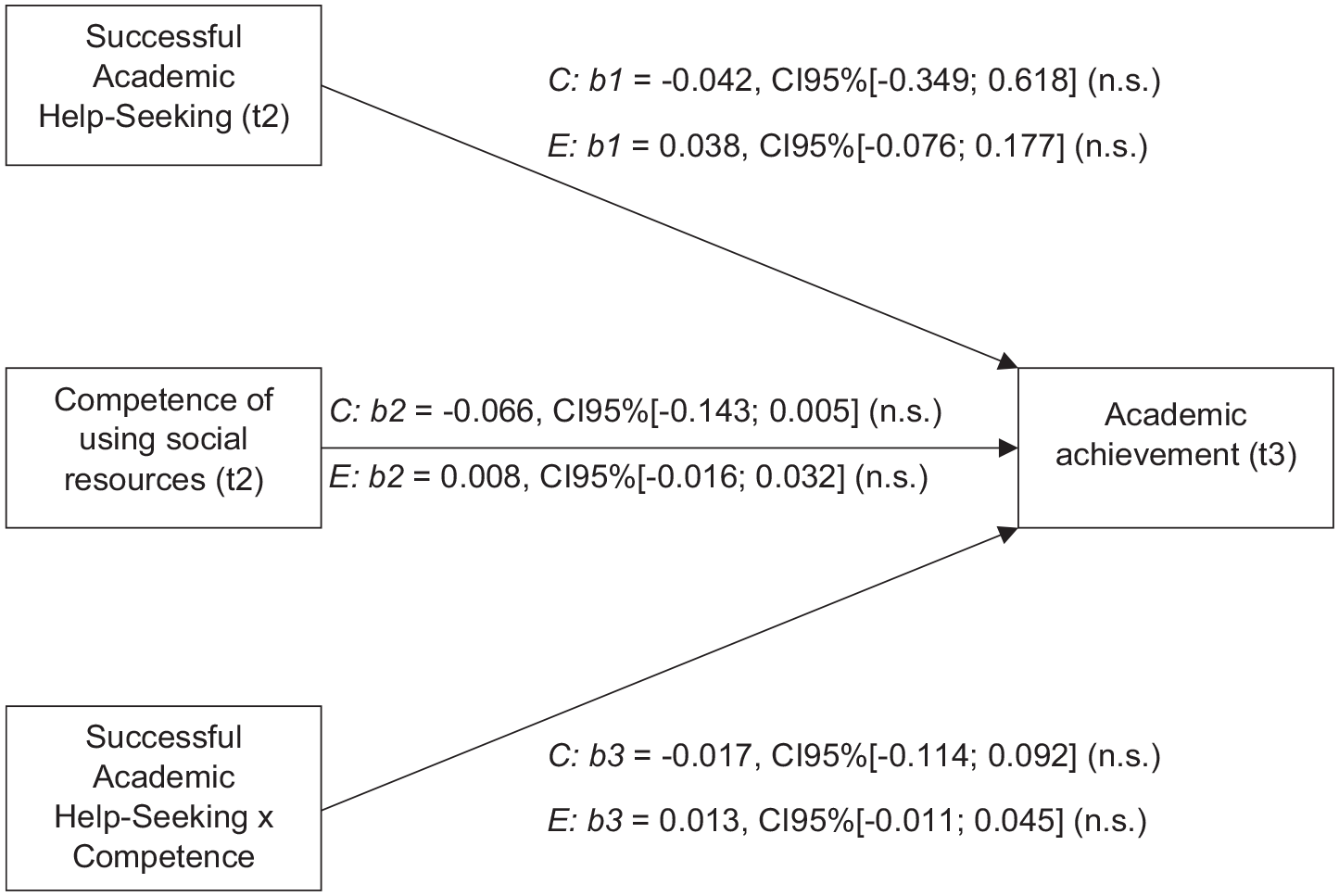

Moderation model predicting academic achievement

The regression residuals for engineering students were not normally distributed. To account for this, we applied bootstrapping procedures for all regression models. For each subsample it was tested whether students’ weighted average grade/academic achievement (AM) can be predicted by academic help-seeking behavior, the competence of using social resources and their interaction-term (see Figure 2). Bootstrapped unstandardized beta-coefficients and their 95% confidence intervals are reported. For chemistry students the model does not explain a significant amount of variance (F(3, 37) = 1.918, R² = .108, p = .234). Thus, considering all predictors in one model, academic help-seeking (path b1), the competence of using social resources (path b2), and their interaction term (path b3) were not sufficient to predict the weighted average grade. Additionally, explorative bivariate correlation analyses showed that the competence of using social resources correlates with academic achievement (chemistry: competence (t1) and achievement (t3): r = −.293, p = .044; competence (t2) and achievement (t3): r = −.321, p = .038). Because the interaction effect in the regression model is not significant, this association between the competence and achievement does not appear to be due to seeking help. Hence, the competence may be explained by more general constructs such as general cognitive ability (amongst other concepts). From this perspective, this result is in line with research from McDaniel et al. (2001), who point out that situational judgment tests are associated with general cognitive abilities.

Linear regression model predicting academic achievement factoring in successful academic help-seeking, the competence to make knowledge-related use of social resources and their interaction term. Bootstrapped unstandardized beta-coefficients and their 95% confidence intervals are reported.

For engineering students the model does not explain a significant amount of variance (F(3, 69) = 0.564, R² = .024, p = .641). Thus, neither academic help-seeking (path b1), nor the competence of using social resources (path b2), nor their interaction-term (path b3) were sufficient to predict weighted average grade/academic achievement (AM). Overall, the hypothesized associations could not be found empirically for academic achievement (see the Section “Research data” for a link to a detailed description of the model and its output). In contrast to the chemistry subsample, for engineering students bivariate exploratory analyses did not find a relationship between competence of using social resources and achievement (engineering: competence (t1) and achievement (t3): r = .171, p = .118; competence (t2) and achievement (t3): r = .105, p = .354).

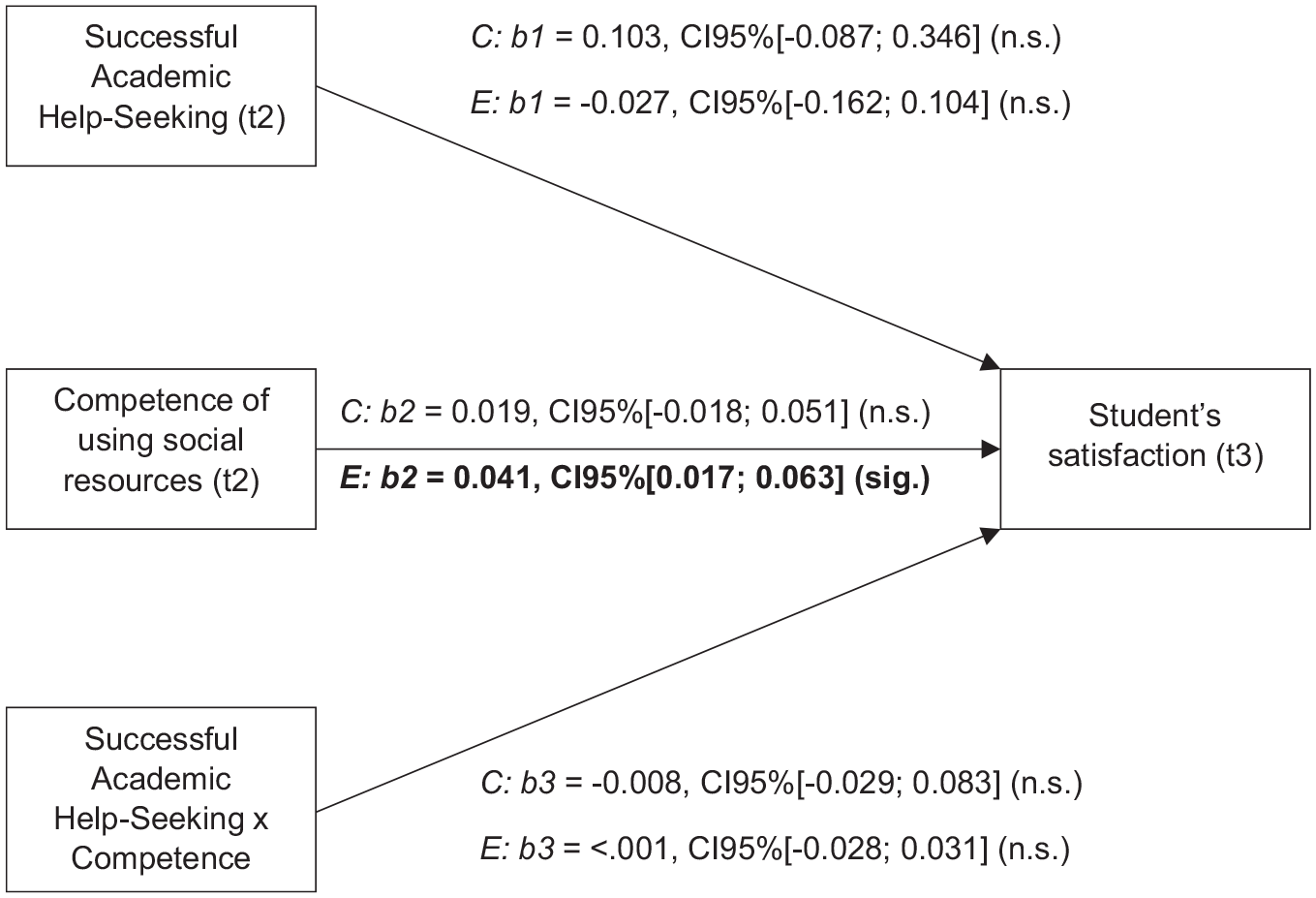

Moderation model predicting students’ satisfaction

For each subsample, we tested whether students’ satisfaction can be predicted by academic help-seeking behavior, the competence of using social resources, and their interaction-term (see Figure 3). For chemistry students the model does not explain a significant amount of variance (F(3, 43) = 1.596, R² = .100, p = .204). No main effects on students’ satisfaction were found, neither for help-seeking behavior (path b1), nor for competence of using social resources (path b2). Similarly, their interaction effect did not reach statistical significance (path b3).

Linear regression model predicting student’s satisfaction factoring in successful academic help-seeking, the competence to make knowledge-related use of social resources and their interaction term. Bootstrapped unstandardized beta-coefficients and their 95% confidence intervals are reported.

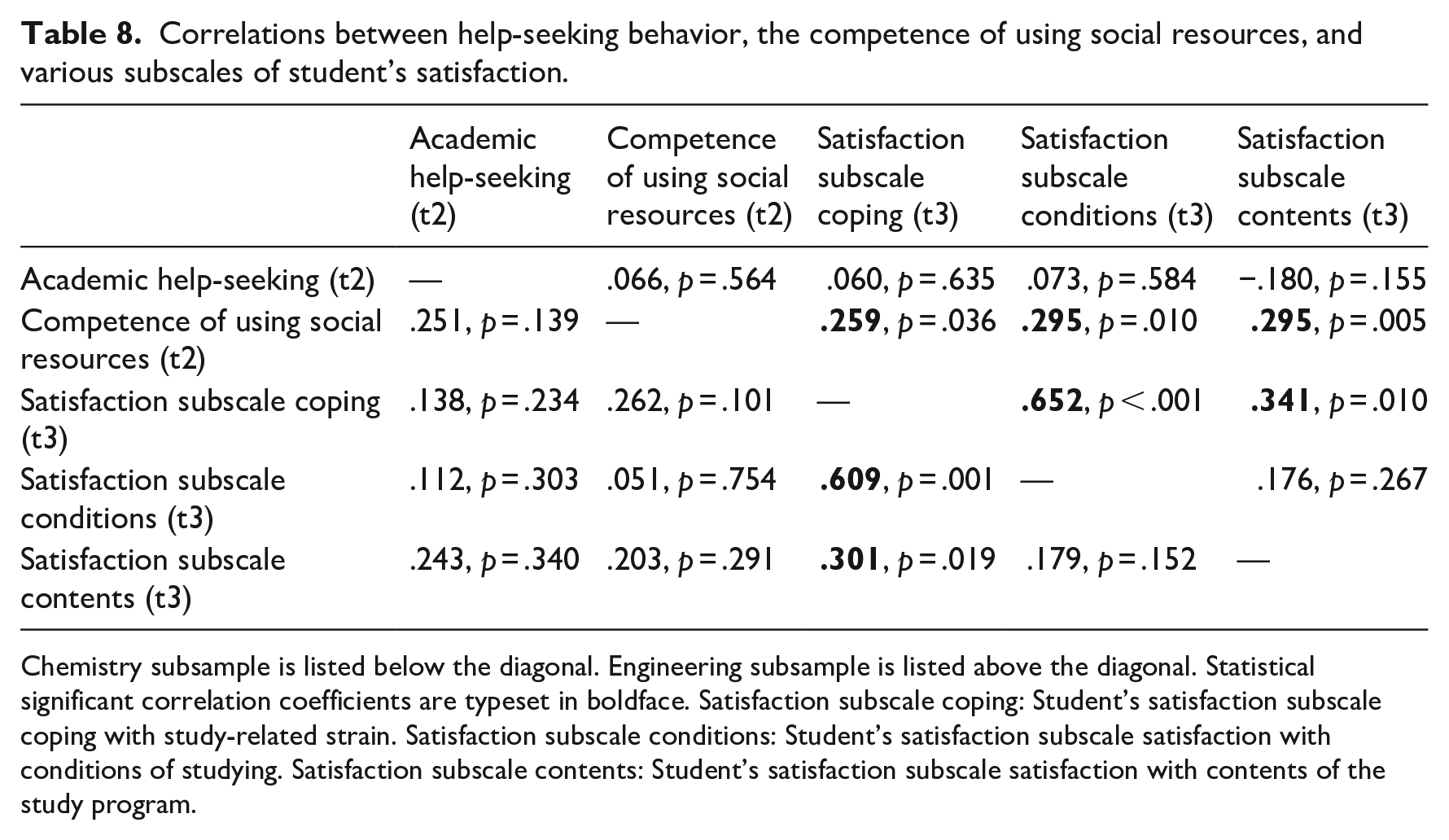

In contrast, for the engineering students the model does explain a significant amount of variance (F(3, 69) = 3.890, R² = .145, p = .013). No main effect of help-seeking behavior on students’ satisfaction was found (path b1). However, we found a main effect of the competence of using social resources on students’ satisfaction (path b2): for students with average values on both predictors, an increase of the competence score by 1 unit is associated with an increase of satisfaction by 0.041. Finally, no interaction effect on students’ average satisfaction was found (path b3). Overall, when considering students’ satisfaction as the criterion, the hypothesized associations were not found for either subsample. One exception is the association between the competence of using social resources and students’ satisfaction for the engineering subsample. A closer look at the subscales of students’ satisfaction and their bivariate associations with the competence of using social resources is provided in Table 8. The competence was not related to chemistry students’ satisfaction (below diagonal), whereas for engineering students the competence is associated with every subscale of students’ satisfaction. Thus, for engineering students, being competent in making use of social resources by applying (meta-)cognitive learning strategies correlates with students’ satisfaction in general and is not limited to the subscale of coping with study-related strain.

Correlations between help-seeking behavior, the competence of using social resources, and various subscales of student’s satisfaction.

The chemistry subsample is listed below the diagonal. The engineering subsample is listed above the diagonal. Statistically significant correlation coefficients are typeset in boldface. Satisfaction subscale coping: Students’ satisfaction subscale coping with study-related strain. Satisfaction subscale conditions: Students’ satisfaction subscale satisfaction with conditions of studying. Satisfaction subscale contents: Students’ satisfaction subscale satisfaction with contents of the study program.

Discussion

This article discusses the advantages of applying learning strategies during academic help-seeking episodes with peers. This hypothesized mechanism forms the basis for the competence of using social resources. Correspondingly, a Situational Judgment Instrument has been developed and first empirical data regarding the difficulty of its subscales are presented. Finally, the influence of academic help-seeking and the competence of using social resources on academic success measures was empirically tested. In the following, the results and their implications are discussed in detail.

Study 1: Differentiation of instrument’s subscales

With regard to RQ-1, we conducted item analyses and an analysis using signal detection measures describing in-/correct responses for learning strategies. Internal consistency was below .70 for all subscales, except organization. Low internal consistency is common among multidimensional situational judgment tests and thus retest reliability is a preferred measure (Lievens et al., 2008). Retest reliability was estimated for study 2 and found to be about .61 for the overall score, followed by the organization and the rehearsal subscales (see Table 6). A reason for low reliability could be a less sophisticated competence of first-year students, which may improve over the course of their studies, as may the reliability of the instrument. One can regard the application of learning strategies as an opportunity to gain experience and further develop this competence. However, research has found that the application of learning strategies does not necessarily increase over the semesters (Fergus, 2022; Foerst et al., 2017).

A further aspect to discuss is the set of factors influencing the subscales’ difficulty. Each learning strategy induces characteristic (meta-)cognitive processes; nevertheless, when comparing empirical results across the instrument’s subscales, some strategies are less distinct than others. The three subscales organization, elaboration, and rehearsal received the most correct responses and thus were least difficult for students to identify (see Table 2). This might be due to the fact that each of the three strategies promotes rather clearly distinguishable goals, and they are appropriate in different stages when learning new materials. For example, organization supports structuring of new material, elaboration supports understanding of associations within the material, and rehearsal supports the retrieval of already encoded knowledge. This differentiation might explain why the correct responses to these subscales were least difficult. Regarding RQ-1, those subscales scarcely separate students with low from those with high ability. In contrast, the two subscales evaluation and argumentation were solved correctly in less than 50% of cases. Both subscales show the lowest internal consistency (see Tables 3 and 6). In the following, this finding is discussed from three perspectives.

First, focusing on the content of the scenarios: Perhaps the scenarios that have the scientific method, its artifacts, and its approaches as a subject were less well understood by students, as they are still less familiar with these practices (see discussion later in Section “Outlook”).

Second, the strategies themselves could be unclear: Difficulties in conditional strategy knowledge may stem from scarce application of these strategies. Klingsieck (2018) investigated the actual application of strategies and found a similar order of ranks: most frequently applied were organization strategies, followed by rehearsal, elaboration, and evaluation strategies. This is similar to our results of participants’ correct responses (see Table 2). In the light of these results it seems reasonable that the frequency of actual application of strategies might be associated with the quality of conditional strategy knowledge. Thus, a plausible explanation is that evaluation and argumentation are not used frequently; hence students are less able to identify the situations that call for them.

A third perspective focusses less on students’ performance and more on the instrument’s design: the difficulty of evaluation and argumentation scenarios stated above is influenced by the difficulty of their distractor responses. A closer look at the strength of the distractors revealed that elaboration, evaluation, and argumentation are among the strongest distractors for each other and their respective strength is roughly homogeneous (see respective rows in Table 2). All three strategies have in common that the intended (meta-)cognitive processes aim to integrate current materials with prior knowledge. With regard to the expected cognitive processes, the elaboration option is rather general because it aims at receiving explanations. Similarly, explanations may also be a byproduct of evaluation and argumentation. This conceptual generality provides an overlap with the goals and induced processes of scenarios designed for elaboration, evaluation, and argumentation. The argument that the three strategies overlap conceptually is in line with the approach by Weinstein and colleagues (2011), who divide (meta-)cognitive learning strategies into organization, elaboration, and rehearsal strategies. Among their criteria for elaboration are active cognitive processing and adding to or modifying the material with the goal of increasing sense-making or easing recall (Weinstein et al., 2011). They also provide examples of complex elaboration strategies such as discussions and analysis with peers (Weinstein et al., 2011), which describe the evaluation and argumentation strategies of the instrument presented in this article. Thus, disentangling elaboration, evaluation, and argumentation in heterogeneous scenarios can be expected to be challenging for developers of and respondents to such instruments. 
In summary, the discrimination between those strategies may inherently require a higher competence and some experience with the scientific method as it is the scenarios’ subject.

Analyzing incorrect responses provides further insights into the instrument’s property to differentiate students’ conditional strategy knowledge. The precision measure describes correct among all selections of a strategy (see column precision in Table 5). Rehearsal and organization strategies are among the three strategies with the most correct responses (sensitivity) and at the same time they were least often selected incorrectly (precision, specificity). This high precision may be explained by taking a conceptual perspective on the induced (meta-)cognitive processes: Rehearsal and organization strategies may induce predominantly selection (and recall) processes. This distinguishes them from the processes induced by elaboration, evaluation, and argumentation strategies, which are aimed more at integration processes. The clear differentiation of the induced (meta-)cognitive processes seems a reasonable explanation for the high precision among rehearsal and organization strategies. In conclusion, first-semester students seem to have high abilities in identifying rehearsal or organization strategies. Due to similarities in the (meta-)cognitive processes of elaboration, evaluation, and argumentation strategies, correct responses were difficult and not as selective as desired. Future studies should improve their selectivity by further investigating students’ understanding of these three response options and the goals of the scenarios for these subscales.

Study 2: The competence’s influence on academic success

Regarding research question 2, we examined to what extent successful help-seeking leads to increased academic success and whether the competence of using social resources may strengthen this association (moderation effect; see Section “Results”). Contrary to our expectations, for both examined study programs, academic help-seeking behavior was associated neither with academic achievement nor with students’ satisfaction. Academic achievement was predicted neither by academic help-seeking, nor by the competence of using social resources, nor by their interaction for any of the subsamples (see Figure 2). Nevertheless, explorative bivariate correlations between the competence and achievement were found, but only for the chemistry subsample. Next, research question 2 was also addressed by examining students’ satisfaction as a criterion. It was found for both study programs that academic help-seeking does not predict students’ satisfaction. Notably, for engineering students, the competence of using social resources is associated with students’ satisfaction. When interpreting these results, the rather low internal consistency should be considered (see Table 6), which however is not untypical for SJTs (Lievens et al., 2008). Subscales’ scores are associated when re-measured after six weeks (retest reliability), except for the elaboration subscale for engineering students and the argumentation subscale for both subsamples. That said, most of the correlations are below .50 and would need to be higher to be considered good (Lievens et al., 2008; Neubauer and Hofer, 2022). Low reliability indicates that a further iteration of qualitative and quantitative research and evidence-based improvement is recommended for the instrument. Moreover, it was tested to what extent the competence of using social resources is associated with the actual application of the corresponding single learning strategies. 
This could not be shown except for the organization subscale for engineering students (see Table 7). Research has shown that there are various reasons why students do not apply the most beneficial learning strategies despite knowing about them (e.g., lack of time; Foerst et al., 2017). When strategy application was measured there were still multiple weeks until the exams, hence one could consider that at that time the students had not yet started their sophisticated learning routines.
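For readers less familiar with moderation analyses, the tested model can be sketched as an ordinary least squares regression with an interaction term, in which the coefficient of the product term represents the moderation effect. The sketch below is a generic illustration under stated assumptions: the function names are hypothetical, the data are synthetic and noise-free, and it does not reproduce the estimation procedure or the software used in Study 2.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        rest = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - rest) / aug[i][i]
    return x


def fit_moderation(help_seeking, competence, outcome):
    """OLS for outcome = b0 + b1*H + b2*C + b3*(H*C); returns [b0, b1, b2, b3]."""
    rows = [[1.0, h, c, h * c] for h, c in zip(help_seeking, competence)]
    k = 4
    # Normal equations: (X'X) b = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, outcome)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Synthetic, noise-free data with a known interaction of 0.25 (illustration only).
help_seeking = [-1.0, -1.0, 0.0, 0.0, 1.0, 1.0, 2.0, 2.0]
competence = [-1.0, 1.0, -1.0, 1.0, -1.0, 1.0, -1.0, 1.0]
outcome = [2.0 + 0.5 * h + 1.0 * c + 0.25 * h * c
           for h, c in zip(help_seeking, competence)]

b0, b1, b2, b3 = fit_moderation(help_seeking, competence, outcome)
```

A moderation effect as hypothesized would show up as a coefficient b3 that differs reliably from zero; in practice, predictors are often centered or standardized first, and inference requires standard errors that this sketch omits.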

The lack of central empirical associations implies the necessity to reflect on the hypothesized model. In the following, we discuss the operationalization of help-seeking and recommend adaptations to the items used. The results regarding both criterion variables, academic achievement and students’ satisfaction, are discussed jointly, as there were no relationships between successful academic help-seeking episodes and any tested academic success measure. Although we did not find an effect of help-seeking, a meta-analysis conducted by Richardson and colleagues (2012) estimated the influence of help-seeking strategies as r = .15, 95% CI [.08, .21]. In our study, the estimated beta coefficients for the linear regression of help-seeking predicting academic achievement and, similarly, students’ satisfaction were far smaller (see Figures 2 and 3). There is a noticeable lack of correspondence between our empirical results and those of the meta-analysis, which may be caused by differences in the measurement of academic help-seeking: Our operationalization of successful academic help-seeking included content-related (e.g., learning domain) as well as organizational (e.g., learning at university) help. The two components may not contribute equally to academic achievement. Thus, future research is advised to differentiate both types of help sought by utilizing separate items. Next, from a conceptual perspective, it is plausible that seeking help contributes to the learning process (and thus may improve success measures), but this association could not be found empirically. Hence, we conclude from this result that successfully seeking help is not sufficient to predict gains in academic success. For example, students who ask the most questions (and who may experience the most successful episodes) may have the most problems to begin with but do not necessarily ascend to become high performers in the cohort. Thus, we suggest that successful help-seeking episodes require a differentiation of qualitative aspects to estimate their contribution to students’ understanding relevant for academic success. Differentiating instrumental and executive help-seeking may be advantageous, as both behaviors may have opposite effects on academic achievement (Algharaibeh, 2020). Additionally, surveying intentions and perceptions of help-seeking behavior may provide further indicators for qualitative differences in individual help-seeking behavior (Huet et al., 2013; Karabenick, 2003). A qualitative differentiation of help-seeking behavior and considering intentions and perceptions toward help-seeking may improve the prediction of academic success measures.

In the following, we shed light on the possible origin of the competence and on possible reasons why it led to the empirical results. For engineering students, a positive association between the competence of using social resources and students’ satisfaction was found, but without involvement of help-seeking behavior (see Section “Moderation model predicting students’ satisfaction”). The competence was expected to affect help-seeking episodes; instead, it is directly associated with satisfaction. This may be due to the fact that satisfaction was measured with various subscales, among them coping with study-related strain. It can be expected that higher conditional strategy knowledge for the individual application of learning strategies overlaps with the competence of applying them with a peer. From this perspective, individual and social learning strategies may both contribute to the ability to cope with study-related strain. In exploratory analyses, bivariate associations of the competence with all subscales of students’ satisfaction were revealed for engineering students; for chemistry students, the competence was not associated with students’ satisfaction (see Table 8). These mixed findings imply that further systematic investigation is needed. For the engineering subsample, it seems plausible that the competence generalizes to students’ satisfaction, as the other subscales, study conditions and contents of the study program, are also associated with the competence (see Table 8). In conclusion, more research is necessary to empirically examine the instrument’s interrelatedness with conceptually related constructs (e.g., students’ satisfaction). Feraco and colleagues (2022) modeled expected influences of soft skills (adaptability, curiosity, initiative, leadership, perseverance, and social awareness) on the outcomes achievement and life satisfaction, mediated via experienced emotions at school and self-regulated learning. Interestingly, soft skills promote self-regulated learning, and this behavior improves academic achievement. When emotional constructs are taken into account, soft skills promote positive emotions, which positively influence self-regulated learning, and this behavior in turn improves life satisfaction and motivation. These findings illustrate that a general competency such as soft skills can improve behaviors such as self-regulated learning as well as outcomes (i.e., achievement and satisfaction), mediated by positive emotions. Similarly, the competence to make use of peers may be interrelated with further, more general constructs that explain its direct association with students’ satisfaction.

The competence of using social resources was assessed as conditional strategy knowledge in typical but hypothetical scenarios. The construct has no behavioral component; hence, it remains unclear to what extent the competence of using social resources is applied during actual help-seeking episodes. There are plausible reasons to stick to simpler approaches when seeking help: Students may assess the required mental effort for social learning strategies as too high and, as a consequence, make insufficient use of them. Reducing the effort within the learning process (e.g., seeking less elaborated help) may depend on motivational factors such as the subjective value of understanding specific content. For instance, Kirk-Johnson and colleagues (2019) found that students who apply a learning strategy that is perceived as more effortful, namely retrieval practice (self-testing after units) compared to restudying (relearning materials), interpret their higher mental load as a sign of less effective learning and are less likely to choose the more effortful strategy later on. Similarly, Foerst and colleagues (2017) report that when asked for reasons why individual learning strategies were not applied, students cite a lack of time, insufficient perceived benefit, or effortful use (among others). Additionally, students reported adapting their individual strategy use to various factors such as interest, previous knowledge, or assessment formats (García-Pérez et al., 2021). One reason why competence did not translate into success may have been a production deficit even though strategies were available, leveling out potential differences in the effectiveness of help-seeking. Hence, to point out the potential of social learning strategies, future studies should assess to what extent learning strategies were applied successfully during actual help-seeking episodes.

Outlook

The article presented and discussed the Situational Judgment Instrument’s capabilities to measure conditional strategy knowledge. Beyond that, the instrument allows students to indicate to what extent they expect to successfully produce a particular learning strategy. Thus, it is capable of assessing indicators of deficiencies in (un-)prompted production such as mediation deficiency, production deficiency, and utilization deficiency (see Supplemental Appendix B; Flavell, 1970; Miller, 1994; Winne, 1996).

For the future development of the instrument, further empirical data on students’ understanding of the scenarios as well as students’ familiarity with the application of the outlined strategies are necessary. The appropriateness of strategies varies with several factors such as the type of learning materials, students’ characteristics, and the criterion task which assesses students’ performance (Dunlosky et al., 2013). Thus, further empirical research on the type of materials and the situations in which students experience the most content-related problems is of interest. An extensive overview of learning situations and appropriate learning strategies was reported by Dresel and colleagues (2015). The scenarios that led us to conclude that some of them are difficult to understand are those that involve materials such as scientific experiment setups and journal articles. These difficulties are reflected in the low correctness of evaluation and argumentation strategies. First-semester students may have a varying amount of experience with these scenarios, as they have not yet dealt with such aspects of the scientific process. For instance, engineering students frequently report studying with old exams, reading course materials, solving practice problems, and summarizing information (Cervin-Ellqvist et al., 2021). It is likely that students better understand such familiar scenarios. It is therefore debatable to what extent science-related scenarios fit the learning situations of first-year students. From this perspective, adapting the scenarios to better represent these routines may improve the fit for first-semester students; this may consequently improve students’ understanding of the scenarios and thus the external validity of the Situational Judgment Instrument. Next, familiarity with learning strategies is an important aspect of answering the scenarios. Sinapuelas and Stacy (2015) interviewed first-year science and engineering students and classified four levels of learning approaches. For instance, level 1: gathering facts is similar to organization strategies and level 4: applying ideas (why is this true?) is similar to investigation strategies (Sinapuelas and Stacy, 2015). Higher levels of learning approaches increase performance in exams, but most students nevertheless applied lower levels of learning approaches (Sinapuelas and Stacy, 2015). In sum, future research is advised to measure the familiarity with strategies, for example based on their application.

The identification of outliers and distracted low-scorers is difficult. Very low competence scores may reflect a genuinely low level of the competence, but they may also be due to a lack of attention during the test administration. For example, low scores can be defined as scores at or below the mean minus two standard deviations. Following this rule, there were three participants in the chemistry subsample but 18 participants in the engineering subsample with a Situational Judgment Instrument competence score (measurement point 1) of five or less. Future studies with larger samples and observed test administration may provide further evidence for adequate threshold values. Moreover, yes-or-no questions asking whether the answers were provided diligently, or attention check items, may help to further improve the quality of the acquired data.
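The threshold rule described above can be written down as a short sketch. It assumes the sample standard deviation and flags scores at or below the cut-off; the function names and the toy scores are hypothetical and do not correspond to the reported subsamples.

```python
from statistics import mean, stdev


def low_score_threshold(scores, k=2.0):
    """Cut-off defined as mean minus k sample standard deviations."""
    return mean(scores) - k * stdev(scores)


def flag_low_scorers(scores, k=2.0):
    """Return the indices of participants scoring at or below the cut-off."""
    cutoff = low_score_threshold(scores, k)
    return [i for i, s in enumerate(scores) if s <= cutoff]


# Made-up competence scores for illustration only (not data from the study).
toy_scores = [12, 14, 13, 15, 11, 13, 14, 12, 13, 2]
cutoff = low_score_threshold(toy_scores)
flagged = flag_low_scorers(toy_scores)
```

Note that extreme scores lower the mean and inflate the standard deviation themselves, so a cut-off computed this way can become more lenient the more careless responders a sample contains; this is one reason why independent attention checks may be preferable.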

Beyond the presented data from first-semester students, a longitudinal description of the competence’s progression across semesters might be worthwhile. Coertjens and colleagues (2013) found an increased use of critical processing and analyzing strategies (among others) during the transition from school to higher education. In line with these findings, we suggest that over the course of their studies, students are confronted with more integrative tasks that require them to rely on their prior knowledge. The requirement for and successful application of these techniques are expected to develop the competency to apply them strategically. Future studies should survey cohorts of more advanced students about their competence in using social resources and compare test scores as well as subscale characteristics. The instrument presented may inspire researchers to further investigate contributing factors to successful help-seeking episodes and may enable practitioners to provide interventions on learning strategies more selectively.

Supplemental Material

Supplemental material, sj-docx-1-alh-10.1177_14697874231168343 for Competence in (meta-)cognitive learning strategies during help-seeking to overcome knowledge-related difficulties by Christian Schlusche, Lenka Schnaubert and Daniel Bodemer in Active Learning in Higher Education

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the German Research Foundation (Deutsche Forschungsgemeinschaft, DFG) under Grant “BO 2511/7-1”.

Ethics statement

All procedures were performed in full accordance with the ethical guidelines of the German Psychological Society (DGPs, https://www.dgps.de/die-dgps/aufgaben-und-ziele/berufsethische-richtlinien/) and the APA Ethics code with informed consent from all subjects. At the beginning of the study, participants were informed that the data of this study will be used for research purposes. The two studies reported in this article were approved by the local ethics committee (study 1: 2204PFSC6584; study 2: 2108PFSC5731).

Supplemental material

Supplemental material for this article is available online.
