Abstract

Initiating effective feedback processes is a major goal in university teaching. However, systematic investigations of structural feedback elements making instructor feedback economic, concise, motivating and beneficial for learning are still scarce. In our study, we compare two feedback modes with respect to learning gains and changes in self-efficacy in a quasi-experimental pre-post design. Participants (N = 75 first-year students) received either scoresheet or textual instructor feedback on four individual assignments during a seminar. Outcome variables were knowledge gain, change in self-efficacy and changes in metacognitive monitoring. After the semester, we observed substantial knowledge gains for both feedback groups with only small advantages for scoresheet feedback. In contrast, self-efficacy was relatively stable across the semester and was not influenced by feedback mode. Two achievement motivation measures (normative ability goals and challenge-mastery goal orientation) did not moderate the observed relationships but influenced knowledge gain and change in self-efficacy directly. Changes in metacognitive monitoring did not depend on feedback mode. Taken together, our data suggest that scoresheet and textual feedback conveying identical feedback content have comparable effects on achievement and self-evaluation measures. For university settings, scoresheets can be recommended as parsimonious feedback tools.

Keywords

Feedback is one of the most effective tools to foster successful learning (Hattie and Timperley, 2007). Contemporary views conceptualize feedback as a process or a series of processes (Henderson et al., 2019) in which learners try to understand evaluations of their performance in a specific situation. It is a major goal in university teaching to initiate effective feedback processes. However, feedback processes can have no or even detrimental effects on student learning (Forsythe and Johnson, 2017; Fyfe and Brown, 2020).

It has been found that students’ engagement with feedback in university quickly deteriorates as students become increasingly dissatisfied with feedback processes, for example, when the feedback process does not include opportunities for dialogue (Ali et al., 2018) or when it is not personalized (Ali et al., 2015). Even if instructors value feedback very highly, they often miss state-of-the-art feedback standards (Knight et al., 2018) because high-quality feedback might result in a higher workload, an unrealizable demand given the limited resources of academics (Nicol et al., 2014). That is, instructors in undergraduate courses with many students face the challenge of offering high-quality feedback within an acceptable time frame. From that perspective, we aim to investigate structural elements of feedback that make instructor feedback economic, concise, motivating and beneficial for learning.

Design factors of effective feedback: How should feedback be presented?

A critical question in the feedback process is the choice of feedback mode. Some modes of written feedback meet high quality standards and also take into account the limited resources of lecturers. For example, semi-individualized template feedback with pre-written statements might be perceived as detailed and personalized feedback and offers an interesting combination of parsimony and effectiveness (Crisostomo and Chauhan, 2019). Another approach to concise and efficient written feedback is to provide feedback using rubrics in scoresheets. Rubrics and rubric-like assessment tools seem to be a powerful instrument for learning (Brookhart, 2018). Different views have been expressed on what an appropriate rubric should look like (cf. Brookhart, 2018). As a minimal criterion, we define rubrics in the context of assessment situations as listed requirements that can be marked as fulfilled (yes/no) or rated on a scale as to the degree of their fulfilment (not fulfilled . . . completely fulfilled).

The benefits of scoresheets are often investigated in case studies, evaluated qualitatively or with control groups without any feedback (Brookhart, 2018). Experimental research relying on control groups receiving different modes of feedback is needed to assess the specific impact of scoresheets on feedback effects. Most studies investigate written feedback in naturalistic settings (e.g., Dirkx et al., 2021; Nordrum et al., 2013), in which different feedback effects could be a result of the mere combination of feedback modes. For example, teachers provide more feedback (information on learning progress; Hattie and Timperley, 2007) in comments and more feed forward (information on specific next steps in learning; Hattie and Timperley, 2007) in scoresheets if they use them concurrently for one assignment (Dirkx et al., 2021). With regard to the limited resources of university instructors, an important question is whether one feedback mode (e.g. only scoresheets) suffices for effective feedback. To date, (quasi-)experimental research on this question is still scarce (Evans, 2013).

Feedback effects: What are possible feedback outcomes?

Feedback impacts on a wide range of dimensions, including cognitive skills, motivation and self-assessment skills (e.g. Henderson et al., 2019). So far, research suggests that feedback outcomes are independent of feedback mode. For example, audio, video and text feedback seem to have comparable effects on performance (Espasa et al., 2022). Moreover, self-efficacy (i.e. one’s belief in one’s ability to succeed or accomplish specific tasks; Bandura, 1997) was not differentially affected by written versus verbal feedback (Agricola et al., 2020). However, up to now, written feedback is the predominant feedback form in higher education (Agricola et al., 2020). Therefore, we want to investigate whether different modes of written feedback also have comparable outcome effects. To this end, we focus on three outcome dimensions.

First, we consider cognitive skills by looking at learning gains. Many instructors want to positively influence students’ learning when providing feedback. Meta-analytic evidence confirms that feedback positively affects students’ learning (Wisniewski et al., 2020). Most original studies measured learning in terms of student achievement and, on average, found medium effects (d = 0.51). However, it is possible that feedback mode influences how students can capitalize on feedback. Specifically, the clarity of rubrics in a scoresheet (cf. Brookhart, 2018) might make it easy for students to identify areas in which they can improve. In contrast, in long text passages of textual feedback, important information could be overlooked. Therefore, in the current study, we inspect whether scoresheet feedback has higher effects on learning gain than textual feedback.

Second, we regard motivational factors by looking at self-efficacy. Meta-analytic evidence shows that feedback effects are somewhat lower for motivational outcomes (d = 0.33) than for cognitive outcomes. Importantly, feedback can have unintended as well as intended outcomes on motivation (Fyfe and Brown, 2020; van de Ridder et al., 2015). For example, negative feedback framing using only a subtle phrase already reduces self-efficacy (van de Ridder et al., 2015). Possibly, the evaluation with percentages and (un-)ticked boxes in scoresheets highlights errors and omissions. In contrast, longer text passages with formulated assessments could more likely convey that the instructor is invested in the student’s learning. This in turn might affect motivational outcomes such as self-efficacy. Therefore, the current study investigates whether textual feedback has more positive effects on self-efficacy than scoresheet feedback.

Third, regarding self-assessment skills we look at the metacognitive skills of students to assess what knowledge they have already acquired and where they perceive knowledge gaps. This monitoring skill is an essential component to improve performance within self-regulated learning (Kostons et al., 2012) because inaccurate self-evaluations can reduce long-term retention (Dunlosky and Rawson, 2012). Metacognitive training that includes both performance and metacognitive feedback improves not only students’ monitoring accuracy but also performance in a final exam (Händel et al., 2020). However, so far, studies have not disentangled effects of performance feedback and metacognitive feedback on metacognitive skills. Therefore, in the current study, we want to investigate whether performance feedback alone influences metacognitive skills. In addition, the clarity of scoresheets could also offer an advantage over text feedback in terms of identifying knowledge gaps. Thus, we additionally investigate whether the different modes of providing performance feedback are differentially effective.

Feedback moderators: What influences feedback utilization?

Because of the multidimensionality of the feedback process, the utilization of feedback depends on motivational factors (Narciss, 2008). For example, openness to feedback is directly connected to the feedback situation. Openness to external feedback regards how sensitive students are to feedback, how they pay attention to feedback, consider feedback as important and respond to threats implied by feedback (King, 2016). After immediate verbal feedback, students who are very sensitive to feedback exhibited lower performance scores than their peers due to a higher tendency to form negative attributions (King, 2016). This shows that openness to feedback can directly influence how feedback is perceived. Therefore, we investigate openness to feedback as a moderating variable in the feedback process.

Feedback utilization could also be influenced by a student’s achievement motivation. Achievement goals emerge as learning and performance orientation towards a task and are strong predictors of academic performance (Grant and Dweck, 2003; Huang, 2012). Performance orientation is identified by outcome goals (wanting to perform really well), ability goals (demonstrate high abilities), normative ability goals (confirm superiority) and normative outcome goals (outperform others; Grant and Dweck, 2003). In contrast, learning orientation is identified by learning goals (acquiring new skills) and challenge-mastery goals (seeking challenges; Grant and Dweck, 2003). Learning goals are associated with higher intrinsic motivation and greater academic improvement over time (Rawsthorne and Elliot, 1999; Utman, 1997). This effect is mediated by a tendency towards deeper processing of learning material (Grant and Dweck, 2003). Since learning goals are particularly important when facing highly challenging situations with different materials (Richardson et al., 2012), it is likely that they influence performance in first-year students. In addition, learning orientation influences learning indirectly by relating to feedback-seeking behaviour in two ways: Students with higher challenge-mastery goal orientation, first, generally prefer inferring feedback information from interactions with others (monitoring), and second, show stronger engagement in active feedback seeking by inquiry (Leenknecht et al., 2019). For these reasons, we investigate achievement motivation as a second potential moderator in the feedback process.

Research questions

Thus far, research about written feedback modes offers some promising strategies such as semi-individualized textual feedback (Crisostomo and Chauhan, 2019) and rubric-based scoresheet feedback (Brookhart, 2018) that combine parsimony with effectiveness. However, it remains unclear whether different feedback modes are comparable in their effectiveness. We want to explicitly test whether effects of scoresheet feedback on learning and self-efficacy significantly differ from those of standardized textual feedback. Therefore, we want to assess how learning gain depends on feedback mode (RQ1). Furthermore, we want to investigate whether change in self-efficacy depends on feedback mode (RQ2). With regard to the complexity of the feedback process, we also examine if openness to feedback and achievement motivation have moderating effects on both outcomes (RQ3). Exploratorily, we will look into metacognitive calibration and test to what extent our feedback manipulation influences monitoring accuracy (RQ4).

Method

Sample

The study was pre-registered via OSF (see https://osf.io/a56yh; anonymized dataset and data analysis available at https://osf.io/38rwv/). We conducted the study between October 2019 and January 2020 at a German university, at which first-year students choose either developmental psychology or general psychology as their major field of study. Students were assigned to an obligatory seminar (four parallel seminars per major field of study) with respect to their thematic and scheduling priorities. Students attending developmental psychology seminars (N = 80) worked on assignments for partial course credit and filled out our questionnaires as a monitoring tool. Students were asked on a voluntary basis to give consent for their data to be used in this study without further compensation. All 80 students gave their informed consent. Five participants were excluded from subsequent analyses due to missing posttest data (n = 4) or irregular answer patterns (n = 1). The final sample consisted of 75 students (M = 20.48 years, SD = 2.79, 61 women, 14 men, none non-binary).

Design

We applied a quasi-experimental pre-post design with feedback mode as the independent variable. Participants in two courses received scoresheet feedback, and participants in the other two courses received textual feedback with standardized formulations. As dependent variables, content knowledge, self-efficacy and four metacognitive self-assessment variables were measured at the beginning and the end of the semester. In addition, in the pretest questionnaire, we measured potential moderator variables (openness to feedback and achievement motivation).

Procedure

In the first session of the seminar, students were informed about the study aim and were asked, for experimental reasons, not to share their feedback with each other but to use it as a private learning tool. After that, students signed an informed consent form, which was stored separately from the study material. Subsequently, students filled out the pretest questionnaire.

For the focus topics of the study, students received an individual assignment on a scientific text in each of four sessions (overall two textbook chapters and two empirical articles). For each assignment, students had to answer two questions on the corresponding text (one retrieval task and one elaborative task; see Appendix A for all tasks). In the seminar session before an upcoming assignment was due, the instructor explained the assignment tasks. After that, students could download tasks and texts from the Moodle eLearning system. Students had to hand in their assignment two workdays before the topic was covered in the seminar. They received individual feedback via Moodle on the evening before the next session. Assignment tasks were discussed at the beginning of the session and students were encouraged to ask questions about their assignments.

In week 10, students filled out the posttest questionnaire. One week later, we debriefed students and showed them preliminary results. Students could request individual feedback on their test scores.

Manipulation of the independent variable: The feedback sheet

The authors rated pseudonymized versions of students’ assignments and prepared individual feedback for each student. To ensure consistent rating across our two groups, raters filled out a digitalized scoresheet. For the textual feedback group, this scoresheet was automatically transferred into a text by using macros. See Appendices B and C for sample feedback. Each feedback consisted of four parts including the same content modules in both groups.

Part A of the feedback contained information about the correctness of answers. For retrieval tasks, responses were rated with respect to correctness and completeness, for example, whether a definition was correct and complete. In elaborative tasks, such as the development of a timeline, it was assessed whether facts were present, arranged in the correct order and correctly assigned to the corresponding age. At the end of this section, students received a summarizing evaluation. The scoresheet group received the number of points reached (e.g. 8/10 aspects) and an evaluation on a percentage basis (e.g. 80% of the contents were correct). If applicable, potential pre-defined bonus points were denoted. The textual feedback group received a textual evaluation of the proportion of correct content (e.g. predominantly fulfilled). Textual evaluation was classified as not fulfilled (below 10%), predominantly not fulfilled (10–24%), fulfilled to a small proportion (25–49%), half fulfilled (50–74%), predominantly fulfilled (75–99%), completely fulfilled (100%) or fulfilled beyond expectation (100% plus additional bonus points).
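The mapping from the percentage of correct content to the verbal categories of the textual feedback can be sketched as follows. This is only an illustration, not the macro used in the study: the function name `textual_evaluation` and the `bonus_points` parameter are hypothetical, and treating the lower bound of each band as a cut-off for non-integer percentages is an assumption.

```python
def textual_evaluation(percent_correct: float, bonus_points: float = 0.0) -> str:
    """Return the verbal band for a given percentage of correct content.

    Hypothetical helper; the band limits follow the description of Part A.
    """
    if not 0 <= percent_correct <= 100:
        raise ValueError("percent_correct must lie between 0 and 100")

    # 100% is split into two categories depending on bonus points.
    if percent_correct == 100:
        if bonus_points > 0:
            return "fulfilled beyond expectation"
        return "completely fulfilled"

    # (lower bound in percent, label), checked from the highest band downwards.
    # Assumption: values between integer limits (e.g. 24.5) fall into the lower band.
    bands = [
        (75, "predominantly fulfilled"),
        (50, "half fulfilled"),
        (25, "fulfilled to a small proportion"),
        (10, "predominantly not fulfilled"),
        (0, "not fulfilled"),
    ]
    for lower_bound, label in bands:
        if percent_correct >= lower_bound:
            return label
```

For the example given in the text, 8 of 10 aspects (80%) would be reported as "predominantly fulfilled" in the textual feedback group.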

Part B of the feedback contained an evaluation of basic formal elements (e.g. suitable typeface, punctuality of submission). All criteria were rated as very good, ok or expandable, respectively, and were designed as a contrast to the rather strict first section.

In part C, structure and readability were rated in terms of sticking to the task, readability and clearness of the produced text, use of paragraphs and academic language. For some tasks, unique task requirements were added (e.g. sequence in reporting results from descriptive to inferential statistics). Criteria were rated as very good, ok or expandable.

Part D on key skills contained feed-forward elements. This section comprised recommendations regarding formal features (e.g. plan time ahead for punctual hand-in) and tips relevant to the assignment task (e.g. search the text for verbal descriptions of tables or graphs). Both feedback modes ended with a general thank you statement and encouragement for the next assignments.

Measures

Dependent variables

Content knowledge was measured with a self-developed test consisting of 20 items covering the topics of the four assignments. Of these items, 16 (four per topic) were constructed in multiple-choice format (one single right answer out of four options), and 4 (one per topic) were constructed in a free-answer format (maximum points per item: two points, scoring in half-point steps). The test comprised a mixture of questions regarding reproduction and knowledge application. Due to differences in assignment demands, the ratio of application to reproduction questions varied from 2:3 to 1:4. All free-format questions were rated independently by the two authors. Inter-rater reliability for the free-format questions was acceptable to good (Kendall’s τb: 0.65 ≤ τb ≤ 0.83). Inconsistencies were resolved by joint judgment in all instances. For each person, a sum score for content knowledge and a change score for knowledge gain (post minus pre) were computed for further analyses.
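As a minimal sketch of the scoring described above, the sum score and the change score could be computed as follows. The function names are hypothetical, and the assumption that each multiple-choice item counts one point (giving a maximum of 16 + 4 × 2 = 24 points) is ours; the item responses in the usage note are invented for illustration only.

```python
def content_knowledge_score(mc_correct, free_points):
    """Sum score for one test administration.

    mc_correct:  16 booleans, one per multiple-choice item
                 (assumption: one point per correct item).
    free_points: 4 ratings between 0 and 2 in half-point steps.
    """
    if len(mc_correct) != 16 or len(free_points) != 4:
        raise ValueError("expected 16 multiple-choice and 4 free-answer items")
    for points in free_points:
        # Valid ratings are 0, 0.5, 1, 1.5 and 2.
        if not 0 <= points <= 2 or (points * 2) % 1 != 0:
            raise ValueError("free-answer points must be 0 to 2 in half-point steps")
    return sum(1 for answer in mc_correct if answer) + sum(free_points)


def knowledge_gain(pre_score, post_score):
    """Change score as defined in the text: post minus pre."""
    return post_score - pre_score
```

For a hypothetical student with 6 correct multiple-choice items and 1.5 free-answer points at pretest and 10 correct items and 4 free-answer points at posttest, the sum scores would be 7.5 and 14, giving a knowledge gain of 6.5 points.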

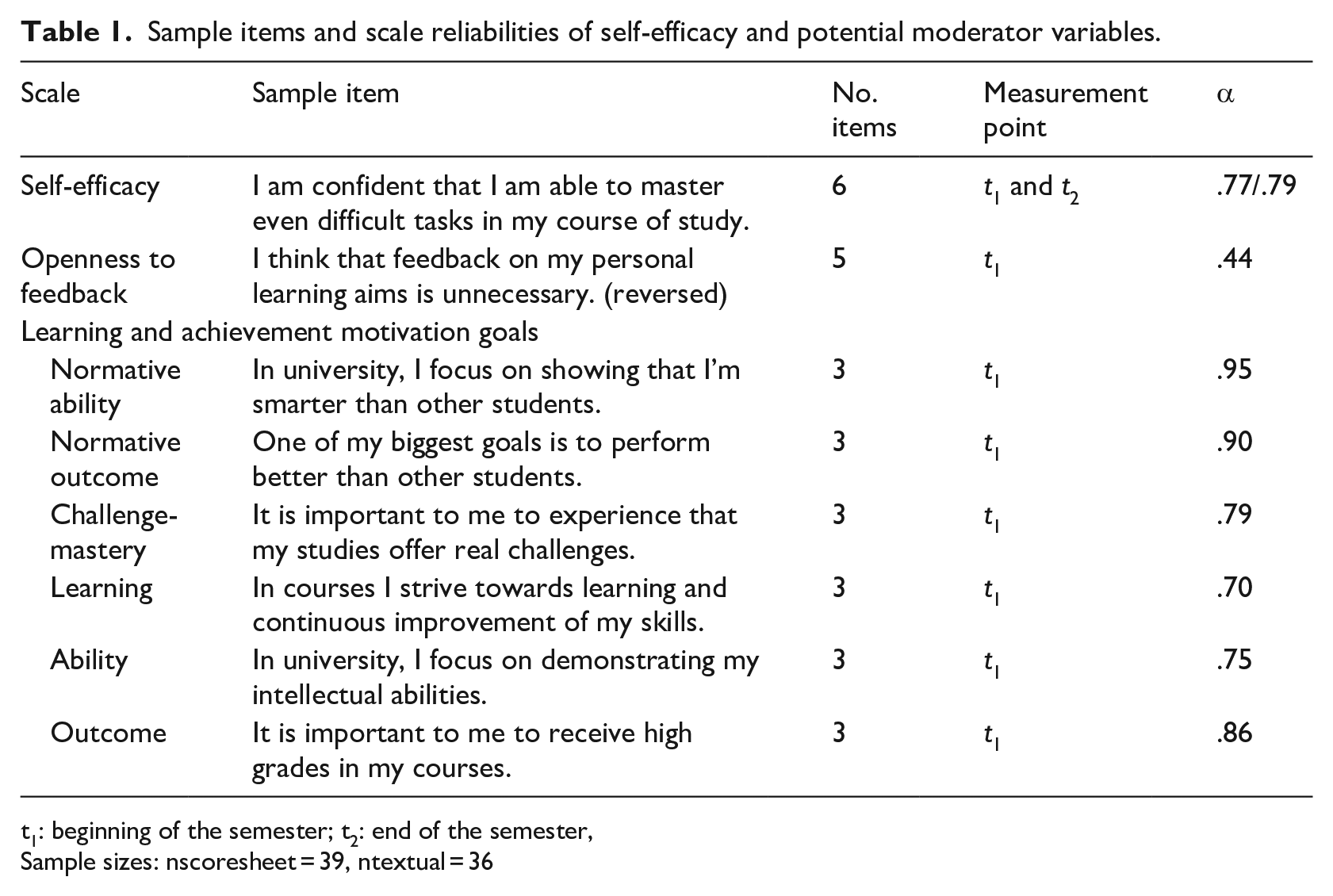

To measure academic self-efficacy, we used an adaptation of the self-efficacy scale by Schwarzer and Jerusalem (1999). We changed some item wordings to fit the university context. As in the original test, academic self-efficacy was measured on a four-point Likert-type scale. Reliabilities are depicted in Table 1. For each person, a mean score and a change score (post minus pre) were computed for further analyses.

Table 1. Sample items and scale reliabilities of self-efficacy and potential moderator variables.

t1: beginning of the semester; t2: end of the semester.

Sample sizes: nscoresheet = 39, ntextual = 36.

Metacognitive self-assessment was measured by asking students for each multiple-choice item in the knowledge test whether they thought their response was correct or not. From these responses, we calculated the metacognitive calibration variables bias, accuracy, sensitivity and specificity (Händel et al., 2020). Bias is the signed difference between actual performance and rating of expected correctness, averaged over the 16 test items. Scores can range from −1 to 1, with a score close to zero indicating a realistic judgment. A negative score indicates underconfidence and a positive score indicates overconfidence (Händel et al., 2020; Schraw, 2009). Accuracy is a measure of judgment precision, since it accumulates the number of wrong judgments (overconfident and underconfident judgments) to a theoretical maximum of 1. Sensitivity represents the relative frequency of accurately detected correct answers among all correct answers. Specificity represents the relative frequency of accurately detected incorrect answers among all incorrect answers. Both relative measures have the advantage of taking into account that each student has solved a given number of items correctly or incorrectly. Therefore, they give additional diagnostic information about how good a student is at predicting their own correct and incorrect responses (Händel et al., 2020).
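The four calibration variables can be illustrated with a short sketch computed from item-wise correctness and item-wise judgments. The function name and dictionary keys are hypothetical. Two assumptions are made: bias is computed as judgment minus performance, so that positive values indicate overconfidence as stated above, and the accuracy-related quantity is returned as the plain proportion of wrong judgments, because the text does not specify whether the reported accuracy score is this proportion or its complement.

```python
from typing import Sequence


def calibration_measures(correct: Sequence[bool], judged_correct: Sequence[bool]) -> dict:
    """Item-based calibration measures for one student (illustrative sketch).

    correct:        whether each item was actually answered correctly
    judged_correct: whether the student judged the answer to be correct
    """
    if len(correct) != len(judged_correct) or not correct:
        raise ValueError("both sequences must be non-empty and of equal length")

    n_items = len(correct)
    n_correct = sum(correct)
    n_incorrect = n_items - n_correct
    pairs = list(zip(correct, judged_correct))

    hits = sum(1 for c, j in pairs if c and j)  # correct answers judged as correct
    correct_rejections = sum(1 for c, j in pairs if not c and not j)  # errors judged as errors
    misjudged = sum(1 for c, j in pairs if c != j)  # over- plus underconfident judgments

    return {
        # Assumption: judgment minus performance, positive = overconfidence.
        "bias": (sum(judged_correct) - n_correct) / n_items,
        # Proportion of wrong judgments, ranging from 0 to 1.
        "misjudged_proportion": misjudged / n_items,
        # Undefined (None) if a student has no correct or no incorrect answers.
        "sensitivity": hits / n_correct if n_correct else None,
        "specificity": correct_rejections / n_incorrect if n_incorrect else None,
    }
```

Note that bias can be zero even when half of the judgments are wrong, because overconfident and underconfident judgments cancel out; this is why the unsigned and relative measures carry additional diagnostic information.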

Moderating variables

As potential moderators, openness to feedback (Meijer et al., 2013) and achievement motivation (German version of the Achievement Goal Inventory by Sudmann et al., 2014) were measured at the beginning of the semester (see Table 1 for sample items). Due to the unreliability of the openness to feedback scale, we dropped this variable as a potential moderator. For the Achievement Goal Inventory, mean scores of each subscale were computed for further analyses.

Data analysis

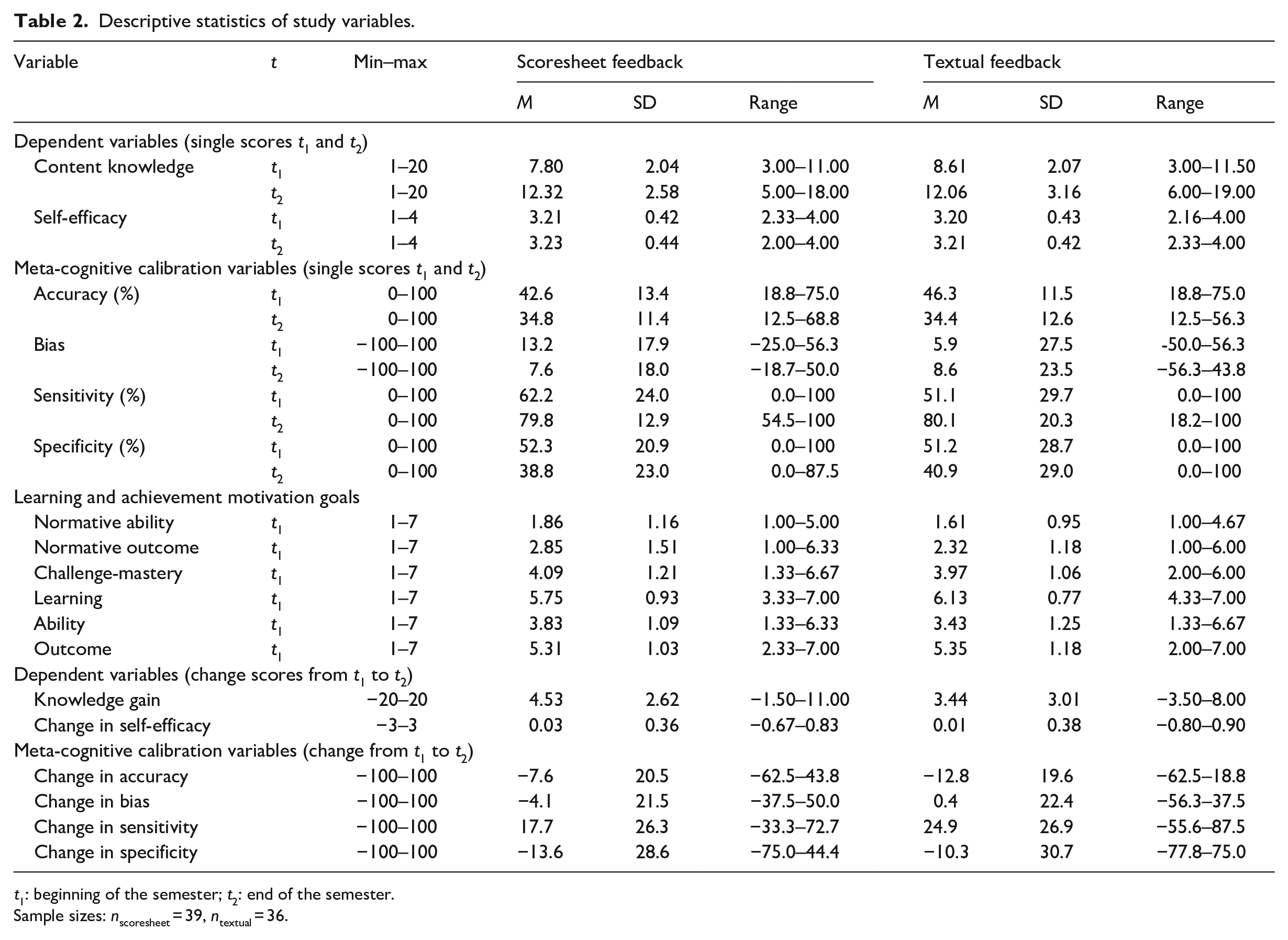

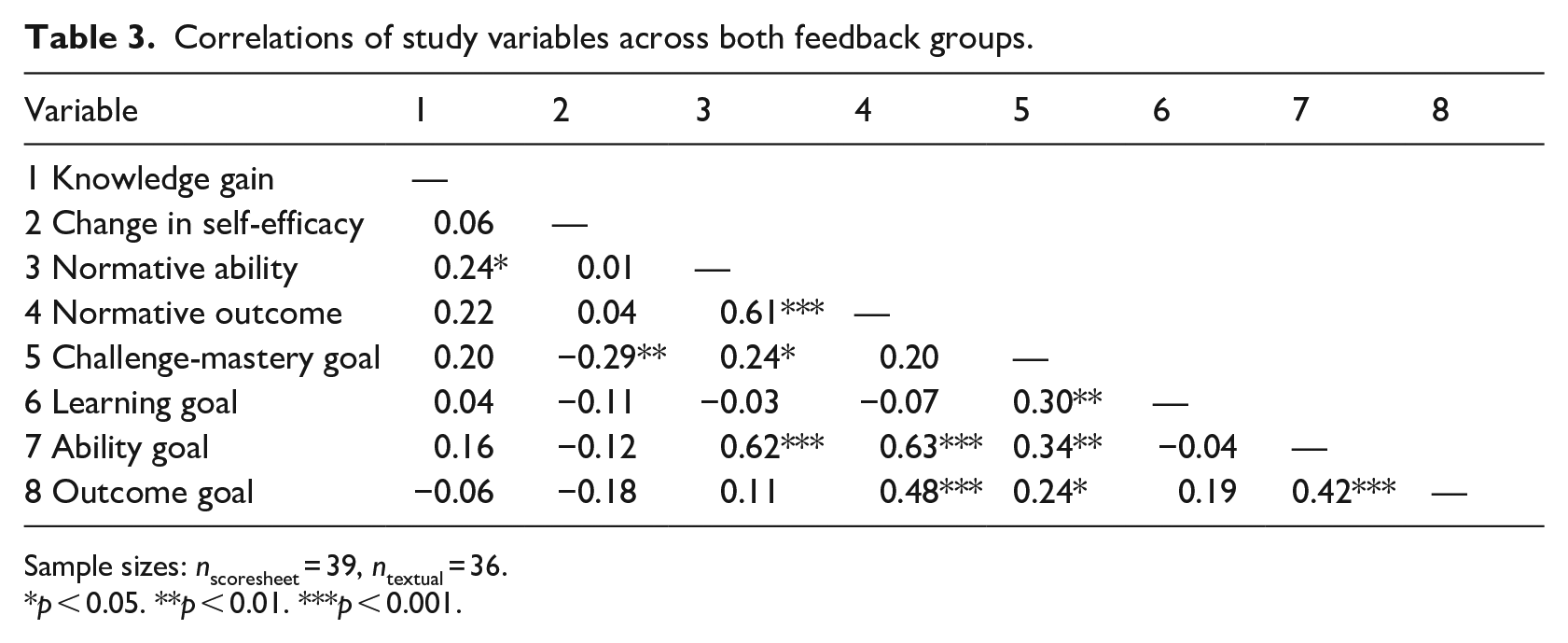

To assess whether feedback mode had effects on knowledge gain (RQ1) and on self-efficacy changes (RQ2), separate univariate, repeated-measures ANOVAs were conducted with the pre-post scores. To check for potential moderators, we assessed whether the achievement motivation subscales correlated with knowledge gain and the self-efficacy change score. Normative ability substantially correlated with knowledge gain (r = 0.24), whereas challenge-mastery substantially correlated with self-efficacy changes (r = 0.29). Therefore, normative ability was entered as a covariate in the ANOVA for content knowledge and challenge-mastery goal was entered as a predictor in the ANOVA for self-efficacy (RQ3). We assessed main effects for time (meaning significant changes on our dependent variables during the semester) and interaction effects of time and experimental condition (meaning different changes of both experimental groups). Significant interactions were plotted using the online simple slope analysis tool by Preacher et al. (2006). For the fourth research question, we assessed effects of feedback mode on changes in metacognitive calibration in univariate, repeated-measures ANOVAs.
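To make the logic of the time × condition interaction concrete: in a design with two groups and two measurement points and no covariate, the interaction F value equals the squared t value of a pooled-variance t-test on the change scores. The following sketch illustrates this simplified case only. It is not the analysis script of the study, it ignores the covariates described above, and the scores are invented toy data.

```python
from math import sqrt
from statistics import mean, variance


def change_scores(pre, post):
    """Post minus pre for each participant."""
    return [after - before for before, after in zip(pre, post)]


def pooled_t(x, y):
    """Independent-samples t statistic with pooled variance."""
    n_x, n_y = len(x), len(y)
    pooled_var = ((n_x - 1) * variance(x) + (n_y - 1) * variance(y)) / (n_x + n_y - 2)
    return (mean(x) - mean(y)) / sqrt(pooled_var * (1 / n_x + 1 / n_y))


# Hypothetical toy data (not taken from the study).
scoresheet_change = change_scores(pre=[8, 10, 9, 11], post=[13, 14, 12, 16])
textual_change = change_scores(pre=[9, 8, 10, 12], post=[12, 10, 14, 15])

t_value = pooled_t(scoresheet_change, textual_change)
# In the covariate-free 2 x 2 case, this equals the group x time interaction F.
interaction_f = t_value ** 2
```

Once a covariate such as normative ability is added, this equivalence no longer holds exactly, which is one reason the full models are estimated as repeated-measures ANOVAs.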

Results

Descriptive statistics of pretest and posttest measures are displayed in Table 2. Correlations show that throughout the semester knowledge gain was higher if students reported higher normative ability goals (Table 3). In contrast, self-efficacy decreased if students reported higher challenge-mastery goals.

Table 2. Descriptive statistics of study variables.

t1: beginning of the semester; t2: end of the semester.

Sample sizes: nscoresheet = 39, ntextual = 36.

Table 3. Correlations of study variables across both feedback groups.

Sample sizes: nscoresheet = 39, ntextual = 36.

*p < 0.05. **p < 0.01. ***p < 0.001.

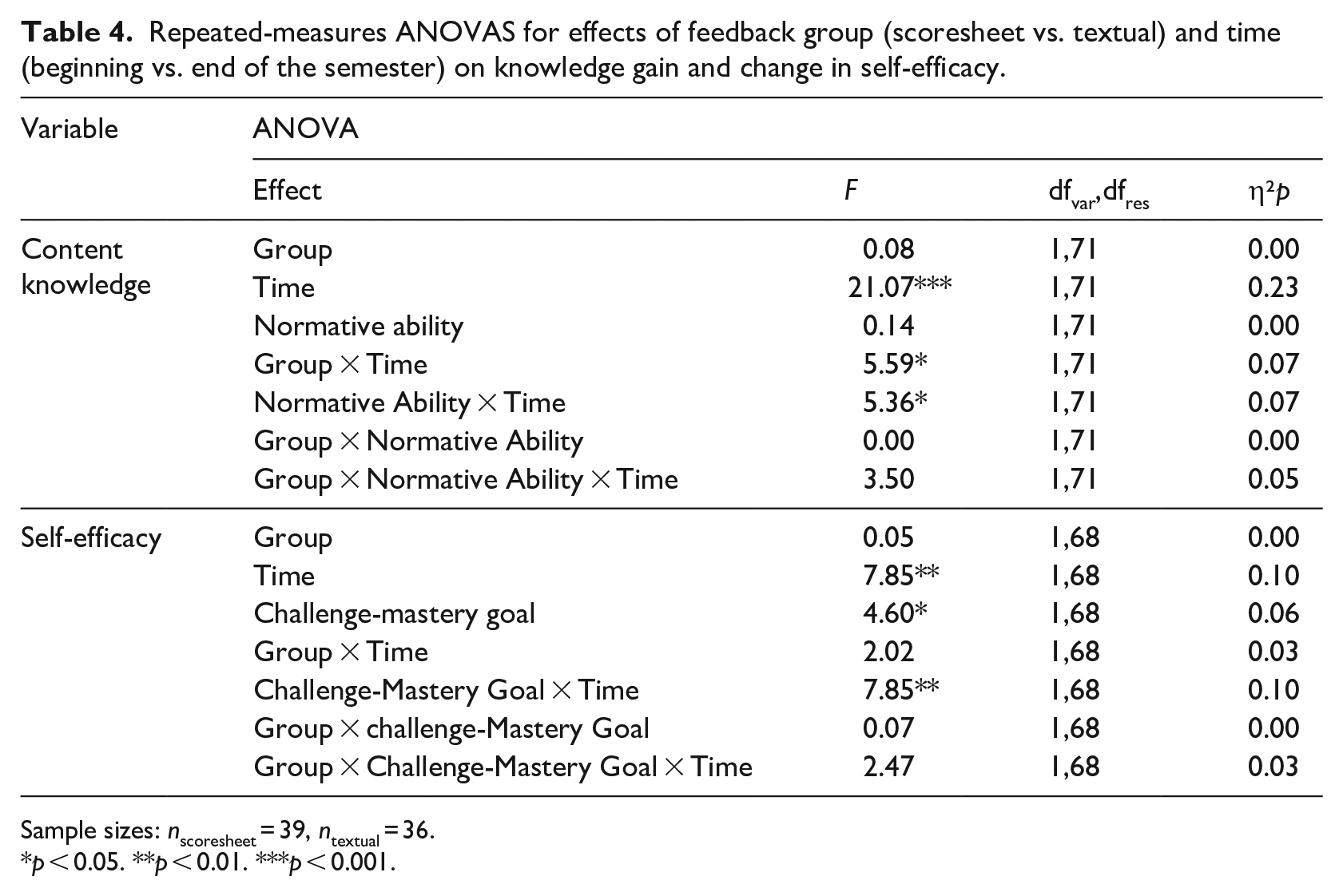

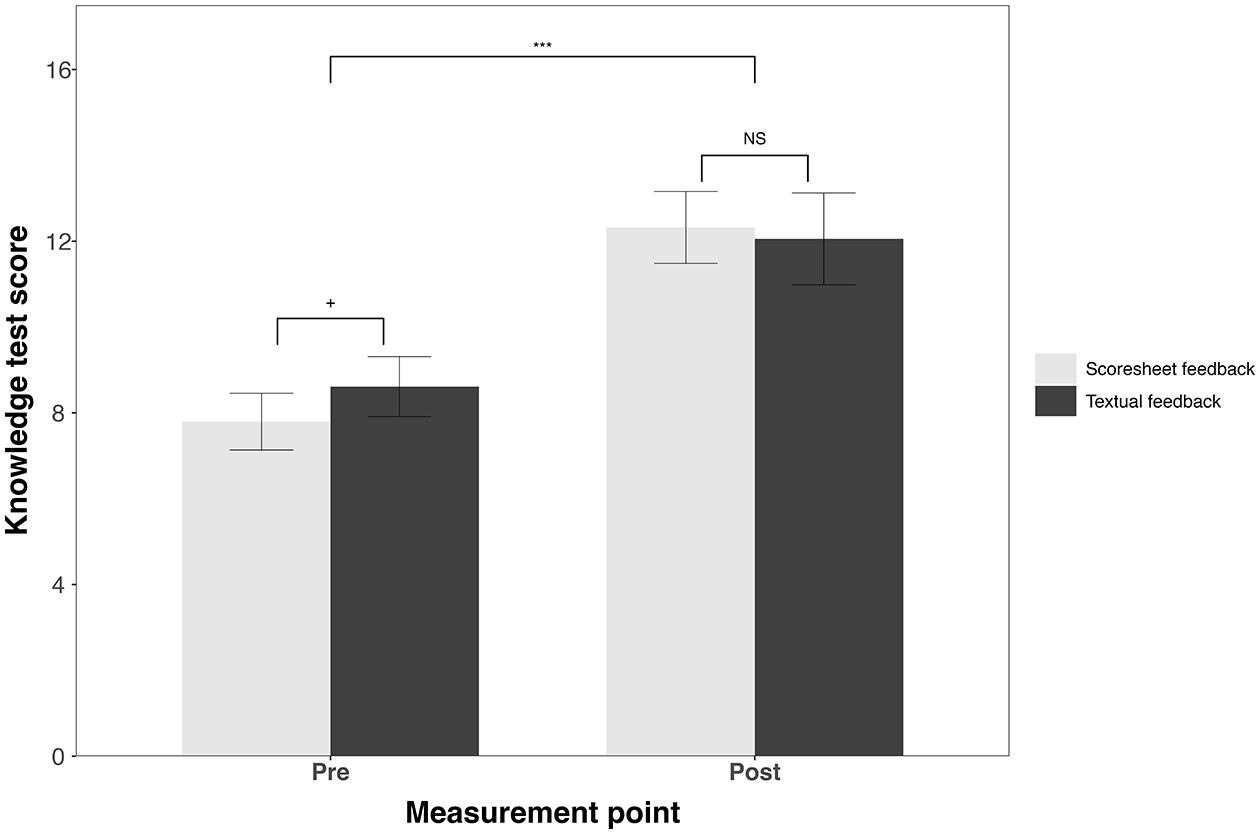

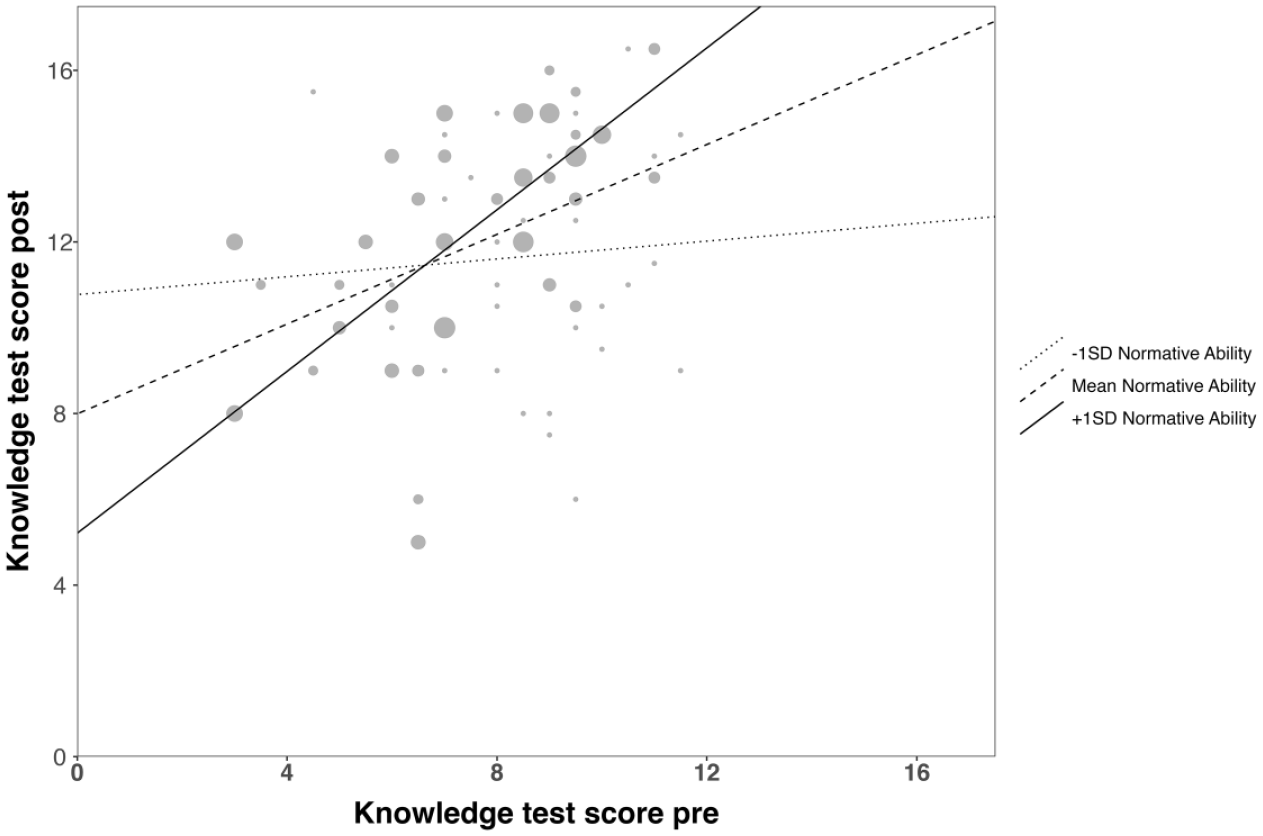

Results of our repeated-measures ANOVAs on the two main outcomes are depicted in Table 4. For content knowledge, we observed a significant main effect for time, indicating that students answered on average four more questions correctly at the end of the semester than at the beginning of the semester. Knowledge gain of the scoresheet group (Mchange = 4.53, SD = 2.62) was significantly higher than knowledge gain of the textual feedback group (Mchange = 3.44, SD = 3.01, see Figure 1). In addition, a significant normative ability × time interaction was found. Inspection of the interaction plot revealed that students with higher normative ability goals had slightly above-average knowledge gains (β = 0.94, p < 0.05), whereas students with lower normative ability goals showed no significant knowledge gain (β = 0.10, p = 0.71, see Figure 2). The impact of normative ability goals did not differ for the two feedback conditions.
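To give a sense of the size of the group difference in knowledge gain, a standardized mean difference can be derived from the reported change-score means, standard deviations and group sizes. The sketch below uses Cohen's d with a pooled standard deviation. This value is not reported in the original analyses, and the choice of the pooled-SD variant is an assumption.

```python
from math import sqrt


def cohens_d(mean_1, sd_1, n_1, mean_2, sd_2, n_2):
    """Cohen's d for two independent groups, using the pooled standard deviation."""
    pooled_var = ((n_1 - 1) * sd_1 ** 2 + (n_2 - 1) * sd_2 ** 2) / (n_1 + n_2 - 2)
    return (mean_1 - mean_2) / sqrt(pooled_var)


# Reported change scores: scoresheet group vs. textual feedback group.
d_knowledge_gain = cohens_d(4.53, 2.62, 39, 3.44, 3.01, 36)
```

With the values reported above, this yields a d of roughly 0.39 in favour of the scoresheet group.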

Table 4. Repeated-measures ANOVAs for effects of feedback group (scoresheet vs. textual) and time (beginning vs. end of the semester) on knowledge gain and change in self-efficacy.

Sample sizes: nscoresheet = 39, ntextual = 36.

*p < 0.05. **p < 0.01. ***p < 0.001.

Figure 1. Group (scoresheet vs. textual) × Time (beginning vs. end of the semester) interaction on knowledge.

Figure 2. Normative Ability × Time (beginning vs. end of the semester) interaction on knowledge.

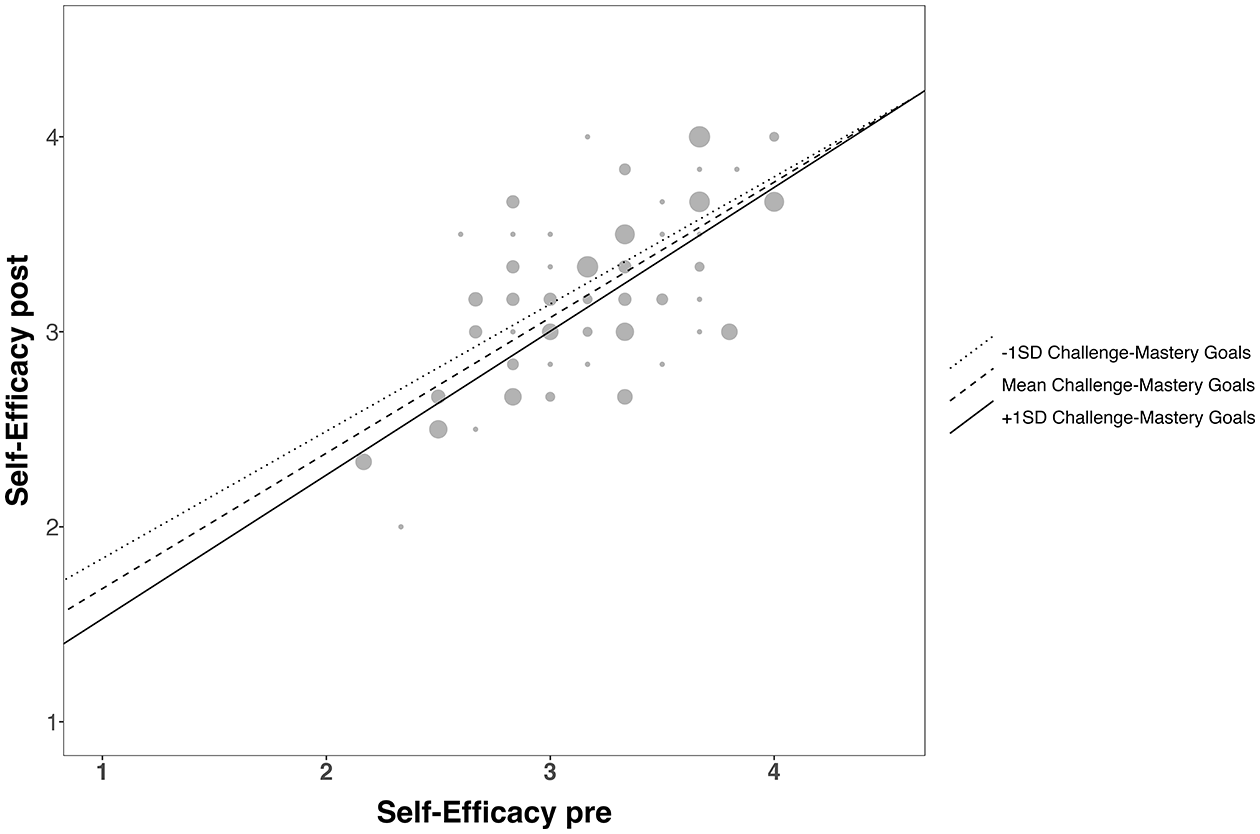

For self-efficacy, we observed a significant time effect representing a small general decline in academic self-efficacy, although raw scores did not indicate such a decline (Table 4). That is, our control for feedback mode and challenge-mastery goal orientation uncovered systematic changes in self-efficacy throughout the semester. The time effect was qualified by a significant interaction with challenge-mastery goal. Together with the observed negative correlation of challenge-mastery goal with change in self-efficacy (r = −0.29), this indicates that the generally small decline is slightly more pronounced in students with higher challenge-mastery goals (β = 0.73, p < 0.05) than in students with lower challenge-mastery goals (β = 0.65, p < 0.05, see Figure 3). That is, challenge-mastery goal orientation moderates how self-efficacy changes in university first-year students throughout the semester.

Figure 3. Challenge-Mastery × Time (beginning vs. end of the semester) interaction on self-efficacy.

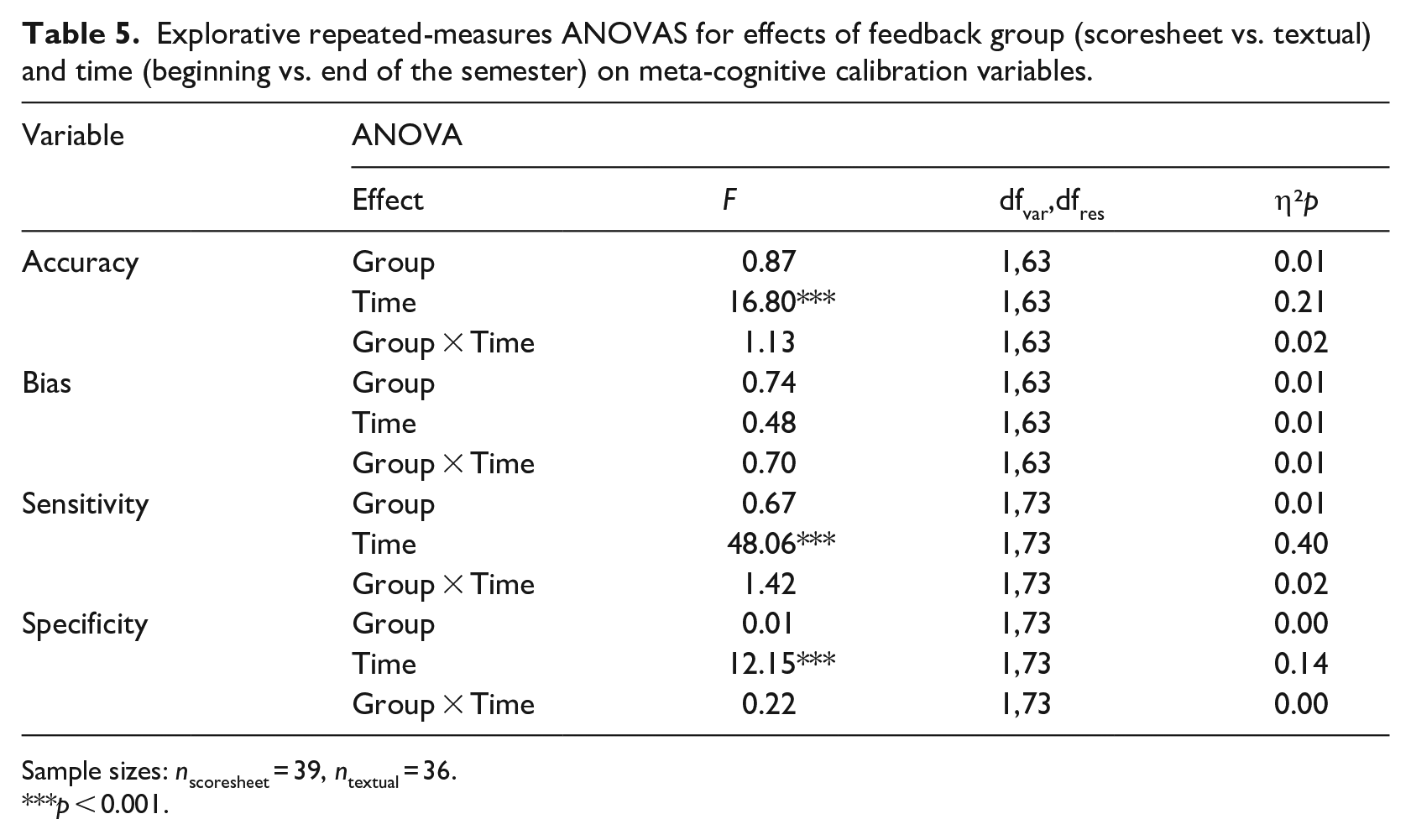

Four exploratory repeated-measures ANOVAs were conducted with the metacognitive calibration variables (Table 5). We observed neither group differences nor time changes in the degree of over- or underestimation (bias). Accuracy and sensitivity in judging the correctness of one’s own responses in the knowledge test increased throughout the semester, while specificity decreased. Thus, at the end of the semester, students predicted correctly solved items better but incorrectly solved items worse than at the beginning of the semester.

Table 5. Exploratory repeated-measures ANOVAs for effects of feedback group (scoresheet vs. textual) and time (beginning vs. end of the semester) on metacognitive calibration variables.

Sample sizes: nscoresheet = 39, ntextual = 36.

***p < 0.001.

Discussion

Our study goal was to investigate structural elements of feedback that make instructor feedback economic, concise, motivating and beneficial for learning. Specifically, we aimed at disentangling influences of feedback mode and feedback content on learning and self-efficacy. The results extend previous findings in two important ways. First, when systematically manipulating feedback mode while excluding confounding influences of feedback content, feedback mode has only minimal influences on feedback effects. Second, achievement motivation variables do not moderate the relationship between feedback mode and feedback effects (i.e. students benefit from different feedback modes independently of their achievement motivation). To provide feedback that is not only motivating and beneficial for learning but also economic and timely, scoresheet feedback is a suitable feedback mode fulfilling these criteria.

Feedback effects on learning

As expected, both the scoresheet and the textual feedback group showed substantial knowledge gain throughout the semester. The results show medium to high effects exceeding typical effects of repeated testing (Rowland, 2014). Additionally, knowledge gain was somewhat higher in the scoresheet group compared to the textual feedback group. Presumably, students in the scoresheet group capitalized on the higher clarity and transparency of rubrics (cf. Brookhart, 2018) compared to the textual group, which received longer text passages in their feedback. This implies that clarity and transparency of feedback as provided in the scoresheet group might enhance learning.

We assume that no matter which feedback mode is chosen, instructors need to think about the criteria they will use to evaluate coursework, ideally by formulating levels of expectations. This can serve as a direct basis for the creation of the scoresheet, so that hardly any additional effort is to be expected in the creation of the scoresheet compared to the textual feedback. In addition, scoresheets can help instructors not to forget important points in their feedback. This contributes to the objectivity and reliability of the feedback process. Considering that actually giving feedback via a scoresheet is more economic than writing textual feedback, our result underlines our recommendation for scoresheet-based feedback in higher education.

Feedback effects on self-efficacy

We did not observe differential influences of feedback mode on changes in self-efficacy. That is, in the current study, neither feedback mode caused changes in self-efficacy any more than the other due to, for example, a stronger promotion of students’ sense of relatedness to the instructor (cf. Ajjawi et al., 2022). This result extends previous findings insofar as we now have evidence that neither textual feedback as compared to verbal feedback (Agricola et al., 2020) nor scoresheet feedback as compared to textual feedback is detrimental to self-efficacy. Overall, we found self-efficacy to be relatively stable throughout the semester, an observation in line with former studies (Agricola et al., 2020; Duijnhouwer et al., 2010). In the current study, the high stability of self-efficacy might be due to situational factors. Performance in the knowledge test was not used for grading and, in addition, students did not receive formal grades on other occasions in the seminar. Thus, this study took place in a low-stakes environment, not threatening students’ self-efficacy by a graded knowledge test or an upcoming examination. Moreover, the posttest took place before students received any formal grading in the university context at all. The limited information students could have obtained about their own abilities until posttest apart from our positively framed feedback might be a further reason for the high stability of self-efficacy throughout the semester.

Feedback moderators

We identified two achievement motivation variables influencing feedback effects in university first-year students: the degree to which students want to show that they can outperform their peers (normative ability goals) and the degree to which students want to experience challenges in their studies (challenge-mastery goals). Both variables were independent predictors of feedback effects but not moderators in the association between feedback mode and feedback effects.

Students with higher normative ability goals showed more knowledge gain, regardless of the feedback condition. This might indicate that students who aimed at demonstrating their intellectual superiority to their peers interpreted the experimental context as a performance situation. The feeling of being challenged could have resulted in improved memorability for the pretest items, active search for solutions within the seminar context and/or more active usage of their respective feedback sheets (cf. Leenknecht et al., 2019).

In addition, more mastery-oriented students experienced a higher decline in self-efficacy throughout the semester independent of feedback mode. Possibly, more mastery-oriented students interpreted difficulties with answering knowledge questions as a failure in pursuing personal learning goals, causing a decline in reported self-efficacy in the posttest. Moreover, more mastery-oriented students might have achieved a more realistic view of their own abilities throughout the semester, for example, by capitalizing more on feedback situations. Such an adaptation to the university context could protect mastery-oriented students from over-optimism, an adaptation strategy viewed as questionable (Haynes et al., 2006). However, these results have to be interpreted cautiously, since the rank order of students regarding their self-efficacy was comparably stable throughout the semester (r = 0.63).

Metacognitive calibration

Students improved on two of four metacognitive calibration variables throughout their first semester, indicating that their perception of whether they were able to give a correct answer to an item was more accurate at the end than at the beginning of the semester. Strikingly, students’ monitoring accuracy improved more strongly than is typically observed after metacognitive training (Händel et al., 2020). However, students’ ability to correctly detect wrong answers (specificity) declined. In comparison to the other metacognitive calibration variables, specificity seems to be the variable that can be influenced least (Händel et al., 2020), a characteristic that might explain the observed result. Although other learning situations within the semester might have influenced this result, this finding suggests that both feedback modes could help students improve their self-assessment of their abilities.

Limitations and future research

One limitation of our study is that by pseudonymizing our feedback we might have involuntarily eliminated the personal character of feedback, which is an important quality dimension (Ajjawi et al., 2022; Nicol, 2010). Informal comments from students at the end of the semester describing both feedback modes as ‘rather mechanical’ point in that direction. In addition, students rarely used the offered opportunity to ask questions about their feedback in person. To support students’ engagement with written feedback, it might be helpful in future studies to include more semi-personalized statements (cf. Crisostomo and Chauhan, 2019) or to address students by name (Ajjawi et al., 2022). Nonetheless, our substantial effects on knowledge gain and metacognitive monitoring imply that these pitfalls of standardized feedback were not detrimental to students’ learning.

A second limitation of our study is that we compared only two feedback modes. It is possible that other feedback modes such as video feedback or face-to-face feedback influence learning gains and self-efficacy differently than the written modes we investigated. Yet, as we set out to quasi-experimentally test feedback that could be not only motivating and beneficial for learning but also economic and concise, we chose these two feedback modes because they are more feasible regarding technical demands and limited resources of university instructors. Nonetheless, an interesting direction for future research is to investigate whether other feedback modes influence feedback effects differently than the two written modes we used.

A third limitation of our study follows directly from the previous point. To disentangle feedback effects from learning gain that would have occurred without feedback, we would have needed a no-feedback group. We deliberately decided against this control condition for two reasons. First, it was important for us to give all participants feedback that, based on previous research, would most likely be helpful to them. Even though our students did not write an exam at the end of the course, they should still have comparable learning opportunities in their first semester at university. Second, in a no-feedback group, we would have created a rather unnatural situation, as not getting any feedback on course work is not standard in higher education. Such a clear disadvantage for the control group could have had a negative impact on motivation. Thus, the usefulness of the control group and, at the same time, the validity of the study would have been questionable.

A fourth limitation of our study is that we tested in a low-stakes environment, meaning that students expected no formal grading on course contents. This might have influenced the motivation with which students engaged with course topics. Therefore, it should be tested whether the findings of the current study can be replicated in a high-stakes situation, in which performance directly relates to grading.

Practical implications

The current study shows that different feedback modes are positively associated with knowledge gain in a university context, with a slight advantage for scoresheet feedback. This means that instructors relying on scoresheet feedback can justifiably expect that their feedback will be effective. However, the advantage of scoresheet feedback over textual feedback was only small, indicating that form follows function. That is, content is more important than the “superficial” feedback mode, at least for written feedback. Taking into account that teachers seem to prefer different feedback modes for different feedback content (cf. Dirkx et al., 2021), our findings imply that instructors can choose a feedback mode they find economic and suitable with regard to the feedback content they want to provide. Taken together, instructors and students can benefit from both scoresheet feedback and textual feedback on assignments. Our results support a free choice of feedback mode based on the economic requirements of university instructors.

Supplemental Material

sj-docx-1-alh-10.1177_14697874221131970 – Supplemental material for The impact of feedback mode on learning gain and self-efficacy: A quasi-experimental study

Supplemental material, sj-docx-1-alh-10.1177_14697874221131970 for The impact of feedback mode on learning gain and self-efficacy: A quasi-experimental study by Christine Johannes and Astrid Haase in Active Learning in Higher Education

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
