Abstract

Written feedback plays a key role in the acquisition of academic writing skills. Ideally, this feedback should include feed up, feed back and feed forward. However, written feedback alone is not enough to improve writing skills; students often struggle to interpret the feedback received and enhance their writing skills accordingly. Several studies have suggested that dialogue about written feedback is essential to promote the development of these skills. Yet, evidence of the effectiveness of face-to-face dialogue remains inconclusive. To bring this evidence into focus, we conducted a literature review of face-to-face dialogue intervention studies. The emphasis was on key elements of the interventions and outcomes in terms of student perceptions and other indicators, and the methodological characteristics of the studies. Subsequently, we analysed each selected intervention for the presence of feed-up, feed-back and feed-forward information. Most interventions used all three feedback elements – notably assessment criteria, student feedback, and revision, respectively – and combined lecturer–student as well as student–student dialogue. Students generally perceived the interventions as beneficial; they appreciated criteria and exemplars because they clarified what was expected of them and how they would be assessed. With regard to student outcomes, most interventions positively affected performance. The literature review suggests that feedback dialogue shows promise as an intervention to improve academic writing skills, but also calls for future research into why and under which specific conditions face-to-face dialogue is effective.

Acquisition of academic writing skills

Students need academic skills to be able to carry out academic tasks and to be prepared for lifelong learning. One of the key academic skills is communication, including writing skills, such as essay or report writing, and oral skills, such as presentations (Qualifications and Curriculum Authority (QCA), 2000; Wingate, 2006). According to Sultan (2013), academic writing, at least in contemporary Western society, is a distinct style of writing used by those in academia and research communities that is noted for its detached objectivity, its use of critical analysis and its presentation of well-structured, clear arguments based on evidence and reason. (p. 139)

Hence, academic writing is an essential means of communication (Lillis and Turner, 2001; Murray, 2001; Sultan, 2013; Zhu, 2004). Examples of academic writing products can range from essays, bibliographies and theses up to scientific papers (Ganobcsik-Williams, 2004). However, acquiring academic writing skills is complicated for students in higher education (Carless, 2006; Elander et al., 2006).

The literature describes various approaches to academic writing instruction (Lea and Street, 2006; Wingate and Tribble, 2012). Many approaches address teaching academic writing skills outside the specific subject content, in extracurricular ‘study skills’ courses. These generic or bolt-on approaches assume that common features in academic writing can be taught separately (Etherington, 2008). However, other studies on effective academic writing methods show that enhancing student learning in this manner is ineffective (Ganobcsik-Williams, 2004; Tribble and Wingate, 2013; Wingate, 2006, 2012). The main argument is that learning to study well at university cannot be separated from subject content and the process of learning. In addition, many students begin their studies with little or no knowledge of the principles underpinning discipline-dependent academic discourse regarding academic writing. These principles are rarely explicitly documented by subject lecturers, who often assume students understand what is required of them (Elton, 2010; Hunter and Tse, 2013). Because learning to write well is difficult, university students need support with academic writing (Wingate, 2006, 2012, 2014). Developing disciplinary writing requires an understanding of a distinct discourse on subject knowledge and tacit writing (Elton, 2010; Hunter and Tse, 2013; Wingate, 2006). Another argument against separate writing courses is that rising numbers of students are accessing higher education and their heterogeneous educational backgrounds require other ways to teach complex academic writing skills (Boscolo et al., 2007; Lillis and Turner, 2001; Tribble and Wingate, 2013; Wingate, 2006). Therefore, researchers are considering effective approaches that will enhance learning academic writing skills and also serve lifelong development. There is a need to introduce student input into embedded subject-specific discourse and instruction, known as the built-in approach, in contrast to generic and often extracurricular teaching (Hunter and Tse, 2013; Lillis, 2001; Wingate, 2006; Wingate et al., 2011).

Academic writing support has already been studied extensively. Research on academic writing support ranges across, for example, the use of exemplars or worked examples (Carless and Chan, 2017; To and Carless, 2016; Yucel et al., 2014), the use of assessment criteria (Elander, 2002; Elander et al., 2006), the implementation of training or instruction (Taras, 2001, 2003), the use of different modes of feedback provision (McCarthy, 2017; Morris and Chikwa, 2016), the role of feedback in revision of writing products (Jonsson, 2012), the role of self and/or peer assessment (Taras, 2001, 2003), and the importance of the writing process itself (Cloutier, 2016). Each research direction contributes to improved insight into academic writing skills development. However, one of the most powerful single influences on achievement is feedback (Hattie and Timperley, 2007).

The role of feedback in development of academic writing skills

Carless and Boud (2018: 2) define feedback as ‘a process through which learners make sense of information from various sources and use it to enhance their work or learning strategies’. Various studies have established the perceived value of feedback by lecturers and/or peers for students in the development of academic writing skills (Carless and Boud, 2018; Chalmers et al., 2017; Mulder et al., 2014; Ruegg, 2015; Sritrakarn, 2018). As feedback plays a powerful role in learning, it is an essential tool for improving academic writing practice (Carver, 2017; Schartel, 2012; Thurlings et al., 2013). To stimulate students to take responsibility in their own learning process and to become self-regulated learners, as indicated by Nicol and Macfarlane-Dick (2006), the uptake of feedback should be enabled in the development of so-called student feedback literacy (Carless and Boud, 2018). Carless and Boud (2018) define student feedback literacy as ‘the understandings, capacities and dispositions needed to make sense of information and use it to enhance work or learning strategies’ (p. 2). They formulate four interrelated features of student feedback literacy: appreciating feedback, making judgements, managing affect and taking action. Appreciating feedback refers to the student’s recognition of the value of feedback and understanding their active role in the feedback process (Bunce et al., 2017; McLean et al., 2015). Making judgements denotes the student’s need to develop the competence to make decisions about the quality of work (Boud et al., 2013, 2015; Tai et al., 2017). Managing affect deals with the student’s control of affective responses when receiving feedback (Pitt and Norton, 2017; To, 2016) and, finally, taking action refers to the student engaging actively in making sense of feedback information and using it in subsequent work, thereby closing a feedback loop (Boud and Molloy, 2013; Price et al., 2011; Robinson et al., 2013; Shute, 2008).

Both lecturers and students consider written feedback instrumental in successfully acquiring academic writing skills (Carless et al., 2011; Price et al., 2010; Rae and Cochrane, 2008). The provision and receipt of written feedback have been suggested to enhance this learning process (Baker, 2016; Huisman et al., 2017; Zhang et al., 2017). Yet, written feedback alone is not strong enough to effect learning improvements because students do not always apply the feedback they receive (Carless et al., 2011; Duncan, 2007; Jonsson, 2012; Pokorny and Pickford, 2010). Jonsson (2012) offers two explanations for this: lecturers’ written feedback often lacks clarity and students lack strategies for productive use of feedback. These explanations are consistent with Hattie and Timperley’s (2007) theory, which states that to enhance student learning effectively, feedback must be useful, of high quality and contain feed-up, feed-back and feed-forward information. By ‘feed-up information’, they mean making explicit what is expected from the learner, for instance, by providing assessment criteria; ‘feed-back information’ aims to inform students about their current performance, by specifying what is done well and what needs improvement; and ‘feed-forward information’ directs the learner as to how to proceed and improve. Black and Wiliam (2009) add to this discourse by arguing that feedback requires dialogue between student and lecturer and/or with student peers to be effective. The aim of this dialogue is to improve the students’ understanding of the feedback and help them move forward (Black and Wiliam, 2009; Hattie and Timperley, 2007). Peer-to-peer dialogue is considered particularly helpful because peers speak each other’s language, and they can motivate and help each other to move forward (Bloxham and Campbell, 2010; Bloxham and West, 2007; Cloutier, 2016; Elton, 2010; Nicol, 2010; Rae and Cochrane, 2008; Zhu and Carless, 2018).

What remains unclear, however, is whether students actually find face-to-face dialogue useful in improving their academic writing skills and, if so, why. Neither is it clear whether other outcomes, such as the quality of academic writing products, improve with the introduction of dialogue. There is therefore a need to further explore the following:

Which feedback elements (feed up, feed back and feed forward) do face-to-face dialogue interventions use and who are the participants in such interventions?

What are the outcomes of interventions in terms of student perceptions and other indicators and how can these be explained?

What are the main methodological characteristics of studies analysing the effectiveness of face-to-face dialogue interventions?

Methods

Search strategy

In April 2017, we searched the following online databases: Web of Science, EMBASE, ERIC, CINAHL, PsycINFO and Google Scholar. At first, we searched on ‘feed up’, ‘feed back’ and ‘feed forward’, but this strategy did not produce enough suitable articles so we added the term ‘feedback’. To minimize the chance of missing relevant articles, the scope was broad and included the following string of keywords and Boolean operators: ‘dialogue OR discussion OR conversation’ AND ‘feedback’ AND ‘writing’.
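The structure of the search string described above can be sketched in a few lines of Python. This is only an illustration of how the three synonyms are grouped with OR before the remaining terms are required with AND; the helper `build_query` is hypothetical, and the exact query syntax accepted by each database is not given in the text and may differ.

```python
# Hypothetical helper: illustrates the Boolean structure only.
# Each database's real query syntax is an assumption not covered here.
def build_query(synonym_terms, required_terms):
    """Group the synonyms with OR, then require every further term with AND."""
    synonym_group = "(" + " OR ".join(synonym_terms) + ")"
    return " AND ".join([synonym_group, *required_terms])


query = build_query(
    ["dialogue", "discussion", "conversation"],  # any one of these may match
    ["feedback", "writing"],                     # both of these must match
)
print(query)
```

Grouping the synonyms first keeps the search broad on the dialogue concept while still requiring both ‘feedback’ and ‘writing’ in every hit.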

Inclusion and exclusion criteria

The electronic literature search was limited to English full-text studies published since 1990. Only articles that met the following inclusion criteria were selected: peer-reviewed, empirical studies with a particular focus on academic writing, published in the field of academic education, including all disciplines that discussed interventions employing face-to-face feedback dialogue. We excluded literature reviews and case studies, studies that did not focus on academic writing or studies that only addressed the online, digital or ICT aspects of the main topics.

Data extraction

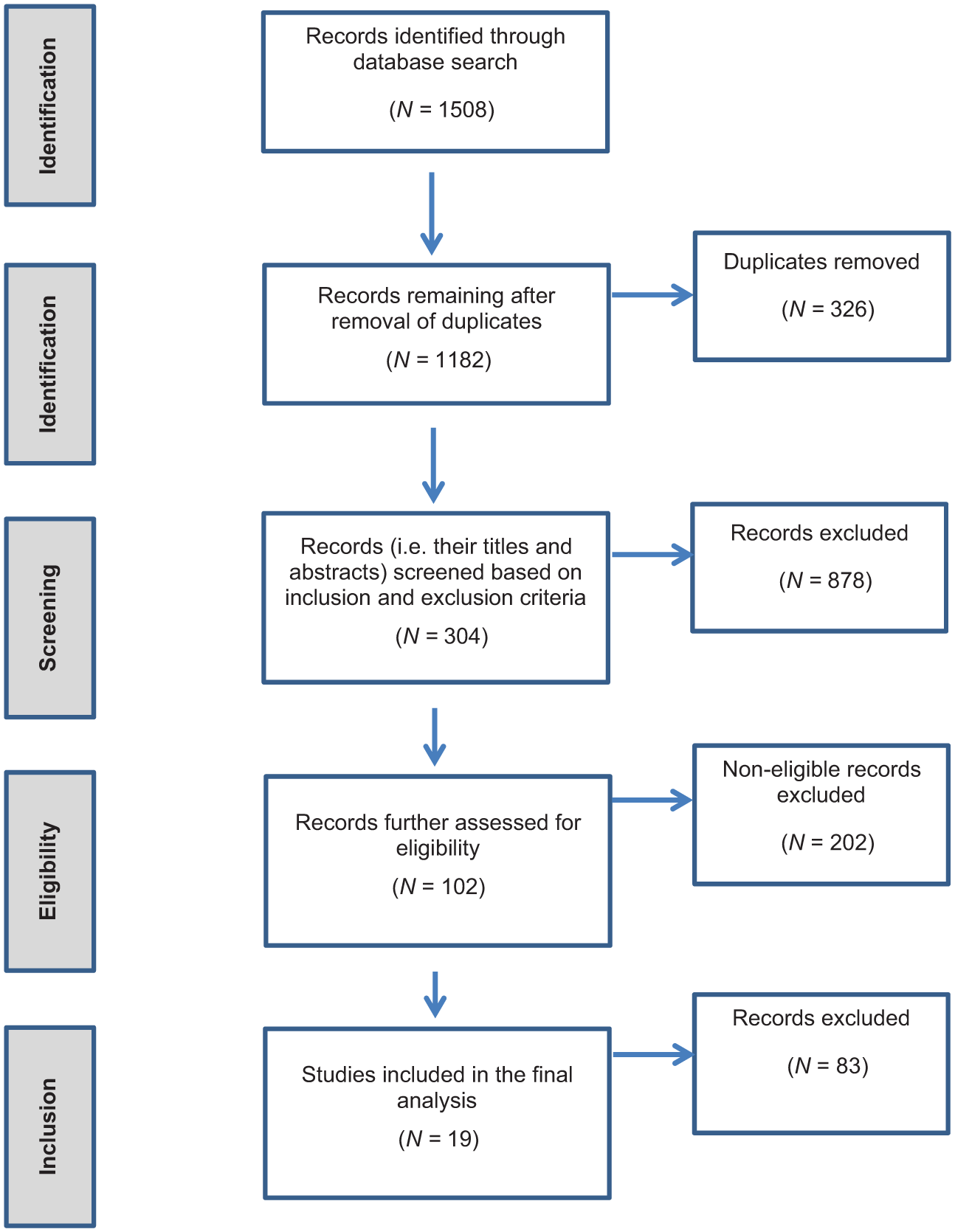

The first author performed the search, yielding 1508 records. After removal of duplicates, the titles and abstracts of the remaining records (N = 1182) were screened on the inclusion and exclusion criteria. The resulting records (N = 304) contained the topics ‘dialogue’, ‘feedback’ and ‘writing’. Further eligibility was subsequently assessed by reading the full articles on this list. After this phase, 102 articles remained for consideration. Of those, only articles that discussed a feedback intervention involving ‘face-to-face dialogue’ before submission of an academic writing assignment were included. As a result, the final review was based on 19 studies (Figure 1).

Figure 1. Flow chart depicting the process of study selection.
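The selection process can also be tallied stage by stage. The sketch below uses only the counts reported in the text; the stage labels are paraphrases, and the derived numbers of excluded records are simple differences between consecutive stages rather than figures stated by the authors.

```python
# Counts come from the text; the stage labels are paraphrased assumptions.
stages = [
    ("records identified", 1508),
    ("after removal of duplicates", 1182),
    ("after title and abstract screening", 304),
    ("after full-text eligibility assessment", 102),
    ("included: face-to-face dialogue before submission", 19),
]


def exclusions(stages):
    """Return (stage label, number of records dropped on reaching that stage)."""
    return [
        (label, previous_count - count)
        for (_, previous_count), (label, count) in zip(stages, stages[1:])
    ]


dropped = exclusions(stages)
for label, n in dropped:
    print(f"{label}: {n} records excluded")
```

Summing the differences should return the gap between the records identified and the studies finally included, which gives a quick consistency check on the reported counts.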

Data analysis

We scrutinized each intervention for the presence of feed-up, feed-back and feed-forward information (Black and Wiliam, 2009; Hattie and Timperley, 2007; Jonsson, 2012; Nicol and Macfarlane-Dick, 2006; Price et al., 2010; Rae and Cochrane, 2008). For the purpose of this review, we considered educational strategies such as assessment criteria, exemplars, worked examples and training (e.g. instructions or workshops) as expressions of feed-up information, written lecturer feedback and written peer review/assessments as feed-back information, and instructions to revise draft products as feed-forward information. Second, we checked which and how many participants were involved in the dialogue (student–student, lecturer–student or a combination of both). Since the studies did not describe the content of the face-to-face dialogues, we did not categorize them in terms of feed-up, feed-back and/or feed-forward. Third, we operationalized intervention outcomes in terms of students’ perceptions of the intervention, their marks and text/dialogue analysis. Finally, in assessing the effectiveness of each intervention, we took into account the methodological characteristics of each study, including their study design, data sources and data collection methods (Creswell, 2014).
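The categorization described above can be expressed as a simple lookup. The following is a minimal sketch, assuming the strategy labels are written exactly as in the dictionary; the mapping mirrors the categories named in the text, while the function name and the example intervention are hypothetical and do not represent any study in the review.

```python
# Mapping of educational strategies to feedback elements, as described in the text.
# The exact label strings are an assumption made for this illustration.
FEEDBACK_ELEMENT = {
    "assessment criteria": "feed up",
    "exemplars": "feed up",
    "worked examples": "feed up",
    "training": "feed up",
    "written lecturer feedback": "feed back",
    "written peer review/assessment": "feed back",
    "instruction to revise draft": "feed forward",
}

ALL_ELEMENTS = {"feed up", "feed back", "feed forward"}


def code_intervention(strategies):
    """Return the set of feedback elements present in one intervention."""
    return {FEEDBACK_ELEMENT[strategy] for strategy in strategies}


# Hypothetical intervention, invented for illustration only.
example = code_intervention(
    ["assessment criteria", "written peer review/assessment", "instruction to revise draft"]
)
has_all_three = example == ALL_ELEMENTS
print(sorted(example), has_all_three)
```

A fixed lookup of this kind makes the coding reproducible, although it cannot capture strategies that plausibly serve more than one feedback function.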

Results

Feedback elements used in the interventions and the participants in the dialogue

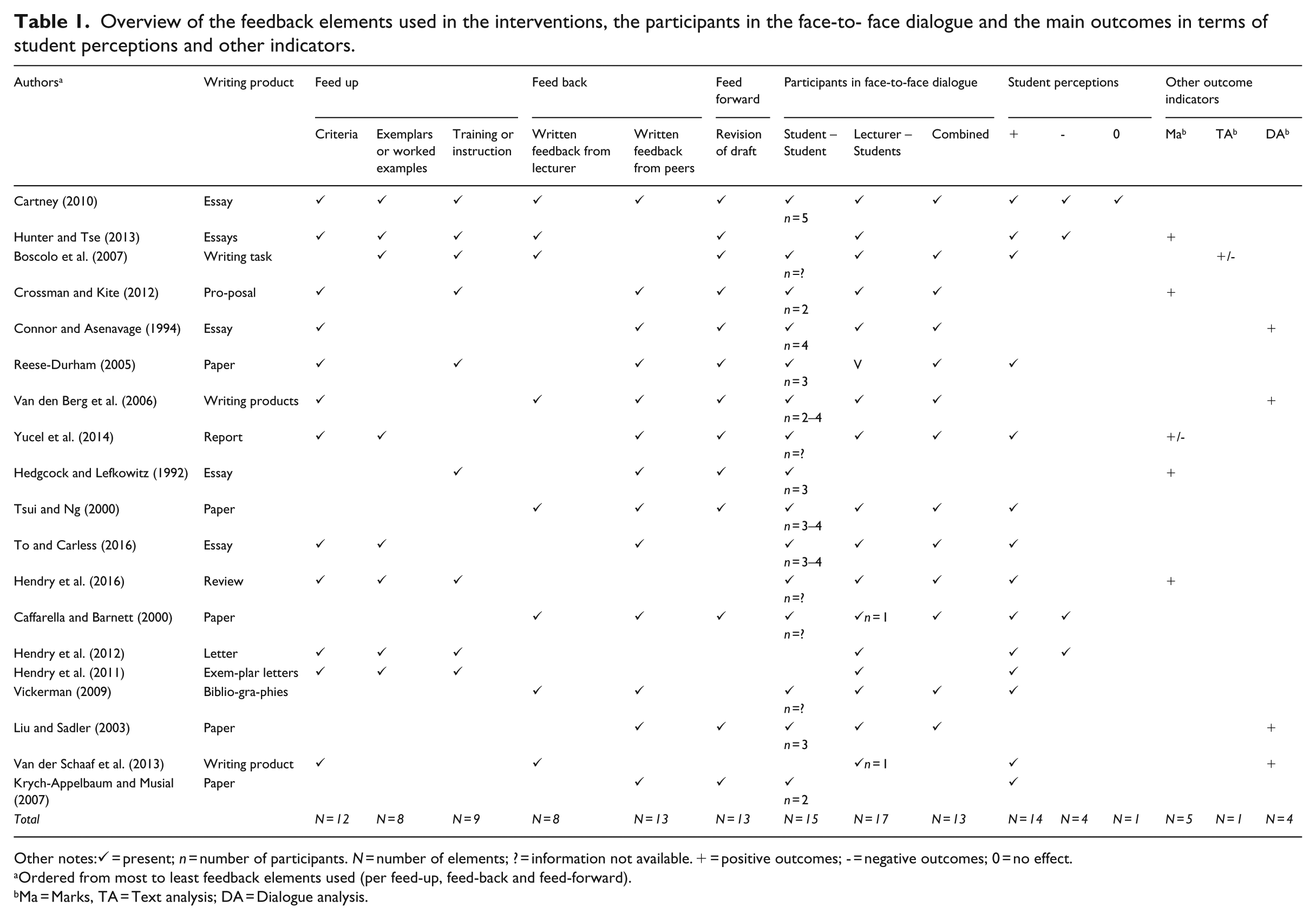

All the interventions focused on improving students’ writing products, such as an essay, paper or bibliography (see Table 1). In nine of the studies, the feedback students received contained all three feedback elements: feed up, feed back and feed forward. As regards feed-up information, assessment criteria were the tools most often used (N = 12), followed by training/instruction (N = 9) and exemplars or worked examples (N = 8). Written feed-back information was most often provided by peers during peer review or peer assessment (N = 13) and in eight interventions by lecturers; five interventions combined both strategies. All the interventions that provided feed-forward information instructed students to revise their drafts (N = 13).

Table 1. Overview of the feedback elements used in the interventions, the participants in the face-to-face dialogue and the main outcomes in terms of student perceptions and other indicators.

Notes: ✓ = present; n = number of participants; N = number of elements; ? = information not available; + = positive outcomes; - = negative outcomes; 0 = no effect.

Ordered from most to least feedback elements used (per feed-up, feed-back and feed-forward).

Ma = Marks; TA = Text analysis; DA = Dialogue analysis.

Finally, with respect to the participants in the dialogue, most interventions used the student–lecturer format (N = 17), although the peer-to-peer dialogue was also used frequently (N = 15); the majority of the studies applied both formats (N = 13). Of the nine studies that provided students with all three feedback elements, seven used both the lecturer–student and the peer-to-peer dialogue format.
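The dialogue format counts above overlap, so a short inclusion-exclusion check shows how they fit together. The sketch below assumes that the three reported counts refer to the same 19 studies; the numbers of studies using only one format are derived here and are not stated in the text.

```python
# Reported counts (from the text).
total_studies = 19
lecturer_student = 17   # studies using lecturer-student dialogue
peer_to_peer = 15       # studies using peer-to-peer dialogue
both_formats = 13       # studies using both formats

# Derived counts (not stated in the text; simple inclusion-exclusion).
lecturer_student_only = lecturer_student - both_formats
peer_to_peer_only = peer_to_peer - both_formats
any_format = lecturer_student + peer_to_peer - both_formats
studies_without_format = total_studies - any_format

print(lecturer_student_only, peer_to_peer_only, any_format, studies_without_format)
```

Under this assumption, every included study used at least one of the two dialogue formats.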

Outcomes of the intervention

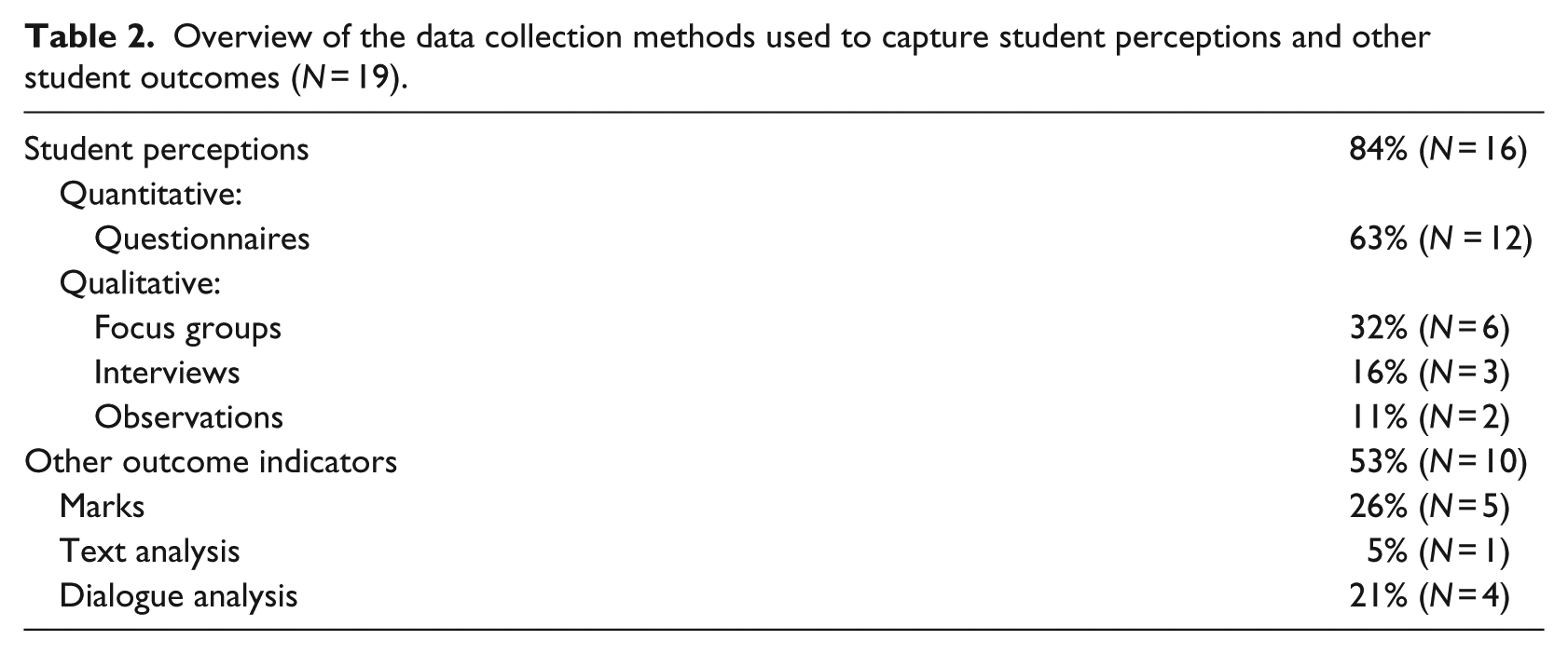

Intervention outcomes were measured mainly in terms of student perceptions (N = 14). Just over half of the studies (N = 10), however, used other indicators, such as marks or text/dialogue analysis, alongside or instead of student perceptions. These results are also presented in Table 1; Table 2 outlines the data collection methods used.

Table 2. Overview of the data collection methods used to capture student perceptions and other student outcomes (N = 19).

Student perceptions of the overall effectiveness of interventions

All 14 studies that discussed student perceptions reported that students experienced the interventions as predominantly positive. A few studies explained why students perceived interventions – or parts thereof – as useful, helpful and valuable (Cartney, 2010; Hendry et al., 2011, 2012; Tsui and Ng, 2000; Vickerman, 2009). Tsui and Ng (2000), for instance, observed that ‘peer comments enhance a sense of audience, raise learners’ awareness of their own strengths and weaknesses, encourage collaborative learning, and foster the ownership of text’. Yucel et al. (2014) explained that criteria and exemplars help clarify expectations and assessment procedures, thereby facilitating the provision of meaningful feedback and revision processes. Other studies demonstrated that understanding in students improved because of the clarity and constructiveness of written lecturer or peer feedback (Reese-Durham, 2005; Vickerman, 2009). A final reason for a positive perception of the intervention was that students felt it helped them to improve their products not only during the revision process, but also in future assignments (Cartney, 2010; Reese-Durham, 2005; Yucel et al., 2014). Despite these positives, four studies also reported negative aspects of the intervention, such as assessment anxiety (Cartney, 2010) or less useful marking sheets (Hendry et al., 2012).

Student perceptions of the effectiveness of dialogue

Of the 14 studies, 11 specifically addressed student perceptions of face-to-face dialogue (Caffarella and Barnett, 2000; Cartney, 2010; Hendry et al., 2011, 2012, 2016; Hunter and Tse, 2013; Krych-Appelbaum and Musial, 2007; To and Carless, 2016; Van der Schaaf et al., 2013; Vickerman, 2009; Yucel et al., 2014). All but one study reported that these sessions helped students understand the reasoning behind the assignment and were therefore considered useful. According to Vickerman (2009), they helped students gain confidence in student-led discussion. In Cartney’s (2010) study, however, some students felt very uncomfortable providing oral feedback and thus preferred online discussions to the face-to-face dialogue. Yet, other students preferred to give feedback orally, because that enabled them to clear up any misunderstandings and save time.

Other outcome indicators

Ten studies used other indicators alongside or instead of student perceptions to assess the effectiveness of the intervention. These studies measured outcomes in terms of students’ marks or an analysis of texts or dialogues (Table 1). In four studies, students achieved significantly better marks for their written assignments (Crossman and Kite, 2012; Hedgcock and Lefkowitz, 1992; Hendry et al., 2016; Hunter and Tse, 2013), while in one study the opposite was true (Yucel et al., 2014). Crossman and Kite (2012) suggest that the improved results could be due to students reviewing the criteria repeatedly when writing papers and reviewing their peers’ work, thus coming to understand the criteria more fully. By contrast, Yucel et al. (2014) argue that the poorer results could be attributable to improved marker reliability, high study workloads from other subjects and variations in performance levels across student cohorts.

Of the studies that used text or dialogue analysis to assess the intervention, three reported that oral discussion enhanced understanding in students (Liu and Sadler, 2003; Van den Berg et al., 2006; Van der Schaaf et al., 2013). Other reported beneficial effects were more explanations and revisions by students (Van den Berg et al., 2006) and more thinking activities in students (Van der Schaaf et al., 2013). One study, however, also produced contradictory findings: while some aspects of the text did improve, others did not (Boscolo et al., 2007). Overall, the intervention outcomes were positive in most of the 10 studies, with eight reporting clear benefits and two reporting negative or contradictory results.

Methodological characteristics of studies

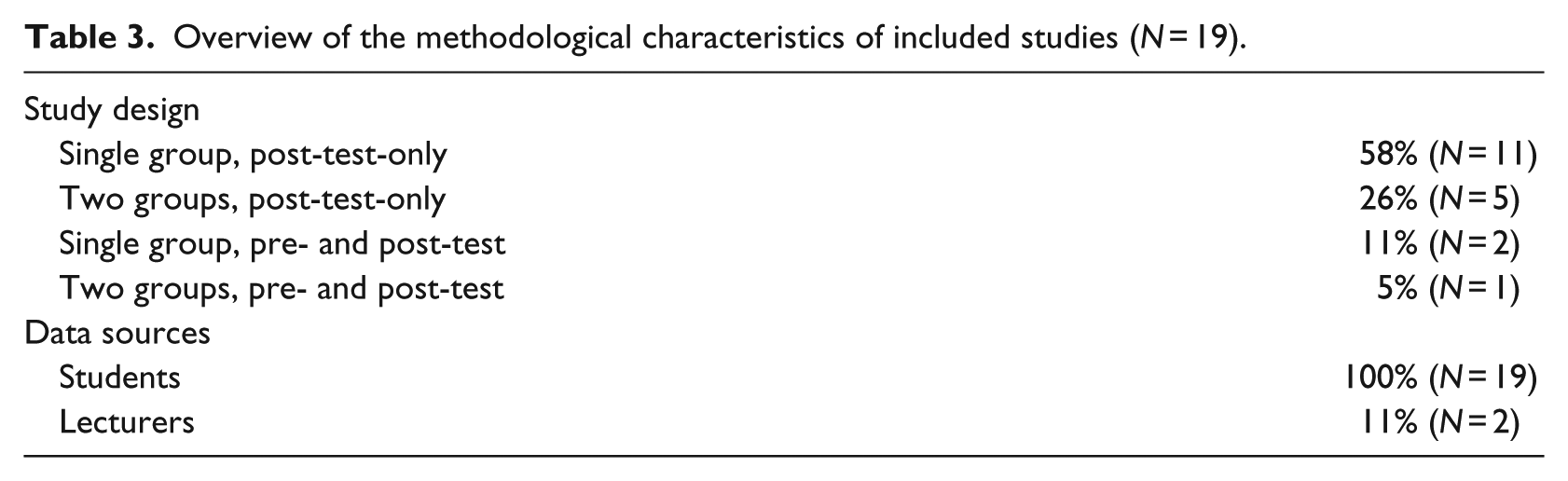

In terms of methodology, 16 of the 19 studies (84%) used a post-test-only design; 11 of these (58% of all studies) involved a single group (Table 3). Students were the main data source in all included studies.

Table 3. Overview of the methodological characteristics of included studies (N = 19).

Discussion and conclusion

Based on this review, it can be concluded that most studies used assessment criteria, worked examples and training/instruction as feed-up information. Peer review and written feedback by lecturers were frequently used as feed-back information. Most studies reported on interventions that asked students to revise text; this was considered to provide feed-forward information. Students generally perceived the interventions as positive. Most of the interventions that were assessed with other outcome measures resulted in better outcomes as evidenced by marks or writing products. Using criteria and exemplars was considered beneficial, because they seemed to be especially important to clarify expectations. In general, a mix of feed-up, feed-back and feed-forward features seems to help improve academic writing skills. Face-to-face dialogue facilitated meaningful feedback and supported revision of writing products.

Our focus was to provide a review of studies that investigated feedback dialogue interventions and their outcomes in terms of student perceptions and other measurements in the context of academic writing. The added value of this review is the fresh insight it offers into key features of the interventions in terms of feed up, feed back and feed forward and into the outcome of face-to-face dialogue interventions. This insight is necessary to enhance student learning in this context according to the theoretical feedback model of Hattie and Timperley (2007). Face-to-face dialogue is supportive because it gives students the opportunity to ask for clarifications and explanations from lecturer and/or peers. This finding supports the role peers can play in the process of self-regulated learning, as described earlier by Zhang et al. (2017), who state that peer comments alone appeared to make the largest contribution to the revision of writing products.

Although the interventions contained dialogue between peers and/or between students and lecturers, hardly any information was available about the content, tone and thus the quality of these dialogues. A reason for this finding could be that the dialogue was not the specific aim of the particular research. For example, some studies explicitly investigated the use of exemplars (Hendry et al., 2011, 2012; To and Carless, 2016), and therefore focused on feed up. On the other hand, Tsui and Ng (2000) focused on feed-back information and researched the use of written lecturer and peer feedback in the intervention. Revision as an example of feed-forward information is a crucial element in the writing process, which supports Jonsson’s (2012) claim that it provides opportunities for students to use feedback in order to improve writing skills. As for the methodological characteristics, the studies in this review were predominantly post-test designs, using a single group, possibly because most studies investigated the intervention in the course of a running educational programme. Potential ethical issues, such as offering the same programme or the same quality of education to each student, could have prevented the use of pre-test/post-test designs. Post-test designs, however, make it hard to determine the effectiveness of the intervention, because it is not clear what the pre-test conditions were (Creswell, 2014). Although the review shows predominantly positive student perceptions, one needs to be careful in interpreting this finding. The positive perceptions might not be related to concrete improvements in academic writing skills.

This review has two major limitations. The first is the inadequate provision of relevant information in the included articles. Although this review provides improved insight into interventions containing face-to-face dialogue, it could not establish the effectiveness of the separate feed-up, feed-back and feed-forward features of the interventions or, more specifically, the content and effectiveness of the face-to-face dialogue. While this review shows that interventions specifically including face-to-face dialogues mostly work well, the investigated studies provide very little insight into why and under what conditions this may be the case. Second, categorizing various educational strategies as feed-up, feed-back or feed-forward information is to some extent arbitrary. For example, criteria and exemplars were categorized as feed up, but criteria and exemplars could also give students suggestions on how to revise their text or move forward (feed-forward information). Furthermore, revision was categorized as feed forward; in practice, however, revision can also be seen as the way or the result of how to proceed or improve. So, there might be some overlap between the various feed-up, feed-back and feed-forward strategies.

For future research, we recommend specifically investigating the content and effectiveness of face-to-face dialogue in the process of improving academic writing skills. More experimental study designs, for example, two-group pre-test/post-test designs that include qualitative methods, could establish why and under what conditions these dialogues work best. Besides measuring student perceptions, other outcome measurements such as marks or dialogue content analysis should be considered. An interesting aspect for future study could be the long-term impact of face-to-face dialogue on students’ writing skills. Finally, to determine the instructiveness of face-to-face dialogue, it is necessary to investigate the related instruction or facilitation method.

The implications of our findings for educational practice in higher education, especially for course designers, are twofold. First, from the student’s perspective, face-to-face dialogue in an intervention that includes feed up, feed back and/or feed forward seems to help the process of learning to write academic material. Second, this review provides concrete examples of feed-up, feed-back and feed-forward strategies including face-to-face dialogue that can be helpful when designing educational interventions to enhance student learning of academic writing skills, particularly the use of criteria and/or worked examples.

To conclude, our review has demonstrated that feedback dialogue interventions in the context of academic writing are helpful in most cases according to student perceptions and other outcome measurements such as marks or text analysis. Although face-to-face dialogue appears to support this process, the question of why and under which specific conditions it is effective needs further research.

Footnotes

Acknowledgements

The authors would like to acknowledge the thoughtful critique offered by the reviewers and editor of this article. Furthermore, we thank Angelique van den Heuvel and Ragini Werner for editing this manuscript.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.