Abstract

In dialogue with the work of Heather Love and colleagues, this article makes use of a peculiar ‘descriptive assemblage’ proposed by Harvey Sacks (1963) – that of the ‘commentator machine’ – to open up issues of ‘descriptive politics’ in the field of contemporary Artificial Intelligence (AI). We do so by reviewing the gameplay of Google DeepMind’s AlphaGo – an algorithm designed to outperform human players at the game of Go – with a focus on the incongruities of the much discussed, indeed (in)famous ‘move 37’ in a human-versus-machine challenge match in 2016 (e.g. Silver et al., 2017). Looking at move 37 in conjunction with the various layers of commentary that came to be woven around it, we explore the kinds of descriptive work involved in characterising the move, the troubles that work reveals and what we can learn about the practices and politics of description from encounters with ‘New AI’ applications like AlphaGo.

Introduction

Heather Love and colleagues, in a series of much-discussed papers, argue for a turn away from both interpretation and explanation, the two dominant but mutually opposed methodological poles of 20th-century humanist and positivist inquiry, respectively, towards description. Studies conceived along the lines they envisage would focus on the surface rather than deep meanings and ‘build better descriptions’ (Marcus et al., 2016). Unusually for a humanities scholar, Love explicitly draws on qualitative interactionist research in the social sciences as a source of proximate inspiration for her descriptive re-engagements with literary works (e.g. Love, 2010, 2013). More specifically, Love suggests sociological studies in that tradition involve ‘forms of analysis that describe. . .but do not traffic in speculation about interiority, meaning, or depth’, and whose ‘exhaustive, fine-grained attention to phenomena’ offer ‘a [methodological] model’ (2013: 404). Aiming both ‘to see more and to look more attentively’ (2016: 14), this kind of descriptive work ‘defers virtuosic interpretation [or explanatory reduction] in order to attempt to formulate an accurate account of what . . . [the things being described are] like’ (2013: 412). In this way, descriptive studies help to open up the contingent bases upon which ‘realities are produced, activities are conducted, and sense is made’ (2013: 414, citing Streeck and Mehus) without according the analyst authority as a ‘privileged messenger or interpreter’ (2010: 373).

Across her papers, Love somewhat unexpectedly invokes ethnomethodology and conversation analysis, not widely known in the arts and humanities, in making the case for her descriptive (re)turn. However, some quoted passages and references aside, ethnomethodology and conversation analysis are not examined in any great depth by Love, her focus instead turning to Ryle and Geertz, Goffman and Latour (2010, 2013). Yet, in Garfinkel (1967, 2002) and Sacks (1992), and the work of subsequent ethnomethodologists and conversation analysts, problems of description are a recurrent concern, taken up time and again in distinctive ways while resisting analytic privileging and virtuosic interpretation far more consistently than the three other social scientists on this list: Geertz, Goffman and Latour (although the latter has outed himself as a ‘philosopher’ too). As a way of introducing ethnomethodology and conversation analysis’s contributions in that regard, we want to explore what Sacks’ early work in particular offers when it comes to rethinking the practices and politics of description in the ways Love and colleagues argue we should. 1 Love and colleagues’ work is intended as a provocation and, in the spirit of interdisciplinary debate, we would like to offer some Sacksian provocations in return. Based on ethnomethodological research we are currently involved in, to do that our discussion will take up Artificial Intelligence (AI) as a site in which descriptions play critical and frequently contested roles, not least when it comes to the characterisation of machine procedures as involving ‘learning’ or ‘intelligence’. We want first, however, to set out the terms of our own descriptive re-engagement with problems of description.

As a response to Love and colleagues’ methodological proposal that we stick to surface orders in the pursuit of better descriptions, we draw on the work of Sacks specifically to ask ‘which surfaces?’ and ‘better for what?’. These ‘tendentious’ questions (Garfinkel, 2002: 146) bring out issues of the work descriptions do, the connections they make and the consequences they have. In so doing, they provide us with a way of contributing to a debate which began in the arts and humanities from the other, sociological side without treating matters as settled on that side either. We will focus on one peculiar ‘descriptive assemblage’ (cf. Savage, 2009) proposed by Sacks in particular. That assemblage is sketched in his ‘Sociological Description’ (1963), where Sacks imagines a ‘commentator machine’ composed of two parts: a doing part and a saying part – with the machine doing things while simultaneously providing descriptive commentaries on those doings. We are interested in thinking further about the kind of commentator machine contemporary AI might be said to be, that is, in what the saying and doing parts are and how their relations can be ‘resolved’ in Sacks’ terms, along with the problems different kinds of observer of AI technologies have in describing them as well as the (often contentious) methods they use in resolving those problems. 2

Following Suchman (2007), we foreground the problems that arise in separating the machinery from the descriptive commentaries and the interpretive, explanatory and other kinds of work those commentaries do in the field of AI. Confronted with technologies that not only perform what we normally regard as human activities – like playing games, for example – but surpass humans at them, our vernacular descriptive practices come under some strain. Using a particular example, a move made by a game-playing algorithm, AlphaGo, en route to ‘beating’ a human player at Go over a five-game series in a staged challenge match in Seoul in 2016, we examine the move’s troubling character and, from there, what we can learn from it when it comes to describing what AI is, what it does and how. We begin, however, with Sacks and the lessons we take from him.

Sacks’ commentator machine and the problem of description

In his early paper, ‘Sociological Description’ (1963), Sacks opens by saying his concern ‘is to make . . . sociology strange’ (1963: 1). More particularly, he sets out to make sociology’s practices of description strange. In order to establish sociology’s descriptive strangeness, he asks us to imagine an encounter with a machine that ‘comments upon what it is doing’ (Sacks, 1963: 5). Sacks characterises it as having ‘two parts: one part is engaged in doing some job, and the other part synchronically narrates aloud what the first part does’ (Sacks, 1963: 5). It is in this sense, he says, that ‘the machine might be called a “commentator machine”, its parts “the doing” and “the saying” parts’ (Sacks, 1963: 5).

This sparely sketched ‘commentator machine’ provided Sacks with an analytic metaphor for social life and methods for studying it, with social activities and cultures cast as self-commentating or self-describing assemblages organised on analogous grounds. Deploying the metaphor enabled him to critically examine what was presupposed by different kinds of approaches to producing descriptions which would resolve the relationship between ‘the machine’s’ two parts, the saying and the doing parts. Working the metaphor through, Sacks distinguishes between (at least) three possible perspectives on the machine, each of which implies a different kind of ‘descriptive politics’ in methodological terms (cf. Savage, 2009). As Sacks puts it (1963: 7): ‘A common feature of the perspectives is an avowed concern to make sense of the object. In making sense of it each may be said to pose the problems understanding it consists of and to produce as solutions a ‘description’ of the object’.

The contrast Sacks is really after, however, is between two kinds of stances that epistemically divide the variants he outlines (cf. Coulter, 1989). On the one hand, there is the perspective of variously ‘knowledgeable’ observers; those who either already understand what the machine is doing or the commentary it offers or both, and who do not seek to problematise their understandings in producing their descriptions. On the other, there is the perspective of the ‘naive’ observer; someone who is neither fluent in the native language used in the auto-commentary nor understands the technical operations of the machine, but who would, as a result, be all the more curious as to ‘what is going on in the first place’ (1963: 6).

For Sacks, the ‘naive observer’, perhaps counter-intuitively, is best placed to arrive at an adequate account of the commentator machine’s workings. That is because, unlike the others, they will not take either the machine’s operations or its commentaries for granted and attempt to use them as the basis for producing their descriptions of the whole. In an echo of Wittgenstein (1953: e.g. §7, §23), Sacks argues they will instead treat the description as part of the action, not distinct from it, looking to see how it does things with language (cf. Austin, 1962) as much as how it does things with the materials it processes and the movements it makes. For the naive observer, Sacks’ model observer, the aim will be to arrive at an account of the activities of which the commentary is an embedded part and thus to arrive at an understanding of the specific sense in which it constitutes a commentator machine at all. The naive observer, in other words, will try to account for the descriptive commentary along with the rest of what the machine does; where proceeding otherwise, by assuming familiarity with actions, commentaries or both from the outset and employing one as a key for understanding the other by privileging doing over saying or vice versa, is precisely what makes sociology, for him, methodologically ‘strange’ (1963: 7).

With reference to the commentator machine, Sacks sought to place descriptions back among the locally occasioned, locally organised and locally accountable ensembles of practices that are constitutive features of our social lives – practices social scientists and arts and humanities scholars engage in as much as anyone else. For Sacks, attending to descriptions in the ways opened up by the commentator machine is, as a result, analytically revealing because it provides insights into those practices, our sayings and doings, and the work they perform in different sites and settings. In line with Love and colleagues, Sacks’ entire approach in this regard was to avoid being misled into searching for hidden as opposed to witnessable orders or projected depths (Livingston, 2008: 28–29). Rather than taking descriptions at face value or refusing to do so, his focus was on what descriptions do or fail to do in helping us see in actual situations ‘what . . . [things are] like’ (Love, 2013: 412). This is a particularly important methodological point to bear in mind when we start to consider contemporary AI, an area where it is easy to be led astray by descriptions, including, as we will show, the descriptions of those with a prima facie claim to interpretive and explanatory authority such as the designers of the technologies we are trying to make sense of.

While the commentator machine in Sacks’ hands is a metaphor, or analytic parable, for social activities, social lives, even cultures, we are interested in the analytic purchase we might gain by applying its lessons to the machine learning algorithms powering contemporary developments in artificial intelligence (cf. Jaton, 2017), actual ‘machines’ which often generate some sort of readable commentary on their own operations as they run. We find precedents for applying the lessons of the commentator machine to contemporary AI technologies in the ethnomethodological work of Lucy Suchman, specifically her Plans and Situated Actions/Human-Machine Reconfigurations (PSA/HMR) (1987/2007). Suchman’s work helps us focus on actual commentator machines, the human–machine configurations they rest on and the problems of description that arise when we start to deal with them. Confronted with technologies which not only seem to do ‘human’ things but get accounted for in ‘human’ terms, it can be difficult to know just what to make of them. While problems connected with reconciling description and performance arise regularly in the history of AI as technical troubles, they also make an appearance as incongruities in the course of situated encounters with AI’s working artefacts and instructively so as we will go on to demonstrate (cf. Suchman, 2019; Jones-Imhotep, 2020).

Developing Sacks’ insights as a way of examining these problems, we do not want to treat descriptive commentary as external to AI; we want to treat it as a constitutive element of the technology. Reflecting how we find AI in the world (cf. Neyland, 2019), rather than drawing boundaries around the technology in narrow ways, this means treating all the work that goes into and is done with AI, including descriptions of what a given system might be said to be or be doing, as being as much part of the ‘assemblage’ as the hardware and software. Like Sacks’ naive observer, and following the approach taken by Suchman, we want, in other words, to treat description as part of the phenomenon, not something we use to examine it (for further discussion of the latter, see, e.g. Quéré, 1992; Piriou, 2008). We shall do this by examining how layers of descriptive commentaries came to be woven around the doings of one ‘new AI’ system, in particular, Google DeepMind’s AlphaGo, as encountered in a live-streamed challenge match. 3

To err is . . . human?

In March 2016, between the 9th and the 16th, a challenge match was staged in which AlphaGo, one of the most promising next-generation game-playing AI machine-learning algorithms, was pitted against the human world champion, Lee Sedol, at Go in a five-game series. Beating human players at Go, an ancient strategy game played on a board with stones akin to but involving many more complex, ramifying conditional possibilities of play than Chess, was widely thought to be beyond the scope of what AI systems might ever achieve (cf. Collins, 2018: 94–95; Suchman, 2019; Sormani, forthcoming). However, AlphaGo’s creators, Google DeepMind, had developed a computational approach to automating Go gameplay designed to handle the game’s complexities not through brute force combinatorial processing – working out all possible ways of winning a match from a given position – but through ‘heuristics’ and decision-making calculations based on the probabilities of individual moves being effective play by play (see Google DeepMind 2020 for extended discussion). In the end, AlphaGo ‘won’ the series 4-1 leading its creators to declare it had ‘mastered’ the game (e.g. Silver et al., 2016).

The overall result was remarkable, highlighting the significance of Google DeepMind’s engineering accomplishments in developing a machine learning algorithm not only able to perform competently but to excel in a domain thought to be the preserve of humans alone, and professional Go players in particular. In the match’s wake, a great deal of attention centred on one particular move, move 37, in the second game of the series. That move came to be viewed as encapsulating what AlphaGo had achieved in the course of the challenge match as a whole.

In one sense, moves in a game like Go speak for themselves; a given move is the move that it is and has to be responded to as such. But what a given move might amount to as a move-in-the-game – both as a response to prior moves and as a prospective, recontextualising change to the state of play – is not always obvious, even to the initiated. Nor is the possible strategy a move may be enacting, particularly when it comes to the complex dialectic of move and countermove, strategy and counter-strategy that contingently characterises any given game of Go (where both ‘strategy’ and ‘counter-strategy’ are glosses for shifting move-by-move board configurations). Hence, competitive games, particularly high-profile games (where, in contrast to ‘teaching games’, players are unlikely to transparently narrate their broader strategies for winning to an opponent), are accompanied by third-party descriptive commentaries of many sorts. In this case, the games were livestreamed and the official livestream included live commentary by a pair of non-playing commentators, one a Go expert, the other an anchor or ‘host’ for the broadcast (thus turning them into ‘teaching games’, sites of instruction, for the home audience).

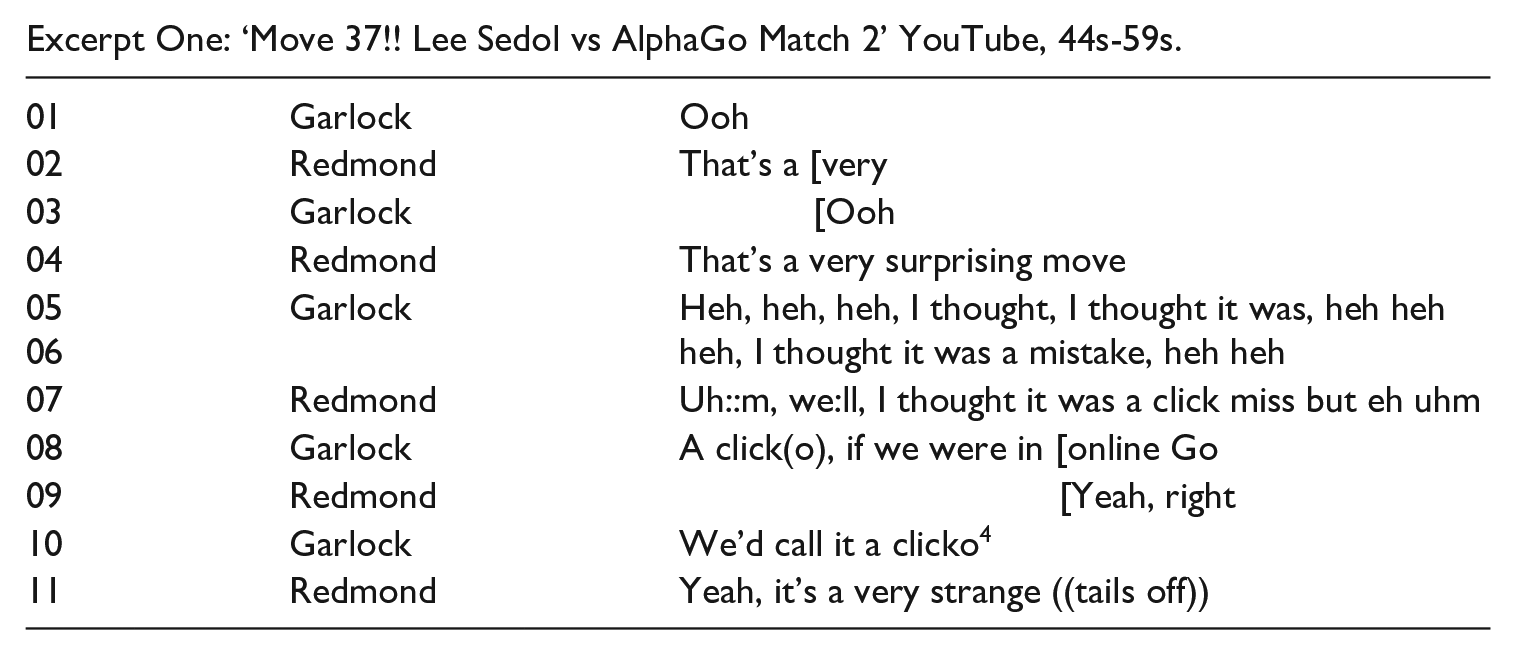

Move 37 can be watched here, https://www.youtube.com/watch?v=JNrXgpSEEIE, but what initially differentiates it is the fact it caught the pair of commentators out. At the start of the clip the professional Go player providing commentary, Michael Redmond, is discussing possible next moves by AlphaGo, whose turn it is to move, with his counterpart, the host Chris Garlock. Redmond is discussing areas of the board it would be unlikely for AlphaGo to move into and why, perhaps to narrow down the discussion to a more focused consideration of a smaller pool of likely next moves. They conduct this discussion using a demonstration board set up to show the state of play on the actual game board as it stands. This facilitates their commentary as they can play, replay or imagine alternative moves to help viewers follow ‘what is happening’. The actual game board, with one of AlphaGo’s programmers Aja Huang sitting proxy for the programme at it, is shown in an insert in the bottom right-hand corner, so viewers can follow the game as it plays out with the commentary picking up the play and elaborating on it (see Figure 1 below).

Figure 1. The challenge match livestream display just before move 37 is played out, with the commentary board and Michael Redmond in view and the match board in the bottom right-hand corner.

Just as Redmond is discussing an area of the board to the middle right which he suggests it would be less than optimal for AlphaGo to move into given the overall balance of play at that stage, the insert video shows Aja Huang make a move for AlphaGo into exactly that territory. Redmond, unaware of the move as yet, continues to explain why such a move would be, in effect, a bad move and Garlock takes him up, elaborating further on AlphaGo’s ‘decision-making’ protocols. Redmond eventually notices the move, however, and moves the pieces on the demonstration board to reflect the change, looking back and forth from a monitor showing the game to the board to ensure he has placed AlphaGo’s stone correctly. Garlock notices the move too, the pair exchange glances in something like a ‘double take’ and the conversation shifts as Excerpt One shows.

Excerpt One: ‘Move 37!! Lee Sedol vs AlphaGo Match 2’ YouTube, 44s-59s.

In Sacks’ terms, what problems of understanding do AlphaGo’s gameplay in the broad and move 37 in particular pose, and what descriptions might be offered as solutions to those problems? In many respects, the problems of understanding are those thrown up by AI more generally.

The same or similar ‘vocabularies of motive’ (Mills, 1940) are regularly used in descriptions of both humans and machines, creators and computers, sometimes interchangeably, sometimes not, as properties of complex ‘thing-agents’ (Alač, 2016). Denied those descriptive vocabularies it is unclear whether we would be able to adequately describe the doings of AIs, nor would we suggest we necessarily ought to be so denied (cf. Collins, 2018: 93–99). There is, however, a tricky tension between, on the one hand, the technical operation and vocabulary of AI systems, imagined or actual (in terms of statistical tests, mechanisms, algorithms, etc.), and, on the other hand, the non-technical occasions and vocabularies of everyday operations and encounters with them (relating to evolving situations, motives, persons, etc.). Here, when AlphaGo’s play is recognisably orderly (i.e. the results of the algorithm are broadly recognisable as moves a human player might make) this tension can pass with civil inattention, yet as happens in the excerpt above, such tensions come to the foreground when the proxy vocabularies we use for convenience no longer seem to apply as neatly, that is, when our descriptions are shown to be wrong. This tricky tension can be considered as a historical or theoretical issue by philosophers or other analysts of technology, but we can also take it up as a practical, technical, and conceptual matter for participants in situ, as they struggle to make sense of and describe AI applications like AlphaGo in vernacular terms – for instance, when its technical performances are being demonstrated to and commented on by both advocates and an onlooking public as here. 5 As an example of such struggles, move 37 provides us with insights into how the technical workings, operational displays, claims and grammar of AI are variously topicalised and challenged in practical settings. 
For that reason, we want to further examine the ‘common, everyday methods of practical action and practical reasoning’ (Livingston, 1987: 4) that went into the staging of the challenge match (and move 37 within it) and commentaries on it, constituting the occasion as such (cf. Sharrock, 1989), as well as the descriptive politics, in Sacks’ methodological terms, that the livestreamed spectacle was entangled in.

We get a flavour of the descriptive politics involved from the account of move 37 offered by Cade Metz (2016) in Wired magazine, a prominent venue for popularising and promoting AI: “With the 37th move in the match’s second game, AlphaGo landed a surprise on the right-hand side of the 19-by-19 board that flummoxed even the world’s best Go players, including Lee Sedol. “That’s a very strange move,” said one commentator, himself a nine dan Go player, the highest rank there is. “I thought it was a mistake,” said the other. Lee Sedol . . . took nearly fifteen minutes to formulate a response. Fan Hui – the three-time European Go champion who played AlphaGo during a closed-door match in October, losing five games to none – reacted with incredulity. But then, drawing on his experience with AlphaGo – he has played the machine time and again in the five months since October – Fan Hui saw the beauty in this rather unusual move. Indeed, the move turned the course of the game. AlphaGo went on to win Game Two, and at the post-game press conference, Lee Sedol was in shock. “Yesterday, I was surprised,” he said through an interpreter, referring to his loss in Game One. “But today I am speechless . . .””

One of the points we want to stress is that the problems we face in describing AI are often highly localised. The question we want to ask is therefore as follows: how ought we to describe move 37? We could offer an account of this move as a move in the game, for instance. We could also offer accounts of the game as a whole, or the series of games in which the move featured. At this level, it would not seem to be too different from describing a human playing Go, particularly in overview. Metz’s retrospective commentary here makes the move visible in just those sorts of ways. It becomes a ‘turning point’, something ‘beautiful’, not just human but even ‘superhuman’, potentially, as AlphaGo’s creators subsequently came to label the performance of successor versions of AlphaGo (Silver et al., 2017: 354). Descriptions cast in these terms make move 37 and the ‘player’ that made it highly significant, perhaps world historical, foregrounding the performative and judgemental fallibility of humans when they come up against this new generation of intelligent machines.

But things are not quite that straightforward, particularly in real-time. Played out live, as Excerpt One shows, move 37 took the commentators Redmond and Garlock by surprise. Redmond is perhaps the more circumspect of the pair, describing the move as just that, a ‘surprise’ and committing to little else in terms of what made it unexpected. Garlock, however, concretises the move as a ‘mistake’ and mistakes, implying fallibility in reasoning and action and intentions gone wrong, seem to be a much more human affair. Hammers, cars, televisions, paintings and the like do not make mistakes, though mistakes may well have been made in their making. They can, however, break, glitch or malfunction, which is the kind of middle-ground the commentators come to jointly settle on, casting the move as a possible error, a ‘clicko’, a mechanical fault or inadvertent, random misfire. The commentators, with Redmond in the lead, did go on to revisit that description, coming to see the ways in which move 37 might represent a ‘good move’ not an error, but their initial unease here is instructive, making visible as it does when and where attributions of agency to machines, even sophisticated ones, start to become uncomfortable.

The challenge match was set up as a ‘symmetry spectacle’ (Sormani, 2020; forthcoming), with its screening format staging an equivalence between the two opponents, AlphaGo and Sedol. In turn, this display of equivalence afforded the commentator pairing opportunities for conflation, with few distinctions drawn between the professional and/or programmed, human- and/or machine-like, qualities of the opponents and their play. Nonetheless, on this occasion, they demonstrate hesitancy around treating AlphaGo and Sedol symmetrically in and through their tentative descriptions of what move 37 may or may not have been. Although Latour (2005) and actor-network theorists following him argue human and non-human actants should always be treated symmetrically, such generalised treatments are blind to differences in the practical conditions under which it does or does not make sense to describe them as such. Here, the endpoint of the commentary pair’s initial descriptions is decidedly non-symmetrical and mechanically oriented, pointing towards the possibility of a fault in the algorithm in light of the ‘strange move’, even though that characterisation changed as the situation continued to unfold.

In another early work from the same period as ‘Sociological Description’, published posthumously, Sacks (1999: 38–39) offers a reflection on ‘seriousness’ which is helpful here. We quote him at length: “For inquirers the determination that . . . [a] subject is serious in producing his activity . . . means that the[ir] activity is analyzable . . . Chess players [for example] talk of the response to a move as ‘an answer’. In order for a move to be answered, as compared with merely being followed by the opponent’s move, it is necessary for the opponent to feel confident in assuming that the move to which he is to respond was produced by a strategy. The opponent must feel confident that the move was produced as part of a course of action which can be located by analyzing the move. The opponent doesn’t seek to answer the move, but the strategy by which the move is assumed to have been generated. Indeed, when chess players talk of the integrity of a player they mean that he will not make a move that is not justifiable as part of a strategy. Such a player has integrity because whatever he does can be responded to on the basis of an analysis of what he is trying to do by that and related future moves.”

Linking Sacks’ remarks here to Redmond and Garlock’s commentary, we would suggest that when we treat AlphaGo’s moves as responses to Sedol’s, we are producing redescriptions of the processes which generated them. A given move can be and often is treated as ‘produced as part of a course of action which can be located by analyzing the move’ (Sacks, 1999). Unlike human players, however, the locus of the ‘moves’ AlphaGo makes is not to be found in shared forms of practical reasoning but in mechanical operations performed step-wise on available data. A lot, therefore, comes to hinge on what, adapting Sacks, we might term the engineered ‘integrity’ (Sacks, 1999) of AI technologies, that is, their capacity to arrive at outputs, machinic performances, which are capable of being responded to as if they were products of analysable courses of human action. When their doings cease to be analysable in that way, when they cease to be capable of being treated as if they were actions, troubles arise in dealing with the ‘it’ that has generated those doings and we withdraw attributions of ‘seriousness’ (Sacks, 1999) to them as agents. Indeed, under such circumstances, we quickly come to question ascriptions of agency to them as a whole and act towards them quite differently as a result (cf. Collins, 2018). 6

While Redmond and Garlock repaired this suspension of AlphaGo’s ‘seriousness’, the exposed tensions remain. Among other things, the possibility of this kind of surprise entitles us to ask a further question: namely, if a machine cannot play a ‘bad move’, because it merely ‘moves’, can it be said to have played a ‘good move’ either? In this sense, in suspending commitment to the equivalence between AlphaGo and Sedol, the hesitancy speaks to troubles in characterising AI’s doings that are far from easy to resolve. 7 As Collins (1990: 9) notes, ‘machines that work rarely do the same work as humans’ and those differences were sharply highlighted in the first startled descriptions produced by Redmond and Garlock as they tried to make sense of AlphaGo’s seemingly aberrant ‘turn’. In many respects, their hesitation in commentary invites us to reassess whether what we’re watching can be appropriately described as a match at all.

The discomfort we feel might be dispelled by approaching the problem of description from other angles and seeking a different kind of commentary. We might ask, for instance, how AlphaGo came to arrive at that move, and that takes us into different territory altogether, away from troubles with the spectacle to the machinery involved. In response to that question, we can be given an overview of the methods which underpinned AlphaGo’s development, and contrasts can be drawn with, for instance, other historical approaches to engineering game-playing algorithms (e.g. Silver et al., 2016). Alternatively, those responding might be concerned to show reproducibility, that is, that the programme is robust and the move wasn’t random but systematic, exhibiting a kind of machinic predictability or integrity, to return to Sacks. Such strategies are equivalent to methodically describing the move by pointing to an underlying mechanism or process. That AlphaGo’s designers did offer that kind of commentary on move 37 is clear from Metz’s (2016) account: “Silver . . . and the rest of the team that built AlphaGo arrived in Korea well before the match, setting up the machine – and its all-important Internet connection – inside the Four Seasons, and in the days since, they’ve worked to ensure the system is in good working order before each game, while juggling interviews and photo ops with the throng of international media types. But they’re mostly here to watch the match – much like everyone else. One DeepMind researcher, Aja Huang, is actually in the match room during games, physically playing the moves that AlphaGo decrees. But the other researchers, including Silver, are little more than spectators. During a game, AlphaGo runs on its own. That’s not to say that Silver can relax during the games. “I can’t tell you how tense it is”, Silver tells me just before Game Three. 
During games, he sits inside the AlphaGo “control room”, watching various computer screens that monitor the health of the machine’s underlying infrastructure, display its running prediction of the game’s outcome, and provide live feeds from various match commentaries playing out in rooms down the hall. “It’s hard to know what to believe”, he says. “You’re listening to the commentators on the one hand. And you’re looking at AlphaGo’s evaluation on the other hand. And all the commentators are disagreeing”. During Game Two, when Move 37 [the putatively winning move] arrived, Silver had no more insight into this moment than anyone else . . . But after the game and all the effusive praise for the move, he returned to the control room and did a little digging . . . [There] Silver could revisit the precise calculations AlphaGo made in choosing Move 37. Drawing on its extensive training with millions upon millions of human moves, the machine actually calculates the probability that a human will make a particular play in the midst of a game. “That’s how it guides the moves it considers”, Silver says. For Move 37, the probability was one in ten thousand. In other words, AlphaGo knew this was not a move that a professional Go player would make. But, drawing on all its other training with millions of moves generated by games with itself, it came to view Move 37 in a different way. It came to realize that, although no professional would play it, the move would likely prove quite successful. “It discovered this for itself”, Silver says, “through its own process of introspection and analysis”.

We seem to learn something about the underlying processes from this account but those processes are difficult to disentangle from the descriptive commentary they are made available through. We are told the move was arrived at by ‘introspection and analysis’ by AlphaGo which ‘came to view’ the move in a way it ‘knew . . . a professional Go player’ would not, coming to ‘realize’ it ‘would likely prove quite successful’. This apparently ‘humanises’ AlphaGo, but at what cost? What else do we need to accept for this to constitute a solution to the problem of describing the move? Silver and colleagues underscore the point that, at least as far as its ultimate training stage is concerned, AlphaGo works ‘without human knowledge’ (its successors, AlphaGo Zero and MuZero, being advertised as working without such knowledge entirely, Silver et al., 2017). 8

Moreover, what we typically mean by introspection does not involve complex, precise statistical calculations performed mechanically across a dataset of millions of possible game moves. When playing Go, humans do not mechanically compute a set of combinations based on probabilities incorporating clearly defined criteria, but neither do they just throw pieces at the board. The ‘seriousness’ they bring to games, the analysability of their gameplay, is tied to the practical manner in which they approach it. This is emphatically not what AlphaGo does. As a result, the anthropomorphic description Silver and colleagues offer – a familiarisation of this unfamiliar technology with reference to the human forms of reasoning its processes supposedly resemble – is undermined in its elaboration. As Collins puts it (2018: 93):

“Too often in the field of AI, words that describe human abilities are used to describe programming methods; the terminology confounds the analysis of the difference between what computers do and what humans do. The computers seem to be doing what humans do by definition: they ‘learn’, they ‘think’, they ‘decide’ and so forth . . . In this way, [what] the term ‘deep learning’ [means] could appear to have [been re]solved . . . through anthropomorphic definition . . .”

Silver and colleagues’ account of how AlphaGo arrived at move 37, as reported by Metz, bypasses the problem of describing the move in just this way. AlphaGo’s doings, including move 37, are not made any more tractably understandable by this way of commentating upon them; quite the reverse. Their ‘tractable intelligibility’, in other words, is descriptively limited – that is, limited to the descriptive account offered as a ‘packaging device for elements of culture’ (Schegloff, 1992: xli). However, we do not need to look to Harry Collins, or Schegloff, for a response in those terms.

On YouTube, where our ‘Move 37!!’ clip was posted, anyone can comment and comment they did, drawing on vernacular not ‘expert’ or ‘technical’ competencies to do so. We want to focus on just one response here, that of ‘User X’ (anonymised), tucked away in the list of comments beneath the video clip. Picking up on Redmond’s speculations about what Black, that is, AlphaGo, might have been ‘thinking’ in making move 37 later in the clip, User X offered a short, ironic acknowledgement of the move, highlighting the absurd anthropomorphism in the livestreamed game commentary and the subsequent claims made about it by lampooning the commitments they implied: ‘Did he [the game commentator, e.g. Michael Redmond] say thinking? Alpha[Go] is thinking? Lol [laughing out loud]. That settles it then. Artificial Intelligence has finally become reality’. Elegantly deconstructing the use of definitional fiat to advance substantive empirical claims, User X’s comments undermine the idea that AlphaGo has done something simply because someone has said it has. Such naïve engagements point us to the ordinary resources that can be deployed by those curious about what might be going on with AI without being misdirected by talk of thinking. Faced with the assemblage of descriptive commentary that the intelligibility of AlphaGo’s moves is entangled with, User X here acts as a Sacksian critic, pointing to the doings being achieved through the sayings and suggesting we need not accept that things stand in the way those commentaries present them as so standing.

Conclusion

In 1985, some 30 years after the premature death of the pioneering documentary filmmaker Humphrey Jennings, a book based on his extensive research, Pandaemonium, 1660–1886: The Coming of the Machine as Seen by Contemporary Observers, was published. In it, first-hand accounts of the appearance and rapid proliferation of new industrial technologies witnessed first-time-through were juxtaposed to produce a collage designed, among other things, to retopicalise a feature of our world – machines – now so familiar to us as to have become ‘specifically unremarkable’ (Garfinkel, 2002: 118). Consulting the book, we find out, for instance, what might be involved in adequately describing to non-present others via written correspondence and textual reflections what it’s like to see a train. Just as importantly, we find those commentators searching for adequate means of explaining what a train is when they could not presume ‘mechanical experience’ among those they were writing for. The effect is fascinating, and slightly disorienting, making this akin to an anthropological ‘first encounter’ literature, but one written about the societies we ourselves inhabit in our ‘normally thoughtless’ ways (Garfinkel, 2002: 212–216). A particularly notable point is that these contemporary observers, operating in non-mechanical or barely mechanical contexts, did not lack methodic ways of making the ‘revolutionary’ technologies that emerged from the 17th, 18th and 19th centuries’ continuous ‘gales of creative destruction’ (Schumpeter, 2003 [1944]: 81–86) intelligible. The strange was not just made familiar, it was also judged and often penetratingly critiqued in and through the descriptive commentaries they offered. While they could not have foreseen how the processes whose initial progress they had witnessed might develop, there remains a great deal to be gleaned from how all those observers went about their work of description, something Jennings grasped when he began gathering these vernacular accounts together.

While we cannot claim to have done anything as grandiose as Jennings, we do think there is a great deal to be taken from an exploration of the coming of the digital machine as descriptively conveyed by contemporary observers – something we get at least an initial flavour of in the different kinds of commentaries woven around ‘move 37’. None of us have seen anything quite like AlphaGo before and while we are dependent on commentary for awareness of it – the staging of the match and its dissemination via YouTube and the reporting of journalists like Metz, for instance – that does not have to dictate the terms of its reception, nor does it fix what AI has to be taken as. Technologies, as Suchman, Collins and others have demonstrated, are not merely their technical properties; they are defined by how we involve them in our practices. In the case of AI technologies, those involvements are still developing and we need to see both saying and doing as interlinked practices central to their continuing elaboration. 9

As Demis Hassabis (2017), Google DeepMind’s (co-)founder, has conceded, AlphaGo does not know what ‘playing’ or ‘winning’ as such are. ‘Success’ – the point at which ‘the machine’ stops executing further runs of the algorithm it is built to process – is written into it in entirely mechanical, non-meaningful terms. 10 That is where these technologies have succeeded, as the designers themselves note (Silver et al., 2016, 2017), not in mimicking the human but as highly engineered problem-solving systems (which, we might add, recast ‘the human’ accordingly). But if we are judging these technologies by their effectiveness at advanced but, nonetheless, still mechanical forms of problem-solving and task execution, then we should be wary of using a language which projects ‘psychological’ and ‘agentic’ properties onto them – something Gilbert Ryle (1949) in his talk of ghosts and machines could be read as warning us about (Brooker et al., 2019). When we talk of AlphaGo’s ‘success’, we are at least partially talking about the success of Hassabis and others in developing the technology. In eliding the long-term and ongoing work involved in the creation, operation and maintenance of the technologies involved, as Lucy Suchman has discussed in several publications (e.g. 2019), we fail to get to grips with what we’re dealing with and fetishise the technology – at least in terms of how that ‘what’ is dealt with by participants and defined by the unfolding situation it happens to be part of (Hutchinson et al., 2008: 96).

It is due to our hesitancy around the claims being made on behalf of AI that we have wanted to take a step back and, following Sacks, treat descriptions of AI as a practice – one among and connected to others – to see what they’re for and what work they’re intended to do as a way into current thinking about AI. In other words, and as our title suggests, we have wanted to begin to puzzle out just what we’re doing when we’re describing AI in these or other kinds of ways. What we’ve seen in the case of AlphaGo is that ‘inquirers into the machine’, to return to Sacks a final time, will approach the work of making sense of it in different ways, according to their practical projects and interests, and generate different commentaries as a result. Moreover, these commentaries – descriptions of what an algorithm is doing, could be said to have done or could be said to be by virtue of its performance – can generate all sorts of troubles; commentators get AlphaGo’s ‘intention’ wrong and YouTube users contest the implications. AlphaGo, in this episode, was opened up to a ‘vulgar critique’, with move 37 establishing a situation in which the ‘mastery’ of the programme and/or those nominally responsible for explaining ‘it’ could be called into question via characterisations of what AlphaGo is good at, what it does, what it’s for, what it is similar to, and so on. The upshot for us is that rather than draw lines around technologies like AlphaGo, we need to explore the ‘configurations’ of humans, machines and their interrelations implicated in, and as, their worldly careers (Suchman, 2007). It is there that a concern for description has a role to play. Instead of trying to establish what ought to count as the boundaries of an AI system, for instance, we need to attend to the ways in which such systems are embedded in, but also topicalised through, our descriptive practices.

This leaves us with questions we feel are worth asking with respect to the problem of description, whether in the social sciences or the arts and humanities. How are descriptions put together, assembled or made? Why? By whom? What do they highlight, on which basis, for which purpose or purposes, to whose benefit and with which implications, if not at what cost? What might constitute a fruitful focus for thinking these matters through? Answers to this set of questions, posed with respect to determinate cases, may usefully yield working definitions of ‘the politics of description’ as Sacks took it up, leading to the kind of account we have tried to deliver here in relation to the contentious matter of whether a machine can rightfully be described as playing or is merely taking the fun out of Go (cf. Sormani, 2016).

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.