Abstract

Are development practitioners interested in research? If so, what kinds of research interest them, and for what purposes? Is the research they have access to meeting their needs for program design and management? These questions are central to understanding why research is used (or not), yet they are often overlooked by efforts to promote development research use. I interviewed practitioners in Washington, DC and Uganda to explore how they relate to research, and I identified six types of interest in research. Understanding practitioners’ diverse interests in research, and better aligning research agendas and knowledge mobilization efforts with them, may lead to more research use and more informed development practices.


I. Introduction: Why Practitioners’ Interest in Research Matters

An American Research and Learning consultant 1 , whom I interviewed near her office in Washington, DC, described meeting with a Kenyan climate change program coordinator during a trip to Nairobi. The Kenyan coordinator admitted, with some embarrassment, that he had been carrying around a 2-page research summary in his notebook for the past year that he ‘had not found time to read yet’. The consultant was exasperated by this because the headquarters team had done everything right according to knowledge mobilization best practices—they had gotten an attractive, brief, non-technical research summary into the hands of the intended audience (the program staff). However, the last links in the chain—the staff reading, digesting, and using the information in some way—had not occurred (Interview 15, described later in this paper). The consultant was frustrated and unable to understand why the coordinator had not ‘found the time’ for it. However, she did not consider whether the 2-pager met the coordinator’s need for information—or whether it provided information that he perceived as valuable or actionable. She was working within the dominant development research knowledge mobilization framework that focuses on ‘push’ factors—how information that researchers produce can flow more effectively to users. This dominant knowledge framework overlooks ‘pull’ factors such as potential users’ interest in the research, as well as their autonomy within their institutional context to decide whether or not to consume, prioritize or use certain materials.

The question of development practitioners’ interest in research relates to the Evidence-Based Policy field, which includes empirical works that inform our understanding of how frontline social services workers relate to research (Dobbins et al., 2009a; Nutley et al., 2007). The question of practitioner interest is also related to the broader question of research use, which is the subject of an intense effort by funders and advocates of certain types of development research (mostly randomized controlled trials, or RCTs) (Savedoff et al., 2006). This ‘knowledge mobilization’ work is concerned with certain barriers to research use and how to overcome them, but it does not consider the perspective of practitioners, what they may want from research, and whether the research being presented to them meets their perceived needs. The American consultant mentioned above is part of this knowledge mobilization effort, and it is not surprising that she had not considered the Kenyan colleague’s interest in or demand for the materials when trying to make sense of his remarks, because this field of work does not generally ask what practitioners want.

Two other kinds of literature shed light on important aspects of this question of practitioners’ interest in research. The program evaluation field centres the question of use and usefulness to practitioners, providing a model of how social science could be more demand- and user-driven (Patton, 2008). The adult education field’s literature on motivation and how experience influences it provides useful insights that help us theorize the role of interest (Gorges and Kandler, 2012). These literatures are discussed in the next section of this paper, but briefly, they do not directly address the question of interest in research among development practitioners. This gap in empirical work and theorizing about development practitioners’ interest in research motivated this research project. I define ‘research’ broadly, as the findings or written products of systematic inquiries that involve data analysis and interpretation. My focus is on practitioners’ direct engagement with written products such as reports and articles, while keeping in mind that information about research is often accessed through professional networks and in short formats such as blogs and tweets.

I began this project by drawing on diverse pieces of the existing literature to develop a theoretical model about why and how interest in research matters to practitioners. This informed my questions about research interest, which I explored with Ugandan and American development professionals. I discussed their experiences with research, what research they find interesting, what research they would like to have and why, and how they have used research in the past. I then analyzed the interview data to inductively develop categories that represent different types of research interest. The contributions of this paper include a theoretical model which helps explain the role of practitioner interest in research use, and a typology of six types of research interest, which can be met by different types of research.

Through this research exercise, I find that practitioners seek out research for more diverse and complex reasons than researchers and research use advocates often account for. Also, many of the interests practitioners have are only partly met by available research and research production mechanisms. This raises questions about which research interests should be prioritized, who should influence research agendas, and how existing demands for research-based knowledge amongst practitioners can be met. It also suggests new opportunities to increase research use, by focusing on unmet needs and filling them. My hope is that this paper will bring attention to practitioners’ research interests and that a better understanding of practitioners’ information needs can lead to more interest from research funders, researchers and knowledge mobilizers to meet them.

Existing Literature Surrounding the Question of Practitioners’ Interest in Research

The question of practitioners’ relationship to research relates to two bodies of English-language literature, although both are focused on policymakers, not practitioners. 2 The first grew out of the UK’s New Labour Party’s slogan, ‘what matters is what works’, in the 1990s and early 2000s and the Government’s investments in producing, synthesizing and ranking research. What became known as ‘Evidence-Based Policy’ was a movement focused on quantification and the use of external, ‘unbiased’ impact evaluation to improve government services. Although the field’s premise and track record have met with extensive criticism (Cartwright and Hardie, 2012; Parkhurst, 2016), the resources invested in it and the influence of the concept have been significant. One group of studies that came out of this movement is particularly relevant to the question of this paper: the empirical work on social services providers. Detailed studies about research use in the UK (and to a lesser extent Canada and Australia) documented how service providers such as nurses, teachers, probation officers and social workers related to and used research (Cherney et al., 2015; Davies et al., 2000; Dobbins et al., 2009a; Dobbins et al., 2009b; Estabrooks, 1999; Grayson, 2007; Nutley et al., 2007; Zardo et al., 2015). These empirical studies greatly improved theoretical models of research use, moving them from linear models (e.g., research evidence flows from scholars to policymakers, who passively receive it) to more dynamic models focused on relationships, social systems, and factors that inhibit and facilitate research use. For example, this research has shown that users are more likely to trust and read research shared by people they know, which has informed the concept of ‘knowledge brokers’ who translate and adapt research products for intended users, and researchers’ direct engagement with intended users (Boaz et al., 2019).

This scholarship built on Weiss’s (1979) conceptualization of the uses of research. Weiss hypothesized that research use (or ‘utilization’) could include reframing an issue (conceptual or enlightenment use), informing a specific decision (instrumental use), and providing political cover for a decision made for other reasons (political use). The studies in the early 2000s led to the conceptualization of a fourth type of use: the insights produced through the process of conducting research (process use) (Nutley et al., 2007). These theoretical frameworks and empirical findings informed my theoretical model of practitioner research use and how interest may play a role in it, as described below.

Starting in the early 2000s, U.S.-based development funders and economists conducting experimental impact evaluations of development programs began advocating for more research production and research utilization in the development field. Building on the Evidence-Based Policy movement and adopting the ‘What Works’ part of New Labour’s slogan, the Center for Global Development convened the ‘What Works Working Group’ in 2003 and brought together funders, researchers, and research use advocates whose organizations and rhetoric would become influential in the following decades (Donovan, 2018; Picciotto, 2012; Ravallion, 2018; Savedoff et al., 2006). Along with the Center for Global Development, the Abdul Latif Jameel Poverty Action Lab (J-PAL) at the Massachusetts Institute of Technology, Innovations for Poverty Action (IPA), the International Initiative for Impact Evaluation (3ie), and the UK-based Centre of Excellence for Development Impact and Learning (CEDIL) have been instrumental in advocating for the use of RCTs in development. These organizations and related academics are convinced that the failures of the development sector can be corrected by conducting more experiments and using what is learned about ‘what works’ to replicate effective program models around the world (Banerjee, 2007; Cameron et al., 2016; Duflo, 2016; Levine and Savedoff, 2015; Stewart, 2019). Critical literature has highlighted a number of concerns with development experiments themselves and the theory that they can and should guide development funding decisions, including methodological issues (see Fejerskov, 2022; Guérin et al., 2020; Kabeer, 2020; Kvangraven, 2020a; Stevano, 2020), ethical issues (Berndt and Boeckler, 2016; Hoffmann, 2020; Kaplan et al., 2020), and questions about whether experimental results actually influence policymaking (Cartwright, 2011; Vivalt, 2017). Despite push-back against the randomista narrative by critical scholars and practitioners (see Eyben et al., 2015), the award of the 2019 Nobel Prize in Economics to three leading randomistas and the naming of another as chief economist to the U.S. Agency for International Development in 2022 demonstrate their continued influence (Kvangraven, 2020b; USAID Press Office, 2022).

Efforts by development actors to enable research to influence policymakers are referred to by several different names, including ‘uptake’, ‘utilization’, ‘mobilization’ and ‘translation’, so for simplicity’s sake, here I use the term ‘knowledge mobilization’ to refer to activities relating to the production and use of research results, including synthesis, dissemination, transfer, exchange, and co-creation by researchers and knowledge users (Canadian Social Science Research Council). Like the earlier Evidence-Based Policy literature, this knowledge mobilization is focused on policymakers. In the development field, this means aid agencies and private foundations in high-income donor countries who control funds and the rules for spending them, and elected officials in low- and middle-income countries.

This development research knowledge mobilization field includes academic literature, but it is also a field of advocacy and communications, institution building and outreach that is important to analyse because of its intellectual and real-world influence. This includes three broad streams of work: (a) improving research access by creating research repositories and disseminating briefs 3 ; (b) promoting methodological hierarchies and capacity building to encourage policymakers to select and use experimental research; and (c) encouraging ‘knowledge brokers’, such as the policy staff at their organizations, to bridge gaps between researchers and users, and fostering exchanges between practitioners and researchers (Parkhurst, 2016). Although the last strategy mentioned—exchanges between practitioners and researchers—may sound like something that might surface practitioners’ interests, I could not find any documentation that suggests this occurs. Instead, they seem to be either efforts to ‘match-make’ program implementers and researchers who can conduct studies on their programs, or trainings to teach the benefits of using RCTs and related skills 4 .

The starting assumption of this knowledge mobilization work is that the most important questions are about ‘what works’, that is, what causal relationships can be established through experimental designs (Levine and Savedoff, 2015). For example, if you do X (e.g., provide farmers with vouchers for fertilizer), then people will do Y (e.g., farmers will use an optimal amount of fertilizer and produce more food). This assumes that researchers know the most important questions because they know what has been demonstrated to ‘work’ or ‘not work’ by RCTs and systematic reviews of them, and therefore they know the gaps in evidence 5 . The knowledge mobilizers see their role as educating users about why RCT-based evidence is the most credible and important for their work, but they do not ask what those users may want (Kaufman et al., 2022).

The dominant development paradigm implicitly assumes that essential knowledge is held by those at the ‘top’ of the aid hierarchy (those generally sitting in London and Washington, who are overwhelmingly wealthy North Americans and Europeans), and that it must flow ‘downward’ to field staff and the people living in low-income countries and communities. This hierarchy assumes that the materials American consultants in ‘headquarters’ send ‘to the field’ will be accepted by those working there because they come from headquarters. In the anecdote that began this paper about the American consultant who was upset that her two-page handout was not used by the Kenyan practitioner, the American was implicitly accepting these institutional, power and knowledge hierarchies within the aid system. Although this mindset is widely viewed as outdated and colonial (Mignolo and Escobar, 2013; New Humanitarian, 2022; Wallace, 2020), the top-down nature of funding flows and the resulting power dynamics reinforce these paradigms, even as many consciously reject them on intellectual or moral grounds. It is important to acknowledge that the knowledge mobilization field is operating within these dominant frames, and that questioning assumptions about which (and whose) knowledge matters is to question this neo-colonial hierarchy.

As this brief overview explains, the Evidence-Based Policy literature provides useful insights into how individual service providers relate to research, but unfortunately, it does not extend to international development contexts 6 . Meanwhile, development research knowledge mobilization efforts are promoting a particular set of research products among policymakers. Thus, neither literature grapples with the question that is the focus of this paper: What do development practitioners want from research? However, there are two other literatures (evaluation and adult education) that are helpful in thinking about this question.

The American field of evaluation, linked by the American Evaluation Association and its annual meeting, is made up of professional evaluators who are hired by implementing organizations and donors to conduct evaluations of various types for the purpose of improving programs. Within their repertoire have always been impact evaluations that seek to determine the causal processes that lead to program outcomes. However, these professionals are largely separate from the knowledge mobilization actors described above (although a number of development RCTs have been presented at conferences in recent years). Rather confusingly, the term ‘impact evaluation’ is also used by academics who are seeking to make larger claims about ‘what works’ by running experiments funded directly by donors. They often need nongovernmental organizations to implement the interventions, but the purpose of these projects is to create knowledge about ‘what works’ generally, rather than improving specific programs. The ownership of these studies, and the control over the questions asked and methods used, are fundamentally different from the ethos and purpose behind the evaluation field.

Within the evaluation literature, understanding what inhibits or contributes to evaluation use has led to insights about the importance of early and frequent stakeholder engagement, building trust between evaluators and implementers, and appropriate forms of communication (King and Alkin, 2019; Patton, 2008). Many of these insights have been adopted by the knowledge mobilization efforts mentioned above, although the evaluation literature is rarely cited. However, perhaps the most important innovation within the evaluation profession has been the explicit focus on utilization. Pioneered and promoted by Patton (2008) and now largely mainstream within the evaluation profession, a utilization focus begins with the question of what clients want to know and how they will use evaluation findings. Nothing else about the evaluation design (the methodology, timeline, research team, and publication strategy) is decided before the needs and desires of the intended users are established. A utilization ‘mindset’ requires that evaluators ask users what they want to know, and specifically how they will use the findings of an evaluation. Key methodological and strategic decisions are then made with that future use in mind. The evaluator acknowledges that there are a multitude of valid perspectives on what is most important or interesting and that they cannot make this decision themselves.

There is a striking difference between this utilization mindset and the one that currently drives most development research, in which researchers and funders decide what is to be researched, and the methodological commitments of those researchers determine what questions can be asked. There are differences, of course, between the field of evaluation and social science more broadly. Evaluations are, by definition, for the purpose of improving implementation. Their goal is to be useful, and the people who are able to make use of them are often the clients. There may be conflicts among potential users, and the evaluator must find a compromise so that the evaluation can move forward. Social science research can be conducted in the name of building specific knowledge, for the goal of generalizable knowledge, or for policy or practical use. Social science publications are a form of capital that academics need to advance their careers, and accessing funding is normally required, so aligning with funders’ interests is important. While most academics working on development issues care deeply about contributing to positive change, their work is seldom judged by its usefulness to implementers. Therefore, the satisfaction of implementers who wish to use research is less important for most social science researchers than it is for evaluators. In addition to the different motivations of researchers and evaluators, the Evidence-Based Policy movement and knowledge mobilization efforts are founded on the belief that researchers and the powerful people who fund them should determine what research questions should be prioritized. In contrast, evaluators are generally not seen as legitimate decision-makers about the purposes or goals of an evaluation.

In addition to the evaluation literature, the use of research has parallels with adult learning, a branch of education research that seeks to identify factors that influence positive learning outcomes for adults from diverse backgrounds in various learning situations. Adult learning is most often self-directed and self-motivated, arguably making it similar to learning from research in a work setting (Merriam and Bierema, 2014). Research use and adult learning often involve searching, reading, and making sense of written materials, evaluating new knowledge that may conflict with prior beliefs, and integrating or rejecting that knowledge. This process may lead to more questions and more searching, making both the adult learning and research use processes cyclical.

Adult learning literature sees motivation as a key precursor to understanding how learning unfolds: ‘Adults’ learning motivation can be viewed as a necessary prerequisite for adult learning’ (Gorges and Kandler, 2012: 611, emphasis added). Studies of adult learning have demonstrated a strong relationship between the perceived value of information and the interest in new knowledge. Adults are motivated to learn what they see as valuable and relevant knowledge (Rossing and Long, 1981). The knowledge mobilization scholarship does not, however, make the same connection and treat internal motivation as essential to research use processes and outcomes. It presumes that the existence of seemingly relevant research is sufficient to motivate potential users. This appears naïve when considering the parallel with adult education.

The Gorges and Kandler study (2012) found that negative memories of learning experiences diminish motivation. Applying this to research use, a person’s negative experiences with accessing research—not finding what they want, not being able to comprehend it, or not finding what they access to be credible—may influence their motivation to find and read research in the future. This idea that the end result of one engagement with research may affect the likelihood of future engagements is reflected in the model I propose about how research use occurs.

A person’s decision to read something, even something that lands in their inbox or on their desk, is an investment of valuable time and energy. Seeking out new information—whether that be at a library, using an online search engine, or asking a social network—requires time, determination, and patience. The costs will inevitably be weighed against the perceived value of the information the person is seeking. Although research use may be possible without interest (e.g., if a policy mandate requires that research be consulted or cited), most of the potential users targeted by knowledge mobilization advocates have significant autonomy to decide if and how to engage with research.

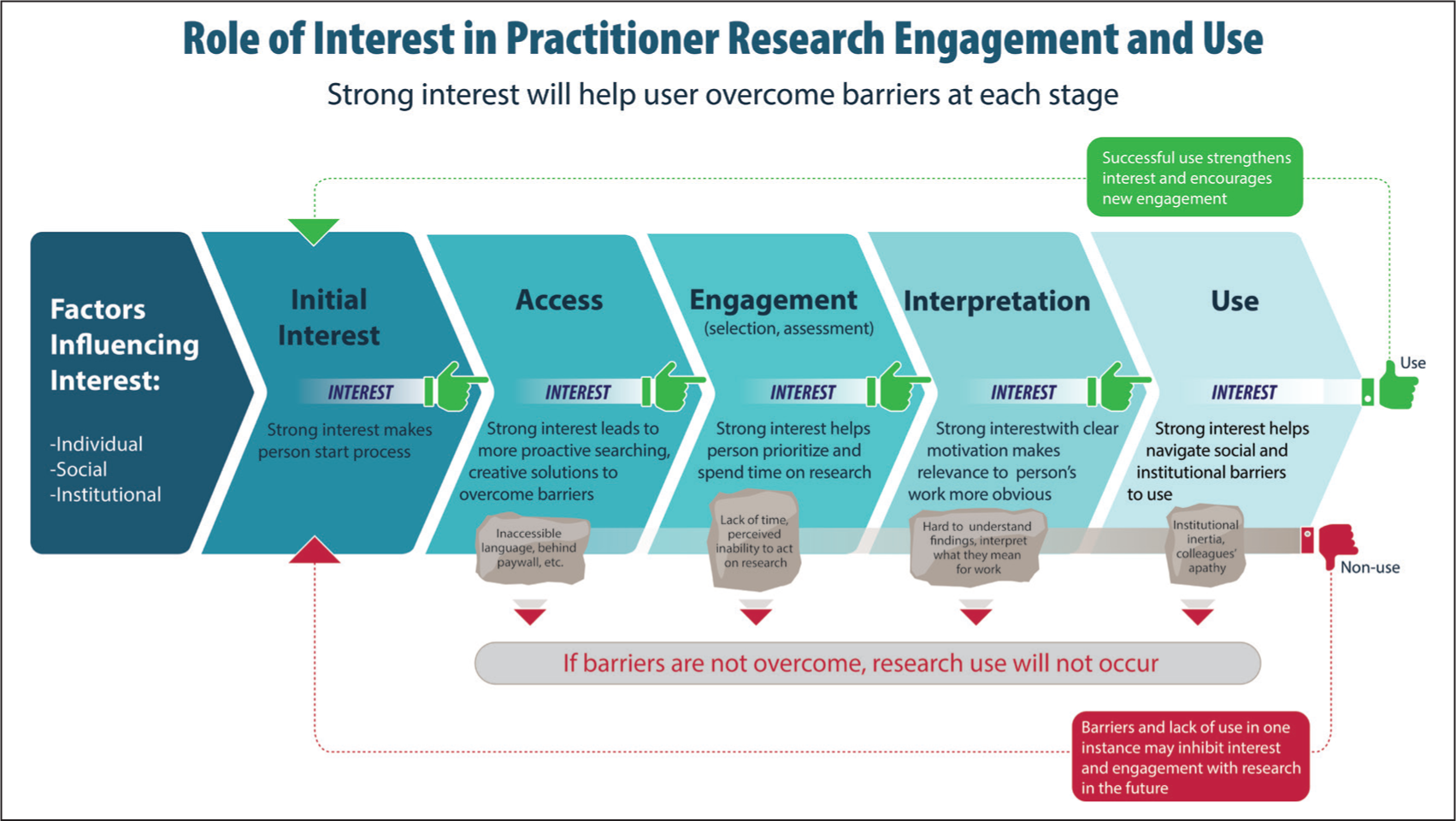

Proposed Role of Interest in Practitioner Research Engagement and Use.

The next section further elaborates on how I conceive of practitioners’ interest in research.

Practitioners’ Interest in Research

For this study, I developed a working definition of ‘interest in research’: a person’s desire to learn from research by engaging with research outputs. Research outputs include original research products, but also spin-off materials such as blogs, conference presentations, and other formats of presenting findings of a structured research effort. This meaning of ‘interest’ is distinct from the neoclassical economic use of the term. 7

Some may ask, since funders and researchers determine most research agendas, why does it matter what practitioners want? In response to that question: practitioners’ interest matters because it affects whether or not research is read and used. The theorized relationship between interest and outcomes is described in the accompanying diagram. As this diagram suggests, an individual’s interest affects the outcomes of every step in the process. For example, even after a person has downloaded a research product and decided to engage with it, there are several reasons they may still abandon it, including time constraints, a mismatch between their interest and the contents of the research, and a challenging writing style or technical methods. Therefore, the strength of their interest will affect their decision about whether to move ahead in the process.

Research-engagement processes are iterative by nature. As mentioned regarding adult education, when a person finds relevant and credible research, this may stimulate interest, leading them to search for more research. Or the experience of effectively making changes in their organization because of what a person learned from research may provide positive reinforcement that will make them more inclined to spend time reading research in the future. Conversely, if a person has a negative experience with research (e.g., it is written in an inaccessible language, they are blocked by a paywall, or they are unable to convince colleagues of its importance), they may be less interested in engaging with research the next time (Fischer-Mackey, forthcoming). In summary, a person’s interest in research may respond to their experiences and change over time.

By ignoring interest, knowledge mobilization advocates like the Washington consultant mentioned at the beginning of this paper will continue to puzzle over non-use even when best practices of knowledge mobilization are followed—and the number of potential users who take the time to read the research will be disappointingly low.

Empirical research use studies are by their nature limited to users’ engagement with existing research—one cannot observe a user’s interaction with a product that does not exist—so the potential use of research that does not exist or is not accessible has been beyond the scope of the field (Boaz et al., 2019; Court and Young, 2003; Nutley et al., 2007). By focusing on the expressed interest in research (whether or not it is met by existing research), this paper complements empirical studies of research use, which are limited to research that actually exists. By asking practitioners what they value, do read, and would like to read if they could, this paper develops a typology of practitioners’ interests. Although this does not solve the challenge that people may not know what they want or why they want something, it does provide a starting point for new conversations about research agendas and areas for greater research impact.

A combination of conscious and unconscious drivers, or motivations, may lead to an active, conscious interest and (subsequently) expressed interest. There may be a disconnect between ‘real’ felt interest and expressed interest, but delving into this type of psychological question is beyond the scope of this paper. Given the lack of studies about practitioners’ interest in research, this focus on expressed interest is a logical place to start.

It is helpful to keep in mind that interest is not entirely dependent on individual characteristics. A particular instance of research interest may be motivated by multiple factors. For example, a practitioner may be motivated by curiosity and love of learning (an individual factor), a desire to seem up to date (a social factor), or a belief that their organization’s strategic review will provide an opportunity to change the course of the program they manage (an institutional factor). All three factors may contribute to their level of interest and type of interest.

Interest may also be influenced by the potential ways in which the research may be used. For example, if the practitioner discussed above can read a type of research that interests them, multiple types of use may occur: a reframing of the problem (conceptual use), an organizational decision to invest in a new solution (instrumental use), and even justification for not choosing a disfavoured course of action (political use) (Nutley et al., 2007). Thus, there may be multiple, interrelated motivations for a particular type of interest, and that interest may lead to multiple types of use. Even if research use is observed and the practitioner is interviewed, it may not be possible to understand their true interests and motivations, some of which may be unconscious or poorly understood by the practitioner themselves. Therefore, rather than hypothesizing about the motivations contributing to interest, this paper focuses on interest in research that practitioners can express when they are asked.

II. Methods

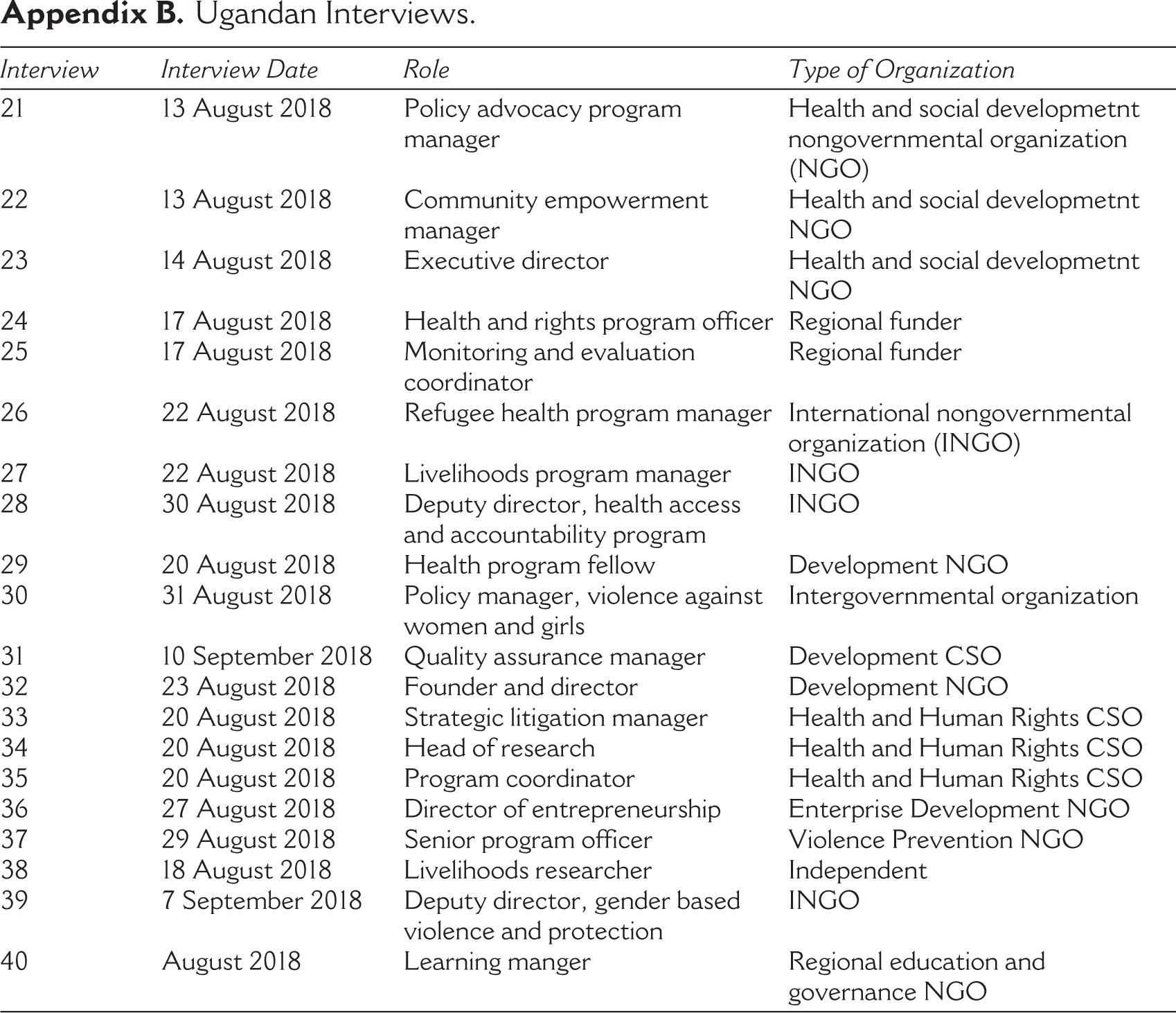

I conducted interviews in two sites as part of related projects about the role of research in the development sector. In both cases, I used a snowball sampling technique and interviewed professionals working in various sub-sectors of development. Over six weeks in 2018, I conducted 19 semi-structured interviews with Ugandan development practitioners working in Kampala in program management, advocacy, and monitoring and evaluation roles at national civil society organizations, national nongovernmental organizations (NGOs), international NGOs (INGOs), donor organizations, and inter-governmental organizations. Interviewees, all but one of whom had master’s level degrees and two of whom had doctorates, worked in sectors including health, human rights, women’s rights, economic development and entrepreneurship, and humanitarian and refugee relief. Many are regularly engaged with evaluations and program research.

In the winter and spring of 2019, I conducted semi-structured interviews with 19 practitioners working as technical specialists and managers at a large public development agency in Washington, DC. All but one had at least a master’s degree, and six had doctorates. They work in sectors including population health (family planning and HIV/AIDS), democracy and governance, and learning and/or research. They serve as advisors and technical resource persons for in-country teams who are designing and implementing programs, and they are often involved in commissioning and reviewing research. 8

The dividing line between researcher and practitioner is not always clear. However, the interviewees were all working primarily on programs or using research, not on conducting research. A distinction was made in the interviews between research they or their organizations were doing and the research that others were producing. Although the individuals with research experience may have had a qualitatively different view of research than those who did not, such a conclusion could not be made with the limited number of interviewees. Therefore, the practitioners interviewed are treated as one group, regardless of their background. The practitioners from the US and Uganda are also grouped together, and I do not attempt to draw any conclusions about the differences between Ugandan and American practitioners’ interests or the different contexts in which they work because of the small number of individuals in each context.

Interviews were semi-structured, and questions were asked about how interviewees perceived research, used research, and wished they could use research. I also asked about their perceptions of the role of research in their work, reasons for engaging with research, and their views of researchers. Interview data was analysed and coded in ATLAS.ti to inductively identify the different types of interest that interviewees have in research. In the interest of transparency, it is important to note that my experience as a development practitioner and evaluator for many years has contributed to my interest and perspective on these matters. A feeling of comradery with other practitioners may have aided in interview rapport and affected my interpretation of their statements.

Findings: Types of Interest

I inductively identified six different types of interest in research. This typology is intended to be neither exhaustive nor definitive. Instead, it is meant to spur a conversation about the nature of interest, and the diversity of research that is potentially useful to practitioners. This exercise demonstrates that existing discussions by knowledge mobilizers capture only a small part of the practitioner’s interest in research and types of potential use.

The types of interest are divided into two categories: research about what is happening ‘here’ in a person’s own country or program, and research about what is happening ‘elsewhere’ in the world. I discuss each in turn.

Research from ‘Here’

Practitioners understand their work to consist of addressing social and economic problems and thus often look to research to better understand the nature and scope of the problems they seek to address. Practitioners expressed interest in contextual information about a problem both to inform their own actions and to persuade others of a need to act.

To understand the nature and scope of a problem, practitioners want context-specific information. This may come in the form of rapid situational analyses (often consisting of interviews and focus groups, along with reviewing existing sources of information) or survey data that allow estimation of the prevalence of a problem. This process was described by a Ugandan interviewee (Interview 28) this way:

And in the process of designing [the program], doing the context analysis, we do not just literally jump into searching primary information by going to the field. But we take a step and look at certain resources, then try to understand context from what is already in place. And most of the time you build this particularly from, say, the existing bodies of research that we have. For instance, when you are looking a lot into the health sector you cannot do much without looking at the Uganda Demographic Health Survey.

If the information needed is not available, it is often collected. Practitioners need this information to inform program design, which is central to the mission of their organizations.

A Washington-based technical adviser told me that, in Pakistan, a local research consultancy conducted targeted surveys to understand issues in particular provinces: ‘Any time there’s a research question like, “What’s the situation with gender in this province?” you could go to this research agency and just say, “We need this”’ (Interview 9). However, she noted that the research service was rarely used by others in her agency, even though its use was budgeted. A Ugandan interviewee said he wished that there was some mechanism by which practitioners could demand research and researchers would supply it—like in industry when they outsource a question to a lab, but his agency lacked resources for such research (Interview 36).

In addition to programming decisions, interviewees told me that information about problems is also necessary to inform advocacy strategies and to make policy claims. As one interviewee in Uganda relayed, in order to advocate for protection for marginalized populations, she needed information about how many people there were in the country and what types of discrimination they faced (Interview 39). In one example, she needed better information about people with albinism, including how many of them lived in Uganda and what types of discrimination they faced, in order to determine whether time and money should be spent developing an advocacy program for them. In other cases, problems are well-known but need to be documented systematically so that the evidence can be used in advocacy efforts. For example, another Ugandan organization uses the documentation of cases of human rights abuses to publicly advocate for changes to national laws and policies (Interviews 33, 34, 35).

The practitioners interviewed believe that their need for information about a problem their work seeks to address can be satisfied by many types of data and information, including new research. Several interviewees noted that they want more of this type of research and that the usual constraints on getting it are time and money.

A program is a collection of activities implemented in coordination to accomplish a specific set of goals. Increasingly, programs involve complex theories of change, are implemented by coalitions of actors, and take place in multiple geographic sites. Interviewees expressed an interest in understanding how and why a specific program is working—which some referred to as ‘operational research’ or ‘operational knowledge’. Program staff whom I interviewed would like more research about the actual functioning of programs for several reasons.

Some practitioners expressed a desire for operational knowledge to learn about and improve a program’s design or implementation. Operational research, many felt, can help identify bottlenecks and improve processes and performance. Several interviewees stated that they wished there were more operational research studies, but that there was little funding to support such research (Interviews 6, 2, 7). A Ugandan refugee program manager said: ‘I think there should be a little bit more operational research into humanitarian work and development work … the questions should be generated by the staff that are in a given setting’ (Interview 26).

One interviewee suggested that operational research is necessary to learn how to scale up programs. A public health specialist in Washington expressed a need for information on the how and why of behaviour change and to understand implementation challenges in order to scale up programs more effectively (Interview 5). It may be that the information needed for scaling up programs goes beyond operational research because of contextual differences, economies of scale, and other questions, but I was unable to follow up on this matter with the interviewee.

Interviewees described how operational knowledge may also be of interest for accountability purposes. Audits, transparency, and upwards accountability requirements are increasingly stringent. Research efforts that contribute to this type of knowledge may incorporate monitoring data, financial data, performance reports, or other routinized data. As one interviewee noted, accountability is never going to go away, and there will always be a need to have performance indicators (Interview 8).

Another interviewee suggested that more research should focus on what he called ‘donoring’, or the operations associated with awarding and disbursing grants and contracts intended to enable development. The role of the donor itself is rarely the topic of development evaluations, even among otherwise seemingly self-reflective donors. This interviewee’s sentiment that improvements can be gained by examining the how of giving aid, as opposed to simply the what of aid, is in line with agreements such as the Paris Declaration on Aid Effectiveness and the subsequent Accra Agenda for Action, and with arguments made elsewhere (Anderson et al., 2012).

Research that meets this interest in operations may include process evaluations and other types of evaluation, operational or systems research, and analysis of routine monitoring data. Many larger organizations have budgets for such research, but there is apparent interest in more information than is available.

The practitioners interviewed also expressed interest in research that provides justification or political protection for a particular program or organization. Research that demonstrates the positive impact of development programs is very important for an agency under threat from aid sceptics and budget hawks. In particular, those working on governance and democracy themes in Washington, DC describe themselves as being under constant threat, which makes their strategic use of research to legitimize their work a natural response to their institutional context.

One interviewee told me that her unit was founded to direct a new research program (primarily made up of RCTs and quasi-experimental studies) in order to provide legitimacy to an existing thematic area of work. She said,

‘We realized that as a sector, we really needed more evidence behind the sector, and we needed to tell our story better. So, we got [a public research agency] … and [they] basically advised us on how we could incorporate more rigorous evidence into the sector’ (Interview 4).

She is aware that her interest is in obtaining research that will provide her with political cover to do what she is already doing, not for learning purposes.

The same interviewee provided another example of how a specific study legitimized an approach. An RCT found a pilot program in Haiti providing legal services to people awaiting criminal trials in pretrial detention facilities to have positive outcomes and be cost-effective. The success of the pilot and its cost-effectiveness led the Haitian government to take over the program. She said:

We have a success story that we’re promoting right now from Haiti … the [impact evaluation] showed two things, a number of things but for me two headlines. One, it reduced by over 50—well around 55%, the number of prisoners being held in pretrial detention, for nine months or more. … Number two, the amount of money the government saved on detaining prisoners and paying all their costs was more than enough to pay for the lawyers to move their cases forward. As a result of this … the government of Haiti, the parliament of Haiti passed legislation to create the first-ever legal aid service in Haiti. (Interview 4, emphasis added)

Her emphasis on the numbers and cost-benefit analysis may indicate that such evidence is particularly important for policymakers. This example indicates that a program’s survival may hinge on the existence of a particular type of research that demonstrates impact. It is too soon to know the outcome of the legislation or whether the program will indeed survive, but according to the interviewee, the research was key to building political support for the program. Several interviewees mentioned this RCT’s positive findings, indicating that they had a strong interest in promoting it.

An interviewee shared another example of interest in demonstrating success in Afghanistan: ‘We did [an impact evaluation] in Afghanistan specifically on community-based education … We actually found that the impact evaluation was very useful in justifying because there are a lot of people even in the agency that question the concept of [the program]’ (Interview 10). This use of impact evaluation findings highlights the importance of research that legitimates an approach for stakeholders within the agency. Often, aid agencies are thought of as unitary actors, but according to many of my interviewees, intense competition between divisions and among actors with different viewpoints makes research an important tool for protecting territory or budgets even within the same organization (Interviews 1, 2, 5, 9, 12, and 16). Therefore, research can be valuable in intra-agency political struggles.

Success stories used for justification may take many forms, but these interviewees preferred experimental and quasi-experimental impact evaluations that link their intervention to a positive outcome. These positive findings may lead to the scale-up or replication of programs, but the studies may simply be used for political protection so that work can continue unchanged. This is not the type of use that RCT advocates, such as Innovations for Poverty Action, hope for (Savedoff et al., 2006).

The examples of interest in success stories all came from interviewees in DC, while none of the Ugandan interviewees described this type of interest. This may be interpreted in at least three ways: DC interviewees work in a single agency under constant threat of budget cuts, and thus those interviewees are more focused on success stories than those in Uganda; RCTs carry more weight in DC, making interest in specifically RCT-linked success stories more prevalent; or I encountered this pattern by chance. Determining whether any of these explanations is correct would require additional research.

Research from Elsewhere

The next three types of research interest expressed by interviewees focus on what is happening elsewhere—in another program, country, or globally.

Practitioners are interested in research that keeps them on top of changes in their fields. In the health science field, changes are rapid and profound, making this particularly important. The clearest example shared with me was from a health advocacy professional in Uganda (Interview 24):

In my previous role I worked as a Technical Advisor for World Health Organisation, and I needed to be on top of recent information about disease control and programming in development work. So, I just had to be on the outlook for updates.

It is possible that the nature of scientific breakthroughs in the health field may make the need to be on top of new research more acute than in social fields where research progresses more incrementally. However, several interviewees from other sectors mentioned reading industry newsletters to stay up to date on new research (Interviews 1, 8, 12).

There is an interest in research that inspires. One way practitioners may be inspired is by example. Practitioners want to learn about some program or strategy that—in the face of great odds—was effective at addressing a complex and overwhelming challenge. This type of interest is for hope and inspiration, not a cookie-cutter program that can be replicated, or a principle that can be generalized. The interest in an inspiring example is not that the formula was discovered, but that positive change is possible. Research that describes a positive change can be inspiring, regardless of context.

A deputy director for gender-based violence working for an international NGO in a refugee settlement in northern Uganda told me that she wanted research that helped her understand how social norms about gender can be changed (Interview 39). She reads the reports of other non-profit organizations to find examples and learn about their work on gender issues in Uganda and the region. Ideally, she says, she will find creative strategies and examples of success to give her hope that changing seemingly intractable social problems is possible.

Another type of inspiration can be intellectual stimulation and new ways to conceptualize issues. An example of this type of interest came from a health and human rights research director in Uganda, who told me that a law journal article about presidential powers in the United States inspired her to think differently about the Ugandan presidency (Interview 34). This conceptual use of research, which Weiss (1977) refers to as the ‘enlightenment model’ of research, is an example of an idea inspiring a practitioner by providing a new way to think about an existing problem.

A practitioner’s interest in hopeful examples may be satisfied by the narratives provided in NGO reports and commissioned research and evaluations. Although such reports may be criticized for selection bias or failure to identify a causal mechanism, the fact that gains are made in difficult contexts, which provides some hope for forward momentum, may be sufficient for practitioners.

Another type of interest is in research that provides ‘rules of thumb’ or general principles to guide practitioners’ work. This may be in the form of best practices, for example, as stated in WHO recommendations, or it may be general principles of how to work. While a consensus forms around some practices over time, others are contested, making research helpful to arbitrate between competing principles. Interviewees believe that best practices can help an organization avoid mistakes and improve its effectiveness.

As one health and learning manager in DC told me, when she first started in her position focused on research utilization, she heard that everyone knew what best practices were and it was just a question of getting them into programs (Interview 13). However, as she interviewed people, she found that there was no consensus on best practices. This lack of consensus led her to develop a process to codify what she calls ‘high-impact practices.’ She worked with stakeholders at partner agencies to develop a process that involved identifying areas of concern or promising innovations among field practitioners, conducting literature reviews, and distilling guidance about the practices or actions that should be implemented in different contexts. The idea is to develop flexible guidance that can be adapted to local needs and contexts while maintaining the best possible health and social outcomes. Although I was not able to speak with potential users of these materials to assess their level of interest, an interview with a former field practitioner in the same division suggested that field staff are interested in general guidance such as this (Interview 19).

Interviews with practitioners working on governance issues in DC indicated a desire for general principles about whether to support civil society that cooperates with government or plays a watchdog role (Interviews 16, 12). To satisfy such demands, some practitioners I interviewed look to literature reviews or systematic reviews. However, they noted that it is often difficult to draw inferences due to the differences between cases studied and their own work. Also, as Cornish (2015) has argued, systematic reviews that claim to provide a definitive answer on a particular social or political issue can lead to readers having an inappropriate level of confidence about what is ‘known’ about a topic. Systematic reviews that limit their scope to experimental studies, which are a small and unique subset of social and political change studies that are usually about induced, rather than organic, change, may be particularly prone to drawing invalid generalized conclusions.

One former Washington-based interviewee told me that he was involved in a research project designed to answer the question of whether decentralization ‘works’ at improving democratic governance (Interview 16). Such a desire for rules of thumb is not uncommon in the complex and difficult field of development. Although such solutions have proven elusive, as they did in this case, some practitioners still hope that further research will provide general guidelines about what to invest in and what to abandon.

III. Discussion

As this inductive exercise demonstrates, practitioners have diverse interests in research. Some interests, such as operational knowledge and legitimacy, are well-known within the development sector. Other interests identified are less widely recognized, such as the use of research to inspire hope. These different interests can be found in a single person; they are not contradictory or based solely on the position of the potential user. However, which interests are prioritized when opportunities to conduct or commission research arise depends on particular institutional and social factors.

A common refrain amongst researchers is that ‘practitioners are not interested in research’. My research demonstrates that practitioners are interested in what research might offer them, but this interest may look different from what those who produce or promote research expect. Also, this exercise reminds us that what is ‘useful’ depends on whom you ask, as the types of ‘use’ articulated by interviewees were much more diverse than the replication of ‘what works’ that knowledge mobilizers are promoting.

If practitioners are interested in research but are not using the research that is offered to them (even in easy, 2-page formats), a plausible explanation for this is that the research being offered does not match their interests and needs. We cannot know how the research would be used if it met the expressed demands of the interviewees until such research is available to them. This will require a shift in how research agendas are set and how research funds and skills are used. Understanding research use in practice would also require a more expansive understanding of ‘use’ than the ideas promoted by knowledge mobilizers about replicating ‘what works’. The works of Weiss (1979) and the empirical Evidence-Based Policy literature provide the basis for a more comprehensive understanding of research use.

A common complaint from interviewees was that they could not find the context-specific information they needed. It is sometimes unclear to them whether such information exists already, or whether new research is needed. Based on my interviews and knowledge of the robust, yet poorly coordinated research and data ecosystems of Uganda, I suspect that many of the questions Ugandan practitioners have could be informed, if not fully answered, by existing information. However, finding such information is not easy. Journal paywalls, disparate and disconnected databases, and limited time and money are just some of the barriers to effective use of existing research and data. The skills to find and make sense of such information, and then apply it to the needs of practitioners, are seldom available to practitioners.

If we could imagine an effort to bring together resource librarians and development researchers with quantitative, qualitative and language skills so that practitioners could call them with questions, a great deal of existing research could be put into use, and unmet information needs could be satisfied. New databases, literature reviews and systematic reviews help in consolidating information in particular places, but a customized, demand-driven resource centre with live researchers would offer a different type of access to research findings and be able to answer real felt needs for information, insight, and advice. Considering the vast resources currently spent on original research and knowledge mobilization by large research funders, there could realistically be resources for a demand-driven centre along these lines (Kaufman et al., 2022). Of course, the quality of the information being shared from such a resource centre would only be as good as the information and researchers comprising it. A methodologically driven resource, like 3ie’s experiments-only database, could lead to more frustration and a skewed understanding of the existing research-based knowledge. Still, ideas such as this one that seek to meet the unmet needs of practitioners deserve attention from those interested in expanding research use and sharing of knowledge.

Another common frustration expressed by interviewees was that the time and funding for operational research were lacking. Skilled researchers and evaluators could partly address this problem if funding were increased or reallocated and practitioners had a greater say in what research is conducted. However, the short timelines of grants and the rush to begin and scale up programming without adequate planning time are structural problems within the development system, and they require dedicated attention by funders and implementers (Anderson et al., 2012). Therefore, larger structural factors beyond the research production processes are a barrier to addressing this problem.

Legitimation was an important use of research that practitioners described, particularly those in Washington. RCTs were viewed as a valuable tool in legitimating a programmatic approach. The need for legitimation, and the use of research for it, will not be surprising to anyone familiar with the development sector’s competitive landscape (including intra-institutional competition). That practitioners want research that justifies their existing work does not mean that biased research should be produced just to please them. Researchers will always have an important role to play in refining research questions, developing sound methodologies, and conducting research according to the standards of their disciplines. Researchers working within traditional modes of social science may strive towards objectivity or even have a goal of generalizable knowledge, whereas critical or activist-aligned researchers may embrace an explicit discussion of their priors and biases, each in accordance with their own research paradigms. There are various legitimate types of research, just as there are various legitimate interests in research and uses of research.

Practitioners discussed the need for ‘rules of thumb’ to guide them. Somewhat surprisingly, they did not refer to development RCTs or the ‘what works’ framing when discussing this. As mentioned above, they did mention RCTs for legitimation purposes, but not for learning or generalized knowledge. In fact, many interviewees noted that very few of the approaches proven to ‘work’ by RCTs (Banerjee et al., 2017; Duflo, 2016) have been successfully replicated or scaled up. It may also be that the distinction between what worked in one instance and what will work in another instance, which Cartwright and Hardie (2012) beautifully explain, is readily apparent to practitioners who are steeped in the details and intricacies of the contexts in which they work. It might be surprising to knowledge mobilizers that the practitioners interviewed appeared sceptical of their central claim that RCTs could provide universal knowledge about ‘what works’. The demand-driven research resource centre I imagined above might be one way to better meet this demand for rules of thumb. Researchers at such a resource centre could conduct requested reviews to develop research-based guidelines on a topic or question.

The finding that practitioners are interested in research that inspires them is a reminder that others may not know what a person needs or wants. Practitioners require access to research of their choosing, not just research that others deem to be important for them. Similarly, to stay abreast of changes in the field, practitioners need to be able to access the latest and best research produced. Efforts to decrease barriers to research access, such as by granting journal access to those working in low-income countries, are important to democratizing knowledge and building equitable access to information within the development sector. 9

IV. Conclusion

A natural conclusion from these findings is that more demand-driven research could help fill existing needs. This could involve a different pathway to use than the model currently used by knowledge mobilizers. A demand-driven model of use would involve the following basic elements: (a) understanding existing interest in research, (b) influencing research funders and researchers to produce research that meets existing interest and/or devote resources to locating and analysing existing research and data, and (c) continuing existing knowledge mobilization activities (language translation, dissemination, multiple formats) to ensure that new research reaches potential users.

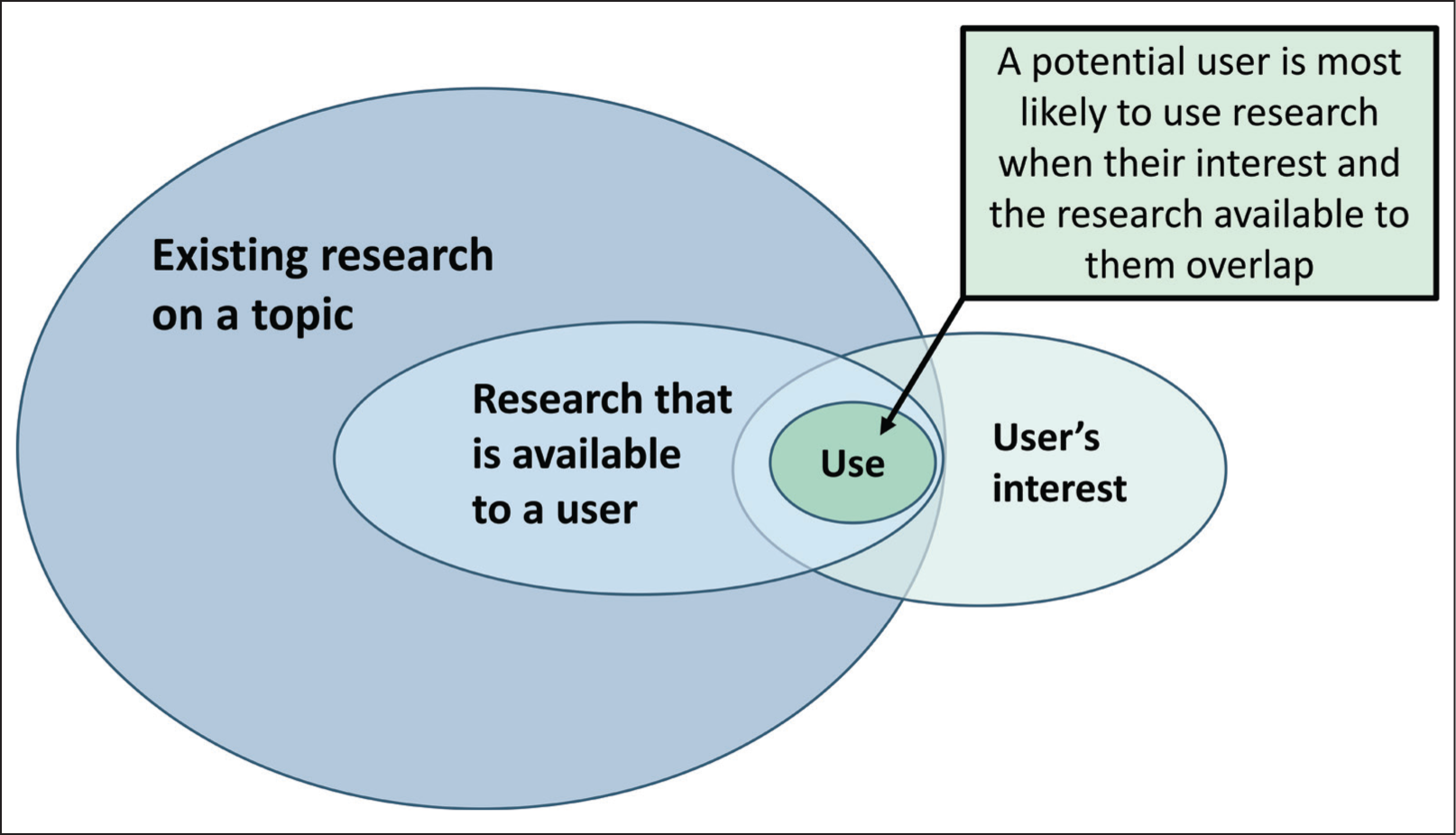

Figure 2 presents a way of visualizing why this demand-driven model may be both strategic and effective. It depicts the role of interest as it relates to existing research, research access, and research use. Of the research that exists, only that which is known and accessible can be used. Most of the use that occurs will take place where interest in the research also exists, represented by the overlapping region of the Venn diagram. Research use will most likely occur when all factors align, including interest.

Figure 2. The Relationship Between Existing Research, Available Research, Interest, and Use.

This model supports the idea that an expedient way to increase research use would be to produce more research for which there is already interest among potential users. Importantly, awareness of research and access to it would remain critically important in determining whether new research is used.

Although the development research knowledge mobilization field has been speculating on barriers to policymakers’ research use for over 20 years, it has not systematically investigated policymakers’ (or practitioners’) experiences with or perspectives on experimental impact evaluations or other types of research. It has not commissioned empirical studies to understand what policymakers (or practitioners) want. And yet, there does seem to be a new recognition that the types of research the field is promoting may be one reason that research is not used. A final report from the Centre for Global Development’s Working Group on New Evidence Tools for Policy Impact (Kaufman et al., 2022) mentions several barriers to impact evaluation use. It paraphrases the work of Al-Ubaydli et al. (2019), stating:

Unless intentional efforts are made, many impact evaluations overlook the political economy of reform in different settings and contextual factors such as service quality and implementation capacity that influence the relationship between the program and its results. Whether scaling a pilot intervention, adjusting a widespread program, or introducing a new innovation, complementary analyses on context, cost structure, implementation feasibility, equity, and political economy matter for policy impact (Kaufman et al., 2022: 9).

This demonstrates a heightened awareness that the needs of policymakers may not be met by the content of many impact evaluations. Policymakers need contextual, political economy, implementation and capacity information, and impact evaluations rarely include such information. This recognition that users’ unmet needs are one reason for the lack of uptake suggests there may be an opening for more engagement with the findings of this research exploring users’ felt needs for research. Another hopeful signal in the Centre for Global Development report suggests a greater interest in meeting demands for research. A final paragraph under the third recommendation, which is related to partnerships and building capacity of researchers in low-income countries, states:

Last, philanthropic funders and other interested donors should commit to mobilizing and pooling additional resources through a

This idea of a demand-driven research fund is only mentioned in this stand-alone paragraph, and unfortunately, it is not elaborated further in the report. The report recommends that governments of low-income countries, not practitioners, articulate research agendas, so it is not exactly aligned with my argument. However, shifting control of research funding and agendas to governments of low-income countries is one way to centre the questions and information needs of different people, and this is a welcome acknowledgement that the research priorities and information needs of people other than researchers and funders are legitimate and deserve attention.

The inclusion of these two ideas (that experimental impact evaluations may not meet potential users’ information needs and that a demand-driven research fund would be valuable) within a report that reiterates much of the same research mobilization narrative of the last two decades suggests that at least some of the Working Group members recognize that attention should be paid to users’ needs. This is hopeful because my research indicates that more inquiry into what users want is a fruitful, important, and perhaps strategic avenue for increasing research use and supporting informed development practice.

Those interested in promoting development research use may find that considering the perspectives and interests of practitioners is a productive area of inquiry. It may lead to new ideas, such as those I proposed here, to make research production and use more inclusive. This is important not just because making processes more inclusive and democratic is a way to address colonial legacies, but also because it may lead to research that is more useful and more widely used, which serves the shared goals of everyone working in development and development research.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author received no financial support for the research, authorship and/or publication of this article.

Appendix

Ugandan Interviews.

| Interview | Interview Date | Role | Type of Organization |
| --- | --- | --- | --- |
| 21 | 13 August 2018 | Policy advocacy program manager | Health and social development nongovernmental organization (NGO) |
| 22 | 13 August 2018 | Community empowerment manager | Health and social development NGO |
| 23 | 14 August 2018 | Executive director | Health and social development NGO |
| 24 | 17 August 2018 | Health and rights program officer | Regional funder |
| 25 | 17 August 2018 | Monitoring and evaluation coordinator | Regional funder |
| 26 | 22 August 2018 | Refugee health program manager | International nongovernmental organization (INGO) |
| 27 | 22 August 2018 | Livelihoods program manager | INGO |
| 28 | 30 August 2018 | Deputy director, health access and accountability program | INGO |
| 29 | 20 August 2018 | Health program fellow | Development NGO |
| 30 | 31 August 2018 | Policy manager, violence against women and girls | Intergovernmental organization |
| 31 | 10 September 2018 | Quality assurance manager | Development CSO |
| 32 | 23 August 2018 | Founder and director | Development NGO |
| 33 | 20 August 2018 | Strategic litigation manager | Health and Human Rights CSO |
| 34 | 20 August 2018 | Head of research | Health and Human Rights CSO |
| 35 | 20 August 2018 | Program coordinator | Health and Human Rights CSO |
| 36 | 27 August 2018 | Director of entrepreneurship | Enterprise Development NGO |
| 37 | 29 August 2018 | Senior program officer | Violence Prevention NGO |
| 38 | 18 August 2018 | Livelihoods researcher | Independent |
| 39 | 7 September 2018 | Deputy director, gender based violence and protection | INGO |
| 40 | August 2018 | Learning manager | Regional education and governance NGO |