Abstract

As news organizations increasingly adopt AI-driven technologies like news recommender systems (NRS), notions of responsible artificial intelligence (AI) design have attracted significant scholarly and public attention. Particularly, transparency and user control are crucial for the fulfilment of democratic freedoms and human rights. However, users’ desire for such features in NRS has not yet been sufficiently examined. Therefore, this study set out to comparatively examine how individual-level characteristics contribute to the desirability of transparency and user control in NRS across five nations (Netherlands, Poland, Switzerland, United Kingdom, United States; N = 5079). We show that the desire for features of responsible NRS is shaped by individual-level characteristics like NRS-related concerns and algorithmic awareness but does not always manifest equally across different national settings. By considering the audience’s view on features of responsible NRS, our study can be a stepping stone towards responsible journalistic AI.

Keywords

Artificial Intelligence (AI) is currently capturing the attention of scholars and practitioners alike. Broadly speaking, AI is concerned with the development of human intelligence in machines (Nilsson, 1998). AI systems use data and algorithms, i.e., a series of steps specifying how data should be used “to generate outputs such as content, predictions [or] recommendations” (EUAct, 2021: 39). As news organizations strive to save time and resources, they have increasingly turned to algorithmic and AI-driven technologies (Lin and Lewis, 2022), of which news recommender systems (NRS) are a prominent example.

News recommender systems are algorithmic or AI-driven solutions that filter, suggest, and prioritize news content based on previous or similar users’ behavior, explicitly stated user preferences or popularity metrics (Karimi et al., 2018). Although NRS can benefit users by helping them navigate the plethora of available information, personalized news can have broader societal implications. If NRS rely too heavily on past consumption, they can work against the ideal of a broadly and diversely informed public (Helberger et al., 2022). Additionally, the black box nature of AI technologies, particularly relating to their opaqueness and limited controllability, has been widely criticized (Diakopoulos and Koliska, 2017; Pierson et al., 2023).

In this vein, scholars use the term “responsible NRS” to describe NRS designed to avert undesired effects on users’ news consumption by adhering to democratic, journalistic, and legal norms (Elahi et al., 2022). Notions of responsible NRS often revolve around the concepts of diversity, transparency, and user control. Diversity relates to the range of topics, viewpoints, and entities, i.e., people, groups, and organizations, covered in the news (Loecherbach et al., 2021). Consequently, diversity-aware recommenders facilitate exposure to and active engagement with diverse news (Mattis et al., 2022). In turn, transparency and control aim to work against the opaque and uncontrollable character of NRS (Harambam et al., 2018). While diversity in NRS has become a concept of much interest in theoretical and empirical research (e.g., Helberger, 2019; Vrijenhoek et al., 2021), less attention has been paid to transparency and user control.

Transparency pertains to a “set of best practices regarding how users should be provided with insights about a system” (Elahi et al., 2022: 110). This can include designated website sections with information about the use of AI and data for recommendations (Diakopoulos and Koliska, 2017).

User control refers to users’ “possibility to exert control over the algorithms that curate [their] news provision” (Harambam et al., 2018: 4). Users may, e.g., adjust their recommendation settings or turn off NRS altogether, thereby making “the ideals of transparency actionable” (Harambam et al., 2018: 4). Thus, transparency and control are “intertwined” concepts (Storms et al., 2022: 10).

Transparency and user control of NRS correspond to democratic freedoms and human rights. Users should be able to freely decide which information they want to receive, express their informational preferences, and consent to the collection and processing of their personal data (Eskens, 2019; Harambam et al., 2019). These freedoms also relate to citizen agency and autonomy, i.e., the capacity to exercise control over one’s attitudes and actions without coercion or manipulation (Rubel et al., 2021).

Offering transparency and control in NRS may also be beneficial from an organizational and economic perspective. Since NRS replace or supplement human editorial decisions, citizens must be able to trust these systems as much as they trust the journalists (Pierson et al., 2023). Measures like transparency and user control may help decrease feelings of uncertainty and vulnerability, thereby promoting trust among users (Van Drunen et al., 2022), and ultimately helping news organizations retain audiences (Harambam et al., 2019). Despite this, audience evaluations of these features have not been studied thoroughly. A closer examination of which individual-level factors contribute to a higher or lower desire for responsible NRS is crucial for several reasons.

Recommendations not only shape users’ news provision but are also necessarily shaped by the data users generate and provide to these systems. This reciprocity demonstrates that users must have the right “to have a say” in the design of NRS (Helberger et al., 2022: 1618). Furthermore, as transparency and user control are democratically desirable, citizens should ideally see merit in them. Consequently, we must understand “citizens’ normative perspective on the technologies that affect the democratic discourse” (Helberger et al., 2022: 1618). Assessing factors that explain the desire for responsible NRS design can help news organizations develop NRS that not only better meet user demand, potentially increasing audience satisfaction and engagement, but also comply with legal requirements and journalistic missions. Analyzing this desirability and how it differs depending on micro-level dispositions can thus inform both practitioners and scholars about NRS design.

Additionally, as citizens’ assessments of AI in journalism may differ across media systems (Thurman et al., 2019), a cross-national perspective on how individual-level factors’ significance varies in different macro-level contexts is also crucial.

Thus, we aim to contribute to existing research on responsible NRS by examining how individual characteristics relate to the desire for transparency and user control in NRS (RQ1) and how these evaluations (RQ2) and relationships (RQ3) vary across five Western countries.

Audience perspectives on responsible NRS

Research on AI in journalism increasingly focuses on the responsible use of AI technologies in news production and distribution (Helberger et al., 2022). Scholars have advocated for a normative perspective on AI, tying the importance of responsible AI to journalism’s democratic objectives (Lin and Lewis, 2022). Consequently, inquiries into responsible AI and NRS are often theoretical or conceptual (Elahi et al., 2022; Helberger, 2019) and detail their significance for journalism, audiences, and the public good.

Personalized recommendations, which both impact users’ interactions with news and rely on user-generated data, make audiences “active players” in shaping digital news flows (Helberger et al., 2022: 1618). This can have implications for the formation of an informed democratic citizenry by promoting information behavior based on users’ preferences rather than what journalists consider important information (Mitova et al., 2022). Such interdependencies highlight the importance of considering audience assessments of AI-driven technologies that influence news production and distribution and further raise the question if and under which conditions users appreciate normatively desirable features.

Therefore, a fast-growing strand of empirical and experimental research addresses users’ assessments of and engagement with responsible recommenders. Most attention has been paid to how audiences assess diversity in NRS (Bodó et al., 2019) and how NRS impact content diversity (Bountouridis et al., 2019; Möller et al., 2018). These studies show that users appreciate diversity in NRS (Bodó et al., 2019) and that recommenders can nudge users towards consuming more diverse news (Heitz et al., 2022; Loecherbach et al., 2021). However, users’ consumption diversity also hinges on individual-level and situational factors like users’ news use frequency (Bodó et al., 2019) and recommendations’ positioning on websites (Loecherbach et al., 2021).

Studies also indicate that users generally seem to desire transparency about and control of NRS and consider them important features (Harambam et al., 2019; Monzer et al., 2020; Van Drunen et al., 2022), even if they do not necessarily use these features (Moeller et al., 2023). Users sometimes regard them as “two sides of the same coin” (Storms et al., 2022: 11), as transparency without opportunities to influence recommendations is perceived to be “pointless” (Storms et al., 2022: 11). Audiences also believe that more control over NRS would increase their trust in and satisfaction with media brands and the NRS they employ (Monzer et al., 2020). Corroborating this, Van Drunen et al. (2022) show that transparency and control are important drivers of users’ trust in media organizations using NRS. Yet, Moeller et al.’s (2023) recent experimental study indicates that control mechanisms do not necessarily increase user satisfaction.

Overall, research has focused on assessing the general desirability of transparency and control or the effects of such measures on user satisfaction. Not much research has analyzed which factors explain a higher or lower desire for these features.

Individual-level explanations of the desire for transparency and control

Research on user perceptions toward NRS and similar technologies provides a promising starting point for our inquiries.

First, privacy concerns can contribute to less favorable attitudes towards NRS, e.g., regarding their fairness and usefulness (Araujo et al., 2020). Users with higher privacy concerns are more likely to engage in protective measures like reading privacy agreements (Kokolakis, 2017). As transparency aims to address concerns about how personal data is stored and used (Diakopoulos and Koliska, 2017), we assume that privacy concerns and the desire for transparency are positively related. Similarly, privacy concerns may trigger responses amongst users to achieve their privacy goals, like actively managing their digital behavior (Kokolakis, 2017), and may therefore relate to a higher desire to control which recommendations they receive:

H1: Higher privacy concerns are associated with a higher desire for transparency.

H2: Higher privacy concerns are associated with a higher desire for user control.

Additionally, it is crucial to consider users’ attitudes toward NRS specifically. Research has shown that users believe that transparency and user control can help them monitor and counteract potential algorithmic biases and manipulations (Eslami et al., 2015; Monzer et al., 2020). Moreover, if algorithms make mistakes, people are more likely to consciously evaluate the decision-making process and engage in corrective behavior, such as attempting to modify or train the algorithms (Eslami et al., 2015). Thus, if users are more concerned about NRS and the accuracy of recommendations, they might have a higher desire for transparency and control to mitigate unsatisfactory algorithmic outputs:

H5: Higher NRS concerns are associated with a higher desire for transparency.

H6: Higher NRS concerns are associated with a higher desire for user control.

Studies further reveal that knowledge about algorithms and how they operate affects how individuals make sense of and cope with risks related to algorithmic systems, even if these latter assessments are not necessarily factually correct (Swart, 2021). Higher knowledge of algorithms’ presence, functions, and impacts has been found to stimulate “behaviors which negotiate or challenge the algorithms” (Min, 2019: 4). Users aware of algorithms’ presence on social media are also more likely to engage in behaviors that aim to proactively influence which information is shown to them by, e.g., editing their profile and ad settings (Eslami et al., 2015; Swart, 2021). Thus, higher algorithmic awareness might increase users’ desire to acquire more knowledge about how NRS work, and exercise control over the recommendations they receive:

H3: Higher algorithmic awareness is associated with a higher desire for transparency.

H4: Higher algorithmic awareness is associated with a higher desire for user control.

An additional consideration is the extent to which users generally trust that media are fair, objective, and accurate when covering the news. Audiences must be able to trust NRS because their recommendations may impact the receipt of relevant and diverse information required for informed political choices (Pierson et al., 2023). While research on media trust in relation to NRS and user desire for responsible NRS is still limited, Van Drunen et al. (2022) find that although transparency and control mechanisms are slightly more important to users with high trust in the media, users with low media trust also find these features important.

Yet, research on trust more generally suggests a negative association between trust and the desire for such measures. Users who trust media less may view transparency and control mechanisms as a way to critically evaluate the credibility of news content (Fawzi et al., 2021) and the NRS employed by news organizations. However, as not much research has been done on trust as an explanatory variable in the context of NRS, we pose an open RQ:

RQ1.1: How does media trust relate to the desire for transparency and user control?

Country-level influences on the desire for transparency and user control

The desirability of transparency and control may also depend on contextual factors. Although individual studies have examined users’ attitudes toward NRS comparatively (e.g., Thurman et al., 2019), the desire for responsible NRS has not been linked to macro-level specifics. Applied to transparency and control, several country-level factors may be particularly relevant. These factors include differences in cultural values, exposure to AI technology, democratic models, regulatory environments, and high-profile incidents.

Our analysis considers five Western media systems – the Netherlands, Poland, Switzerland, the United Kingdom, and the United States – where we expect these factors to vary to a greater or lesser extent. In terms of cultural differences, we have no hard evidence that the selected countries differ in the value they place on individual freedoms, privacy, and notions of autonomy and agency, which might then lead to different demands for transparency or user control.

Regarding exposure to AI technology in the media sector, news organizations in the Netherlands and Switzerland have been found to be more cautious in their experimentations with NRS, resulting in similar adoption rates (Bastian et al., 2021). In Poland, mostly larger outlets owned by foreign companies are experimenting with NRS (Kreft et al., 2021). Amongst the five countries, the adoption rate of NRS is highest in US and UK media (Bodó, 2019). This may make US and UK users more tech-savvy but also more aware of issues surrounding algorithmic transparency and user control.

With respect to democratic models, the United States and the United Kingdom are traditionally considered liberal democracies with a strong Anglo-Saxon culture of individualism and citizen autonomy (Kriesi, 2015). In liberal democracies, according to Helberger (2019), we should expect users to particularly value individual control and welcome the ability to customize NRS to their own interests. In the corporatist and consensual systems of the Netherlands and Switzerland, on the other hand, where deliberative elements, like high levels of public debate surrounding policy changes, are more pronounced (Kriesi, 2015), we should expect users to have higher transparency demands – e.g., about whether their media sufficiently inform them about different perspectives on issues of public debate (Helberger, 2019). As a post-communist democracy, Poland has a distinctive democratic culture in which the values of liberal and deliberative democracies are achieved to a lesser degree than in the remaining four countries (Haerpfer et al., 2022).

Regarding the regulatory environment, the Netherlands and Poland follow the European Union’s regulatory framework, including the General Data Protection Regulation (GDPR) and the proposed Artificial Intelligence Act (EUAct, 2021). Switzerland, as a non-EU member state, does not have a specific regulation for AI systems, nor does the United States have a comprehensive federal law regulating AI systems and algorithms (Policy, 2022). The UK government has outlined plans to regulate artificial intelligence responsibly, but these have not yet been implemented (UK Department for Science and Innovation, 2023).

Regarding high-profile events, the Netherlands is pursuing an additional, rigid national regulatory course. The Dutch government wants more transparency about AI systems, following scandals surrounding the use of algorithms which disadvantaged minorities and triggered major public debate and critical media coverage (Grazette, 2023). While there have been incidents in other countries, only in the Netherlands were they severe enough to prompt the government to establish new AI oversight bodies (Grazette, 2023).

Because the concrete implications of these contextual conditions on individual preferences for user control and transparency are still difficult to predict, we ask:

RQ2: How does the desire for transparency and user control differ across countries?

It is also important to analyze whether the proposed relationships between individual-level dispositions and desirability of transparency and control are similar in strength and direction cross-nationally. Our theoretical elaborations suggest that the underlying processes and therefore the effects should be constant across different contexts. However, as these mechanisms have not yet been analyzed comparatively, we pose an open RQ:

RQ3: How does the relationship between individual-level characteristics and the desire for transparency and user control in NRS vary across countries?

Method

Our study is based on a standardized, pre-registered, cross-sectional survey administered in five Western countries (the Netherlands, Switzerland (German-speaking), Poland, the United Kingdom, and the United States).

Sample

Participants were recruited by an international market research company in the Netherlands, Switzerland (German-speaking), Poland, the United Kingdom, and the United States. The five nations were selected due to the expected variation in several macro-level factors relevant to the desirability of transparency and control in NRS, including differences in exposure to AI technology, democratic models, regulatory environments, and high-profile incidents. We focus on Western democracies because responsible NRS design has been examined mostly through a democratic theory lens (Helberger, 2019), which makes democracies the most appropriate setting for this initial inquiry.

Data was collected between August and October 2022, resulting in a total sample of N = 5079 (United Kingdom: 1010, United States: 1006, Netherlands: 1026, Switzerland: 1025, Poland: 1012). The drop-out rates were between 7% in Poland and 20% in the United Kingdom. The samples in each country were designed to be representative in terms of age (18+), gender, and region. Across countries, 50.52% of the participants are female, and the mean age is 47 (SD = 17.09). Regarding level of educational attainment, 13.82% report primary or lower secondary school, 40.97% higher secondary or short tertiary education, and 44.06% tertiary education as their highest educational qualification.

Measures

Dependent variables

Table A1 in Appendix A summarizes the two dependent variables across countries.

Independent variables

Control variables

We included gender, age, and level of educational attainment as control variables. In addition, we controlled for general news consumption using an additive index across different channels and platforms, i.e., radio, TV, print or online newspapers, their mobile apps, social media, and news aggregators (M = 25.28, SD = 7.46), as news use and news source diversity have previously been linked to attitudes toward NRS (Bodó et al., 2019; Joris et al., 2021) and a higher preference for diversity in NRS (Heitz et al., 2022).

Lastly, we controlled for users’ perceived extent of NRS use by national news outlets. Such a subjective evaluation of the extent to which algorithmic technologies are currently in use can also affect attitudes and behavior, regardless of its factual accuracy (Swart, 2021). Respondents were asked to estimate, for up to nine news outlets per country, to what extent they believe the respective outlet uses NRS, from 1 = “not at all” to 7 = “extensively”; these ratings were combined into a mean index (α = 0.94; M = 4.30, SD = 1.23).
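The construction of such a mean index and its reliability coefficient can be illustrated with a minimal sketch. The ratings below are invented toy data, and the function names `mean_index` and `cronbach_alpha` are hypothetical; this is not the authors' code, and it assumes complete rows for the reliability estimate.

```python
from statistics import mean, variance

def mean_index(ratings):
    """Average one respondent's answered items, skipping missing (None) ratings."""
    answered = [r for r in ratings if r is not None]
    return mean(answered) if answered else None

def cronbach_alpha(rows):
    """Cronbach's alpha for complete rows (respondents x items)."""
    k = len(rows[0])
    # Sample variance of each item (column) across respondents.
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    # Sample variance of each respondent's summed score.
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)

# Toy data (assumption): five respondents rating three outlets on a 1-7 scale.
toy_ratings = [
    [5, 6, 5],
    [3, 3, 4],
    [7, 6, 7],
    [2, 3, 2],
    [4, 5, 4],
]

alpha = cronbach_alpha(toy_ratings)
indices = [mean_index(row) for row in toy_ratings]
print(f"alpha = {alpha:.2f}")
print("mean indices:", [round(i, 2) for i in indices])
```

Because respondents rated "up to nine" outlets, a real analysis would also need a rule for handling incomplete rows when estimating reliability; the sketch leaves that decision open.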

Table A3 in Appendix A summarizes the independent and control variables across countries.

Findings

On average, users across all five countries exhibit a high desire for transparency (M = 5.14) and an even higher desire for control (M = 5.42). Descriptively, the desire for transparency is highest in the Netherlands (M = 5.37), followed by the United States (M = 5.11), Poland (M = 5.10), and Switzerland (M = 5.06), and lowest in the United Kingdom (M = 5.05). The desire for user control is also highest in the Netherlands (M = 5.80), followed by the United States (M = 5.43), Poland (M = 5.39), and the United Kingdom (M = 5.32), and lowest in Switzerland (M = 5.15).

Individual-level explanations of desire for transparency (RQ1)

We estimate linear regressions with the desire for transparency as the dependent variable, privacy concerns, algorithmic awareness, NRS-specific concerns, and trust as independent variables, and gender, age, educational attainment, news consumption, and perceived NRS use as control variables. All analyses and diagnostics are reported in the supplemental material.
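To make the reported standardized coefficients (β) easier to interpret, the following minimal sketch shows one common way to obtain them: z-standardize the outcome and all predictors, then fit ordinary least squares via the normal equations. The data are invented toy values, the variable and function names are hypothetical, and the sketch omits standard errors and p-values; it does not reproduce the study's models.

```python
from statistics import mean, stdev

def zscore(values):
    """Standardize values to mean 0 and sample standard deviation 1."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]

def solve_linear_system(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for i in reversed(range(n)):
        known = sum(aug[i][j] * solution[j] for j in range(i + 1, n))
        solution[i] = (aug[i][n] - known) / aug[i][i]
    return solution

def standardized_betas(predictors, outcome):
    """Return ({name: beta}, R squared) for an OLS fit on z-scored variables."""
    names = list(predictors)
    zx = [zscore(predictors[name]) for name in names]
    zy = zscore(outcome)
    k = len(names)
    # Normal equations (Z'Z) b = Z'y; no intercept is needed after centering.
    xtx = [[sum(a * b for a, b in zip(zx[i], zx[j])) for j in range(k)]
           for i in range(k)]
    xty = [sum(a * b for a, b in zip(zx[i], zy)) for i in range(k)]
    betas = solve_linear_system(xtx, xty)
    fitted = [sum(betas[i] * zx[i][t] for i in range(k)) for t in range(len(zy))]
    ss_res = sum((y - f) ** 2 for y, f in zip(zy, fitted))
    ss_tot = sum(y ** 2 for y in zy)
    return dict(zip(names, betas)), 1 - ss_res / ss_tot

# Toy data (assumption): six respondents, two predictors, one outcome.
toy_predictors = {
    "nrs_concerns": [2, 5, 3, 6, 4, 7],
    "algorithmic_awareness": [3, 4, 6, 2, 5, 5],
}
toy_desire = [3, 5, 4, 6, 4, 7]

betas, r_squared = standardized_betas(toy_predictors, toy_desire)
for name, beta in betas.items():
    print(f"{name}: beta = {beta:.2f}")
print(f"R^2 = {r_squared:.2f}")
```

With a single predictor, the standardized coefficient equals the Pearson correlation, which is why β values can be read as effect sizes on a common scale.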

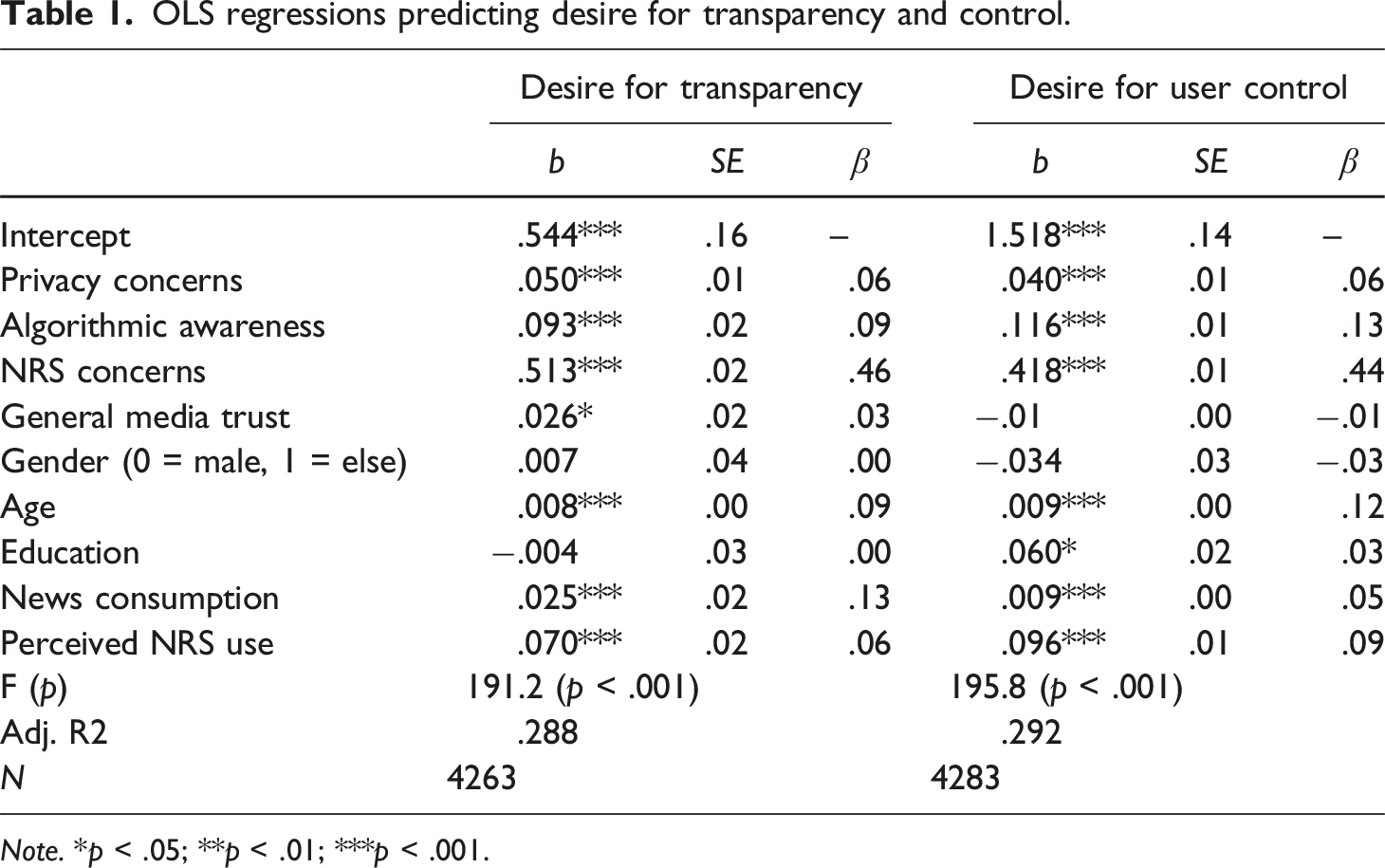

Table 1. OLS regressions predicting desire for transparency and control.

Note. *p < .05; **p < .01; ***p < .001.

Answering RQ1.1, we see that general media trust narrowly misses the conventional .05 significance threshold (p = .051); the positive coefficient (β = 0.03) suggests, at most tentatively, that users who trust the media more also want more transparency about NRS. Amongst the control variables, age (β = 0.09, p < .001), news consumption (β = 0.13, p < .001) and perceived NRS use by news outlets (β = 0.06, p < .001) are positively associated with users’ desire for transparency. Particularly, users who consume more news across channels and platforms and are older desire more transparency. Level of educational attainment (p = .90) and gender (p = .85) are not associated with users’ desire for transparency.

Individual-level explanations of desire for user control (RQ1)

We estimate linear regressions with the desire for user control as the dependent variable, privacy concerns, algorithmic awareness, NRS-specific concerns, and trust as independent variables, and the same set of control variables. Our model explains 29.30% of the variance in users’ desire for control in NRS (second column of Table 1, F (9, 4243) = 195.8, p < .001).

In accordance with H2, the more one is concerned about one’s privacy online, the higher the desire for control (β = 0.06, p < .001), although the standardized regression coefficient indicates that the effect size is modest. As hypothesized in H4, the more users are aware of NRS, the more they want control opportunities (β = 0.13, p < .001). In line with H6, users have a higher desire for control if they have higher NRS-specific concerns (β = 0.44, p < .001). Again, NRS-specific concerns are the strongest predictor. For RQ1.1, the results show that media trust is not associated with the desire for control (β = 0.01, p = .49).

Amongst the control variables, only the effect of gender is not significant (p = .24). The older participants are (β = 0.12, p < .001), the more they consume news (β = 0.05, p < .005) and the more they believe news outlets use NRS (β = 0.09, p < .001), the more they desire control over NRS. The higher one’s level of educational attainment (β = 0.03; p = .018), the higher one’s desire for control over NRS. However, the effect sizes of the CVs are negligible.

Country-level influences on the desire for transparency (RQ2, RQ3)

To address RQ2, we first examine differences in the desire for transparency across countries without considering individual-level explanatory variables. A one-way ANOVA (F (4, 4802) = 7.35, p < .001) with a Tukey HSD Test for multiple comparisons shows that the desire for transparency is significantly higher in the Netherlands (M = 5.37) than in the United Kingdom (M = 5.05; p < .001, 95% C.I. = [0.131, 0.511]), Switzerland (M = 5.06; p < .001, 95% C.I. = [0.121, 0.494]), Poland (M = 5.10; p < .001, 95% C.I. = [0.080, 0.494]) and the United States (M = 5.11; p = .004, 95% C.I. = [0.069, 0.447]). Yet, the mean differences are not very pronounced. Additionally, the desire for transparency does not vary significantly between the remaining four countries.
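The logic of the one-way ANOVA reported here can be sketched as follows. The sketch computes only the F statistic, its degrees of freedom, and the raw pairwise mean differences on invented toy scores; the Tukey HSD p-values and confidence intervals depend on the studentized range distribution, which the Python standard library does not provide, so that step is deliberately left out. Function names are hypothetical.

```python
from itertools import combinations
from statistics import mean

def one_way_anova(groups):
    """Return (F, df_between, df_within) for a dict of label -> scores."""
    all_scores = [x for scores in groups.values() for x in scores]
    grand_mean = mean(all_scores)
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(s) * (mean(s) - grand_mean) ** 2 for s in groups.values())
    # Variation of individual scores around their own group mean.
    ss_within = sum((x - mean(s)) ** 2 for s in groups.values() for x in s)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

def pairwise_mean_differences(groups):
    """Raw mean difference for every pair of groups (input to a post hoc test)."""
    return {(a, b): mean(groups[a]) - mean(groups[b])
            for a, b in combinations(groups, 2)}

# Toy scores (assumption): three countries, three respondents each.
toy_groups = {
    "A": [1, 2, 3],
    "B": [2, 3, 4],
    "C": [3, 4, 5],
}

f_stat, df_b, df_w = one_way_anova(toy_groups)
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
print(pairwise_mean_differences(toy_groups))
```

The degrees of freedom follow the same pattern as in the text: five countries give 4 between-group degrees of freedom, and the within-group degrees of freedom equal the number of valid responses minus five.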

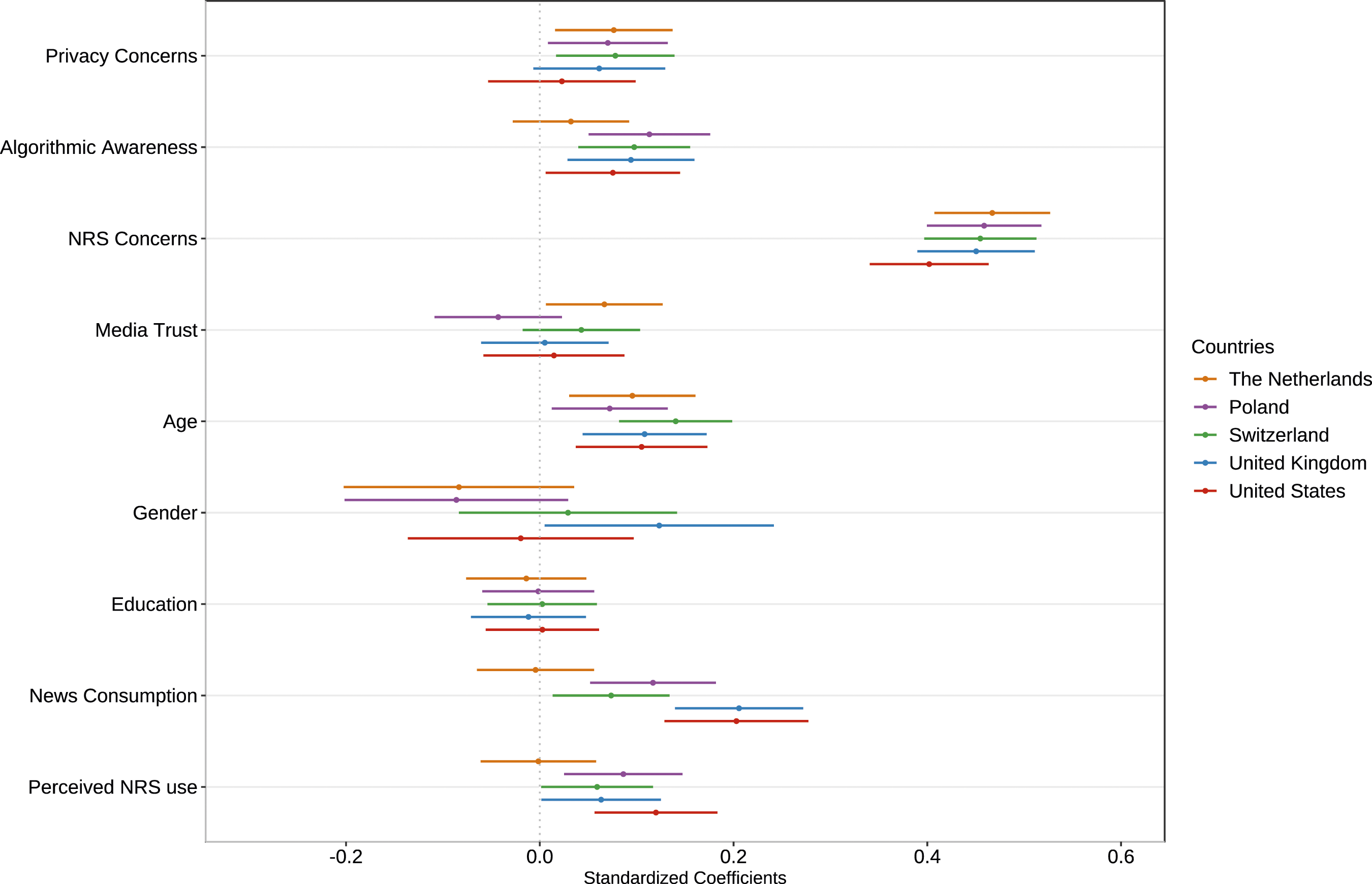

For RQ3, we compute linear regression models for each country separately and plot the effects of each predictor in explaining the desire for transparency with the respective confidence intervals. Overlapping lines indicate that the coefficients are not significantly different from each other.
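The visual comparison described above can be expressed as a small sketch: build a 95% confidence interval around each country's coefficient and check whether two intervals overlap. The coefficients and standard errors below are invented for illustration, the 1.96 multiplier assumes a normal approximation, and the function names are hypothetical. Interval overlap is a rough heuristic: non-overlapping intervals indicate a difference, but overlapping intervals do not by themselves establish that two coefficients are equal.

```python
def confidence_interval(coefficient, standard_error, z=1.96):
    """Approximate 95% confidence interval under a normal approximation."""
    margin = z * standard_error
    return (coefficient - margin, coefficient + margin)

def intervals_overlap(first, second):
    """True if two (lower, upper) intervals share at least one point."""
    return first[0] <= second[1] and second[0] <= first[1]

# Hypothetical per-country estimates (assumption): (coefficient, standard error).
toy_estimates = {
    "Country A": (0.10, 0.05),
    "Country B": (0.15, 0.04),
    "Country C": (0.40, 0.04),
}

intervals = {name: confidence_interval(b, se)
             for name, (b, se) in toy_estimates.items()}

for name, (lower, upper) in intervals.items():
    print(f"{name}: [{lower:.3f}, {upper:.3f}]")

print(intervals_overlap(intervals["Country A"], intervals["Country B"]))
print(intervals_overlap(intervals["Country A"], intervals["Country C"]))
```

A formal test of whether a predictor's effect differs between countries would typically use an interaction term or a direct comparison of coefficients rather than interval overlap alone.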

Figure 1 illustrates that the relationships between the predictors and the desire for transparency do not vary much across countries (RQ3). However, two patterns stand out: The effect of algorithmic awareness is not significant in the Netherlands but similar in the remaining four countries. Conversely, a significant and positive effect of general media trust on the desire for transparency is present only in the Netherlands. Overall, the hypothesized effects of individual-level dispositions on the desire for transparency are, in most cases, similar across countries.

Figure 1. Comparison of predictors of desire for transparency across countries.

Country-level influences on the desire for user control (RQ2, RQ3)

Moving to RQ2 and the desire for user control, a one-way ANOVA (F (4, 4864) = 34.77, p < .001) with a Tukey HSD Test for multiple comparisons reveals that the desire for control also differs across countries. First, control is desired significantly more in the Netherlands (M = 5.80) than in Switzerland (M = 5.15; p < .001, 95% C.I. = [0.495, 0.808]), the United Kingdom (M = 5.32; p < .001, 95% C.I. = [0.321, 0.638]), Poland (M = 5.39; p < .001, 95% C.I. = [0.257, 0.573]) and the United States (M = 5.43; p < .001, 95% C.I. = [0.207, 0.524]). Second, user control is, on average, desired significantly less in Switzerland than in the Netherlands, the United States (p < .001, 95% C.I. = [0.128, 0.444]), Poland (p < .001, 95% C.I. = [0.079, 0.395]) and the United Kingdom (p = .03, 95% C.I. = [0.014, 0.330]). There are no significant differences between the other three countries.

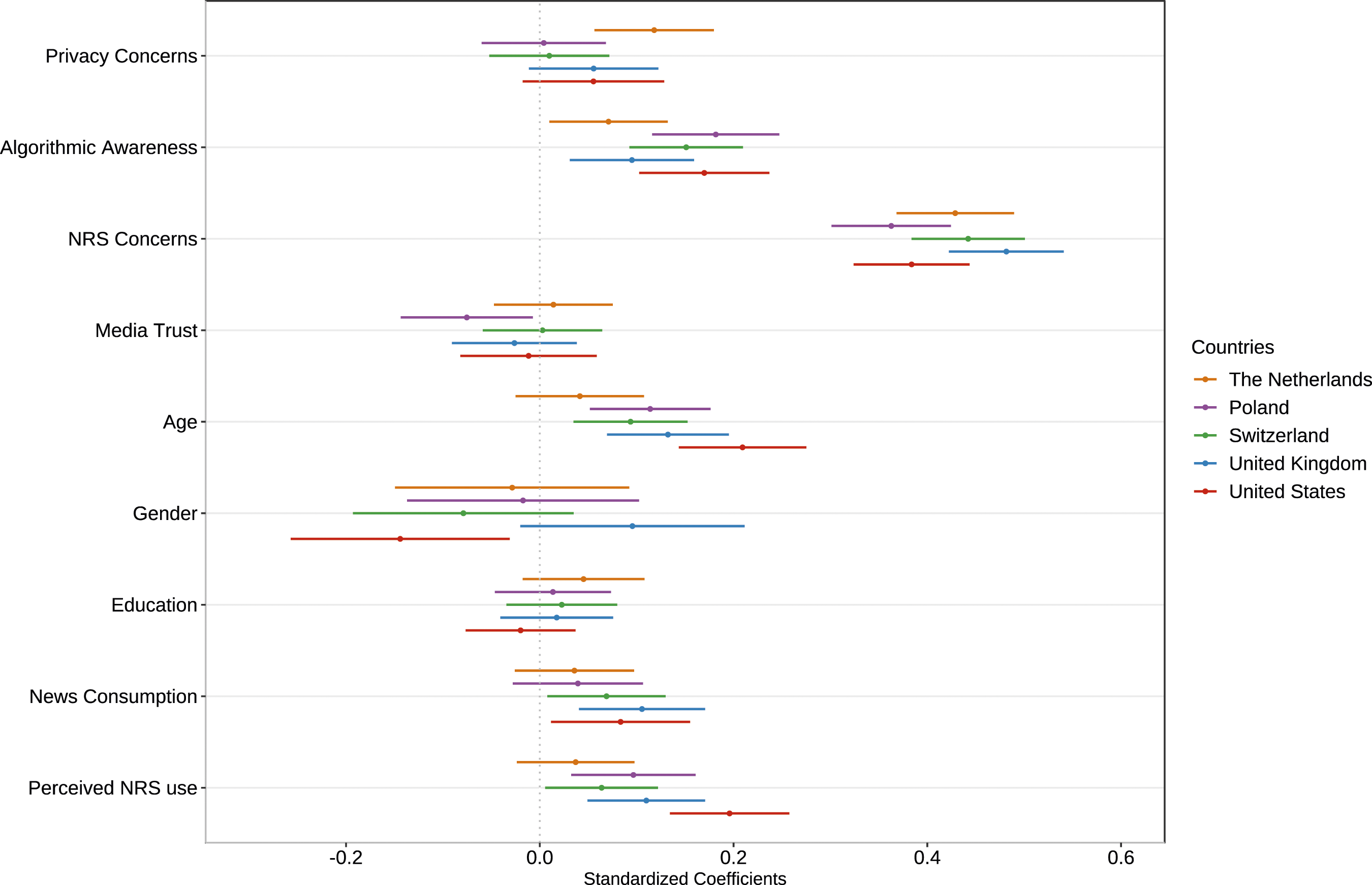

Regarding RQ3, and as illustrated in Figure 2, the effect of privacy concerns on the desire for control over NRS is not significant in Poland and Switzerland and slightly more pronounced in the Netherlands. General media trust contributes significantly to the desire for user control only in Poland, where users who trust media less desire more control opportunities. Overall, however, there are only small differences in the strength of relationships between individual-level characteristics and the desire for control across countries.

Figure 2. Comparison of predictors of desire for control across countries.

Discussion

As AI-driven applications like NRS make their way into newsrooms, features of responsible AI design like transparency and user control are becoming more important aspects to examine. Due to these features’ normative significance and audiences’ active role in shaping personalized news flows, we need to know what contributes to users’ higher or lower desire for such measures. Therefore, we set out to empirically investigate how individual-level characteristics relate to users’ desire for transparency in and control over NRS across five nations.

Our results show that users across countries generally want transparency and, even more so, control mechanisms. This finding aligns with recent investigations (Moeller et al., 2023; Monzer et al., 2020; Van Drunen et al., 2022) and suggests that news consumers recognize the importance of these highly normative features.

Individual-level dispositions, which have been tied to users’ general attitudes toward NRS, also relate to the desire for transparency and user control (RQ1). Users who have more concerns about their data being collected online or about NRS specifically and are more aware of algorithms desire more transparency and control. However, general media trust is not clearly related to desire for such measures (RQ1.1). Perhaps users desire transparency and control in all personalized news environments, regardless of their trust in media.

Although our hypotheses were confirmed, the low effect sizes indicate that the explanatory power of the examined factors is rather limited. The role of NRS-related concerns is strongest, whereas algorithmic awareness and privacy concerns, which relate to data collection more generally, play a limited role. This could indicate that desire for transparency and control depends strongly on the specific context like the domain in which algorithmic systems are applied.

Users who consume news more frequently, regardless of the platform, desire more information about the makeup of NRS and opportunities to influence algorithmic news provision. Younger people who particularly rely on personalized services (Swart, 2021) desire transparency and control opportunities on news websites less. This may potentially be explained by younger users’ lower reliance on traditional news sources like legacy media (e.g., Peters et al., 2022).

Additionally, the desire for transparency and control is not necessarily related to the same set of individual characteristics or, more precisely, not to the same extent. Level of educational attainment, for example, relates only to the desire for control. This makes theoretical sense, too, as the cognitive and behavioral thresholds to exercise control may be higher. Users might not be willing to take a more active role in curating their news, as this task is traditionally delegated to news organizations (Moeller et al., 2023). Future research should therefore differentiate between the possibly distinct mechanisms driving the desire for transparency and control by looking at a wider set of dispositions, such as the need for cognition.

We further investigated how the desire for transparency and control differs across five national contexts (RQ2) and whether the proposed relationships vary in strength and direction (RQ3).

The Netherlands exhibits significantly higher levels of desire for transparency and user control than the remaining countries. Although we would have expected similar results for Switzerland, Swiss respondents do not want transparency and control in NRS as much as Dutch respondents. Moreover, the desire for user control is significantly lower among Swiss users than in the other four democracies.

A possible reason for these findings lies in several critical incidents in the Netherlands in which public institutions deployed algorithms in controversial ways, e.g., in the area of welfare fraud detection (Grazette, 2023), and which triggered large public debates. These incidents might have prompted Dutch citizens to engage more critically with the responsible use of AI technologies.

Conversely, Switzerland has not witnessed any far-reaching algorithm-related scandals so far (Binder and Egli, 2020). Moreover, Switzerland is the only direct democracy in our sample. An environment with regular and institutionalized avenues for citizens to participate in decision-making may lead to participation fatigue (Kern and Hooghe, 2018) and lower the wish to actively exercise control in other domains like algorithmic recommendations.

Lastly, Poland, despite scoring lower on liberal and deliberative dimensions (Haerpfer et al., 2022), has similar levels of desire for transparency and control as the United Kingdom and the United States, which have strong liberal traditions. This finding is consistent with recent research indicating that the Polish media system shares characteristics with those of the US and UK (Humprecht et al., 2022), resulting in similar media consumption cultures.

Although the strength and direction of the effects are very similar across countries (RQ3), media trust contributes to a higher desire for transparency only in the Netherlands. The Netherlands has a strong ethos of media accountability, which also involves mechanisms of journalistic transparency (Bastian et al., 2021). Possibly, Dutch users more closely associate media performance with openness about and disclosure of news production and distribution practices.

Regarding user control, it is more difficult to identify clear differences. For example, the association between general media trust and desire for control is significant only in Poland: Polish users who trust news media less desire more control over NRS. This effect may be related to the specific political and media environment in Poland, which has lower levels of media and institutional trust (Haerpfer et al., 2022).

Overall, however, differences are small, highlighting that the underlying processes are similar across countries. This may indicate that citizens in democratic countries assess normative features like transparency and control in much the same way.

Our study is not without limitations. First, we focused mostly on technological variables and attitudes as predictors and did not include any psychological dispositions, which could also contribute to a lower or higher desire for these measures. Second, we only included Western democracies. Future comparative research should consider more countries and disentangle possible differences in audience evaluations by considering a wider set of structural factors at the media, cultural, and political system level, such as the level of AI adoption by the media or the presence of AI regulation.

Third, because we use cross-sectional data, we cannot make any definitive claims about causal relationships between the proposed factors and the desire for transparency and user control.

Additionally, our results are based on users’ self-reports. Desire for responsible NRS might not always mean that users will make use of such features (Moeller et al., 2023). This inconsistency resembles the privacy paradox, which describes the discrepancy between expressed privacy concerns and actual online behavior, which is rarely protective (Kokolakis, 2017). Even when users indicate their desire for transparency and control, such self-reports do not reveal whether users would invest time and resources to familiarize themselves with these features or actively use them. To address this discrepancy, future research should employ experimental designs to study users’ interaction with responsible NRS in practice. Studies can examine under which conditions users actually use transparency and control mechanisms and how this varies against the backdrop of different motivational, cognitive, and contextual factors. Mirroring research on users’ willingness to pay for journalistic content (O’Brien et al., 2020), users may be more willing to try these features if they are easy to access and use than if they are unintuitive or cost much time or money.

Studying these conditions can then help news organizations and policymakers identify the controls that people “want to use” (Stray et al., 2022: 38). Yet, higher responsiveness to user demand might conflict with journalism’s democratic objectives. For example, previous research has shown that catering to users can lead to an emphasis on soft news over what journalists consider important (Nelson, 2021). Thus, audience evaluations must always be considered with a view to broader democratic values and journalistic missions.

This is also related to our guiding argument that transparency and user control are desirable. Although these features are democratically important, integrating too much transparency and control comes with risks, too. Full disclosure of the employed filtering mechanisms and data may overwhelm and annoy users or endanger competitive advantages (Diakopoulos and Koliska, 2017). Similarly, Moeller et al. (2023) show that providing control mechanisms may not be economically profitable, as such features do not necessarily increase user satisfaction and engagement. More importantly, if users can fully customize their news provision to match their news preferences and viewpoints, offering control over NRS might lead to a narrower, less diverse news diet (Loecherbach et al., 2021). Therefore, scholarship must devote more attention to the design of features of responsible NRS that not only satisfy both users and news organizations but also align with broader societal goals.

Our study has several practical implications for news organizations and policymakers. We show that users across countries appreciate control and transparency mechanisms but that these assessments are contingent on individual-level dispositions. More pronounced concerns about NRS are related to a higher desire for transparency and user control. Providing users with more in-depth information about the inputs and outputs of NRS and integrating more opportunities to exercise control over NRS may therefore prove a viable way to mitigate user fears about potential biases and risks associated with news personalization, and ultimately to build trust and satisfaction. Yet, our results also suggest that people who have higher algorithmic awareness desire such features more. In practical terms, offering news audiences the ability to read about how algorithmic recommendations work or to customize their newsfeed could reinforce divides and inequalities between more and less resourceful, knowledgeable individuals. Therefore, when designing transparency and user control, news organizations should aim for accessible, user-friendly interfaces, explanations, and customization options that enable less tech-savvy users to also use these features.

As the democratic stakes of news recommender systems for journalism and the cultivation of an informed public become increasingly evident, we envision our study as a catalyst for an incisive scholarly dialogue on responsible journalistic AI that considers the rich tapestry of individual, situational, and structural factors at play.

Supplemental Material

Supplemental Material for Exploring users’ desire for transparency and control in news recommender systems: A five-nation study by Eliza Mitova, Sina Blassnig, Edina Strikovic, Aleksandra Urman, Claes de Vreese and Frank Esser in Journalism

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Swiss National Science Foundation within the National Research Programme on “Digital Transformation” (NRP77) under Grant [number 407740_197523].

Data availability statement

The data that support the findings of this study are available on request from the corresponding author. The data are not yet publicly available because they are part of a larger project but will be made openly available at the completion of the project by June 2024 at the latest.

Supplemental Material

Supplemental material for this article is available online.
