Abstract

Digitalization of the media is often discussed in terms of its effects on the user. What is often overlooked are users’ individual-level motivations for accepting new technologies. This study explores which individual-level factors make up favorable opportunity structures for the implementation of news recommender systems (NRS). We conduct a cross-sectional survey (n = 5079) in five countries (the Netherlands, Switzerland, Poland, the United Kingdom, and the United States) to analyze the correlations between users’ individual-level factors and their evaluations of NRS in terms of benefits and concerns. Our findings demonstrate universally critical evaluations of NRS and less-than-ideal conditions for the acceptance of NRS. We also show that while there are patterns of country differences, the perceived concerns about NRS are stronger overall and largely universal. These findings suggest that NRS should be developed and implemented slowly and intentionally rather than keeping pace with the fast development of technology.

Studies on the effects of the digitalization of the media environment are a fundamental aspect of recent communication research. Personalization of media, for example, has received much attention, with both benefits and concerns about algorithmic personalization discussed at length (Bozdag, 2013; Möller et al., 2018). Often, these developments are discussed in terms of effects, that is, what positive or negative effects new technologies can have on either media users or providers. What has been overlooked by comparative research are users’ motivations, based on individual-level factors, for accepting new technologies. With this study, we aim to uncover what individual-level “opportunity structures” exist within the user base for an acceptance and positive evaluation of such new technologies.

Specifically, we take a closer look at news recommender systems (NRS). Algorithmic content recommendations (mechanisms set in place to make automated and personalized recommendations based on metrics such as individual user history or general audience metrics) are a familiar concept, as social media platforms, online streaming services and ad placements largely rely on personal user information in order to tailor content to individual users. The same personalization principle is now also increasingly implemented in the distribution and presentation of news. These NRS generate personalized reading recommendations using various data, including explicit user preferences, popularity metrics of individual articles or contextual factors like the users’ device features, the time of day, or users’ current locations (Karimi et al., 2018). This raises both benefits and concerns: while NRS can help navigate the high-choice information environments and increase the diversity of users’ news diets, there is also concern regarding a lack of transparency about the algorithms behind NRS (Diakopoulos and Koliska, 2017; Sinha and Swearingen, 2002). How users perceive these benefits and concerns may be contingent on user types and other context factors. In other words, favorable conditions (that is, opportunity structures) for a positive attitude and an open-minded approach from users toward NRS may depend on individual-level factors. Our findings can play a key role in informing the evolution of these systems, specifically with regard to incorporating user needs.

We also examine whether the individual-level conditions of acceptance for these technologies are universal by comparing individual-level determinants of NRS acceptance across country contexts. Thus, we set out to answer the following overarching research question:

Overall Research Question. How do individual-level factors contribute to favorable evaluations of NRS?

By approaching NRS research from a user perspective, specifically focusing on possible correlations between user NRS evaluations and individual-level and country-level factors, we contribute to the discourse on opportunity structures of information environments (Esser et al., 2012). We also add to the discussion of individual-level factors creating the conditions for these structures and how they can account for discrepancies in user evaluations of new technologies. We aim to answer the proposed research question from a comparative angle and conduct a cross-sectional survey (n = 5079) with samples from five countries (CH, NL, UK, US, PL). The country selection offers different contexts with regard to media systems, market sizes, political cultures, and stages of development and implementation of NRS.

Individual-level determinants of attitudes toward NRS

NRS are algorithmic tools that create suggestions or a prioritization of news content based on previous user behavior, explicitly stated user preferences, popularity metrics or content-specific characteristics (Feng et al., 2020; Karimi et al., 2018). This change in news exposure, and with it news consumption, is important to consider, as users’ understanding of the algorithms behind NRS might influence user evaluations and acceptance of NRS. Studies on user responses to artificial intelligence solutions have shown that users can be both accepting and critical of AI-based recommendation systems. Research shows that users, at times, prefer algorithmic recommendations over recommendations made by humans, which is known as algorithmic appreciation (Logg et al., 2019). This could be attributed to people separating digital interfaces from ideological biases that are, in turn, perceived to be more likely an inherent part of human decision-making (Sundar, 2008). Other studies find that the opposite can be true. For example, because of the lack of accountability in algorithms, people seem to be less likely to forgive mistakes made by an algorithm, which leads to a preference for human decision-makers over algorithmic solutions (Araujo et al., 2020). This is known as algorithmic aversion (Dietvorst et al., 2015). Algorithmic aversion and concern may result from a high expectation for the accuracy and objectivity of these systems, as well as an overestimation of their intelligence. Such expectations leave little room for the possibility of error, so users are much less tolerant of mistakes when they do happen.

While contextualizing factors matter in the evaluation of attitudes toward algorithmic decision-making, many studies approach this from the angle of the digital environment as the context. Rather than focusing on influencing factors at the meso and macro level, the study at hand focuses on influencing factors at the level of the individual user and the opportunity structures those individual-level factors create for user evaluations of NRS. When it comes to attitudes toward NRS and other algorithmic decision mechanisms in general, understanding the drivers of perceptions of these recommender systems is important, as these perceptions play a part in users’ acceptance of the systems (Lee, 2018). This study adds to the existing body of research by examining how individual-level determinants shape perceptions of the benefits and concerns related to NRS. Specifically, we focus on the correlation between users’ NRS evaluations and their (1) privacy concerns, (2) perceived information overload, (3) algorithmic awareness, and (4) preference for “algorithmically curated” social media platforms or news aggregators as opposed to “direct” use of news apps and news websites for political information.

We focus on these four individual-level factors because they each are closely and inextricably linked to the digitalization of the news environment. In addition to individual-level factors, we also look at country-level differences. These differences in country contexts are included as external contextual factors, in addition to individual-level differences, as variations in media systems, regulations of AI technology, and the resulting exposure to media personalization may relate to individuals’ evaluation of these systems.

Privacy concerns

Privacy concerns are particularly relevant when explaining attitudes toward as well as intention to use digital technologies (Kaptchuk et al., 2020; Smith et al., 2011; Venkatesh and Bala, 2008), as these technologies explicitly rely on data collected from the users. Higher privacy concerns have been shown to relate to less-favorable attitudes toward algorithmic or AI-driven technologies (e.g. Horowitz and Kahn, 2021), social networking sites (e.g. Mohamed and Ahmad, 2012), and NRS specifically (Araujo et al., 2020; Joris et al., 2021; Thurman et al., 2019). Earlier research also argues that privacy concerns are exacerbated in digital settings due to, among other factors, the large scale of data collection (Malhotra et al., 2004). The significance of privacy concerns for user evaluations can also be derived theoretically from a socio-psychological perspective. For instance, authors like Barbeite and Weiss (2004) have argued that particularly anxiety and concerns constitute cognitive dispositions that might exert an inhibiting effect on user behavior (see also Hoffmann and Lutz, 2021).

However, recent studies also indicate that higher privacy concerns do not necessarily lead to a reduced actual or intended use: when perceived benefits of use outweigh concerns, users have been found to rely on data-driven technologies despite being aware of possible privacy infringements (see e.g. Chen et al., 2018; Dienlin and Metzger, 2016; Fernandes and Costa, 2023; Festic et al., 2021). In other words, there is a tradeoff between privacy and information benefits. Thus, we expect that users with high privacy concerns are more cognizant of concerns related to NRS or exhibit lower levels of algorithmic appreciation. These considerations lead to our first hypothesis:

Hypothesis 1 (H1). Higher privacy concerns are associated with lower perceptions of NRS benefits.

Information overload

One key factor and touted benefit of the digitalization of news is that there are more (virtual) pages available for filling with news, and with that, more information at the users’ disposal. This can be beneficial, as there is a larger offer of issue and topic-specific content, so that users have more content and choice in their news selection. More choice, however, can also lead to users being exposed to more information than they can cope with, leading to information overload (Pentina and Tarafdar, 2014). Over the past years, information overload in the digital age has been the subject of much theoretical and empirical work (for an overview, see Bawden and Robinson, 2020). For example, empirical studies have shown that information overload can lead to less political knowledge and affect decision-making and information behavior as individuals become less capable of processing and making sense of information (Van Erkel and Van Aelst, 2021). The concept of information overload is particularly relevant for personalized services. One main benefit of NRS as seen by scholars (e.g. Li and Unger, 2012; Zanker et al., 2019) as well as news audiences (Harambam et al., 2019) lies in their potential to help users cope with information overload and better navigate high-choice news environments. News personalization can, for example, decrease information overload by providing readers with customized news about what they are interested in and improve user information processing (Tam and Ho, 2006). Thus, it can be expected that users who perceive higher levels of information overload in their daily news consumption also see more merit in NRS:

Hypothesis 2 (H2). Higher perceived information overload is associated with higher perceived NRS benefits.

Algorithmic awareness

Research on the digital divide has extensively discussed the role of internet literacy and skills as a crucial resource in contemporary society (Van Deursen and Van Dijk, 2011). Within communication science, and algorithmic technology in particular, the concept of algorithmic awareness has emerged, referring to users’ knowledge of the functions of algorithms within the media environment and how their (the users’) experience is affected by these algorithms (Zarouali et al., 2021). Recent research shows that algorithmic awareness affects how individuals interact with, make sense of, and cope with risks related to algorithmic services (Eslami et al., 2015; Gruber et al., 2021; Klawitter and Hargittai, 2018). However, until now, algorithmic literacy in the context of NRS has not been the focus of research (see Cho et al., 2020; Gran et al., 2021 for exceptions). As with other aspects of personalization, algorithmic awareness has been mostly studied within social media (e.g. Powers, 2017; Swart, 2021). These studies are inconclusive with regard to users’ general algorithmic awareness and the effects this could have on their media diets or behavior.

Another way the relationship between knowledge and awareness of a specific technology—and intention to use this technology—can be explained is with reference to frameworks such as the technology acceptance model (Venkatesh, 2015), which argues that knowledge as well as self-efficacy are important predictors of one’s willingness to adopt a technology (Kim et al., 2010; Slade et al., 2015). This means that more knowledgeable individuals are more likely to be aware of both benefits and concerns (Bhattacherjee and Sanford, 2006; Petty and Cacioppo, 1986). Studies investigating correlations between personality traits and algorithmic awareness show, for example, that algorithmic awareness is positively correlated with open-mindedness (Fang and Jin, 2022). By extension, one could argue that algorithmic awareness might also be associated with higher perceived benefits. On the other hand, high algorithmic awareness in the form of algorithmic knowledge and skill may also translate into a more thorough awareness of the pitfalls of these solutions. Thus, we assume that individuals with higher algorithmic awareness perceive both more concerns and benefits of NRS than individuals who lack such awareness of the algorithmic domain. This leads us to pose two differing (but not mutually exclusive) hypotheses:

Hypothesis 3a (H3a). Higher algorithmic awareness is associated with higher perceptions of NRS concerns.

Hypothesis 3b (H3b). Higher algorithmic awareness is associated with higher perceptions of NRS benefits.

Preference for “curated” over “direct” news access

Besides individual dispositions and attitudes toward technology more generally, news consumption preferences can also account for different attitudes and expectations toward media. For example, a recent study (Fletcher et al., 2023) found that differences in media consumption habits contribute to variations in news exposure: the more users access news through algorithmically curated websites such as search engines, Facebook, Twitter, and Google News, the more diverse their news consumption, both in terms of the number of visited news outlets and the outlets’ political slant (p. 17).

With regard to algorithmic personalization, however, research on media consumption patterns has been inconclusive. One study indicates that different media consumption patterns, including the frequency of news exposure as well as the diversity of the consumed news, do not necessarily account for varying degrees of appreciation for algorithmic personalization (Bodó et al., 2019). At the same time, these groups pose different requirements to personalized services. Within social media, for example, users have the lowest expectations regarding the diversity of recommended news items in terms of topics and depth (p. 121). Research found that even though audiences collectively believe that algorithmic personalization (based on past behavior) is superior to editorial choices, this holds mainly for social media users, while users with a higher news interest prefer editorial selection over algorithmic selection (Thurman et al., 2019). When distinguishing between news consumers, Joris et al. (2021) find that news omnivores and traditionalist news users do not significantly differ in their preferences for different filtering mechanisms (p. 602).

Considering these somewhat inconsistent findings, we further want to explore whether different usage patterns relate to different attitudes toward NRS. As users who heavily rely on algorithmically curated websites such as social media, search engines and news aggregators are most likely to be confronted with personalization in their daily Internet use, we expect that NRS appreciation will be higher for users with a high reliance on such outlets (see also Thurman et al., 2019). Alternatively, users who have a high appreciation for algorithmic curation and personalization may seek out websites that use those algorithms. This leads us to the following hypothesis:

Hypothesis 4 (H4). Higher reliance on algorithmically curated websites is associated with higher NRS appreciation.

We will not formulate a hypothesis for the opposite user group, which prefers direct news access via news apps and websites from legacy media. However, we will consider this alternative form of access via “news app use” as a control variable in our later analyses.

Country-level differences

While individual-level factors may contribute to favorable opportunity structures for NRS adoption, we also ask whether these structures are consistent across countries. Contextual factors at the country level, such as those related to the media system in which these recommendation technologies are deployed, may also be relevant for modeling divergent user evaluations of NRS.

Comparative studies suggest that macro-level factors may indeed shape audiences’ media choices and responses in different ways (see Goldman and Mutz, 2011; Steppat et al., 2020). These studies, as well as additional research on related topics such as technology acceptance (Straub et al., 1997), diffusion of innovation (Kumar, 2014), and new media use (Boomgaarden and Song, 2019) have identified relevant factors that lead us to expect that responses such as algorithmic appreciation or algorithmic aversion do not follow uniform pathways across countries.

In terms of media system factors, higher trust in public broadcasting and established newsgathering institutions may lead to greater skepticism toward NRS. For example, of the five countries studied, Dutch media users have the highest trust in public television news, the highest trust in news in general, a below-average preference for social media as a news source, and an above-average preference for print news, according to the Digital News Report (Newman et al., 2023). In contrast, Polish users have the lowest trust in public news, the highest preference for social media, and the lowest use of print news. This leads us to believe that in countries like Poland, where trust in traditional media is low, there may be a higher openness to algorithmic news recommenders as an alternative source of information.

In terms of other media system factors, it can be expected that citizens in more polarized news landscapes may see the benefits of NRS in having access to a greater diversity of news. Among the countries in our sample, media users in Poland and the US report the highest levels of perceived polarization (“the main news organizations in my country are politically far apart”), while Dutch media users report the lowest levels (Newman et al., 2023).

To date, however, audience research on NRS has rarely taken a comparative approach. Although some studies have used cross-national samples (Kozyreva et al., 2021; Kunert and Thurman, 2019; Thurman et al., 2019; Van der Velden and Loecherbach, 2021), these studies have not addressed the issues of algorithmic appreciation and algorithmic aversion, nor have they focused on variations across these national contexts. Our approach is different. While we examine how individual-level factors influence NRS evaluations, we also examine what constellations of these relationships hold across countries. We hence turn to a final open research question in this regard:

Research Question 1 (RQ1). To what extent are correlations between individual-level factors and evaluations of NRS consistent across national contexts?

Methods

This study uses cross-sectional survey data (n = 5079) from samples intended to be representative in terms of age, gender, and region. The research company did not fully meet the quota criteria, which led to an oversampling of younger respondents in all countries except the Netherlands. The data from these other countries are representative for ages 18–65, while the Dutch data are representative for all included age categories. 1 Data were collected between 6 September and 16 October 2022 in five countries: the United States (US), the United Kingdom (UK), Poland (PL), the Netherlands (NL), and Switzerland (CH; limited to the German-speaking part). The study was preregistered prior to receiving the data. 2

Sample

Participants were recruited through the international public opinion company Kantar. We aimed to recruit a total of n = 1000 participants per country, with a final sample of n = 5079 (United Kingdom: 1010, United States: 1006, NL: 1026, CH: 1025, PL: 1012). The drop-out rates ranged from 7% (Poland) to 20% (United Kingdom). The gender distribution of the sample is 50.52% female, 3 and the mean age is 47 (SD = 17.09). For education, 13.82% report primary or lower secondary school, 40.97% higher secondary or short tertiary education, and 44.06% tertiary education as their highest educational qualification attained. 4 The mean political orientation is 5.29 (SD = 2.36, “left” = 1, “right” = 10). 5

Design and procedure

Participants first answered questions about their news consumption habits and their use of algorithmically curated platforms. They then answered questions assessing their general trust in the news and their awareness of algorithmic interventions and tools on news sites, and indicated their attitudes regarding the benefits of and concerns about NRS. Prior to the questions about their evaluations of NRS, users were provided with a definition of these systems, which stated that “NRS pertain to algorithmic recommender systems used by news brands on their websites or in their apps.” The last part of the survey consisted of demographic questions. 6

Operationalization

NRS evaluations

User evaluations of NRS (or NRS appreciation) are measured by looking at both perceived benefits of/positive attitudes toward NRS, as well as perceived concerns of/negative attitudes toward NRS.

First, positive attitudes toward NRS (perceived benefits) are measured using six self-developed items based on Bodó et al. (2019), Joris et al. (2021) and Thurman et al. (2019), asking participants to what extent they agree with six statements about perceived benefits of NRS on a scale from 1 (strongly disagree) to 7 (strongly agree). The statements read as follows: “Algorithmic news recommenders may (1) save time looking for relevant content; (2) help me skip content that I have already read; (3) help me weed out irrelevant news content; (4) help me discover new topics and viewpoints; (5) help me discover news content I would have otherwise missed; and (6) help me dive deeper into topics that interest me.” These six items are combined into a mean index (α = 0.94, M = 4.29, SD = 1.52). A confirmatory factor analysis verifies that all items load on one factor (χ² = 381.70, df = 9, p < 0.001, CFI = 0.98, RMSEA = 0.099).

Second, negative attitudes toward NRS (concerns) are measured using nine self-developed items based on Bodó et al. (2019), Joris et al. (2021), Thurman et al. (2019), Wieland et al. (2021) and Monzer et al. (2020), asking participants to what extent they agree with nine statements about concerns/perceived risks of NRS on a scale from 1 (strongly disagree) to 7 (strongly agree). The statements read as follows: “Algorithmic news recommenders may (1) make me miss out on challenging viewpoints; (2) make me miss out on important information; (3) place society at a greater risk of undue manipulation; (4) place my privacy at greater risk; (5) make society less tolerant of other opinions; (6) cause society to lose its ability to make independent decisions about its information consumption; (7) lead to a lack of common ground for discussion; (8) mean that I get a false impression of the mood of the country; and (9) mean that I get wrongly stereotyped.” These nine items are combined into a mean index (α = 0.93, M = 4.99, SD = 1.35). Here, too, a confirmatory factor analysis indicates that all items load on one factor (χ² = 387.57, df = 27, p < 0.001, CFI = 0.98, RMSEA = 0.059).
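To make the index construction concrete, the following is a minimal sketch of how a mean index and Cronbach’s α can be computed from item-level responses. It is an illustration only: the ratings are hypothetical, the function names are our own, and the study does not state which software was used for these computations.

```python
from statistics import mean, variance

def mean_index(responses):
    """Average each respondent's item scores into a single index value."""
    return [mean(row) for row in responses]

def cronbach_alpha(responses):
    """Cronbach's alpha for a respondents-by-items matrix of scores."""
    k = len(responses[0])                          # number of items
    items = list(zip(*responses))                  # transpose: one tuple per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)

# Hypothetical 1-7 agreement ratings: 5 respondents x 3 items (not study data)
ratings = [
    [5, 6, 5],
    [2, 3, 2],
    [7, 6, 7],
    [4, 4, 5],
    [3, 2, 3],
]

index = mean_index(ratings)      # one index value per respondent
alpha = cronbach_alpha(ratings)  # internal consistency of the item set
```

The sketch assumes complete responses; how missing answers were handled in the actual indices is not reported in this section.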

Individual-level factors

Privacy Concerns are measured using two items based on Bodó et al. (2019) where participants indicate how acceptable it is for websites to collect information on their (1) clicks on websites and (2) clicks on ads (Scale: 1 = completely unacceptable to 7 = completely acceptable). The items were combined into a mean index (α = 0.90, M = 3.32, SD = 1.79).

Perceived information overload is measured using two items based on Van Erkel and Van Aelst (2021), where participants indicate to what extent they agree that (1) they regularly feel overwhelmed by too much information and (2) there is so much information available on certain topics of interest that they sometimes have difficulty determining what is important and what is not (Scale: 1 = Fully disagree to 7 = Fully agree). The items were combined into a mean index (α = 0.76, M = 4.07, SD = 1.62).

Algorithmic awareness is measured in two ways: First, knowledge of NRS is measured using six items based on de Vries et al. (2022) and Zarouali et al. (2021), for which participants have to indicate whether the statements are true (1) or false (2). The items are recoded as dummy variables indicating whether the participants’ answers are correct (1) or not (0) and combined into a sum index leading to a theoretical range of 0–6 (α = 0.75, M = 3.51, SD = 1.92). Second, perceived algorithmic skills are measured using four items based on de Vries et al. (2022), for which participants have to indicate whether these statements apply to them (Scale: 1 = completely untrue to 7 = completely true). These four items were combined into a mean index (α = 0.84, M = 4.44, SD = 1.41).
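The recoding of the knowledge items into a sum index can be sketched as follows. The answer key and the responses shown here are hypothetical placeholders, since the actual item wording and correct answers are not listed in this section; only the coding scheme (1 = true, 2 = false, recoded to 1 = correct, 0 = incorrect) follows the description above.

```python
# Hypothetical answer key: True means the statement is factually true.
ANSWER_KEY = [True, False, True, True, False, True]

def knowledge_score(answers, key=ANSWER_KEY):
    """Sum of correct answers; `answers` uses the survey coding 1 = true, 2 = false."""
    # Dummy-code each item: 1 if the response matches the key, 0 otherwise.
    correct = [int((a == 1) == k) for a, k in zip(answers, key)]
    return sum(correct)

respondent = [1, 2, 2, 1, 1, 1]      # illustrative responses to the six items
score = knowledge_score(respondent)  # theoretical range 0-6
```

In this sketch any response other than 1 is treated as "false"; whether "don’t know" answers or missing values occurred, and how they were scored, is an open assumption.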

Reliance on algorithmically compiled platforms is measured through self-report: Participants are asked how often they receive information about political news and societal issues from (1) news aggregators and (2) social media (Scale: 1 = never to 7 = very often). We will look at both items individually as well as combined in a mean index (α = 0.6, M = 3.61, SD = 1.82).

Frequency of use of apps is measured through self-report: Participants are asked how often they receive information about political news and societal issues from news websites and mobile apps (Scale: 1 = never to 7 = very often).

As control variables, age, gender, and education are measured through self-report. In addition, political orientation is measured as left-right orientation (Scale: 0 = left to 10 = right).

Findings

Individual-level factors

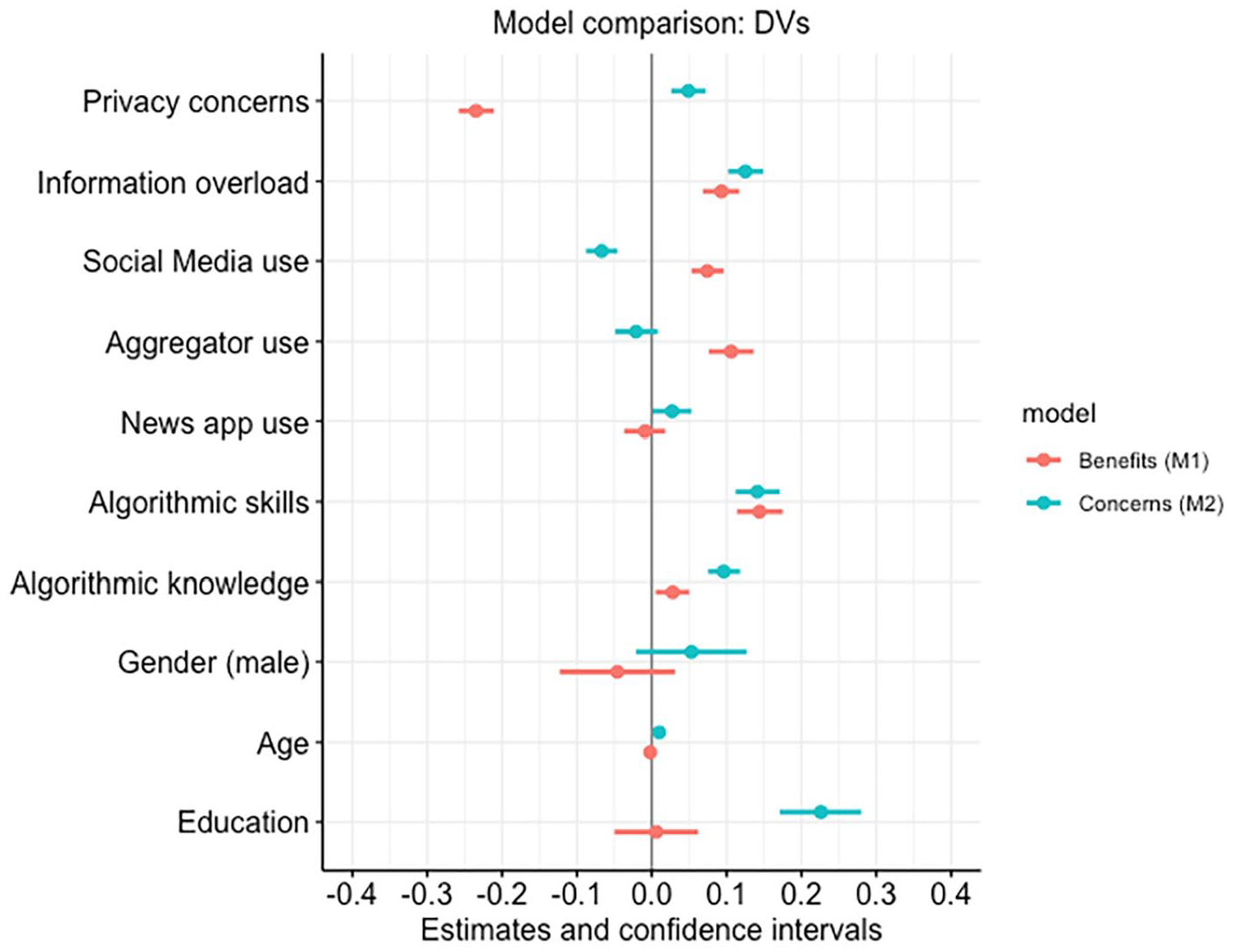

In order to test our hypotheses about individual-level drivers of attitudes toward NRS, we estimated linear regressions with privacy concerns (H1), perceived information overload (H2), algorithmic awareness (H3a/b), and reliance on algorithmically curated websites (H4) as our independent variables and perceived NRS benefits (Model 1) and perceived NRS concerns (Model 2), as our dependent variables (see Figure 1 for overview). Age, gender, and education were included as control variables.

Model comparison of the effect of individual-level factors on perceived NRS Benefits (Model 1) and perceived NRS Concerns (Model 2).
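For readers less familiar with the estimation, the following is a minimal pure-Python sketch of an ordinary least squares regression that returns unstandardized coefficients (b) and standard errors (SE) of the kind reported below. The data, the variable names, and the two-predictor setup are hypothetical; the actual models include all individual-level predictors and controls and were presumably estimated with a statistics package.

```python
import math

def invert(m):
    """Invert a small square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(m)
    a = [list(map(float, row)) + [float(i == j) for j in range(n)]
         for i, row in enumerate(m)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        pv = a[col][col]
        a[col] = [v / pv for v in a[col]]
        for r in range(n):
            if r != col:
                f = a[r][col]
                a[r] = [v - f * w for v, w in zip(a[r], a[col])]
    return [row[n:] for row in a]

def ols(X, y):
    """OLS fit. X holds predictor rows (no intercept column); returns (b, SE), intercept first."""
    rows = [[1.0] + [float(v) for v in r] for r in X]      # prepend intercept
    n, p = len(rows), len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    inv = invert(xtx)                                      # (X'X)^-1
    b = [sum(inv[i][j] * xty[j] for j in range(p)) for i in range(p)]
    resid = [yi - sum(bj * v for bj, v in zip(b, r)) for r, yi in zip(rows, y)]
    sigma2 = sum(e * e for e in resid) / (n - p)           # residual variance
    se = [math.sqrt(sigma2 * inv[i][i]) for i in range(p)]
    return b, se

# Hypothetical respondents: [privacy concerns, information overload] -> perceived benefits
predictors = [[1, 4], [2, 2], [3, 5], [4, 3], [5, 6], [6, 1], [7, 5]]
benefits = [5.5, 4.5, 5.0, 4.0, 4.5, 3.0, 3.5]

coef, se = ols(predictors, benefits)   # coef[0] is the intercept
```

Running the same function with perceived concerns as the outcome would give the counterpart of Model 2.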

Our first hypothesis predicted that higher privacy concerns are associated with lower NRS appreciation. The regression models show that privacy concerns are indeed significantly related to less favorable evaluations of NRS: they are negatively associated with perceived NRS benefits (b = −0.23, SE = 0.01, p < 0.001, Model 1) and positively associated with perceived NRS concerns (b = 0.05, SE = 0.01, p < 0.001, Model 2). The data thus support H1: participants’ privacy concerns are a negative predictor of user evaluations of NRS. Users who are more concerned about having their private data collected online report lower perceived benefits of NRS and more perceived concerns toward NRS than those who are not as concerned about data collection.

Our second hypothesis, which predicted that higher perceived information overload is associated with higher perceived NRS benefits, is also supported by the results of the regression in Model 1. Higher levels of reported information overload are significantly associated with higher perceived NRS benefits (b = 0.09, SE = 0.01, p < 0.001). They are, however, also associated with higher perceived NRS concerns (b = 0.12, SE = 0.01, p < 0.001, Model 2). Because perceived benefits and concerns were measured separately, they are not mutually exclusive; in this case, respondents with a high sense of information overload reported both more benefits of and more concerns about NRS.

We posed two separate hypotheses about algorithmic awareness (H3a and H3b), which predicted a positive relationship with both perceived concerns and perceived benefits. Model 1 and Model 2 provide support for both hypotheses: an increase in algorithmic awareness is associated with higher levels of both perceived NRS benefits and concerns. Model 1 shows that higher levels of algorithmic awareness are associated with higher levels of perceived NRS benefits (knowledge: b = 0.02, SE = 0.01, p < 0.05; skills: b = 0.14, SE = 0.02, p < 0.001). This confirms H3b. However, algorithmic awareness is also associated with higher levels of perceived concerns about NRS, both in terms of algorithmic knowledge (b = 0.10, SE = 0.01, p < 0.05) and algorithmic skills (b = 0.14, SE = 0.02, p < 0.001). This confirms H3a. Similar to information overload, algorithmic awareness presents an ambivalent, double-edged picture. With increasing knowledge and skills (the two expressions of awareness), not only the perceived benefits but also the perceived concerns about NRS increase.

The results are clearest in the relationship between the reliance on algorithmically curated websites and user perceptions of benefits of NRS (H4). There is a significant positive relationship between the reliance on these platforms (across both indicators: social media and aggregators) and perceived NRS benefits (social media: b = 0.07, SE = 0.01, p < 0.001; aggregators: b = 0.10, SE = 0.01, p < 0.05). Results from Model 2 underline these findings, as higher levels of social media use (one of the indicators of reliance on algorithmically curated websites) are also associated with lower concerns about NRS (b = 0.07, SE = 0.01, p < 0.001).

Looking at all the individual-level factors, we can firmly say that at the individual level, the appreciation of NRS is dominant only among those users who indicate a higher reliance on algorithmically curated media outlets. All other independent variables are less clear-cut.

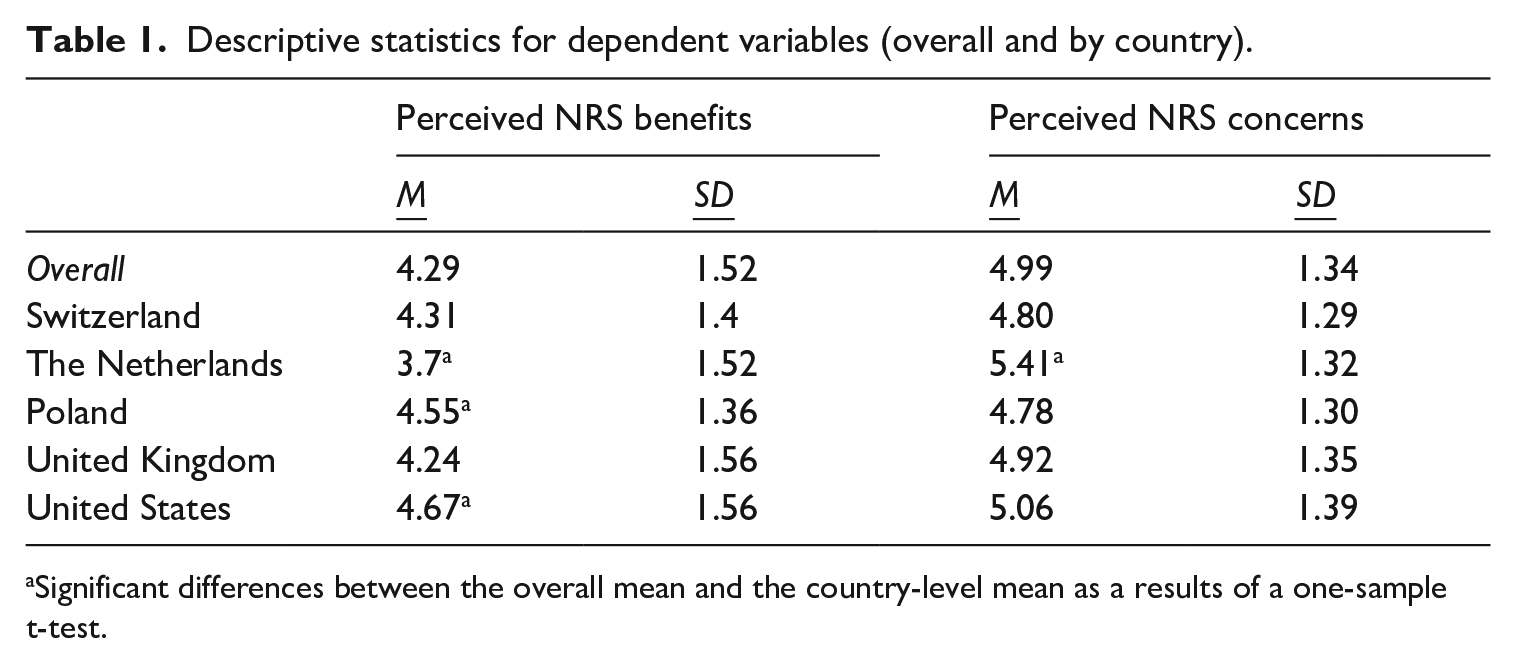

Country-level context

In addition, we ask in this study whether the acceptance of NRS, based on individual-level indicators, is consistent across various country contexts (RQ1). Table 1 shows the country means for both positive and negative NRS evaluations. Overall, users across all five countries report more perceived concerns with an overall mean of M = 4.99 (SD = 1.34) than benefits, where the overall mean is M = 4.29 (SD = 1.52). This is also evident in the country-level averages, as in each country the mean for perceived NRS concerns is consistently higher than the overall mean for perceived NRS benefits.

Table 1. Descriptive statistics for dependent variables (overall and by country).

Significant differences between the overall mean and the country-level mean as a result of a one-sample t-test.

Using the overall averages as a baseline, the country results show that Switzerland and the United Kingdom follow this transnational trend. We also see three other patterns.

First, there are cases where the overall conditions for accepting NRS are less favorable. Results from a one-sample t-test show that respondents from the Netherlands, for example, report significantly lower benefits and significantly higher concerns than the overall average. A greater preference for and trust in traditional media may have led Dutch users to be more skeptical of NRS.

Second, we also see a pattern of more favorable conditions for NRS acceptance, represented by the case of Poland: significantly above-average benefits and below-average concerns. A preference for social media and a distance from traditional media may have fostered a rather unconcerned attitude toward NRS among Polish media users.

The third pattern we can identify in our cross-country evaluation of results is represented by the United States: significantly higher (than overall) levels of both benefits and concerns. This may partly be due to the high polarization of the media system, as well as the high-choice media environment and open debates about digitalization.

What we can conclude from these results is that trends in NRS acceptance in terms of individual-level factors are not consistent across countries, so country-level contexts need to be taken into account.
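The comparison of a country-level mean against the overall mean can be illustrated with a one-sample t-test. The sketch below is a minimal illustration under stated assumptions: the response values are invented for demonstration and are not data from the survey, and the function name `one_sample_t` is hypothetical. Only the reference value 4.99 (the overall mean of perceived NRS concerns) is taken from the text.

```python
import math
import statistics

def one_sample_t(values: list[float], mu0: float) -> tuple[float, int]:
    """Return the t statistic and degrees of freedom for H0: mean(values) == mu0."""
    n = len(values)
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)  # sample standard deviation (n - 1 denominator)
    t = (mean - mu0) / (sd / math.sqrt(n))
    return t, n - 1

# Hypothetical responses for one country; NOT data from the study.
country_scores = [5, 6, 4, 6, 5, 7, 5, 6, 4, 6]
OVERALL_MEAN_CONCERNS = 4.99  # overall mean of perceived NRS concerns reported in the text

t_stat, df = one_sample_t(country_scores, OVERALL_MEAN_CONCERNS)
print(f"t({df}) = {t_stat:.2f}")
```

A positive t statistic indicates a country mean above the overall mean, a negative one a mean below it; whether the difference is significant depends on comparing the statistic against the t distribution with the given degrees of freedom.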

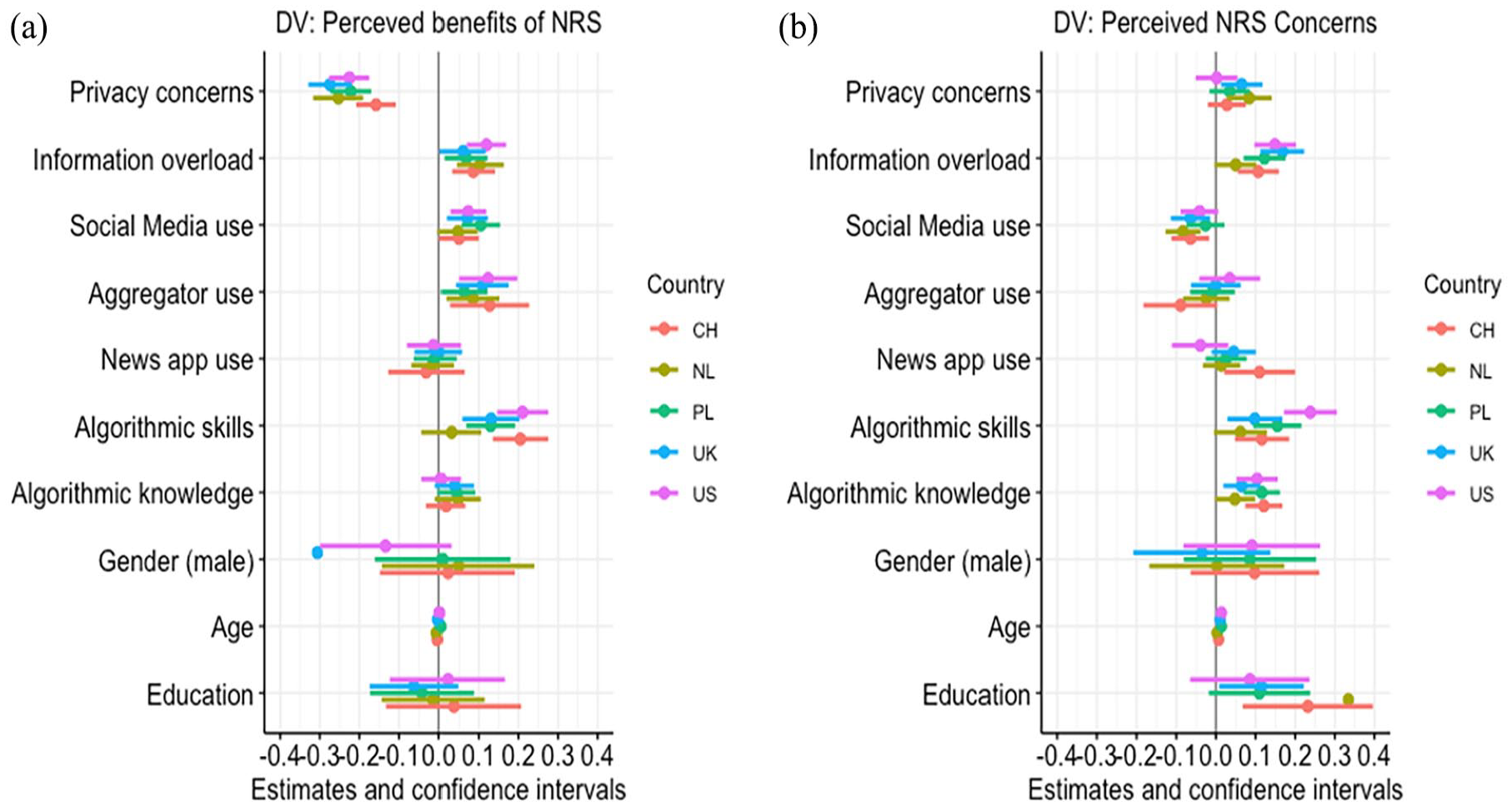

In addition to the overall country-level differences, we also examined country differences across each individual indicator. For this, we ran the linear regression models for the hypotheses above (individual-level indicators for NRS attitudes) for each country to observe first trends of differences between countries (Figure 2). When differentiated between the individual factors, country differences in attitudes toward NRS become less evident. Correlations between the individual-level factors and both variables measuring NRS evaluations seem consistent across countries. Notable exceptions here include the predictor of algorithmic skills and both perceived NRS benefits and concerns (see Figure 2), where we find no significant correlation in the Netherlands but a positive correlation in all other countries.

Figure 2. Linear regression models for: (a) perceived NRS benefits (Model 1) and (b) perceived NRS concerns (Model 2) per country.
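The idea of fitting the same regression separately for each country can be sketched as follows. This is a simplified illustration, not the study's procedure: it uses a single predictor where the study's models include several, the rows are invented for demonstration, and the names `ols_slope` and `rows` are hypothetical.

```python
from collections import defaultdict

def ols_slope(x: list[float], y: list[float]) -> float:
    """Slope of a one-predictor least-squares fit of y on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx

# Hypothetical rows: (country, algorithmic skills, perceived NRS benefits).
# These values are invented for illustration and are NOT taken from the survey.
rows = [
    ("NL", 1, 4.0), ("NL", 2, 4.1), ("NL", 3, 3.9), ("NL", 4, 4.0),
    ("PL", 1, 3.5), ("PL", 2, 4.0), ("PL", 3, 4.5), ("PL", 4, 5.0),
]

# Group predictor and outcome values by country.
by_country = defaultdict(lambda: ([], []))
for country, skills, benefits in rows:
    by_country[country][0].append(skills)
    by_country[country][1].append(benefits)

# Fit the same one-predictor model within each country.
slopes = {c: ols_slope(x, y) for c, (x, y) in by_country.items()}
for country, slope in slopes.items():
    print(f"{country}: slope = {slope:+.2f}")
```

Comparing the per-country slopes side by side is what allows a first, descriptive reading of where a predictor behaves differently across contexts; a formal test of such differences would require interaction terms or multilevel models, which this sketch does not cover.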

Conclusion and discussion

Users play a critical and active role in the mechanisms of algorithmic recommendation technologies, and their evaluations of personalized recommendation systems can be a crucial element in informing the implementation and evolution of these systems. In this study, we investigate how user perceptions of NRS (that is, their perceived concerns and benefits) vary across individual dispositions in order to identify favorable opportunity structures within the user base for the acceptance of NRS. These structures can inform an implementation of NRS that takes user needs and concerns into consideration and addresses them in order to foster acceptance of and trust in these systems.

With regard to individual dispositions, we are interested in users’ privacy concerns, perceived information overload, reliance on algorithmically curated websites, algorithmic knowledge, and use of news apps. While we had strong expectations for at least some of these individual-level factors in terms of their correlation with NRS evaluations, we find that only reliance on algorithmically curated websites and privacy concerns have a clear and strong correlation with perceived NRS benefits and concerns. When analyzing all individual-level factors, we see that a higher level of perceived NRS benefits is only clearly dominant in users with high reliance on algorithmically curated outlets. We also see that users with high privacy concerns indicate more NRS concerns and fewer perceived benefits. These are the only unambivalent results: users who rely on aggregator websites and social media also tend to evaluate NRS positively, while those who indicate higher levels of privacy concerns evaluate NRS more negatively. Other correlations between individual-level factors and NRS evaluations are more ambivalent. With regard to information overload, even though we predicted that it would correlate with positive evaluations of NRS, we find that it is associated with both higher appreciation and higher perceived concerns of NRS. This finding indicates that even a group of users that seemingly fits perfectly into a structure of favorable NRS conditions also reports higher concerns, universally in all five countries. The same is the case for algorithmic awareness: both its indicators (skills and knowledge) are associated with higher appreciation of NRS as well as higher perceived NRS concerns, although much more so with the latter. The correlations between algorithmic awareness and perceived NRS concerns are much stronger and present in all five countries, while evidence of perceived NRS benefits only represents a much smaller user group. With regard to the last indicator, use of news apps and websites, findings show that it is correlated with higher perceived NRS concerns.

These results raise the question of what the conditions of acceptance of NRS look like from the audience perspective or, in other words, what the opportunity structures for a favorable NRS evaluation by users look like. From these first results, we can already conclude that users overall seem more concerned than positive about the implementation of NRS and that the group of users who are unambiguously positive about NRS implementation is rather small.

Links between privacy concerns and NRS concerns are one unambiguous finding. However, this could also be indicative of an important critical and detailed evaluation of these systems. Instead of taking the evolving news environment for granted, users who are concerned about privacy may also be looking at these developments with a more critical lens, anticipating pitfalls such as the loss of user agency and transparency (Diakopoulos and Koliska, 2017; Sinha and Swearingen, 2002). Users with privacy concerns could be more sensitive to alerts of the threats that NRS can bring to the fore and be more intentional with the data they share, preferences they explicitly indicate on news websites and the way they interact with algorithms in general. The same goes for users that have more knowledge and awareness of personalization and algorithms: while it may seem like conflicting findings that users with high algorithmic awareness report higher levels of both perceived NRS benefits as well as concerns, these users may be more aware of both the risks and opportunities of these mechanisms, echoing theories of the technology acceptance model (Venkatesh, 2015). This, too, may translate into a more intentional use of news apps and websites, especially when it comes to explicit preferences and interactions with the algorithm (in the form of feedback about satisfaction with articles, for example). Therefore, even though findings correspond with previous research on the link between privacy concerns and less favorable attitudes toward AI-driven technologies (e.g. Araujo et al., 2020; Horowitz and Kahn, 2021; Mohamed and Ahmad, 2012), this link does not have to have negative implications such as the avoidance of news websites or other inhibiting effects on user behavior (Hoffman and Lutz, 2021). 
On the contrary, users who are invested in matters of privacy, and those who have high knowledge of algorithmic systems may, through their interactions with and use of news websites, play an active and crucial role in the shaping of these systems.

Our other main finding is that user evaluations are also more positive for users who already have a high reliance on algorithmically curated websites. For users that are already familiar with recommendations—that is, use social media and news aggregators frequently—the benefits seem to outweigh the potential risks. This is also reassuring in the case of NRS: because personalization of news is only in the beginning phase in most countries and users are generally not yet familiar with the implications of NRS and how their news diets may be affected, measuring attitudes toward NRS can seem at times speculative or hypothetical. This user group is also the only one where the perceived NRS benefits are clear. Overall, correlations between individual-level indicators and NRS attitudes point toward a concerned user population, which can create unfavorable opportunity structures for the implementation of NRS. In light of the need for news institutions to (re)design systems to foster trust (Bodó, 2021), these findings can signal the need for a cautious implementation of NRS and an intentional evaluation of the user contexts in which these systems are being released.

This is all the more important considering that our findings, within the country selection of the study, are universal: there were no major deviations across countries, rendering our results robust across the five countries selected. We find that (overall, as well as consistently across countries) users identify more concerns than benefits of NRS implementation. We also see some individual country trends in the deviation from the overall mean. First, evaluations in the Netherlands are more negative, hinting at less favorable opportunity structures for the implementation of NRS. This could be explained by the high trust in public broadcasters and relatively low polarization (Newman et al., 2023), leading to greater skepticism of technologies that might compromise the integrity of the media. Second, we also see a pattern for more favorable conditions for NRS acceptance, which is represented by the case of Poland: above-average benefits and below-average concerns. In contrast to the Netherlands, we see higher polarization here (Newman et al., 2023), which may contribute to the positive evaluation of recommender systems as a way to access greater diversity in the news. The third pattern we can identify in our cross-country evaluation of results is represented by the United States, where we observed more perceived benefits as well as more perceived concerns. Here, the extraordinary constellation of the US media system, with its great diversity of choice, its polarization and its intense public debates about bias and fake news, may lead to an attitude that combines both perceived benefits and perceived concerns. A final explanation may also lie in the degree of digitization and innovation orientation of societies.
Of the European countries in our sample, EU (2023) rankings on digitalization place the Netherlands in the top group and Poland in the bottom group; the World Digital Competitiveness Ranking places the United States, the Netherlands and Switzerland in the top five places, the United Kingdom in 20th place, and Poland in 40th place (IMD, 2023). Countries with more experience in new technologies, such as the Netherlands, may also have more public debates about them, which can create an ambivalent to critical public image. What we can conclude from these results is that country-level trends in NRS acceptance deviate from the overall mean, so that country-level contexts have to be taken into account.

Our study also has some limitations. Because we base our findings on correlational data, we cannot make any causal claims. Therefore, when interpreting the relationships between individual-level characteristics and NRS evaluations, we are cognizant of the possibility that NRS only reinforce pre-existing attitudes toward digital media and technologies. In other words, privacy concerns say as much about attitudes about NRS as attitudes about NRS may say about users’ privacy concerns. It would therefore be informative to additionally investigate these variables using experimental designs that can inform our knowledge of the causality and directionality of these relationships. The study also investigates attitudes about systems that are relatively novel in most of the surveyed countries. Users may not have had ample time to form opinions about these systems or even be entirely aware of these technologies and their implications. While some of the findings may change as NRS become more common on news websites and apps, it is crucial to investigate the user perspective of news personalization before news media comprehensively adopt these systems, so that the development and deployment of the algorithms behind personalization can be informed by early user preferences. Future studies can then investigate whether user evaluations change over time and with higher rates of NRS adoption in these countries. This could be informative not only about the change in user evaluations over time, but also about the effect of familiarity with these systems on user attitudes toward them.

Despite these limitations, the study at hand contributes to the growing body of research on user perspectives on NRS use and on the opportunity structures that individual-level user factors create for the adoption of NRS. Our findings demonstrate universally critical evaluations of NRS, and less-than-ideal conditions for the acceptance and appreciation of NRS. We also show that while there are patterns of country differences, the perceived concerns of NRS are stronger overall and consistently dominate perceived NRS benefits in all five investigated countries. These findings suggest a slow and intentional development and implementation of NRS rather than keeping pace with the fast development of the available technology. The findings also point to opportunities for media organizations to identify where user concerns are rooted and to address those factors in the development of news personalization systems.

Footnotes

Funding

The author(s) disclose receipt of the following financial support for the research and authorship of this article: This work was supported by the Swiss National Science Foundation within the National Research Program on “Digital Transformation” (NRP77) under Grant Number (407740_197523).