Abstract

The algorithmic personalisation and recommendation of media content has resulted in considerable discussion on related ethical, epistemic and societal concerns. While technologies of personalisation are widely employed by social media platforms, they are currently also being instituted in journalistic media. The objective of this study is to explore how concerns about algorithms are articulated and addressed when technologies of personalisation meet with long-standing journalistic values, norms and publicist missions. It first distinguishes five normative concerns related to personalisation: autonomy, opacity, privacy, selective exposure and discrimination. It then traces the ways that these issues are navigated in the context of journalistic media in Finland where the implications of algorithmic media technologies have received considerable attention. The results indicate that personalisation challenges traditional notions of journalism, including those of choosing what is important and relevant and providing the same content to everyone. However, aspects of personalisation also have a long history within journalistic practices, and new technologies of personalisation are being adapted to accord with journalistic norms and aims. Based on these results, ethical blindspots concerning privacy and discrimination are also identified.

Introduction

The introduction and increasing use of algorithms and artificial intelligence for the data-based production of knowledge has resulted in proposals that the media are undergoing an “algorithmic turn” (Napoli, 2014; Bucher, 2018). It has even been argued that media today should be conceived of as “the convergence of message-circulation technologies with data-extraction-and-analysis technologies,” generating value from data based on the continuous surveillance of audiences (Turow and Couldry, 2018: 415). Algorithmic recommendation and personalisation of content are regularly implemented by social media platforms, where content is directed at users on the basis of data connected with their socio-demographics, location, behavioural history, the behaviour of others and so on. Currently, however, algorithmic technologies of personalisation are also being instituted in journalistic media (Bodó, 2019; Helberger, 2019; Thurman et al., 2019). The potential of personalisation for journalism was initially met with some excitement (e.g., Thurman and Schifferes, 2012) but, in line with increasing concerns about the societal and ethical implications of algorithmic personalisation in social media, researchers have also identified a number of issues that these technologies might introduce to the context of journalism (Bucher, 2017; Just and Latzer, 2017; Hansen and Hartley, 2021; Hermann, 2021).

This research contributes to the developing discussion on personalisation in journalism. In line with recent research, we understand the technologies of personalisation as co-constituted by material arrangements and social practices of discourse and action (see Bucher, 2017). By tracing articulations of personalisation, we inquire how normative – epistemic, moral and societal – concerns are navigated in legacy media, and explore the encounter between technologies of personalisation and journalistic norms, values, aims and practices. Based on recent literature reviews of the ethical challenges of recommender systems and personalisation (Milano et al., 2020; Hermann, 2021), we discern and explore five areas of normative concern: autonomy, opacity, privacy, selective exposure and discrimination. We then study how these concerns are reflected on and addressed in articulations of the development of personalisation by representatives of Finnish journalistic media, where the ethical and societal implications of algorithmic technologies have attracted considerable attention (Haapanen, 2020, 2022; Rydenfelt, 2022). Our analysis indicates that personalisation challenges traditional notions of journalism, and points to ethical blindspots in the algorithmic technologies of personalisation. However, it also reveals that personalisation is not simply an “outside” technology introduced in a new context; rather, personalisation has its own history in journalism, and it is presently being adopted in ways that aspire to accord with journalistic norms and aims.

Theoretical background

Algorithms and artificial intelligence (AI) are distinct categories in the technological sense, but they are often employed as alternative, and possibly interchangeable, labels for the data-based and automated production of information and knowledge. Data-based automation is surrounded by a kind of “mythology” (boyd and Crawford, 2012) in that it is associated with knowledge-making and decision-making capabilities that may surpass those of humans. This mythology is present in official documents such as national AI strategies, which establish AI as a general-purpose technology that will inevitably and massively disrupt societies (Bareis and Katzenbach, 2022). Regardless of whether this is the case, these technologies and the expectations concerning them have powerfully shaped and continue to shape our daily practices, physical and digital environments, and interactions in professional and personal contexts (Taddeo and Floridi, 2018).

During the past decade, it has become clear that the changes ushered in by algorithms and AI across domains of application should be examined critically. Recent reviews of research literature on these technologies have identified and categorised findings concerning “the ethics of algorithms” – the normative and societal issues that these technologies may incur (Mittelstadt et al., 2016; Tsamados et al., 2021). Some of these are epistemic in nature. Algorithms and AI are used to produce action-guiding information, but that information may be erroneous or insufficient to provide justification for action. The means of algorithmic knowledge-making may also be inscrutable to human observers, a concern compounded by potential failures due to erroneous or biased data. Other concerns can be regarded as moral. Decisions driven by algorithmic knowledge-making may be unfair, discriminatory or ethically wrong. Algorithmic knowledge-making may result in potentially harmful rationalities as well as in practices of surveillance, prediction and intervention. Besides specific epistemic and moral concerns, algorithmic knowledge-making may impair our capabilities in identifying cause-and-effect relationships and assigning ethical responsibility (see Rydenfelt, 2022).

In the more particular context of media, AI and algorithms are perhaps most readily identified with personalisation, targeting and filtering of content in social media. In social media, personalisation of content is based on algorithmic technologies, including both human-generated algorithms and machine learning. However, these technologies of personalisation have also been introduced into journalistic media, in part due to a commercial push for their deployment. Much of the enthusiasm in journalism research has focused on the utilisation of AI and algorithms in the automated creation of content (Carlson, 2015; Caswell and Dörr, 2018; Clerwall, 2014). Currently, however, such automation systems remain modest, and these technologies are more commonly used in the personalisation of news content (Bodó, 2019; Haapanen, 2020, 2022; Helberger, 2019; Rydenfelt, 2022; Thurman et al., 2019). The prospects of personalisation have also been met with some excitement. For example, Thurman and Schifferes (2012) concluded that personalisation may support deliberative democracy by helping build and enlarge audiences and shift revenues from search providers, content aggregators and other intermediaries to the “content creators.” Personalisation has been argued to serve the needs and interests of audiences and make media content more valuable and enticing. News media hope that personalisation will increase audience loyalty in the long term (Bodó, 2019).

Contrasting with this optimism, the broad ethical risks and negative societal effects outlined in discussions on “the ethics of algorithms” translate to more specific concerns when algorithms and AI are used to personalise and recommend media content. Based on recent literature reviews of the ethical challenges of recommender systems (Milano et al., 2020) and mass personalisation (Hermann, 2021), we distinguish five thematic categories of such concerns over technologies of personalisation: autonomy, opacity, privacy, selective exposure and discrimination.

Loss of autonomy. It has been argued that technologies of personalisation act as additional gatekeepers of content or as new actors mediating user-content relationships (Wallace, 2018). Personalisation is often designed for the purpose of user retention (Seaver, 2019a), and may involve limiting the audience’s freedom of choice by nudging or even straightforward manipulation. In journalism, another layer of issues is introduced: content choices made by algorithms and the ways they are displayed may influence (or even replace) editorial decision-making and autonomy (Bucher, 2017; Just and Latzer, 2017; Hansen and Hartley, 2021). However, agency and responsibility are seldom attributed to algorithms, which are typically argued to operate under editorial control (Rydenfelt, 2022). Moreover, developing algorithms and models based on data requires technical expertise that journalists and other traditional newsroom workers rarely possess. As a result, software developers and other “technologists” have become part of the production of journalism, although their background training typically is not in the field (Haapanen, 2020).

Selective exposure. In a pioneering article on news recommender systems, Resnick et al. (1994) wondered whether systems of recommendation lead to the fragmentation of audiences into separate groups based on their interests. This issue continues to be debated in contemporary research on “echo chambers” and “filter bubbles” where technologies of personalisation result in audiences only being exposed to like-minded content or content that is of interest to them (Pariser, 2011; Colleoni et al., 2014; Flaxman et al., 2016). In research on journalism, it has been speculated that personalised content may be characterised by a lack of diversity, leading to societal harms via ideological polarisation, in turn raising concerns for the replacement of a shared public sphere by individualised and less transparent “realities” (Just and Latzer, 2017). While the existence of algorithmically generated filter bubbles and echo chambers remains debated (Zuiderveen Borgesius et al., 2016; Dubois and Blank, 2018; Bodó et al., 2019; Bruns, 2019), the negative consequences of selective exposure nevertheless exist in subtle and complicated ways. For example, in a test setting, users exposed to partisan news determined it to be more reliable than “mainstream” news, the consumption of which was concomitantly reduced (Bryanov et al., 2020; cf. Möller et al., 2018).

Discrimination. Personalisation of media content inevitably involves determining the kind of information that the audience should receive. Because of its scale and scope, mass personalisation has been dubbed “industrialised social discrimination” (Turow and Couldry, 2018). The “worthiness” of those receiving content may be determined based on existing economic, socio-demographic and psychological features and societal divides, posing a problem of equality and fairness (see Hermann, 2021). Personalisation has been argued to reinforce existing stereotypes (e.g., Bol et al., 2020). The harms of discrimination may be exacerbated if prejudices are introduced to the models and user profiles by the assumptions of their designers and the biases involved in the data used, for example by an under- or overrepresentation of demographic or societal groups (Tsamados et al., 2021).

Opacity. The opaque nature of personalisation is a recurring theme in discussion of its negative effects. It is claimed that transparency is essential to making algorithmic processes understandable, manageable and accountable, and it has been perceived as a necessary component in the responsible use of algorithms in general (Pasquale, 2015; Diakopoulos, 2016; Berger and Owetschkin, 2019; Rydenfelt et al., 2021). In addition, providing information on personalisation could, at least in principle, reduce the effects of biases and increase the autonomy of the audience or users (e.g., Milano et al., 2020). The push for transparency has also had an impact on practice. Transparency is one of the key principles in the European Union’s General Data Protection Regulation (GDPR) governing the use of personal data (Felzmann et al., 2019). Attribution policies have been devised with the aim of disclosing the involvement of algorithms in the production of journalistic content (Montal and Reich, 2017). However, transparency has been considered difficult to implement in practice. Accounts of the operation of algorithms may be hard to understand, and their accuracy cumbersome to maintain (Ananny and Crawford, 2018). In journalism research, algorithmic transparency has been criticised for a technical and formal implementation that does not necessarily provide the public with comprehensible information (e.g., Bastian et al., 2020; Haapanen, 2020).

Privacy risks. Given that the personalisation of content is based on collecting personal data to construct models of users and their interests, it has been argued that there is an inevitable tension between privacy and personalisation (Cloarec, 2020). Risks to privacy inhere in the fact that data about users may be collected or shared without their knowledge or consent, as well as abused and leaked (e.g., Milano et al., 2020). The standard approach to dealing with the issue of privacy is requiring that users provide informed consent. However, the cognitive, epistemic and politico-economic limitations of this approach have also been enumerated in research (e.g., Lehtiniemi and Kortesniemi, 2017). Further risks to privacy may emerge when personal data and information are constructed based on users’ past actions or similarity to other users.

Technologies of personalisation are regularly implemented by social media platforms. The notion of AI-induced disruptions across society and business suggests that the spread of these technologies to journalistic media is inevitable. Indeed, central actors in journalistic media are currently implementing or considering the prospects of personalisation and recommendation of content (e.g., Bodó, 2019). Nevertheless, concerns related to the ethical issues identified above could be expected to impede the introduction of these technologies, in particular by challenging journalistic values, publicist missions and editorial control (e.g., Hansen and Hartley, 2021). The objective of our study is to probe this apparent tension. Employing the ethical challenges we have identified as our analytic and theoretical lens, we inquire how the prospects of technologies of personalisation are articulated in legacy media in Finland. More specifically, we study how and to what extent these ethical and societal issues are navigated by journalistic professionals; how personalisation is articulated as it encounters conceptions of the values, aims and needs of journalism; and how and for what reasons legacy media may resist the introduction of personalisation.

Data and methodology

The empirical context of our research is Finnish legacy media, which is traditionally characterised by a strong ethos of serving the public as opposed to advancing specific political and ideological interests (Reunanen and Koljonen, 2018). Due to the introduction of news automation and personalisation in recent years, the ethical and societal implications of these technologies have been a topic of considerable public discussion. In 2018, the annual meeting of the Alliance of Independent Press Councils of Europe (AIPCE) brought together the chairs of European media councils and representatives of various media in Helsinki for a discussion on algorithms and media ethics. In 2019, the Finnish Council for Mass Media issued a statement concerning the use of algorithms and automation in journalistic contexts. This appears to be the first statement globally on the topic by a body of media ethics and self-regulation. It stipulates, firstly, that the use of algorithms is always a journalistic decision that must be made on journalistic grounds. Such decision-making may not be transferred outside the newsroom, for example, by outsourcing it to an external provider of algorithms. Secondly, the audience has a right to know about the use of algorithms. The Council recommends that if a significant amount of content on a given page view is personalised, this procedure should be disclosed in an understandable way. The coverage of self-regulation in Finland is exceptionally high; almost all journalistic media subscribe to its code of ethics, based on which the Council can address complaints concerning their editorial work.

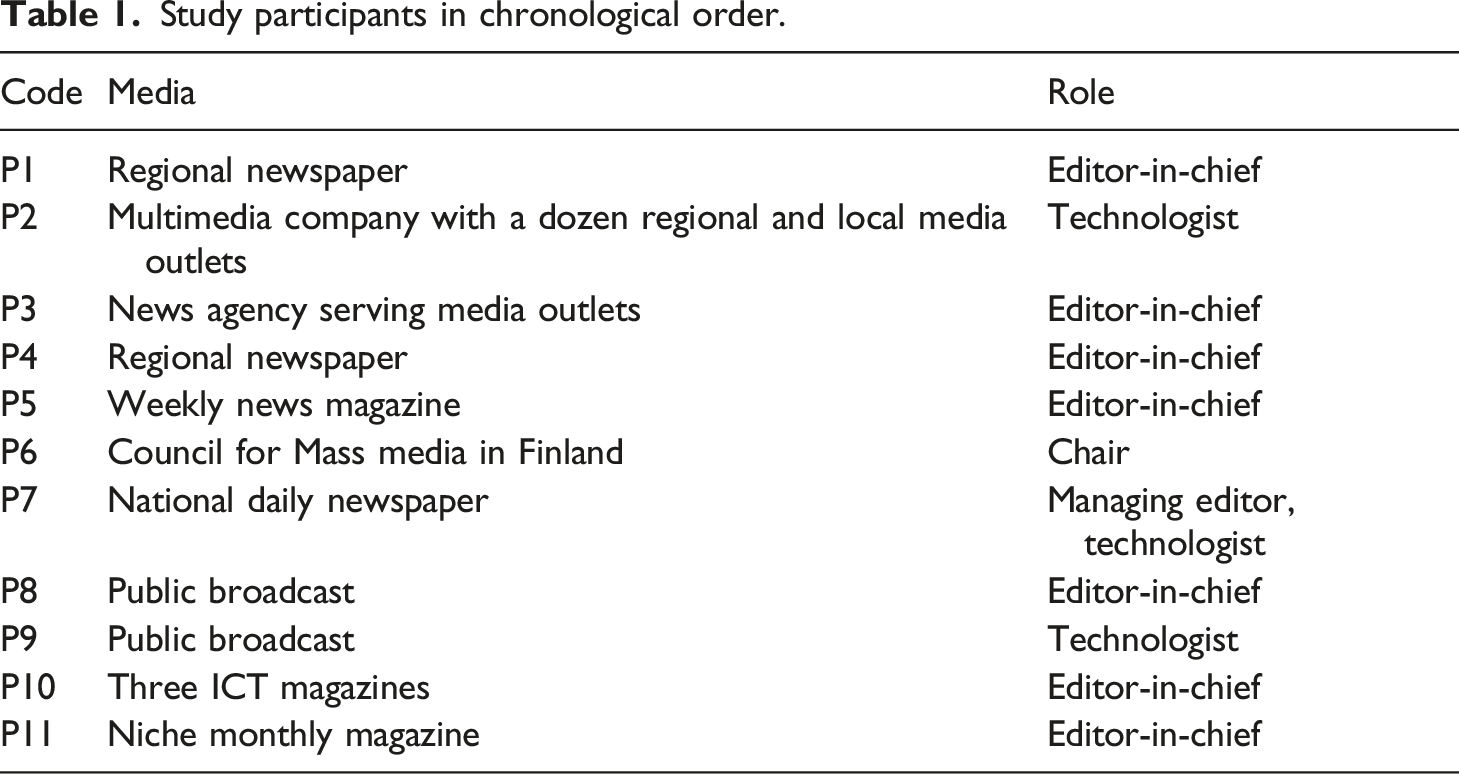

Study participants in chronological order.

The semi-structured interviews were conducted on Zoom between March and November 2021, and lasted approximately 80 min, with the shortest being 30 and the longest 100 min. They were video recorded and transcribed. All interviews were conducted by two researchers, leaving more opportunity for follow-up questions and discussion. Our main questions covered different themes of personalisation, including terminology, implementations and solutions, objectives and measurement, benefits and potential problems, transparency and regulation and the future of personalisation in their media and in journalistic media in general.

In our analysis, we began by grouping similar themes that emerged from the participants’ articulations. To provide an overview of the present state and views of personalisation in different types of media, we first distinguished articulations that pertained to its implementation and future prospects. We then connected these considerations to produce patterns based on the five types of ethical concerns drawn from our overview of theoretical literature. Lastly, we selected and translated quotations to illustrate these stances.

Instituting personalisation

The scope and future of personalisation

All of the study participants were prepared to articulate their thoughts about personalisation and related phenomena in their own journalistic media; many were also well acquainted with the existing technologies of personalisation in both social and journalistic media more broadly. However, from our first interviews, it became clear that different media varied considerably with respect to both implementation and attitudes to the prospects of personalisation. The participants commonly attributed this variation to their differing levels of resources as well as different profiles. A large national newspaper has introduced an extensive system of personalisation that influences many aspects of its content, including the front page, “read next” recommendations at the end of articles, paywall, newsletter and advertisement content. The data used by the algorithmic system includes the choices and past behaviour of both the particular reader and the audience in general. The operation of the algorithm is complemented by editorial and journalistic choices, and the current state of the development was described as an equilibrium reached between the two: “We have a good balance between manually selected and algorithmically personalised content, and so our development discussions focus on optimising existing solutions and fine-tuning metrics on how to measure them” (P7). The present balance was also described as satisfactory: “After a long development process, we are now at the level of personalisation we want to be” (P7).

Another medium making advances in personalisation was Finland’s national public broadcasting company. Its online news content is distributed in two services, its website and a news-watch mobile application. The website currently has no personalisation, but its introduction was on the drawing board: “It is the next wave of development to bring elements of personalisation [to the website]” (P8). The app, aimed at “heavy users,” involves several elements of personalisation. Users are allowed to pick interests from a list of keywords and exclude topics that they do not wish to follow. More implicitly, the app also displays content based on the user’s past activities and location data.

In smaller but regionally significant newspapers, developments of personalisation were described as closely monitored. Beyond small experiments in content recommendation, however, implementation was not considered timely, constrained, among other things, by financial and human resources:

“We’re following developments in the industry and considering where to spend our limited technology resources – what would be the appropriate low-hanging fruits. There are competing needs to develop technological capabilities all the time, so personalisation has not been a priority. For example, better use of analytics is now more important” (P4).

Another constraint mentioned by regional newspapers was the limited amount of content produced: “If we had a thousand stories per day, personalisation might fulfil a purpose, but limiting an already limited content does not make sense” (P1).

While editors of weekly and monthly magazines expected personalisation in news media to increase in the next five to ten years, they expressed various doubts about its prospects in their own enterprise. One editor-in-chief described the monthly magazine as an artefact for the subscribers: “Above all, we are a print magazine – an artefact – for which a website and digital content are ancillary” (P11). The need for personalisation was also considered to be limited because the content was already specialised. As another editor-in-chief of magazines on themes of information and communication technologies remarked, “In Finland, the potential readership of ICT magazines is at most a few hundred thousand. We do not need a separate apparatus for personalisation” (P10).

Many participants noted that the concepts of personalisation, targeting and recommendation of content have not yet acquired discrete meanings. They were sometimes used interchangeably in discussions with colleagues, yet this appeared to occur without any confusion. These articulations also provided insights into how some aspects of personalisation are already embedded in long-standing journalistic practices. Personalisation was often referred to as “recommendation” or “targeting,” indicating that it was viewed as something that the media does for its audience. Targeting was associated with the directing of content to a specific audience; one participant referred to it as “tailoring” content for audiences (P5). Editors of magazines with specific thematic focus areas cited this as a reason for their lack of further personalisation efforts: “The personalisation of the reporter’s work has happened already by starting to work in this magazine” (P10). Major national media, on the other hand, engaged in targeting by providing for different audience segments. A public broadcast editor compared targeting to producing stories with different points of view: the newsroom may report on the same government budget negotiations by “producing four different stories, because young people are interested in this, economics people in that, and so on” (P8). Such practices were understood as a default in journalism, with one participant noting their political history: decades back, the region had had a wider selection of “communist, social democrat, agrarian and right-wing coalition papers” which individuals could select “on ideological grounds” (P1). A long tradition of targeting news content based on local interests was also raised: for example, newspapers serving audiences across large regions produced several print editions directed at different localities and differing not only in their advertisements but also news content and its arrangement.

The participants also articulated a difference between a broader concept of implicit personalisation, and a narrower concept of explicit personalisation (see Thurman and Schifferes, 2012). The former involved what the participants often called targeting, which is based on data collected about user behaviour: for example their past engagement with content, subscription status, use of news apps or location. The latter was understood, as one participant put it, to involve “something more personal than targeting, something where a member of the audience can also influence the results” (P6). Explicit forms of personalisation would be based on personal information or data typically provided by the individual’s explicit choice, such as by selecting news categories or subscribing to specific content.

Autonomy: editorial control

In the context of journalism, algorithmic recommendation and personalisation of content have the potential to threaten the autonomy of editors and newsrooms in journalistic decision-making, possibly also obscuring editorial responsibility. The participants often addressed concerns related to editorial control implicitly and by comparing personalisation in journalistic media and social media platforms. We discerned a number of articulations of such fundamental differences that aimed to address concerns related to autonomy by highlighting the ways in which the journalists and the newsroom retain control and accountability.

One such difference is that the content displayed and recommended in the journalistic context is produced by the publication (or, in some cases, other publications of the same media group), meaning it has already undergone journalistic evaluation in accordance with editorial policies and values. A second is that personalisation does not affect individual stories: different readers may be offered different content, but not different versions of the same content. Some participants also connected the notion of personalisation to interactive articles where an audience member enters personal information, such as socioeconomic factors or diet and exercise habits, thereby automatically adjusting the content. However, the participants emphasised that these functions are transparent and adjustable by anyone by providing different information.

A third difference voiced is that the personalisation itself is done on “journalistic grounds.” The participants representing media with extensive personalisation emphasised this by their choice of terms, preferring to talk about “journalistic personalisation” or “journalistic recommendation.” The algorithmic systems were described as incorporating journalistic considerations: “Our systems have to be built so that they can take into account not only the logics of traditional recommendation algorithms but also to make note of, for example, journalistic weighing” (P9). For example, topics selected by the individual reader may be emphasised, but in an unusual news situation, the main news, as evaluated by the newsroom, will gain more visibility. Finally, the capability to justify the functioning of technologies of personalisation was emphasised: “When we talk about journalistic recommendation, it needs to be under the newsroom’s command, and the newsroom must understand it genuinely, at a level that one is able to publicly account for how it works” (P7).

Retaining control was not, however, always described as a straightforward task. One participant provided an example of the issues that they had faced in implementing personalisation of their news front page:

“Our personalisation engine started emphasising opinion texts on front pages. It took a while before we realised what it was all about. We publish the full content of our print newspaper online at 2 am, and that includes some 15 opinion pieces. For our personalisation engine, the publication of a large number of similar texts every night at exactly the same time made them special, and the engine began to rank those texts higher than necessary from a journalistic point of view” (P7).

As this example illustrates, problems with algorithmic personalisation and recommendation can be difficult to predict or understand despite attempts to retain full awareness and control of how these systems function (cf. Seaver, 2019b) by constantly monitoring their operation, identifying issues and moving to fix them.

Autonomy: the audience’s choices

When the technologies of personalisation enable explicit choices by readers, these were viewed as something to be appreciated and acknowledged: “If one personalises for oneself, who am I to judge?” (P10). Thus, instituting personalisation resulted in the articulation and augmentation of a value that has received little attention in research on journalism: audience autonomy. Personalisation was viewed as providing the opportunity to balance between journalistic and individual assessments of importance and was often considered benign or helpful in providing content of interest to the audience, especially when the whole news content is not personalised.

Participants also voiced some suspicion about the extent to which individual choice accords with the purposes of journalism in general. One participant contrasted the prospects of highly personalised news with the “greatness of the user interface of a traditional newspaper” that provides the reader with “both what is known to be relevant and what is not known to be relevant” (P3); such concerns were also connected with the issue of selective exposure discussed below. Moreover, despite the appreciation of readers’ choices voiced by participants, they were unsure whether people were always interested in such options, or even aware that they were provided. The participants noted that the audience rather infrequently engaged with opportunities to personalise content: “In our service – and as far as I know, this also applies to other [Finnish] media where people can actively participate in personalisation – people do not often use these options” (P8).

Selective exposure: bubbles and chambers

The most central concerns with personalisation voiced by participants were connected to the issue of selective exposure and expressed in terms of “information bubbles,” “echo chambers,” the fragmentation of a shared view of reality and the polarisation of societal discourse. Depending on the notion invoked, these worries took somewhat different shapes. One concern was that personalisation will limit exposure to content that is relevant or important. Indeed, extensive personalisation was articulated as contradicting the whole concept of journalism as the selection, curation and production of information on the most important and relevant issues of the day. However, in articulating and addressing such concerns, the participants also argued that the very concept of journalism limits the potential scope of personalisation within it, something also noted in previous studies (Bucher, 2017). As a public broadcast editor argued, journalism should continue to broadcast “the important things to everyone, while matters of taste can be personalised” (P8). Many participants argued that this is what the audience will continue to expect of journalism. An editor-in-chief of a weekly magazine noted that its subscribers still expected to receive “a package of a certain size and composition and prioritisation, with a beginning and an end” (P5).

A second concern was that a high level of personalisation may lead to diverging content for different individuals and groups, jeopardising an informational common ground among readers. However, some participants also suggested that technologies of personalisation will help journalists identify audiences within their readership based on different interests instead of concentrating on what unifies the audience. It was also argued that by recommending related – in many cases, previously published – content, personalisation may also help audiences access a broader picture of a topic, something unavailable in a traditional newspaper setting.

A third concern was that personalisation based on the interests and choices of the individual reader can lead to a lack of diversity in content. This was understood as a potential source of bias. In addition, as one participant argued, personalisation may result in less rather than more freedom for readers. This concern, however, was almost immediately dismissed, echoing views expressed by journalists in previous research (Bodó, 2019). The participants described attempts to include an element of surprise in the algorithms that govern personalisation:

There is a separate module built into our algorithm to ensure that the aspect of surprise is retained in its operation. This is related to the prevention of information bubbles, which is an individual-level problem, but also to another similar phenomenon, namely the narrowing of the supply of content, which can take place at the level of the whole medium. (P7)

Indeed, diversity itself was understood as one of the interests of audience members, and uniformity of content was considered a business risk. As one participant phrased it, “Simply blasting more of the same sort does not increase commitment” (P2).

Personalisation in journalism raises a multitude of concerns over selective exposure – the relevance and importance of content, its diversity as well as sustaining an informational common ground – discussed in terms of information bubbles and echo chambers. While the participants displayed awareness of such concerns and articulated ways in which journalistic media can navigate them, these concerns are independent of one another, and “solving” one may even contribute to the rise of another. For example, the information that readers hold in common can be limited or of dubious relevance, and the availability of diverse content does not entail that individuals and groups encounter the same information; it may even lead to the opposite result. Thus, a factor like diversity can be seen as both productive and reductive of information bubbles, depending on which concern is considered relevant.

Discrimination: news vs. engagement

It has been suggested that technologies of personalisation enable discrimination on a massive scale by deeming some individuals or groups more “worthy” of being provided with particular content or by exploiting their weaknesses and fragilities (Hermann, 2021). The participants of our study did not address discrimination explicitly; however, as already noted, a recurring concern of participants was the effect of personalisation on providing informational common ground for audiences. For some, these concerns limited the journalistic use of personalisation considerably. An editor-in-chief of a major regional newspaper dismissed personalisation in journalism as unfit “for broad societal purposes, for education, for the finer goals” (P1), arguing that the point of the publication is to provide a unified view of what is important for its region and audience. This medium had chosen to go against the current with its own solutions:

We used to have tools based on users’ previous behaviour and explicit choices. However, we stopped using them some years ago in order to strengthen our editorial decision-making. We see curating as an essential part of editorial work: we tell readers what they should read, and we also provide content that they themselves wouldn’t have chosen, and through that we create community among the people in this area. (P1)

Some participants also noted that while personalisation does not currently affect individual items of news, the technologies could be developed in this problematic direction: “When we know that a certain part [of the audience] prefers to hear a particular message, projecting a bit further into the future, it does not take too much to tailor the content by emphasising selected facts” (P5).

Aside from these concerns with the informational common ground, however, the participants did not articulate any particular issues connected with discrimination, as they did not view personalisation in journalism as attempting to exploit personal data collected from readers for direct financial gain, as in targeted advertising. In a recent study based on interviews with journalists, Bodó (2019) has proposed that journalistic media follow a “news logic of personalisation” that aims to sell news to the audience, as opposed to a “platform logic of personalisation” that attempts to create engagement and sell audiences to advertisers. Participants in our study expressed similar views, emphasising the different business models employed. Personalisation in journalism was perceived as providing the potential for improved “user experience” (P8), leading to a more committed audience and serving, in turn, both journalistic and financial aims. Here, commitment was not simply identified with “engagement” or the amount of time that readers spend with content. Its aim was not to gather data and sell audiences. Rather, it was articulated as a broader notion driving consumption and subscriptions. Simple engagement was not considered the key to success: as one magazine editor-in-chief put it, “We do not want to maximise web traffic from social media but rather optimise so that it attracts those who are interested in reading and subscribing to our magazine” (P5).

Indeed, in one case, providing the content wanted by the audience was juxtaposed with increased engagement. Representatives of the public broadcasting company discussed whether they would best serve some parts of the audience by providing ways of “disengagement,” such as quick summaries of the most important daily news, a prospect not articulated by representatives of commercial media. Other participants noted that in the competition for limited audience time, efficient ways of providing key content could also act as an advantage, increasing commitment and subscriptions.

Opacity: the instrumental role of transparency

The issue of opacity in personalisation was connected with concerns over both autonomy and selective exposure, with the former pertaining to both journalists and the audience. Many participants emphasised that journalists cannot control the technologies of personalisation without an understanding of their aims and function. In the absence of information about personalisation, readers may be unaware of ways that it adjusts the content they consume and even of the fact that content may differ from person to person. Accordingly, transparency – the opposite of opacity – typically filled an instrumental role: it was viewed as enabling both informed choices and the prevention of selective exposure.

With respect to the audience, study participants articulated notions of transparency in connection with personalisation on two levels. Their primary concern was to ensure that the audience is aware of personalisation in general, and understands “which sections of the front page are personalised and that the reader has the opportunity to influence which articles appear in that section” (P2). One editor suggested that transparency could be implemented by “making it possible for the reader to turn off personalisation and compare changes that take place in the news display” (P7). Some participants also argued that transparency should involve information about why particular content is being displayed, telling the reader, for example, that “you are seeing this Finnish baseball story because you have been interested in baseball before” (P8).

However, the participants did not argue that transparency should extend to a full explication of the ways that personalisation is implemented. Moreover, although transparency concerning personalisation was a frequently voiced ideal, providing information on it might not be relevant to the audience. When asked for their views on audience understanding of personalisation, many participants observed that it appears to be of little interest, and awareness of it remains limited: the newsrooms rarely received inquiries about their personalisation practices.

Privacy risks: the exploitation of data

The central concerns of privacy and misuse of personal data prevalent in discussion of algorithms and their potential harms are less salient in empirical research on personalisation of content within journalism. Our study is no exception: direct concerns about data gathering and exploitation were not raised by the participants. One of the likely reasons for this is that much of the data in question is, at present, provided by the audience’s explicit selection of preferences. Moreover, as already noted, the participants’ articulations of the task and prospects of personalisation in journalism underscored the differences between the economic models of journalistic media and social media. Journalistic media focus on creating content that the audience wants and needs. Accordingly, personalisation was understood as a way of improving a content-based service rather than as a means to collect data to be sold or exploited for advertising purposes.

Discussion

Our analysis indicates that journalistic professionals attempt to address, in numerous ways, ethical and societal concerns with personalisation that are connected with journalistic aims and values, including (editorial) autonomy and selective exposure. However, other concerns more remote from traditional journalistic practices and largely due to the technological underpinnings of personalisation, such as discrimination and issues of privacy, received far less attention, amounting to potential ethical blindspots in the institutionalisation of these technologies.

Previous research has suggested that personalisation may sit uncomfortably with the aims of journalism by threatening the ideals of maintaining an informed democratic society (cf. Bucher, 2017: 926; Fürst, 2020; Helberger, 2019) and, in the case of public service media, universality (Van Den Bulck and Moe, 2018). Our analysis shows that extensive personalisation of content may challenge the notion that journalism provides the same content for all, choosing what is important and relevant and, in many cases, producing an object with a beginning and an end. These articulations of journalism are not only expressions of professional identities; they are also viewed as conforming with audience expectations. Moreover, our interviews highlight media plurality with respect to the prospects of personalisation, including its perceived pointlessness in connection with an already highly curated or limited pool of content. Even with larger media, personalisation is not required to filter and select from extensive corpora of information, as the relevant reservoir of content is limited by its status (mainly) as journalistic content. While many participants considered their media likely to adopt personalisation in the near future, some were suspicious about its relevance in general. The overall ascent of personalisation cannot be described as inevitable.

In recent research literature, personalisation has been approached as a new phenomenon introduced to the journalistic context largely to replicate social media functions and emulate their success. Accordingly, personalisation could be expected to carry with it ethical issues connected with algorithmic knowledge production and decision-making more generally. Our analysis, however, also indicates that personalisation is hardly an “outside” technology; rather, many of its facets have a long history within journalistic practices. These historical roots may help to account for why the study participants displayed relatively limited concern about the institution of personalisation – especially when contrasted with the enduring discussion of the problems of related technologies in social media. While the technologies of personalisation based on data collected from the audience are relatively novel to journalism, these technologies are largely made to conform with and develop existing journalistic practices and values rather than supplant them with wholly new implementations and aims. It has been suggested that personalisation is being adopted as a way of “selling news” to audiences rather than in order to create engagement and sell audiences to advertisers (Bodó, 2019). Tracing the articulations that attempt to alleviate ethical and societal concerns related to the introduction of algorithmic personalisation, our analysis indicates the presence of further such considerations:

Personalisation is not a new phenomenon in journalism. While the collection of data based on the audience’s activities is a novelty, recommendation, targeting and “tailoring” of content have always been a part of journalistic practice, beginning with the selection of themes and assessment of their relevance and interest. Smaller magazines viewed their content as being already “personalised” with respect to the interests of their audience, while major news outlets produced different stories and perspectives on the same developments for different segments of the audience, and larger newspapers had a history of catering differently to various regions and localities in print. Moreover, many of the practices connected with recommending content were already developed when news websites were adopted. Personalisation is not a new practice simply brought into journalism from social media. Indeed, early personalisation systems were developed for contexts that are close to journalism, such as filtering Usenet news (e.g., Resnick et al., 1994).

Personalisation may accord with journalistic norms and criteria. At least one recent study documents how the introduction of personalisation by algorithmic means led to the realisation that editors are unable to monitor or control outcomes, resulting in attempts to regain control (Hansen and Hartley, 2021). However, our study shows that newsrooms and journalists claim to be able to institute technologies of personalisation in accordance with journalistic criteria and norms from the beginning, epitomised by the notion of “journalistic personalisation”. This aspiration is highlighted in the Finnish context by the Council for Mass Media statement endorsing the retention of journalistic decision-making in the hands of the newsroom, even when new technologies are implemented (Grundström et al., 2019; Rydenfelt, 2022).

Personalisation may help attain journalistic aims. While technologies of personalisation may counter journalistic aims, our analysis shows that personalisation is also expected to support their attainment by improving service and enabling the audience to find relevant content, in some cases adding depth by providing related content recommendations. Indeed, personalised recommendations can be designed to increase diversity, for example to stimulate exposure to information that people are likely to miss otherwise (Helberger et al., 2018). Our participants anticipated that such improvements would also help to increase audience commitment that, in turn, may contribute to subscriptions and financial gain.

Personalisation may enable the augmentation of little-noticed values in journalism. Like any modification of existing journalistic practices, personalisation of content may trigger a renewed awareness and consideration of the core values of journalism (Bastian et al., 2020; Haapanen, 2020). Researchers have long noted that journalists have considered the audience as a “public” that should be provided with content that is somehow ‘good’ for it, while the commercialisation of news media has led to the notion of the audience as consumers who are typically segmented based on demographic profiles (Ang, 1991; see Willig, 2010). Anderson (2011) has argued that, with the ability to track how individuals consume news online in real time, news media have become more attuned to the wants and interests of the audience, although information about those interests is filtered in an algorithmic fashion (see Hansen and Hartley, 2021). Our study shows that personalisation may add another element to this development. In our analysis, a journalistic value that has received little attention emerges: audience autonomy. The participants voiced respect for the audience’s freedom to choose from available content, and personalisation was seen as potentially providing a balance between the newsroom’s assessments of relevance and those of individual readers.

On the other hand, our analysis indicates that some ethical concerns connected with personalisation in previous research presently receive little attention in journalistic practice. Issues concerning editorial control and autonomy were discussed extensively by participants, and concerns over selective exposure were articulated in various ways, enabling us to distinguish those connected with the importance and the diversity of content as well as the informational common ground. The focus on these issues may be due to their salience with respect to established journalistic practices and norms, as well as their visibility in public discussions on related phenomena such as “filter bubbles”. However, concerns that are less directly connected with journalistic aims and traditional ethical questions in the field and introduced by the technologies required for the implementation of personalisation received far less attention, amounting to potential ethical blindspots in the journalistic adaptation of technologies of personalisation:

Personalisation may result in inequalities. The nature of journalistic content as well as the journalistic aim to provide informational common ground counteract the possibility that personalisation may result in forms of discrimination. Our analysis suggests that journalists are well aware of the potential threats of splitting audiences into various groups and of extending personalisation “inside” news items, adjusting the content for different readers. Yet, even with protections in place, personalisation – especially when equipped with data on subscriptions and engagement with advertising – may result in the emergence of implicit user profiles that determine worthiness for different types of content and also exploit vulnerabilities.

Personalisation enables the further exploitation of data. Issues related to privacy were barely addressed in our empirical data, possibly because the journalists interviewed only reflected on the ways personal data may be put to “journalistic” use, while the use of data for other purposes, such as advertising, is typically handled by separate departments within media businesses. A related reason for the absence of concern over exploitation of data might be a focus on explicit understandings of personalisation. While the participants recognised implicit forms of personalisation based on behavioural profiling with data collected about users, they nevertheless largely discussed forms of personalisation that involve explicit user choices. In this way, personalisation may be assumed to be consensual, unproblematic and harmless with respect to privacy risks. However, data originally collected for one purpose may be exploited for another, indicating a need for increased consideration of the long-term uses and potential misuses of data.

Conclusion

The ethical and societal concerns presented by algorithmic personalisation of content have attracted considerable discussion, most of which has focused on the use of these technologies in social media. Based on recent literature, we distinguished five areas of concern – autonomy, opacity, privacy, selective exposure and discrimination – and traced how they are addressed and navigated in the context of legacy media in Finland. While our analysis indicates that personalisation challenges traditional notions of journalism, such as choosing what is important and relevant and providing the same content to everyone, it also reveals that personalisation is not simply an “outside” technology being introduced to legacy media. Aspects of personalisation have a long history within journalistic practice. Our analysis also shows that journalists attempt to navigate and address ethical and societal concerns related to personalisation by highlighting the various ways in which these technologies are adapted to accord with journalistic norms as well as serve and underscore journalistic aims. However, our analysis also indicates ethical blindspots in algorithmic technologies of personalisation pertaining to concerns that are less directly connected with established journalistic practices, values and aims, in particular the possibility of (future) misuse of the data collected as well as potential discrimination and inequality in the distribution of journalistic content.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research has been supported by the Helsingin Sanomat Foundation. Lehtiniemi’s work has also been supported by the Academy of Finland project “Re-humanising Automated Decision-making” (grant 332993). Haapoja’s work has also been supported by the Kone Foundation projects “Algorithmic Systems, Power, and Interaction” and “Digital Ideologies: Interrogating the Politics of Information Systems.”