Abstract

This article investigates the persistence and transformation of Andrew Tate’s presence on YouTube following the removal of his official channels in August 2022. Combining two empirical approaches—a small-scale analysis of top-ranked videos from YouTube search results in 2022 and 2024, and a large-scale data set of over 112k videos—we examine how Tate-related content continues to circulate and how the platform moderates such material. Our findings show that Tate remains highly visible through a diffuse and decentralized network of actors who repackage his messaging into interviews, remixes, and YouTube-native formats. This configuration produces what we term the “Tate-space”: an ambient ideological environment where motivational rhetoric, aspirational masculinity, and far-right talking points converge. We find that YouTube’s substantial moderation efforts are outpaced by the speed and scale of recommendation-driven circulation and that deplatforming, while symbolically significant, fails to disrupt the cultural and logistical dynamics that sustain Tate’s influence.

Introduction

Online platforms facilitate interaction and inter-acknowledgment between users. They also function as broadcasters of political and ideological stances as well as sites where such stances are developed and debated. While social media platforms can serve social connectivity, participation, and marginalized voices, they can also embolden, mobilize, and finance disinformation flows and conspiracy theories (Shu et al., 2020), nationalism and populism (Marwick and Partin, 2022), and far-right extremism (Lewis, 2020). With this double-edged sword in mind, scholars have examined platform governance, that is, how norms and rules are or ought to be embedded and enforced within platforms (Gillespie, 2018; Gorwa, 2019; Van Dijck et al., 2019). Yet even if we were to agree on which norms and rules should apply, the question remains how governance mechanisms can be designed to promote them effectively, and whether existing mechanisms actually produce the outcomes they promise. To contribute empirically to these critical considerations, this article studies the efficacy of a specific content moderation technique, deplatforming (Rogers, 2020), by examining YouTube’s removal of Andrew Tate, one of its most notorious content creators.

YouTube is a globally dominant medium, operating at a scale which invites complex problems. Early commentators even described it as “the great radicalizer” (Tufekci, 2018) because of the way its infrastructure could surface ever more extreme material. Indeed, YouTube has been repeatedly shown to feature many extreme political, conspiracy-centric communities and influencers (Lewis, 2020; Ribeiro et al., 2021). More specifically, Lewis (2018) observed and described an Alternative Influence Network (AIN) on YouTube as a “fully functioning media system,” composed of far-right micro-celebrities who “claim to provide an alternative media source for viewers to obtain news and political commentary” (p. 4). While the platform has tried to curtail these movements through frictional fact-checking labels, demonetization, and deplatforming, several studies have shown that conspiracy and alt-right channels still find ways to monetize their content (Ballard et al., 2022; Hua et al., 2022) or simply migrate to more lenient platforms (Rogers, 2020; Urman and Katz, 2022).

The platform has also been shown to host “manosphere” subcultures that promote men’s rights while adopting adversarial positions toward feminist and LGBTQ+ communities (Ging, 2019; Papadamou et al., 2021). Moreover, Mamié et al. (2021) found that manosphere and alt-right conspiracy communities are interconnected through YouTube’s recommendation systems and exhibit substantial audience overlap. Andrew Tate, a British-American kickboxer, entrepreneur, and influencer described as “the manosphere’s most prominent content creator” (Roberts et al., 2025: 20), embodies this convergence. Particularly popular among teenage boys and embroiled in a series of legal controversies, Tate had his two YouTube channels removed in August 2022, following similar bans on other platforms (Spring, 2022).

This article examines how Andrew Tate has continued to maintain a presence on YouTube despite deplatforming, and how the platform has subsequently moderated content associated with him. We employed two empirical approaches: first, we collected smaller data sets of top-ranked Tate videos through YouTube’s search function in 2022 and 2024 to trace the evolution of surfaced content; second, we assembled a substantially larger data set of 112,466 videos to provide a broader overview. In both cases, we also assessed YouTube’s content removal efforts. Our findings show that Tate remains highly visible on the platform, now through a diffuse network of actors, formats, and thematic framings rather than through his own channels. This continued visibility facilitates the “ambient” spread of his violent ideologies and, crucially, appears to be helped by a recommendation system that outpaces YouTube’s comparatively slow moderation processes.

Literature review

Content moderation

Content moderation and deplatforming are key tools of platform governance, part of an arsenal of techniques for shaping user practices and interactions (Gillespie, 2018; Gorwa, 2019; Poell et al., 2022; Roberts, 2019). They require taking positions on specific types of content, continually negotiated with public expectations and other external pressures. Regulatory demands include universal prohibitions on content like child pornography (Karanicolas, 2020), but specific legislation also intervenes. The US Children’s Online Privacy Protection Act (COPPA), for example, prompted YouTube to launch a “made for kids” feature that sets separate rules for channels and videos targeting younger viewers. Conversely, laws like Section 230 of the US’ Communications Decency Act and the EU’s E-Commerce Directive immunize platforms from liability for both user-published content and content moderation (Grimmelmann, 2015). Furthermore, market forces, notably advertiser concerns following events like the 2017 “adpocalypse,” where several companies threatened to leave YouTube after their ads appeared on hateful videos, shape content policies and enforcement practices (Poell et al., 2022).

To navigate evolving external pressures and the inherent tension between protecting free speech and mitigating harm, platforms like YouTube continually revise their Community Guidelines and Terms of Service (ToS). This tension is frequently framed as a binary, exposing platforms to criticism for appearing to privilege one principle over the other. In response, Maddox and Malson (2020: 6) describe how platforms employ “vague imaginary lines” beyond which content becomes subject to moderation. YouTube regularly deploys this discursive strategy, affording itself considerable interpretive flexibility to adapt to emergent crises and shifting public expectations. The company states that its policies are designed to “maintain a responsible business that viewers, creators, and advertisers can rely on in order to continue to thrive” (YouTube, n.d.-d) and “keep YouTube fun and enjoyable for everyone” (YouTube, n.d.-e). The guidelines aim to “give everyone a voice and show them the world” (YouTube, n.d.-d) while acknowledging the existence of harmful behaviors like harassment and abuse. Hate speech, for example, is defined as “content that promotes violence or hatred against individuals or groups based on any of the following attributes,” including gender, ethnicity, and religion (YouTube, n.d.-b). While YouTube provides some definitions and examples, its guidelines remain vague about thresholds and consequences, stating only that in “some rare cases, we may remove content or issue other penalties.”

Like most platforms, YouTube combines machine learning and human review to flag, audit, and enforce content policies (YouTube, n.d.-c). Both methods raise concerns about transparency, bias, and the entanglement of values with corporate interests (Gillespie, 2018), issues that are especially troubling given that content moderation exerts speech power, amplifying some voices while silencing others. When content is found in violation of guidelines, YouTube employs “a combination of flexible and strict moderation techniques” (De Keulenaar et al., 2021: 120) to impose penalties. Violations result in video removal and “strikes,” with three strikes in 90 days (or a single, vaguely defined case of “severe abuse”) leading to deplatforming (YouTube, n.d.-a). In addition to removals, YouTube announced in 2019 that it would aim “to reduce the spread of content that comes right up to the line” by limiting “recommendations of borderline content and harmful misinformation,” reducing its reach “dramatically” (YouTube, 2019). Chen et al. (2023) suggest that the absence of “rabbit holes” in their representative behavioral and survey data may reflect these changes to recommendation and ranking. Taken together, these measures show that YouTube can modulate not only what is removed but also what is rendered visible, which further underscores the platform’s considerable discretion in managing content.

Deplatforming and replatforming

In this study, deplatforming refers to the complete removal of a person’s accounts from a platform. High-profile cases include the far-right YouTuber Alex Jones (Rauchfleisch and Kaiser, 2024) and US President Donald Trump, whose Twitter account was suspended in January 2021 and later reinstated after Elon Musk’s acquisition. As the most severe form of content moderation, deplatforming has garnered increased attention from researchers (Buntain et al., 2023; De Keulenaar et al., 2021; Innes and Innes, 2023; Pisharody et al., 2024; Rogers, 2020; Urman and Katz, 2022), yet its legitimacy and effectiveness as a public safeguard remain contested. Beyond its problematic effects on already marginalized populations (Alimardani and Elswah, 2021; Zolides, 2021), Rogers (2020) observes that deplatforming is often framed as politically motivated, with right-wing actors invoking “cancel culture” to claim victimhood. From a logistical standpoint, deplatforming also produces complex effects on user behavior and platform ecosystems. On Facebook, for example, Thomas and Wahedi (2023) observed a decline in hateful discourse after deplatforming opinion leaders. However, banned accounts often migrate to more permissive or niche platforms, sometimes bringing their most engaged audiences with them (Innes and Innes, 2023; Rauchfleisch and Kaiser, 2024; Urman and Katz, 2022). Although such shifts may reduce mainstream visibility, they can also reinforce group cohesion and intensify radicalization, as studies on Reddit and Twitter find that deplatformed communities often become more toxic after migrating (Ali et al., 2021). At the same time, as Pisharody et al. (2024) show for election-denial content on YouTube, accounts and material removed under stricter policies do not necessarily reappear even when rules are later relaxed.

The term replatforming can encompass a range of behaviors, including migration to alternative platforms. Here, however, we focus on efforts to reclaim the content and ideology of a deplatformed public figure on the same platform, though not necessarily by the figure themselves. As Innes and Innes (2023) argue, such efforts are often driven by follower admiration and may involve mimicking banned accounts through so-called “minion accounts” or editing and reposting existing material in other ways. They also note that deplatforming may trigger a “Streisand effect,” whereby attempts to suppress content inadvertently amplify attention to the banned persona. This dynamic can not only restore previously removed content but also extend the reach of new material that the deplatformed figure produces on other platforms.

Andrew Tate

To explore the broader impact of deplatforming and subsequent replatforming on YouTube, this article takes Andrew Tate, a prominent “manosphere” influencer, as a case study. Tate first entered UK popular culture via Big Brother in 2016 before being removed from the show. His online persona, which combines dominance-oriented self-help with overt misogyny, alongside sustained controversy and legal issues, drove a sharp rise in visibility: he became the third most-Googled person in the UK in 2023 and now reaches a large international audience via his once-banned, later reinstated X (Twitter) account with over 10 million followers. During the early phase of this study, brothers Andrew and Tristan Tate were arrested and jailed in Romania on human-trafficking charges; they have since been released but remain under criminal investigation in Romania, the United Kingdom, and the United States (Kwai, 2025).

Ging (2019) defines the manosphere as a “loose confederacy of interest groups” (p. 639), encompassing interconnected blogs, forums, and subcultures united by misogynistic, anti-feminist beliefs and narratives of male victimhood. Despite internal contradictions, figures like Tate provide “a simplistic but attractive framework for boys and men to make sense of a world where their entitlements have been eroded” (Roberts et al., 2025: 21). This includes crude evolutionary psychology revolving around “alpha” and “beta” masculinities where women are framed as irrational and hypergamous (Ging, 2019: 649), alongside “actionable” self-help advice on physical and mental discipline, relationships, wealth, and “hypermasculine” lifestyles. Haslop et al. (2024) highlight the aspirational nature of Tate’s brand: “Tate eulogizes men that fight, build, go to war; for Tate, action is getting up, working out, setting up a business” (p. 8).

This directly connects with Tate’s money-making ventures. In 2021, he founded Hustler’s University (HU), later rebranded as The Real World (TRW), an online platform teaching income generation through e-commerce, copywriting, drop-shipping, and cryptocurrency. A BuzzFeed investigation revealed that HU’s business advice is infused with Tate’s characteristic anti-LGBTQ and misogynistic rhetoric (Dahir, 2022). Between June and September 2022, HU’s subscriber base reportedly surged from 12,000 to 160,000, likely fueled by Tate’s heightened visibility following multiple deplatformings (Dahir, 2022). This income stream complemented the alleged “millions” in earnings (Scully, 2022) from a webcamming business he co-ran with his brother Tristan.

Following Instagram, Facebook, TikTok, and Twitter, YouTube terminated Tate’s two channels on August 22, 2022 (Spring, 2022). TateSpeech (768k subscribers) featured informal livestreams and monologues, while Tate Confidential (317k subscribers) was a more polished vlog format that later migrated to Rumble (Altaf, 2022). The company told the BBC the removals were due to multiple violations of Community Guidelines and ToS, including hate speech (Spring, 2022), and Bloomberg (D’Anastasio and Alba, 2022) reported that Tate had already received a strike in July 2022 for breaching Covid-19 misinformation policy. The specific videos that prompted these actions were not mentioned.

Methodology

This study investigates how Andrew Tate remains present on YouTube after his official accounts were removed. We were specifically interested in understanding what videos the platform promotes through its search function at different points in time, what content is produced and viewed on a larger scale, and how these items are subjected to moderation. To answer these questions, we collected two sets of data: a pair of search-based “visibility” snapshots indicating what YouTube surfaces to users at specific dates, and a large-scale video harvest estimating the universe of production over a year. Using both allows us to compare what is shown with what is made, and to examine removal patterns across scales.

Visibility approach

First, following a well-established tradition in digital methods research (Rogers, 2019), we queried for [Andrew Tate] to both inspect the company’s operational understanding of relevant content and approximate the ranked list of videos a user would have received at that time. Using the YouTube Data Tools (Rieder, 2015) with the default “relevance” setting, we collected 397 results on 1 December 2022 and 425 on 29 April 2024 in order to compare a snapshot taken shortly after deplatforming with a more recent one. To look more specifically for minion accounts, we also performed a channel search on the same dates, using the names of Tate’s two deleted channels as queries, retrieving 50 results each.

We analyzed top-ranked, most-viewed, and a random sample of 30 videos from both video search data sets to understand what YouTube deems not only appropriate but desirable first-page content, what creators were publishing, and what viewers were actually watching. The analysis involved predefined categories such as monetization, position, theme, and format, as well as an open coding scheme (Corbin and Strauss, 1990) to identify key points of discourse which were then consolidated and refined. We then grouped videos into three main categories that structure the bulk of our findings section.

Large-scale approach

Second, we aimed to collect as many videos as possible matching the query [Andrew Tate] within a defined timeframe. Using the YouTube Data Tools’ “daily search” feature, we queried for videos published after 15 October 2023 at weekly intervals between 23 April and 25 November 2024, to cover a period of at least 1 full year of video production, while keeping API quota costs in check. Similar to the approach proposed by Violot et al. (2024), this feature conducts a separate search for each day within the selected timeframe, thereby circumventing YouTube’s API limit of 500 videos per query. Each day in the period was thus queried individually several times, resulting in a data set of 121,921 videos. Since the API often includes clearly off-topic results (Rieder et al., 2025), we used relevant keywords (Appendix 1)—which we refer to as “Tatewords” throughout—to narrow our data set to 112,466 videos that directly correspond to Andrew Tate and the themes he is associated with. 1
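The daily-search strategy and the keyword filter can be illustrated with a minimal Python sketch. It assumes that each per-day query is expressed through the YouTube Data API’s `publishedAfter` and `publishedBefore` parameters for `search.list` (RFC 3339 timestamps); it makes no API calls and does not reproduce the YouTube Data Tools’ code. The helpers `day_windows`, `merge_runs`, and `matches_tatewords` and the keyword tuple are hypothetical placeholders, not the Tatewords listed in Appendix 1.

```python
import re
from datetime import date, datetime, time, timedelta, timezone

# Illustrative keywords only (hypothetical); the study's Tatewords are in Appendix 1.
HYPOTHETICAL_TATEWORDS = ("andrew tate", "tate", "cobra", "matrix", "masculine", "motivation")


def day_windows(start: date, end: date):
    """Yield one (published_after, published_before) pair of RFC 3339 strings
    per day, so that each day can be searched separately (both ends inclusive)."""
    day = start
    while day <= end:
        begin = datetime.combine(day, time.min, tzinfo=timezone.utc)
        finish = begin + timedelta(days=1)
        yield (
            begin.isoformat().replace("+00:00", "Z"),
            finish.isoformat().replace("+00:00", "Z"),
        )
        day += timedelta(days=1)


def merge_runs(runs):
    """Merge repeated weekly harvests into one record per video id.
    Later runs overwrite earlier ones, keeping the most recent metadata."""
    merged = {}
    for run in runs:
        for video in run:
            merged[video["id"]] = video
    return merged


# Whole-word, case-insensitive match on any keyword.
_PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(k) for k in HYPOTHETICAL_TATEWORDS) + r")\b",
    re.IGNORECASE,
)


def matches_tatewords(text: str) -> bool:
    """True if any keyword occurs as a whole word (so 'estate' does not match 'tate')."""
    return _PATTERN.search(text) is not None
```

For the last weekly run (25 November 2024), `day_windows(date(2023, 10, 15), date(2024, 11, 25))` yields 408 daily queries. Word-boundary matching is one way to keep a short keyword such as “tate” from matching “estate”; how the study handled such cases is not stated here.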

In addition to quantitative analysis, we employed topic modeling on video titles to obtain an overview of the subject matter. We chose not to include video descriptions and tags due to their often irrelevant or “spammy” content. Following the recommendation of Egger and Yu (2022), we employed the transformer-based BERTopic (Grootendorst, 2022), which is modular and well-suited for exploratory data analysis. We used all-mpnet-base-v2, the highest-ranked Sentence Transformer model 2 at the time of writing, to embed titles and HDBSCAN for clustering. In contrast to traditional topic models such as LDA, which require users to specify the number of topics in advance, HDBSCAN controls granularity through a min_cluster_size parameter. After several rounds of experimentation and validation, we settled on a value of 350, which yielded 60 topics and a good compromise between granularity and aggregation. Since BERTopic is better at detecting small, fine-grained clusters than broad categories, we inductively grouped outputs into 10 higher-level themes to gain a general picture (Appendix 2).
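The final grouping step can be sketched in a few lines of Python. The sketch assumes that per-title topic ids have already been produced, for example by BERTopic’s `fit_transform`, which returns one topic id per document; the theme labels and the topic-to-theme mapping below are hypothetical and only show how fine-grained topics roll up into broader themes.

```python
from collections import Counter

# Hypothetical topic-to-theme mapping for illustration; the study's actual
# assignment of 60 topics to 10 themes is documented in Appendix 2.
HYPOTHETICAL_THEME_OF_TOPIC = {
    0: "legal troubles",
    1: "motivation",
    2: "motivation",
    3: "wealth",
}


def theme_counts(topic_assignments, theme_of_topic):
    """Aggregate per-title topic ids into higher-level theme counts.

    BERTopic labels titles that HDBSCAN leaves unclustered as topic -1;
    they are counted separately as 'outliers'. Topic ids missing from the
    mapping are counted as 'unassigned' so that nothing is silently dropped.
    """
    counts = Counter()
    for topic in topic_assignments:
        if topic == -1:
            counts["outliers"] += 1
        else:
            counts[theme_of_topic.get(topic, "unassigned")] += 1
    return counts
```

For instance, `theme_counts([0, 1, 2, -1, 3, 7], HYPOTHETICAL_THEME_OF_TOPIC)` counts two “motivation” titles and one title each for “legal troubles,” “wealth,” “outliers,” and “unassigned.” Keeping outliers and unmapped topics visible makes it easier to check how much of the corpus the higher-level themes actually cover.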

Content removal

To examine YouTube’s moderation practices, we rechecked all videos and channels in our data sets in December 2024. Since YouTube’s API does not specify why videos are unavailable, we wrote a custom scraper to collect this data from the web interface. Among nearly 36k inaccessible videos, 70.1% showed “video unavailable,” followed by “removed for violating YouTube’s Terms of Service” (22.1%) and “private video” (7.7%). These reasons are ambiguous. For instance, none of the 93 videos—all YouTube Shorts—by WealthTeachers, the most-viewed channel in our large-scale data set, were available by late 2024, yet only seven were flagged for violating ToS. We might assume the channel owner removed all Tate-related content after receiving strikes, but we cannot always be sure whether a video being unavailable is due to direct intervention by YouTube or other reasons. In addition, notifications varied across devices and locales, sometimes containing spelling errors, suggesting they are an inconsistently prepared afterthought. We therefore excluded these notifications from most analyses. However, because the query [Andrew Tate] shows much higher rates of missing/unavailable videos than less controversial queries (Rieder et al., 2025), we interpret this pattern as pointing to heightened YouTube moderation activity.
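Because the notices are free text, turning them into category shares requires some normalization. The following minimal Python sketch assumes that the scraper returns the notice as displayed in an English-language interface; the category labels and matching substrings are hypothetical, would need adjusting for other locales and for the misspellings mentioned above, and do not reproduce the study’s scraper.

```python
from collections import Counter

# Hypothetical substrings matched against scraped notice text (English locale).
# Order matters: a ToS notice may also contain the generic "Video unavailable"
# heading, so the more specific patterns are checked first.
NOTICE_PATTERNS = (
    ("tos_violation", "violating youtube's terms of service"),
    ("private", "private video"),
    ("unavailable", "video unavailable"),
)


def classify_notice(text: str) -> str:
    """Map a scraped availability notice to a coarse category."""
    # Normalize case and typographic apostrophes before matching.
    normalized = text.casefold().replace("\u2019", "'")
    for label, needle in NOTICE_PATTERNS:
        if needle in normalized:
            return label
    return "other"


def notice_shares(notices):
    """Percentage of notices per category, rounded to one decimal place."""
    counts = Counter(classify_notice(n) for n in notices)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: round(100 * n / total, 1) for label, n in counts.items()}
```

Checking the most specific pattern first means that a notice combining a generic heading with a ToS explanation is counted as a ToS removal rather than as merely “unavailable.”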

Limitations

Content removals significantly challenged our research, as we could not access all videos for qualitative analysis. We thus sometimes relied on titles, tags, video descriptions, and channel names to make assessments. For missing channels in our two smaller data sets, we reconstructed relevant information such as subscriber counts through SocialBlade, 3 a social media analytics company specializing in YouTube. Since we were not able to test the availability of videos and channels at regular intervals, we are not able to pinpoint the exact dates when content was removed. Such challenges are inherent to researching deplatformed content, however, and can only be mitigated through exceedingly complex and time-consuming research setups.

YouTube’s API also presents challenges for reliable data collection. First, while the API provides a “baseline” (Rieder et al., 2018), individual users may receive different search results based on their location or viewing history. Second, search results can change significantly over time. We thus configured our smaller video searches to retrieve up to 500 videos, broadening the scope beyond the usual top 10 or 20 results. Finally, the API’s search endpoint favors recent content, with results dropping off heavily for videos older than 20 days (Rieder et al., 2025). To compensate, we conducted weekly searches for our large-scale data set instead of relying on a single search.

Findings

Visibility approach

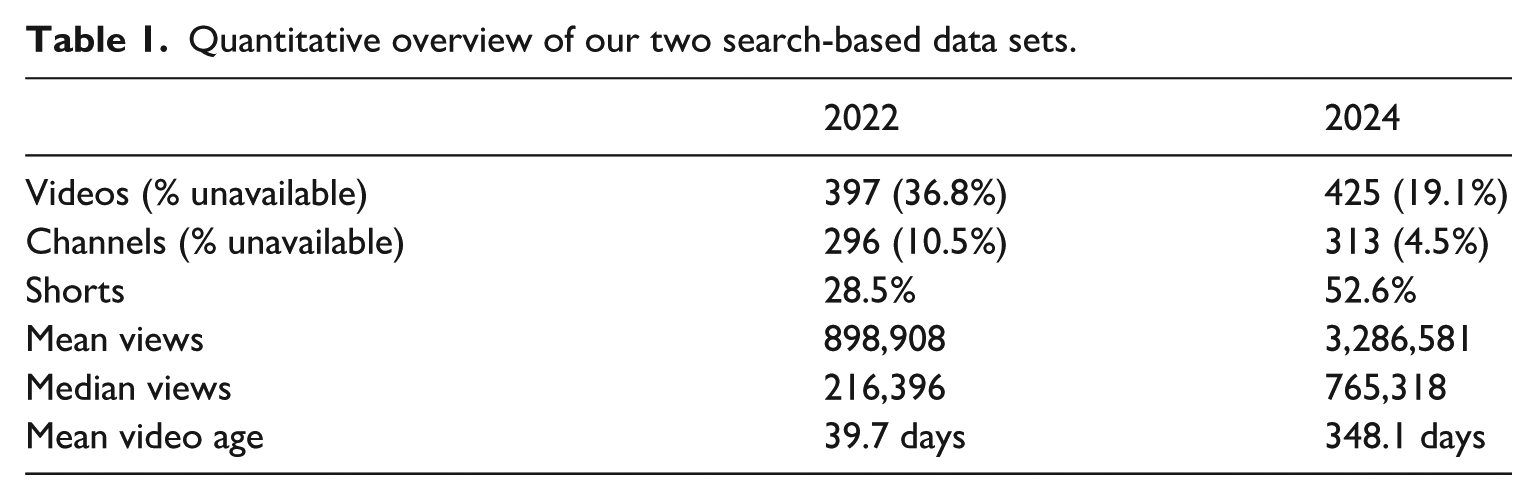

Although the 2022 and 2024 search-based data sets have similar video and channel counts, the 2024 sample shows mean and median views three to four times higher (Table 1). Part of this gap likely reflects recency bias: the 2022 snapshot covers only the prior 4 months of uploads, whereas the 2024 snapshot includes older content with more time to accumulate views. At the same time, the scale of the difference is consistent with increased visibility of Tate-related content. The concentration of younger videos in the 2022 sample may reflect a spike in attention (Rieder et al., 2018), supporting Dahir’s (2022) claim that media coverage of Tate’s deplatforming may have boosted his visibility.

Table 1. Quantitative overview of our two search-based data sets.

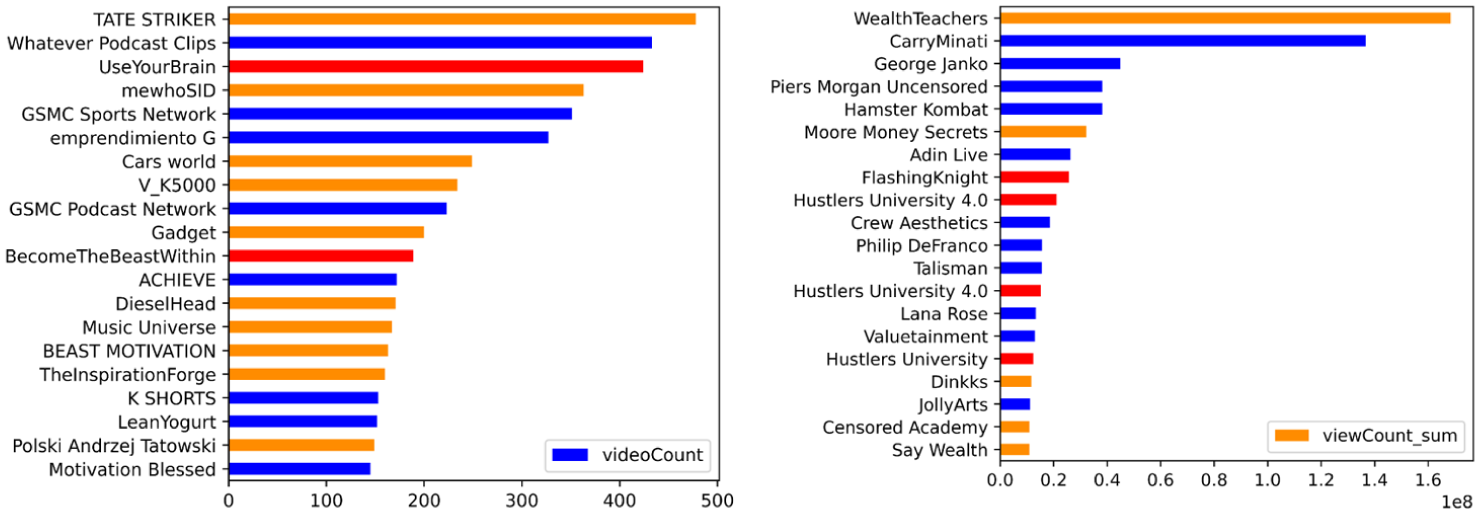

The 2022 data set had nearly twice as many videos missing when we checked in December 2024, although these videos also had more time to be flagged and removed. However, since over 7 months passed between data collection and verification for both data sets, this difference in exposure time is unlikely to be decisive, given that the median removal time on YouTube has been reported to be around 100 days (Papaevangelou and Votta, 2024). Overall, the 2024 sample appears more aligned with YouTube’s guidelines. Unlike in 2022, where top actors were heavily moderated, most high-visibility channels remained intact in 2024 (Figure 1), suggesting a systematic shift. This may reflect improved moderation practices, greater compliance by creators, or more algorithmic exposure for older videos that have proven resilient over time.

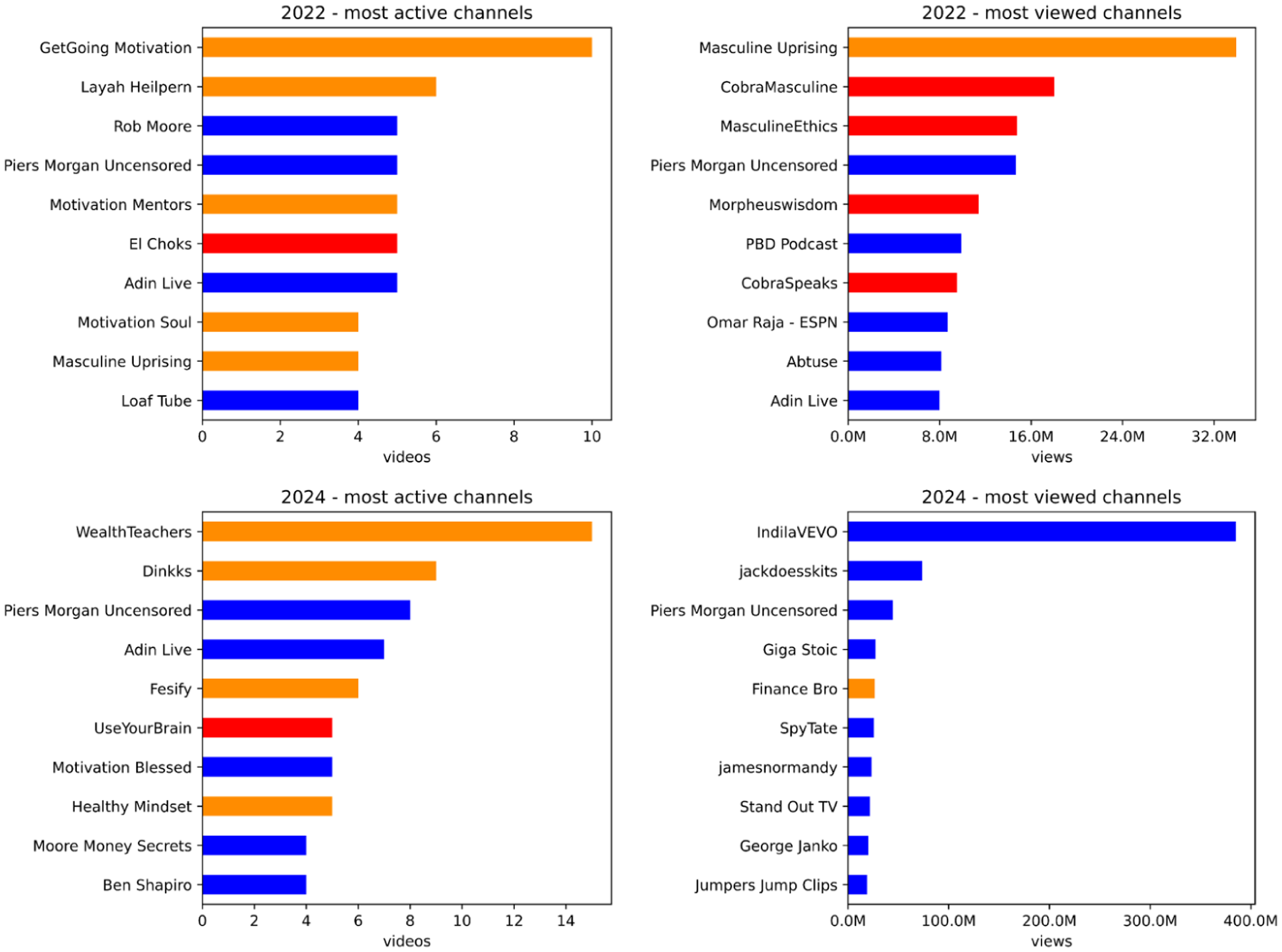

Figure 1. The most active and most successful channels in our search-based data sets; channels in orange had at least one video unavailable and channels in red were removed.

To qualitatively explore Andrew Tate’s presence on YouTube, we examined the content across both data sets and identified three broad categories that constitute the core of what we term the Tate-space: long-form interviews; ripped, reposted, and remixed content; and traditional YouTube-native formats such as reactions, sketches, or gaming videos. These categories are closely linked to recurring channel types and thematic patterns. The following sections discuss each one in turn.

Interviewed

Already in 2022, long-form interviews featured prominently, with five of the top 15 ranked videos falling into this category. These include Tate’s hour-long interview with Piers Morgan and a nearly 5-hour conversation with Patrick Bet-David, which grew from 10 to over 15 million views by 2024. Even the few unsympathetic interviews offer Tate ample space to elaborate on conspiracy theories, misogynistic views, and self-help narratives around finance and masculinity. In the 2023 Bet-David interview, for example, he condenses this into three premises—financial freedom, sexual access, and a strong male network—framed as tools to “resist the Matrix,” which he then sells through online courses. These themes tend to recur together, forming what we call the “Tate-package”: a tightly interwoven set of talking points that address young men’s anxieties about their social and economic status (Haslop et al., 2024). Although framed as news-style interviews, these videos are often categorized as “entertainment,” and their crass and transgressive content lends itself to easy extraction and reposting.

Interview channels were not the most viewed overall, but Piers Morgan Uncensored and PBD Podcast ranked just behind now-deleted Tate-specific channels (Figure 1). Unlike smaller channels, they were rarely demonetized or moderated, suggesting that channel size provides a form of legitimacy under YouTube’s moderation framework.

In 2024, interviews became even more dominant: 10 of the top 15 ranked videos were long-form conversations, often hosted by high-subscriber channels where Tate’s views would theoretically be contextualized and buffered by an independent interviewer. However, most hosts show clear sympathy toward Tate, helping amplify his ideas through a genre typically associated with credibility. These interviews are also highly lucrative. For example, Tate’s interviews are the most viewed videos on George Janko’s and Michael Franzese’s channels, and Piers Morgan Uncensored counts four Tate interviews among its top 10 videos, making it the third most watched channel in the 2024 sample (Figure 1), surpassed only by Indila (who performs Tate’s unofficial “theme song”) and jackdoesskits, a successful sketch channel.

Ripped, remixed, reposted

A second, more complex content category centers on videos using “secondhand” footage of Tate, typically sourced from interviews and appearances on other platforms. These videos, often accompanied by manosphere-coded Tatewords like “masculine,” “cobra,” “matrix,” or “motivation” (Figure 1), break down Tate’s ideology into soundbites, especially as YouTube Shorts, which made up 28.5% of content in 2022 and 52.6% in 2024.

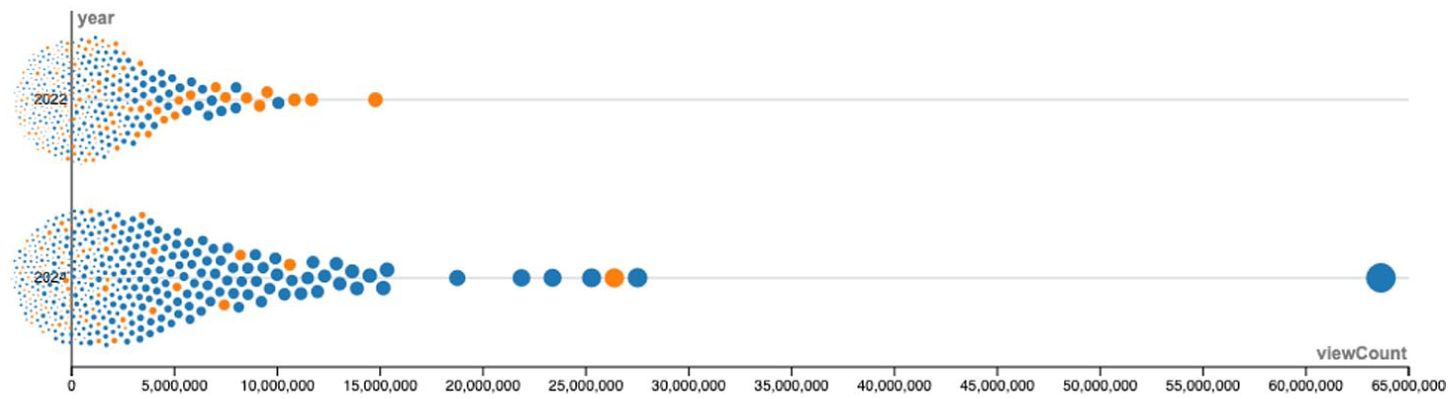

Unlike interview channels, these creators had fewer subscribers and were more heavily moderated. For instance, Masculine Uprising, one of the most visible channels in 2022, had all videos set to private by 2024. Other successful channels—CobraMasculine, MasculineEthics, Morpheuswisdom, and CobraSpeaks—were removed entirely (Figure 1). Despite attracting views in the (tens of) millions, none of these channels surpassed 250k subscribers, more than an order of magnitude fewer than the interview channels or mainstream creators such as jackdoesskits. This pattern suggests that their reach stems less from a stable subscriber base than from external linking (Chen et al., 2023) or, more likely, YouTube’s ranking and recommendation systems and affordances such as the Shorts shelf, which promote this highly affective and divisive content long before its eventual removal. Indeed, 9 of the 15 most viewed videos in 2022 were inaccessible by 2024 (Figure 2).

Figure 2. Distribution of removed (orange) and unremoved (blue) videos by view count. Indila’s “Tourner Dans Le Vide” (400M+ views) was excluded from the lower graph for readability.

Many of the channels in this category are clearly minion accounts dedicated to reposting Tate clips, often using short, punchy extracts. They are heavily moderated despite using tactics like name/image changes and deleting individual videos, engaging in what Gillespie (2018: 176) calls an “endless game of whack-a-mole.” To assess these actors more specifically, we conducted two channel searches in December 2022 using the names of Tate’s banned channels. We indeed found numerous channels that attempted to openly replatform Tate’s content, often using additions like “reuploads,” “backup,” or “deleted clips.” The most successful, TATE CONFIDENTIAL PLUS, grew to 40k subscribers before removal in early 2023 for ToS violations. But the average was only 251 subscribers (median: 2), and by 2024, all had been removed or had negligible visibility. A repeated search in 2024 found fewer channels using Tate’s name directly, none with substantial reach. Our video data sets thus confirm that “mindless” reposting is increasingly well-moderated. In 2022, channels using Tatewords had higher cumulative views than others (3,049,161 vs 1,209,717), but by 2024 this reversed (3,457,837 vs 4,445,311), with lower average rankings, indicating that more mainstream content now dominates.

Two caveats remain. First, the sheer volume of low-effort videos and YouTube’s slow moderation (Papaevangelou and Votta, 2024) allowed many to accumulate large view counts before deletion. Second, and more significantly, we identified a growing number of channels in 2024 that resemble minion accounts but show a more developed editorial and aesthetic “voice” and an increased ability to “toe the line.” These creators still rely on Tate’s speech and image but engage in semiotic play through TikTok-style “edits,” music overlays, or compilations. For example, the Short “Why Tate Hates His Sister,” the fifth most viewed video in 2024 (25M+ views), uses a 30-second interview clip with Tate’s brother Tristan but adds TikTok-style subtitles, emotional music, and photos of their “black lives matter, super left, liberal” family member softly dissolving and zooming in and out. Much of this content moves away from overt politics, however, instead leveraging Tate’s perceived “authenticity” and “realism” (Haslop et al., 2024) in clips focused on motivation and wealth. While the presentation is less crude and more aligned with YouTube’s content policies, these videos maintain an ambient ideological tone infused with misogyny and far-right talking points. Unlike less sophisticated minion accounts, these channels have cultivated their own subcultural vernacular and aesthetic, facilitated by editing tools like CapCut; for example, they have adopted Indila’s “Tourner Dans Le Vide” as Tate’s unofficial theme, frequently slowed down or reverberated to produce an affective, immersive soundscape.

While this broader, stylized remixing largely supports Tate, it also signals a diffusion of control. The aesthetic and editorial appropriations mark a shift from Tate as a dominant subject to a malleable object or symbol, absorbed into youth culture as a pop figure that channels repurpose for their own ideological and entrepreneurial ends.

YouTubeified

This shift continues in our third category, where Tate no longer appears as the primary speaker but as a figure talked about. These videos are much more prevalent in 2024 than in 2022. For example, the only video clearly critical of Tate in the 15 most highly ranked results in our 2024 data set is a news-style commentary by Ben Shapiro, a popular right-wing political pundit, titled “Andrew Tate: The Great Hoax.” But many of the videos in this category take a more neutral stance, reporting on topics like Tate’s endorsement of Trump or his legal troubles in Romania. Here, Tate is a “person of interest,” justifying the extensive coverage.

More significantly, we see a rise in content from “regular” YouTubers–creators not embedded in the manosphere–who sporadically engage with Tate through YouTube-native formats without necessarily endorsing his ideology. These actors often trivialize or parody Tate, repurposing his persona for entertainment rather than critique. Tate is “memefied” or “YouTubeified” in comedy sketches, gaming videos, and inside jokes, typically without using “live” footage. Here, Tate transcends the news cycle and enters the symbolic.

A good example is jackdoesskits (Figure 1): his Short “Mother calls Andrew Tate on child pt.4” is the second most viewed video in our 2024 data set, with over 63M views. In the sketch, he plays a vaping teenager, the mother, and Tate, riffing on Tate’s “breathe air” catchphrase. Originally, Tate used “breathe air” both to mock “dumbass” vape users and to urge followers to “wake up” from the Matrix, again mixing mundane self-improvement with hostile language and conspiratorial framing. To jackdoesskits, however, Tate becomes a memefied symbol rather than a subject of debate, a figure of play embedded in mainstream youth entertainment. This trend suggests a growing normalization of Tate within YouTube’s cultural mainstream. While such memeification softens his most extreme views, it also domesticates and popularizes his persona, enabling ideological proximity without direct endorsement. And for those curious about Tate beyond the meme, more radical material remains only a search away.

Large-scale approach

In this section, we quantify the sheer scale of Tate-related content on YouTube through a large-scale data set (n = 112,466) that covers video production between Fall 2023 and Fall 2024. Shorts play an even larger role here: 78,867 videos (70.1%) are 60 seconds or shorter. Since these clips belong mostly to our second category, featuring Tate speaking with some level of enhancement such as subtitles or music, they are exceedingly easy to produce. Although they have a lower average view count (11,009 vs 23,450) and a significantly lower average comment count (8.6 vs 96.7) than longer videos, their sheer volume means they still account for over half (868.5M) of total views. Some of the channels producing these Shorts appear to be clear minion accounts, for example, two highly successful but eventually deleted variants of HU (Figure 2). Many others, however, exhibit a higher degree of editorial appropriation that goes beyond straightforward reposting. Notably, we found few attempts to directly upload Tate’s shows from Rumble, suggesting that replatforming in this context is a more complex and mediated phenomenon.
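The Shorts figures above can be cross-checked with simple arithmetic. The sketch below uses only the counts and averages reported in this paragraph. Because those averages are rounded, the reconstructed Shorts total (about 868.2M) differs slightly from the reported 868.5M.

```python
# Figures reported for the large-scale data set (Fall 2023 to Fall 2024).
TOTAL_VIDEOS = 112_466
SHORTS = 78_867            # videos of 60 seconds or less
AVG_VIEWS_SHORT = 11_009   # rounded average view count, Shorts
AVG_VIEWS_LONG = 23_450    # rounded average view count, longer videos

long_videos = TOTAL_VIDEOS - SHORTS

# Approximate view totals, reconstructed from counts times rounded averages.
short_views = SHORTS * AVG_VIEWS_SHORT
long_views = long_videos * AVG_VIEWS_LONG

shorts_share_of_videos = SHORTS / TOTAL_VIDEOS
shorts_share_of_views = short_views / (short_views + long_views)

print(f"Shorts: {shorts_share_of_videos:.1%} of videos, "
      f"{shorts_share_of_views:.1%} of views "
      f"(~{short_views / 1e6:.1f}M of ~{(short_views + long_views) / 1e6:.1f}M)")
# prints: Shorts: 70.1% of videos, 52.4% of views (~868.2M of ~1656.1M)
```

Even with rounded inputs, Shorts come out at roughly 52% of views. This is consistent with the statement that they account for over half of the total.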

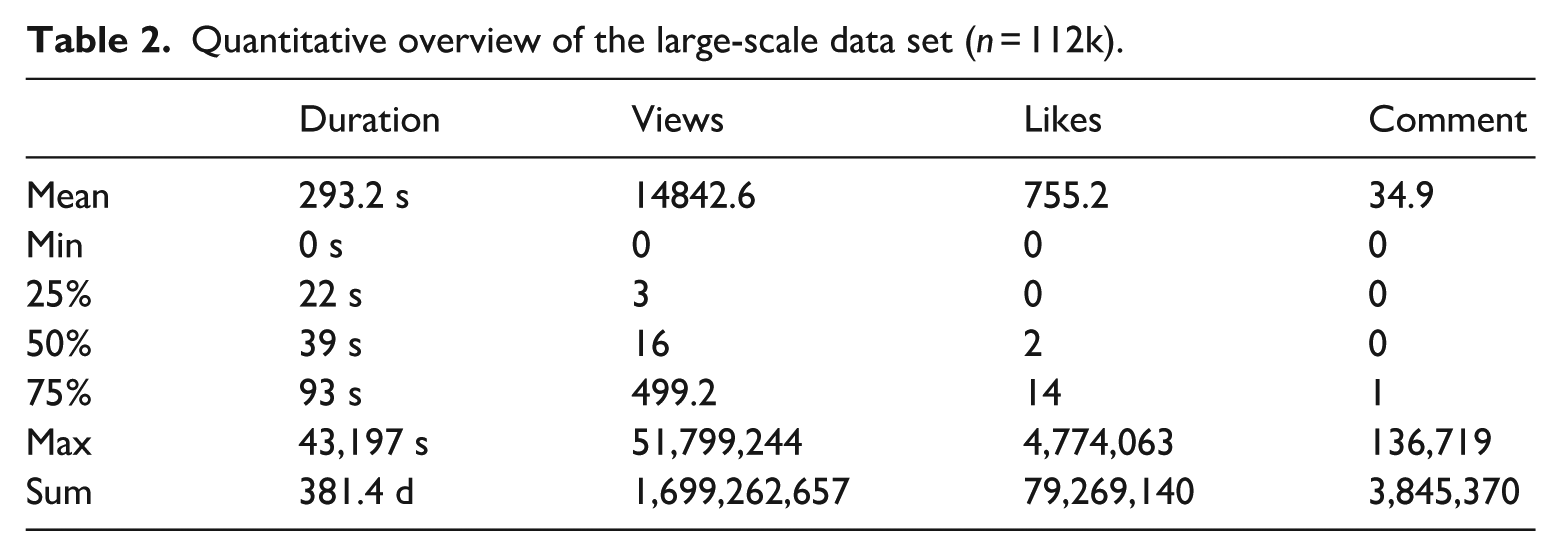

Table 2 shows that visibility is distributed very unequally: three out of four Tate-related videos received fewer than 500 views. A small number of channels nonetheless achieve great success within the Tate-space. A case in point is the channel WealthTeachers, which ranked 11th in terms of views in our 2024 search data set but moved to the top spot in the larger sample (Figure 3). Its 93 Shorts received almost 10% of total views, even though the channel has only about 320k subscribers. We again encounter the contradiction noted above. This level of success can hardly be explained without a strong push from YouTube’s recommendation systems,⁴ yet all but one of the channel’s clips had been removed when we checked in late 2024, seven of them with a ToS violation notice. This is part of a broader trend. In total, 35,714 videos (31.8%) were no longer available 3 months after we ended our data collection, yet these missing videos had only a slightly lower average view count than the remaining ones (12,372 vs 15,991). They were also older, again suggesting that deletion is not immediate. Recommendation and moderation systems seem to work against each other, and the latter is simply too slow to keep up with YouTube’s own “freshness” mandate (Covington et al., 2016).
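The deletion figures rest on comparing the collected sample with a later availability check. Below is a minimal sketch of that comparison. It assumes each video record carries an ID, a view count at collection time, and an upload date, and that a set of IDs still retrievable at the re-check is available. The `Video` class, the `deletion_summary` function, and the toy data are hypothetical. How availability was actually checked is not specified here.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass(frozen=True)
class Video:
    """Hypothetical record for one collected video."""
    video_id: str
    views: int       # view count at collection time
    uploaded: date   # upload date


def _mean_or_none(values):
    values = list(values)
    return mean(values) if values else None


def deletion_summary(collected: list[Video], available_ids: set[str], check_date: date) -> dict:
    """Compare a collected sample against the IDs still retrievable at a later check."""
    missing = [v for v in collected if v.video_id not in available_ids]
    present = [v for v in collected if v.video_id in available_ids]
    return {
        "missing_share": len(missing) / len(collected),
        "mean_views_missing": _mean_or_none(v.views for v in missing),
        "mean_views_present": _mean_or_none(v.views for v in present),
        # A higher mean age for missing videos is consistent with removal lagging upload.
        "mean_age_days_missing": _mean_or_none((check_date - v.uploaded).days for v in missing),
        "mean_age_days_present": _mean_or_none((check_date - v.uploaded).days for v in present),
    }


# Toy sample (not real data): one of four videos has disappeared by the re-check.
sample = [
    Video("a", 12_000, date(2023, 11, 1)),
    Video("b", 300, date(2024, 9, 1)),
    Video("c", 18_000, date(2024, 6, 1)),
    Video("d", 450, date(2024, 10, 1)),
]
summary = deletion_summary(sample, available_ids={"b", "c", "d"}, check_date=date(2025, 1, 15))
print(summary)

# The share reported in the text, recomputed from its own counts (about 0.318).
reported_share = 35_714 / 112_466
```

Comparing means, as the text does, is sensitive to a few viral videos. Given the skew visible in Table 2, medians would be a useful complement.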

Quantitative overview of the large-scale data set (n = 112k).

Most prolific and most successful videos in our large data set (orange: at least one video deleted, red: channel deleted).

Although the bulk of Tate-related videos is indeed made up of ripped, reposted, and remixed content, YouTube-native formats continue to play an important role, especially when looking at view counts. Three videos by Indian comedian CarryMinati that feature skits and roasts of Andrew Tate top our list, followed by news channel Hamster Combat. These findings further confirm that Tate has become part of a larger cultural vernacular, playing roles that range from ideologue to celebrity. While critical voices like Coffeezilla also feature in the top 20 most viewed videos, we again notice the strong presence of long-form interviews, in particular Piers Morgan Uncensored (four videos) and George Janko (two videos). These provide ample room for Tate to lay out his ideology, giving him full access to large audiences without the need for his own channel.

Thematic analysis

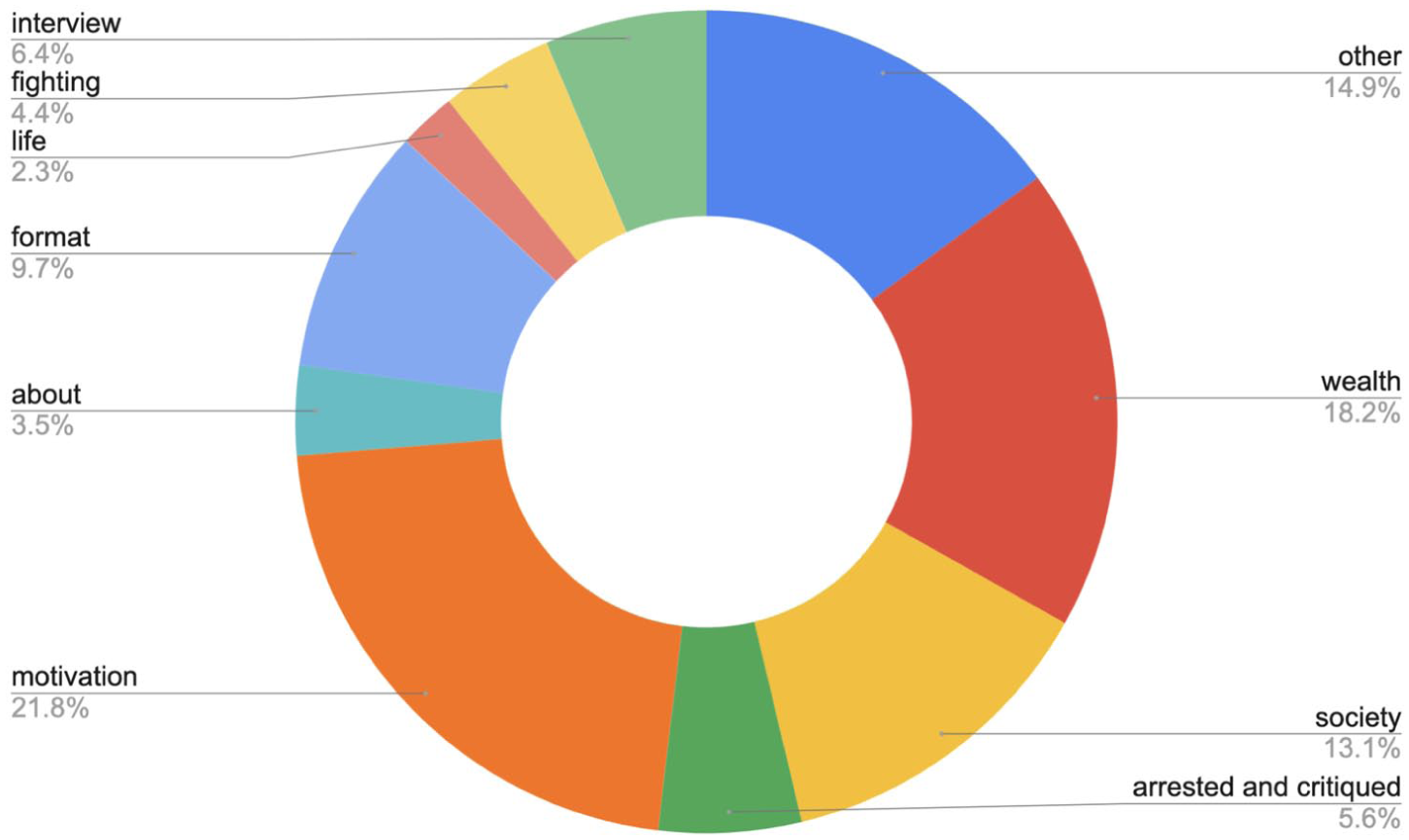

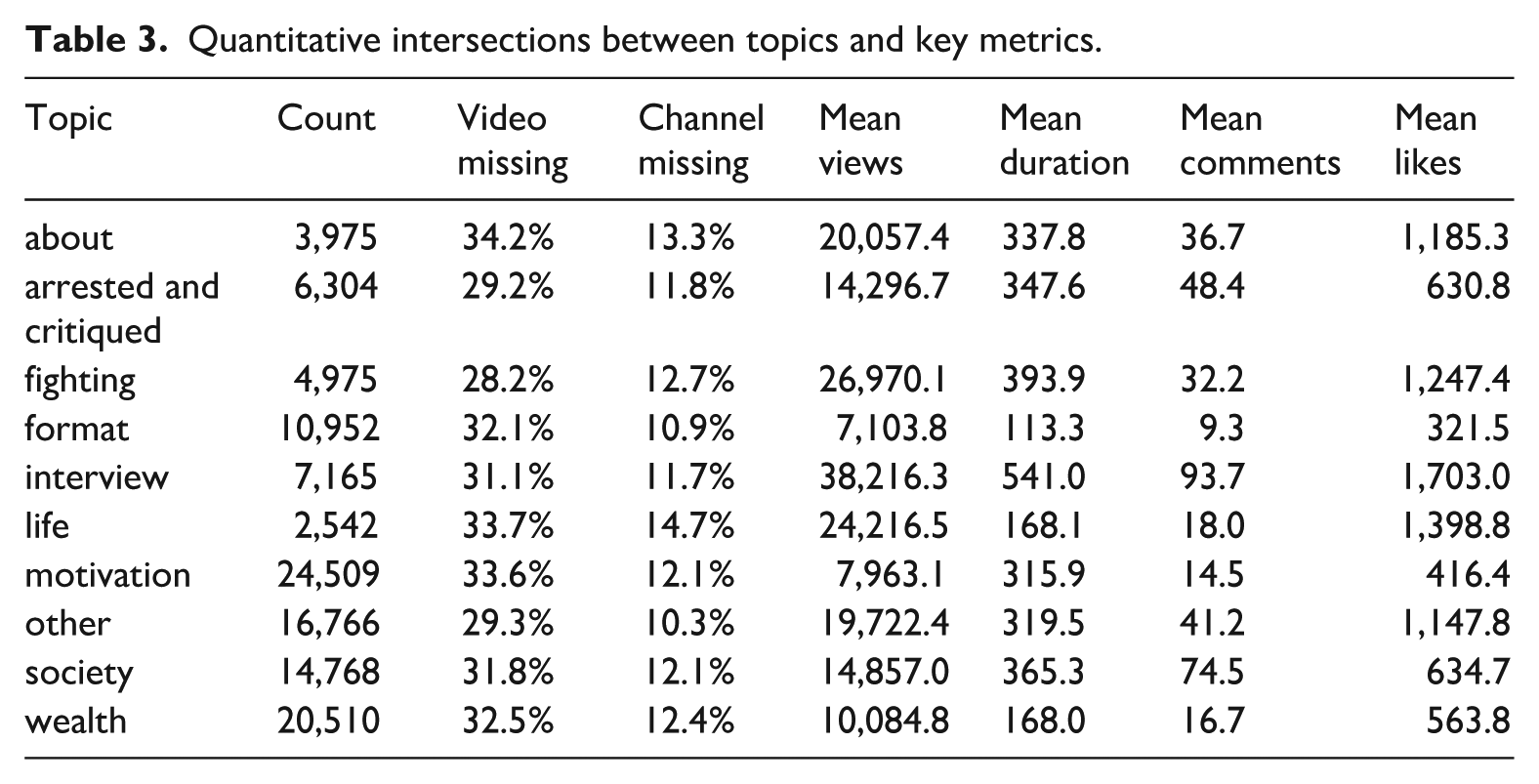

A thematic analysis, relying on the LLM-powered BERTopic package (Grootendorst, 2022), allows us to further nuance and quantify these findings. Figure 4 provides an overview of the ten main topic categories we inductively developed (Appendix 2), and Table 3 intersects these categories with key metrics. The resulting clusters held up well under manual inspection. Even so, topic assignment is probabilistic, and category boundaries are blurred by the fluid connection between self-improvement, wealth acquisition, and politics that characterizes the Tate-space.

Overview of BERTopic themes, manually classified into 10 categories.

Quantitative intersections between topics and key metrics.

Motivation, wealth, fighting, and society (e.g. gender relations, religion, and Palestine) together form the center of the Tate-package, representing over half of all videos. Titles in the formats category foreground format rather than theme, highlighting that the videos are Shorts, compilations, or edits. These are the shortest videos, and they also score lowest in terms of views, comments, and likes (Table 3). Interviews sit at the opposite end of the spectrum: they are much longer than average, and their counts of views, comments, and likes are significantly higher. The categories “about,” “life,” and “arrested and critiqued” contain videos that report on Tate and the spectacle he constructs around himself.
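Intersections like those in Table 3 amount to grouping videos by their assigned category and averaging each metric. The sketch below assumes each video already carries exactly one category label. The field names, category labels, and numbers are illustrative placeholders, not values from the data set.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-video records: category label plus key metrics.
videos = [
    {"category": "formats", "duration_s": 25, "views": 900, "comments": 2, "likes": 40},
    {"category": "formats", "duration_s": 40, "views": 1_500, "comments": 4, "likes": 70},
    {"category": "interviews", "duration_s": 3_600, "views": 250_000, "comments": 1_200, "likes": 9_000},
    {"category": "motivation", "duration_s": 58, "views": 14_000, "comments": 10, "likes": 600},
]

METRICS = ("duration_s", "views", "comments", "likes")


def metrics_by_category(records):
    """Return each category's share of videos and its mean value per metric."""
    groups = defaultdict(list)
    for record in records:
        groups[record["category"]].append(record)
    table = {}
    for category, rows in groups.items():
        table[category] = {"share": len(rows) / len(records)}
        for metric in METRICS:
            table[category][metric] = mean(row[metric] for row in rows)
    return table


for category, row in sorted(metrics_by_category(videos).items()):
    print(category, row)
```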

Overall, the thematic analysis shows that the aspirational dimension of Tate’s message, highlighted by Haslop et al. (2024), is strongly reflected in content production, most prominently within the motivation and wealth categories. While Tate is known in mainstream contexts mainly for his misogynistic and conspiratorial views, his YouTube presence aligns more closely with his self-styled persona as an iconoclastic motivator, teacher, and commentator.

Discussion and conclusion

Stepping back from the details of our data sets, we identify three main clusters of findings regarding Andrew Tate’s presence following the deplatforming of his two channels in 2022.

First, Tate appears to be more visible on the platform than ever. Comparing the two search-based data sets, we observed a substantial increase in views, and the large-scale data set reveals an overwhelming volume of videos produced in just one year, from October 2023 to October 2024. Unlike Lewis’ (2018) Alternative Influence Network, which was relatively small with 65 political influencers and characterized by personal connections and collaborations, we identified a complex and somewhat amorphous “content delivery system” consisting of tens of thousands of channels that show little evidence of coordination, despite revolving around a single central figure. This system is fueled by a smaller number of actors injecting primary content featuring Tate, taken from his own channels on other platforms and long-form interviews by influencers, podcasters, and journalists. Interviews, in particular, help extend Tate’s reach to audiences far more diverse than those on platforms like Rumble or Telegram. These primary materials are then picked up by an army of anonymous channels that mine them for soundbites and repackage them into Shorts or compilation videos. While many of these may be classified as minion accounts engaging in low-effort reposting, the most successful actors add a distinct editorial and aesthetic voice. Rather than simply mimicking the original content, they engage in interpretive reworking–akin to the “repetition-with-variation” that Hagen and Venturini (2024) describe as “memecry”–spinning the initial message in different directions and thereby extending its practical and cultural reach. At the same time, our close analysis of a limited set of channels suggests these actors, unlike Tate himself, are not necessarily “ideological entrepreneurs” (North, 1991) generating new worldviews. Instead, they appear more as content or topic entrepreneurs opportunistically investing in an ideological space from which they can profit.

Second, and directly connected to the last point, we noticed that the “hard” ideological core of Tate’s worldview is not the central component of his presence on YouTube. While Tate’s opinions on women and his conspiratorial worldview often shine through, especially when challenged by interviewers, the majority of the first-person content we analyzed revolves around topics like motivation, wealth, and fitness. It thereby connects with the aspirational masculine subjectivities in a time of precarity and social crisis identified by Haslop et al. (2024). In fact, what characterizes the Tate-package, as we have called it, is the circular relationship between these topics and Tate’s more extreme ideological positions, a relationship that need not exist at the level of individual videos. Rather, the abundance and variety of circulating videos produces an ambient ideological effect. Advice on money or fitness, humorous sketches, and more overtly misogynistic or conspiratorial statements circulate side by side and, taken together, establish a background atmosphere in which his harder positions become thinkable, even when they are not explicitly stated. On the one hand, the polysemic character of the Tate-package allows extreme views to weave in and out of otherwise benign, ironic, or motivational content, and clips can be taken up both by sincere audiences looking for motivation and by creators who are merely chasing views. On the other hand, platform-level context collapse (Davis and Jurgenson, 2014) and YouTube-native remix practices detach Tate signifiers from their original setting, so that “breathe air” or “the Matrix” travel into other “platform vernaculars” (Gibbs et al., 2015) and even relatively mainstream spaces.
What results is less a coherent pipeline than an atmospheric presence of Tate-ish ideas: users encounter fragments repeatedly, across channels and genres, which normalizes the persona and his worldview without requiring explicit endorsement. This is why moderation targeted at individual uploads can miss the broader phenomenon: the ideological payload is distributed, recombined, and economically incentivized by creators whose primary motive is not necessarily alignment with Tate. In that sense, ambient ideology describes a political effect of circulation itself, not just the presence of “problematic” content.

We can speculate that ranking, recommendation, and related affordances help to sustain this circulation, but even without considering direct navigational pathways, Tate’s uptake as both celebrity and meme has folded him into YouTube’s web of subcultures, including those that are satirical or critical. He is now fully anchored in the platform’s shared imaginaries, and the sheer volume of first-person or Tate-branded clips ensures that any residual curiosity can be satisfied instantly. In this sense, the ambient quality of the Tate-package is simultaneously a product of YouTube’s systems and vernaculars, Tate’s platform-ready persona, and a “perceived [. . .] crisis of masculinity” (Roberts et al., 2025), making moderation beyond deplatforming largely unrealistic.

Third, YouTube’s role in this configuration is both ambiguous and seemingly contradictory. On the one hand, the platform faces a structural dilemma: it cannot simply erase all traces of Andrew Tate, even if it wanted to. Interview formats and other forms of ostensibly “legitimate” journalistic reporting create protected spaces for Tate’s ideas, where censorship would be difficult to justify without triggering broader concerns around press freedom and platform overreach. Likewise, removing Shorts of Tate discussing mundane topics like morning pushups would be hard to defend on content-policy grounds. On the other hand, where content is evidently deemed problematic enough to warrant removal, which applies to roughly one third of the videos in our large-scale data set, YouTube acts too slowly to prevent mass exposure and, paradoxically, seems to amplify visibility through ranking and recommendation. While we lack definitive evidence, algorithmic amplification remains the most plausible explanation for how low-subscriber channels routinely attract millions of views. This dynamic is particularly pronounced in Shorts, where rapid attention cycles render current moderation practices largely ineffective. Recommendation driven by a “freshness” mandate (Covington et al., 2016) undermines a moderation apparatus that either cannot keep pace with the scale and distributed nature of content flows or functions primarily as a fig leaf, signaling action without meaningfully curbing exposure. In other words, while YouTube can easily deplatform a problematic actor’s account, it struggles to moderate a vernacular that has diffused into the platform’s symbolic and cultural architecture.

Overall, our study adds to the growing body of work highlighting the complexities and ambiguities of deplatforming. While we cannot definitively confirm, absent a counterfactual, that removing Tate’s accounts has strengthened his presence on YouTube, as Dahir (2022) speculates, it is clear that he continues to thrive in the current configuration. The replatforming we observe is not simply a re-uploading of deleted content, but something far more dynamic and potentially more valuable to him, even without direct monetization. The interpretive work performed by interviewers, remixers, and memefiers constitutes an almost evolutionary process in which Tate’s message is continually tested, refined, and adapted to fit an ever-expanding range of contexts. His reach now extends well beyond the manosphere into a broader cultural vernacular, an outcome he could not have achieved alone. It is the distributed labor and creativity of thousands of actors that sustain and expand the Tate-space, facilitating the ambient spread of the Tate-package. Paradoxically, deplatforming may have created the vacuum that these actors are now working to fill. This does not imply that YouTube was wrong to remove his accounts, nor that these findings easily generalize to other cases. But it does suggest that modulating the logistical pathways of content circulation, while important, is insufficient to disrupt the deeper cultural dynamics at play. Even if YouTube were more effective, such interventions alone are unlikely to meaningfully counter the social and economic anxieties that Tate exploits so effectively. Deplatforming can blunt the sharpest edges of harmful content, but addressing the root causes will require far more than deleting videos.

Footnotes

Appendix 1

To filter irrelevant videos from our large-scale sample, we iteratively developed a list of “Tatewords”–keywords delineating Andrew Tate’s issue space–during the qualitative content analysis phase of the project. The final list included: tate, confidential, cobra, matrix, hustle, top-g, masculin*, mentality, wisdom, warrior, stoic, *naire, insight, wealth, alpha, sigma, empire, mind-set, boss, king, and speech.
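The appendix does not state exactly how the list was applied. One plausible reading of the wildcard notation, sketched below as an assumption, is whole-token, case-insensitive matching on video titles, with `*` standing for any run of characters (so `*naire` catches “millionaire” and “billionaire”). The function names `tokenize`, `matched_tatewords`, and `is_tate_related` are hypothetical.

```python
from __future__ import annotations

import re
from fnmatch import fnmatchcase

# Keyword list from Appendix 1; reading "*" as a wildcard is an assumption.
TATEWORDS = [
    "tate", "confidential", "cobra", "matrix", "hustle", "top-g", "masculin*",
    "mentality", "wisdom", "warrior", "stoic", "*naire", "insight", "wealth",
    "alpha", "sigma", "empire", "mind-set", "boss", "king", "speech",
]

# Lowercase word tokens; internal hyphens are kept so "top-g" survives intact.
TOKEN_RE = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*")


def tokenize(title: str) -> list[str]:
    return TOKEN_RE.findall(title.lower())


def matched_tatewords(title: str) -> set[str]:
    """Return the keyword patterns that match at least one whole token of the title."""
    tokens = tokenize(title)
    return {kw for kw in TATEWORDS if any(fnmatchcase(tok, kw) for tok in tokens)}


def is_tate_related(title: str) -> bool:
    return bool(matched_tatewords(title))


print(sorted(matched_tatewords("Andrew Tate: How to think like a BILLIONAIRE #shorts")))
# prints: ['*naire', 'tate']
```

Whole-token matching avoids false positives such as “estate” for `tate` or “thinking” for `king`, but it misses hashtag compounds like “#andrewtate”. A substring variant would make the opposite trade-off.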

Appendix 2

To analyze the 112k video titles in our large-scale data set, we employed the LLM-based BERTopic package (Grootendorst, 2022). BERTopic is particularly effective at identifying smaller, tightly connected clusters within large text corpora, yet tends to perform less intelligibly when the number of topics is reduced. We therefore retained a relatively high number of detected topics (60) and used inductive coding to merge them into broader, semantically coherent categories. As there is no “correct” number of topics, but rather a researcher-guided calibration of granularity, we regard this approach as situated between quantitative and qualitative analysis, with subject-matter expertise playing a crucial role. This further underscores the importance of the qualitative dimension of our study. The full list of initial topics and derived categories, including key terms and sample video titles, is available here: https://docs.google.com/spreadsheets/d/1ZXyxgbPTOuRTcYINAy9nezAxhK61TgkEixRdABMa_yk/edit
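The merging step described above can be sketched as a lookup from fine-grained topic IDs to manually coded categories. In the `bertopic` package, `BERTopic().fit_transform(docs)` returns one topic ID per document, with `-1` marking outliers that fit no cluster under the default clustering. The sketch starts from such IDs. The mapping shown is a hypothetical fragment, not the coding scheme in the linked spreadsheet.

```python
from collections import Counter

# Hypothetical fragment of a manual coding scheme: BERTopic topic ID -> category.
TOPIC_TO_CATEGORY = {
    0: "motivation",
    1: "wealth",
    2: "formats",
    3: "interviews",
    4: "motivation",   # several fine-grained topics can merge into one category
    5: "fighting",
}


def merge_topics(topic_ids, mapping):
    """Map per-document topic IDs to broader categories.

    -1 is BERTopic's outlier label; IDs missing from the mapping are labeled
    "unassigned" so gaps in the coding scheme stay visible.
    """
    categories = []
    for topic_id in topic_ids:
        if topic_id == -1:
            categories.append("outlier")
        else:
            categories.append(mapping.get(topic_id, "unassigned"))
    return categories


# Example: topic IDs as they might come back from fit_transform on video titles.
topic_ids = [0, 4, 2, -1, 3, 1, 0, 57]
counts = Counter(merge_topics(topic_ids, TOPIC_TO_CATEGORY))
print(counts.most_common())
```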

As with traditional topic-modeling methods such as LDA or NMF, the term “topic” is somewhat misleading here, as topics represent clusters of statistically associated words (or, in this case, embeddings) rather than coherent semantic themes. This explains why some of our “topics,” such as format and interview, do not correspond to units of subject matter. A title like “Andrew Tate #andrewtate #viral #shorts” does not permit reliable inference about the video’s actual content without additional data collection, which is not feasible at the scale of our study. While this may appear to be a limitation, it in fact highlights an important aspect of YouTube’s “platform vernaculars” (Gibbs et al., 2015): the tendency among many creators to emphasize the formal rather than the substantive dimensions of their content.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work has been supported by the Platform Digitale Infrastructuur Social Science and Humanities (PDI-SSH) through the CAT4SMR grant.