Abstract

This article analyses the ‘free speech’ online forum Flashback, which adheres to a strict non-interference policy when it comes to user-generated content, but beyond this also forbids users from deleting their own content or accounts. Through a qualitative content analysis, this article sought to understand the relationship between the platform and its users with respect to this unconventional approach to moderation and content removal. This article discusses both the position(s) taken by Flashback as it pertains to its policy of minimal moderation, and the expectations expressed by users navigating Flashback’s rules and their practical implementations. The article shows a discrepancy between how Flashback (incoherently) justifies minimal moderation and how users had imagined the platform operating. The article also discusses how Flashback maintains these policies through its community’s active encouragement via supportive posting and silencing of non-conformers, and the consequences that Flashback’s inaction has in terms of residual hate.

Introduction

After a number of high-profile incidents in recent years, the salience of hateful, harmful and misleading content on social media has increasingly become the focus of public scrutiny. Following massive pressure to address public concerns for transparency and accountability, social media companies have begun tightening their content moderation policies in attempts to mitigate the spread of such content on their sites, but also (mainly) the negative publicity it brings (Roberts, 2018; Shen and Rose, 2019). Consequently, any public recognition of gaps in moderation practices will tend to prompt outward action and promises of improvement (Gerrard, 2018; Gillespie, 2020). On the opposite side of the spectrum, however, are digital platforms that pride themselves on acting as counterweights to the supposed over-moderation and user censorship of conventional social media platforms. These employ minimal levels of moderation under the pretence of freedom of speech, which has often worked to disguise hateful—racist, sexist and otherwise discriminatory or illegal—content (Kilgo et al., 2018; Lima et al., 2018; Rogers, 2020) and sometimes even to legitimise violence (Munn, 2021).

Research into content moderation has thus far focused primarily on the largest US social media platforms and, therein, on their outspoken work to improve their moderation policies (Gillespie, 2020; Gillespie et al., 2020; Shen and Rose, 2019). Seldom, on the other hand, has research explored the conscious omission of moderation in digital ‘free speech’ settings. To address these gaps, this article analyses practices of minimal moderation in the large Swedish online discussion forum Flashback. Flashback is notorious in Sweden for its absolutist stance on facilitating freedom of speech in its user discussions, and not unrelatedly, for the extensive presence of hateful content on the platform (Åkerlund, 2021a, 2021b; Fernquist et al., 2019). Flashback not only has a rigorous policy of not interfering with users’ rights to freedom of speech but goes as far as forbidding users from deleting their own accounts, posts or threads, under any circumstances.

This article aims to explore the relationship between the platform and its users with respect to this unconventional approach to moderation and content removal. Through a qualitative content analysis, it investigates how minimal moderation policies are deliberately upheld, justified and understood by users in free speech settings, and by extension how such inaction contributes to the proliferation of hate. It does so by analysing how Flashback presents itself in text on the platform, and through content posted by users and platform representatives, exploring both the justifications on behalf of the Flashback forum as it pertains to its minimal moderation policy, as well as the expectations and experiences of platform users in their responses to these policies and their practical implementations. The article sheds light on the very particular case of minimal moderation and deletion on Flashback, but it also provides insight into deliberate platform inaction as an understudied, yet important, social perspective on content moderation.

Content moderation, platforms and users

For the purpose of this article, content moderation is narrowly defined as ‘the detection of, assessment of, and interventions taken on content or behaviour deemed unacceptable by platforms . . .’ (Gillespie et al., 2020: 2). However, it should be noted that content moderation also concerns issues such as the conditions under which moderation is carried out by the labour of professionals—so-called ‘commercial content moderation’ (Roberts, 2019)—or volunteer human moderators (Matias, 2019); the use of algorithmic or machine learning methods for moderation (Gillespie et al., 2020; Gonçalves et al., 2021; Myers West, 2018); user interventions such as flagging (Crawford and Gillespie, 2016) and counter speech (Ziegele et al., 2020); and the legislative and institutional contexts in which these activities take place (Gillespie, 2018; Gillespie et al., 2020).

Even though content moderation is at the centre of all platforms’ activities, many tend to downplay their authority when it comes to user-generated content (Gillespie, 2010, 2018). Despite their best efforts to appear as neutral conduits of user-generated content, (the people in charge of) digital platforms are ultimately responsible for shaping how users can act and interact, and how content can be created and spread (Klinger and Svensson, 2015; Matamoros-Fernández, 2017). More than this, platforms subjectively decide on what is allowed and what is not and have executive power over the implementation of these rules (Gillespie, 2015).

Relatedly, in the United States, platforms have especially great power over how moderation should take shape, as legislation relieves them of responsibility for user-generated content while simultaneously allowing them to police it without gaining the status of publishers (Crawford and Gillespie, 2016; Gillespie, 2018). European countries generally adhere (at least on paper) to stricter platform regulation, and the European Union is now introducing new legislation—the Digital Services Act (DSA)—to keep up with the rapid development of digital services and address gaps in current regulation. Among the main goals of the new EU Regulation is to reduce illegal content online, including, among other things, unlawful hate speech, discrimination and child sexual abuse materials (European Commission, 2022).

The proliferation of hate

While digital settings provide spaces for marginalised ideas and movements, previous research has also identified the many ways platform politics can enable hateful content and users. For instance, the recommendation systems and content feeds on social media sites like YouTube, Facebook and Reddit have been shown to support and even emphasise racist and sexist content (Gaudette et al., 2021; Massanari, 2017). The accessibility and ease of use of many ‘reaction’, ‘sharing’ and content organising features have likewise been shown to enable proliferation of polarising and degrading content without much reflection or effort (Munn, 2020). Research has also shown how users’ ability to ‘subscribe’ to or ‘follow’ certain users and channels is yet another way in which circulation and amplification of hateful content can take form. Notably, the emergence of far-right influencers would not have been possible without the constant exposure and status afforded by the ‘subscription’ or ‘follower’ logic (e.g. Van der Vegt et al., 2021). Finally, the enablement of hateful content has often also been connected to users’ possibilities for anonymity online. Concretely, anonymity has proven important for assisting socially stigmatised user practices, like those of the far right, and has enabled users to act more radically than they might if they had been held personally accountable (Crosset et al., 2019; Urman and Katz, 2022; see also Suler, 2004).

Notably, many so-called ‘alternative’ social media platforms like Gab, Telegram and Parler, and online forums like Reddit, have incorporated possibilities for user anonymity while also capitalising on the perceived censorship of mainstream digital platforms. In doing so, previous research has shown, these sites have positioned themselves as non-restrictive, free and fair alternatives for elsewhere unwelcome users (Jasser et al., 2023; Lima et al., 2018; Rogers, 2020). In some of these ‘free speech’ settings, unrestricted opportunities for user expression have been established explicitly by platforms (Kilgo et al., 2018; Topinka, 2018) but even in settings where some rules and policies apply publicly, loopholes have been shown to weaken their impact (Gaudette et al., 2021). Ultimately, lenient moderation policies and enforcements have been identified as contributing factors in permitting an overall hateful platform culture (Massanari, 2017).

However, the unrestricted conditions for ‘free speech’ in many of these settings have become increasingly constrained. Even sites like Reddit, which previously moderated minimally, have acknowledged a need to toughen their moderation practices (Kilgo et al., 2018). And recently, Parler, known for its role in mobilising rioters in the 2021 storming of the US Capitol (Munn, 2021), has had to conform to stricter moderation policies to be allowed on the App Store (Young, 2022). Consequently, Flashback remains one of the few sizable platforms that have not yet yielded to pressures for increased moderation and compromises to their free speech policies.

Extant literature provides important insights into the ecosystem of free speech and fringe platforms (Baele et al., 2020; Dehghan and Nagappa, 2022; Rogers, 2020), having acknowledged, for instance, the centrality of free-speech arguments and the negative consequences that lack of moderation can have in these settings and beyond (Jasser et al., 2023; Lima et al., 2018; Munn, 2021; Topinka, 2018). Still, little is known about how minimal moderation policies are deliberately upheld, justified and understood by users in free speech settings.

An interplay between platform politics and imagined affordances

This article draws on the notion of ‘platform politics’ (Massanari, 2017) to understand how platform functions, rules and their implementations when it comes to moderation set certain norms and provide opportunities and restraints for user action. This does not mean, however, that users have no agency. On the contrary, users’ interactions with, as well as understandings and expectations of, platforms contribute to shaping them (Bucher and Helmond, 2018). However, it is not so much the actual technological functions as they are implemented in code that matter to the imaginaries of everyday users. Instead, drawing on the concept of ‘imagined affordances’ (Nagy and Neff, 2015), this article recognises that users’ perceptions of platforms’ technological functions and policies—whether deliberately orchestrated or not—matter in how users engage with them. These imaginaries also impact users’ understandings of what actions are available to them in the given digital setting.

While balancing their efforts at self-centred gatekeeping, platforms must simultaneously provide a setting in which their userbase will want to stay, post and interact with others. In a ‘free speech’ type setting, this naturally means forming policies to promote and protect freedom of expression (Myers West, 2018). Moderators and administrators function as representatives of these policies and extensions of the platforms themselves. Moderators are expected to adequately discipline offending users, without alienating others (Squirrell, 2019). When they fail at this difficult task, or when users perceive that there is not enough information or explanation as to why, how and when content is moderated, they instead theorise and imagine what these reasons could be (Myers West, 2018). These perceptions then come to impact users’ understandings of moderation more generally and consequently also their behaviours on the given platform (Jhaver et al., 2019). Since users interpret platforms based on their own subjective experiences, their ideas about different aspects of platform politics might or might not align with how they are intended to be perceived from the perspective of the platform. Consequently, user reactions following platforms’ reinforcements of policy could be unexpected (Squirrell, 2019).

By combining these perspectives on platforms and users, this article goes beyond a technology-centric notion of content moderation to acknowledge the interconnectedness of platform policies, language use and user practices. By analysing forum rules, the extension and implementation of these policies through administrator and moderator postings, and user interactions in discussion threads, this article provides insights into the reasoning for inaction by the platform itself, as well as the inquiries and motivations from users as to why they want their contents and accounts removed.

Case and method

The Flashback forum

Flashback was launched in 2000 as part of a broader initiative comprising bootlegged music, skinhead magazines, provocative newsletters and mailing lists, VPN and web hosting services, and an Internet service provider for those who shared ‘Flashback’s basic views on freedom of opinion and expression’ (Flashback, 2022b).

Not unlike 4chan (cf. Phillips, 2015), Flashback grew from hosting a narrow interest group in the early 2000s, to later accommodating a wide array of users and topics. Flashback is now considered one of the larger discussion forums in the world (Törnberg and Törnberg, 2016). According to the website header, around 1.5 million members have posted more than seventy-seven million posts in the forum. It has been among Sweden’s most visited websites over the last 20 years (Internetmuseum, 2021), and is estimated to be used by nearly one-third of Swedes (The Swedish Internet Foundation, 2019).

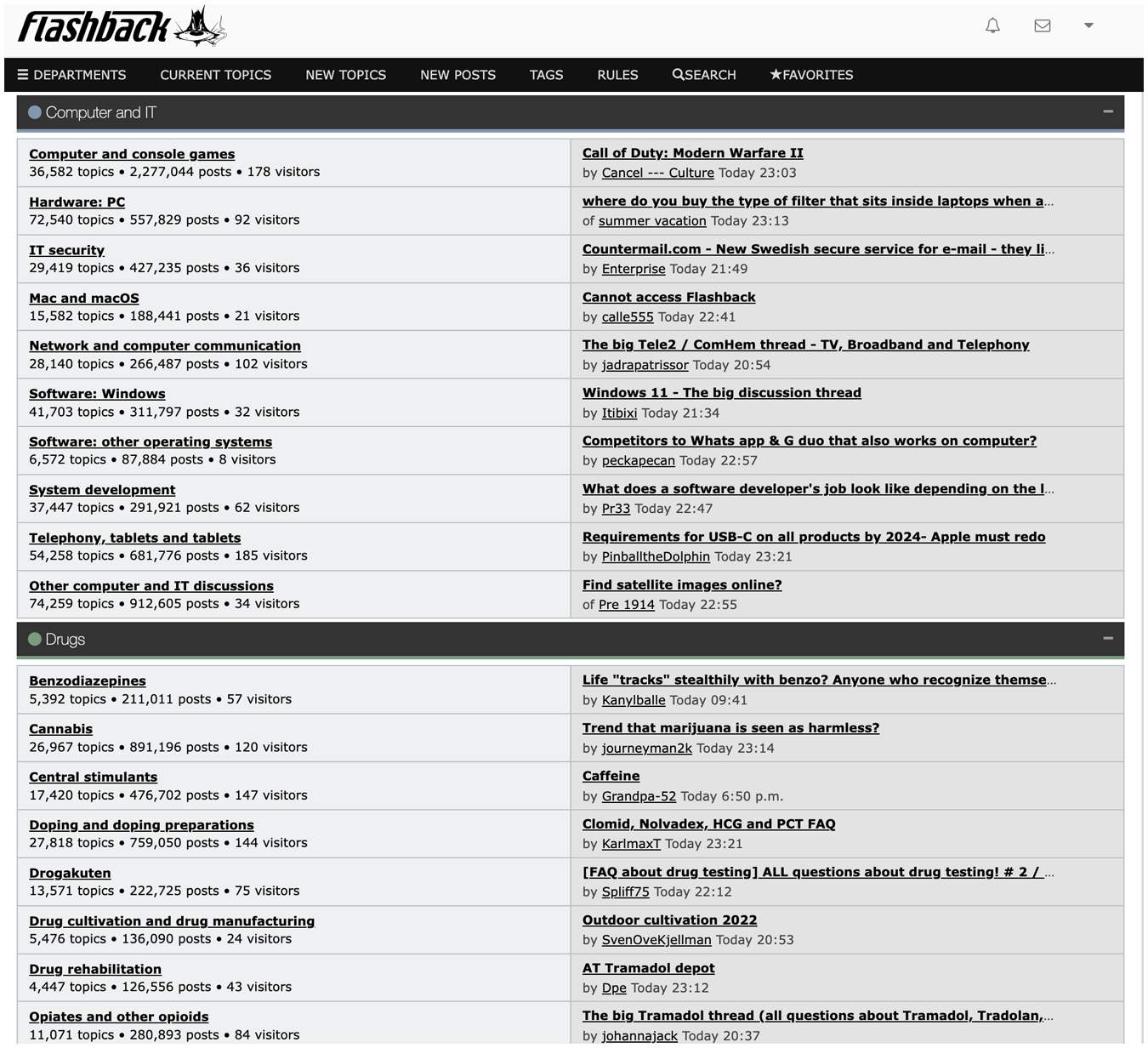

Flashback is financed through donations and ad revenue. Technologically, Flashback has a layout like an early Bulletin Board System, similar to those of 4chan and Reddit, but it only accommodates text-based posts (see Figure 1). The forum consists of 16 forum categories, each with varying numbers of topical subcategories. These, in turn, are made up of user-generated threads, which hold user-generated posts and quotes. All moderation in the forum is carried out by 101 volunteer user moderators, who are assigned and governed by Flashback’s six administrators.

Figure 1. Auto-translated version of the Flashback forum starting page.

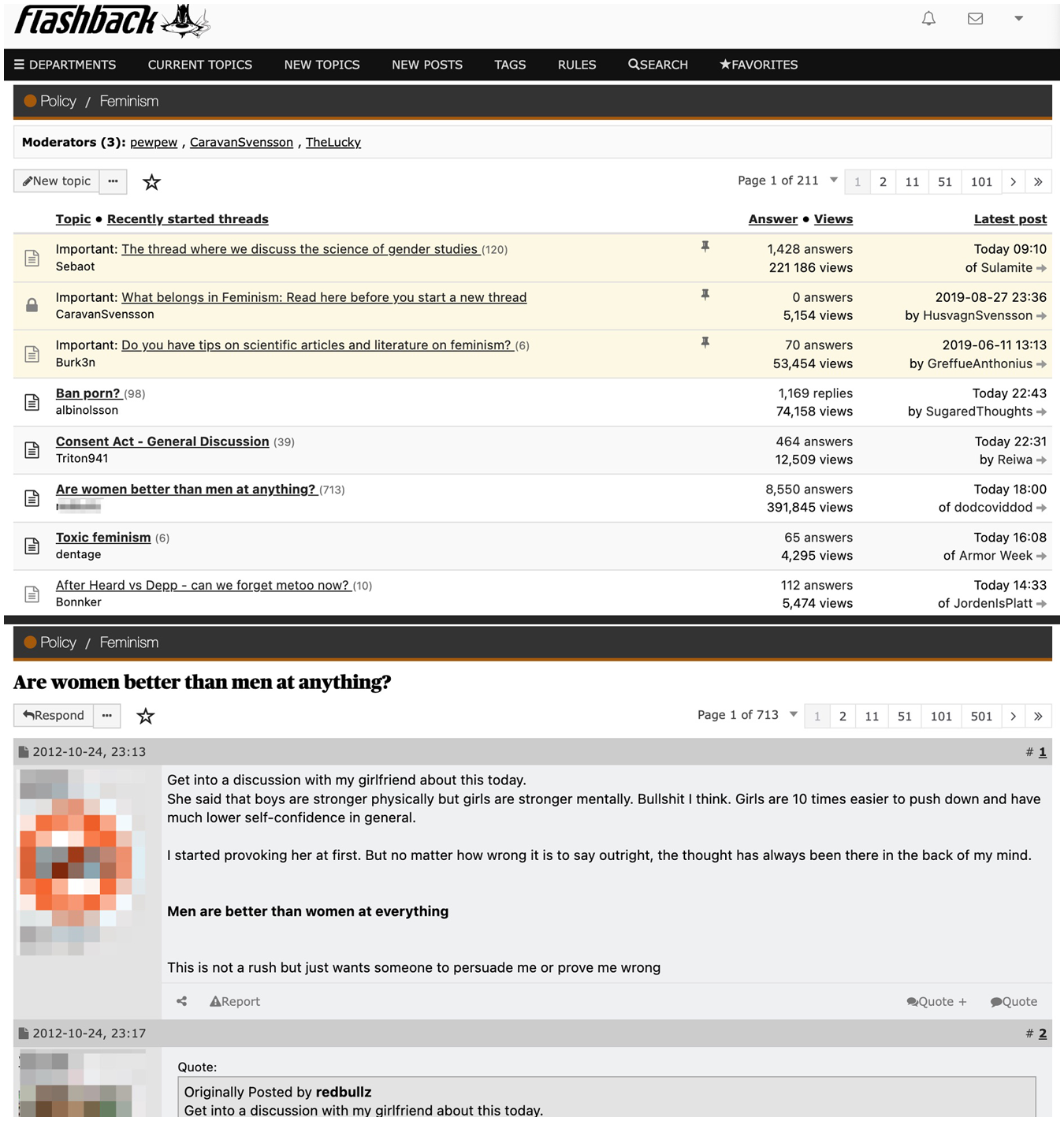

To join the forum, a user must sign up with their birth date, choose a username and password and confirm that they have read and agree to abide by the forum’s rules and ‘netiquette’ instructions, which are linked on the sign-up page. Three days after activating their account, the user can begin posting content in the continuous stream of a thread through the ‘Reply’ button, quoting all or parts of one or several posts in a thread through the ‘Quote’ and ‘Quote+’ buttons, or start an entirely new thread through the ‘New topic’ button in any given subcategory (see Figure 2). These posts and threads are then publicly visible and accessible also to non-member visitors of the forum.

Figure 2. Auto-translated post (top) and thread (bottom) views when logged in to Flashback.

A site of controversy

Flashback has received attention for its users’ efforts at grassroots journalism (Fernquist et al., 2019), but the platform has also been part of many public controversies throughout the years. Notably, in 2003, Flashback was ordered by the Swedish Market Court to review all users’ posts before making them public (Fallenius, 2003). According to Flashback, the platform has since bypassed this ruling by launching the forum under a new domain and by moving its ownership to a new company located in England. As such, they argue, the forum no longer has to adhere to Swedish jurisdiction (Flashback, 2022b).

While it has been questioned if the ownership actually has been moved abroad, and whether or not this matters in Swedish jurisdiction over Flashback (e.g. Ekbrand and Ludvigsson, 2018), the fact remains that the Flashback forum endures despite the prominence and influence of far-right discourse on the site (Åkerlund, 2021a, 2021b), including gender-based, Islamophobic, and xenophobic hate (Malmqvist, 2015; Törnberg and Törnberg, 2016). Like other ‘free speech’ sites (Jasser et al., 2023; Lima et al., 2018; Urman and Katz, 2022), the prominence of hate is likely at least in part the result of Flashback’s vast possibilities for user anonymity. However, despite this, and strict rules condemning user deanonymisation, news media have regularly ‘outed’ hateful Flashback accounts found to be used by public, Swedish figures.

Minimal moderation but not in the absence of rules

Flashback is not a ‘minimal moderation’ forum in the sense of having no rules at all; it is, however, minimal in the ways it enforces many of its rules. Flashback has a set of regulations and rules which state, among other netiquette instructions, that members must adhere to legal restrictions when it comes to issues like defamation, hate speech, child sexual abuse material, harassment, and threats (Flashback, 2021). If they do not, moderators are able to warn or suspend them. However, while Flashback forbids such expressions, there are, as also noted elsewhere (Åkerlund, 2021b), some loopholes through which users can get around these policies. For instance, while rule 1.03 on hate speech states that,

It is forbidden to threaten or express contempt for particularly vulnerable groups, alluding to race, colour, national, or ethnic origin, religious beliefs, or sexual orientation.

It then goes on to note that, ‘derogatory statements against one or more designated ethnic groups are permitted, provided that the criticism is factual and valid . . .’. Likewise, while rule 1.05 on ‘child pornography’ [sic.] states that,

It is forbidden to request or distribute links to pornographic images or movies with persons under 18 years of age. This rule exists to prevent the forum (via posts or PM) from being used for the purpose of spreading child pornography.

It then clarifies that ‘The rule makes exception for links where the act is justifiable in light of the given circumstances’. What constitutes a derogatory yet factual and valid statement against an ethnic group, or a justifiable act of child sexual exploitation, remains unspecified by Flashback. Still, add-ons like these give users considerable leeway to express hateful and harmful ideas in discussions (Gaudette et al., 2021). Given that Flashback operates exclusively in a Swedish legal context and could thus create quite specific rules to cater to these conditions (cf. Myers West, 2018), and given the negative attention the forum has often received for its policies and users, it is difficult to imagine why the site would formulate rules as vaguely as this if it were not for the outward appearance of regulation (Roberts, 2018) but with an inward directive towards its users that hateful content is permitted (Myers West, 2018).

While Flashback has moderators, these tend to focus more on cleaning and ordering of the forum, for instance making sure that threads are posted in the right category and that threads and posts are not duplicates (netiquette instruction 0.002; 0.04), that thread headings are descriptive of the thread topic (netiquette instruction 0.01), and that posts are not ‘off-topic’ or trolling, but instead focus on the subject at hand (netiquette instruction 0.03). While some content is deleted or moved, and threads are closed, this is seldom due to content being too inflammatory but rather for not adhering to the above-mentioned netiquette instructions. In fact, Flashback has a strict non-interference policy when it comes to users’ posted content, and both administrators and moderators take a united stance towards the users on these issues, in support of the platform’s position.

The platform’s FAQ section states that users have 1 hour to edit any newly published post; that accounts are never removed due to inactivity; that usernames cannot be changed; and that, despite the 18-year age limit, no actions will be taken retrospectively against users who posted while minors. The FAQ also illustrates how users have attempted to negotiate content removal:

How do I delete my account?

You do not. No accounts are deleted. The same goes for posts and threads, where the only exception is for posts that violate any rules.

But if I want to get my account banned then? Will everything be deleted then?

No. The only thing that happens is that you can no longer write anything as that user . . .

Come on! If someone associates me with what I have written here my life will be ruined!

No. If you are still dissatisfied with the answer, you can contact Flashback through the link ‘Contact us’.

The role of an FAQ is to establish certain norms in the community and to clarify rules so that the same questions do not arise repeatedly (Squirrell, 2019). The above section of Flashback’s FAQ illustrates how moderation questions remain a recurring and opaque concern for users (see also Roberts, 2018). However, further clarifying the permanence of content and accounts would likely result in less affective, spontaneous posting and perhaps even lead prospective users to reconsider joining the forum in the first place. Here, it is important to recognise that Flashback, like most digital platforms, is not a freely provided communication tool for the general public, but operates on the basis of revenue (Gillespie, 2010).

Methodological approach

To gain an overview of Flashback’s official stance on moderation, the formulations made official by the platform in the Rules, FAQ and About sections were all noted. To capture forum content discussing its minimal interference policies of posts and users, data collection began with individual searches via Flashback’s ‘advanced search’ function using a two-part search string. For a post to be sampled, it first needed to contain any of the words in the first part of the search string concerning deletion or removal. This needed to appear in combination with any word in the second part of the search string concerning variations of words relating to user account(s), post(s), comment(s), quote(s) or thread(s). While the searches started with narrow definitions of the above-mentioned terms, browsing through hits provided additional relevant variations of the search terms. In doing so, the sampling was able to capture forum jargon regarding posting and users, not least the use of ‘Swedified’ variants of their English translations.
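The two-part sampling criterion described above can be illustrated with a minimal sketch. It is not the forum's search function or the study's actual query: the English word stems below are hypothetical stand-ins for the Swedish search terms, and the function name `matches_two_part_query` is introduced here for illustration only.

```python
import re

# Hypothetical stems, assumed for illustration; the study's actual
# (Swedish and 'Swedified') search terms are not reproduced here.
DELETION_TERMS = ("delet", "remov", "eras")
OBJECT_TERMS = ("account", "post", "comment", "quote", "thread")


def matches_two_part_query(text, first_part=DELETION_TERMS, second_part=OBJECT_TERMS):
    """Return True if the text contains a word from each part of the search string."""
    # Split the lower-cased text into words.
    words = re.findall(r"\w+", text.lower())
    # A word counts as a hit if it begins with any stem in the given part.
    has_first = any(word.startswith(first_part) for word in words)
    has_second = any(word.startswith(second_part) for word in words)
    # Both parts must be matched for a post to be sampled.
    return has_first and has_second
```

Prefix matching of this kind is deliberately broad, so a filter like this would also return posts unrelated to the forum's own policies, which is why hits still need to be screened manually.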

Searches included all sections of the forum, from when it was first launched in May 2000 until December 29th, 2021. After initial screenings of non-related content about deletion or removal (like ‘How to remove user accounts on Windows XP’), a total of 168 threads containing exactly 2500 posts by administrators, moderators and ‘regular’ users were sampled for in-depth analysis. All posts were then read repeatedly and inductively coded based on their focus or focuses, and user type: whether users were a representative of the platform (administrator or moderator) or not. In total, 25 codes were identified and grouped into six main themes based on the perspective (platform or user) from which they were posted. Of these themes, three were platform justifications from the perspective of administrators and moderators, and three were types of user responses from the perspective of regular users.

To illustrate how platform justifications and user responses often interrelate, each of the identified platform justifications is contrasted with a common form of associated user response in the analysis. First, the analysis deals with freedom of speech and users’ accusations of hypocrisy. Thereafter, it focuses on forum quality and users’ adaptation to platform rules. Finally, it analyses how the platform displaces responsibility and users’ expressions of desperation.

Analysis of platform justifications and user responses

Platform justification: protecting freedom of speech

The minimal moderation policy on Flashback is, like in comparable settings (Jasser et al., 2023; Massanari, 2017; Topinka, 2018), forcefully claimed as an act to protect freedom of speech. This is evident not least through permanent references to freedom of speech in different outward-facing parts of the forum. For instance, in the ‘About’ section (Flashback, 2022a), Flashback highlights Sweden’s commendable heritage in being the first country to adopt freedom of the press and goes on to connect this with Flashback’s long history in defending ‘the free word’. Moreover, Flashback’s rule section starts with a quotation of Article 19 of the United Nations’ Universal Declaration of Human Rights, which states that,

Everyone has the right to freedom of opinion and expression; this right includes freedom to hold opinions without interference and to seek, receive and impart information and ideas through any media and regardless of frontiers.

By connecting the platform to human rights and democratic ideals of freedom of expression, Flashback’s policies and actions are positioned as incontestable and noble.

Relatedly, claims of anti-censorship are framed as concerns for user safety—to protect users from being pressured or threatened. Moderators argue that if users were able to delete their own content,

. . . every criminal or corrupt elite exposed on Flashback could have the information removed from the forum by figuring out who wrote the post, and then with threats and / or means of force compel that person to erase content. (Moderator, 2012)

. . . it would with certainty lead to censorship (either from those who do not appreciate the comment or those who regret posting and thus practice self-censorship). (Moderator, 2014)

By connecting freedom of speech to anti-censorship and care for its members, Flashback’s representatives work to create a sense of ‘us’ versus ‘them’—positioning the platform as a protector on the side of its users, against its supposed enemies. By protecting users from being exposed to ‘criminals’, ‘corrupt elites’ or even themselves if they wanted to make the mistake of committing ‘self-censorship’, Flashback is represented by its moderators as moral and responsible, acting to preserve Sweden’s principles of free speech, and its users’ safety and integrity.

User response: accusations of hypocrisy

However, many users see Flashback’s views on freedom of speech and anonymity as hypocritical. Users argue that the platform’s view of freedom of speech stands in opposition to its supposed care for users by never letting anyone, not even those who have been ‘outed’, have an opportunity to be forgotten:

I think it sounds strange with ‘real freedom of speech’ at the same time as you say that you should only write things that you can stand for. I, and many other users, have thought all these years that Flashback is the place where you can say what you want without having to risk being held accountable for it in real life. But here you come as a former moderator and clarified that with real freedom of speech you do best not to say the things you are thinking . . . (Flashback user, 2014)

10–15 years ago, everyone associated Flashback with security and anonymity. It was a ‘safe zone’ where you dared to say everything you did not dare to say otherwise, where you could discuss things that were not legal and feel safe. Flashback was on ‘our side’, on the people’s side, not on the authorities’ side. At least that’s what I used to think (Flashback user, 2018).

Users argue that they cannot act without inhibition (see also Suler, 2004) unless they can entrust Flashback with safeguarding their ‘real’ identities. Without this assurance, Flashback cannot be thought to strive for true freedom of speech. The excerpts above moreover illustrate how users’ imaginations of the platform have changed over time—as has also been identified in entirely different contexts when platform actions diverge from user expectations (Banchik, 2021)—from a ‘place where you can say what you want’ and a ‘safe zone’ on ‘our side’ to something that is no longer trusted and dependable.

In contrast to platform perspectives arguing for minimal moderation as an act of freedom of speech, critical users also form ‘folk theories’ (Myers West, 2018) about Flashback’s policies:

Flashback’s entire business idea is not about ‘real freedom of expression’ but about creating as much turbulence as possible to generate as many visits as possible and thus get advertising revenue. Therefore, there are entire forum sections and threads that are primarily about filth and revelling in other people’s suffering. Therefore, no accounts are ever deleted. (Flashback user, 2015)

Through conspiracy theories about the platform’s true intentions (Jhaver et al., 2019) for inaction, some users make clear that they do not perceive free speech arguments as trustworthy framings of platform policies and implementations. In doing so, users’ reactions signal that the platform is not sufficiently able to balance users’ needs and expectations; instead, its supposed economic intentions are exposed (see also Gillespie, 2018; Massanari, 2017).

Platform justification: safeguarding thread quality

Technological reasons are commonly used to justify the minimal moderation policy. Most notably, Flashback argues that because of the structure and functions of the forum itself, removing or anonymising content or users would have a great negative impact on the quality of the discussions and threads. These justifications have a long history on the platform. An administrator as early as 2004 argued that ‘[i]f you were able to delete your posts, some threads could be completely destroyed . . .’. Other platform representatives have since echoed this same justification:

It would have created chaos for users . . . If we—as an example—deleted your account and your posts, we would have a thread here that responded to a post that was deleted. No one would understand what was being discussed. (Administrator, 2010)

[threads] would be unreadable as soon as more than one member in a thread requested to be disconnected from their posts. It would no longer be possible to follow who said what. (Moderator, 2012)

By placing the integrity of the threads in focus, without highlighting any possible technological solutions for this long-standing issue, the platform makes it appear as though not interfering is the only possible option, taking precedence over the needs of any individual user. In a sense, by arguing for the quality of the platform to be upheld only through inaction, Flashback’s administrators and moderators make it appear as though the current implementations of technological functions are out of the site’s control. In doing so, the platform minimises its own role as a gatekeeper of user-generated content (Gillespie, 2010, 2015).

User response: adaptation to platform rules

Some users suggest solutions addressing these alleged technological constraints. For instance, they raise the potential of replacing users’ real account names with alternatives:

Having a hash of the username and using it as a generator for username and avatar (Flashback user, 2014)

Why can I not delete my username and make the name of all posts / threads by me instead ‘deleted profile’. (Flashback user, 2018)

These suggestions for improvements made by some users indicate a trust in platform explanations as transparent (Jhaver et al., 2019) and express a belief that there simply has not yet been a viable technological solution which would ensure thread integrity.
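The first user suggestion above, deriving a replacement name from a hash of the username, can be sketched as follows. This is only an illustration of the idea as the user describes it, not a description of Flashback's software: the function name `pseudonym_for`, the `deleted-` prefix and the use of a secret key are all assumptions made here.

```python
import hashlib
import hmac


def pseudonym_for(username, secret_key):
    """Derive a stable replacement label from a username (illustrative only)."""
    # A keyed hash (HMAC) is assumed here so that the label cannot be
    # reproduced by simply hashing a guessed username.
    digest = hmac.new(secret_key, username.encode("utf-8"), hashlib.sha256).hexdigest()
    # Hypothetical display format: a fixed prefix plus the first 10 hex digits.
    return "deleted-" + digest[:10]
```

Because the same username always maps to the same label, readers could in principle still follow who said what within a thread, which is the concern platform representatives raise. Whether such a scheme would be workable on the forum is not something the material allows us to judge.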

Many other users seem to have fully accepted the inflexibility of the platform structure and take it upon themselves to adapt their behaviours to better fit existing conditions. Some, for instance, suggest the use of multiple accounts to protect themselves, and to be intentionally deceitful in what information they share to place distance between their Flashback personas and their offline identities:

It is also fully permitted to have an account other than the usual one if there is something you want to write that you know can be sensitive. (Flashback user, 2016)

I use several different accounts (of course not in the same thread) to be able to have an extra layer of anonymity. [. . .] Another tip is to sneak in mistakes about yourself from time to time to mislead people if you are afraid of being ‘tracked’. (Flashback user, 2013)

When users navigate platform constraints in this way, they are not focusing on Flashback’s possibilities to change. Instead, users seem to have accepted the technological and social rules that apply on the platform. Potentially, the size of Flashback itself, with its numbers of current visitors, members, and posts featured centrally in its starting page banner (see Figure 1), discourages some users from thinking that any possibilities to impact the forum are available to them. Flashback has in these instances successfully positioned itself as unchangeable. This inflexibility in turn appears internalised by these users who seemingly accept that they themselves must change since the platform cannot.

Platform justification: displacing platform responsibility

The third and final type of justification given by Flashback for minimal moderation is that this is not in fact the responsibility of the platform at all. The platform, as argued by an administrator, promises never to hand out user account information, to encrypt traffic between Flashback’s servers and users’ browsers, and to keep the site free of external statistics or ad tools that interfere with user privacy (Flashback administrator, 2014). In other words, Flashback sees its responsibilities as controlling only the administrative aspects of platform activity, but when it comes to user-generated content, Flashback cannot be held responsible:

The responsibility for what you write about yourself in your own posts lies entirely with you. No one else. (Moderator, 2010)

Flashback is aimed at people over 18 years old. People who are expected to take responsibility for their actions. Think before posting (Moderator, 2020)

In fact, it seems as though some users’ perceived lack of responsibility for their own actions is quite triggering for representatives of Flashback:

If you want to be anonymous, you have all fucking opportunity in the world to be. But if you are stupid enough to use the same username on several sites and be careless with what you post, you can damn well blame yourself. [. . .] Flashback . . . cannot babysit and delete posts and users just because they are afraid that someone may find out who they are because of what they themselves have written and done. (Moderator, 2010)

If you get outed because of things you wrote yourself, why should Flashback have some type of failsafe for your mistakes? (Moderator, 2013)

Flashback once again positions itself, through its moderators, on the side of its users, facing their common external threats, this time concerning privacy and user security. At the same time, representatives of the platform frame Flashback as nothing more than a neutral conduit for user interaction (Gillespie, 2010, 2015) and thus, if or when users come to regret their actions, they themselves are to blame. The representatives’ frustration, furthermore, illustrates a perception that the disgruntled users are neither conforming to community culture nor using Flashback as the platform has intended (see also Nissenbaum and Shifman, 2017).

User response: expressions of desperation

The interest in some of these discussions, as showcased by a thread reaching as many as 80,000 views at the time of data collection, signals a widespread wish among Flashback’s users to know more about how the platform deals with moderation, and thereby, that the FAQ section does not adequately address these concerns (cf. Squirrell, 2019).

For some users, the need to have content removed is seemingly desperate; and unsurprisingly so, as user discussions illustrate the many kinds of illegal and hateful activity promoted by users on the platform. The potential consequences for users who cannot get their content removed seem at times overwhelming, as can be seen in one user’s distressed plea:

I have found the world’s nicest girl, and now I have gone and written a lot of fucking desperate messages, I want them to GO AWAY! I do not want her to know, she will never forgive me . . . (Flashback user, 2008)

Other users argue that the potential consequences of not getting content removed mean that ‘your whole social life is at stake’ (Flashback user, 2010), or worse, that ‘life can pretty much be over’ (Flashback user, 2011). Yet another member goes as far as jokingly considering suicide if their anonymity were to be unveiled:

Think of the day when you yourself stand there with anxiety and your post remains as a monument of your sick mind and perversions. Then there is only one way out *bang!* (Flashback user, 2008)

Not only do these statements indicate the self-perceived severity of users’ own actions on the platform, but they also signal the distance and incompatibility between their Flashback online personas and offline identities. Unlike on sites like 4chan, where platform ephemerality regulates the potential personal consequences of controversial posts (Phillips, 2015), posts on Flashback remain searchable indefinitely. Consequently, it is not surprising that many users are critical and express frustration at the impossibility of ever being able to move on:

I think you should have the opportunity to delete threads you have created. Why? Life goes on and something that was written many years ago may not be as fun to have in your history all the time. (Flashback user, 2014)

EVERYTHING you write here is stored forever. [. . .] If someone around you figures out who you are on Flashback, you’re done. Things you may have written 15 years ago when you may have been a completely different person emerge and can ruin your life. (Flashback user, 2018)

These perspectives make clear that some users had not imagined any repercussions to Flashback’s anonymity policies, trusting the platform entirely in its framing as being on the side of its users against perceived external threats. By appealing to the platform’s benevolence, users display a perception of the platform as having the capacity to be compassionate and to put the needs of individual users before its general policies. In a sense, users’ desperation illustrates a lack of understanding about the potential real-life consequences of online participation more generally (cf. Suler, 2004; Van der Vegt et al., 2021), and especially so about the consequences of hate expressed online.

Discussion

This article has analysed the ‘free speech’ online forum Flashback, which adheres to a strict non-interference policy when it comes to user-generated content, but beyond this also forbids users from deleting their own content or accounts. Drawing on the notions of ‘platform politics’ and ‘imagined affordances’, this article sought to understand the relationship between the platform and its users with respect to this unconventional approach to moderation and content removal, and how such inaction contributes to the proliferation of hate in these settings. This article explored both the position taken by Flashback as it pertains to its policy of minimal moderation of user-generated content, and the expectations and experiences that users express as they navigate Flashback’s rules and their practical implementations.

The analysis illustrated how Flashback justifies its minimal moderation policy through three arguments: as promoting freedom of speech and counteracting censorship; counteracting an inevitable decline in forum and thread quality if content and user accounts were to be deleted; and displacing responsibility onto users themselves for posting content without being aware of Flashback’s policies. Users, in turn, deal with Flashback’s conditions in three distinct ways: by accusing Flashback of hypocrisy over its contradictory ideals and actions; by adapting their usage to fit the platform’s policies; and finally, by expressing desperation and regret for their actions on the platform in pleas for Flashback to reconsider its position. Thus, there is a disconnect in the communication between moderators and administrators representing Flashback’s platform politics, on one hand, and users imagining its affordances, on the other. Flashback portrays its deliberate inaction as something for the greater good, whereas users are driven by self-centred motives related to protecting their privacy and integrity.

Moreover, the justifications used by Flashback constitute a politics of moderation which involves several different positions. The reason for not moderating is simultaneously a deliberate political position to protect free speech, a desire to change but an inability to do so due to technological limitations, and also not the concern and responsibility of the platform at all, as they are just providing the infrastructure. Still, the different forms Flashback’s justifications take are pragmatic and matter of fact, with little consideration of individual users’ needs. Even though platforms purposefully shape user activity (Gillespie, 2018), Flashback often positions itself in a way that masks the platform’s real control over its policies and their consequent implementations through moderation (or lack thereof) (see also Gillespie, 2010, 2015). While it does not appear as though it would be impossible to reach compromises with users in questions of moderation in ways that would neither risk free speech nor the integrity of posted content, while still maintaining high levels of user accountability, Flashback nonetheless remains adamant in its position not to act. In this sense, Flashback appears to take an intentional, ideological stance of deliberate inaction as a means to gain credibility, rather than attempting to appease disgruntled voices with policy modifications, as has often been seen elsewhere (Roberts, 2018; Shen and Rose, 2019).

Flashback describes itself as ‘politically and religiously independent’ (Flashback, 2022a) and is thus not officially in support of any specific political orientation. Still, it avidly advocates the cyber-libertarian ideals of absolutist free speech and autonomy from state regulation so common in the early years of the commercial Internet (Dahlberg, 2010; Flew, 2021); ideals which have more recently been politicised by the far right in order to contest deplatforming and accusations of hate speech as censorship (Bromell, 2022; Rogers, 2020). Taking this a step further, Flashback even considers its users as potential threats to their own free speech and to counter this, the platform takes on a fiduciary role of governance over users and their content (see also Bietti, 2021). Paradoxically then, Flashback entrusts users with the freedom and responsibility to decide what to post, in a libertarian spirit, but then strips them of their autonomy and ability to make decisions about what to remove. There appears to be an inconsistency between the values of freedom that Flashback claims to stand by, and the authority it consequently wields over users who want to exercise their right to free speech in the form of changing what they have expressed and how. While Flashback promotes a sort of utilitarian approach through deliberate inaction, this platform politics serves an additional role, namely inflating statistics and creating the illusion of forum activity, size and popularity, as alleged by some users.

An important aspect of platform politics, as noted by Gillespie (2010), is their framing of what ‘these technologies are and are not, and what should and should not be expected of them’ (p. 359). Users engaging in discussions about moderation seem to have approached the platform with expectations that there are no rules, or at least that the rules presented in the rules section are more for show than anything else. When users are faced with the realisation that their actions on Flashback (could come to) have real-life consequences, they describe their behaviours as more cautious and strategic, and they come to reimagine what Flashback is and what it stands for, often with a sense of disappointment or defeat in not being protected by the platform. If users are so discontent with Flashback’s platform politics, why do these politics not change? In fact, most users are not discontent. The platform could not have continued with these policies without users’ support. User and platform practices are co-constitutive (Massanari, 2017), and throughout the analysis of Flashback’s justifications, there were large numbers of ordinary Flashback users who echoed and sided with Flashback’s point of view.

Beyond just supporting Flashback’s platform politics, parts of the community also sought to mock and silence those who wanted platform assistance and clarifications. For instance, some looked into what the complaining users had posted in order to shame them: ‘Are you ashamed of your inquiries for “medieval porn”?’ (Flashback user, 2009) and ‘Is it hard to stand for what you wrote in the drug subforums?’ (Flashback user, 2009). Many siding users dissociated themselves even further from users who criticised Flashback’s politics of inaction through the expression of pride in owning up to one’s embarrassing, socially stigmatising or even criminal content. As noted by several senior users, ‘if you don’t have the guts to stand by what you said, you are a loser in my eyes’ (Flashback user, 2014, then 8 years into membership) and ‘modifying and deleting posts is for insecure latte sissies. A real man owns what he said!’ (Flashback user, 2014, then 6 years into membership).

So, when users imagine Flashback to be something from which it dissociates itself, or if they are not using Flashback as intended, the user base performs an important function in keeping users in line. While there is opportunity to address harm and hate online through the enablement of collective user efforts (Bietti, 2021), communities can also work to promote hate, if that is what they collectively push for (Massanari, 2017). Considering the tendency by communities on similar sites, like 4chan, to self-regulate, reprimand and silence non-conformers (Massanari, 2017; Nissenbaum and Shifman, 2017; Phillips, 2015), these findings are perhaps unsurprising. Beyond this, through strength in numbers, the community supporting Flashback’s platform politics could thus also work to collectively impede any attempts at increasing moderation and regulation on the platform, even if Flashback’s management decided that the platform should change.

Another important aspect of platform politics is what platforms hide or otherwise choose not to highlight to their users (Gillespie, 2010). What is not brought forth by platform representatives in these discussions or in front-facing parts of forum text is that far-right content remains highly important and central on the platform (Åkerlund, 2021b) and that in turn, by never allowing anything or anyone to be removed, the platform inevitably promotes hateful, harmful, and illegal content. So, while longitudinal access to various content is a foundational premise for the Internet at large, and while any form of restriction over content prompts outrage from the far right and others, there is some content that goes beyond being ‘disagreeable’ and in fact, can do real harm (Maitra and McGowan, 2012). Moreover, exploiting the notion of ‘free speech’ to spread hate is something that has had violent, offline effects (Munn, 2021). Thus, Flashback’s politics of inaction and defiant conception of ‘free speech’ serve to enable the formation of residual hate and the continuous access and potential exposure to hateful materials by those who are its targets (see also Åkerlund, 2021b).

Following the implementation of the DSA, it is unclear how the Swedish government will address Flashback’s approach to content moderation and freedom of speech. However, against the backdrop that decisions about moderation are economically motivated (Roberts, 2018), honouring absolute free speech can be thought of as an important selling point for Flashback, something that sets it apart from other sites, especially in a Swedish context. Without this, Flashback would lose much of its appeal, and as previous research has shown, when moderation practices are tightened, rather than adapting, those who seek anonymous unaccountability to express hate turn elsewhere (Rogers, 2020). Consequently, Flashback’s continued relevance and economic welfare rely on its users and their current forms of engagement. Thus, the DSA might prove devastating to Flashback in its current form.

This article has begun exploring the justifications for, and understandings of, minimal moderation practices outside of major social media platforms. It should be noted that this article is a case study exploring a specific online forum in a specific national setting, which in turn limits its generalisability. Future research efforts should continue to analyse these platform policies that enable residual hate. Not least, there is a need for research to further study the negotiations between platforms and users. For instance, future research efforts would do well to gain firsthand information from platform representatives and users themselves in these matters. Furthermore, while it is beyond the scope of this article to explore the lawfulness of Flashback’s inaction policies, it would be relevant for future studies to analyse users’ right to be forgotten under the GDPR (Bietti, 2021) on Flashback, especially in cases where user identities have been exposed.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.