Abstract

Social media are key arenas for public opinion formation, but are susceptible to coordinated social media manipulation (CSMM), that is, the orchestrated activity of multiple accounts to increase content visibility and deceive audiences. Despite advances in detecting and characterizing CSMM, the attribution problem—identifying the principals behind CSMM campaigns—has received little scholarly attention. In this article, we address this gap by synthesizing existing research and developing a theoretical model for understanding CSMM. We propose a consolidated definition of CSMM, identify its key observable and hidden characteristics, and present a rational choice model for inferring principals’ strategic decisions from campaign features. In addition, we present a typology of CSMM campaigns, linking variations in scale, elaborateness, and disguise to principals’ resources, stakes, and influence strategies. Our contribution provides researchers with conceptual and heuristic tools for attribution and invites interdisciplinary and comparative research on CSMM campaigns.


Introduction

Social media platforms enable citizens to express their views publicly (Gil de Zúñiga et al., 2014) and mobilize connective action (Bennett and Segerberg, 2013). Yet, the very affordances that enable democratic engagement also render these platforms vulnerable to manipulation. Disinformation scholars increasingly focus on traces of manipulative behavior that go beyond spreading inaccurate content (Hameleers, 2023: 2). In this theoretical paper, we focus on coordinated social media manipulation (CSMM), understood as the orchestrated activity of multiple social media accounts designed to artificially amplify content visibility (Giglietto et al., 2020; Keller et al., 2020; Weber and Neumann, 2021). Since Russia’s interference in the 2016 United States elections (US Justice Department and Mueller, 2018), this disinformation technique has garnered growing interest from social scientists (DiResta et al., 2022; King et al., 2017; Lukito, 2020), computer scientists (Pacheco et al., 2021; Weber and Neumann, 2021), and platform operators (Gleicher, 2018) alike.

Despite the expanding literature on CSMM, two shortcomings persist. First, the concept that has largely defined this research field—‘coordinated inauthentic behavior’ (CIB), introduced by Meta (Gleicher, 2018)—has been criticized for its lack of clarity. The concept is vague in specifying ‘inauthenticity’ (Graham, 2024) and obscures the key normative issue: it is not inauthenticity per se that is problematic, but the manipulative intent behind such campaigns (Overdorf and Schwartz, 2020: 9). Second, while seminal case studies (Giglietto et al., 2020; Keller et al., 2020; King et al., 2017) and advances in detection methods (Pacheco et al., 2021; Schoch et al., 2022) have propelled the field forward, a major theoretical gap persists. We lack a framework that helps scholars to address what cybersecurity literature calls ‘attribution’—the challenge of linking an observed campaign to a principal, that is, the covert actor who commissioned the campaign (Keller et al., 2020), and identifying their motives (Rid and Buchanan, 2015: 10). This challenge is compounded by the fact that researchers—unlike platform operators or intelligence agencies—have limited access to relevant data. We address the attribution problem by providing a theoretical framework for reconstructing the decisions of the principal.

Against this backdrop, this article makes four contributions. First, we propose a definition of CSMM that clarifies its manipulative intent while remaining aligned with existing concepts. Second, we identify key observable and hidden characteristics of CSMM campaigns by reviewing the empirical literature. Third, aiming for a heuristic for inferring hidden information from observed features, we develop a theoretical model for reconstructing a principal’s choice to commission a CSMM campaign. We explicate the model’s assumptions and demonstrate how testable hypotheses can be derived from them. Fourth, we introduce a typology of CSMM campaigns to illustrate the model’s inferential logic. We conclude by discussing how our theoretical model and typology can serve as tools for guiding future empirical research and fostering interdisciplinary collaboration in the systematic detection, characterization, and attribution of CSMM campaigns.

Defining CSMM

Social media have become an indispensable part of today’s information ecology (Häussler, 2021), and have changed the logic of political communication (Klinger and Svensson, 2015). However, they are also prone to rampant information disorders (Wardle and Derakhshan, 2017). Researchers have identified a wide range of manipulative activities on social media, including foreign influence operations (Doroshenko and Lukito, 2021), interference in election campaigns (Keller et al., 2020; Lukito, 2020), and cryptocurrency manipulation (Pacheco et al., 2021). We hold that such manipulations constitute disinformation, based on a broad definition of the concept as ‘all practices of intentionally creating or disseminating deceptive content’ (Hameleers, 2023: 2).

Manipulation on social media has frequently been examined through the analytical lens of ‘inauthentic’ behavior. Particular attention has been drawn to social bots, that is, social media accounts whose actions are automated by software (for a review, see Ferrara, 2022). Automation and manipulation, however, are not synonymous (Overdorf and Schwartz, 2020: 9). Scholars have noted that disinformation campaigns continue to rely on human agents (Woolley, 2022: 119). Moreover, bot detection algorithms have been criticized for their imprecision (Rauchfleisch and Kaiser, 2020) and can be evaded by hybrid accounts controlled by both bots and humans (Grimme et al., 2018). As a consequence, researchers have shifted their focus from suspicious activity of individual accounts to group behavior (Grimme et al., 2018). This research was strongly influenced by the CIB concept introduced by Facebook in 2018, defined as ‘groups of accounts and pages working together to mislead people about who they are and what they’re doing’ (Gleicher, 2018). While the concept has inspired an entire branch of research (Giglietto et al., 2020; Mazza et al., 2022; Nizzoli et al., 2021), it has also been repeatedly criticized for its analytical shortcomings, particularly the ambiguous notion of ‘inauthenticity’ (Graham, 2024; Overdorf and Schwartz, 2020; Starbird et al., 2019).

One criticism is that CIB tends to equate inauthenticity with manipulative intent, thereby obscuring the fact that ‘inauthentic’ accounts—used by individuals who hide behind pseudonyms—can protect protestors in authoritarian regimes from state prosecution (Overdorf and Schwartz, 2020: 10). According to Overdorf and Schwartz (2020: 10), the normative problem is not inauthenticity in itself, but ‘the intention behind the act of creating a fake account.’ From a public sphere perspective, the intentional distortion of public debate is particularly worrisome (Keller and Klinger, 2019). Intent is excluded from the CIB concept because it is difficult to prove (Gleicher, 2018), yet it is integral to any definition of disinformation (Hameleers, 2023). Other critics have noted that platform operators’ emphasis on authenticity reflects their commercial interest in sustaining a credible userbase for advertisers (Graham, 2024). Notably, the notion of inauthenticity is tailored to Facebook’s real-name policy. This poses challenges for comparative research, as the concept does not translate well to platforms with default anonymity, such as Reddit. Alternative concepts that avoid the term ‘inauthenticity,’ such as ‘political astroturfing’ (Keller et al., 2020), are too narrowly focused on campaigns that mimic political grassroots movements.

We approach these shortcomings by introducing the concept of coordinated social media manipulation (CSMM), which we define as follows: The orchestrated activity of multiple accounts or pages (coordination) on social media platforms (social media) that is directed at influencing public opinion or public discourse by increasing or decreasing the visibility of specific content or actors through exploiting the affordances of these platforms (manipulation: influence) and that intentionally deceives the audience about the identity, motives, actions, or connections of the involved actors and/or about the popularity of the disseminated content (manipulation: deception).

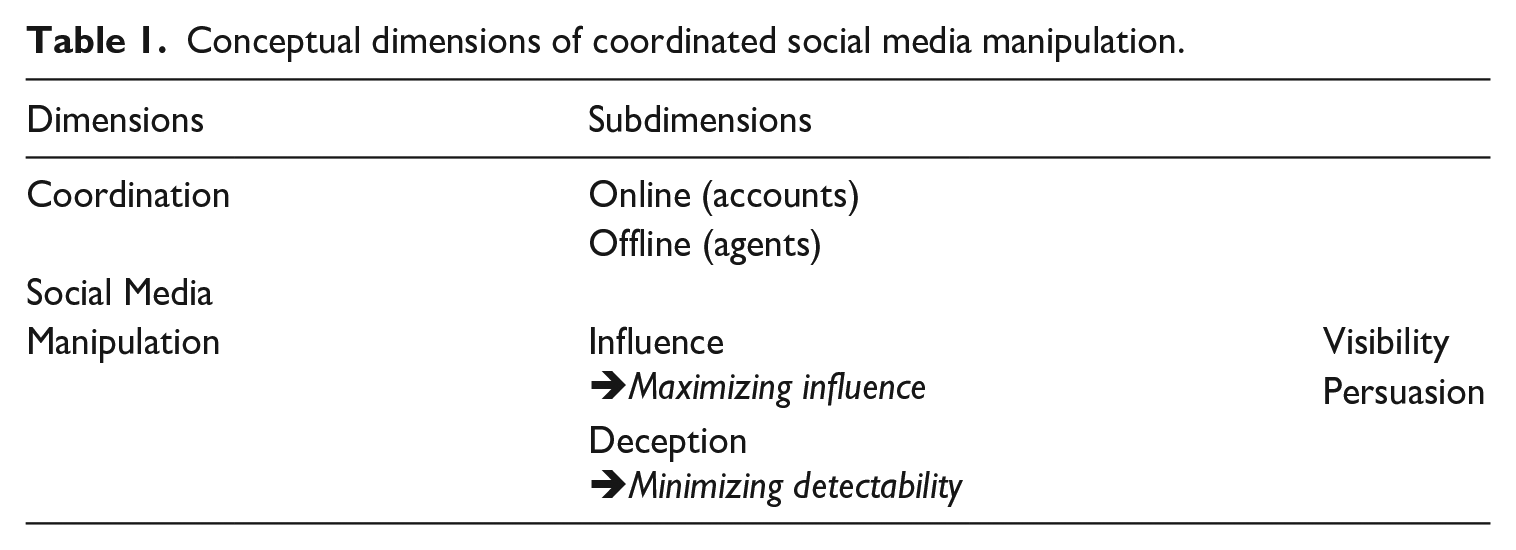

The definition comprises three dimensions (coordination, social media, and manipulation), as outlined in Table 1. Our aim with this definition is twofold. First, by aligning our concept with existing understandings of coordination (Gleicher, 2018; Mannocci et al., 2024), we aim to foster cumulative research. Second, we seek to foreground manipulation and clarify its defining elements: intentional influence and deception.

Table 1. Conceptual dimensions of coordinated social media manipulation.

Our understanding of coordination echoes Grimme et al.’s (2018: 446) assertion that the activity of multiple accounts is fundamental to this disinformation technique. Malone and Crowston (1994: 90) provided a useful definition of coordination as ‘managing dependencies’ between the activities of interdependent actors (human or nonhuman). In the case of CSMM, coordination takes place both online and offline. Online, groups of accounts need to be coordinated, either manually by human agents or through automation. Offline, the human agents who control the accounts must likewise be coordinated (Keller et al., 2020). Both forms of coordination can yield suspicious patterns in digital trace data. In operational terms, coordination in the context of CSMM is ‘a latent, suspicious, or remarkable similarity between any number of users’ (Nizzoli et al., 2021: 444). Detection algorithms often employ network analysis to identify communities of accounts that synchronously and repeatedly share similar content (Giglietto et al., 2020; Graham et al., 2024; Schoch et al., 2022; Weber and Neumann, 2021).
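The operational notion of coordination described above, accounts that synchronously and repeatedly share similar content, can be illustrated with a minimal sketch. It is not the procedure of any of the cited detection studies. The function name `coordinated_communities`, the input format (account, content identifier, timestamp in seconds), and the thresholds `window_seconds` and `min_repeats` are all assumptions made for illustration.

```python
from collections import defaultdict
from itertools import combinations


def coordinated_communities(shares, window_seconds=60, min_repeats=2):
    """Group accounts that repeatedly co-share content within a short window.

    shares: iterable of (account, content_id, timestamp_seconds) tuples.
    Both thresholds are hypothetical and would need empirical calibration.
    """
    # Collect all sharing events per content item.
    by_content = defaultdict(list)
    for account, content_id, ts in shares:
        by_content[content_id].append((ts, account))

    # For every pair of accounts, record the distinct content items
    # they shared within `window_seconds` of each other.
    pair_items = defaultdict(set)
    for content_id, events in by_content.items():
        for (t1, a1), (t2, a2) in combinations(sorted(events), 2):
            if a1 != a2 and abs(t2 - t1) <= window_seconds:
                pair_items[frozenset((a1, a2))].add(content_id)

    # Keep only pairs that co-shared repeatedly and build an undirected graph.
    graph = defaultdict(set)
    for pair, items in pair_items.items():
        if len(items) >= min_repeats:
            a, b = tuple(pair)
            graph[a].add(b)
            graph[b].add(a)

    # Connected components of the graph are the candidate communities.
    seen, communities = set(), []
    for start in graph:
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in seen:
                continue
            seen.add(node)
            component.add(node)
            stack.extend(graph[node] - seen)
        communities.append(component)
    return communities
```

A community returned by such a procedure is only a trace of coordination. Whether it reflects manipulation rather than, for example, genuine grassroots activity remains a question for the characterization and attribution tasks.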

In defining social media, we follow Carr and Hayes (2015: 50), who conceptualized these as ‘Internet-based, disentrained, and persistent channels of masspersonal communication facilitating perceptions of interactions among users, deriving value primarily from user-generated content.’

Our concept of manipulation comprises two subdimensions—influence and deception—following Susser et al.’s (2019: 4) definition of manipulation as ‘hidden influence.’ These subdimensions also highlight the two operational imperatives of CSMM campaigns—maximizing influence while minimizing detectability.

CSMM aims to influence target audiences by increasing content visibility through mass dissemination (Weber and Neumann, 2021: 5) and by persuading the audience to adopt specific beliefs, perceptions, or behaviors (Walter and Ophir, 2023: 422). This persuasion often extends to shaping ‘second-order beliefs’ (Earl et al., 2022: 7)—perceptions about what others believe, such as the prevalence of opinions in a population. CSMM may also exert an influence by stimulating user engagement (Weber and Neumann, 2021: 5). The influence is exerted in the form of campaigns, which—borrowing from social movement literature—can be defined as ‘sustained, organized public efforts making collective claims’ (Tilly et al., 2020: 6), characterized by coherence in content, timing, and actors.

The deceptive nature of CSMM lies in concealing critical aspects of its operation. Digital deception, as defined by Hancock (2009: 290), involves ‘intentional control of information in a technologically mediated message to create a false belief in the receiver of the message.’ In CSMM, false beliefs are fostered about the identity and motives of the sender, the actions and connections of coordinated accounts, and the popularity of disseminated content. To minimize detectability, campaigns are deliberately disguised, as exposure can undermine their effectiveness and pose risks to the principal (DiResta et al., 2022: 225). The two operational imperatives involve a tradeoff: the more effort invested in gaining influence, the greater the chances of being detected.

The logic of a CSMM campaign can be best grasped through a principal–agent framework, as suggested by Keller et al. (2020). CSMM campaigns are often organized centrally, ‘initiated by a principal directly instructing a group of users who respond to extrinsic rewards—the agents’ (Keller et al., 2020: 259). This makes CSMM a form of strategic communication (Lukito, 2020: 240). Principals commission CSMM campaigns to pursue specific goals, while agents execute these campaigns by operating the associated social media accounts (Keller et al., 2020). While both seek to remain hidden, their misaligned interests can result in detectable traces and campaign exposure—for instance, when agents create ‘just enough low-quality accounts and messages to meet their principal’s requirements instead of properly camouflaging their activity’ (Keller et al., 2020: 261).

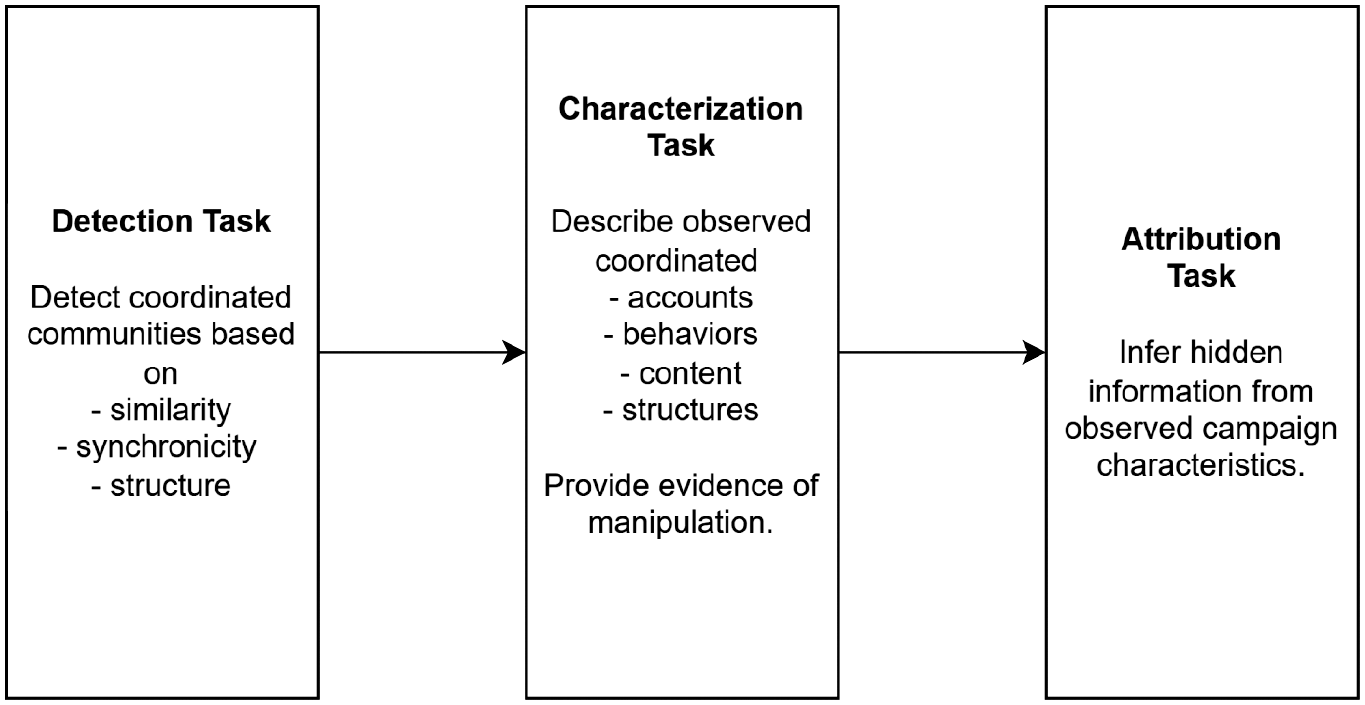

Therefore, CSMM campaigns have two sides: a visible side manifesting in observable characteristics and a hidden side involving information about the principal’s identity, aims, and strategy. Attributing covert operations to their source is a key cybersecurity challenge (Rid and Buchanan, 2015). Expanding Mannocci et al.’s (2024) scheme, we identified three key analytical tasks for CSMM researchers, as shown in Figure 1. The first task is to detect highly coordinated communities of accounts by identifying content similarity, synchronized behavior, network structures, and other traces of coordination. The second task involves characterizing the coordinated accounts, behaviors, content, and structures to provide evidence of manipulative intent. We add that the third task—attribution—is to infer hidden aspects of campaigns from their observable characteristics.

Figure 1. Three analytical tasks for CSMM researchers, expanding the scheme developed by Mannocci et al. (2024).

Currently, CSMM researchers have little guidance on how to approach attribution. This task is particularly challenging for researchers who typically lack access to non-public data such as IP addresses. To address this challenge, we provide heuristic tools by identifying key observable and hidden characteristics of CSMM campaigns, linking them in a theoretical model, and illustrating its inferential potential through a campaign typology.

Characteristics of CSMM

To identify the key observable and hidden characteristics of CSMM campaigns, we conducted a comprehensive literature review of multidisciplinary research. We retrieved 515 journal articles, preprints, and conference papers from the Web of Science and EBSCOhost databases. Four researchers and one student coder screened the abstracts for eligibility, retaining 62 relevant empirical studies. These included analyses of previously unknown campaigns (Elmas et al., 2021; Giglietto et al., 2020) as well as analyses of ground truth data from campaigns uncovered by authorities, platform operators, or third parties (Barrie and Siegel, 2021; Keller et al., 2020; King et al., 2017)—a crucial source for our analysis. Most of the ground truth data studies relied on datasets released by Twitter (e.g., Barrie and Siegel, 2021; Linvill and Warren, 2020; Luceri et al., 2024; Schoch et al., 2022). By contrast, Meta and Reddit provided far less access to such data (DiResta et al., 2022; Lukito, 2020). Notably, platform operators themselves remain cautious when it comes to making clear attribution claims (Nimmo et al., 2023). Appendix A in the online supplementary material contains the full list of reviewed literature and details the screening process.

Observable characteristics

Based on the reviewed literature, we have identified three key observable characteristics of CSMM—scale, elaborateness, and disguise. We focus on these variables because they capture core dimensions of the concept, are straightforward to operationalize, and provide clues about hidden aspects.

First, the scale of a CSMM campaign is defined by the number of coordinated accounts, disseminated posts, and platforms used, as well as the campaign duration. CSMM campaigns rely on scale to maximize visibility (Weber and Neumann, 2021: 5). The Russian-backed Internet Research Agency (IRA) ran large-scale CSMM campaigns to interfere in the politics of the United States and other foreign countries (US Justice Department and Mueller, 2018). The IRA campaigns have been well documented in datasets released by Twitter, Facebook, and Reddit following pressure from Congress, which have been analyzed by various researchers (DiResta et al., 2022; Linvill and Warren, 2020; Lukito, 2020). These campaigns included thousands of accounts that disseminated numerous posts on various platforms, for example, 10.4 million Tweets and 116,000 Instagram posts (DiResta et al., 2022: 229). Through a longitudinal analysis, Lukito (2020) showed that the IRA’s coordinated manipulation commenced on Reddit and was echoed on Twitter, while Linvill and Warren (2020: 455) demonstrated that IRA Tweets responded to US political events. Badawy et al. (2018) found that these campaigns were heavily supported by bots. Another example of a large-scale CSMM campaign was the so-called ‘50c party,’ run by Chinese government employees. Analyzing ground-truth data obtained through leaked e-mail archives, King et al. (2017: 487, 495) estimated that this agency produced approximately 448 million posts in 2013 alone. By contrast, Schliebs et al. (2021) revealed a small-scale CSMM campaign by uncovering 62 accounts that boosted the visibility of Chinese diplomats in the United Kingdom. To determine the extent of manual labor involved in such output, reliable bot detectors would be desirable (Ferrara, 2022; Rauchfleisch and Kaiser, 2020).

Second, CSMM campaigns vary in the elaborateness of the content they disseminate, which we argue impacts their persuasiveness. Less elaborate CSMM content includes spam (Francois et al., 2023: 3077) or short message fragments, such as those observed by Elmas et al. (2021), who documented the manipulation of Turkish Twitter hashtag trends. Other campaigns involve the coordinated reposting of hyperlinks, as seen in Facebook posts during the Italian and European Parliament elections (Giglietto et al., 2020). In contrast, highly elaborate CSMM campaigns employ carefully crafted narratives and appeal to political identities, such as the IRA campaign that targeted the #BlackLivesMatter movement (Freelon and Lokot, 2020; Starbird et al., 2019: 7). Until recently, implementing elaborate CSMM campaigns required significant resources, but advances in large language models (LLMs) may have drastically reduced these costs (Meier, 2024). Research on this topic is still scarce, but initial findings suggest that LLM-generated content is slowly finding its way into CSMM (Cinus et al., 2025; Yang et al., 2024).

Third, CSMM campaigns vary in the sophistication of their disguise techniques, which aim to minimize detectability but provide researchers with clues about what is at stake for the campaign’s principal. Effective camouflaging requires substantial investments in time, labor, and technical expertise. Some campaigns rely on poorly disguised fake accounts, such as those that use generic profile bios or pictures (Mazza et al., 2022: 4). More advanced techniques involve corrupting genuine accounts, as reported by Elmas et al. (2021: 3). An example of a highly sophisticated disguise is, again, the IRA campaign that undermined the #BlackLivesMatter movement by credibly impersonating activists (Starbird et al., 2019: 7). Disguise can involve techniques at the content level, such as increasing elaboration to enhance credibility or using deletion to obscure traces of activity (Elmas et al., 2021). At the macro level, campaigns may blend with existing movements to camouflage their operations (Starbird et al., 2019: 11).

Hidden characteristics

CSMM campaigns are designed to hide the principal’s identity, the agents’ activities, the targeted audience, and the overall strategy—making reliable evidence difficult to obtain. Nevertheless, analyses of ground truth data released by authorities (Keller et al., 2020), platform operators (DiResta et al., 2022; Schoch et al., 2022), or obtained through leaks (King et al., 2017) have yielded invaluable insights.

Principals differ in the resources they can invest in their CSMM campaigns—such as funds to hire agents or resources to mobilize genuine users for coordinated activity. These resource differences often align with the principal type, which we broadly distinguish as political or nonpolitical.

Political principals may be government-backed or independent (Bradshaw and Howard, 2017: 15). The former often command substantial resources (DiResta et al., 2022; King et al., 2017). A comprehensive study of government-backed CSMM has been conducted by Schoch et al. (2022) who analyzed data on 35 campaigns released by Twitter. Authoritarian regimes frequently employ CSMM, as in the case of the Chinese ‘50c party’ (King et al., 2017) and campaigns supporting the Saudi Arabian regime on Twitter (Barrie and Siegel, 2021). The Russian government has been identified as the driving force behind the IRA’s manipulation of foreign online audiences (DiResta et al., 2022: 224; Lukito, 2020: 238). During Russia’s invasion of Ukraine in 2014, the IRA flooded social media with disorienting content to obscure the government’s involvement (Doroshenko and Lukito, 2021: 4683). A political principal who was not, at least not directly, affiliated with the government was reported by Keller et al. (2020), who analyzed a CSMM campaign launched by the South Korean National Intelligence Service (NIS) that supported the ruling party’s candidate, as documented in the records of a lawsuit. Righetti et al. (2022: 16) uncovered coordinated link sharing in support of the far-right political party Alternative für Deutschland, but could not verify this attribution. Genuine grassroots movements have also exhibited coordinated sharing behavior, such as in the pro-Navalny and pro-government Tweets analyzed by Kulichkina et al. (2024). Attribution in such cases is challenging, since CSMM campaigns may mimic social movements (Keller et al., 2020: 258), as in the case of IRA’s hijacking of #BlackLivesMatter (Freelon and Lokot, 2020; Starbird et al., 2019: 7).

Nonpolitical principals include profit-seeking corporations or individuals. Pacheco et al. (2021: 462) reported a campaign to manipulate cryptocurrencies, while Hobbs et al. (2020) documented a CSMM lobbying campaign for a coal mine. Commercial and political motives sometimes overlap (Giglietto et al., 2020: 881). For example, Pacheco et al. (2021: 459) uncovered a fraudulent Twitter network that pretended to raise funds for US election campaigns, making it difficult to disentangle political and commercial aims.

Agents in a CSMM campaign are the individuals who control social media accounts, either manually or through software. Campaigns may rely on human labor, automation, or a combination of both (Grimme et al., 2018), with CSMM researchers regularly observing semi-automated behavior (Keller et al., 2020: 265; Linvill and Warren, 2020: 453). Although automation is likely to become more common with the increasing availability of LLMs (Meier, 2024), manual labor remains a key component of CSMM. Keller et al. (2020: 269) illustrated this human component by demonstrating that the coordinated activity of the NIS aligned with regular working hours. Human agents, often referred to as ‘trolls’ (Barrie and Siegel, 2021), operate within diverse organizational structures, ranging from hierarchical setups, as observed in Chinese agencies (King et al., 2017: 493), to informal teams, such as the Indonesian CSMM agents interviewed by Baulch et al. (2024: 9, 14). Agents may be organized in-house, as in the case of the Russian GRU, or through outsourced agencies such as the IRA (DiResta et al., 2022). Outsourcing makes attributing a campaign more difficult (DiResta et al., 2022: 225) but also reduces a principal’s control over agents (Keller et al., 2020: 260). CSMM services can be purchased (Nimmo et al., 2023: 5) as easily as fake accounts (Mazza et al., 2022). Some agents are driven by political ideology, as interviews with Indonesian agents revealed (Seto, 2019: 198). Thus, the agents’ organizational structures shape the network characteristics of the coordinated communities (Keller et al., 2020).

The target audience of a CSMM campaign is the population whose beliefs and behaviors should be influenced (Earl et al., 2022: 7; Walter and Ophir, 2023: 422), with a key factor being the audience’s location relative to that of the principal. CSMM may target a domestic public (Barrie and Siegel, 2021; King et al., 2017), a foreign public (DiResta et al., 2022; Doroshenko and Lukito, 2021), or a global audience, as seen in the campaign against the Syrian White Helmets (Starbird et al., 2019: 10). The relationship between a principal and the target audience, along with the resources available to the target audience, determines the stakes for the principal in the case of exposure.

Researchers have identified diverse CSMM strategies. Weber and Neumann (2021: 5) distinguished three types: boosting, which involves ‘heavily reposting or duplicating of content’; pollution, defined as ‘flooding a community with repeated or objectionable content, causing the OSN [online social network] to shut it down’; and bullying, understood as ‘organised harassment’. Bullying can include hate speech (Barrie and Siegel, 2021: 5) and content that discredits opponents (Doroshenko and Lukito, 2021: 4666). Other researchers have highlighted additional strategies, such as increasing polarization in the target audience by amplifying content for opposing sides of a conflict—a tactic used by the IRA to infiltrate US online debates (Freelon and Lokot, 2020; Starbird et al., 2019: 7). Another approach is distraction, whereby campaigns flood social media with unrelated information to divert attention from pressing issues. King et al. (2017: 497) showed that the Chinese ‘50c party’ employed this strategy by focusing on national cheerleading rather than opposing regime critics to deflect public attention from grievances and collective action. However, these strategy types are not mutually exclusive and lack conceptual consistency. Some strategy types (e.g., bullying and polarization) are defined by content characteristics that are absent from other strategies, such as boosting (Weber and Neumann, 2021: 5). To resolve these inconsistencies, our typology builds on the influence strategy underpinning a campaign, defined by its relative emphasis on visibility and persuasion.

Theoretical model

A rational choice model of CSMM

The existing literature provides insights into the observable and hidden characteristics of CSMM campaigns, but a theoretical model that connects these elements is still lacking. To infer hidden information, we developed a model to reconstruct principals’ strategic choices when commissioning CSMM campaigns. While inference necessarily entails uncertainty (King et al., 1994: 8), we argue that a model that systematizes the relationships between variable elements in a testable way can reduce the uncertainty of attribution (Rid and Buchanan, 2015: 4). The model presented here is intentionally kept simple (Esser, 1993: 119) to account for the data scarcity typically faced by CSMM researchers. Aiming at heuristic utility, we focus on the relationships between campaign scale, elaborateness, and disguise, and the principal’s resources, influence strategy, and stakes.

Our model builds on Esser’s (1999) rational choice framework, which considers macro- and meso-level outcomes as the aggregated results of micro-level decisions. Individuals act intentionally within opportunities and constraints, and have both resources and a desire for resources beyond their control. Esser’s framework formalizes an individual’s choice between behavioral alternatives as an evaluation of the expected utility of outcomes. The utility $U_j$ of a specific outcome is weighted by the expected probability $p_{ij}$ that alternative $i$ will result in realizing this payoff. For each alternative, multiple payoffs $U_{j \ldots n}$ and costs $C_{j \ldots n}$, weighted by their probabilities $p_{i, j \ldots n}$, are summed, and the alternative that maximizes the expected utility $EU$ (Esser, 1999: 43–44, 246ff.) is chosen. This formalization is useful for clarifying assumed relationships.
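One way to write out the summation described above is given below. It is a sketch, not a quotation from Esser (1999): it assumes that payoffs and costs are indexed over the same outcomes $j = 1, \ldots, n$, that costs enter with a negative sign, and that $i^{*}$ (a symbol introduced here) denotes the chosen alternative.

```latex
EU_i = \sum_{j=1}^{n} p_{ij}\, U_j \;-\; \sum_{j=1}^{n} p_{ij}\, C_j ,
\qquad
i^{*} = \arg\max_{i} \; EU_i
```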

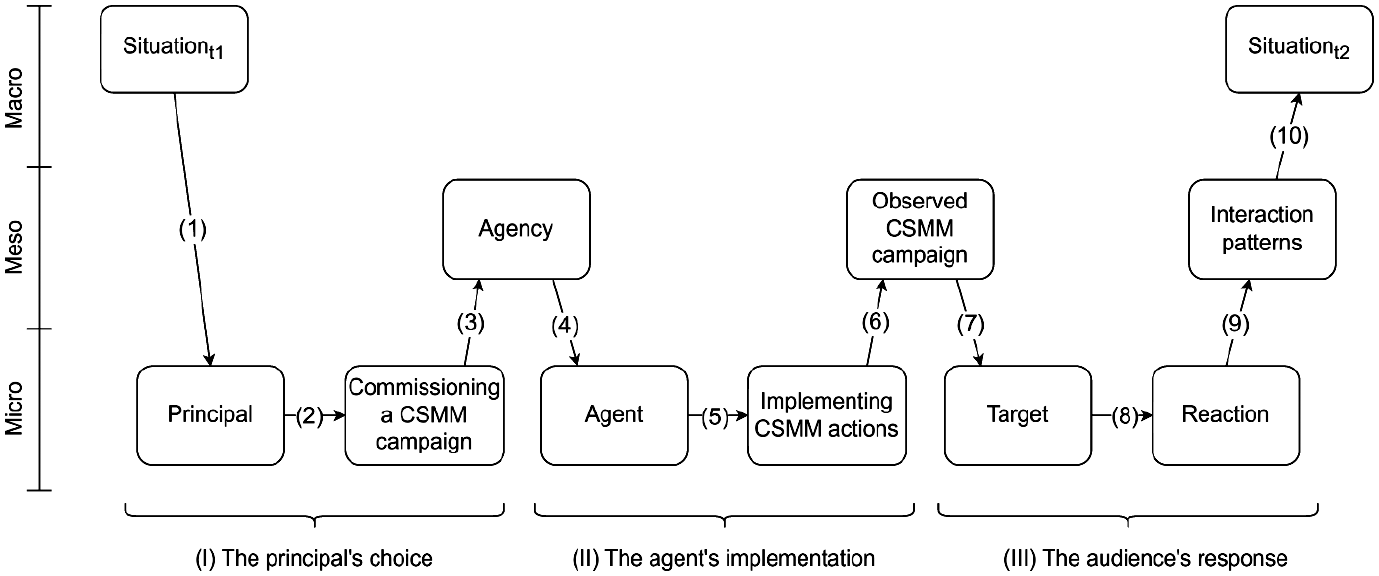

Following this framework, we model a CSMM campaign as a sequence of behavioral decisions of three actors, as illustrated in Figure 2. During Stage I, given a specific context (1), the principal chooses between the available options for commissioning a CSMM campaign (2). This choice results in a meso-level organization of agents (3). During Stage II, individual agents receive instructions from the supervisor of that agency (4) and decide whether to implement the campaign or defect (5), which results in the campaign’s observable characteristics (6). In Stage III, individuals in the target audience are influenced by the campaign (7) and react (or do not react) to it (8). Possible outcomes include no reaction, a reaction intended by the principal, or an unintended reaction. At the meso-level, this results in patterns of interaction with the campaign (9), which aggregate in the societal outcome of the campaign at the macro level (10). This model is, of course, a simplified representation of reality (Esser, 1993: 119). It can be easily expanded to account for more complex processes, such as audience responses, where researchers might want to integrate psychological processes (Zerback and Töpfl, 2022), dynamics of interpersonal communication, or interactions between the audience and campaign actors (Starbird et al., 2019).

Figure 2. Rational choice model of the three stages of a CSMM campaign.

For the attribution task, the principal’s choice about commissioning a specific CSMM campaign—that is, Stage I—is of utmost interest, as it sheds light on the principal’s range of options, strategy, and the campaign context. We concentrate on this stage, but note that researchers have offered significant insights into other aspects. Keller et al. (2020), for example, provided a compelling analysis of Stage II through the lens of principal–agent theory, while Zerback and Töpfl (2022) examined effects of CSMM campaigns (Stage III).

Modeling the principal’s choice

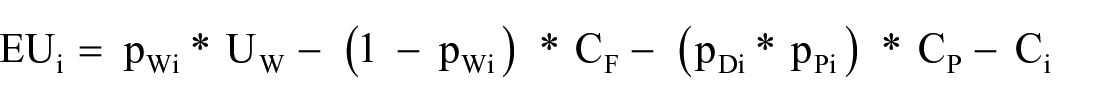

Focusing on Stage I and step (2) in Figure 2, we modeled principals’ strategic choices using an EU function. Again, we have kept the EU function simple to unlock its heuristic potential. The function has four crucial parts. First, a principal is interested in the potential payoff $U_W$ of a campaign, weighted by the probability $p_{Wi}$ of its success. Second, the principal considers the costs $C_F$ in case of campaign failure or nonexecution. If a principal loses nothing by not commissioning a campaign, $C_F$ is zero. In other cases, $C_F$ might be larger than the potential gain $U_W$. The probability of $C_F$ occurring is the complement of the expected success probability, $1 - p_{Wi}$. Third, the principal worries about the penalty costs $C_P$ of being detected, such as reputational damage, legal consequences, or international sanctions. These costs are weighted by the detection probability $p_{Di}$ of campaign $i$ and the expected probability $p_{Pi}$ that the penalty will be imposed. Fourth, commissioning the CSMM campaign $i$ involves certain costs $C_i$, which depend on the campaign design. For each available alternative $i \in \{1, \ldots, n\}$, the principal weighs the expected costs and benefits, formalized as the expected utility $EU_i$ of alternative $i$:
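Assembling the four parts in the order in which they are introduced, the function can plausibly be written as follows. This is a reconstruction from the verbal definitions, with the signs assumed so that payoffs count positively and all cost terms negatively. It should not be read as a verbatim copy of the typeset equation.

```latex
EU_i = p_{Wi}\, U_W \;-\; (1 - p_{Wi})\, C_F \;-\; (p_{Di} \cdot p_{Pi})\, C_P \;-\; C_i
```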

Having developed this formula, we posit bridging hypotheses about how these terms correlate with observable and hidden CSMM characteristics. We focus on the observable characteristics of scale, elaborateness, and disguise, and their relationship to the principal’s resources, stakes, and influence strategy. Focusing on such structural features helps researchers avoid errors resulting from content-based attribution, since campaign content can be intentionally misleading, as in the case of IRA campaigns that posed as progressive activists (Freelon and Lokot, 2020).

First, starting from the right side of the formula, we assume that the certain costs Ci of campaign i would increase with the scale of the campaign, the elaborateness of its content, and the sophistication of its disguise. Mass dissemination of elaborate content using sophisticated disguises requires more labor, time, and/or technical skills than relying, for example, on copying and pasting. We expect that the three observable characteristics would correlate positively with the resources commanded by a principal.

Second, the expected penalty (pDi × pPi) × CP resulting from detection should vary with campaign characteristics and the relationship between the principal and the target. The probability pDi of a campaign being detected increases with its scale, as the number of opportunities for it to be monitored increases, and decreases with the sophistication of its disguise. Importantly, CP is also weighted by the expected probability pPi of sanctions being imposed. This term varies according to the resources controlled by the target. Target audiences that control effective resources (such as a democratic parliament, armed forces, or United Nations Security Council membership) are better positioned to impose penalties on manipulators than less powerful targets, such as citizens of authoritarian countries. Principals who are subject to democratic scrutiny are likelier to be held accountable for manipulation than autocrats. Also, democratic principals may face greater reputation costs CP than autocrats. In the case of foreign influence operations, the costs CP of being detected can increase dramatically, ranging from international sanctions to military conflict (DiResta et al., 2022: 225), and depend on the target’s resources. Consequently, we argue that the effort put into a campaign’s disguise reveals what is at stake for the principal.

Third, in some cases, the costs CF, which arise when principals miss the opportunity to realize UW due to campaign failure or inaction, are negligible. However, in high-stakes scenarios, such as an autocrat facing a democratic uprising, inaction can have fatal consequences. In such cases, principals may attempt to flood social media with irrelevant content to disrupt online mobilization (King et al., 2017). We expect CF to be positively correlated with the resources controlled by the target audience.

Fourth, UW denotes the payoff from campaign success. Ultimately, ‘campaign success’ is defined by the principal and can include increasing polarization in foreign populations (Freelon and Lokot, 2020), demobilizing domestic social movements (King et al., 2017), or monetization (Pacheco et al., 2021). Central to the size of UW is the relative advantage that a principal gains over contenders. Crucially, UW is weighted by the expected probability pWi, which indicates how suitable the principal considers a specific campaign i for influencing the target audience in the desired way. The balance between the scale and elaborateness of a campaign reveals the specific influence strategy of a CSMM campaign.
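The four bridging hypotheses can be made concrete with a small numerical sketch. The Python snippet below assumes the additive form EUi = pWi × UW − (1 − pWi) × CF − (pDi × pPi) × CP − Ci implied by the term descriptions. The class name, the function names, and all numbers are hypothetical illustrations, not estimates taken from the literature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CampaignOption:
    """One campaign design i available to a principal (hypothetical values)."""
    name: str
    p_win: float     # pWi: expected probability that campaign i succeeds
    p_detect: float  # pDi: probability that campaign i is detected
    cost: float      # Ci: certain cost of commissioning campaign i


def expected_utility(option, u_win, c_fail, c_penalty, p_penalty):
    """EUi under the assumed additive form of the four terms."""
    gain = option.p_win * u_win                         # pWi * UW
    failure = (1.0 - option.p_win) * c_fail             # (1 - pWi) * CF
    penalty = option.p_detect * p_penalty * c_penalty   # (pDi * pPi) * CP
    return gain - failure - penalty - option.cost


def best_option(options, **context):
    """Return the option with the highest expected utility."""
    return max(options, key=lambda o: expected_utility(o, **context))


# Two illustrative designs: cheap and easily detected vs. costly and well disguised.
options = [
    CampaignOption("cheap_undisguised", p_win=0.4, p_detect=0.9, cost=1.0),
    CampaignOption("costly_disguised", p_win=0.5, p_detect=0.1, cost=4.0),
]

# Context A: the target can hardly sanction the principal (low pP).
weak_target = dict(u_win=20.0, c_fail=0.0, c_penalty=20.0, p_penalty=0.05)
# Context B: the target can credibly impose penalties (high pP).
strong_target = dict(u_win=20.0, c_fail=0.0, c_penalty=20.0, p_penalty=0.8)

print(best_option(options, **weak_target).name)
print(best_option(options, **strong_target).name)
```

With these made-up numbers, the undisguised design has the higher expected utility when the target can rarely impose penalties, while the disguised design wins once penalties become likely. This mirrors the argument that the effort put into disguise signals what is at stake for the principal.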

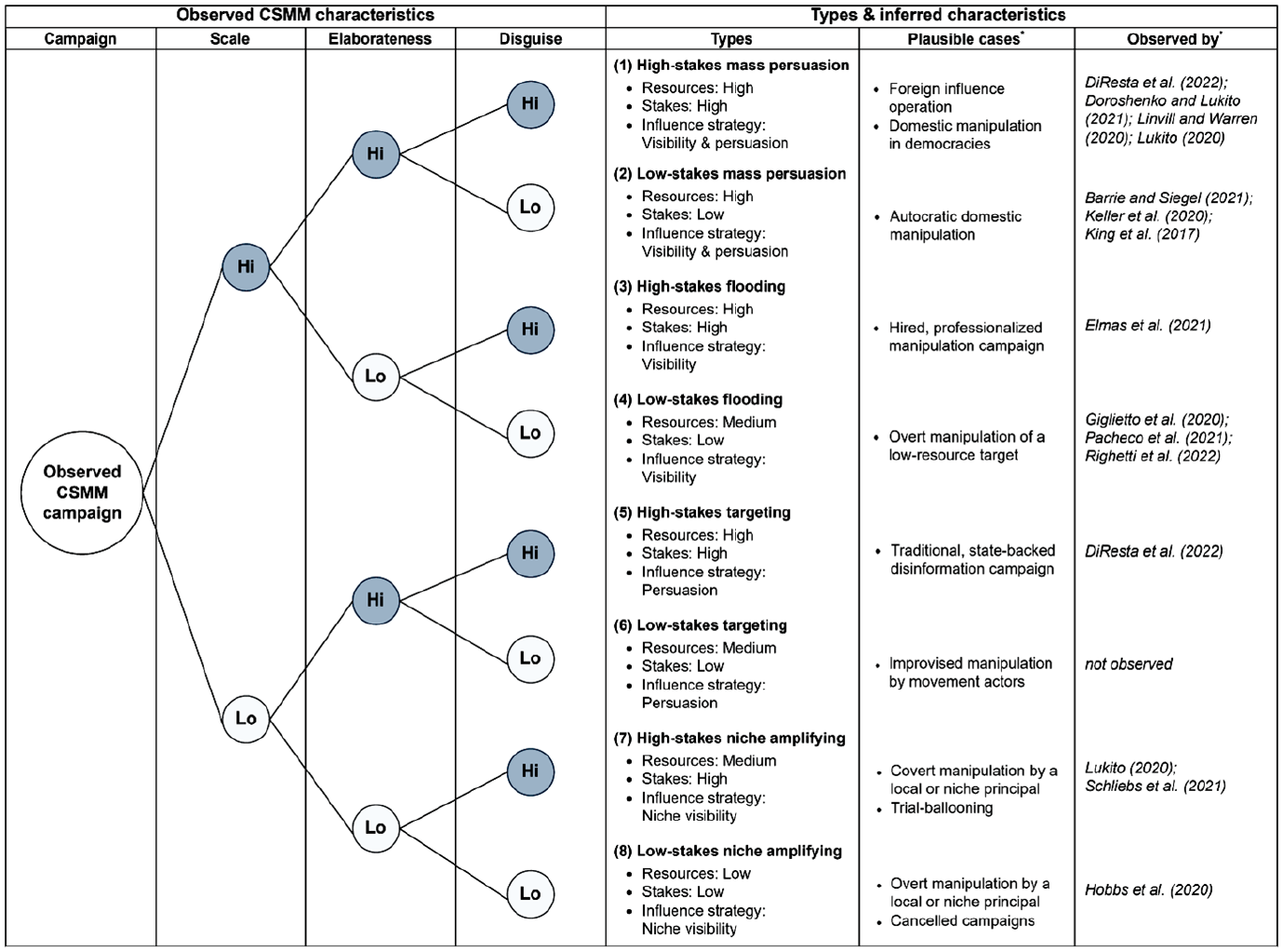

A typology of CSMM campaigns

Our theoretical model allows us to link observed and hidden campaign characteristics. Focusing on dichotomous manifestations of scale, elaborateness, and disguise, we constructed a typology of eight different CSMM campaigns, as shown in Figure 3. For each type, we provide examples from the literature and demonstrate which hidden information about the resources, stakes, and influence strategy of a campaign and its principal can be inferred. In addition, we present plausible cases of each campaign type to stimulate tests of the generalizability of our typology in future research.

Figure 3. Typology of CSMM campaigns and inferred hidden characteristics.

The first type, high-stakes mass persuasion, is characterized by a large scale, high elaborateness, and a highly sophisticated disguise. According to our model, such campaigns are commissioned by principals who command significant resources. Since principals invested considerable efforts in the disguise of the campaign, the stakes must be high. Such campaigns strive to exert influence through mass persuasion by disseminating elaborate content on a large scale. The most striking examples of this type are Russia-backed IRA campaigns targeting foreign publics, which were notoriously large-scale campaigns that operated on multiple platforms over extended periods (DiResta et al., 2022: 229; Linvill and Warren, 2020: 448; Lukito, 2020). The Russian campaigns were also elaborate, employing refined rhetorical techniques to appeal to identities and emotions (Freelon and Lokot, 2020; Kondratov, 2024) and to mislead global audiences (Doroshenko and Lukito, 2021: 4683). These manipulations were extraordinarily well disguised, successfully mimicking activists and infiltrating online communities (Starbird et al., 2019: 7–8), showing that the principal considered the operations risky. Apart from state-backed foreign influence operations, manipulations of domestic audiences in democracies seem to be the most plausible cases of this type.

The second type, low-stakes mass persuasion, is characterized by a large scale and high elaborateness but a less sophisticated disguise. Such campaigns also aim for mass persuasion and require considerable resources, but the stakes in the case of detection appear to be lower. The most evident example of this type of campaign is the activity of the Chinese ‘50c party’ described by King et al. (2017: 495), for which the researchers estimated a yearly output of several million posts. Although the content disseminated by the ‘50c party’ appeared shallow, it was linguistically elaborate (King et al., 2017: 498). However, the accounts displayed no real effort to conceal their connections to the regime. Similar observations were made for coordinated Tweets linked to Saudi Arabia (Barrie and Siegel, 2021). We suspect that authoritarian regimes manipulating domestic audiences face little risk if they are exposed. An unexpected case of this type was the South Korean NIS’s campaign (Keller et al., 2020). Given that their goal was to manipulate a democratic election, a more sophisticated disguise would have been expected. The lack of camouflage may have resulted from a misjudgment of the detection risk by the NIS, since this was one of the earliest CSMM campaigns to be detected (Keller et al., 2020: 256).

The third type, high-stakes flooding, includes large-scale campaigns that disseminate unelaborate content but invest considerably in disguise. We infer that principals of such campaigns have sufficient resources and face high stakes, and that their influence strategy prioritizes visibility over persuasion. The campaign described by Elmas et al. (2021: 13), in which Turkish hashtag trends on Twitter were manipulated through the rapid mass dissemination of text snippets that were deleted within seconds, is an example of this type. A plausible explanation for this is that the effort invested in disguise was driven by commercial self-interest.

The fourth type, low-stakes flooding, follows the same influence strategy but neglects disguise. Hence, we assume that the stakes and resources invested would be considerably lower. Researchers have documented numerous examples of this type, including campaigns that repeatedly tweeted identical messages, as observed in the context of the Syrian civil war (Pacheco et al., 2020: 611); coordination based on retweeting (Schoch et al., 2022); and coordinated link sharing (Giglietto et al., 2020). These campaigns made little effort to conceal their activities, suggesting that the principals perceived little risk of exposure. The lowest common denominator of these campaigns might be that the target audiences were not able to effectively sanction the principals.

The fifth type, high-stakes targeting, is characterized by small-scale campaigns, which are relatively rarely reported in the literature. This type of campaign entails posting well-disguised, elaborate content aimed at a small audience. Nevertheless, such campaigns require considerable resources and operate in high-risk environments. The clearest example of such a campaign is the activity of the Russian intelligence service GRU, which used 33 Facebook pages to disseminate long-form content from feigned citizen-reporters in Syria (DiResta et al., 2022: 229). Overall, this campaign type resembles traditional disinformation strategies (DiResta et al., 2022: 239).

The sixth type, low-stakes targeting, is defined by small-scale campaigns with poor disguise and elaborate content. The stakes of such campaigns are arguably as low as the invested resources. However, the analyzed studies reported no campaigns with this combination of features.

The seventh type, high-stakes niche amplifying, is characterized by a similarly peculiar combination of features, involving small-scale campaigns with low elaborateness and sophisticated disguise. Schliebs et al. (2021) observed such a campaign executed by a group of accounts linked to the Chinese embassy in the United Kingdom. These accounts impersonated UK citizens but used repetitive vocabulary (Schliebs et al., 2021: 14). We suspect that this campaign had the narrow objective of increasing the visibility of the ambassador on Twitter and therefore required limited resources. The level of disguise might be explained by its manipulation of a foreign audience. Another example of this type is the IRA’s trial balloon campaigns on Reddit, as observed by Lukito (2020: 238).

Finally, in the eighth type, low-stakes niche amplifying, all three characteristics are low. Hobbs et al. (2020) documented a campaign of this type that disseminated duplicate Tweets lobbying for a coal mine in Australia and that, again, addressed a niche audience. We assume that the stakes were low for the principal, which in the observed case was allegedly a multinational company based in India.
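Because the eight types follow from three dichotomous characteristics, the typology can be written as a small lookup. The sketch below is one possible encoding: the function name and the boolean flags are hypothetical, and deciding whether a real campaign counts as large-scale, elaborate, or well disguised remains an empirical judgment that the code does not address.

```python
def classify_campaign(large_scale: bool, elaborate: bool, disguised: bool) -> dict:
    """Map dichotomous scale, elaborateness, and disguise to a campaign type."""
    # Scale and elaborateness jointly indicate the influence strategy.
    strategies = {
        (True, True): "mass persuasion",
        (True, False): "flooding",
        (False, True): "targeting",
        (False, False): "niche amplifying",
    }
    # A sophisticated disguise is read as a sign of high stakes.
    stakes = "high-stakes" if disguised else "low-stakes"
    strategy = strategies[(large_scale, elaborate)]
    return {"type": f"{stakes} {strategy}", "stakes": stakes, "strategy": strategy}


# A large-scale campaign with unelaborate content and no real disguise.
print(classify_campaign(large_scale=True, elaborate=False, disguised=False)["type"])
# prints "low-stakes flooding"
```

The lookup deliberately leaves out the principal's resources, since the text infers them case by case rather than from a fixed rule.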

Discussion and conclusion

CSMM campaigns can fundamentally distort public opinion formation and political decisions. Detecting and characterizing such campaigns and attributing them to their principals are essential tasks for social scientists, computer scientists, and platform operators to mitigate their impact. While researchers have made strides in detecting (Pacheco et al., 2021; Schoch et al., 2022; Weber and Neumann, 2021) and characterizing CSMM (DiResta et al., 2022; Keller et al., 2020; King et al., 2017), we have addressed the neglected attribution problem of determining the identity, motives, and strategies of the principals behind such campaigns (Rid and Buchanan, 2015).

This study made four key contributions. First, we synthesized extant conceptual contributions and formulated a consolidated definition of CSMM. Second, we identified key observable characteristics (scale, elaborateness, and disguise) and hidden features (principal resources, stakes, and influence strategy) of CSMM campaigns through a review of the empirical literature. Third, we developed a rational choice model that systematically links observable characteristics to the hidden aspects of principals’ strategic decision-making, clarifying how campaign attributes reflect the principals’ interests. Fourth, we constructed a typology of eight CSMM campaign types, illustrating how variations in scale, elaborateness, and disguise reveal insights into principals’ resources, stakes, and influence strategies.

Our theoretical model advances the field of CSMM research by bridging the gap between the observable campaign features and their hidden dimensions. It provides researchers with a systematic approach to infer the characteristics and motivations of principals and offers a foundation for generating testable hypotheses for empirical studies. We hope that our model and typology provide researchers who detect CSMM campaigns of unknown origin (e.g., Elmas et al., 2021; Pacheco et al., 2020) with a useful heuristic to attribute them. Our approach can inform comparative research across platforms and political contexts, facilitating a deeper understanding of CSMM dynamics.

However, our study has limitations. Attribution research is inherently constrained by the availability of data, as only detected campaigns can be analyzed. Many CSMM campaigns remain undetected, leaving gaps in our understanding (Martin et al., 2023: 4). One indication of such a restriction is that we found no examples of the sixth type of campaign, low-stakes targeting. In addition, although our focus on observable campaign features—scale, elaborateness, and disguise—provides a clear analytical framework, it does not account for all possible design choices, such as the organization of agents (DiResta et al., 2022; Keller et al., 2020; Starbird et al., 2019) and the strategies of distraction (King et al., 2017), polarization (Freelon and Lokot, 2020), and the discrediting of opponents (Doroshenko and Lukito, 2021). Moreover, we focused on user-generated content and did not consider ad-based influence operations (Kim et al., 2018). Researchers should explore these dimensions further to provide a more comprehensive understanding of CSMM, drawing on our model’s integration of these aspects.

We emphasize that our theoretical model and typology require systematic empirical validation by future researchers. Tests would ideally be based on ground truth data on CSMM campaigns and their attributed principals, spanning diverse platforms and contexts. However, obtaining such data is challenging. Some researchers have extracted aggregate data about influence operations and their origins from studies and media reports (Martin et al., 2023) or from platform operators’ threat reports (Mugurtay et al., 2024). Granular digital trace data, originally released by Twitter and providing the basis for several studies reviewed above (e.g., Luceri et al., 2024; Schoch et al., 2022), has been republished by Seckin et al. (2024). Although Meta continues to provide detailed reports on coordinated campaigns (Nimmo et al., 2023), it has not made any raw ground-truth data publicly available. We believe that a request under the European Union’s Digital Services Act could significantly improve this data basis.

To facilitate comparative research, CSMM scholars must also strive to integrate efforts across academic disciplines, working toward a shared conceptual and operational understanding of CSMM. Despite recent helpful syntheses (Mannocci et al., 2024), the field of research still lacks consensus on key issues, such as when group behavior qualifies as coordinated, how manipulative intent can be reliably discerned, and what evidence is sufficient to support attribution. Establishing common criteria is essential for facilitating direct comparisons across studies.

Different disciplines contribute uniquely to advancing CSMM research. Computer scientists and computational social scientists have significantly contributed to improving detection methods (Pacheco et al., 2021; Schoch et al., 2022; Weber and Neumann, 2021), crucially enhancing the ability to detect text-based coordination (Graham et al., 2024) and visual patterns of coordination (George et al., 2024). However, large language models (LLMs) challenge these detection methods and affect the costs of what we have termed scale and elaborateness (Meier, 2024). Therefore, it is important to continue with pioneering studies that estimate the proportion of LLM-generated content in CSMM (Cinus et al., 2025) and to adapt available detection tools. Social scientists have deepened our understanding of the social processes underpinning CSMM campaigns (Keller et al., 2020; King et al., 2017; Zerback and Töpfl, 2022) and have conducted important comparative research (Lukito, 2020; Schoch et al., 2022). Cybersecurity and digital authoritarianism scholars have improved our ability to differentiate between coordinated and spontaneous online activity (Francois et al., 2023) and have examined how authoritarian regimes strategically deploy digital manipulation and repression techniques, including CSMM (Sirikupt, 2024). However, large-scale comparative studies to systematically examine the strategic choices that drive digital manipulation are lacking.

Advancing such a research agenda will require a deep integration of computational approaches with social science theories and research designs. Strengthening interdisciplinary collaboration will be essential for improving the evidence base to characterize and attribute coordinated campaigns, thereby driving progress in CSMM research and mitigation. Consistent with the cybersecurity literature (Rid and Buchanan, 2015: 7), we emphasize that effective detection, characterization, and attribution require methodological triangulation—integrating computational approaches with qualitative analyses of campaign details. This article aims to foster interdisciplinary dialogue and contribute to a more comprehensive understanding of CSMM.

Supplemental Material

Supplemental material, sj-pdf-1-nms-10.1177_14614448251350100 for Attributing coordinated social media manipulation: A theoretical model and typology by Daniel Thiele, Miriam Milzner, Annett Heft, Baoning Gong and Barbara Pfetsch in New Media & Society

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the German Federal Ministry of Education and Research under Grant number 16DII135 [in the context of the Weizenbaum Institute].
