Abstract

Why and how do autocracies discursively conduct digital astroturfing against their populations? As these regimes increasingly co-opt social media to manipulate online political discourse, the current political disinformation literature continues to privilege a Cold War paradigm, focusing on countries that dominate Western foreign policy priorities and concerns. Its normative underpinnings overshadow other understudied cases that have also weaponized social media to manipulate domestic online discourses. Meanwhile, findings from ethnographic interviews and big data approaches remain theoretically disconnected from the long-held logics of regime stabilization. I argue that regime digital astroturfing follows offline events that spark online discourses with collective action potential. In countering these discourses, regime-linked digital astroturfers exploit social media affordances to discursively cheerlead the regime and devalue regime challengers, creating a semblance of popular support for the former. To subject these propositions to empirical scrutiny, I adopt a mixed-methods approach informed by Contextual Text Coding and examine 14,430 tweets linked to the Royal Thai Army in February 2020. My findings demonstrate that astroturfing efforts on Twitter during this period, which discursively cheerleaded the military/autocratic regime of General Prayut Chan-o-cha and devalued its pro-democratic opposition with negative frames, were spurred by a mass shooting in the city of Korat that exposed the ruling elites to widespread online backlash. This contribution deepens case-specific knowledge about an understudied case and strengthens our understanding of how authoritarian legitimation strategies adapt in the digital era and of the militarization of social media communication.

The New Menu of Digital Deception

Why and how do autocratic regimes discursively conduct digital astroturfing against their populations? Autocracies have increasingly moved beyond merely suppressing online criticism to proactively subverting social media communication to manipulate online political discourse (Gunitsky 2015). 1 Since Russia’s interference in the 2016 US presidential election (US Department of Justice 2019), there has been mounting evidence of digital astroturfing in autocracies in the Global South (Bradshaw and Howard 2019; Jones 2022). As part of their image management, these regimes have created legions of so-called “cyber troops” that masquerade as ordinary, autonomous individuals, disseminating online information to create a semblance of popular support for the regime and resentment toward its challengers (Bradshaw and Howard 2019; Kovic et al. 2018). Whether government-run or outsourced (DiResta et al. 2022), regime-linked actors exploit social media’s relative anonymity to shape the public’s positive identification with autocratic rule (King et al. 2017).

Notwithstanding significant empirical advances in political disinformation research that demonstrate autocracies’ abilities to exploit properties of a complex hybrid media system to legitimize their rule (see Bradshaw et al. 2022; Chadwick 2017; King et al. 2017; Xia et al. 2019), the current literature suffers from two major shortcomings. The first is theoretical: while ethnographic interviews and big data approaches (e.g., topic modeling and sentiment analysis) have provided substantial insight into the organizational structures of digital astroturfing campaigns and the discursive elements of these deceptive activities (Ong and Cabañes 2019; Stanford Internet Observatory 2020; Xia et al. 2019), these findings remain disconnected from the long-held logics of regime stabilization and survival (cf. Gerschewski 2013; cf. Schlumberger et al. 2023). The theory presented here offers a causal logic for why we should pay closer attention to regime threat perception: it reveals valuable information about the stressors confronting a given regime, which, in turn, shape regime choices about digital astroturfing. The second is normative. Because the current literature privileges a Cold War paradigm, the fixation on revisionist autocracies like Russia and China overshadows other countries that have also weaponized social media to manipulate domestic online discourses (Jones 2022: 7–8).

Drawing on insights from research on collective action potential, this article employs a theoretical framework that situates authoritarian digital astroturfing through the lens of regime threat perception. My argument is twofold. First, regime digital astroturfing follows offline events that spark online discourses with collective action potential. Because of the underdeveloped institutional mechanisms for accommodating grievances that typically characterize autocratic rule (Mason 2004: 275), events such as natural disasters, mass shootings, and political scandals can spark online collective expressions that have the potential to mobilize real-world protest formation and related activities that threaten regime stability (King et al. 2013: 2). Second, in countering these discourses, regime-linked digital astroturfers exploit social media affordances to discursively cheerlead the regime and devalue regime challengers to create a semblance of popular support for the former.

To illustrate these propositions, I adopt a micro-approach informed by Contextual Text Coding (CTC) to systematically code 14,430 tweets linked to the Royal Thai Army (RTA) in February 2020 according to their discursive tactics and the frames embedded in them (Lichtenstein and Rucks-Ahidiana 2021). While recent data disclosures and Thailand-centric research have illustrated the broader tactical and discursive strategies of RTA digital astroturfing operations on platforms like Twitter and Facebook under the military government of General Prayut Chan-o-cha, they remain largely limited to exploratory data analysis and small-N qualitative analyses (cf. Goldstein et al. 2020; cf. Sombatpoonsiri 2018). 2 My micro-approach builds on this empirical knowledge and offers an in-depth, large-N qualitative study of textual data that highlights the tweet tactics and discursive frames RTA-linked assets promoted, and it links these results to the broader theoretical construct of regime threat perception to illustrate an important causal factor driving these tweeting patterns.

My findings provide initial empirical support for my propositions. On February 8, 2020, an army sergeant wronged by his commanding officer committed a mass shooting in the city of Korat, which sparked widespread public backlash against the ruling elites. This incident prompted army-linked assets on Twitter to intensify their discursive campaigns to defend the army and the Prayut government while discrediting pro-democracy politicians over the course of the month. These digital astroturfers extensively cheerleaded the regime, particularly by discursively repairing civil–military relations through praiseworthy comments and selective retweets of pro-army and pro-government content. Concurrently, they also exploited the same platform affordances to discursively devalue and discredit the regime’s main political challenger, the Future Forward Party (FFP).

This article makes three contributions that address noticeable gaps in the political disinformation literature. First, my framework shows how situating traces of regime digital astroturfing through the lens of regime threat perception generates ex ante expectations about when the strategy might be adopted. Second, because tweets are convoluted forms of data, a qualitative, data-driven probe of trace data allows us to extract different types of grounded meaning about the tactical and discursive qualities of army-linked tweets that automated outputs overlook. Qualitative coding allows researchers to obtain deep, textured, and contextualized understandings of these tweets (Nelson 2020: 5; Tanweer et al. 2021: 14), thereby increasing nuance and interpretability and recognizing that the tweets are shaped by the autocrat’s domestic threat environment. Finally, the choice of Thailand as a single case study answers increasing calls to expand the study of political disinformation beyond the Cold War paradigm (Wasserman and Madrid-Morales 2022: 52), providing updated empirical insights into an understudied case and further informing theory-building about the relationship between collective action potential and regime digital astroturfing.

This article consists of seven parts. The next section situates authoritarian digital astroturfing through the lens of regime threat perception vis-à-vis collective action potential. The third discusses how digital astroturfers discursively construct appearances of regime support and resentment of its challengers. The fourth introduces RTA-linked digital astroturfing under the Prayut government as a case study. The fifth explains my data collection and coding processes informed by the CTC approach. The sixth presents the quantitative results and a targeted qualitative analysis of the coded tweets. Finally, I discuss my findings’ implications for understanding autocratic legitimation in the digital age and the militarization of social media communication—two pertinent issues that straddle the fields of political communication and digital authoritarianism.

Regime Digital Astroturfing as a Response to the Perceived Threat of Collective Action Potential

A central assumption in the authoritarianism literature posits that political survival is a key objective of autocrats. Scholars have focused on the ways they, as rational actors, adopt a variety of strategies including repression, co-optation, and legitimation to ensure regime stability in the face of domestic threats (Frantz and Kendall-Taylor 2014; Gerschewski 2013; Schlumberger et al. 2023).

A great deal of existing research on contentious politics shows how mass protests can become a death knell for even the most well-entrenched autocracies (Geddes et al. 2014; Lynch 2012). Rulers are wary not only of these events in and of themselves but also of individuals who can lead the discussions that initiate them (King et al. 2013: 2; Koesel and Bunce 2011). The rise of social media platforms, which multiply interpersonal communication among receivers and enable information sharing at significantly greater speed and volume (Liang 2018: 528), presents a dilemma for autocrats. While these tools create an alternative avenue of persuasion that enhances authoritarian image management (Dukalskis 2021), they also enable regime challengers to bypass traditional media gatekeepers to promote their alternative political visions, thus initiating and sustaining political discussions about their governments that enhance collective action potential (Bennett and Segerberg 2011; Diamond 2010; Hamdy 2010; Hamdy and Gomaa 2012; Howard and Hussain 2013; Lim 2012).

Collective action potential refers to collective expression—many people communicating on social media on the same subject—about actual collective action (e.g., protests) and about events that seem likely to generate collective action but have not yet done so (King et al. 2013). Most autocracies routinely grapple with dissent and contentious actions that challenge the foundational ideological justification that legitimizes their claims to power (Schedler 2018: 47). Discourses with collective action potential, however, not only challenge hegemonic narratives, but they also facilitate the mobilization of anti-regime protests that have threatened autocratic survival and led to actual autocratic breakdowns in some cases (King et al. 2013; Lim 2012; Matsilele and Ruhanya 2020). Thus, we should expect the regime to employ digital astroturfing to manage online discourses with collective action potential. King et al.’s (2017) research on digital astroturfing in China provides empirical support for this intuition: Government-linked trolls on Weibo increased their social media activities and shifted topics during moments of social unrest.

While collective action potential is not always easily observable to the regime (Kuran 1991), scholars have developed useful indicators to help determine ex ante where perceived fears of collective action potential in the online sphere are likely to motivate a regime response. Rulers may infer the level of collective action potential from the relative strength of the political opposition, such as its ability to organize protests or the number of votes it receives in an election (Steinert 2023: 354). 3 In the digital era, regime challengers have also relied on social media platforms to mobilize citizens based on structural and/or incidental grievances. 4 King et al. (2014) observed that Chinese citizens can write the most vitriolic blog posts about even the top Chinese leaders without fear of censorship, but if they write in support of an ongoing protest, or even about a rally in favor of a popular policy or leader, they will be censored. Relatedly, Martin et al. (2023: 874) noted that the first domestic influence effort in Russia began in 2011, when protests in the country catalyzed the creation of a pro-Kremlin trolling force.

While authoritarian management of public discourses with high collective action potential is expected, since legitimation is crucial for the maintenance of regime stability, 5 the nature of digital astroturfing is novel and significant as social media platforms become increasingly vulnerable to political manipulation by anti-democratic actors with access to manpower, computing power, and material resources. These deceptive activities are characterized by the appropriation of digital personas that grant operators anonymity in the online sphere (Xia et al. 2019), whether those operators run government operations executed by state organs (e.g., military and intelligence services) or outsourced operations conducted by contractors or citizen troll armies (DiResta et al. 2022). This relative anonymity impedes internet users’ abilities to distinguish between genuine behaviors and inauthentic coordinated behaviors. Thus, the regime can disguise state-linked actors as “credibility cues” to serve its stabilization goals (Chadwick and Stanyer 2022: 11–12). Bulut and Yörük (2017) found that Twitter trolls in Turkey hired by the Justice and Development Party demonized protesters during the Gezi Park demonstrations in 2013. In Thailand and the Philippines, government-run and outsourced trolls have also been deployed to support authoritarian leaders in the respective countries (Ong and Cabañes 2019; Sombatpoonsiri 2018).

How Digital Astroturfers Manipulate Online Discourses With Collective Action Potential

Three common discursive tactics that digital astroturfers adopt to manipulate online discourses in favor of the ruling elites can be derived from current research on political disinformation and digital authoritarianism: cheerleading, devaluation, and hoax spreading (DiResta et al. 2022; Goldstein et al. 2020; King et al. 2017; Wong and Liang 2021). First, cheerleading refers to organized efforts aimed at amplifying information that promotes and/or defends the regime; it entails the dissemination of legitimizing messages that encompass the creation of positive, beneficial, or ethical justifications to make the regime’s actions or views acceptable (see Igwebuike and Chimuanya 2020: 46; see von Soest and Grauvogel 2017: 3–5). Second, devaluation refers to the amplification of information that discredits political opponents and critics; it entails the dissemination of delegitimizing messages that create negative, unethical, or immoral justifications to render the actions and views of regime challengers unacceptable (see Edel and Josua 2018; see Igwebuike and Chimuanya 2020: 46). Third, hoax spreading disseminates hoaxes, specific kinds of disinformation that contain deliberately false information made to masquerade as facts (Loveless 2020: 66). Scholars have, for instance, observed that Russian propaganda aims to create an information environment where the (objective) truth feels unknowable (Bradshaw et al. 2022; DiResta et al. 2022; Xia et al. 2019). 6 This includes peddling conspiracy theories such as the claim that the United States had shot down Malaysia Airlines flight MH17 over eastern Ukraine in a botched attempt to target Putin’s personal jet. 7

These discursive tactics are born of complex uses of social media affordances, that is, their interactivity features (Barrie and Siegel 2021). For example, Twitter has addressivity markers such as “RT” (retweet), “#” (hashtag), and “@” (mentions/direct comments). While “@” allows users to express their thoughts on posts linked to the source account, RT allows them to repost certain content across and beyond peer networks (Honeycutt and Herring 2009: 1; Meraz and Papacharissi 2013: 144). Because social media companies seek to enhance their platforms’ value with design structures and incentives that increase a given content’s visibility (Van Dijck and Poell 2013: 6–9), algorithmic processes tend to popularize morally and emotionally evocative content regardless of whether it is factually true (Berger and Milkman 2012; Van Bavel et al. 2024). The combination of this popularity logic and addressivity markers, which heighten the relevance of certain actors and certain content (Meraz and Papacharissi 2013: 144; Van Dijck and Poell 2013), enables regime-linked digital astroturfers to become agents of influence capable of manipulating online discourses by selectively flooding social media feeds with pro-regime posts.
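The three addressivity markers can be made concrete with a small parser. The Python sketch below is illustrative only (the coding in this study was done by hand), and the function name `addressivity_markers` and its regular expressions are assumptions that simplify how Twitter itself parses entities: handles are taken to be 1 to 15 letters, digits, or underscores, and a hashtag is taken to run until the next whitespace.

```python
import re

# Simplified patterns (assumptions, not Twitter's own entity parser).
RETWEET = re.compile(r"^RT @([A-Za-z0-9_]{1,15}):")
# A mention must not be glued to a preceding word character or "@" (so e-mail addresses are skipped).
MENTION = re.compile(r"(?<![\w@])@([A-Za-z0-9_]{1,15})")
# Hashtags run until whitespace or another marker rather than using \w, which in Python
# does not match the combining vowel marks used in Thai script; trailing punctuation is kept.
HASHTAG = re.compile(r"(?<!\w)#([^\s#@]+)")


def addressivity_markers(text: str) -> dict:
    """Return the retweeted account (if any), mentioned accounts, and hashtags in a tweet."""
    retweet = RETWEET.match(text)
    return {
        "retweet_of": retweet.group(1) if retweet else None,
        "mentions": MENTION.findall(text),
        "hashtags": HASHTAG.findall(text),
    }


# A reply-style comment with one mention and one hashtag.
print(addressivity_markers("@armypr_news Containing wildfires #armyforthepeople"))
# prints {'retweet_of': None, 'mentions': ['armypr_news'], 'hashtags': ['armyforthepeople']}
```

Because a retweet header also contains an “@”, the retweeted account appears in both `retweet_of` and `mentions` here; a fuller parser would have to decide whether to count it once or twice, a choice that matters when tallying interactions.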

Selective reposts and direct comments particularly aid discursive framing efforts that seek to “thematically reinforce clusters of facts, judgments, or beliefs” about the regime, its political rivals, and other domestic challengers (Entman 1993: 52). By amplifying messages that align with hegemonic narratives dictated from above (Dukalskis 2017: 142), they drown out alternative political narratives and reinforce the regime’s legitimating claims even if people may not fully believe them (Dukalskis 2017: 26–9). Again, the Twitter trolls in Turkey hired by the Justice and Development Party offer an illustrative example: they employed populist communication that extolled the Erdogan government’s provision of public services while demonizing the Gezi Park protesters (Bulut and Yörük 2017: 4104–8). Similarly, Singapore’s ruling People’s Action Party established “internet brigades” to “soft sell” the government’s controversial policies (Tan 2020: 1076).

Case Selection: RTA-Linked Digital Astroturfing on Twitter Under the Prayut Government

I adopt a single in-depth case study of RTA-linked digital astroturfing on Twitter under the military/autocratic regime of General Prayut Chan-o-cha to subject my central propositions to empirical scrutiny and to broaden our understanding of these operations in autocratic contexts beyond the Cold War paradigm. I chose to focus on the RTA because it is a military institution deeply embedded in Thai politics (Kongkirati and Kanchoochat 2018). As military spending surged under the Prayut government (Punthong 2024: 142), the army’s online activities also became more proactive and pervasive. RTA units like the Internal Security Operations Command assumed authority over cyber surveillance (Pawakapan 2021: 3). The RTA also exhibited an in-house capacity to conduct digital astroturfing against the local population (Goldstein et al. 2020; Sombatpoonsiri 2022). 8 Several leaked internal documents also suggested that the RTA employed 17,000 individuals to create and share disinformation and trained its personnel to avoid Twitter bans (Freedom House 2022).

Additionally, two important pieces of prima facie evidence of collective action potential in the Thai digital sphere, particularly on Twitter, make RTA-linked digital astroturfing an illuminating case study of how a government institution, in this case the military, can weaponize social media platforms to shape online political discussions amid public backlash against the regime. These are the country’s relatively high internet freedom compared with other autocratic regimes and the strength of its political opposition.

First, in terms of internet freedom, Thailand, despite becoming a “closed autocracy” under Prayut’s rule, still enjoyed higher internet freedom than other closed autocracies in Asia such as China, Myanmar, and Vietnam, and even higher than electoral autocracies like Venezuela and Russia (Freedom House 2020: 31). To date, young Thai citizens on Twitter have used the platform not only to discuss their entertainment interests, including K-pop, Thai-pop, and Thai evening soap operas, but also to explore controversial and political topics (Sinpeng 2021: 196). This relative internet freedom enabled regime challengers, particularly young pro-democracy supporters, to use the openness and relative anonymity of Twitter to challenge the Prayut regime’s nationalist-royalist ideology, which centers on the supremacy of nationalism, monarchism, and Theravada Buddhism as the foundation of Thai national identity (Dressel 2010; Sinpeng 2021; Sombatpoonsiri 2020: 110–11). After Prayut seized power from the democratically elected government of Yingluck Shinawatra in May 2014, student activists were at the forefront of offline and online pro-democracy activism, including initiating critical discussions about the monarchy, human rights abuses, and alleged corruption scandals (Lertchoosakul 2022). One-third of the most popular Twitter hashtags in 2020 were related to anti-government protest activities, including calls for monarchy reform (Sinpeng 2021: 196). Such activity led General Apirat Kongsompong, then RTA commander-in-chief, to frame social media as a site of “hybrid warfare” that allowed critics to spread “fake news” and “propaganda” about the military and the monarchy. 9

Second, while Prayut successfully retained power after the 2019 general election, pro-democracy supporters managed to push the party they supported into third place (McCargo 2019: 91). The popularity of FFP, an anti-regime party formed in 2018 that advocated the demilitarization of Thai politics and monarchy reform, heightened the regime’s perceived threat of collective action potential. FFP began using Twitter to mobilize support among young people before the 2019 election, which allowed many first-time voters to use the platform to speak their minds about the military’s tight grip on Thai politics. 10 After finishing third with more than 6.2 million popular votes in the 2019 general election, FFP quickly became the main target of political repression and delegitimization before it was dissolved in February 2020 (McCargo 2019: 99). 11 In October 2020, Twitter independently disclosed 21,385 tweets from 926 fake accounts linked to the RTA that extensively praised the military and the monarchy while attacking FFP (Goldstein et al. 2020; Twitter 2020a).

Data and Method

My research design adopts a mixed-methods approach to preserve context-specific results in a large-N study of textual data (Lichtenstein and Rucks-Ahidiana 2021). I downloaded Twitter metadata (e.g., user account information, tweet text, and tweet date and time) from the company’s “Hashed Information Operations Archive” and selected a total of 14,430 flagged tweets posted in February 2020 for examination (Twitter 2020b). 12 Compared with other periods in the archived dataset, this month alone accounted for more than two-thirds (67.5%) of the 21,385 tweets in the disclosure. The high frequency of tweets coincided with widespread public backlash following the mass shooting in the city of Korat on February 8. That the perpetrator was an army sergeant strengthened FFP’s calls for military reform. The tweets also covered other social and political topics, including the spread of COVID-19; threats to human security such as drought and wildfires in northern Thailand; controversies involving FFP, such as loans the party received from its leader and a senior party member’s admission to overstaying in an army residential unit; the Constitutional Court’s decision to dissolve FFP; and the youth-led pro-democracy protests against the verdict. The varying themes and events during this month therefore offer a rich opportunity to interpret meaning and connect ideas that appear unrelated on the surface (Tanweer et al. 2021: 16), that is, in the context of this study, to trace how the discursive elements of RTA-linked digital astroturfing bore traces of the regime’s perceived threat of online discourses with collective action potential.

While this disclosure may represent only a small fraction of RTA-linked digital astroturfing activities on Twitter, since operatives go to great lengths to hide their tracks (which may partly explain why only 926 accounts were detected despite reports of 17,000 army-linked operatives) (Goldstein and Grossman 2021), the Thailand dataset contains the most credible and expansive trace data linked to a governmental actor available to date. Although attribution problems are well established (Iramine et al. 2020), 13 Twitter nevertheless has access to trace data, including IP addresses and device IDs, to detect digital astroturfing activities (DiResta et al. 2022: 230). Further, the company became increasingly specific in its attributions (i.e., not just to a country but to a specific governmental actor) from 2018 to 2020, thanks to collaborations with journalists and civil society groups (Goldstein and Grossman 2021). In this regard, Twitter may have selected accounts based on information from FFP: the disclosure came eight months after a censure debate in late February 2020 in which former FFP member of parliament Wiroj Lakkana-adisorn accused the Prayut government and the army of systematically disseminating propaganda on social media platforms. 14

Once I had downloaded these tweets, I divided my coding process into three phases: identification of variables, development of a coding ontology, and implementation of the ontology. The ontology, developed iteratively in several stages using inductive and deductive reasoning, cast a wide net over the range of tactical orientations and associated valence frames to illustrate my central propositions. First, I examined a random 10 percent sample of tweets from February 2020 (n = 1,143) through open, inductive coding to ground my intuition. This process allowed distinctive categories related to tactical orientation and frames to emerge from the data. I then revised the categories against Edel and Josua’s (2018: 884) “endangered values versus harmful behavior” categories to account for additional frames. In total, four distinct tactical orientations and sixteen distinct, thematically cohesive valence frames were formally established.

Second, I developed operational definitions and coding parameters for each variable in the ontology: tweet_tactic, positive or negative valence frames (e.g., pos_national_unity and neg_division_society), account_interaction_id, and account_interaction_type (see Supplemental Information File, Appendix A, for the full set of discursive frames). Numerical values were used to represent a tweet’s tactical orientation and associated frame(s). For tweet_tactic, coders chose from three ideal types of tactics derived from the preceding theoretical discussion and open coding: Cheerlead (1), Devalue (2), and Hoax spreading (3). Tweets that deviated from these three ideal types were treated as “noisy” data, that is, data containing errors (e.g., broken links and content suspended by Twitter) or irrelevant features (e.g., horoscopes, popular culture references like K-pop, jokes, and commercial content like advertisements), and were coded as Engage (4). For each of the positive and negative frames established by the open coding process, coders entered a 1 or 0 depending on whether a tweet invoked it. Tweets that deviated from coding parameters were marked with an asterisk next to their value. The account_interaction_id and account_interaction_type variables required textual elaboration to capture the nature of the accounts that RTA-linked digital astroturfers interacted with, such as their affiliations or known/inferred political leanings. These details reveal the communities around which these operations were organized, which in turn allows us to identify subsets of data for targeted qualitative analysis that contextualizes the quantitative results. Furthermore, I created a notes column (notes_translation) for each tweet to document relevant context-specific knowledge, coding decisions, and interesting observations (e.g., deviations from coding parameters) to promote reflexivity in the coding process (Tanweer et al. 2021: 17).
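One way to make the ontology’s structure explicit is to encode a single coded row as a typed record. The sketch below is a hypothetical Python rendering, not the author’s actual coding sheet: the class names `TweetTactic` and `CodedTweet`, the `frames` dictionary, the placeholder `tweet_id`, and the boolean `deviates` flag (standing in for the asterisk) are illustrative assumptions. Only the variable names tweet_tactic, account_interaction_id, account_interaction_type, and notes_translation, the numeric tactic codes, and the two example frame names come from the text above.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Dict, List, Optional


class TweetTactic(IntEnum):
    """Numerical codes for tweet_tactic as described in the codebook."""
    CHEERLEAD = 1
    DEVALUE = 2
    HOAX_SPREADING = 3
    ENGAGE = 4  # "noisy" data: errors or irrelevant features


@dataclass
class CodedTweet:
    """Hypothetical record for one hand-coded tweet."""
    tweet_id: str
    tweet_tactic: TweetTactic
    # Binary frame indicators, e.g. {"pos_national_unity": 1, "neg_division_society": 0}.
    frames: Dict[str, int] = field(default_factory=dict)
    deviates: bool = False  # stands in for the asterisk marking a deviation from coding parameters
    account_interaction_id: Optional[str] = None
    account_interaction_type: Optional[str] = None
    notes_translation: str = ""

    def __post_init__(self) -> None:
        # Coerce plain integers such as 1 into the enum; codes outside 1-4 raise ValueError.
        self.tweet_tactic = TweetTactic(self.tweet_tactic)
        for name, value in self.frames.items():
            if value not in (0, 1):
                raise ValueError(f"frame {name!r} must be coded 0 or 1, got {value!r}")

    def active_frames(self) -> List[str]:
        """Frames the coder marked as invoked (value 1)."""
        return [name for name, value in self.frames.items() if value == 1]


row = CodedTweet(
    tweet_id="example-1",  # hypothetical placeholder, not a real tweet ID
    tweet_tactic=1,
    frames={"pos_national_unity": 1, "neg_division_society": 0},
    account_interaction_id="armypr_news",
)
print(row.tweet_tactic.name, row.active_frames())  # prints CHEERLEAD ['pos_national_unity']
```

Validating codes at construction time mirrors the role of the coding parameters: a value outside the ontology is rejected instead of silently entering the tabulations.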

Third, I implemented the coding ontology and hand-coded all 14,430 tweets to track both quantitative and qualitative data. Though computer-assisted coding offers a less time- and labor-intensive way to analyze large text-based datasets, its problems with decontextualization are well established (Lichtenstein and Rucks-Ahidiana 2021; Tanweer et al. 2021). Even if researchers engage in pre-training, embedding, and fine-tuning for complex text classification in low-resource languages such as Thai, machine learning underperforms in capturing nuanced portrayals of a topic (Nelson et al. 2018: 221). It also misclassifies linguistically similar but conceptually different codes, whereas hand-coding enables more context-sensitive readings of the data (Lichtenstein and Rucks-Ahidiana 2021: 612). The challenges of coding the diverse types of accounts that RTA-linked digital astroturfers interacted with, tweets that embed other tweets, and tweets that deviate from the ontology (e.g., when astroturfers praise or agree with regime opponents) make hand-coding better suited to capture these complexities in the dataset.

Additionally, I recruited two native Thai speakers and trained them on the codebook to build consensus on coding protocols and thus limit randomness in responses. They familiarized themselves with the major social and political topics of February 2020, completed five rounds of practice tests, and collaboratively discussed and reconciled coding differences. Finally, they independently applied the codebook to a randomly selected 1 percent subsample of tweets (n = 146) to test intercoder reliability (ICR). 15 Cohen’s kappa coefficients indicated near-perfect agreement: tweet tactic scored 0.98, and every associated discursive frame except Praiseworthy (0.84) scored above 0.90 (see Supplemental Information File, Appendix B).
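For readers less familiar with the statistic, Cohen’s kappa corrects raw agreement for the agreement expected by chance: κ = (p_o - p_e) / (1 - p_e), where p_o is the observed share of items on which both coders agree and p_e is the agreement expected from each coder’s marginal code frequencies. The Python sketch below implements that formula for two coders; the function name and the toy labels are illustrative assumptions, not the study’s data or software.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(coder_a: Sequence[Hashable], coder_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two coders assigning nominal codes to the same items."""
    if len(coder_a) != len(coder_b) or len(coder_a) == 0:
        raise ValueError("both coders must code the same, non-empty set of items")
    n = len(coder_a)
    # Observed agreement: share of items on which both coders chose the same code.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of each coder's marginal proportions, summed over codes.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if p_e == 1:
        # Both coders used one identical code for every item; agreement is trivially perfect.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Toy tweet_tactic codes for eight tweets (hypothetical, not the study's data).
coder_1 = [1, 1, 2, 2, 1, 4, 1, 2]
coder_2 = [1, 1, 2, 1, 1, 4, 1, 2]
print(round(cohens_kappa(coder_1, coder_2), 3))  # prints 0.784
```

In the toy example the coders agree on 7 of 8 tweets (p_o = 0.875), but because both lean heavily on code 1, chance agreement is already 27/64, so kappa falls to about 0.78. Applied to each 0/1 frame column separately, the same function yields per-frame coefficients of the kind reported above.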

Finally, I subjected all the coded tweets to three sets of analysis. The first and second sets were quantitative analyses of the compiled data, quantizing the coding. I first used Gephi, an open-source network visualization and analysis software package, to locate highly connected node groups coded in the account_interaction_id column. 16 Network visualization helps illustrate the most prevalent Twitter accounts and communities that RTA-linked digital astroturfers interacted with. I then used descriptive statistics and tabulations based on counts of each categorical variable to highlight the most prevalent tweet tactics and their associated discursive frames in the dataset. Lastly, based on these quantitative trends, I conducted a targeted, context-sensitive qualitative analysis of the same data sources to offer a granular empirical illustration of why and how the Prayut regime discursively conducted digital astroturfing against its population.
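The counting steps behind the network and descriptive results can be sketched in plain Python. This is a hypothetical illustration rather than the study’s workflow (which used Gephi for the network step): it assumes each coded tweet has been exported as a dictionary with tweet_tactic, account_interaction_id, and a frames mapping of 0/1 values, and the function names `incoming_ties`, `tabulate`, and `frame_counts` and the placeholder account `anti_regime_account` are illustrative. Counting tweets per interacted-with account gives a weighted in-degree, a rough analogue of incoming ties; it does not reproduce Gephi’s layout or community detection.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

Record = dict  # hypothetical export format for one coded tweet


def incoming_ties(records: Iterable[Record]) -> Counter:
    """Number of coded tweets directed at each interacted-with account (weighted in-degree)."""
    return Counter(r["account_interaction_id"] for r in records if r.get("account_interaction_id"))


def tabulate(records: Iterable[Record], key: str) -> Dict[object, Tuple[int, float]]:
    """Count and percentage (one decimal place) of each value of a categorical variable."""
    counts = Counter(r[key] for r in records)
    total = sum(counts.values())
    return {value: (n, round(100 * n / total, 1)) for value, n in counts.most_common()}


def frame_counts(records: Iterable[Record], tactic: int) -> Counter:
    """How often each frame is coded 1 among tweets with a given tweet_tactic."""
    totals: Counter = Counter()
    for r in records:
        if r["tweet_tactic"] == tactic:
            totals.update(name for name, value in r["frames"].items() if value == 1)
    return totals


# Toy records (hypothetical, not the study's data).
records = [
    {"tweet_tactic": 1, "account_interaction_id": "armypr_news", "frames": {"pos_national_unity": 1}},
    {"tweet_tactic": 1, "account_interaction_id": "armypr_news", "frames": {"pos_national_unity": 1}},
    {"tweet_tactic": 2, "account_interaction_id": "anti_regime_account", "frames": {"neg_division_society": 1}},
    {"tweet_tactic": 4, "account_interaction_id": None, "frames": {}},
]
print(incoming_ties(records).most_common(1))
print(tabulate(records, "tweet_tactic"))
print(frame_counts(records, tactic=1))
```

Keeping frame counts conditional on tactic reflects how the results are reported: frames are read within the Cheerleading or Devaluation category rather than across the whole dataset.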

Results

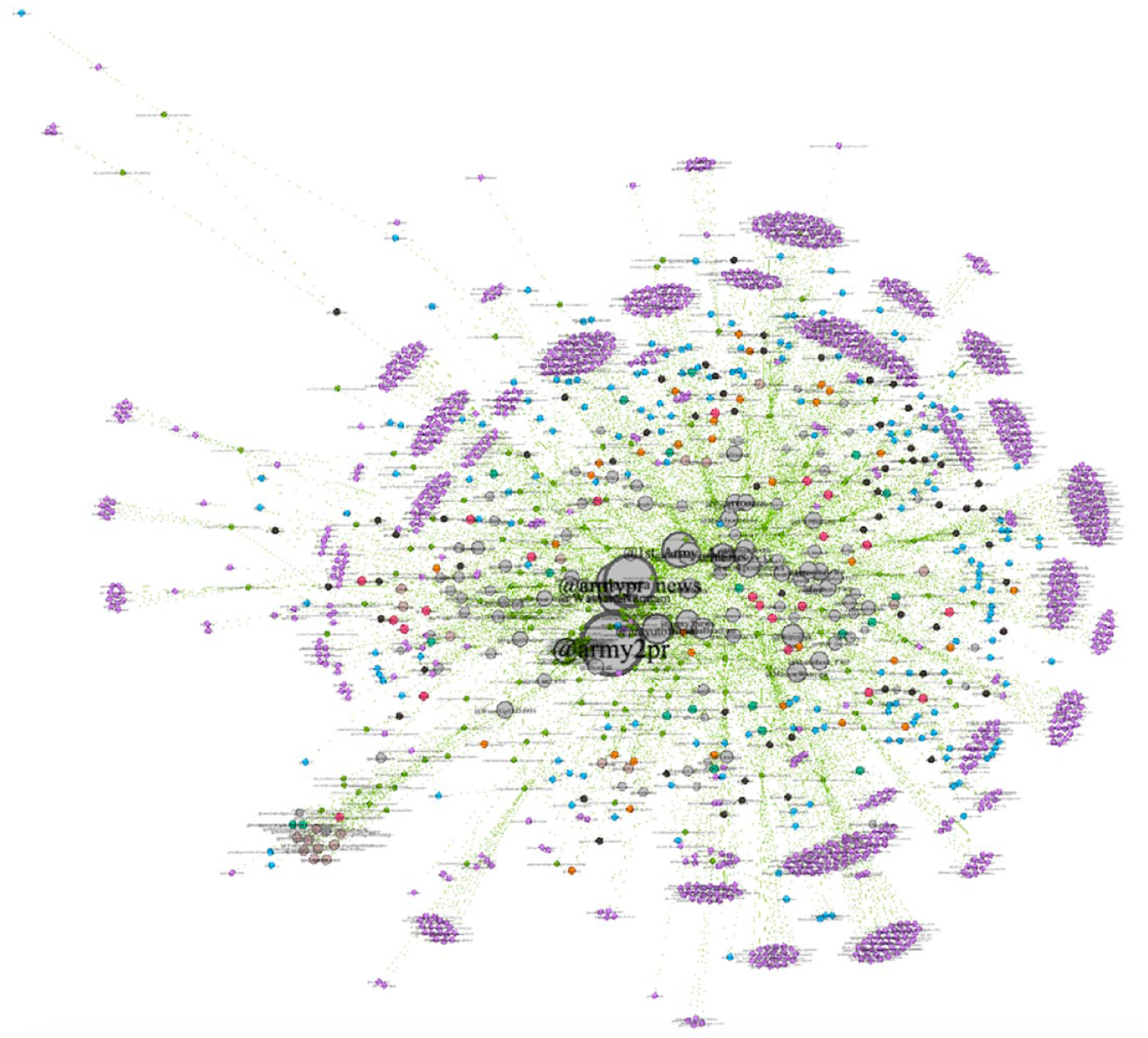

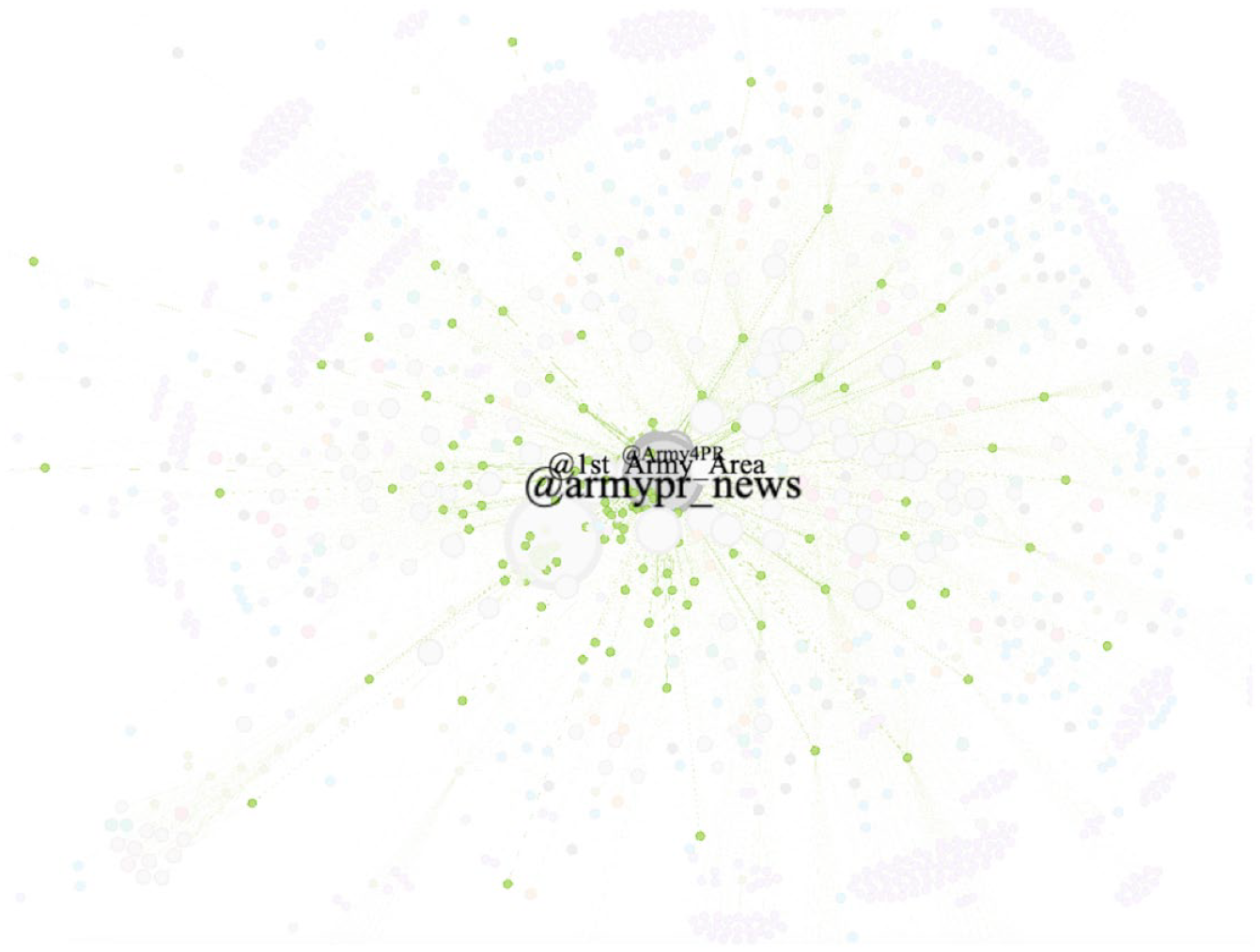

Public relations accounts of different RTA units, particularly @armypr_news, @1st_Army_Area, and @ARMRY4PR, collectively represented the largest node category, with a high number of incoming ties (n = 4,194) (see Figures 1 and 2). Other key node categories included anti-regime politician (n = 958), anti-regime political party (n = 209), anti-regime user (n = 1,647), and pro-regime user (n = 2,088) (see Supplemental Information File, Appendix A, for the full description of these actors). In addition, The Standard (n = 196), Voice TV Official (n = 149), and Thai Rath News (n = 85) were the top three accounts in the news outlet category (n = 1,997) that the digital astroturfers interacted with to promote positive stories about the army and the Prayut government. The Standard and Voice TV are online news outlets critical of the military, whereas Thai Rath appeals more to mainstream Thai-speaking audiences. 17 Among journalists, they interacted most often with Wassana Nanuam (n = 697), who is known for her reporting on military affairs.

Figure 1. Networks of RTA-linked digital astroturfing.

Figure 2. RTA-linked digital astroturfing assets that interacted with army public relations accounts.

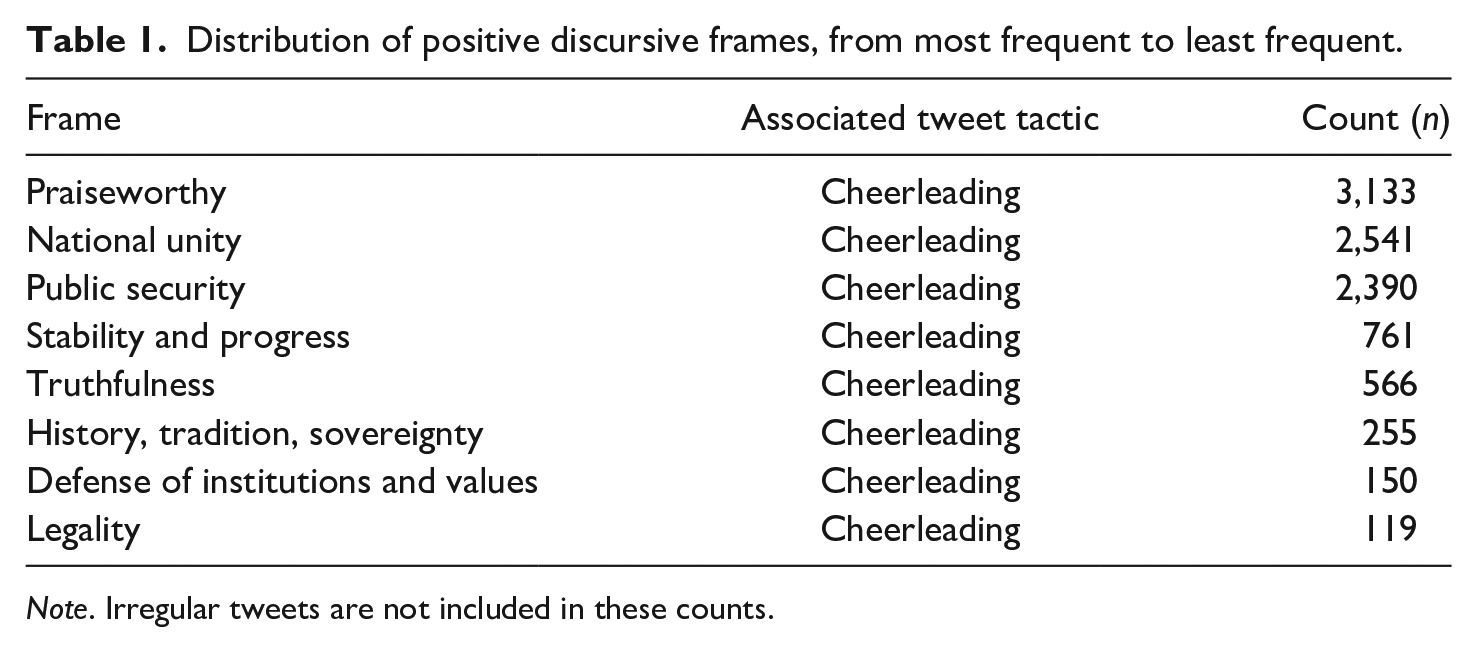

Cheerleading tweets represented 52 percent of the tweets in the dataset. From retweets of stories about the RTA’s COVID-19 monitoring operations along the Thai–Cambodian border (n = 28) to Prayut’s vision for a “smart” public transport system in Bangkok (n = 10), these tweets sought to promote the performance outputs and superior leadership qualities of the army and the Prayut government. The comments that typically accompanied these retweets were not based on rational argumentation; they were positive affective expressions that tried to create an empathetic bond with the army and the Prayut government by offering praise and motivation (e.g., “Keep fighting” and “Protecting and serving the people. Amazing. Sacrificing your free time for the people”). Indeed, Praiseworthy (n = 3,133) was the dominant discursive frame associated with retweets and comments in the Cheerleading category, followed by National Unity (n = 2,531) and Public Security (n = 2,390) (see Table 1). These three frames were commonly associated with retweets of stories about soldiers providing services and protection to Thai citizens amid incidents that threatened their livelihoods (e.g., the mass shooting, wildfires, and drought). To amplify impressions of popular support for the army, digital astroturfers retweeted these posts with praise for the troops, such as “@armypr_news Containing wildfires #armyforthepeople” and “@3_INFANTRY_DIV @siharatdecho. This is what they are call the soldiers of the people.” 18

Table 1. Distribution of positive discursive frames, from most frequent to least frequent.

Note. Irregular tweets are not included in these counts.

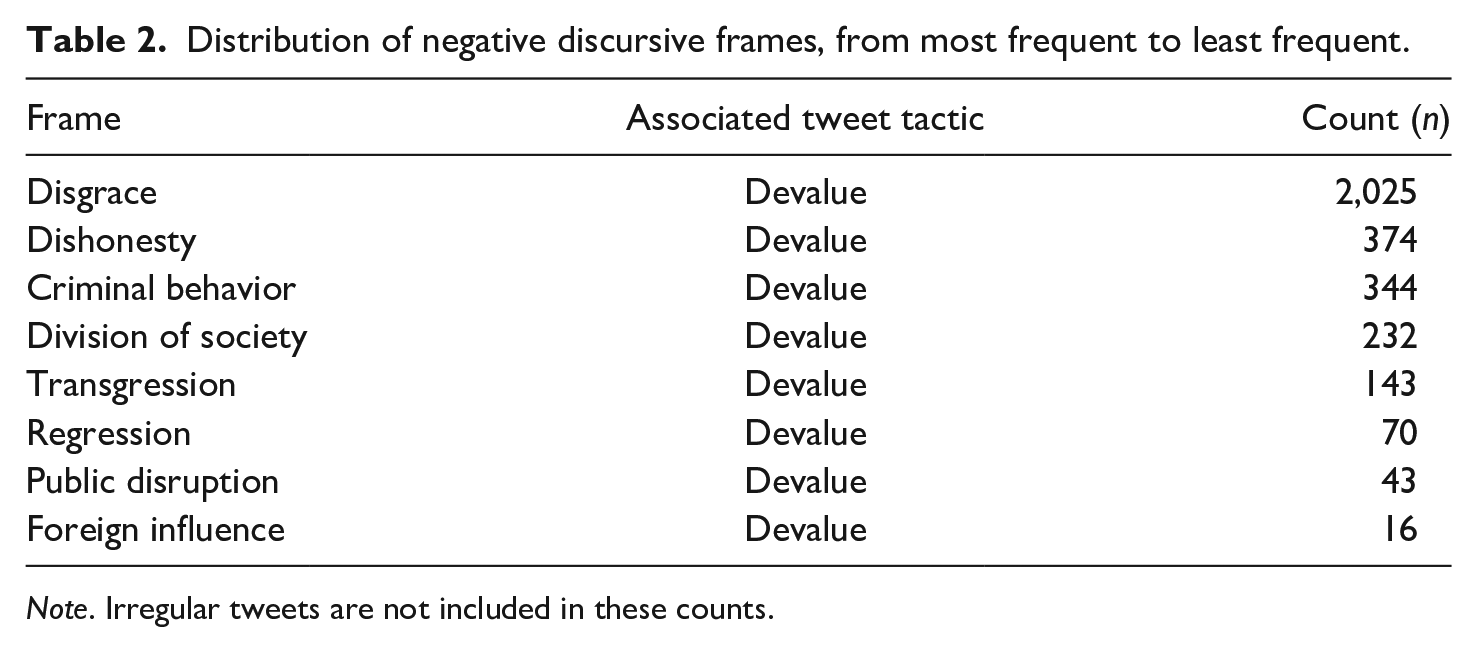

By contrast, digital astroturfers employed Devalue tweets (17.4%) to harass the Prayut regime’s pro-democracy rivals by framing them as morally inferior, felonious, and less competent actors whose ideological aspirations threaten the established political order. Disgrace (n = 2,025) was the dominant discursive frame associated with this tactical category (see Table 2). These tweets mostly discredited FFP politicians and their supporters by insulting their individual abilities, integrity, charisma, intellect, and influence. Compared with other anti-regime politicians, the accounts of the party’s executive members, such as Thanathorn Juangroongruangkit, Piyabutr Saengkanokkul, and Pannika Wanich, were the primary targets of Devalue tweets. Devalue tweets targeting Pannika, for instance, were forty-two times more numerous than those targeting Pheu Thai’s Teerarat Samretwanich (n = 1), although both were elected female MPs from parties that opposed military rule. Army-linked digital astroturfers accused Pannika of bringing chaos to the country, framed her as a liar, and expressed joy at her party’s setbacks. Depending on the issue, some Devalue tweets directed at FFP contained more than one negative frame. For example, these assets combined Disgrace and Transgression frames to accuse FFP of being so-called “nation haters,” a common trope that conservative Thais use to accuse the party of subverting the nationalist-royalist political order (see also Sombatpoonsiri 2022).

Table 2. Distribution of negative discursive frames, from most frequent to least frequent.

Note. Irregular tweets are not included in these counts.

Contrary to findings from other contexts such as Russia, where online trolls propagated several hoaxes in the United States and Europe (Prier 2017: 67), 19 there was not a single instance of Hoax spreading by RTA-linked digital astroturfers in February 2020. A small portion of tweets (1%), however, deviated from the coding parameters, notably tweets that expressed agreement with statements by the Prayut regime’s political rivals and critics (n = 173). Among these were retweets of FFP politicians’ posts expressing condolences and sadness about the Korat mass shooting, as well as retweets of several anti-regime users’ praise for the government security forces’ efforts to resolve the crisis. These tweeting patterns served the regime’s official narrative of solidarity and its efforts to restore the army’s image as the guardian of the public rather than the cause of the incident. 20 Lastly, Engage tweets represented 29 percent of the tweets in the dataset. Many of the seemingly mundane tweets in this category (e.g., posts about horoscopes and pop culture) are considered “noisy” data: their meanings cannot be easily interpreted, nor are their frames as discernible as those of the other tactical categories derived from the literature. Yet this lack of informativeness may arguably constitute a form of “anticipatory obfuscation” that helps operatives masquerade as autonomous individuals in order to avoid Twitter bans (Heavey et al. 2020: 1503–4; Xia et al. 2019).

Early February: Drowning Out Public Criticisms After the Korat Mass Shooting

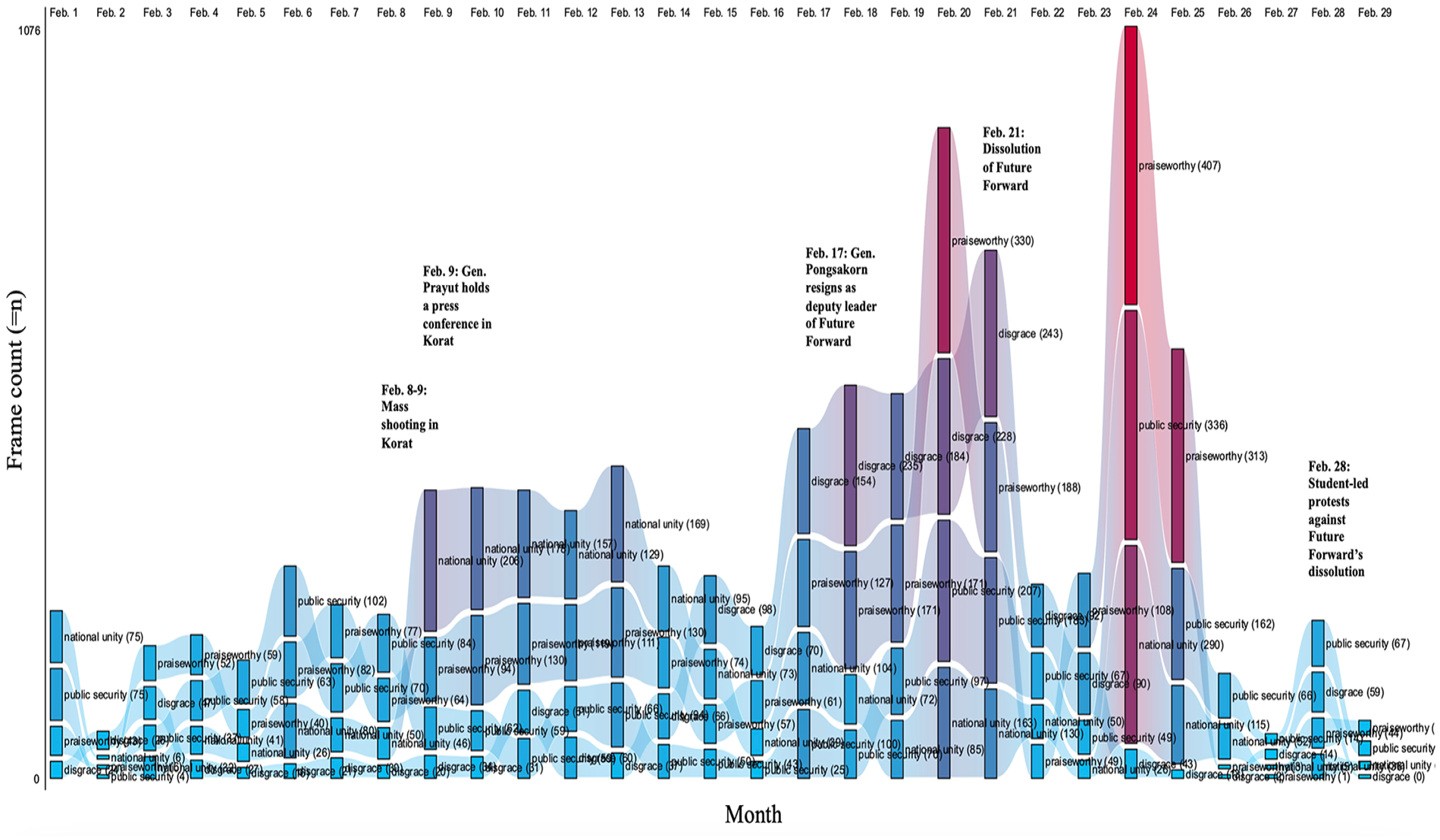

The distribution of valence frames in February 2020 followed offline events in Thai social and political life that sparked online discourses with collective action potential (see Figure 3). Not only did these discourses pose a challenge to regime image management, but they also enhanced the ability of pro-democracy parties to cultivate popularity. In particular, FFP was adept at using social media to challenge the nationalist-royalist ideology that underpins the established political order (Sinpeng 2021: 196). On February 8—just one week into the month—32-year-old RTA sergeant major Jakrapanth Thomma stole weapons from an army barrack near Korat before driving into the city center to shoot at unarmed civilians. After an extended gunfight that lasted almost twenty-four hours, Jakrapanth was shot dead by government security forces. His rampage resulted in twenty-nine fatalities and fifty-eight injuries. 21 It was later revealed that Jakrapanth was hoodwinked by his commanding officer in a private property deal. 22 The mass shooting, which was the first of its kind in Thailand, sparked widespread public backlash against the army leadership and the Prayut government. 23 As the hashtags “Reform the Military” and “PrayuthRIP” started trending on Twitter, anti-regime parties led by FFP and Pheu Thai renewed their calls for increasing the army’s transparency and accountability. 24

Figure 3. The shifting discursive elements of RTA-linked digital astroturfing operations in response to domestic offline events.

In the week prior to the crisis, as Figure 3 shows, Cheerleading and Devalue tweets were already in use to promote the army and to devalue FFP, respectively. However, digital astroturfing tweets spiked sharply after February 8 (see also Goldstein et al. 2020: 9). In particular, RTA-linked assets intensified their propagation of Cheerleading tweets to discursively foster concordance between the government, the army, and the Thai people. National Unity was the predominant frame associated with this tactical category, which included retweets of posts about Prayut’s expressions of condolence, his official visit to Korat, and statements about the government’s efforts to provide financial assistance to the victims’ families (see Figure 3). Several assets retweeted the following post from Prayut’s official Twitter account: “RT @prayutofficial: I feel sorry for this great loss like all Thai people in the country. I would like to join and mourn for the victims alongside the people of Korat and all the people of Thailand. We are all saddened by what happened and today I extend my support to everyone.” They also repeatedly commented on posts by Prayut’s official account, RTA public relations accounts (e.g., @armypr_news and @1st_Army_Area), and mainstream news outlets (e.g., @PostToday) with expressions of condolence, regret, and moral support for the victims’ families. Repetitive comments such as “I give you my support and pray you are all safe” and “We need to support each other” discursively reinforced the regime’s official narrative of national solidarity amid public backlash.

Digital astroturfers initially refrained from devaluing FFP. Instead, their retweets of FFP politicians’ messages of condolences were accompanied by comments that included, for example, “@Thanathorn_FWP @Piyabutr_FWP I offer my condolences to the families of the victims and honor the bravery of those who sacrificed themselves,” “@Pannika_FWP I would like to offer my condolences,” and “@wirojlak I offer my condolences to the relatives and families of the victims who passed away during this incident.” 25 The appeal to solidarity discursively neutralizes online criticisms about the army’s structural flaws that allowed the shooting to happen—a discourse that many FFP politicians like Thanathorn began to promote on Twitter as they renewed calls for reforming the army to prevent abuses of junior officers like Jakrapanth. 26

Mid-February: Promoting the Army’s Promised Reforms and Stigmatizing FFP

National Unity was not the only discursive frame used to defuse critical discussions about the army and the government. The Korat mass shooting prompted the army leadership to show responsibility. During a press conference on February 11, General Apirat apologized and shed tears as he pleaded with the public not to blame the army. 27 He also pledged to undertake reforms, such as investigating illegal private businesses within the army, restricting the types of weapons owned by army personnel, and ending the rights of retired officers to live on army residential properties. 28 A week later, the army announced the launch of a so-called “24-hour hotline” complaint center that would allow its rank and file and junior officers to report abuses directly to the commander-in-chief. 29

Consequently, army-linked digital astroturfers promoted tweets that contained a Truthfulness frame to reinforce Apirat’s narratives and to discursively portray the general as an upstanding and sincere individual. They frequently retweeted posts from @1st_Army_Area containing snippets of Apirat’s statements from his press conference, including: “The army apologizes and expresses its deepest condolences regarding this situation” and “Nobody in the world or in Thailand wanted this to happen. Please do not insult the army or the soldiers. If you want someone to blame, then blame me.” These assets also repeatedly commented on these source accounts with praise for the army to drown out criticisms that the army leadership was not receptive to the grievances of its rank and file. For example, when @armypr_news tweeted about the launch of the army’s complaint center, they parroted praise for the initiative, including “Another good start. I trust the army’s potential,” “This is very good,” and “If you feel wronged then notify them.”

Besides extensively cheerleading the army, RTA-linked digital astroturfers also devalued FFP politicians. In mid-February, Pongsakorn Rodchompoo, a retired RTA lieutenant general turned FFP deputy leader and a strong proponent of military reform, became embroiled in a controversy that exposed the party to public criticism. During his appearance on the talk show “Kayee Kao Talk with Yukol” on February 14, Pongsakorn admitted to overstaying in an army residential unit after his retirement. 30 As accusations of hypocrisy and conflicts of interest gained traction, he resigned from the party’s senior executive committee. 31

Pongsakorn’s resignation led right-wing activists from the pro-regime, nationalist-royalist community on Twitter to further discredit FFP’s leadership. Right-wing users such as @pookem, @political_drama, and @tigeryellowlive mocked Pongsakorn’s physical appearance, pointed to his hypocrisy, and shamed FFP for lying to the public. Many of their posts invoked frames that served the digital astroturfers’ efforts to devalue FFP and to construct a semblance of popular resentment toward the party. Retweets of right-wing criticisms of Pongsakorn’s misconduct that combined Disgrace and Dishonesty frames helped accentuate FFP’s image as an untrustworthy political party. Several assets, for example, retweeted the following posts from @pookem: “RT @pookem: Clean your house first, Thanathorn” and “RT @pookem: FFP should establish an executive committee to replace Baldhead before February 21 to absorb the blows [from his army housing scandal].” 32 Army-linked digital astroturfers also retweeted posts that combined Disgrace and Regression frames to portray Pongsakorn as Apirat’s reverse image: dishonorable and backward. Notably, they retweeted anti-Pongsakorn commentaries from @Juahuaheadline, an online pseudo-news outlet, and posted derisive comments such as “@Juahuaheadline I think they should strip him of his ranks. He does not do anything good for the country besides collaborating with people who want to destroy the country.”

Late February: Renewed Praise of the Army and Further Harassment of FFP and its Supporters

Army-linked digital astroturfers continued to devalue FFP up to February 21, when the Constitutional Court issued a ruling dissolving the party (see Figure 3). The legal case against FFP centered on whether a Thai political party was permitted to borrow money to fund its operations and, if so, under which conditions. After the March 2019 elections, conservative supporters of the Prayut regime accused FFP of violating the 2017 Political Parties Act. They maintained that the party had unlawfully submitted itself to Thanathorn’s financial influence when it accepted his loans worth 191 million baht ($6 million) to finance its election campaign. 33 Thanathorn, however, argued that the loans were meant to cement FFP’s independence from other financiers and should be classified as debt obligations. 34

As the court’s ruling neared, digital astroturfers doubled down on retweeting posts from the same circle of nationalist-royalist users, namely @political_drama, @a_veerayut, and @tigeryellowlive. These posts combined Disgrace and Dishonesty frames to discursively portray FFP as a party of liars that did not deserve any sympathy. The assets also swarmed the official Twitter accounts of FFP politicians, pro-democracy celebrities (e.g., John Winyou), and liberal-leaning news outlets (e.g., The Standard and The Matter) with abusive comments before the ruling, writing, for example, “@johnwinyou I hope your party gets dissolved” and “I pray for your destruction.” After the court ruled that FFP’s loan fell outside the ordinary course of business and dissolved the party, these assets celebrated the ruling and mocked FFP politicians. Some of them wrote, for example, “@Thanathorn_FWP Really did not survive” and “@thematterco Their [FFP] press conference is useless. The court already made its decision.” 35

When pro-FFP, student-led demonstrations erupted shortly after the court’s decision, digital astroturfers employed Devalue tweets and associated negative frames to manage online discourses with collective action potential. The Public Disruption frame illustrates their discursive efforts to portray protesters as potential inconveniences to public life. This was particularly evident in comments that implicitly equated protests with public health risks as COVID-19 started to spread in the country: “@yingcheep Don’t go. COVID is spreading” and “@commonners @yingcheep Be careful of COVID-19 when you go to crowded places.” 36

As criticisms of FFP’s dissolution continued, 37 digital astroturfers renewed their efforts to discursively represent army soldiers according to a humanitarian logic, highlighting the army’s commitment to the welfare of Thai citizens. Tweets that contained Public Security and Praiseworthy frames gained traction as digital astroturfers promoted content about the army’s provision of services to address the humanitarian and security needs of the public (see Figure 3). This content included posts about army-led construction of artesian wells in rural villages, distribution of water supplies to drought-affected communities, interdictions of drug-trafficking rings, operations to combat wildfires and air pollution, and monitoring of COVID-19 along the Thai–Cambodian border. In the same vein, they showered the soldiers with praise for their heroism in the comments section. The hashtags that accompanied many of these comments (e.g., “Army for the people,” “The army is always a refuge for the people,” and “Soldiers of the people”) indicate continued discursive efforts to restore the army’s reputation as the steward of the Thai people. Relatedly, they also propagated tweets that combined Public Security, Praiseworthy, and Truthfulness frames to enhance the army’s image as a transparent and accountable organization. Many of these assets continued to retweet posts from journalists and news outlets reporting on the army’s pledge to institute reforms to safeguard the welfare of its troops, including the 24-hour hotline complaint center and collaboration with the Ministry of Finance to tackle black money circulating within the barracks. 38

Conclusion and Implications

This contribution sought to examine why and how autocratic regimes discursively conduct digital astroturfing against their populations. I have argued that (1) digital astroturfing can be traced to the regime’s threat perception of online discourses with collective action potential; and (2) regime-linked digital astroturfers exploit social media affordances to discursively create a semblance of popular support for the regime and resentment toward regime challengers. My large-N qualitative study of RTA-linked tweets in February 2020 provides initial, albeit in-depth, empirical support for these propositions. RTA-linked assets extensively cheerleaded the regime through selective retweets of pro-regime content and comments after the Korat mass shooting, particularly those that helped create an empathetic bond with the army and the government. They also exploited the same platform affordances to devalue FFP, particularly by posting and retweeting content that framed and accentuated the party’s perceived moral bankruptcy and wrongdoings.

These findings resemble the CCP’s strategy of “strategic distraction,” in which government-linked trolls of the so-called “50 Cent Army” flooded Weibo with pro-regime posts to divert public discourse away from collective action, grievances, and/or general negativity (King et al. 2017: 497). This enabled the party to channel the boundaries of acceptable deliberation without having to censor as much as it otherwise might (King et al. 2017: 485). Beyond these initial similarities between Thailand and China, my findings also reveal ample digital opportunities for contemporary autocracies to adapt their legitimation strategies to new sociotechnological realities. Strikingly, the absence of any identifiable, embodied presence behind RTA-linked digital astroturfers suggests that autocracies have learned to hide behind the attribution blur and claim plausible deniability to minimize blowback, all the while maintaining centralized control over operatives to ensure alignment with their hegemonic narratives (Conduit 2023). Outside Thailand, autocratic digital astroturfing has also reared its head in Russia (Pomerantsev 2019), Venezuela (Puyosa 2021), and Saudi Arabia (Berrie and Siegel 2021); these online efforts have advanced strategic narratives that project an appearance of popular support amid public criticism in the name of regime stabilization. However, as Twitter’s attributions become more specific, these regimes have started co-opting nonstate, trendsetting social media influencers to help promote their narratives (Brockling et al. 2023).

The discursive elements of RTA-linked digital astroturfing also point to a growing normalization of digital militarism, in which online information is transformed into a conflictual element that necessitates management by the military, an actor traditionally committed to managing security (Jackson et al. 2021; Massa and Anzera 2023). The “platformization” of military social media communication allows military organizations like the RTA, as well as commercial actors, to exploit social media logic to shape collective action toward a positive identification with soldiers and an amplified sense of danger from concrete adversaries, actions that largely underlie present-day strategic communication (see Jackson 2019; Massa and Anzera 2023; Van Dijck and Poell 2013; Ventsel et al. 2019). Recently, there have been allegations that the RTA hired YouTube influencers and even created its own TikTok accounts to humanize soldiers by depicting their lives in the barracks (Tantirapan 2024).

Future work is now needed to understand the broader novelty and effects of my findings. This includes expanding the coding process to cover additional timeframes to track the relationship between digital astroturfing and regime threat perception, as well as conducting comparative work to increase my argument’s external validity beyond Thailand. Furthermore, because the intensive repetition of selected snippets of biased and/or misleading information creates an “information fog” which may add credibility to propagandistic narratives among target users (Benkler et al. 2018: 33; Nissen 2015), scholars should systematically investigate the downstream effects of authoritarian digital astroturfing on collective action potential.

Supplemental Material

sj-docx-1-hij-10.1177_19401612241279158 – Supplemental material for Drowning Out Dissent: The Thai Military’s Quest to Fabricate Popular Support on Twitter

Supplemental material, sj-docx-1-hij-10.1177_19401612241279158 for Drowning Out Dissent: The Thai Military’s Quest to Fabricate Popular Support on Twitter by Chonlawit Sirikupt in The International Journal of Press/Politics

Footnotes

Acknowledgements

I would like to thank my Thai coders, Praiya Uranukul and Russakorn Nopparujkul, for their time, dedication, and generosity, as well as Pitchapa Jular who kindly put me in contact with them. I am immensely grateful to Oliver Schlumberger (Eberhard Karls Universität Tübingen) and Emily Kalah Gade (formerly Emory University) for their unwavering mentorship and enthusiasm for the project. I also extend my deepest gratitude to Thomas Demmelhuber, Žilvinas Švedkauskas, Koray Saglam, Mirjam Edel, Ahmed Maati, Stephanie Wagner, Carolin Ordon, Elizaveta Kovalchuk, Lisa Garbe, Kris Ruijgrok, Laura Schuhn, Janjira Sombatpoonsiri, Marcella Morris, Arica Schuett, Gabe Samuels, Ben Lefkowitz, Ava Sharifi, Kayla Salehian, Emily Tomas, and the three anonymous reviewers for their valuable critiques and suggestions on earlier drafts of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Ethical Considerations

Not applicable. The tweets collected and used for the analysis were obtained from Twitter’s public archive of data related to state-backed information operations called “Hashed Information Operations Archive.” Twitter noted that while no content has been redacted, some account-specific information was hashed to protect account privacy.

Consent to Participate

Not applicable.

Consent for Publication

Not applicable.

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.

Notes

Author Biography

References
