Abstract

Research on knowledge collaboration in Wikipedia has predominately focused on metadata at the article level or editor-centric analyses, often overlooking the complexities of knowledge collaboration and its contextual dependencies. This study takes a novel, fine-grained approach to investigating revision mechanisms in Wikipedia’s knowledge collaboration. By considering modified sentences as carriers of collective knowledge and spaces in which epistemic power is negotiated, it reconstructs their revision sequences and examines how editorial, contextual, content, and temporal factors shape Wikipedia’s revision dynamics. A total of 140,593 revisions (by 48,643 editors) of 76,525 sentences in 537 Wikipedia articles related to climate change were analyzed using text mining, natural language processing, survival analysis, and meta-analysis. The findings expand our understanding of how epistemic power is negotiated through collective endeavors underlying bureaucratic rules and community moderation in Wikipedia.


Introduction

In the post-truth era, online platforms struggle to cope with the proliferation of disinformation and misinformation. Despite deploying a mix of paid human labor, automated systems, and third-party or community-based fact-checking, social media giants such as YouTube, X (formerly Twitter), and Facebook continue to face criticism over the quality and reliability of the information they host. By contrast, Wikipedia—an encyclopedia everyone can edit—stands out as a bastion of trust (Bruckman, 2022; McDowell and Vetter, 2021). As “commons-based peer production,” Wikipedia is non-proprietarily created and coordinated, as well as contributed to, by hundreds of thousands of self-selected, widely distributed, and loosely connected individuals (Benkler, 2006). On the one hand, broad participation, loose connections, and diverse expertise and interests reflect the “many minds collaborating” (Niederer and Van Dijck, 2010; Ren and Yan, 2017). On the other hand, Wikipedia’s open nature presents challenges posed by vandalism, conflicts, and disagreements among editors, which can compromise accuracy and consistency and require effective governance. Questions are raised about how this collaborative model enables Wikipedia to become the most influential and reliable source of information.

Understanding how knowledge is collaboratively shaped and maintained in Wikipedia has been a long-standing inquiry in different disciplines (Ren et al., 2023). Empirical studies have either focused on illustrating how editors debate sources, reference guidelines, and negotiate consensus in contentious settings or have attempted to generalize patterns such as working routines or editor dynamics. However, qualitative studies are limited, as they do not allow for the inference of how different factors, such as contestations over the meaning of individual sentences or conflicts arising from violations of community-defined rules, impact knowledge collaboration. Meanwhile, quantitative studies cannot fully capture the complexities of knowledge collaboration as they only estimate statistical models based on metadata at the article level or merely from an editor-centric perspective without reflecting the underlying contextual environments in which each piece of knowledge is shaped and maintained.

To address these limitations, this study scrutinizes the mechanisms of how revisions shape and maintain collective knowledge, guided by the overarching research question (RQ1): What organizational patterns structure Wikipedia’s revision processes? Specifically, it analyzes the revision dynamics at the sentence level, guided by the operationalized research question (RQ2): What factors shape the revision mechanisms? This study introduces an explanatory model that identifies the mechanisms influencing knowledge persistence and modification across four dimensions: (1) editor characteristics, (2) context dependencies, (3) content relevance, and (4) temporal factors. It examines 140,593 revisions (by 48,643 editors) of 76,525 sentences in 537 English-language Wikipedia articles related to climate change as a case study. The contributions of this study are twofold: Methodologically, it offers a novel, fine-grained perspective on the actual revision processes by reconstructing the evolution of sentences, operationalizing key dimensions influencing knowledge collaboration, and estimating their impacts on knowledge collaboration. Empirically, the findings provide insights into the tensions raised by self-organizing in knowledge collaboration and corresponding responses through peer and bureaucratic control, revealing how power is negotiated through collective endeavors of mutual observation, iterative refinement, and community moderation.

Knowledge collaboration in Wikipedia

Knowledge collaboration in Wikipedia is enabled in different ways. Technologically, Wikipedia’s web application facilitates the coordinative processes. Each article has a main page to display the current version of the collective knowledge on a particular topic, a “talk” page for editors to discuss writing for agreeable content, and a “history” page for version control. Normatively, the platform rules and guidelines safeguard the quality and reliability of the collective knowledge. While the content policies—neutral point of view (NPOV), verifiability, and no original research—determine the nature of the information presented on Wikipedia, the conduct policies regulate editors’ behaviors.

The platform facilitates both indirect and organized coordination. Indirect coordination occurs on the article’s main page, where editors, even anonymous editors, can directly edit and present their edited version as the current version of most Wikipedia articles. This open model enables stigmergic coordination, a self-organizing mechanism in which individuals respond to and build upon existing work without direct communication or predefined planning (Heylighen, 2016). Editors focus on the current state of the article, working independently without negotiation, imposed sequences, or division of labor (Heylighen, 2016). By contrast, organized coordination occurs chiefly on the talk page, where most discussion posts on the relevant article contain requests or suggestions for coordination (Bipat et al., 2018; Morgan et al., 2013; Viegas et al., 2007). For both indirect and organized coordination, policies and guidelines are cited when editors evaluate, process, and validate the collective knowledge (McDowell and Vetter, 2021). They serve to enable self-governance and solve coordination and communication problems (Beschastnikh et al., 2021; Butler et al., 2008).

Collaborative processes involve an interplay between knowledge, actors, agents, rules, and norms over time. As disagreements, negotiations, and controversies inevitably arise, policies and guidelines grow, adapting to incorporate diverse perspectives, ranging from conflict resolution and decision-making to newcomer socialization (Osman, 2013). Automated tools, such as bots, have been introduced and implemented to enhance efficiency in dealing with reliability checks, vandalism, and edit wars on a large scale. To avoid systemic bias and facilitate accountability for specific topics, voluntary task forces have established projects such as WikiProject to assist in the coordination of articles on certain topics (Bruckman, 2022). These various collaborative initiatives and applications give rise to a constantly evolving landscape of knowledge collaboration in Wikipedia that seeks to reconcile competing interests and foster a cohesive understanding of collective knowledge (McDowell and Vetter, 2021).

Collectivities, organizing, and governance

Social science studies expected that Wikipedia, in the absence of bureaucratic organizations and relying on the voluntary community, would demonstrate the deliberative practices that generate a participatory, discourse-centric, and decentralized model of collaborative knowledge production (Benkler, 2006; Pfister, 2011). However, it is guided by a bureaucratic oversight mechanism that consists of community moderation and peer controls (Jemielniak, 2014; König, 2013; Niederer and Van Dijck, 2010).

Shaw and Hill (2014) identified the “iron law of oligarchy” in Wikipedia, where a monopoly of power is exercised and consolidated within a small group of early editors. Among the over 48 million registered editors, about 0.24% edit regularly, and only 0.01% edit actively (Wikipedia contributors, 2024). Based on their contributions and community consensus, editors have different access levels, such as unregistered editors, registered editors, administrators, and bureaucrats. The roles determine not only the editors’ activities but also the organizational structures (Arazy et al., 2015). For example, administrators can protect articles from editors without advanced permissions, while unregistered anonymous editors can only edit unprotected pages. Compared to unregistered editors, registered editors make the most reliable contributions (Anthony et al., 2009). The contributions of new editors are more likely to be reverted by experienced editors, negatively affecting the retention of desirable newcomers (Halfaker et al., 2011, 2013).

Moreover, Wikipedia’s governance extends beyond spontaneous collaboration to include bureaucratic rules and peer control (Jemielniak, 2014; Matias, 2019; Rijshouwer et al., 2023). Instead of relying solely on stigmergic spontaneity and good faith collaboration, the growing body of rules, guidelines, and automated tools enforced by editors ensures the quality of peer contributions and increases efficiency in performing tasks (Hwang and Shaw, 2022; Jemielniak, 2014). Peer control arises from the collective efforts of editors engaging in mutual scrutiny and editorial surveillance, enabled by technological affordances such as revision tracking, discourse monitoring, and IP address logging (Jemielniak, 2014; Pentzold, 2017). Rijshouwer et al. (2023) argue that Wikipedia’s shift from a charismatic, open, self-organizing community to a bottom-up bureaucracy is a necessary adaptation to its growing size and complexity. Indeed, the legitimacy of integrating bureaucratization as an essential part of developing and maintaining a self-organized culture depends on the community members rather than on the authority or the elites in the community (Jemielniak, 2014; Matias, 2019; Rijshouwer et al., 2023).

The discussion of the organizational structure in Wikipedia leads to the expectation that editor characteristics, including the editor’s role and previous engagement, shape revision processes. Regarding the editor’s role, edits made by editors with high access levels tend to remain more stable, whereas those made by editors with low access levels are more vulnerable to revision. Editors with high access levels often play a more active role in the revision processes, overseeing and refining content. Regarding the editor’s engagement, reactive editors are inclined to create recurrent revisions, reinforcing both the iterative nature of Wikipedia’s knowledge construction and the community’s moderation efforts. Keegan et al. (2016) showed that Wikipedia relies heavily on single-contributor work sessions rather than multi-contributor teamwork. Thus, edits made by solo editors who expand their edits over multiple revision sessions are also expected to trigger revisions.

Power, moderation, and collaboration

Wikipedia’s revision processes reveal the complex power dynamics underlying moderation, contestation, and collaboration. Through the stigmergic affordances of the platform, individuals can reinforce interpretations, remove alternative perspectives, or contest revisions by unilaterally changing or reverting the presented content without informing or discussing it with others. Although this can cause conflicts, vandalism, and edit wars, Wikipedia’s governance constrains the exercise of power in such actions through community moderation processes and automated tools. The quality and robustness of articles, particularly those on contentious topics, remain high (McDowell and Vetter, 2021).

This gatekeeping process is circular, with the distinction in gatekeeping between the gated and gatekeepers being fluid. Despite the uneven distribution of privileges and responsibilities among editors, all editors are embedded in different accountability networks of community moderation where one can serve as a gatekeeper in one network while being regulated by others in another network (Nahon, 2011; Pentzold, 2017). Even the most experienced editors with the highest access level do not possess the ability to oversee or control the entire body of collective knowledge as projects scale; instead, they must engage in a continuous process of mutual observation and negotiate their work with the community and other editors (Jemielniak, 2014; Matias, 2019; Rijshouwer et al., 2023).

In the revision processes, power is distributed and managed by editors’ interactions with fellow editors, the community, and the wider system that relies on their labor (Matias, 2019). Beyond the roles delineated by access levels, several scholars (Arazy et al., 2016; Arazy et al., 2015; Faraj et al., 2011; Maki et al., 2017) have identified various functional roles, such as “shaper” and “defender,” created and taken by editors to incorporate the need for collaboration in a given moment. By taking on these roles, editors respond to the evolving needs of the community, such as resolving content disputes, enforcing policies, improving article quality, or addressing vandalism, based on their understanding of where their contributions will be most valuable (Faraj et al., 2011).

The literature supports the expectation that contextual dependencies serve as key drivers of revision mechanisms. On the one hand, moderation frequently drives revisions, particularly when editors engage in disputes grounded in content or conduct policies, or when they correct misleading information. Meanwhile, moderated edits can prompt further revision as editors refine or contest changes made through moderation. On the other hand, edits that require editorial engagement and community support for refinement often undergo additional revisions.

Decoding revision mechanisms

This paper examines knowledge collaboration on climate change as an exemplary case given the inherently diverse perspectives and viewpoints involved in the discourse. Previous studies on climate change in Wikipedia (Esteves Gonçalves da Costa and Cukierman, 2019; Weltevrede and Borra, 2016) have found that only a limited number of heavily edited topics within climate change attract the most reverts and instances of vandalism. This suggests that the revision mechanisms across all related articles are stable and not heavily influenced by vandalism, conflicts, or sudden critical issues. However, these studies, which examined only a small number of Wikipedia articles, are primarily descriptive and do not estimate how specific factors, such as questioning neutrality, shape knowledge collaboration.

Quantitative studies have examined various aspects of the general processes of knowledge collaboration in Wikipedia articles, such as community performance (Ren and Yan, 2017), participatory hierarchies (Champion and Hill, 2024; Halfaker et al., 2011, 2013; Shaw and Hill, 2014), and working routines (Arazy et al., 2016, 2020). However, most of these are solely actor-centric, relying on aggregated metadata such as the number of edits made by the involved editors and focusing exclusively on the article level, neglecting the complexities of knowledge collaboration and its contextual dependencies. Some studies have applied relational event models (Bürger et al., 2023; Lerner and Lomi, 2020) or sequence analysis (Arazy et al., 2020; Keegan et al., 2016) to investigate how different editor groups revise controversial articles. These network approaches construct revisions of an article into a sequence or map revision sequences onto a dynamic network among editors. However, the mechanisms driving revisions remain underexplored. It is difficult to discern whether the formation of network structures reflects individual decisions based on content-related factors or group dynamics within the community of editors.

This study fills this research gap by investigating Wikipedia’s sentence-level revision mechanisms. In particular, it views modified sentences as carriers of collective knowledge and spaces in which contestations and collaborations occur. From a heuristic perspective, editors tend to monitor articles passively and engage in editing only when they perceive a need to add, delete, or modify content. Unlike articles, sentences embody the fundamental characteristics of Wikipedia (Benkler, 2006): modularity (consisting of small modules of tasks) and granularity (having the capacity to scale modules). I highlight the importance of accounting for both the content and context of knowledge collaborations, because the ideas, rather than the editors, are undergoing massive changes in knowledge collaboration (Faraj et al., 2011: 1230). Multiple ideas may evolve through a dynamic process of divergence and convergence, where distinct perspectives and contributions from various editors can lead to diverse development paths (Faraj et al., 2011). Therefore, I argue that analyzing sequential trajectories of revisions can provide a nuanced understanding of how each idea is shaped and maintained over time.

Building on the theoretical framework outlined in the previous sections, this study proposes an explanatory model that examines Wikipedia’s revision mechanisms at the sentence level. It identifies four dimensions that shape knowledge persistence and modification. In addition to (1) editor characteristics and (2) contextual dependencies as primary drivers of revision mechanisms that directly shape knowledge persistence and modification, this model investigates two additional dimensions, (3) content relevance and (4) temporal factors, to account for variations in revision dynamics. It is expected that content affects the level of editorial attention a sentence receives: the more relevant or contested the content, the more likely it is to undergo revision. Temporal factors reflect the stability of content, as sentences that have undergone multiple modifications are better established and thus less likely to be revised further. Together, these dimensions form the basis for analyzing Wikipedia’s revision mechanisms.

Data and methods

Data

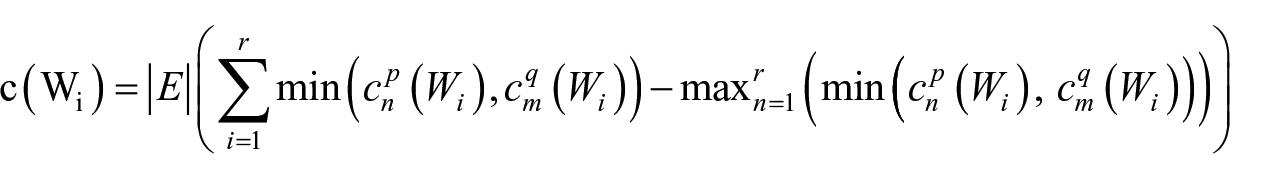

Via the Wikipedia API, I collected all revision details and the editor information for 1,000 English-language articles targeted in the WikiProject Climate change. 1 For each article, the data cover the period from the day the article was created until the day of data collection (7 December 2021). The articles span different areas that are related to climate change or relevant to climate communications, such as “Greta Thunberg,” “Antarctica,” “cattle,” and “Sustainable Development Goals.” I first identified the modified sentences from each revision and then calculated their pairwise semantic similarity. Based on semantic similarity and temporal distance, revision sequences of each sentence were created (Supplemental material). 2 Each sequence captures the evolution of a sentence, including its (1) editor characteristics, (2) context dependencies, (3) content relevance, and (4) temporal factors (Figure 1).

Figure 1. Data structure.
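The linking of modified sentences into revision sequences can be illustrated with a minimal sketch. It is not the study's pipeline: a character-based ratio from Python's `difflib` stands in for the semantic similarity measure, and the thresholds `min_similarity` and `max_gap_seconds`, like all function names, are hypothetical.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Stand-in for semantic similarity (assumption): character ratio in [0, 1]."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def build_sequences(edits, min_similarity=0.6, max_gap_seconds=365 * 24 * 3600):
    """Greedily chain modified sentences into revision sequences.

    `edits` is a list of (unix_timestamp, sentence_text) tuples. Each edit is
    appended to the sequence whose latest sentence is most similar to it,
    provided similarity and time gap pass the (hypothetical) thresholds;
    otherwise it opens a new sequence.
    """
    sequences = []
    for ts, text in sorted(edits):
        best, best_score = None, min_similarity
        for seq in sequences:
            last_ts, last_text = seq[-1]
            score = similarity(last_text, text)
            if score >= best_score and ts - last_ts <= max_gap_seconds:
                best, best_score = seq, score
        if best is None:
            sequences.append([(ts, text)])
        else:
            best.append((ts, text))
    return sequences


edits = [
    (100, "Global warming is caused by greenhouse gases."),
    (200, "Cattle farming emits methane."),
    (300, "Global warming is mainly caused by greenhouse gases."),
]
sequences = build_sequences(edits)
```

In this toy input, the first and third sentences end up in one sequence and the unrelated second sentence starts its own.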

Temporal variables

I utilized the converted Unix timestamp to process temporal variables. The time between revisions was calculated as the difference between the creation time of the revision and that of the subsequent revision. This analysis included the creation time of the first edit (start) at the sentence level, as well as the revision time (time), and the count of previous revisions (parity) for each revision.
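As an illustration, the temporal variables of one sentence could be derived as in the sketch below. The function and key names are hypothetical, and counting the first edit with a parity of 0 is an assumption.

```python
def temporal_variables(timestamps):
    """Derive per-revision temporal variables for one sentence.

    `timestamps` are Unix timestamps (seconds) of the sentence's edits,
    the earliest being its creation.
    """
    ordered = sorted(timestamps)
    start = ordered[0]
    rows = []
    for i, ts in enumerate(ordered):
        nxt = ordered[i + 1] if i + 1 < len(ordered) else None
        rows.append({
            "start": start,    # creation time of the first edit
            "time": ts,        # time of this revision
            "parity": i,       # count of previous revisions (assumed to start at 0)
            "gap_to_next": None if nxt is None else nxt - ts,
        })
    return rows


rows = temporal_variables([1_600_000_000, 1_600_086_400, 1_600_090_000])
```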

Content

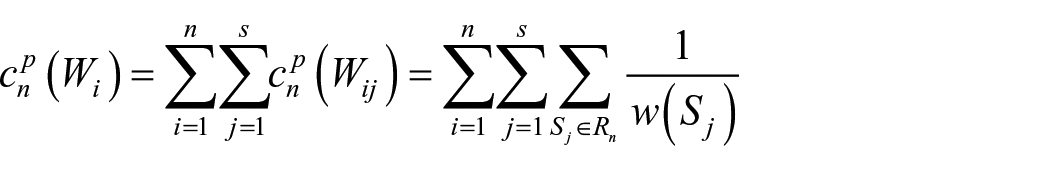

I extended Borra et al.’s (2014) approach to quantify the relevance of the individual terms and revised sentences, leveraging wikilinking and editor interactions related to the revised sentences as indicators. Wiki links 3 are textual elements that connect to other Wikipedia articles, enhancing viewers’ comprehension by linking to relevant topics, technical terms, or unfamiliar proper names (Borra et al., 2014). The method extracts terms that are highlighted by the editors through wikilinking to indicate disputed content. A wikilink can serve as a reference point for broader meanings, revealing the kinds of content that are more likely to prompt revisions. Terms are weighted based on the frequency of revisions involving sentences containing them, with these weights serving as term relevance scores. The relevance score of a sentence is calculated by averaging the relevance scores of the terms it contains.
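The scoring logic can be sketched as follows. This is a simplified illustration under stated assumptions: a raw count of revisions stands in for the term weighting used in the study, only wikilinked terms are considered, and all function names are hypothetical.

```python
import re
from collections import Counter
from statistics import mean

# Captures the target of a wikilink such as [[Target]] or [[Target|label]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def linked_terms(wikitext):
    """Return the lower-cased targets of the wikilinks in a sentence."""
    return {m.strip().lower() for m in WIKILINK.findall(wikitext)}


def term_scores(revised_sentences):
    """Weight each term by the number of revised sentences containing it.

    Simplifying assumption: a raw count replaces the study's weighting.
    """
    counts = Counter()
    for sentence in revised_sentences:
        counts.update(linked_terms(sentence))
    return counts


def sentence_score(wikitext, scores):
    """Average the scores of the linked terms in a sentence (0.0 if none)."""
    terms = linked_terms(wikitext)
    return mean(scores[t] for t in terms) if terms else 0.0


revisions = [
    "[[Greenhouse gas]]es trap heat.",
    "[[Greenhouse gas|GHG]] emissions come from [[fossil fuel]]s.",
    "[[Fossil fuel]] use is rising.",
    "[[Greenhouse gas]] levels keep rising.",
]
scores = term_scores(revisions)
score = sentence_score(revisions[1], scores)
```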

The relevance value

Applying this approach, the most disputed content is related to “climate change” 4 (term score = 31,996.9) and its physical causes, such as “greenhouse gas” (79,046.9), related policies and debates such as the “intergovernmental panel on climate change” (24,836.1), and energy-related topics such as “fossil fuel” (16,753.2). Finally, the sentence score

Context: moderation and collaboration

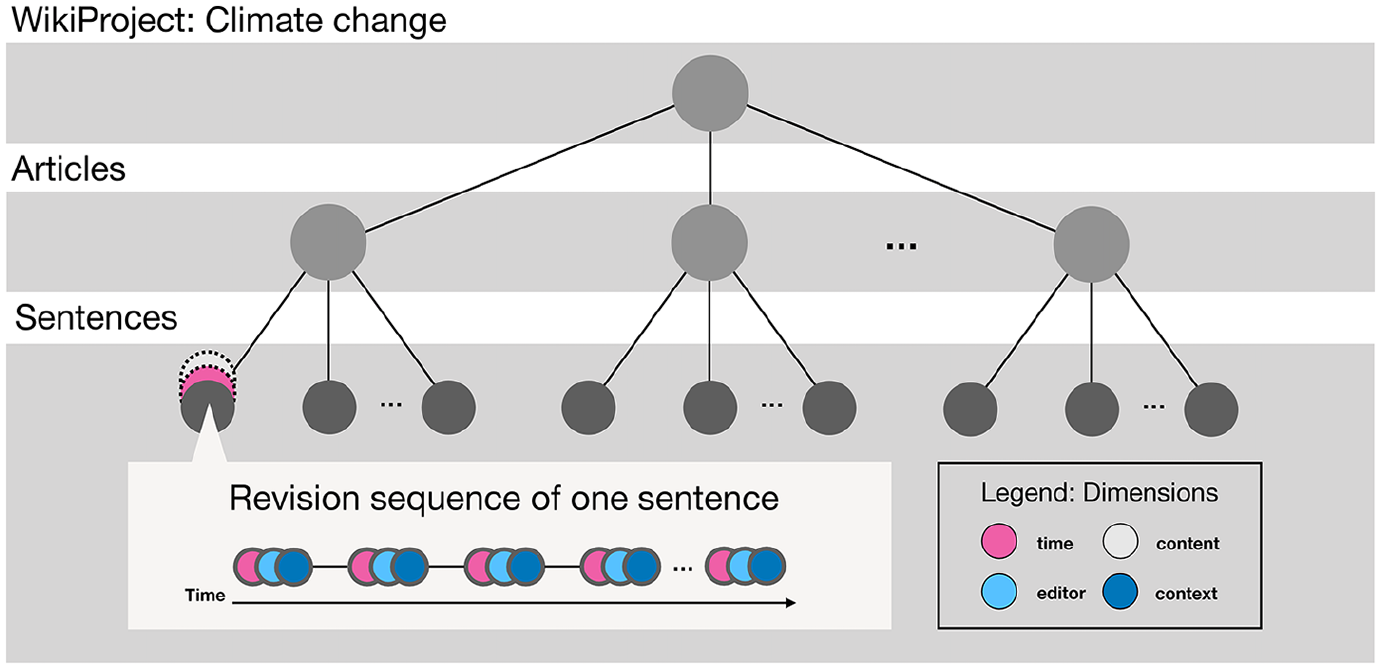

This study investigates (v1) moderation and (v2) collaboration in the edit summary 5 of each revision. While (v1) moderation is about ensuring edit quality through scrutiny of content for accuracy, neutrality, and completeness, as well as enforcing conduct policies to address disruptive behavior, (v2) collaboration involves task communication, which is characterized by coordination, responses to tasks, or updates on a task’s status. Furthermore, moderation was categorized into three detailed subcategories: (v1.1) content policies contestation when the revision challenges or disputes the content policies regarding accuracy, neutrality, or verifiability of the information presented in the sentences; (v1.2) conduct policies when the revision regulates a previous edit that fails to adhere to Wikipedia conduct policies on, for example, edit warring and harassment; (v1.3) climate denial and skepticism, when a revision identifies misleading information from climate skeptics. Finally, (v1.0) contestation encompasses general moderation edits that do not fall into any of the above subcategories.

The data to be annotated were first sampled through a cluster-based sampling strategy (Supplemental material) and then iteratively selected by applying the concept of active learning. Two trained coders annotated 13,306 edit summaries (Krippendorff’s alpha: v1 = 0.92, v1.1 = 0.86, v1.2 = 0.84, v1.3 = 1, v2 = 0.75; sample size for the reliability test = 250). Since only 0.6% of edits were related to climate denial in the annotated samples of edit summaries, v1.3 was discarded for training.

I built a binary classifier for each category by fine-tuning the pre-trained language model DistilBERT (Sanh et al., 2019). Hyperparameter tuning was conducted on the training set for 50 trials. The final model with the optimal parameter set yielding the best performance was used (see Table 1). To identify whether the found revision type corresponds to a specific sentence or multiple sentences, I compared (1) the quoted segments in each edit summary with the edited sentences from the same revision and (2) the substantive words (nouns, pronouns, verbs, adjectives, adverbs) of each sentence of one revision with that of the corresponding edit summary.
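The first of the two comparisons, matching quoted segments in an edit summary against the edited sentences, might look like the sketch below. The case-insensitive substring match and all names are assumptions made for illustration. The second comparison, based on substantive words, would require part-of-speech tagging and is not shown.

```python
import re

# Matches a segment enclosed in straight or curly double quotes.
QUOTED = re.compile(r'"([^"]+)"|“([^”]+)”')


def quoted_segments(summary):
    """Return the text inside double quotes in an edit summary."""
    return [straight or curly for straight, curly in QUOTED.findall(summary)]


def referenced_sentences(summary, sentences):
    """Indices of edited sentences containing a segment quoted in the summary."""
    segments = [seg.lower() for seg in quoted_segments(summary)]
    return [i for i, sentence in enumerate(sentences)
            if any(seg in sentence.lower() for seg in segments)]


summary = 'rm "very likely", not supported by the cited source'
sentences = [
    "Warming is very likely driven by human activity.",
    "Sea levels rose during the 20th century.",
]
matches = referenced_sentences(summary, sentences)
```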

Table 1. Model performance.

Editor’s role

Editors 6 are classified according to five mutually exclusive categories: (1) special role: non-automated editors with extended rights that are not granted automatically, such as administrator; (2) bots: automated or semi-automated accounts used by approved bots; (3) unregistered: anonymous, unregistered users; (4) blocked: editors who were previously involved in editing but were later blocked; and (5) common: the rest of the editors.

Editor’s engagement

The editor’s prior engagement with the sentence indicates whether and how often they have engaged with it. Adopting the approach of Keegan et al. (2016), each revision of a sentence in the order i is sorted into five groups according to its editor’s prior engagement: (1) solo: the editor immediately revised the sentence after their previous revision at i-1; (2) reactive editing—ping-pong: the editor engaged in ping-pong-playing editing at i-2 with another editor at i-1; (3) reactive—recent: the editor revised the sentence within a time frame (at i-3 to i-5) before the current edit; (4) reactive—inactive: the editor has made a prior revision before i-5; and (5) newcomer: the editor has no record of previous edit.
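The five engagement groups can be expressed as a small decision procedure. The sketch below is one reading of the rules above, with 0-based positions and hypothetical label strings. Checking the groups in the listed order is an assumption.

```python
def engagement(editors, i):
    """Classify the editor of revision i (0-based) of one sentence.

    `editors` lists the editor of each revision in chronological order.
    Groups are checked in the order given in the text (assumption).
    """
    me = editors[i]
    if i >= 1 and editors[i - 1] == me:
        return "solo"
    if i >= 2 and editors[i - 2] == me:
        return "reactive_ping_pong"  # another editor stepped in at i-1
    if me in editors[max(0, i - 5):max(0, i - 2)]:
        return "reactive_recent"     # active at i-3 to i-5
    if me in editors[:max(0, i - 5)]:
        return "reactive_inactive"   # last active before i-5
    return "newcomer"


history = ["A", "B", "A", "C", "C", "D", "E", "F", "A"]
labels = [engagement(history, i) for i in range(len(history))]
```

For this hypothetical history, editor A returns as a ping-pong editor at position 2 and as an inactive reactive editor at position 8, while C's second consecutive edit counts as solo.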

Shared frailty models and meta-analysis

Survival analysis has been used in fields such as medicine, to model the occurrence of diseases following treatment interventions, and mechanical engineering, to predict the risk of component failure due to changes. In this study, it was employed to estimate the revision hazard, that is, the instantaneous risk that a revision occurs at a given time for an edit with certain characteristics. This identifies which edits are more likely to “survive” revision. Compared to regression models that use continuous dependent variables, the regression models in survival analysis can handle time-to-event data and apply censoring to ensure accurate estimation for incomplete event histories. Survival analysis examines how factors affect the likelihood of a particular event relative to a reference group at a given time, using the hazard ratio (HR) as an estimate. An HR greater than 1 indicates that an individual or group has a higher hazard rate than the reference group, whereas an HR lower than 1 suggests a lower hazard rate. For example, an HR of 1.2 implies that the hazard rate for an individual or group is 20% higher than that of the reference group, meaning the event is expected to happen sooner.
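The arithmetic behind this interpretation is shown in the short sketch below. It assumes a Cox-type model in which the HR is the exponential of an estimated log-hazard coefficient, and a normal-approximation 95% confidence interval. The coefficient and standard error in the example are made up.

```python
import math


def hazard_ratio(beta, se, z=1.96):
    """Return (HR, lower, upper) from a log-hazard coefficient and its standard error."""
    return math.exp(beta), math.exp(beta - z * se), math.exp(beta + z * se)


def percent_change(hr):
    """Percentage difference in hazard rate relative to the reference group."""
    return (hr - 1.0) * 100.0


# Hypothetical coefficient chosen so that HR = 1.2.
hr, lower, upper = hazard_ratio(beta=math.log(1.2), se=0.05)
change = percent_change(hr)  # about +20%: a 20% higher hazard than the reference
```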

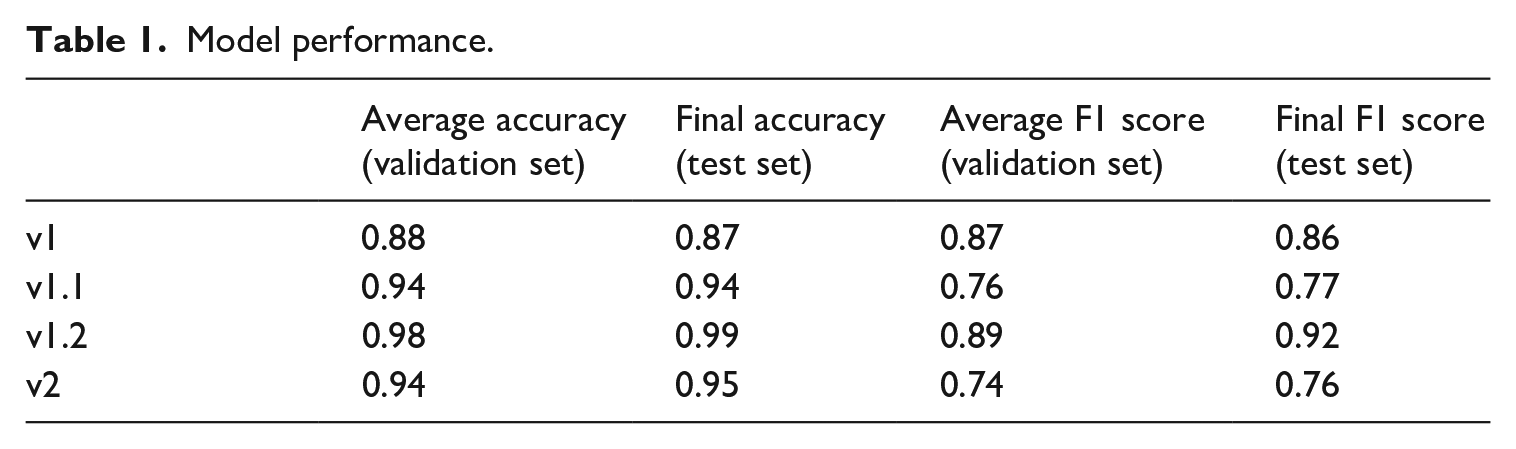

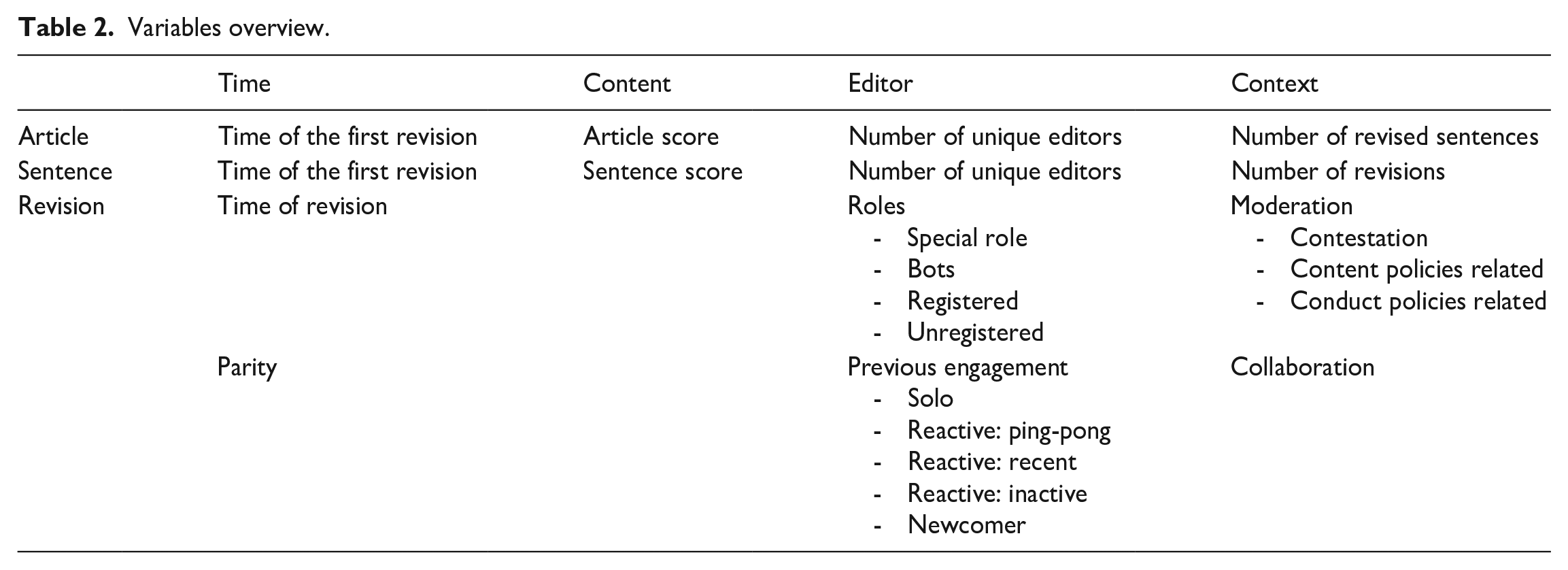

The data structure of this study is nested, with revisions grouped at the sentence level and sentences grouped at the article level. Therefore, the shared frailty model, a variant of survival analysis that accounts for the dependence among the survival times of recurrent events (Balan and Putter, 2020; Therneau, 2015), was applied. Conditional on the frailties of the sentences, the frailty model differentiates the revision hazards for revisions of each sentence. The dependent variable was the time and occurrence of revisions. At the sentence level, the independent variables (Table 2) were the content relevance (content) and the start time of the first edit (start). At the revision level, the variables were the revision time (time) and the count of previous revisions (parity). In addition, not only the characteristics of individual edits that were revised (editsrevised) but also those of their follow-up edits (editsrevising) impact the revision hazard. Thus, I incorporated the editor’s prior activity (engagement), access level (role), and contextual information (moderation/collaboration) as key variables for both the editsrevised and the editsrevising.

Table 2. Variables overview.

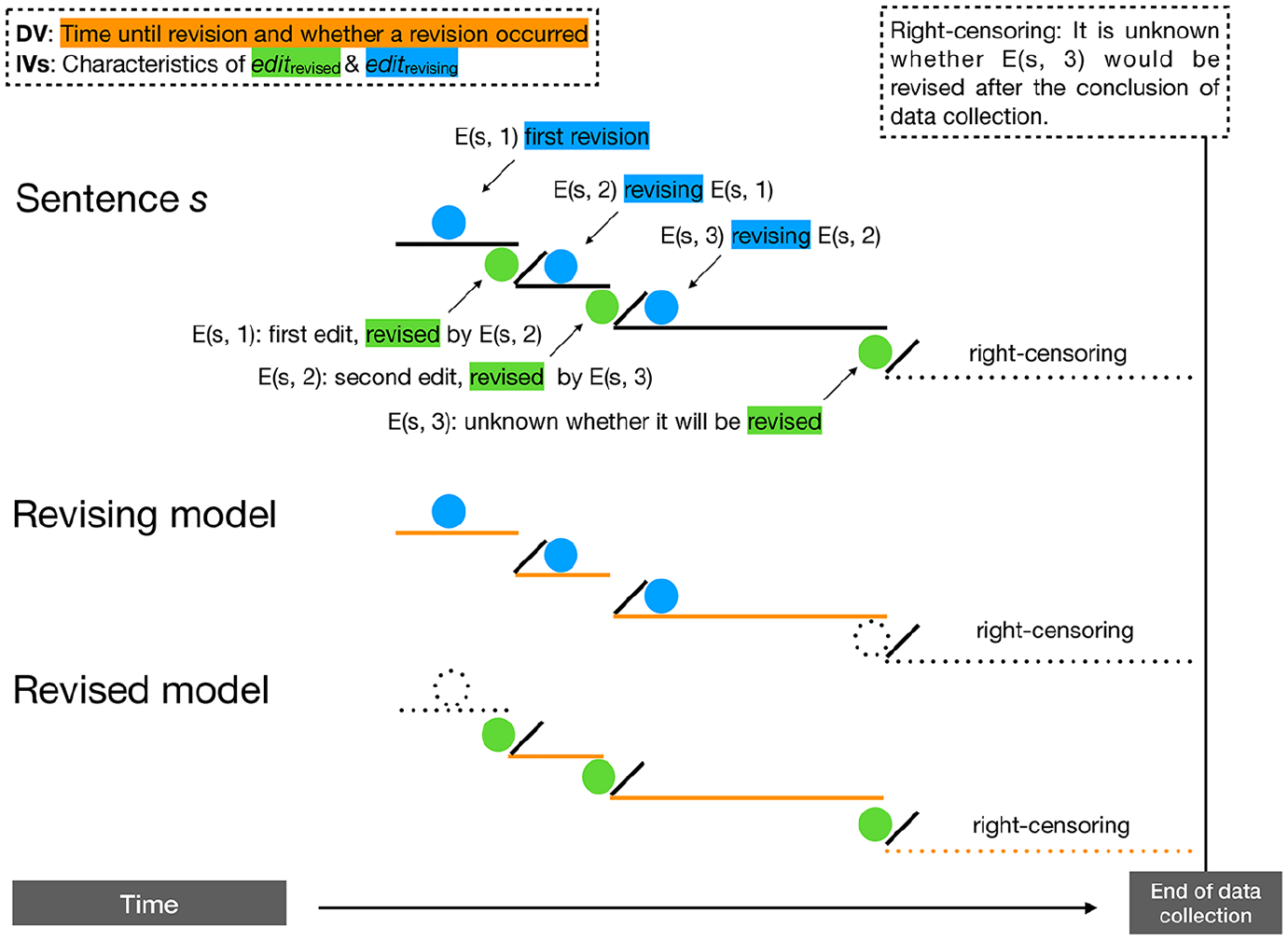

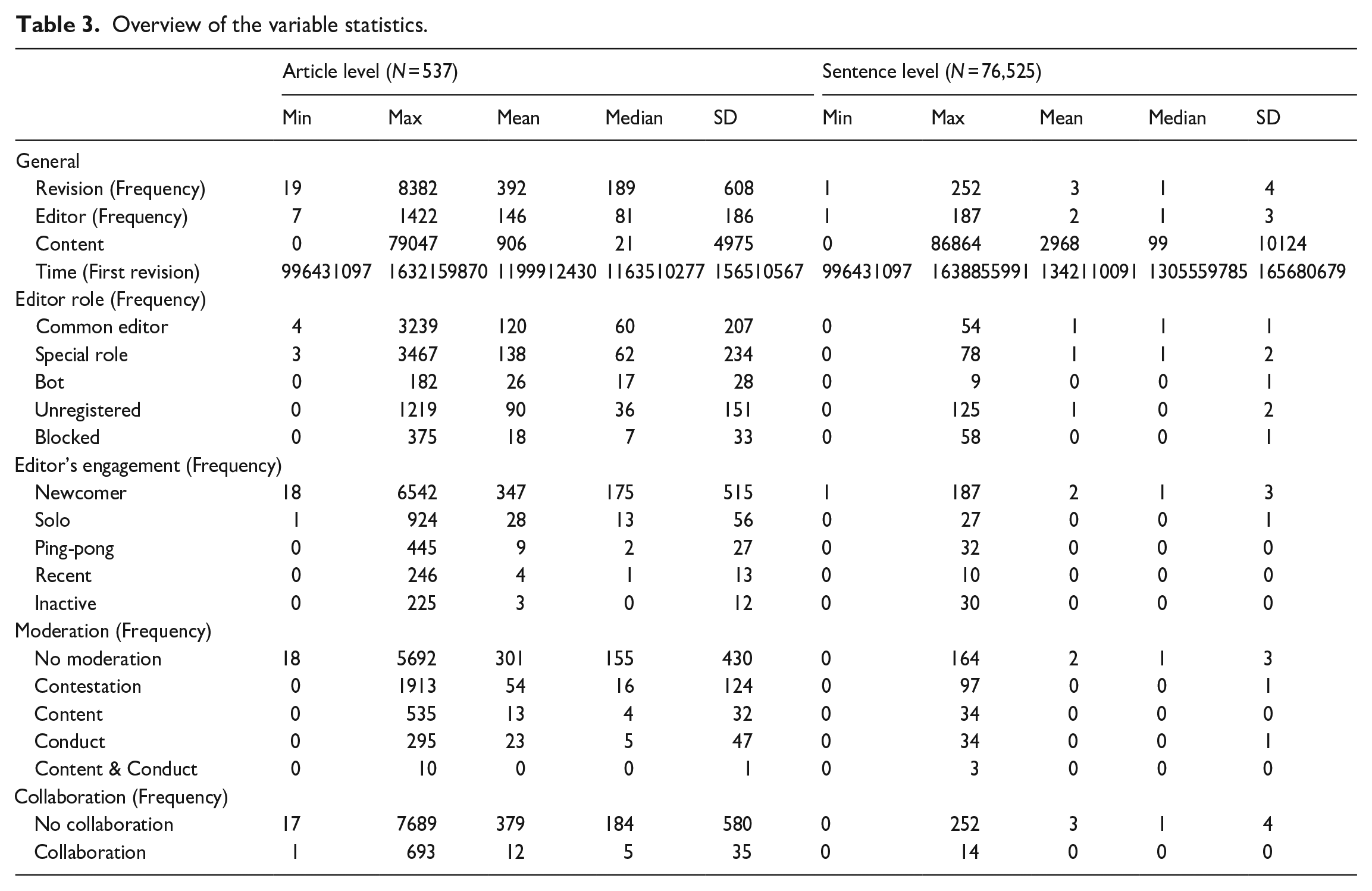

I fitted models for the revisions of the sentences of each article and employed meta-analytic approaches to synthesize the effect sizes of each model across the articles. The decision to fit the model article by article was made to acknowledge that each article operates within its own ecosystem with different flows of engaged editors, popularity, and content (Lerner and Lomi, 2020). For each article, I estimated (1) a revising model that investigates which editsrevising are more likely to occur and (2) a revised model that studies which editsrevised are more likely to survive without being revised. The time studied was the duration between consecutive revisions. In the revising model, the interval examined ran from the edit prior to the first revision until the last revision. In the revised model, the interval ran from the first revision until the end of the study’s data collection, and edits that were not subject to further revision were right-censored (see Figure 2). The frailty models were estimated using the R package coxme (Therneau, 2015). After excluding the models that failed to converge, primarily because of insufficient data points to identify various effects, the revising models of 478 articles and the revised models of 516 articles (Supplemental material) were valid. Together, they cover 537 articles with 76,525 sentences modified 210,391 times in 140,593 revisions by 48,643 editors (Table 3).
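Right-censoring in the revised model can be made concrete with a small sketch that turns the edit timestamps of one sentence into (duration, event) pairs. The function name is hypothetical, and treating the end of data collection as midnight UTC on 7 December 2021 is an assumption.

```python
from datetime import datetime, timezone

# End of data collection; the exact time of day and time zone are assumed.
STUDY_END = datetime(2021, 12, 7, tzinfo=timezone.utc).timestamp()


def revised_intervals(timestamps, study_end=STUDY_END):
    """Return one (duration, event) pair per edit of a sentence.

    event = 1 if the edit was revised by a later edit;
    event = 0 if it was still standing at `study_end` (right-censored).
    """
    ordered = sorted(timestamps)
    pairs = []
    for i, ts in enumerate(ordered):
        if i + 1 < len(ordered):
            pairs.append((ordered[i + 1] - ts, 1))
        else:
            pairs.append((study_end - ts, 0))
    return pairs


pairs = revised_intervals([0, 10, 25], study_end=100)
```

Only the last edit of each sentence is censored here. Its duration still enters the likelihood, which is what allows incomplete event histories to be used.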

Figure 2. Visualization of the independent variables (IVs) and dependent variable (DV) in the two frailty models used in the study, illustrated with one article containing a single modified sentence. For each revision of sentence s at a given step i, the pair consisting of the current revision E (s, i) (highlighted in blue) and the preceding revision E (s, i–1) (highlighted in green) influences the revision hazard.

Table 3. Overview of the variable statistics.

The effect sizes of each model were synthesized using the packages meta (Schwarzer, 2007) and dmetar (Harrer et al., 2019) in R. For each variable, vote counting, random-effects meta-analysis, a between-article heterogeneity test, and meta-regression analysis were conducted. The vote-counting approach provided an overview of the direction and statistical significance of the HRs. To account for the varying characteristics of Wikipedia articles, I employed random-effects models incorporating between-article heterogeneity to pool the effect sizes across the articles. I then conducted between-article heterogeneity tests to estimate how much each effect size varied across the articles. Meta-regression analyses examined whether article-level factors moderated the effect sizes on the revision hazards; the between-article moderators were article score, 7 number of revised sentences, number of unique editors, and time of the first revision. Moreover, 31 articles in the dataset were under indefinite semi-protection, so non-autoconfirmed editors, whose accounts were less than 4 days old or who had made fewer than 10 edits, could not edit them. As protection could change the editing dynamics, especially for categories such as editor’s role and moderation (Ajmani et al., 2023; Hill and Shaw, 2015), subgroup analyses were conducted.
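To make the synthesis steps concrete, the sketch below implements vote counting and random-effects pooling of article-level log hazard ratios in plain Python. It is an illustration rather than the R workflow used in the study: the DerSimonian-Laird estimator of between-article variance is an assumption (the text does not state which estimator was used), and the input values are invented.

```python
import math


def vote_count(log_hrs, ses, z=1.96):
    """Count significantly positive and negative effects (two-sided, 5% level)."""
    pos = sum(1 for b, s in zip(log_hrs, ses) if b - z * s > 0)
    neg = sum(1 for b, s in zip(log_hrs, ses) if b + z * s < 0)
    return pos, neg


def pool_random_effects(log_hrs, ses):
    """Pool log hazard ratios with the DerSimonian-Laird estimator (assumed).

    Returns (pooled HR, lower 95% bound, upper 95% bound, tau^2, I^2).
    """
    k = len(log_hrs)
    w = [1 / s**2 for s in ses]                      # inverse-variance weights
    fixed = sum(wi * b for wi, b in zip(w, log_hrs)) / sum(w)
    q = sum(wi * (b - fixed) ** 2 for wi, b in zip(w, log_hrs))  # Cochran's Q
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)               # between-article variance
    i2 = max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0
    w_re = [1 / (s**2 + tau2) for s in ses]          # random-effects weights
    pooled = sum(wi * b for wi, b in zip(w_re, log_hrs)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    return (math.exp(pooled), math.exp(pooled - 1.96 * se),
            math.exp(pooled + 1.96 * se), tau2, i2)


# Invented article-level estimates for one variable.
log_hrs = [math.log(1.5), math.log(1.7), math.log(1.6)]
ses = [0.1, 0.1, 0.1]
votes = vote_count(log_hrs, ses)
pooled_hr, lower, upper, tau2, i2 = pool_random_effects(log_hrs, ses)
```

Pooling happens on the log scale and is exponentiated at the end, so the pooled estimate and its interval are reported as HRs.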

Findings

Vote counting and random-effects analysis

Temporal and content factors

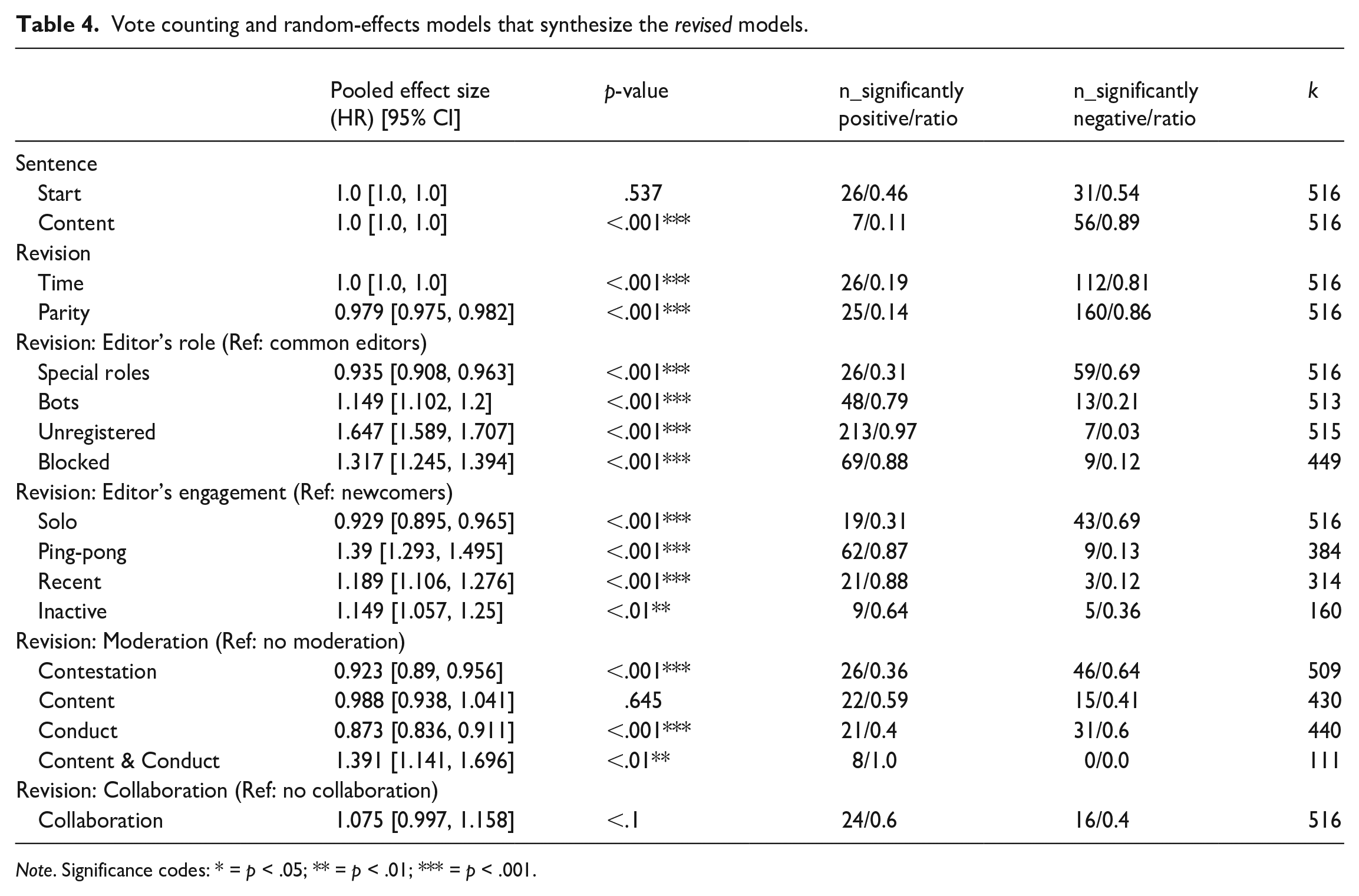

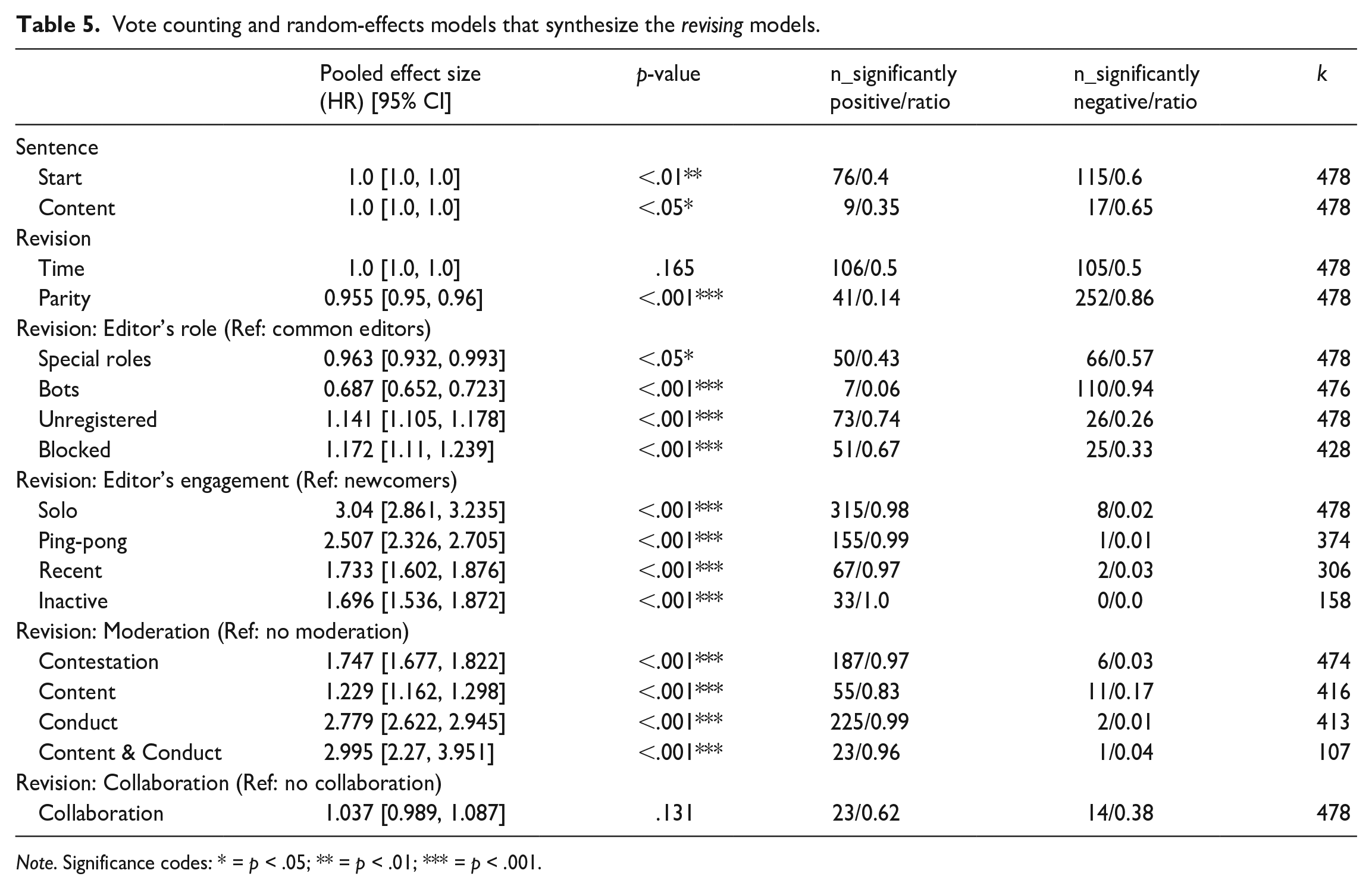

According to the results (see Tables 4 and 5), the pooled effect sizes of all variables related to time, parity, and content were relatively small (HRs ≈ 1.0), likely due to their large scales. At the sentence level, the revision hazard of the editsrevising was lower when the first revision started later (115 significantly negative cases vs 76 significantly positive cases). Higher sentence content scores were associated with lower revision hazards for both the editsrevising (17 negative cases) and the editsrevised (56 negative cases). At the revision level, more articles showed significantly negative than significantly positive effects of the revision time of the editsrevised (122 negative cases). Each additional previous revision (parity) was associated with a lower revision hazard in most articles, for both the editsrevised (HR = 0.979, 95% confidence interval [CI]: [0.975, 0.982]; 160 cases) and the editsrevising (HR = 0.955, CI: [0.95, 0.96]; 252 cases).

Table 4. Vote counting and random-effects models that synthesize the revised models.

Note. Significance codes: * = p < .05; ** = p < .01; *** = p < .001.

Table 5. Vote counting and random-effects models that synthesize the revising models.

Note. Significance codes: * = p < .05; ** = p < .01; *** = p < .001.

Editor’s role

The editsrevised of unregistered editors (HR = 1.647, CI: [1.589, 1.707]; 213 positives), blocked editors (HR = 1.317, CI: [1.245, 1.394]; 69 positives), and bots (HR = 1.149, CI: [1.102, 1.2]; 48 positives) were more likely to be revised than those of common editors. By contrast, the editsrevised submitted by editors with special roles (HR = 0.935, CI: [0.908, 0.963]; 59 negatives) had lower revision risks. In the models of the editsrevising, unregistered editors (HR = 1.141, CI: [1.105, 1.178]; 73 positives), despite the small overall effect size, and blocked editors (HR = 1.172, CI: [1.11, 1.239]; 51 positives) were positively associated with revision hazard, whereas bots, with a large overall effect size (HR = 0.687, CI: [0.652, 0.723]; 110 negatives), and editors with special roles (HR = 0.964, CI: [0.932, 0.993]; 66 negatives) were primarily negatively associated with the occurrence of revisions.
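For readers less familiar with hazard ratios, each pooled HR can be read as a percentage change in revision hazard relative to the reference group (common editors). The sketch below applies this conversion to the pooled estimates reported in this subsection; the dictionary keys are illustrative labels, not variable names from the study.

```python
def percent_change_in_hazard(hr):
    """Convert a hazard ratio into a percentage change relative to the reference group."""
    return (hr - 1.0) * 100.0

# Pooled HRs as reported in the text; the labels are illustrative only.
pooled_hrs = {
    "revised: unregistered": 1.647,
    "revised: blocked": 1.317,
    "revised: bot": 1.149,
    "revised: special role": 0.935,
    "revising: bot": 0.687,
}

for label, hr in pooled_hrs.items():
    print(f"{label:24s} HR = {hr:.3f} -> {percent_change_in_hazard(hr):+.1f}% hazard")
```

Read this way, edits by unregistered editors carry a revision hazard roughly 65% higher than those of common editors, while bots as revising editors are associated with a hazard roughly 31% lower.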

Editor’s engagement

All variables in editor engagement of the editsrevising had positive pooled effect sizes and were statistically significant. Compared to the editsrevising by newcomer editors, revision hazards were higher when the editsrevising were made by reactive editors who had previously edited a sentence. Editsrevising by reactive editors, especially solo editors who extended their previous edits to another revision (HR = 3.04, CI: [2.861, 3.235]; 315 positives) or ping-pong-playing editors who modified the editsrevising right before (HR = 2.507, CI: [2.326, 2.705]; 155 positives) showed a highly positive association with revision hazards. The largest effect size of the editsrevised in the category was among ping-pong-playing editors (HR = 1.39, CI: [1.293, 1.495]; 62 positives), while the smallest was among solo editors (HR = 0.929, CI: [0.895, 0.965]; 43 negatives), implying that edits made by solo editors are less likely to be revised.

Contextual dependencies: moderation and collaboration

Editsrevising were more likely to occur when they moderated a previous edit, particularly when this moderation was based on content and conduct policies (HR = 2.995, CI: [2.27, 3.951]; 23 cases), conduct policies (HR = 2.779, CI: [2.622, 2.945]; 225 positives), or general contestation (HR = 1.747, CI: [1.677, 1.822]; 187 positives). Revision hazard was also positively related to the editsrevising that revised previous edits violating content policies (HR = 1.229, CI: [1.162, 1.298]; 55 cases). By contrast, the effects of contestation in the editsrevised were not commonly found in most articles, where the pooled effect size of the moderation based on content policies (p = .645) was not significant. While editsrevised that were modified due to contestation (HR = 0.923, CI: [0.89, 0.956]; 46 negatives) and conduct-policy-related moderation (HR = 0.873, CI: [0.836, 0.911]; 31 negatives) had lower revision hazards, those moderated based on content and conduct policies had higher revision hazards (HR = 1.391, CI: [1.141, 1.696]; 8 positives). Collaboration-related edits were not significantly associated with revision hazard.

Heterogeneity analysis, meta-regression, and subgroup analysis

The between-article heterogeneity tests, which assessed how much each variable’s effect size varied across articles, showed that effect size heterogeneity was statistically significant for all variables, with certain variables’ effects greatly depending on specific article-level characteristics (Supplemental material). Meta-regression was conducted with article-level characteristics as between-article moderators of the effect sizes on revision hazards: article score, number of revised sentences, number of unique editors, and time of the first revision (Supplemental material). Article score was the only moderator that did not account for any between-article heterogeneity in the effect size estimates. Moreover, the subgroup analysis showed that the effect difference between protected articles and non-protected articles was significant for multiple variables (Supplemental material). Notably, the risks of revisions for editsrevised related to contestation and conduct-policy enforcement or for editsrevised made by special role editors or bots were smaller in protected articles than in non-protected articles, while revisions were more likely to occur due to the contestation of editsrevising in protected articles than in non-protected articles.
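The logic of the heterogeneity and subgroup tests can be sketched as follows. Cochran's Q measures how far the per-article estimates scatter around their inverse-variance weighted mean, I² expresses the share of that scatter beyond what sampling error alone would produce, and a z-test compares the pooled estimates of two subgroups. All input values below are hypothetical and are not taken from the study or its supplemental material.

```python
import math

def cochran_q(log_hrs, ses):
    """Cochran's Q: weighted squared deviations from the inverse-variance mean."""
    w = [1.0 / se ** 2 for se in ses]
    mean = sum(wi * y for wi, y in zip(w, log_hrs)) / sum(w)
    return sum(wi * (y - mean) ** 2 for wi, y in zip(w, log_hrs))

def i_squared(q, k):
    """Percentage of total variation attributable to between-article heterogeneity."""
    if q <= 0:
        return 0.0
    return max(0.0, (q - (k - 1)) / q) * 100.0

def subgroup_difference_z(log_hr_a, se_a, log_hr_b, se_b):
    """z-statistic for the difference between two pooled subgroup estimates."""
    return (log_hr_a - log_hr_b) / math.sqrt(se_a ** 2 + se_b ** 2)

# Hypothetical per-article log HRs with equal standard errors.
q = cochran_q([0.5, 0.1, 0.4], [0.1, 0.1, 0.1])
print(f"Q = {q:.2f}, I^2 = {i_squared(q, 3):.1f}%")

# Hypothetical pooled estimates for protected vs non-protected articles.
z = subgroup_difference_z(math.log(0.85), 0.03, math.log(0.95), 0.02)
print(f"subgroup difference: z = {z:.2f}")
```

With exactly two subgroups, the squared z-statistic equals the between-subgroup Q statistic, so the two ways of testing the difference agree.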

Summary

Across the 537 articles, statistically significant pooled effect sizes were more common in the revising models (examining which editsrevising are likely to occur) than in the revised models (analyzing which editsrevised are likely to persist). The significant effect sizes of the editsrevising mainly fell into two category groups: editors’ engagement and contextual dependencies. Regarding editors’ engagement, solo and ping-pong-playing editors were more likely to contribute to revisions than newcomer editors. While newcomer editors who modified sentences only once were the most pervasive, their associated revision hazard was low. Regarding contextual dependencies, revision hazard was higher when editsrevising responded to violations of conduct policies or of both content and conduct policies.

The editsrevised of reactive editors were positively associated with revision hazard. The moderation-based editsrevised, excluding those driven by content and conduct policy enforcement, were negatively related to revision hazard, suggesting that conflicts are relatively uncommon and do not extend over edit sessions. The category group editor’s role was crucial in the models of editsrevised, as blocked and unregistered editors, whose edits were more likely to be revised, destabilized collective knowledge construction. By contrast, editsrevised by special role editors had lower revision risks. This echoes previous Wikipedia research (Shaw and Hill, 2014) on power concentration showing that experienced editors make more reliable edits that are unlikely to be revised. However, special role editors or bots as characteristics of the editsrevising did not necessarily increase the likelihood of revision. Compared to those of common editors, edits made by bots had a higher risk of being revised.

The association between collaboration and revision hazards was not statistically significant in the models of editsrevised or editsrevising. This supports previous research (Bipat et al., 2018; Morgan et al., 2013; Viegas et al., 2007) indicating that indirect coordination is more common on Wikipedia’s talk pages. Edits whose comments point to the “talk” page as a starting point for discussion, or that result from negotiations on the talk page, are found less often than moderated edits conducted independently. Regarding temporal and content factors, revisions tended to occur when the first revision of the sentence started late or when content relevance was higher. By contrast, revisions were less likely to occur when the editsrevised were conducted later or frequently.

Conclusion and discussion

In response to Faraj et al.’s (2011: 1235) call to examine “the connections of ideas along the flow of people” in knowledge collaboration, I explored the challenge of understanding how collective knowledge is shaped and maintained on Wikipedia by shifting from an actor-centric focus to a sentence-level analysis of revision mechanisms. By considering modified sentences as carriers of collective knowledge, I investigated how individual pieces of knowledge evolve within Wikipedia’s ecosystem. I identified and analyzed the editorial, contextual, content, and temporal factors that influence the revision mechanisms by employing survival analysis and meta-analysis to model their impact.

The findings highlight tensions between fostering a self-organized culture and managing the project efficiently and coherently, with responses emerging through peer and bureaucratic control. On the one hand, conflicts arise among editors over how knowledge on Wikipedia should be shaped, as revisions are mainly triggered by moderation and facilitated by reactive editors consistently monitoring revisions. On the other hand, Wikipedia’s open model still ensures the stability and maintenance of knowledge through its revision mechanisms. While some revisions stem from general contestations, many are driven by efforts to regulate collective knowledge through established community policies and automated tools. Disruptive edits, such as those of blocked editors and those that violate community-defined rules, are effectively identified and corrected. Content can remain stable even amid frequent revisions, as edits that have previously been moderated or contested are less likely to be revised.

Epistemic power in the revision processes primarily resides in and is negotiated through collective endeavors of mutual observation, iterative refinement, and community moderation. Editors’ engagement in revising sentences, particularly through solo and ping-pong-style revisions, significantly impacts subsequent revisions. This highlights the presence of self-regulation and editorial oversight. Meanwhile, editors’ roles are not as crucial as their engagement or moderation when prompting revisions. Although edits made by elite editors have a lower risk of being revised, revisions are not necessarily driven by them. This aligns with previous research (Jemielniak, 2014; Pentzold, 2017), which suggests that while elite editors may possess more power, this power’s scope is limited. Therefore, the epistemic power in Wikipedia is not concentrated in the hands of a few elite editors with special roles but rather distributed and negotiated through collective processes enforced by highly committed editors who constantly surveil their knowledge areas, ready to engage in conflicts and contestations that shape the very fabric of collective knowledge.

When reflecting on climate change as a case, it is unsurprising that climate change, mitigation policies, and energy-related issues are the most relevant topics when editors disputed their revisions. However, the association between revision and content relevance at the sentence level was only slightly positive in a few instances. Similarly, content relevance at the article level did not moderate any of the variables. Accounting for the impacts of moderation-driven revisions and prior editor engagement revealed that Wikipedia’s knowledge collaborations are shaped less by subject-specific concerns and more by the broader imperative to converge collective knowledge in line with the shared understanding of engaged editors at a given moment. This dynamic suggests that the process of knowledge construction is driven more by ongoing community moderation than by the inherent relevance of the content itself.

As for generalizability, this study’s analysis of a large-scale Wikipedia dataset related to climate change confirms several previous findings (Esteves Gonçalves da Costa and Cukierman, 2019; Weltevrede and Borra, 2016). Given its broad scope spanning a diverse range of articles that contribute to communicating climate change and other knowledge areas, this study provides insights into collaborative knowledge patterns that may be generalizable to other peer-based knowledge production contexts. However, caution is warranted when extrapolating the study’s results, as Wikipedia’s editor base is heterogeneous in expertise and commitment. In addition, the ideas, involved editors, and stages of collaboration also contribute to varied revision mechanisms. Indeed, heterogeneity tests among article models suggest that each article functions within its own unique ecosystem, underscoring the importance of considering context-specific factors when analyzing knowledge collaborations.

The findings further suggest that contestation and conflict over collective knowledge building are not endless, even in highly contentious subjects like climate change. As argued by McDowell and Vetter (2021), stable consensus can emerge through continuous moderation efforts guided by policies and rules and with millions of viewers engaging with the content. Unlike social media platforms, where epistemic power is often shaped by social networking, influential users, or viral content, Wikipedia’s power dynamics are rooted in the iterative process of constructing and moderating ideas, shaped, reconciled, and revised through an ongoing convergence of participating editors (Faraj et al., 2011). Despite the presence of a hierarchical structure among editors based on their commitment and previous contributions, power is not centralized. Instead, it is broadly distributed across accountability networks of community moderation (Nahon, 2011; Pentzold, 2017).

Meanwhile, it is essential to recognize the variability and diversity of knowledge on Wikipedia and the assemblage that constructs it. The blurring boundaries between citizen and professional, the engendering temporary roles of contributors, the dissipating context of knowledge, and the fluidity of organizational structures (Faraj et al., 2011; Neuberger et al., 2023) all contribute to multifaceted revision mechanisms that maintain resilience against vandalism and misinformation. Resilience emerges from a complex interplay of knowledge, actors, rules, algorithmic tools, and technological systems. However, systemic biases and inclusion challenges persist, as Wikipedia primarily relies on secondary sources that may already reflect existing inequalities. These issues are further amplified by the composition of its editing community, which influences what knowledge is included or excluded (Martini, 2024; McDowell and Vetter, 2021). As Wikipedia continues to serve as a foundational source for large language model training and remains widely cited across social media as an authoritative reference, examining how its representation of knowledge is constructed and maintained becomes crucial. By examining the intricacies of knowledge collaboration and the underlying power dynamics, we can better evaluate Wikipedia’s resilience, adaptability, and broader impact on shaping collective understanding of the world.

Limitations

To advance future research on revision mechanisms in Wikipedia, it is crucial to acknowledge the limitations of this study. First, while it identified key variables influencing revision mechanisms, knowledge collaboration on Wikipedia remains highly complex and multifaceted; the identified factors cannot fully capture the intricate mechanisms underlying revisions. Editor categorization could be refined by, for example, distinguishing superusers or task forces from specific projects, as well as incorporating other nuanced dimensions, while accounting for correlations among the variables. Second, the inference of editorial context related to moderation and collaboration was based solely on edit summaries. The full scope of discussions and coordination on the talk page was not adequately represented. Future research could leverage large language models and text-mining technology to establish connections between discussions on the talk page and resulting revisions and to identify revisions driven by indirect coordination. Third, the computational requirements of the frailty model, combined with the large dataset’s size and nested structure, posed challenges for data analysis. To manage this complexity, I employed a two-step approach: first fitting frailty models to each article and then applying meta-analysis to synthesize the results. However, efficient frailty analysis that can accommodate multi-level analysis in large-scale datasets remains an area of exploration. Finally, the methods used in this study did not fully explore the variability between individual Wikipedia articles. Researchers could employ clustering analysis to group individual articles’ models based on shared characteristics, such as similar term score distributions, and compare the revision mechanisms across distinct article groups to uncover further patterns. In addition, qualitative research focusing on specific sentences and topics that undergo frequent revisions or have high content scores could provide nuanced insights into content negotiations and epistemic debates.

Supplemental Material

Supplemental material, sj-pdf-1-nms-10.1177_14614448251336418 for Decoding revision mechanisms in Wikipedia: Collaboration, moderation, and collectivities by Xixuan Zhang in New Media & Society

Footnotes

Acknowledgements

I would like to thank my advisors and colleagues for their valuable comments and suggestions. I am also grateful to Angelika Juhász and Svea Komm for their dedicated work in annotating the dataset, and to the HPC Service of FUB-IT, Freie Universität Berlin, for providing computing time. I extend my sincere thanks to the reviewer for their constructive feedback and thoughtful suggestions, which significantly improved this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Federal Ministry of Education and Research of Germany, funding code 16DII125.
