Abstract

The emergence of deepfakes has raised concerns among researchers, policymakers, and the public. However, many of these concerns stem from alarmism rather than well-founded evidence. This article provides an overview of what is currently known about deepfakes based on a systematic review of empirical research. It also examines and critically assesses regulatory responses globally through qualitative content analysis of policy and legal documents. The findings highlight gaps in our knowledge of deepfakes, making it difficult to assess the appropriateness of and need for regulatory action. While deepfake technology may not introduce entirely new and unique regulatory problems at present, it can amplify existing problems such as the spread of non-consensual pornography and disinformation. Effective oversight and enforcement of existing rules, along with careful consideration of required adjustments, will therefore be crucial. Altogether, this underscores the importance of more empirical research into the evolving challenges posed by deepfakes and calls for adaptive policy approaches.

Introduction

“You thought fake news was bad? Deepfakes are where truth goes to die” (Schwartz, 2018). Such headlines have been widely circulating with the advent of the deepfake phenomenon. The term “deepfake” first appeared in 2017, coined by a Reddit user to describe pornographic content apparently featuring the faces of famous women (McCosker, 2022). Since then, it has become a buzzword for manipulated media that rely on neural networks trained on extensive datasets to “learn” patterns that enable the imitation of real individuals and the synthesizing of fictional ones (Haller, 2022). Due to the use of this technology, deepfakes are said to be distinct from previous forms of falsified media, specifically in terms of scale, scope, and accessibility (Shahzad et al., 2022).

Deepfakes have caused widespread concern. However, much of the current debate is driven more by anecdotal and speculative alarmism than by well-founded evidence and reasonable predictions (Kalpokas and Kalpokiene, 2022). Journalists have painted a dystopian picture and created a sense of impending doom (Gosse and Burkell, 2020; Wahl-Jorgensen and Carlson, 2021; Westerlund, 2019; Yadlin-Segal and Oppenheim, 2021). This is accompanied by fear-mongering by the Artificial Intelligence (AI) industry (Nature, 2023). Prominent tech executives have called for a temporary halt to the development of advanced AI (Pause Giant AI and Experiments: An Open Letter, 2023), and Microsoft’s and Google’s CEOs have publicly warned about the threats posed by deepfakes (Bartz, 2023). While this could be interpreted as a corporate response to demands for greater accountability, there may be a hidden agenda to stifle emerging competition (Bennett, 2023) and profit from “panic-marketing” (Weiss-Blatt, 2023).

Despite alarmist warnings that deepfakes will “wreak havoc on society” (Toews, 2020) and pressure on governments to intervene (e.g. Open Letter: Disrupting the Deepfake Supply Chain, 2024), regulators have been more hesitant in their responses. For example, the European AI Act classifies deepfakes as “limited risk AI systems” and sets minimal transparency requirements. Legislation criminalizing the distribution of certain deepfakes has been enacted in the United States and in China, albeit accompanied by concerns that governments could use such rules to curtail free speech and control information flows (Hine and Floridi, 2022).

A growing research field discusses deepfakes’ potential harm (see, for example, Chesney and Citron, 2019a for an overview); however, much less is known about the empirical evidence that substantiates these concerns. There are some literature reviews, but they focus exclusively on qualitative studies (Vasist and Krishnan, 2022b) or cover only a limited number of empirical studies due to their publication date (Godulla et al., 2021; Vasist and Krishnan, 2022a). Moreover, there are no systematic overviews of dedicated regulatory responses to deepfakes. This article addresses these gaps: it provides an up-to-date systematic literature review of what is currently empirically known about deepfakes and maps the emerging regulatory landscape through in-depth qualitative content analysis of policy and legal documents. The aim is to offer a comprehensive understanding of the deepfake phenomenon and to provide directions for future research and policymaking.

Systematic literature review

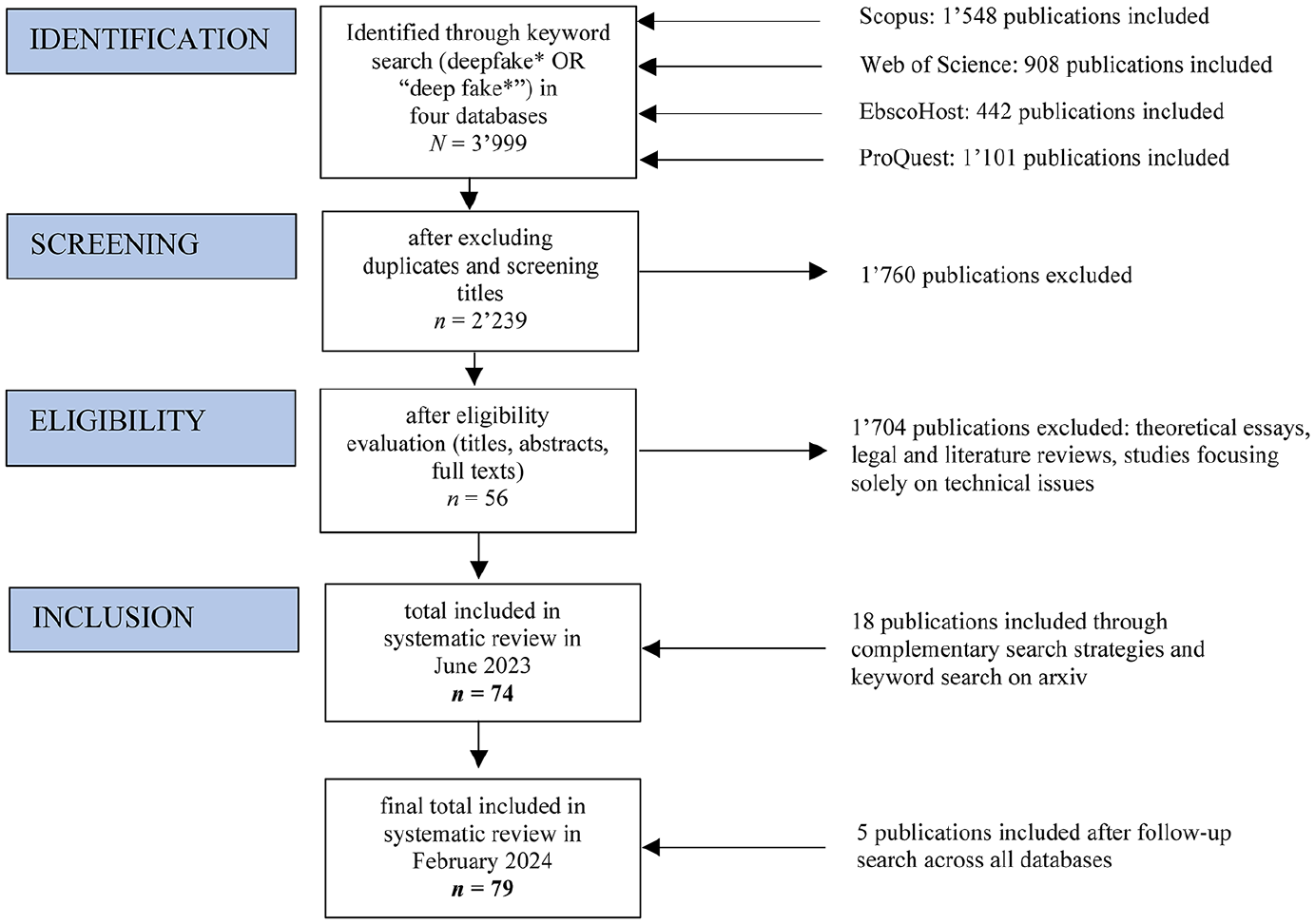

Considering calls for empirical evidence for regulation, we conducted a systematic literature review of empirical research on deepfakes to consolidate existing knowledge regarding their current uses, effects, consequences, and regulatory hurdles. Relevant literature was identified following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) 2020 guidelines (Page et al., 2021; Figure 1). A detailed search of four databases (Scopus, Web of Science, EbscoHost, ProQuest) was conducted in June 2023. The scope was not confined to any specific research field as a systematic review aims to synthesize research from across disciplines. To ensure inclusion of all relevant literature, we conducted a broad search using the keywords “deepfake*” and “deep fake*.” Search results were restricted to journal articles and conference proceedings in English. All identified records (n = 3999) were exported to Zotero and screened to remove duplicates and inaccessible or obviously irrelevant studies. Next, eligibility criteria were defined and evaluated based on titles, abstracts, and, if necessary, full texts. During this process, theoretical essays, legal and literature reviews, and studies dealing exclusively with technical issues were excluded, as the goal was to synthesize empirical research on deepfakes. Emphasizing empirical research may inadvertently favor topics and regulatory challenges that are easier to investigate empirically, potentially overshadowing other issues discussed in the literature, such as financial fraud (e.g. Abbas et al., 2023; Bateman, 2020). However, the analysis reveals that the regulatory problems identified as central in empirical literature align with those recognized as political priorities.

Figure 1. PRISMA flowchart of the literature collection process.

To enhance the comprehensiveness of our literature review and address database search limitations, we identified additional studies by reviewing reference lists of already included publications and conducting reverse searches on publications that cite important papers in the field. As this primarily revealed relevant computer-science studies available on arXiv, this database was searched for additional studies. In February 2024, following the initial review of this article, a follow-up search across all databases uncovered five additional articles, bringing the total to 79 studies for analysis (see Annex I in the supplemental material for the list of studies).
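The keyword search and duplicate screening described in this section can be illustrated with a minimal sketch. It is not the workflow actually used for the review (records were managed in Zotero); the sample records, the field names, and the helpers `matches_search`, `normalize_title`, and `deduplicate` are hypothetical, and the regular expression only approximates how the truncated terms “deepfake*” and “deep fake*” behave in bibliographic databases.

```python
import re

# Approximates the truncated search terms "deepfake*" and "deep fake*":
# the stem (with an optional space) followed by any word characters.
SEARCH_PATTERN = re.compile(r"\bdeep ?fake\w*", re.IGNORECASE)


def matches_search(text: str) -> bool:
    """Return True if the text contains a term covered by the keywords."""
    return SEARCH_PATTERN.search(text) is not None


def normalize_title(title: str) -> str:
    """Lowercase a title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record per normalized title (a hypothetical rule)."""
    seen: set[str] = set()
    unique = []
    for record in records:
        key = normalize_title(record["title"])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical records as they might be exported from different databases.
records = [
    {"title": "Detecting Deepfakes: A Survey Experiment", "source": "Scopus"},
    {"title": "Detecting deepfakes - a survey experiment", "source": "Web of Science"},
    {"title": "Deep fake videos and political trust", "source": "ProQuest"},
    {"title": "Misinformation on social media", "source": "EbscoHost"},
]

hits = [r for r in records if matches_search(r["title"])]
unique_hits = deduplicate(hits)
print(len(hits), len(unique_hits))
```

Title-based matching of this kind would only cover the automated part of the screening; the eligibility assessment based on abstracts and full texts remains a manual judgment.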

Given the diversity of research questions and methods employed across the studies, we opted for a qualitative approach based on a deductive–inductive coding scheme to review the literature. Titles, authors, publication years, and disciplines provided information on the field’s evolution over time and across disciplines. The coding of the methods and the samples allowed comparison of results and methodological limitations. To shed light on the conceptual boundaries of the deepfake phenomenon, we also examined whether a definition of “deepfake” was provided and, if so, what conceptual elements were used in the definition. In addition, evidence of the prevalence of deepfakes and key regulatory challenges related to them were identified.

The following section offers a summary of the state of empirical research on deepfakes. Overall, empirical evidence remains limited, making it difficult to give informed assessments of current regulatory needs. Notably, there is limited research on how deepfakes are created, used, and spread, and on what potential positive and negative individual and societal impacts they can have. Thus, we do not know whether the often-voiced concerns about the negative consequences of deepfakes align fully with the problems they may actually cause.

The research field over time and across disciplines

Empirical work on deepfakes emerged around 2020 and has steadily increased since then, with most studies being published in 2022. It comes from different disciplines, including the social sciences (n = 40), and information and computer science (n = 37). Empirical studies on deepfakes in the life sciences and law are scarce, with one study each.

Social-science research primarily focuses on the societal impact of deepfake disinformation and the psychological harm caused by deepfake pornography. These studies apply various methods, including online experiments, surveys, interviews, and content analyses. In contrast, information- and computer-science studies consist almost entirely of experimental studies that test automated versus human deepfake detection. Both the life-science study and the law study rely on vignette surveys to assess user perceptions and ethical concerns related to deepfakes. A cross-citation analysis using LitMaps revealed that studies only rarely refer to each other across disciplinary boundaries, indicating that the field is predominantly disciplinary rather than interdisciplinary. Future research could benefit from more integrated knowledge of deepfakes.

With a few exceptions (e.g. Shahid et al., 2022), studies focus on the global north, especially the United States and European countries such as the United Kingdom, the Netherlands, and Germany, and only seven follow an internationally comparative approach. Because the potential negative effects of deepfakes could be greater in developing countries and under authoritarian regimes (Bateman, 2020; Gregory, 2021), there is a need for more research in these specific contexts.

Definition of deepfake

Among the 79 articles, 61 provided a definition of the term “deepfake.” There is, however, no universally accepted definition, and studies diverged in their interpretation of common conceptual elements. For example, there is agreement on the use of technology as a key characteristic of deepfakes, but the lack of clarity on the specific technology required blurs the conceptual boundaries between deepfakes and less sophisticated audiovisual manipulations known as “cheap fakes” (Paris and Donovan, 2019) or “shallow fakes” (Johnson, 2019). Similarly, no consensus emerged on the media covered by the term; 36 definitions include videos, 17 images, and 15 audio. Finally, following the definitions by Westerlund (2019) and Chesney and Citron (2019a), 23 studies highlighted the hyper-realistic nature of the content and 20 specified that deepfakes involve a false depiction of someone saying or doing something they never did. Definitions typically do not refer to false representations of objects or events. Expanding the scope to include such depictions may prove crucial given the emergence of deepfakes not involving people, such as falsified satellite images (Zhao et al., 2021) or the widely shared deepfake showing an explosion at the US Pentagon (Marcelo, 2023). Future research should contribute to clarifying the meaning and conceptual limits of deepfakes to prevent the term from degenerating into a conceptually ambiguous buzzword akin to “fake news.” One potential approach could involve expanding the deepfake concept to encompass the motives and actions of its creators, similar to the extension seen with “disinformation.” So far, however, only four studies have specified (malicious) intent as a conceptual element. In addition, contextual approaches to defining deepfakes could be helpful in more accurately capturing the circumstances in which deepfake technology is used and its distinct manifestations.
Understanding the context can inform the development of more sophisticated detection and response strategies and can provide the flexibility needed to adapt definitions as new uses emerge.

Prevalence of deepfakes

Empirical evidence on the prevalence of deepfakes is largely missing. No clear conclusion can therefore be drawn about the extent of the deepfake phenomenon and its specific manifestations. However, initial research sheds light on how accessible deepfake technology currently is and what types of deepfakes are commonly circulating. In terms of accessibility, less data input is required compared to previous technology (Amezaga and Hajek, 2022). This may be reinforced by recent advancements such as OpenAI’s “Sora” model, which generates video from text input (OpenAI, 2024). Nevertheless, studies suggest that advanced tools and time are required to create reasonably convincing deepfakes (Mehta et al., 2023; Weikmann and Lecheler, 2023). Accordingly, Gamage et al. (2022) observed an emerging marketplace for monetizing customized deepfake-production on Reddit. Regarding the circulation of deepfake content, a large part seems to be entertainment or humor (Cho et al., 2023; Dasilva et al., 2021), even during the Russo–Ukrainian war (Twomey et al., 2023). Such use of deepfakes has not been a primary concern for regulators, but this may be slowly shifting (see regulatory responses below). Some studies also referred to a report by Deeptrace, a Netherlands-based cybersecurity company, which found that 96% of the 14,678 identified deepfake videos in 2019 were pornographic (Ajder et al., 2019). According to a report by Home Security Heroes (2023), the number of deepfake videos rose to 95,820 in 2023, of which 98% were pornographic in nature. However, it is difficult to assess whether these are reliable numbers, and how they should be interpreted.
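Taken at face value, the two industry reports imply the following absolute numbers. The short calculation below uses only the figures quoted above; the variable names are illustrative, and the results inherit the reliability caveats just noted, including the possibility that the two reports counted videos in different ways.

```python
# Figures as quoted from the two industry reports (not independently verified).
reports = {
    2019: {"videos": 14_678, "share_pornographic": 0.96},  # Ajder et al. (2019)
    2023: {"videos": 95_820, "share_pornographic": 0.98},  # Home Security Heroes (2023)
}

# Implied number of pornographic deepfake videos per report year.
implied = {
    year: round(report["videos"] * report["share_pornographic"])
    for year, report in reports.items()
}

# Ratio of the reported totals; this is only meaningful if both reports
# used comparable identification methods, which is an assumption here.
growth_factor = reports[2023]["videos"] / reports[2019]["videos"]

print(implied)
print(f"{growth_factor:.1f}x")
```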

Key regulatory challenges

While deepfakes raise various concerns, three key regulatory challenges emerged in the analyzed empirical research: (1) people’s (in)ability to detect deepfakes, (2) deepfake disinformation, and (3) deepfake pornography. The respective findings are discussed below. Special attention is paid to the effectiveness of countermeasures and the policy recommendations by scholars.

Detection

The first regulatory challenge relates to people’s difficulties in detecting deepfakes—a concern that is frequently voiced in public discourse (Wahl-Jorgensen and Carlson, 2021). A range of experimental computer-science studies (n = 22) investigated human deepfake detection, often compared to AI detectors. They often drew on large datasets containing deepfake images (Bray et al., 2023; Hulzebosch et al., 2020; Lago et al., 2022; Liu et al., 2020; Nightingale and Farid, 2022; Preu et al., 2022; Rössler et al., 2019; Shen et al., 2021) or videos (Chen et al., 2022; Groh et al., 2022; Khodabakhsh et al., 2019; Kim et al., 2018; Köbis et al., 2021; Korshunov and Marcel, 2020; Lovato et al., 2023; Prasad et al., 2022; Somoray and Miller, 2023; Tahir et al., 2021; Ternovski et al., 2021; Wöhler et al., 2021), while deepfake audio has not been sufficiently studied, with the exception of Müller et al. (2022). Across these studies, participants, on average, correctly identified 63.3% of deepfakes. Whether this is cause for concern is a matter of interpretation, depends on the specific context and requires more research. Research further suggests that detection varies greatly between different deepfakes. For example, lower image or video resolution made it harder for people to recognize whether content was authentic or not (Groh et al., 2022; Hulzebosch et al., 2020; Rössler et al., 2019; Tahir et al., 2021). This might be because low resolution impedes determining the authenticity of content, including spotting visual discrepancies (Lago et al., 2022; Preu et al., 2022; Tahir et al., 2021; Wöhler et al., 2021) and background inconsistencies (Lago et al., 2022; Preu et al., 2022; Tahir et al., 2021). In addition, Lovato et al. (2023) found that people were better at identifying deepfakes if the perceived demographic characteristics (age, gender, and ethnicity) of the person depicted matched their own.

No consistent patterns emerged as to who is particularly vulnerable to being fooled by deepfakes. While gender (Sütterlin et al., 2021; Tahir et al., 2021) and education (Tahir et al., 2021) had limited impact, there was evidence that older individuals had greater difficulty in detecting deepfakes (Ahmed, 2023; Müller et al., 2022). Studies also showed that people are often overly confident regarding their detection ability, especially those who were worse or equally bad at detecting deepfakes (Bray et al., 2023; Köbis et al., 2021; Lago et al., 2022; Preu et al., 2022). This is referred to as the Dunning-Kruger effect (Kruger and Dunning, 1999).

While there is a scarcity of empirical research concerning the effects of regulatory interventions on deepfake detection, a few studies offered initial insights and recommendations. For example, Köbis et al. (2021) found that both raising awareness and introducing financial incentives had no effect on people’s detection accuracy. Similarly, providing immediate feedback to participants on whether they correctly identified a deepfake had limited effects in three experimental studies (Hulzebosch et al., 2020; Müller et al., 2022; Nightingale and Farid, 2022) and informing participants of common deepfake artifacts did not improve detection accuracy in another (Somoray and Miller, 2023). In contrast, introducing detailed walkthrough examples proved successful (Tahir et al., 2021), which may support the use of gamification approaches to literacy (see, for example, Glas et al., 2023 for a general overview of media literacy games). Groh et al. (2022) tested whether participants’ ability to detect deepfakes improved when they were provided information on how AI detectors classified such content. They revealed that participants tended to over-trust AI, adjusting their own classification accordingly, even when AI was inaccurate. Despite AI detectors’ demonstrated superiority over human detection, this points to a religion-like, high share of (blind) faith-based versus knowledge-based trust in digital technology (Latzer, 2022). This may carry potential pitfalls, as AI detectors also have significant limitations and should therefore ideally be seen as supplementary measures (Groh et al., 2022; Gupta et al., 2020; Korshunov and Marcel, 2020; Liu et al., 2020; Müller et al., 2022; Prasad et al., 2022; Tahir et al., 2021; Wöhler et al., 2021). Furthermore, the sole reliance on technical solutions to detect deepfakes could lead to a “cat-and-mouse” game (Lomtadze, 2019) as new technologies will find ways to circumvent current methods.

Overall, what is known about people’s ability to detect deepfakes in computer-science research remains inconclusive. While it was confirmed that some people struggle to distinguish authentic from inauthentic content, whether this presents a regulatory problem depends on the specific context. To gain a more comprehensive understanding, the next section turns to social-science research and the deceptive potential of political deepfake disinformation and its societal consequences.

Deepfake disinformation

Social-science research has often conceptualized deepfakes as a form of disinformation and investigated its effects on politics and society. A first set of studies investigated whether deepfakes pose greater threats than other forms of disinformation, an assumption based on the argument that visual content typically holds greater persuasive power (e.g. Sundar, 2008). There is not enough research to conclusively assess whether this holds true, but initial findings did not support the uniquely deceptive nature of deepfakes. In two experimental studies (Hwang et al., 2021; Lee and Shin, 2022), deepfake videos were considered more vivid and credible compared to textual disinformation with the same message, but the differences were small. Furthermore, a few large experimental studies found that political deepfake videos are not perceived as more credible and emotionally appealing (Barari et al., 2021), not more effective in changing issue agreement or the evaluation of politicians (Appel and Prietzel, 2022; Hameleers et al., 2022, 2023), and not more likely to create false memories (Murphy and Flynn, 2022) than audio and/or textual disinformation. One explanation for why people might not be deceived as easily by political deepfakes is that they can spot “unnatural” behavior or expression when they are familiar with the person (Groh et al., 2023; Hameleers et al., 2024; Kim et al., 2018; Thaw et al., 2020; Vaccari and Chadwick, 2020). Preliminary findings thus suggest that the feared mass-deception by deepfake disinformation might be overstated. This may also hold true for the concern that they can be used to manipulate voters (Diakopoulos and Johnson, 2021), which was examined by Dobber et al. (2021). They found that showing participants a deepfake video featuring a politician of the Dutch Christian Party making jokes about Christ’s crucifixion caused negative attitudes toward the politician. 
However, the effect was small and did not spill over to the politician’s party. The authors also demonstrated that microtargeting could amplify negative attitudinal effects, but this effect was evident only within a small subgroup of highly religious Christians who had previously supported the Christian party. Similarly, Hameleers et al. (2024) found that partisanship is a likely driver of delegitimization of politicians through deepfakes. Thus, deepfake-based disinformation campaigns must be highly targeted to succeed. Together with the above finding that creating deepfakes still requires considerable resources and skills, this may suggest that, at least for now, they might not be the most appropriate tool for spreading disinformation. This is supported by fact-checkers interviewed by Weikmann and Lecheler (2023), who reported that deepfakes have so far caused far less turmoil than less sophisticated forms of visual disinformation and decontextualized images.

However, as indicated by research, the fundamental challenge posed by deepfake disinformation is its potential to contribute to a general climate of uncertainty and doubt. For example, Vaccari and Chadwick (2020) showed in an experiment that watching a deepfake video left people uncertain about what is real and what is not, which in turn reduced overall trust in social media and the news. Drawing from survey data, Ahmed (2021b) arrived at a similar conclusion, suggesting that deepfakes amplify overall skepticism toward the media. This could become one of the unintended consequences of raising public awareness of deepfakes, as Ternovski et al. (2021) and Lewis et al. (2023) showed. In their experiments, a “prebunking” intervention, that is, warning people about deepfakes, did not increase their detection accuracy, but instead made people more skeptical and led them to distrust all content presented, even if authentic. This in turn could be exploited by politicians to deflect accusations by delegitimizing facts as fiction. This is what Chesney and Citron (2019b) call the “liar’s dividend” (p. 151). Accordingly, Twomey et al. (2023) found that during the Russo–Ukrainian war, Twitter users frequently denounced real content as deepfake, used “deepfake” as a blanket insult for disliked content, and supported deepfake conspiracy theories. Scholars have therefore recommended that mitigating measures must also focus on restoring trust in authentic content (Hameleers et al., 2024; Lewis et al., 2023; Tahir et al., 2021; Ternovski et al., 2021). Interventions could also focus on strengthening critical thinking, which, consistent with broader research on disinformation (Pennycook and Rand, 2019, 2020), was identified as a relevant factor in preventing deception and the sharing of deepfakes (Ahmed, 2021c, 2023; Appel and Prietzel, 2022; Hameleers et al., 2024).
In addition, corrective labels might provide another way of countering potential negative effects, as they reduced people’s intention to share (Ahmed, 2021a; Lee and Shin, 2022) and mitigated ethical concerns regarding political deepfakes (Kugler and Pace, 2021). The latter, however, did not work for deepfake pornography, which is explored in the next section.

Deepfake pornography

The third regulatory challenge identified in empirical research relates to deepfake pornography and the resulting harm for individuals affected. Although the initial application of deepfakes was for pornography, empirical research on it is scarce, with only nine studies focusing on this area. A comprehensive, cross-country study by Flynn et al. (2022), which combined surveys (N = 6109) and interviews (N = 118) across the United Kingdom, New Zealand, and Australia, offered initial evidence of the pervasiveness of non-consensual deepfake pornography. Of the survey respondents, 14.1% reported being affected (n = 864) by the creation, distribution, or threats of distribution of deepfake pornography featuring them; 7.6% reported having created or distributed such content (n = 466). Belonging to a marginalized community and being younger and male predicted both victimization and perpetration. In addition, victims experienced a range of emotional, psychological, occupational, and relational effects, many of which continued long after the abuse had first taken place. This could lead to a constant “visceral fear” (Citron, 2019: 1925) over who has seen or will see the images in the future. In a broader sense, deepfakes could thus disrupt people’s control over their own images, creating new forms of privacy invasions in terms of dignity, autonomy, and identity expression (Kugler and Pace, 2021). Studies further showed that victims are reluctant to speak up and report being affected by deepfake pornography due to a culture of victim blaming (Fido et al., 2022; Flynn et al., 2022; Winter and Salter, 2020) and normalization (Maddocks, 2020). Reporting was also hindered by missing or complex tools (De Angeli et al., 2021) and by authorities that discouraged victims from taking action because the perpetrator could not be identified (Flynn et al., 2022).
Scholars therefore recommended creating better legal foundations and reporting mechanisms for deepfake pornography (Flynn et al., 2022; Kugler and Pace, 2021; Wang and Kim, 2022).
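The survey percentages reported for Flynn et al. (2022) can be checked against the reported counts. The sketch below assumes that both percentages were computed over the full survey sample (N = 6109), which the figures imply but the text does not state explicitly; the function name `share_percent` is illustrative.

```python
SURVEY_N = 6109  # survey respondents reported for Flynn et al. (2022)


def share_percent(count: int, total: int = SURVEY_N) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


victimization = share_percent(864)  # reported in the text as 14.1%
perpetration = share_percent(466)   # reported in the text as 7.6%

print(victimization, perpetration)
```

Under this assumption, both counts reproduce the percentages given in the text.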

Altogether, the literature review indicated that deepfakes do not introduce fundamentally new and unique regulatory challenges. Instead, they add to the repertoire of tools available for spreading harmful or illegal content such as disinformation and non-consensual pornography. Consequently, the primary challenge lies in the effective oversight and enforcement of existing rules, along with careful consideration of required adjustments. This also necessitates consideration of potential unintended consequences when crafting countermeasures. Whether this is in line with emerging responses from regulators is examined in the next section.

Regulatory responses to deepfakes

Research has generally begun to discuss the regulation of deepfakes and whether current laws are adequate to address them. In the United States, legal scholars have confirmed the general applicability of existing public and private law, but highlighted problems of enforceability such as identifying the responsible parties and cross-jurisdictional issues (e.g. Caldera, 2020; Chesney and Citron, 2019a; Hall, 2018; Langa, 2021; Meskys et al., 2020). In addition, discussions have intensified regarding the accountability of Internet platforms for the content they host, including deepfakes (O’Donnell, 2021), as have discussions on the need to strike a balance between regulatory measures and safeguarding freedom of speech (Bodi, 2021). Consequently, the emergence of deepfakes does not necessarily raise new regulatory questions, but intensifies existing ones (Barber, 2023). In the European Union (EU), problematic deepfakes fall under the scope of several European regulations designed to address harmful and illegal online content, notably the Digital Services Act (DSA, Regulation (EU) 2022/2065) and the General Data Protection Regulation (GDPR, Regulation (EU) 2016/679), in addition to national law and the AI Act (Karaboga, 2023). Here, legal scholars have highlighted the need to strengthen enforcement, grant additional legal rights to victims, and foster public awareness (e.g. Van der Sloot and Wagensveld, 2022; Van Huijstee et al., 2021).

In contrast, there has been relatively little scholarly focus on dedicated regulatory responses to deepfakes and no global overview currently exists. To bridge this gap, we conducted a qualitative content analysis (Puppis, 2019) using the coding software MAXQDA to examine enacted and proposed regulatory measures and policy debates surrounding deepfakes. Initially, by November 2023, we found 50 documents through a review of existing research, in-depth searches of regulatory authorities’ websites, and thorough monitoring of media coverage and policy blogs. Following the manuscript’s first review, we added 50 more documents in February 2024, showcasing the highly dynamic policy landscape (see Annex II in the supplemental material for the list of 100 documents).

Overall, a diverse spectrum of regulatory responses to deepfakes emerged, ranging from market-driven initiatives to state-imposed command-and-control regulation, with various forms of self- and co-regulation in between (Latzer et al., 2002). Some policymakers have chosen to refrain from regulatory action altogether, either due to limited research on deepfakes or the belief that current laws and industry self-regulation adequately address them. Others rely on self- and co-regulation aimed at raising awareness as well as hard regulations that require transparency or ban or otherwise limit the production or distribution of certain deepfakes. The measures target different stages of the deepfake lifecycle and consequently vary in their focus, applying to producers of deepfake technology, users who create or disseminate deepfakes, or the platforms that host them.

In the following sections, we present the main approaches taken by policymakers in response to deepfakes categorized according to the intensity of state involvement. We also compare them with the findings from our literature review, with particular emphasis on the rationale behind the need for regulatory action, the actors held accountable, and whether the enacted or proposed measures appear adequate to address the regulatory challenges identified.

No state regulation

Despite widespread concern among both the public and scholars, policymakers and legislators have generally been cautious, partly opting for a wait-and-see approach. This is largely justified by the lack of empirical research on deepfakes, as identified in our literature review. Accordingly, Austria (14) and Belgium (15) plan to intensify research on deepfakes before considering further action. In the United States, efforts are underway to institutionalize research on deepfakes through taskforces, regular reports (44, 45, 46, 47), and a mandated intelligence assessment by the Secretary of Defense regarding national security threats posed by deepfakes (98). Concurrently, however, several deepfake laws have been adopted that criminalize the dissemination of certain deepfakes (see below).

In some countries, policymakers have explicitly decided against regulatory action. In the Netherlands, the Ministry of Justice and Security reviewed the need for deepfake regulation prompted by a 2021 report that suggested regulatory options (Van der Sloot et al., 2021), but in 2023 decided not to criminalize all or even specific types of deepfakes. This decision was based on the belief that existing laws are sufficient, coupled with concerns about potential constraints on freedom of expression (23). Similarly, in 2023, the Swiss Federal Council denied a motion to regulate deepfakes, asserting that the use of deepfake applications does not create legal loopholes in criminal and civil law (100). It remains to be seen whether this will be reconsidered after the Swiss Foundation for Technology Assessment (TA-SWISS, 2023) publishes the results of an ongoing study on the impact of deepfakes.

In the absence of dedicated regulatory action, some of the aforementioned countries (14, 15, 23) have expressed their intent to strengthen public awareness of deepfakes to preemptively counter potential negative consequences, as discussed in the following.

Strengthening public awareness and fostering transparency

A second approach centers around soft measures to enhance public awareness of deepfakes. This includes increased efforts to educate the public on how to identify deepfakes and self- and co-regulatory approaches to transparency.

A first set of measures under discussion or already implemented in some countries involves raising awareness and improving people’s abilities to recognize deepfakes. The European Parliament has, for example, consistently advocated such action (8, 9). In addition, some member states, including Austria (14), Belgium (15), and the Netherlands (23), plan to adopt measures aimed at strengthening deepfake-specific literacy. Furthermore, the Italian Data Protection Authority (22) and the German Federal Office for Information Security (21) have already released information on how users can protect themselves from deepfakes, although the focus is primarily on general information about digital artifacts in deepfake content.

These measures have been justified by the assumption that the public cannot differentiate between deepfakes and authentic content, which was not fully supported by the literature review. While such awareness measures can serve as a starting point to mitigate some potential negative impacts of deepfakes, the literature review has further indicated their limited efficacy. For example, raising awareness in general might not significantly improve people’s ability to detect deepfakes and can sometimes even backfire and create uncertainty. Hence, measures aimed at strengthening public literacy should also focus on rebuilding trust in authentic content and recognize people’s tendency to overestimate their abilities.

Major Internet platforms have also made efforts to contribute to strengthening public deepfake literacy. For example, Meta and Google have created large public deepfake datasets to advance research on deepfake detection, which were used in several of the computational studies quoted above in the “detection” section. Platforms have also successively instituted deepfake policies and created technology designed to detect, label, or remove deepfakes. Early on, their primary focus was on deepfake pornography. Accordingly, Reddit banned deepfake pornography in 2018, followed by Pornhub, Discord, and X. The focus has since shifted to combating electoral interference and developing industry-wide standards. For example, 20 large tech companies signed a joint “tech accord” to tackle deceptive AI use in 2024 elections around the world (AI elections accord, 2024). Furthermore, the Coalition for Content Provenance and Authenticity (C2PA) (2023) introduced the “Content Credentials” watermarking system to trace the sources and integrity of digital content.

In addition, the EU continues to promote self-regulation aimed at fostering transparency, which has a long tradition in combating disinformation and other harmful content and which has recently been extended to include deepfakes. The 2018 Code of Practice on Disinformation, a self-regulatory framework initiated by the European Commission to tackle disinformation, was strengthened in 2022 (6) and now includes recommendations for labeling deepfake content. Although an evaluation of the initial code revealed that labels alone might not be an effective measure (European Regulators Group for Audiovisual Media Services, 2020), this approach was continued and legally backed up by the recently enacted Digital Services Act (7) and the AI Act (5), both of which are discussed in the next section.

Transparency, criminalization, and ex-ante control

While industry self-regulation has been a cornerstone in Internet governance, confidence in it has slowly declined and complementary hard regulation has been gradually adopted (Floridi, 2021; Shattock, 2021), also regarding deepfakes.

In the EU, deepfakes are addressed as part of broader frameworks regulating online platforms and AI. The DSA (7) requires providers of very large online platforms and search engines to label deepfakes. In addition, illegal deepfake content is subject to its stricter notice-and-action procedures and systemic risk mitigation. A legally binding transparency approach was also included in the AI Act (5). Under its risk-based framework, AI systems generating deepfakes are classified as “limited risk.” Accordingly, deployers, defined as “any natural or legal person using an AI system under its authority,” must disclose whether content has been artificially generated or manipulated in a clear, timely, and accessible manner. Notably, the term “deployer” explicitly does not encompass the personal use of deepfakes. In addition, disclosure is not required if deepfakes are used by law enforcement or when such use is essential for exercising freedom of expression, arts, and sciences. An EU AI Office shall encourage codes of practice to aid rule implementation, while the European Commission is empowered to adopt further implementing acts. These mechanisms could alleviate concerns about platforms’ extensive discretion in rule implementation. Furthermore, AI system providers may be mandated to adopt technical detection and labeling solutions. Still, uncertainties and criticism persist regarding the enforcement of the transparency obligation and the efficacy of available mechanisms for sanctions (e.g. Karaboga, 2023; Van Huijstee et al., 2021). For example, labels are inadequate to mitigate harm related to deepfake pornography, as shown in the literature review. Therefore, exploring context-specific transparency measures could be an option.

Law enforcement’s use of deepfake-detection software was initially considered high risk in the European Commission’s AI Act proposal (1), but was later removed at the European Parliament’s request (2) due to an unreasonable distinction between private and public uses of deepfakes (4). However, certain deepfake applications may be classified as high risk in the future as the list is subject to adaptation. This aligns well with the need for adaptive policy approaches (Latzer, 2013) in the light of limited controllability and predictability of deepfake technology. Future research could thus help identify high-risk deepfake applications.

Other measures focus on criminalizing specific deepfake applications and adopting preemptive rules to curb the harmful use of deepfake technology. Such measures address the regulatory challenges described in the literature, focusing primarily on electoral manipulation through deepfake disinformation and deepfake pornography. However, they often rely on unverified assumptions about the prevalence and deceptive capacity of deepfakes and fuel unsubstantiated alarmist narratives. Moreover, the enforceability and appropriateness of some measures may be contested considering the findings of the literature review.

China was among the first countries to adopt rules relating to deepfakes. The “Provisions on the Administration of Deep Synthesis of Internet Information Services” (16), effective from 10 January 2023, consist of a range of instruments that target all “synthesized media,” including deepfakes. They generally prohibit the creation of synthesized media that violate national law or threaten national security and interests, harm the national image, or disrupt the economy. In addition, they implement a notice-and-action system that requires synthetic-media providers, that is, providers of apps for the production of synthetic content, to prominently label synthetic content, and platform operators to identify and remove content deemed “undesirable,” especially disinformation. Both rules have raised concerns of excessive censorship, because companies may over-police content to avoid legal liability and such rules can easily be abused to exert excessive government control of information (Kölling, 2023; Sheehan, 2023). App providers must also verify users’ identity before granting access to their services, further increasing privacy concerns (Hine and Floridi, 2022). In addition, the “Interim Measures for the Management of Generative Artificial Intelligence Services” (17) require new generative AI products with “public opinion attributes” or “capabilities for social mobilization” to undergo a security review prior to release. App providers would also be tasked with helping users understand and responsibly use deepfakes to avoid harming others. How these demanding ex-ante requirements will be enforced remains to be seen.

In the United States, several state and federal bills are in force or under consideration that target specific harmful uses of deepfakes. They primarily target individual users and address two concerns: the dissemination of election-interfering deepfakes and non-consensual pornography. This approach aligns with the United States’ traditionally high level of free speech protection and its liability shield for platform providers (Geng, 2023).

The federal “Protect Elections from Deceptive AI Act” (55) and the US Federal Election Commission (52) aim to ban deceptive deepfake content in political ads. South Korea (99) recently enacted a similar ban, facing criticism that it might be misused for controlling elections (Park, 2024). Moreover, legislation criminalizing the distribution of deepfakes during elections has been successfully passed in Texas (42), California (41), Minnesota (29), Maryland (39), Washington (57) and Michigan (69). Proposals are pending in 30 more states in anticipation of the 2024 elections (28, 56, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 70, 71, 72, 73, 74, 75, 76, 78, 79, 80, 90, 91, 92, 95, 96, 97). Amid concerns over election interference, President Biden also signed an executive order advocating for the watermarking of AI-produced content (54), and the US Federal Communications Commission has prohibited AI-generated robocalls (87). Although these measures are more narrowly targeted, they have been criticized for potentially interfering with the right to free speech (Tashman, 2021), particularly in light of previous court decisions that rejected attempts to restrict election-related lies (Chesney and Citron, 2019a). At the same time, many have questioned their enforceability, particularly given the difficulty in identifying deepfake producers and determining harmful intent (e.g. Williams et al., 2019).

Another set of US bills is dedicated to deepfake pornography. At the federal level, the “Preventing Deepfakes of Intimate Images Act” (32) was introduced in the House of Representatives in 2023 and would make the dissemination of pornographic deepfakes illegal and provide additional legal options for victims. The “DEEP FAKES Accountability Act,” which initially failed twice (48, 49) before being reintroduced in 2023 (50), would require users to digitally watermark pornographic deepfake content, along with content shared to “incite violence, physical harm, provoke armed or diplomatic conflict, or disrupt official proceedings.” In addition, the bipartisan “DEFIANCE Act” (85) was introduced in early 2024 to establish civil remedies for victims of deepfake pornography, prompted by falsified explicit images of Taylor Swift circulating online. At the state level, legislation banning deepfake pornography is already in effect in Virginia (43), California (40), Minnesota (29), New York (31, 34), Illinois (81), Texas (82), Hawaii (83), and Georgia (84). Furthermore, a pending Maryland bill seeks to establish a dedicated taskforce to prevent and deal with deepfake pornography (38). Beyond the United States, deepfake pornography has also legally been declared a civil offense in Australia (12), granting the Australian eSafety Commissioner the authority to require service providers or users to remove deepfake pornography posted online. Similar discussions have been underway in the United Kingdom, with the UK Law Commission recommending criminalizing the sharing of deepfake pornography (24), a measure included in the much-anticipated Online Safety Act passed in October 2023 (26). Concurrently, a coalition of bipartisan politicians in the United Kingdom has called for a comprehensive ban on all harmful deepfakes across the entire production and distribution process (27). France is also discussing an amendment to its penal code to specifically include the sharing of deepfake pornography (20), and Belgium has expressed its intention to develop a legal framework for prosecution and enforcement against the misuse of deepfakes, including pornography (15). Furthermore, the EU directive on combating violence against women would require all Member States to make the non-consensual sharing of deepfake pornography a criminal offense (10). Overall, the need for improved reporting mechanisms and stricter law enforcement could be supported by research on the long-lasting effects on victims and the lack of reporting tools described earlier. However, despite these measures, the burden of proof still falls on victims, who may be discouraged from reporting abuse due to victim blaming, which raises doubts about the measures’ effectiveness.

While the mentioned proposals primarily tackle malicious deepfakes and often exclude those created or shared for entertainment purposes, there are increasing concerns in the United States regarding the protection of the creative industry against AI-generated content (Rose, 2024). In response, the “NO FAKES Act of 2023” (86) and “No AI FRAUD Act” (88) proposals aim to strengthen individuals’ right to publicity by protecting their voice and visual likeness from unauthorized AI recreation, even beyond their death. Similar legislation has been passed in New York (35) and is pending in Tennessee (77). In addition, the US Federal Trade Commission has proposed new rules to prohibit AI impersonation of individuals (89). However, some have questioned the necessity of these additional rules considering existing personality rights and warned about their potential to benefit big labels over individual artists (Rothman, 2023).

In sum, the content analysis revealed a spectrum of responses to deepfakes. Most prioritize public awareness and transparency over the criminalization and control of deepfake applications. In addition, there seems to be an understanding that existing laws—although sometimes extended in scope—are generally equipped to address deepfakes. Yet concerns about enforcement and efficacy persist, particularly when evaluated against the findings of the literature review.

Conclusion

This article offers a comprehensive review of existing empirical research on deepfakes and the regulatory responses to this emerging technology. The findings indicate that our understanding and knowledge of deepfakes are not yet sufficient to determine whether the commonly held concerns about their harmful impacts are materializing and how to effectively address them. At present, it seems that the challenges posed by deepfakes are not entirely unprecedented but rather an extension of ongoing discussions regarding the dissemination of harmful and illegal content. We therefore advocate evidence-based knowledge and empirical research over rushed and anecdotal assumptions. The ever-evolving landscape of deepfake technology necessitates adaptive policy approaches (Latzer, 2013) aimed at mitigating harm while safeguarding individual rights and addressing broader societal issues related to trust and truth. Risk-based approaches, as adopted in the AI Act, seem promising in striking this balance. Nonetheless, existing tools may not fully resolve current and future challenges, making critical oversight and periodic review essential. In addition, it is crucial to give careful consideration to adequate governance arrangements, considering both appropriate state and private involvement as highlighted in the governance-choice approach (Latzer et al., 2019). Altogether, this underscores the importance of conducting more empirical research to effectively address and understand the evolving regulatory challenges posed by deepfake technology. In particular, as noted in the respective sections above, future research should clearly define deepfakes and their diverse applications and explore the harmful and beneficial individual and societal impacts they can have, also beyond the global north. Moreover, further research is needed to understand the intended and unintended consequences of countermeasures, thus strengthening evidence-based policymaking. Given the rapid advancement of technology, future research should also consolidate and integrate knowledge on deepfakes and novel phenomena in synthetic-media production across disciplinary boundaries.

Supplemental Material

sj-pdf-1-nms-10.1177_14614448241253138 – Supplemental material for What we know and don’t know about deepfakes: An investigation into the state of the research and regulatory landscape

Supplemental material, sj-pdf-1-nms-10.1177_14614448241253138 for What we know and don’t know about deepfakes: An investigation into the state of the research and regulatory landscape by Alena Birrer and Natascha Just in New Media & Society

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Swiss National Science Foundation [grant number 209250].

Supplemental material

Supplemental material for this article is available online.

