Abstract

The phenomenon of DeepFake has rapidly expanded, primarily driven by advancements in artificial intelligence technologies. Motivated by the growing presence of deceptive audio-visual content in political communication, this study investigates the various forms, distribution mechanisms, and societal impacts of DeepFakes, with a focus on the Romanian context. The paper contributes by classifying DeepFake content into distinct categories, analyzing over 400 misleading media materials, and identifying the technological, communicational, and intentional premises that enable such fabrications. Key findings reveal the strategic use of DeepFakes in political manipulation, disinformation campaigns, and financial fraud, with serious consequences for public trust and information integrity. The study underscores the urgent need for targeted regulations, media literacy, and platform accountability to mitigate the adverse effects of synthetic media in democratic societies.

Keywords

Introduction

The explosive growth of the DeepFake (DF) phenomenon, supported by developments in artificial intelligence, raises concerns about the manipulation of public opinion, especially in political contexts. In a dense electoral year, such as 2024, the risks of visual and audio disinformation increase sharply, endangering the integrity of democratic processes. Romania is facing a wave of fake content, which has both an emotional and a behavioural impact on the public.

Romania’s political system is a semi-presidential democracy characterized by high levels of party fragmentation and electoral volatility. The 2024 electoral year marked an unprecedented concentration of four elections – local, European Parliament, parliamentary, and presidential – within a single 12-month period. This ‘super-electoral’ context intensified political competition and communication across both traditional and digital media channels.

The main political actors include the Social Democratic Party (PSD), currently leading the governing coalition; the National Liberal Party (PNL), its coalition partner and frequent rival; and the Alliance for the Union of Romanians (AUR), a far-right populist party that has gained significant traction on social media through nationalist and anti-establishment rhetoric. Other actors such as the USR (Save Romania Union) represent liberal and reformist agendas, but struggle to counter populist narratives online.

Romanian media ecosystems are marked by growing political polarization, algorithmic amplification of partisan content, and limited digital literacy among audiences – factors that create fertile ground for misinformation and AI-generated media. In this environment, DeepFakes are not only tools for satire or manipulation but also reflect deeper tensions in the interplay between technology, politics, and trust in institutions.

Although the current literature deals extensively with DF technologies and their detection, three gaps can be observed: a deficit of empirical analyses applied to concrete cases in the Romanian space, an under-examination of the communicative and intentional dimensions of DF propagation, and a lack of focus on the intersection between regulation and practical applicability, especially in emerging legislation.

This study analyzes the socio-political effects of DF, including the impact on victims and the dissemination strategies used. It also offers a critical assessment of recent regulations, such as the Romanian law requiring the marking of imaginary content, and puts forward proposals for prevention, media education, and legislative intervention.

The central focus of this article is to examine how DeepFake media function as tools of political communication and disinformation within the Romanian context during the 2024 electoral year. Specifically, the study investigates (1) the forms and platforms through which DeepFakes circulate, (2) the communicational and intentional premises underlying their production, and (3) the implications these practices hold for media integrity and democratic accountability. By combining empirical content analysis with contextual interpretation, the article aims to contribute to the international debate on the societal governance of synthetic media.

Literature review

The phenomenon of DeepFake (DF) has attracted growing scholarly attention, with research evolving along three main analytical dimensions: technological, communicational, and regulatory. From a technological perspective, studies have focused on the mechanisms of DF generation and detection (Masood et al., 2023; Stroebel et al., 2023), highlighting the constant arms race between producers of synthetic content and forensic developers. The communicational dimension, on the other hand, examines the persuasive and psychological effects of DF within media and political discourse (Gallese, 2024; Hameleers et al., 2024; Jacobsen and Simpson, 2023). A third strand emphasizes the regulatory and ethical challenges posed by DF content, as governments and platforms attempt to balance innovation with the protection of democratic integrity (Birrer and Just, 2024; Romero Moreno, 2024).

Despite this growing literature, most existing studies remain either technologically driven or globally generalized, with limited attention to how DFs function within specific national or cultural contexts. Few analyses integrate these dimensions into a comprehensive socio-political framework, particularly within Eastern European democracies. This study addresses this gap by combining empirical examination of DF content with interpretive analysis of communicational and regulatory dynamics in Romania during the 2024 electoral year.

Previous research has shown that DF content tends to thrive in networked media ecosystems where algorithmic amplification and emotional engagement drive visibility (Klikauer and Link, 2024; Whittaker et al., 2023). Scholars have further noted that the public often underestimates DF prevalence and overestimates their ability to recognize manipulated content (Lim, 2023). In the Romanian context, academic and institutional attention has recently intensified, particularly after the introduction of the 2024 Law on Responsible Use of Technology, which mandates labelling of AI-generated or manipulated media (Bouter et al., 2024). Yet, little empirical work has been conducted to assess the actual forms and social impacts of such content.

By situating the Romanian case within this broader international discourse, the present study contributes to understanding how DF practices intersect with local political communication, legal frameworks, and public trust in media systems.

Empirical observations made in recent years indicate an increase in the phenomenon of DF worldwide. Interest in this issue is also evident in scientific research (Jacobsen and Simpson, 2023; Karpinska-Krakowiak and Eisend, 2024; Lees, 2023; Romero Moreno, 2024), especially in terms of the effects and the damage it can cause (Cover, 2022; Jacobs, 2024; Stroebel et al., 2023). DF is a technique increasingly used in political communication, financial scams, advertising, or entertainment, and it evolves in step with technology, which facilitates better communication but also the development of new information manipulation techniques (Szabo, 2021). A simple Google search for

The numbers speak for themselves, and it is obvious that there is a major scientific interest worldwide for the DF phenomenon, with a major concern for finding ways to detect and combat it, highlighting an emotional involvement and fear of the effects generated in the social sphere (Gallese, 2024). This emotional aspect has become much more important than researching the phenomenon itself, from neutral, balanced positions and with scientific instruments. Interest in the emergence of DF is not only real, but seems justified in the context of perfecting and multiplying software applications through the involvement of so-called artificial intelligence. The investigation of DF became acutely necessary in 2024, because it is an electoral year around the world, with important elections in the United States, the European Union, but also in Romania, with no less than four types of elections, namely local, European Parliament, parliamentary, and presidential elections.

One major characteristic of DF is that these deceptive products succeed only if they are propagated on social networks and reach a large number of people. Concerns about the extent of this phenomenon have prompted influence groups and governments of democratic states to pressure platform owners to limit the spread of this type of fake content. An agreement was signed in Munich on 16th February 2024 by representatives of Adobe, Amazon, Google, IBM, Meta, Microsoft, OpenAI, and TikTok, who committed to limiting access to deepfake content on these networks.

In 2024, a normative act was adopted in Romania in the context of the deepfake phenomenon,

Deepfake categories

DF is an audio-video communication product built with false data in order to deceive the public and gain some advantages. As Momoc (2024) states, one condition for such products is that they are made without the consent of those concerned. The process of realization consists essentially of the manipulation of audio and image content, primarily video, but also photos and drawings. A classification given by specialists distinguishes several categories: (1) Manipulation of photos or drawings (Hausken, 2024). It is a method of rewriting history, in which a person’s image is removed from or inserted into the frame. A classic case of DF is the retouching of photos during the time of Soviet dictator I. V. Stalin, when former comrades who had become enemies were removed from official photographs (Skopje, 2022); (2) Substitution of voice in audio content. It can be done by imitation or, as is often the case today, by generating fake content using Artificial Intelligence (AI) applications (Frank and Schönherr, 2021); (3) Making misleading media products by falsifying audio-video content (Masood et al., 2023). It is not necessary for the whole body to be replaced; the alteration may affect only a part of it, or only certain sequences of speech or frames.

Specifically, the voices of real people are substituted so that they say things they have never said, and they are cast in roles they have never had, placed in locations where they were not at the supposed time of the action presented, or shown performing activities they have never done. These types of products are constructed artificially because they do not occur in the natural process of communication (De Vries, 2020). Computer specialists, but also other interested people with programming skills, write computer programs (software, algorithms), lately included in more complex applications, which later become tools for effectively achieving the malicious substitution in DF. On Google Scholar’s platform,

DF can be associated with the domain of fake news, defined as an action to present counterfeit communication content with real and false elements, to convince a person, group or wide audience of certain states of things that are (in whole or in part) contrary to reality, to the factual data of existence (Feher, 2024). However, DF is not limited to this, being also associated with manipulation techniques, disinformation, malinformation, and political communication. They are types of deceptive communication present not only in politics but also in advertising, or other areas of activity where the tendency to exaggerate and even mislead is obvious (Whittaker et al., 2023).

Although nowadays the production of DF content is mostly done with the help of artificial intelligence applications, the phenomenon was known long before, being part of human history. The imitation of various sounds, including the human voice, is an activity whose origins are lost in history. These imitations were made for the imitator’s own pleasure or for the entertainment of others, that is, an amused audience. Humans have imitated the song of birds or the sounds of animals. In some situations, squeaking like a rat or barking like a dog, especially when the author could not be seen, could induce in others a feeling of repulsion or fear, and the goal was not only fun but also an attempt to keep opponents away. Many other sounds could also be produced, such as the noise of rain, the sirens of emergency vehicles, or the simulation of gunshots.

Of course, the imitation of the human voice is a common activity, the substitution being done either for amusement, often caricaturing the way the imitated person speaks, or for fraudulent purposes (Eriksson et al., 2010). Replacement of voice can also be done in a serious field, such as the dubbing of films, series, or other types of video productions from one language into another. In order to render the lines in another language, the voices of the original actors are replaced by those of local actors, who are fluent in the target language. The selection is made from people with a vocal timbre close to that of the original (Fasoli et al., 2016), but in this case, the public knows that it is an imitation. When, with the help of artificial intelligence applications, information, communication, or entertainment content is made without human actors, purely digitally, it is no longer a mere imitation but a process of artificial simulation of the human voice (De Castell, Jenson & Thumlert, 2014).

DF premises

The creation and dissemination of DeepFakes rely on intertwined technological, communicational, and intentional dimensions. Technological capacity enables manipulation; communicational networks distribute it; and intentionality provides purpose. Together, these elements define DeepFakes as AI-generated or AI-altered media designed to distort virtual reality with the intention to deceive, ridicule, or provoke negative emotion. The three aspects should therefore be understood as interdependent rather than separate categories, forming the conceptual foundation of synthetic media manipulation.

Methodology

This study adopts a qualitative content analysis approach to examine the presence, nature, and communicative functions of DeepFake (DF) media in Romanian political and public communication. The analysis focuses on understanding how DF content circulates, what purposes it serves, and what implications it has for information integrity in democratic contexts.

Sampling and data collection

A total of 412 DF media items were identified and systematically archived during a 5-month period between April 1st and August 31st, 2024. The dataset was compiled through manual collection, not automated scraping, in order to ensure contextual accuracy and authenticity. Daily keyword searches (e.g., ‘deepfake’, ‘AI video’, ‘imaginary content’, and ‘manipulated media’) were conducted across seven major Romanian media platforms –

Inclusion criteria

Content was included in the dataset if it met two primary criteria: (1) Evidence of AI-based manipulation or substitution (voice, image, or hybrid) and (2) Relevance to public or political communication in Romania. Parody or humorous content was also retained when it referred to political figures or campaigns.

Coding framework and analytical dimensions

The dataset was coded using a structured codebook developed iteratively through a pilot test of 20 items. The final version included four primary analytical dimensions: (1) (2) (3) (4)

Each category was operationalized with clear definitions and sample indicators. A second researcher independently coded 50 cases, achieving Cohen’s κ = 0.87, indicating strong intercoder reliability.
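For readers unfamiliar with the statistic, the following is a minimal sketch of how Cohen’s κ can be computed from two coders’ labels. It is not the authors’ actual procedure or data: the function name `cohens_kappa` and the ten toy labels are hypothetical and serve only to illustrate the formula κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e the agreement expected by chance.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two equally long sequences of categorical labels."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of items")
    n = len(coder_a)
    # Observed agreement: share of items on which both coders chose the same label.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of each coder's marginal proportions, summed over labels.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    labels = set(freq_a) | set(freq_b)
    expected = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical toy labels (NOT the study's data): the coders disagree on one item.
coder_a = ["political", "political", "satire", "fraud", "satire",
           "political", "fraud", "satire", "political", "fraud"]
coder_b = ["political", "political", "satire", "fraud", "political",
           "political", "fraud", "satire", "political", "fraud"]

print(round(cohens_kappa(coder_a, coder_b), 2))  # 0.85
```

In this toy case the coders agree on 9 of 10 items (p_o = 0.90) and the chance agreement is p_e = 0.35, so κ is about 0.85, lower than the raw agreement because part of it would be expected by chance.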

Assessment of intentionality

Determining intent in online communication is a complex interpretive task. Following Phillips and Milner (2017), this study approached intentionality not as a fixed psychological state but as a (1) The framing or caption accompanying the post (e.g., irony, accusation, or campaign slogan); (2) The situational context (e.g., electoral message, parody, financial solicitation); and (3) Creator cues or self-descriptions (where identifiable).

This interpretive triangulation enabled differentiation between humorous imitation and manipulative disinformation, while acknowledging the fluid boundaries of online participation.

Analytical procedure

Once coded, the data were analyzed to identify recurring patterns in format, purpose, and dissemination platform. Cross-tabulations revealed dominant clusters of political versus entertainment content and relationships between media format and communicational intent. These analytical insights informed the subsequent discussion of DeepFake functions, communicative strategies, and perceived harms.

The content analysis was structured as follows: (1) Detection of DF characteristics, such as voice replacement, lip-sync, manipulated imagery, or AI-generated narratives. (2) Verification of content by cross-checking against trusted factual sources and official statements. (3) Assessment of intentionality and communicational strategy, including the use of fake accounts, meme formats, or amplification through networked distribution. (4) Impact evaluation, focusing on the reputational damage to public figures, disinformation impact on audiences, and potential material consequences.

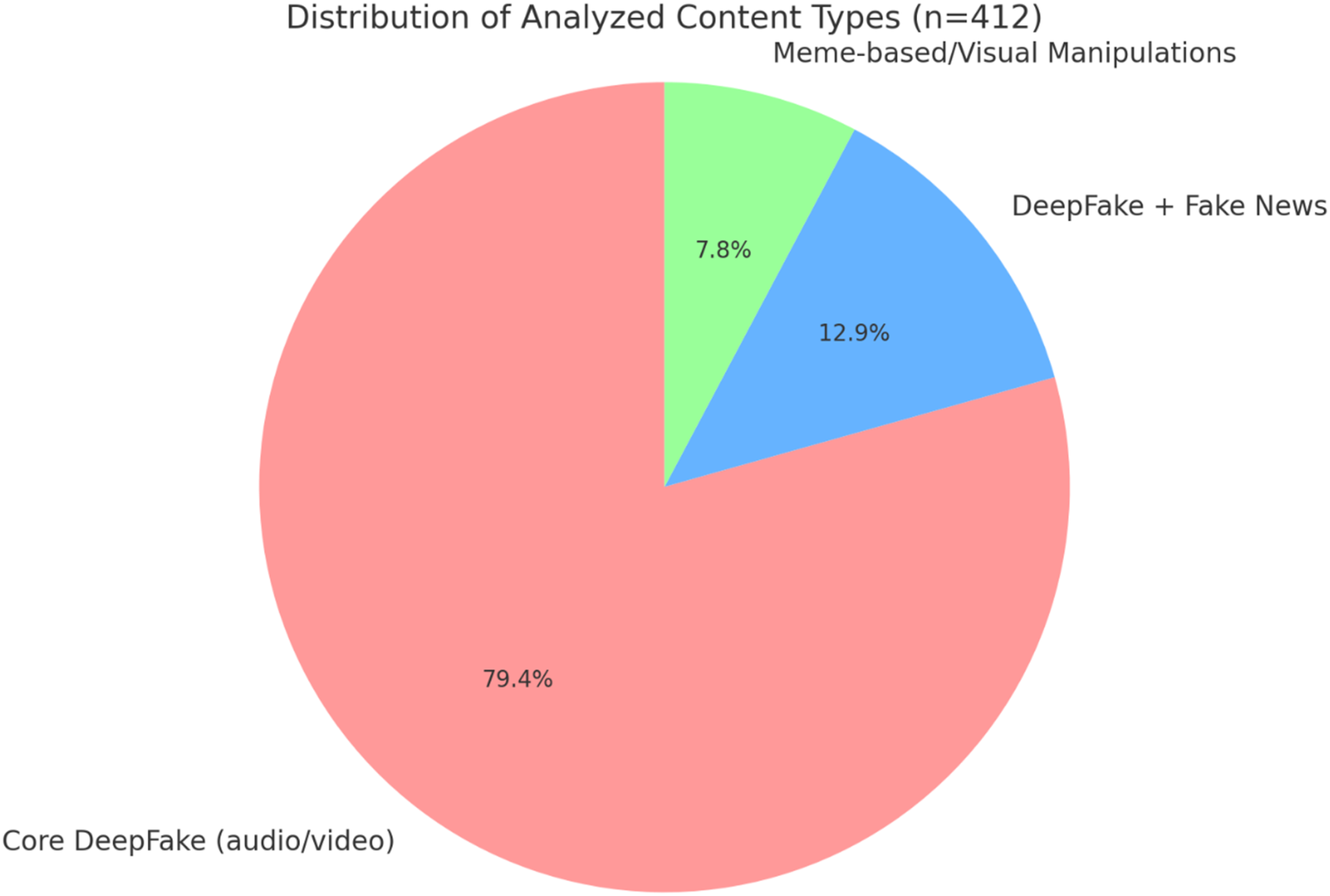

Out of the 412 analyzed pieces: (1) 327 were categorized as core DeepFake content (altered audio-video media), (2) 53 combined DF with fake news structures, (3) 32 were meme-based or image manipulations with humorous or satirical tones.

Each instance was also analyzed for its origin, platform of distribution, thematic focus (e.g., electoral manipulation, financial fraud, and political satire), and whether it had been flagged or labelled (e.g., ‘This material contains imaginary poses’).

Results

Undoubtedly, humanity today lives under cyber pressure, where the possibility of attacks is high (Bârgăoanu and Pana, 2024). Any personal incursion into the virtual space, in search of information, communication, or entertainment, implies accepting that the online universe also contains numerous dangers. Some threats are easier to detect, while others remain unknown, at least until they reach their goal of deceiving users. Requesting data, directing users to counterfeit websites from fake or genuine social media accounts, taking control of equipment (computer or phone), or promising easy earnings are among the dangerous tricks used by impostors (Squillace and Cappella, 2024). DF is just one of many such tricks (Miotti & Akash, 2024) used to influence human behaviour in the direction suggested by the attacker (Bârgăoanu and Pana, 2024).

Communication products that aim to deceive the public are more common on social media. On media platforms, where there are still gatekeepers, such content generally appears less often, and when it does it is presented in context articles aimed at combating it or is highlighted for its funny side. For this reason, it is important that the analysis of DF be carried out first from the technical point of view: the way of construction and the means of production. The next step is to measure the effects and the influences exerted, because a complete understanding is possible (Mosco, 2023) only by investigating the whole process, from the idea of deception to its realization and then to its putting into practice, with various consequences (Van Doorn, 2023).

From the technical point of view, the identified DF materials are highly diverse, but they can be put into three categories:

Audio-visual DeepFakes involving manipulation of image and/or voice

This category includes all cases where authentic footage is combined with fabricated audio, AI-generated voice substitution, or lip-sync technologies. It covers both full synthetic audio-visual reconstruction and the overlapping of altered audio on real video footage. The emphasis is on hybrid manipulation, regardless of whether the visual component is entirely AI-generated or partially authentic. In this case, lip-sync technologies are used to give the illusion that the character is speaking. The overlay audio message is made using apps that analyze the person’s previous speeches to play a new message, mimicking the voice of the deepfake subject (Septiawan, 2024).

These materials often merge authentic footage with fabricated audio, producing convincing yet deceptive statements attributed to real figures. Examples include AI-generated speeches by President Klaus Iohannis or Prime Minister Marcel Ciolacu circulating on social media.

This category includes, for example, the creation of

This category contains real video content over which an altered audio message is overlaid. Video snippets are selected from people’s current activity to give more credibility to the messages. Lip-sync applications are used to configure the new lines (Bohacek and Farid, 2024). The goal is to promote a new message, the one desired by the producer of the deceptive material.

Dozens of DF videos are present on social networks with President Iohannis in the foreground saying various things. The images are real, usually taken from press conferences held at Cotroceni Palace. The speech is recomposed in such a way as to convey a message other than the original one.

Static or meme-based DeepFakes

These are static images (photos or drawings), in fact memes, in which the original message is modified. These types of content can also appear in the form of very short videos, which brings them even closer to DF products. The alteration of the images (drawings, photographs, posters, and the like) is done for amusement, with satire and irony that are sometimes extremely caustic (Schmid et al., 2024).

In the Romanian public sphere, the products mocking the electoral offers of George Simion are well known. Some are very ingenious, such as: ‘Mountain apartments with sea view for all Romanians’ or ‘Solar panels that also work at night for all Romanians’. Of course, these formulations are humorous and entertain the public, but they are also a warning signal about the empty electoral promises made by some political actors.

Hybrid and text-enhanced DeepFakes

This category covers content that can be discussed as both DF and fake news or manipulation through false advertising. In these situations, video images or photos of real people are accompanied by statements in the form of text, urging some actions (Winger et al., 2024). These statements are false in part or in full. One such product, heavily promoted on social networks, manipulates a television interview given by actor Claudiu Bleont to Denise Rifai. The fake text promotes a campaign for a non-existent investment project that promises fabulous earnings to the gullible. In another, no less than 6500 lei per month is promised by President Klaus Iohannis to investors in a campaign promoting an investment in a fake BRUA project for the construction of a gas pipeline.

Figure 1 provides a clear visualization of the distribution of the types of content analyzed: 79.4% of the content is classic DeepFake (altered audio/video), 12.9% is a combination of DeepFake and fake news, and 7.8% is memes or images manipulated for satirical purposes.

Figure 1. Distribution of analyzed content.
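The percentages reported for Figure 1 follow directly from the category counts given in the methodology (327, 53, and 32 out of 412 items). A minimal sketch of the arithmetic is shown below; the dictionary keys are paraphrased labels chosen here for illustration, not the codebook’s exact wording.

```python
# Category counts as reported in the text (412 items in total).
counts = {
    "core DeepFake (altered audio-video)": 327,
    "DeepFake combined with fake news": 53,
    "meme or image manipulation": 32,
}

total = sum(counts.values())

# Share of each category, rounded to one decimal place as in the paper.
shares = {label: round(100 * n / total, 1) for label, n in counts.items()}

for label, share in shares.items():
    print(f"{label}: {share}%")
```

Because each share is rounded separately, the three rounded values add up to 100.1% rather than exactly 100%.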

The dataset represents the complete collection of 412 identified DeepFake media items, covering all major platforms and content forms. While selected examples are highlighted for illustrative purposes, the analysis considers the entire corpus. A cross-tabulation between media format and communicative purpose revealed that video-based DFs were predominantly political (61%), while image and meme-based DFs were mainly satirical (68%). TikTok and Facebook emerged as the primary distribution channels for manipulative and humorous DFs, respectively.

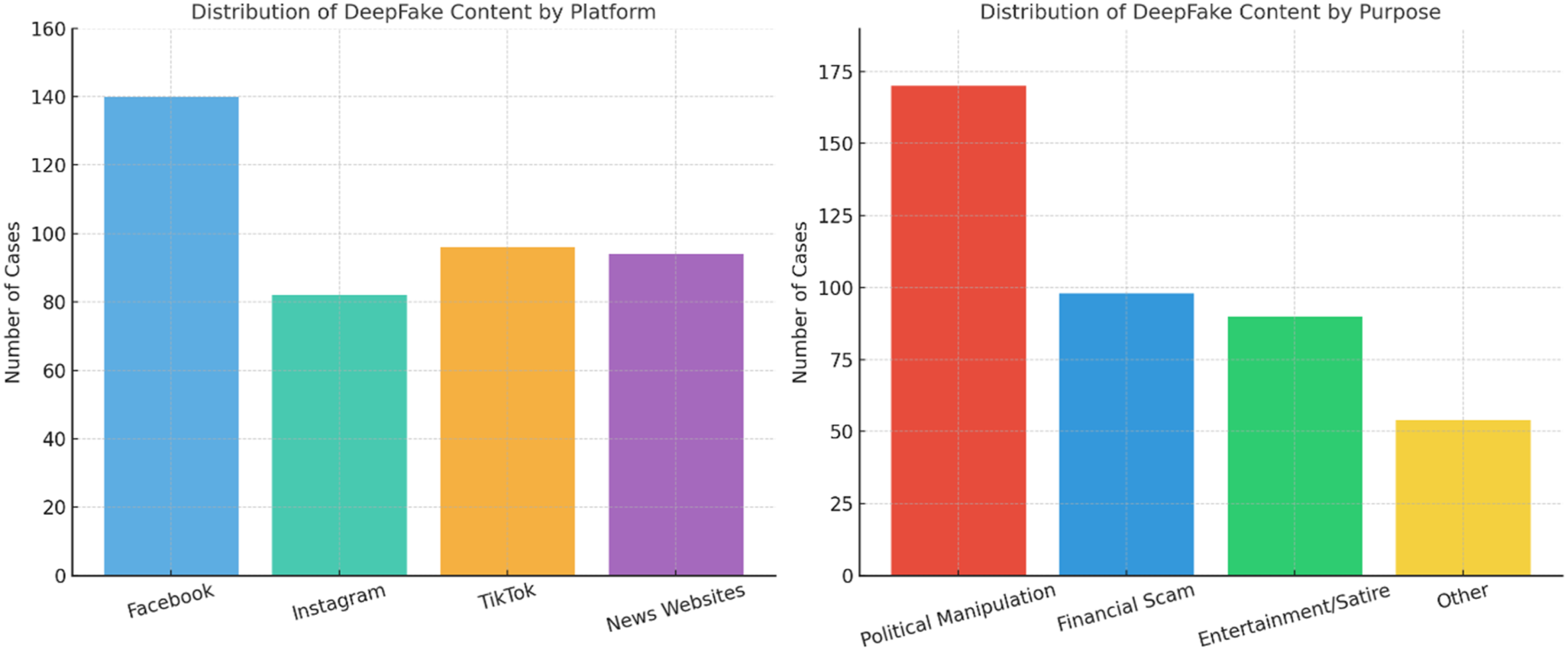

As shown in Figure 2, Facebook is the dominant platform for the dissemination of DeepFakes (140 cases), TikTok and Instagram have a significant presence, especially for satirical and electoral content, while news sites present DF less frequently and often for informative or debunking purposes.

Figure 2. Distribution of DF content by platform and by purpose.

In terms of distribution by purpose, most of the content has a political purpose (170 cases), followed by financial scam-type DFs (98 cases). Entertainment (satire, humour) is another important purpose (90 cases), while other forms (e.g., fictitious commercial campaigns and educational deepfakes) represent a small share.

These figures illustrate not only distributional proportions but also the platform-specific logics of DF dissemination, suggesting that technological affordances shape both the intent and visibility of synthetic media.

Effects of deepfake

Functions and communicative purposes of DeepFake content

This section examines the communicative functions of DeepFake content as inferred from textual and visual analysis. The content reveals distinct strategic purposes: deception, ridicule, persuasion, and entertainment. Each serves different communicational goals, ranging from political manipulation to social commentary.

Deepfake content is divided into two categories, depending on the purpose pursued by its producer: (1) Deceiving people into doing or not doing certain things, with a result favourable to the individual or group that initiated the manipulation. Deceptions to obtain material advantages are the most common in this category, but the advantages pursued can also be prestige and influence, gained by creating a positive image for oneself but especially through misinformation, denigrating opponents or putting them in embarrassing situations.

The five major areas of DF of this type are: (a) The field of military confrontation. The wars that were in full swing in 2024, in Ukraine and the Gaza Strip, involve a massive increase in communication and disinformation content, intended to create advantages for the issuer’s camp and to deter opponents. A deepfake product of this kind of campaign is the one in which Volodymyr Zelensky, the president of Ukraine, appears to address his own army, urging it to give up the fight against the invading Russian army (Roberts, 2023). (b) Political field. In January 2024, an automated robocall campaign was initiated in the USA, in which a voice similar to that of Biden urged voters not to vote in primary elections (Miotti & Akash, 2024). This category of forgeries is also present in Romanian politics, for example in the falsified product in which President Iohannis urges voters to vote for Diana Soşoacă. (c) Financial field. These are misleading products made and disseminated to make money, either by calling for illusory investments or for non-existent charitable actions (Belanche et al., 2024). (d) Real estate and tourism. Communication products for sales or rentals contain substantial enhancements or even fake images to attract customers (Chesher, 2021; Zelenka et al., 2021). (e) Erotic domain. The case of Taylor Swift, whose body was used in DF porn products (Toparlak, 2023), is well known. DF porn is a complex field, posing problems that range from creating exaggerated expectations to its use in political communication for blackmail or image damage (Gosse and Burkell, 2020).

(2) The second category includes DF content made for entertainment purposes. These are products in which various people are made to say funny things. This light, soft deepfake category primarily pursues entertainment, often of a coarse kind, although derogatory features are also present. This is the case of the video content in which Klaus Iohannis, the president of Romania, appears to announce that the opening of the school year is postponed. Then his clone announces that it is a joke, followed by the urge: ‘Go to school, slugs’. This exhortation is echoed in another falsified communication product, in which a group of children of modest means appears, and a voice that seems to be that of the president delivers the same insulting exhortation. This second falsified video has since disappeared and is almost impossible to find and view.

It is important to specify that there are some constructed, artificial communication products that are not DF, because their purpose is not to cheat or mislead the public and gain advantages. Such software is used for professional purposes, where artificial intelligence performs complex tasks, such as presenting news with non-human announcers, or in other areas where such figures are needed (Li et al., 2024). An expanding field is the digitalization of education, where virtual teachers, generated with the help of AI, can play an important role in the dissemination of knowledge (Gidiotis and Hrastinski, 2024). In the social field, such constructs also play an important role, for example as companions for lonely, elderly, or sick people (Ahmed et al., 2024).

AI constructs are also used in the film industry, in productions such as science fiction, with cyborgs and humanoid robots. But the 2023 Hollywood film industry strike was also sparked by a dispute over the role of AI in the art sector, with actors fearing that they would be replaced, at least as voices, by technology (Paglieri, 2024). Among the most vocal actors was Scarlett Johansson, who publicly denounced the use of her voice by OpenAI for ChatGPT, an action that clearly approaches falsification, since the actress never gave her consent for this substitution (Jones, 2024). A similar experience befell voice actor Paul Skye Lehrman, who one evening heard his voice on a podcast, talking about how AI affects the Hollywood industry. We can see how easily ordinary and necessary activities can slide into the deepfake area, with important legal consequences.

Victims of DF

The notion of ‘victimhood’ in this context is understood as

As for the victims of DF content, we can identify two types: (a) The first category is represented by persons whose image and voice are falsified, generally persons with a certain notoriety or persons with various specializations (experts) in a certain field (Boudana and Segeva, 2024). From a first group are those who are known by the nature of their profession or concerns, are politicians, those with administrative functions, actors, musicians, athletes, etc. In the second group there are people who do not explicitly want to become notorious but have professions with an impact on a certain audience: doctors, teachers, political analysts, lawyers, businessmen, and so on, even the journalists. Illustrative for this category is that fake content from the European Football Championship (Germany, 2024), which appeared after the match Romania–Ukraine. The scans of the Romanian supporters were reviewed by interested persons to render a false message of support for Vladimir Putin. (b) The second category includes the public influenced by such misleading content, especially when they are credible (Hameleers et al., 2024). And here we can identify two areas with people being misled. The first is what is commonly known by the general public or public opinion. DF manipulation targets in this case an undifferentiated public, willing to believe the false information and not go too far. The goal is to mobilize this mass as public opinion for a cause or against it. In the second case, we are talking about specialized, niche audiences, targeted to be mobilized for a specific purpose. For example, political parties and their leaders make some gestures with the precise purpose of encouraging their own members and sympathizers in the first place. Also, financial scammers target unskilled people, but willing to make financial investments, being fooled with the illusion of large short-term gains.

The emergence of a significant number of DF victims is also possible because there are no solid legal regulations that limit the phenomenon or, where such regulations exist, they are not complied with. In Romania, a good period after the adoption of the

As a dissemination strategy, DF authors and beneficiaries use fake accounts and create electronic platforms with an appearance of seriousness, often copying the visual identity elements of respectable companies, institutions, or organizations. The trend is to launch campaigns to attract potential victims. To this end, variants of the same misleading product are created with minimal differences, such as changed graphics or replaced protagonists. They are launched all at once, using the technique of flooding the market, so that the basic message reaches as many users as possible. This also increases credibility, because victims see the content promoted by several well-known people. The technique is also reasonably effective in ensuring online persistence. Many misleading products are reported, either by those whose image is used or by other users, so some quickly disappear from the networks. They are, of course, replaced by other variants.

Analytical discussion of the Romanian legal context

The Romanian

From a regulatory perspective, the law illustrates the difficulty of translating normative intentions into operational effectiveness. Its focus on disclosure aligns with global trends – such as the European Union’s

This gap exposes a critical paradox: while the law reinforces democratic principles of informed consent and media responsibility, it simultaneously relies on self-regulation by platforms and creators. As such, its impact on protecting individuals from reputational harm or political manipulation remains limited. The Romanian case thus exemplifies the broader tension between freedom of expression and information authenticity in emerging AI regulation, emphasizing the need for a balanced governance approach.

Conclusions

From a strictly technical point of view, DF communication products will be increasingly present in the media and on social media, benefiting from technological advances that allow them to be diversified and refined to the point where their differences from reality become increasingly difficult to identify. DF products come in a wide variety of forms and appear in all areas of social, political, and economic life. These developments point to major problems in the management of the communication system, in which misleading products are produced and spread insidiously, either in masked form or as content that is relatively easy for a trained mind to identify but much harder for people lacking media education.

Not all DF content can be considered dangerous. The research revealed two areas where such fabrications seem, or even are, acceptable. The first is entertainment. The second is misinformation fakes, that is, products used by parties in conflict (war) or in competition (political parties, for example). In times of crisis, content containing disinformation appears in far greater numbers than in ordinary situations, with each camp or interested person seeking advantages or benefits through this communication technique, among others.

The research reveals the worldwide concern with combating DF; in the particular case of Romania, the new legal regulation in the field has entered the discussion. The trend toward specific regulation in the fight against misleading audio/video products is found to be positive. However, the level of regulation is insufficient, and there is also the fear that overly stringent rules may affect freedom of expression (Tan, 2022). Involvement in combating these types of content is growing, so the most dangerous items are quickly reported and survive for less time on social networks. However, the creators of such content are persistent: they return with materials that retain many of the same misleading features, and they tend to launch several variants of the same content online at once.

As a result of technological advances, the research also reveals an explosive increase in the volume of DF content. A highly creative industry has emerged, especially in the field of entertainment. Politicians, leaders in the administration, and businesspeople, but also artists and athletes, are brought to the foreground, with producers paying close attention to events and factual elements, which they take over and modify into specific products. In these cases the aim is comic effect, although the quality of the humour is often low. Deceptive content is increasingly used in political communication in Romania, falsifying messages to destroy the image of public persons, even if some of these products do not seem dangerous and the apparent intention is only to amuse. In these circumstances, it is necessary to broaden the scope of the investigation, especially to identify negative effects and to find new tools to combat misleading communication.

The central takeaway of this study is that DeepFake media have become a structural feature of contemporary political communication rather than isolated anomalies. In the Romanian case, they reveal how technological creativity intersects with political opportunism and public vulnerability. While often framed as technological artefacts, DeepFakes in fact operate as communicational acts – designed to manipulate perception, shape discourse, or generate humour – within a fragile information ecosystem. Addressing their risks therefore requires not only technical detection or legal restriction, but also the cultivation of critical media literacy and ethical awareness among users and institutions.

Limitations and future research directions

While this research offers a detailed perspective on the DF phenomenon in Romanian political communication, several key limitations must be acknowledged. Firstly, the study is geographically restricted, focusing solely on the Romanian media space. This reduces the generalizability of the conclusions on a global scale, where political, cultural, and technological contexts may influence DF dynamics differently. Secondly, the research focuses on a limited number of media and social platforms (Facebook, Instagram, TikTok, and seven major news sites). Other influential platforms such as YouTube, Reddit, Telegram, or messaging apps like WhatsApp were not included, possibly omitting relevant content. Another important limitation is the manual identification of analyzed content, based on systematic searches but lacking the use of automated DF detection tools. This approach might lead to selection bias or omission errors. Additionally, the analysis was limited to a 5-month window, which restricts the study’s capacity to capture seasonal patterns or long-term developments – particularly relevant in an electoral year like 2024. The study also lacks empirical insights into user reception – how audiences perceive, interpret, and react to DF content. Furthermore, although legal regulations are mentioned, such as the Law on the Responsible Use of Technology, no in-depth legal analysis is provided regarding its enforcement or public perception.

Based on these limitations, several future research directions are proposed. A comparative international approach could examine how DF content is produced and regulated in different countries, identifying effective legislative and strategic models. Secondly, incorporating AI-based detection systems would allow more scalable and accurate content analysis.

Further studies should include qualitative and quantitative research on user behaviour to assess vulnerability levels, media literacy, and psychological impact. A longitudinal approach, spanning a full electoral cycle or multiple years, could offer insight into the evolution of DF use across time. Moreover, a focused legal analysis evaluating the effectiveness and implementation of current regulations would be beneficial. Lastly, future work should address the ethical dimension of DF usage and explore how technology companies respond to DF-related challenges, thus contributing to the responsible governance of AI in communication spaces.

Future research should examine how national regulations such as Romania’s 2024 law interact with broader European digital governance frameworks and whether hybrid regulatory models could ensure more effective protection against DeepFake harms.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.