Abstract

The proliferation of deceptive content online has led to the recognition that some actors in the digital media ecosystem profit from the rapid spread of disinformation. A market designed to monetize engagement with fringe audiences encourages actors to create content that can go viral, creating financial incentives to circulate controversial claims, adversarial narratives, and deceptive content. The theoretical claim of this piece is that the actors and activities of digital media platforms can be analyzed through their market practices. Through this lens, scholars can study whether digital markets such as programmatic advertising, commercial content moderation, and influencer marketing make money from circulating disinformation. To show that disinformation is an expected outcome of the current media market on digital platforms rather than a malfunction of it, the article analyzes the business models of pre-digital broadcasting media, partisan media, and digital media platforms, finding qualitatively different forms of disinformation in each iteration of the media market.

Keywords

Introduction

As deceptive content circulates on digital platforms (Vaidhyanathan, 2022; Venturini, 2022), a stream of disinformation research is attempting to understand the phenomenon and counter it (Bak et al., 2023; Weikmann and Lecheler, 2022). Disinformation research includes topics such as media manipulation (Marwick and Lewis, 2017), polarization (Kreiss and McGregor, 2023), and the impact of disinformation on democratic institutions (McKay and Tenove, 2021). One emerging area focuses on the digital markets that enable actors to profit from disinformation’s rapid spread. Examples include programmatic advertising (Braun and Eklund, 2019), commercial content moderation (Roberts, 2021), influencer monetization schemes (Hua et al., 2022), and algorithmic optimization (Giansiracusa, 2021). These studies have revealed cases in which market actors benefit financially from the spread of deceptive content online.

The literature calls for more research on the actors that profit from the proliferation of deceptive content on social media (Dholakia et al., 2023; Di Domenico et al., 2022). Previous research has called out “the avarice of advertising” for benefiting from disinformation while ignoring its responsibility in funding it (Giansiracusa, 2021: 151). Media experts urge “the advertising industry to make the sort of judgments—about truth and falsehood, acceptable and unacceptable speech—that have long been the domain of journalists” (Braun and Eklund, 2019: 19). Moreover, policymakers call on business managers and private sector actors to take responsibility and lead reform of the democratic governance of digital platforms (Belfer Center and Shorenstein Center, 2023: 9).

This conceptual article proposes that insights drawn from constructive market studies (Araujo et al., 2008; Kjellberg and Murto, 2021; Mason et al., 2015) and market-shaping (Diaz Ruiz et al., 2020; Flaig and Ottosson, 2022; Nenonen and Storbacka, 2021) can identify how market actors benefit from circulating (or ignoring) misinformation and disinformation on social media. Conceptually, the relationship between disinformation research and constructive market studies (CMS) is one between “domain theory” and “method theory” (Lukka and Vinnari, 2014: 1309). Domain theory refers to knowledge about a substantive issue, in this case disinformation, whereas method theory refers to the conceptual approach used to study it.

This article is organized as follows. After this introduction, the section “Domain theory: the anatomy of disinformation research” reviews the literature on disinformation research, presenting relevant definitions and reviewing the commercial underpinnings of digital platforms. The section “Method theory: CMS” introduces CMS and market-shaping, the theoretical lenses through which digital media platforms can be theorized as markets that nudge content creators to circulate deceptive content to entice engagement. The section “The practices of media markets” contrasts the market practices of digital media platforms with the previous market configurations of pre-digital broadcasting and partisan media (Törnberg and Uitermark, 2022). The section “A market practices approach to disinformation” proposes a theoretical framework in which the monetization of attention and the maximization of consumer engagement can prompt digital media actors to pursue profitability through polarizing content designed for a fringe but engaged audience. Finally, the section “Conclusion and roads ahead” suggests opportunities for future research.

Domain theory: the anatomy of disinformation research

According to the Shorenstein Center at Harvard University, disinformation research is an academic field that studies “the spread and impacts of misinformation, disinformation, and media manipulation,” including “how it spreads through online and offline channels, why people are susceptible to believing bad information, and successful strategies for mitigating its impact” (Shorenstein Center, 2023). Accordingly, the term disinformation refers both to a research stream and to the phenomenon it studies.

A recent report on the democratic governance of digital platforms identifies several pressing issues (Belfer Center and Shorenstein Center, 2023: 9–10), including how social media amplifies the reach of disinformation at a large scale and the emergence of echo chambers. However, the report does not identify as a key issue how market actors benefit financially from the rapid spread of disinformation. This gap prevents the design of a system that reduces the financial incentives to profit from the circulation of misinformation. Mapping the markets and market-like dynamics of disinformation could therefore support the design of more effective governance mechanisms for digital platforms.

Conceptualizing misinformation and disinformation

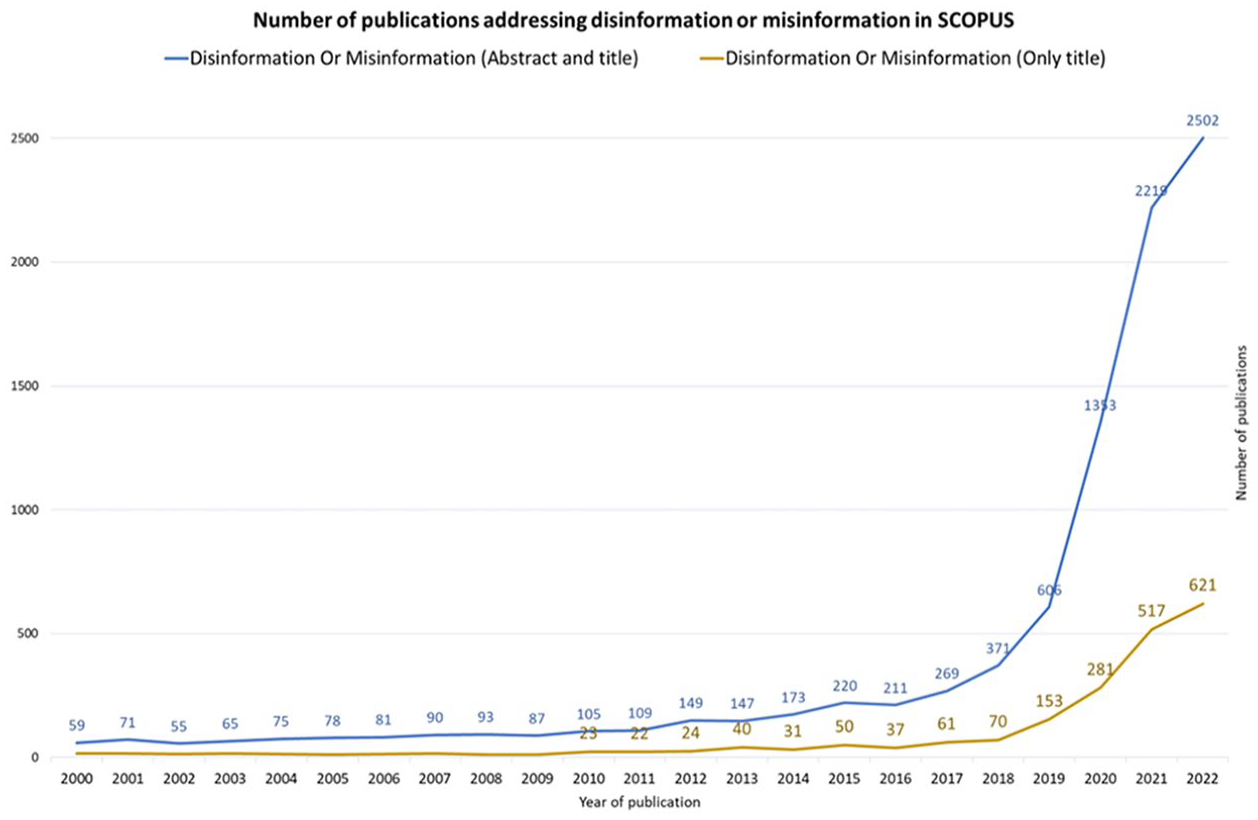

Figure 1 shows how disinformation research has grown substantially, expanding into several academic fields, such as health sciences (Gisondi et al., 2022), computer sciences (Varma et al., 2021), media studies (Bak et al., 2023), and political communication (Freelon and Wells, 2020). While the increase in publications has led to a better understanding of the topic, it has also resulted in theoretical confusion. Kapantai et al. (2021: 1137) argue that, despite the numerous studies, most take isolated and ad hoc approaches, leading to fragmentation in the field. Table 1 introduces recent reviews on disinformation research.

Rapid growth of disinformation research.

Recent reviews on disinformation research.

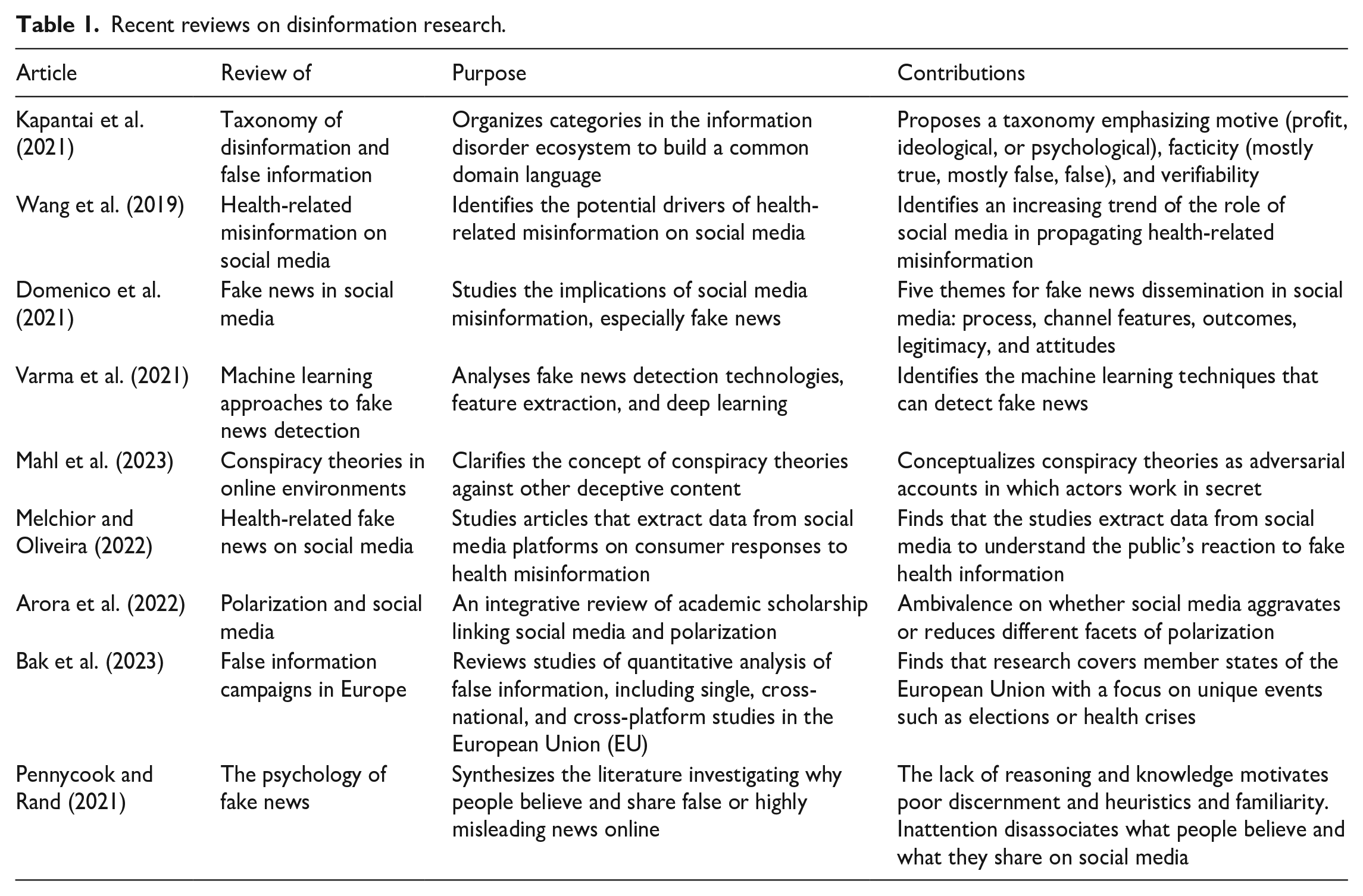

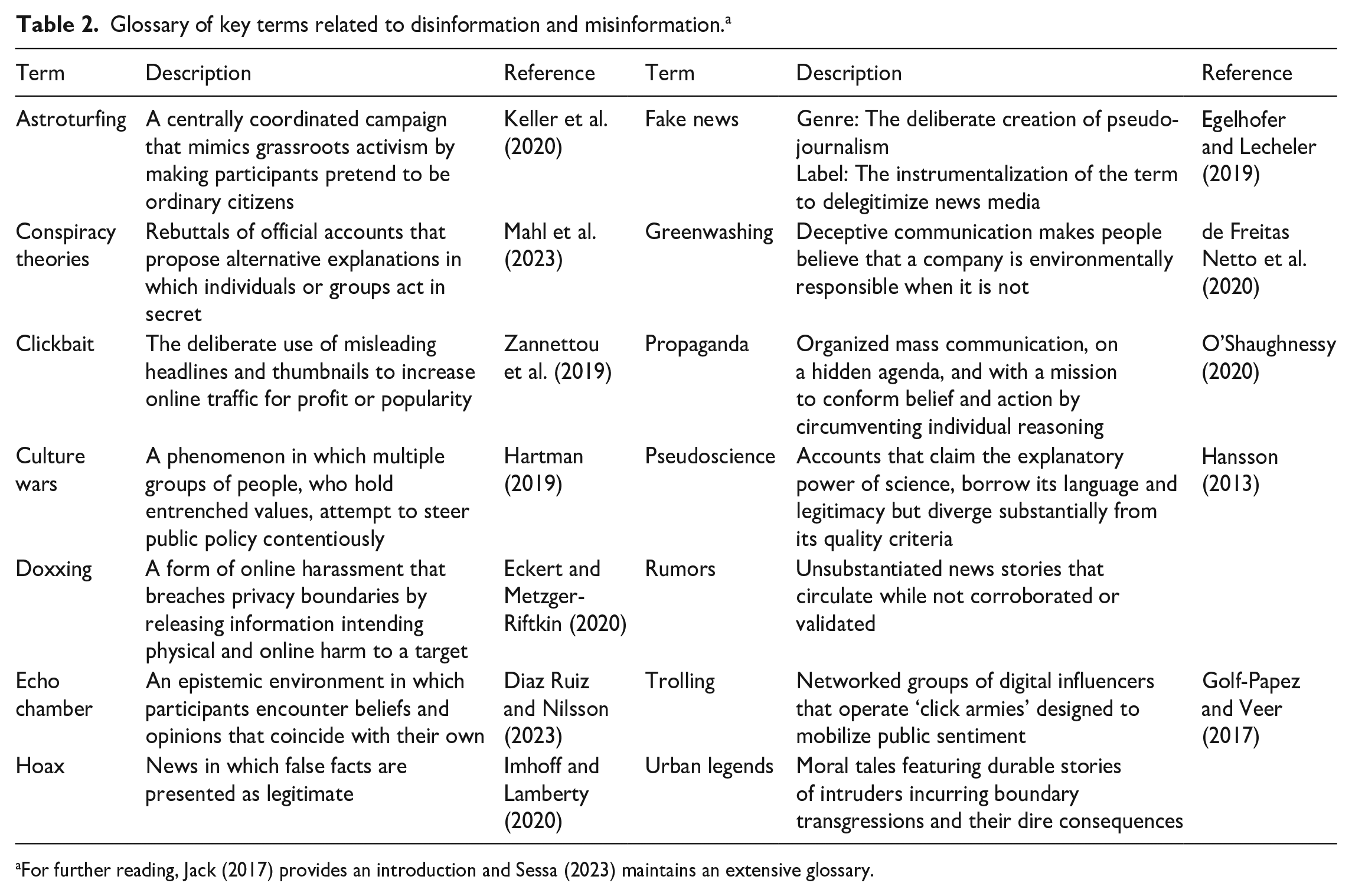

Disinformation research encompasses various related phenomena such as propaganda, astroturfing, conspiracy theories, fake news, and pseudoscience (see Table 2). As the terminology multiplies (Zannettou et al., 2019), researchers have mapped dozens of terms (Jack, 2017) and composed glossaries (Sessa, 2023). Some categorizations may reduce the conceptual overlap. For instance, Kapantai et al. (2021) introduced 11 types of disinformation phenomena based on motive (e.g. profit, ideological, or psychological), factuality (e.g. mostly true), and verifiability.

Glossary of key terms related to disinformation and misinformation. a

For further reading, Jack (2017) provides an introduction and Sessa (2023) maintains an extensive glossary.

This article focuses on two terms: disinformation and misinformation. The main difference between them is the presence of intent (Fallis, 2015: 402).

Early studies on misinformation concentrated on statements that were not factual or conveyed false information. Misinformation therefore initially referred to the quality of information, whether inaccurate, incomplete, or false (Fallis, 2015). Recent studies define misinformation in terms of deception rather than accuracy because it can include falsehoods, selective truths, and half-truths (Chadwick and Stanyer, 2022; O’Shaughnessy, 2020).

Researchers seeking to understand how to correct misinformation (Lewandowsky et al., 2012) have focused on fact-checking. However, one can fact-check news, but not beliefs (Diaz Ruiz and Nilsson, 2023: 29), and studies show that fact-checking can backfire (Ecker et al., 2020; Nyhan and Reifler, 2010). Others studied what makes people susceptible to misinformation (Del Vicario et al., 2016; Lewandowsky et al., 2017), including the sociality that enables its circulation (Altay and Acerbi, 2023), and its effects such as radicalization (Radanielina Hita and Grégoire, 2023).

Unlike misinformation, disinformation is an orchestrated activity in which actors insert “strategic deceptions that may appear very credible to those consuming them . . . [per] intentional falsehoods spreading as news stories or simulated documentary formats to advance political goals” (Bennett and Livingston, 2018: 124). The term originates in military intelligence, as an adaptation from the Russian language (dezinformatsiya, дезинформация; Newman, 2022: 86). During the Cold War, the Soviet Union used disinformation to influence policies, distract the public, and manipulate the media, for example, by instilling uncertainty, shaping expectations, and tarnishing the reputation of political opponents (Samoilenko and Karnysheva, 2020).

Today, disinformation refers to how public and private actors push “adversarial narratives” to achieve their strategic goals, whether political or commercial (Rogers, 2022). For example, the far-right’s portrayal of immigrants as illegal aliens is “less about the individual stories or facts, and clearly more about the overarching narrative. That narrative is intentionally misleading, and more importantly, adversarial against immigrants” (Rogers, 2022). Disinformation research also studies deceptions for financial gain, such as selling fake cures for COVID-19 (Di Domenico et al., 2022). For the European Commission (2018), disinformation has a commercial angle, as it “includes all forms of false, inaccurate, or misleading information designed, presented, and promoted to intentionally cause harm or for profit” (p. 10).

Digital platforms define disinformation as deliberate efforts to mislead using digital technologies: “We refer to these deliberate efforts to deceive and mislead using the speed, scale, and technologies of the open web as disinformation” (Google, 2019: 2). According to Facebook’s parent company, Meta, malice is the crucial characteristic of disinformation, meaning the intention to harm or the knowledge that actions will cause harm (Meta-Facebook, 2021). However, even though digital platforms acknowledge the spread of disinformation, there is growing evidence that their efforts to counter it may be incompatible with their business models. For example, Facebook whistleblower Frances Haugen, a former manager at the company, testified before the US Senate Commerce Committee, revealing that “Facebook repeatedly chose to maximize online engagement instead of minimizing harm to users” (Stacey and Bradshaw, 2021). Haugen’s claim raises the question of what makes these two options mutually exclusive.

Digital platforms and disinformation markets

Previous research in media studies has identified financial incentives behind the spread of disinformation; however, these incentives have not yet been examined systematically. Studying the market structures of digital media platforms is therefore a productive next step. For Dholakia et al. (2023: 5), “In the age of superabundant information, the emergence and rapid ascendance of misinformation, is not paradoxical,” continuing, “with profit-driven media, the conditions for the rise of misinformation have been in place for decades, and social media have given a massive boost to these conditions.”

Vaidhyanathan’s (2022) “Anti-Social Media” conceptualized social media platforms such as Facebook as having interdependent mechanisms, including a pleasure machine, an attention machine, and a surveillance machine. The pleasure machine provides small tokens of validation and maintains relationships to entice users to return to the platform repeatedly. Traffic, engagement, and clicks power an attention machine that allocates content competitively. Facebook uses this attention to build a surveillance machine. “Surveillance capitalism,” as explained by Zuboff (2023), involves monitoring and harvesting user data, such as photos, likes, friendships, and behavior, to generate a data economy that is commercialized to third parties.

Researchers studying the digital infrastructures that allow problematic content to reach the public have identified the role played by algorithms (Giansiracusa, 2021) and cookies (Mellet and Beauvisage, 2020). On social media, algorithms are computational rules that determine how users access data such as search results, user-generated content, and advertisements. Algorithms link content to users via HTTP cookies, small pieces of data stored on users’ devices that allow online behavior to be tracked and converted into data that platforms can use to match users with content and ads (Mellet and Beauvisage, 2020). Algorithms are not neutral or passive; they are performative tools that shape consumer behavior (Airoldi and Rokka, 2022) and can reify social prejudices (Noble, 2018). Digital marketers (Ryan et al., 2023) influence algorithms through exchange and non-exchange interventions to drive traffic.

The structure of digital media markets tempts brand managers and digital marketers to ignore whether their ads support disinformation (Braun and Eklund, 2019; Giansiracusa, 2021: 151). One example is programmatic advertising (Braun and Eklund, 2019), a digital market that automates ad placement with online publishers: AdTech firms bid for ad placements in real time, promising brands the ability to reach their target audience. However, because programmatic bidding targets audiences rather than evaluating the publisher or the nature of the surrounding content, brands can unknowingly place ads on disinformation sites.
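The mechanism can be made concrete with a minimal Python sketch of a single ad impression sold by auction. It assumes a simplified second-price auction (real exchanges use varied auction formats), and the names `Bid`, `Impression`, `run_auction`, and the `blocklist` parameter are hypothetical illustrations rather than any AdTech vendor’s API. The point it shows is structural: bidders compete only on price for an audience segment, and the publisher matters only if someone adds an explicit brand-safety check.

```python
from dataclasses import dataclass


@dataclass
class Bid:
    advertiser: str
    amount: float  # hypothetical price per impression


@dataclass
class Impression:
    publisher: str         # site where the ad would appear
    audience_segment: str  # what the bidders actually target


def run_auction(impression, bids, blocklist=frozenset()):
    """Simplified second-price auction for one ad impression.

    The publisher is consulted only through an optional brand-safety
    blocklist; with the default empty blocklist, the auction never looks
    at where the ad will run. Returns (winning advertiser, clearing
    price), or None if the impression is not sold.
    """
    if impression.publisher in blocklist or not bids:
        return None
    ranked = sorted(bids, key=lambda b: b.amount, reverse=True)
    winner = ranked[0]
    # The winner pays the second-highest bid (or its own bid if unopposed).
    price = ranked[1].amount if len(ranked) > 1 else winner.amount
    return winner.advertiser, price


# Invented example: a hypothetical disinformation site sells an impression.
imp = Impression(publisher="fringe-news.example", audience_segment="sports fans")
bids = [Bid("BrandA", 2.0), Bid("BrandB", 3.5), Bid("BrandC", 1.0)]
print(run_auction(imp, bids))                                     # ('BrandB', 2.0)
print(run_auction(imp, bids, blocklist={"fringe-news.example"}))  # None
```

In this toy model, BrandB funds the site without ever choosing it; only the opt-in blocklist changes the outcome, which mirrors the argument that responsibility for placement is easy to leave unexercised.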

Regarding content curation, digital platforms have pledged to remove misinformation and disinformation quickly. Their partial success has sparked research on the commercial aspects of content moderation, as digital platforms outsource their curatorial responsibilities to a network of firms (Roberts, 2016, 2021). Content moderators face commercial incentives and operate under opaque rules; hence, they must reconcile competing interests, balancing the enforcement of well-being guidelines against the financial benefits of overlooking problematic content that has the potential to go viral.

Digital platforms host distinct actors that learn to use (and abuse) market infrastructures to drive traffic. One example is the influencer industry (Hund, 2023), whose members often use controversial content to game the algorithm and drive engagement. Influencers use a combination of data analytics and algorithm optimization practices to create content that resonates with fringe but engaged audiences (Jin et al., 2019). For instance, a study of far-right micro-celebrities shows that fringe communities can quickly turn into echo chambers that demand increasingly polarizing content (Lewis, 2020), while influencers increasingly rely financially on direct-from-consumer monetization schemes such as affiliate marketing programs, cryptocurrency donations, and merchandising (Hua et al., 2022). Because of these financial arrangements, influencers must continuously offer “red meat” and “dog whistles” to keep their base engaged.

Method theory: CMS

CMS is a multidisciplinary field that emerged from Economic Sociology (Callon, 1999; Fligstein and Dauter, 2007; Fourcade, 2007) and has developed in economics (Roth, 2008), management (Gurses and Ozcan, 2015), science and technology studies (Knorr-Cetina, 2006; Mackenzie et al., 2007), marketing (Araujo et al., 2008; Nenonen et al., 2019), and consumer research (Diaz Ruiz and Makkar, 2021; Martin and Schouten, 2014). According to Kjellberg and Murto (2021), this research field aims to understand the changes occurring in different market systems and how various actors influence or are influenced by them (Kjellberg et al., 2015; Nenonen and Storbacka, 2021). This approach has been used before to study media markets. For example, Gurses and Ozcan (2015) compared a failed and a successful attempt to introduce pay TV in the United States, identifying how entrepreneurs legitimized their offerings despite resistance from incumbents.

CMS aims to study “really existing markets” (Boyer, 1997) situated at the meso-level of the economy, such as industries and business networks. Markets are empirically heterogeneous, meaning they do not necessarily reflect a single theoretical model. In other words, there is not just one market but several, each featuring unique organizing traits, and not all operate according to an ideal (Mountford and Geiger, 2021). Instead, CMS theorizes that markets (in the plural) can be studied as both entities and processes (Mele et al., 2022), which means assessing both the configuration of a market that ostensibly exists (the market as a noun) and the practices, devices, and activities that recursively constitute it (the market as a verb). For instance, researchers study the collaborative actions of individual companies and consumers at the micro level (Giesler and Fischer, 2017; Maciel and Fischer, 2020).

One of the primary motivations for studying markets is to challenge the misleading assumption that markets are self-correcting entities (Callon, 1998a). Research shows that markets often require interventions to correct negative externalities. In economics, a negative externality is a cost imposed on third parties that is not reflected in the price of a transaction; pollution is the traditional example (Shive and Forster, 2020). A polluting firm makes business decisions based only on its direct costs and shareholder profit, without bearing the external damage to society and the environment. Neither the producer nor the user pays for externalities because pricing mechanisms often fail to capture them. On digital platforms, one could argue that the damage that social media disinformation causes to individuals and to the fabric of democratic society constitutes a negative externality.
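Stated in standard textbook notation (added here for illustration; it is not part of the cited sources’ argument), the wedge between private and social cost can be written as:

$$
\mathrm{MSC}(q) = \mathrm{MPC}(q) + \mathrm{MEC}(q)
$$

where $q$ is the quantity produced, MSC is marginal social cost, MPC is marginal private cost, and MEC is marginal external cost. A market that prices output at MPC leaves the MEC term unpaid, so output settles beyond the point where marginal benefit equals MSC. Read against the paragraph above, the harm caused by disinformation would sit in the MEC term: a cost that, on this reading, neither the platform nor the advertiser bears.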

The field is constructive because it focuses on how the multiple understandings, sayings, and doings in markets shape and manifest actual markets (Callon, 1998b; Kjellberg and Helgesson, 2006, 2007). A constructive or performative approach stresses the emergent character of markets, which means that market actors bring markets into existence through their day-to-day practices (Araujo et al., 2008). It identifies the chains of reification (how ideas about markets become concrete in practice) and abstraction (how actual practices inform concepts and theoretical ideas). Therefore, the market-shaping framework conceptualizes markets as malleable complex systems subject to change (Flaig and Ottosson, 2022; Nenonen and Storbacka, 2021). The “shaping” part means that new ideas, actors, norms, and business models shape (and are changed by) markets. For digital platforms, CMS allows us to move away from stale definitional debates (e.g. “Are digital media platforms really markets?”) and instead attend to the concrete effects of market-like practices (e.g. “How does the monetization of engagement metrics incentivize clickbait?”).

The assumption of distributed agency dilutes individual actors’ capacity to affect markets single-handedly. Instead, an assemblage of humans and non-humans, such as machines, artifacts, infrastructures, and computer programs, shapes markets. Studies of financial markets show that devices are essential in shaping them (Knorr-Cetina and Preda, 2005); although such non-human actors stabilize the market system, it remains fragile and can change through new practices (Knorr-Cetina, 2006). For example, MacKenzie (2006) studied how a piece of financial mathematics designed to price derivatives shaped a new market by making certain trading practices calculable.

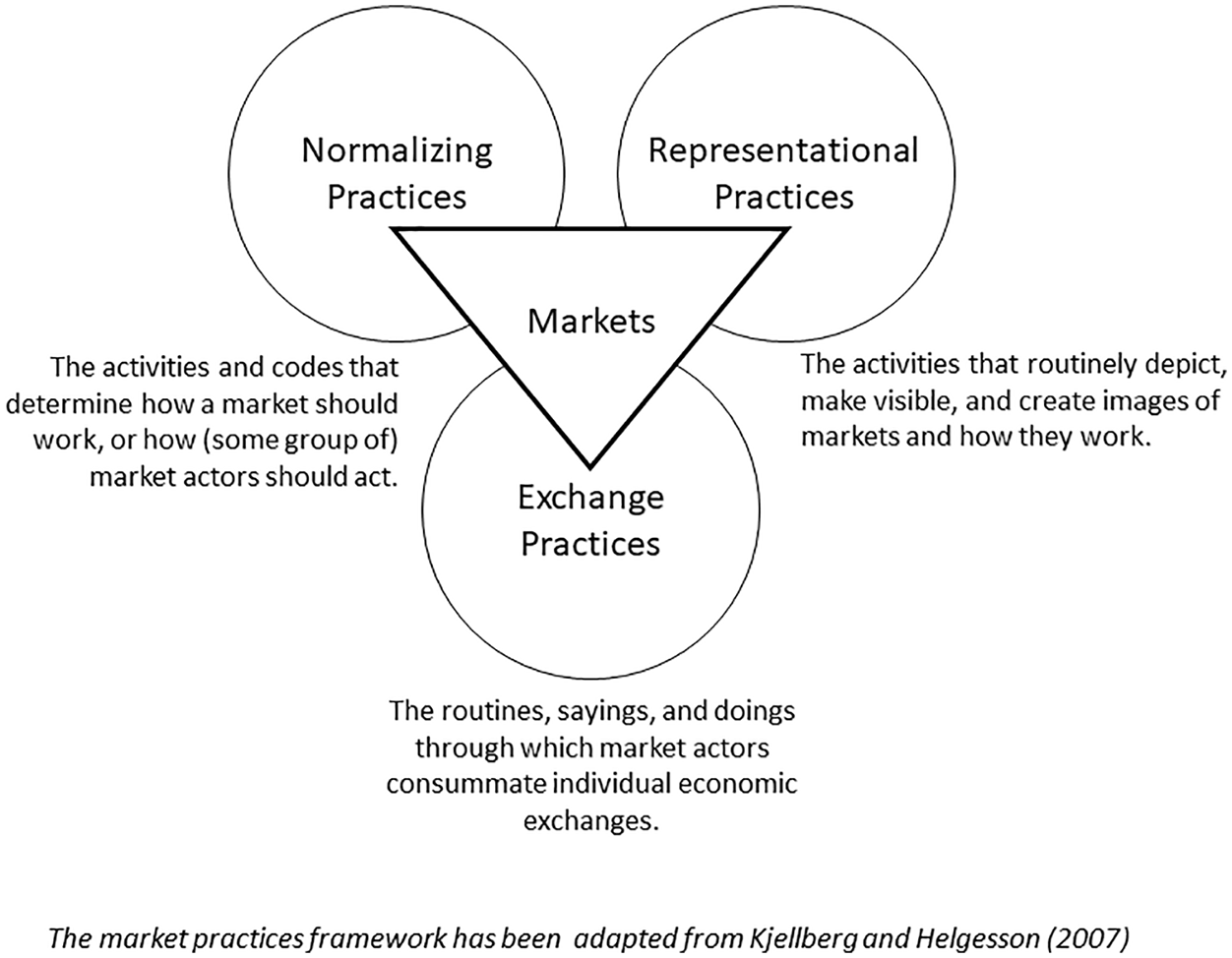

Markets can be studied through their practices. Figure 2 is an adaptation of the framework by Kjellberg and Helgesson (2006, 2007) proposing that markets can be analyzed through exchange, normalizing, and representational practices. Exchange practices comprise calculative and valuing activities that enable commercial transactions to take place. Normalizing practices include the conventions, implicit rules, and formal regulations market actors follow. Finally, representational practices comprise sensemaking, categorizations, and depictions that inform the collective understanding of how the market should work. Through these three practices, this article examines digital media platforms as performative market systems that nudge actors to act in a certain way.

The market practices framework in constructive market studies.

The practices of media markets

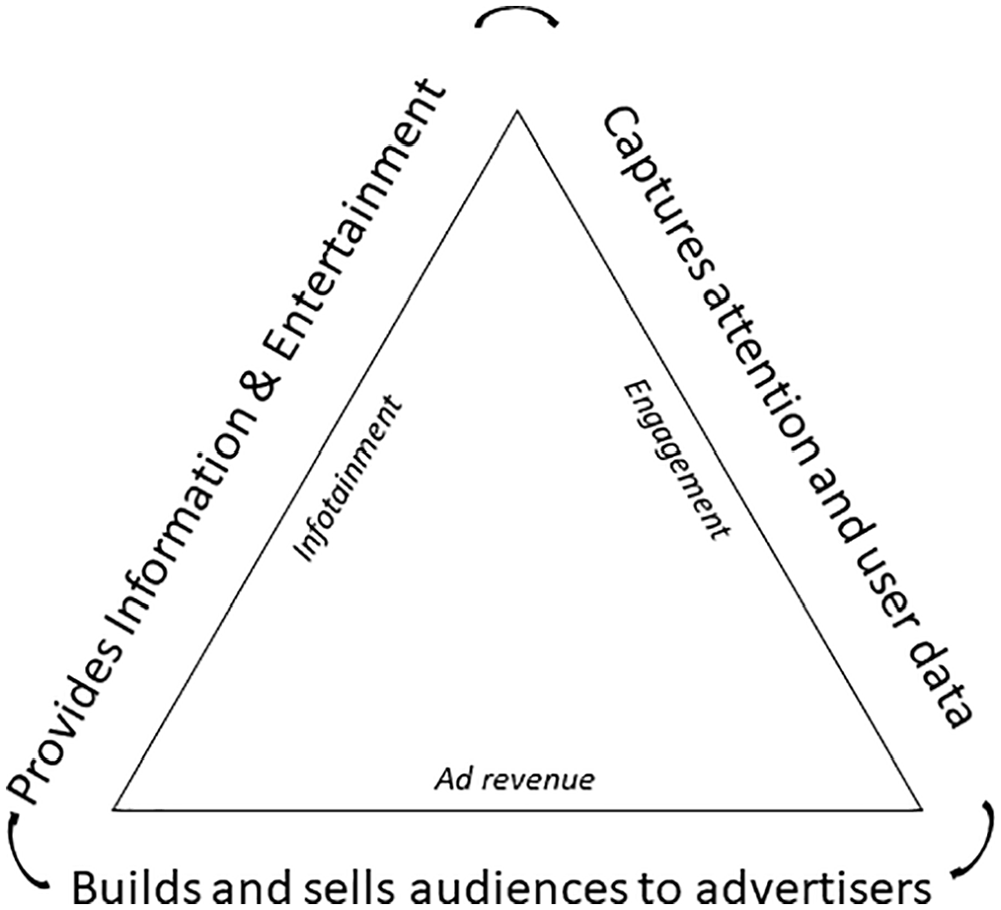

A market practices approach (Kjellberg and Helgesson, 2006, 2007) is helpful in analyzing how the media’s business model has changed over time. The starting point is a bird’s-eye view of that business model, that is, a narrative description of how an organization creates, delivers, and captures value. Figure 3 illustrates the media’s triple-product business model (Dholakia et al., 2023). Media outlets provide information and entertainment (i.e. infotainment) to the public, often at no cost, while capturing the public’s attention and collecting user data to sell to advertisers (Zuboff, 2023).

The triple-product business model of digital media markets.

Market practices of mainstream media: the broadcasting model

Exchange practices—mass audience

The broadcasting business model of the pre-digital era of the 20th century relied on a mass audience (Webster and Phalen, 1997). Operating a broadcasting station was costly, and licensees needed a large audience to attract advertisers (Törnberg and Uitermark, 2022). As licenses only covered a limited geographical area, media organizations aimed to maximize viewership (Webster and Phalen, 1997), targeting the so-called “mainstream” market of moderate centrists and middle-of-the-road consumers.

Representational practices—the marketplace of ideas

The mainstream media market has been legitimized through the “marketplace of ideas” metaphor, which holds that democratic societies should encourage market-like competition among ideas and voices to frame public debate (Entman and Wildman, 1992; Gillespie, 2010; Ho and Schauer, 2015). The metaphor draws on John Stuart Mill’s (1859) “On Liberty,” which proposes a self-sustaining mechanism for public discourse. For Mill, free speech allows individuals to choose from competing ideas, just as markets efficiently deliver the best goods by letting individuals choose from competing offers. By analogy, competition eliminates weak ideas: just as consumers evaluate products by comparing their quality with alternatives, rational individuals evaluate ideas as long as there is no coercion or censorship. The metaphor thus proposes that ideas should be judged on their merits.

Normative practices—separation from advertising revenue

Formal rules and informal conventions affect the content we see in the media. In the broadcasting era, governments offered detailed guidelines for acceptable speech and the aesthetics of neutrality. One example was the Fairness Doctrine, a US regulation abolished in 1987, which required broadcast licensees to cover issues of public importance by offering multiple perspectives (Napoli, 2021). Broadcasting networks adhere to detailed standards regarding, for instance, the acquisition of information, confidentiality, and the use of verifiable sources. One essential principle in broadcasting media is the strict separation of the newsroom from advertising. For example, the Finnish broadcasting corporation YLE has guidelines against providing advertisements in connection with its content. The guidelines take a critical attitude towards materials that have commercial interests related to them: “We only publish such materials if the publishing decision can be supported by journalistic considerations or the content is such that it should be published. We refuse to be used as a tool for advertising or marketing communication” (YLE, 2021).

Market practices of partisan media: minimum viable markets

Exchange practices—minimum viable audiences

Changes in political culture and technology created new markets for media that catered to minimum viable audiences. A minimum viable audience is the smallest group of people that can sustain a media organization by offering enough revenue opportunities for growth. With lower distribution costs than broadcasting and additional revenue from subscription fees, cable news altered the media’s approach, as it became good business to target an identity-driven segment. Building on the marketing technique of market segmentation, an organization can target a group of relatively homogeneous consumers who share similar values, goals, and attitudes. Cable media networks and syndicated talk radio shows targeted market segments based on ideological identification.

Representational practices—controversy

In marketing, the logic of market segmentation includes creating a common sense of identity. Partisan media organizations such as Fox News built engagement by appealing to controversy and grievances, fostering a shared sense of identification with their audience (Brock et al., 2012). Smaller audiences meant shifting programming from general-audience fare to content tailored for smaller market segments. In the United States, one prominent segment included white and militant Christians (Benkler, 2020: 51). This change has resulted in a partisan media landscape, especially on the right, with individuals gravitating toward media outlets that align with their beliefs. A prominent example is Rush Limbaugh, an American talk-radio host who built a career by prosecuting grievances against liberals (Jamieson and Cappella, 2008).

Normative practices—opinion

One normative difference between traditional broadcasters and partisan organizations is the distinction between factual news and opinion. Journalists in partisan organizations subscribe to ethical codes of journalistic conduct, but opinion pundits and commentators do not. Commentators often adopt the neutral aesthetics of news, but from a legal standpoint they are not journalists. This difference allows these media organizations to distribute their viewpoints freely, even when those viewpoints are mistaken for news. However, even opinion has limits: libel laws prohibit publishing false statements that knowingly harm third parties, exposing publishers to civil litigation.

Market practices of digital media platforms: surveillance and attention

Exchange practices—attention economies

In digital media platforms, a constellation of influencers and content creators can focus on fringe communities, producing content to exploit the platform’s monetization schemes. Content creators are producing an overabundance of information, which means that digital media platforms have become a marketplace of attention. “In an information-rich world, the wealth of information means a dearth of something else: a scarcity of whatever it is that information consumes. What information consumes is rather obvious: it consumes the attention of its recipients” (Simon, 1971: 40–41). Rather than allocating content to viewers, digital media platforms do it the other way around: they allocate attention competitively, making content creators compete for views and likes. The system remunerates the content creators with the highest engagement metrics: views, comments, and likes.
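Simon’s point can be illustrated with a minimal Python sketch of engagement-based allocation, assuming a single revenue pool split in proportion to a weighted engagement score. The weights, the `Post` fields, and the functions `engagement_score` and `allocate` are hypothetical; actual platform ranking and creator-payment formulas are proprietary and considerably more complex. The sketch shows only the structural feature described above: attention (a limited number of feed slots) and money both flow to whatever scores highest, and the score never inspects what the content says.

```python
from dataclasses import dataclass

# Hypothetical weights for how much each interaction counts toward a score.
WEIGHTS = {"views": 1.0, "likes": 2.0, "comments": 4.0}


@dataclass
class Post:
    creator: str
    views: int
    likes: int
    comments: int


def engagement_score(post: Post) -> float:
    """Weighted sum of interactions; the content itself is never evaluated."""
    return (WEIGHTS["views"] * post.views
            + WEIGHTS["likes"] * post.likes
            + WEIGHTS["comments"] * post.comments)


def allocate(posts: list[Post], slots: int, revenue_pool: float):
    """Fill a limited number of feed slots (scarce attention) with the
    highest-scoring posts, then split the revenue pool among the featured
    creators in proportion to their engagement scores."""
    ranked = sorted(posts, key=engagement_score, reverse=True)
    featured = ranked[:slots]
    total = sum(engagement_score(p) for p in featured)
    payouts: dict[str, float] = {}
    for p in featured:
        share = engagement_score(p) / total if total else 0.0
        payouts[p.creator] = payouts.get(p.creator, 0.0) + revenue_pool * share
    return [p.creator for p in featured], payouts


# Invented example data, not measurements.
posts = [
    Post("careful_reporter", views=800, likes=50, comments=25),    # score 1000
    Post("outrage_channel", views=1000, likes=300, comments=350),  # score 3000
    Post("hobbyist", views=200, likes=20, comments=5),             # score 260
]
print(allocate(posts, slots=2, revenue_pool=100.0))
# (['outrage_channel', 'careful_reporter'], {'outrage_channel': 75.0, 'careful_reporter': 25.0})
```

Weighting comments above likes and views is an assumption chosen to mirror the claim that active, often heated, interaction is what the system rewards; with different weights the ranking could change, but the content-blind structure would not.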

Representational practices—platforms

In the literature, contemporary digital media markets are called “platforms” (Gillespie, 2010). This term carries loaded meanings, as it evokes a place where one can be heard, such as the public squares of the past. The notion of platforms offers a promise of political openness that neatly aligns with the concept of a marketplace of ideas. “YouTube is designed as an open-armed, egalitarian facilitation of expression, not an elitist gatekeeper with normative restrictions” (Gillespie, 2010: 352).

The platform representation has led to the belief that all content has equal value. However, media organizations such as The New York Times, The Guardian, and Al Jazeera maintain content on digital media platforms that is much more expensive to produce than the opinion of a YouTuber mimicking the aesthetics of journalism. Nevertheless, both voices compete on the same platform, even though influencers are not held to the same standards as journalists. Digital media platforms promise to democratize user-generated content (Gillespie, 2010), even though social media professions are not as egalitarian as they may initially appear (Hund, 2023).

Normative practices—community guidelines

Digital platforms allow content creators to earn revenue, provided they follow specific rules on what content is permitted. Normative practices include the monetization guidelines that govern how creators generate income by sharing advertising revenue. For instance, YouTube has established guidelines determining what content is permitted and who can generate revenue on the platform (Hua et al., 2022). These formal rules determine which content is acceptable, which can be removed, and when to deplatform content creators. Failure to comply with these policies can result in de-monetization and the loss of ad revenue.

Whereas platforms offer norms, some viewers converge upon fringe channels, offering content creators alternative norms for valuing and monetizing content in ways that differ from the platform’s guidelines (Lewis, 2020). Reports indicate that YouTube’s community guidelines are insufficient in reducing problematic content (Tufekci, 2018), partly because some content creators circumvent guidelines by seeking notoriety in fringe communities and capturing direct-from-consumer funds. Hua et al. (2022) show how problematic content creators use alternative monetization strategies to skirt community guidelines and circumvent de-monetization by establishing direct revenue streams with their fans, including requests for donations, cryptocurrencies, merchandise sales, and affiliate marketing programs. Some sites enable deplatformed individuals to receive cryptocurrencies, thus providing a financial line to problematic content.

A market practices approach to disinformation

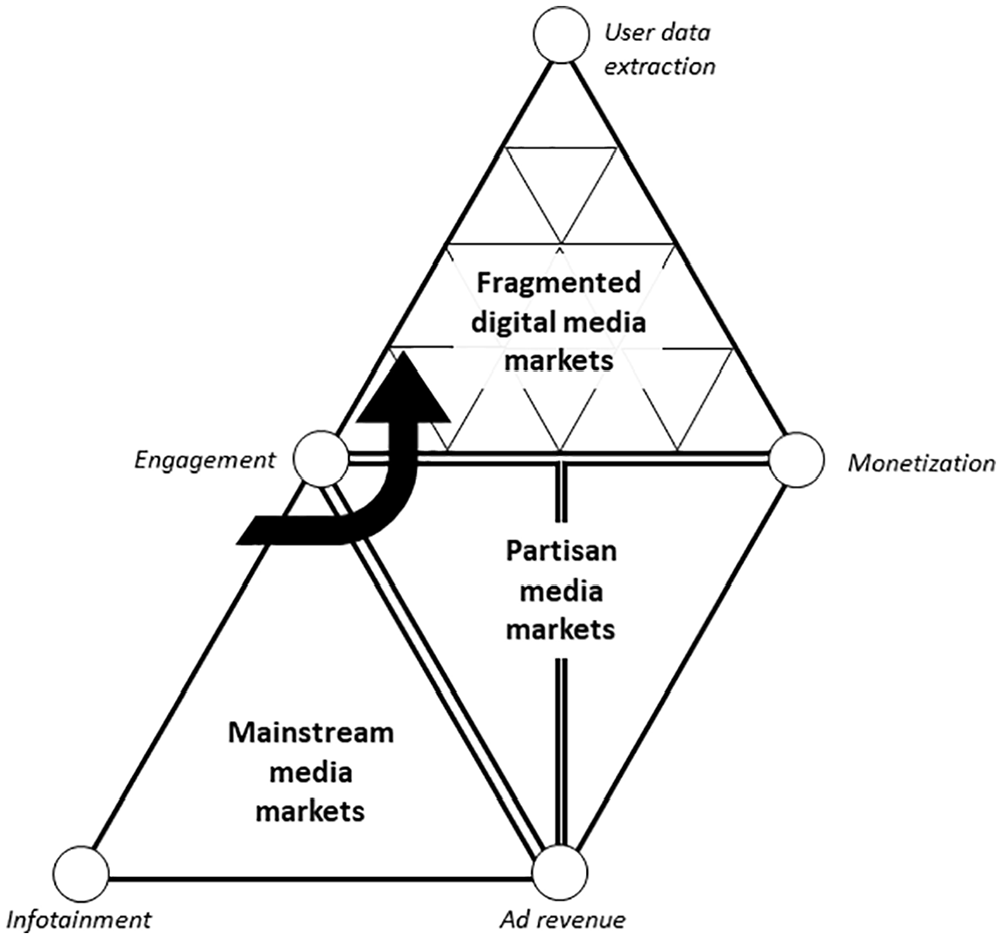

CMS offers a framework for analyzing the commercial practices of digital media platforms that underlie disinformation. The framework depicts how changes in business models nudge market actors toward content that can lead to qualitatively different types of misinformation. Figure 4 guides the argument. It builds on Dholakia et al.’s (2023) triple-product model of media (see Figure 3), illustrating how media markets trade infotainment for user attention and commercialize that attention through ad revenue. The diagram shows the increasingly complex monetization systems of three stages of media markets: mainstream media markets, partisan media markets, and fragmented digital media platforms.

A market shaping approach to fragmentation and monetization in media markets.

Disinformation slant in mainstream media markets: a hegemonic worldview

The triangle at the bottom left of Figure 4 depicts the market structure of a pre-digital era in which broadcasters targeted a mass audience. “Media concentration (three broadcast networks as well as a growing number of one-newspaper towns) made reporting from a consensus viewpoint and avoiding offending any part of your audience good business” (Benkler, 2020: 50). As a result, this business model emphasized consensus aligned with the beliefs and values of the majority, even if that consensus was manufactured. Broadcasters appealed to middle-of-the-road consumers, avoiding content that would polarize large swaths of their audience (Törnberg and Uitermark, 2022; Webster and Phalen, 1997). Broadcasters therefore had a financial incentive to ignore minority voices and their grievances. Consequently, broadcasters’ misinformation slant omits critical voices, ignoring minorities, neglecting their interests, and constructing the appearance of consensus.

Disinformation slant of partisan media markets: identity-based grievances

In Figure 4, a triangle split into two sections represents how changes in the fabric of society, the advent of cable TV, and regulatory changes allowed media organizations to target niche or small segments of the population. Nenonen and Storbacka (2020) use the concept of a minimum viable market; here, it denotes the smallest possible market segment that a media organization can serve while sustaining its business. Targeting such a minimum viable audience made business sense, because media actors could position themselves within it through identity politics.

Identity politics brought with it confrontational media. By appealing to shared grievances, media organizations could position their messaging against actual or perceived opponents in the so-called culture wars (Hartman, 2019). Partisan media organizations do not need to reach the majority or centrists to make business sense. As Benkler (2020) explains, right-wing partisan organizations in the United States found a large audience receptive to identity-led beliefs. As a result, partisan media's misinformation slant is toward confrontational narratives, ideological purity, and overemphasizing minority viewpoints.

Disinformation slant of digital media markets: engaging content

In Figure 4, the multiple small triangles represent audience fragmentation, including the fringe communities that sometimes espouse problematic beliefs. The combination of algorithms and HTTP cookies allows platforms to personalize content, using personal data and predictive analytics to learn which content triggers engagement. As influencers and digital marketers work with engagement metrics, they learn that content eliciting controversial and emotional responses is highly engaging and tends to go viral (Tellis et al., 2019). This feedback loop is performative because it incentivizes attention-hacking techniques meant to capture eyeballs by any means and at any cost. As a result, clickbait and polarizing content thrive on digital platforms.

Audience fragmentation, monetization schemes, and user-data extraction supercharge the spread of misinformation because the digital market monetizes highly engaging content through ad revenue-sharing programs. By extracting user data and modeling it through predictive analytics (Zuboff, 2023), AdTech firms help advertisers move from mass audiences to personalized content. This business model aims to maximize consumer engagement, including the time and attention users spend on the platform. One problem is that the engagement model was designed for entertainment, not information. It nudges content creators to produce highly engaging content that users will see, interact with, comment on, and share with their networks. Since highly engaging content is often emotional and controversial, content creators lean toward polarizing opinions, which tend to gather more reposts, comments, and shares.

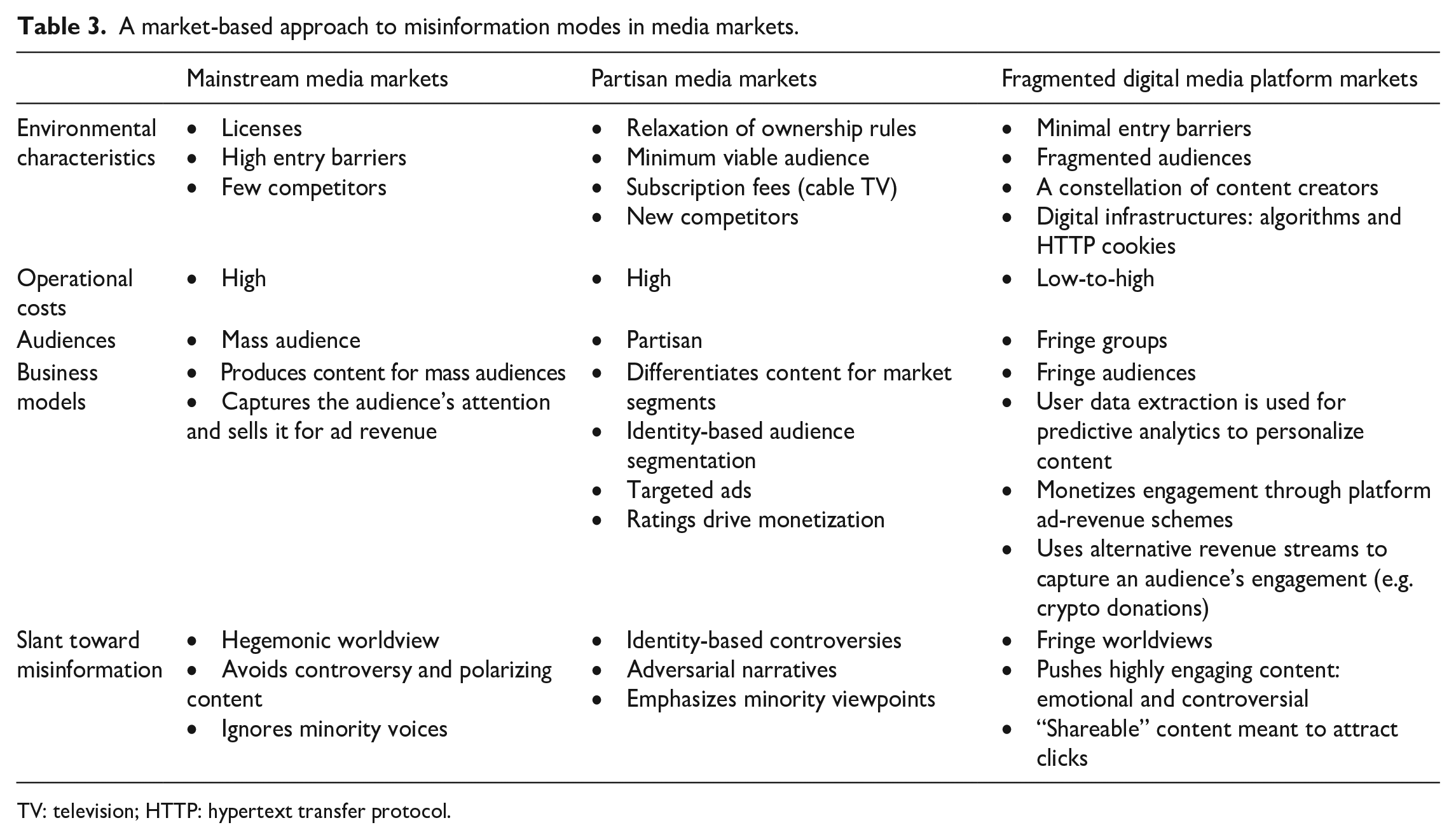

Table 3 summarizes the market-based approach to disinformation research, showing that, far from being an aberration, misinformation is an expected outcome in this type of market.

A market-based approach to misinformation modes in media markets.

TV: television; HTTP: hypertext transfer protocol.

Conclusion and roads ahead

It is not new to say that the rapid spread of disinformation on social media is a lucrative business. Existing media studies research has noted that financial incentives reward the spread of disinformation (Braun and Eklund, 2019). However, policymakers and researchers can benefit from a thorough examination of the structure of digital media markets. Market-based theoretical lenses, such as CMS (Kjellberg and Murto, 2021) or market shaping (Diaz Ruiz et al., 2020; Nenonen and Storbacka, 2021), are structured around such questions and could produce a fuller, more actionable understanding of the market apparatus in need of fixing. With that understanding, policymakers can design meaningful interventions to restructure the market infrastructures that financially reward the spread of disinformation.

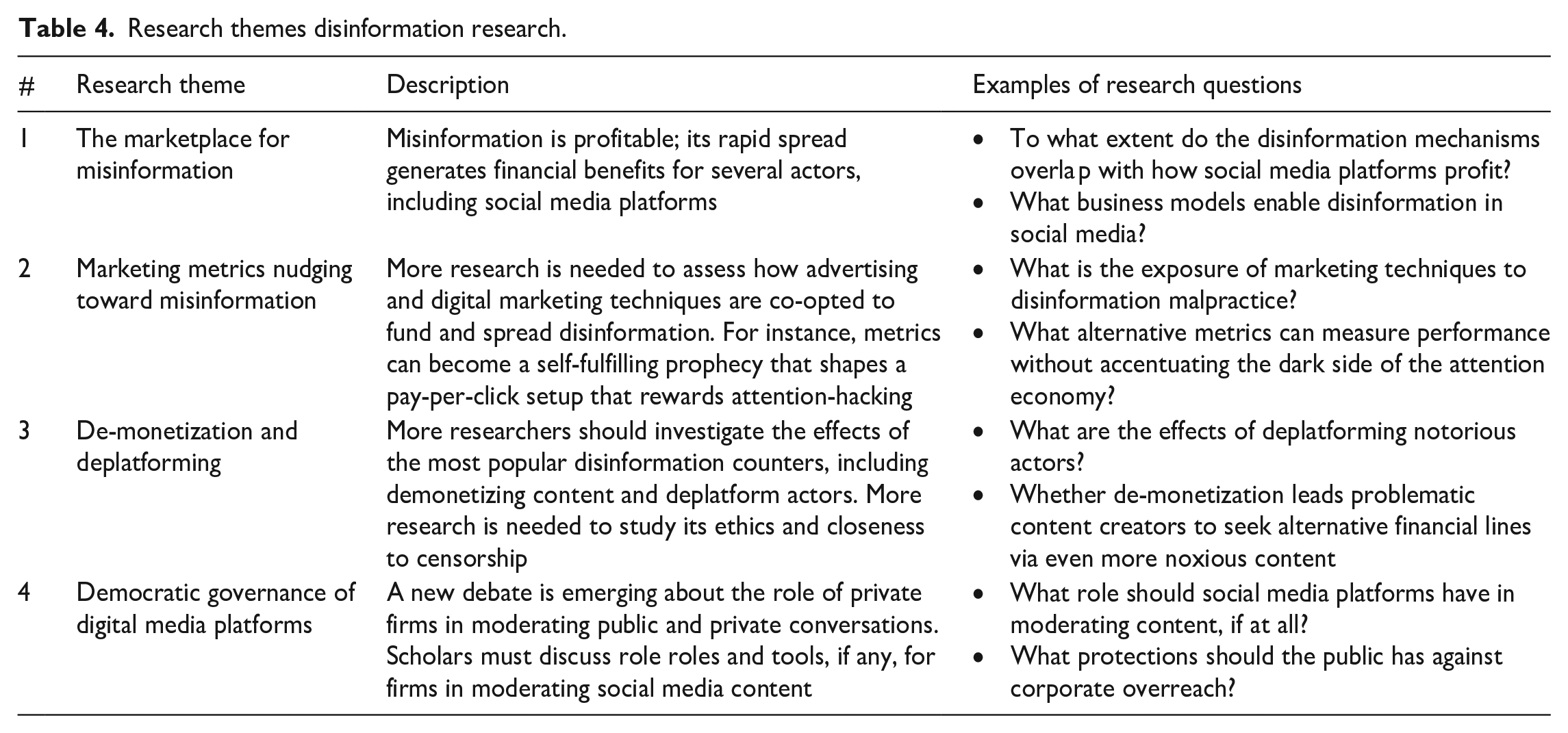

Looking ahead, Table 4 outlines potential areas for disinformation research. The first area, "the marketplace for misinformation," examines who benefits from spreading disinformation. Since market actors have different business models, researchers can analyze multiple actors in media markets, not just digital platforms. AdTech companies, brand managers, influencers, and publishers all stand to benefit differently from misinformation. There is much to learn about how specific digital media markets, such as programmatic advertising or influencer monetization schemes, seek financial gain by encouraging or ignoring misinformation on social media. For instance, because ads are now disentangled from the publishers that display them, advertisers can easily remain unaware of whether their ads fund disinformation. Future research can therefore investigate how well-known brands inadvertently fund disinformation.

Research themes for disinformation research.

The second area explores how marketing metrics such as "online engagement" incentivize the production of content that seeks attention at any cost. Because of its monetization potential, engagement determines the value of online content. "The media's dependence on social media, analytics and metrics, sensationalism, novelty over newsworthiness, and clickbait makes them vulnerable" (Marwick and Lewis, 2017: 1). Platforms extract user data to predict what will generate the most likes, comments, shares, views, and clicks; optimizing for these "engagement metrics" aims to maximize users' time on the platform and the intensity of their interaction. Because highly engaging content tends to be emotional and controversial, it is unsurprising that influencers produce provocative and controversial content. For example, far-right organizations "have developed techniques of 'attention hacking' to increase the visibility of their ideas through the strategic use of social media, memes, and bots—as well as by targeting journalists, bloggers, and influencers to help spread their content" (Marwick and Lewis, 2017: 1). Future research should investigate how to create a digital media market less dependent on engagement and attention-hacking techniques.

The third area invites further research into the market-based strategies for commercial content moderation (Roberts, 2016), including demonetizing and deplatforming problematic actors (Rogers, 2020). Today, several strategies have been proposed to counter disinformation, including an emerging toolbox that can quietly decelerate the spread of problematic content and limit the reach of pernicious groups. However, these groups often set up backup channels and alternative monetization schemes to thrive financially even after being banned from ad-revenue schemes (Hua et al., 2022). Future research should investigate how actors exploit social media through loopholes and unethical practices to monetize disinformation.

The fourth area calls for more research on the democratic governance of digital platforms (Belfer Center and Shorenstein Center, 2023). Tech companies often claim to be democratic platforms (Gillespie, 2010), and society now finds itself in the odd position in which tech moguls aim to steer and moderate public discourse while profiting from farming private data under surveillance capitalism (Zuboff, 2023). Scholars and policymakers must discuss whether digital platforms should be public goods or media markets, and whether unelected technocrats should be involved in managing the voices of a democratic society.

Overall, the current version of digital media markets may encourage problematic behavior by rewarding it financially. Therefore, it is essential to reimagine digital media platforms’ business models and ecosystems. Simply relying on free-market conditions is insufficient to correct the negative externalities that the spread of misinformation has on society. Instead, future researchers can use a market-shaping approach to reimagine a better media market.

Footnotes

Author’s note

The article is not currently being considered for publication by any other print or electronic journal.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.