Abstract

In this study, we investigate dysfunctional information sharing on WhatsApp and Facebook, focusing on two explanatory variables—frequency of political talk and cross-cutting exposure—and potential remedies, such as witnessing, experiencing, and performing social corrections. Results suggest that dysfunctional sharing is pervasive, with nearly a quarter reporting sharing misinformation on Facebook and WhatsApp, but social corrections also occur relatively frequently. Platform matters, with corrections being more likely to be experienced or expressed on WhatsApp than Facebook. Taken together, our results suggest that the intimate nature of WhatsApp communication has important consequences for the dynamics of misinformation sharing, particularly with regard to facilitating social corrections.

In the run-up to the 2018 Brazilian elections, false and misleading information was widely circulated through the mobile instant messaging service WhatsApp (First Draft, 2019). Researchers estimated that roughly half of all images circulating through the service were likely altered or distorted to convey false information (Tardáguila et al., 2018). Another study reported by The Guardian sampled WhatsApp messages prior to the election and found evidence of a politically right-leaning coordinated campaign to spread misinformation and bolster Jair Bolsonaro, who ultimately won the election (Avelar, 2019). Similar concerns have been raised in other democratic countries, including India and Indonesia, about the use of WhatsApp to spread false and misleading information in an effort to affect public opinion and alter election outcomes.

Mobile instant messaging services (MIMS), such as WhatsApp, Snapchat, and Facebook Messenger, are increasingly being used for more than casual communication (Gil de Zúñiga et al., 2019; Valeriani and Vaccari, 2017). These private messaging applications have become important venues for people to talk about political issues and news, access information, and communicate with businesses. The Reuters Digital News Report has consistently captured this trend: while the use of Facebook is declining worldwide, the use of messaging apps is on the rise (Nielsen et al., 2019).

Yet, political communication scholars have largely researched social networking sites (SNSs), and less attention has been paid to these burgeoning private messaging apps. One challenge for the study of private messaging apps is that they are end-to-end encrypted and, by design, neither broadly visible nor accessible to those outside of conversations. Nevertheless, as concerns spread globally around political propaganda and the intentional spread of politically false information, scholars are challenged to identify and measure the extent and effects of misinformation in democracies, in both public and private social applications.

In light of these concerns, this study aims to address this challenge by examining political misinformation on WhatsApp in Brazil. Data compiled by “We Are Social” 1 places WhatsApp in third place among all social platforms, with 1.5 billion monthly active users, only behind Facebook and YouTube. In Brazil, WhatsApp is the second most popular social media application, with over 120 million users in 2017. The concerns around misinformation on WhatsApp have led government institutions, media outlets, and NGOs to create fact-checking services to mitigate the spread of false and misleading information in Brazil, and the platform itself has begun funding social science research on this problem, including this study.

One important dimension of research is understanding who is more likely to share misinformation on these private messaging apps. In spite of concerns with coordinated disinformation efforts and computational propaganda (Jamieson, 2018; Woolley and Howard, 2018), regular users are responsible for spreading misinformation in their own networks—a behavior that Chadwick et al. (2018) have described as “democratically dysfunctional news sharing.” Although motivations for sharing news on social media may be varied, and we cannot assume that those who engage with and share misinformation have the intention to trick or troll others, understanding the types of users and behaviors associated with dysfunctional news sharing is an important step toward devising strategies to combat the spread of misinformation online.

We adopt a comparative approach to examine dysfunctional sharing on WhatsApp and Facebook as semipublic platforms have been consistently scrutinized for facilitating the spread of misinformation because of algorithmic curation and an engagement-driven news feed (Guess et al., 2019). Considering the different affordances in these two platforms, comparing misinformation sharing dynamics on WhatsApp to Facebook may help understand the differences between private and semipublic venues. We conducted a survey on a representative sample of Internet users in Brazil (N = 1615) to examine dysfunctional sharing on Facebook and WhatsApp by examining both accidental misinformation sharing as well as intentional misinformation sharing, in which people recognize the information is incorrect and choose to share it anyway. 2 Specifically, we investigate the relationship between dysfunctional sharing and (1) frequency of political talk; (2) cross-cutting exposure; and (3) social corrections (experiencing, witnessing, and performing).

Our findings provide further evidence of a participation versus misinformation paradox: those who are more engaged in political talk are significantly more likely to have shared misinformation on the platform they use to discuss politics, and also significantly more likely to disinform—in the latter, the relationships are cross-platform, as political talk on WhatsApp is associated with intentional misinformation sharing on Facebook and vice versa. We also find that instead of tempering the spread of false information, exposure to cross-cutting political views is positively associated with both types of dysfunctional sharing. Those who share misinformation are more likely to experience a social correction and to witness others being corrected, suggesting that false information does not go unnoticed in people’s networks. Finally, we find that people are significantly more likely to experience, perform, and witness social correction on WhatsApp than on Facebook, suggesting that the closer social ties maintained through WhatsApp might provide a sense of safety that supports these behaviors.

Changing patterns in online news access: from social media to private messaging

Social media platforms and mobile messaging applications are a part of people’s daily lives in most Western democracies. While many of these platforms have been developed primarily to foster social networking and to enable peer-to-peer communication, they are also becoming an important source of news (Gil de Zúñiga et al., 2019; Nielsen et al., 2019). Social media adds another layer to an already crowded and diverse hybrid media environment online by blurring the lines between information producers, news media, and regular people, and by providing a space that serves for both social and entertainment purposes, as well as by fulfilling political and informational goals (Chadwick, 2013).

Scholars have long warned that the high-choice environment of online news could have detrimental effects on democracy (Lewandowsky et al., 2012)—particularly by enabling users to consume information that supports their worldviews, while avoiding contrary perspectives (Prior, 2007). However, research on selective exposure has found little evidence of online echo chambers, which can be partially explained by the blurred boundaries between different online platforms where people access information (Borgesius et al., 2016; Chadwick, 2013). Likewise, the concern that social media algorithms create filter bubbles has been consistently challenged by research on platforms such as Facebook and Twitter, which finds that most social media users are routinely exposed to counter-attitudinal information and political difference (Bakshy et al., 2015; Valeriani and Vaccari, 2016), and tend to access a higher number of online news sources than those who are not using these platforms (Fletcher and Nielsen, 2018).

There are two important dynamics that help explain how social media changes the landscape of information consumption. First, it allows people to maintain large social networks, which primarily comprise weak ties formed by friends, coworkers, acquaintances, and colleagues from different stages in life (Ellison and boyd, 2013). Because these ties are accumulated over time and formed on the basis of friendship or association, and not for political purposes, they have the potential to broaden one’s exposure to diverse viewpoints (Bakshy et al., 2015). Similarly, because of the social dimension of information sharing on social media, people who are not seeking news may be exposed to it inadvertently (Fletcher and Nielsen, 2018). In this context, individuals can be influenced by their personal networks to consume and select political news, as people who frequently share or endorse political information on social media may also influence their peers to select information (Anspach, 2017).

The use of social media is positively associated with several important democratic outcomes (Gil de Zúñiga et al., 2012; Valenzuela et al., 2019; Valeriani and Vaccari, 2016). Seeking information on social networking sites, in particular, is associated with increasing political participation on- and offline, even for those who are accidentally exposed to political content (Boulianne, 2015, 2019). Social media serves the function of informing citizens about political news and events; it can offer opportunities for involvement and engagement through the influence of weak social ties and is also a forum for opinion expression and engagement in informal political talk (Boulianne, 2015, 2019). While WhatsApp has been less studied than other social media platforms, there is some indication that political discussion on WhatsApp can foster participation and activism, particularly for younger citizens (Gil de Zúñiga et al., 2019).

Research in this field has traditionally concentrated in the United States and Western European countries. Less is known about the political consequences of the use of social media in the Global South, despite the high social media penetration in populous countries such as Brazil, India, and Indonesia. Researchers studying Chile have warned that the “positive” effects of social media may also be associated with problematic ones: increased political engagement, which is positively related to social media use for news, leads to information sharing—which includes misinformation (Valenzuela et al., 2019)—suggesting that the dynamics around political uses of social media and misinformation need further consideration.

Our study contributes to this literature by examining the use of Facebook and WhatsApp in Brazil, the fourth largest democracy in the world with a population of about 211 million. Internet access in Brazil currently reaches 75% of the population (IBGE, 2018). Facebook remains the largest social media platform in Brazil, with over 127 million users in 2018. WhatsApp is at a close second place, with over 120 million users. The growing access to digital media in Brazil is shifting the landscape of news production, access, and circulation. According to the Reuters Digital News Report in 2019, 87% of Brazilians are now consuming news online—54% of the population uses Facebook, while 53% use WhatsApp, suggesting that both platforms are becoming central to Brazilians’ news diet (Nielsen et al., 2019). While several researchers have examined Facebook and Twitter for accessing news or talking about politics, little is known about how people are using a private messaging space for these purposes. Likewise, research has yet to unveil how the private nature of WhatsApp, along with the maintenance of closer social ties, changes how citizens interact with information and misinformation.

Online misinformation

There has long been concern about the spread of misinformation in society. Misinformation can be understood as occurring when a piece of information that is initially considered valid or true is then subsequently recognized as incorrect (Lewandowsky et al., 2012), or in “cases in which people’s beliefs about factual matters are not supported by clear evidence and expert opinion” (Nyhan and Reifler, 2010: 305). The core idea is that people believe in something that is factually incorrect. Others have distinguished misinformation from disinformation, the latter of which is defined as efforts to intentionally mislead (Jack, 2017). The challenge in this distinction is sorting out the motivations people have for communicating verifiably incorrect information. For the purposes of this study, we use the term misinformation to apply broadly to the phenomenon of sharing (intentionally or accidentally), being exposed to, or believing in verifiably false information.

Brazilians are particularly worried about political misinformation, registering the highest percentage of concern among 38 countries (Newman et al., 2018). There is clear evidence that WhatsApp has been successfully used to spread false and misleading information during elections in Brazil, as well as in India and Indonesia (Banaji et al., 2019; Resende et al., 2019), despite the company implementing changes to curb virality, banning automated accounts, and working closely with local authorities (WhatsApp, 2019). It has been reported that candidates, parties, and supporters engage in coordinated action using chat groups to spread messages and to mobilize supporters to share political content with their own peers. Some of these campaign activities have been considered illegal in Brazil’s 2018 election (Campos Mello, 2019).

Research on social media and misinformation has focused on Facebook and Twitter (see, for example, Valenzuela et al., 2019), and little is known about the role of private messaging apps in this context. People, however, are increasingly turning to messaging applications to consume and talk about news, as well as to participate in other forms of political engagement (Gil de Zúñiga et al., 2019; Nielsen et al., 2019). Given the research that shows a positive relationship between social media use, information consumption, and political participation (Boulianne, 2015, 2019), it becomes increasingly important to shift our attention to messaging platforms, such as WhatsApp—the largest private messaging app in the world—and to understand how they differ from or resemble social media platforms, such as Facebook.

WhatsApp has unique affordances that make it challenging to research. Messages are protected by end-to-end encryption, meaning that their content cannot be unveiled. 3 WhatsApp is primarily used to chat with closer contacts, as accounts are connected to a mobile number, and communication happens in private one-to-one conversations or group chats, which can have up to 256 people. Despite its increasing use for news, WhatsApp does not feature a “news feed.” Because of these features, it becomes challenging to research and track illegitimate or malicious activities and to study the content of conversations.

There are several reasons why it is important to understand behaviors that are associated with misinformation sharing on Facebook and WhatsApp, as well as to examine the differences between these platforms. First, regular users actively contribute to the circulation of fake news on social media (Chadwick et al., 2018), and misinformation spreads faster than truth (Vosoughi et al., 2018)—at least on Twitter. Second, while scholars have focused on automated accounts and coordinated propaganda, falsehoods shared by regular people may be more effective in misinforming or persuading others due to the influence of personal relationships (Anspach, 2017). Platform affordances may further influence the circulation of misinformation: on Facebook, the news feed favors content shared by people with whom a user tends to engage, which may increase the likelihood that people will see false information. WhatsApp differs greatly from other social media platforms with regard to sharing mechanisms: while it is possible to forward content from one chat to another (including groups), the lack of a news feed means that the content shared on WhatsApp is not easily traceable to a source, as forwarded information does not come with any metadata about its origin. This also means that users who share “original” content on WhatsApp are either creating that content, uploading images, video, or audio that they found elsewhere, or posting a link. Due to these characteristics, it is harder for WhatsApp users to trace the origins of any content received through chats or groups and to determine its credibility. Further, because of end-to-end encryption, the company cannot fact-check all the content that is shared by users—unlike platforms such as Facebook, Twitter, or Instagram—and has focused instead on detecting abnormal traceable behaviors (e.g. mass-texting, mass-creation of groups, etc.) and limiting virality.

Based on prior research that finds consistent relationships between social media use, WhatsApp use, and political participation (Boulianne, 2015, 2019; Gil de Zúñiga et al., 2019; Valenzuela et al., 2019; Valeriani and Vaccari, 2017), we hypothesize that sharing misinformation accidentally is positively associated with frequency of political talk on these platforms. Scholars provide compelling evidence that political talk is important for a well-informed citizenry, and it plays an important role as a precursor of other forms of political engagement (Kim and Kim, 2008). We postulate this hypothesis because users who accidentally share misinformation are engaging in information sharing, which is a form of political participation online (Valenzuela et al., 2019).

H1. Frequency of political talk on (a) Facebook and (b) WhatsApp is positively associated with sharing misinformation accidentally in the platform.

A second dimension of dysfunctional sharing is doing so on purpose—a behavior that is more challenging to measure, at least in self-reported data (Chadwick et al., 2018). While sharing accidentally and on purpose are different behaviors, existing research has not, to our knowledge, investigated these two types of dysfunctional sharing separately. Thus:

RQ1. Is frequency of political talk on (a) Facebook and (b) WhatsApp associated with sharing misinformation on purpose in the platform?

Information sharing on social media is affected by users’ perceptions of their networks. The predominance of weak ties on Facebook has been associated with exposure to diverse viewpoints (Bakshy et al., 2015; Bode, 2012; Kim, 2011), and users tend to consider whether others will share their opinions before posting on social media (Vraga et al., 2015; Weeks et al., 2017). While the risk of “context collapse” might constrain expression on Facebook, there is evidence that mobile messaging apps provide a “safer” space for political talk (Valeriani and Vaccari, 2017), which could in turn incentivize more active sharing of information among well-known peers. In this context, we seek to understand how political talk and cross-cutting exposure—when one is exposed to ideologically diverse perspectives (Mutz, 2006)—may help explain how WhatsApp and Facebook users engage with misinformation online.

Engagement in cross-cutting political talk may play an important role in mitigating the spread of misinformation. Scholars have argued that motivated reasoning—one’s willingness to accept information that is consistent with one’s viewpoints—helps explain why people believe in falsehoods (Nyhan and Reifler, 2010). Exposure to cross-cutting perspectives may have the potential to mitigate misinformation insofar as falsehoods might be challenged by those on the other side. Based on this literature, we pose the following questions to investigate the relationship between sharing misinformation and exposure to cross-cutting political perspectives.

RQ2. How does exposure to disagreement on (a) Facebook and (b) WhatsApp affect accidental misinformation sharing?

RQ3. How does the presence of disagreement on (a) Facebook and (b) WhatsApp affect purposeful misinformation sharing?

Scholars have explored the effectiveness of potential remedies to combat online misinformation, such as corrective affordances and fact-checking. Misinformation is notably hard to correct (Lewandowsky et al., 2012), particularly when it relates to preexisting attitudes, which is often the case around political topics (Nyhan and Reifler, 2015; Thorson, 2016). Prior research has found a significant effect of algorithmic correction (e.g., related stories) and anonymous social corrections on Facebook in reducing health-related misperceptions (Bode and Vraga, 2015, 2018). However, research investigating political misinformation suggests that corrections are often ineffective, emphasizing the role of motivated reasoning in the maintenance of misperceptions (Nyhan and Reifler, 2010, 2015). While we cannot investigate the effectiveness of social corrections using cross-sectional data, we propose a distinction between three types of corrective behaviors—exposure to people correcting others online, performing a correction, and being corrected by someone else—and investigate the relationship between these behaviors and sharing misinformation on WhatsApp and Facebook.

RQ4. How does (a) experiencing, (b) witnessing, or (c) performing a social correction affect the likelihood of posting misinformation accidentally and on purpose on WhatsApp and Facebook?

RQ5. Are there differences between platforms in terms of (a) experiencing, (b) witnessing, or (c) performing a social correction?

Method

We conducted a survey with a nationally representative sample of Internet users in Brazil (N = 1615), using demographic quotas to proportionally represent regional differences based on age, gender, and education. 4 Sample size was calculated considering a 2% margin of error and 95% confidence level. The survey was conducted by Ibope, a large national survey company, and combines online panel interviews (N = 1431) and random digit dial interviews (N = 184). We supplemented the online panel data with phone interviews to reach demographics that are not using online panels—for example, respondents with lower education levels and older people, who may primarily or exclusively have mobile Internet access. Among Brazilian Internet users, it is estimated that 97% use a mobile device and that WhatsApp is installed on virtually all of them. Given our interests, our sample only includes WhatsApp users. Questions about Facebook were only asked of respondents who use it (89% of the sample). The data were collected from May 21 to July 3, 2019. The response rate based on AAPOR’s RR1 standards (2016) was 26% for phone-based interviews. As the web-based surveys were conducted on a large nonprobability panel using demographic quotas, AAPOR’s standards do not apply (AAPOR, 2016), and the completion rate of 25% for the online panel was calculated based on the number of questionnaires started and completed.
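As a rough illustration of the sample-size arithmetic mentioned above, the sketch below applies the textbook margin-of-error formula for a proportion under simple random sampling, with the most conservative proportion (p = 0.5) and a normal critical value of 1.96. These are assumptions made for illustration: the survey combined quotas and two interview modes, and the exact calculation used by the authors is not reported, so the function names and any figures the code prints are only a guide.

```python
import math

Z_95 = 1.96  # two-sided normal critical value for a 95% confidence level


def margin_of_error(n: int, p: float = 0.5, z: float = Z_95) -> float:
    """Half-width of the confidence interval for a proportion.

    Assumes simple random sampling; p = 0.5 gives the widest interval.
    """
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(margin: float, p: float = 0.5, z: float = Z_95) -> int:
    """Smallest n whose margin of error does not exceed `margin`."""
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)


if __name__ == "__main__":
    # Margin of error implied by the full sample under these assumptions.
    print(f"n = 1615 -> +/- {margin_of_error(1615):.2%}")
    # Sample size needed for a hypothetical 5-point margin.
    print(f"+/- 5% -> n >= {required_sample_size(0.05)}")
```

Quota samples and mixed-mode designs generally do not satisfy the simple-random-sampling assumption, so a design-based margin may differ from what this formula returns.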

While we acknowledge that self-reported measurements for misinformation sharing are imperfect as they rely on respondents’ recall, may suffer from desirability biases, and may not reflect actual experiences, other approaches that have been applied to social media platforms (e.g., collecting user data) cannot be applied to an encrypted messaging application. In the absence of user data, we believe that a nationally representative survey was better suited to provide an overview of how WhatsApp has been used as a source of information and as a place for political discussion.

Measures 5

Dependent variables 6

Accidental misinformation sharing

We measured accidental sharing using the following question: “In the past month, do you recall sharing a news story that seemed accurate at the time, but you later found out was exaggerated or made up on Facebook / WhatsApp?” The options were “yes,” “no,” and “I don’t remember.”

Purposeful misinformation sharing

We conceptualize purposeful misinformation sharing as a self-reported measure of having shared information on Facebook or WhatsApp in spite of thinking it was false. The item was measured with the following question: “In the past month, do you recall sharing a news story that you thought was made up or exaggerated when you shared it on Facebook / WhatsApp?” The options were “yes,” “no,” and “I don’t remember.”

Independent variables

Frequency of political discussion

Frequency of political discussion was measured using a 5-point Likert-type scale ranging from never (1) to every day or almost every day (5). The question asked was “Considering the past month, how often did you talk about politics and social issues (such as elections, government, education, violence, etc.) on Facebook/WhatsApp?” (MFB = 3.08, SDFB = 4.59, MWA = 3.13, SDWA = 3.66).

Cross-cutting exposure

Frequency of cross-cutting exposure was measured with a 5-point Likert-type scale ranging from never (1) to always (5). Participants were asked “Thinking about the content about politics and social issues your friends shared on Facebook/WhatsApp in the past month, how often do you generally disagree with the views expressed by them?” (MFB = 3.32, SDFB = 0.91, MWA = 3.22, SDWA = 0.96).

Political information in WhatsApp groups

This binary variable refers to seeing conversations about politics in WhatsApp group chats. It includes respondents who participated in WhatsApp groups, defined as conversations with three or more contacts (88.6%), and saw conversations about politics in all of them (20.07%), in more than half (20.84%), or in less than half of them (33.12%).

Experiencing social correction

This item was measured with the following question: “In the past month, did any of these situations happen to you . . . I was told the news I had shared on Facebook/WhatsApp was exaggerated, inaccurate, or made up.” Participants could answer “yes,” “no,” or “I don’t know.”

Witnessing social correction

This item was measured with the following question: “In the past month, did any of these situations happen to you . . . I saw someone else being called out for sharing news on Facebook/WhatsApp that was exaggerated, inaccurate, or made up.” Participants could answer “yes,” “no,” or “I don’t know.”

Performing social corrections

This item was measured with the following question: “In the past month, did any of these situations happen to you . . . I told someone that the news they had shared on Facebook/WhatsApp was exaggerated, inaccurate, or made up.” Participants could answer “yes,” “no,” or “I don’t know.”

Sharing to correct

This item was measured with the following question: “In the past month, did any of these situations happen to you . . . I shared news that I knew was inaccurate to alert others that it was circulating on Facebook/WhatsApp.” Participants could answer “yes,” “no,” or “I don’t know.”

Media use

Participants were asked how frequently they used a set of media sources to consume information about politics and social issues, using a 5-point scale ranging from never to every day or almost every day. For the purposes of this paper, we included the following sources: Legacy news 7 (M = 3.12, SD = 1.14), Social media (Facebook, Twitter, YouTube) (M = 3.44, SD = 1.50), WhatsApp (M = 3.08, SD = 1.55).

Misinformed beliefs

We used a battery of 15 statements to measure if participants held misinformed beliefs and added their answers to generate a score. Items were derived out of an initial 40 pretested items, chosen for diversity of topics, that were not significantly correlated with key demographics on the pretest. We included 5 truthful statements and 10 false statements. Each incorrect answer counted one point; correct and “I don’t know” answers did not count points.
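The scoring rule described above can be sketched as follows. The statement identifiers, the answer key, and the response labels (`"true"`, `"false"`, `"dont_know"`) are hypothetical placeholders, since the actual items and data format are not given here; only the rule itself (one point per incorrect answer, none for correct or "I don't know" answers) comes from the text.

```python
from typing import Mapping

# Hypothetical answer key: statement id -> True if the statement is accurate.
# The battery had 5 true and 10 false statements; the ids are placeholders.
ANSWER_KEY = {f"s{i}": (i <= 5) for i in range(1, 16)}


def misinformed_score(
    responses: Mapping[str, str],
    key: Mapping[str, bool] = ANSWER_KEY,
) -> int:
    """Count incorrect answers across the battery.

    `responses` maps a statement id to "true", "false", or "dont_know".
    A missing answer is treated like "dont_know" (an assumption).
    """
    score = 0
    for statement, is_true in key.items():
        answer = responses.get(statement, "dont_know")
        if answer == "dont_know":
            continue  # "I don't know" never adds a point
        believed_true = answer == "true"
        if believed_true != is_true:
            score += 1  # endorsed a falsehood or rejected a true statement
    return score
```

Under this rule the score ranges from 0 to 15, and a respondent who accepts every statement as true scores 10 because the battery contains ten false statements.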

Political knowledge

Political knowledge was measured using five items, aligned with prior research (Delli Carpini and Keeter, 1993; Pereira, 2013). We asked about the offices held by the vice president of Brazil and the president of Argentina, the name of the president of the Senate, Jair Bolsonaro’s party in the 2018 elections, and the party holding the majority of seats in the House of Representatives. Correct answers to these questions were combined to form a political knowledge score (M = 2.11, SD = 1.61).

Political extremism

Given Brazil’s multiparty system, ideology was measured with a 10-point scale ranging from left to right (M = 6.3, SD = 2.62). We recoded responses 1 and 2 on the left and 9 and 10 on the right as a binary of political extremism—30% of the respondents fell into this category, while 45.5% were not “extreme,” and 23% responded they did not know.
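The recoding described above can be expressed as a small function. This is a minimal sketch: the function name is hypothetical, and returning `None` for "don't know" responses is an assumption, because the text does not state how those respondents were handled in the models.

```python
from typing import Optional


def political_extremism(ideology: Optional[int]) -> Optional[int]:
    """Recode a 1-10 left-right placement into a binary extremism flag.

    Returns 1 for the scale endpoints (1, 2, 9, 10), 0 for 3 through 8,
    and None when the respondent gave no placement (assumed handling).
    """
    if ideology is None:
        return None
    if not 1 <= ideology <= 10:
        raise ValueError("ideology must be between 1 and 10")
    return 1 if ideology <= 2 or ideology >= 9 else 0


if __name__ == "__main__":
    placements = [1, 2, 5, 8, 9, 10, None]
    print([political_extremism(p) for p in placements])
```

Note that the flag captures distance from the center of the scale and ignores direction, so strongly left and strongly right respondents fall into the same category.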

Demographics

Age was measured using an open-ended question (M = 36, SD = 13) and analyzed as a continuous variable. Education was measured with a single question and 5 answer items, ranging from completed pre-school (1) to completed college or above (5). The sample was 53.3% female and 46.7% male, which mirrors the distribution of Internet users.

Results

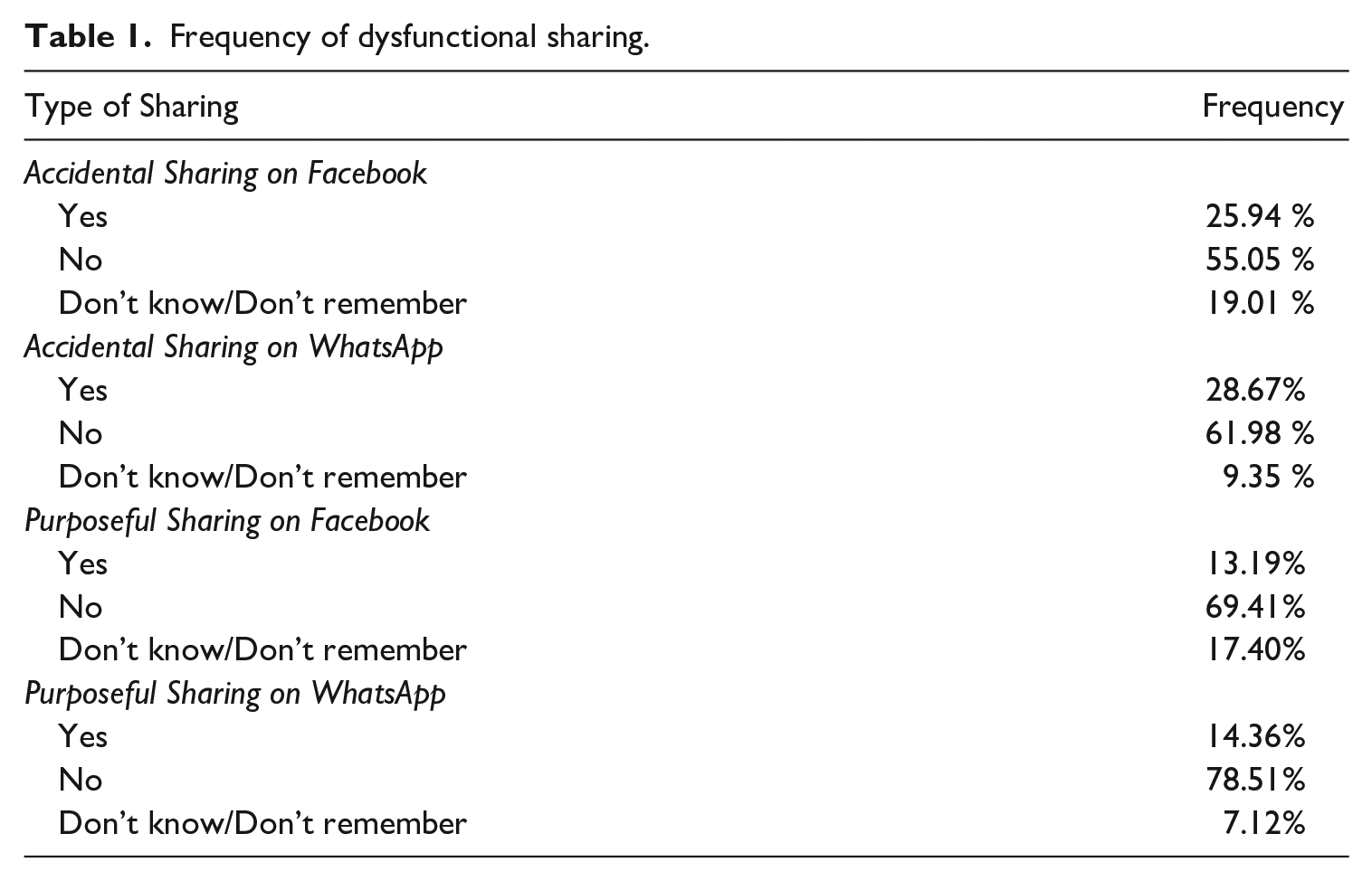

We first present some descriptive statistics to establish the prevalence of democratically dysfunctional sharing behaviors. As shown in Table 1, 25% of respondents acknowledged having shared misinformation accidentally on Facebook and on WhatsApp, t(2733) = −0.23, p = 0.8, d = 0.01 (MWA = 1.684, MFB = 1.680). Purposeful sharing was reported at a lower rate, with only 13% on Facebook and 14% on WhatsApp, t(2785) = −0.37, p = 0.7, d = 0.01 (MWA = 1.845, MFB = 1.840). The differences between platforms for both types of sharing were not significant.

Frequency of dysfunctional sharing.
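The platform comparisons reported in this section pair a t statistic with Cohen's d. The sketch below shows one common way to compute both from two sets of coded responses, using a pooled standard deviation. It assumes an independent-samples test, which is inferred from the reported degrees of freedom and not confirmed by the text; the function names and the toy data are hypothetical.

```python
import math
from statistics import mean, variance
from typing import Sequence, Tuple


def pooled_sd(a: Sequence[float], b: Sequence[float]) -> float:
    """Pooled standard deviation of two groups (sample variances)."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    return math.sqrt(pooled_var)


def cohens_d(a: Sequence[float], b: Sequence[float]) -> float:
    """Standardized mean difference (a minus b) using the pooled SD."""
    return (mean(a) - mean(b)) / pooled_sd(a, b)


def student_t(a: Sequence[float], b: Sequence[float]) -> Tuple[float, int]:
    """Equal-variance two-sample t statistic and its degrees of freedom."""
    n1, n2 = len(a), len(b)
    standard_error = pooled_sd(a, b) * math.sqrt(1 / n1 + 1 / n2)
    return (mean(a) - mean(b)) / standard_error, n1 + n2 - 2


if __name__ == "__main__":
    # Toy responses, not study data.
    group_a = [1, 2, 3, 4, 5]
    group_b = [2, 3, 4, 5, 6]
    t_stat, df = student_t(group_a, group_b)
    print(f"t({df}) = {t_stat:.2f}, d = {cohens_d(group_a, group_b):.2f}")
```

Because the same respondents answered for both platforms, a paired or mixed-model comparison could also be defensible; the choice affects the standard error but not the raw mean difference.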

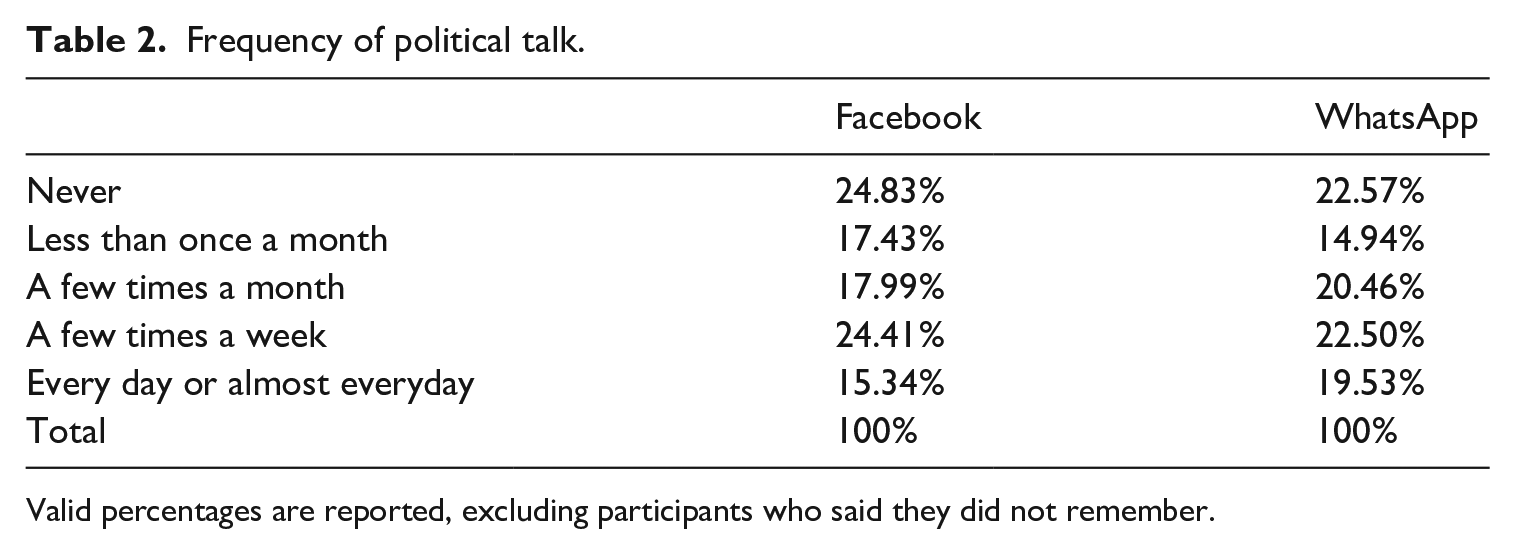

Focusing on our key explanatory variables, Table 2 presents the frequency of political talk. Participants in our sample used WhatsApp more frequently than Facebook to engage in discussions, particularly those who talk about politics on a daily basis. About 24% of our sample did not use Facebook to talk about politics, and 22% did not use WhatsApp for this purpose. The differences between platforms are significant, with WhatsApp being used more frequently than Facebook, t(3011) = −2.6, p = 0.009, d = 0.09 (MWA = 3.01, MFB = 2.88).

Frequency of political talk.

Valid percentages are reported, excluding participants who said they did not remember.

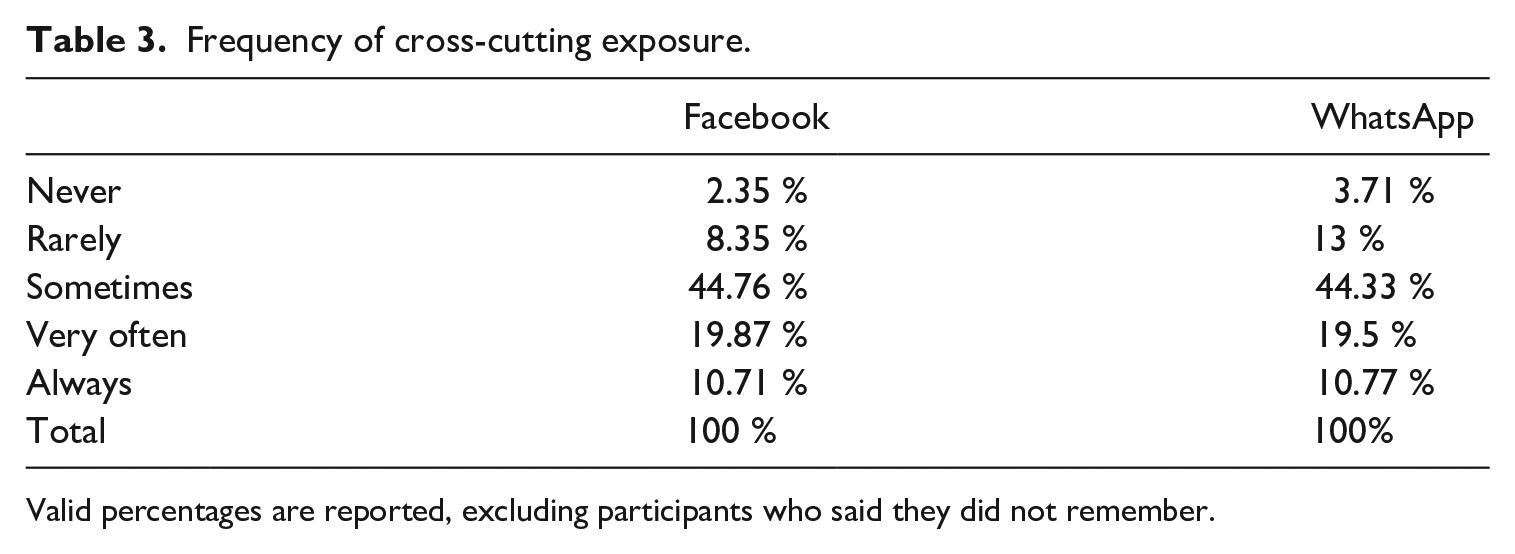

Most respondents acknowledge seeing cross-cutting political opinions on Facebook and WhatsApp, with about a third of them saying they see it very often or always (Table 3). Notably, respondents are more likely to witness disagreement on Facebook than WhatsApp, t(2863) = 2.9, p = 0.004, d = −0.11 (MWA = 3.22, MFB = 3.32).

Frequency of cross-cutting exposure.

Valid percentages are reported, excluding participants who said they did not remember.

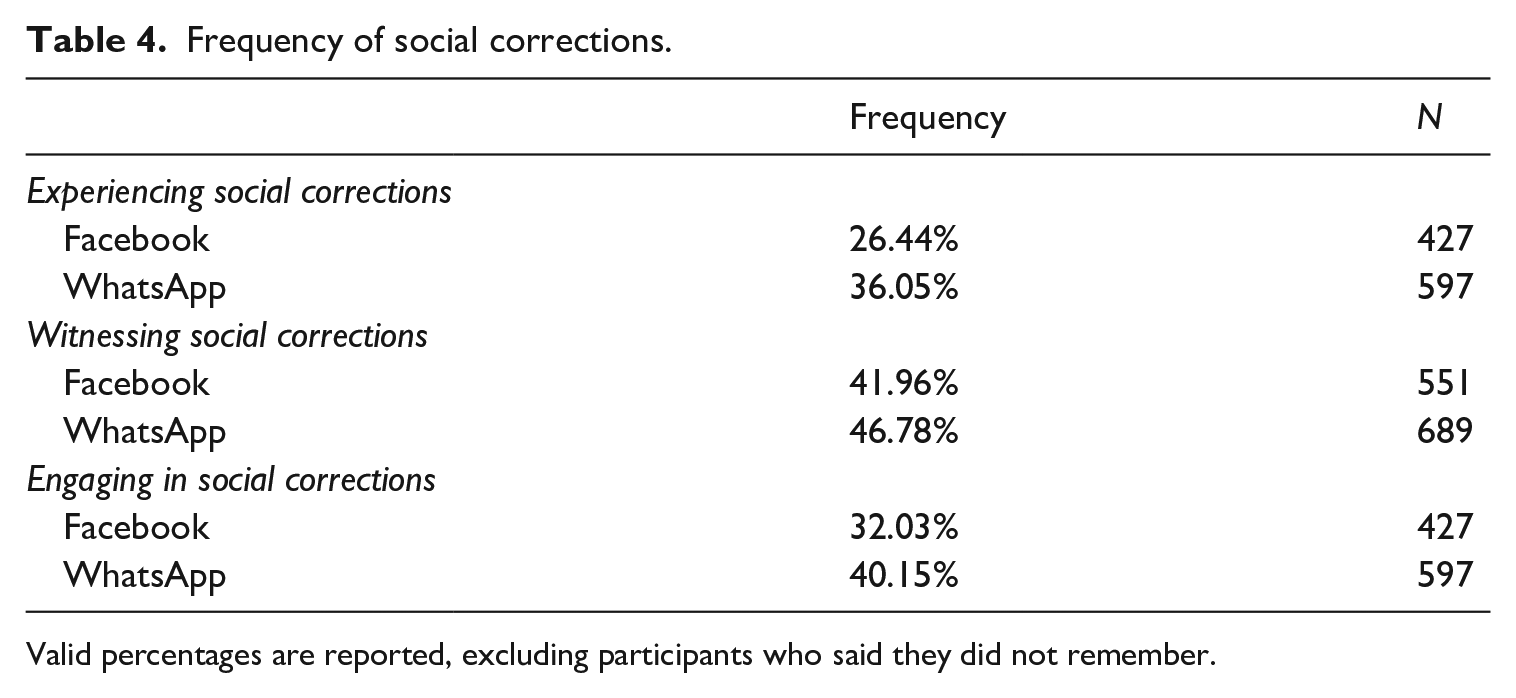

Next, we present the distributions of the three types of social correction in Table 4. At the bivariate level, people report having experienced, witnessed, and engaged in social corrections more frequently on WhatsApp than on Facebook. Social corrections occur relatively frequently on both platforms, with 42% of the respondents having witnessed them on Facebook and 47% on WhatsApp.

Frequency of social corrections.

Valid percentages are reported, excluding participants who said they did not remember.

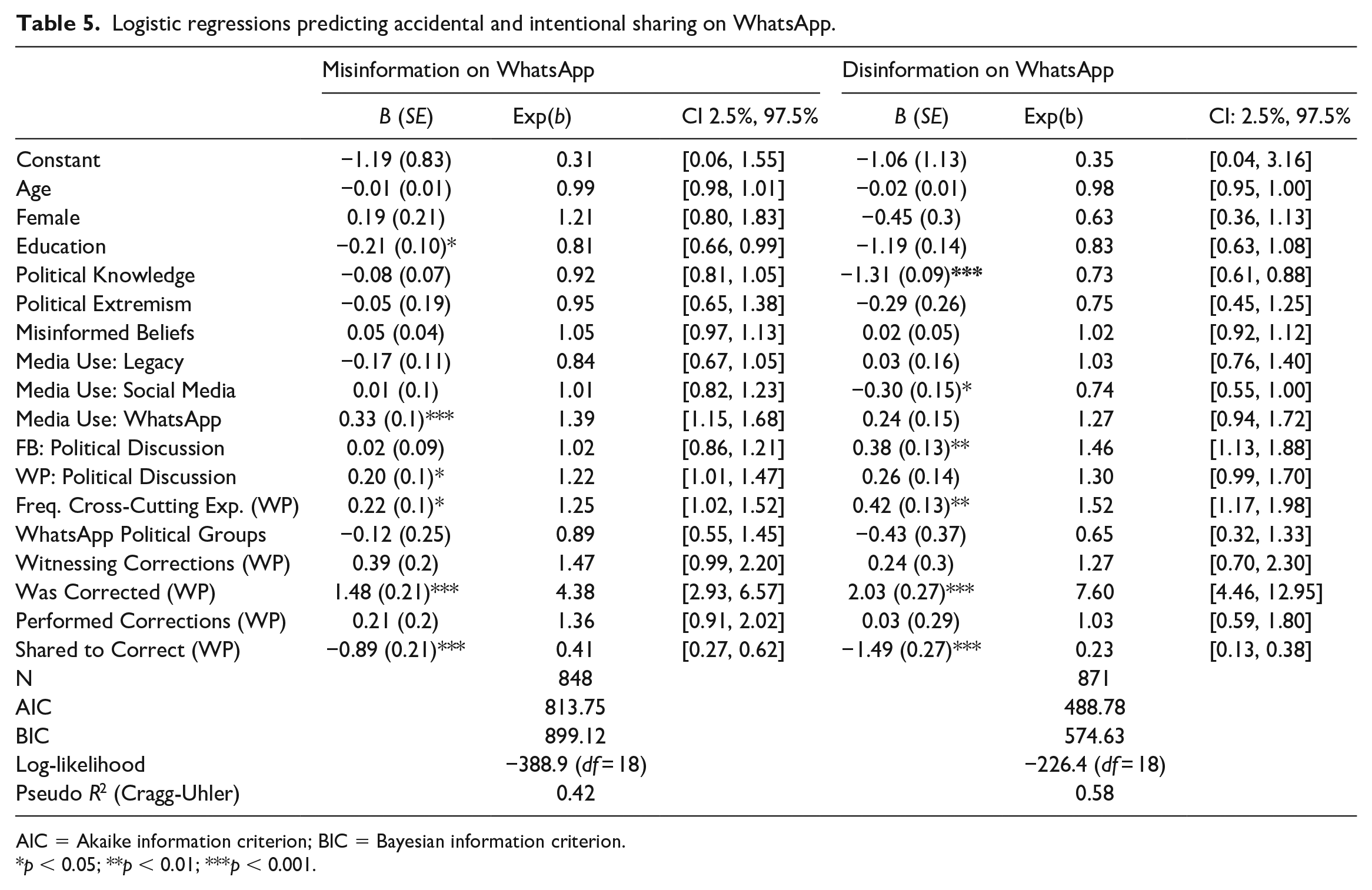

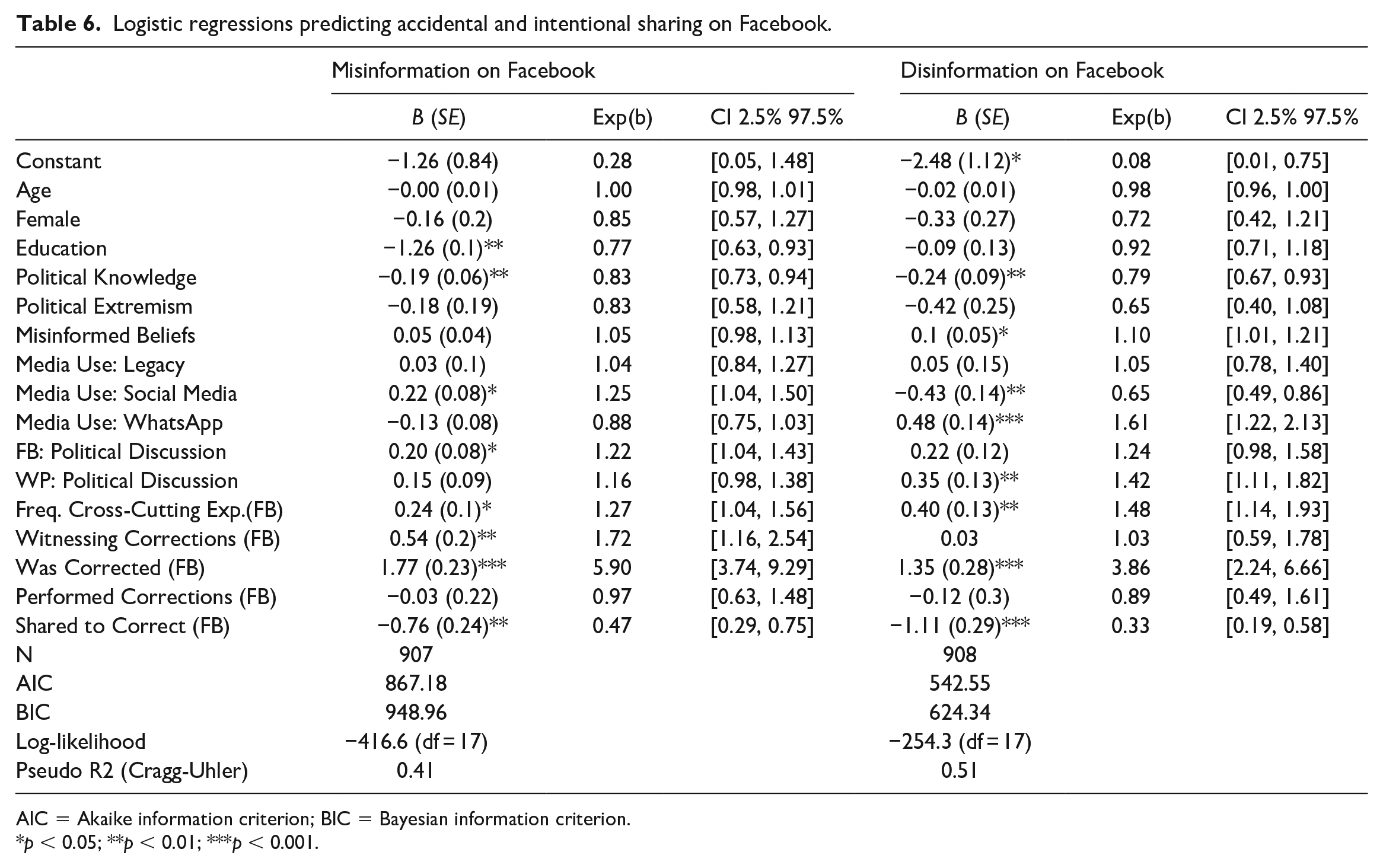

We use a set of logistic regression models to address our hypotheses and research questions, controlling for age, gender, level of education, frequency of political talk, frequency of news use, political knowledge, political extremism, and holding misinformed beliefs. The models had the following platform-specific independent variables: frequency of cross-cutting exposure, witnessing social corrections, performing social corrections, being corrected, and sharing misinformation to correct it. 8 Table 5 presents the models for WhatsApp, and Table 6 presents the models for Facebook.

Table 5. Logistic regressions predicting accidental and intentional sharing on WhatsApp.

AIC = Akaike information criterion; BIC = Bayesian information criterion.

*p < 0.05; **p < 0.01; ***p < 0.001.

Table 6. Logistic regressions predicting accidental and intentional sharing on Facebook.

AIC = Akaike information criterion; BIC = Bayesian information criterion.

*p < 0.05; **p < 0.01; ***p < 0.001.

Examining the demographic controls, we find that age and gender are not associated with either form of dysfunctional sharing. However, education has a negative relationship with accidental misinformation sharing on Facebook and on WhatsApp: the higher one’s level of education, the less likely one is to share misinformation accidentally. These relationships are not significant for intentional sharing. Political knowledge was negatively associated with both types of dysfunctional sharing on Facebook and with accidental sharing on WhatsApp. Political extremism was not a significant predictor in any of the four models.

Frequency of legacy media use was not a significant predictor of dysfunctional sharing on either platform, but those who use social media as a source of news are more likely to have accidentally shared misinformation on Facebook, and less likely to have shared misinformation intentionally on both Facebook and WhatsApp. Frequency of WhatsApp use for news is positively associated with accidental sharing on WhatsApp and intentional sharing on Facebook. Finally, holding misinformed beliefs was a significant predictor of sharing misinformation intentionally on Facebook, increasing the likelihood of intentional sharing by 10%.

The results confirm our hypothesis that frequency of political talk on both Facebook and WhatsApp would be positively associated with sharing misinformation accidentally. Respondents who talk about politics more frequently on WhatsApp or on Facebook are each about 22% more likely to say they shared misinformation accidentally on the platform.
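Percentages such as "22% more likely" are most naturally read as percent changes in odds derived from logistic regression coefficients. The sketch below works through that arithmetic under this assumption. The odds ratio of 1.22 is back-derived from the 22% figure, and the 25% baseline probability is purely illustrative; the function names are hypothetical.

```python
import math


def percent_change_in_odds(coef: float) -> float:
    """Convert a logistic regression coefficient (log-odds) to a % change in odds."""
    return (math.exp(coef) - 1.0) * 100.0


def shifted_probability(baseline_p: float, odds_ratio: float) -> float:
    """Apply an odds ratio to a baseline probability and return the new probability."""
    if not 0.0 < baseline_p < 1.0:
        raise ValueError("baseline_p must be strictly between 0 and 1")
    odds = baseline_p / (1.0 - baseline_p) * odds_ratio
    return odds / (1.0 + odds)


# Hypothetical coefficient chosen so that the odds ratio equals 1.22
coef = math.log(1.22)
print(round(percent_change_in_odds(coef)))        # prints 22

# Illustrative baseline: a 25% chance of reporting accidental sharing
print(f"{shifted_probability(0.25, 1.22):.3f}")   # prints 0.289
```

The second call shows why the distinction matters: a 22% increase in odds moves an illustrative 25% baseline to roughly 29%, which is a smaller relative change in probability than the odds figure alone might suggest.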

Our first research question further explored this relationship with regard to intentional misinformation sharing. Interestingly, we only find cross-platform relationships: those who talk about politics on Facebook are 46% more likely to engage in intentional misinformation sharing on WhatsApp, and those who talk about politics more frequently on WhatsApp are 42% more likely to share misinformation intentionally on Facebook.

Answering our second research question, being exposed to diverse opinions frequently on Facebook increases the likelihood of sharing misinformation on Facebook by 27%, and on WhatsApp by 25%. Cross-cutting exposure is also a strong predictor of intentional sharing: on WhatsApp, those exposed to cross-cutting talk are 52% more likely to have shared misinformation intentionally on WhatsApp, and 48% more likely to do so on Facebook (RQ3).

The fourth research question inquired about the effects of social corrections on intentional misinformation sharing. Being corrected for sharing misinformation is strongly and positively related to both types of sharing across platforms. Having witnessed someone else being corrected is also positively associated with having shared misinformation on Facebook, but not on WhatsApp. There was no significant relationship between performing a social correction and either type of dysfunctional sharing. Notably, those who shared misinformation accidentally and intentionally on both WhatsApp and Facebook are significantly less likely to have done so to alert others about it.

Finally, we investigated platform effects on social corrections (RQ5). Respondents were more likely to have experienced, t(2804) = 2.3, p = 0.02, d = 0.09, performed, t(2808) = 4.5, p < 0.001, d = 2.67, and witnessed social corrections on WhatsApp than on Facebook, t(2754) = 2.6, p = 0.01, d = 0.1, suggesting consistent platform differences.

Discussion

Contributing to the debate about the relationship between social media and the spread of misinformation online, this study has sought to understand how indicators of democratically relevant social media use, such as engaging in political talk and being exposed to cross-cutting discussions, are related to dysfunctional information sharing. Our results suggest that talking about politics on Facebook and on WhatsApp is strongly associated with sharing misinformation. These findings can be interpreted in light of research on news sharing, which suggests that more active social media users—and particularly those who use social media for news—are more likely to engage in sharing (Choi and Lee, 2015; Weeks and Holbert, 2013). If we consider that information sharing is typically associated with higher interest and involvement with online news (Kalogeropoulos et al., 2017), it is unsurprising that those who actively participate in political discussions on both Facebook and WhatsApp are also more likely to share misinformation accidentally simply because they already tend to share news more frequently.

Prior research using observational data found that older social media users were more likely to share misinformation on Facebook (Guess et al., 2019). In our sample, age and gender were not significant predictors of these behaviors, and education was the only significant demographic predictor. These differences might be related to the use of self-reported measures in our study: it is possible that people share misinformation accidentally at a higher rate than they acknowledge, and it may also be the case that they share without knowing it is false. Contrary to prior findings in the US context (Guess et al., 2019), we also did not find a relationship between extreme ideological positions and dysfunctional sharing. These results might reflect the complex nature of partisanship and political ideology in the context of multiparty systems with high levels of dissatisfaction with the political system and democratic instability: not only do citizens face challenges in navigating the political ideologies of their chosen candidates and parties (as these may vary in local, regional, and national coalitions), but they may also not find themselves strongly affiliated with either side of the political spectrum. Thus, in countries with a more diverse partisan spectrum, hyperpersonalized political preferences, and democratic instability, ideological extremity may not reflect ideology and partisanship in the same way it does in more stable bipartisan systems.

When it comes to purposeful sharing, we observed a cross-platform relationship: talking about politics on Facebook is associated with intentional sharing on WhatsApp and vice versa. It is possible that being constantly exposed to others’ opinions by talking politics on WhatsApp or Facebook constrains the sharing of misinformation due to fear of social sanctions or of sharing minority opinions. In this context, a platform where the users are less active in discussing politics might offer a “safer” space for sharing misinformation. Another explanation might be that users who share misinformation intentionally are aware of its potential backlash and therefore refrain from sharing it among their more frequent discussion peers. This perspective might be supported by our findings that political talk on either platform is positively associated with accidental misinformation sharing on that platform but not with purposeful sharing, suggesting that these behaviors might be associated with distinct social costs to users. Different sharing affordances may also help explain the differences between accidental and purposeful sharing: while accidental sharing might be a consequence of a more active sharing behavior in general, which would leverage in-platform forwarding and sharing mechanisms, purposeful sharing might be less dependent on the ease of sharing in-platform, which helps explain our cross-platform results.

Contrary to prior research on Twitter (Chadwick et al., 2018), we find that exposure to cross-cutting political talk was a predictor of accidental and intentional misinformation sharing on both platforms. The relationship was stronger for intentional sharing, suggesting that exposure to heterogeneous information might influence users to knowingly spread false content in both their WhatsApp and Facebook networks. Our findings on the positive relationships between frequency of political talk and cross-cutting exposure and dysfunctional news sharing are aligned with what Valenzuela et al. (2019) describe as the paradox of participation and misinformation: behaviors that are associated with positive political outcomes (e.g., participation, cross-cutting exposure) may have detrimental civic consequences (e.g., spreading misinformation). However, it is worth noting that our measure for cross-cutting exposure captures users’ perceptions of the opinion climate around them, and not the actual content they saw. Nevertheless, the finding that cross-cutting exposure on a given platform was more strongly related to intentional sharing (a cross-platform behavior) than to accidental sharing might suggest that for those who intentionally share misinformation, the perception of a heterogeneous opinion environment may lead them to use a different platform to disseminate this type of content, potentially to avoid backlash or in search of a more receptive audience.

While we do not have information on users’ motivations to share dysfunctional news content, we controlled for one civic motivation, sharing false information to correct it or alert others, which was negatively associated with sharing in all four models. This is not sufficient to imply that respondents were intentionally trying to deceive, but it suggests that the motivations behind purposeful misinformation sharing were not aligned with the civic goal of informing others.

The finding that membership in WhatsApp groups where politics is discussed is not associated with dysfunctional sharing has important consequences for research on political uses of WhatsApp, as scholars have relied on the analysis of large groups dedicated to politics (Bradshaw and Howard, 2018; Resende et al., 2019), which do not reflect the experience of most users (WhatsApp, 2019). This naturally poses a challenge to future research, given the severe limitations in obtaining data from encrypted platforms and the reliance on public discussion groups for content analyses.

We also investigated the role of social corrections. Those who share misinformation on Facebook and WhatsApp are significantly more likely to have experienced a social correction, which may temper concerns raised by Chadwick et al. (2018) that these behaviors are neither noticed nor corrected in private channels and on social media. Witnessing a correction is only positively associated with sharing misinformation accidentally on Facebook. This might be explained by the more public nature of the platform. On WhatsApp, it is possible that corrections occur in one-to-one chats even when misinformation is shared in groups, making them less visible to bystanders given the private nature of the platform.

Looking at differences between platforms and the three types of social corrections at the bivariate level, we find that WhatsApp users are more likely than Facebook users to perform, experience, and witness social corrections. Considering that WhatsApp conversations tend to be among closer peers, it is possible that users perceive it as a safer space than Facebook to correct others because they have a better sense of how their friends may react, and users may also be more willing to acknowledge such corrections given their private nature. While these results suggest that misinformation does not go unchallenged on social media and on WhatsApp, we cannot assess the direction of the relationship based on cross-sectional data. Particularly with regard to accidental sharing, it is possible that the positive relationship signals that the correction raised awareness of the fact that a particular piece of information was not true.

Our study has limitations. First, our measures reflect what respondents perceive to be misinformation, not actual behaviors, and we are unable to assess what types and topics of misinformation respondents share. Relatedly, our measure can only account for those who are aware they have shared misinformation, and it is possible that those who said they did not engage in any type of dysfunctional sharing might be unaware of having done so. Second, we cannot make causal claims about the nature of the relationships that we find using cross-sectional data, which has important implications for understanding findings around social corrections. Despite these limitations, we believe this study makes an important contribution by exploring the behaviors associated with dysfunctional sharing on two of the most widely used social platforms globally, using the case of Brazil. While our findings cannot be extrapolated to other countries, they provide insights to help scholars understand the potential democratic consequences of the move toward private communication, which may soon become the new norm given Facebook’s move toward encryption with Messenger and Instagram, and the increasing popularity of alternative messaging applications such as Telegram and Signal.

Conclusion

Given the rising concern with the spread of misinformation in mobile messaging applications, particularly in countries where these tools are widely used for a variety of purposes, we investigated behaviors that help explain misinformation sharing on WhatsApp and Facebook. Our results provide further evidence of a “paradox” between positive and negative engagement on social media (Valenzuela et al., 2019), and we extend it to mobile messaging apps: behaviors that are routinely associated with democratic gains, such as talking about politics, using social media for news, and being exposed to cross-cutting opinions (Gil de Zúñiga et al., 2019; Valeriani and Vaccari, 2017), are also associated with dysfunctional information sharing.

While these behaviors are fairly common, our results suggest that those who share false information online are likely to be corrected by others in their network. These findings help alleviate concerns that those who share misinformation online are not challenged for doing so and highlight important dynamics in terms of how different social ties, as well as more private discussion environments, play a role in this context. While we cannot assess whether social corrections are effective in mitigating misinformed beliefs, closer relationships may have the potential to help reduce misinformed beliefs insofar as personal connections can influence information selection (Anspach, 2017). More research is needed, however, to investigate whether social corrections by strong and weak ties are effective remedies not only to prevent misinformation sharing but also to mitigate misinformed beliefs.

As mobile messaging applications become a gateway for people to discuss politics and to access information, it is important to understand the extent to which engaging in these activities may also increase exposure to misinformation, particularly given that messages circulating in those apps often have little context and may not include some of the visual and contextual cues that have been shown to help users assess the credibility of online news, such as “related stories,” links to a source, or independent fact-checks (Bode and Vraga, 2015). While messaging platforms may not be able to fact-check messages due to encryption, our findings suggest that mobile messaging apps should gear their interfaces to help users assess the credibility of a message before sharing it with peers. Rather than simply focusing on mechanisms that limit the speed and the extent of a message spreading, as WhatsApp has done so far, future platform changes to curb misinformation need to consider that context around messages is crucial to help users identify problematic content, which may prevent them from accidentally forwarding misinformation to their peers.

Supplemental Material

Supplemental material, Appendix for Dysfunctional information sharing on WhatsApp and Facebook: The role of political talk, cross-cutting exposure and social corrections by Patrícia Rossini, Jennifer Stromer-Galley, Erica Anita Baptista and Vanessa Veiga de Oliveira in New Media & Society

Footnotes

Acknowledgements

The authors would like to thank the anonymous reviewers for their useful comments and colleagues who provided feedback to earlier versions of this manuscript presented at the 2019 APSA Political Communication preconference and the Fifth Conference of the International Journal of Press/Politics.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The fieldwork for this research was supported by a “WhatsApp Misinformation and Social Science Research Award” granted to the authors as an unrestricted gift.

Supplemental material

Supplemental material for this article is available online.
