Abstract

This article examines YouTubers’ ‘bot-like’ behaviour on Twitter and conceptualises it as a defiance of platform power in delimiting the boundaries of ‘authenticity’. This entrepreneurial capture of ‘botness’ is understudied and deserves attention. We focus on a platform with a clear monetisation scheme, YouTube, and on patterns of ‘inauthentic’ behaviour in how people shared YouTube videos on Twitter during the early stages of the COVID-19 pandemic (February–May 2020). The global coronavirus crisis forced social media platforms to take unprecedented (mostly automated) steps to moderate content and to introduce new policies on ‘appropriate’ conduct, which we argue may have an impact on emerging social media content creators’ attempts to boost visibility online.

Keywords

Introduction

In her 2019 book How to Do Nothing: Resisting the Attention Economy, Jenny Odell argues that so much of our lives has become entangled with algorithmic technologies that we have started to behave more and more like bots 1 – automated programmes designed to perform tasks online. This is particularly visible in the way people create, consume, and share information on social media platforms, which are intentionally designed to be ‘frictionless’ (Casey and Lemley, 2020) in ways that make ‘bot-like’ behaviour easier: click, zoom, like, share, follow, swipe, refresh, subscribe, join, and so on. 2 People also communicate online in an increasingly ‘machine-friendly language’ using ‘a string of emoticons and hashtags and links and references – a simple string of symbols that doesn’t necessarily require the higher-level grammar and syntax of ordinary human speech and writing’ (Seife, 2018). One of the reasons why people may choose to act like a bot online is to build and maintain personal and professional brands. In increasingly competitive ‘platformed media systems’ (Rieder et al., 2020), social media content creators – or ‘cultural workers’, ‘cultural labourers’ and ‘digital influencers’ (Cunningham and Craig, 2019; Duffy et al., 2019) – have developed a wide range of tactics to boost visibility, one of which is the adoption of mechanical and repetitive cross-platform posting strategies that resemble the behaviour of automated agents such as bots (Petre et al., 2019). These practices, though, are sometimes at odds with what digital platforms consider to be ‘appropriate’ behaviour online. There is a risk, therefore, that platforms may exploit the ambiguity of terms such as ‘authenticity’ and ‘spam’ to reinforce their power by making ‘important decisions about who and what get to be categorised as deviant’ (Carmi, 2020: 5; Petre et al., 2019).

While the use of so-called manipulative ‘spammy’ techniques and technology companies’ efforts to deal with them have a long history far predating the current moment (see Brunton, 2013), the spectre of platform-enabled Russian interference through ‘coordinated efforts’ between ‘fake accounts’ in the 2016 US election and other forms of ‘platform manipulation’ during global crises such as the COVID-19 pandemic and the Russian-Ukrainian war have given a new dimension to the material impacts of ‘bot-like’ behaviour on social media. Not only have users become more sophisticated in how they appropriate technological affordances for bulk messaging on social media, but this ‘inauthentic’ behaviour has both ‘facilitated’ and ‘legitimised’ the emergence of opaque platform policies and enforcement practices that claim to be cracking down on platform ‘manipulation’ (Douek, 2021: 276). During information crises, concepts such as ‘automation’ and ‘inauthentic’ behaviour on social media are largely thought about and studied in relation to ‘problematic’ conduct and content (Douek, 2021; Gorwa and Guilbeault, 2020; Petre et al., 2019). But researchers are increasingly calling for scholarship that critically engages with this language of ‘inauthenticity’ and pushes back against the tendency to equate it with ‘bad actors’, ‘foreign interference’, ‘sophisticated coordinated threats’ and ‘misinformation’ (Douek, 2021; Gorwa and Guilbeault, 2020; Petre et al., 2019).

This article responds to these calls by providing empirical data on YouTube content creators’ ‘bot-like’ behaviour on Twitter 3 during the global outbreak of novel coronavirus (COVID-19), and it conceptualises this practice as a defiance of platform power in delimiting the boundaries of ‘authenticity’. van Dijck et al. (2019) conceptualise platform power as much more than the economic dominance that some social media companies have in the market. They argue that platforms’ architectures and norms create new ‘hierarchies and dependencies’ that are important to consider in analyses of platform power. That is how we also approach platform power in this paper: we are interested in investigating how users defy platforms’ impositions of what it means to be ‘authentic’ as well as in how platforms assert their power in defining and moderating behavioural categories in a way that caters to their business interests.

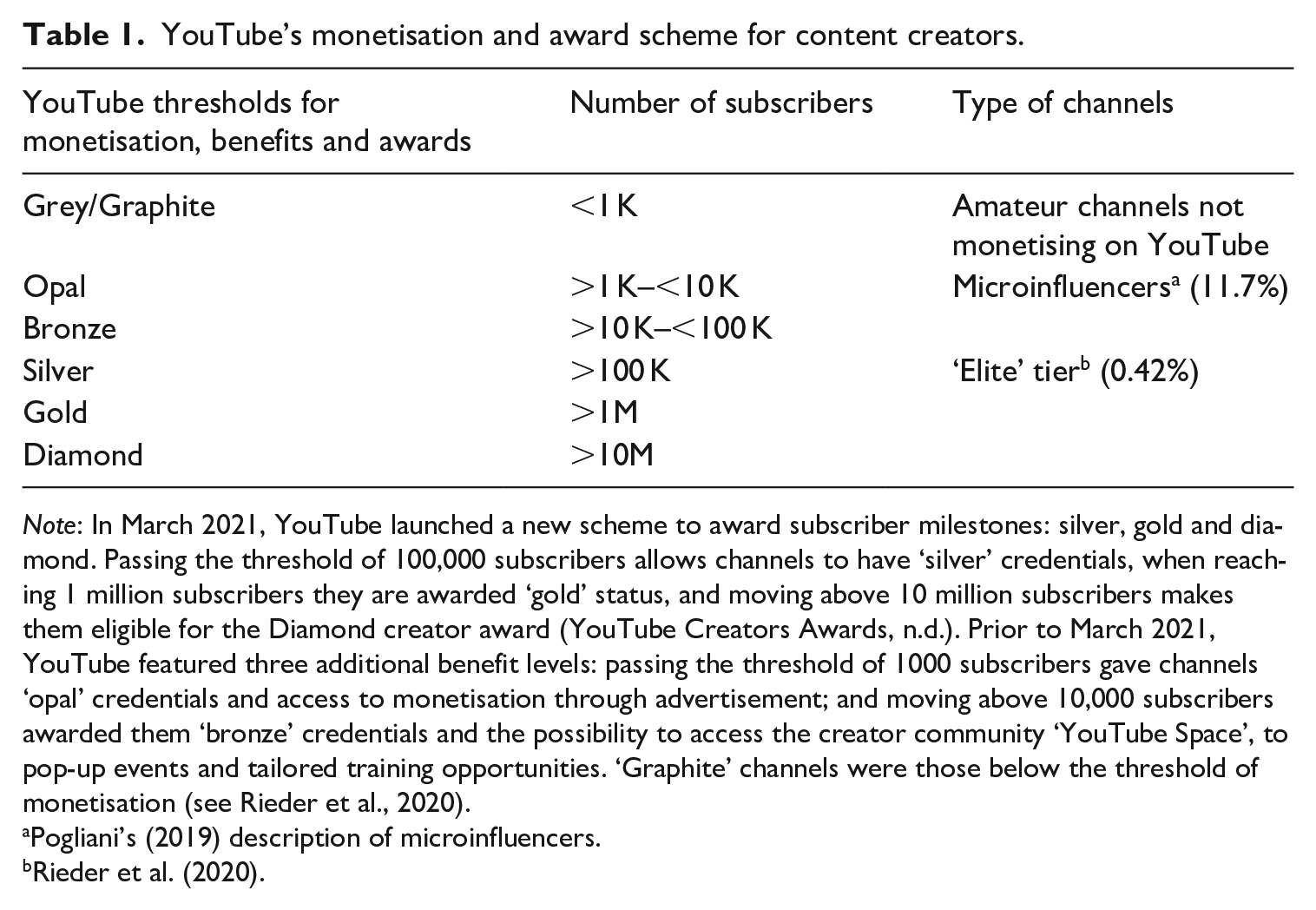

Our research joins the call for ‘platform observability’ as a method for generating meaningful insights about large socio-technical systems, their interconnections and how to better regulate them (Rieder and Hofmann, 2020). Since we were interested in the role of professionalisation 4 on social media as a motive for engaging in ‘inauthentic’ behaviour, we chose Twitter and YouTube as the platforms to observe over time. The use of automation or ‘bot expression’ (Lamo and Calo, 2019) is central to Twitter’s user culture (Gorwa and Guilbeault, 2020), and YouTube is one of the few mainstream platforms with a clear monetisation scheme. Channels can monetise on YouTube when they reach the threshold of 1000 subscribers (see Table 1), although research shows that YouTube’s incentive scheme mainly benefits a few ‘elite’ content creators (Rieder et al., 2020). As a result, non-established YouTubers often use diverse optimisation tactics to gain visibility and increase their chances of monetising, including taking advantage of newsworthy events to attract new audiences and cross-promoting their content across platforms (Bishop, 2019; Knuutila et al., 2020). We selected the COVID-19 pandemic as a useful event through which to examine content creators’ struggle for visibility during what the World Health Organization has called an ‘infodemic’, a term describing the risks associated with the circulation of too much information, including false and misleading content, during the pandemic. While much of the academic, journalistic and policy discussion around COVID-19 has centred on how ‘bad actors’ used digital platforms’ infrastructures to mislead and harm (e.g. Knuutila et al., 2020), this article focuses on apparent misuses 5 of Twitter by ‘good’ actors during an ‘infodemic’. Our study asks:

RQ1: Does the quest for professionalisation on YouTube play a role in patterns of ‘bot-like’ behaviour on Twitter during a global information crisis such as COVID-19?

RQ2: (A) What are the patterns of behaviour we see over time in how people share YouTube videos on Twitter ‘inauthentically’ during newsworthy events? (B) How are patterns of ‘inauthentic’ behaviour on Twitter linked to ‘problematic’ 6 content being uploaded on YouTube?

YouTube’s monetisation and award scheme for content creators.

Note: In March 2021, YouTube launched a new scheme to award subscriber milestones: silver, gold and diamond. Passing the threshold of 100,000 subscribers gives channels ‘silver’ credentials; reaching 1 million subscribers earns them ‘gold’ status; and moving above 10 million subscribers makes them eligible for the Diamond creator award (YouTube Creators Awards, n.d.). Prior to March 2021, YouTube featured three additional benefit levels: ‘graphite’ channels were those below the threshold of monetisation; passing the threshold of 1000 subscribers gave channels ‘opal’ credentials and access to monetisation through advertising; and moving above 10,000 subscribers gave them ‘bronze’ credentials and access to the creator community ‘YouTube Space’, pop-up events and tailored training opportunities (see Rieder et al., 2020).

Pogliani’s (2019) description of micro-influencers.

The remainder of the article is structured as follows. First, we review the literature on authenticity and ‘bot-like’ or ‘inauthentic’ behaviour on social media and the challenges associated with conceptualising and regulating this practice. Second, we explain why we chose the COVID-19 pandemic to study ‘bot-like’ cross-platform strategies between YouTube and Twitter. We then describe the methods used to study the links between professionalisation on YouTube and ‘bot-like’ behaviour on Twitter and discuss our findings in relation to platform power. We conclude with a reflection on the need to consider new ‘authenticities’ online that defy platform power and on the shortcomings of platform governance in relation to ‘inauthentic’ behaviour online.

Social media platforms and authenticity

Social media platforms expect users to behave in an authentic manner. What platforms mean by being authentic, though, is both ambiguous and narrow (Hallinan et al., 2022). As captured in their policies, platforms often link authenticity to identity – users are generally expected to be their own selves online, and impersonating others is normally not acceptable (with a few exceptions and variations across platforms; see Hallinan et al., 2022). Platforms also associate authenticity with content (e.g. manipulated media is generally banned) and with behaviour (e.g. fake engagement, repetitive posting, coordination and scams are largely banned). In a sense, through their policies, social media platforms authorise what it means to be authentic in their services, with authenticity becoming ‘a claim that is made by or for someone, thing, or performance and either accepted or rejected by relevant others’ (Peterson, 2005: 1086). Users, though, are constantly challenging platforms’ imposition of what it means to be authentic (Haimson and Hoffmann, 2016) and, especially in the case of content creators, negotiating notions of authenticity with their audiences (Abidin, 2018; Baym, 2018). In any domain, what it means to be authentic is socially constructed and implicitly polemic (Peterson, 2005; Trilling, 1972), and social media is no exception.

Authenticity as a core value of social media platforms (Hallinan et al., 2022) is also often conceptualised in opposition to fakeness or inauthenticity (Haimson and Hoffmann, 2016). As the vocabulary around ‘inauthenticity’, ‘fake engagement’, ‘platform manipulation’ and ‘spammy’ behaviour has entered firmly into social media platforms’ policies and discourse, scholars have voiced concern about researchers doing parallel work to detect or measure this type of behaviour without paying enough attention to what these terms mean and do (Carmi, 2020; Douek, 2021; Gorwa and Guilbeault, 2020; Petre et al., 2019). In general, platforms use the concept of ‘inauthentic’ behaviour largely to describe the use of their services to mislead people (see Douek, 2021). Rhetorically, these terms allow tech companies to deflect criticism for nefarious uses of their platforms onto ‘bad actors’ rather than acknowledging the ways their very own architecture, affordances and incentive structure actively enable the sorts of practices they delegitimise as ‘manipulative’ or ‘disruptive’ (Acker and Donovan, 2019). In practice, although some platforms like Twitter acknowledge that automation can be put to good use (Twitter Help Center, 2017), the opacity with which they moderate this type of behaviour allows them to assert their power over users in ways that are even less transparent than with content-based moderation (Douek, 2021; Haan, 2020).

Petre et al. (2019) argue that the ways in which platform governance wraps itself in moralistic language like ‘authentic’ versus ‘inauthentic’ and ‘genuine’ versus ‘spammy’ risks reinforcing huge power imbalances between platforms and content creators. The authors describe this as an example of ‘platform paternalism’ and draw attention to the ‘striking double-standard’ at work wherein tech companies focus on scalable solutions to content moderation, including the use of automation, whereas they demand from content creators ‘nearly the polar opposite – an artisanal, non-instrumental approach to cultural production’ (Petre et al., 2019: 8). This idea is echoed in Carmi’s (2020) research on the power dynamics involved in determining who and what get to be categorised as ‘antisocial’ on the Internet. In her analysis of the construction and negotiation of ‘spam’ as a ‘deviant’ media category, Carmi explains how historically, advertising companies, under the European Commission’s soft law approach to Internet regulation, conceptualised ‘spam’ to cater to their own interests. She argues that marketing companies interested in profiting from what users did online needed the media category ‘spam’ to define ‘deviant’ content and conduct and to differentiate it from another form of behaviour online (or marketing practice) they wanted to legitimise: ‘cookies’. In the same way that spam has been historically constructed as a ‘flexible’ category that caters to the interests of the advertising industry’s business model (Carmi, 2020: 118), we argue that platforms have also constructed the notion of ‘inauthentic behaviour’ as a flexible category and there is a need to unpack what work the term ‘inauthenticity’ is doing as its use shifts.

The impact of COVID-19 on platform policies and content moderation

Events such as the outbreak of the coronavirus disease in late 2019 are an interesting locus of research because they prompt controversies around multiple topics (e.g. information disorders and lockdowns) that are generative of unforeseen connections among actors, themes, and objects in public ‘debates’ (Callon et al., 2011). One of these unforeseen connections is how COVID-19 pushed social media platforms to take unprecedented (mostly automated) steps to moderate content (Gerrard, 2020; Vijaya and Derella, 2020) and to develop new policies on ‘appropriate’ communication (e.g. Krishnan et al., 2021), which we argue may have an impact on emerging social media content creators’ attempts to gain visibility online.

In the case of Twitter, the platform introduced a ‘COVID-19 misleading information policy’ in December 2021 to tackle the spread of information likely to cause harm during the pandemic (Twitter Help Center, 2021). But issues related to inauthentic behaviour on platforms were also highlighted during this period. For example, controversy arose when then-President Trump’s tweet announcing he had contracted COVID-19 was ‘spammed’ with several repetitive tweets in Amharic in late 2020 (Sung, 2020). Several claims also emerged that bots, or ‘automated software’, helped distribute COVID-19 misinformation (Ayers et al., 2021) and hate speech (Uyheng and Carley, 2020).

Ostensibly to deal with these kinds of issues, in May 2022, the platform announced the introduction of an updated ‘copypasta 7 and duplicate content policy’ to ‘help people find credible and authentic information’ by limiting the visibility of duplicative or repetitive tweets (Twitter Help Center, 2022, emphasis ours). One month earlier, Twitter also updated its policy on ‘platform manipulation and spam’ to give more prominence to the fact that users ‘can’t use automation to create Twitter accounts’ (Twitter Help Center, 2022). The term ‘authenticity’, though, first appeared in June 2019 under its ‘Platform Manipulation and Spam’ Policy (Haan, 2020: 638). Twitter defines ‘platform manipulation’ as conduct ‘intended to artificially amplify or suppress information or engage in behavior that manipulates or disrupts people’s experience’ (Twitter Help Center, 2022). Importantly, Twitter includes as examples of ‘platform manipulation’ and ‘spam’ both account-based factors (e.g. creating duplicate, very similar or fake accounts) (Hinesley, 2019) and behaviour-based factors (e.g. bulk posting and ‘repeatedly posting identical or nearly identical Tweets’) (Twitter Help Center, 2022). Twitter’s policies read as an attempt to (appear to) reconcile the interests of the various stakeholders that the platform depends on for its success: the interests of content creators in gaining visibility for their content and monetising on a crowded and competitive platform, the interests of ordinary users in experiencing a feed that is not full of repetitive or misleading content and the interests of regulators in seeing more action taken against ‘bad actors’ online. The complexity and the trade-offs involved in satisfying (or appearing to satisfy) these different interests, as well as Twitter’s constant manoeuvring to define the contours of permissible conduct in a way that satisfies its own business objectives, all get lost in an uncritical use of the term ‘inauthentic behaviour’ (Douek, 2021).

To bring some clarity to this discussion, it is helpful to begin with a distinction between automated speakers (e.g. bot accounts), automated behaviour (e.g. the use of automated tools to tweet when the user is not on Twitter – see Haan, 2020), and ‘bot-like’ 8 behaviour (e.g. users manually or automatically copying and pasting the same or a very similar message multiple times on a single day, or what Freelon et al. [2020: 1197] call ‘low-cost digital activities’) – a distinction that is sometimes muddied in journalistic and academic discourse (Gorwa and Guilbeault, 2020: 235). Almost a decade ago, scholars – some of whom worked at Twitter – urged researchers to think about four archetypical Twitter users: humans, bots, bot-assisted-humans and human-assisted-bots (Chu et al., 2012). They referred to the latter two types (‘bot-assisted-humans’ and ‘human-assisted-bots’) collectively as ‘cyborgs’. These scholars had estimated that there was a Twitter user ratio of 5:4:1 (human: cyborg: bot), which reflects the reality that the use of automation is central to Twitter’s user culture (Gorwa and Guilbeault, 2020). Indeed, Twitter’s policies and affordances promote automation and practices that would be considered ‘inauthentic’ on platforms like Facebook. Twitter has a relatively open API for developers to build on, no real names policy, and allows users to set up multiple accounts (Gorwa and Guilbeault, 2020; Haan, 2020; Pielemeier, 2020).

Shifts in policy and the indeterminate categories that many of these policies rely on mean that empirically researching the implications and/or the enforcement of these policies from the outside is highly challenging. Our aim in this article was not to examine the enforcement (or lack thereof) of Twitter’s ‘inauthenticity’ policies during the early months of COVID-19. Instead, we sought to bring our empirical research into conversation with evolving policy developments on Twitter because we believe that our findings carry implications for policies on ‘inauthenticity’ that go beyond questions of enforcement. Further, establishing what counts as a bot or bot-like behaviour is far from straightforward. Twitter and other platforms do not reveal what the thresholds for ‘inauthentic’ behaviour are, arguing that giving more details would allow ‘bad actors’ to creatively circumvent these policies (Douek, 2021). Similarly, those with experience working on these issues in industry argue that ‘arbitrary static thresholding’ is unwise because users are constantly adjusting their tactics anyway (Lala, 2022). In research circles, scholars have tried to shed light on what counts as ‘inauthentic’ behaviour for individual accounts. Kollanyi et al. (2016), in a highly cited preprint, argued that ‘highly automated’ accounts were those tweeting more than 50 times per day. However, further research has contested this claim since active users – for example, political activists or media organisations – may tweet more than 50 times daily without necessarily being bots (Martini et al., 2021). Regardless of thresholds, labelling ‘bot-like’ activity on Twitter as ‘inauthentic’ paints the practice in overly negative terms, when in fact, automated communication on social media is a new form of expression that is not always harmful, malicious or easily dismissed (Lamo and Calo, 2019).
To help illustrate the power dynamics involved in defining ‘inauthentic’ behaviour solely in negative terms, in the next section, we explain the methods used to empirically investigate a particular form of ‘inauthentic’ behaviour on social media – repetitive cross-platform strategies between YouTube and Twitter – that cannot always be framed as a misuse of platforms to mislead.

Studying the links between professionalisation and ‘inauthentic’ behaviour on social media

For our data collection, we used Twitter’s standard search API to gather tweets 9 containing a YouTube URL 10 and matching either of two keywords (coronavirus OR Wuhan) during the early stages of the pandemic: from 1 February to 31 May 2020 (4 months). We collected a total of 1,716,203 unique tweets, which contained 830,058 unique YouTube video IDs. Most of these tweets were in English (1,051,015), followed by Spanish (229,359), French (81,284), Portuguese (72,989) and Italian (41,963) (see Table 5 in Supplemental Appendix for a complete language breakdown). These numbers suggest that Twitter accounts using languages other than English might have chosen to tag their tweets with the English keywords ‘coronavirus’ or ‘wuhan’ to tap into global conversations around the pandemic. 11
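The step of extracting YouTube video IDs from collected tweets can be illustrated with a minimal Python sketch. It assumes, hypothetically, that each tweet is a dictionary with an `id` and a list of expanded `urls`, and it recognises only the two common URL forms (`youtube.com/watch?v=ID` and `youtu.be/ID`). The study’s own matching rules may have differed; this is an illustration, not the pipeline used.

```python
from typing import Optional
from urllib.parse import urlparse, parse_qs


def youtube_video_id(url: str) -> Optional[str]:
    """Extract a video ID from a youtube.com/watch or youtu.be URL; None otherwise."""
    parsed = urlparse(url)
    host = parsed.netloc.lower()
    # Normalise common host prefixes (desktop and mobile sites).
    for prefix in ("www.", "m."):
        if host.startswith(prefix):
            host = host[len(prefix):]
    if host == "youtu.be":
        video_id = parsed.path.lstrip("/").split("/")[0]
        return video_id or None
    if host == "youtube.com" and parsed.path == "/watch":
        return parse_qs(parsed.query).get("v", [None])[0]
    return None


def summarise(tweets):
    """Count unique tweets that link to YouTube, and the unique video IDs they contain."""
    tweet_ids = set()
    video_ids = set()
    for tweet in tweets:  # hypothetical record shape: {"id": ..., "urls": [...]}
        ids = {v for v in map(youtube_video_id, tweet["urls"]) if v}
        if ids:
            tweet_ids.add(tweet["id"])
            video_ids.update(ids)
    return len(tweet_ids), len(video_ids)
```

Deduplicating on tweet ID and video ID separately mirrors the distinction the text draws between unique tweets and unique video IDs.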

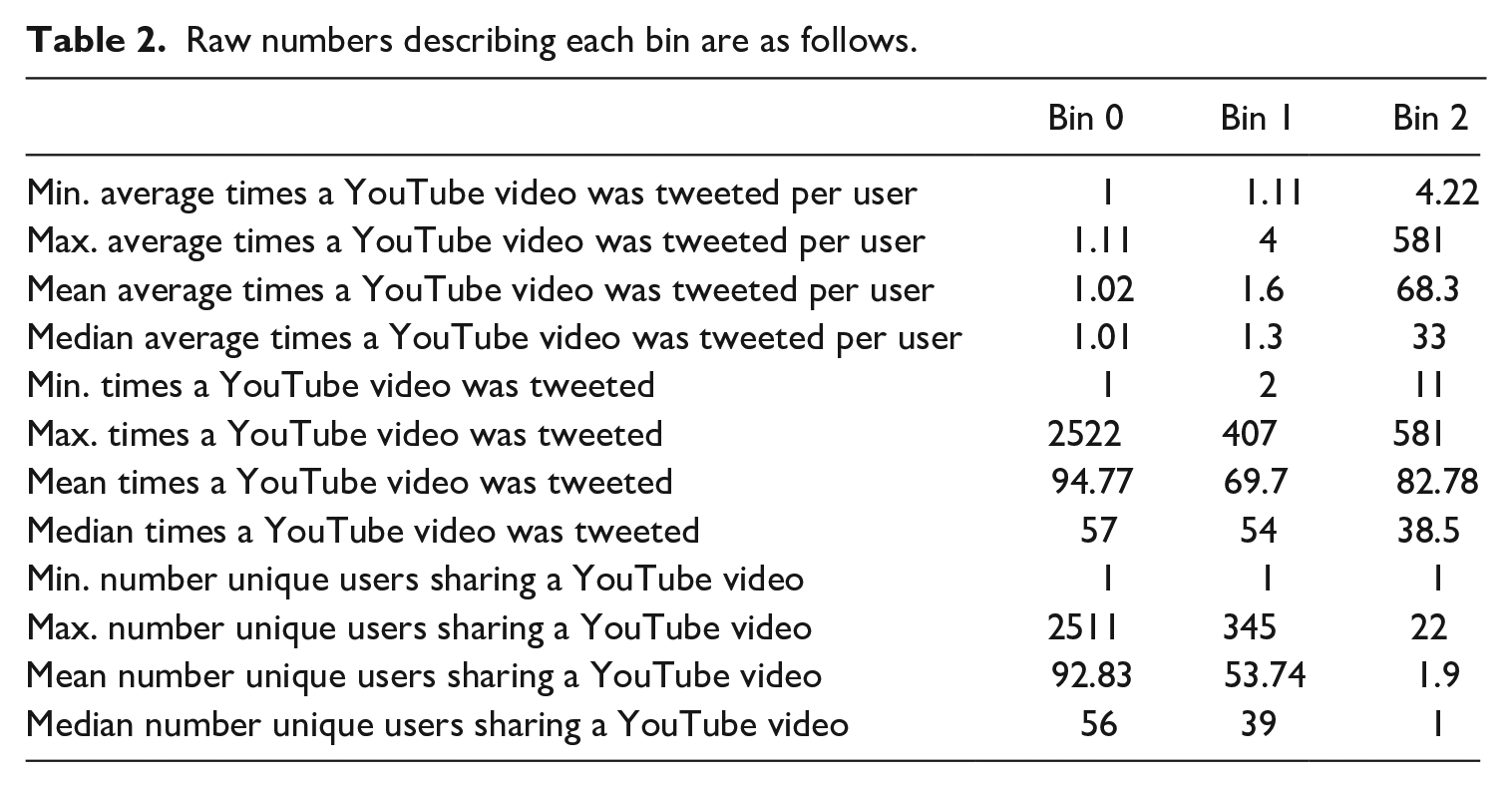

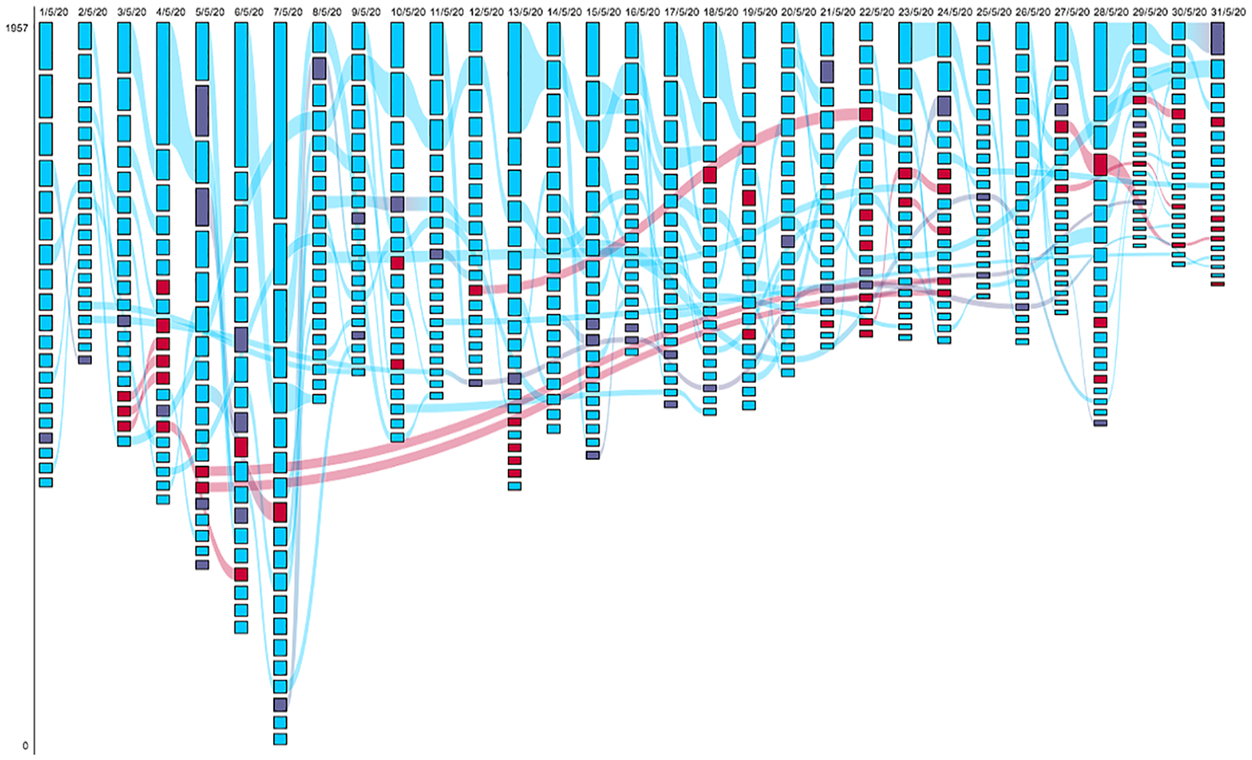

To answer our research questions, we first had to prepare the data for analysis. Following Rieder et al.’s (2018) mixed-methods approach to studying patterns of change over time on social media, we generated files that contained the top 20 most shared unique YouTube videos on Twitter for every day over the 4-month period (February–May 2020). To visualise our data and identify patterns in how users shared YouTube videos on Twitter, we used the RankFlow tool (Rieder, 2016), which creates flow diagrams that make changes over time easily observable, and created rank flow morphologies for every month of the study period. Since we wanted to identify patterns of ‘inauthentic’ behaviour in how YouTube content creators share videos on Twitter (our RQ2-A), we were interested not only in how many times a YouTube video was shared on any given day, but also in the relationship between the number of times a video was tweeted and the number of unique users that shared that video. To this end, we considered Twitter accounts that engaged in ‘inauthentic’ behaviour to be those with a posting frequency above the 95th percentile (Giglietto et al., 2020). Posting frequency is not the only signal of ‘inauthentic’ behaviour on Twitter, and misinformation scholars have shown that users coordinate online to amplify the spread and reach of content by, for example, repeatedly retweeting identical content within one second of each other (Graham et al., 2020). However, in this article, we were not interested in coordinated efforts to push certain information on Twitter. Rather, we wanted to study the relationship between Twitter users repeatedly sharing the same YouTube video on any given day and content creators wanting to boost their visibility and, hence, potentially monetise on YouTube.
Accordingly, we categorised the most shared YouTube videos on Twitter into three analytical categories – what we called ‘Bin 0’, ‘Bin 1’ and ‘Bin 2’ – according to the average number of times these videos were tweeted per user (see the statistics pertaining to each category in Table 2):

Raw numbers describing each bin are as follows.

Bin 0: Videos falling under this category were shared on Twitter very few times per user, about once per user on average. This category represents the lowest 90% of average times tweeted per user.

Bin 1: Videos falling under this category were tweeted a minimum of one time and a maximum of four times per user on average. This category represents the 90th to 95th percentiles of average times tweeted per user.

Bin 2: ‘Inauthentic’ or ‘bot-like’ behaviour. Videos falling under this category were tweeted a very high number of times per user on average, relative to the dataset: 68 times per user on average (ranging from a minimum of four to a maximum of 581 times per user). This category represents the top 5% of average times tweeted per user.
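The daily ranking and the three-bin scheme can be sketched as follows, under stated assumptions. Each tweet is reduced to a hypothetical record with `day`, `video_id` and `user_id` fields; ‘average times tweeted per user’ is taken to mean tweets divided by distinct sharing accounts for a video on a given day; and the 90th and 95th percentile cut-offs are computed over all daily top-20 entries pooled together, using Python’s `statistics.quantiles` with the inclusive method. The pooling and the percentile method are illustrative choices for this sketch, not necessarily those made in the study.

```python
from collections import defaultdict
from statistics import quantiles


def daily_top_videos(tweets, k=20):
    """Per day, rank videos by tweet count and keep the top k as
    (video_id, tweets, tweets_per_unique_user) tuples."""
    counts = defaultdict(int)   # (day, video) -> number of tweets
    users = defaultdict(set)    # (day, video) -> distinct sharing accounts
    for t in tweets:
        key = (t["day"], t["video_id"])
        counts[key] += 1
        users[key].add(t["user_id"])
    by_day = defaultdict(list)
    for (day, video), n in counts.items():
        by_day[day].append((video, n, n / len(users[(day, video)])))
    return {day: sorted(rows, key=lambda r: r[1], reverse=True)[:k]
            for day, rows in by_day.items()}


def assign_bins(ratios):
    """Bin 0: at or below the 90th percentile; Bin 1: above the 90th up to
    the 95th; Bin 2: above the 95th percentile (the 'bot-like' top 5%)."""
    cuts = quantiles(ratios, n=100, method="inclusive")  # 99 cut points
    p90, p95 = cuts[89], cuts[94]
    return [0 if r <= p90 else 1 if r <= p95 else 2 for r in ratios]
```

Deriving the cut-offs from the data in this way, rather than fixing a number such as 50 tweets a day, mirrors the rationale the article gives for its data-driven threshold.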

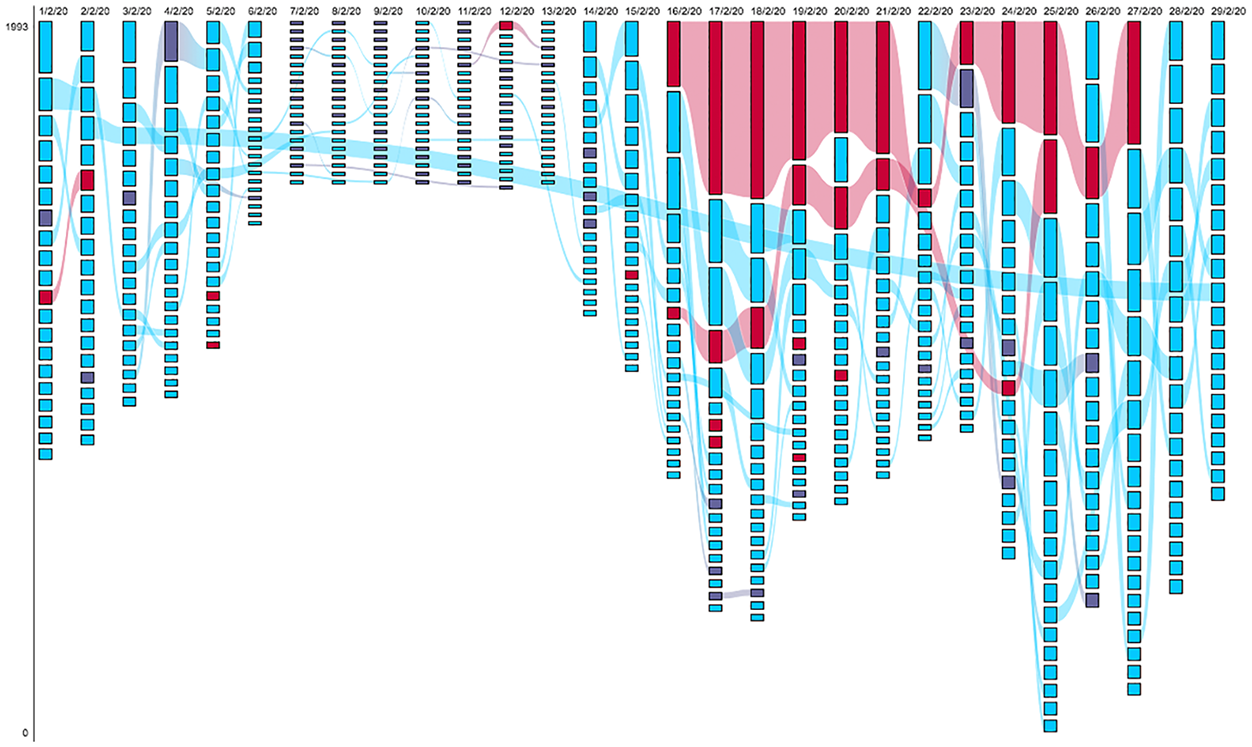

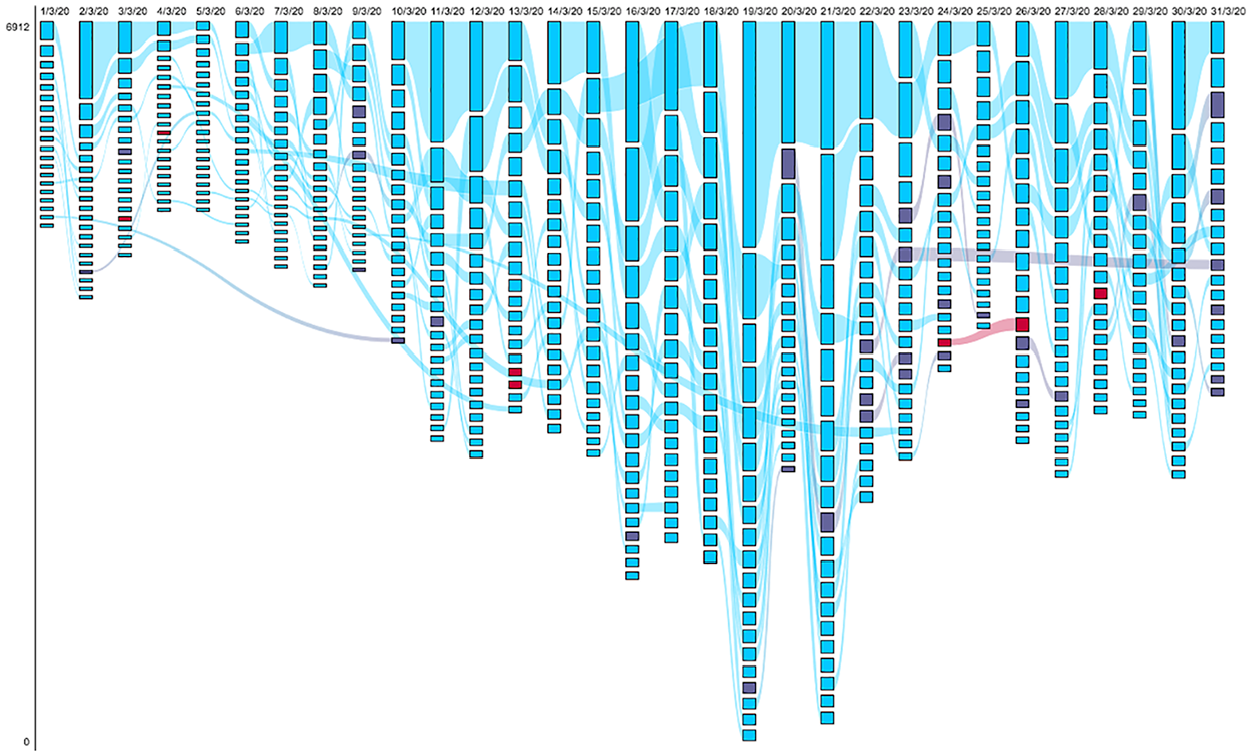

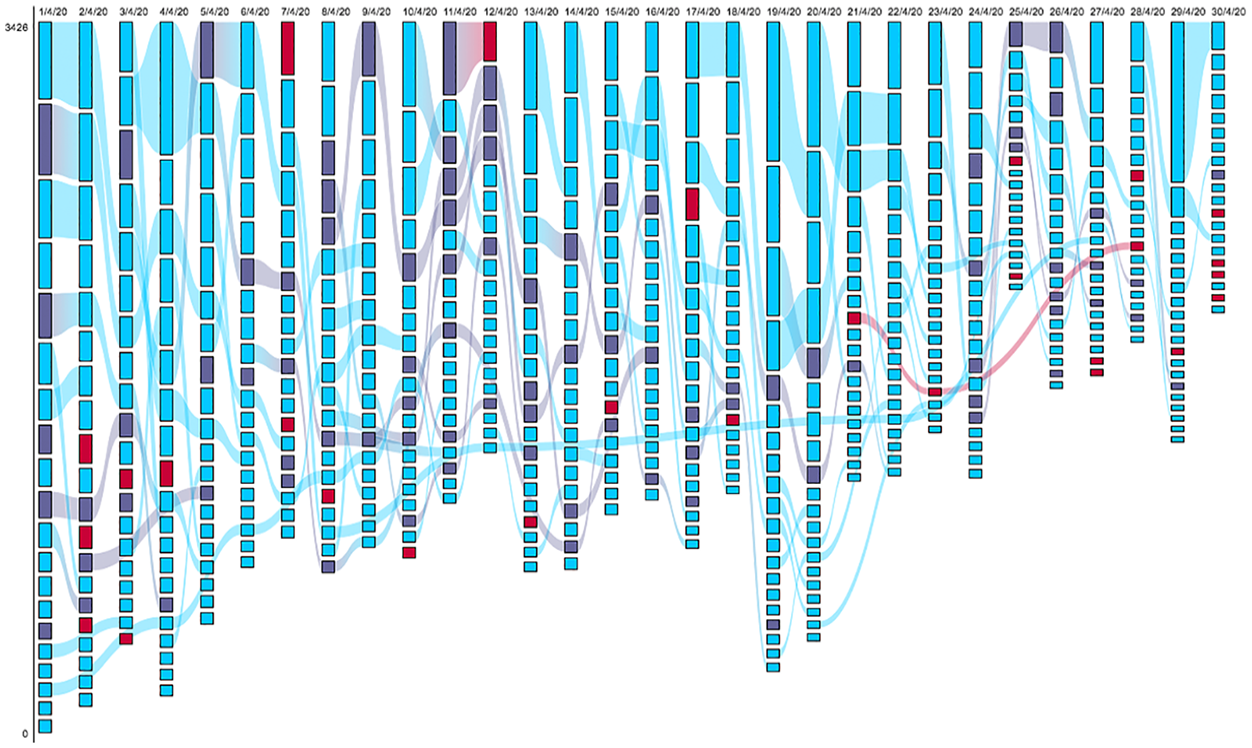

The categorisation of YouTube videos into ‘bins’ facilitated our analysis of patterns in how people shared these videos on Twitter during the early stages of COVID-19. It also allowed us to determine the threshold of ‘inauthentic’ or ‘bot-like’ behaviour from our data by paying attention to average posting frequency rather than arbitrarily fixing a number of times – for example, 50 times – beyond which posting behaviour is likely to be automated or could be considered ‘bot-like’ (Kollanyi et al., 2016). One of the authors of this article modified the RankFlow tool, 12 which is open source, so that we could visualise two metrics in our rank flow morphologies: the number of times a given YouTube video was shared and the ‘bin’ each tweeted video was categorised into according to our scheme. Figures 2 to 5 show the rank flow morphologies for February, March, April and May 2020, respectively, and are found later on in this article.

To study whether and to what extent professionalisation was a motive for the ‘inauthentic’ behaviour witnessed on Twitter (our RQ1) – the tweeted videos categorised into our ‘Bin 2’ – we qualitatively analysed both the YouTube channels that hosted these videos and the users that shared these videos on Twitter. We also examined the relationship between the YouTube content shared and the Twitter account(s) sharing it: that is, we were interested in determining whether users sharing these videos on Twitter were the same content creators that uploaded those videos onto YouTube. 13 In cases where multiple Twitter accounts shared the same video on a single day, we checked the main sharing account, which was always responsible for 90% or more of the shares, to determine whether there was a link between this account and the YouTube channel hosting the video. We then manually coded the 61 YouTube videos shared via ‘bot-like’ behaviour on Twitter (which corresponded to 51 channels) – our ‘Bin 2’ – by three sharing motives: commercial (Twitter users predominantly shared these YouTube videos on Twitter to push the sale of products/services external to YouTube), professionalisation (Twitter users predominantly shared these YouTube videos on Twitter to potentially amass an audience and eventually monetise on YouTube), and other (Twitter users predominantly shared these YouTube videos on Twitter for socialising/gaining temporary virality and/or to presumably share information that resonated with their beliefs) (see Table 6 in Supplemental Appendix for a detailed explanation of these sharing motives).
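A minimal sketch of the main-sharer check, assuming the accounts that shared one video on one day are available as a list of hypothetical user IDs. It returns the most frequent sharer and that account’s share of all shares, which can then be compared with the 90% figure reported above. Linking that account to the YouTube channel was a manual step in the study and is not modelled here.

```python
from collections import Counter


def main_sharer(user_ids):
    """Return (account, share) for the account that shared a video most often."""
    if not user_ids:
        raise ValueError("no shares recorded")
    account, n = Counter(user_ids).most_common(1)[0]
    return account, n / len(user_ids)


def is_dominated(user_ids, threshold=0.9):
    """True if a single account produced at least `threshold` of the shares."""
    return main_sharer(user_ids)[1] >= threshold
```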

For our qualitative analysis, we also wanted to investigate how the cases in ‘Bin 2’ that we coded as content creators engaging in ‘bot-like’ behaviour on Twitter for professionalisation purposes mapped onto Twitter’s official policies on ‘authenticity’ (Twitter Help Center, 2020). To do this, we focused mainly on the text of the tweets accompanying the YouTube videos and identified several of the features Twitter highlights as (potential) violations of its ‘Platform Manipulation and Spam’ policies. Most notably, we identified the repetitive posting by content creators of ‘nearly identical tweets’ and ‘tweeting with excessive, unrelated hashtags in a single tweet’. Both are listed as examples of ‘misuse(s) of Twitter product features’ under Twitter’s ‘Platform Manipulation and Spam’ policy.

Another possibility would have been to look at the timing of sharing behaviour: whether the videos were shared with a regular frequency (e.g. every hour), which could suggest the use of automation by bot or cyborg Twitter accounts (see Chu et al., 2012) and which could therefore invoke Twitter’s policies on ‘automation’ (Twitter Help Center, 2017) including its stated efforts to clamp down on ‘malicious’ uses of automation (Hinesley, 2019). We did not explore this in the current study, not least because we wanted to avoid contributing to an unhelpful tendency by some of the research in this space to conflate inauthentic behaviour on Twitter with inauthentic Twitter accounts (Gorwa and Guilbeault, 2020; Haan, 2020; Martini et al., 2021). While we cannot exclude that some of the behaviour we picked up in ‘Bin 2’ may have relied on the use of automation, we make no claims about the ‘authenticity’ or ‘botness’ of the Twitter accounts themselves, nor about the degree of automation relied upon by the owners of those accounts to share their videos.

Last, given the tendency by platforms and dominant scholarship to associate ‘inauthentic’ behaviour with nefarious content and ‘bad actors’, we studied the videos from the 17 channels in our ‘Bin 2’ whose content was shared for professionalisation purposes in order to determine whether they could reasonably be considered to violate, or come close to violating, Twitter’s or YouTube’s rules (our RQ2-B). It is important to note here that in determining whether videos contained content likely to violate Twitter’s and YouTube’s policies, we considered Twitter’s and YouTube’s content policies broadly (see Twitter Help Center, n.d.; YouTube, n.d.) instead of evaluating the videos only against policies on misinformation.

YouTubers’ engagement with ‘bot-like’ behaviour on Twitter

General overview

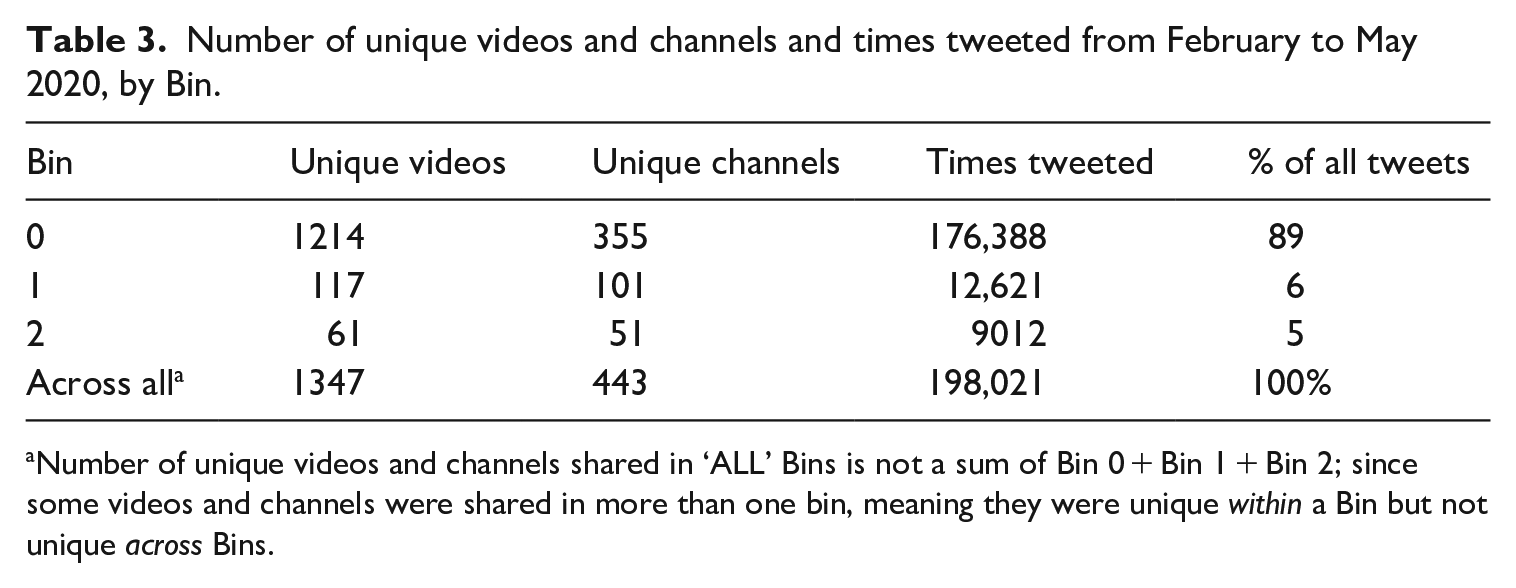

Across the whole dataset sampled for visualisation with the RankFlow tool, there were 1598 unique YouTube videos tweeted 221,813 times over the 4-month period. At the time of data analysis, information could not be gathered for 16% (n = 251) of these videos, which accounted for 11% (n = 23,792) of total tweets. When information about a video could not be collected, information about its associated channel could not be collected either. Table 3 shows the breakdown of unique videos and channels by ‘Bin’ and the number of times those videos and channels were tweeted per ‘Bin’, overall. Table 3 excludes the 251 videos and their associated channels for which data could not be gathered.

Number of unique videos and channels and times tweeted from February to May 2020, by Bin.

Note: The number of unique videos and channels shared in ‘ALL’ Bins is not the sum of Bin 0 + Bin 1 + Bin 2, because some videos and channels were shared in more than one Bin, meaning they were unique within a Bin but not across Bins.

As a first step to make sense of our data, we looked at the top 25 channels responsible for uploading the most shared videos per ‘Bin’ (by number of tweets) over the whole study period (February–May 2020), to see whether there were qualitative differences in the types of channels whose videos were categorised into each ‘Bin’. We wanted to understand whether the videos shared ‘inauthentically’ on Twitter (our ‘Bin 2’) were uploaded by lesser-known channels with smaller followings on YouTube, relative to the channels that hosted the videos categorised in our ‘Bin 0’ and ‘Bin 1’. This would offer preliminary support for our professionalisation hypothesis, which posits that the amplification of YouTube content through ‘inauthentic’ practices on Twitter, including ‘spammy’ behaviour, is at least in part a function of smaller, lesser-known YouTube ‘micro-influencers’ (Pogliani, 2019) struggling for visibility. Our results were in line with this hypothesis. A comparative analysis of the top 25 channels responsible for the most shared videos in our ‘Bins’ showed that the vast majority of the channels in ‘Bin 0’, and to a somewhat lesser extent ‘Bin 1’, were well-known channels – that is, mainstream media and entertainment channels. In contrast, the channels that uploaded the videos that were ‘inauthentically’ shared on Twitter (our ‘Bin 2’) were far less recognisable (see Table 7 in Supplemental Appendix).

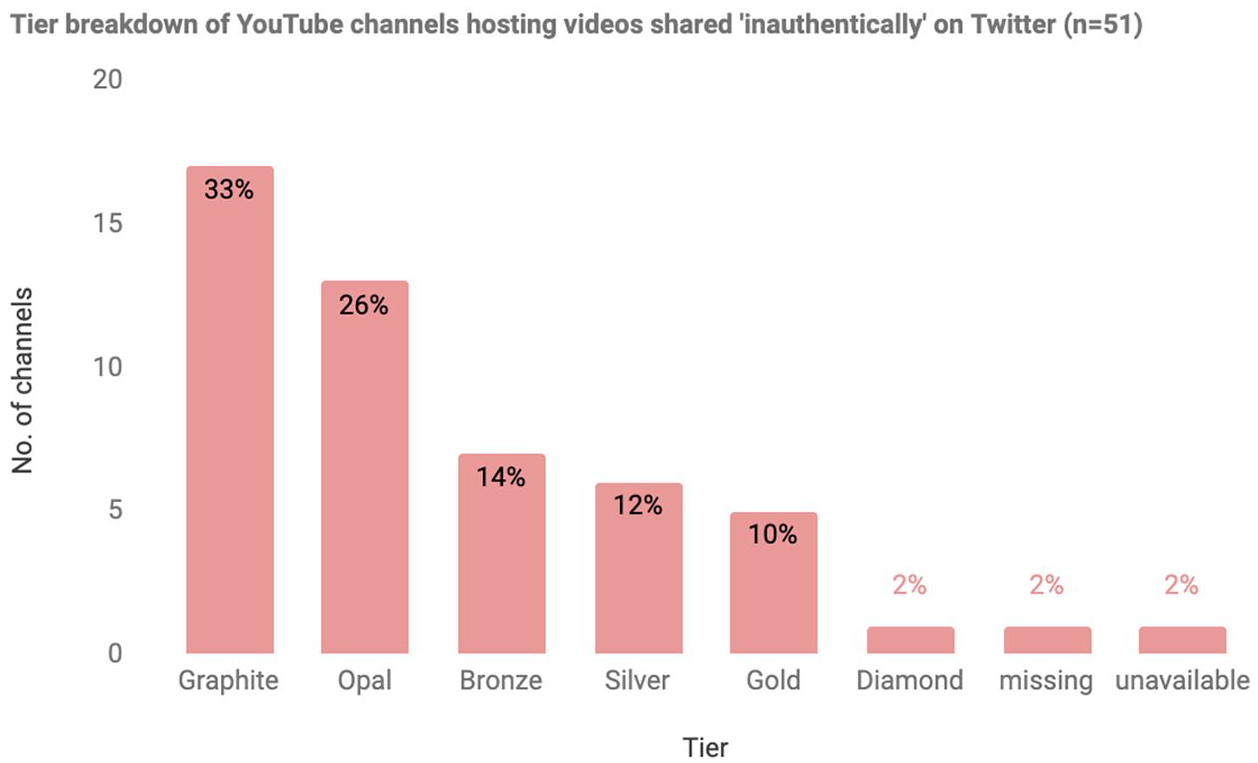

The 51 YouTube channels that uploaded the videos shared ‘inauthentically’ on Twitter (our ‘Bin 2’) across our study period are overwhelmingly clustered in the lower tiers of YouTube’s monetisation scheme (see Table 1): 73% (n = 37/51) of the channels fit within the ‘micro-influencer’ (Pogliani, 2019) category, and 33% (n = 17/51) fell below YouTube’s threshold for monetisation (see Figure 1). This suggests that these channels may have had an incentive to engage in ‘inauthentic’ behaviour on Twitter in order to be discovered by a broader audience outside YouTube, which could grow their subscriber count on the video platform and eventually allow them to monetise further. Most of the YouTube channels published in English (61%), although we also identified content in Spanish (14%), Hindi (8%), Italian (6%), Japanese (4%), Portuguese (4%) and French (2%). 14

Breakdown by YouTube’s monetisation scheme of all 51 YouTube channels that uploaded the videos that were shared ‘inauthentically’ on Twitter (our ‘Bin 2’).

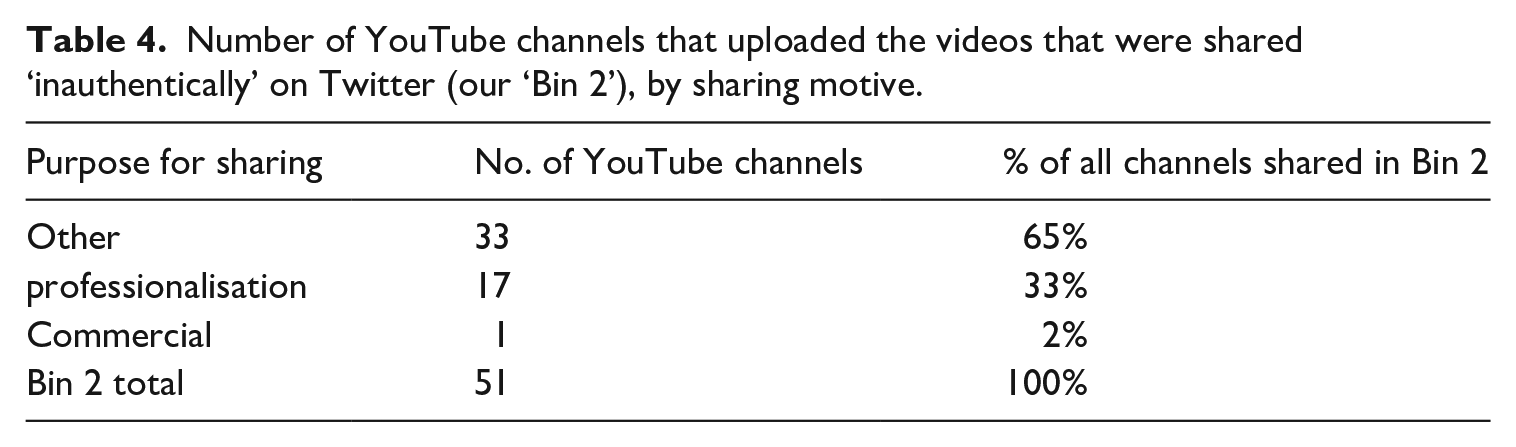

In our qualitative examination of the motives for sharing YouTube videos on Twitter, we identified ‘professionalisation’ as the motive behind the sharing of videos from 33% (n = 17/51) of the YouTube channels in ‘Bin 2’ over the 4-month period under study (see Table 4). These were cases of YouTube content creators using their own Twitter accounts to promote their videos a median of 32 times a day. 15 Their motivations appeared to be varied: musicians and artists tapping into the COVID-19 public debate to share their artistic content, news channels from emerging YouTube markets trying to amass an audience on YouTube, business entrepreneurs (e.g. online marketing, sales) and YouTube-native infotainment shows offering their takes on the pandemic.

Number of YouTube channels that uploaded the videos that were shared ‘inauthentically’ on Twitter (our ‘Bin 2’), by sharing motive.

The videos shared for motives other than YouTube professionalisation, which we mainly coded as ‘Other’, are not the main focus of this article. Nevertheless, the patterns we observed in the ‘Other’ category point to interesting possibilities for future research. We observed Twitter users engaging in ‘inauthentic’ behaviour to spread the content of YouTube channels that trade in disinformation, a phenomenon that has already received significant scholarly attention (e.g. Knuutila et al., 2020). Yet in our ‘Other’ category we also observed two cases in which Twitter users ‘inauthentically’ shared content 16 for the ‘social good’, as previous literature has also observed (see Thorson et al., 2013). We also observed two videos (both from the channel AllExpressNews) being shared 4080 and 936 times, respectively, for apparently commercial reasons.

The 21 videos shared with a professionalisation motive over the 4 months under study, which are the focus of this article, were associated with 17 channels. Notably, the vast majority of these 21 videos (over two-thirds, n = 15) were not in breach of Twitter’s or YouTube’s content policies. We coded five videos as violating, or coming close to violating, Twitter’s and YouTube’s community guidelines. These videos, which related to the spread of anti-Chinese sentiment and health misinformation, may have fallen afoul of the platforms’ broader policies around misinformation, but would likely not all have met the thresholds for enforcement action under Twitter’s rules related to URLs. 17

It is also noteworthy that the YouTubers using ‘spammy’ techniques on Twitter to promote their content with a professionalisation motive were overwhelmingly not lazily hijacking the COVID-19 discussion on Twitter. Over 80% of the videos shared (n = 17/21) were about COVID-19 and were uploaded to YouTube in the same month that they were shared on Twitter. In other words, content creators seeking to professionalise were not simply exploiting the COVID-19 crisis to make their YouTube channels known but were engaging in creative labour to produce relevant and timely YouTube content that they then turned to Twitter to amplify.

Patterns in YouTubers’ ‘bot-like’ behaviour on Twitter over time

Overall, the Rankflow morphologies helped us identify patterns in how YouTubers engaged in ‘inauthentic’ behaviour on Twitter over time. As Figures 2 to 5 illustrate, the number of YouTubers who went to Twitter to share their videos in a ‘spammy’ way increased overall from February to May 2020 (see bars in red). Among the videos ‘inauthentically’ shared on Twitter with a professionalisation motive, the content creators who repeatedly promoted their videos in February and March were sharing finance-related tips and echoing the World Health Organization’s advice on COVID-19; as the pandemic went on and lockdowns began in different parts of the world, other creators largely used Twitter to share their funny and artistic takes on how to cope with staying at home.

As the visualisation shown in Figure 2 indicates, sharing behaviour in February was overwhelmingly ‘viral’ in nature (Bin 0, light blue), but the red bars and flows visible over the period 16–27 February clearly show ‘inauthentic’ sharing taking place in the second half of the month. The flows show a single video being shared daily for 12 days between 16 and 27 February, and another video shared over 8 days between 16 and 25 February. Both videos belong to the YouTube channel AllExpressNews. Each video was shared between 39 and 581 times per day, overwhelmingly by a single Twitter account (bitcoinconnect), which has since been suspended and which had previously been identified as a bot by a data scientist, often cited in media reports, who refers to himself on Twitter as Conspirador Norteño (2019). The descriptions of both YouTube videos contain links to external websites encouraging purchases of face masks and gun holsters, leading us to classify the sharing motive for this channel’s videos as ‘commercial’.

Rankflow visualisation of sharing behaviour of YouTube videos on Twitter in February 2020.

In February 2020, we identified just two examples of videos in Bin 2 shared with a ‘professionalisation’ motive, both from business entrepreneurs apparently invested not only in monetising on YouTube but also in using YouTube as a platform to build their online persona and promote their business- or finance-related services. One was a video from US YouTube channel Kenneth Holland, run by an individual promising business strategy advice to Internet entrepreneurs. The video, which covered how COVID-19 was affecting e-commerce, was shared 26 times on 12 April from the channel owner’s own Twitter account. The other was a video from UK channel MarketOracleTV, run by an individual claiming to provide financial market forecasts; it was shared 21 times on 15 February by the YouTube channel’s official Twitter account.

March is the month in which we see the least ‘inauthentic’ sharing of YouTube videos on Twitter, as indicated by the dearth of red bars and flows in the visualisation shown in Figure 3. We identified just one case of a video being shared with a ‘professionalisation’ motive in March. This was a video from the Indian channel Green light – the club culture, a self-described ‘storytelling’ platform featuring, among other things, videos narrating Hindi and Bengali stories as well as COVID-related content. The video is a montage of people from different parts of India recording themselves urging others to follow lockdown rules; it was shared 125 times on 28 March by the official Twitter account of Green light – the club culture.

Rankflow visualisation of sharing behaviour of YouTube videos on Twitter in March 2020.

As Figure 4 illustrates, the number of unique YouTube videos shared via ‘inauthentic’ behaviour increased in April compared with both February (Figure 2) and March (Figure 3). In April, we identified roughly one-third of the videos shared through ‘spammy’ behaviour (n = 9) as being shared with a professionalisation motive. These nine videos came from six YouTube channels offering a mixture of infotainment, artistic, news and spiritual content. Two videos came from John Edwards Presents: HonesTEA, a US infotainment channel whose videos were shared repeatedly by the channel owner’s own Twitter account on 2 April. One of these videos, shared 158 times, was unavailable at the time of analysis; the other, shared 125 times, provided commentary on celebrity gossip and the COVID-19 pandemic. Also on 2 April, a video from Be Less Stupid, a US infotainment channel about liberal politics, was shared 70 times by the channel owner’s official Twitter account, which has since been suspended. The video criticised Trump’s mishandling of the pandemic.

Rankflow visualisation of sharing behaviour of YouTube videos on Twitter in April 2020.

On 10 April, a video from an amateur scientist, 18 who claimed to have invented a COVID-19 vaccine, was shared 58 times by the channel owner’s own Twitter account, which remained active at the time of analysis. A music video in English with no apparent link to COVID-19 was shared 58 times on 13 April. The video came from the channel TreaSuave_3M and was shared by the channel owner’s own (still active) Twitter account in an apparent case of blatant self-promotion. A few days later, three videos in Hindi from local Indian news outlet First India News Rajasthan were shared by the outlet’s official Twitter account 71, 172 and 57 times on 15, 17 and 18 April, respectively, each providing extensive commentary on COVID-19 in India and containing a number of apparently misleading health claims. 19

As Figure 5 indicates, May is the month in which we observe the greatest number of unique videos shared through ‘inauthentic’ behaviour in our 4-month period of analysis. We identified content from 27 unique YouTube channels being shared in this way and found that videos from one-third of these channels (n = 9/27) were shared with a professionalisation motive. Videos from the remaining two-thirds (n = 18/27) were shared for other reasons (our ‘Other’ category). We identified 10 videos (from nine channels) shared with a professionalisation motive, all of which were repeatedly tweeted from the content creators’ own Twitter accounts. Two of the videos came from channels covering current affairs and social issues: a video from Primera Voz about families in Colombia suffering from hunger due to COVID-related unemployment was shared 41 times on 10 May, and a video from Argentinian channel Cartago TV drawing attention to the worsening of the femicide crisis as a result of COVID-19 was shared 23 times on 22 May. We also observed ‘subject matter experts’ using ‘spammy’ tactics to share their own content in May. These included a video from Venezuelan channel Pedagogía Económica con Víctor Álvarez R. providing commentary on the political economy of Venezuela, which was shared 20 times on 22 May, and two English-language videos featuring Elvis Presley concert footage and photos from the channel of Presley devotee The Elvis Cup Guy, which were shared 53 and 24 times on 23 and 28 May, respectively.

Rankflow visualisation of sharing behaviour of YouTube videos on Twitter in May 2020.

Interestingly, in May we also observed the use of ‘spammy’ behaviour to share more playful content, likely driven by content creators’ desire to keep themselves and others entertained through long periods of lockdown. A Spanish-language video from Oscar Cortés featuring a song performed by a young boy, dedicated to his mother, in which he laments being unable to spend Mother’s Day with her due to COVID-19, was shared 32 times on 10 May. A ‘Horror Comedy’ about running out of snacks during the COVID-19 lockdown from US channel Pillow Talk TV was shared 83 times in total over 22, 23 and 24 May, not only by the channel’s official Twitter account but also by the accounts of Pillow Talk TV’s two directors. A video from US artist David Henry Sterry, in which he performs his ‘tragicomic’ song ‘the Rona Blues’, was shared 32 times on 24 May. Finally, a video from the US channel History Tunes featuring a father and son performing their song ‘Rona on the Run’ was shared 32 times on 28 May.

Bot-like behaviour as defiance of platform power

As pressure to deal with harmful content and conduct mounts and platforms are pushed to take more aggressive action (Bartolo and Matamoros-Fernández, 2023), the question of how platforms define ‘inauthenticity’ and operationalise their policies around platform ‘manipulation’ and ‘spam’ becomes all the more pressing. Academic efforts to identify highly automated behaviour on social media have been criticised for failing to distinguish between the activity of ‘bad actors’ and the accounts of news organisations or political activists exploiting platform affordances to engage in what might be considered legitimate democratic debate (Martini et al., 2021: 4). On the regulation side, scholars have cautioned that a broad-brush approach to regulating speech by virtue of how ‘automated’ or ‘inauthentic’ its source or means of amplification is, without due regard for the content of that speech, has troubling implications for free expression (Douek, 2021; Lamo and Calo, 2019; Pielemeier, 2020: 936).

Our article has addressed these tensions by empirically investigating patterns of ‘bot-like’ behaviour on Twitter during the COVID-19 pandemic and by critically examining the power dynamics involved in platforms’ tendency to conceptualise ‘inauthenticity’ as a communication practice carried out by ‘bad actors’. More precisely, we were interested in what it means to behave ‘authentically’ on social media, especially given that platforms define ‘authenticity’ rather narrowly in their policies (Hallinan et al., 2022) and that for many content creators it is almost impossible to adhere to these definitions in their attempts to ‘make it’ on social media. We were also interested in how platforms’ policies on ‘inauthentic’, ‘manipulative’ and ‘spammy’ behaviour might, in theory, run up against YouTube content creators’ efforts to professionalise in contexts of labour precarity where the odds are increasingly stacked against them.

Our study found evidence of YouTube content creators engaging in ‘inauthentic’ behaviour on Twitter over a 4-month period (February to May 2020), sometimes ostensibly through automated means and at other times potentially through low-resource means such as copying and pasting the same message on Twitter many times. These social media content creators temporarily used their Twitter accounts as ‘amplifier accounts’ (Neudert et al., 2019) for their YouTube content, tweeting their videos a minimum of 11 times and a maximum of 172 times. These practices, we have argued, easily fit Twitter’s definition of ‘inauthentic’ behaviour, in that these Twitter accounts used the service to ‘artificially amplify’ information (Twitter Help Center, 2020), primarily through what appear to be copypasta strategies. However, this behaviour challenges Twitter’s language of ‘inauthenticity’, which suggests that users deploy these tactics to ‘manipulate’ or ‘disrupt’ people’s experiences on Twitter. Rather, YouTube content creators used the tools available to them, that is, the ease of automation on Twitter (Gorwa and Guilbeault, 2020), to assert their influence (however incomplete) over platforms’ power in modulating visibility.

This is why we have characterised YouTube content creators’ ‘bot-like’ behaviour on Twitter as a defiance of platform power. It is a defiance because content creators do not follow Twitter’s rules as to how they are supposed to behave on the platform. It is also a defiance because, by acting like a bot, they challenge platforms’ definitions of ‘inauthentic’ behaviour, which often equate ‘inauthenticity’ with ‘bad actors’ wanting to mislead. Platforms’ architectures do not reward an ‘artisanal, non-instrumental approach to cultural production’ (Petre et al., 2019: 8), and our study has shown how YouTube content creators resort to behaving ‘inauthentically’ on Twitter in an attempt to be seen in highly competitive social media spaces (Bishop, 2019; Knuutila et al., 2020; Rieder et al., 2020). While a defiance of platform norms, the ‘bot-like’ behaviour discussed in this article does not necessarily pose a major challenge to Twitter in a material sense. First, this conduct is largely not sustained over time, which could limit Twitter’s ability or willingness to moderate these accounts, as we discuss below. Second, even if Twitter noticed these largely single-day instances of ‘bot-like’ behaviour that go against its policies, the platform could easily apply ‘soft moderation’ remedies (Gillespie, 2022), such as demoting these accounts for posting ‘inauthentically’ without users even noticing, a particularly opaque and, for creators, disempowering form of moderation (Savolainen, 2022). In this way, Twitter retains its advantageous position and continues to exert its power over ‘defiant’ users. Existing literature has highlighted how social media users constantly negotiate platforms’ definitions of what it means to be authentic (Abidin, 2018; Baym, 2018; Haimson and Hoffmann, 2016), but this scholarship largely refers to performances of the self and content.
Our article adds to this literature by focusing not so much on the identities of content creators or on whether their content is perceived as reflecting ‘genuine’ or ‘authentic’ performances of the self by their audiences but rather on ‘new authenticities’ associated purely with posting behaviour on social media, especially on Twitter.

The use of the Rankflow visualisations further allowed us to observe something important about the nature and pattern of this defiance of platform power. At first glance, several flows between red bars within a single month would indicate sustained attempts by a small number of Twitter accounts to amplify the same video over several days, pointing to a potential ‘spammy’ campaign by committed, highly motivated actors. In April we saw three videos from local Indian news outlet First India News Rajasthan’s YouTube channel shared repeatedly by the outlet’s official Twitter account over 3 days. And in May, there was repeat sharing of content from The Elvis Cup Guy and Pillow Talk TV channels over 2 and 3 days, respectively. In most cases of videos shared for professionalisation motives, however, what we observed were isolated red bars with no flow between them. In other words, the ‘inauthentic’ sharing of YouTube videos on Twitter by content creators in our sample consisted mainly of one-off, or more accurately single-day, instances of ‘spammy’ behaviour rather than sustained efforts over several days. There are at least two possible (and potentially complementary) interpretations of this finding. One is that the one-off nature of this behaviour reflects the fact that repeat sharing of this kind is a predominantly low-resource, artisanal strategy for gaining visibility, one that is not easy to sustain over several days without resorting to more sophisticated automation tools or methods that may be beyond creators’ reach. Another is that Twitter’s content moderation rules discourage accounts from engaging in this ‘spammy’ sharing behaviour over several days, in which case the sporadic nature of the bot-like behaviour we observed might suggest content creators were testing the limits of platform enforcement.
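The distinction between isolated red bars and flows connecting them amounts to checking whether a video was flagged on consecutive days. The sketch below is a hypothetical illustration of that check, not the procedure used to build the Rankflow visualisations; it assumes that each video’s ‘inauthentic’ sharing days are available as a list of dates, and the function names are our own.

```python
from datetime import date, timedelta


def consecutive_runs(days: list[date]) -> list[list[date]]:
    """Split a collection of dates into runs of consecutive calendar days."""
    runs: list[list[date]] = []
    for day in sorted(set(days)):
        if runs and day - runs[-1][-1] == timedelta(days=1):
            runs[-1].append(day)  # continues the current run (a 'flow')
        else:
            runs.append([day])    # starts a new run (a new red bar)
    return runs


def sharing_pattern(days: list[date]) -> str:
    """Label a video 'isolated' if it was never flagged on two consecutive days."""
    if not days:
        return "none"
    longest = max(len(run) for run in consecutive_runs(days))
    return "isolated" if longest == 1 else f"sustained ({longest} consecutive days)"


# Dates reported in the text: Pillow Talk TV (22 to 24 May), Cartago TV (22 May).
print(sharing_pattern([date(2020, 5, 22), date(2020, 5, 23), date(2020, 5, 24)]))
print(sharing_pattern([date(2020, 5, 22)]))
```

The first call labels the Pillow Talk TV video as sustained over 3 consecutive days; the second labels the Cartago TV video as isolated. Two flagged days with a gap between them also count as isolated, since no flow would connect them.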

Conclusion

In this article we have argued that the quest for professionalisation on YouTube is a motive for engaging in ‘inauthentic’ behaviour on Twitter. Responding to the call for more empirical evidence to ground debates around ‘inauthenticity’ online (Douek, 2021; Gorwa and Guilbeault, 2020; Petre et al., 2019), we have demonstrated that some forms of ‘inauthentic’ behaviour on Twitter, more precisely the practice of duplicating content by manual or automated means, are not always ‘deviant’ or meant to mislead. We echo the call by Douek (2021) and other scholars for clearer policies on permissible behaviour on social media, including definitions of ‘inauthentic’ behaviour that are less skewed towards ‘bad actors’ using these sites to deceive and more attentive to the ways in which ‘good actors’ use platforms’ architecture to be seen in an increasingly competitive platformed media ecosystem.

Drawing on other scholars’ observations on the power dynamics involved in tracing the definitional contours of behavioural practices on the Internet, such as in the case of ‘spam’ (Carmi, 2020), our article has addressed the potential power imbalances deriving from platforms’ conceptualisation of ‘inauthentic’ conduct online on their own terms. First, by blaming ‘bad actors’ for ‘inauthentic’ behaviour (understood as the misuse of social media to mislead, as companies like Twitter generally define the concept), platforms obscure the multiple ways they use their own architecture to mislead people, from the way they collect and sell users’ personal data to third parties to the way they algorithmically present information to keep users on their services for longer, none of which is ever framed as ‘inauthentic’. Second, by conceptualising ‘inauthentic’ behaviour as a problematic communication practice carried out by users, platforms deflect responsibility for how their systems are often designed precisely to promote and facilitate ‘inauthentic’ conduct online (Gorwa and Guilbeault, 2020; Haan, 2020; Pielemeier, 2020). Third, by creating the ‘inauthentic’ behaviour category, platforms legitimise vague policies and opaque enforcement practices, claiming to be discreetly tackling platform ‘manipulation’ out of view of highly adversarial actors bent on ‘gaming’ the rules (Douek, 2021). Indeed, the COVID-19 crisis intensified ‘platform paternalism’ (Petre et al., 2019): while platforms relied more heavily on automation to moderate content during the pandemic, their new policies demanded from users a higher degree of ‘artisanal’ practice in how they shared information on their services. Nonetheless, our study has shown that social media users still defy platform power by not conforming to platform policies and by constantly negotiating and testing the limits of their enforcement.

Drawing on Rieder et al.’s (2018) approach to studying change over time on social media platforms, this article has also proposed a method for investigating, at the individual account level, users’ sharing of YouTube videos on Twitter in a ‘spammy’ manner. We followed a data-driven approach to determine the threshold for ‘inauthenticity’ on Twitter, which allowed us to identify ‘suspicious’ patterns of behaviour based on our dataset rather than on other researchers’ somewhat arbitrary thresholds of what ‘inauthentic’ behaviour is. Further research could draw on our method to investigate whether content creators from other platforms with clear monetisation schemes, such as Twitch, also engage in ‘inauthentic’ behaviour on Twitter to professionalise. Importantly, more work is also needed on what a consideration of multiple platforms means for our understanding of platform power and the defiance thereof.
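This section does not spell out how the data-driven threshold was set, so the sketch below only illustrates the general idea of deriving a cut-off from the observed distribution rather than importing a fixed number from another study. It uses Tukey’s upper fence (Q3 + k × IQR), a standard outlier rule swapped in for illustration; it is not necessarily the authors’ method. The (account, video, day) keys and the counts are hypothetical.

```python
from statistics import quantiles

# Hypothetical key: (Twitter account, YouTube video ID, day).
Key = tuple[str, str, str]


def upper_fence(counts: list[int], k: float = 1.5) -> float:
    """Tukey's upper fence, Q3 + k * IQR, computed from the observed counts."""
    q1, _median, q3 = quantiles(counts, n=4)  # default 'exclusive' method
    return q3 + k * (q3 - q1)


def flag_suspicious(daily_counts: dict[Key, int], k: float = 1.5) -> list[Key]:
    """Return the keys whose daily tweet count lies above the data-driven fence."""
    fence = upper_fence(list(daily_counts.values()), k)
    return [key for key, n in daily_counts.items() if n > fence]


# Hypothetical per-account, per-video, per-day tweet counts (not study data).
sample_counts = [1, 1, 1, 2, 1, 3, 2, 1, 1, 120]
sample = {(f"acct_{i}", f"vid_{i}", "2020-05-10"): n for i, n in enumerate(sample_counts)}

print(flag_suspicious(sample))
```

Because the fence is computed from the dataset itself, it moves with the data, which is the property the paragraph contrasts with thresholds borrowed from other studies; the multiplier `k` nonetheless remains an analyst’s choice.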

Our analysis did not, and could not, measure whether and to what extent Twitter’s enforcement of its platform ‘manipulation’ rules had unintended consequences for aspiring content creators eager to gain visibility at all costs. ‘Spammy’, ‘copypasta’ and similar content is frequently subject to ‘soft moderation’ on Twitter (e.g. visibility reduction rather than removal), which is inherently much more opaque and hard for external researchers to detect (Gillespie, 2022). Moreover, platform policies have impacts even when they are not enforced: they discourage certain behaviour and prompt content creators to adjust their tactics to try to avoid sanction. There is a need for more empirical research that examines uses of ‘bot-like’ activity not related to the spread of content that violates platforms’ policies. Twitter acknowledges and ostensibly supports the use of ‘good’ automation (including by news organisations, artistic bots, etc.) (Twitter Help Center, 2017, 2020) and has announced that it will allow users to add an ‘automated’ label to their accounts (Perez, 2021). But a lack of transparency into how the platform differentiates between good and bad ‘bot-like’ behaviour when enforcing its rules limits researchers’ capacity to examine how micro-influencers trying to professionalise would fare in this moderation system. COVID-19 is a reminder that in the rush to address ‘inauthenticity’ on platforms, the potentially problematic implications for content creators (and not just for political free speech) deserve further scholarly attention. Future work could draw on our research to conduct interviews with YouTube content creators about their cross-promotion strategies and the extent to which they have been affected by Twitter’s ‘inauthenticity’ rules.

Finally, this research is also a reminder of the challenges inherent in balancing the competing interests of diverse stakeholders on a global platform like Twitter: the interests of content creators to build an audience and monetise in a hyper-competitive environment, the interests of ordinary users to see relevant content and to experience a feed that does not appear to be overrun by lots of unoriginal, repetitive content, and the interests of regulators to see more action taken against ‘problematic’ behaviour online. In its public communications, Twitter regularly appeals to all these interests, which tends to elide the difficult trade-offs ultimately involved in satisfying them. In focusing on the efforts of content creators seeking professionalisation on social media, we do not mean to suggest that these creators’ interests should override all other interests. Instead, we hope to have contributed to scholarship complicating platforms’ narratives around ‘authentic behaviour’ and ‘spam’ and offered a path forward for studying their underlying dynamics without relying on platforms’ own framings of these issues – framings which, we have argued, are neither neutral nor complete.

Supplemental Material

sj-jpg-1-nms-10.1177_14614448231201648, sj-jpg-2-nms-10.1177_14614448231201648, sj-jpg-3-nms-10.1177_14614448231201648 and sj-jpg-4-nms-10.1177_14614448231201648 – Supplemental material for Acting like a bot as a defiance of platform power: Examining YouTubers’ patterns of ‘inauthentic’ behaviour on Twitter during COVID-19 by Ariadna Matamoros-Fernández, Louisa Bartolo and Betsy Alpert in New Media & Society

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

Notes

Author biographies

References
