Abstract

This article presents a minor history of the beneficial community-created bots that once flourished on Twitter, showcasing their important but overlooked role in enhancing platform cultures, accessibility, and user experience. We present a typology of Twitter’s community-created bots, positioning them as marginal but active characters, tracing how and why they were crafted and co-evolved with, and in connection to, platform changes. The article offers a counter-narrative to the dominant discourse and association of bots with nefarious actors, showcasing the value of bot-making practices as forms of community-led innovation that have enriched social spaces through automation. In the context of current trends towards platform enclosure, the article argues for the continued importance of user innovation cultures in an AI-infused and potentially decentralised internet, proposing that we should not forget, overlook, or undermine the potential of creative, purposeful and community-initiated automation in fostering accessible, sociable, and joyful communication environments.

Introduction

This paper presents a history of beneficial community-created bots on Twitter/X 1 in the context of the platform’s changing business strategy, culture, and affordances. In doing so, it contributes to both web history (Brügger and Milligan, 2018) and platform studies (Burgess, 2021). With Nicoll (2019), who in turn draws on Joseph (2008) and others, we employ the term ‘minor history’, not to imply insignificance, but rather to describe ‘a set of objects, subjects, and spaces that are, for various reasons, ancillary to conventional narratives’ (Nicoll, 2019: 13). We respond to the need for alternative histories of the internet that deploy lesser-known events as epistemological tools, and that highlight the contributions of ordinary user-communities (Peters, 2024); accordingly, we reposition bots as marginal but active and significant characters in, rather than merely artefacts of, Twitter’s history. Through our focus on the often-overlooked roles of community-created bots, we offer a counter-historical narrative to two dominant ideas: first, that bots have only ever been disruptive or polluting elements of the information and media environment; and second, that platform companies are solely or even primarily responsible for platforms’ affordances, pleasures, and forms of usefulness. Through narrating the minor history of bots, we aim to complement and enrich scholarly accounts which have foregrounded internal company struggles (Bilton, 2014), techno-capitalist dynamics such as platformisation (Helmond, 2015; van Dijck, 2013) and its impact on developers (Randerath, 2024), and the activities of user-communities in relation to changing platform architectures (Burgess and Baym, 2020).

Our focus on bots as overlooked characters in this history highlights how users have enriched platform cultures through automation, and how their contributions have been not only appropriated, but also progressively backgrounded and repressed as the platform economy developed. In the context of social media, the term ‘bot’ has over time become synonymous with repetitive, spam-like, manipulative, or otherwise unwanted content and activity (Assenmacher et al., 2024). While it would be disingenuous to deny the role of bots in distributing malinformation, spam, and malware of various kinds, this orthodox framing of bots as pollutants and harmful agents both serves and is reinforced by platform policies and protocols that inhibit user control and third-party innovation (Burgess and Baym, 2020). By tracing the alternate histories of useful and helpful community-created bots, we aim to disentangle this bundle of ideas and the powerful interests that attach to them. 2

For the purposes of this paper, we focus on bots that operate as user-accounts with a degree of automation within the communicative environments of platforms. Rather than evaluating them in terms of their technical operation, we situate bots as characters that have purpose, ethical intent, and impact within the social worlds of the platforms they inhabit. We argue that the prevailing narrative around automated content discursively situates bots as a convenient shorthand for wider platform and societal issues (from misinformation to scams) that remain highly contested and poorly understood, while disregarding the beneficial contributions of community-led innovative bot-making. Our minor history of Twitter’s community-created bots is therefore partly a reparative one, highlighting the positive contributions bots have made to the usability, sociability, and enjoyability of platforms. It also provides a complementary perspective on the better-known processes of commercial platformisation and enclosure that have characterised the digital media environment over the past two decades. In telling this story, we hope to shed light on what has been lost in this process, alongside more hopeful stories of what could have been, and what may still arise, given the yet-to-be realised possibilities of more democratic social media futures.

Drawing on a collection of archived sources (Helmond and van der Vlist, 2019) including first-person accounts, blogs, and resources developed by bot-makers and scholars, we present a typology of how early Twitter users contributed positive, beneficial, and joyful additions to the platform through voluntarily developed and maintained bots. Tracing the stories of key examples, we showcase the innovative role of bots in filling voids and addressing deficits in platform cultures and design features. In building this typology, we demonstrate the utility of a pragmatic ethics of, and approach to, bots: one that defines and governs them not only based on the fact or degree of their automation, but also on their social agency, ethical characteristics, and impacts on the practical usability and cultures of platforms (Gorwa and Guilbeault, 2020).

The histories of bots on Twitter are entwined with the evolution of the platform. This paper accordingly focuses on the impact of platform changes on the trajectory of bots, while also demonstrating how community uses of automation have transformed Twitter’s platform culture and user experience. The paper has two main sections. We first situate bots within Twitter’s platform biography (Burgess and Baym, 2020), beginning with its early phase as a welcoming space for user-led innovation, and tracing its technical enclosure following key shifts in business strategy and ownership. We then present a typology of bots that flourished during these earlier periods, with a focus on their beneficial contributions to user experience and culture. We conclude with reflections on the future of community-created bots in a landscape shaped by increased platform enclosure, diminishing user-agency, and the integration of Generative AI.

Positioning social media bots

From the mid-twentieth century, bot-making, as with most forms of programming and software development, was primarily confined to elite university labs and institutions. During the 1980s (alongside the uptake of the personal computer) and into the 1990s (with the growth in home internet access), bot-making became domesticated as a practice of computational experimentation and creativity available to hobbyists and amateur enthusiasts (Flores, 2021; Latzko-Toth, 2016; Leistert, 2017). In the 2000s, the emergence of social media coincided with the general ‘Web 2.0’ ethos of open innovation, catalysing the expansion of bot-creator communities (Flores, 2021b). The arrival of bots on commercial social media sites (later called platforms) had a transformative effect on their social roles: rather than operating in isolation on the web, bots could now access and share content and interact with user-communities, and their automated posting mechanisms shifted how audiences consumed information.

Social media platforms today make extensive use of their own bots and other automated systems for purposes such as content moderation, curation, and user interaction. The rise of Generative AI via Large Language Models (LLMs) has also seen prominent platform companies such as Meta launch their own LLM-powered bots targeting user attention through the appeal of automated entertainment and companionship (Henrickson and Carlon, 2024). In parallel, social media platforms have also long been populated by bots created outside platform companies, including by users and third-party developers. These bots are often positioned by platforms as foreign bodies that violate platform rules and protocols relating to authentic content and valid terms of use (Hallinan et al., 2022), and as agents of deception and/or coordinated political interference (Leistert, 2017). Their capacity to mimic human behaviour at speed and scale, and thereby to spread misinformation, promote hyperpartisan news, and distribute spam and malware, has been subject to extensive attention. 3

However, as we explore through the remainder of this paper, externally created bots have also been developed and deployed for beneficial purposes by artisans, tinkerers, and user-communities, to improve platform accessibility and usability, to assist in creating welcoming environments, to educate and raise awareness around social and political issues, and for the purposes of enjoyment and creative expression. The dominant framing of bots as coordinated bad actors that need to be detected and restricted has elided their historical role – and future potential – as active, welcome and positive contributors to platform cultures (Woolley and Kumleben, 2020). The story of community-created bots on Twitter offers a powerful object lesson in this regard.

Experimental and pioneer bot-making in early Twitter

Early (pre-2010) Twitter was a rich environment for the emergence of experimental, creative and useful bots. The platform emerged and grew to prominence at the right time, it offered the ideal technical affordances, and its approach to governance was relatively hospitable to bot-making. The generally open innovation systems of Web 2.0 – of which Twitter was a key participant – saw a flourishing of community-created bots, and of bot-making communities that set aesthetic and ethical standards for how bots should be used and how they should behave in social media environments (Kazemi, 2013; Sample, 2014). As a central social network for tech developers and tinkerers, Twitter was a key place to experiment with and discuss the norms around the development and deployment of bots, and there were enough Twitter users who appreciated these automated creations to make developing and sustaining them worthwhile. Additionally, Twitter’s protocols made it a far more favourable environment for bot creation than its contemporaries, via its relatively permissive API access rules, and by allowing anonymous and multiple accounts, in direct contrast to Facebook’s ‘real name web’ policies (Livingstone, 2021).

Twitter’s early community-created bots functioned in a variety of ways. There were ‘closed’ bots that posted preset content on a schedule and did not interact with other accounts, ‘open’ bots that searched through open and/or live data sources to provide answers or updates, ‘ebook’ or ‘sample’ bots that posted specific sections from a text corpus, and ‘mashup’ bots that combined multiple texts (Flores, 2021b). There were also character bots that performed and interacted with other accounts as personas (Nishimura, 2016), bots that generated art or images, and emoji bots that assembled narratives and stories. Others copied or repurposed existing bots’ features or relied substantially on manual curation or intervention.

As with many other user innovations in the early years of the platform (Burgess and Baym, 2020), early Twitter bots were simple and added utility, fun or novelty. For instance, @everyword, created by Allison Parrish in 2007, tweeted one word every thirty minutes from an alphabetised dictionary. @everyword attracted over one hundred thousand followers, with part of its allure arising from the joy of unexpectedly stumbling across its contribution amidst a sea of standard Twitter posts (Zhou, 2021). With followers observing the machinic progress in completing a set mission, @everyword set in motion a movement of bots that would play with this methodical form of decontextualisation, such as @finnegansreader, which gradually tweeted every line from Finnegans Wake, and @everylotnyc, which periodically posted Google Street View images of lots in New York City (Zhou, 2021). It is notable that from the outset, these forms of Twitter bots were explicit about disclosing and embracing their status as automated accounts, rather than imitating or pretending to be human users. As Flores (2021b) notes, while bots more broadly were designed with the aim of passing the Turing Test, there was a later shift, motivated by practical and aesthetic reasons, that led some developers to embrace the novelty of the bot’s robotic character, which is very apparent in early Twitter’s community-created bots.
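The mechanics of a simple scheduled bot of the @everyword kind can be sketched in a few lines. The Python below is illustrative only: it is not Parrish’s code, the function name `build_schedule`, the three-word list, and the start time are all hypothetical, and it only works out when each word would be posted rather than calling any Twitter API.

```python
from datetime import datetime, timedelta


def build_schedule(words, start, interval_minutes=30):
    """Pair each word, in alphabetical order, with the time it would be posted."""
    step = timedelta(minutes=interval_minutes)
    ordered = sorted(words, key=str.lower)
    return [(start + i * step, word) for i, word in enumerate(ordered)]


# Hypothetical three-word "dictionary" and an arbitrary start time.
schedule = build_schedule(
    ["abacus", "aardvark", "abandon"],
    datetime(2007, 1, 1, 0, 0),
)

for when, word in schedule:
    print(when.isoformat(), word)
```

Separating the schedule from the act of posting reflects how little such a bot needs from the platform: a list, a clock, and write access.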

Growing pains and threats to survival at the height of Twitter’s popularity

In the late 2000s and early 2010s, during Twitter’s period of most significant growth and transformation, the platform underwent major changes and updates to its API. One significant change occurred in 2009 (referred to as the ‘Twitpocalypse’), when tweet identifiers exceeded the maximum value of a 32-bit signed integer. This caused crashes and negative tweet identifiers in third-party applications and automated accounts (Siegler, 2009), largely wiping out the existing ecosystem of bots. Another important update took place in 2010 (known as the ‘OAuthpocalypse’), when Twitter phased out support for Basic Auth, which was relied upon by many third-party developers, and replaced it with the more advanced OAuth (Siegler, 2010). With each of these upgrades, successive waves of bots that could no longer function under the new computational environments disappeared. Each time, though, a handful survived, including @everyword (2007), which continued until it completed its task of tweeting all words in the American English Dictionary in 2014 (Dubbin, 2015). Despite these disruptions and changes, the culture of the platform remained favourable to future bot creation, and the foundations had already been established for a new wave of community-created bots to flourish that would engage in an even more extensive range of innovative, creative, and useful activities.
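The arithmetic behind the ‘Twitpocalypse’ can be illustrated with a short sketch. The Python below simulates what happens when an identifier one larger than the 32-bit signed maximum is stored in a 32-bit signed field; the helper name `as_int32` is hypothetical, and the sketch does not reproduce any particular client’s code.

```python
INT32_MAX = 2**31 - 1  # largest value a 32-bit signed integer can hold


def as_int32(value):
    """Interpret the low 32 bits of value as a signed two's complement integer."""
    value &= 0xFFFFFFFF
    return value - 2**32 if value > INT32_MAX else value


last_safe_id = INT32_MAX
next_id = last_safe_id + 1

print(as_int32(last_safe_id))  # 2147483647
print(as_int32(next_id))       # -2147483648
```

Software that stored tweet identifiers in a wider type (or as strings) was unaffected, which is one reason some bots survived the change while others did not.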

Following the 2009 and 2010 upgrades, Twitter continued to develop as a flourishing space for bots, with the #botALLY and #bot hashtags becoming a meeting place for bot-makers and their supporters (Flores, 2021b). By 2014 an extensive collection of creative and functional bots had emerged on Twitter. As we expand upon in the typology, the bots during this period formed a diverse ecosystem of automated characters; while some improved the usability and accessibility of the platform, others offered artistic and literary contributions, satirised human behaviour, partook in political and social activism, and strove to increase transparency and provide educational services. The @VeryOldTweets bot, created by internet artist and bot-maker Darius Kazemi, even memorialised Twitter itself by retweeting the earliest tweets from the platform’s first 90 days of existence, four times per day. Reflecting upon the rich potential of bot-making during this prosperous time, Flores (2014: para. 3) observed that ‘every bot can be copied, forked, elaborated, repurposed, inverted, corrupted, improved, and can inspire the creation of dozens of new bots’, and some bots were even created to generate and propose new ideas and spinoffs for additional automated accounts.

Community-created bots emerged at the intersection between user innovation and Twitter’s evolving technical affordances. Many Twitter bots depended on access to the read/write functions of Twitter APIs – the protocols that enable software access to and actions on the platform, for example, to post when tagged or in response to tweets mentioning particular terms or users. Relatively open and free developer access to the API enabled third-party services such as Cheap Bots, Done Quick! to simplify the bot creation and deployment process, making bots ‘easy to make and free to run’. 4 However, not all bots on Twitter relied upon the API: some operated more like standard accounts that were programmed to post on a preset schedule (a feature readily available via the third-party Twitter client TweetDeck), or used browser automation tools like Selenium. Although there are key differences in how they functioned, both API and non-API dependent bots were only able to work according to their intended purpose because they operated within an architectural and cultural framework that supported, enabled and widely welcomed their presence. In the next section we outline how this environment has become less hospitable through platform redesign, business model changes, and deeper cultural shifts following Musk’s takeover of the platform in 2022.

Post-2022: Musk’s war on bots

‘Fighting the bots’ was a central theme of Musk’s campaign to take over Twitter (Assenmacher et al., 2024). In April 2022, early in the acquisition process, Musk posted, ‘If our twitter bid succeeds, we will defeat the spam bots or die trying!’ 5 While this tweet was his most widely reported on the topic, it is just one of many targeting ‘bots’ as a catch-all term for problems with information integrity, authentic content, and follower count inflation on the platform under the previous owners, assertions that Musk used strategically in negotiations. 6 As Assenmacher et al. (2024) point out, while questions about who or what is considered a bot, and about the prevalence and influence of bots in society, have been subject to research debate for years, the discursive impact of the dominant narrative from Musk was that ‘bots’ equalled ‘bad’; accordingly, bots were treated as a class and scapegoated for a range of complex platform and societal problems.

In February 2023, several months into the new ownership regime (a period which included various summary shutdowns of access to the platform), Musk foreshadowed drastic changes to the API, removing free access on the grounds that it was exploited by bad actors, and in particular operators of spam and misinformation bots. 7 Commentators noted the chilling effect this move would have on public service organisations, many of which used automated accounts to post emergency information (see e.g. Stokel-Walker, 2023). Following the backlash, in March 2023, Twitter announced new pricing for developer access to its API. 8 While preserving free write-only access (sufficient for only the most basic uses of the platform), including for so-called ‘good’ bots, the model did not discriminate among different types of developers and provided no definition of ‘good’ bots, seemingly leaving it to the company’s (or Musk’s) discretion. It was also structured in such a way as to make it prohibitively expensive for small developers to continue to run the various utilities, third-party clients, and automated accounts that had previously been built using free read/write API access. These changes made operating bots widely infeasible: on April 6th, 2023, an announcement appeared on the Cheap Bots, Done Quick! website stating that the service would be shutting down after the changes made it impossible for it to continue to function. As an alternative, the operators promptly announced the launch of a similar service called Cheap Bots, Toot Sweet! for those interested in transferring their bots to Mastodon, and set up Blue Bots, Done Quick! on Bluesky.

From the perspective of Twitter’s third-party developers (including researchers as well as bot-makers), this was the latest micro-event in a slow-moving disaster. It was part of an ongoing ‘API apocalypse’ or ‘APIcalypse’ as per Bruns (2019), marked by the enclosure and monetisation of previously open innovation affordances in the form of data access via the Twitter API, which had for well over a decade enabled bot-makers to program their bots to work with and respond to other accounts on the platform. In platform-historical terms, we position Musk’s takeover and subsequent actions as a dramatic turn in a far longer history that cumulatively has resulted in a platform less hospitable to third-party and community innovation in general, and to useful, fun, and positive community-created bots in particular. While the platform has become progressively more hostile to beneficial bots (and the communities that crafted and enjoyed them), recent research and reporting suggest that, under Musk, Twitter’s problems with spam and political misinformation, including the use of bots for this purpose, are worse than ever (Graham and Fitzgerald, 2023).

A typology of community-created Twitter bots

In what follows, we outline a typology of beneficial community-created bots that thrived during the earlier days of Twitter. As discussed above, these bots underwent waves of threatened extinction and regeneration: after becoming casualties to early API and other protocol changes, they re-emerged during the platform’s growth and expansion period, but have been negatively impacted, lost, or moved elsewhere following Twitter’s transformation to X.

The typology serves as a counter-narrative to the notion that bots, by nature of being automated, are fundamentally negative contributors or pollutants that need to be eradicated from platform environments. In positioning bots as minor characters within Twitter’s history, we examine them not as a sum of their technical features in isolation, but as holding agency, purpose, intent, and influence within the social environment for which they were crafted. In addition to selecting for benefit and user innovation, in building this typology we further categorised bots with regard to their ethical characteristics and intended purpose (building on Gorwa and Guilbeault, 2020), and in relation to the platform voids and deficits they were designed to address. Understanding the implications and purposes of bots in this way enables us analytically to distinguish between bots that, while designed to operate through similar mechanisms, impact on and contribute to platform cultures and user-communities very differently. The typology is not intended to be exhaustive; rather, it is purposive and selective, focussing in particular on what has been lost, and what is at risk but might yet be preserved, under platform governance regimes that take a zero-tolerance attitude to bots, and under the kinds of data access arrangements under which it has become increasingly infeasible to operate these kinds of bots.

Drawing on our own prior research on Twitter’s platform culture and history (Burgess and Baym, 2020; Weller et al., 2014), the bot studies literature, archived online sources, and accounts by bot creators, we articulate the contributions of community-created bots to the Twitter platform, identifying how the API changes and broader cultural shift have impacted their operations, and the extent to which they might have resonances or afterlives on other platforms. We draw on the extensive but seldom utilised archive of commentaries, bot standards, and documentation developed during this time by prominent bot-makers and scholars, situating these materials within Twitter’s multi-layered platform history (Helmond and van der Vlist, 2019). By foregrounding paradigmatic examples of bot types, we showcase their origins, uses, and benefits, and highlight the voids in platform design and user experience that they were intended to address – aims that, in many cases, they fulfilled.

The broad categories of bots in our typology, expanded in further detail below, are as follows: (1) (2) (3) (4) (5)

Accessibility bots

Accessibility bots on Twitter were developed by users to bridge gaps in the platform’s official design and overcome barriers to use, with a notable contribution being the creation of ‘alt text’ bots. While Twitter had long integrated the ability to add images to text posts, it persistently lacked the feature for users to provide text descriptions (‘alt text’) for these images. Alt text descriptions can be converted into simulated speech by audio screen readers, enabling individuals who are blind, have low vision, or use screen readers to accurately understand the image’s content (Hanley et al., 2021), and as is common with accessibility features, they also provide context and commentary for all users on the platform. As Sato (2022: para 4) explains, alt text conveys meaning, and ‘with no description in the tweet and no alt text added, any humour, delight, or joy would be flattened. Their screen reader would read out the handle of the account, display name, timestamp of the tweet, but nothing else other than the fact that there’s a photo’.

To encourage and assist users in adopting alt text and other inclusive practices of communication, Twitter’s user-communities developed a series of bots that simplified the process and reminded users to include these elements when posting images. Before Twitter introduced the official alt text badge (i.e. superimposed on annotated images), alt text was difficult to locate even when content creators had used it. To address this issue, Fynn Heintz (a software engineer but not a Twitter employee) developed the @get_altText bot which, when tagged, would locate the alt text description and tweet it in a reply (Sato, 2022). Other bots served as reminders and educators on the importance of adding alt text, either through offering practical tips (such as @A11yAwareness), private messages, or public nudging. @AltTxtReminder, for example, created by software engineer Hannah Kolbeck, would send reminders as private messages (to those who had opted into following it) when they tweeted an image that lacked a description. Kolbeck explained that the bot was originally created to serve around ten people in her immediate Twitter circle and expanded rapidly to benefit thousands of followers, demonstrating the high demand for this type of service (Sato, 2022). The @UKGovAltBot, created by Matt Eason, by contrast called public attention to inaccessible tweets from UK government accounts (DiBenedetto, 2023), nudging official accounts to engage in more inclusive practices. Despite having extensive followings and support, both @AltTxtReminder and @UKGovAltBot were suspended for violating platform rules and are no longer on Twitter. The @A11yAwareness bot, by contrast, has survived Twitter’s changes under Musk and continues its work raising awareness about web accessibility, posting practical tips for best practice such as suitable placement of emojis for screen readers.

As many accessibility bots relied on intensive API usage for scanning and retweeting, they were particularly affected by the introduction of the paid API structure. Since these bots were operated by volunteers, they also lacked the financial resources to pay for access. While numerous accessibility bots faced discontinuation due to unfeasible costs, @A11yAwareness, with some modifications and increased manual intervention, was among the few that could continue. The creator – web accessibility advocate and developer, Patrick Garvin – explained that because @A11yAwareness utilises scripts it is far less API intensive than other bots that scan large portions of Twitter or frequently retweet (DiBenedetto, 2023). Reflecting upon the fate of other accessibility bots, Garvin noted that many of these services were created by people with disability or their caregivers, highlighting the blow to advocacy efforts in addition to the loss of valuable accessibility resources (DiBenedetto, 2023).

Alongside changes to the API fee structure and the subsequent suspension of community-created accessibility bots, during Musk’s early takeover period the platform also halted official initiatives towards inclusive use. In February 2023, Twitter announced the dissolution of its official accessibility team, raising concerns about the platform’s viability for those who relied on these features, including people with disability (Heasley, 2023). Important features managed by the Accessibility Team, such as captions for live discussions in Twitter Spaces and alt text badges, were left without a dedicated maintenance team (Knibbs, 2022). Additionally, initiatives under development, like the closed caption button, were paused or scrapped (Heasley, 2023). Without an official dedicated team and with conditions hindering volunteer-driven solutions via bot-making, Twitter became increasingly hostile, leading many bot creators and the communities they served to migrate to other platforms. In moving @A11yAwareness to Bluesky, the bot-creator, Garvin, expressed appreciation for the emerging platform as being a space that reunited the accessibility advocates who were so instrumental in making Twitter a hub for accessibility education and advocacy between 2020 and 2022. 9 The migration of community-created accessibility bots to emerging platforms offers a fresh chance to prioritise the voices of those most affected by accessibility issues. Even as technology and AI evolve to automate these functions, community-driven initiatives like this are likely to continue to play an essential role in improving and correcting applications in practice, in identifying flaws in everyday implementation and use cases, and as a direct form of advocacy from the people who use (or support those who use) these services.

Creative and literary bots

While accessibility bots gained more prominence during Twitter’s later growth phase, creative and literary bots were among the earliest and most prolific forms of user-led application of automation, building and expanding on the electronic literature movements associated with the early web (Flores, 2021a). Creative bots utilise literary, artistic, or cultural texts and references, sometimes amalgamating several texts or drawing from one text alone (Bollmer and Rodley, 2016). They exhibit characteristics of closed, open, and mashup bots, functioning with the API or independently, either on a set schedule or in reaction to specific conditions or triggers. These bots, while creative and playful, are far from frivolous or trivial; they are embedded in reflexive and well-documented cultures of early bot-making (Flores, 2021b; Kazemi, 2013). They have been recognised as a genre of e-literature (Flores, 2021b) and have played a significant role in the development of ethics around bot creation, with Darius Kazemi publishing a blog post in 2013 laying out Twitter bot etiquette to ensure bots of this kind would continue to be welcomed and would not become intrusive or violate API allowances (Kazemi, 2013).

By reformatting, decontextualising, and repurposing existing and new texts, creative and literary bots possess an almost boundless potential, capable of materialising in various forms and drawing from a wide range of source materials. They can either utilise or remix existing texts, or produce their own content, in the form of poetry, art, images, quotes, or a combination of these elements. Creative and literary bots vary significantly in how they function and since the earliest years, they have had an active and widely beloved presence on Twitter.

One example of a popular closed bot that draws on a single literary text is @Ulyssesreader, created by Finnish programmer Timo Koola. @Ulyssesreader tweeted small portions of the novel Ulysses by James Joyce, progressively tweeting the complete book in small snippets over a three-month period. The bot is technically simple but experiments creatively with the status and reception of the novel as a textual object. Unlike the physical book, which might be picked up and read in one go (unlikely in the case of Ulysses!), or even discarded if the reader gives up on the task, @Ulyssesreader allows a Twitter user following the account, at least in theory, to consume the work steadily, 140 characters at a time, or to encounter small, evocative traces of the book’s style and tone completely out of context, which may offer pleasure to the reader in other ways. The creator of @Ulyssesreader framed the usefulness of the bot in practical terms, reporting that it was motivated by his second attempt to read the famously difficult 250,000-word novel, and was designed to set a feasible pace to complete the text in its entirety (Broughton, 2017). @Ulyssesreader finished and restarted its ‘reading’ of the novel repeatedly over a 9-year period (Emre, 2022) until the account was finally deleted from Twitter at the beginning of 2023. @Ulyssesreader’s code remains on GitHub, 10 but at the time of writing the bot does not appear to have been re-established on any other platform.
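The core operation of a ‘reader’ bot of this kind, dividing a long text into tweet-sized snippets, can be sketched as follows. This is not Koola’s code: splitting on word boundaries is an assumption made here for illustration, and the sample text simply repeats the novel’s opening sentence to stand in for a longer passage.

```python
import textwrap

TWEET_LIMIT = 140  # Twitter's original per-tweet character limit

opening = (
    "Stately, plump Buck Mulligan came from the stairhead, bearing a bowl "
    "of lather on which a mirror and a razor lay crossed."
)
# Stand-in for a longer passage: the opening sentence repeated three times.
sample = " ".join([opening] * 3)

# Split on whitespace so that no snippet exceeds the limit or cuts a word.
chunks = textwrap.wrap(sample, width=TWEET_LIMIT, break_on_hyphens=False)

for number, chunk in enumerate(chunks, start=1):
    print(number, len(chunk), chunk)
```

Posting one such snippet at a fixed interval is then enough to pace a reader through the whole text.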

Other creative and literary bots on Twitter engaged with multiple texts, employing intertextuality and remixing techniques to create novel and surprising content. For example, @the_ephemerides, developed by Allison Parrish, selected raw images from NASA’s Outer Planets Unified Search Catalogue, pairing them with computer-generated poems produced by merging the texts Astrology: How to Make and Read Your Own Horoscope (Sepharial, 1920) and The Ocean and its Wonders (Ballantyne, 1874; Carter, 2020). The outcome was a captivating visual depiction of space and the outer planets, accompanied by poetry that is unexpected and frequently nonsensical.

While creative and literary bots attracted extensive followings, few of these bots have remained on Twitter, with many bot-makers and artists leaving the platform following API restrictions and changes in the platform culture and conditions under Musk. Fortunately, many of these creations have been documented through GitHub and personal websites, and some have afterlives, being re-established on alternative and emerging platforms, particularly through the arrival of Blue Bots, Done Quick!, to Bluesky. This service, provided by Olaf Moriarty Solstrand (@olafmoriarty.no), offers a free tool to build Bluesky bots using Tracery code, largely recreating the earlier Twitter service (Cheap Bots, Done Quick!) for the Bluesky platform landscape.

With AI-generated content becoming more prevalent in contemporary platform environments, creative and artistic bot-making is facing a new wave of questions around ethical and acceptable bot-making practices. In December 2024, the Blue Bots, Done Quick! account specifically laid out one non-negotiable standard, being that the service does not contain AI and never will. 11 Similarly, Mark Sample (creator of numerous Twitter bots that have relocated to Bluesky) also emphasised in a post in December 2024 that his bots are the ‘good kind’ that ‘don’t use AI’. 12 These statements hint towards an emerging etiquette and aesthetic in creative and artistic bot-making on the platform, arising in response to the prevalence of, and attitudes towards, generative AI; although it is anticipated that parallel developments in creative bot-making powered by AI (and standards around this) will also arise on the platform and beyond. These ongoing negotiations echo the earlier processes of establishing bot-making standards on Twitter and, as they evolve, warrant further scholarly exploration as illustrations of community-led creative and artisan bot-making ethics.

Novelty bots

Novelty bots made Twitter a more joyful, serendipitous, and fun place to be, trading in affect, humour, and nostalgia. In contrast to literary and artistic bots, novelty bots do not draw upon, or generate new, literary or artistic texts, but instead became points of interest that were welcomed by many for the wholesome contributions that brightened users’ feeds. The entirely wholesome @tinycarebot, for example, would tweet gentle suggestions to engage in self-care, such as ‘please remember to look at nature’ or ‘don’t forget to take a second to listen to some music that helps you feel safe please’. The creator, Jonny Sun, explained that the bot was motivated by his own affective challenges with ‘doomscrolling’ on Twitter (Lang, 2016) and its message clearly resonated, attracting over 140,000 followers. The bot was updated during the COVID-19 pandemic to also tweet tips such as reminding people to wash their hands and to check in on their friends, showcasing how novelty bots, while predominantly wholesome, can also serve useful purposes. @tinycarebot last tweeted on March 28, 2023; it has since left Twitter and resurfaced on Bluesky under the same name.

Novelty bots are not limited to text, with many employing images or emojis. Adopting the whimsical description ‘a mighty locomotive sweeps through rugged landscapes’, the widely beloved ‘trains botting’ @choochoobot, created by artist and activist Parker Higgins (@xor), generated a train landscape every four hours, composed of emojis such as trees, cacti, and animals. The bot attracted fan-made canon in the form of commentary that speculated about the scenarios, design, and what they meant (Doctorow, 2016), and inspired other train emoji bots such as @AoIR_trains (created during an Association of Internet Researchers Conference by Stuart Geiger and Tim Highfield). Like many novelty bots, @choochoobot made its final posts on Twitter in July 2023, and has relocated to Mastodon as @choochoo and Bluesky as @choochoo.xor.blue.

Despite the challenging environment, some novelty bots managed to remain active on Twitter. One notable example is @PossumEveryHour, created and maintained by user @ThunderySteak. As the name implies, this bot tweets a picture of a possum each hour, with most images having been contributed by other users, showcasing the collective interest and collaborative nature of some bot creations. While in February 2023 the account announced it would depart Twitter in response to decisions around the payment model for API access, it remained open, with pinned posts indicating that it was able to navigate the developer API free tier system, albeit with multiple issues such as temporary suspensions without explanations. This decision exemplifies the choice of some bot-makers to stay on the platform, one that is far more likely to be available to bots with simpler technical requirements that do not depend on extensive API access. The @PossumEveryHour account has also been established on Mastodon and Bluesky with further expansion announced on the webpage. 13 The revival of some novelty bots on Mastodon and Bluesky suggests that many users continue to appreciate the unexpected sprinkle of delight they bring to online spaces that can otherwise be filled with gloomy news cycles. Their resurgence hints at nostalgia, while also serving as a reminder of the playful potentials, limitations, and applications of current and emerging technology, including generative AI and AI-powered bots.

Social and political activist bots

One of the key legacies of Twitter’s bot-making communities is the activist bot. While political and social bots have attracted attention for their role in disseminating misinformation and attempting to infiltrate political and social debates (Albadi et al., 2019; Dubois and McKelvey, 2019), Twitter also hosted bots with a mission to raise awareness, provide commentary, and to prompt and actively participate in political and social conversations. These bots were not attempting to trick users into believing they were human; rather they were transparent about being bots with a purpose to shed light on injustices.

In 2014, bot-maker and scholar Mark Sample coined the term ‘protest bot’. Similar to the protest songs that came before them, protest bots strived to ‘reveal the injustice and inequality of the world and imagines alternatives’ (Sample, 2014: para. 3). The concept of protest bots emerged as part of a broader discussion around bot etiquette, in which some proponents argued that bots could go beyond ephemerality, novelty or artifice to express sincere convictions, cultivated over time. Inspired by ideas around the journalism of conviction, Sample proposed that such protest bots, or ‘bots of conviction’, could be designed to operate in ‘topical, data-based, cumulative, oppositional and uncanny’ ways (Sample, 2014: para. 10).

Sample is known for creating several such bots, one of the most famous being the @NRA_Tally bot (2014), a mashup bot which works to critique the National Rifle Association’s (NRA’s) rhetorical strategies by using real data on US mass shootings to generate believable but fictional headlines and corresponding public relations statements by the NRA in response to hypothetical shooting incidents. Reflecting upon the bot, Sample states that it was an attempt to unsettle the public debate about gun violence ‘just for a minute’, noting that because bots do not back down or cower, ‘bots of conviction are also bots of persistence’ (Sample, 2014: para. 42). Livingston (2021: 736) refers to these types of bots as ‘tactical media’, intended to shock viewers into reconsidering complex issues. Similarly, Finn (2017: 195) describes them as ‘algorithmic criticism’ that sparks collective conversations. On April 6, 2023, after nine years on the platform, @NRA_Tally ceased posting on Twitter.

Another responsive protest bot is @ElizaRBarr, which described itself as ‘good natured, always inquisitive. Also a bot. Ignore me and I will go away’. @ElizaRBarr was inspired by Joseph Weizenbaum’s bot ELIZA (developed between 1964 and 1966), asking the type of polite and detached questions and comments typically associated with psychotherapists, such as, ‘That’s interesting’, or, ‘Tell me more about that’. During the height of GamerGate, @ElizaRBarr was launched to engage with belligerent and hostile GamerGaters who were harassing other users on Twitter (Flores, 2021b). Instead of responding in defence, the bot would deflect by asking questions like, ‘What are your feelings now?’ or, ‘Does that question interest you?’. @ElizaRBarr arose in response to toxic and harmful user practices targeting women (Burgess and Matamoros-Fernández, 2016) that were enabled by the affordances of Twitter but were not addressed by the platform. In this way, its contribution may be interpreted as an extension of the view of platform practices as an alternative trajectory for platform moderation (Kennedy et al., 2016).

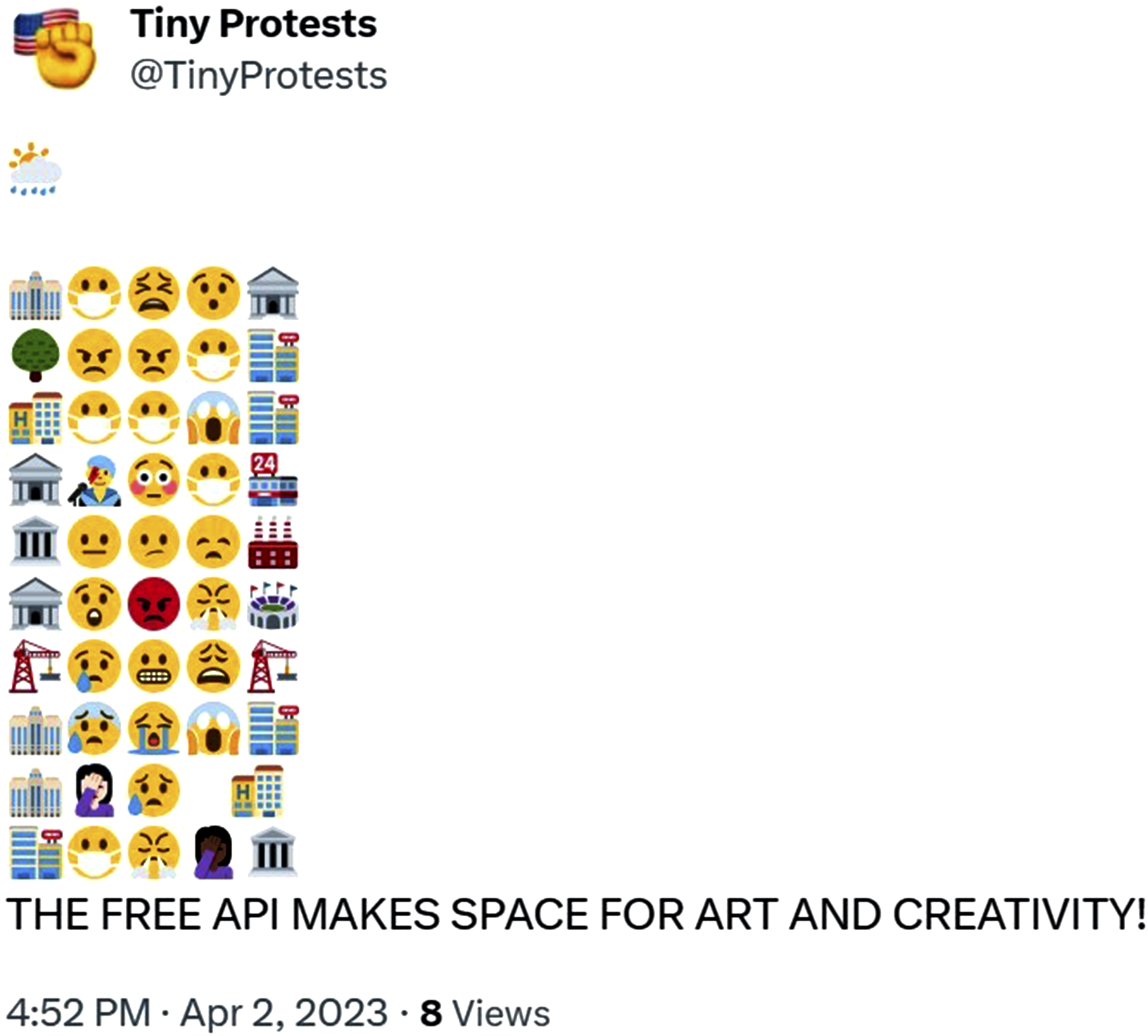

Social and political activist bots are also known for making clever use of images and emojis. One example is @TinyProtests/Protestitas, a suite of bots created by scholar and bot-maker Leonardo Flores that posts protest scenes composed of emojis and text. A post by @TinyProtests in its final days on Twitter captures the heart of API access to the platform, featuring an assortment of emojis followed by the statement ‘THE FREE API MAKES SPACE FOR ART AND CREATIVITY!’.

Figure 1. @TinyProtests bot proclaims the benefits of free API for art and creativity on April 2 2023. Reproduced with permission of @TinyProtests bot creator Leonardo Flores.

On April 6, 2023, @TinyProtests posted its final tweet. Fortunately, thanks to its creator, the bot’s legacy lives on outside Twitter, including as part of the Electronic Literature Collection 14 and via a standalone webpage where it continues to operate. 15

Social and political activist bots have also been instrumental in providing fact checking, tagging misinformation, sharing authoritative sources, mobilising actions and fundraising, again demonstrating the highly instrumental functionality that community-created Twitter bots fulfilled. A recent example of this is the NAFO Article 5 bot, which served a similar role to @ElizaRBarr in responding to a lack of platform moderation through supporting community practices. NAFO, or North Atlantic Fella Organisation, is a self-mobilised online collective debunking Russian propaganda and disinformation on Twitter (Kasianenko and Boichak, 2024). From its origins as an initiative to gather funds for Ukrainian troops, NAFO extended its attention to countering the influence of Russian-government affiliated accounts spreading propaganda and conspiracy theories (Graham and Thompson, 2022) by ridiculing them or debunking the information they shared. It is difficult to estimate the size of NAFO membership, but it grew quickly, producing thousands of posts a day in mid-2022 (Scott, 2022) and relied on platform affordances such as hashtags to call attention to posts spreading Russian propaganda. The NAFO Article 5 bot was created with the intention of providing one-stop access to these posts. As indicated in an anonymous interview with us, following the API change announcement and a period of unsuccessful attempts to communicate with the platform’s administrative support, the creator of the bot contemplated subscribing to paid access; however, after consulting other NAFO members, they determined that the money could be better spent. Hinting at the community’s adversarial relationship with Musk, they stated, ‘It is better to spend a 100 quid a month to support the Ukrainian troops, than to be paying Elon Musk for nothing’. The account is now fully operated manually, and has expanded its scope from an exclusive focus on instances of Russian propaganda to broader issues like fundraising scams.
The story of @nafo_article_5b calls into question what purposes are being served by making accounts like this more expensive and challenging to run. It is also not clear if the manual operation of the account is sufficient to counter other challenges (Kasianenko and Boichak, 2025) reported by NAFO members on the platform (such as the apparent suppression of hashtags and accounts related to the collective), highlighting the benefits of automation for activists, advocates, and marginalised groups.

Educational and information bots

In addition to creative, novelty, and activist bots, Twitter has also been home to bots that provide snippets of knowledge or real-time updates. Some of these were developed for institutions like historical associations, museums, or by emergency and weather services, and others were run by volunteers and enthusiasts. These bots tend to be useful and functional, with a strong emphasis on adding timely and accurate information or carefully curated knowledge. Some of the most well-known information bots tweet updates about natural disasters and extreme weather events. @earthquakeBot, for instance, built by Bill Snitzer and based upon data obtained from the United States Geological Survey, tweets the GPS location of earthquakes of 5.0 or greater on the Richter magnitude scale. @earthquakeBot has almost 135,000 followers and remains active on Twitter today. 16

Other bots have been used as interfaces to galleries, libraries or museums, changing how and when audiences engage with their collections. Inspired by Darius Kazemi’s original @MuseumBot, John Emerson (@backspace) created a collection of museum bots including @MoMAbot, which randomly selects photographs from the museum to post four times per day. In running these bots, Emerson noted that the eccentricities of collections quickly became apparent, leading him to expand beyond museum bots to also develop catalogue bots (Emerson, 2015). Other bots by Emerson were co-created in collaboration with projects and associations. For instance, the @NYPDBot, which tweets Google News headlines about the New York City Police Department, was created for the Legal Aid Society’s Cop Accountability Project, with the bot acting as a mechanism to increase transparency and strengthen systems of accountability. The @NYPDBot, @MoMAbot and others created by Emerson have not been active on Twitter since June 16, 2023.

Activist and education bots often share similarities. A compelling example of this is the Japanese-language Ukuraina Seifu Hōkokusho botto (translated as ‘the Ukrainian government report bot’), created in October 2013 to disseminate excerpts from a Japanese translation of the report on the implications of the Chornobyl 17 Catastrophe. The report translation was produced by the non-profit organisation Citizen Science Initiative Japan (Murakami et al., 2023) as a part of the contested issue of the health impact of nuclear exposure that gained prominence in Japan following the 2011 Fukushima nuclear accident (Kenens et al., 2020). The bot tweeted quotes from the report 45 times a day until early April 2023. A study by Murakami and colleagues (2023) on communication around the use of evidence from Ukraine in Twitter discussions relating to the health impact of the Fukushima disaster indicates that the Ukuraina Seifu Hōkokusho botto was the third most retweeted account in their topical dataset, serving as an outlet for citizen science. While it is important to avoid idealisation of its civic potential, as, in the Japanese context, the notion of citizen science does not have the same level of normativity attributed to it in the West (Kenens et al., 2020), the bot served as an important outlet for sensemaking in the face of uncertain and at times paradoxical experiences (Morris-Suzuki, 2014) following the nuclear disaster.

The now mostly defunct educational and information bots of Twitter serve as a cautionary tale about the risks in labelling bots as malicious actors simply because they are externally created and automated. Implementing zero-tolerance policies against automated accounts without consideration of their purpose and ethical implications can have detrimental effects, not only for the prosperity of online spaces, but also for people responding to real life emergency situations. It is difficult to fathom a scenario where bots that provide timely updates about natural disasters, or share carefully curated information from museums, should be considered malevolent. Educational bots – along with those that improve accessibility and user experience, that offer creative or otherwise joyful content, and that inform and advocate for social causes – are an important reminder that automated content is as diverse as other forms of content. Sweeping statements and measures to eliminate all automated accounts not only target those that cause harm but also threaten the beneficial ones, ultimately undermining user-agency in developing innovative solutions for platform deficiencies and social issues, and diminishing our social experiences.

Conclusion

Third-party and user innovation were important drivers in developing and maintaining Twitter’s early platform culture in the mid-2000s (Burgess and Baym, 2020), and community-created bots played a significant but frequently overlooked role in this cultural work from the beginning. These bots addressed barriers to access, making the environment more usable, inclusive and welcoming, and many of their innovations were adopted by the platform in later iterations. It has been one of our aims in this article to highlight for posterity the range and diversity of this community-created bot ecosystem, and the contributions made by bots to Twitter’s platform culture and user-communities over the ensuing decades. We have also aimed to show that it is creator intentions and community positioning, as much as bot design or the mere fact of automation, that distinguish beneficial from malicious bots; however, this important distinction is not recognised when platforms adopt blunt platform-level changes that restrict automated posting or other API affordances.

As we have shown, following the gradual enclosure of the Twitter API from 2010 onwards, and particularly after Musk’s takeover of Twitter in 2022 (and the parallel cultural shifts that have accompanied these events), many bot-makers withdrew their labour and their bots from the platform. Of those bots that depended on the API to work, many simply died, although many bot-makers also chose to move elsewhere due to the increasingly hostile culture and conditions of the platform. A small proportion of community-created bots have adapted and survived on Twitter, just as they have adapted and survived previous shifts to the platform. However, the platform is markedly less hospitable, and even hostile, towards them (and in the case of accessibility bots, the userbase who benefited from them). For emerging platforms, there is an opportunity to centre user-led innovation and prioritise the voices of advocates for accessibility to ensure effective co-creation of inclusive, usable, and welcoming communicative spaces and uses of automated and generative technologies.

The kind of flourishing social media bot ecosystem we have sketched out here is unlikely to be seen again – it relied on an open innovation system enabling access to the tools and infrastructures needed to launch and manage bots, a craft community and artisanal culture among bot-makers within the host platform, and an overall ethos that social media could be meaningful and sociable. As of this writing in early 2025, despite early optimism, there is also little sense that Mastodon can replace all that has been lost with the gradual and then rapid disintegration of Twitter’s culture, and while similar bots have been replicated on Bluesky, their future feasibility is unclear among an audience that appears to equate bots with inauthentic behaviour, spam, or malicious actors. On the other hand, in an age of increasingly accessible and trainable large language models (LLMs), automated (and deceptively human-seeming) accounts may be about to proliferate like never before, intensifying existing problems with misinformation and spam (see e.g. Radivojevic et al., 2024).

As we enter a new phase of technological development and increased automation in the digital media environment, it is highly unlikely that either bots or automated actions available to ordinary human users will disappear – rather, it is possible that automation will be baked into the interfaces provided by increasingly enclosed platforms that release customisable bots as a form of commodified user engagement. For example, instead of solidarity and mobilisation enabled by community-created bots of conviction, we may be left with corporate-approved expressions of platformed solidarity in the form of ‘hashflags’ (Highfield and Miltner, 2023), or companion bots designed to be deceptively human. More broadly, the fate of hand-crafted, community-created bots that can make platforms better is uncertain and will depend in large part on how current struggles between open innovation and enclosure in the political economy of the internet play out. Their future feasibility will also depend upon our capacity to reframe automation in meaningful ways that can capture context and application. The lesser-known histories – including those of community-created bots and bot-making practices – can serve both as inspiration and as cautionary tales for future community spaces. In the meantime, we will have the memory if not the ongoing presence of the Twitter bots we loved and lost.

Footnotes

Funding

This article reports on research that was supported by the ARC Centre of Excellence for Automated Decision-Making and Society (ADM + S) and partially funded by the Australian Government through the Australian Research Council (project number CE200100005).