Abstract

Partisanship, polarization, and platforms are foundational to how people perceive contentious issues. Using a probability sample (n = 825), we examine these factors in tandem across four political claims concerning US presidential elections and the COVID-19 pandemic. We find Democrats and Republicans differ in their belief in true and false claims, with each party believing more in pro-attitudinal claims than in counter-attitudinal claims. These results are especially pronounced for affectively polarized partisans. We also find interactions between partisanship and platform use where Republicans who use Google or Twitter are more likely to believe in false claims about COVID-19 than Republicans who do not use these platforms. Our findings highlight that Americans’ beliefs in political claims are associated with their political identity through both partisanship and polarization, and the use of search and social platforms appears critical to these relationships. These findings have implications for understanding why realities are malleable to voter preferences in liberal democracies.


They live, we are likely to say, in different worlds. More accurately, they live in the same world, but they think and feel in different ones.

Divisiveness is on the rise, especially in the United States. Americans disagree about policy issues more than they did a few decades ago (Pew Research Center, 2021). Disagreement by itself is not necessarily problematic. Liberal democracies rest on the idea that the exchange of ideas among parties who disagree will eventually lead to the best ideas winning out, as long as people are well-informed and willing to deliberate (Fishkin, 2011; Gutmann and Thompson, 2009). Being well-informed is particularly important; if people disagree about the objective state of the world, how can they engage in a rational exchange of ideas? As Daniel P. Moynihan (1983: A17) pointed out, “[E]veryone is entitled to his own opinion, but not his own facts.” Yet Americans increasingly live in different worlds (or, in Lippmann’s (1922) words, think and feel in different ones), in which different assertions are perceived as facts (Jones, 2020). This tendency is epitomized by the 2020 US presidential election: many Republicans believed Donald Trump’s claims, made without supporting evidence, that the election was “stolen” from him, even after Joe Biden’s inauguration (Reuters, 2021). From a democratic perspective, this tendency is worrisome because democracies require voters to make informed decisions (e.g. Fishkin, 2011; Gutmann and Thompson, 2009) and because perceptual differences might undermine trust in the process. The belief that one’s party is treated unfairly might, for example, erode trust in political institutions.

For this reason, we examine the propensity for Democrats and Republicans to be more willing to believe in pro-attitudinal than counter-attitudinal claims about two contentious contemporary issues, the COVID-19 pandemic and the integrity of US elections, regardless of the veracity of the claims. Using a nationally representative probability sample (n = 825) of the adult US population, we examine Americans’ belief in two claims concerning COVID-19 and two claims concerning recent US elections. This approach allows us to bridge prior work, which has often focused either on knowledge (i.e. belief in true information) (e.g. Chaffee et al., 2001) or on misperceptions (i.e. belief in false information) (e.g. Kuklinski et al., 2000). We incorporate measures of the use of four search and social platforms to understand how exposure to political information on various digital media relates to (in)accurate beliefs. Finally, we consider affective polarization to understand how media use and disdain for out-partisans might contribute to perceptual biases. By identifying who is prone to perceptual biases and why, the findings have implications for those aiming to combat false narratives.

Perceptual biases: believing different facts to be true

Partisans’ inclination to believe in different facts is closely tied to perceptual bias, where partisans “have higher levels of knowledge for facts that confirm their worldview and lower levels of knowledge for facts that challenge them” (Jerit and Barabas, 2012: 672). This tendency might be rooted in partisanship as a social identity. According to social identity theory, people generally categorize others into ingroup and outgroup membership (Tajfel and Turner, 1979), favoring the ingroup over the outgroup (Hewstone et al., 2002), not least in political contexts, where partisans compete with the outgroup for scarce resources (Achen and Bartels, 2017; Mason, 2018).

As with motivated reasoning (Kunda, 1990), perceptual bias is shaped by partisanship: political motivations influence how people process information. 1 In the current research, we examine Democrats’ and Republicans’ beliefs in four political statements about US politics and the COVID-19 pandemic. Based on an abundance of research on biased processing and differential beliefs (e.g. Druckman and McGrath, 2019; Nyhan and Reifler, 2010), we expect Democrats will be more inclined than Republicans to believe in statements that fit the Democratic Party’s narrative and vice versa. This expectation is foundational to the rest of the study, which seeks to explain when and why the tendency occurs.

Research demonstrates that Democrats and Republicans think “in different worlds” across a variety of issues. Trust in climate science is, for example, higher among Democrats than Republicans (Pew Research Center, 2016). Americans’ perceptions of the US economy also depend on which party holds power: when their preferred party is in power, they tend to perceive the US economy as healthier than when the opposing party is (Achen and Bartels, 2017; Bartels, 2002). Perceptual biases also permeate how Americans view foreign affairs. Following the Iraq War, as it became clear that weapons of mass destruction (WMDs) did not exist, partisans interpreted this fact differently: more Republicans than Democrats thought that WMDs existed but simply were not found (Gaines et al., 2007). In short, perceptual biases are well-documented (for recent reviews, see Achen and Bartels, 2017; Flynn et al., 2017; Mason, 2018) and have grown more pronounced in recent decades (Jones, 2020), particularly in highly polarized countries like the United States.

The current study examines two contentious contemporary issues, which may elicit partisan asymmetries in belief: the COVID-19 pandemic and recent US presidential elections. Behavioral responses to COVID-19 were characterized by partisan differences from the outset. In March 2020, Democrats were significantly more likely to report participating in Centers for Disease Control and Prevention (CDC)-recommended health behaviors like hand-washing and social distancing (Gadarian et al., 2021). Arguably, differences in reported health behaviors are reflective of differences in perceived realities of the pandemic. Similarly, responses to the 2020 US presidential election reflected opposing realities toward US elections, drawn along partisan lines. In a survey of voters following Election Day, 65% of Trump voters reported believing Trump won the election (Pennycook and Rand, 2021). Furthermore, both events were covered extensively by media in the United States, which may contribute to the development of perceptual biases (Jerit and Barabas, 2012). In each case, a portion of the public holds a belief that runs counter to the best available information, suggesting that perceptual biases might exist for these key events. Thus, we suggest:

H1. Americans will be more inclined to believe in pro-attitudinal than counter-attitudinal claims, regardless of the veracity of the claims.

By considering the truth or falsity of claims, we integrate prior work, which has often focused on the implications of pro-/counter-attitudinal information for either knowledge (i.e. belief in true information; Chaffee et al., 2001; Guess and Coppock, 2020) or misperceptions (i.e. belief in false information; Kuklinski et al., 2000). Here, we assess partisan differences in belief about political claims across four cases. The claims consisted of (a) two true claims about US elections (one favorable to Democrats, one favorable to Republicans) and (b) two false claims about COVID-19 (one favorable to Democrats, one favorable to Republicans). 2

Affective polarization and belief in political claims

Americans’ perceptions of political realities may be tied to affective polarization, or partisans’ increasing disdain for their political opponents (Iyengar et al., 2012, 2019). More affectively polarized people tend to be prone to misperceptions such as believing the nation is more politically divided than it actually is (Westfall et al., 2015) or that political opponents hold more extreme views than they do (Yudkin et al., 2019). Historical trends also point to a potential relationship between perceptual biases and affective polarization: both have gradually intensified in the United States over the past half-century (Iyengar et al., 2019; Jones, 2020). Affective polarization may also shape people’s willingness to believe in false claims that fit their party’s narrative. Partisan media use has been shown to contribute to misperceptions about political candidates, an association that operates indirectly through increased affective polarization (Garrett et al., 2019). It is hardly surprising that being prejudiced against a given political candidate would make people more inclined to believe in negative misinformation about that candidate. We, however, hypothesize that the problematic contribution of affective polarization to perceptual biases is more general; in particular, we expect affective polarization to predict what people believe about political claims across topics and veracity:

H2. Affective polarization will moderate the relationship between partisanship and political beliefs; specifically, differences in political beliefs will be greater for more affectively polarized partisans.

Platforms and belief in political claims

People’s information environment may contribute to the development of perceptual biases (Jerit and Barabas, 2012). Sizable portions of the American public not only report using search and social platforms but also turn to these online spaces for news (Shearer, 2021) or passively expect news to find them there (Gil de Zúñiga et al., 2017). Platforms shape how individuals process political information. People gather political information from the content they see on social media, even content that appears non-political (Settle, 2018). Yet social media news use is not associated with political knowledge gains (Gil de Zúñiga et al., 2017; Shehata and Strömbäck, 2021), which suggests that while people may form political opinions based on social media content, they are not necessarily forming accurate views. In addition, journalists and political campaigns treat user-generated content as an indicator of public opinion (McGregor, 2019, 2020), increasing the likelihood that claims circulating online are shared and re-shared in ways that could perpetuate the perception that they are true. Given these uses of social media for political information, it is imperative to understand how specific platforms might elicit differences in perceptions.

There are features of search engines and social media that could shape people’s beliefs in political claims. These features include the ideological engagement privileged by the affordances of each platform, algorithmic influences in some cases, and network structures that lend credibility to information. For search engines, the way information is showcased in search results might influence how people come to (dis)believe information. Flaxman et al. (2016) find that ideological segregation is greater among search engine users than among those who directly visit news sites, use news aggregators, or consume social media. For social media platforms, networks are important to how people (dis)believe information. Online social networks are largely homogeneous (Himelboim et al., 2013), increasing the likelihood that information is believed. In addition, some features contributing to belief in political claims are specific to each platform. Thus, we discuss each search and social platform of interest separately before posing hypotheses.

Facebook

Facebook is composed of largely homogeneous networks of primarily strong ties (Bakshy et al., 2015). These networks may provide a space where information is privileged as true simply because a close, like-minded tie shared it. Studies provide evidence of such belief in information, even problematic information, tying Facebook use to increased belief in COVID-19 conspiracies as well as conspiracies surrounding topics like Jeffrey Epstein and 9/11 (Stecula and Pickup, 2021). Based on this work, Facebook users may be accustomed to trusted networks of like-minded information sharing and may therefore be more likely to believe political claims in general.

Google

Google is characterized by its use for information seeking and by the confidence users must place in the search results returned to them. In a study of web traffic data, Cardenal et al. (2019) find Google use reduces selective exposure, or the likelihood of users selecting like-minded search results. In addition, Google searches have been shown to reduce a person’s likelihood of believing misinformation (Kobayashi et al., 2021), suggesting search engines may be useful tools for producing counterarguments. These normatively positive outcomes may be the result of a greater diversity of content provided through search results (Trielli and Diakopoulos, 2022), though research is mixed on whether this is the case (Borra and Weber, 2012). Overall, Google users appear to be exposed to diverse content that may help build resistance to misinformation, leading to less bias toward pro-attitudinal content and less belief in false claims.

Twitter

Unlike Facebook, Twitter is made up of large swaths of loosely connected, weak ties (Valenzuela et al., 2018). In addition, Twitter users have been shown to be high in “need for cognition” (Hughes et al., 2012) and more likely to feel a need to stay abreast of current events (Lee and Oh, 2013). Thus, they may be more apt to seek accurate information or greater quantities of it. This possibility is supported by evidence that Twitter use curbs belief in COVID-19 conspiracies (Theocharis et al., 2021). On the other hand, political misinformation is known to circulate on Twitter in “echo chambers” (Shin et al., 2017) and to spread further than true claims (Vosoughi et al., 2018). This literature paints a complex picture of the platform: misinformation is known to spread rapidly, but Twitter users may also be skilled information seekers who are resilient to such false claims. Thus, we pose a research question to understand whether Twitter use is associated with belief in true and false political claims.

YouTube

YouTube users are motivated to view content for the purpose of information seeking (Rosenthal, 2018), although sharing behaviors on YouTube are more aligned with the goals of entertainment or social interaction (Haridakis and Hanson, 2009). When selecting video content, users are likely to choose videos consistent with their prior attitudes (Bae, 2018). Furthermore, comprehension is greater when people view political YouTube videos that align with their preexisting beliefs (Bowyer et al., 2017). Limited research exists on whether people believe content on YouTube; rather, scholars have focused on engagement or the sheer presence of misinformation on the platform (Knuutila et al., 2020). However, as with Facebook, use of YouTube for news has been shown to increase belief in COVID-19 conspiracies (Theocharis et al., 2021). While YouTube users selectively choose content (a pattern we unpack in the following section), the primary motivation of information seeking on the platform would likely lead them to process claims as true.

Based on these differences in (a) user motivations and (b) features across the platforms, we anticipate the use of different platforms will be associated with the believability of (mis)information such that:

H3a. Use of Google will be associated with lesser belief in false claims and greater belief in true claims.

H3b. Use of Facebook and YouTube will be associated with greater belief in true and false claims.

RQ1. Is Twitter use associated with belief in true and false claims?

Partisan-platform dynamics

Research on platform uses and features suggests people selectively choose content from search engines and social platforms; thus, we anticipate that partisanship will amplify the above-hypothesized platform effects for belief in true and false claims.

For social media, there is evidence that partisans seek out like-minded sources and may double down on partisan beliefs in the face of counter-attitudinal content. On Facebook, a majority of the national news pages liked by Republicans are conservative outlets (58.3%) whereas 40% of pages liked by Democrats or Independents are liberal outlets; half of national news pages liked by Democrats are considered non-biased media (Haenschen, 2021). In addition, use of Facebook is associated with greater selective exposure for Democrats and less selective exposure for Republicans (Cardenal et al., 2019). On Twitter, Republican participants became more conservative after following a liberal bot; similar effects were not found for Democrats after following a conservative bot (Bail et al., 2018). This pattern continues when considering misinformation on social media where false claims tend to benefit Republicans more often than Democrats (Allcott and Gentzkow, 2017).

In the case of search engines such as Google, a similar pattern exists, with platform use associated with exposure to like-minded content. Use of Google is associated with greater selective exposure for Republicans and less selective exposure for Democrats (Cardenal et al., 2019). Cardenal et al. (2019) propose a possible explanation for this result: conservatives might use Google to find a specific newspaper that they want to read, while liberals may enter search terms and choose among relevant results accordingly. This explanation aligns with research suggesting that people who are more skeptical of media are more likely to seek out non-mainstream news (Tsfati and Cappella, 2003), a pattern observed among conservative audiences (Tripodi, 2018).

Overall, research points to a tendency to prioritize like-minded partisan sources on social media or follow partisan-specific patterns of search behavior on Google. We, therefore, expect partisanship to amplify the effects of platform usage on political beliefs.

H4. The relationship between partisanship and political beliefs will be moderated by social media and search engine usage. Specifically, differences in political beliefs will be (a) lower among partisans who use search engines (Google) and (b) higher among partisans who use social media (Facebook, Twitter).

Method

We use a nationally representative survey (n = 1010) of the adult US population, conducted by the National Opinion Research Center (NORC) through its AmeriSpeak panel in August 2020. Participants were selected with area probability and address-based sampling. Recruitment took place via mail, telephone, and face-to-face interviews. Panelists completed the survey online (n = 978) or over the phone (n = 32). Because the study focused on partisans, respondents who did not answer the partisanship question or who identified as Independents leaning toward neither party (n = 185) were removed. This study’s 95% margin of error, adjusted for the design effect, is ±4.09%. 3 The sample is weighted based on demographics (age, gender, race/ethnicity, education), Census division, and housing tenure.
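The design-effect adjustment can be illustrated with the standard formula for the margin of error of a proportion, MOE = z·√(deff·p(1−p)/n). This is a sketch for illustration only: the text reports neither the design effect nor the base n behind the ±4.09% figure, so both are function inputs here, and p = 0.5 (the most conservative choice) is an assumption. Function names are hypothetical.

```python
import math


def margin_of_error(n: int, deff: float = 1.0, p: float = 0.5, z: float = 1.96) -> float:
    """95% margin of error for a proportion, inflated by the design effect (deff)."""
    return z * math.sqrt(deff * p * (1 - p) / n)


def implied_design_effect(reported_moe: float, n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Design effect that would produce a reported MOE under the formula above."""
    srs_moe = margin_of_error(n, deff=1.0, p=p, z=z)
    return (reported_moe / srs_moe) ** 2


# Simple-random-sample MOE for the full sample, ignoring the design effect
srs_moe = margin_of_error(1010)
# Design effect implied by a reported MOE of 4.09%, IF it was computed on n = 1010 (assumption)
deff_if_full_sample = implied_design_effect(0.0409, 1010)
print(f"SRS MOE: {srs_moe:.4f}; implied deff (n = 1010): {deff_if_full_sample:.2f}")
```

Under these assumptions, a design effect of roughly 1.76 would inflate a ±3.08% simple-random-sample margin for n = 1010 to about ±4.09%; that value is derived from the formula, not reported by the authors.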

Participants

The final sample (n = 825) was 52.1% female, 68.0% White, 10.2% Black, 14.5% Hispanic, 7.3% other, and represented all age groups (26.8% aged 18–29; 24.1% aged 30–44; 27.2% aged 45–59; and 21.9% aged 60 or above), education levels (19.2% high school or less, 43.5% some college, and 37.3% college degree or more), and both major political parties (55.8% Democrats; 44.2% Republicans). Leaners were treated as partisans, consistent with previous research (Petrocik, 2009). A power analysis found that this sample size was sufficient to detect small to moderate effect sizes with α = .05 and power = .80.
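The text reports only that the sample was adequately powered for small to moderate effects. One way to check such a claim is sketched below, under assumptions the text does not state: effects are expressed as Cohen's f² (0.02 = small, 0.15 = medium), the model has a hypothetical 10 predictors, and a large-sample normal approximation replaces the exact noncentral F distribution, which is reasonable for a single coefficient with several hundred residual degrees of freedom.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def approx_power_single_coef(f2: float, n: int, n_predictors: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided test of ONE OLS coefficient.

    Uses Cohen's noncentrality lambda = f2 * (u + v + 1) with u = 1 and
    v = n - n_predictors - 1, then a normal approximation to the test statistic.
    """
    df_den = n - n_predictors - 1
    lam = f2 * (1 + df_den + 1)
    delta = math.sqrt(lam)
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    # Probability the statistic lands in either rejection region
    return _STD_NORMAL.cdf(delta - z_crit) + _STD_NORMAL.cdf(-delta - z_crit)


def min_detectable_f2(n: int, n_predictors: int, alpha: float = 0.05, power: float = 0.80) -> float:
    """Smallest f2 reaching the target power (ignores the negligible far tail)."""
    df_den = n - n_predictors - 1
    z_sum = _STD_NORMAL.inv_cdf(1 - alpha / 2) + _STD_NORMAL.inv_cdf(power)
    return z_sum ** 2 / (df_den + 2)


# Hypothetical model size: 10 predictors (not reported in the text)
power_small = approx_power_single_coef(f2=0.02, n=825, n_predictors=10)
mde = min_detectable_f2(n=825, n_predictors=10)
print(f"power for f2 = 0.02: {power_small:.3f}; minimum detectable f2: {mde:.4f}")
```

Under these assumptions, n = 825 gives power well above .80 for a small effect on a single coefficient. Dedicated tools such as G*Power use the exact noncentral F and the convention λ = f²·N, so their figures will differ slightly.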

Measures

Belief in information

Participants rated four statements from (1) definitely true to (5) definitely false: (a) Russia tried to interfere in the 2016 presidential election; (b) since February 2020, the flu has resulted in more deaths than the coronavirus; (c) Trump failed to send US health experts to China to investigate coronavirus; and (d) it is illegal to mail ballots to every registered voter. Items were reversed such that a higher score reflected greater belief. The rationale for the selection of these specific claims and additional contextual details are provided in the Online Appendix (S1).
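Reversing a 1 to 5 item amounts to subtracting each response from the sum of the scale endpoints (here, 6). A minimal sketch, assuming responses are stored as integers 1 through 5; the function name is illustrative:

```python
def reverse_code(score: int, low: int = 1, high: int = 5) -> int:
    """Flip a Likert-type score so that higher values mean greater belief."""
    if not low <= score <= high:
        raise ValueError(f"score {score} is outside the {low}-{high} range")
    return low + high - score


# Raw codes: 1 = definitely true ... 5 = definitely false
raw = [1, 2, 3, 4, 5]
belief = [reverse_code(r) for r in raw]  # now 5 = definitely true
```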

Affective polarization

Affective polarization was defined as how negative partisans felt toward their outparty (see Overgaard et al., 2022; Wojcieszak and Warner, 2020), on an 11-point feeling thermometer where a higher number indicated greater polarization (M = 7.95, SD = 2.05). The findings remain robust when another common operationalization, inparty feelings minus outparty feelings (e.g. Iyengar et al., 2019), is used (Table S3).
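Both operationalizations, and the mean-centering used in the interaction models (noted under Table 1), can be sketched as follows. The text does not specify the thermometer's anchors, so the sketch assumes a 0 to 10 scale on which 0 is the coldest rating; under that hypothetical coding, outparty negativity is 10 minus the outparty rating. Function names and respondent values are illustrative.

```python
from statistics import mean

SCALE_MAX = 10  # assumption: 0 = coldest, 10 = warmest thermometer rating


def ap_outparty_negativity(outparty_rating: float) -> float:
    """Primary measure: colder outparty feelings -> higher polarization score."""
    return SCALE_MAX - outparty_rating


def ap_difference(inparty_rating: float, outparty_rating: float) -> float:
    """Robustness measure: inparty feelings minus outparty feelings."""
    return inparty_rating - outparty_rating


def mean_center(values: list[float]) -> list[float]:
    """Subtract the sample mean so 0 corresponds to average polarization."""
    m = mean(values)
    return [v - m for v in values]


# Hypothetical respondents: (inparty rating, outparty rating)
ratings = [(9, 1), (7, 3), (8, 0), (6, 4)]
ap = [ap_outparty_negativity(out) for _, out in ratings]
ap_centered = mean_center(ap)
ap_diff = [ap_difference(inp, out) for inp, out in ratings]
```

Centering leaves the interaction coefficient unchanged but lets the partisanship coefficient be read as the partisan gap at average polarization.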

Platform use

Platform use was measured by asking respondents which of the following platforms they had used in the past 7 days: Facebook (72.5%); Twitter (23.2%); Google (76.5%); and YouTube (71.6%). Only 2.4% of participants said they used the Internet but did not access any of the platform options in the week before the study and 0.7% said they did not use the Internet.

Education

Education was measured on a 5-point scale by asking participants the highest level of education they had attained. Education was then collapsed into three categories: (a) high school diploma or less, (b) some college, and (c) college degree or higher. “High school diploma or less” served as the reference category in the regression analyses below.
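Collapsing the 5-point item and dummy-coding it against the reference category might look like the sketch below. The text does not say which of the five response options fall into each category, so the mapping is hypothetical; only the three target categories and the choice of reference group come from the text.

```python
# Hypothetical mapping of the 5-point item onto the three categories in the text
EDUCATION_COLLAPSE = {
    1: "hs_or_less",
    2: "hs_or_less",
    3: "some_college",
    4: "college_plus",
    5: "college_plus",
}


def education_dummies(code: int) -> dict[str, int]:
    """Dummy-code collapsed education; 'hs_or_less' is the omitted reference."""
    category = EDUCATION_COLLAPSE[code]
    return {
        "some_college": int(category == "some_college"),
        "college_plus": int(category == "college_plus"),
    }
```

Because the reference group has no dummy of its own, each education coefficient in the regressions reads as a difference from respondents with a high school diploma or less.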

Results

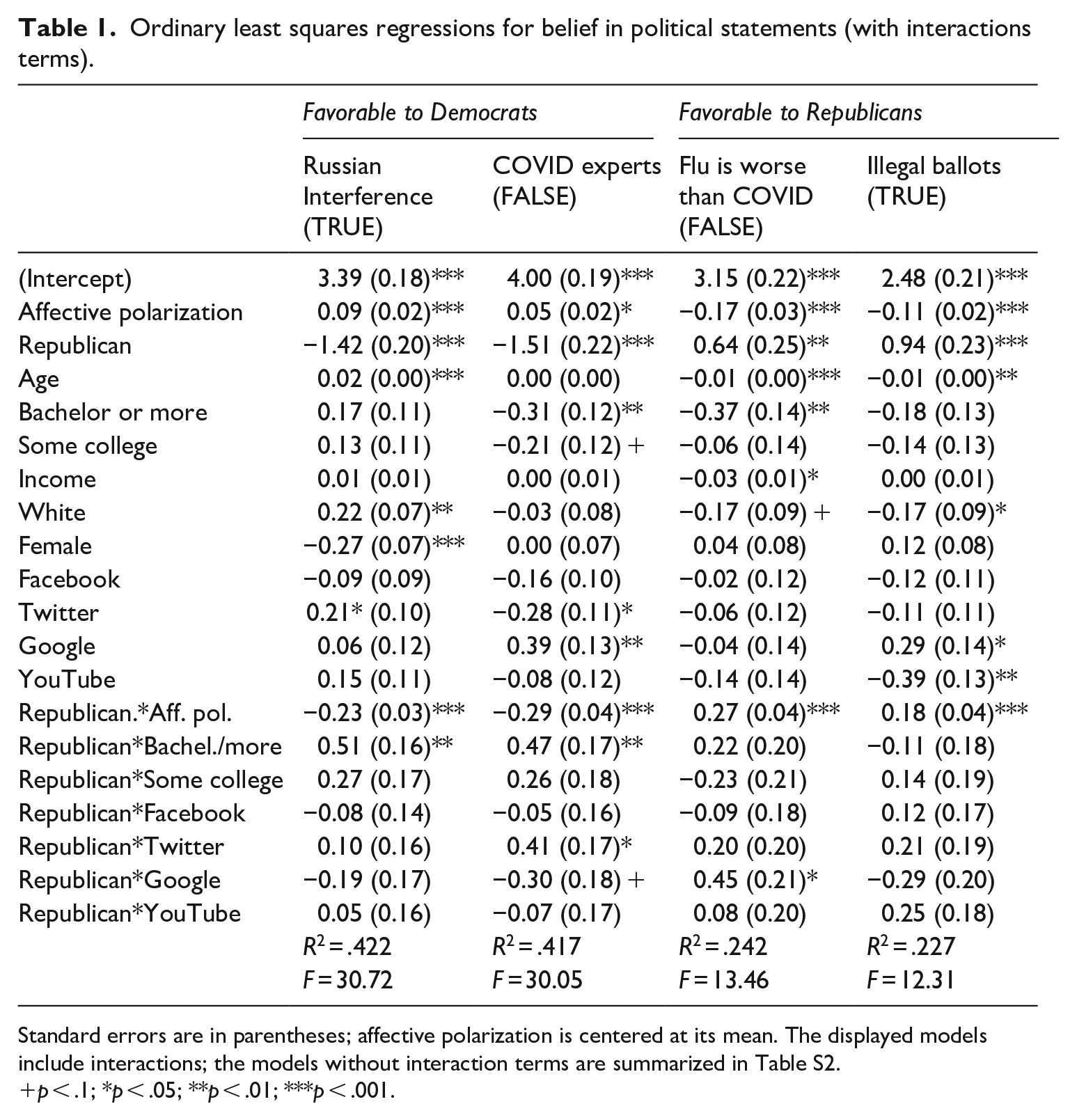

We tested the hypotheses and research questions using ordinary least squares regressions. The full models, with controls, are presented in Tables 1 and S2. 4
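The interaction models used to test H2 and H4 take the form belief = b0 + b1·Republican + b2·X + b3·(Republican × X), where X is the moderator (for example, mean-centered affective polarization or a platform-use indicator). The sketch below fits that specification with NumPy's least-squares solver on synthetic, noiseless data so the recovered coefficients can be checked. It assumes Republicans are coded 1, omits the survey weights and control variables, and none of its numbers are the study's.

```python
import numpy as np


def interaction_design(republican: np.ndarray, moderator: np.ndarray) -> np.ndarray:
    """Columns: intercept, Republican dummy, moderator, Republican x moderator."""
    return np.column_stack(
        [np.ones_like(moderator), republican, moderator, republican * moderator]
    )


def fit_ols(X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Ordinary least squares coefficients via NumPy's least-squares solver."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


# Synthetic data for illustration only (not the study's data)
rng = np.random.default_rng(0)
n = 200
republican = rng.integers(0, 2, size=n).astype(float)  # 1 = Republican, 0 = Democrat
polarization = rng.normal(8.0, 2.0, size=n)
moderator = polarization - polarization.mean()  # mean-centered moderator
true_coef = np.array([3.0, -1.0, 0.2, -0.3])

X = interaction_design(republican, moderator)
y = X @ true_coef  # noiseless outcome, so OLS should recover true_coef

coef = fit_ols(X, y)
slope_democrats = coef[2]  # moderator slope when republican == 0
slope_republicans = coef[2] + coef[3]  # moderator slope when republican == 1
```

With this coding, b3 is the difference between Republicans' and Democrats' slopes on the moderator, which corresponds to the "relative to Democrats" phrasing used when reporting the interactions.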

Main effects

H1 predicted Americans’ political identities would align with their beliefs in political information. We tested H1 by regressing belief in each of the four claims on political identity. 5 As expected, Republicans were less inclined than Democrats to believe the two claims supportive of the Democratic Party; Republicans were less likely to believe that Russia tried to interfere in the 2016 US presidential election (b = −1.29, SE = 0.07, p < .001) and that Trump failed to send US health experts to China to investigate the coronavirus (b = −1.46, SE = 0.08, p < .001). 6 Republicans were more inclined than Democrats to believe the two claims supportive of the Republican Party; Republicans were more likely to believe the flu had killed more people than COVID-19 (b = 1.02, SE = 0.09, p < .001) and that it was illegal to mail ballots to every registered voter in the United States (b = 1.02, SE = 0.08, p < .001). H1 is supported. For full main effects models, see Table S2.

H3a predicted Google use would be associated with lower belief in false claims and greater belief in true claims, whereas H3b predicted Facebook and YouTube use would be associated with greater belief in both true and false claims. As shown in Table S2, Google use was associated with greater belief in the false claim about COVID-19 experts (b = 0.29, SE = 0.09, p = .002) and had no significant association with belief in any other statement. Facebook use was associated with lower belief in the false statement about COVID-19 experts (b = −0.18, SE = 0.08, p = .031) but had no association with belief in the other statements. YouTube use was associated with lower belief in the true statement about the illegality of mailing ballots (b = −0.22, SE = 0.09, p = .018) but had no significant association with belief in other statements. For each of Google, Facebook, and YouTube, findings are significant for only one of the four claims tested, and in each case the findings run contrary to the expectations of H3a and H3b. Finally, RQ1 asked whether Twitter use was associated with belief in true and false claims. Twitter use was associated with greater belief in the true claim about Russian interference in the 2016 US election (b = 0.31, SE = 0.08, p < .001) but was not significantly associated with belief in any other claim.

Interactions

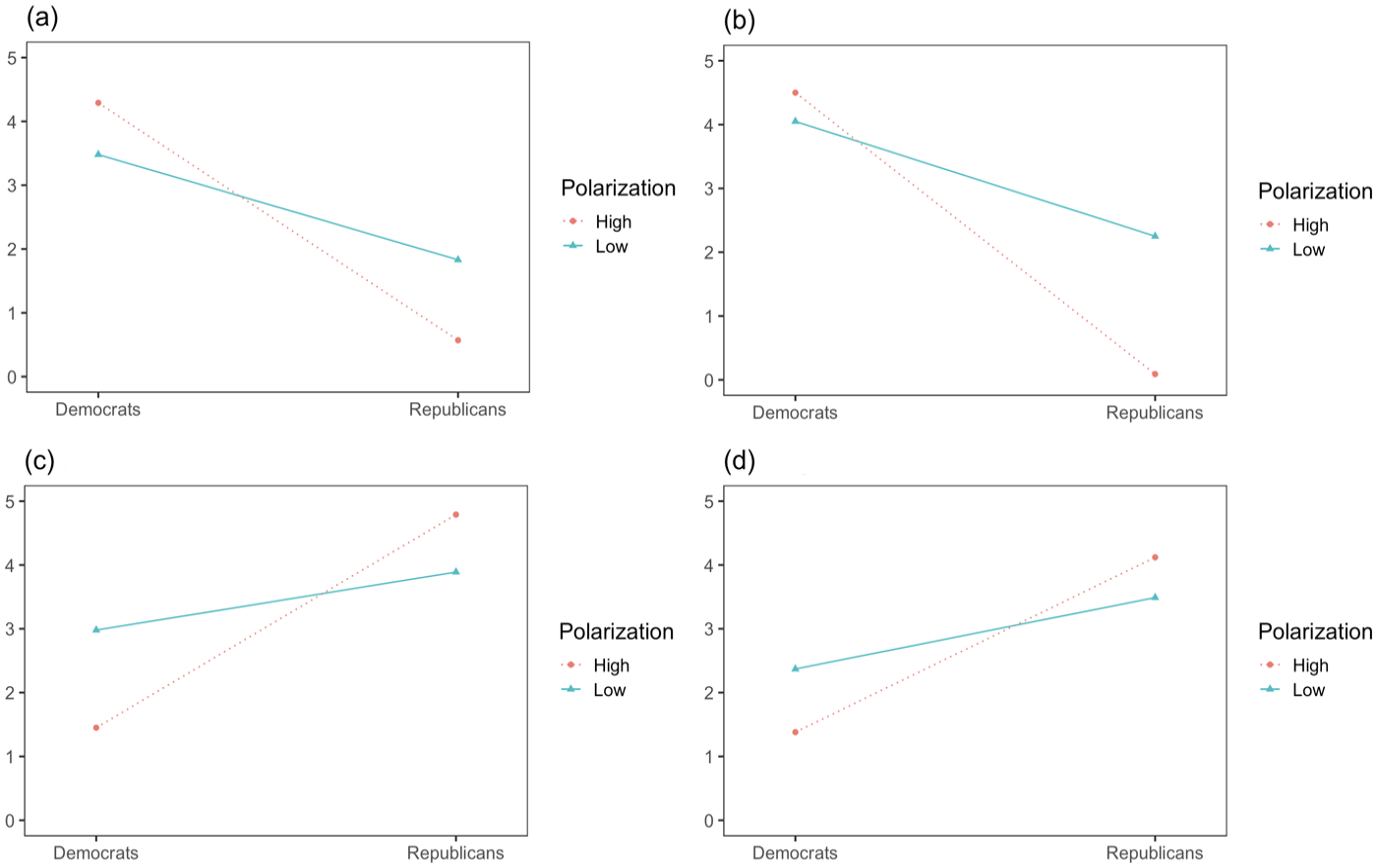

We tested H2 and H4 by adding interaction terms to our model between partisanship and (a) affective polarization, (b) education level, 7 and (c) each of the four platforms of interest (see Table 1). 8 H2 predicted more affectively polarized partisans would exhibit greater differences in political beliefs. H2 was supported for each of the four claims. As shown in Figure 1, affective polarization was associated with a decrease in Republicans’ (relative to Democrats’) beliefs in the two statements favoring Democrats: (true) Russia interfered in the 2016 US election (b = −0.23, SE = 0.03, p < .001), and (false) President Trump’s failure to send COVID-19 experts to China (b = −0.29, SE = 0.04, p < .001). Conversely, affective polarization was associated with an increase in Republicans’ (relative to Democrats’) beliefs in the two statements favoring Republicans: (true) the illegality of mailing ballots to all registered voters (b = 0.18, SE = 0.04, p < .001) and (false) the flu is worse than COVID-19 (b = 0.27, SE = 0.04, p < .001).

Ordinary least squares regressions for belief in political statements (with interaction terms).

Standard errors are in parentheses; affective polarization is centered at its mean. The displayed models include interactions; the models without interaction terms are summarized in Table S2.

p < .1; *p < .05; **p < .01; ***p < .001.

Interaction between partisanship and affective polarization on belief in claims regarding (a) Russian interference, (b) COVID-19 experts, (c) the flu being worse than COVID-19, and (d) illegal ballots. The Y-axis denotes belief in claims.

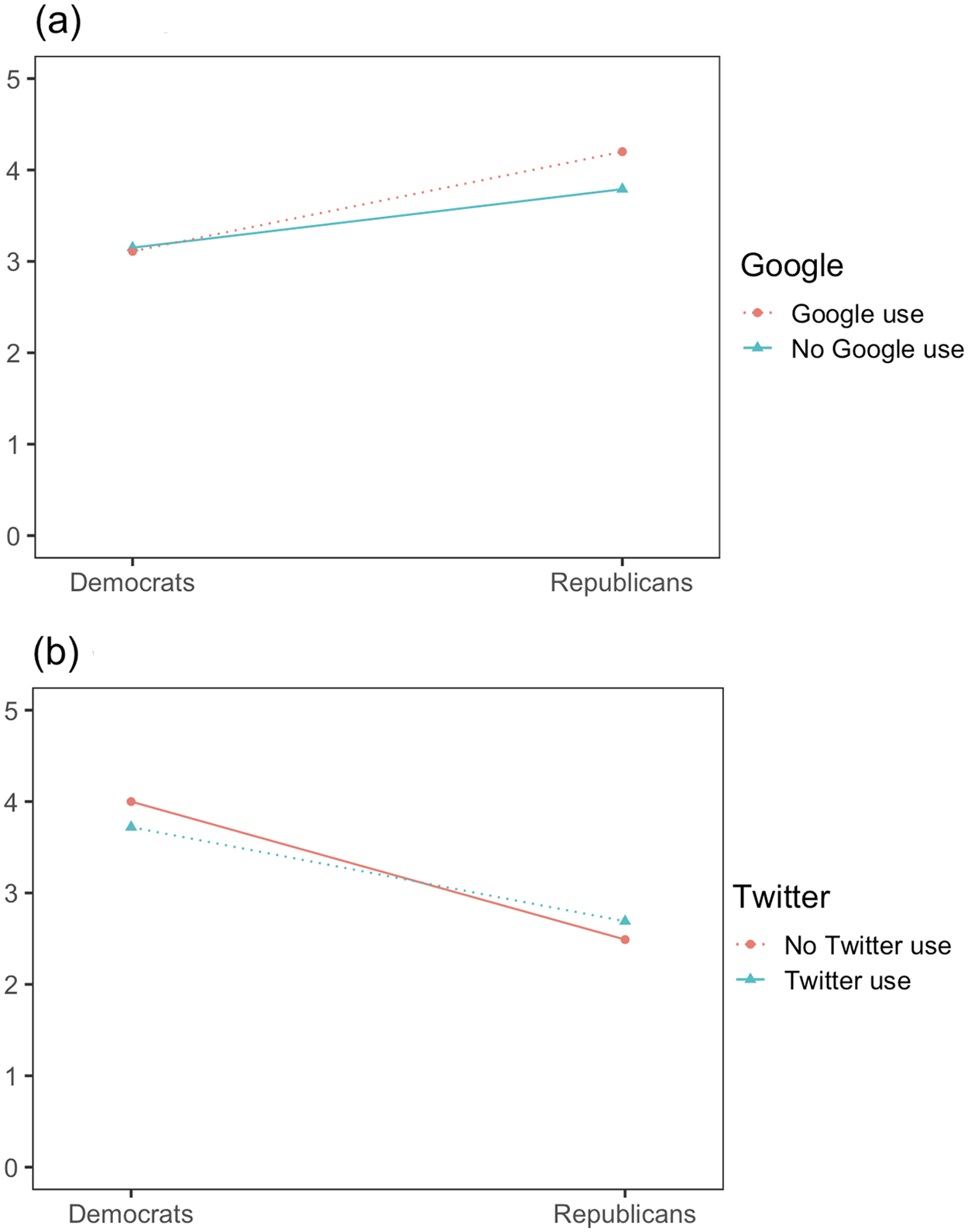

Finally, H4a predicted differences in belief in political claims would be lower among partisans who use search engines. Counter to this expectation, Google use was associated with greater partisan differences in belief in the false statement about the flu being worse than COVID-19 (b = 0.45, SE = 0.21, p = .032) and did not significantly moderate partisan differences for any other claim. H4a is not supported. H4b, which predicted partisan differences in belief would be greater among social media users than among non-users, is also not supported. For the false claim about Trump failing to send COVID-19 experts to China, the association between partisanship and belief in the claim was stronger among Twitter non-users than among Twitter users (b = 0.41, SE = 0.17, p < .05), counter to our expectations (see Figure 2). Twitter use did not moderate differences in Democrats’ versus Republicans’ belief in any other claim, and Facebook use did not significantly moderate partisan differences in belief for any of the four claims.

Interaction between Google use and partisanship for the claim that the flu is worse than COVID-19 (a) and interaction between Twitter use and partisanship for the claim regarding COVID-19 experts (b). The Y-axis denotes belief in claims.

Discussion

This study investigates the propensity for partisans to live “in different worlds,” where different representations of reality, particularly those that favor one’s preferred party, are thought to be true. Using a probability sample of US adults, we demonstrate, consistent with prior research, that Democrats and Republicans are more inclined to believe pro-attitudinal than counter-attitudinal claims. This general tendency is observed for true and false claims alike, and we find that affective polarization and platform use are associated with its strength. To advance this research, we theorize that demographic, communication, and political factors help explain this tendency. We present evidence that people’s inclination to believe congenial claims is associated with their use of different platforms (i.e. search engines and social media) and with partisans’ disdain for the “other side.” Our findings have theoretical and societal implications, especially for understanding how realities are malleable to partisan preferences in liberal democracies.

We find that, regardless of the truth of claims, Democrats and Republicans are more likely to believe congenial claims about COVID-19 and US elections; this echoes prior evidence of people living “in different worlds,” disagreeing not only about politics but also about the objective state of the world (Achen and Bartels, 2017; Jones, 2020). This finding is worrisome because democracies depend on citizens to make informed decisions and because it can undermine trust in political institutions. Following the 2020 US presidential election, many Republicans believed Trump’s claims that the election had been stolen from him, even months after President Biden’s inauguration (Reuters, 2021). When a substantial part of the electorate believes rules are broken to their disadvantage, trust in the system itself is diminished. Given the import of perceptual biases, we examine a range of demographic, communication, and political factors, suggested by research and theory, to explain why partisans believe pro-attitudinal claims, even when those claims are not grounded in reality.

We find that differences in partisans’ beliefs about political issues are associated with affective polarization. Those who are more affectively polarized tend to have more diverging views of contentious issues than those who are less polarized. Our findings converge with evidence of rising affective polarization and perceptual biases over the past 50 years (Iyengar et al., 2019; Jones, 2020) and with evidence of an association between affective polarization and misperceptions about polarization itself (Westfall et al., 2015; Yudkin et al., 2019). Furthermore, our findings echo Garrett et al. (2019) and also show that the linkage between affective polarization and political misperceptions is broader and more general than previously demonstrated. Specifically, Garrett and colleagues showed that affective polarization (in the form of prejudice toward certain political leaders) was linked to greater levels of misperceptions about those leaders; our study shows that affective polarization (toward party members broadly) is strongly associated with partisans’ beliefs in claims across political topics (elections, COVID-19), regardless of the veracity of the claims. This link between affective polarization and political misperceptions is particularly noteworthy considering recent work questioning if and how outparty hostility is problematic in liberal democracies (Voelkel et al., 2023). Our findings suggest differences in partisan belief about political facts deserve more scholarly attention as a potential consequence of affective polarization. Future research should examine if this relationship holds when assessing different issues or operationalizations of these measures. The high number of Americans who believe the 2020 US presidential election was “stolen” (Reuters, 2021) underscores the magnitude and importance of this endeavor.

We incorporate communication factors related to the use of social media and search platforms to understand how use of specific information environments might be associated with a greater or lesser likelihood of believing claims. Contrary to expectations, Google and YouTube use were associated with more problematic beliefs (less belief in a true claim, greater belief in a false claim). Google use was associated with greater belief in one of the false claims (COVID-19 experts) and was unrelated to belief in the true claims or the false claim regarding the flu and COVID-19. YouTube users were less likely than non-users to believe the true claim regarding the illegality of mailing ballots to all registered voters. In contrast, Facebook and Twitter use were associated with more accurate beliefs: Facebook use was associated with less belief in the false claim regarding COVID-19 experts, and Twitter users were more likely to believe the true claim regarding Russian interference. These findings suggest that search engine users, such as those turning to Google for information, may resemble YouTube users, whose biased information search processes privilege like-minded content (e.g. Bowyer et al., 2017) regardless of its falsity. On both Google and YouTube, users also lack a social network against which to judge the credibility of information, which may be a disadvantage: on Twitter and Facebook, spaces characterized by network associations, beliefs tended to be more accurate. These findings contrast with existing research. Prior work on political learning on social media suggests that while it is possible for people to learn about politics from social media news use, this outcome is often not realized (Bode, 2016; Gil de Zúñiga et al., 2017; Shehata and Strömbäck, 2021). Our findings instead may point to the utility of social and contextual cues on social media, compared with less context-rich outlets like YouTube and Google, in helping individuals discern truth from falsity among political claims.

Finally, we assess how the use of different platforms by Democrats or Republicans might be associated with greater or lesser belief in political claims. Contrary to expectations, we find that the association between Google use and belief in the false claim that the flu is worse than COVID-19 differs by party: Republican Google users appear more likely to believe this pro-Republican false claim. We also find that the association between Twitter use and belief in the false claim that President Trump failed to send COVID-19 experts to China differs by party; here, the partisan belief gap was smaller among Twitter users than among non-users. For two of the four claims examined here, then, the interplay between partisanship and platform use has ramifications for which claims partisans are more or less likely to believe. Google use is associated with greater belief in a false claim among Republicans, whereas Twitter use is associated with more accurate beliefs among Democrats. These findings align with observed partisan dynamics in information-seeking behavior, whereby conservatives are more likely to seek out non-mainstream news sources (e.g. Tripodi, 2018) owing to lower trust in mainstream news or news on social media (Shearer and Grieco, 2019). Existing research suggests that Twitter users should be less likely to believe false information (Theocharis et al., 2021). Still, our finding that Democratic Twitter users held more accurate beliefs than non-users about the false claim regarding COVID-19 experts is somewhat surprising, given the high levels of homophily among Democrats on Twitter (Colleoni et al., 2014), which one might expect to nudge them toward believing congenial claims. Importantly, we find these dynamics in only these two instances, both of which assessed belief in a claim that was pro-attitudinal for the respective party. Future research is needed to test the causal relationships between partisanship and information consumption across social platforms and search engines, as the evidence here suggests these relationships might shape the (in)accuracy of political perceptions.

This study is not without limitations. First, the data are cross-sectional; future research should use multiple survey waves or experimental designs to answer questions of causality. In addition, the social media items in the survey measured use rather than frequency of use, preventing us from considering how much people use each platform. Although we control for use of different platforms across our analyses, our results might differ if we accounted for heavy versus moderate use of a platform. Future research could address this with behavioral measures such as digital trace data, which would also mitigate general concerns about the validity of self-reported media use measures (e.g. Prior, 2009a, 2009b). Another potential limitation is that our political belief measures did not include a “don’t know” option. Although this does not prevent us from testing our hypotheses and research questions, replications that include such a response option would increase confidence in our results. Furthermore, the current study focused on claims about voting and COVID-19; focusing on other issues might yield different results, and future studies could expand on our work by examining beliefs about such issues. Finally, the study focuses on the US context. Perceptual biases are not uniquely American, but features of the American context, such as its comparatively high level of polarization, might contribute to our findings. Future work is needed to examine how affective polarization, use of online platforms, and political affiliations explain individuals’ construction of realities in other political contexts marked by similar (or considerably less) divisiveness and disagreement.

This study advances communication research in several ways, bridging work on misinformation, perceptual biases, social media use, and affective polarization. Our finding that affectively polarized partisans exhibited greater differences in beliefs than less polarized partisans suggests that scholars should do more to integrate research on affective polarization and perceptual biases to understand how the two affect each other. This pattern held across all four claims, regardless of their veracity or which party they favored. Finally, our findings concerning the use of search and social platforms complicate existing knowledge about the potential of these information sources to reinforce, or in some cases hinder, people’s tendency to believe politically congenial information. This highlights the need for researchers to strive for a nuanced understanding of the mechanisms that perpetuate perceptual biases in liberal democracies. We see this as a fruitful avenue for helping partisans with different political orientations form a mutual understanding of the one world in which we all live.

Supplemental Material

sj-pdf-1-nms-10.1177_14614448231176551 – Supplemental material for In different worlds: The contributions of polarization and platforms to partisan (mis)perceptions

Supplemental material, sj-pdf-1-nms-10.1177_14614448231176551 for In different worlds: The contributions of polarization and platforms to partisan (mis)perceptions by Christian Staal Bruun Overgaard and Jessica R Collier in New Media & Society

Footnotes

Acknowledgements

The authors thank Natalie Jomini Stroud, the Center for Media Engagement team, and two anonymous reviewers for their feedback.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by grants to the Center for Media Engagement by the John S. and James L. Knight Foundation and the William and Flora Hewlett Foundation.

Supplemental material

Supplemental material for this article is available online.

Notes

Author biographies

References
