Abstract

The rise of misinformation often circulated in various social media platforms has not only raised concerns among the policymakers and civil society groups, but also among citizens. Drawing upon a cross-sectional survey (n = 1,013) among English-language internet users in India, this paper tries to identify factors that affect concerns for online misinformation among citizens and how online news participation is affected by the rise of misinformation. After controlling for gender, age, education and income, we found that WhatsApp use, party identification and trust in news are positively associated with the concern for misinformation. Similarly, partisans are more likely to engage with news online. While Facebook and Twitter use are positively associated with online news sharing, the use of WhatsApp is not significant. The empirical evidence adds new insights to the literature on misinformation and online news engagement from the world’s largest democracy.

The rise of misinformation often circulated in various social media platforms has raised growing concerns not only among policymakers and civil society groups, but also among citizens (Newman et al., 2019). The continuous rise of misinformation has led researchers to argue that we are living in a “misinformation society” (Pickard, 2017). This claim is also reflected in a Reuters survey, which shows that citizens globally are concerned about the growing incidences of misinformation (Newman et al., 2019). This paper tries to identify the factors that are associated with concerns about online misinformation among internet users as well as how online news sharing has been affected by the rise of misinformation.

Technological advances and new platforms propelled by the growth of the internet have led to several studies that analyze their participatory potential. The potential of technology to turn consumers into active participants has been identified in several studies (see Couldry, 2012; Picone et al., 2019). The emergence of what Van Dijck (2013) termed “platformed sociality,” enabled by the decentralized infrastructure of the internet and easy-to-use applications since the 2000s, has made user participation more convenient. In a cross-national comparative study, Kalogeropoulos et al. (2017) noted the diversity in online news participation in terms of sharing and commenting among social media users. While the impact of social, cultural and political factors that may affect participatory practices has long been the subject of academic debate (Verba et al., 1995), other studies suggest that the technological affordances of social media have also influenced participation and engagement among citizens (Boulianne, 2009; Schlozman et al., 2010; Tolbert & McNeal, 2003). The multiplication of information channels, such as social media, blogs, and mobile apps, has simultaneously made it easier to spread misinformation. The debates about the use of social media to spread misinformation intensified after the 2016 US election. These two phenomena of participation and manipulation have been intertwined with social media. Moreover, social media offers a unique experience where the convergence of mass and interpersonal channels has brought together the information-seeking and information-sharing functions (Walther, 2017). Studies have tried to unpack the motivations that drive online news participation (Hölig, 2016; Nielsen & Schrøder, 2014; Vaccari, 2016), but there is limited research about the factors that influence concern about the growing phenomenon of online misinformation.

In this paper, we attempt to empirically analyze factors that are associated with the concerns about online misinformation as well as online news engagement. The empirical evidence for the study comes from the world’s largest democracy, India, which in recent times has witnessed massive growth in the number of internet users and the use of social media platforms (Neyazi, 2018, 2019). We first review the literature on online news engagement and misinformation, and state the hypotheses. We then discuss the method (along with the measures) and the results and conclude with the discussion. Let us first look at the context of the study, particularly the Indian media and political systems.

The Empirical Context

There has been steady growth in the number of internet users in India over the years, reaching more than 500 million in 2019 (Mishra & Chanchani, 2020). This growth has been primarily in urban India, although rural India is catching up fast. While increasing connectivity has brought a large number of Indians online and given them the benefit of an emerging, vibrant online economy, there has been a parallel rise in online misinformation. India has the largest number of Facebook and WhatsApp users in the world (Singh, 2019). Given that India has a long history of communal and sectarian violence (see Brass, 2003), the increase in misinformation, which often circulates online, has become a pressing issue in the country. Doctored images and videos, often emerging from the internet, get shared widely through WhatsApp and mobile phones. A study by the Oxford Internet Institute also found that WhatsApp was used widely by all political parties to spread disinformation in the run-up to the 2019 national election (Campbell-Smith & Bradshaw, 2019). Beyond the many small and localized incidents in which WhatsApp was used to spread rumors and serve a politically motivated agenda, there have been instances of major killings and mob lynching that occurred in part because of messages spread through Facebook and WhatsApp (Neyazi, 2019). The widespread circulation of misinformation on social media like WhatsApp became a concern for the Indian government, which formally asked the company in late 2018 to take measures to check the spread of disinformation. This context warrants an empirical investigation of whether the use of social media is associated with the increasing concern for misinformation.

India has a multiparty political system with several national political parties and smaller regional parties. Most regional parties are primarily active in one or two of the 29 ethno-linguistic states and Union Territories that constitute the federal polity. At the national level, the electoral competition is between two major political alliances that form coalition governments. One of the alliances is the United Progressive Alliance (UPA), led by the Indian National Congress (INC or Congress), a center-left party that has ruled the country for most of the period since Independence in 1947. The other is the National Democratic Alliance (NDA), led by the Bharatiya Janata Party (BJP), a right-wing party. The BJP, which was re-elected in the 2019 national election, is currently in power. Our survey showed that of the 1,013 respondents, 501 respondents identified themselves with the BJP, while 232 respondents identified with the UPA and 282 respondents were non-committal or were not going to vote in the general election. We therefore wanted to investigate whether there were any partisan differences in terms of concerns about misinformation and online news engagement. This is important because recent empirical evidence suggests that there has been growing polarization in the country, often manifested online (Neyazi, 2020; Udupa, 2019).

The ruling BJP has weaponized social media platforms to spread coordinated disinformation campaigns, and several of its leaders and supporters have been found to spread misinformation online (Chaudhuri, 2020; Mihindukulasuriya, 2020). Several reports suggest that journalists critical of the ruling dispensation have been subjected to abuse and attack on social media (Chakrabarti et al., 2018; Chaturvedi, 2016). These attacks are not restricted to online platforms; such online abuse is beginning to have repercussions offline. For example, Gauri Lankesh, a noted journalist who used to write against right-wing groups, was murdered on 6 September 2017 at her front door in her residential compound in Bengaluru. What was more repulsive was the way BJP supporters on social media reacted to the death of Gauri Lankesh: they not only celebrated it in their Twitter posts but also went to the extent of warning other opposition voices of a similar fate. Interestingly, four of the Twitter accounts that trolled #GauriLankesh and justified the murder are followed by Prime Minister Modi (Alt News, 2017). Our study is therefore informed by the growing concern that right-wing actors in India have weaponized social media platforms to advance their agenda.

Concern about Online Misinformation

Before delving into the discussion about online misinformation, it is worthwhile to clarify similar terms that have been used interchangeably, such as fake news and disinformation. While there has been a growing use of the term “fake news,” academics have raised concerns about this umbrella term because of its conceptual ambiguity and misuse by political actors (Keller et al., 2020). Instead, disinformation and misinformation have been preferred in the academic discussion. Although both disinformation and misinformation refer to factually incorrect information, they are primarily distinguished by intentionality. Disinformation refers to deliberate attempts to manipulate public opinion through the systematic use of false information, whereas misinformation pertains to the unintentional spread of false information (Barfar, 2019). We use misinformation because it is the most neutral and value-free term to describe inaccurate information in the context of communication research.

Several empirical studies show rising global concern about misinformation. In their survey of 38 countries, Newman et al. (2019) found that the average level of concern for online misinformation remained quite high. Given that platforms have emerged as significant intermediaries in driving news consumption (Nielsen & Ganter, 2018), the growing use of platforms such as Facebook and Twitter for orchestrating propaganda and disinformation has affected users’ perceptions of and interactions with these platforms. The rising concern about misinformation has gone in parallel with steps taken by platforms to check disinformation on their services. In its largest purge, Twitter removed close to 70 million suspicious accounts between May and July 2018 to improve its users’ experience (Dellinger, 2018). Similarly, in April 2017 Facebook removed several thousand accounts that were involved in suspicious activities (Hunt, 2017). Since then, both Facebook and Twitter have taken several measures to remove accounts found to be involved in spreading disinformation. There have also been several attempts by national governments globally either to introduce legislative interventions or to apply pressure on the platforms to check misinformation.

WhatsApp, a popular messaging application, has also been used to spread misinformation. Since the platform is protected with end-to-end encryption, it is difficult to measure the extent of misinformation shared on this platform. However, a study that examined tens of thousands of WhatsApp messages in Brazil showed that misinformation is widespread (Burgos, 2019). A study by BBC (2019) in India showed that WhatsApp has been extensively used to circulate misinformation. In another study, Banaji et al. (2019) show how misinformation circulated through WhatsApp has been responsible for violence and mob lynching in the country. WhatsApp took several measures to check the misuse of the platform because of the growing concern about using the messaging application to circulate misinformation. The measures included adding a tag to the message that is forwarded and initially limiting the number of forwards to five at a time and now further limiting it to one at a time. While these steps may have restored user confidence in these platforms, there is limited empirical evidence about whether concerns about misinformation still exist among users. Given the strong incidence of misinformation in social media and messaging applications as shown in the studies above, and given that people use multiple channels of communication for accessing information (Neyazi et al., 2019), we hypothesize that users of social networks will be more concerned about misinformation online than non-users.

H1. Using social networks (Facebook, Twitter and WhatsApp) will be positively associated with online misinformation concerns.

Concerns about Online Misinformation and Trust in News

In addition to interventions by states and the platforms, over time concern over misinformation may begin to affect users’ online behaviors, particularly in relation to news consumption and interactions. A significant number of respondents in a survey by Newman et al. (2019) said they had started relying more on sources they consider more reputable, stopped using sources with a reputation for being less accurate, decided not to share articles that look dubious, and begun to check the accuracy of stories by comparing multiple sources. Along with the spread of unsubstantiated information and rumors online, the transition from print to online journalism has simultaneously heralded the prevalence of citizen journalism (Goode, 2009). The act of reporting is no longer the exclusive domain of professional journalists, and common citizens are now also involved in the news production process. While one can argue that this has a democratizing effect on news production, there is a parallel process of disenchantment with news (Fletcher & Nielsen, 2017). Several studies have indicated declining trust in news in several countries over the years. In the context of the United States, the Freedom House report highlights the “erosion of public confidence in the mainstream media,” while a similar trend is discernible in other democracies (Freedom House, 2019). This new situation, with vast amounts of more popular expression as well as many different misinformation problems accompanying traditional news online, has been described by some as “information disorder” (Wardle & Derakhshan, 2017).

The mushrooming of online news avenues has reinvigorated debates about the declining credibility of news and its effect on democracy (Fletcher & Park, 2017; Silverman, 2015). There have been growing concerns about the harmful effects of misinformation on journalism (Fisher, 2018; Hofseth, 2017), but researchers like Beckett (2017) argue that the rise of misinformation could offer new opportunities to journalists and mainstream media to reclaim their lost credibility. At the same time, digital subscription of mainstream media is growing in the United States and Europe (Graves & Simon, 2019); it is possible that at least some people concerned about online misinformation may now be turning to more established media for news, because they trust them more. A similar trend has been suggested in India. According to KPMG (2019), mainstream media with a digital presence is considered more reliable than digital-born media because they are seen to “uphold a similar editorial standard for their digital platforms” (p. 72); hence, mainstream media is more likely to retain trust among users. Yet citizens who lack trust in news media may be equally concerned about misinformation online since they do not trust news organizations. Since there is contradictory evidence about the growing misinformation online and the degree of trust in the news media, we propose the following research question:

RQ1. To what extent is concern about misinformation related to trust in news?

Concerns about Misinformation and Partisanship

There has been steady political polarization in several countries. Political polarization has also been associated with the increasing presence of polarized content online. In terms of engagement with news, research suggests that partisans are more likely to share information and comment on news that is consistent with their in-group belief (Shin & Thorson, 2017). Thus, partisans are more likely to get actively engaged with the news and less likely to be concerned about misinformation. In the context of the 2016 US election, Guess et al. (2019) found that political affinity was correlated with sharing misinformation, but conservatives were more likely to share articles from fake news domains than liberals or moderates. While sharing activities online is often motivated by partisanship, there is limited evidence of whether partisanship is also associated with concerns about misinformation. In the context of India, misinformation was found to be disseminated by both the main political parties – BJP and INC – in the run-up to the 2019 elections (Campbell-Smith & Bradshaw, 2019). While the organized efforts of spreading misinformation may be channeled through core party supporters, ordinary party supporters may have a different perception about misinformation. We do not have any evidence to suggest that conservatives are less likely to be concerned about misinformation than liberals or moderates. Hence, we propose the following research question:

RQ2. To what extent is concern about misinformation related to partisanship?

Motivations for Online News Sharing

Online news engagement could take multiple forms, such as sharing or posting a news story, image or video, or commenting on a news story. These activities are more active forms of participation than mere exposure to news stories. Studies have attempted to distinguish between sharing and commenting online and the different motivations for these activities (Almgren & Olsson, 2016; Kalogeropoulos et al., 2017). In this study, we do not include commenting in our conceptualization of online news engagement since we are interested in analyzing users-to-content interactions and the extent to which the growing presence of misinformation affects sharing activities. On the other hand, commenting is users-to-users interactions and could be driven by entertainment aspects (see Chung & Yoo, 2008; Yoo, 2011). Several studies have analyzed the motivation for online news sharing, though the study of news sharing predates the development of the internet (Bright, 2016; Ma et al., 2014). The literature on motivations for engagement with news in terms of sharing could be divided into three categories: status-led, emotion-led, and partisanship-led. In status-led news participation, news stories are shared to enhance one’s social standing, to appear intelligent, or to be perceived as an opinion leader. Emotion-led news participation is driven by empathy caused by a surprising or dramatic event. In partisanship-led news participation, people with a strong political attachment are more likely to actively participate in the political discussion online than those with a low level of partisanship (Kalogeropoulos et al., 2017; Kim, 2016; Valenzuela et al., 2012). (For a summary of the first two categories, see Bright, 2016). In other words, online engagement with news could be driven by multiple factors, and the use of social media, in general, could enhance news engagement. Thus, we hypothesize the following:

H2a. Trust in the news will be positively associated with online news sharing.

H2b. Partisanship will be positively associated with online news sharing.

Online News Sharing and Misinformation

Engagement with news in terms of sharing and posting on platforms could help develop civic responsiveness, though it could also be used to spread misinformation. The visibility of news stories depends on whether they are shared and posted online by users in the first place. Social media platforms have emerged as important “secondary distribution channels” because the majority of users now access the news through such channels instead of directly accessing the news through news portals (Bright, 2016). By sharing information online, users demonstrate that they are paying attention and responding to the messages. The forwarding of messages on instant messengers such as WhatsApp is another aspect of online news participation. The sharing of messages, posts and videos on social networks and instant messengers simultaneously demonstrates one’s solidarity and expresses empathy with the message (Papacharissi, 2012). Such participatory activities could therefore go beyond the informational value of the messages. Since people may be engaging with online news for myriad reasons, the rise of misinformation may not be an impediment to their engagement. Moreover, we have limited empirical evidence about whether the growing presence of misinformation has affected engagement with news online. We propose the following research question:

RQ3. To what extent is concern for online misinformation correlated with online news sharing?

Method

Data for this study were collected as part of the Reuters Institute Digital News Report (Newman et al., 2019), which was the first online survey of English-language internet users’ news consumption habits in India. The cross-sectional survey (n = 1,013) was conducted with a YouGov online panel in early January 2019. Respondents were screened on whether they had consumed news in the past month. The data were weighted to targets based on the English-language online population of India on age and region. The targets are set by YouGov and are based on data from the Internet and Mobile Association of India. The data reflect only a small subset of Indians who are both (a) internet users and (b) English-language speakers, but in absolute terms this constitutes a large number of people, many of whom are part of a relatively more privileged and politically influential elite.

Measures

We asked a series of questions on news-seeking behavior, trust and vote intention, as well as misinformation. Respondents were asked about how they access news on different platforms and their trust in the news as well as whether they are concerned about misinformation.

Dependent Variables

Model 1: Concerns about Misinformation

The dependent variable in the first model is concerns about online misinformation among internet users. Respondents were asked, “Thinking about online news, I am concerned about what is real and what is fake on the internet.” This was measured on a 5-point scale (1 = strongly disagree and 5 = strongly agree. M = 3.53; SD = 1.21). Please note here that although all our respondents are internet-users, 13.2% report having used no online news in the past week, while 22.3% use only online sources of news. However, most respondents rely on both online and offline sources, with more than half of our respondents relying on television (57%) and/or print (52%) in addition to online news sources (used by 83%).

Model 2: Online News Sharing

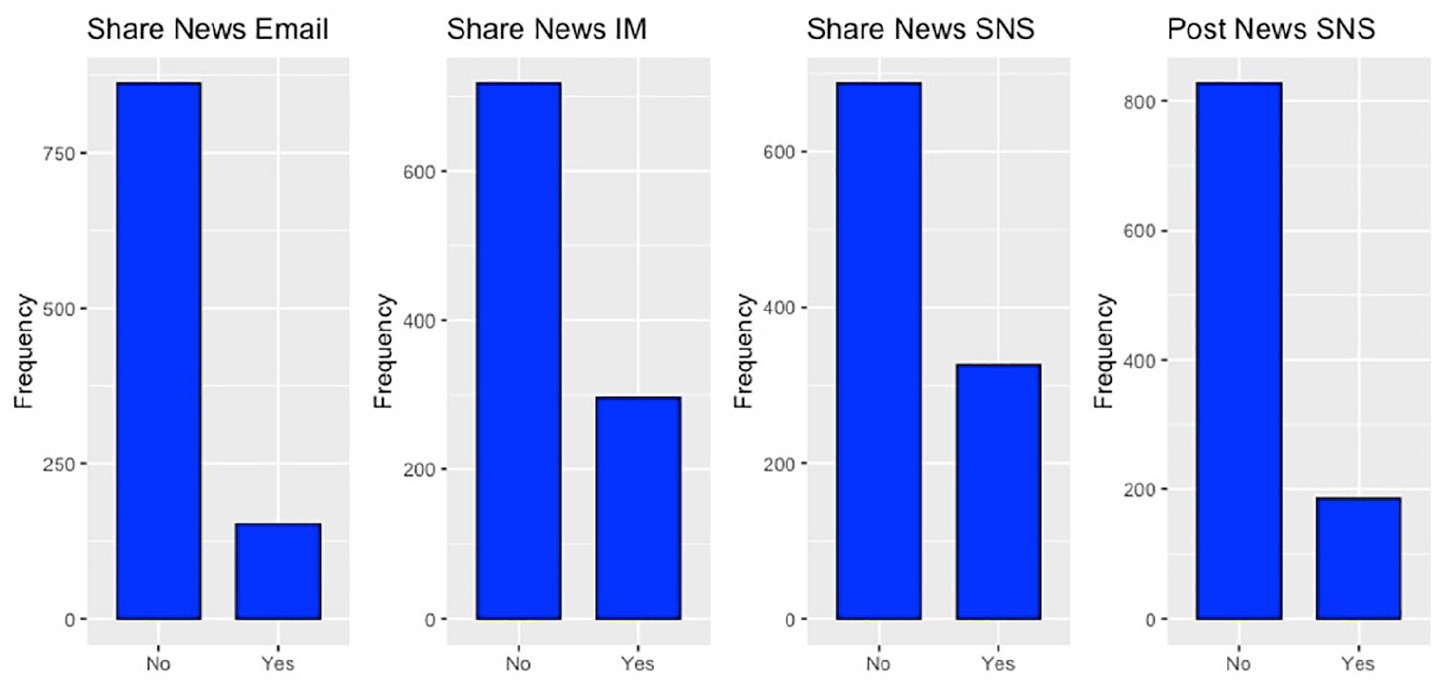

The dependent variable of online news sharing in the second model is an additive index of four activities: sharing news stories through email, social networking sites and instant messengers, and posting news-related pictures or videos on social networking sites. The variables are described in Appendix 1 and were measured through four questions (1 = yes; 0 = no). A confirmatory factor analysis revealed a perfect model fit for this variable (see Appendix 1, Figure 2).
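To make the construction of the index concrete, the following is a minimal sketch of an additive index over four yes/no items. The item names (`share_email`, `share_social`, `share_messenger`, `post_media`) are hypothetical labels chosen for illustration; the survey's actual variable names are not given in the text, and treating a missing item as 0 is likewise an assumption.

```python
# Hypothetical item names; the survey's own variable names are not reported.
SHARING_ITEMS = ("share_email", "share_social", "share_messenger", "post_media")


def sharing_index(response):
    """Return the additive news-sharing index (0-4) for one respondent.

    `response` maps item names to 1 (yes) or 0 (no). Missing items are
    counted as 0, which is an assumption made for this sketch.
    """
    return sum(1 for item in SHARING_ITEMS if response.get(item, 0) == 1)


# Toy respondent who shares by email and messenger and posts media.
example = {"share_email": 1, "share_social": 0, "share_messenger": 1, "post_media": 1}
print(sharing_index(example))  # -> 3
```

An index built this way ranges from 0 (none of the four activities) to 4 (all four).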

Independent Variables

To measure concerns about misinformation and online news participation, several independent variables were used. In the first model, which measured concerns about misinformation, trust in the news was included as an independent variable, because trust in the news could help citizens actively consume and engage with the news (Fletcher & Park, 2017). To measure the level of trust in the news, respondents were asked the following question: “I think I can trust most of the news I consume most of the time.” This was measured on a 5-point scale (1 = strongly disagree and 5 = strongly agree. M = 3.10; SD = 1.08).

A second independent variable was engagement with the news, which was measured through two questions: (a) “Which, if any, of the following have you used for any purpose in the last week?” (used = 1; not used = 0. Twitter users = 34.2%; Facebook users = 74.7%; WhatsApp users = 81.6%). (b) “Which, if any, of the following have you used in the last week as a source of news? Please select all that apply.” (used = 1; not used = 0; getting news from TV = 46.3%; getting news from printed newspaper = 46.9%; getting news from social media = 63.6%). Party identification was the third independent variable; respondents were asked: “If there was a general election tomorrow, who would you vote for?” (BJP = 49.5%; INC = 13.4%).
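As an illustration of how a vote-intention answer can enter a regression, the sketch below codes it into two 0/1 indicators, one for BJP and one for INC, with all other answers as the reference category. This coding is an assumption inferred from the “BJP Vote Intention” and “INC Vote Intention” rows in Appendix 2; the function and key names are hypothetical.

```python
def party_dummies(vote_choice):
    """Code a vote-intention answer into two indicator variables.

    Respondents choosing neither BJP nor INC (other parties, undecided,
    non-voters) get 0 on both indicators and so form the reference
    category. This reference-category choice is an assumption.
    """
    return {
        "bjp_vote": int(vote_choice == "BJP"),
        "inc_vote": int(vote_choice == "INC"),
    }


print(party_dummies("BJP"))        # -> {'bjp_vote': 1, 'inc_vote': 0}
print(party_dummies("Undecided"))  # -> {'bjp_vote': 0, 'inc_vote': 0}
```

With this coding, each party coefficient is read as a difference from respondents in the reference category.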

Control Variables

We included four demographic variables as control variables: education (coded low to high on a 7-point scale; 1 = Illiterate, 7 = University+; M = 5.86, SD = 1.1); income (coded low to high on a 14-point scale; 1 = less than $266, 14 = more than $13,333; M = 5.68, SD = 5.31); age (18–76, coded young to old on a 6-point scale; 1 = young, 6 = old; M = 31.30, SD = 10.5); and gender (39.1% female). To contextualize the demographic variables further, 90.1% of our respondents were under 44 years old, 74.6% were graduates or post-graduates, and only 25.4% had an income of less than INR 20,000 (USD 266).

Results

Table 1 provides descriptive statistics for all variables in the study. The results of the multiple-linear regression for concerns over misinformation online are given in Table 2. To test the hypotheses, we ran two multiple-linear regressions. The first set of hypotheses was related to concerns over misinformation, while the second set of hypotheses involved online engagement with news. To ensure that there was no multicollinearity among variables, the variance inflation factor (VIF) was calculated (Appendix 2).
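For readers less familiar with the technique, the sketch below shows how the coefficients of a multiple linear regression can be obtained by ordinary least squares, using the normal equations and plain Python. It is not the authors' analysis code: the variable names (`trust`, `whatsapp`, `concern`) and the five toy observations are invented for illustration, and standard errors and significance tests are omitted.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("design matrix is singular (perfect collinearity)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols_coefficients(rows, y):
    """Return [intercept, b1, b2, ...] minimizing squared error.

    `rows` holds one list of predictor values per respondent.
    """
    design = [[1.0] + list(r) for r in rows]  # prepend intercept column
    k = len(design[0])
    xtx = [[sum(d[i] * d[j] for d in design) for j in range(k)] for i in range(k)]
    xty = [sum(d[i] * yi for d, yi in zip(design, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Invented toy data: trust (1-5 scale) and WhatsApp use (0/1) per respondent.
rows = [[1, 0], [2, 1], [3, 0], [4, 1], [5, 1]]
# Outcome constructed as 1 + 0.5 * trust + 0.3 * whatsapp, so the fit is exact.
concern = [1.5, 2.3, 2.5, 3.3, 3.8]
coefficients = ols_coefficients(rows, concern)
print([round(c, 3) for c in coefficients])
```

Each slope is interpreted as the expected change in the outcome for a one-unit change in that predictor, holding the other predictors constant.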

Table 1. Descriptive Statistics for Variables Used in the Two Models.

SD: standard deviation.

Table 2. Concern over Misinformation (n = 1,013).

SE: standard error.

*p < .05. **p < .01. ***p < .001.

Only one demographic variable, being female, is significant. Among the independent variables, concern over misinformation is correlated with trust in news (b = .173, SE = .034; p < .001) and party identification, with supporters of the two main political parties, BJP and INC, being equally concerned about misinformation (p < .05). Among social media users, WhatsApp use is positively associated with the concern for misinformation (b = .292, SE = .110; p < .01), but the use of other social media platforms such as Facebook and Twitter is not significant. This partially supports our H1 that using social networks (Facebook, Twitter, and WhatsApp) will be positively associated with concerns about online misinformation. However, the use of newspapers, television, and social media to get news was not significantly associated with concerns over misinformation, perhaps because there is a high level of trust in brands such as Times of India and Hindustan Times (which are major newspapers) and Doordarshan and NDTV (TV news channels). Another reason could be that people only use channels for news consumption that are aligned with their viewpoints. A third possible reason is that social media platforms, including Facebook, Twitter and WhatsApp, took several steps to remove junk content, which may have restored consumers’ faith in the accuracy of news.

Results of the second model, which deals with online news engagement, are given in Table 3 and Figure 1. Among the demographic variables, age and education were significant. Age is positively associated with online news engagement (b = .084, SE = .030; p < .01). This means that older people are more likely to engage with online news by sharing or posting stories on social media, but this finding has to be treated with caution since the respondents were mainly a young population with an average age of 31 years. Higher education is also positively associated with online news engagement, which supports previous studies showing that education is an important predictor of online news sharing (Smith et al., 2009). While supporters of both the main political parties, BJP and INC, actively engage with news online, BJP supporters are more active (b = .314, SE = .072; p < .001) than INC supporters (b = .225, SE = .105; p < .05). This supports our hypothesis that partisanship will be positively associated with online news sharing. On social media use, the use of Facebook and Twitter is positively associated with online news sharing, but the use of WhatsApp is not significant, which partially supports our hypothesis. Interestingly, the association between concerns for misinformation and online news sharing was not significant.

Table 3. Online News Sharing (n = 1,013).

SE: standard error.

*p < .05. **p < .01. ***p < .001.

Figure 1. Frequency of online news sharing (n = 1,013).

Discussion and Conclusion

In this paper, we show the factors that affect concerns about online misinformation and online news sharing among English-language internet users in India. After controlling for gender, age, education and income, we found that WhatsApp use, trust in news and party identification are positively associated with the concern for misinformation. Contextual factors could help in understanding these findings. As discussed earlier, WhatsApp has been widely blamed for incidents of mob lynching in India and has even come under scrutiny from the government, which has been debating whether to allow the platform to operate with its current feature of end-to-end encryption. In comparison, Facebook and Twitter are relatively open platforms, and users may have a greater sense of confidence while accessing these platforms, or at least less active distrust. Similarly, people who trust the news are more concerned about online misinformation, perhaps because they fear that the rise of misinformation could affect professional journalism.

Interestingly, partisan differences are not significant, and our respondents are not divided based on their party identification in their concerns about misinformation. Supporters of both the right-wing party (BJP) and the center-left party (INC) are equally concerned about misinformation. This is important because one political group has been seen to disseminate disinformation more actively than the other. Studies also show that leaders of the ruling BJP and their supporters are more likely to engage in disinformation than the opposition (Mihindukulasuriya, 2020). Research from the United States suggests that conservatives are more likely to share articles from fake news sites (Guess et al., 2019) and more likely to believe in misinformation (Silverman & Singer-Vine, 2016). Yet the evidence from our research suggests that political ideology is not important when it comes to Indian English-language internet users’ concerns about misinformation. One possible reason could be the nature of our sample, which is drawn from the English-speaking, middle-class online population and is not representative of the wider online population.

Party identification emerges as significantly associated with online news engagement, which supports previous studies that partisans are more active in sharing and commenting online (Kalogeropoulos et al., 2017; Kim, 2016; Valenzuela et al., 2012). Facebook and Twitter users are more likely to engage with news than WhatsApp users. This is an interesting finding as there has been growing concern about rising cynicism among social media users and their declining intention to engage online after the growing incidences of misinformation (Balmas, 2014). One may argue that these concerns may largely be confined to the Western countries, which have witnessed acrimonious debates about foreign interference in the national elections, while such debates remain marginal in India.

Not surprisingly, concern about misinformation does not emerge as a significant predictor of online news engagement. Our findings suggest that future research must analyze the differential effects of social media platforms instead of lumping them together. This is because a closed platform such as WhatsApp could be a more fertile channel for sharing and spreading misinformation than open platforms such as Facebook and Twitter, where messages are constantly scrutinized by the public. At the same time, we should note that people sharing messages on open platforms such as Twitter and Facebook may be doing so to enhance their public image and social standing or to show support for a cause (see Bright, 2016).

The study has certain limitations. It is based on the English-speaking middle class and cannot be generalized to the wider Indian online population, let alone the whole population. Future studies may examine whether internet users and non-users differ in their concern about misinformation. Future studies may also benefit from analyzing whether the concern for misinformation affects other participatory behavior such as political participation and civic engagement. Developing a comprehensive understanding of the linkages between the concern about online misinformation and trust in the news would also require disentangling these two concepts further. The current study uses single-item measures of trust in the news and of concern about online misinformation; future studies may benefit from paying more attention to the conceptualization and operationalization of these two concepts by developing multi-item measures. While several studies have used multi-item measures of trust in the news (for a review, see Strömbäck et al., 2020), we do not yet have a robust multi-item measure of the concern about (online) misinformation. The current study is important given the growing incidence of misinformation globally. Further, misinformation in India has broader implications because it has been intertwined with mob lynching and the amplification of social divisions in the country, sometimes animated by political actors. The empirical evidence provided in this study adds new insights to the literature on misinformation and online news engagement from the world’s largest democracy.

Appendix 1

Appendix 2

Multicollinearity Diagnostic for Models through VIF.

| Concern over Misinformation (n = 1,013) | | Online news sharing (n = 1,013) | |
|---|---|---|---|
| Variables | VIF | Variables | VIF |
| Female | 1.090 | Female | 1.099 |
| Age | 1.166 | Age | 1.106 |
| Education | 1.116 | Education | 1.109 |
| Income | 1.026 | Income | 1.023 |
| Trust in News | 1.040 | Concern over Misinformation | 1.092 |
| BJP Vote Intention | 1.294 | Trust in News | 1.061 |
| INC Vote Intention | 1.240 | BJP Vote Intention | 1.257 |
| Twitter Users | 1.125 | INC Vote Intention | 1.232 |
| Facebook Users | 1.431 | WhatsApp Users | 1.352 |
| WhatsApp Users | 1.351 | Facebook Users | 1.359 |
| Getting News Printed newspaper | 1.179 | Twitter Users | 1.087 |
| Getting News TV | 1.184 | | |
| Getting News Social media | 1.133 | | |

The variance inflation factor (VIF) indicates how much the variance of an estimated regression coefficient is inflated because the predictors are correlated. A VIF of 1 indicates that a predictor is uncorrelated with the other predictors.
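As a rough illustration of this diagnostic: for predictor j, the VIF is conventionally computed as 1 / (1 − R²ⱼ), where R²ⱼ is the R² from regressing predictor j on all the other predictors. The sketch below covers only the two-predictor special case, in which R²ⱼ equals the squared Pearson correlation between the two predictors. The data and function names are invented for illustration; the values in the table above come from the authors' full models, not from this code.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def vif_two_predictors(x1, x2):
    """VIF shared by both predictors in a two-predictor model.

    With only two predictors, R^2 from regressing one on the other
    equals the squared Pearson correlation, so VIF = 1 / (1 - r^2).
    """
    r = pearson_r(x1, x2)
    return 1.0 / (1.0 - r * r)


# Uncorrelated toy predictors: the VIF is exactly 1.
print(vif_two_predictors([0, 1, 0, 1], [0, 0, 1, 1]))  # -> 1.0
# Strongly correlated toy predictors: the VIF is far above 1.
print(vif_two_predictors([1, 2, 3, 4], [1, 2, 3, 5]))
```

With more than two predictors, R²ⱼ would instead come from a full auxiliary regression of each predictor on the rest.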

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.