Abstract

Deliberation theory posits that users’ willingness to participate in online comment sections should increase if the discussions are more evidence-based. However, extant empirical research does not clearly support this assumption. The current study argues that social comparison processes and the metacognitive perception of knowledge mediate the relationship between evidence in comments and participation intention in different ways. Findings from two online experiments (NStudy1 = 368; NStudy2 = 854) support this assumption: For three different topics, the results show that providing evidence in comments, as opposed to merely opinions, increases participants’ perceived knowledge by increasing their factual knowledge. At the same time, evidence in comments decreases participants’ perceived knowledge through social comparison with other commenters. Higher perceived knowledge is related to increased participation intention. In summary, the studies reveal psychological mechanisms that explain why high deliberative quality of online discussions does not necessarily stimulate further user participation.

User comments provide a promising infrastructure for deliberative discourse, which involves members of a diverse audience creating public opinion through the rational and respectful exchange of facts and arguments (Dahlberg, 2010; Wright and Street, 2007). However, due to low quality of many discussions (Anderson et al., 2014) and low participation rates (e.g. 12% in Germany, Hölig et al., 2021), comment sections often do not live up to their deliberative potential (Stroud et al., 2015). Previous research has suggested that improving the deliberative quality of discussions in comment sections could also increase the participation intentions of users who would otherwise remain silent (e.g. Springer et al., 2015; Ziegele and Jost, 2020). While various studies have investigated the effects of disrespectful communication on users’ participation intentions (e.g. Borah, 2014), less attention has been paid to the effects of comments that adhere to other criteria of deliberation. For instance, the criterion of rationality requires users to provide facts and evidence for their claims instead of only expressing their feelings and opinions without further reasoning (Friess and Eilders, 2015); consequently, mere opinion-based commenting can be considered a low expression of rationality, while including verifiable, truthful, and scientifically indisputable facts and evidence would be a high expression of rationality.

In terms of the effects of fact-based comments on participation in online discussions, findings are generally scarce. A survey study has suggested that users’ perceptions of rational discussions increase their intention to write comments (Engelke, 2020), but content analyses have not found positive relationships between factual information in user comments and participation rates (Ziegele et al., 2014). One explanation of this inconsistency could be that factual comments trigger two conflicting psychological mechanisms in users: (1) they might add to the factual knowledge of users, which could increase their perceived knowledge and, consequently, their willingness to participate in the discussion; but (2) users might perceive the knowledge of the authors of factual comments to be superior to their own knowledge. Previous research has indicated that comparisons with others serve as important contrast heuristics for knowledge perceptions (Flynn and Goldsmith, 1999; Radecki and Jaccard, 1995). In discussions that are mainly based on facts and evidence, such social comparison could decrease users’ perceived knowledge and inhibit their willingness to participate. A null effect of factual comments on users’ intentions to participate could be the result of these two competing mechanisms.

In short, facts and evidence are considered an important indicator of deliberative quality in online discussions, but their effects on users’ participation intentions are unclear. Therefore, the current study has two overall aims. First, we aim to provide experimental evidence for the effects of fact-based comments (higher deliberative quality) compared to opinion-based comments (lower deliberative quality) on the participation intentions of users. An experimental approach allows us to determine causal relationships between the manipulated factors and the dependent variables of interest; moreover, it establishes a situation wherein people are exposed to a certain type of online discussion. This design enables us to investigate situational outcomes immediately after experiencing a (fact- or opinion-based) discussion; thus, the results are less likely to be biased by users’ flawed memory of the previous experience with online discussions.

The second aim of this study is to combine approaches of social and cognitive psychology and apply them to the field of online deliberation. This can help us understand which mechanisms explain the relationship between facts in comments and participation intention and why specific patterns of participation behavior occur. Such insights into the motivations and cognitive processes behind participation intention can help in developing more target-oriented measures that promote participation in online discussions.

User comments on social networking sites: deliberative potential and actual participation

Since user comments enable citizens to engage in public discourse, their potential as a deliberative public sphere has been extensively investigated. The fundamental idea of deliberative democracy is that public opinion and decision-making processes are shaped through communication (Friess and Eilders, 2015). The concept emphasizes “egalitarian inclusion as a normative condition of the public sphere” (Friess and Eilders, 2015: 323), which means that everyone concerned with/by the issue under discussion should have an equal chance to access and effectively contribute to the discourse, regardless of age, gender, or social status (Barber, 1984; Gutmann and Thompson, 2004). Other premises of deliberative democracy include rationality and civility (Friess and Eilders, 2015); the former indicates that the exchange of arguments is based on facts and logical evidence (Gutmann and Thompson, 2004), and the latter involves participants of the discussion showing respect to each other, having the interests of others in mind, and being mindful of social norms (Barber, 1984).

While some studies have found that many user comments contain deliberative features (Ksiazek, 2015; Papacharissi, 2004), others have concluded that online discussions are rarely based on facts and evidence but instead contain merely opinions, trivia, or even hostility and incivility (Anderson et al., 2014; Coe et al., 2014; Ziegele et al., 2014). Rowe (2015) showed that this especially holds true for comment sections on social networking sites (SNS). In addition, data from the Reuters Digital News Report reveal that small percentages of Internet users regularly post comments on SNS (Hölig et al., 2021). Commenting users hold rather extreme political positions and are thus not representative of the population (Hölig et al., 2021).

These findings suggest that despite the requirements of deliberation, the level of inclusive participation in comment sections is low. Therefore, several studies have investigated the factors that relate to people’s willingness to write comments in online discussions. One line of research focuses on the influence of comments posted by previous users. Most studies in this field have investigated the influence of incivility on participation and have studied how comments violating social norms affect other users’ participation (Borah, 2014; Masullo Chen and Lu, 2017). The role of evidence and facticity in comments has hardly been addressed in previous research. Springer et al. (2015) found that the perception of a low discussion standard decreases people’s intention to participate in online discussions, but the researchers did not define whether a low discussion standard also includes the lack of facts and evidence. The only survey that provides a link between facticity in comments and people’s willingness to participate is a qualitative study by Engelke (2020), which showed that participants evaluated deliberative comments that include facts and evidence positively and that “this deliberative nature is a reason for participation” (Engelke, 2020: 459). Thus, to date, a positive effect of facts in online discussions on people’s participation intentions is largely a theoretical one expressed by deliberation researchers (see, for an overview, e.g. Friess and Eilders, 2015).

Contrary to this normative claim and the findings by Engelke (2020), a content analysis by Ziegele et al. (2014) revealed that comments including facts, evidence, and expertise did not increase subsequent users’ willingness to reply to these comments. If the presence of facts and evidence in user comments is irrelevant to or even impedes the willingness of others to join online discussions, then two characteristic features of deliberation (rational discourse and high participation rates) inhibit each other. The current study investigates this question from a psychological perspective. We argue that perceived knowledge could be relevant in this context, as outlined in the following sections.

Perceived knowledge and its relevance for users’ intention to participate in online discussions

Perceived knowledge, also referred to as subjective knowledge or the feeling of being informed, is defined as individuals’ perception of their own knowledge (Flynn and Goldsmith, 1999). This definition is related to the metacognitive principle that cognitive processes take place on (at least) two different, interrelated levels: the object-level and the meta-level (Nelson and Narens, 1990). The object-level is another term for memory, which is defined as a complex store of factual or structural knowledge organized in an interconnected network (Raaijmakers and Shiffrin, 1980). The meta-level (meta-memory) is a simulation or model of the object-level, and it describes an individual’s awareness and evaluation of their knowledge and its structuring (Nelson and Narens, 1990). However, this simulation is incomplete and contains only a small snippet of the complex structure of knowledge.

The object- and meta-levels are dynamic cognitive systems that can be modified through processes of monitoring and control (Koriat et al., 2006; Nelson and Narens, 1990). Monitoring processes involve the assessment of the meta-memory through metacognitive judgments, while control processes involve actions that are initiated or ended to modify the object-level (Nelson and Narens, 1990). For example, if monitoring one’s knowledge leads to the conclusion that more information is needed to make a voting decision, this can trigger actions like reading through party programs (control). This example indicates that knowledge perception is also highly relevant to behavioral intentions, including people’s willingness to participate in discussions: In fact, previous studies have shown that perceived topic knowledge increases one’s willingness to discuss an issue with others (Schneider et al., 2016). In line with this, a study by Schäfer (2020) showed that exposure to many news posts on Facebook increased perceived topic knowledge, which was related to a greater intention to talk about the topic or initiate discussions with others. Thus, applied to the current study, a high level of perceived knowledge is likely to motivate users to contribute to online discussions.

Roots of perceived knowledge

Self-centered judgment

Within the framework of metacognition, predictors of perceived knowledge are the sources that people apply for metacognitive judgments. In other words, what do people actually think about if they evaluate the extent to which they feel well-informed about a specific domain? As described by Nelson and Narens (1990), monitoring consists of a flow of information from the object-level to the meta-level, meaning that to a certain degree, people base their perceived knowledge on what is stored in their long-term memory. The stored information is referred to as objective knowledge, which can be separated into factual knowledge and structural knowledge (Eveland et al., 2004). Factual knowledge entails people being able to store and recall single facts about a topic, while structural knowledge entails people being able to make connections between such facts, which results in more complex forms of knowledge, such as understanding, interpretations, or conclusions (Eveland and Hively, 2009).

Research on metacognitive judgments usually focuses on factual knowledge and its role in knowledge perception. However, as previously mentioned, people can only partially access their knowledge; they are not able to fully monitor their cognitive structures (Koriat, 1995; Koriat et al., 2006). A meta-review on the relationship between factual knowledge and perceived knowledge in the field of consumer research confirmed a weak relationship between the constructs (Carlson et al., 2009). In terms of political issues, a recent study also reported a weak correlation between actual knowledge and perceived knowledge (Schneider et al., 2016).

Several explanations can clarify this finding. For broad, complex, or unspecific knowledge domains, people can hardly consider every fact they have stored in their memory when estimating their level of knowledge (Ackerman et al., 2002); instead, people tend to rely on heuristics, such as ease of recall or familiarity with the topic (Koriat, 1995). This causes discrepancies between actual and perceived knowledge, resulting in a general tendency of people overestimating their own knowledge (Alter et al., 2010; Dunning et al., 2004; Kruger and Dunning, 1999). In other words, “most people feel they understand the world with far greater detail, coherence, and depth than they really do” (Rozenblit and Keil, 2002: 522). Rozenblit and Keil (2002) have labeled this phenomenon illusion of explanatory depth, and other scholars call the tendency for overestimation illusion of knowing (Glenberg et al., 1982; Park, 2001). While this can be considered a general human tendency, findings have also indicated that people with a low level of objective knowledge show a higher level of overestimation (Fernbach et al., 2013; Kruger and Dunning, 1999): As pointed out by Kruger and Dunning (1999), people who lack objective knowledge in a certain domain also lack the metacognitive skills to accurately assess their knowledge. On the other hand, a higher level of knowledge also improves the ability to adequately assess knowledge.

Comparison-based judgment

Previous research has mainly focused on the self-judgments described earlier to explain individuals’ levels of perceived knowledge, but social comparison with others can also be a relevant factor in this process. Social comparison refers to the process of thinking about the self in relation to others; it is motivated by the need to hold stable and accurate self-appraisals (Festinger, 1954; Wood, 1996). Typically, social comparisons are divided into upward and downward comparisons (Wills, 1981; Wood, 1996). Upward comparisons occur when people compare themselves with others whom they perceive as superior. These comparisons can cause negative feelings, such as poor self-evaluation or perceived inadequacy (de Vries et al., 2018), but they can also have positive motivational outcomes, such as by increasing inspiration (Meier and Schäfer, 2018). Downward comparisons are comparisons with others who are perceived as inferior (Wills, 1981; Wood, 1996).

Social comparisons can affect knowledge perceptions, and some studies have measured knowledge perception in relation to others (e.g. Flynn and Goldsmith, 1999; Mattheiß et al., 2013). Radecki and Jaccard (1995) have differentiated between two types of effects of social comparisons on perceived knowledge. On the one hand, assimilation effects occur when people equate their self-perception with the perceptions of others when judging their own knowledge. This process is especially likely to happen when individuals compare themselves to close friends or family members. On the other hand, perceived knowledge can be the result of contrast effects—that is, if individuals perceive others to be very knowledgeable, they perceive themselves as less knowledgeable than they usually would, but if individuals perceive others to be less knowledgeable than themselves, their self-perceptions are elevated (Radecki and Jaccard, 1995). This type of effect is especially likely when people compare themselves to others they do not know or whom they perceive as dissimilar (Mussweiler, 2001).

An increasing number of studies have indicated that social comparison also takes place online, especially on SNS (de Vries et al., 2018; Vogel et al., 2014). Here, comments of other users could affect one’s self-evaluations, especially when it comes to rating one’s own knowledge. Neubaum and Krämer (2016) described user comments as a “window to the public” (p. 503) that provides information about the states and processes in society. The researchers showed that opinions expressed in comments affect inferences about public opinion (see also Friemel and Dötsch, 2015; Lee et al., 2021). Using an anchoring heuristic (Epley and Gilovich, 2006), readers might also deduce the public knowledge about an issue from online discussions and then evaluate their own knowledge against this reference level.

Summary and hypotheses

The current study investigates the effects of facts and evidence in user comments (in the following: evidence-based comments) as opposed to comments based solely on opinion. It is important to note that we focus on verifiable, truthful, and scientifically indisputable facts and evidence, not on “alternative facts,” which only appear to be factual, and which can also often be found in user comments (Slavtcheva-Petkova, 2016). One beneficial outcome of reading facts and evidence-based comments concerns actual learning: Being exposed to facts in comments increases the chance that readers learn these facts, resulting in increased factual knowledge. In contrast, opinion-based comments largely lack the potential for learning facts; therefore, readers of such comments have a lower chance to acquire knowledge.

As described by Nelson and Narens (1990), perceived knowledge develops through processes of monitoring. One source for monitoring one’s knowledge is based on self-centered judgments on the object-level. That means people base their knowledge perception to a certain degree on what they actually remember about a topic. Other self-centered roots for knowledge perception are heuristics such as ease of recall and familiarity with a topic (Koriat, 1995). They, too, are shaped by objective knowledge. If people know a lot about a topic, it is easy for them to quickly remember facts, and they are also familiar with the topic due to their cognitive engagement with it. This explains why studies could show a correlation between factual knowledge and perceived knowledge for a wide range of topics (Carlson et al., 2009; Schneider et al., 2016).

Even if previous studies did not investigate the direction of the relationship between actual and perceived knowledge, it can be assumed that factual knowledge shapes perceived knowledge and not the other way around. Factual knowledge refers to information stored in long-term memory. It changes if learning or forgetting occurs (Baddeley et al., 2015). This involves (the lack of) engagement with information. Perceived knowledge cannot by itself make a difference for actual knowledge. For example, merely believing that they know a lot about a certain topic does not increase people’s scores in a knowledge test. This rules out perceived knowledge as a predictor for actual knowledge. Perceived knowledge, on the other hand, is determined by self-centered and comparison-based judgments. As described by Nelson and Narens (1990), the formation of perceived knowledge as a metacognitive judgment involves the retrieval of actual information, which means that actual knowledge directly affects perceived knowledge. Also, studies found that factual knowledge shapes heuristics, such as ease of recall and topic familiarity, that determine knowledge perception (Koriat, 1995). Consequently, a change in factual knowledge as a self-centered root for knowledge perception should also cause a change in what we think we know. Since we assume that evidence-based comments change factual knowledge, this should in turn affect perceived knowledge.

User comments provide insights into the “pulse” of a society (Neubaum and Krämer, 2016) and might thus be viewed as a relevant source of social comparison. It can be assumed that being exposed to user comments initiates cognitive processes in readers that have been described as upward and downward comparisons (Wills, 1981; Wood, 1996) and as contrast effects (Radecki and Jaccard, 1995). It is likely that people observing an evidence-based online discussion perceive that others are well-informed and that the overall knowledge level in society is high. As described in work on contrast effects (Radecki and Jaccard, 1995), people may use this high level as a baseline for their self-perception and conclude that their own knowledge is lower. This harsher self-perception would not occur if people only saw opinion-based comments without facts and evidence.

Self-perception relative to others is also relevant to overall self-perception (Wills, 1981). Individuals who feel inferior to others may also feel generally less knowledgeable, while positive outcomes of social comparisons could lead to a more positive self-evaluation in terms of knowledge perception.

Combining these effects leads to the following hypothesis:

In addition, metacognitive judgments that include the assessment of knowledge can stimulate processes of control (Nelson and Narens, 1990), meaning that they are related to intentions to take actions. A study by Schneider et al. (2016) showed that the level of perceived knowledge influenced readers’ willingness to participate in a discussion.

Taken together, we hypothesize:

At the same time, evidence-based comments could also decrease participation intention through less favorable social comparisons, which impact perceived knowledge and participation intention.

Finally, we want to investigate the overall effect of comments providing facts and evidence on participation intention.

Moreover, to evaluate the impact of different kinds of comments, it is also important to address the overall effect of evidence- versus opinion-based comments on participation intention.

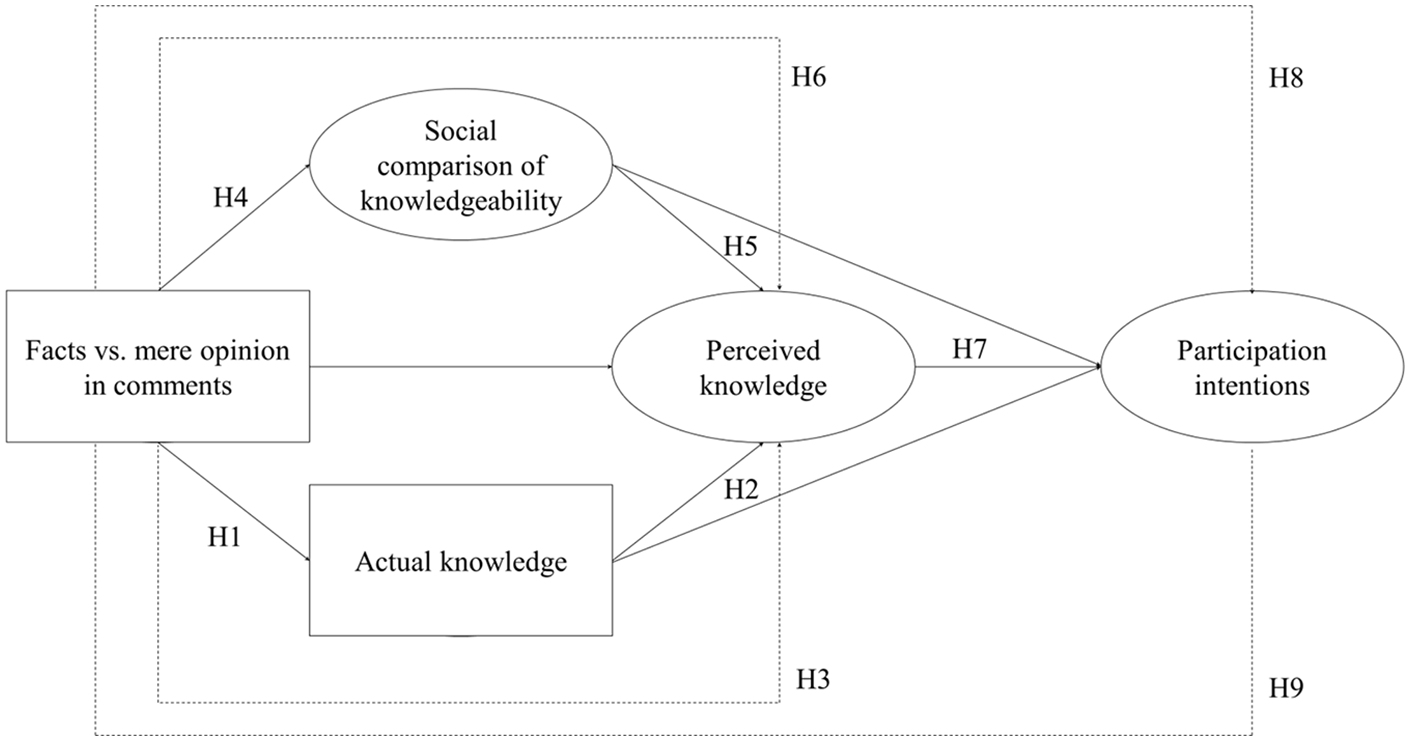

The complete theoretical model is depicted in Figure 1.

Figure 1. Summary of the hypothesized relationships.

Study 1

Method

Participants and procedure

Participants were recruited via the noncommercial online access panel SoSciPanel (Leiner, 2016) between October and November 2017. Members of the SoSciPanel are residents of Germany, Switzerland, or Austria, although the panel is dominated by German participants (86% of all panel members, www.soscipanel.de), making it more suitable for inferences about Germans than for inferences about residents of the other countries. Nevertheless, the three neighboring countries are all German-speaking and have overlapping media systems; therefore, the different nationalities of the participants should not affect the findings of our research.

Upon clicking the link to the survey, participants were randomly assigned to one of two groups. The first group received a snippet of a news feed containing a news post with user comments making statements that justify their point of view without providing facts or external sources. The second group received the same stimulus with user comments making the same statements but justifying them with facts and evidence. Thus, the level of factuality was varied between the groups (2 × 1 design). Apart from the stimulus, the questionnaire was identical for both groups. In total, n = 372 participants completed the survey (age: M = 41.12, SD = 15.22; 53.51% female; 77% high school diploma or above).

Stimulus

To prevent floor and ceiling effects for perceived and factual knowledge, the topic for the stimulus material was chosen so as to be somewhat familiar to the participants but not at the center of their current attention. Consequently, this study used a topic that was discussed in the media, but it focused on a facet that was not widely presented in the news coverage. In 2015, it became public that the German brand Volkswagen had manipulated its diesel engines, which led to the so-called Dieselgate scandal. In autumn 2017, when the data were collected for the study, questions about compensation for the customers of Volkswagen and affiliated brands dominated the media coverage on Dieselgate; this study instead focused on health risks through diesel pollution.

We used a news post that looked very similar to a common Facebook news post (the stimulus material can be obtained from: https://osf.io/zyq4j/?view_only=c8f641ec0a7d4bfaa9e535e262b6998a). Spiegel Online, a popular German news site, was chosen as the source of this post. An online discussion consisting of 10 user comments was added below the post. The first group received comments containing commenters’ personal opinions about the health risks caused by diesel exhaust gases. More precisely, these comments contained their personal perception of the situation, personal experiences, and/or their feelings toward the situation (e.g. “I think something should be done about that. After all, it is about our health. I’m scared to become sick because of these exhaust gases. This is really dangerous”). The second group received statements with very similar wording, but the argumentation also contained facts and evidence from external sources instead of relying exclusively on personal perception (e.g. “Something should be done about that. The Federal Environment Agency confirmed that exhaust gases increase the number of breathing and eyesight complaints, heart and circulatory diseases as well as headaches”).

The online discussion was balanced, meaning that some comments argued that there is an actual health risk while others argued that health threats caused by diesel exhaust gases are overestimated. Moreover, the comments of the two experimental groups were about the same length (fact-based comments: 337 words; opinion-based comments: 314 words).

To ensure that the manipulation was successful, participants were asked after the stimulus presentation about their perception of the user comments. For this purpose, a semantic differential with a 7-point scale (1 = “contained many facts” to 7 = “contained few facts”) was used. As intended, the first group, which was exposed to opinions without facts and evidence, perceived the user comments as containing fewer facts than the second group did (Group 1: M = 5.75, SD = 1.34; Group 2: M = 4.13, SD = 1.42; t(370) = 11.34, p < .001). In terms of perceived balance of the online discussion (“The comments all contained a similar point of view”), we could not find any differences between the fact- and opinion-based conditions (Group 1: M = 2.89, SD = 1.64; Group 2: M = 3.03, SD = 1.60; t(370) = −0.802, p = .42).
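The manipulation check can be recomputed approximately from the reported summary statistics. The following is a minimal sketch, not the authors' analysis script: it assumes a Student's t-test with pooled variance and an even split of 186 participants per group, which is a hypothetical value because only the total sample size is reported. The function name is likewise illustrative.

```python
import math


def pooled_t_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Student's t for two independent groups, computed from summary statistics."""
    df = n1 + n2 - 2
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    standard_error = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (m1 - m2) / standard_error, df


# Means and SDs as reported; the 186/186 split is an assumption.
t_value, df = pooled_t_from_summary(5.75, 1.34, 186, 4.13, 1.42, 186)
print(f"t({df}) = {t_value:.2f}")
```

Under these assumptions the statistic comes out near 11.3, close to the reported value; the small difference is expected because the actual group sizes and unrounded means are not known.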

Measures

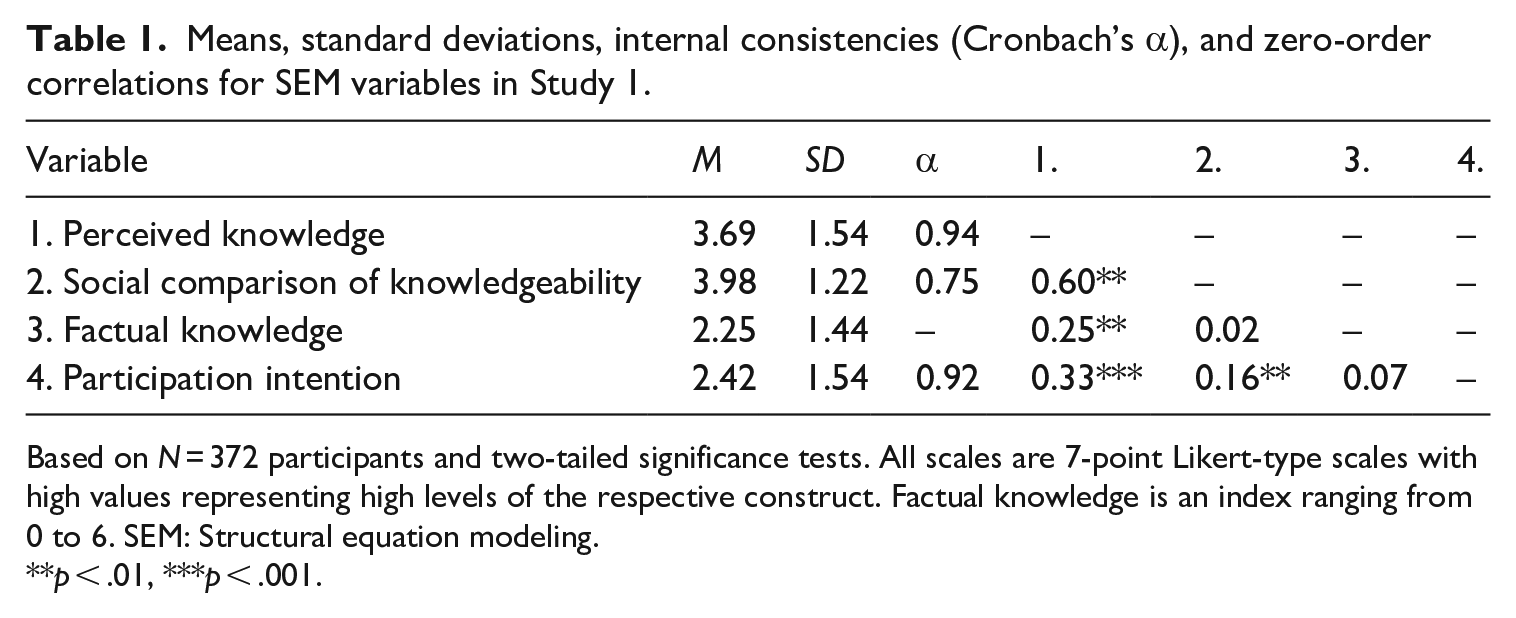

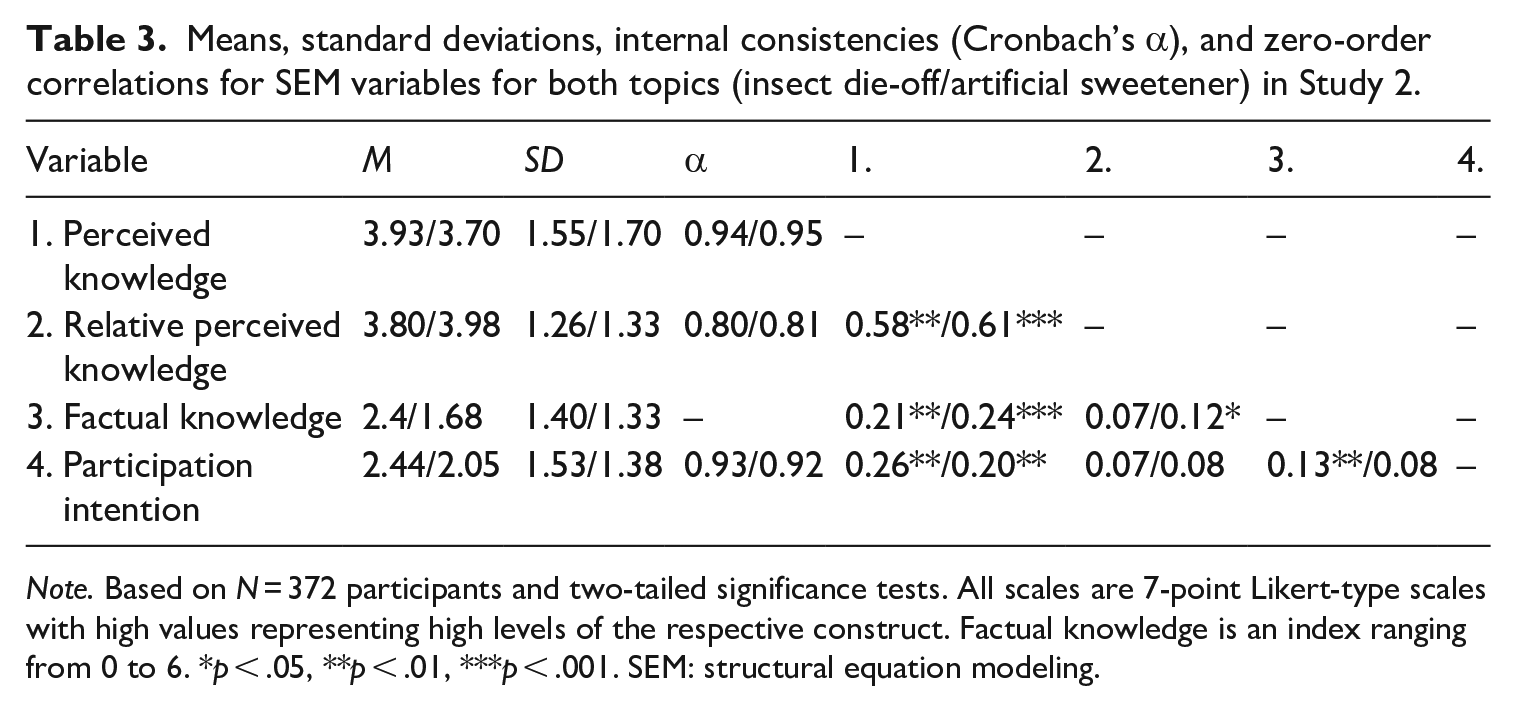

Table 1 includes the zero-order correlations for all constructs that are described in the following paragraphs.

Table 1. Means, standard deviations, internal consistencies (Cronbach’s α), and zero-order correlations for SEM variables in Study 1.

Based on N = 372 participants and two-tailed significance tests. All scales are 7-point Likert-type scales with high values representing high levels of the respective construct. Factual knowledge is an index ranging from 0 to 6. SEM: Structural equation modeling.

** p < .01, *** p < .001.

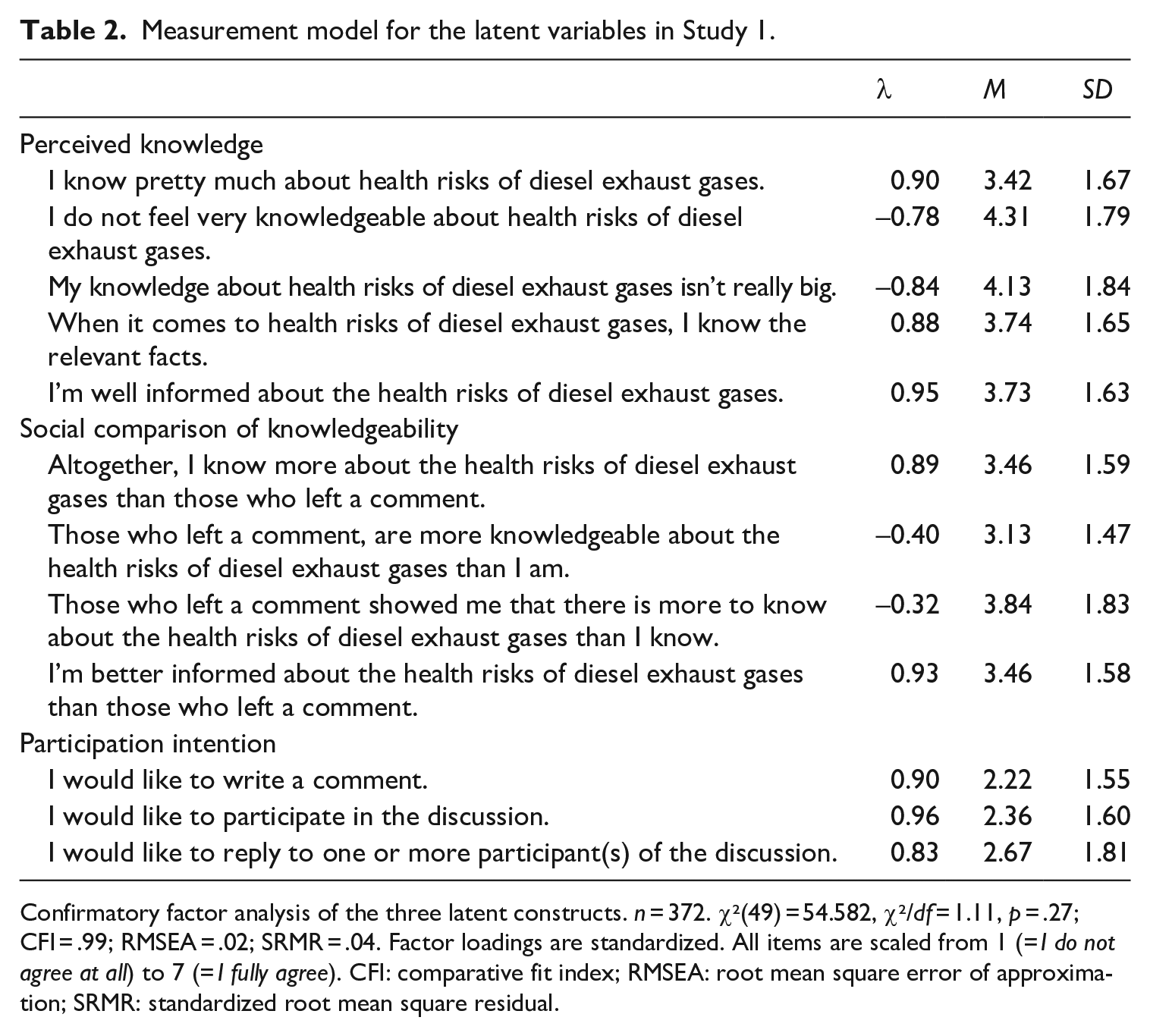

Perceived knowledge was measured using a scale developed by Flynn and Goldsmith (1999) in the field of consumer research. Specifically, the current study used three of the five items, which could be easily adapted for our purposes. Moreover, two additional items were created that asked how well-informed participants felt and whether they thought that they knew many facts about the health risks caused by diesel exhaust gases (for the exact wording of all items, see Table 2). The items, like all items in the questionnaire, were answered on a 7-point Likert-type scale, ranging from 1 = “I do not agree” to 7 = “I fully agree.” The scale showed a good internal consistency (Cronbach’s α = .94; M = 3.69; SD = 1.54).

Table 2. Measurement model for the latent variables in Study 1.

Confirmatory factor analysis of the three latent constructs. n = 372. χ²(49) = 54.582, χ²/df = 1.11, p = .27; CFI = .99; RMSEA = .02; SRMR = .04. Factor loadings are standardized. All items are scaled from 1 (= I do not agree at all) to 7 (= I fully agree). CFI: comparative fit index; RMSEA: root mean square error of approximation; SRMR: standardized root mean square residual.

To measure social comparison of knowledgeability, similar items to those for perceived knowledge were used. This time, all items asked about perceived knowledge in relation to the commenters. We adopted three items of the scale by Flynn and Goldsmith (1999) and added comparisons (upward and downward) with those who left a comment. Another item that was created for the purpose of the study stated: “Those who left a comment showed me that there is more to know about diesel exhaust gases than I know.” The internal consistency of the four items was acceptable (Cronbach’s α = .75; M = 3.98; SD = 1.22).

To measure factual knowledge, we developed six statements on facts that concern the topic of diesel exhaust gases and that were either right or wrong. Examples of these statements included knowledge on cities that are particularly affected or EU regulations on exhaust emission limits (all statements can be obtained from: https://osf.io/zyq4j/?view_only=c8f641ec0a7d4bfaa9e535e262b6998a). Participants received one point for correctly identifying a statement as right or wrong and zero points if they got it wrong or indicated that they did not know. For the analyses, all items were added up to create a factual knowledge index that ranged from 0 (all questions wrong) to 6 (all questions right). On average, the participants answered 2.25 questions correctly (SD = 1.44).
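The scoring rule for the factual knowledge index can be sketched as follows. The answer key and the respondent shown here are hypothetical and only illustrate the rule described above (one point per correct judgment, zero points for a wrong answer or a "don't know" response, coded here as `None`).

```python
def knowledge_index(answers, answer_key):
    """Count correct right/wrong judgments; wrong answers and None ("don't know") score 0."""
    if len(answers) != len(answer_key):
        raise ValueError("answers and answer_key must have the same length")
    return sum(
        1
        for given, correct in zip(answers, answer_key)
        if given is not None and given == correct
    )


# Hypothetical answer key for six statements and one hypothetical respondent.
answer_key = [True, False, True, True, False, False]
respondent = [True, True, None, True, False, None]
print(knowledge_index(respondent, answer_key))  # 3
```

Treating "don't know" as zero rather than as missing keeps the index on the full 0 to 6 range for every participant.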

For participation intentions, we created three items (example: “I would like to write a comment”). They were formulated as statements that the participants could agree or disagree with, using a 7-point Likert-type scale ranging from 1 = “totally disagree” to 7 = “completely agree.” Behavioral intention either referred to leaving a comment in general below the news post or to specifically participating in the discussions of the other users. The scale showed a very good internal consistency (Cronbach’s α = .92; M = 2.42; SD = 1.54).
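The internal consistencies reported for the scales are Cronbach's α values. As an illustration of how this coefficient is obtained from item-level responses, the sketch below uses the standard formula, α = k/(k − 1) · (1 − Σ item variances / variance of the sum score). The response data are invented for demonstration and are not taken from the study.

```python
from statistics import variance


def cronbach_alpha(items):
    """items: one list of scores per item, with respondents in the same order in every list."""
    k = len(items)
    if k < 2:
        raise ValueError("need at least two items")
    # Sum score per respondent across all items.
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Hypothetical responses of five participants to three 7-point items.
items = [
    [2, 4, 5, 6, 3],
    [1, 4, 6, 6, 2],
    [2, 5, 5, 7, 3],
]
print(round(cronbach_alpha(items), 2))
```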

Results

Data analysis

All hypotheses were tested with structural equation modeling (SEM) using the R package lavaan (version 0.5-23.1097; Rosseel, 2012) and maximum likelihood estimation. In the first step, a confirmatory factor analysis was calculated for the three latent variables in order to test the measurement model. Overall, the model showed a good fit based on absolute and incremental fit indices, χ²(49) = 54.582, χ²/df = 1.11, p = .27; comparative fit index (CFI) = .99; root mean square error of approximation (RMSEA) = .02; standardized root mean square residual (SRMR) = .04. In the second step, the manifest variable factual knowledge and the structural paths between the variables were added to the model. All paths were controlled for age, gender, and education. The resulting SEM also yielded an acceptable fit, χ²(95) = 166.399, χ²/df = 1.75, p < .001; CFI = .98; RMSEA = .05; SRMR = .04. All indirect paths were tested using a 95% bias-corrected confidence interval (CI) with 1000 bootstrap subsamples.
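Two of the reported fit statistics can be checked by hand from the χ² values, the degrees of freedom, and the sample size. The sketch below assumes the common point-estimate formula RMSEA = sqrt(max(χ² − df, 0) / (df · (N − 1))); some software uses N instead of N − 1, so the third decimal may differ slightly from the output the authors obtained. The function names are illustrative.

```python
import math


def chi2_df_ratio(chi2, df):
    """Normed chi-square: model chi-square divided by its degrees of freedom."""
    return chi2 / df


def rmsea(chi2, df, n):
    """RMSEA point estimate; the N - 1 denominator is an assumption (some programs use N)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# Chi-square values and degrees of freedom as reported for Study 1 (N = 372).
for label, chi2, df in [("CFA", 54.582, 49), ("SEM", 166.399, 95)]:
    print(label, round(chi2_df_ratio(chi2, df), 2), round(rmsea(chi2, df, 372), 3))
```

With these inputs the ratios are about 1.11 and 1.75, and the RMSEA estimates are roughly .018 and .045, in line with the rounded values reported in the text.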

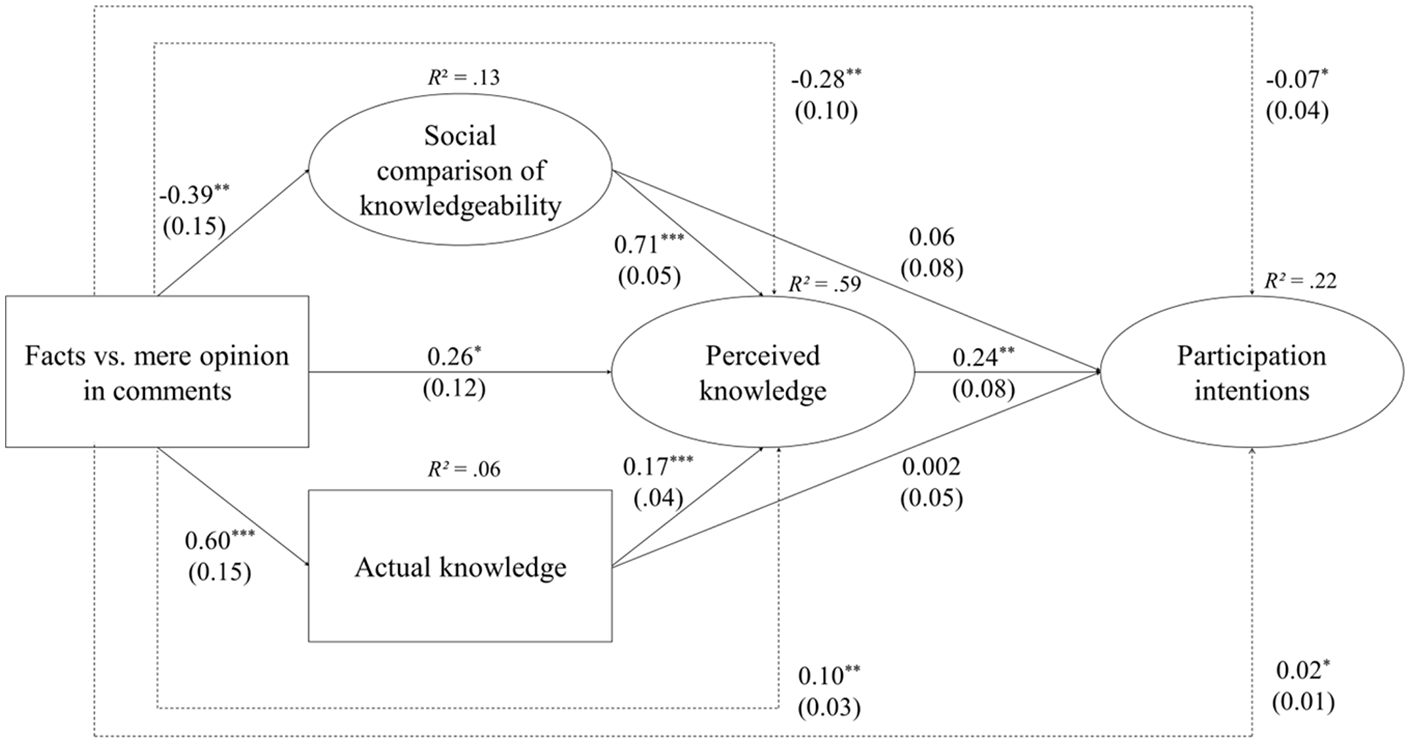

Testing of hypotheses and RQs

With regard to H1, the results depicted in Figure 2 reveal a positive relationship between experimental manipulation and factual knowledge. As posited in H1, readers of evidence-based comments scored higher in the knowledge quiz than did the readers of comments that were based only on opinion. In addition, factual knowledge was positively related to perceived knowledge, as predicted by H2. The results also reveal a positive indirect effect of evidence-based comments mediated through factual knowledge, supporting H3. In addition, the results show that evidence-based comments lowered the outcome of social comparison of knowledgeability, as predicted by H4. Less favorable social comparison decreased perceived knowledge, which is in line with H5. The results for the indirect effect indicate that evidence-based comments had a negative indirect effect on perceived knowledge mediated through social comparison, supporting H6. Moreover, perceived knowledge was positively related to participation intention, as predicted in H7.

Results of the structural equation model testing effects of different types of user comments on participation in Study 1

The results of the serial mediation analyses reveal that evidence-based comments had a positive effect on participation intention through factual knowledge and perceived knowledge (B = 0.02, SE = 0.01; p < .05; lower level CI [LLCI] = 0.01; upper level CI [ULCI] = 0.05), which supports H8. The indirect negative effect via social comparison and perceived knowledge was also significant, supporting H9 (B = −0.07, SE = 0.03; p < .04; LLCI = −0.16; ULCI = −0.01). To investigate RQ1, we compared the two serial mediations by inspecting their confidence intervals. Since the intervals did not overlap, it can be assumed that the effects differ significantly. The negative indirect effect of evidence-based comments through social comparison and perceived knowledge was found to be stronger than the positive serial mediation via factual knowledge and perceived knowledge on participation intention. However, the total effect that was mentioned in RQ2 was not significant (B = 0.10, SE = 0.14; p = .40; LLCI = −0.20; ULCI = 0.35).
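
The interval comparison used for RQ1 can be expressed as a short Python sketch. The function name is a hypothetical helper; the interval bounds are the Study 1 values reported above.

```python
from typing import Tuple

Interval = Tuple[float, float]  # (lower bound, upper bound)


def intervals_overlap(a: Interval, b: Interval) -> bool:
    """True if two closed intervals share at least one point."""
    a_lo, a_hi = a
    b_lo, b_hi = b
    return a_lo <= b_hi and b_lo <= a_hi


# 95% bias-corrected bootstrap CIs reported for Study 1.
ci_via_factual_knowledge = (0.01, 0.05)     # H8, positive serial mediation
ci_via_social_comparison = (-0.16, -0.01)   # H9, negative serial mediation

overlap = intervals_overlap(ci_via_factual_knowledge, ci_via_social_comparison)
```

Here `overlap` is `False`, which corresponds to the conclusion that the two indirect effects differ.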

Discussion

The results of Study 1 corroborate our assumption that facts and evidence in online discussions ambivalently affect participation intention—and that this ambivalence can be explained through the comments’ differential indirect effects on perceived knowledge. On the one hand, evidence-based comments increase participation intention through learning processes, which in turn elevate perceived knowledge. On the other hand, evidence-based comments lead to negative outcomes of social comparison, which decreases perceived knowledge and results in lower intention to participate. These findings indicate that user comments serve as an important anchor for judging the level of knowledge in society, which in turn affects how people perceive their own knowledge and how they behave in online discussions. In short, the positive and negative effects of evidence-based user comments cancel each other out. Taken together, these results confirm and explain the findings of previous content analyses that facts and evidence do not necessarily increase participation rates (Weber, 2014; Ziegele et al., 2014). To test the robustness of the observed patterns, we conducted a second experiment that included two different news topics.

Study 2

Method

Participants and procedure

For the second experiment, we also relied on a sample of Internet users in Germany, Switzerland, and Austria. The sample was recruited between March 1 and March 10, 2020. As in Study 1, the participants were recruited via the noncommercial online access panel SoSciPanel. The procedure of the experiment was similar to Study 1, but we included different news topics as a second factor. Replicating the experiment for multiple topics allows us to determine whether the mechanisms we investigate exist only for certain topics or appear independently of the topic being discussed. In this way, choosing two topics increases the generalizability of our findings. In the experiment, participants were divided into four groups that differed by comment type (fact-based vs opinion-based) and by the topic of the stimulus (2 × 2 design). Apart from the stimulus, the questionnaire was identical for all groups. In total, n = 854 participants completed the survey (age: M = 44.68, SD = 14.70; 53.51% female; 88% with high school diploma or above).

Stimulus

To test the generalizability of our findings, we chose topics that differed from the first study but are still comparable regarding the level of media attention they receive. The first topic covered the unusual decline in the number and variety of insects in nature. This topic received some media attention in spring 2019, when scientists reported that about 40% of insect species are critically endangered, which has significant consequences for the ecosystem. The second topic was the health risks of artificial sweeteners. Since artificial sweeteners are a common ingredient in various foods, people encounter them on a daily basis. However, the debate about the potential health risks of consuming artificial sweeteners is still ongoing. Since scientific results do not unanimously support the assumption of health risks and some emphasize the advantages of sweeteners compared to sugar, artificial sweeteners are still allowed to be used in foods in the EU and the United States.

For both topics, we created two new stimulus versions that varied with regard to the existence of facts and evidence in the comments. The design of the stimulus was kept constant across studies: That is, the stimuli consisted of a rather neutral headline followed by 10 user comments that either did or did not contain facts (the stimuli are available at: https://osf.io/zyq4j/?view_only=c8f641ec0a7d4bfaa9e535e262b6998a). The comment sections were about the same length (insect die-off: lengthfact = 364 words, lengthopinion = 287 words; artificial sweetener: lengthfact = 320 words, lengthopinion = 277 words) although the fact-based comments were slightly longer since the provision of facts and evidence took more space compared to just providing opinions.

We again conducted a manipulation check to investigate whether participants noticed a difference between the more fact-based and the more opinion-based comments (7-point Likert-type scale, from 1 = “contained many facts” to 7 = “contained few facts”). For both topics, we found that the manipulation was successful, insect die-off: MGroup1 = 4.95, SD = 1.57; MGroup2 = 3.55, SD = 1.57; t(424) = −9.52; p < .001; artificial sweetener: MGroup1 = 5.54, SD = 1.42; MGroup2 = 4.28, SD = 1.45; t(426) = −9.08; p < .001. In addition, we did not find any differences in the perceived balance of the online discussions between the fact- and opinion-based conditions for either topic, “The comments all contained a similar point of view,” insect die-off: MGroup1 = 4.22, SD = 1.58; MGroup2 = 4.09, SD = 1.64; t(424) = 0.875; p = .38; artificial sweetener: MGroup1 = 2.91, SD = 1.48; MGroup2 = 3.18, SD = 1.58; t(426) = −1.848; p = .07.

Measures

The main constructs of the experiment were measured in exactly the same way as in the first study. We used identical scales for perceived knowledge, social comparison of knowledgeability, and participation intention. Moreover, we again developed knowledge tests for both topics based on the information provided in the news feeds (available at: https://osf.io/zyq4j/?view_only=c8f641ec0a7d4bfaa9e535e262b6998a). Means, standard deviations, values for internal consistency, and zero-order correlations are displayed in Table 3.

Means, standard deviations, internal consistencies (Cronbach’s α), and zero-order correlations for SEM variables for both topics (insect die-off/artificial sweetener) in Study 2.

Note. Based on N = 372 participants and two-tailed significance tests. All scales are 7-point Likert-type scales with high values representing high levels of the respective construct. Factual knowledge is an index ranging from 0 to 6. *p < .05, **p < .01, ***p < .001. SEM: structural equation modeling.

Results

Data analysis

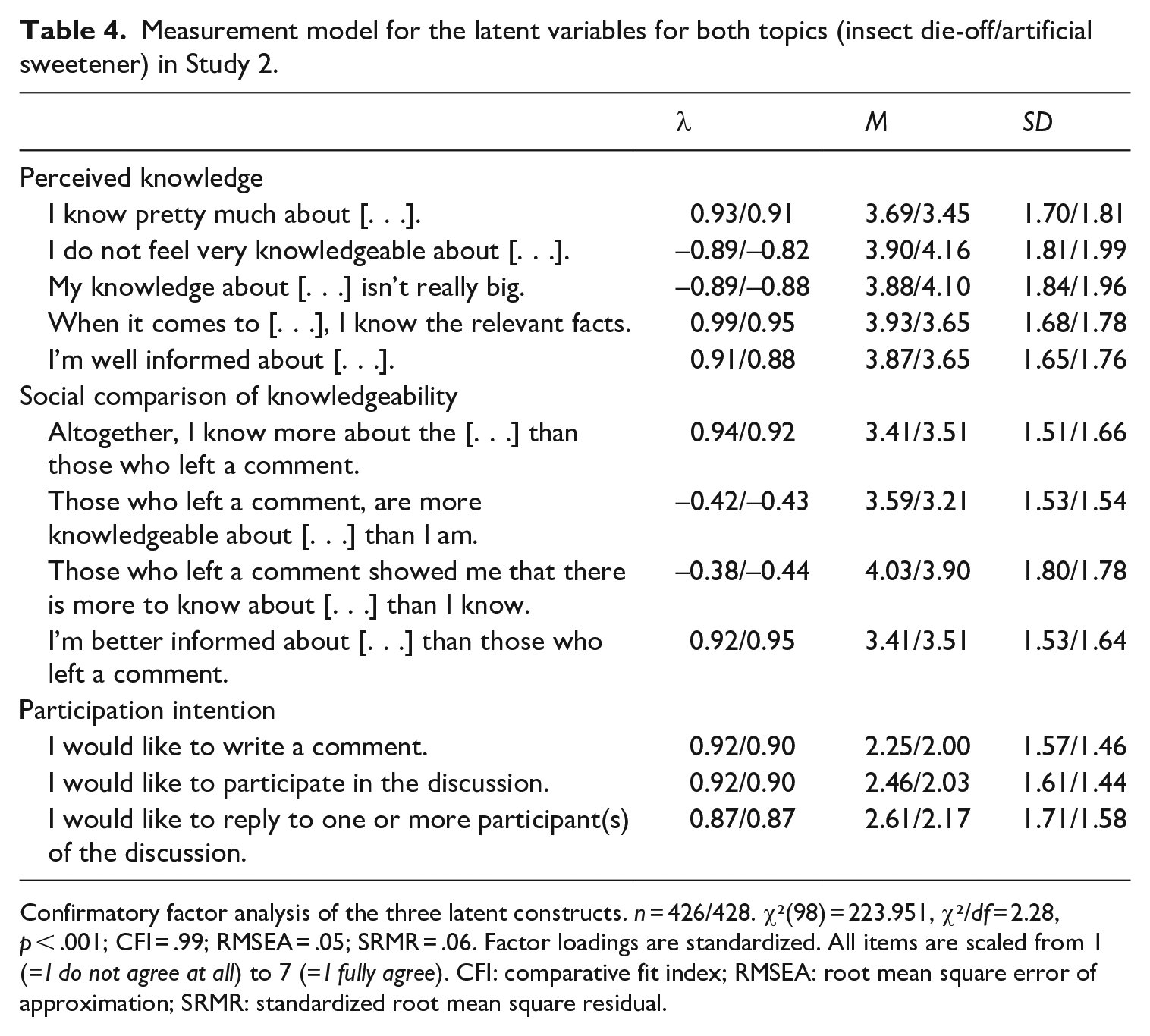

Since Study 2 features two topics with identical designs, we calculated a multigroup SEM based on the model calculated for Study 1, with the topic as a grouping variable. Before the actual analysis of the final model, we investigated possible measurement invariances between the topics. Without any further restrictions, the overall measurement model for both topics showed an acceptable fit, χ²(98) = 223.951, χ²/df = 2.28, p < .001; CFI = .99; RMSEA = .05; SRMR = .06; see also Table 4. When factor loadings were set to be equal, the model fit remained acceptable, indicating metric invariance. A comparison between the models with and without fixed loadings showed no significant variation of fit (p = .35). In the next step, the intercepts were also set to be equal between groups, which still yielded an acceptable fit. Despite a small yet significant increase in χ² (p < .001), scalar invariance can still be assumed, since the overall model fit continues to be acceptable.

Measurement model for the latent variables for both topics (insect die-off/artificial sweetener) in Study 2.

Confirmatory factor analysis of the three latent constructs. n = 426/428. χ²(98) = 223.951, χ²/df = 2.28, p < .001; CFI = .99; RMSEA = .05; SRMR = .06. Factor loadings are standardized. All items are scaled from 1 (= I do not agree at all) to 7 (= I fully agree). CFI: comparative fit index; RMSEA: root mean square error of approximation; SRMR: standardized root mean square residual.

Since both metric and scalar invariance could be assumed, structural paths were introduced to the model. Here, too, all paths were controlled for age, gender, and education. The resulting multigroup SEM also yielded an acceptable fit, χ²(243) = 494.646, χ²/df = 2.02, p < .001; CFI = .97; RMSEA = .05; SRMR = .06. The indirect paths were tested using 95% bias-corrected CIs with 1000 bootstrap subsamples.

Testing of hypotheses and RQs

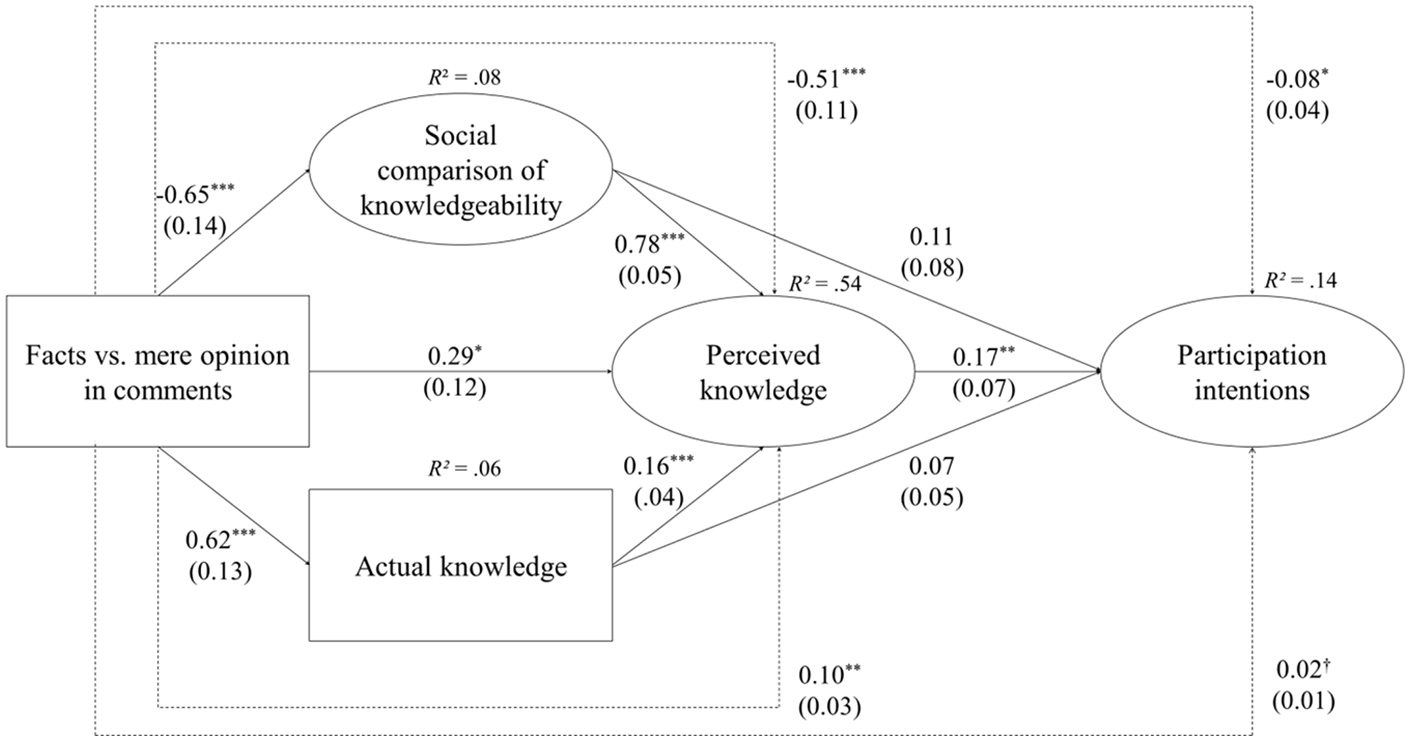

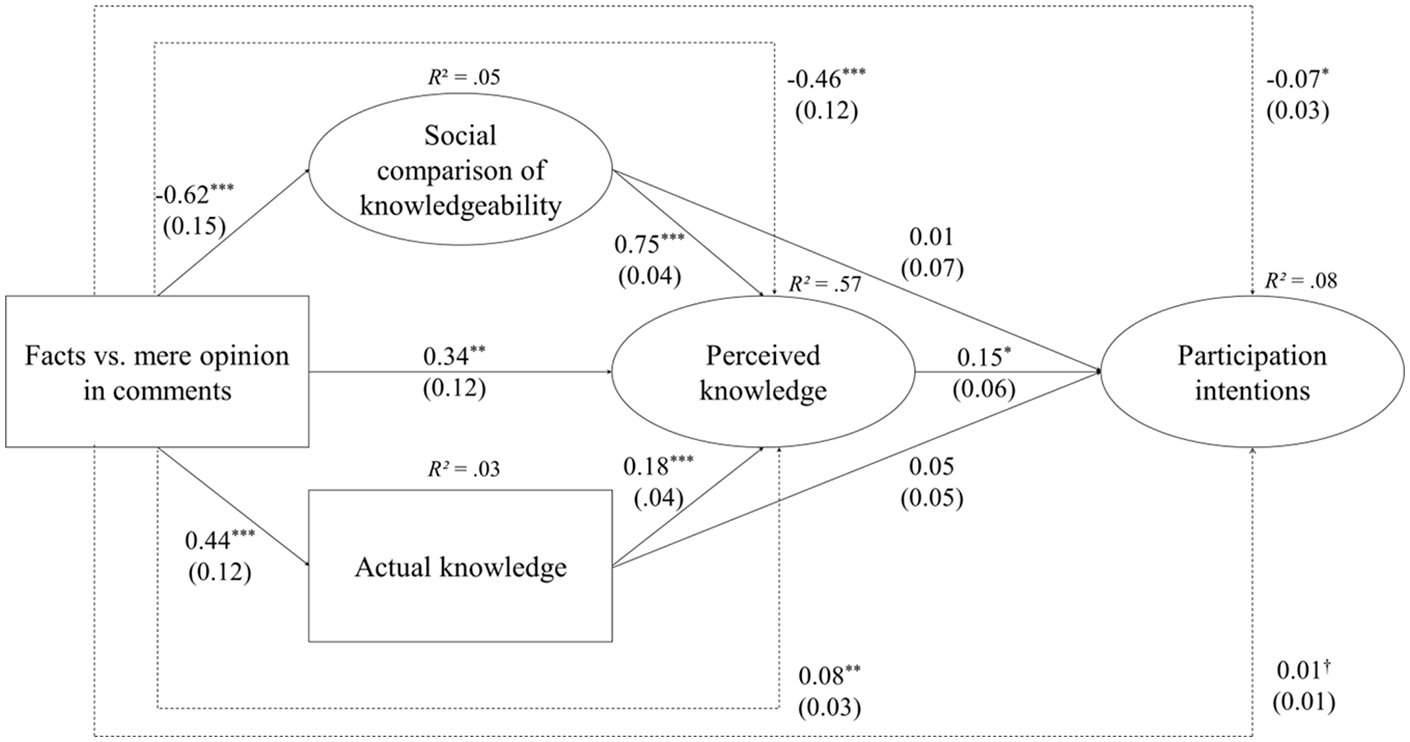

The results depicted in Figures 3 and 4 show a positive effect of evidence-based comments on factual knowledge, which is in line with H1. As predicted in H2, factual knowledge was positively related to perceived knowledge. The results also show a positive indirect effect of evidence-based comments mediated by factual knowledge, supporting H3. Again, the results also show a negative effect of factuality on social comparison, as stated in H4. A lower outcome of social comparison was related to a lower level of perceived knowledge, as predicted in H5. Findings for the indirect effect indicate that the level of factuality in comments had a negative indirect effect on perceived knowledge through social comparison, which is in line with H6. Moreover, as predicted in H7, the findings show a positive relationship between perceived knowledge and participation intentions.

Results of the structural equation model testing effects of different types of user comments on participation intentions in Study 2.

Results of the structural equation model testing effects of different types of user comments on participation intentions in Study 2.

In terms of the serial mediation analyses, we postulated a positive effect of evidence-based comments on participation intention through factual knowledge and perceived knowledge. The findings point to a serial mediation effect for both topics (insect die-off: B = 0.02, SE = 0.01; p = .07; LLCI = −0.00; ULCI = 0.03; artificial sweetener: B = 0.01, SE = 0.01; p = .08; LLCI = −0.02; ULCI = 0.02); however, these effects reach only a marginal level of significance. H8 is therefore not supported, although the descriptive findings point in the hypothesized direction. The serial mediation via social comparison and perceived knowledge reaches significance for both topics (insect die-off: B = −0.08, SE = 0.04; p < .05; LLCI = −0.18; ULCI = −0.01; artificial sweetener: B = −0.07, SE = 0.03; p < .05; LLCI = −0.13; ULCI = −0.03), and so H9 is supported. Because the 95% CIs of the two serial mediations do not overlap for either topic, it can be concluded that the effect via social comparison was significantly stronger than the effect via factual knowledge through perceived knowledge (RQ1). For RQ2, the findings show that, overall, there was no significant total effect of factuality in comments on participation intention (insect die-off: B = 0.02, SE = 0.14; p = .88; LLCI = −0.25; ULCI = 0.32; artificial sweetener: B = −0.01, SE = 0.13; p = .95; LLCI = −0.24; ULCI = 0.28).
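
The decisions on H8 and H9 follow the usual reading of bootstrap confidence intervals: an indirect effect is treated as significant when its 95% CI excludes zero. The sketch below applies that rule to the serial mediation intervals reported for Study 2; the helper name and the dictionary layout are illustrative assumptions, and the bounds are the rounded values from the text.

```python
from typing import Dict, Tuple

Interval = Tuple[float, float]  # (LLCI, ULCI)


def excludes_zero(ci: Interval) -> bool:
    """True if the interval lies entirely above or entirely below zero."""
    lower, upper = ci
    return lower > 0 or upper < 0


# Rounded 95% bias-corrected CIs of the serial mediations in Study 2.
study2_cis: Dict[str, Dict[str, Interval]] = {
    "insect die-off": {
        "H8 via factual knowledge": (-0.00, 0.03),
        "H9 via social comparison": (-0.18, -0.01),
    },
    "artificial sweetener": {
        "H8 via factual knowledge": (-0.02, 0.02),
        "H9 via social comparison": (-0.13, -0.03),
    },
}

# Map every (topic, path) pair to whether its CI excludes zero.
supported = {
    (topic, path): excludes_zero(ci)
    for topic, paths in study2_cis.items()
    for path, ci in paths.items()
}
```

Under this rule, the H9 intervals exclude zero for both topics, whereas the H8 intervals do not, in line with the conclusions stated above.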

Discussion

The present research aimed to investigate the psychological processes that could explain the effects of evidence-based comments on participation intention. In doing so, we transferred findings from cognitive psychology to the field of online deliberation research. Our motivation for this study was that research into the role of facts and evidence in comments on participation is generally scarce. Results of a survey had indicated that people would rather participate in high-quality discussions than in low-quality discussions (Engelke, 2020). Furthermore, from a deliberation theory perspective, people should favor rational and fact-based discussions over discussions that are based on simply expressing opinions. However, a content analysis could not show that more facts in comments increased participation rates (Ziegele et al., 2014).

To address this discrepancy, we decided to investigate perceived knowledge as a key predictor of discussion participation intention. Specifically, we argued that facts and evidence in user comments could affect perceived knowledge through two different psychological mechanisms. First, being exposed to an evidence-based discussion increases users’ factual knowledge, which in turn has positive effects on perceived knowledge. Second, at the same time, users perceive the degree of evidence in a discussion as a point of reference for assessing their own level of knowledge; consequently, they arrive at lower assessments of their perceived knowledge if online discussions are higher in factuality. Therefore, evidence-based comments could simultaneously promote and inhibit users’ participation intentions. The results of our two experimental studies corroborate these postulations. For three different topics, we showed that evidence-based comments decrease readers’ perceived knowledge through processes of social comparison and increase it through knowledge acquisition, which indirectly stimulates participation intention.

Our findings indicate that the two opposing indirect effects on perceived knowledge are generally topic-independent. However, the role of perceived knowledge for participation intention varied across topics. Specifically, for the topic in Study 1, intention to participate was more strongly based on perceived knowledge compared to the topics of Study 2. A relevant difference might be that the first topic was more politically charged, since the debate about pollution was strongly related to the then-relevant Dieselgate scandal, which was intensively discussed in the press and in the political sphere. The perception of knowing more about the topic might also be the result of being more personally involved in the topic, which also seems to motivate people to participate in online discussions (Weber, 2014). In contrast, insect die-off and artificial sweetener were less prominent and less politically charged topics when Study 2 was conducted. For these topics, knowledge perception did not play as decisive a role in users’ intentions to participate. Instead, users might also have based their decision on general interest, personal experience, or their opinion.

In sum, the topic of Study 1 provoked clearer patterns than the topics of Study 2. As a result, the serial mediation path through actual and perceived knowledge only reached marginal significance for the two topics in Study 2. While the indirect increase in participation intention provoked by evidence-based comments was less clear in Study 2, the indirect decrease was about as strong as in Study 1. Regardless, the overall picture still indicates that evidence-based comments simultaneously increase and decrease participation intention through perceived knowledge, although the strength of this relationship depends on the topic at hand.

Furthermore, the results of the current research contribute to our understanding of how subjective knowledge on an issue emerges. Two heuristics seem to be at work simultaneously. First, perceived knowledge profits from exposure to facts in a discussion on an issue, through the acquisition of factual knowledge. This denotes an availability heuristic, which assesses one’s own level of knowledge through the ease of information retrieval. Our finding—that is, participants who were confronted with facts in comments scored higher on a knowledge test than participants who read largely similar information in the form of opinions—indicates that comments have the potential to initiate knowledge acquisition processes by providing additional facts or evidence on a topic. Essentially, this can be seen as a positive outcome of online discussions. However, for users to become more knowledgeable through reading user comments, online discussions must consist of rational and fact-based discourse, which is rather an exception on SNS (Rowe, 2015).

The second heuristic that seems to be at work when individuals assess their perceived knowledge is social comparison. Such processes shape readers’ own knowledge assessments, which then predict participation intentions. In reality, many online discussions are of low deliberative quality (Anderson et al., 2014; Coe et al., 2014). It is possible that increasing the share of facts and evidence would not increase overall participation rates, since people would more often conclude that their level of knowledge is lower than that of other users, which lowers participation intention. However, a higher level of facts in comments would still have positive outcomes. First, it might motivate more people with a higher level of (perceived) knowledge to participate, since they would not feel inferior compared to others. This might stimulate more profound discussions among people who are well-versed in a topic. Second, a more fact-based discussion would also provide more opportunities to learn for those reading the comments. That means that even if overall participation does not increase, more fact-based online discussion can still be considered beneficial from a democratic perspective.

Comparing these two mechanisms shows that the negative indirect effect of facts and evidence in comments through social comparison and perceived knowledge is stronger than the positive effect rooted in factual knowledge. Still, since the total effect of fact- versus opinion-based comments did not significantly differ from zero in this study, it can be concluded that the stimulating and hindering effects of discussion factuality cancel each other out. This finding explains the null effect of facts in comments on user participation reported in previous works (e.g. Ziegele et al., 2014).

More generally, the present research highlights the central role of perceived knowledge for behavioral intentions. Although we found differences for the three topics under study, it can be concluded that the feeling of being knowledgeable plays an important role in the decision to participate in an online discussion. When it comes to knowledge, the results lead to the conclusion that, in terms of the actions people take, what people actually know about a topic is much less important than what they think they know. Thus far, perceived knowledge has not received much attention in media effects research. The present results show that future research on participation and other behavioral media effects should consider the mediating role of meta-knowledge.

Limitations and future research

While the samples of our experiments were well-distributed regarding age and gender, participants were predominantly highly educated; a higher level of education is likely related to a higher level of factual knowledge. Findings by Kruger and Dunning (1999) as well as Fernbach et al. (2013) have indicated that people with lower levels of knowledge have a stronger tendency to overestimate their knowledge and to be more confident about their strong opinions. This could mean that they feel superior to others when confronted with comments based on opinions. Thus, the educational level of the sample might have strengthened the indirect paths in our models. Further studies with more diverse samples in terms of education are necessary to test the generalizability of our findings.

Regarding the experimental manipulation, the current research compared the role of evidence in online discussions using two extreme cases: across topics, we constructed two discussion versions in which either all or none of the comments were evidence-based. In reality, many online discussions consist of both fact- and opinion-based comments. Thus, future studies will need to apply more detailed experimental designs that enable testing the nuanced effects of gradual differences in argument quality in a discussion on perceived knowledge. This also applies to the nature of facts in user comments. It would be interesting for future research to investigate whether comments that include “alternative facts” or disinformation disguised as facts have similar effects as the truly factual comments that we investigated.

Another limitation concerns the share of explained variance of participation intention. Although perceived knowledge and predictors of this construct turned out to be relevant to participation, it could only explain 8–22% of variance, depending on the topic. Consequently, other factors that have not been considered in this research are also important to explaining intention to participate in the comment section. For example, previous research has shown that characteristics of the news item (Weber, 2014) or news value in user comments (Ziegele et al., 2014) also play a role in the decision to contribute to an online discussion.

Finally, our study focused on effects of different kinds of user comments on participation intention by asking participants how likely they would be to leave a comment. Even if participants indicate that they would likely participate in the online discussion, we do not know what their own contribution would look like. In accordance with norms described in deliberation theory, participation should adhere to certain standards, like rationality and respectful interactions. Previous content analyses showed that users seem to align the content of their own contributions with the standards of online discussions as indicated by previous comments (Friess et al., 2021; Sukumaran et al., 2011). However, since our study did not investigate whether evidence-based or opinion-based comments would also foster a discussion that is desirable from a deliberative point of view, it is up to future research to address this question.

Conclusion

The present research provides insights into the role of cognitive processes in online deliberation and media effects in general. Our findings contribute to the understanding of how individuals arrive at an assessment of their own knowledge on an issue. Moreover, they help explain resulting behaviors—namely, why a high deliberative quality of a discussion does not necessarily affect users’ intentions to participate. This has consequences for research on discussion participation and political participation in general, as well as for political and media practice.

Our results can support researchers of political participation and other behavioral media effects in examining the role of individuals’ perceived knowledge and other meta-cognitive self-reflections as mediating factors. The results can be understood as a sign that it is always worth trying to open the “black box” of individuals’ cognitive processes to make sense of seemingly contradictory patterns of behavior that have been observed. For deliberation research in particular, our findings demonstrate how an individual-centered psychological approach can complement research that focuses on message content to more fully describe and explain phenomena of societal discourse.

Also, media outlets and platform providers seeking to motivate more users to participate in political discussions should make their users feel that their knowledge on an issue is sufficient for engaging in the discussion and that it is important for a functioning democracy. This could be achieved by, among other things, implementing small-scale knowledge quizzes with easy-to-solve questions or sending reminder messages emphasizing that it is important for democracy that different voices are heard. However, such measures could also motivate people with low actual competency in an issue to participate in a discussion, which might ultimately decrease the quality of the discussions despite higher participation rates. Therefore, these measures should be used carefully.

Finally, our findings provide insights for designing health and science messages that aim at motivating more people to contribute to online discussions. Since we found that facts in comments initiate processes of self-centered judgments and comparison processes, which are related to perceived knowledge and participation intentions, it may be advisable to compose messages that provide easily accessible facts and, at the same time, do not make people feel inferior to others. These kinds of messages would provide people with opportunities for learning without being overwhelming or intimidating. Such messages could create ideal conditions for fostering rational public participation, since people who feel knowledgeable about and cognitively involved in an issue contribute more rational comments to online discussions (Beckert and Ziegele, 2020).

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Editing service costs for this article were financially supported by the Faculty of Social Science, University of Vienna, Austria.