Abstract

Discord, a popular community chat application, has rhetorically distanced itself from its associations with white supremacist content through a public commitment to proactive moderation. However, Discord relies extensively on third-party services (like bots and server bulletins), which have been overlooked in their role in facilitating hateful networks. This study notes how Discord offloads searchability to server bulletin sites like Disboard, to deleterious effect. This study involves two parts: (1) we use critical technoculture discourse analysis to examine Discord’s blogs, policies, and application programming interface and (2) we present data scraped from 2741 Discord servers listed on Disboard, revealing networks of hateful and white supremacist communities that openly use “edgy,” raiding-oriented, and toxic messaging. These servers exploit Discord’s moderation tools and affordances to proliferate within Discord’s distributed ecology. We argue that Discord’s policies fail to address its reliance on unmoderated third-party services or the networked practices of its toxic communities.

Introduction

Discord is an online, persistent group-chatting application that launched in 2015 and quickly gained popularity with online gamers. Unlike algorithmically curated social networking platforms, Discord is structured around disparate, invite-only virtual communities (called “servers”), with individual users anonymously flitting between them. Servers have voice/video and text channels, and community moderators can customize individual user roles and restrictions as well as implement third-party bots that respond to user inputs. Although Discord has recently begun rebranding itself to be more approachable for the general public, it remains popular among gamers due to its reliability for chatting with many users, and the easy integration of content from YouTube, Twitch, and online videogames through bots and Discord’s application programming interface (API).

In 2017, Discord was found to have harbored the violent white supremacist groups behind the deadly “Unite the Right” rally in Charlottesville, and scholars have since noted the troubled legacy of Discord and hate groups (Brown and Hennis, 2019). In response to critical attention by journalists and scholars between 2017 and 2019, Discord intensified its moderating practices and began rebranding itself by implementing transparency reports and a public commitment to proactive—as opposed to reactive—moderation (Discord Transparency Report: Jan–June 2020).

While Discord has committed to increased moderation, we question this commitment when Discord does not meaningfully address the tremendous role of third-party bulletin sites that serve as an interconnective structure for Discord communities. We ask how Discord’s continued reliance on third-party sites and services reifies an “outsourcing of responsibility” and facilitates the continuation of hate networks and white supremacist publics (Brown and Hennis, 2019). Because Discord only allows users to search partnered and verified servers (generally with 10,000 member minimums) 2 , third-party sites like Disboard—a public bulletin for Discord servers—are popular for searching smaller communities. Disboard relies on its own third-party bot, which, when integrated directly into Discord servers, displays a public invite link and the number of active members. To excavate the role that these third-party services play in facilitating hateful content, our study comprises two parts:

We use Brock’s (2020) model for critical technoculture discourse analysis (CTDA) to demonstrate how Discord’s rhetorical rebranding and institution of a curated search stratifies their platform: on the surface, they present an idyllic, engineered community of vetted servers for incoming users, while obscuring a persistent network of toxic servers beneath.

We argue that Discord’s commitment to proactive moderation is undercut by its reliance on third-party sites. We demonstrate how third-party structures maintain an aggressively racist, toxic technoculture that underlies the platform. We examine data scraped from 2741 Discord servers publicly listed on Disboard that actively marked their associations with white supremacy and neo-Nazism, raiding, queerphobia, transphobia, and toxicity.

Discord occupies a unique space among social media platforms: part collection of private communities, part social hub, and (recently) part classroom tool. While other social media platforms’ models of expansion have resulted in the continuous erosion of privacy norms, and the algorithmic coding of neoliberal economic principles into sociality (Dijck et al., 2018: 20–21), Discord has received public praise for not using private information for marketing (NPR.org, 2021). In this way, Discord has gained popularity among privacy-minded users and those seeking a direct outlet to their fan communities. Rather than selling user data, Discord earns revenue through “Discord Nitro” and “Server Boosts,” subscriptions that grant greater user and community affordances: custom emojis, higher quality streaming, file uploads, and even the ability to “change identity” between servers (Lorenz, 2019). In other words, Discord does not operate in the traditional economic model of advertising or cloud platforms, and appears to be focusing on “growth before profit” (Srnicek, 2017: 119). Discord has experimented with several new avenues for profitability, including a short-lived videogame marketplace (Discord, 2018) and, more recently, paid ticketing for community audio events (Marshall, 2021).

These same privacy features that Discord is celebrated for, however, drew white supremacists to the site and allowed them to flourish. Discord emphasizes individual responsibility in navigating its site; its Safety Center notes the tools that Discord (n.d.-c) provides for users to craft their own safe experience. This emphasis on libertarian individualism was the subject of Brown and Hennis’ analysis of Discord, “Hateware and the Outsourcing of Responsibility.” Drawing from Gillespie’s study on platform politics, Brown and Hennis (2019) identify Discord’s structure of user-moderated private communities as representing an embedded libertarian ethos, and a pattern of exploitative moderating practices. They claim Discord over-relies on community moderators and call on Discord to “insource” their site structure by moderating Discord chats directly and taking responsibility for their platform—even going so far as to call Discord “hateware.” While we echo Brown and Hennis’ call to insource site structures, we direct attention not to community moderators, but to Discord’s outsourcing of key search functionalities to third parties like Disboard.

Discord’s expansion of its moderation wing has been rhetorically effective. Its yearly transparency reports cite ever-increasing numbers of bans, removals, and the deplatforming of extremists. For example, Discord cites the Black Lives Matter protests of Summer 2020 and their “efforts to ban groups seeking to disrupt peaceful protests” as successes in their new approach to moderation (Discord Transparency Report: Jan–June 2020). These efforts have not gone unnoticed, and wider media systems have praised Discord, making headlines, such as:

Discord Was Once The Alt-Right’s Favorite Chat App. Now It’s Gone Mainstream And Scored A New $3.5 Billion Valuation (Brown, 2020).

How an App for Gamers Went Mainstream (Lorenz, 2019).

Discord’s commitment to proactive moderation coincides with its explosive growth during the pandemic. Receiving an influx of 100 million dollars in venture capital in June, and forgoing a 10 billion dollar offer from Microsoft to purchase its platform, Discord clearly has its eyes on expansion. In his interview with NPR.org (2021), Discord’s CEO Jason Citron speaks of “shaking off” their alienating aesthetic and marketing to a wider audience. Citron describes how the COVID-19 pandemic has opened opportunities for Discord to expand beyond its gamer demographic—following a larger trend in gaming industries that Amanda Phillips notes engages “in a neoliberal politics of diversity that places the importance on spending power and marketing for the purposes of recognition” (Phillips, 2020: 8). This “shaking off” is echoed in the company’s recent advertising push, which rebrands Discord as “Your Place to Talk.” A promotional video on Discord’s (2020) YouTube channel explains “. . . to use Discord, some of you felt you had to exclude your friends who didn’t game. Discord works for gaming, but you showed us it could work for so much more”. Discord has capitalized on this by releasing server templates for different types of clubs, communities, and even classrooms (Nelly, 2020). Discord’s concerted efforts at expanding its audience, however, raise questions about its ability to distance itself from its original, notoriously toxic userbase. While Discord claims to be actively banning toxic communities, the private nature of the platform makes it difficult to know how much Discord’s public rebranding aligns with the culture of its actual userbase.

Part and parcel of Discord’s expansion is its institution of a limited search function. Until recently, users could not search for communities, but required individualized invitations to join a server. While a vast majority of Discord (n.d.-a) servers remain wholly private, a growing number are self-designating as “community servers,” in which groups organize around a shared interest in a semi-private manner. At present, Discord (n.d.-b) restricts its search to community servers that meet multiple requirements: they must be partnered or verified, and have an active userbase of over 10,000 members. 2 While a curated search of servers seems laudable with regard to providing a safe experience for incoming members, Discord’s curation of only high-profile, verified, and partnered servers creates an iceberg effect; within Discord’s wider digital ecology, their curated search reveals only an illusory public visible above the surface, while eliding problematic activity within the private, unseen spaces beneath.

While Discord has so far refrained from networking smaller communities, this has opened the door for third parties to do so. Emerging from Discord’s lack of networking for small communities is the public server bulletin Disboard. A third-party site that allows the listing and searching of Discord communities, Disboard’s slogan is “We connect

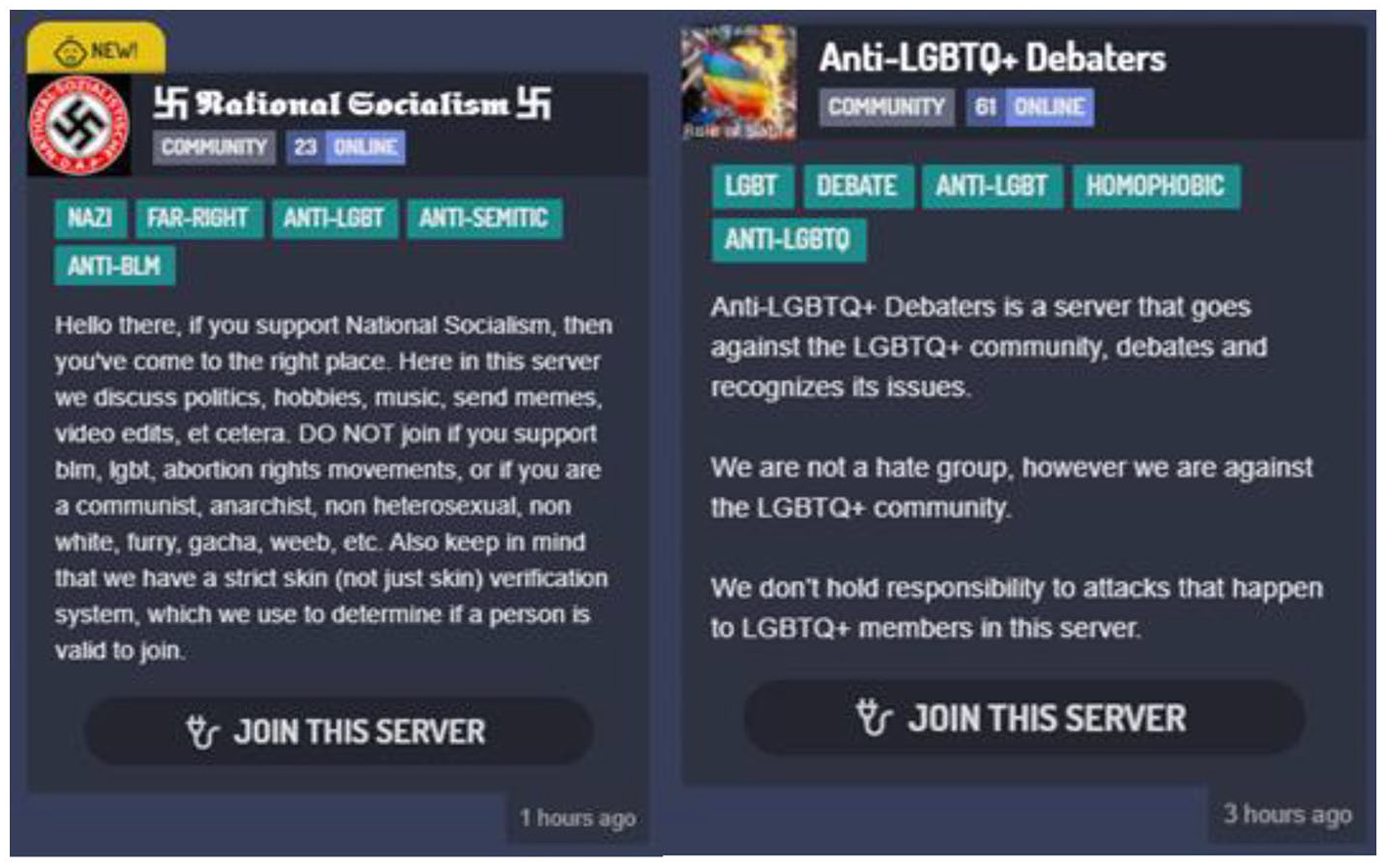

Disboard, then, becomes a valuable lens for examining Discord’s culture beneath the curated layer. Servers listed on Disboard have no member requirements, and display public names, tags, and descriptions of their communities (Figure 1). This study, in analyzing a large population of Discord servers as indexed on Disboard, provides an extensive, horizontal perspective of how toxic, white supremacist, geek-masculine technoculture—the very legacy Discord is trying to outgrow—remains pervasive amid its shrouded userbase.

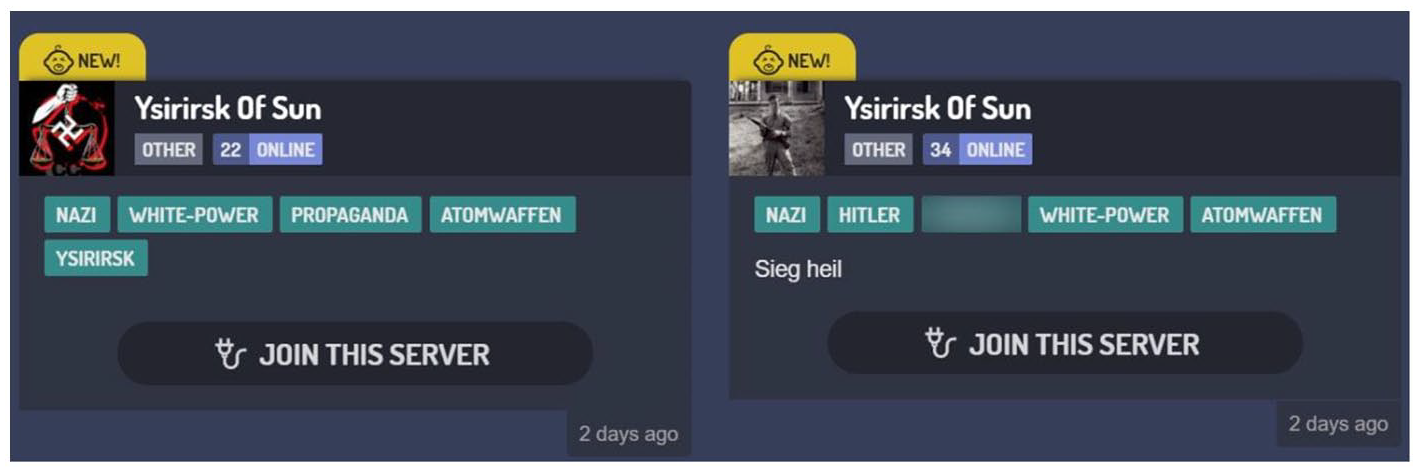

Listings of two Disboard servers openly promoting hateful affiliations.

In analyzing Disboard, we draw from platform-studies research about the dynamics of search. Safiya Umoja Noble (28), in Algorithms of Oppression, details how search is not a neutral tool, but a function that is both “formed and informed” by the values and algorithmically traced actions of its users. Because Disboard acts as the de facto engine for searching small Discord communities, we can utilize scraped data from Disboard to investigate what is networked, linked, and encouraged between Discord’s users and Disboard’s algorithms. This allows us to highlight the disparities between Discord’s rhetoric, curated search, and the practices of toxic networks that persist on the platform—excavating the racist and hateful practices enabled through third-party search algorithms (Noble, 2018).

In this same vein, Tarleton Gillespie (2010) points out the troubled legacy of the term “platform” and how it has been used to meter the responsibility of social media companies. He writes that “These are efforts not only to sell, convince, persuade, protect, triumph or condemn, but to make claims about what these technologies are and are not, and what should and should not be expected of them. In other words, they represent an attempt to establish the very criteria by which these technologies will be judged, built directly into the terms by which we know them” (p. 359).

Discord, by outsourcing much of its search functionality to third-party services, rhetorically limits its responsibility as a platform. Yet this scope is a manufactured one; because Disboard exists as an extension of communities on Discord, we draw on Gillespie’s (2018) critiques that social media sites must account for the safety of their users across platforms (pp. 199–200).

Networked harassment scholars have noted the way that hateful practices are shaped as they move between social media spaces (Burgess and Matamoros-Fernández, 2016). In this case, harassment is amplified between Discord and third-party services, creating a toxic technoculture. Adrienne Massanari’s (2017) work on #Gamergate and how Reddit’s structure allowed for the proliferation of a toxic technoculture grants insight into hate networks embedding themselves in platform search functions. Massanari has defined toxic technoculture as “the toxic cultures that are enabled by and propagated through sociotechnical networks,” and noted that these spaces encourage harassment and “demonstrate retrograde ideas of gender, sexual identity, sexuality, and race and push against issues of diversity, multiculturalism, and progressivism” (Massanari, 2017: 333). Our interpretation of toxic technocultures acknowledges that both “edgy/memey” communities and militantly organized hate groups all contribute to a persistent network of rhetoric and recruitment.

A large-scale analysis of Discord servers on Disboard provides a window into the practices of otherwise hidden communities. To this end, we hope to map the depths beneath Discord’s rhetorical iceberg and expose the toxic, distributed ecology hidden within.

Material and methods

Due to its closed structure and novelty, research on Discord is only recently emerging, and third-party services like Disboard remain critically understudied. We adopted a mixed-methods approach: combining qualitative analysis of Disboard’s interface and server discourse, while using quantitative server population data to identify larger patterns. Brock’s (2018) method for CTDA argues that new media scholars “‘read’ the mediating artifact—the interface, client, hardware, software, and protocols—as a text” and examine technology alongside contextualizing discourse. To this end, we investigated the role of Discord’s public rhetoric, the interfaces of both Discord and Disboard, Discord’s API and platform policies, and the discursive practices of hateful users to more fully contextualize the toxic network.

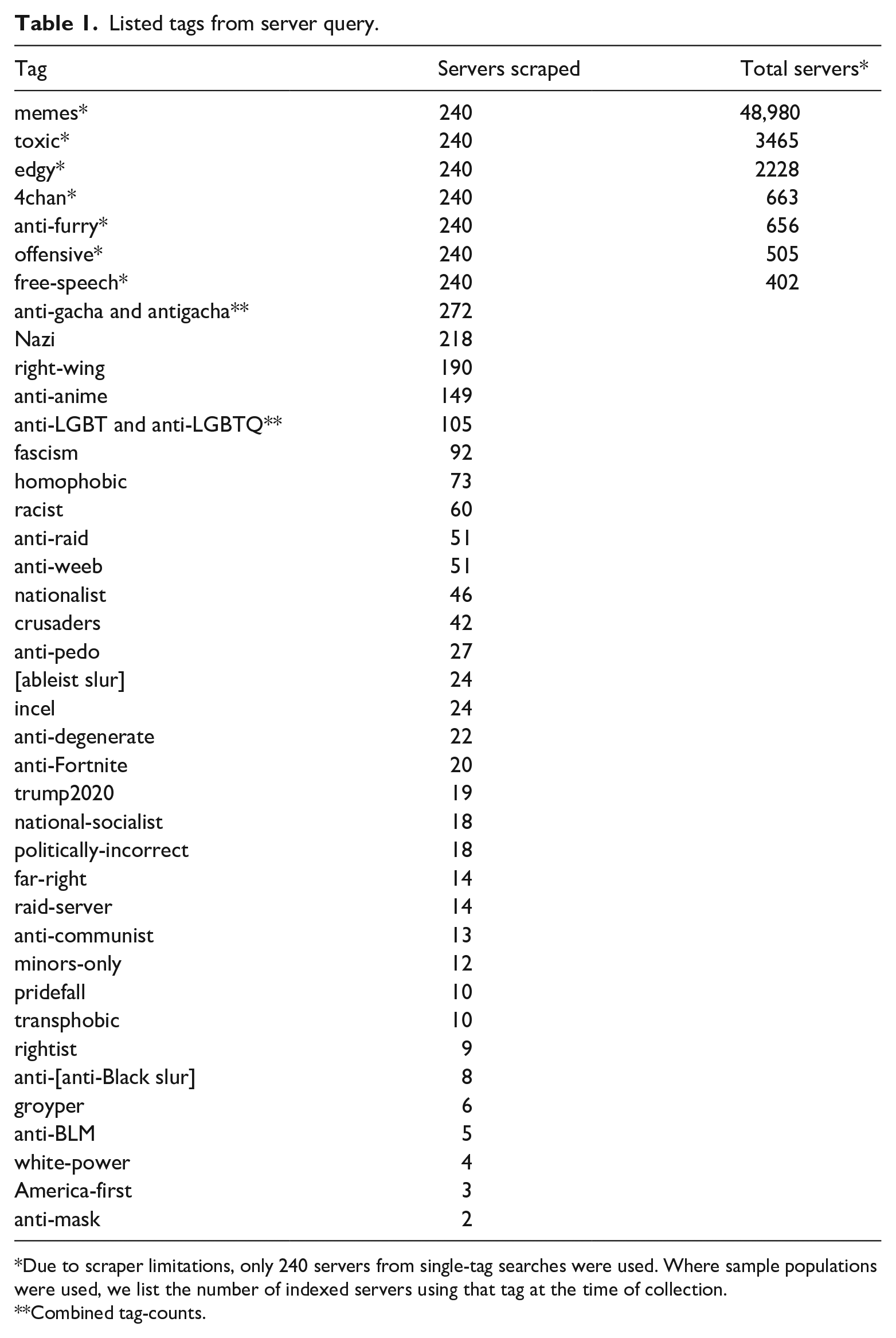

Burgess and Matamoros-Fernández present a framework for issue-mapping that illuminates the role of mediators in issue publics by (1) tracking keywords, patterns, and associations among collected data, (2) tracing the genealogy and context of terms within the discourse, and (3) monitoring activity and relationships between key actors to further analysis. We applied this method by scraping public server data from Disboard using an open-source Python scraper (DiscordFederation/DisboardScraper, 2020). We selected tags for collection by examining Disboard’s indexed, tag-based search and scraping preliminary data to determine which tags were possibly associated with hateful content. We began with immediately identifiable tags (i.e. Nazi, racist, and anti-LGBT). We then selected co-occurring tags and, after careful examination, expanded our sample criteria via snowball effect, including tags, such as pridefall, groyper, and raid-server. Some tags, such as anti-anime, minors-only, anti-weeb, and anti-pedo were also collected to contextualize emergent hate patterns in the sample. Ultimately, we narrowed our sample to 40 tags connected to hateful content (Table 1). We then scraped the following data for the first 240 servers using each tag in the sample: server name, current online users, description, and all 1–5 descriptive tags. After consolidation, 567 duplicate servers (which used multiple target tags) were combined, resulting in a final population of 2741 Discord server listings. We used Orange data mining software to assist in analysis by running topic modeling, concordance, and word clustering tools (Demšar et al., 2013). We also used AntConc concordance tools to quickly trace larger discursive patterns and count prevalent terms.
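The consolidation step described above can be sketched in a few lines of Python. This is a minimal illustration and not the authors' actual pipeline: the `Listing` record, the `server_id` key, and the toy rows are all hypothetical, and the study does not state which field was used to recognize a duplicate listing.

```python
from dataclasses import dataclass, field


@dataclass
class Listing:
    """One scraped row. Field names are assumptions, not the scraper's schema."""
    server_id: str      # hypothetical unique key for a Disboard listing
    name: str
    online: int         # members online at collection time
    description: str
    tags: set[str] = field(default_factory=set)


def consolidate(rows):
    """Merge rows that describe the same server and count the duplicates."""
    merged = {}
    duplicates = 0
    for row in rows:
        seen = merged.get(row.server_id)
        if seen is None:
            # First sighting: keep a copy so the input rows stay untouched.
            merged[row.server_id] = Listing(
                row.server_id, row.name, row.online,
                row.description, set(row.tags),
            )
        else:
            # Same server returned by another tag search: union the tags.
            seen.tags |= row.tags
            duplicates += 1
    return list(merged.values()), duplicates


# Toy rows: "s1" is returned by two different tag searches.
rows = [
    Listing("s1", "Server One", 12, "desc", {"tag-a", "tag-b"}),
    Listing("s2", "Server Two", 3, "desc", {"tag-b"}),
    Listing("s1", "Server One", 12, "desc", {"tag-b", "tag-c"}),
]
servers, dupes = consolidate(rows)
print(len(servers), dupes)
```

Keying on a stable identifier rather than on the server name matters here, because several of the networks discussed in the Results reuse identical or near-identical names across distinct listings.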

Listed tags from server query.

Due to scraper limitations, only 240 servers from single-tag searches were used. Where sample populations were used, we list the number of indexed servers using that tag at the time of collection.

Combined tag-counts.

Ethical considerations

Because Disboard provides public server descriptions, and does not require registration, we were able to ethically collect data about toxic Discord servers. This work advances scholarship on platform moderation (Gillespie, 2018), and hateful content operating across platform structures (Massanari, 2017). We hope to demonstrate new avenues for researching Discord communities, while engaging in the digital feminist practice of exposing the role of hateful social media structures within matrices of domination (D’Ignazio and Klein, 2020).

Content Warning: excerpts in the Results section contain examples of egregiously hateful messaging (especially anti-Semitic and anti-Black racism, transphobia, and queerphobia). Slurs have been redacted. Reader discretion is strongly advised. We have deliberately minimized direct references to hate speech in the Discussion section.

Results

Our findings indicate the presence of a pervasive network of hateful server listings that openly advertised racist, queerphobic, and aggressive communities. The discourse was characterized by the targeting of other Discord communities and marginalized groups, trolling and “flaming” (deliberately inciting content associated with trolling, [Thacker and Griffiths, 2012]), and networking to other hateful spaces. Ultimately, our analysis produced a number of crucial findings.

There was an abundance of both explicitly and discreetly hateful tags, descriptions, and titles used to mark and link communities.

We identified complex networks of “feeder” and conscription servers for Nazi and raiding-oriented communities.

Toxic servers boasted of their resilience to deletion and of their abuse of Discord’s moderation tools to protect and proliferate hateful practices.

Below, we break down and characterize these patterns, and their implications for understanding networks of hate as they operate across Discord’s platform.

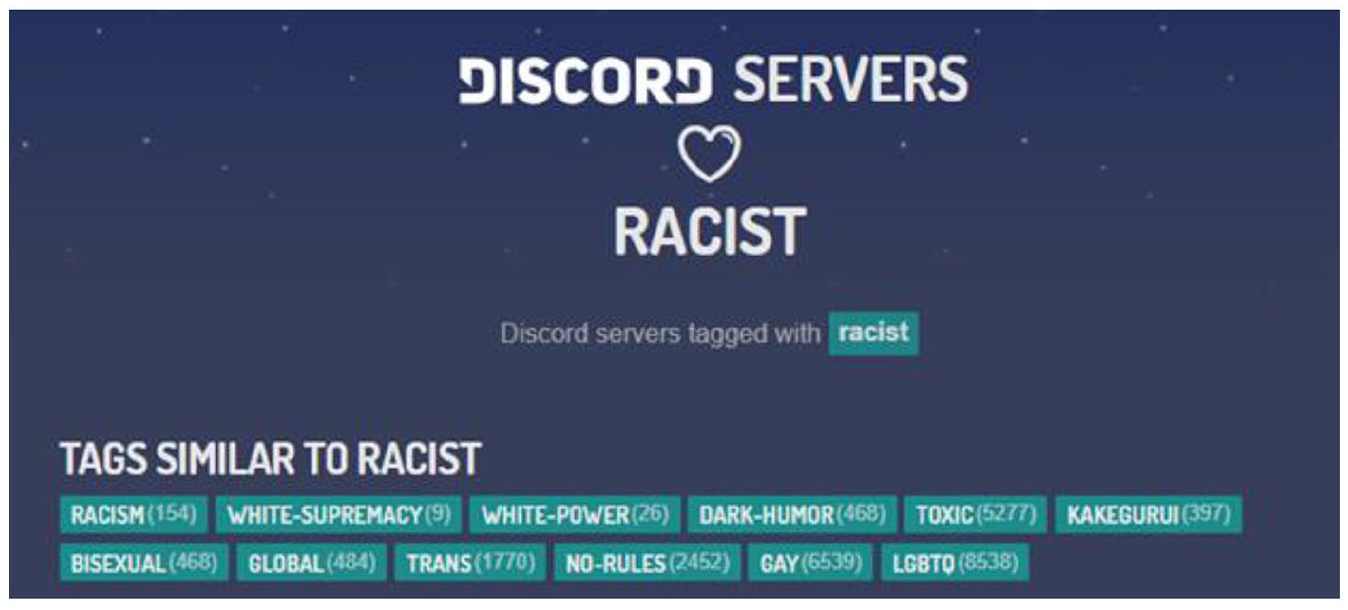

The prominence of hateful tags and descriptions

We found thousands of Discord servers that marketed themselves on Disboard as hateful and white supremacist spaces. Disboard’s search algorithms recognize and exacerbate this linkage, providing recommendations for racist searches that “both informs and is informed in part by users” (Noble, 2018: 25). We were disturbed to find, for example, that direct queries for racist content, such as racist not only returned indexed tag results for hundreds of servers, but also encouraged the user to explore “similar tags,” such as white supremacy and toxic (Figure 2).

Disboard’s search indexes and recommends tags based on racist searches.

Hateful tags were not only indexed on Disboard, but also used by hundreds and even thousands of servers. Many of the most popular tags we collected were openly hateful: toxic (n = 3465), edgy (n = 2228), 4chan (n = 663), offensive (n = 505), Nazi (n = 218), anti-LGBT and anti-LGBTQ (n = 105), fascism (n = 92), homophobic (n = 73), and racist (n = 60). Toxic was such a prominent tag that it was even featured on the front page of Disboard alongside other “Popular Tags.” The prevalence of these tags indicates a collective willingness to openly identify communities as hateful, as these labels were set by listers with the intent of advertising their communities on a public board.

We also identified a host of tags that were less common, but deeply rooted in white supremacy, such as nationalist (n = 46), national-socialist (n = 18), politically-incorrect (n = 18), anti-[n-----] (n = 8), groyper (a neo-Nazi affiliation; n = 6), anti-BLM (n = 5), white-power (n = 4), and america-first (n = 3). Other tags identified specifically queerphobic servers, such as transphobic (n = 10) or pridefall (n = 10), or used ableist slurs in their tagging [r-----] (n = 24). While these tags were less common, they were often linked to the more prominent tags listed above. For example, the transphobic tag most frequently co-occurred with anti-lgbt[q] (nine times) and Nazi (six times). Although some of these tags were linked to relatively few servers, all were indexed in Disboard’s search algorithm.

Finally, even where hateful content was not directly targeting marginalized groups, there remained an almost militaristic dynamic of antagonism against prominent Discord communities. In particular, clusters of servers that designated themselves as anti-furry (n = 656), anti-gacha (n = 272), and anti-anime (n = 149) reflected both larger structures of inter-platform hostility and proliferation of toxic geek masculinity. Yet these networks were not distinct: not only did Disboard’s networking algorithm link anti-furry most strongly to the Nazi tag, but also in our sample, Nazi most often co-occurred with anti-furry (60 times).
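Co-occurrence counts of the kind reported here can be computed directly from each listing's tag set. The sketch below is one plausible way to do so, assuming each server contributes a set of one to five tags; the function names and the placeholder tags are hypothetical, and the study itself reports using Orange and AntConc rather than custom code.

```python
from collections import Counter
from itertools import combinations


def cooccurrence(tag_sets):
    """Count how many listings carry each unordered pair of tags."""
    counts = Counter()
    for tags in tag_sets:
        # Sorting makes (a, b) and (b, a) land on the same key.
        for a, b in combinations(sorted(tags), 2):
            counts[(a, b)] += 1
    return counts


def top_partners(counts, tag, n=3):
    """Return the n tags that most often appear alongside `tag`."""
    partners = Counter()
    for (a, b), c in counts.items():
        if a == tag:
            partners[b] += c
        elif b == tag:
            partners[a] += c
    return partners.most_common(n)


# Placeholder tag sets standing in for scraped listings.
tag_sets = [
    {"tag-a", "tag-b"},
    {"tag-a", "tag-b", "tag-c"},
    {"tag-b", "tag-c"},
    {"tag-a"},
]
pair_counts = cooccurrence(tag_sets)
print(top_partners(pair_counts, "tag-a"))  # [('tag-b', 2), ('tag-c', 1)]
```

Because pairs are counted once per listing, a figure such as "co-occurred 60 times" reads as 60 servers carrying both tags.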

These server-counts indicate entire communities, sometimes with hundreds of members. Disboard records an active member count for each listing through its bot integration (this includes only the number of members currently online, not the total membership). For example, while 218 servers used the Nazi tag, 2718 members were actively online in those communities at the time of collection. Similarly, even less popular tag-groups, such as the 73 homophobic servers, reflected 1262 active members, and racist servers had 913 active members. Across the total population of 2741 servers using hate-associated tags, there were 853,885 active members listed, averaging 336.3 members per server. 1
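The per-tag server and active-member totals can be tallied as follows. This is an illustrative sketch with hypothetical names and toy numbers; it makes no claim about how the reported figures were produced.

```python
from collections import defaultdict


def members_by_tag(listings):
    """Tally server count and online members for every tag.

    `listings` is an iterable of (online_members, tags) pairs.
    """
    totals = defaultdict(lambda: {"servers": 0, "online": 0})
    for online, tags in listings:
        for tag in tags:
            totals[tag]["servers"] += 1
            totals[tag]["online"] += online
    return dict(totals)


def mean_online(listings):
    """Average online members per server across the whole population."""
    return sum(online for online, _ in listings) / len(listings)


# Toy population of three servers with placeholder tags.
listings = [
    (10, {"tag-a"}),
    (30, {"tag-a", "tag-b"}),
    (5, {"tag-b"}),
]
per_tag = members_by_tag(listings)
print(per_tag["tag-a"], mean_online(listings))
```

A server with several tags contributes to each of them, so per-tag totals overlap and should not be summed to obtain a population total; the overall mean is therefore computed from the deduplicated listings.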

In the listings themselves, these servers often used openly hateful messaging in their community names. Many touted Nazism in their server titles:

“Nationalsozialistische Deutsche Arbeiterpartei” (141 active members, “Nazi” in German)

“HITLER FAMILY卐” (103 active members)

“Auschwitz Railroad” (34 active members)

“Hitler’s Holy Crusade” (17 active members)

This same pattern of racist, sexist, and queerphobic trolling characterized the larger discourse, with no distinction between what was “flaming” (Thacker and Griffiths, 2012) and genuine hateful messaging. Given the context of much of the messaging, the intentionality of the lister matters little, and often the content of the server description was, in itself, actively hateful. For example, one server titled “Burn F[-------] Alive” used as its public server description a six-paragraph, deeply transphobic message (which we found was copied from 4chan) telling trans women to kill themselves:

You will never be a real woman. You have no womb, you have no ovaries, you have no eggs. You are a homosexual man twisted by drugs and surgery into a crude mockery of nature’s perfection. [. . . continuing for five paragraphs].’. . .

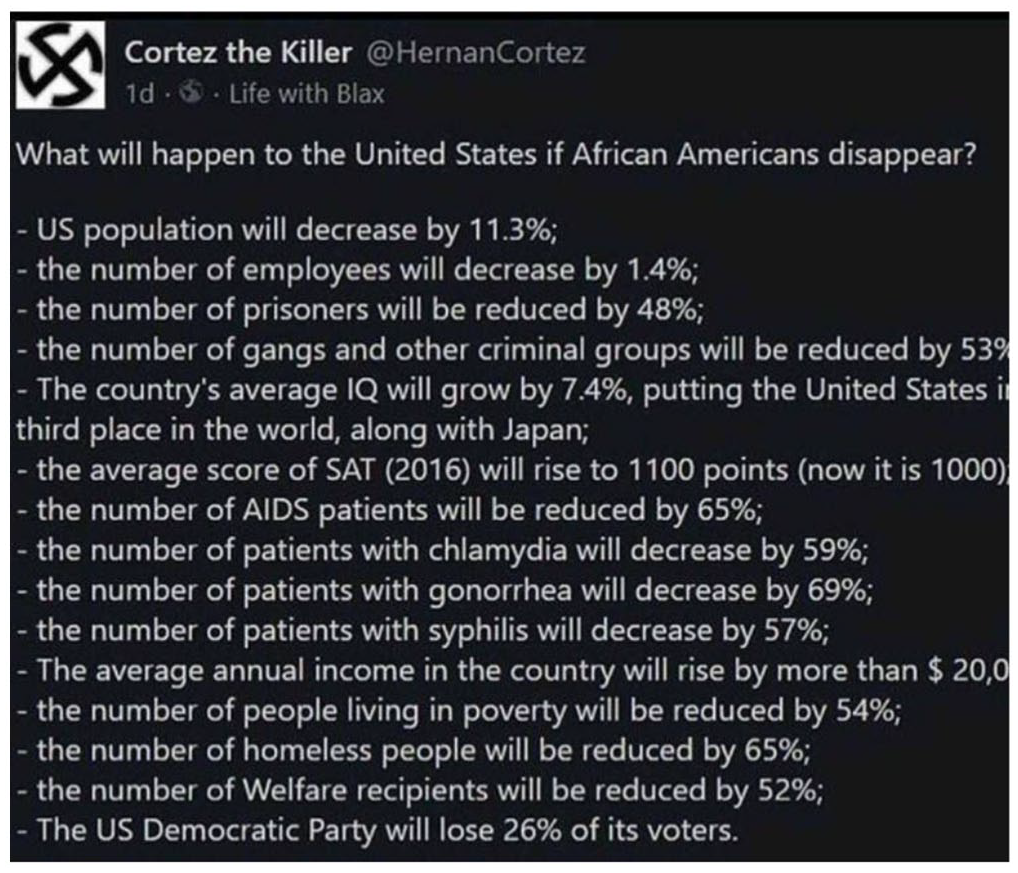

Another server, titled “Discord Omegle,” hosted a link to an essay arguing for anti-Black genocide, linking to articles and a graphic from a user with a swastika profile image (Figure 3).

An image linked on a Disboard server description, reiterating racist, anti-Black disinformation.

This pattern of using Disboard’s description box as a place to spread hateful disinformation was not uncommon. Another server, titled “Enbyphobia” (tagged as racist, Nazi, anti-lgbt, homophobic, and superstraight) likewise used its description to share a link to an article arguing that “LGBT+ people are more likely than straight people to have mental illness.” These instances demonstrated not only proliferating hate, but also disinformation (Phillips, 2018) directly distributed through Disboard server descriptions. These examples are egregious, but serve as an illustration of the hateful conduct that is common on Disboard. These servers are not merely boasting their toxicity as a means of flaming, but actively contributing to racist, queerphobic disinformation and hate-spreading, exacerbated and enabled by Disboard’s interface.

Among the use of tags targeting vulnerable groups, we also found that race-restricted communities were common. For example, a community called “Dogeian Philosophy” used the following description: FUCK K[----],N[------],F[----],T[------] . . . Etc!!!! Share your knowledge with the rest of us (books, movies . . .), and feel welcome to share your views with others!! Only whites are allowed obviously.

The problem of race-restricted communities has been documented by scholars including Megan Condis, who noted in 2019 that white supremacist communities were sharing links to “white only” Discord servers (Condis, 2019). Although Condis mentions Discord’s efforts to clamp down on this, we identified race-restricted and sexuality-restricted membership in many server descriptions:

“Must be white and 17+ for membership.”

“Swedes, Germans, Norwegians are welcome!!!”

“IF YOU ARE BLACK DO NOT ATTEND THE SERVER YOU PIECE OF SHIT !”

“If you are a furry, black person, or anime f[--] if you join we will make you regret living.”

“any pure white christians that Read the Word and do not challenge it are willing to be welcomed”

“Gay = ban (unless femboy)”

“This is a friendly sever for non gay people”

Finally, servers also paired hateful tags with otherwise-innocuous tags. Notably, Disboard linked racist with queer tags like bisexual and trans (Figure 2), which are used to network queer-friendly communities. Similarly, we found that tags like Roblox (n = 62) and Minecraft (n = 68), referencing games popular with children, co-occurred with hateful tags (including Nazi, racist, and homophobic). Many of these communities also used the tag giveaway (n = 186) to advertise free Discord Nitro subscriptions and Roblox content to attract users. The co-occurrence of Roblox, Minecraft, and giveaways with toxic tags revealed a worrying connection between tags likely to appeal to young users and hateful discourse. In this way, some listings used discreet tags (such as groyper and 1488, both neo-Nazi references) to covertly identify hateful communities, while others cross-listed with more popular meme and gaming tags to gain exposure.

Broadly speaking, servers in the collected population were characterized by overtly hateful content, white supremacist affiliations, and gatekeeping based on race and sexuality. These patterns were underscored by the high volume of servers that used tags, descriptions, and community titles to signal their hateful affiliations in the public postings for their community.

Networks of “feeder” and conscription servers

Many servers with hateful tags were set up not as communities in their own right, but as “feeder” and conscription servers that vetted potential members before admitting them to a separate, true community space, either on or off Discord. This indicated complicated inter-server structures that acted across the network of Disboard/Discord communities.

For example, one server called “Great White Light” (tagged as christian, alt-right, white, fascism, and nationalism) noted that it was a recruitment space for a main server: This is a Recruitment server for a All White Pure Christian server. We Love our people. We Love our Gorgeous Holy White skin. this is a sanctuary to get away from the filth, the pollution the impurities etc.

Another server, called the “Christian Legion Vetting Server” (tagged as politics, christian, education, Nazi, and national-socialism) was set up as a conscription server to vet new members. They even describe their decision to put the “Nazi” tag on their server, stating: This is the vetting server for the Christian Legion. We are a group of serious Christian National Socialists and Fascists. We do not accept LARPers or edgelords. (We put the word “Nazi” in our tags only to make this server easier to find, we do not refer to ourselves as Nazis as Nazi is a derogatory term used by idiots that have no idea what National Socialism is)

Similarly, we identified two separate servers with the same title, “SA Minority Union- Feeder,” with separate tags (one tagged as political, conservative, republican, debate, and right-wing; the other tagged as news, nationalism, nationalist, namibia, and rhodesian) that used the same description. Although both of the feeder servers only had two active members, the description claims that users, once vetted, will be connected to a private main server with over 300 members: WHITE GENOCIDE IN SOUTH AFRICA Main server = 300 + people This is a feeder server for the main server!

We identified several networks of feeder servers spread throughout the full dataset. These conscription networks were closely linked to both Nazi servers and raiding servers (whose primary goal is to harass other Discord communities). For example, one Nazi recruitment network had three servers, each with a variant capitalization of “EuroSkins Recruitment.” Each server listed also had different tags, presumably to maximize collective visibility:

Server 1: racist, racism, white-power, homophobic, transphobic

Server 2: traditionalism, white, nationalism, nationalist, traditionalist

Server 3: white-power, white-supremacy, white-lives-matter, white-pride-worldwide, pro-white

Despite the varied tags, all the descriptions stated: This is the recruitment server for Euroskins. Euroskins is a server for white racialist of all ages who wish to discuss from history, to white culture, and everything that has to do with our folk are discussed here at EuroSkins. If you are a degenerate of any kind, not white, or a race-traitor, then you are not welcomed here. If you are a white racialist please join the server, we will be looking forward to seeing you!
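Networks like this one, where several listings share a description but vary their names and tags, could also be surfaced programmatically by grouping listings on a normalized description. The sketch below is an assumption about how that might be done; the study does not describe an automated procedure for this step, and the function names and sample strings are hypothetical.

```python
from collections import defaultdict


def normalize(text):
    """Case-fold and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.casefold().split())


def group_networks(listings, min_size=2):
    """Group (name, description) pairs that share a normalized description."""
    groups = defaultdict(list)
    for name, description in listings:
        groups[normalize(description)].append(name)
    # Keep only descriptions reused by at least `min_size` listings.
    return [names for names in groups.values() if len(names) >= min_size]


# Neutral placeholder listings: two share a description, one does not.
listings = [
    ("Example Recruitment", "This is the recruitment server."),
    ("EXAMPLE recruitment", "this is the  recruitment server."),
    ("Other Server", "An unrelated description."),
]
networks = group_networks(listings)
print(networks)  # [['Example Recruitment', 'EXAMPLE recruitment']]
```

Exact matching after normalization only catches verbatim reuse. Descriptions that were lightly reworded would need a fuzzier comparison, and any candidate group would still call for manual reading before being treated as a network.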

Other recruitment servers were set up as “offices” for the public to engage with otherwise secretive raiding communities. For example, one server called “The Final Remnant’s PUBLIC RAID REQUESTS” noted that users could join the server to submit a raid request on another community: “TFRia takes raid requests now! Want us to yeet a server? Come down here and submit a request. Also this server has a recruitment office . . . if you want to join.”

Similarly, a group called the “ADE Imperial Border Office” had five distinct, tagged servers, each listing separate Nazi-affiliated and queerphobic tags. Each of these servers was described as: At the ADE We seek to conquer all of Discord and cleanse it of degeneracy. This is a recruitment office, you may use it to gain access to the main server which has access to nukes, raiding tools, token grabbers, etc.

The setup of a militaristic border or “recruitment office” was a common motif across many of these toxic server networks. The most complex of these was a network called the “Anti-Degenerate Legion” which hosted 21 separate “Checkpoint” Discord servers (“A.D.L. checkpoint Charlie,” “A.D.L. checkpoint Bravo,” etc.), each with the following description: This is just a conscription server, here you can gain access to the guilded link. The A.D.L Has moved to Guilded because of obvious conflicts with Discord’s Marxist way of handling “wrong opinions” on their “tolerant web-app” The good men at the Anti Degenerate Legion seek to return the internet to it’s glory days of post-gamergate. Free speech, allowing different opinions, creativity, no filters etc. etc. We seek out and destroy degenerate communities where we find them, spread the word of our mission to every corner of the internet, and really fucking hate furries.

In this case, these checkpoints served to vet potential users for a community on Guilded (a less-moderated, copycat platform of Discord that has maintained its gamer-centric emphasis). This was a common practice, and we identified Discord servers vetting users for groups on Telegram (a private messaging app), Slack, gaming accounts (such as Steam and Riot), and even in-person meetups.

Finally, we noted that several servers were set up to assist and preserve other hateful communities. While mercenary raid servers were a component, we also found emoji servers and backup servers played a role. For example, a server titled “Our Land” (tagged as racist, wwii, kkk, Nazi, and based) was listed as a “backup” of another Nazi server (synchronized through a third-party bot to retain its content). In addition, there were servers set up specifically to distribute media content like emojis. One server, titled “Nazi Emoji Server” (tagged as germany, emoji, and Nazi) was set up just to grant members access to Nazi-themed emojis for use in other servers.

Toxic servers were resistant to deletion and abused Discord’s moderation tools

Toxic servers frequently flaunted their resilience to “censorship,” or noted when they were a revival of a previously deleted community; several of the communities from our original dataset (collected in November 2020) have been deleted and restored in the time since. For example, a revival of the community “Burn F[-----] Alive” had been bumped on Disboard as a “new” server in April of 2021. In addition, a network of three same-name servers, called “Ysirirsk of Sun” and tagged as kkk and Nazi, had been deleted and restored as “new” (Figure 4).

A white supremacist server and its backup server, which had been deleted and restored (anti-Black slur has been blurred).

As part of Disboard’s curation, “new” servers are bumped to the front of indexed searches, so many recently revived communities were often present near the top of search pages. Servers even acknowledged their own history of deletion in their descriptions:

“The last server got deleted when we were about to hit 3k members so join back.”

“WE GOT DELETED FOR THE 5TH TIME / YOU CAN BAN US BUT CAN’T STOP US!”

“were back baby, we wont stop and never stop!”

“TFR is back in action again! This time we corrected our mistakes”

“deleted and remade multiple times, this time it is here to stay!”

Toxic servers also boasted about manipulating Discord’s affordances to protect their users who post hateful content. For example, one server mentioned that it had an “n-word deleter . . . runs every 24 h so your account is safe with us!” The server claimed to use a third-party bot not to prevent use of slurs, but to prevent users from being banned if Discord investigated the server for hateful conduct by sanitizing chats after the fact. While bots are an essential part of moderation for Discord servers (Jiang et al., 2019), these communities actively abuse them to encourage hate speech.

Other servers noted their use of Discord’s verification and security settings as protection for toxic practices. Some claimed to restrict users’ ability to participate in and view channels in the community until they answered questions or provided required information in new-user specific channels. Despite being “open” community servers, many stated that they had Discord’s strictest verification settings enabled, requiring users to have a verified email and phone number before joining. While Discord intends this setting to prevent raiding and mass-spam accounts, these servers claimed to use it to “keep out the snitches and feds,” according to one listing. Raid-oriented servers stated that new members would be restricted from viewing most channels until they had participated in a certain number of raids on other communities—meaning that anyone who wanted to join the server and gain access to raiding information would have to first incriminate themselves among the group.

Discussion

The scope of toxic server networks visible on Disboard extends beyond the presence of a few hateful communities or tags. While servers that promote hate are undoubtedly removed by Discord (as indicated by many of the revived versions of these communities), Disboard’s ease of use and near-complete lack of moderation create an environment where hate and Nazism are present across all metrics. At the same time, servers boasting of tools to prevent deletion, such as bots which clean up hate speech after the fact, servers openly dedicated to the hosting of Nazi emojis, and even bot-supported backup servers that synchronize content in case of deletion, point to a more enduring network of hateful practice than Discord lets on. In fact, in June 2021, as we prepared to submit this manuscript, we found that Disboard had actually blocked two of its most egregious tags: Nazi and racist. These tags are still active, but searching for them returns a 404 error page; other tags like white-power, toxic, anti-lgbt, and national-socialism remain, and often reveal servers still tagged as racist and Nazi.

Disboard’s pattern of hiding—but not removing—toxic networks mirrors the larger trouble in Discord’s ecology. Our study indicates that meaningful interventions must go beyond obscuring the presence of toxic and hateful communities. Disboard’s recent choice to block results for racist and Nazi searches will do little to stem the recruitment and raiding practices of hate networks outlined above. Much like Discord’s curated search, Disboard’s prevention of openly hateful search results is only part of the solution, especially because even when servers are removed, Disboard’s affordances allow for the easy reintroduction of banned groups. Because Disboard “bumps” new servers to give them priority in search, deletion acts less as a deterrent and more as a marketing tactic—communities that are frequently removed and restored are brought back to the front page of Disboard. Given that many of these communities are archiving their content, and that some boasted having been deleted upward of five times, Discord’s promise of increased toxic server removal feels shallow. While we did not comprehensively scrape servers to note the percentage of bans and removals, we found that three servers that had been deleted and restored since our collection showed a clear regrowth of the same communities, and were advertised with the same language. The only change was in the member count: due to bumping by Disboard’s algorithm, member counts actually rose. As things stand, deleting a well-equipped toxic server on Discord is not unlike setting a prairie fire: it fertilizes the ground after every attempted burn.

The persistence of hateful groups is also rooted in the financial stakes of Discord’s expansion. Discord has tried to manufacture an illusory userbase by presenting only partnered communities to incoming users, yet the practices of third-party sites like Disboard facilitate toxic technoculture in a way that bleeds into Discord proper. This tacit acceptance coexists with an increasingly friendly rebranding: an acrobatic feat made possible by Discord’s careful limitation of its search features and the deliberate rhetorical narrowing of its responsibility as a platform. Discord’s expansion is based in its reliance on strategic invisibility. Whether these hateful structures exist is less important for Discord than whether they exist visibly—and herein lies the financial impetus for Discord to continue to allow sites like Disboard to do its dirty work as it maintains a presentable image for investors. In addition, users who find Disboard may mistakenly believe it to be part of Discord, due to its blatant imitation of Discord’s user interface (Figure 5). While Discord may not be directly affiliated with Disboard, Discord enables it by not providing low-level search, by verifying Disboard’s bot, and by allowing easy integration with Discord’s API; together, these affordances allow Disboard to act as a de facto extension of Discord’s interface.

Disboard imitates the “cuteness” of Discord through typefaces and visual design.

Search is intimately connected to what is normalized on a platform—even on platforms, such as Discord, where privacy and a lack of a sitewide “feed” seem to defy any concept of a general public. As Noble (2018) writes, “Search happens in a highly commercial environment, and a variety of processes shape what can be found; these results are then normalized as believable and often presented as actual” (pp. 24–25). The processes which shape Disboard’s search exist in a mix of algorithmically coupled tags and the promotional bot-assisted commands of its users. It is through these technical constructs that white supremacists are networked and normalized on Disboard, and thereby tacitly deemed acceptable within Discord’s user ecology.

Similarly, Discord’s position is complicated by the presence of checkpoint, feeder, and conscription servers for extremist communities. These checkpoints take advantage of Discord’s distributed ecology to continually re-list shell servers on Disboard for the vetting and onboarding of recruits into hate networks. Discord’s self-congratulatory emphasis on proactive moderation seems significantly less impressive in light of the unchecked recruitment happening on its platform, which utilizes the affordances of individual Discord servers to verify incoming users, often based on phone number or skin tone. Deletion of “feeder” servers is effectively meaningless when they are numerous and can be easily re-posted on Disboard. In many cases, their primary spaces are not on Discord at all—Discord is merely a funnel into yet other extremist spaces, demonstrating again the efficiency with which hateful networks are able to sidestep blanket bannings and re-establish their operations.

The rampant antagonism present within Discord’s user ecology should be considered within patterns of toxic geek masculinity (Condis, 2018). These hate networks utilize a “gamer” ethos, one that draws participants by positioning themselves against incoming users they label as “degenerate” (a white supremacist reference)—mirroring what Amanda Phillips and Katherine Cross have identified as gamification of harassment (Cross, 2017; Phillips, 2020). Discord has a history of networking its users along antagonistic grounds; in its 2017 effort to actively recruit gamers to its platform, Discord instituted “HypeSquad,” a recruitment system in which users were separated into “houses,” which actively competed with each other for unique badges on user profiles and Discord-themed merchandise (Discord, 2017). Whitney Phillips has noted that “trolls replicate behaviors and attitudes that in other contexts are actively celebrated” (Phillips, 2015: 168). Now, although the HypeSquad is gone, thousands of servers on Disboard use tags such as anti-furry, anti-gacha, and anti-lgbt(q) to recruit through antagonism against other Discord “factions.” No longer about “badges” and “houses,” Discord’s toxic game is now rooted in hostility toward people’s real, often vulnerable, identity markers—a game designed to “dominate and expel” so-called “degenerates” from the platform. Alisha Karabinus has noted that during #Gamergate, trolls applied “powergaming” strategies to train members to perform networked harassment (Karabinus, 2019). As Discord attempts to distance itself from the white, male, straight, gamer userbase—and toxic gaming masculinity—that built its platform, this gamified hostility repeats itself across Discord, and is rooted in its structural values.

The aggressive, outward rhetorical movements of these networked hate communities stand in stark contrast to Discord’s promised user experience. Discord relies on the perception of anonymous, safe, empowered users able to curate communities to their own tastes, as opposed to those of an algorithm or an aggressive public. Jason Citron, in his interview with NPR, promises that through Discord’s proactive moderation strategies, “if someone tries to create a group . . . and that group is based around a topic that violates our guidelines . . . We shut it down” (NPR.org, 2021). Contrary to this promise, however, a large number of Discord’s users are in an active state of war, facilitated by third-party search functions. Disboard is a crucial example of this. The egregious discourse in public server descriptions aggressively pollutes Disboard’s search with the express intent to cause harm to vulnerable targets. Servers such as “The Final Remnant’s PUBLIC RAID REQUESTS” offer to harm other communities on demand as a recruitment strategy. Raid servers, anti-servers, and recruitment servers are able to exist because of the third-party affordances of Disboard, and they would have no easy target for their antagonism, or easy mode of refounding, without the streamlined search functions Disboard provides.

It is worth remembering that the presence of extremism on Discord has real, deadly consequences beyond virtual spaces, as the violent events of the “Unite the Right” rally grimly illustrate. While Discord has attempted to limit the scope of its accountability, our findings indicate that until the networked practices of these communities are addressed in their full complexity, white supremacist and hateful servers will continue to proliferate on and through Discord.

Conclusion

Gillespie (2010) writes that platforms “seek protection for facilitating user expression, yet also seek limited liability for what those users say” (p. 347). In Discord’s unique case, the company has sought increased responsibility for the legacy of its userbase, and has instituted increased moderation as part of its public rebranding. In addition, Discord’s model does follow several of Gillespie’s recommendations of what platforms should be: they are pointedly transparent about their moderating practices, and break down actions taken into easily processable transparency reports. They also “reject the economics of popularity” by leaving content algorithmically uncurated (Gillespie, 2018). Discord deletes rule-breaking servers and is clear about the actions it takes with user data: all of these are important, responsible features of social media platforms. Yet because these commitments exist adjacent to an unacknowledged network of toxicity, and because Discord allows sites like Disboard to market based on free-for-all “popularity principles,” they do little to stem the ever-encroaching waves of networked harassment which threaten the idyllic user experience Discord sells.

This said, to label an entire platform—especially a platform that hosts tens of thousands of queer-support and activist communities—as holistically “toxic” is reductive and unhelpful (Brown, 2020). Our assessment of these toxic networks does not define Discord, and we reject the idea that Discord is in itself “hateware” (Brown and Hennis, 2019). Instead, we hope to frame Discord’s neoliberal attempt to use “expanding diversity” as a problematic marketing tactic, and encourage more meaningful interventions. Discord’s toxic technoculture exists as the albatross around its neck; its persistence threatens the safety of marginalized users both on- and offline. However, our findings indicate an opportunity for Discord to truly address the networked implications of hateful user practices on and through its platform. Instead of eliding the problematic structures that have emerged from Discord’s user ecology, a meaningful intervention would involve (1) recognizing the role of third-party actors (like bots and bulletin sites) in shaping Discord’s culture; (2) building and maintaining a comprehensive search feature that Discord can moderate and take responsibility for; and (3) acknowledging the proliferation of hateful communities and approaching bans and removals from a networked perspective, ensuring that hate groups cannot easily re-establish themselves. If Discord were to provide and manage its own comprehensive search system, it could take direct action to prevent the networking of hateful communities—but would also have to publicly acknowledge the presence of such groups. While Discord has rhetorically distanced itself from these bad actors, this is an opportunity for Discord to meaningfully inhabit the inclusive future it actively markets.

Our analysis has been necessarily limited in scope: we focused explicitly on hateful tags and communities—but further research into queer-friendly, activist, and marginalized groups on Discord will be important. Similarly, we did not study the discourse within these servers—our analysis is longitudinal—but we hope our findings can help guide ethnographers and digital culture scholars moving forward. Finally, while we found globalized trends that suggest that hate networks on Discord permeate non-English-speaking communities, coinciding with global fascist movements, our study was largely limited to English-based tags. We hope this study can reemphasize the importance of social media analysis that addresses the role of third-party actors in reshaping platform ecologies and will encourage further longitudinal research into Discord communities. Our findings indicate a disconnect between Discord’s policies and its distributed user ecology, complicating the narrative of responsibility-as-moderation, and underscoring the importance of exposing the extended networks of social media systems.

Discord is trying to do both: provide a safe, curated list of public-facing servers through its internal search, while allowing third-party platforms free rein in curating smaller (and less manageable) communities that are rife with toxicity. But Discord cannot address the issue of small-community search when it has outsourced that function to sites like Disboard. Due to Discord’s unique structure as isolated nodes of private communities, it may ultimately be successful in eliding its hateful elements and attracting a new public userbase. However, without either acknowledging or distancing itself from its network of actual use, Discord will continue to host hate groups; toxic servers will continue to be deleted and remade, and they will continue to adapt in the deep structures of Discord’s distributed user ecology.

Footnotes

Authors’ note

This manuscript is not currently under review at any other journal. The authors contributed to the writing, intellectual content and conception of the manuscript and take public responsibility for the project.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.