Abstract

Misinformation and hate speech are prevalent concerns in social media research, as is the rise of far-right extremists, white supremacists, and conspiracy theorists. In response to these concerns about unethical behavior on social media, this article explores how underlying social bonds proposed by conspiracists are discursively negotiated in YouTube comments. Through close qualitative analysis of a corpus of comments about the Notre Dame Cathedral fire, a target of xenophobic and conspiratorial claims, the study identifies the range of recurrent textual personae who respond to the conspiracy theories in the videos. The analytical focus is on the values these personae express and the discursive legitimation strategies used to strengthen their claims. This article is methodologically grounded in a social semiotic approach. Seven textual personae are identified in the dataset, each realizing a particular patterning of social bonds and legitimation strategies. For example, “Educators” legitimated the authority of experts and explained why content was false, while “White Supremacists” and “Inciters” legitimated technology as evidence and negatively evaluated particular social groups. The method employed identifies the attitudinal positions and legitimation strategies that are at the heart of the various ideologies underlying conspiracy theories. It is a step toward developing approaches for combating misinformation and hate speech that target the key values of specific communities and avoid overgeneralizing the motivations for producing and consuming conspiratorial discourse. This approach is important since arguing logical points alone, without considering the key bonds people share, is unlikely to help in combating conspiratorial discourses.

Introduction: White Supremacists and Conspiracy Theories on YouTube

Misinformation and hate speech have become a prevalent feature of social media communication and a major social issue, having a significantly negative impact on groups such as Muslims, immigrants, and feminists (Hawkins & Saleem, 2021). YouTube is increasingly targeted by far-right extremists, white supremacists, and conspiracy theorists as a vehicle for sharing their deceptive ideas. Social media platforms, such as YouTube, are amplifiers and manufacturers of hate-filled discourses due to the affordances, business models, and cultures that they maintain through forms of “platformed racism” (Matamoros-Fernández, 2017) and other forms of platformed negativity. This article explores how the underlying social bonds proposed by conspiracists and white supremacists are discursively negotiated in YouTube comments responding to conspiracy theory videos about the 2019 Notre Dame Fire in Paris, and the types of textual personae or identities that tend to proliferate these social bonds. The Notre Dame Fire caused the cathedral’s spire to collapse and extensive damage to the roof and upper walls of the building. French prosecutors declared that there was no evidence of the fire being a deliberate act and that it was most likely caused by an electrical fault. As a news event, the fire received a global reaction and was one of the most googled news events of 2019 worldwide. Due to this global impact, conspiracy theories about how the fire started proliferated on social media, for example, in posts blaming Muslims for starting the fire and labeling it as a terrorist act. Conspiracy theories were widely shared on YouTube, and were popular in far-right and conspiratorial communities of the kind that we will explore in this study.

YouTube appears particularly amenable to platforming conspiracies and misinformation (Röchert et al., 2022). These are discursive practices that have also been closely linked to xenophobic discourses, such as Islamophobia (Farkas et al., 2018; Shooman, 2016) and antisemitism (Allington et al., 2021). Previous qualitative research into deceptive content on YouTube has explored right-wing extremist communities (Ekman, 2014; Levy, 2020; Lewis, 2018), racist influencers (Johns, 2017; Murthy & Sharma, 2019), populist YouTubers (Finlayson, 2020; Zuk & Zuk, 2020), and conspiracy videos (Allington & Joshi, 2020; Mohammed, 2019; Paolillo, 2018). Critical discourse analyses of YouTube videos have focused on understanding discourses of xenophobia (Asakitikpi & Gadzikwa, 2020), misogyny (Kopytowska, 2021), and racism (Hokka, 2021). However, there has yet to be a concentration of work aimed at understanding the kinds of identities that are realized through language in conspiratorial communities, and how the textual personae that proliferate in these communities discursively negotiate particular social bonds. Addressing this gap is a central goal of the present study.

YouTube comments in particular are important as an object of study because they provide a wealth of freely available public data that stretch across “international and inter-generational audiences” (Thelwall, 2018, p. 304). In addition, studying YouTube comments allows us to understand the participatory framework of YouTube as a media platform that enables interactions in multiple ways (Dynel, 2014). For example, YouTube comments can serve as a space to pseudonymously provide commentary on an issue addressed in a video while avoiding direct engagement with other users’ comments, or conversely, as a space to directly reply to and name users in interactions that extend beyond the content of the video. Studying YouTube comments also makes it possible to conduct a close linguistic analysis of clearly defined historical moments, an important criterion for understanding how white supremacist and conspiratorial values are mobilized in society.

This article will first explain the theoretical foundations of this study: how we define conspiracy theories and textual personae. It will then introduce the analytical method, including data collection and the multi-part analysis. This analysis involves an affiliation analysis, exploring how social bonds are negotiated in the comment discourse (Zappavigna & Martin, 2018), and a legitimation analysis (Van Leeuwen, 2007), considering how these bonds are discursively legitimated. After describing this method, the results will be presented according to the seven different types of textual personae determined from the analysis. The overall aim of conducting this hybrid affiliation and legitimation analysis is to provide a framework for identifying categories of textual personae in conspiratorial discourse. This involves understanding how textual personae construct their supposed evidence, as well as mapping out the relations between the values they share and the legitimation strategies they employ to justify their claims or discredit the evidence of others.

Defining Conspiracy Theories and Textual Personae

This study focuses on the different textual personae that emerge in the comment sections of YouTube videos that share conspiracy theories about the Notre Dame Fire. First, by conspiracy theories, we mean not just the fascination people can have about an event they believe is caused by a conspiracy, but also conspiracy theories as acts of political propaganda (Cassam, 2019). This involves using conspiracy theories as a means of promoting ideologies, such as white supremacism, which deliberately in- or out-group certain people or organizations, and create suspicion about an event. As this study will show through the concept of textual personae, conspiracist communities feature an array of different ideologies and targets of scrutiny.

The concept of a persona (or personae) refers to how one’s personality is presented or perceived by others, or in other words it is “an assemblage of the individual public self” (Marshall et al., 2019, p. 17). Recent interest in studying personae has emerged from studies of social media and micro-celebrity (Senft, 2013) that explore how individuals present their identities online in multiple ways (Marshall et al., 2015). This article adopts a social semiotic perspective on “textual personae” in terms of how persons and personalities commune in discourse (Martin, 2009; Zappavigna, 2014b). The linguist Firth (1950) introduced the idea of “bundles of personae,” that is, how personalities are manifest through and interact in discourse, incorporating a range of dimensions and tendencies depending on the functional context of their communication. Firth’s conceptualization is useful as it offers the present study a way of understanding the identities negotiated in YouTube comments without reducing these to particular individuals or their traits. In other words:

“As Firth warns, it is not psycho-biological entities we are exploring, but rather the bundles of personae embodied in such entities and how these personae engender speech fellowships. We’re not, in other words, looking at individuals interacting in groups but rather at persons and personalities communing in discourse.” (Martin, 2009)

Linguistic explorations of personae have sought to understand a range of attitudinal alignments across different social contexts. In terms of social media discourse analysis, work has illuminated how different textual personae share particular patterns of values when aligning around bonding icons, such as coffee in Twitter discourse (Zappavigna, 2014a), how values about motherhood are negotiated (Zappavigna, 2014b), and the kinds of personae that are involved in internet hoaxes on YouTube (Inwood & Zappavigna, 2021). The concept has also been key to understanding how humor operates, for instance, via multimodal impersonation in stand-up comedy (Logi & Zappavigna, 2021), and in the negotiation of communal identities in conversational exchanges (Knight, 2010b).

This article develops this social semiotic perspective on textual personae and social bonds and applies it to conspiratorial discourse, arguing that by considering personae in terms of the tendency to negotiate particular values, we can understand the main social bonds that are at stake in misinformation and hate speech. Uncovering these bonds is the first step in developing methods to renovate and overcome these negative discourses, since studies have shown that they cannot be overcome through logical argument alone (Abraham, 2014; Cohen-Almagor, 2011; Van der Linden & Roozenbeek, 2020; Vraga et al., 2019). By conducting a close qualitative analysis of YouTube comments about the 2019 Notre Dame fire, a target of many xenophobic and conspiratorial claims, the study identifies a range of textual personae who respond to conspiracy theories presented in the videos. The interest is in the range of communicative strategies and values that can be realized linguistically, rather than in individual people or personality traits.

Methodology

This section will first outline the sampling strategies for creating the dataset of YouTube comments on videos about the Notre Dame fire. It will then introduce the affiliation analysis and the legitimation analysis undertaken in the study.

Data Collection and Sampling

A sampling strategy was developed to select a suitable number of videos and comments for qualitative analysis. YouTube Data Tools (Rieder, 2015) was used to identify videos related to the Notre Dame fire via YouTube’s application programming interface (API). The criteria for selection were that the videos were in English (due to the researcher’s linguistic background in light of the need to conduct complex discourse analysis), had garnered more than 10,000 views, had comments enabled, and were created in the 24-hour period after the Notre Dame fire occurred on 15 April 2019 (to focus on breaking news coverage of the event rather than its historical impact). The selected key words for the search query were: Notre Dame, notre dame paris, notre dame cathedral, notre dame de paris, and notre dame fire, based on the most common Google searches during the time the Notre Dame fire occurred. This generated a sample of 272 videos. A grounded theory sampling approach was then used to analyze the titles and descriptions of each video and determine whether the video represented factual news reporting (that either stated the situation was unknown or the fire was not deliberate) or misinformation and conspiratorial content (stating or speculating that the fire was deliberate). Due to the close analysis required for each video, a smaller dataset was sampled from the larger collection. This contained 15 videos, selected on the basis that they were under 15 minutes long, featured conspiratorial content about the fire, and had the highest number of views compared to other conspiratorial videos. A close discourse analysis was also conducted on the transcripts of all 15 videos to determine key social bonds and understand the types of conspiratorial content that emerged. The 15 selected videos, with a brief description of each video in terms of its macro-genre, are listed in Table 1.
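The two-stage video selection described above can be sketched in code. This is only an illustration under stated assumptions: the `Video` record and its field names are hypothetical (they are not the output columns of YouTube Data Tools), the `conspiratorial` flag stands in for the manual coding of titles and descriptions, and the start of the 24-hour window is passed in as a parameter because the exact cut-off time is not given in the text.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Video:
    # Hypothetical record; field names are illustrative only.
    title: str
    language: str
    views: int
    comments_enabled: bool
    published: datetime
    duration_min: float
    conspiratorial: bool  # result of manually coding title and description


def meets_initial_criteria(video: Video, window_start: datetime) -> bool:
    """Stage 1: English, more than 10,000 views, comments enabled,
    and published within 24 hours of the assumed window start."""
    window_end = window_start + timedelta(hours=24)
    return (
        video.language == "en"
        and video.views > 10_000
        and video.comments_enabled
        and window_start <= video.published <= window_end
    )


def select_close_analysis_set(videos: list[Video], limit: int = 15) -> list[Video]:
    """Stage 2: keep conspiratorial videos under 15 minutes,
    then take the most-viewed ones up to the limit."""
    eligible = [v for v in videos if v.conspiratorial and v.duration_min < 15]
    return sorted(eligible, key=lambda v: v.views, reverse=True)[:limit]
```

Keeping the two stages as separate functions mirrors the description: the first produces the broad sample, and the second narrows it to the small set suitable for close manual analysis.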

Table 1. Video Data Set.

To sample the comments from the video dataset, a mix of corpus-driven and grounded theory methods was applied. First, comments were extracted from the YouTube API using the YouTube Comment Suite (Wright, 2019), resulting in 33,870 comments in total. AntConc (a corpus linguistics software program) was then used to determine the most commonly occurring noun across the comments dataset (Anthony, 2014), “fire,” which appeared in 4,874 comments (see Table 2). This strategy enabled a more manageable set of comments to be sampled and provided consistency, as the sampled comments all discussed a core topic (in this case, the “fire”). Replies were excluded from this study because they were not significantly represented in this particular dataset. Instead, the dataset mostly comprised main comments that also tended to receive the most likes and dislikes on each YouTube video page, likely due to the affordances of the platform (replies are nested on the webpage and each comment thread needs to be individually clicked on by the user, thus replies generally receive less engagement). All the main comments from each video were then divided into three categories: “unproblematic comments” (e.g., stating that details about the fire were unknown or quoting trusted media sources that stated that the fire was not intentional), “problematic comments” (e.g., stating that the fire was intentional and blaming specific individuals/groups), or “neutral comments” (e.g., comments that expressed a vague stance or feeling toward the fire). Due to the polarized nature of the comment threads in this dataset, there were no examples of comments that simultaneously expressed both problematic and unproblematic content about the fire. From this, 50 comments were selected from each video: 25 “unproblematic comments” and 25 “problematic comments.” In total, this resulted in a dataset of 750 comments (15 videos × 50 comments) for a close manual discourse analysis.
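The comment sampling steps can be illustrated with a short sketch. Several things here are assumptions rather than the study's procedure: the dictionary keys (`video_id`, `text`, `is_reply`, `category`) are hypothetical, the `category` value stands in for the manual three-way coding, a whole-word regular expression approximates the AntConc noun search, and taking the first 25 comments in input order is a placeholder because the text does not specify how the 25 were chosen.

```python
import re
from collections import defaultdict


def sample_comments(comments, keyword="fire", per_category=25):
    """Group main comments that mention the keyword by (video, category),
    keeping at most `per_category` comments in each group.

    `comments` is an iterable of dicts with hypothetical keys:
    video_id, text, is_reply, category.
    """
    # Whole-word, case-insensitive match (so "fired" does not count as "fire").
    pattern = re.compile(rf"\b{re.escape(keyword)}\b", re.IGNORECASE)
    buckets = defaultdict(list)
    for comment in comments:
        if comment["is_reply"]:
            continue  # replies are excluded from the sample
        if not pattern.search(comment["text"]):
            continue  # keep only comments on the core topic
        if comment["category"] not in ("problematic", "unproblematic"):
            continue  # neutral comments are not sampled
        buckets[(comment["video_id"], comment["category"])].append(comment)
    # Placeholder selection rule: first N in input order (an assumption).
    return {key: items[:per_category] for key, items in buckets.items()}


def total_sampled(sample):
    """Total number of comments across all (video, category) groups."""
    return sum(len(items) for items in sample.values())
```

With 15 videos and two sampled categories of 25 comments each, `total_sampled` would return 15 × (25 + 25) = 750, provided every video has at least 25 qualifying comments in each category.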

Table 2. Commonly Occurring Nouns in the Comments Dataset.

Affiliation Analysis

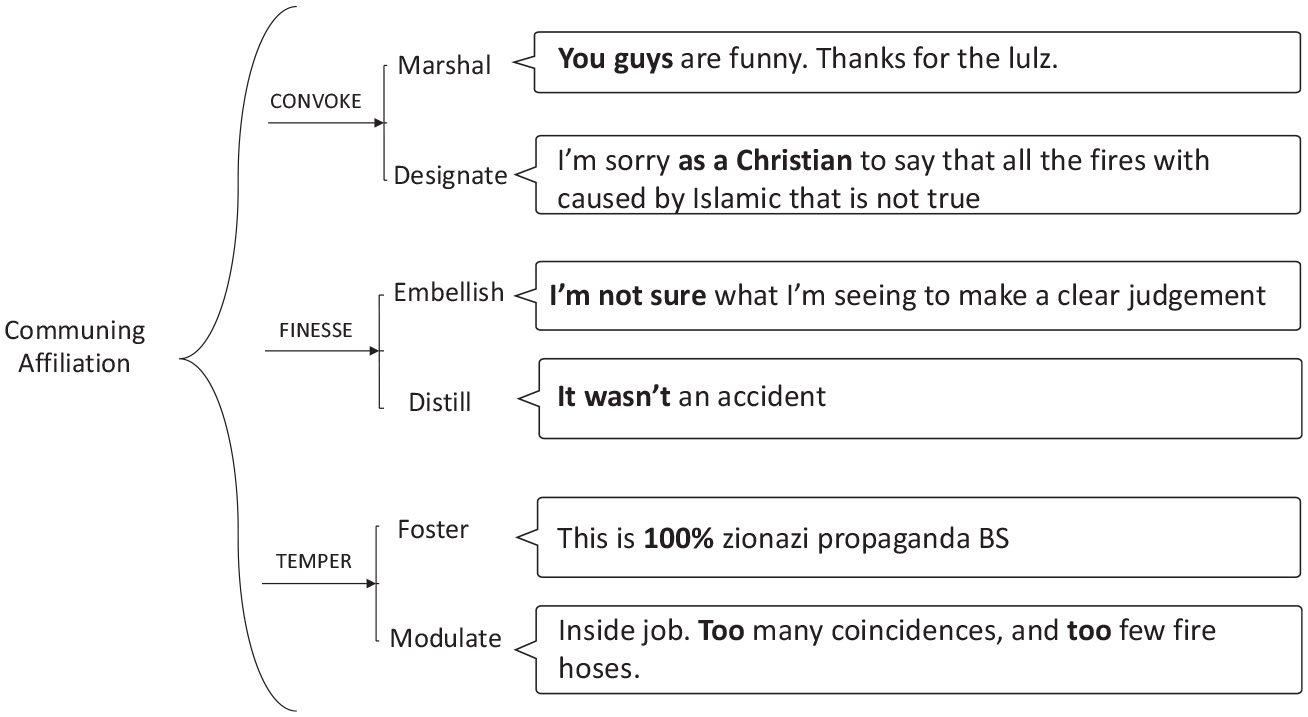

The close discourse analysis of the comments is grounded in the social semiotic perspective of systemic functional linguistics (SFL), which interprets language as a social meaning-making resource (Halliday, 1978). This study applies SFL methods at a discourse semantic level, meaning the level at which we can interpret “texts in social contexts” (Martin & Rose, 2003, p. 1). Affiliation analysis, an approach arising out of SFL, was used to understand the social bonds in the dataset that were realized in the values expressed in the language. Affiliation originated in Knight’s (2008, 2010a, 2013) work on conversational humor that explored how people commune, reject, or defer particular social bonds in interactions. The present study draws on an approach that developed out of this initial work to understand the kinds of “communing affiliation” that occur in social media discourse (Zappavigna & Martin, 2018). This approach considers how bonding (the social alignment of values) occurs in environments where users are not necessarily engaging with each other directly (Zappavigna & Martin, 2018). For example, this system was originally used to explore affiliation in mass hashtag use on Twitter (Zappavigna, 2018). It has also previously been applied to YouTube comments in areas such as autonomous sensory meridian response (ASMR) (Zappavigna, 2021) and internet hoaxes (Inwood & Zappavigna, 2021). The communing affiliation framework is shown in Figure 1, a system network that represents choices in meanings as square brackets (an OR relation) and simultaneous meanings through a brace (an AND relation). This system represents three key affiliation strategies:

Figure 1. Communing affiliation system.

Identifying a Bond

The bonds that are negotiated through the communing affiliation system just introduced are realized in discourse as “couplings” of attitudinal and ideational meaning. In other words, they are evaluations targeted at entities, occurrences, and phenomena. These couplings are annotated, using the following convention, adapted from Zappavigna and Martin (2018): [ideation: <<>>/ attitude: <<>>]
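For readers who want to record such couplings in a machine-readable form, the annotation convention can be sketched as a small data structure. This is a hypothetical illustration, not part of the study's method: the class name `Coupling`, the helper `tally_bonds`, and the example labels are all assumptions, and the attitude labels below are placeholders rather than categories taken from the analysis.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Coupling:
    """One ideation-attitude coupling: an evaluation aimed at a target."""
    ideation: str  # the entity, occurrence, or phenomenon being evaluated
    attitude: str  # the evaluation directed at that target

    def __str__(self) -> str:
        # Renders the coupling in the bracketed annotation style.
        return f"[ideation: {self.ideation} / attitude: {self.attitude}]"


def tally_bonds(couplings):
    """Count how often each coupling recurs across annotated comments."""
    return Counter(couplings)


# Placeholder labels for illustration only.
example = Coupling(ideation="video", attitude="negative evaluation")
annotation = str(example)
```

Making the class frozen keeps each coupling hashable, so recurring couplings can be counted directly when looking for bonds that are shared across many comments.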

The square brackets and “/” symbol are intended to represent the way an ideation-attitude coupling forms a single value that is then open to negotiation via affiliation. In this article, examples of

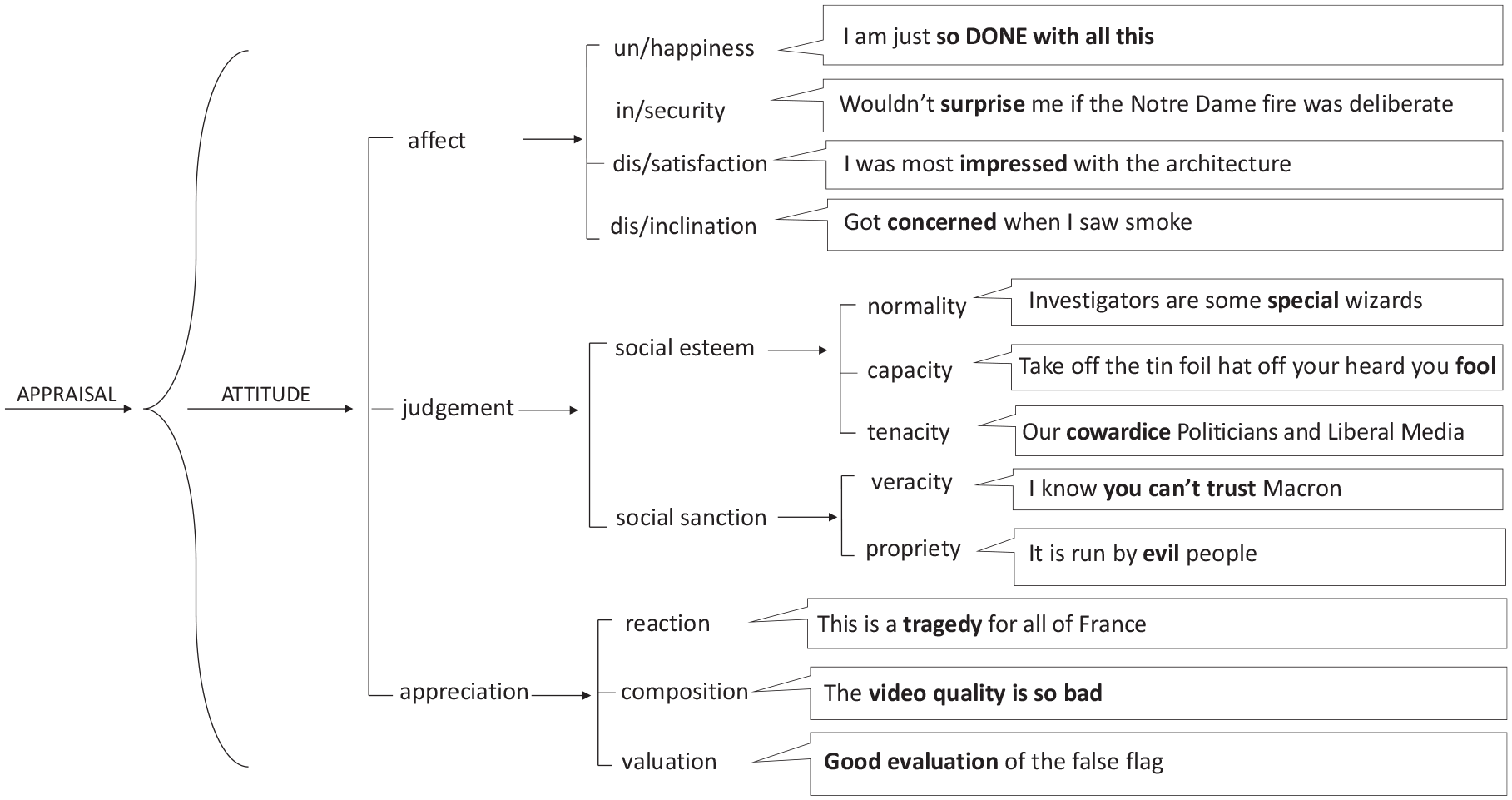

The

The system of

Figure 2. Attitude sub-systems.

Legitimation Analysis

The second analytical tool applied in this article is legitimation. Legitimation refers to how discourses establish credibility and how this “can be realised by specific linguistic resources and configurations of linguistic resources” (Van Leeuwen, 2007). In other words, people adopt legitimation strategies to answer the question, “Why should we do or believe this?” (Van Leeuwen, 2007). In this article, we consider both legitimation (how someone or something is portrayed as credible) and its reverse, delegitimation (how someone or something is portrayed as not credible). The legitimation framework has been previously applied to political fake news in Nigeria (Igwebuike & Chimuanya, 2020), and delegitimation has also previously been applied to internet memes about US presidential candidates (Ross & Rivers, 2017).

This study focuses on authorization as legitimation according to

A summary of the adapted authorization category of the legitimation framework by Van Leeuwen (2007) is shown as the system network in Figure 3.

Figure 3. Authorization category.

Results

By understanding patterns in affiliation and legitimation in the YouTube comments, we can develop categories of textual personae and understand the key bonds that are at stake in the discourse. As mentioned earlier, the term “persona” is used here instead of people, individuals, or personality traits, to reflect the social semiotic perspective that identities are discursively enacted and relational, meaning that an individual could enact a range of personae, depending on the particular textual choices they make in a YouTube comment.

This section reports the results of the close affiliation and legitimation analysis across the different types of personae that these analyses were used to identify. For each persona the most common legitimation strategies will be specified and how these strategies relate to persuasively bolstering each persona’s key values. The figures in this section present affiliation and legitimation analysis using the following conventions:

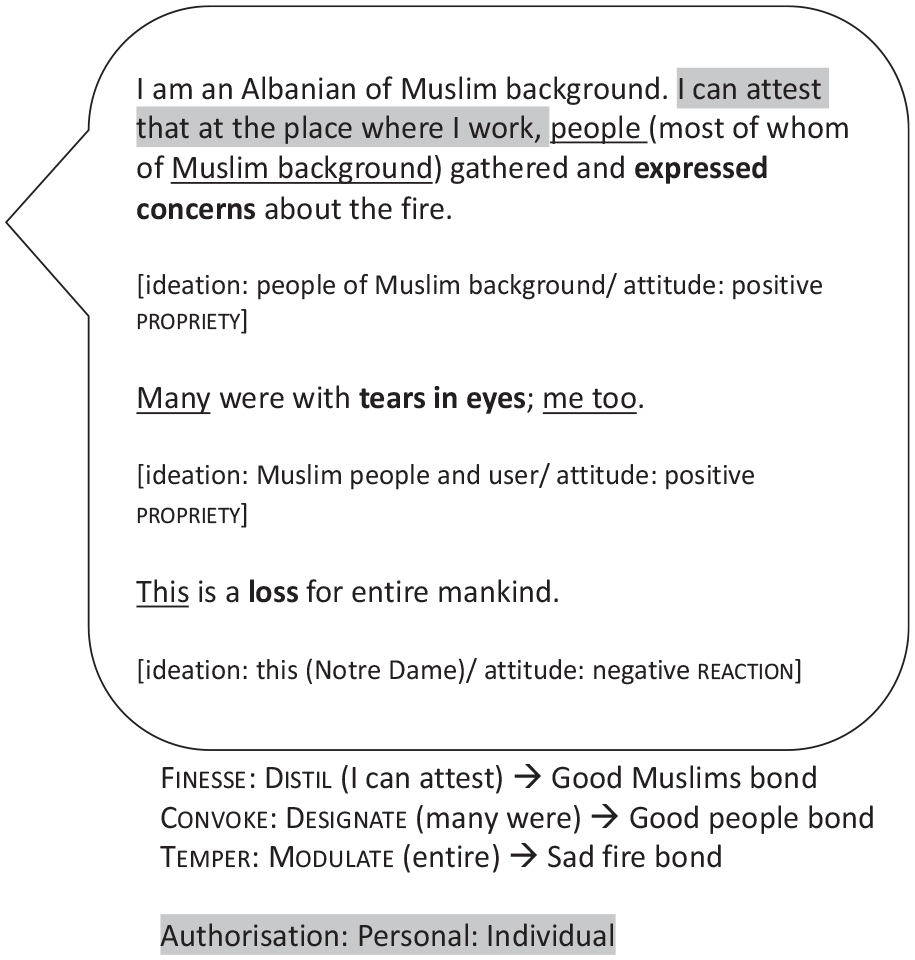

The quotation bubble separates the text and the ideation-attitude coupling analysis from the affiliation and legitimation analyses.

→ arrows represent how the ideation–attitude couplings are tabled as key bonds.

Gray highlights represent the legitimation strategies in the texts and the use of ‘’: as in “

Anti-Elitist Persona

Anti-Elitists focused on depicting politicians and authorities as evil via negative

Figure 4. Example of an Anti-Elitist comment.

Comments from Anti-Elitists had in common negative

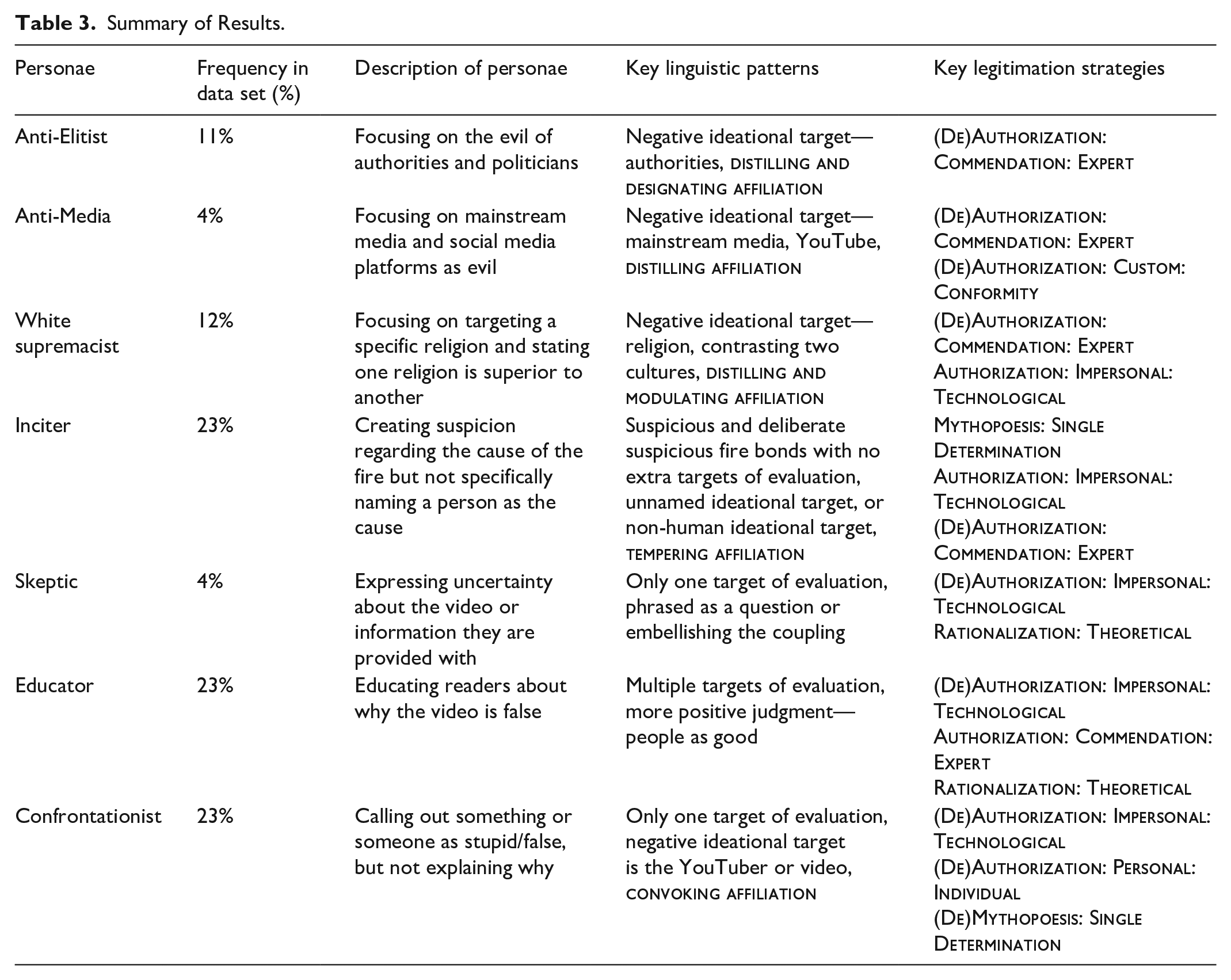

Table 3. Summary of Results.

Anti-Media Persona

The Anti-Media persona tended to position the mainstream media (also expressed as MSM) and social media platforms as evil through negative

Figure 5. Example 1 of an Anti-Media comment.

Another comment (Figure 6) also presents a media company, in this case Buzzfeed, as an unreliable source and a target of invoked negative

Figure 6. Example 2 of an Anti-Media comment.

The Anti-Media persona was quite similar to the Anti-Elitist persona in terms of affiliation strategies and the prominence of negative

Inciter Persona

Inciters tended to propagate suspicion regarding the cause of the fire, without naming a particular perpetrator. Instead, an object or anonymous entity, such as the “Deep State” or “Direct Energy Weapons” (DEWs), was mentioned. In terms of legitimation, Inciters engaged primarily with one of three strategies: legitimizing their own narratives, legitimizing technology as evidence, and delegitimizing the words of experts. An example of their focus on non-human entities is shown in Figure 7 that tables a

Figure 7. Example of an Inciter comment.

From these examples, we can see that Inciters predominately direct negative

White Supremacist Persona

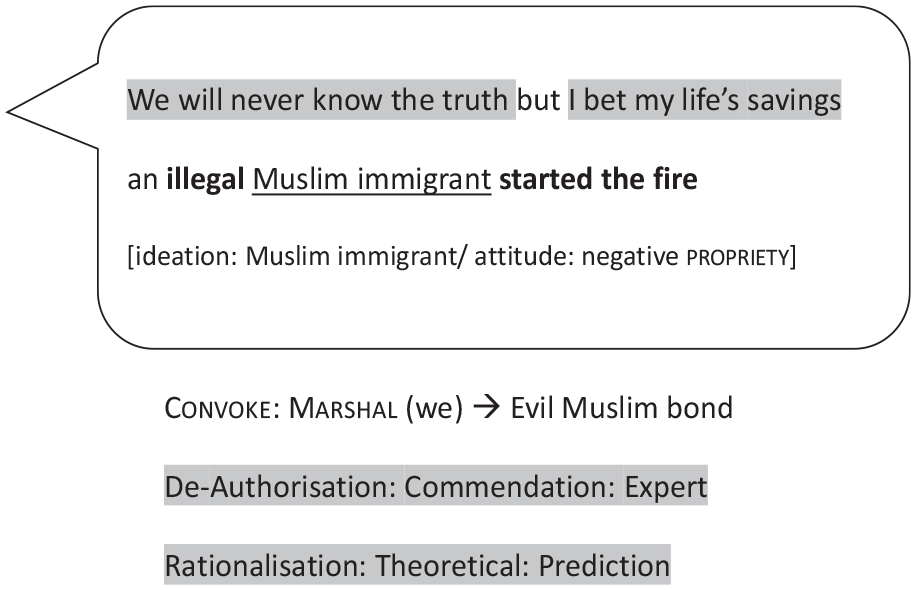

White Supremacists focused on targeting a particular religion via negative

Figure 8. Example 1 of a White Supremacist comment.

In Figure 9, references to technology are used by the commenter as evidence supporting their claim that Muslims are evil. Muslims and “Muslim immigrants” are depicted with negative

Figure 9. Example 2 of a White Supremacist comment.

These examples illustrate how White Supremacists are preoccupied with negatively judging non-Western religions and cultures and with setting up a dichotomy between the West and another religion or culture. While there were some instances of negative judgment toward Jews, Muslims were predominantly attacked by White Supremacists. In some cases, bonds moved away from concern about the fire to focus on anti-immigrant and White Supremacist discourses by directing hatred toward non-Western cultures. In terms of legitimation strategies, White Supremacists, like other personae sharing false information, relied on videos and screenshots as credible evidence, while delegitimizing the voices of politicians and experts.

Confrontationist Persona

Confrontationists called out a video for being false or called out a YouTuber for lying. Comments by Confrontationists tended to be quite short and did not explain the reasoning behind why the video was false. In contrast to the personae sharing false information, Confrontationists focused on delegitimating strategies, usually delegitimating the sources of evidence other YouTubers used to support their claims. For example, Confrontationists delegitimated the videos other personae tried to use as evidence for their claims, delegitimated the voices of YouTubers, and delegitimated the conspiracy theories set out by YouTubers.

In Figure 10, we can see a very short comment by a Confrontationist that directly calls out the YouTube video for being false. The word “clickbait” and the emoticon “:o(” resembling a sad face contribute to the negative

Figure 10. Example 1 of a Confrontationist comment.

Figure 11 is another short comment that directly calls out YouTubers. “You Guys” is a

Figure 11. Example 2 of a Confrontationist comment.

Overall, the discourse of Confrontationists is characterized by “calling out” the YouTuber who created the video or the people who spread conspiracy theories. To evoke this “calling out,”

Educator Persona

In contrast, Educators focused on explaining why the content of a YouTube video is false, rather than simply criticizing a YouTube video for being false. These comments featured multiple bonds. While the videos and screenshots that YouTubers referred to were delegitimized by Educators, the legitimation of rational thinking and expert opinion was emphasized. In Figure 12, the “allahu ackbar audio” or “meme” has invoked negative

Figure 12. Example 1 of an Educator comment.

Comments by Educators also differed from other personae due to the more frequent use of positive evaluation. For example, in Figure 13, the ideational target is shifted away from xenophobic views (negative

Figure 13. Example 2 of an Educator comment.

In general, comments by Educators differed from those of other personae due to a mix of different ideational targets, more instances of positive evaluation, and a focus on explaining why a person was incorrect. Educator (de)legitimation strategies were also more diverse compared to other personae, with individual comments very commonly featuring multiple legitimation strategies.

Skeptic Persona

Skeptics made comments casting doubt on the YouTube video or asking for more clarity. This persona delegitimized the idea of using YouTube videos as evidence and legitimized rational thinking by questioning the veracity of the YouTube video. In Figure 14, it can be assumed that the commenter is discussing the video when they state that “I’m not sure what I’m seeing,” therefore “video” has invoked negative

Figure 14. Example of a Skeptic comment.

In terms of linguistic strategies, the Skeptic persona is distinguished by shorter comments, questions, and

Summary of Results

The personae identified in the above analysis can be mapped out in a bond cluster diagram to better understand how the key bonds in their discourse relate to each other (Figure 15). The bond cluster in Figure 15 is illustrative of the key discoveries from a qualitative analysis; hence it should not be read as a quantitative social network analysis. The lines in the diagram represent the multiple bonds that the personae in the dataset held, as well as the bonds that were shared across different personae. The most prominent bonds shared among several personae are shown in bold, while bonds that are specifically related to one persona are depicted at a smaller scale. From this diagram, we can see that there are two core networks in terms of the bonds that they share. The first network consists of the “Anti-Elitist,” “Anti-Media,” “White Supremacist,” and “Inciter” personae (shown in dark gray). This network represents personae that shared conspiratorial information about the Notre Dame fire. The second network consists of the “Skeptic,” “Confrontationist,” and “Educator” personae (shown in light gray). This network represents personae that shared anti-conspiratorial information about the Notre Dame fire. Thus, this diagram is useful for showing the “macro-personae” categories that exist in terms of grouping personae, as well as how each persona is unique in the particular bonds that it represents. From this particular case study, we can see that there were two distinct networks of macro-personae with no crossovers in core bonds. This shows the polarized nature of YouTube comment threads and suggests how the absence of shared bonds can result in animosity.

Figure 15. Bond cluster diagram of key personae.

In addition, another diagram has been created to illustrate the relationship between the key bonds and key (de)legitimation strategies discussed in the results. The diagram shown in Figure 16 illustrates the most common legitimation strategies for each persona and the most common bonds that corresponded directly to these strategies. The diagram only considers the four main categories of legitimation and is divided into legitimate and delegitimate sub-categories. Yin-Yang symbols that are in bold represent the bonds that were held by those sharing anti-conspiratorial information about the fire, that is, Skeptics, Confrontationists, and Educators. The other Yin–Yang symbols represent the bonds held by personae sharing conspiratorial information about the fire, that is, Anti-Elitists, Anti-Media, White Supremacists, and Inciters. From the diagram, we can see that

Figure 16. Diagram of key bonds and key (de)legitimation strategies.

A summary of the key personae identified, and the key linguistic patterns and legitimation strategies for each persona, is shown in Table 3. From this table, we can see that each persona has a unique linguistic profile. For example, the Anti-Elitist persona is focused on negatively evaluating and delegitimizing authorities, as well as using

Conclusion

This article has explored the different affiliation and (de)legitimation strategies used by YouTube commenters engaging with conspiracy theories and white supremacist values. By considering different linguistic patterns we were able to identify various textual personae in the dataset. In addition, by making the (de)legitimation framework more delicate, we were able to more clearly delineate textual personae who share anti-conspiratorial information from personae who share conspiratorial information in terms of how they construe what counts as evidence. Furthermore, the (de)legitimation framework was useful in explaining the significance of the bonds that were identified, in the sense that the values personae affiliated with were also the main targets of their (de)legitimations.

A methodology of the kind explored in this article does have several drawbacks. First, the intensive manual analysis conducted on the comments is difficult to scale to larger datasets. Simplifying or partially automating the analysis would result in a loss of reliability and analytic detail. For this reason, the study remains qualitative and thus may not meet positivist understandings of generalizability. In addition, the approach adopted in this article should not be viewed as a single solution to combatting issues of conspiracy and white supremacy, rather it is intended to work in conjunction with other approaches to leverage the power of interdisciplinary insights.

Beyond this particular case study, the methodology and results of this research are relevant to broader qualitative and quantitative studies regarding the discourses of white supremacy and conspiracy. Being able to linguistically identify different personae in discourse means that specific solutions can be developed for specific communities, rather than overgeneralizing conspiratorial discourse. In addition, understanding the personae in comment sections can aid in the creation of manual and automated detection processes for identifying the specific networks of values that charge white supremacy and conspiratorial discourse. This approach expands upon research already conducted on YouTube comments, the majority of which is quantitative (Allington & Joshi, 2020; Miller, 2021; Röchert et al., 2022). These studies, however, do not engage with the concept of textual personae, despite its potential to afford more accessible understandings of the construction of conspiracist and white supremacist discourse and to aid strategies for counteracting this dangerous language. For example, social semiotic and SFL approaches to studying white supremacy and extremist communication have been shown to be useful in designing educational materials for teaching students how to identify the language used by white supremacists and extremists, as a means of preventing their radicalization, as discussed by Szenes (2021). The notion of personae allows us to start unpacking the distinct linguistic profiles of a variety of communities with an array of values, and also to integrate different research methods by making shared linguistic terminology explicit.

The affiliation framework detailed in this article has previously been applied by Inwood and Zappavigna (2021) to the genre of internet hoaxes on YouTube. While some personae, such as “Skeptics,” are shared across both studies, other persona categories differ, particularly as the studies represent two different registers, that is, political versus non-political discourse. It would therefore be interesting to consider which persona categories are portable enough to apply to multiple case studies, and which categories depend on the particular case study context. Future research, in applying our methods to domains such as health misinformation, might consider why some kinds of personae span multiple contexts. This work might also refine the analytical approach to more closely identify the patterns in language relevant to these potential domains, and thus further specify relationships between affiliation strategies, legitimation strategies, and bonds.

Footnotes

Data Disclosure Statement

The data that support the findings of this study are available from the corresponding author, Olivia Inwood, upon reasonable request.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: O.I. receives an Australian Government Research Training Program Scholarship and additional funding support from the Commonwealth of Australia.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Commonwealth of Australia.