Abstract

In November 2018, after being suspended from Apple’s App Store for hosting child pornography, Tumblr announced its decision to ban all NSFW (not safe/suitable for work) content with the aid of machine-learning classification. The decision to opt for strict terms of use governing nudity and sexual depiction was as fast as it was drastic, leading to the quick erasure of subcultural networks developed over a decade. This article maps out platform critiques of and on Tumblr through a combination of visual and digital methods. By analyzing 7306 posts made between November 2018 (when Tumblr announced its new content policy) and August 2019 (when Verizon sold Tumblr to Automattic), we explore the key stakes and forms of user resistance to Tumblr’s “porn ban” and the affective capacities of user-generated content to mobilize engagement.

Introduction

In November 2018, after being suspended from Apple’s App Store for hosting child pornography, Tumblr announced its decision to ban all NSFW (not safe/suitable for work) content from the platform with the aid of machine-learning classification. Launched in 2007, Tumblr had grown into a central hub for body-positive, gender nonconforming, queer, and art-related platform subcultures, many of which made broad use of the service’s lenient content policy allowing for sexually explicit images, text, and animated GIFs (e.g. Byron, 2019; Cho, 2018; Tiidenberg, 2019; Ward, 2019). The decision to opt for strict terms of use governing nudity and sexual depiction was as fast as it was drastic, leading to the blunt erasure of networks and visual archives developed over a decade.

Although machine learning has long been used to identify child pornography on social media platforms (through solutions such as Microsoft’s PhotoDNA), Tumblr only resorted to automated filtering after the NSFW ban. Its abrupt changes in content policy speak of increasingly normative governance strategies and their impact on platform subcultures (e.g. Duguay et al., 2018; Witt et al., 2019). On one hand, social media companies encourage users to share everything: “Use it however you like” and “Put anything you want here” remain Tumblr’s key commercial invitations to date. On the other hand, the algorithmic interventions that platforms make to render some forms of content more visible than others always disrupt and rearrange existing cultures of participation (Bucher, 2012; Gillespie, 2018).

On Tumblr, the implementation of strict artificial intelligence (AI)-driven content recognition without properly training the flagging algorithms resulted in fully clothed selfies, pictures of whales, dolphins, and Garfield being labeled as “sexually explicit” and removed from public consumption (e.g. Jackson, 2018). Some users were annoyed by the glitched realization of the porn ban in general. Others reacted with a mixture of disappointment, anger, and bemusement to the wordings in the new moderation guidelines forbidding “female-presenting nipples” in particular (e.g. Greene, 2018). Overall, the decision to ban porn attracted sarcastic commentary and criticism well beyond the platform itself, as did Tumblr CEO Jeff D’Onofrio’s claim that the motivation behind this all was simply to build a “new, more positive Tumblr” (e.g. Liao, 2018).

Under these conditions and considering the continuing operation of porn bots on the site (Arora, 2019), it was not surprising that users cried “censorship.” Although this reaction was not entirely accurate—banned platform communities continue to participate and develop alternative forms of sharing content (Gerard, 2018)—it was central to the accumulation of forces that mobilized resistance to the “deplatforming” of user communities (Molldrem, 2018; Rogers, 2020). Animated by the affective charge of disappointment and dismay, user critique positioned the hiding and removal of content as counterintuitive within the social media attention economy premised on optimized accessibility while also arguing for the value and importance of content thus deplatformed (Engelberg and Needham, 2019).

This article examines user critiques of nonhuman, algorithmic interventions in Tumblr subcultures through memes and other forms of vernacular participation. By mapping networked resistance on Tumblr following the “porn purge” through a combination of visual and digital methods, we specifically address the relational, networked, and affective qualities of NSFW censorship critique. Our dataset consists of 7306 Tumblr posts published with #censorship co-hashtags between November 2018 (when Tumblr announced its new content policy) and August 2019 (when Verizon sold Tumblr to Automattic, the owner of WordPress). Our analysis draws together close and distant “quali-quantitative” (Venturini and Latour, 2010) techniques and composite forms of data visualization (Niederer and Colombo, 2019).

Focusing on the networks of visual and textual associations within the dataset, we first examine their embeddedness in the attentional dynamics of commenting, tagging, and receiving notes, as afforded by the Tumblr platform. Second, we analyze in more depth their situated relevance by looking at how users repurposed screenshots and memes in the wake of the porn ban. Third and finally, we examine the engaging potential of user contributions and the ways in which platforms filter and circulate them, highlighting shifts in relations of relevance that shape the communicative and affective dynamics of social media. Through the analysis of the specific event of the Tumblr porn ban, we suggest that our methodological experimentations open up empirical ways of understanding social media content in its capacity to generate, capture, transport, and transform affect.

On method: affective entanglements of platform content

Several strands of inquiry within science and technology studies (STS) have recently focused on the intensification of topical affairs within networks of interlinked and intertextual visual material. “Media-native” digital methods repurpose the increasing imbrication of images in platform technicity to map out data-intensive qualities of social media content (Niederer, 2018; Rogers, 2019). Visual methods for networked images treat visual phenomena not only in their multi-layered relations with the digital settings that render them analyzable as copies in motion or flows of information and meaning (Niederer and Colombo, 2019; Steyerl, 2009). They equally investigate digital image formations as areas of uncertainty, creativity, amplification, interest, and disagreement formed through a variety of contributions—human, nonhuman, and environmental alike (Rose, 2016; Rose and Willis, 2018).

In this context, hashtag-based ensembles of visual circulation have been identified as valuable entry points to platform-specific cultures of use (Gibbs et al., 2015; Highfield and Leaver, 2016), and as patterns of content distribution across platforms (Niederer and Colombo, 2019; Pearce et al., 2018). Building on these bodies of work, we develop yet another analytical trajectory arising from the specificity of digital visual content and its engaging potential. We explore images as mediators of affect through a range of data visualizations, arguing for the necessity to account for the experiential qualities of social media and the ways in which these are rendered tangible through networked expressions of engagement.

Understood as intensities that come about in, and give shape to, relations between human and nonhuman bodies, affect makes user–platform transactions matter. Moving the bodies involved from one state to another, affect is an issue of relational impact that oscillates in its qualities and intensities (Seigworth and Gregg, 2010). Social media inquiry has attended to contingent publics (Papacharissi, 2015; see also Lehto, 2019) and contagious viral phenomena (Sampson, 2012; see also Pilipets, 2019) shaped by affective intensities, as well as to how affect operates as a binding force that pushes people to engage and helps to generate more data for the platforms to harvest and monetize (e.g. Coté and Pybus, 2007; Dean, 2010; Karppi, 2018).

Constitutive of the attentional dynamics of social media platforms, affective networks that emerge when we tag, like, share, and comment on content are informed by platform-specific “grammars of action” (Agre, 1994; Gerlitz, 2016). These grammars not only technically embed ambiguous platform experiences and expressions into reproducible action formats (e.g. through hashtags or reaction buttons) but also enact platform activities through which content resonates and spreads, thus shaping the ways in which users interact and platforms filter and recommend content. Affective encounters in social media translate into valuable engagement, lending platform transactions a particular kind of relevance.

Even when pervasive and tangible in its impact, affect in social media is elusive and its circuits are methodologically not easy to map, let alone fix in terms of measurable data quantity. Affective intensities transform the states of relations in which they are registered: this generates dynamic platform values, such as influence or conversation and fuels the social media economy operating with user data (Gerlitz and Helmond, 2013). In this double-fold sense, hashtag-mediated visual contributions are not merely traces or indexes of user engagement: they equally involve affective capacities that build up, intensify, stagnate, and shift in the course of their circulation (see also Geboers and Van De Wiele, 2020).

Our analysis focuses on content marked as, or commenting on the hashtag NSFW, which was removed from Tumblr search options in the course of the porn purge. This makes it possible to examine how automated hashtag filtering and content recognition fuel user resistance to platform interventions. Despite NSFW communities long thriving on Tumblr (Tiidenberg, 2015, 2019), the hashtag lost its power to give shape to connections of shared content between blogs. Our analysis then shifts from consideration of NSFW hashtag practices to visual forms of NSFW censorship critique in the wake of the porn ban, using these as sites of methodological experimentation.

To build our dataset, we first explored co-hashtag relations of #censorship (5673 posts) on Tumblr between 1 November 2018 and 31 August 2019. After identifying the main relevant patterns of association through Gephi network analysis, we made additional tag-based queries for #staff (15,738 posts), #tumblr purge (10,494), #female presenting nipples (2622), #tumblr apocalypse (2087), #flagged (1462), #censored (1349), #yahoo (2268), #verizon (1118), #bots (938), #pornbots (659), and #spam bots (503). Using Tumblr Tool (Rieder, 2015), we opted for querying each term per month to be able to trace transformations in associations over time. Merging the data in Google Sheets and removing the duplicates resulted in a dataset of 44,911 posts. In accordance with our more specific research interests, we then queried the terms “porn” and “NSFW” using the post caption section: this gave us a filtered output of 7306 unique contributions containing 1960 images. The specificity of our analysis then owes to the restrictions introduced in Tumblr use, these limitations being our key focus of investigation.
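The merging, deduplication, and caption filtering described above can be sketched as follows. This is a minimal illustration rather than the workflow actually used (which relied on Tumblr Tool and Google Sheets): the field names `id` and `caption`, the toy records, and the choice of case-insensitive substring matching are all assumptions made for the example.

```python
import re

def merge_and_filter(batches, terms=("porn", "nsfw")):
    """Merge per-tag query results, drop duplicate posts, keep caption matches."""
    unique = {}
    for batch in batches:
        for post in batch:
            # A post retrieved under several tags is kept only once.
            unique.setdefault(post["id"], post)
    # Assumption: case-insensitive substring match, so "pornbots" also counts.
    pattern = re.compile("|".join(re.escape(t) for t in terms), re.IGNORECASE)
    return [p for p in unique.values() if pattern.search(p.get("caption", ""))]

# Toy records standing in for two tag queries (hypothetical data).
censorship = [
    {"id": 1, "caption": "The porn bots are still here"},
    {"id": 2, "caption": "my art got flagged"},
]
staff = [
    {"id": 1, "caption": "The porn bots are still here"},
    {"id": 3, "caption": "NSFW artists are leaving"},
]

filtered = merge_and_filter([censorship, staff])
print([p["id"] for p in filtered])
```

Whether a caption query should match whole words only or any substring changes the size of the filtered output, so the matching rule is worth documenting alongside the resulting counts.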

In what follows, we explore how different combinations of post captions, images, and hashtags, when passing from one contextual state of relations to another, produce mutually intensifying and overlapping spaces of platform resistance, critique, concern, humor, and care. To examine the forms of networked affect (Paasonen et al., 2015) emerging within this all, we combine different forms of visual analysis, emphasizing data-relational characteristics of content circulation on Tumblr. Data visualizations focusing on transformations in relations and intensities of user concern over time reveal the overlapping trajectories of NSFW censorship critique within the porn ban (e.g. nonhuman interventions of porn bots and user complaints about Tumblr staff). Data visualizations repurposing combinations of hashtags, images, and platform metrics provide a situated view of the actors (filtering algorithms, users, and porn bots) and action formats (e.g. flagging, screenshotting, following, sharing) involved. Switching between the observation of aggregate data patterns and qualitative close-looking, our analytical techniques make it possible to account for the fluidity and contingency of online interactions (Venturini and Latour, 2010) and the ways in which socio-technical environments of networked visual material mediate affect.

Taken together, these different perspectives tell us a story of user resistance to algorithmic interventions in platform cultures. Like any analytical perspective, it does not tell the whole story (cf. D’Ignazio and Klein, 2020) but lets visual and textual Tumblr censorship critique speak of forms of engagement that emerged and were made invisible within the Tumblr porn ban. Moving from one context and analytical technique to another, we argue for taking seriously both the engaging potential and the material embeddedness of user–platform interactions.

Mapping Tumblr censorship: NSFW beyond the hashtag

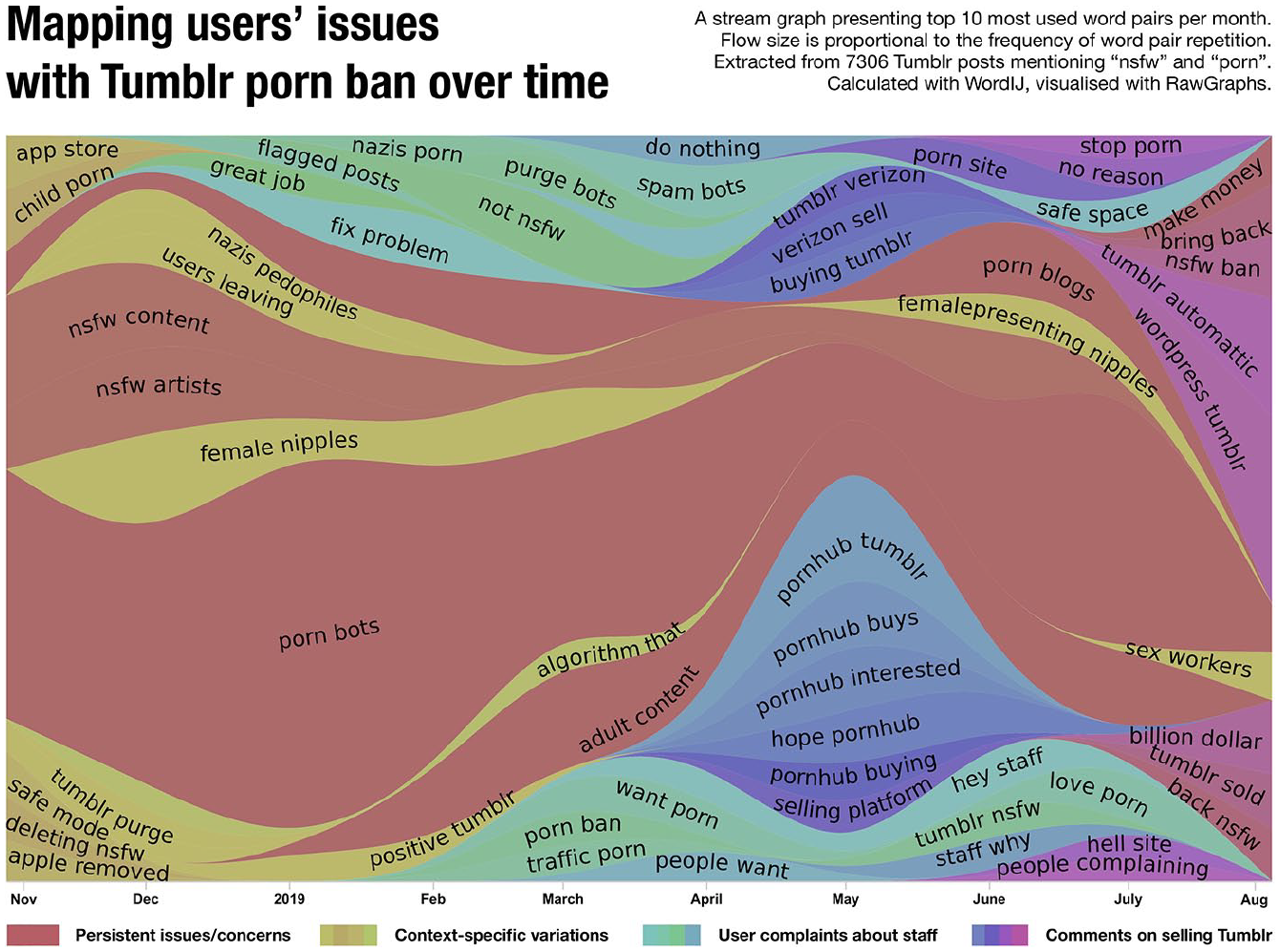

A semantic analysis of 7306 post captions identified by querying the terms “NSFW” and “porn” connected to newly prohibited visual content provides insight into users’ key issues with Tumblr’s new moderation policy (Figure 1). The main aim of cross-reading the posts (before further analyzing visual co-tag relations) was to provide more context for user responses to content restrictions. This allowed us to investigate how the marker NSFW operated as a networked source of alignment even when not used as a hashtag assembling content into searchable feeds. Figure 1 visualizes variations in the intensity of user comments by presenting the 10 most frequently used word pairs per month in a streamgraph. When examining the textuality of Tumblr posts as affectively charged associations that overlap and shift over time, the streamgraph identifies different trajectories of critique that brought together users’ context-specific concerns with more persistent resistance to porn bots invading and NSFW artists leaving the platform.

Ten most used word pairs per month extracted from 7306 Tumblr post captions containing the terms “NSFW” and “porn.” Flow size is proportional to the frequency of word pair repetition, descending count from 1908 (“porn bots” in December 2018) to 11 (“do nothing” in April 2019). Calculated with WordIJ (Danowski, 2013), visualized with RAWGraphs (Mauri et al., 2017).
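As a rough illustration of the input to such a streamgraph, the sketch below counts adjacent word pairs per month and keeps the most frequent ones. It is not a reimplementation of WordIJ, whose windowing and word-exclusion options are not reproduced here; the tokenizer, the `(month, caption)` input format, and the toy captions are assumptions.

```python
from collections import Counter, defaultdict
import re

def top_word_pairs(posts, n=10):
    """Return the n most frequent adjacent word pairs for each month."""
    per_month = defaultdict(Counter)
    for month, caption in posts:
        # Simplified tokenizer (assumption): lowercase alphabetic words only.
        words = re.findall(r"[a-z']+", caption.lower())
        per_month[month].update(zip(words, words[1:]))
    return {month: counts.most_common(n) for month, counts in per_month.items()}

# Hypothetical captions keyed by posting month.
posts = [
    ("2018-12", "porn bots everywhere"),
    ("2018-12", "the porn bots follow me"),
    ("2019-01", "staff why"),
]

pairs = top_word_pairs(posts, n=1)
print(pairs)
```

Each month's pair counts would then become the flow sizes of a streamgraph, one flow per word pair.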

Tailored to the new terms of Tumblr use, the majority of reactions documented in Figure 1 revolved around the blurry lines separating porn from art, nudity, and entertainment. As sources of amplification, ambiguity and, in many cases, irony, the overlaps in user critique flesh out the main challenges of platform moderation: The immense scale of platform data feeding the moderation apparatus and the difficulties in accounting for context through machine-learning techniques (Gillespie, 2020).

Given users’ affective investments in NSFW Tumblr and the forms of sexual self-exploration and community-building that it allowed for, the palpable disappointment, sadness, and rage following the porn purge are easy enough to grasp (Paasonen, 2018; Tiidenberg and Whelan, 2019). Starting with the removal of Tumblr from Apple’s app store over child pornography issues in November 2018, user critique unfolded in sticky, repetitive word combinations constituting temporal contours of each issue: as in “tumblr purge,” “deleting NSFW,” “female nipples,” “users leaving,” “nazis, pedophiles,” “positive tumblr,” “flagged posts,” “fix problem,” “hell site,” “staff why,” “people complaining,” “verizon selling,” “pornhub interested,” “make money,” and “billion dollars”—the value loss that the company suffered after it was sold to Automattic in August 2019.

The remodeling of Tumblr into an SFW space meant doing away with much of its subcultural activity to accelerate more acceptable flows of content. During the first 3 months following the ban, the perceived sex negativity of the “new more positive Tumblr” mobilized fears about many of its communities being ruined even as blogs of the extreme right (“nazis”) remained untouched. Another, even more persistent trajectory of critique involved users’ observations on a new wave of bots that the filtering algorithms seemed to overlook, “porn bots” being the most frequently used word pair in the streamgraph.

Here, the term “porn” was associated with fake followers and spam content infiltrating Tumblr while “NSFW” was used to describe the diversity of sex-positive subcultures suffering from stigmatization and failing filters. As many artists, porn aficionados, self-shooters, sex workers, and LGBTQ+ communities began to migrate to other platforms, such as Pillowfort, DeviantART, and newTumbl (Captain, 2019), speculations about Tumblr losing its value further intensified user resistance that reached its peak, as measured through posts and engagements, in December 2018.

In May 2019, speculations about Pornhub’s interest in purchasing Tumblr amplified more specific critiques of how social media corporations value NSFW content. Users’ comments on Verizon, Tumblr’s parent company, were part of this critique, blaming Verizon’s inability to turn subcultural content into profit for the damage done. The purge was seen to indicate a fundamental failure on the part of the company to understand Tumblr’s key user base and the social uses within which the marker “NSFW” operated much less as a content warning pertaining to risk than as indicative of social value and function specific to the platform (Paasonen et al., 2019). As Tumblr’s previous terms of service allowed NSFW content if tagged appropriately, and the default “safe” user mode made it easy to avoid, the main concern with its systematic erasure had less to do with protecting users from pornography than with Verizon losing advertisement revenue.

Advertising platforms model their community standards identifying safe and unsafe content in accordance with the preferences of commercial partners, and with the overall public/brand image of their company in mind (see Kumar, 2019; Roberts, 2018; Srnicek, 2016). It then follows that content highly prized by users, such as that circulated and accumulated in Tumblr’s NSFW blogs, can be of little economic value to the platform in question. Major brands usually do not want their adverts and sponsored posts to appear anywhere near potentially controversial material (Gillespie, 2018: 35), which explains the lack of more mainstream ads on porn video aggregator and webcam sites despite their massive traffic and user bases. In the case of Tumblr, the dependency on family-friendly (or SFW) ad revenue on one hand, and the vibrancy of its niche-specific subcultural sexual ecology on the other, created the problem of moderating either too much or too little.

Against this background, the decision to hide all NSFW contributions through automated filters produced tremendous backlash, especially as these classified a range of SFW content as sexually explicit while leaving the operations of porn bots intact. Consequently, the main concerns, as presented in Figure 1, were directed against two forms of automation—porn bots and censor algorithms—that were seen to encapsulate a range of problems in how the porn ban was implemented.

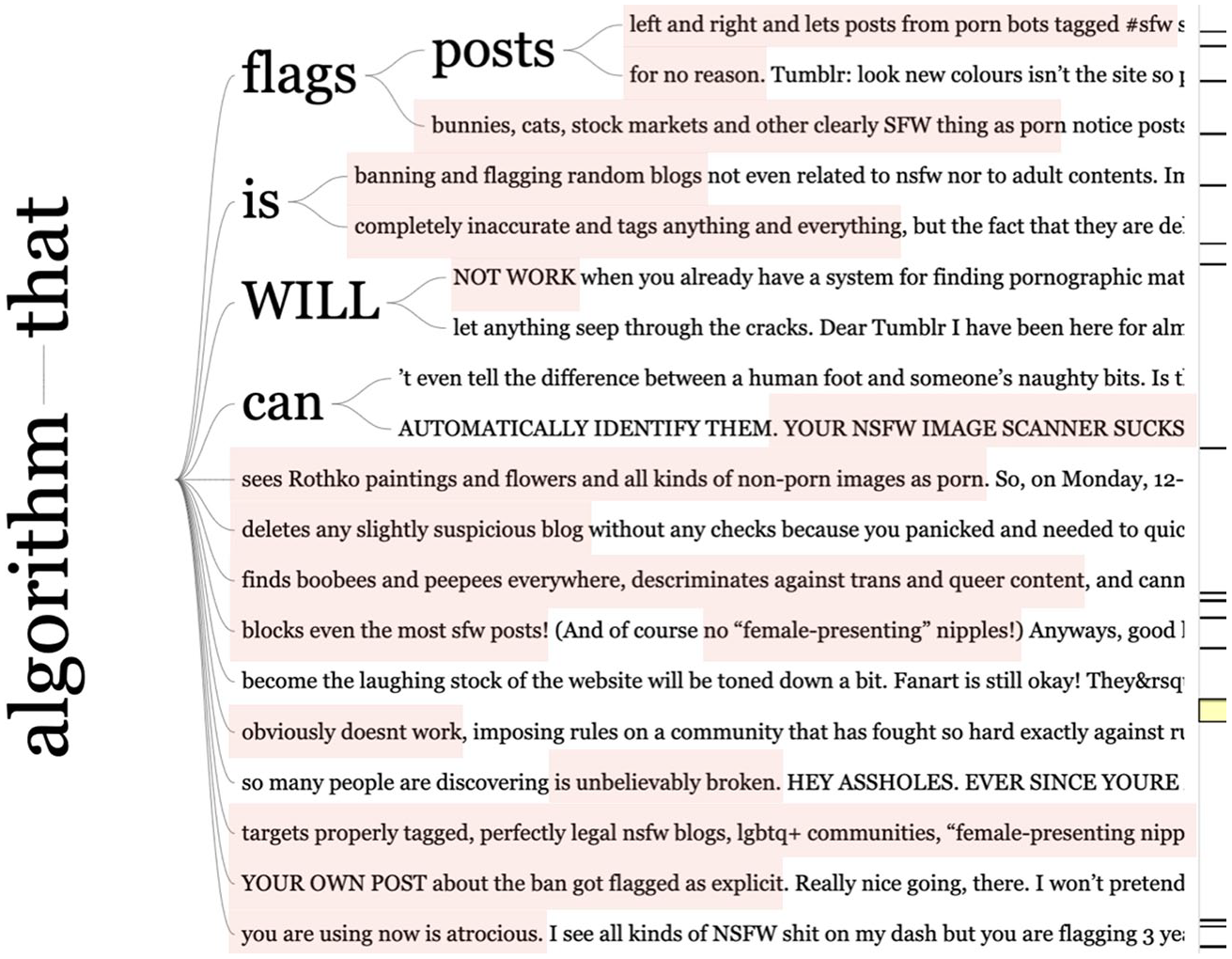

A keyword in context reading of word associations with the term “algorithm” (Figure 2) reveals several reasons why Tumblr’s switch from a user-driven self-rating system to machine-learning techniques came across as highly unsatisfying in seeing “boobees and peepees everywhere” and “tagging anything and everything.” Repeatedly described as sexist, stupid, lazy, and dysfunctional, the new automated moderation system was criticized for wiping out all forms of sexual expression from the platform, independent of context. The algorithm made blatant mistakes, further amplifying the problematic perception of female bodies as obscene and pornographic inherent in computer vision-based filtering techniques (see Gehl et al., 2017). Flagging LGBTQ+ communities and female nipples as porn instead of focusing on “porn bots, Nazis and pedophiles,” automated filtering triggered outrage among those whose content was removed “for no reason,” encapsulated in exclamations such as “YOUR NSFW IMAGE SCANNER SUCKS.” After Tumblr’s own post about the porn ban was tagged as sexually explicit, comments and memes ridiculing “the algorithm that obviously doesn’t work” evoked particular glee.

“Algorithm that . . .” A keyword-in-context map generated from the captions of 7306 posts containing the terms “NSFW” and “porn” highlighting the affective intensities of rejection. Made with Wordtree (Davies, 2020; Wattenberg and Viegas, 2008).
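The keyword-in-context logic behind such a word tree can be approximated in a few lines. The sketch below simply collects the words that follow a keyword in each caption; it does not reproduce the Wordtree tool's branching layout, and the function name, the window width, and the second toy caption are assumptions introduced for illustration.

```python
import re

def keyword_in_context(captions, keyword, width=4):
    """Collect up to `width` words following each occurrence of `keyword`."""
    contexts = []
    for caption in captions:
        words = caption.split()
        for i, word in enumerate(words):
            # Strip punctuation before comparing, so "algorithm," still matches.
            if re.sub(r"\W", "", word).lower() == keyword:
                contexts.append(" ".join(words[i + 1 : i + 1 + width]))
    return contexts

captions = [
    "the algorithm that obviously doesn't work",  # phrase quoted in the text
    "this algorithm flags everything",            # hypothetical caption
]

contexts = keyword_in_context(captions, "algorithm")
print(contexts)
```

Grouping the returned continuations by their first word yields the branches that a word tree draws to the right of the keyword.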

That Tumblr’s algorithm was not well trained, blind to the proliferation of illegal pornography, and unprepared to deal with the platform’s subcultural diversity suggests not only that content was flagged without human oversight, but also that users’ speculations about Verizon no longer considering Tumblr valuable enough to be properly maintained had some basis (see also Matsakis, 2018; Tiidenberg and Whelan, 2019). Under such circumstances, platform communities reoriented themselves and produced transient formations of concern and humor allowing for affective alignment and networked resistance. The censoring of memes and images addressing censorship, in particular, produced a long tail of sarcastic reactions. “Nipples” (female, male, trans, and gender-fluid) became central mediators of the porn ban critique, channeling resistance against the new policy.

Nipples, memes, and algorithmic failure: tracing affect through visual analysis

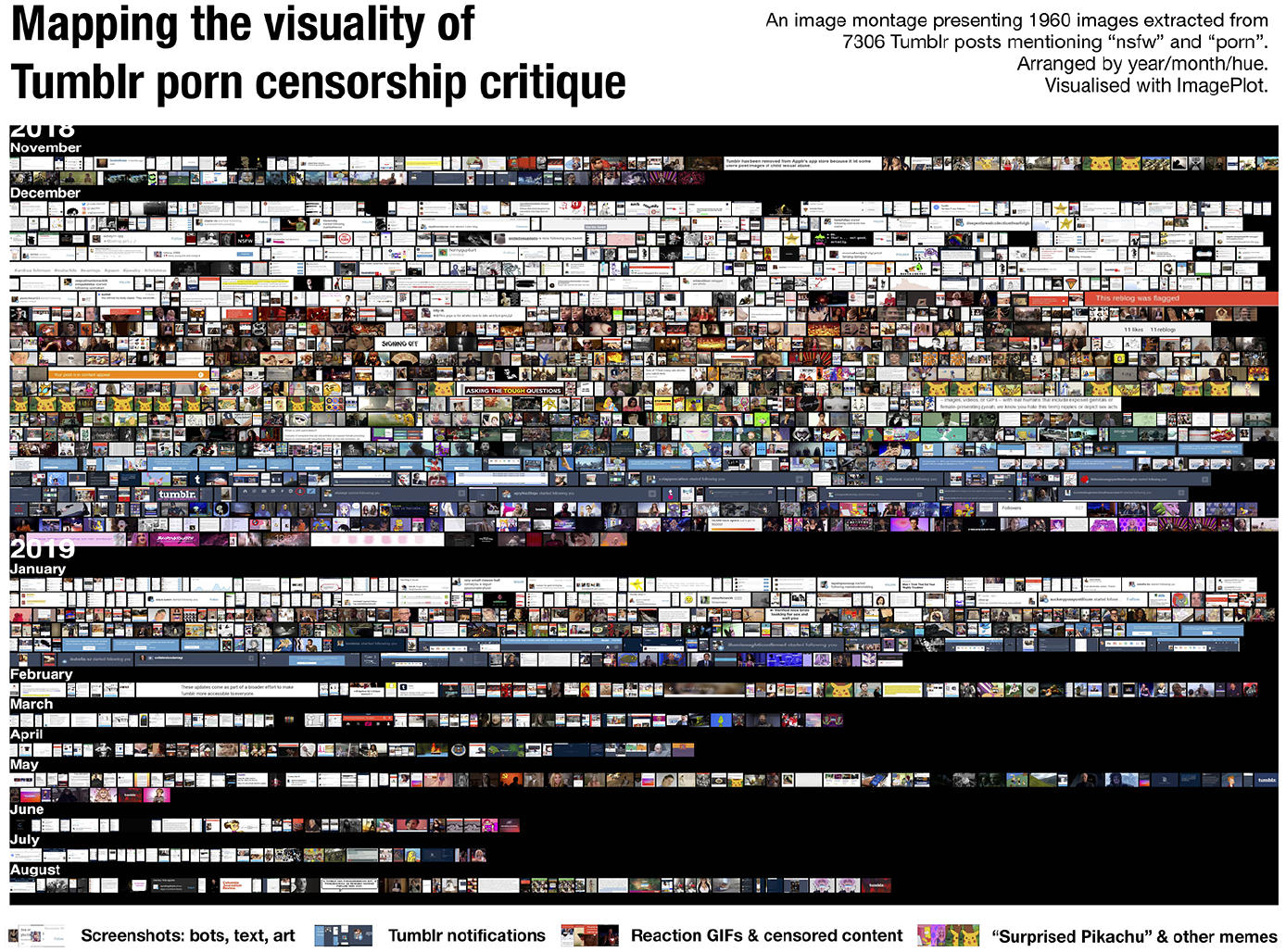

In this section, we focus on the memetic components of intertextuality, humor, and imitation in the circulation of visual user-generated content. Starting with a montage of 1960 posts containing images associated with the terms “NSFW” and “porn,” we then address the 300 most circulated images that users engaged with through related hashtags.

The method of plotting images into a montage according to content characteristics such as the time of posting and the average hue of each image (Hochman and Manovich, 2013; Rose and Willis, 2018) provides us with a temporally situated visual map of Tumblr censorship critique (Figures 3 and 4). Organized according to the attribute of color tone, the temporal contours of visual contributions reveal patterns of repeated variation in format and stance in users’ commentary. The color arrangements in the montage—the white and blue of the screenshots documenting interventions of filtering algorithms and porn bot spam; the black and red of reaction GIFs and censored content; and the yellow, green, and magenta purple of memes featuring a series of “Surprised Pikachu” adaptations—render evident the flows of visual content as a dynamic web of user–platform interactions.

An image montage presenting 1960 images extracted from 7306 posts containing the terms “NSFW” and “porn.” Arranged by year/month/hue. Made with ImagePlot/image montage macro (Software Studies Initiative, 2020).
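The year/month/hue arrangement can be sketched as a sort key over image records. The example below is an assumption-laden illustration, not the ImagePlot procedure: it represents each image as a list of RGB pixel tuples, summarizes hue with a circular mean (one of several possible ways to average an angular quantity), and uses hypothetical image names.

```python
import colorsys
import math

def mean_hue(pixels):
    """Circular mean of pixel hues in degrees, in the range [0, 360)."""
    x = y = 0.0
    for r, g, b in pixels:
        h, _, _ = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        # Hue is an angle, so average its unit vectors rather than raw values.
        x += math.cos(2 * math.pi * h)
        y += math.sin(2 * math.pi * h)
    return math.degrees(math.atan2(y, x)) % 360

def montage_order(images):
    """Sort images by year, then month, then mean hue."""
    return sorted(
        images,
        key=lambda im: (im["year"], im["month"], mean_hue(im["pixels"])),
    )

# Hypothetical one-pixel "images": blue, yellow, and red.
images = [
    {"name": "flag_screenshot", "year": 2018, "month": 12, "pixels": [(0, 0, 255)]},
    {"name": "pikachu_meme", "year": 2018, "month": 12, "pixels": [(255, 255, 0)]},
    {"name": "reaction_gif", "year": 2019, "month": 1, "pixels": [(255, 0, 0)]},
]

ordered = [im["name"] for im in montage_order(images)]
print(ordered)
```

Within each month, images with similar dominant colors end up adjacent, which is what makes recurring formats such as screenshots or meme templates visible as color bands in a montage.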

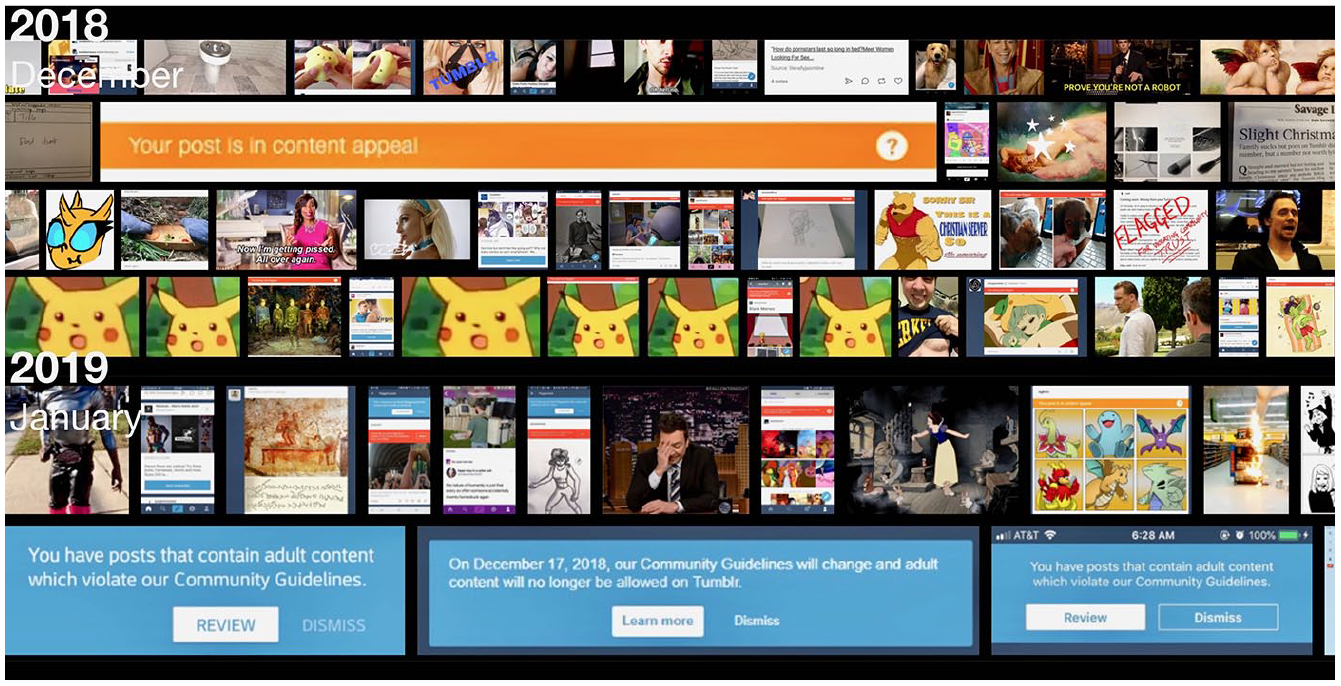

A zoom-in view on the patterns of repetition in the types of Tumblr notifications and users’ memetic responses in December 2018 and January 2019.

These data visualizations make visible user critique of Tumblr’s algorithmic mistakes in its drastic and immediate content filtering measures (note the red flag message superimposed on most of the images). Turning to the temporality of posting makes it possible to map out the contours of user critique gathered in “refrains” (Bertelsen and Murphie, 2010), or loops of visual imitation flattening over time.

The combination of a significant decrease in the number of posts in January 2019 with the intense screenshotting of notifications mentioning the possibility of reviewing flagged content (Figure 4) speaks of user resistance to Tumblr’s filtering strategies. It also points to the gradual flattening of affective intensities connected to both the purge as an event and to Tumblr as a platform, given how the affective “stickiness” that made many frequent users engage (Coté and Pybus, 2007; Lehto, 2019) evaporated in the course of the NSFW ban. Following the peak posting intensity in December 2018, visual practices connected to the porn purge began to sharply deflate, indicating growing user disenchantment with Tumblr.

There is, methodologically, no access to affect as precognitive intensities of sensation that only later come to be registered and interpreted along cognitive and emotional scales, nor can one reconstruct or mediate these intensities after the fact (Paasonen et al., 2015). It is nevertheless possible to track affective encounters that impact on people and nudge them from one state to another in networked exchanges en masse through data traces of the kind examined in this article. If affect is that which makes people pay attention, to engage, feel for, and care (Paasonen, 2018), then the dwindling of posts, tags, reblogs, replies, and likes speaks of oscillations thereof, and vice versa. Meanwhile, the stances taken in the user engagements themselves speak of the affective qualities involved—from irritation to resentment and disappointment quickly flaring up and shrinking in intensity over time. Assembled by Tumblr’s algorithmic interventions and user-driven practices of sharing and appropriation, such temporal shifts in relations of concern open up trajectories for empirical enquiry into the material embeddedness of images in platform-specific practices of meaning-making.

Censored art and porn bot screenshots: documenting algorithmic failure

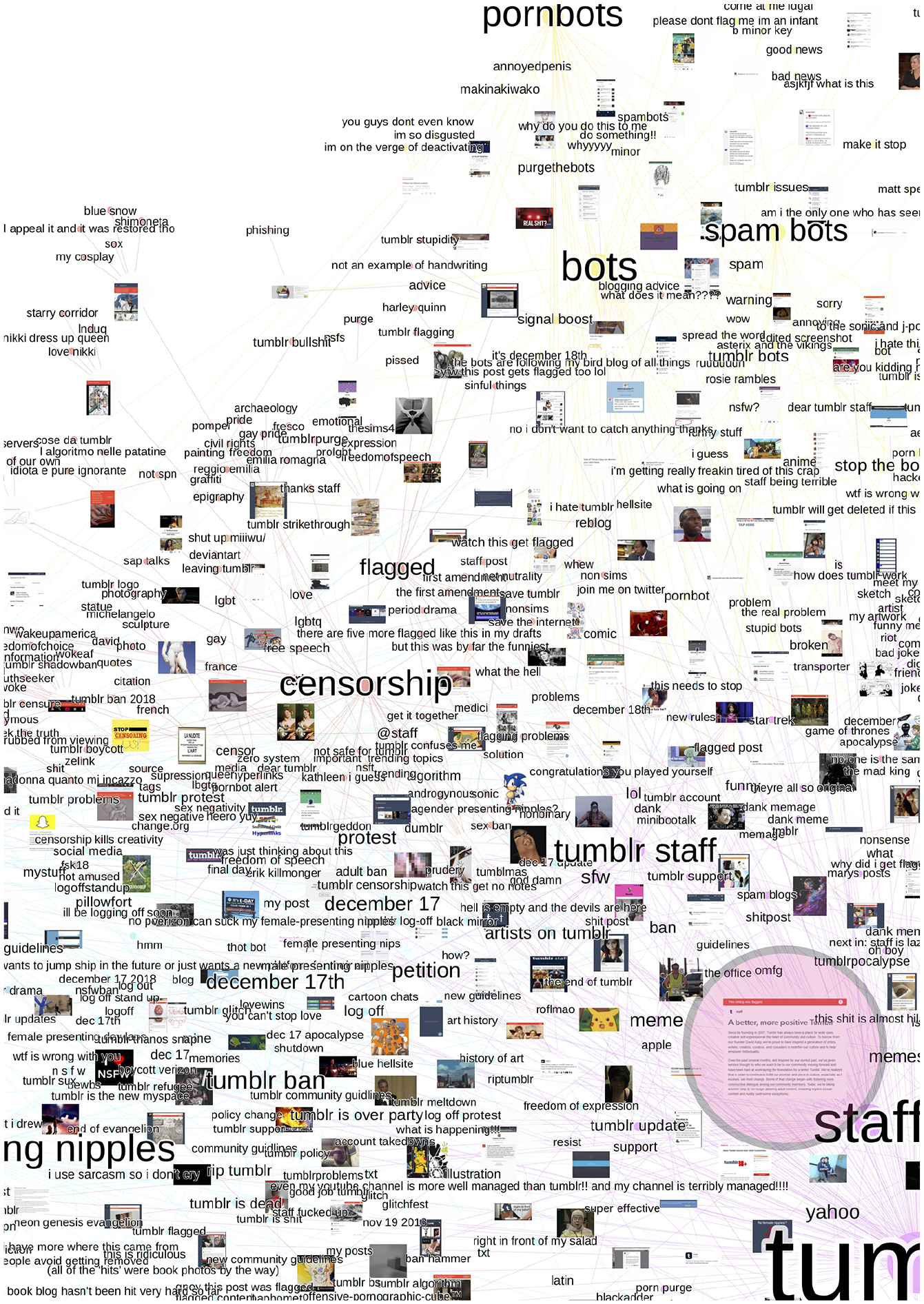

To situate this dynamic in its socio-technical environment, we shift focus from the density of visual data patterns to the relational potential of posted images mediated through combinations of images, hashtags, and platform metrics. Instead of focusing on the top shared posts attached to individual hashtags, our analysis makes it possible to explore how user critiques of the NSFW purge registered in variously weighted hashtag–image combinations. Mapping the distribution of relations between the 300 most circulated images in the dataset as a hashtag network (Figure 5), this exercise allows us to trace dynamically evolving impressions of the purge, as reimagined (through memes, GIFs, and cartoons) and documented (through screenshots) by users.

A fragment of a Gephi (Jacomy et al., 2014) hashtag–image network of the 300 most circulated Tumblr posts containing the terms “NSFW” and “porn.” Combines 25 posts for each of the 12 selected tags assembling the dataset. Highlights the main co-hashtags assembling the network and the most circulated image (screenshot documenting Tumblr flagging its own announcement of “a better, more positive Tumblr,” tagged with #staff #tumblr #omfg #this shit is almost hilarious, 176,664 notes).

Visualized through image nodes, the size of which varies according to the assembled number of comments, reblogs, and likes (or Tumblr “notes”), the volume of content circulation was used as an indicator of temporal attentional entanglements on the platform. The second type of nodes representing hashtags indicates the degree of their relevance, allowing us to see which hashtags were most often associated with images in the context of the Tumblr porn ban (such as #tumblr, #staff, #censorship, #pornbots, and #female presenting nipples) and which ones were more contextually situated (e.g. #petition, #december 17th, #artists on tumblr, and #protest).
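The two node types described here correspond to a bipartite hashtag–image graph. The following sketch builds such a structure from post records using only the standard library; it is an illustration of the data model, not the Gephi workflow itself. The record fields (`image`, `notes`, `tags`) are assumed, and apart from the note count of the most circulated screenshot, the values are hypothetical.

```python
from collections import Counter

def build_network(posts):
    """Build hashtag-image edges, image node sizes, and hashtag degrees."""
    edges = []
    image_size = {}
    tag_degree = Counter()
    for post in posts:
        # Image node size: the post's note count (comments, reblogs, likes).
        image_size[post["image"]] = post["notes"]
        # dict.fromkeys removes repeated tags while keeping their order.
        for tag in dict.fromkeys(post["tags"]):
            edges.append((tag, post["image"]))
            tag_degree[tag] += 1  # hashtag node degree
    return edges, image_size, tag_degree

posts = [
    {"image": "flagged_announcement.png", "notes": 176664,
     "tags": ["#staff", "#tumblr", "#omfg"]},
    # Hypothetical records for illustration only.
    {"image": "bot_follow.png", "notes": 1200, "tags": ["#staff", "#pornbots"]},
    {"image": "censored_art.png", "notes": 800, "tags": ["#censorship", "#art"]},
]

edges, image_size, tag_degree = build_network(posts)
print(tag_degree.most_common(1))
```

High-degree hashtags correspond to the widely shared tags in the network, while hashtags with a degree of one mark the more contextually situated contributions.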

In the upper part of the network fragment, users’ screenshotting practices take over the space of Tumblr censorship critique by reposting examples of censored art that had been mistakenly flagged as porn, and by documenting uncensored porn bot interactions after the porn ban. The central theme in both cases concerns the controversial nature of unsupervised automated content moderation. At least two layers of mediated agency come to the fore in screenshotting connected to art censorship: that of Tumblr porn filters which removed unrelated visual content from public view and that of users who reposted such content to document the inadequacy of these filters (Figure 5). As illustrated by the most circulated screenshot documenting Tumblr flagging its own announcement of “a better, more positive Tumblr” (176,664 notes), intense investments of attention clustered specifically on sarcastic contributions mocking Tumblr staff.

Users reclaimed visibility through networked tagging and sharing content that Tumblr had rendered invisible through automated filtering. Here, human appropriation traversed nonhuman perception, assembling different images within an emergent space of critique. Superimposed on most of the images, Tumblr’s red flag message reading “Your post was flagged” points to algorithmic interventions in a range of user actions. The recirculation of digital drawings, erotic art, and composite images featuring skin tones through hashtags such as #get it together, #censorship kills creativity, #art, #free speech, #lgbtq, #gay, and #tumblr bullshit speaks of key issues at stake in such interventions. First, in an algorithmic view, skin-colored content containing body shapes easily becomes a source of “false positives” (Gillespie, 2018: 99, 104). Second, the decision to differentiate between “acceptable” and “unacceptable” body parts, nudity, art, and pornography is never neutral nor uncontested. Instead, algorithmic decisions about what should be removed and what should stay on social media platforms reenact a biased social system connected to sexuality, surveillance, and computation (Steyerl, 2014).

Screenshots are compositional in their capacity to express critique through acts of capture. The affordances and actions that constitute screenshots as “platform vernaculars” (Gibbs et al., 2015) derive from a three-fold combination of user perception, image generation, and platform distribution. Like selfies, screenshots can perform the communicative function of “presencing” (Frosh, 2019: 62–93; Richardson and Wilken, 2012) through which multiple captured fragments of user experience become a collective means of revisiting affectively charged situations. Translating the micro-events of Tumblr’s algorithmic failure into visual copies, screenshots assemble heterogeneous user engagement into collective critique directed against the platform’s biased ways of seeing. By virtue of this dynamic, that which has been made invisible by the platform becomes visible again.

The second user-driven network of screenshotting critiqued the increased proliferation of porn spam (see upper right part of the network in Figure 5). Here, the fact that Tumblr staff seemed blind to automated porn accounts when having purged most NSFW artists became the main source of discontent. Similar in purpose yet different in their stance to those focused on unsuccessful filtering, screenshots documenting porn bots made visible their actions in following users, hijacking abandoned blogs, and invading Tumblr note sections with links to external porn websites.

The incapacity of algorithms to recognize bot porn as porn stems from the particularities of ephemeral and visually disguised bot interactions. Instead of relying on image captions or account names suggestive of “sexy” content, many bot farms use random clickbait and subcultural imagery inviting users to “click here” before redirecting them to a porn site (Omena et al., 2019). Within this attention economy, automated techniques of text and image recognition are doomed to fail. A selfie with multiple reblogs from accounts featuring names randomly assembled from letters and numbers is not suspicious to Tumblr as long as it does not feature recognizable “female-presenting nipples.” Connected through hashtags, such as #purgethebots, #fixthisshit, and #signal boost, some bot screenshots were circulated to raise awareness of the issue. Others came steeped in more negative sentiment, expressing disappointment in a platform that had let its users down.

In both cases, most posts argued that while content filtering algorithms act dumb and flag things wrong, porn bots are getting smarter. As one user reported, “The bots are catching on. They’re making super relatable blogs now to lure you in and then throwing out the porn links!!” The posts analyzed also suggest that porn bots proliferated successfully precisely because they abided by Tumblr’s new terms of service. Starting their screenshot appeals with greetings, such as “Hey, staff” or “Hey, Tumblr” (see Figure 1), users listed all new “safe” things that bots do: they “just advertise their links by deleting every response [in a comment feed] beforehand”; “post suggestive pictures of attractive, but fully clothed women (sometimes men)”; “steal [users’] posts to promote their porn”; “hack accounts,” and, most importantly, “still exist while good NSFW artists get banned.”

These user observations, some outraged and some humorous, not only speak of subcultural turmoil on a platform struggling with its own transformation but also raise important questions about two kinds of bot interference in the social media attention economy. The first of these is related to automatically manipulated influence scores facilitated by platformed arrangements of hashtag appropriation, following, and liking (see Gerlitz and Helmond, 2013; Messias et al., 2013). The second derives from the multiplicity of communities and publics that add value to these arrangements through their cultures of use (Omena et al., 2019; Woolley, 2016).

Memes and apocalyptic GIF humor: reevaluating the brand

Humor was a noticeable mode of engagement in Tumblr interactions following the porn ban. Interlinked with memes and animated GIFs through hashtags, such as #tumblr purge, #tumblr staff, and #lol, posts about pornbots invading Tumblr made use of features of the so-called “ambivalent internet” (Phillips and Milner, 2017), such as mischief, oddity, and exaggeration. Unlike screenshots used for the purposes of documentation, pop culture references circulating through memes offered means for detached and sarcastic metacommentary. In an animated GIF loop, the Mad King from the HBO series Game of Thrones impersonated Tumblr management (“Burn them all!”), suggesting that NSFW, art, anime, and meme blogs all had to go along with the porn. “Confused Barbie,” “bewildered Ron Weasley,” and multiple variations of the “surprised Pikachu” meme were shared by users wondering why they were still followed by pornbots after December 17, the day on which the new community guidelines came into force. A dank meme featuring the smirking Star Trek character Lore provided another means for mocking Tumblr algorithms for “blacklisting all NSFW tags” just as porn bots were invading the platform through non-suspicious (albeit potentially sticky and provocative) hashtags, such as “#decor,” “#K-pop,” “#trump,” and “#feminism.”

Playing with users’ sense of “ironic reason” (Lovink and Tuters, 2018), these associations combined subcultural insider knowledge with the experiential qualities of the new SFW Tumblr, further allowing those affected by the NSFW purge to maintain humorous distance and to stay aware of potential censorship problems yet to occur. The notion of the “purge,” abundantly used as a hashtag, plays an important part in understanding the affective charge of the Tumblr porn ban. Used especially when commenting on the negative implications of censorship for queer youth, body-positive movements, and NSFW artists that once thrived on Tumblr (Duguay, 2018), the hashtag encapsulated user concern over the loss of spaces where sexuality is not stigmatized and where the explicitly sexual and the non-sexual may overlap and coexist.

Comments featuring screenshots of Yahoo’s official tweet from 2013 questioned the company’s initial promise to make Tumblr “safe for kids” (Yahoo, 2013) in relation to the 2018 US SESTA–FOSTA (Stop Enabling Sex Traffickers Act and Fight Online Sex Trafficking Act). Targeted against trafficking, SESTA–FOSTA aims to curb the public visibility of sexual solicitation but in practice targets sexual content more expansively. By making platforms responsible for the content published by users, the bills have fed vigilant policing of sexual communication on Facebook, Instagram, and the new SFW Tumblr. Other comments ironically anticipated December 17. Identified as the “doomsday,” the day of “great cleansing,” and the “final day” of Tumblr, this temporal marker connected two further trajectories of porn ban critique, with posts promoting protests for logging off from Tumblr and/or leaving it for good. Produced and consumed in this charged situation, hashtags and images mutually reinforced each other’s affective potentiality through imitative practices of collective appropriation. By virtue of this dynamic, #tumblr purge mobilized a series of hashtag derivatives through apocalyptic GIF humor and nipple memes.

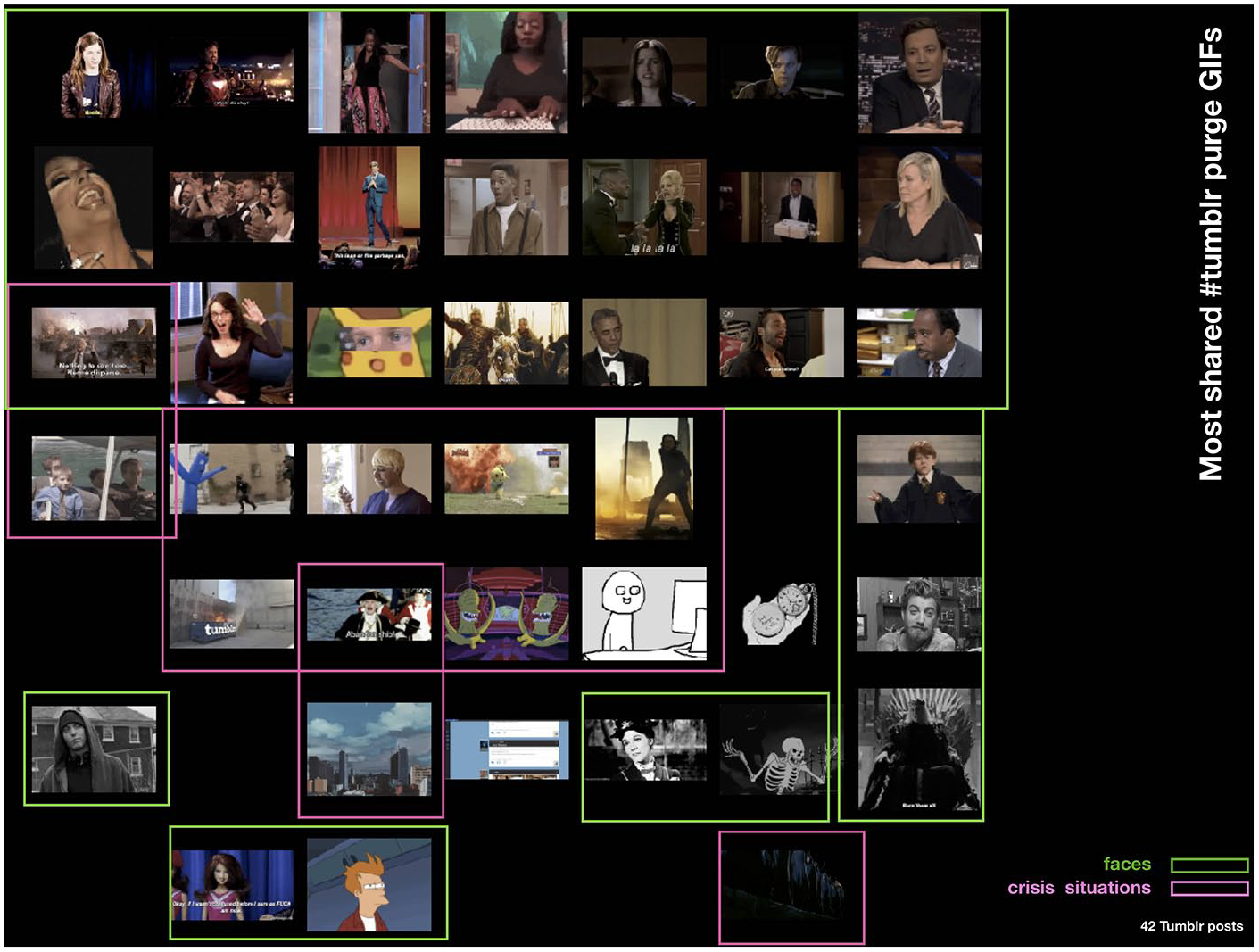

#tumblr purge reaction GIFs featuring fires, collapsing buildings, running people, and facial registers were co-tagged with #female presenting nipples, #tumblr apocalypse, #tumblr ban, and #rip tumblr (Figure 6). In anticipation of the new Tumblr experience, some of these GIFs affectively aligned subcultural communities by communicating disappointment and resentment over having been betrayed by one’s platform of choice. Others mocked Tumblr’s repeated attempts to save itself from accusations of being blind to user needs. Yet other GIFs were shared to represent deleted artists’ blogs or to allude to the act of “Tumblr suicide” by suggesting that the porn ban wiped out half of the platform’s users in the name of a solution that did not even work. In the most shared GIFs, facial expressions mediated reactions of disbelief, amusement, disappointment, and dismay, while those featuring “crisis situations” (e.g. images of fires, crashes, natural catastrophes, and terrorist attacks, both fictional and not) aimed to reanimate the chaos of the NSFW purge through clownish appropriation, giving rise to multiple actualizations of user experience.

A plot of 42 #tumblr purge reaction GIFs extracted from the network of the 300 most circulated Tumblr posts containing the terms “NSFW” and “porn.” Sorted by color in ImageSorter (Visual Computing, 2018).

Taken together, all these reactions were circulated as a means of mediating and expressing collective affective force (see Dean, 2016; Miltner and Highfield, 2017; Papacharissi, 2015). With added comments, such as “me after all porn getting erased,” “staff after randomly deleting blogs,” and “Tumblr literally destroying its own site,” #tumblr purge GIFs helped users process the possibility of disconnecting from the platform by means of humor.

In one specific constellation, the absurd equation of “adult content” with “female-presenting nipples” played an important part in reevaluating the Tumblr brand. Amusing and discerning at the same time, photoshopped nipple images mingled with expressions of upset over sex censorship in general and the assumed obscenity of female bodies in particular. Spreading in a series of memes across platforms, users’ witty nipple revelations served as counterimitation of Tumblr’s false flags (Pilipets et al., 2020). Over time, nipples became a means of critique through which the restrictive affordances of platform censorship were playfully reappropriated and invested with alternative connotations.

Drawing on the specifics of the image-hashtag network, Figure 7 presents a composite account of the five most circulated #verizon memes that appropriated the phrase “female-presenting nipples” to reposition Tumblr as a controversial and devalued brand: in all of these, the felt value of NSFW content was juxtaposed with the platform’s rapid drop in brand value. Bringing together different types of tagged visual content with the most exposure, the composite assembles commentary directed at the seemingly Puritan views of Yahoo (Tumblr’s owner from 2013 till 2017) and Verizon as parent companies far more concerned with advertising revenue than with the site’s subcultural value. Embedded in a viral formation of co-tagging, visual remix, and memetic commentary, the absurd expressivity of the phrase “forget to cherish tiddie” took multiple shapes by traveling between corporate brands (Verizon, Yahoo, and Tumblr) and users’ subcultural associations. Asking Tumblr staff if this or that nipple counted as NSFW, users dared the content filtering algorithm through hashtags such as #watch this got flagged. When speculation emerged in May 2019 that Pornhub, the MindGeek-owned, globally leading porn video aggregator site, was interested in purchasing Tumblr, memes expanded to comment on the #corporate bullshit that prevents users from exploring their sexuality in spaces beyond mainstream porn. In most instances, by framing Tumblr’s content filtering algorithms and its institutional infrastructures as “male” and incompetent, nipple memes nevertheless protested against the censorship of female bodies and gender bias in social media content policies in general.

A selection of five most circulated #verizon images shortly after Tumblr was sold to Automattic in August 2019 featuring “female nipples” appropriations of Avengers: Infinity War (132,151 notes) and The Suite Life of Zack and Cody (21,245 notes).

As reflected in the memetic figure of “Thanos snap” from the 2018 Marvel film, Avengers: Infinity War, the consequences of Tumblr’s miscarried attempts to “get rid of the tiddies” suggest that shifts in the quality and extent of user attachment are closely intertwined with relations of platform control. Since August 2019, when Tumblr was sold to Automattic for a fraction of its previous price, the brand’s devaluation, caused by the combination of its failure to effectively filter out child pornography, its abrupt reversal of previously NSFW-friendly policy, and its unprepared moderation algorithms, has made new headlines.

Conclusion

Our analysis shows that the capacity to regulate the extent of social media control and engagement is valuable, yet this task is not “safe” for any platform, particularly not for one known for NSFW content. On Tumblr, where sexual content used to be a key currency of user–platform transactions, analysis of NSFW censorship critique allows for approaching in tandem (1) the distribution of affect that platforms both knowingly and inadvertently mobilize by valuing some forms of content and participation over others and (2) the value of shared images as mediators of affect that fuel networked forms of resistance and critique. Examined within social media content circulation, images do more than merely register collective sentiment in platform-specific data flows. Networked visual contributions, at once machinic, affective, cognitive, and somatic, rearrange themselves around and contribute to novel pathways of experience, each act of sharing involving the accumulation of affective potential (Paasonen, 2018; Dean, 2010; Terranova, 2004).

The method of linking images in new formations on the basis of image captions, hashtags, and Tumblr engagement metrics produces valuable insights into how networked practices of screenshotting flagged content turned into a source of memetic appropriation. Through tagging practices, users voiced critique against Tumblr’s novel content policy and its shortcomings, with focus clustering on NSFW artists evacuating the platform, porn bots remaining present, dissatisfaction with Tumblr staff, and speculation about the company’s future ownership. All these concerns were connected to ripples of dissatisfaction, irritation, and a sense of uncertainty as existing networks were made to dissolve and as exchanges considered valuable were blocked as inappropriate and unsafe. Such clusters of negative affect gave rise to, and were mediated through, ironic and absurd humor: spreading and accumulating, these reactions both fueled resistant critique and brought its collective points into focus. Users reclaimed visibility by reframing and spreading content that Tumblr had already rendered invisible through algorithmic filters. Here, the experiential, temporal, and technically grammatized situatedness of platform restrictions activated networked visual practices of sharing as a collective mode of resistance. As our analysis indicates, however, the volume and intensity of this resistance decreased over time as affective intensities began to fade and user attention shifted to other concerns and platforms.

At the heart of user critique lies the question of value and, more specifically, incompatible notions concerning the value of NSFW content. The NSFW ban does not come across as a successful corporate decision driven by the quest for profit. On one hand, without sex, Tumblr’s economic model, never sound or profitable as such, seemed to collapse: 3 months after the NSFW ban, user traffic had dropped by nearly 30%, indicating a severe interference in the circulation of data upon which the social media economy depends. Valued at 1.1 billion USD in 2013, Tumblr was sold to Automattic for under 3 million USD (Tiffany, 2018). On the other hand, as NSFW data are not easy to monetize in social media, their overall value is ambiguous.

For many users, censorship affected more than just “content that depicts sex acts,” as defined in the new content policy. With popular fandom art disappearing from search options, and with NSFW blogs becoming invisible, the purge was seen as an unfair and hypocritical step directed against all visual content creators. The NSFW critique examined in this article was not, then, merely about the platform’s ability or inability to “cherish the tiddies,” expanding as it did from complaints against content policy to disappointment in Tumblr not valuing user contributions, and to dissatisfaction voiced toward automated filtering techniques and bot traffic. Examined as digital traces of networked affective force, the posts tagged as parts of this critique render evident discrepancies between subcultural social value and corporate economic value, and how the inability to value affective engagements made by users in the former can lead to a collapse in the latter.

Footnotes

Acknowledgements

We would like to thank the anonymous reviewers of New Media and Society for their helpful comments.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially supported by the first author’s fellowship at the Center for Advanced Internet Studies (CAIS) in Bochum and, for the second author, by the Strategic Research Council project “Intimacy in Data-Driven Culture,” grant number 327391. Open access fees were covered by Alpen-Adria Universität Klagenfurt.